Computer Science / Cryptography and Security

Computer Science / Machine Learning

Computer Science / Multiagent Systems

Statistics / Machine Learning

Toward Optimal Adversarial Policies in the Multiplicative Learning System with a Malicious Expert

📝 Original Info

- Title: Toward Optimal Adversarial Policies in the Multiplicative Learning System with a Malicious Expert

- ArXiv ID: 2001.00543

- Date: 2020-09-21

- Authors: S. Rasoul Etesami, Negar Kiyavash, Vincent Leon, H. Vincent Poor

📝 Abstract

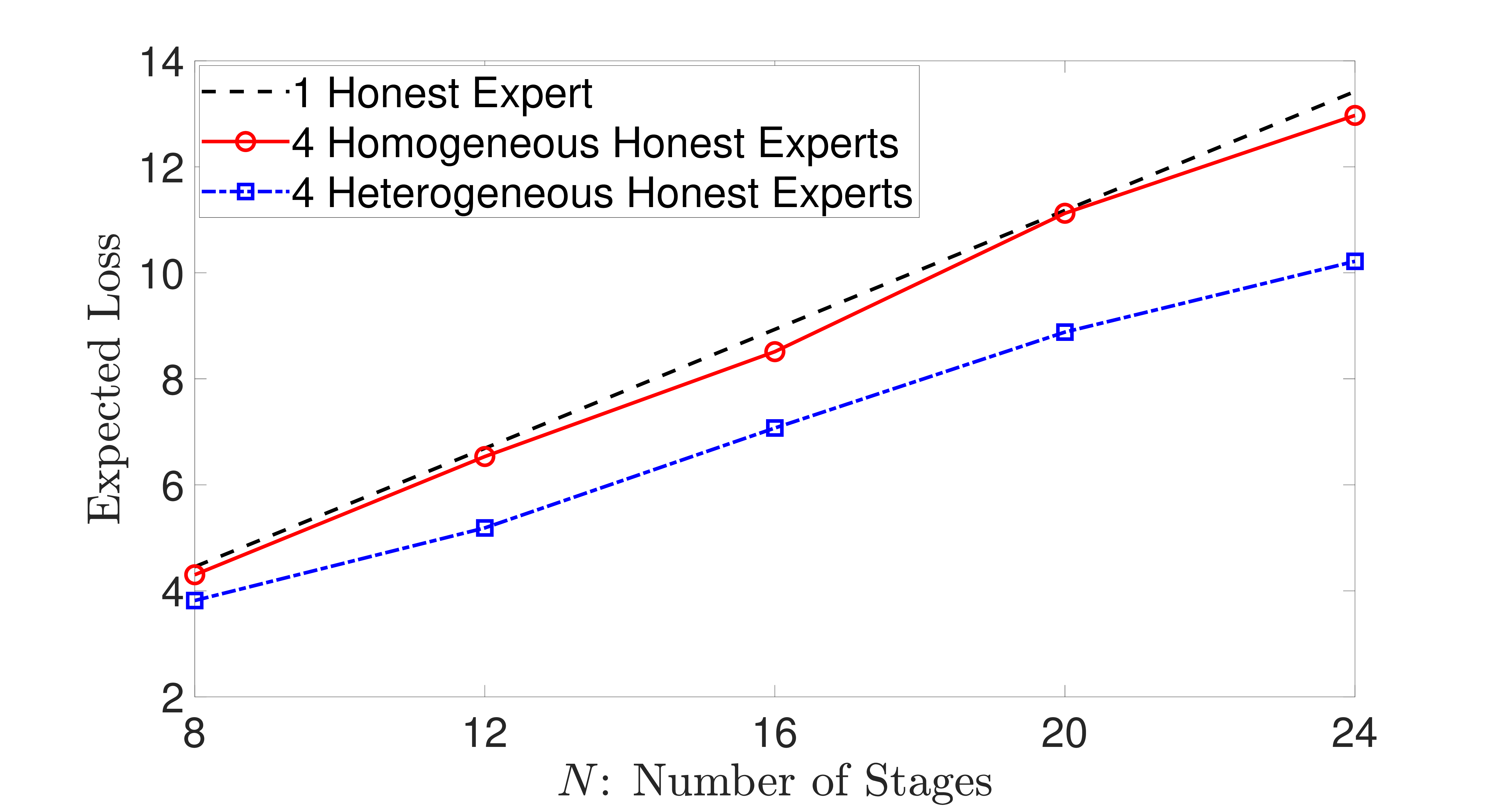

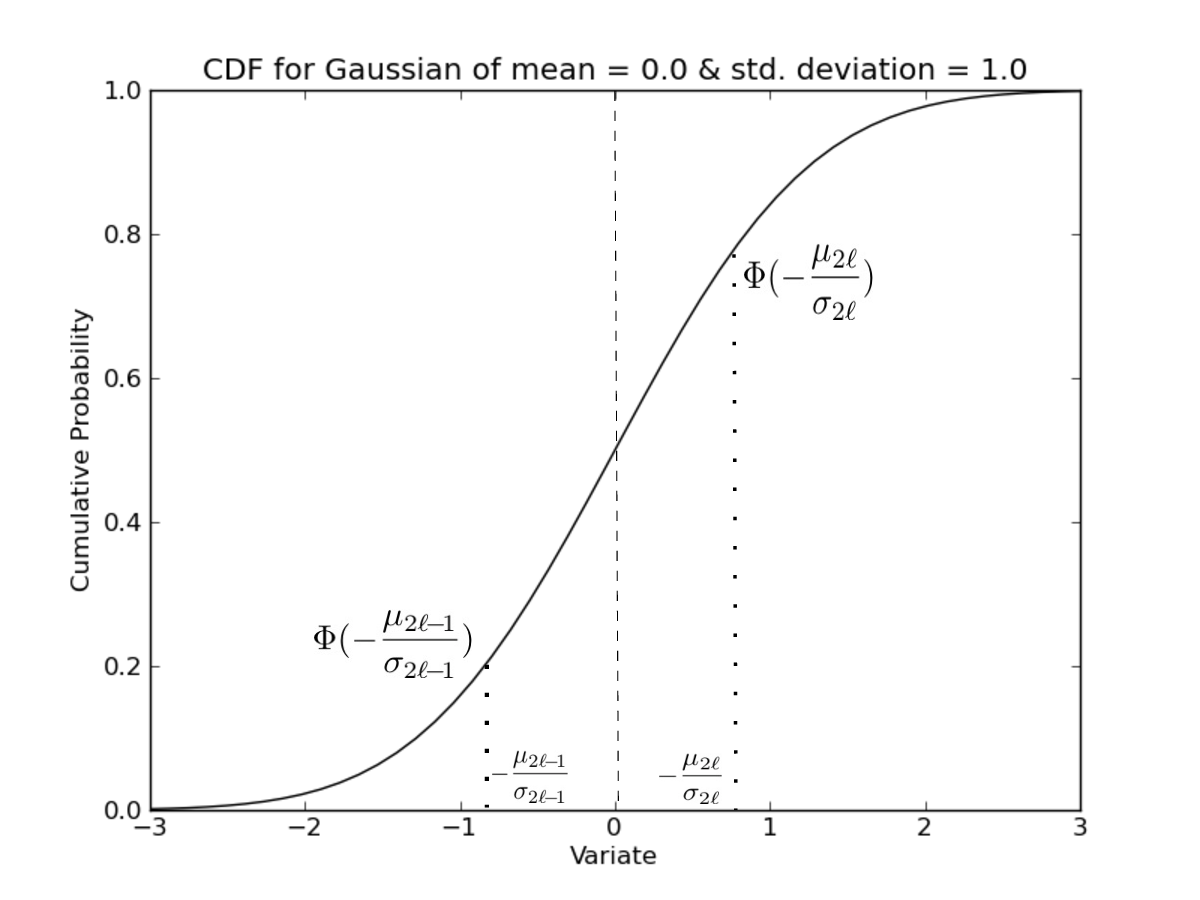

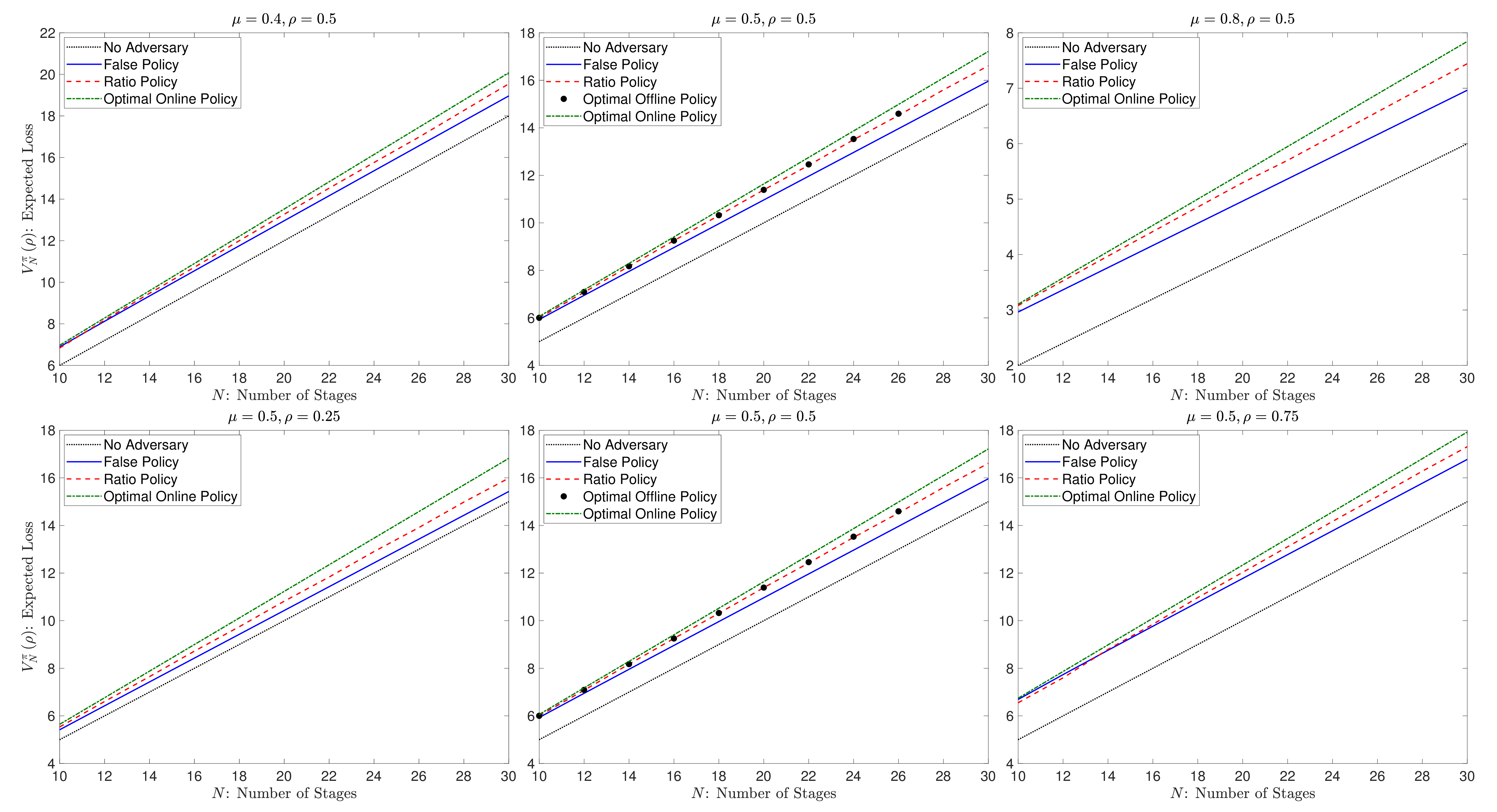

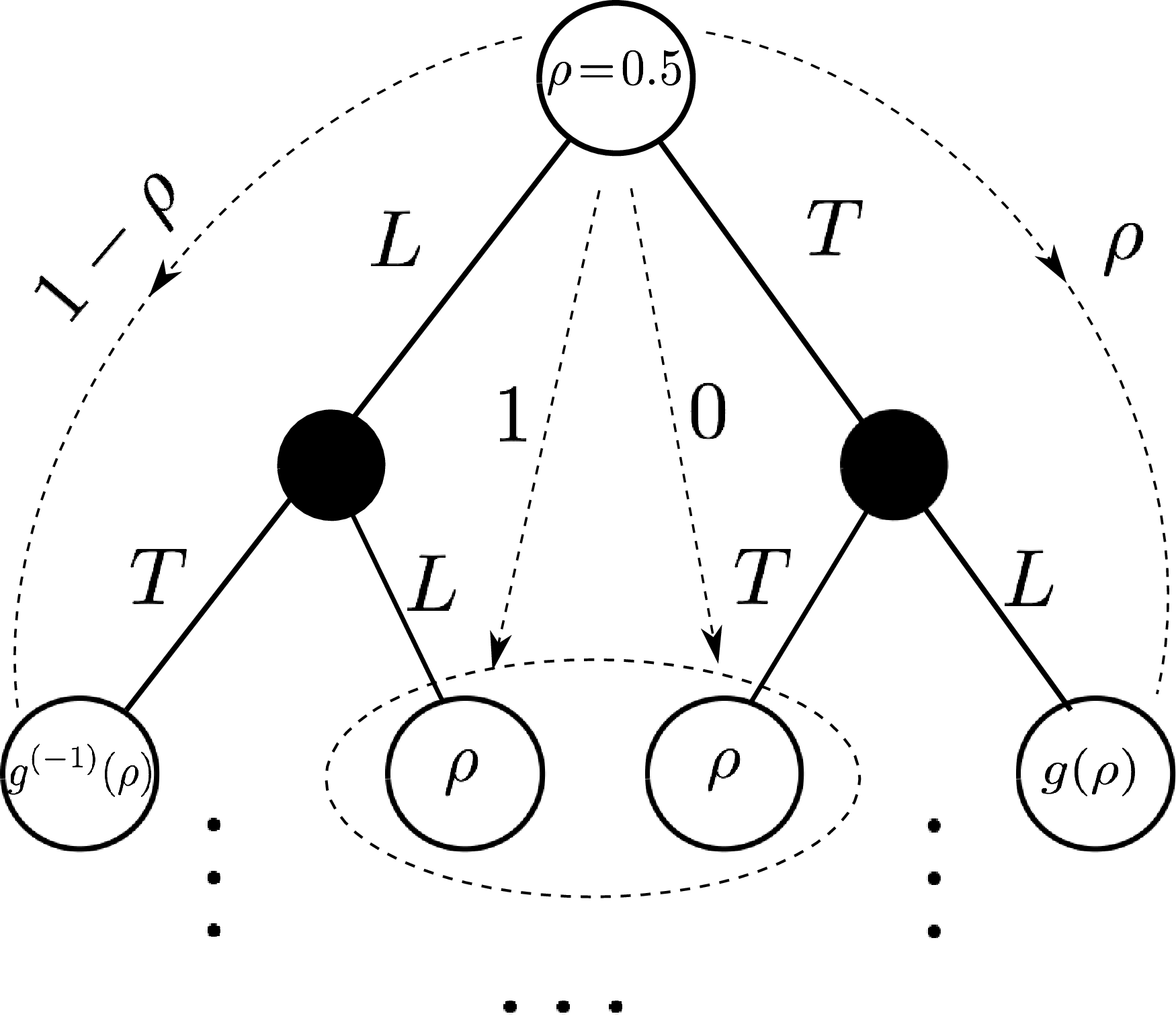

We consider a learning system based on the conventional multiplicative weight (MW) rule that combines experts' advice to predict a sequence of true outcomes. It is assumed that one of the experts is malicious and aims to impose the maximum loss on the system. The loss of the system is naturally defined to be the aggregate absolute difference between the sequence of predicted outcomes and the true outcomes. We consider this problem under both offline and online settings. In the offline setting, where the malicious expert must choose its entire sequence of decisions a priori, we show somewhat surprisingly that a simple greedy policy of always reporting a false prediction is asymptotically optimal with an approximation ratio of $1+O(\sqrt{\frac{\ln N}{N}})$, where $N$ is the total number of prediction stages. In particular, we describe a policy that closely resembles the structure of the optimal offline policy. For the online setting, where the malicious expert can adaptively make its decisions, we show that the optimal online policy can be efficiently computed by solving a dynamic program in $O(N^3)$ time. Our results provide a new direction for vulnerability assessment of commonly used learning algorithms to adversarial attacks where the threat is an integral part of the system.

📄 Full Content
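The offline setting described in the abstract can be illustrated with a small simulation. The sketch below is not the paper's model or algorithm. It assumes a toy system with exactly two experts (one honest, one malicious), binary outcomes, a fixed and known pattern of honest-expert mistakes, and a weight update that multiplies a wrong expert's weight by `1 - eps`. The function names `system_loss`, `greedy_loss`, and `best_offline_loss` are hypothetical, and the exhaustive search stands in for an optimal offline policy only at small `N`; it is not the dynamic program mentioned above.

```python
from itertools import product


def system_loss(lies, honest_errors, eps=0.5):
    """Aggregate absolute loss of a two-expert MW system (toy assumption).

    lies[t] is True when the malicious expert reports the wrong outcome at
    stage t; honest_errors[t] is True when the honest expert is wrong.
    """
    w_honest, w_malicious = 1.0, 1.0
    total = 0.0
    for lie, err in zip(lies, honest_errors):
        e_m = 1.0 if lie else 0.0
        e_h = 1.0 if err else 0.0
        # With binary outcomes and a weight-averaged prediction, the absolute
        # error equals the weight share held by the experts who are wrong.
        total += (w_honest * e_h + w_malicious * e_m) / (w_honest + w_malicious)
        # Multiplicative weight update (assumed form): penalize wrong experts.
        w_honest *= (1.0 - eps) ** e_h
        w_malicious *= (1.0 - eps) ** e_m
    return total


def greedy_loss(honest_errors, eps=0.5):
    """Loss when the malicious expert always reports a false prediction."""
    return system_loss([True] * len(honest_errors), honest_errors, eps)


def best_offline_loss(honest_errors, eps=0.5):
    """Best fixed lie/truth sequence, found by brute force (small N only)."""
    n = len(honest_errors)
    return max(
        system_loss(seq, honest_errors, eps)
        for seq in product([False, True], repeat=n)
    )


if __name__ == "__main__":
    # Hypothetical honest-expert error pattern over N = 8 stages.
    errors = [True, False, False, True, False, False, False, True]
    greedy = greedy_loss(errors)
    best = best_offline_loss(errors)
    print(f"greedy loss = {greedy:.4f}")
    print(f"best offline loss = {best:.4f}")
    print(f"ratio best / greedy = {best / greedy:.4f}")
```

Because the always-lie sequence is one of the candidates in the exhaustive search, the printed ratio is at least 1 in this toy model. How quickly it approaches 1 as `N` grows depends on the assumed error pattern and on `eps`, so the output should not be read as a check of the paper's $1+O(\sqrt{\frac{\ln N}{N}})$ bound.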

This content is AI-processed based on open access ArXiv data.