Computer Science / Information Theory

Computer Science / Machine Learning

Computer Science / Systems and Control

Electrical Engineering and Systems Science / eess.SY

Mathematics / math.IT

A New Approach to Distributed Hypothesis Testing and Non-Bayesian Learning: Improved Learning Rate and Byzantine-Resilience


📝 Original Info

- Title: A New Approach to Distributed Hypothesis Testing and Non-Bayesian Learning: Improved Learning Rate and Byzantine-Resilience

- ArXiv ID: 1907.03588

- Date: 2019-07-09

- Authors: Aritra Mitra, John A. Richards and Shreyas Sundaram

📝 Abstract

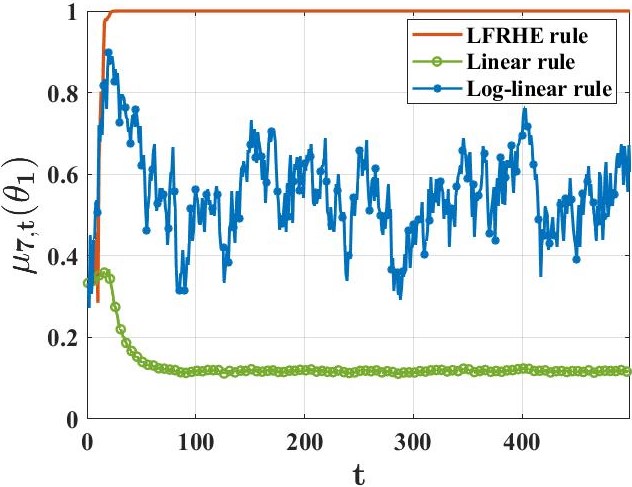

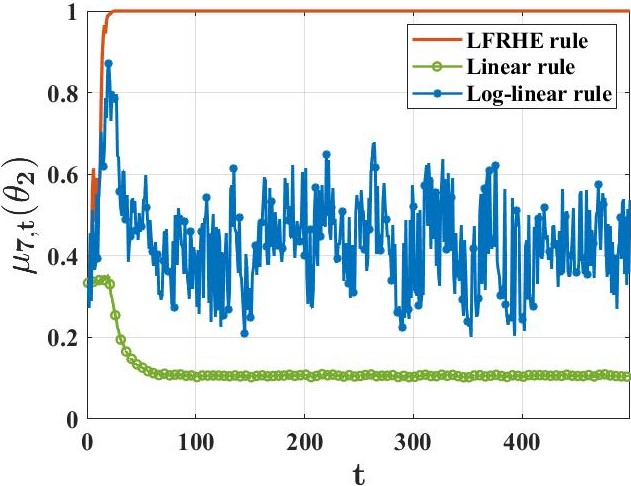

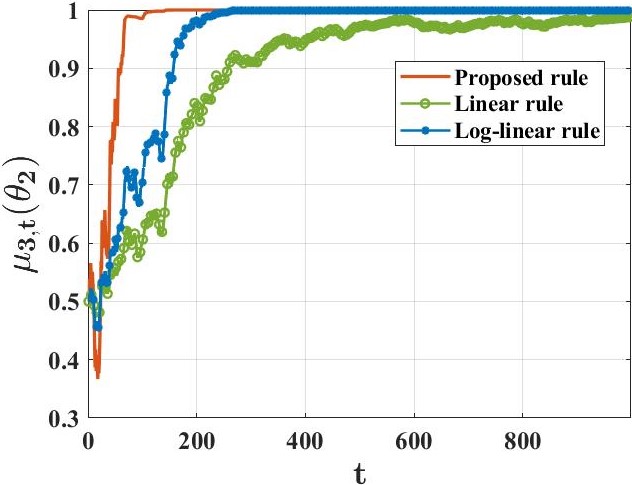

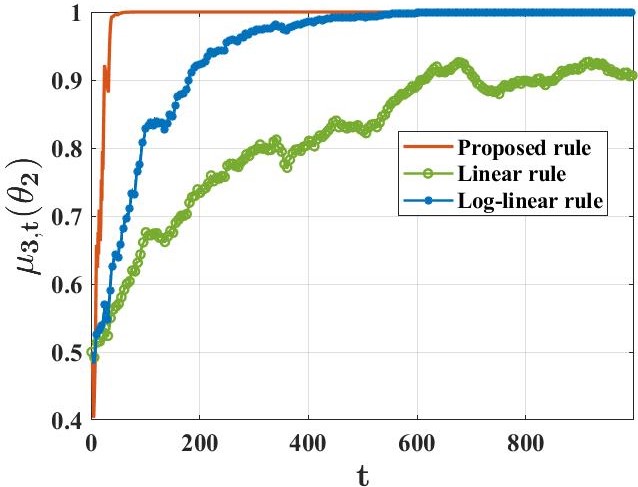

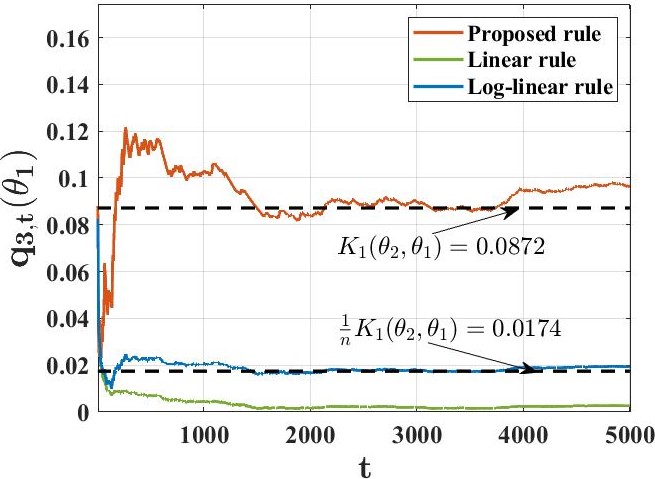

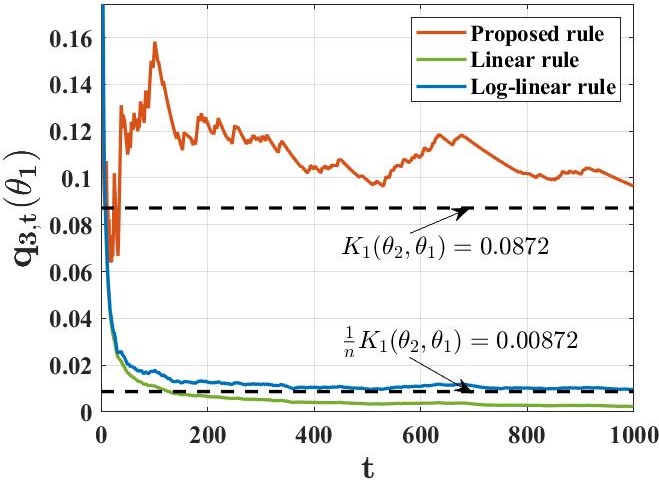

We study a setting where a group of agents, each receiving partially informative private signals, seek to collaboratively learn the true underlying state of the world (from a finite set of hypotheses) that generates their joint observation profiles. To solve this problem, we propose a distributed learning rule that differs fundamentally from existing approaches, in that it does not employ any form of "belief-averaging". Instead, agents update their beliefs based on a min-rule. Under standard assumptions on the observation model and the network structure, we establish that each agent learns the truth asymptotically almost surely. As our main contribution, we prove that with probability 1, each false hypothesis is ruled out by every agent exponentially fast at a network-independent rate that is strictly larger than existing rates. We then develop a computationally-efficient variant of our learning rule that is provably resilient to agents who do not behave as expected (as represented by a Byzantine adversary model) and deliberately try to spread misinformation.

📄 Full Content
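The abstract names a min-rule but does not spell out its form, so the following is only a minimal sketch of what such an update might look like. It assumes each agent keeps a private Bayesian ("local") belief and, at every step, sets its shared ("actual") belief for each hypothesis to the minimum of its own local belief and its neighbors' previous actual beliefs, then normalizes. The three-agent line network, the Bernoulli signal models, and every function name are hypothetical choices made for illustration.

```python
import random

def bayes_update(local, likelihoods):
    """One Bayesian step on an agent's private (local) belief."""
    unnorm = [p * l for p, l in zip(local, likelihoods)]
    total = sum(unnorm)
    return [u / total for u in unnorm]

def min_rule_update(new_local, neighbor_beliefs):
    """Per-hypothesis minimum over the agent's own local belief and its
    neighbors' previous actual beliefs, followed by normalization."""
    m = len(new_local)
    mins = [min([new_local[k]] + [b[k] for b in neighbor_beliefs])
            for k in range(m)]
    total = sum(mins)
    return [x / total for x in mins]

def simulate(steps=200, seed=0):
    rng = random.Random(seed)
    # Hypothetical setup: two hypotheses, hypothesis 0 is true.
    # signal_models[i][theta] = P(signal = 1 | theta) for agent i.
    # Only agent 0 can tell the hypotheses apart; agents 1 and 2 cannot.
    signal_models = [[0.8, 0.3], [0.5, 0.5], [0.5, 0.5]]
    neighbors = {0: [1], 1: [0, 2], 2: [1]}  # line graph 0 - 1 - 2
    true_state = 0
    n = len(signal_models)
    local = [[0.5, 0.5] for _ in range(n)]
    actual = [[0.5, 0.5] for _ in range(n)]
    for _ in range(steps):
        new_actual = []
        for i in range(n):
            # Draw a private binary signal from the true model.
            s = 1 if rng.random() < signal_models[i][true_state] else 0
            lik = [p if s == 1 else 1 - p for p in signal_models[i]]
            local[i] = bayes_update(local[i], lik)
            nbrs = [actual[j] for j in neighbors[i]]
            new_actual.append(min_rule_update(local[i], nbrs))
        actual = new_actual  # all agents update synchronously
    return actual

if __name__ == "__main__":
    for i, belief in enumerate(simulate()):
        print(f"agent {i}: belief in true hypothesis = {belief[0]:.6f}")
```

In this toy run, agents 1 and 2 receive uninformative signals, yet the minimum operation lets agent 0's low belief in the false hypothesis propagate along the line without any averaging. The sketch does not include the Byzantine-resilient variant mentioned in the abstract.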
