MiroEval: Benchmarking Multimodal Deep Research Agents in Process and Outcome

Deep research systems have progressed rapidly, but their evaluation still lags behind real user needs. Existing benchmarks predominantly score final reports against fixed rubrics and do not evaluate the underlying research process. Most also…

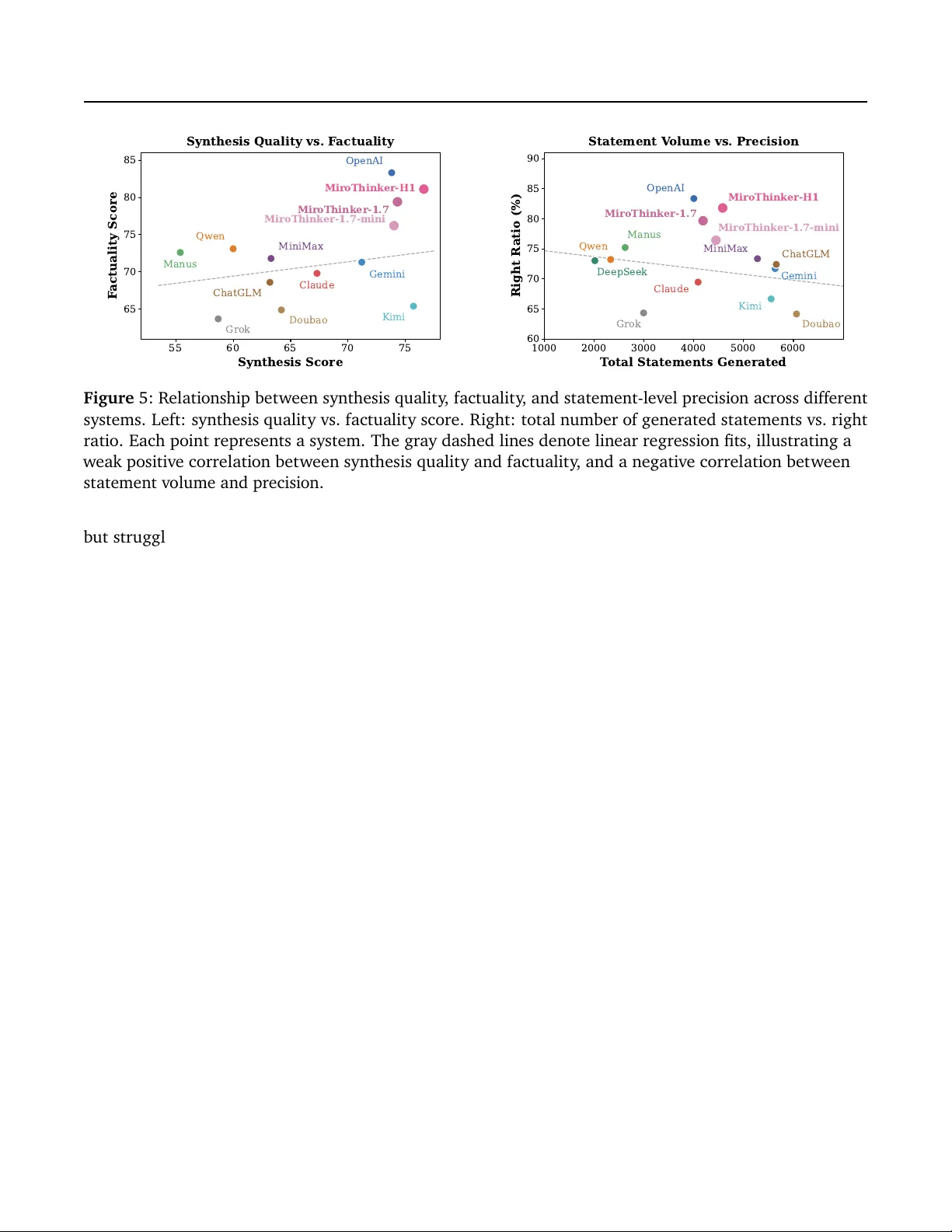

Authors: Fangda Ye, Yuxin Hu, Pengxiang Zhu