Optimal Demixing of Nonparametric Densities

Motivated by applications in statistics and machine learning, we consider a problem of unmixing convex combinations of nonparametric densities. Suppose we observe $n$ groups of samples, where the $i$th group consists of $N_i$ independent samples from…

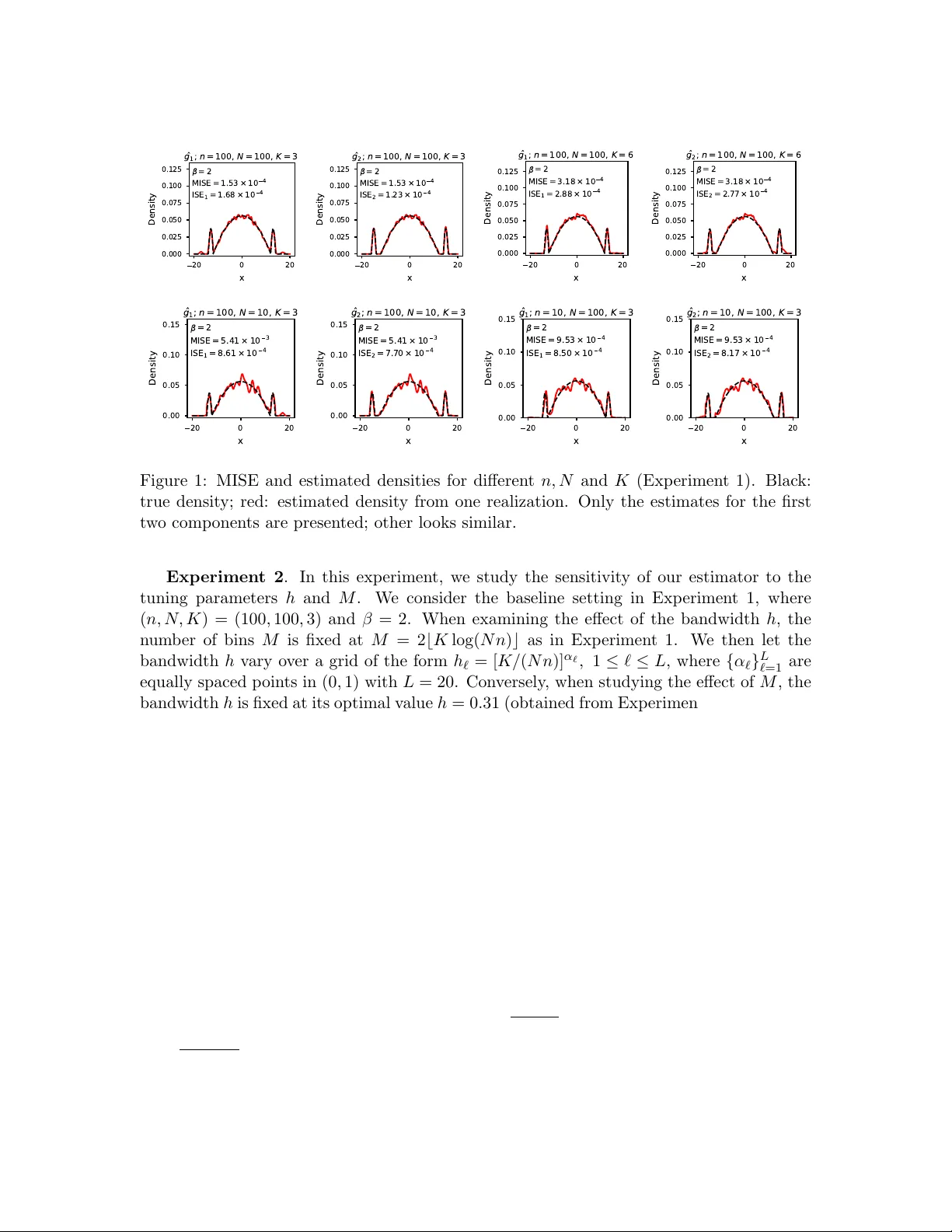

Authors: Jianqing Fan, Zheng Tracy Ke, Zhaoyang Shi