A Comparative Study in Surgical AI: Datasets, Foundation Models, and Barriers to Med-AGI

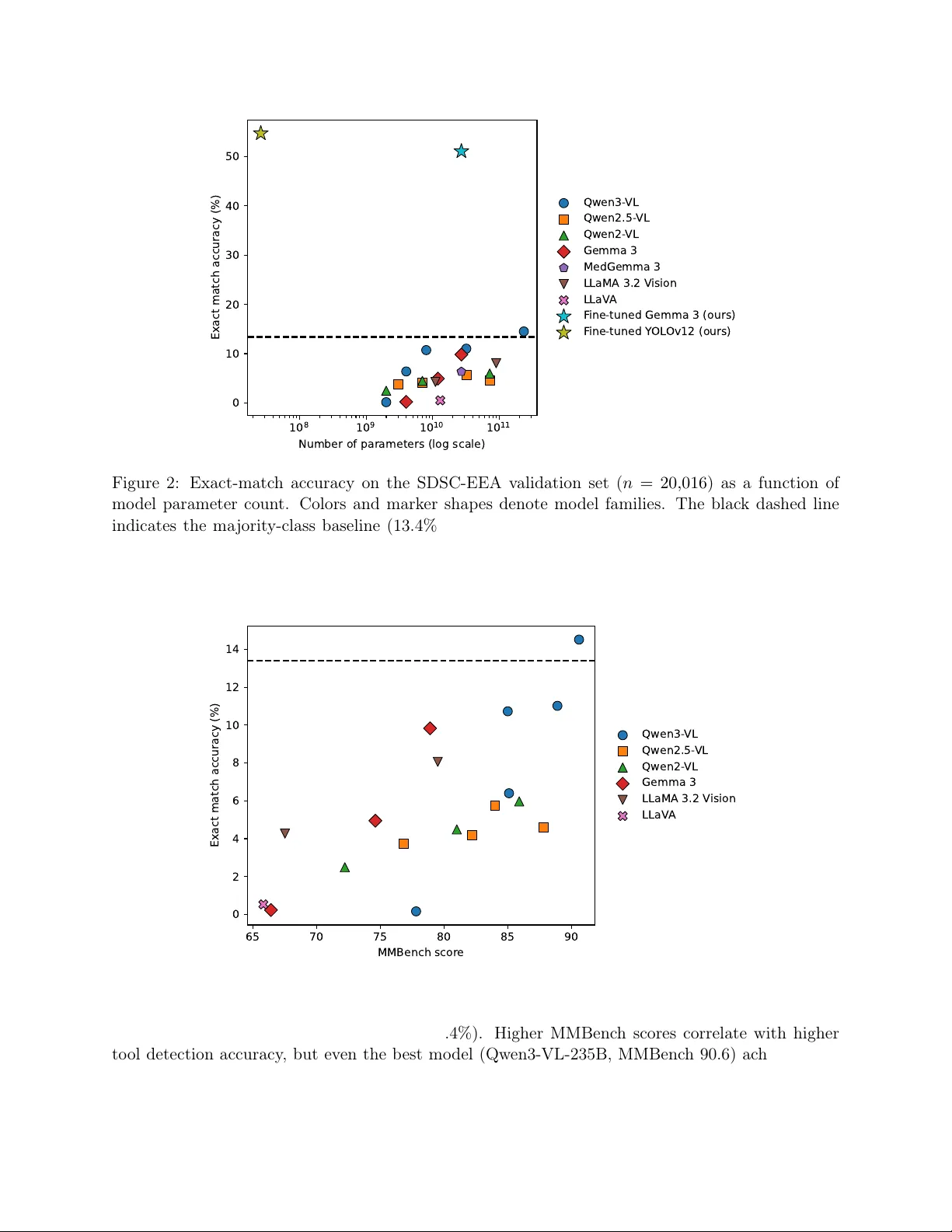

Recent Artificial Intelligence (AI) models have matched or exceeded human experts on several biomedical task benchmarks, but have lagged behind on surgical image-analysis benchmarks. Since surgery requires integrating disparate tasks -…

Authors: Kirill Skobelev, Eric Fithian, Yegor Baranovski