Uncertainty-Guided Label Rebalancing for CPS Safety Monitoring

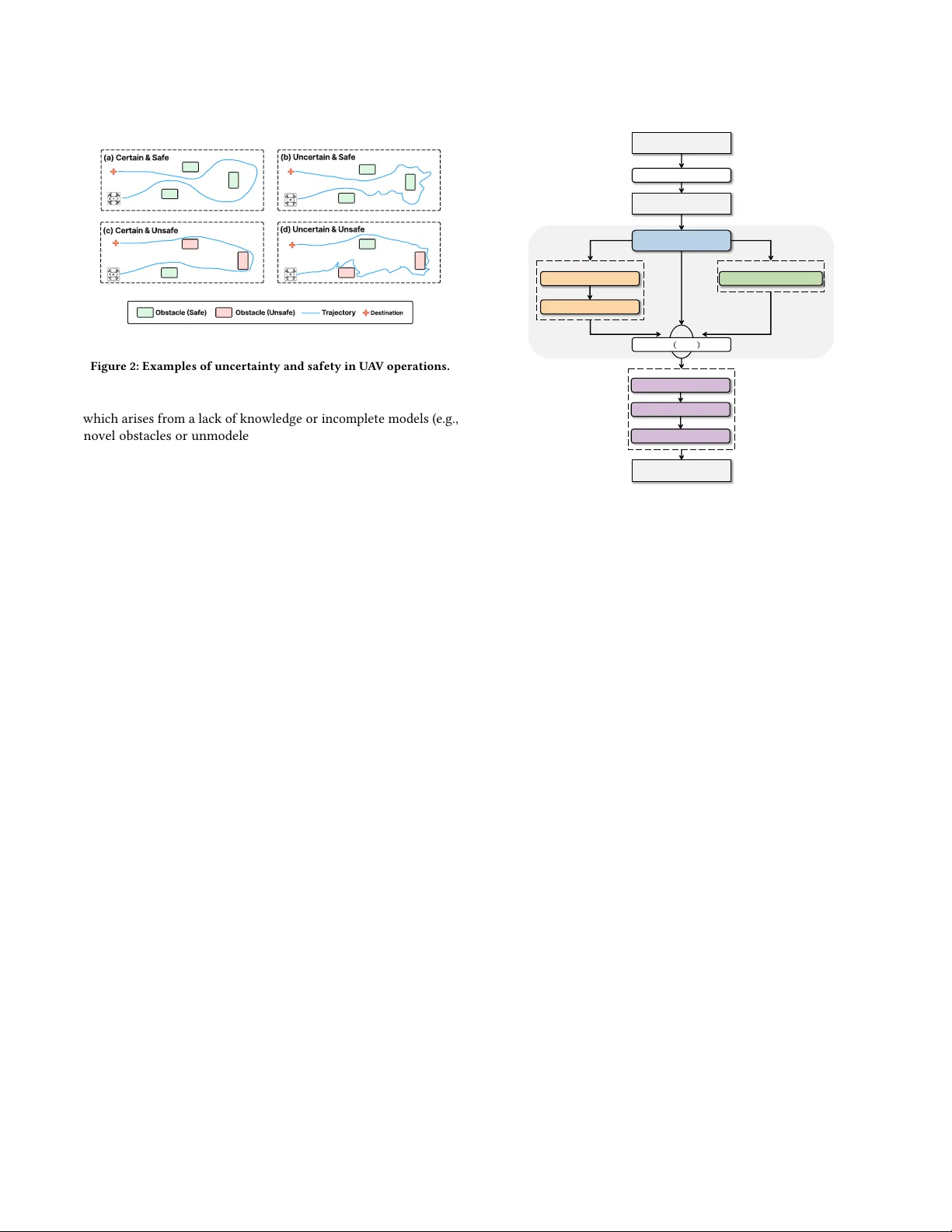

Safety monitoring is essential for Cyber-Physical Systems (CPSs). However, unsafe events are rare in real-world CPS operations, creating an extreme class imbalance that degrades safety predictors. Standard rebalancing techniques perform poorly on tim…

Authors: John Ayotunde, Qinghua Xu, Guancheng Wang