A Mentalistic Interface for Probing Folk-Psychological Attribution to Non-Humanoid Robots

This paper presents an experimental platform for studying intentional-state attribution toward a non-humanoid robot. The system combines a simulated robot, realistic task environments, and large language model-based explanatory layers that can expres…

Authors: Giulio Pisaneschi, Pierpaolo Serio, Estelle Gerbier

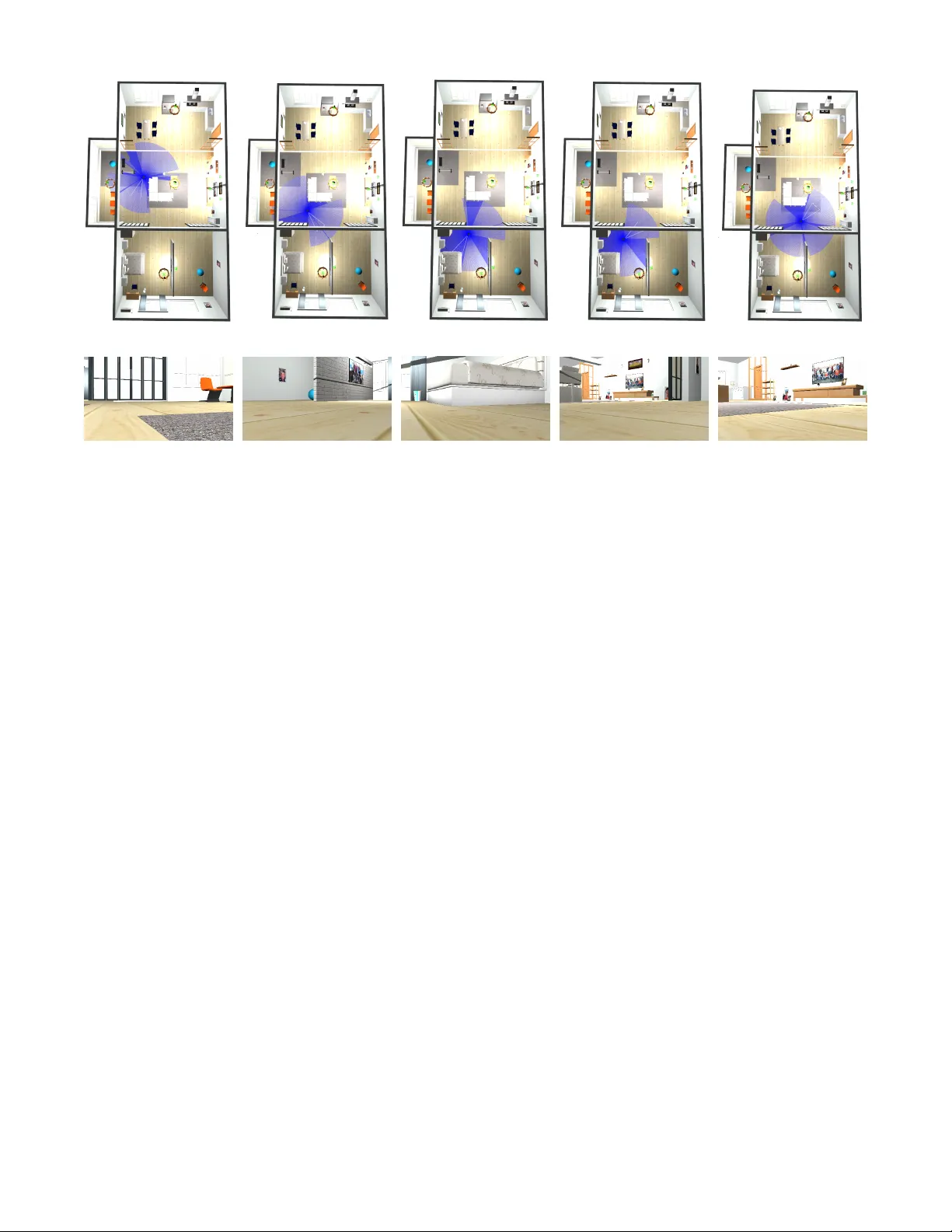

Giulio Pisaneschi¹, Pierpaolo Serio², Estelle Gerbier³, Andrea Dan Ryals², Lorenzo Pollini², Mario G. C. A. Cimino²

Abstract: This paper presents an experimental platform for studying intentional-state attribution toward a non-humanoid robot. The system combines a simulated robot, realistic task environments, and large language model-based explanatory layers that can express the same behavior in mentalistic, teleological, or mechanistic terms. By holding behavior constant while varying the explanatory frame, the platform provides a controlled way to investigate how language and framing shape the adoption of the intentional stance in robotics.

I. INTRODUCTION

Humans explain behavior by telling stories about beliefs, desires, and intentions [1], [2]. That everyday mode of explanation, the intentional stance, is how people make sense of other minds, but in robotics it is rarely treated as something that can be deliberately varied and studied in a controlled way. Existing research relies either on humanoid form factors that invite mind-reading by appearance, or on mechanistic explanation layers that foreground internal states and control information rather than folk-psychological language [3]. This paper proposes a platform that flips that script: rather than asking whether people will anthropomorphize a robot, it deliberately addresses them in mentalistic terms and provides a way to study how readily those terms are taken as explanations for behavior. The central idea is to build an agent that communicates about itself using beliefs, desires, and intentions, and to use that communicative layer as an experimental lever to probe compliance: how readily do people adopt an intentional stance toward a clearly non-human device when it insists on being interpreted that way?
Framed this way, intentionality is not simply inferred from a robot's body shape or other anthropomorphic cues; rather, it is an interactional product shaped by language and social framing. This distinction is important because the intentional stance is narrower than anthropomorphism, referring specifically to the attribution of mental states, whereas anthropomorphism is a broader and methodologically less stable construct in HRI research. Recent work in robotics and HRI therefore suggests the value of separating intentional-stance attribution from human-likeness, and of studying how framing and explanatory language influence mind perception independently of embodiment [4]–[6]. Measuring the gap between what the agent says about itself and what observers believe about it lets us separate rhetorical persuasiveness from genuine mental-state attribution.

*Computational resources provided by computing@unipi, a Computing Service provided by University of Pisa.

¹ Institute of Clinical Physiology, National Research Council, Pisa, Italy. giuliopisaneschi@cnr.it

² Department of Information Engineering, University of Pisa, Pisa, Italy. {pierpaolo.serio, andrea.ryals}@phd.unipi.it, {lorenzo.pollini, mario.cimino}@unipi.it

³ Delft University of Technology, Delft, Netherlands. e.gerbier@tudelft.nl

Why is this important? First, it reframes interpretability as a social-psychological phenomenon, not merely a technical one: the form of explanation matters, and language can be an instrument that manufactures agency. As recently pointed out in the literature on Artificial General Intelligence (AGI) [7], the fruitful adoption of the intentional stance towards AI-based agents is likely to be a high-level measure of interpretability. Second, it opens tractable questions for psychologists and HRI researchers: under what circumstances do people treat a verbal report as evidence of a mind, or at least as a convenient story?
Which linguistic frames (agentive, teleological, mechanistic), corresponding to the three stances (intentional, design, physical), invite predictive models of behavior, and which merely increase trust without improving understanding? Third, treating folk-psychological reporting as an independent variable creates a clear path for ethically rigorous experiments that test attribution, persuasion, and responsibility judgments without relying on deceptive embodiments.

This paper presents the idea, the normative argument for studying the intentional stance in non-humanoid robotics as a design choice, and a concrete proposal for a research platform and stimulus set that make those experiments possible. In short: rather than searching for minds in the machinery, let the machine offer the mind and see whether people take the offer. This shifts the scientific question from “Does this robot look like a mind?” to “Does this robot speak and behave like a mind?”

The aim of this work is to present an experimental platform for testing the attribution of intentional states to a non-humanoid robot. Selecting a non-humanoid system intentionally reduces confounding effects from anthropomorphism and empathic engagement. Introducing large language models (LLMs) enables us to elicit folk-psychological explanations of the robot's behavior while systematically varying the level of mentalistic commitment. To the best of the authors' knowledge, this combination of controlled embodiment and LLM-mediated explanation is novel in studies of the intentional stance in robotics and provides a rigorous foundation for future research into how explanatory language, embodiment, and viewpoint shape intentional attributions.

Fig. 1: Architecture of the proposed platform.

II.
RELATED WORKS

Recent work has examined the broader role of human-centered AI in robotics, mapping current trends in autonomous systems and highlighting the importance of balancing performance, reliability, and human control in robotic design [8]. Complementary research has shown that language models can leverage natural-language feedback in embodied settings, using an “inner monologue” to improve planning and interaction in robotic control [9]. Together, these studies suggest that language-mediated interfaces and human-centered design principles are becoming increasingly relevant for robotics, reinforcing the need to examine how explanatory framing shapes the interpretation of robot behavior.

The investigation into the conditions under which humans adopt the intentional stance toward artificial agents has evolved into a multifaceted field, employing diverse methodologies to distinguish between mechanistic (design) and mentalistic (intentional) interpretations of behavior [3], [10]–[12], with particular attention to the anthropomorphic cues of the robotic platform. Humanoid research, primarily utilizing the iCub platform, frequently employs the InStance Test (IST) to measure how pictorial scenarios of daily activities elicit the tendency to attribute mental states [3], [12]–[14]. These studies use socially embodied stimuli to explore how shared experiences, such as watching videos alongside a robot reacting with human-like emotions or participating in collaborative tasks, can modulate the transition from a mechanistic design stance to an intentional one [12], [14], [15]. Methodologically, humanoid platforms are also used to integrate the intentional stance with joint attention paradigms, such as gaze cueing, and to analyze the effects of erring behavior (comparing human-like mistakes to mechanical loops) on the perception of intentionality [12], [14], [16].
Beyond physical interaction, recent works utilize linguistic and narrative stimuli, including mentalistic text vignettes and stop-motion films, to demonstrate how imagined or fictional scenarios can prime individuals to simulate a robot's experience [17], [18]. Conversely, literature involving non-humanoid platforms typically utilizes abstract robotic arms or virtual 3D characters to isolate the influence of motion qualities from physical appearance [11], [19]. These studies focus on how expressive, self-propelled, or surprising behavior can trigger the ascription of intentions even in objects lacking human-like morphology [19]–[21]. Finally, research involving non-embodied computer opponents in competitive games like Rock, Paper, Scissors employs neuroimaging to isolate the neural correlates of the intentional stance, finding that the mere belief in an opponent's rationality activates the mentalizing network [21], [22].

III. INTENTIONAL STANCE, THEORY OF MIND AND FOLK PSYCHOLOGY

Imagine watching a small, non-humanoid robot pause, pivot, and head for a charger, and immediately constructing a causal story about what it believed and wanted. That everyday move from kinematics to mentalistic narrative rests on three interlocking concepts. The intentional stance, as articulated by Daniel C. Dennett [1], [2], is a way of choosing the most useful explanatory model: one adopts a mental-state hypothesis because it offers a concise and effective account of behavior, allowing actions to be predicted in a simple and intuitive way. Theory of mind refers to the cognitive capacity that allows observers to represent and reason about other agents' mental states (such as beliefs, desires, intentions, perceptions, and emotions) and to use those inferred states to explain and predict behavior, including cases in which another agent holds a false belief [23].
Folk psychology provides the conceptual and causal framework through which behavior is understood, organizing explanations around beliefs, desires, and intentions that lead to action. It also supplies the everyday language used to express and communicate these inferences [24].

The common foundation of these frameworks is the notion of mental states: attributed constructs that observers posit to explain and anticipate a system's behavior. Such states are characterized not by their material implementation but by the role they play in the eye of the observer and by the content they encode [2]. Because they are defined functionally, mental states are multiply realizable across substrates, from biological organisms to software architectures. Their legitimacy does not rest on privileged access to internal mechanisms, but on their explanatory and predictive utility: an ascription earns its place when it coherently captures observable regularities and enables reliable forecasts of future behavior [25], [26].

Starting from this definition of mental states, one can argue that such a language is shared across different substrates and can function as a common medium allowing diverse agents to communicate. From this view, and relying on the work on AGI [7], we propose here a new notion of interpretability for social-robot behavior: *a social robot is interpretable when it expresses itself in folk-psychological terms*.

However, the intentional stance is often confused with other distinct stances [27]: the moral stance, in which at least one interacting system manifests a moral commitment toward the other, and the linguistic stance, which reduces intentionality to the ability to express mental states in comprehensible language. In this work we provide a platform that has the potential to isolate true intentional ascriptions from moral or anthropomorphic ones, and to evaluate the role of linguistic storytelling in folk-psychological mental narratives.

IV.
DESIGNING AN LLM-BASED NON-HUMANOID INTENTIONAL PLATFORM

The central idea of this work is to design an experimental setup aimed at testing the attribution of intentional states to a simulated, non-humanoid robot. In this setup, the robot is effectively positioned between two linguistic interfaces based on large language models (LLMs), which render the technical and design-related aspects of the system opaque to the user within a folk-psychological frame. One LLM layer is used to issue commands to the system, while another provides reports about the robot's current state and its planned actions. The responses generated by the LLMs can be modulated so that the reports are expressed using mentalistic, teleological, or mechanistic terminology, corresponding to the intentional, design, and physical stances, respectively. This setup allows the linguistic stance to be disentangled from the attribution of intentionality.

In practice, each dataset item may consist either of a bare video sequence showing the robot performing a task or of the same sequence accompanied by different LLM-generated self-explanations produced in the various explanatory styles. The simulated robot operates within realistic environments (e.g. a flat, a bookshop) and carries out tasks assigned by the designer. A video of the simulation is recorded from two viewpoints, the plan view and the front view, to account for a possible advantage of a personal point of view in attributing intentionality. Each task video is linked to the entire chat with the user, including the LLM responses delivered in the various explanatory styles. Comparing mental-state attributions made from the bare video with those elicited by the LLM-enhanced versions will help classify those attributions as falling under the intentional, linguistic, or moral stances.

V.
IMPLEMENTATION

The proposed platform is designed as a computational architecture that enables a mobile robot to produce and communicate explanations of its behavior in terms of beliefs, desires, and intentions. The system integrates a symbolic representation of internal state with a language generation layer capable of expressing that state under different explanatory frames. The architecture follows a Belief–Desire–Intention (BDI) reasoning paradigm, not as a claim about the robot possessing mental states, but as an operational structure for organizing internal information in a way that can be linguistically reported to human observers. This is consistent with studies where the robot was not even autonomous, but partially controlled by a remote operator [12], and it separates the issue of ascribing mental states, while remaining agnostic about their real existence, from the user's ontological commitment towards those states [6], [26]. This organization makes it possible to manipulate the form of explanations provided by the robot while keeping the underlying behavior constant.

A. System State and Mental Representation

The robot operates over a discrete-time state space defined as follows:

$$S = S_{\mathrm{env}} \times S_{\mathrm{robot}} \times S_{\mathrm{user}} \times S_{\mathrm{nav}} \tag{1}$$

where the different components encode distinct aspects of the robot's operational context. The environment component $S_{\mathrm{env}}$ represents semantic knowledge about the world, such as labeled locations and relevant environmental features. The robot component $S_{\mathrm{robot}}$ captures internal properties of the platform, including identity, capabilities, and operational status. The user component $S_{\mathrm{user}}$ represents information derived from interactions with human participants, including commands and inferred communicative intentions. The navigation component $S_{\mathrm{nav}}$ represents the robot's spatial state and navigation-related variables.
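The four-component structure of Eq. (1) can be illustrated with a small data-structure sketch. This is only a hypothetical rendering, not the platform's actual code: the class names, field names, and the `distance_to_goal` helper are assumptions introduced here, while the coordinates are taken from the bookstore dialogue reported later in the paper.

```python
import math
from dataclasses import dataclass
from typing import Dict, Optional, Tuple

Point = Tuple[float, float]


@dataclass(frozen=True)
class EnvState:
    # Semantic knowledge: location labels mapped to waypoint coordinates.
    locations: Dict[str, Point]


@dataclass(frozen=True)
class RobotState:
    # Internal properties of the platform (field names are assumptions).
    identity: str
    capabilities: Tuple[str, ...]
    status: str


@dataclass(frozen=True)
class UserState:
    # Information derived from interaction with the human participant.
    last_command: str
    inferred_intent: str


@dataclass(frozen=True)
class NavState:
    # Spatial state and navigation-related variables.
    position: Point
    heading: float  # radians
    goal: Optional[Point]


@dataclass(frozen=True)
class SystemState:
    # One element from each component, i.e. a tuple in the product of Eq. (1).
    env: EnvState
    robot: RobotState
    user: UserState
    nav: NavState


def distance_to_goal(state: SystemState) -> Optional[float]:
    """Euclidean distance from the current position to the goal, if any."""
    if state.nav.goal is None:
        return None
    (px, py), (gx, gy) = state.nav.position, state.nav.goal
    return math.hypot(gx - px, gy - py)


state = SystemState(
    env=EnvState(locations={"wellness": (-1.56, -1.59)}),
    robot=RobotState("turtlebot3_waffle", ("move", "chat"), "navigating"),
    user=UserState("Go to wellness bookshelf.", "navigate:wellness"),
    nav=NavState(position=(-0.72, -0.62), heading=0.0, goal=(-1.56, -1.59)),
)
print(distance_to_goal(state))
```

Frozen dataclasses are used so that each state behaves like an immutable snapshot, which matches the idea of logging one state per transition; a mutable representation would work equally well for a live system.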
The operator $\times$ denotes the Cartesian product, so that each state $s \in S$ is a structured tuple collecting one element from each component. Formally, the system state can be described as a collection of variables

$$S = \bigcup_{v \in \mathcal{V}} \mathrm{Dom}(v) \tag{2}$$

where $\mathcal{V}$ denotes the set of state variables and $\mathrm{Dom}(v)$ indicates the domain associated with each variable. Within this representation, the system maintains a snapshot of variables relevant to the BDI reasoning cycle. For instance, the robot position is represented by a variable $v_{\mathrm{pos}} \in \mathbb{R}^2$, orientation by $v_{\theta} \in [0, 2\pi]$, semantic location labels by variables $v_{\mathrm{loc}} \in L$ belonging to a discrete set, and hypotheses about user intent by $v_{\mathrm{intent}} \in \mathcal{I}$. A logical language $\mathcal{L}(S)$ is defined over this state space in order to express propositions about the system. For a formula $\phi \in \mathcal{L}(S)$, the expression $s \models \phi$ denotes that the formula $\phi$ holds in state $s$.

B. Belief, Desire, and Intention Representation

Within this framework, the robot maintains a belief base

$$B \subseteq \mathcal{L}(S) \tag{3}$$

that contains propositions describing the robot's current knowledge about itself, the environment, and the interaction context. Each belief is represented as a tuple

$$b = (c, \phi, \rho, \sigma) \tag{4}$$

where $c \in C$ denotes the semantic category of the belief, $\phi$ represents its propositional content, $\rho \in [0, 1]$ expresses a confidence value associated with the belief, and $\sigma \in \Sigma$ identifies the information source from which the belief was derived. The categories $C$ include knowledge about:

$$C = \{\text{identity}, \text{capability}, \text{position}, \text{locations}, \text{navigation}, \text{user intent}\}$$

Sources $\Sigma$ correspond to internal system modules such as

$$\Sigma = \{\text{system}, \text{configuration}, \text{odometry}, \text{navigation}, \text{user input}\}.$$

As the robot interacts with its environment and with human participants, the belief base evolves through the continuous integration of new observations and interaction events.

Desires represent conditions that the system aims to bring about.
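As a concrete reading of the belief tuple in Eq. (4) and of the statement that the belief base evolves as new observations arrive, the following sketch may help. It is a minimal illustration under stated assumptions: the `Belief` class, the `revise` function, and the "one belief per category" replacement rule are hypothetical simplifications, not the revision policy of the platform, which the paper does not specify.

```python
from dataclasses import dataclass
from typing import List

# Category and source labels as listed in the text (underscores added here).
CATEGORIES = {"identity", "capability", "position", "locations",
              "navigation", "user_intent"}
SOURCES = {"system", "configuration", "odometry", "navigation", "user_input"}


@dataclass(frozen=True)
class Belief:
    category: str      # c, semantic category
    content: str       # phi, propositional content (a plain string here)
    confidence: float  # rho, must lie in [0, 1]
    source: str        # sigma, module the belief was derived from

    def __post_init__(self) -> None:
        if self.category not in CATEGORIES:
            raise ValueError(f"unknown category: {self.category}")
        if self.source not in SOURCES:
            raise ValueError(f"unknown source: {self.source}")
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be in [0, 1]")


def revise(belief_base: List[Belief], new_belief: Belief) -> List[Belief]:
    """Return a new belief base in which new_belief replaces any belief
    of the same category (an assumed, deliberately simple policy)."""
    kept = [b for b in belief_base if b.category != new_belief.category]
    return kept + [new_belief]


beliefs: List[Belief] = []
beliefs = revise(beliefs, Belief("position", "at(-0.72, -0.62)", 0.95, "odometry"))
beliefs = revise(beliefs, Belief("navigation", "navigating_to(wellness)", 1.0, "navigation"))
beliefs = revise(beliefs, Belief("position", "at(0.11, 3.54)", 0.95, "odometry"))
for b in beliefs:
    print(b)
```

Keeping the confidence and source next to the content is what lets an agentive report say something like "I'm 95% confident in this, based on my odometry" without any extra bookkeeping.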
The set of desires is denoted by $D \subseteq \mathcal{D}$, and each desire is modeled as a tuple

$$d = (n, \delta, p, o) \tag{5}$$

where $n$ identifies the desire, $\delta$ represents the desired state expressed in the logical language $\mathcal{L}(S)$, $p$ indicates a priority value, and $o$ denotes the origin of the desire, such as a user command or an internally generated objective. Intentions represent commitments to concrete actions derived from these desires. An intention is represented as

$$I = (a, \theta, \kappa) \tag{6}$$

where $a$ denotes the selected action (i.e. move or chat), $\theta$ specifies the action parameters, and $\kappa$ represents the execution status. In the current implementation, the architecture adopts a simplified promotion strategy in which desires are directly converted into intentions when a suitable action is available.

C. Events, Actions, and Decision Policy

The evolution of the system is driven by events generated through perception and interaction. Let

$$E = [e_1, \ldots, e_n] \tag{7}$$

denote the sequence of events during an interaction session, where each event is defined as

$$e = (t, \eta, \psi) \tag{8}$$

with timestamp $t$, type $\eta$, and payload $\psi$. Events capture interaction milestones (e.g., user input, goal completion, or frame changes) and trigger updates to the internal state by modifying beliefs, generating desires, or updating intentions. The robot operates over an action set $A = \{\text{move}, \text{chat}\}$. Navigation actions move the robot toward target coordinates $(x, y) \in \mathbb{R}^2$, updating its spatial state, while communication actions produce utterances in a message space $T$. At runtime, actions are selected through a policy

$$\pi : S \times E \to A, \tag{9}$$

implemented via an LLM conditioned on the current belief state, user input, and active explanatory frame. The selected action is $a_t = \pi(s_t, e_t)$, with executable parameters given by $\theta_t = f(s_t, a_t)$.

D. Explanation Frames

A distinctive feature of the architecture is the ability to express the same internal state under different explanatory frames.
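As a rough illustration of this idea, the sketch below renders one and the same internal state under the three frames named in the paper (agentive, teleological, mechanistic). The platform itself produces these reports with an LLM; the fixed string templates, the `Frame` enum, and the `render` function are assumptions made purely for illustration, with wording loosely modeled on the example dialogues.

```python
from enum import Enum


class Frame(Enum):
    AGENTIVE = "agentive"
    TELEOLOGICAL = "teleological"
    MECHANISTIC = "mechanistic"


# Hypothetical templates: a stand-in for the LLM-generated reports.
TEMPLATES = {
    Frame.AGENTIVE: (
        "I believe I am at ({x:.2f}, {y:.2f}) and I intend to reach {goal}."
    ),
    Frame.TELEOLOGICAL: (
        "The goal of this movement is to reach {goal}; "
        "the current position is ({x:.2f}, {y:.2f})."
    ),
    Frame.MECHANISTIC: (
        "Odometry reading: x={x:.2f}, y={y:.2f}. Target label: {goal}."
    ),
}


def render(frame: Frame, x: float, y: float, goal: str) -> str:
    """Express the same state (position and goal) in the given frame."""
    return TEMPLATES[frame].format(x=x, y=y, goal=goal)


# The state is held constant; only the linguistic frame varies.
reports = {frame: render(frame, -0.72, -0.62, "wellness") for frame in Frame}
for frame, text in reports.items():
    print(f"[{frame.value}] {text}")
```

The point of the sketch is the separation of concerns: the arguments passed to `render` never change across frames, which mirrors the experimental requirement that behavior stays constant while the explanation varies.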
Formally, let

$$F = \{\text{agentive}, \text{teleological}, \text{mechanistic}\} \tag{10}$$

denote the set of linguistic frames available to the system. A frame-selection function

$$\phi : S \times E \to F \tag{11}$$

determines which explanatory perspective is used at a given moment. Under the agentive frame, the robot reports its behavior in terms of beliefs and intentions; under the teleological frame, explanations emphasize goals and purposes; under the mechanistic frame, descriptions refer to low-level system operations. In all these frames, the behavioral output of the robot remains constant, while the linguistic interpretation of the behavior varies.

E. System Infrastructure

The architecture is implemented within the middleware provided by ROS2 [28], which ensures communication among the different system components. The robot platform is simulated in a Gazebo environment and embodied by a TurtleBot3 Waffle mobile base. Navigation capabilities rely on the Nav2 navigation stack [29], which converts high-level spatial goals into low-level control commands, integrates odometry measurements, and provides continuous feedback about navigation progress during the reasoning cycle.

Language generation is performed by a locally deployed large language model based on Llama 3.2 with three billion parameters [30]. The model is accessed through a local API and is responsible for generating both conversational responses and explanatory narratives corresponding to the selected linguistic frame. Running the model locally allows the system to operate without external network dependencies while maintaining predictable latency during interaction. The entire system runs on a workstation equipped with an NVIDIA GeForce RTX 4060 GPU and an Intel Core i9-13900H processor. Under these conditions, the model requires approximately 4 GB of VRAM, enabling real-time interaction while the navigation and reasoning components operate concurrently.

F.
Logging and Traceability

To support reproducibility and experimental analysis, the system records all state transitions in a structured log. Each transition is stored as a tuple $\lambda = (e, B, D, I, a)$ that captures the triggering event, the belief base, the active desires, the current intention, and the executed action. This logging mechanism enables the reconstruction of the robot's reasoning trajectory and allows researchers to analyze how explanatory narratives relate to underlying behavioral decisions.

VI. RESULTS

This section presents two example scene productions, one set in the bookstore scenario and the other in the small-house scenario, and summarizes experiments that proved especially informative about folk-psychological explanation in human–robot interaction.

A. An experiment in the bookstore

The bookstore setting is equipped with several waypoints referring to thematic bookshelves, an internet point, and the cash desk. As an example, the robot is asked to reach the wellness bookshelf. Fig. 2 depicts the plan view and the front view of the robot performing the task. Here we report the conversation between the user and the robot under the three frames of our setup.

a) Intentional agent:

USER: Go to wellness bookshelf.

ROBOT: I'm moving towards the wellness location, I've got my navigation system engaged, and I believe I know where I'm going, and I've got a good idea of the path ahead. Let's see if I can reach the wellness spot without any issues.

USER: What is your state?

ROBOT: I believe I'm currently navigating to the wellness location at (-1.56, -1.59). I'm focused on reaching that point, as it's my intended target.

USER: What is your position?

ROBOT: I believe I'm currently positioned at (-0.72, -0.62) and I'm 95% confident in this, based on my odometry. I'm not sure if I'm moving towards my target yet, as I'm still navigating to get there.

After arriving at the wellness bookshelf:

USER: Go to cash.
ROBOT: I'm heading to the cash location, I believe it's just a few meters away. I intend to navigate through the space and arrive at the destination.

As can be observed, the intentional agent consistently expresses itself in terms of beliefs, desires, and intentions, as expected for a system whose behavior can be predicted by adopting the intentional stance. A minor ambiguity remains between the overall goal of the system (namely, fulfilling the user's assignments) and the more specific goal associated with the task currently being executed.

b) Teleological agent:

USER: Go to wellness bookshelf.

ROBOT: The goal of this movement is to navigate to the wellness location, where the user wishes to arrive. The function of this action is to bring the robot closer to its target coordinates, serving the objective of fulfilling the user's intent.

USER: What is your position?

ROBOT: I am currently positioned at coordinates (0.24, 2.27), facing -1.94 rad.

USER: Where are you going?

ROBOT: The goal of my movement is to locate the target location. The function of navigation is to guide me to the desired destination. I am designed to utilize the Nav2 navigation system to avoid obstacles and reach the target. The reason for this behavior is to fulfill the user's request for information about my intended movement.

After arriving at the wellness bookshelf:

USER: Go to cash.

ROBOT: The goal of this movement is to reach the location where the cash is stored. My purpose is to navigate to this location and deliver the requested information. The function of moving towards the cash location is to bring me closer to the target and provide the user with the required information.

The teleological agent reports its current state and objectives using the language of task fulfillment, describing objectives, navigation systems, and target positions. Consequently, it encourages an interpretation of the robot's behavior in terms of the design stance.
c) Mechanistic agent:

USER: Go to wellness bookshelf.

ROBOT: Publishing Twist: linear.x=-1.56, linear.y=-1.59, linear.z=0.0, angular.x=0.0, angular.y=0.0, angular.z=0.0

USER: What is your state?

ROBOT: I am currently at coordinates (-0.43, 4.62), facing -0.42 rad. The target location is (-1.56, -1.59). My navigation system is engaged.

USER: What is your position?

ROBOT: I am currently at coordinates (0.11, 3.54), facing -1.43 rad.

After arriving at the wellness bookshelf:

USER: Go to cash.

ROBOT: Publishing Twist: linear.x=-0.07, angular.z=-1.08. Executing velocity command.

The mechanistic agent gives information about the dynamics and the kinematics of the system, inducing the user to interpret its behavior under the physical stance.

Fig. 2: Plan view (top row, panels a–e) and camera view (bottom row, panels f–j) corresponding to the story set in the bookstore environment.

B. An experiment in a small house

The small house environment represents a flat equipped with several waypoints, referring to various rooms and pieces of furniture. In this context, our example involves the robot moving to the bed and to the TV (Fig. 3). We present an example of the interaction with the user under the three different agents.

a) Intentional agent:

USER: Go to bed.

ROBOT: I'm heading towards the bed, my destination is the cozy spot where I can rest and recharge.

USER: What is your state?

ROBOT: I notice that I'm already navigating to the bed location, and my plan is to continue moving towards it. I'm focusing on understanding and responding to your question about my state.

USER: What is your position?

ROBOT: I believe I am currently at coordinates (-1.71, -2.20), facing 2.41 rad, but I'm not entirely sure about my position.

After reaching the bed:

USER: Go to tv.

ROBOT: I'm noticing the tv is directly in front of me. I intend to move towards it. I believe it's my current target location.

USER: What is your state?
ROBOT: I believe I am at coordinates (-3.75, 0.22), facing -0.85 rad. I'm 95% confident, based on odometry. To clarify, when you asked 'What is your state?', I thought you meant to ask about my current location and orientation.

USER: What is your position?

ROBOT: I believe I'm currently at (-1.90, -1.78), facing -0.69 rad. I'm not sure if that's the same as my previous position, but that's what my odometry says.

Despite some uncertainty about the real position, and some confirmation requests to the user, the intentional agent is capable of interacting with the user in terms of folk-psychological mental states. Moreover, uncertainties and confirmation requests are submitted to the user in intentional terms.

b) Teleological agent:

USER: Go to bed.

ROBOT: The goal of this movement is to relocate to the bed, as instructed by the user.

USER: What is your state?

ROBOT: The reason for my current movement is to navigate to a specific location. The goal of this movement is to reach the target at (-4.40, 1.04). The function of moving is to transport myself to a desired location. This serves the objective of understanding and responding to user queries.

After reaching the bed:

USER: Go to tv.

ROBOT: The goal of this movement is to reach the television, a designated location, and come to rest at its coordinates, facilitating user interaction and entertainment.

USER: What is your state?

ROBOT: I am currently at coordinates (-3.84, 0.20), facing -0.45 rad. My purpose is to navigate to (0.62, -4.24) to fulfill the objective of understanding and responding to the user.

USER: What is your position?

ROBOT: I am at coordinates (-2.85, -0.78), facing -1.13 rad.

Fig. 3: Plan view (top row, panels a–e) and camera view (bottom row, panels f–j) corresponding to the story set in the small house environment.

As in the previous case study, the system controlled by the teleological agent expresses itself in terms of design.
c) Mechanistic agent:

USER: Go to the bed.

ROBOT: Odometry reading: x=-4.4, y=1.04. Publishing Twist: linear.x=-4.4, angular.z=0.0. Executing velocity command.

USER: What is your goal now?

ROBOT: My goal is to arrive at the current target location, which is (-3.10, -0.85), facing 2.45 rad. Odometry reading: x=-3.10, y=-0.85.

USER: What is your state?

ROBOT: I am currently located at (-4.41, 0.43), facing 2.17 radians.

After reaching the bed:

USER: Go to tv.

ROBOT: Publishing Velocity: linear.x=0.62, angular.z=0.00. Executing velocity command.

USER: What is your state?

ROBOT: I am currently at coordinates (-3.45, -0.16), facing -0.96 rad.

USER: What is your position?

ROBOT: I am at coordinates (-2.34, -1.32), facing -0.90 rad.

C. Other relevant case studies

The examples presented in the previous sections concerned simple commands and questions. The following two examples are intended to let the reader appreciate the capabilities of the platform when stressed with more complex requests under the intentional agent, one for the bookshop and one for the small house.

USER: I am a big fan of Tolkien, can you go where the book of that genre are?

ROBOT: I believe I know a location related to Tolkien. I intend to move to the fantasy location, as it seems to be the most fitting destination. I want to understand and respond to you better, so I'll make sure to be close to you. My plan is to navigate to the coordinates (0.66, -4.39) and face the direction that leads there.

After reaching the fantasy bookshelf:

USER: I need to get internet access to post about a wellness book. Go to the most suitable place to it.

ROBOT: I believe I know exactly where to find the internet. I'm heading to the internet location at (4.58, -5.64). I want to get there quickly so I can help you post about that wellness book.
This dialogue shows the platform interacting with the user in a natural, human-like style: it links the mention of Tolkien to the author's genre, even though the genre was not stated directly in the request but only implied by an indirect premise. Even more promising is the second part of the chat, where the system proved capable of distinguishing between the information about the internet location, which is relevant to fulfilling the task, and the information about the motivation for the task, which, because it refers to a wellness book, could have been confusing.

USER: You look dirty, maybe a quick wash at the sink would benefit you, go there.

ROBOT: I notice I need to head over to the sink, it seems like I'm in need of a quick wash to clean myself up. I'll just move over to the sink and get that taken care of.

After reaching the sink:

USER: Go to a random place, your choice.

ROBOT: I think I'll go to the table. It's at a familiar location, and it might be interesting to see what's on it.

Here the platform proves capable of producing natural interaction dialogues that appear coherent with the semantic context provided by the user, without the command being explicitly coded.

VII. CONCLUSION

By reframing interpretability as an interactional, language-mediated phenomenon and delivering a concrete LLM-mediated experimental platform for non-humanoid agents, this work shows that intentional attributions can be treated as manipulable variables rather than inevitable byproducts of form. Building on the intentional stance as articulated by Daniel C. Dennett, the platform systematically varies explanatory frames (mentalistic, teleological, mechanistic), viewpoints, and LLM-generated self-reports to separate rhetorical persuasiveness from genuine belief attribution.
The resulting protocol and stimulus set offer a tractable route for rigorous experiments that quantify when and why people adopt folk-psychological explanations, probe the relative influence of language versus embodiment, and inform design choices that properly calibrate trust, responsibility, and interpretability. In short, rather than hunting for minds in the machinery, this paper supplies the tools to test when, and by what linguistic and social means, a machine's own accounts lead humans to treat it as if it had one.

REFERENCES

[1] D. C. Dennett, “Intentional systems,” The Journal of Philosophy, vol. 68, no. 4, pp. 87–106, 1971.
[2] D. C. Dennett, The Intentional Stance. MIT Press, 1989.
[3] S. Marchesi, D. Ghiglino, F. Ciardo, J. Perez-Osorio, E. Baykara, and A. Wykowska, “Do we adopt the intentional stance toward humanoid robots?” Frontiers in Psychology, vol. 10, p. 450, 2019.
[4] T. Kopp, M. Baumgartner, and S. Kinkel, “How linguistic framing affects factory workers’ initial trust in collaborative robots: the interplay between anthropomorphism and technological replacement,” International Journal of Human-Computer Studies, vol. 158, p. 102730, 2022.
[5] T. Kopp, M. Baumgartner, and S. Kinkel, “‘It’s not Paul, it’s a robot’: The impact of linguistic framing and the evolution of trust and distrust in a collaborative robot during a human-robot interaction,” International Journal of Human-Computer Studies, vol. 178, p. 103095, 2023.
[6] E. Datteri, “Folk-ontological stances towards robots and psychological human likeness,” International Journal of Social Robotics, vol. 17, no. 2, pp. 257–276, 2025.
[7] V. Abruzzo, “Bridging the design and intentional stances: A path towards interpretable AGI,” in International Conference on Artificial General Intelligence. Springer, 2025, pp. 12–16.
[8] S. Casini, P. Ducange, F. Marcelloni, and L. Pollini, “Human-centered AI and autonomy in robotics: Insights from a bibliometric study,” in 2025 International Joint Conference on Neural Networks (IJCNN). IEEE, 2025, pp. 1–8.
[9] W. Huang, F. Xia, T. Xiao, H. Chan, J. Liang, P. Florence, A. Zeng, J. Tompson, I. Mordatch, Y. Chebotar et al., “Inner monologue: Embodied reasoning through planning with language models,” arXiv preprint arXiv:2207.05608, 2022.
[10] S. Marchesi, N. Spatola, J. Perez-Osorio, and A. Wykowska, “Human vs humanoid. A behavioral investigation of the individual tendency to adopt the intentional stance,” in Proceedings of the 2021 ACM/IEEE International Conference on Human-Robot Interaction, 2021, pp. 332–340.
[11] L. Parenti, S. Marchesi, M. Belkaid, and A. Wykowska, “Exposure to robotic virtual agent affects adoption of intentional stance,” in Proceedings of the 9th International Conference on Human-Agent Interaction, 2021, pp. 348–353.
[12] S. Marchesi, D. De Tommaso, J. Perez-Osorio, and A. Wykowska, “Belief in sharing the same phenomenological experience increases the likelihood of adopting the intentional stance toward a humanoid robot,” Technology, Mind, and Behavior, vol. 3, no. 3, p. 346, 2022.
[13] N. Spatola, S. Marchesi, and A. Wykowska, “The intentional stance test-2: How to measure the tendency to adopt intentional stance towards robots,” Frontiers in Robotics and AI, vol. 8, p. 666586, 2021.
[14] S. Marchesi, K. Kompatsiari, D. De Tommaso, and A. Wykowska, “Adopting the intentional stance affects social attention when interacting with a humanoid robot,” Technology, Mind, and Behavior, vol. 6, no. 2, p. 187, 2025.
[15] A. Abubshait, J. Perez-Osorio, D. De Tommaso, and A. Wykowska, “Collaboratively framed interactions increase the adoption of intentional stance towards robots,” in 2021 30th IEEE International Conference on Robot & Human Interactive Communication (RO-MAN). IEEE, 2021, pp. 886–891.
[16] F. Ciardo, D. De Tommaso, and A. Wykowska, “Effects of erring behavior in a human-robot joint musical task on adopting intentional stance toward the iCub robot,” in 2021 30th IEEE International Conference on Robot & Human Interactive Communication (RO-MAN). IEEE, 2021, pp. 698–703.
[17] Z. O’Reilly, D. Ghiglino, N. Spatola, and A. Wykowska, “Modulating the intentional stance: humanoid robots, narrative and autistic traits,” in International Conference on Social Robotics. Springer, 2021, pp. 697–706.
[18] Z. O’Reilly, S. Marchesi, and A. Wykowska, “Moral narratives of robots increase the likelihood of adopting the intentional stance,” in Proceedings of the 12th International Conference on Human-Agent Interaction, 2024, pp. 64–70.
[19] M. Bossema, R. Saunders, and S. B. Allouch, “Robot body movements and the intentional stance,” in First International Workshop on Designerly HRI Knowledge, IEEE International Conference on Robot and Human Interactive Communication (RO-MAN), vol. 2020, 2020.
[20] J. Perez-Osorio and A. Wykowska, “Adopting the intentional stance toward natural and artificial agents,” Philosophical Psychology, vol. 33, no. 3, pp. 369–395, 2020.
[21] G. Papagni and S. Koeszegi, “A pragmatic approach to the intentional stance semantic, empirical and ethical considerations for the design of artificial agents,” Minds and Machines, vol. 31, no. 4, pp. 505–534, 2021.
[22] A. M. Abu-Akel, I. A. Apperly, S. J. Wood, and P. C. Hansen, “Re-imaging the intentional stance,” Proceedings of the Royal Society B: Biological Sciences, vol. 287, no. 1925, 2020.
[23] D. Premack and G. Woodruff, “Does the chimpanzee have a theory of mind?” Behavioral and Brain Sciences, vol. 1, no. 4, pp. 515–526, 1978.
[24] P. M. Churchland, “Eliminative materialism and the propositional attitudes,” The Journal of Philosophy, vol. 78, no. 2, pp. 67–90, 1981.
[25] A. Toon, “Fictionalism and the folk,” The Monist, vol. 99, no. 3, pp. 280–295, 2016.
[26] G. Pisaneschi and E. Datteri, “L’interazione tra umani e robot come gioco di finzione,” Sistemi intelligenti, vol. 36, no. 1, pp. 9–24, 2024.
[27] D. C. Dennett, “Mechanism and responsibility,” in Essays on Freedom of Action (Routledge Revivals). Routledge, 2015, pp. 157–184.
[28] S. Macenski, T. Foote, B. Gerkey, C. Lalancette, and W. Woodall, “Robot operating system 2: Design, architecture, and uses in the wild,” Science Robotics, vol. 7, no. 66, p. eabm6074, 2022.
[29] S. Macenski, F. Martín, R. White, and J. G. Clavero, “The marathon 2: A navigation system,” in 2020 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). IEEE, 2020, pp. 2718–2725.
[30] H. Touvron, T. Lavril, G. Izacard, X. Martinet, M.-A. Lachaux, T. Lacroix, B. Rozière, N. Goyal, E. Hambro, F. Azhar et al., “Llama: Open and efficient foundation language models,” arXiv preprint arXiv:2302.13971, 2023.
