LARD 2.0: Enhanced Datasets and Benchmarking for Autonomous Landing Systems

This paper addresses key challenges in the development of autonomous landing systems, focusing on dataset limitations for supervised training of Machine Learning (ML) models for object detection. Our main contributions include: (1) Enhancing dataset …

Authors: Yassine Bougacha, Geoffrey Delhomme, Mélanie Ducoffe

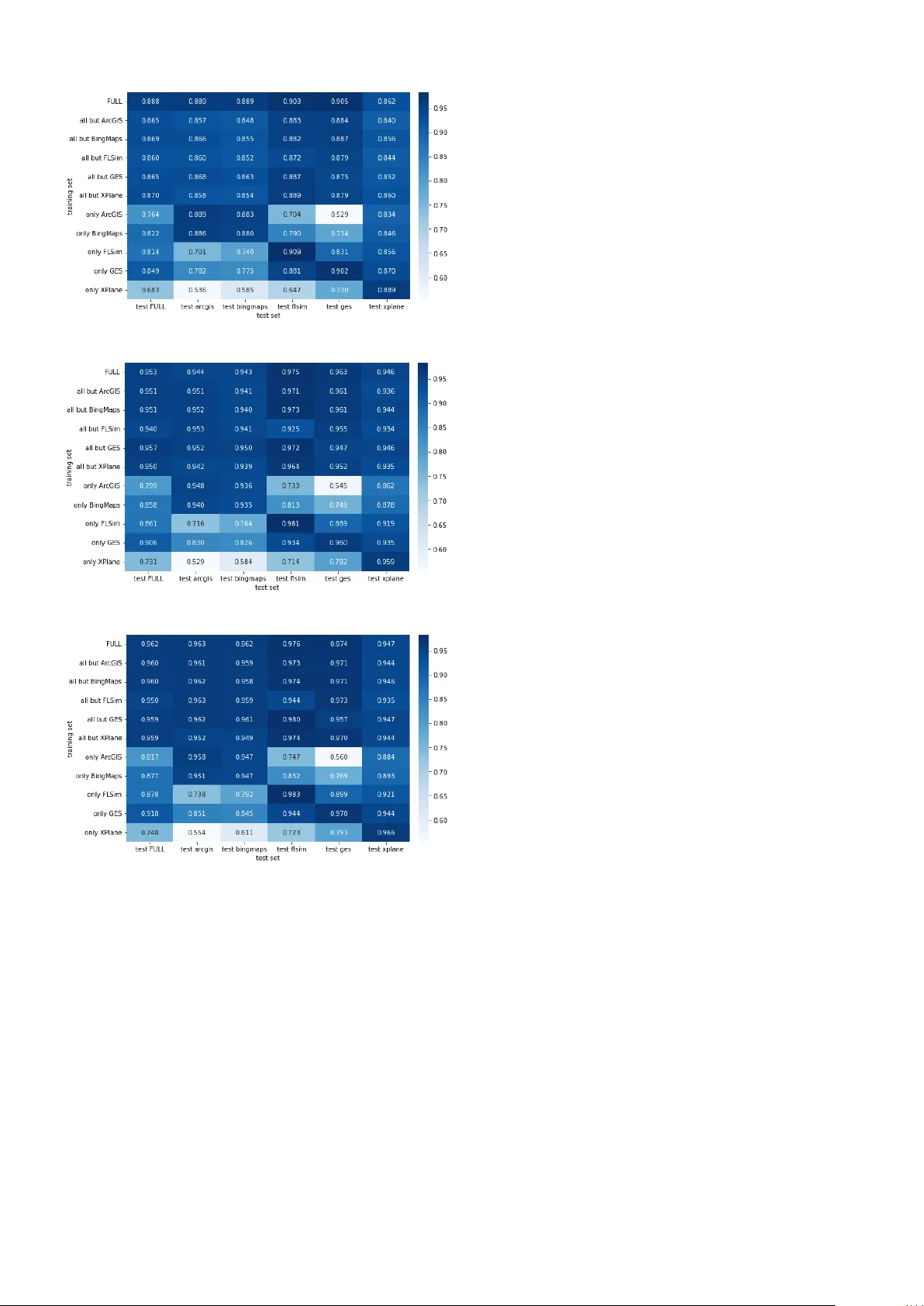

Yassine Bougacha¹, Geoffrey Delhomme⁵, Mélanie Ducoffe¹,², Augustin Fuchs³, Jean-Brice Ginestet⁴, Jacques Girard¹,⁵, Sofiane Kraïem³, Franck Mamalet¹, Vincent Mussot¹, Claire Pagetti³, and Thierry Sammour²

¹ IRT Saint Exupéry, Toulouse, France – firstname.lastname@irt-saintexupery.com
² Airbus, Toulouse, France – firstname.lastname@airbus.com
³ ONERA, Toulouse, France – firstname.lastname@onera.fr
⁴ DGA, Toulouse, France – firstname.lastname@intradef.gouv.fr
⁵ Thales, Toulouse, France – firstname.lastname@thalesgroup.com

ABSTRACT

This paper addresses key challenges in the development of autonomous landing systems, focusing on dataset limitations for supervised training of Machine Learning (ML) models for object detection. Our main contributions include: (1) Enhancing dataset diversity, by advocating for the inclusion of new sources such as Bing Maps aerial images and Flight Simulator, to widen the generation scope of an existing dataset generator used to produce the LARD dataset; (2) Refining the Operational Design Domain (ODD), addressing issues like unrealistic landing scenarios and expanding coverage to multi-runway airports; (3) Benchmarking ML models for autonomous landing systems, introducing a framework for evaluating the object detection subtask in a complex multi-instance setting, and providing associated open-source models as a baseline for AI models' performance.

1. INTRODUCTION

As interest in autonomous systems grows, machine learning (ML) vision-based algorithms are increasingly used. One of the major challenges is the collection of sufficient and representative real-world data. In the field of autonomous landing systems in aerospace, despite significant practical and commercial interest, there remains a notable lack of open-source datasets containing real-world aerial images.
This shortage has led previous efforts to rely heavily on synthetic datasets, such as BARS, generated in X-Plane, and LARD, generated in Google Earth [3, 9]. Notably, the LARD dataset, which provides high-quality aerial images collected from Google Earth for runway detection during the approach and landing phases, also offers manually labelled images from real landing footage. Although such a dataset has contributed significantly to autonomous aerial tasks, several limitations persist.

One specificity of LARD compared to other datasets is the definition of a proper Operational Design Domain (ODD). LARD introduced a useful framework for defining the operational domain coverage for autonomous systems, which has supported many AI certification efforts [25, 2, 8, 20]. However, some aspects of this domain, particularly the sampling of images (such as the cone trajectory), lead to unrealistic landing scenarios. These scenarios make it difficult to assess the true performance of AI models in the real operational domain, as they do not reflect real-world conditions.

Yassine Bougacha et al. This is an open-access article distributed under the terms of the Creative Commons Attribution 4.0 License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are credited.

Furthermore, the initial version of LARD focused solely on single-runway airports, which is a significant limitation since 80% of commercial air traffic is managed by airports with two or more runways. The ODD for multi-runway detection has not been clearly defined in the literature.

Second, as highlighted in [6], relying solely on simulated data is inadequate for training and validating navigation systems in complex airport environments. One key challenge lies in the lack of diversity within these datasets.
A first step toward a solution would be to enhance the existing dataset by incorporating data from a variety of simulators.

While some open-source models are available, such as [7], most prior vision-based landing papers do not release their model weights or code. A contributing factor to this gap is the lack of a clear and standardized definition of the ML tasks and of adequate metrics for runway detection. While LARD and BARS mention object detection as a key task, little work has compared runway detection with general object detection frameworks or examined the efficiency of current models. For example, [17] trained object detection models but, as the authors noted, detecting a single object (such as one runway) is less complex than detecting multiple ones. Notably, they did not disclose their models.

European Congress of Embedded Real Time Systems, ISSN 2680-0918, 2026

In this work, we address the limitations identified above. In the remainder of the paper, LARD V1 refers to the paper and dataset of [9], and LARD V2 to the contributions and enhanced dataset presented here. Our main contributions are the following:

• Refinement of the Operational Design Domain (ODD): We refine the definition of our previous ODD for vision-based landing by focusing on the approach segment, tightening and segmenting the approach cone, and extending it to multi-runway airports. We also introduce an Extended ODD band with tolerance margins, which explicitly captures near-boundary poses around the nominal cone.
• Enhancing Dataset Diversity: Recognizing the limitations of LARD V1, we propose a second version, available at [10], built from a calibrated scenario generator that produces consistent images across multiple virtual globes (Google Earth, Bing Maps, ArcGIS) and flight simulators (X-Plane and Flight Simulator).
The resulting dataset covers hundreds of major multi-runway airports with precise per-runway annotations and In ODD / Extended ODD labels.
• ODD-aware benchmarking of ML models for runway detection: We propose an extended detection metric (e-mAP) that accounts for both In ODD and Extended ODD runways, and use it to benchmark modern object detectors on this second version of LARD, publicly available at [11]. In particular, we compare training strategies that include or exclude Extended ODD runways, we quantify the impact of simulator diversity using single-source, leave-one-out and full multi-source training configurations, and we provide baseline results that can serve as reference models for future work on runway detection.

The simulator and the dataset are available on GitHub at [10], while the models presented in this paper are provided in a separate repository [11].

2. OPERATIONAL DESIGN DOMAIN

The Operational Design Domain (ODD) [23] is a description of the set of constraints under which a system is designed to operate. In our case, it includes the geometry of the landing and the type of airports and runways considered, but also factors that can affect optical sensors, and by extension ML component capabilities, such as time of day and weather conditions.

2.1. Approach cone

In our work, we only consider the approach segment, which ranges from -6000 m to -280 m along-track distance from the Landing Threshold Point (LTP). Throughout this segment, the acceptable aircraft positions remain similar but not equivalent to the ODD of LARD V1 [9]: the lateral path angle lies within [-3°, 3°], originating from the LTP, while the vertical path angle takes its values in [-1.8°, -5.2°] with respect to the Vertical Reference Point (VRP), a point on the centerline located 305 m beyond the LTP.
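The position constraints of the approach cone can be illustrated with a small sketch. It assumes a local runway frame that is not specified in the paper: `along_m` is the along-track coordinate (negative before the LTP), `cross_m` the cross-track offset, and `height_m` the height above the runway reference elevation. Using the along-track distance alone as the horizontal distance, and writing descent angles as negative values, are simplifying assumptions of this sketch, not the authors' implementation.

```python
import math

# Values taken from the ODD description above.
LTP_TO_VRP_M = 305.0                 # VRP lies 305 m beyond the LTP on the centerline
ALONG_TRACK_RANGE_M = (-6000.0, -280.0)
LATERAL_RANGE_DEG = (-3.0, 3.0)
VERTICAL_RANGE_DEG = (-5.2, -1.8)    # descent angles written as negative values


def path_angles(along_m, cross_m, height_m):
    """Return (lateral, vertical) path angles in degrees.

    Assumption: the lateral angle is measured from the LTP and the vertical
    angle from the VRP, both using the along-track distance as horizontal range.
    """
    lateral = math.degrees(math.atan2(cross_m, -along_m))
    vertical = -math.degrees(math.atan2(height_m, LTP_TO_VRP_M - along_m))
    return lateral, vertical


def in_approach_cone(along_m, cross_m, height_m):
    """Check the position-related ODD constraints (attitude is not checked here)."""
    lateral, vertical = path_angles(along_m, cross_m, height_m)
    return (
        ALONG_TRACK_RANGE_M[0] <= along_m <= ALONG_TRACK_RANGE_M[1]
        and LATERAL_RANGE_DEG[0] <= lateral <= LATERAL_RANGE_DEG[1]
        and VERTICAL_RANGE_DEG[0] <= vertical <= VERTICAL_RANGE_DEG[1]
    )


# Example: an aircraft on a 3 degree slope aimed at the VRP, 3000 m before the LTP.
height_on_slope = math.tan(math.radians(3.0)) * (LTP_TO_VRP_M + 3000.0)
on_slope = in_approach_cone(-3000.0, 0.0, height_on_slope)
```

With these conventions, a 200 m cross-track offset at 3000 m from the LTP gives a lateral angle of about 3.8° and falls outside the cone.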
For the aircraft attitude, the range for the pitch¹ stays constant, between [-15°, +5°], which translates to an average nose-down orientation of about 5° relative to the horizon. However, to define appropriate ranges for the aircraft roll¹ and yaw², the approach cone is split into 3 segments as described in Table 1.

| Along-track distance (m) | Yaw range (°) | Roll range (°) |
|---|---|---|
| [−6000, −4500] | [−24, 24] | [−30, 30] |
| [−4500, −2500] | [−24, 24] | [−15, 15] |
| [−2500, −280] | [−18.5, 18.5] | [−10, 10] |

Table 1. Allowed yaw and roll ranges as a function of along-track distance from the LTP

¹ Aircraft body reference
² Runway true heading reference

2.2. Other hypotheses

Unlike LARD V1, which handled only single-runway airports, the second version targets the world's major commercial airports. We first identified the 300 airports with the most traffic worldwide, then reduced this list according to the quality of the runway imagery we could obtain. Airports whose satellite images were badly pixelated or too poor for reliable analysis were filtered out, leaving us with 260 single- and multi-runway airports, distributed as shown in Figure 1. Because most of these have multiple runways, we extended LARD to this more complex context, and had to define a rigorous approach for runway labelling, described in Section 4.2.

Figure 1. Distribution of runways per airport in LARD V2

We preserve other ODD restrictions from LARD V1, such as the presence of piano-key threshold markings (a "piano") on the runway, and optimal weather conditions. Despite using only the major commercial airports, the former constraint was still needed to remove smaller runways with different types of markings that may be present in these multi-runway airports. The latter constraint was preserved for easier comparison between our various sources of data, considering that some of these cannot simulate night images or adverse weather conditions.
However, the addition of new types of simulators (especially X-Plane and Flight Simulator) opens the door to the creation of dedicated datasets for testing AI algorithms in more complex and realistic conditions.

2.3. Intended function

As described in [9], the ML constituent architecture should fulfil the intended function up to the pose estimation. We rely on a 3-stage architecture directly inspired by Daedalean AG [1] and illustrated in Figure 2.

Figure 2. VBL constituent architecture with 3 stages

The first stage is an object detection step that computes a bounding box around the detected runway. The image is then cropped around the bounding box, and a second stage identifies key features of the runway, typically the 4 corners and specific runway contour lines. These keypoints then serve for the pose estimation performed by the last stage, which can rely on a non-ML approach. As in [9], we focus only on the first object detection stage.

Figure 3. LARD V2 scenario generation and labeling workflow.

3. SYNTHETIC DATA GENERATORS

LARD V1 relies solely on Google Earth Studio to generate images. This tool, based on a 3D projection of Google Earth satellite imagery, produces images that may be partially deformed, or of varying quality depending on the satellite coverage of the targeted area. This can be compensated by using multiple virtual globes and satellite sources for the same images. For this reason, we extended LARD to interface with a tool based on Cesium, which can generate images from different satellite layers, including Google Earth, ArcGIS and Bing Maps.
This interface also eliminates the need to go through the tedious process of generating images through the Google Earth Studio interface, which was not initially designed for that purpose.

However, virtual globes alone are unable to simulate clouds and variable weather conditions, and they provide limited support for night scenes. Recent flight simulators, on the other hand, offer almost photorealistic environments with controllable atmospheric conditions and lighting. For this reason, we further extended LARD V2 to interface with two such simulators, X-Plane and Flight Simulator. Using the unified interface presented in Section 3.1 allows us to generate, for each scenario, a family of images that includes heterogeneous satellite textures and more realistic simulator renderings.

3.1. Interoperability

Figure 3 presents the overall workflow used to generate the LARD V2 dataset and illustrates the interoperability of our tooling with different data sources. Interoperability between the different tools is ensured by a common scenario description stored in a .yaml file, which contains:

• A header with global information, including the expected image size and field of view,
• A series of poses, defined by the position and attitude of the aircraft, the targeted runway and airport, and a date and time.

Figure 4 shows a typical .yaml scenario used in LARD V2. The header encodes global information such as the list of airports and runways involved and the image geometry (resolution and field of view), while each element of the poses array specifies one camera pose with its associated airport, runway, six-degree-of-freedom position and attitude, and timestamp.

    airports_runways:
      LFBO:
      - 32R
      - 14L
      - 32L
      - 14R
    image:
      height: 1024
      width: 1024
      fov_x: 60.0
      fov_y: 60.0
    poses:
    - uuid: 468b7855-064c-473d-b0bd-b7bee9b26bab
      airport: LFBO
      runway: 14R
      pose:
      - 1.3271,
      - 43.6604,
      - 286.1787,
      - 140.4716,
      - 86.1030,
      - 6.7669
      time:
        second: 1
        minute: 0
        hour: 10
        day: 1
        month: 6
        year: 2020
    runways_database: ./data/runways_db_V2_GES.json
    trajectory:
      sample_number: 1

Figure 4. Example of .yaml scenario file used in LARD V2, showing an aircraft pose at Toulouse Blagnac airport (LFBO), during an approach on runway 14R.

This scenario file is the output of the first generation step implemented in LARD. While the legacy tool Google Earth Studio used in LARD V1 still requires a specific file format (the .esp file, which can be generated alongside the .yaml file), the .yaml scenario is directly consumed by all the new tools used to render images in LARD V2, namely X-Plane, Flight Simulator and the virtual globes accessible through Cesium. A limitation of this approach is that the same geographical coordinates are not always mapped to exactly the same apparent positions in each tool, which can result in slight spatial offsets of objects in their respective 3D spaces. These discrepancies arise from several factors: imperfect mapping of satellite imagery onto the Earth, differences in elevation models and ground altitudes between providers, and geometric simplifications introduced in flight simulators. In our case, even a small misalignment of a few meters at a runway corner will make the annotation unusable, which is why we had to define the calibration procedure presented in Section 3.2.
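To give a sense of scale for such misalignments, the sketch below converts a metric offset into an approximate pixel offset using an ideal pinhole camera with the image geometry of Figure 4 (1024 pixels wide, 60° horizontal field of view). The pinhole model, the small-offset approximation near the optical axis, and the function names are assumptions made for illustration only.

```python
import math


def focal_length_px(image_width_px, fov_x_deg):
    """Focal length in pixels of an ideal pinhole camera."""
    return (image_width_px / 2.0) / math.tan(math.radians(fov_x_deg) / 2.0)


def lateral_offset_px(offset_m, range_m, image_width_px=1024, fov_x_deg=60.0):
    """Approximate image shift of a point displaced by offset_m,
    perpendicular to the line of sight, at distance range_m from the camera.
    Valid for points close to the optical axis."""
    return focal_length_px(image_width_px, fov_x_deg) * offset_m / range_m


# A 3 m corner misalignment seen from the two ends of the approach segment.
near_px = lateral_offset_px(3.0, 280.0)
far_px = lateral_offset_px(3.0, 6000.0)
```

Under these assumptions, a 3 m error corresponds to roughly 9 to 10 pixels at 280 m from the threshold but to less than one pixel at 6000 m, so label errors caused by source offsets are most visible on close-range images.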
In practice, the scenario generator provided in LARD V2 generates discrete poses along an approach trajectory, with the possibility to manually tune several aspects of this trajectory: each parameter (typically distance, lateral and vertical deviations, and attitude constraints) can be sampled either from a bounded uniform distribution or from a normal distribution specified by a mean value and a standard deviation. For the construction of the LARD V2 dataset, we opted for simple uniform draws within the ranges of the parameters defined in our ODD presented in Section 2, with ten poses per ODD segment and per runway. Alternatively, users can bypass the generator and provide their own scenarios directly as lists of poses, in the form of simple sequences of six-degree-of-freedom positions. Trajectories provided by external tools, for example from ADS-B recordings, can thus be converted to the same .yaml representation and then rendered by our generators.

3.2. Calibration

The starting point of our work is an open database [22] containing the corners of the runways of all airports in the world. This database is highly valuable but inaccurate. In general, the latitude, longitude or altitude of the points provided do not match the exact corners of the runways. The position error is usually a few meters but can be higher when the runway markings are renewed due to maintenance or repainting, or when the satellite imagery is misaligned. Moreover, even when the coordinates match a specific data source, they will often be different for other sources, due to the problems described in Section 3.1. This is a major issue for our labels, which must be as precise as possible to ensure the feasibility of the various tasks and subtasks considered. This forced us to define a process to correct the coordinates of all the runways, resulting in a set of dedicated high-precision databases, one for each data source.
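As an illustration of how small these coordinate discrepancies are in angular terms, the following sketch estimates the horizontal distance between two geodetic positions of the same runway corner, as they might appear in two source-specific databases. The coordinates are hypothetical, and the equirectangular approximation on a spherical Earth is an assumption that is only reasonable for offsets of a few meters to a few kilometers.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius, spherical approximation


def horizontal_offset_m(lat1_deg, lon1_deg, lat2_deg, lon2_deg):
    """Approximate ground distance between two nearby geodetic points."""
    mean_lat = math.radians((lat1_deg + lat2_deg) / 2.0)
    d_north = math.radians(lat2_deg - lat1_deg) * EARTH_RADIUS_M
    d_east = math.radians(lon2_deg - lon1_deg) * EARTH_RADIUS_M * math.cos(mean_lat)
    return math.hypot(d_north, d_east)


# Hypothetical corner coordinates from two different data sources.
corner_source_a = (43.66040, 1.32710)
corner_source_b = (43.66043, 1.32714)
offset_m = horizontal_offset_m(*corner_source_a, *corner_source_b)
```

A difference of 1e-5 degrees in latitude is already about 1.1 m on the ground, which is why coordinates that look identical to four decimal places can still be unusable for corner-level labels.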
A natural first idea would be to rely directly on each rendering tool to obtain accurate runway coordinates. Unfortunately, this turns out to be impractical. In virtual globes, runways are only represented as textures on the terrain: the coordinates of runway corners are not exposed as structured objects and cannot be retrieved through queries. In flight simulators, one might expect internal navigation or scenery databases to provide this information, but in practice, these data are either inaccessible, only partially documented, or of insufficient quality. Moreover, such an extraction strategy would not solve the problem for the virtual globes at all, since no runway metadata is available for them. For all these reasons, we designed a unified external calibration procedure. For a single data source (virtual globe or simulator), this procedure consists of four successive steps:

1. Runway acquisition: For each candidate threshold, we place a camera at a fixed altitude above the threshold center, facing the ground and aligned with the runway axis. We then capture the corresponding image using a predefined field of view and image size, which guarantees that the piano is fully visible near the center of the resulting picture.
2. Data curation: The resulting set of images can then be reviewed manually: frames with non-visible runways or missing threshold markings can be discarded. This quality-control pass guarantees that runways are kept only if their representation within this data source is accurate.
3. Automatic corner detection: Our goal is to automatically find the corners at the bottom of the threshold (since the runway is facing up thanks to the camera orientation set at step 1). After manually labelling the pianos on 500 images, we were able to train an object detector (a YOLOv11 [14]) on these markings.
Because each image is centered on exactly one piano, the resulting label precisely contains the location of the two bottom corners of the runway.
4. Geometric projection: Knowing the image metadata defined at step 1 (camera geodetic position, intrinsic parameters and pixel dimensions) and the pixel coordinates of the runway corners from step 3, we can compute their geodetic positions by back-projection, resulting in high-accuracy latitude and longitude for each runway end.

It is worth noting that step 1 requires a way to precisely set the altitude to a specific value above ground level, to ensure the quality of the back-projection at step 4. This is usually feasible in the simulators and with the Cesium-based tool, or by using a publicly available API such as the Google Elevation API.

This process produces a specific database of runway coordinates that is tailored to each data source. As a result, we can generate images based on a single .yaml file in multiple tools, and use the appropriate source-specific runway database during the labelling process to systematically obtain high-quality labels.

4. DATASET - LARD V2

The second version of the LARD dataset is open source and available at [10]. This section describes its organisation and how automatic labelling is done to account for multiple runways.

4.1. Strategy of dataset definition

LARD V2 is built from 1024 × 1024-pixel pictures captured in the three segments defined in Section 2. This choice of size ensures that, at 6000 m, with a glide slope close to 3° (ensured by the ODD), runways are still visible and recognisable. For each segment, we take 10 images at random positions and orientations within the acceptable ranges of the ODD, resulting in 30 pictures for every runway and generator. With close to 980 runways in the current list of airports, this corresponds, in principle, to roughly 30 000 images per data source.
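The theoretical image budget follows from a short calculation, sketched below. The counts come from this section; the list of generator names follows the sources named in the paper, and the rounded figure of 980 runways is used as an approximation.

```python
SEGMENTS = 3               # ODD segments of Table 1
IMAGES_PER_SEGMENT = 10    # random poses drawn per segment and per runway
RUNWAYS = 980              # approximate number of runways in the airport list
GENERATORS = ["Google Earth", "Bing Maps", "ArcGIS", "X-Plane", "Flight Simulator"]


def image_budget(segments, images_per_segment, runways, n_generators):
    """Return (images per runway, images per source, images over all sources)."""
    per_runway = segments * images_per_segment
    per_source = per_runway * runways
    total = per_source * n_generators
    return per_runway, per_source, total


per_runway, per_source, total = image_budget(
    SEGMENTS, IMAGES_PER_SEGMENT, RUNWAYS, len(GENERATORS)
)
```

This gives 30 images per runway, 29 400 per source and 147 000 across the five generators before any quality control, which is the upper bound against which the final dataset size should be read.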
After completion of the generation and cleaning stages, however, LARD V2 contains around 110 000 images across the five generators, ranging from around 18 000 for Bing Maps to 26 000 for Flight Simulator. This is below the theoretical number of samples defined by our scenarios, because a non-negligible fraction of images had to be discarded during quality control. Typical rejection cases include poses located inside surrounding relief or below ground level in the elevation models, as well as severe artefacts in the virtual globes: heavily degraded or missing runway markings, and runways with implausible altitude profiles, exhibiting strong oscillations every few tens of meters that make both the runway boundaries and primary markings unusable for detection. Image generation for each back-end requires on the order of 15 to 40 hours on standard hardware. In contrast, once the calibration procedure of Section 3.2 has been performed for a given source, the subsequent labelling of all images for that source is fully automatic and completes in a few minutes.

Finally, it is worth noting that for model development, we decided to split the airport list into two halves, with approximately 50% of airports for the training set⁴ and 50% reserved for the test set, to prevent location-specific leakage and ensure that runways seen during testing have not appeared during training.

4.2. Labelling

A key challenge when dealing with multiple runways at an airport is to determine which runway to label in each image. To address this, we use the ODD defined in Section 2 as a reference. For each pose in a landing scenario, we consider all the runways that could appear in the camera's field of view based on the current position and orientation.
Then we check whether the aircraft is within the approach cone of each candidate runway, as specified in the ODD. The starting point is to determine the along-track distance between the aircraft and the targeted threshold, in order to identify the appropriate segment as defined in Table 1. Once each parameter has been validated against its acceptable range, the runway can be labelled with an additional flag indicating that it is In ODD. Nevertheless, this per-parameter range verification is less trivial than it appears. New challenges arise in the labelling process, originating from both the spatial offsets between data sources and the computation of orientation during generation:

• Data source offsets: The scenarios are usually generated from a single data source, but the corresponding images are rendered on multiple sources, where runways may have slightly different positions, resulting in changes in relative positions. If the initial position was close to the border of the approach cone, this same position may fall outside the cone for a different source, which would result in an Out of ODD flag.
• Coupled rotations: When rotations are combined during scenario generation, the pitch and roll are measured relative to the aircraft body, while the yaw is relative to the runway heading. As a result, these three parameters may affect each other during successive single-axis rotations. For instance, applying a roll rotation followed by a pitch rotation already alters the yaw of the aircraft relative to the runway.

⁴ Dataset that will be used by the ML engineer to train and potentially validate their models before separate testing

To address these issues, we introduce tolerance margins around the acceptable angles and ranges, to account for these potential variations.
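The coupled-rotation effect listed above can be reproduced numerically. The sketch below assumes a frame with x pointing along the runway heading, y to the right and z down, and composes a roll about the body x axis followed by a pitch about the rolled body y axis. These axis and sign conventions are assumptions of this example and may differ from those used in the LARD generator.

```python
import math


def rot_x(angle_deg):
    """Rotation matrix about the x axis (roll)."""
    c, s = math.cos(math.radians(angle_deg)), math.sin(math.radians(angle_deg))
    return [[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]]


def rot_y(angle_deg):
    """Rotation matrix about the y axis (pitch, positive nose-up with z down)."""
    c, s = math.cos(math.radians(angle_deg)), math.sin(math.radians(angle_deg))
    return [[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]]


def mat_mul(a, b):
    """Product of two 3x3 matrices."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def mat_vec(m, v):
    """Product of a 3x3 matrix and a 3-vector."""
    return [sum(m[i][k] * v[k] for k in range(3)) for i in range(3)]


def induced_heading_deg(roll_deg, pitch_deg):
    """Heading of the nose axis relative to the runway axis after a body roll
    followed by a body pitch, starting aligned with the runway."""
    # Intrinsic (body-axis) rotations compose by right-multiplication.
    rotation = mat_mul(rot_x(roll_deg), rot_y(pitch_deg))
    nose = mat_vec(rotation, [1.0, 0.0, 0.0])
    return math.degrees(math.atan2(nose[1], nose[0]))


# Extreme roll of the first segment combined with the lowest allowed pitch.
heading_change = induced_heading_deg(30.0, -15.0)
```

With a 30° roll and a -15° pitch, the nose ends up about 7.6° away from the runway heading even though no yaw rotation was applied, while the same pitch with zero roll leaves the heading unchanged. An offset of this size is not negligible compared with the yaw ranges of Table 1.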
Moreover, we defined an intermediate Extended ODD category, for cases where any parameter exceeds the normal ranges but remains within twice the acceptable limits. This means that only the two categories In ODD and Extended ODD are considered valid situations where the runway should be recognisable by the model. It also opens the door to a relaxed evaluation of model performance, reducing the penalties when a model fails to detect an Extended ODD runway.

5. ML TASKS AND METRICS

In this paper, we focus exclusively on the first stage of the runway detection pipeline: the object detection task. This stage aims to locate runways present in aerial or satellite images and generate bounding boxes around them. The output of this detection phase is critical, as it directly feeds into subsequent processing steps such as corner detection and aircraft pose estimation, but these downstream tasks are beyond the scope of the present study.

We consider several objectives that differ by the subset of data used during training. In particular, we define two complementary configurations that structure the experiments in Section 6. First, we compare models trained only on In ODD runways with models trained on both In ODD and Extended ODD runways, in order to assess the impact of including near-boundary examples in the learning process. Second, we vary the composition of the training dataset with respect to the image generators, using models trained on a single data source, on all sources but one (leave-one-out), or on the full combination, to quantify the influence of simulator diversity on generalization.

5.1. ML tasks

Unlike the approach presented in the LARD V1 paper, which primarily addressed scenarios containing a single runway per image, our work extends this formulation to more complex scenes where multiple runways may be simultaneously present within the field of view.
This reflects more realistic operational environments and introduces additional challenges for the detection models, such as differentiating between multiple adjacent or overlapping runways.

5.2. Metrics - extended mAP

To evaluate the performance of the object detection models in this extended context, we adopt the classical Mean Average Precision (mAP) metric, widely used in object detection benchmarks. However, in our case, the mAP computation is adapted to handle extended runway scenarios, including runways that are not necessarily part of the Operational Design Domain (ODD). This ensures that the metric remains meaningful even when detecting runways that may not be relevant for the specific mission or operational constraints.

Figure 5 introduces some notations required for the metric. The mAP metric [24, 12] is commonly used in object detection benchmarks (note that since we have a single class 'runway', the mAP coincides with the AP). The mAP metric evaluates the agreement between the predicted bounding boxes $\hat{B}_i$ and the ground truth bounding boxes $B_i$.

| Quantity | Notation | Description |
|---|---|---|
| Input image (RGB) | $Img$ | shape (H, W, 3) |
| Runway BB | $B_i = (x_i, y_i, w_i, h_i)$ | $(x_i, y_i)$ is the center of the BB |
| Runway ODD indices (or flag) | $o_i \in \{0, 1\}$ | indicates if the runway is in the ODD |
| Predicted BB | $\hat{B}_i = (\hat{x}_i, \hat{y}_i, \hat{w}_i, \hat{h}_i, \hat{s}_i)$ | $\hat{s}_i$ is the confidence score |
| List of In ODD runways | $I = \{ i \mid o_i = 1 \}$ | |
| List of out-of-ODD runways | $J = \{ j \mid o_j = 0 \}$ | |
| List of predicted runways | $P = \{ i \mid \hat{s}_i > 0.5 \}$ | can also use the NMS (Non Maximum Suppression) algorithm |

Figure 5. Notations to define mAP and extended mAP
Since the object detector is trained to detect runways that are within the ODD, the reference metric is computed using only the ODD runways:

$$\mathrm{mAP}_\tau\big( (B_i)_{i \in I},\ (\hat{B}_p)_{p \in P} \big)$$

However, since some runways outside the ODD (Extended ODD) may also be visible and thus detected by the object detector, we additionally evaluate an extended version of the mAP metric, which takes into account any possible detection of Extended ODD runways:

$$\text{e-mAP}_\tau = \max_{J \subset \{ j \mid o_j = 0 \}} \mathrm{mAP}_\tau\big( (B_i)_{i \in I \cup J},\ (\hat{B}_p)_{p \in P} \big)$$

This extended metric $\text{e-mAP}_\tau$ searches, among all subsets $J$ of runways outside the nominal ODD, the subset that yields the highest possible score when these additional runways are treated as valid targets alongside the In ODD ones (from the set $I$). It can be seen as an oracle that, a posteriori, selects which Extended ODD runways should be considered relevant for the evaluation, and reports the best mAP value attainable for this selection. By construction, $\text{e-mAP}_\tau \geq \mathrm{mAP}_\tau$ (the case where $J = \emptyset$ results in the standard mAP on In ODD runways only). This construction reduces the sensitivity of the score to the models' predictions on Extended ODD runways, which are no longer automatically counted as hard false positives, but can instead be matched to a subset of extended ground truth runways if they are geometrically consistent. The e-mAP thus represents a more stable basis for comparing models that differ in their behaviour near the ODD boundary.

6. EXPERIMENTS

This section presents the experiments conducted to assess the factors influencing runway detection performance and generalization. Our evaluation is structured into two parts. First, we examine the impact of including the Extended ODD runways in the training set on model performance and on the models' ability to generalize across diverse conditions. Second, we analyze how the choice of source simulator in the training set affects the resulting models.
All models are publicly available at [11].

6.1. Influence of Extended ODD runways

As defined in Section 2, the intended function of the model is to detect runways for which the aircraft lies within the approach cone, referred to as In ODD runways. A first naive baseline would therefore restrict the training set to these In ODD runways as ground truth targets. However, learning to correctly reject Extended ODD runways that lie very close to the ODD frontier can be challenging and may negatively affect the training. Moreover, it is well established that ML models often generalize better when exposed to richer or more diverse training data. In this context, the Extended ODD runways can be interpreted as a form of targeted data augmentation, providing additional geometric and visual variability around the operational boundary. For this reason, we compare two training strategies: one where only In ODD runways are used as training targets (model $M_{IN}$), and another where In ODD and Extended ODD runways are treated as the same target class (model $M_{EXT}$). Performance and generalization are then assessed on the test set using the mAP and e-mAP (Section 5.2). Experiments in this subsection use YOLOv8 detectors trained with the Ultralytics framework [14] under the same hyperparameters, the only difference being the composition of the training targets.

Table 2 reports the results. On the strict In ODD test subset, the model $M_{IN}$, trained on In ODD runways, reaches a higher mAP than $M_{EXT}$. This is expected, as $M_{IN}$ is trained to detect only In ODD runways and tends to be more conservative, whereas $M_{EXT}$ detects many Extended ODD runways that are ignored by the In ODD metric and therefore counted as false positives, which in the end degrades the mAP score.
When considering the combined In ODD and Extended ODD test set, the situation reverses: the mAP decreases for M_IN and increases for M_EXT, showing that including Extended ODD examples in training leads to better overall performance once those near-boundary runways are considered valid targets.

The extended metric e-mAP provides a more nuanced view of this trade-off. The column "GT" indicates the number of runways that were considered as ground truth in the metric computation. For both models, the values in the first two rows are given for reference: the test set contains 53610 In ODD runways and 8178 Extended ODD runways (61788 runways in total). For the e-mAP rows, however, this value is the number of ground truth runways under the selection that yields the best e-mAP, that is, the 53610 In ODD runways plus the Extended ODD runways that were correctly detected. Interestingly, M_IN managed to correctly detect 4632 Extended ODD runways (56.6%), meaning it already generalizes fairly well to near-boundary configurations, despite never seeing them as targets. However, it is surpassed by M_EXT, which recovers the vast majority of these runways and increases this number by more than two thirds, detecting 7808 Extended ODD runways (over 95.4%). In other words, including Extended ODD examples in training slightly degrades precision in the strict core of the ODD, but significantly improves generalization capability in a margin around the ODD boundary.
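To make the oracle selection behind the e-mAP concrete, the following minimal Python sketch evaluates a toy single-image case by brute force. It is an illustration under stated assumptions, not the evaluation code used for Table 2: the helper names (`iou`, `average_precision`, `extended_ap`), the box format `(x1, y1, x2, y2)`, the greedy matching rule and the all-point interpolation of the precision-recall curve are choices made for this example. Only a single class and a single IoU threshold τ are considered, and the exhaustive search over subsets J is exponential in the number of Extended ODD runways, so it is only practical for toy inputs.

```python
from itertools import combinations


def iou(a, b):
    """IoU of two axis-aligned boxes given as (x1, y1, x2, y2)."""
    iw = min(a[2], b[2]) - max(a[0], b[0])
    ih = min(a[3], b[3]) - max(a[1], b[1])
    if iw <= 0 or ih <= 0:
        return 0.0
    inter = iw * ih
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    return inter / (area_a + area_b - inter)


def average_precision(gts, dets, tau=0.5):
    """Single-class AP at IoU threshold tau.

    gts:  list of (image_id, box)
    dets: list of (image_id, score, box)
    Assumption: each detection is matched to the best still-unmatched
    ground truth of the same image; AP is the area under the
    monotone precision envelope.
    """
    if not gts:
        return 0.0
    matched, flags = set(), []
    for img, _, box in sorted(dets, key=lambda d: -d[1]):
        best, best_k = 0.0, None
        for k, (g_img, g_box) in enumerate(gts):
            if g_img != img or k in matched:
                continue
            v = iou(box, g_box)
            if v > best:
                best, best_k = v, k
        if best_k is not None and best >= tau:
            matched.add(best_k)
            flags.append(1)  # true positive
        else:
            flags.append(0)  # false positive
    tp, precisions, recalls = 0, [], []
    for rank, flag in enumerate(flags, start=1):
        tp += flag
        precisions.append(tp / rank)
        recalls.append(tp / len(gts))
    # Make precision non-increasing from left to right.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    ap, prev_r = 0.0, 0.0
    for p, r in zip(precisions, recalls):
        ap += (r - prev_r) * p
        prev_r = r
    return ap


def extended_ap(in_odd, ext_odd, dets, tau=0.5):
    """Brute-force oracle: best AP over all subsets J of Extended ODD runways."""
    best_ap, best_subset = average_precision(in_odd, dets, tau), ()
    for size in range(1, len(ext_odd) + 1):
        for subset in combinations(ext_odd, size):
            ap = average_precision(in_odd + list(subset), dets, tau)
            if ap > best_ap:
                best_ap, best_subset = ap, subset
    return best_ap, best_subset


# Toy scene: one In ODD runway, two Extended ODD runways, two detections.
in_odd = [("img0", (0, 0, 10, 10))]
ext_odd = [("img0", (20, 0, 30, 10)), ("img0", (40, 0, 50, 10))]
dets = [("img0", 0.9, (20, 0, 30, 10)),  # lands on an Extended ODD runway
        ("img0", 0.8, (0, 0, 10, 10))]   # lands on the In ODD runway

ap_in = average_precision(in_odd, dets)
ap_all = average_precision(in_odd + ext_odd, dets)
e_ap, chosen = extended_ap(in_odd, ext_odd, dets)
print(ap_in, ap_all, e_ap, chosen)
```

In this toy case the highest-scoring detection falls on an Extended ODD runway, so it counts as a false positive under the In ODD metric (AP = 0.5). Treating all Extended ODD runways as targets penalizes the undetected one (AP ≈ 0.667), whereas the oracle keeps only the detected one and reaches AP = 1.0.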

European Congress of Embedded Real Time Systems, ISSN 2680-0918, 2026

| Model: architecture (trained on) | Score | Test GT | GT | Detections | mAP | mAP50 | mAP75 |
|---|---|---|---|---|---|---|---|
| M_IN: YOLOv8 (IN_ODD) | mAP | IN_ODD | 53610 | 80002 | 0.6974 | 0.9671 | 0.8122 |
| M_IN: YOLOv8 (IN_ODD) | mAP | IN_ODD + EXTENDED_ODD | 61788 | 80002 | 0.6498 | 0.9084 | 0.7539 |
| M_IN: YOLOv8 (IN_ODD) | e-mAP | IN_ODD + EXTENDED_ODD | 58242 | 80002 | 0.6882 | 0.9654 | 0.7999 |
| M_EXT: YOLOv8 (IN_ODD + EXTENDED_ODD) | mAP | IN_ODD | 53610 | 100504 | 0.6265 | 0.8703 | 0.7256 |
| M_EXT: YOLOv8 (IN_ODD + EXTENDED_ODD) | mAP | IN_ODD + EXTENDED_ODD | 61788 | 100504 | 0.6939 | 0.9669 | 0.8052 |
| M_EXT: YOLOv8 (IN_ODD + EXTENDED_ODD) | e-mAP | IN_ODD + EXTENDED_ODD | 61418 | 100504 | 0.6976 | 0.9685 | 0.8072 |

Table 2. Performance comparison between a YOLOv8 model trained on In ODD runways only and one trained on In ODD and Extended ODD runways.

Figure 6. Comparison of mAP@50 performance for several training setups.

6.2. Influence of simulator sources

In this section, we evaluate the influence of the simulator source on training and generalization. To this end, we train eleven different YOLOv11 models with the same architecture and hyperparameters, varying only the composition of the training data. Five models are trained on images from a single source (ArcGIS, BingMaps, FLSim, GES and XPlane), five on all sources but one (leave-one-out configurations), and one on the union of all sources (FULL). All of them are trained with the Ultralytics framework [14].

Figure 6 summarizes the mAP@50 performance of these models on the full test set. The FULL configuration consistently achieves the best average performance, which suggests that combining multiple simulators and virtual globes during training is beneficial. Moreover, the fact that no leave-one-out configuration outperforms the FULL one indicates that each source contributes in some way to the overall performance.
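The eleven training configurations described above can be enumerated programmatically. The short Python sketch below is only an illustration: the source identifiers follow the names used in the text, while the configuration labels (`<source>-only`, `all-but-<source>`) and the function name `training_configurations` are hypothetical and do not come from the released code.

```python
# Source names as they appear in the text; labels below are hypothetical.
SOURCES = ["ArcGIS", "BingMaps", "FLSim", "GES", "XPlane"]


def training_configurations(sources):
    """Map each configuration label to the list of sources used for training."""
    # Five single-source configurations.
    configs = {f"{s}-only": [s] for s in sources}
    # Five leave-one-out configurations.
    for held_out in sources:
        configs[f"all-but-{held_out}"] = [s for s in sources if s != held_out]
    # One configuration using every source.
    configs["FULL"] = list(sources)
    return configs


CONFIGS = training_configurations(SOURCES)
for name, used in CONFIGS.items():
    print(f"{name:>16}: {', '.join(used)}")
```

With five sources this yields 5 + 5 + 1 = 11 configurations, matching the number of models compared in Figure 6.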
Among the single-source models, the X-Plane-only configuration clearly underperforms, while the GES-only configuration achieves the highest mAP.

To better understand these behaviours, Figure 7 reports cross-source performance: each heat-map shows, for a given metric (mAP on In ODD, mAP on In and Extended ODD, and e-mAP), how each model configuration performs when tested on images from each source. Three trends can be observed.

First, the X-Plane-only model performs well on X-Plane test images but poorly on all other sources. This suggests that its 3-dimensional models of runways and their textures lead to over-fitting to that particular domain, and that training only on such rendering generalizes poorly to other virtual globes.

Second, ArcGIS and Bing Maps models behave similarly and generalize reasonably well to each other, indicating proximity in their rendering and their domain. This was expected, as these virtual globes have flat satellite textures mapped on the ground with altitude corrections. In contrast, their results on Flight Simulator and GES reflect differences in textures, lighting and rendering between satellite imagery and 3D environments generated by simulators.⁵

Third, the GES-only model generalizes better than other single-source models to most other generators, which is consistent with the way it renders images, combining satellite imagery and 3D rendering. Here again, the leave-one-out configurations lie between the single-source and FULL extremes, their performance being closer to that of FULL both in-domain and across domains.

⁵ Google Earth Studio provides 3D rendering of surrounding buildings, moving objects, as well as some nature elements on top of satellite imagery.

Figure 7. Crossbar mAP performances of the eleven trained YOLOv11 models on each sub-test set: (a) mAP on In ODD runways; (b) mAP on In ODD and Extended ODD runways; (c) e-mAP.

The relative ordering of models is also preserved when comparing mAP In ODD and e-mAP, the only case where a leave-one-out configuration outperforms FULL being the all-but-GES model on test images from Flight Simulator. However, this ordering frequently changes for the mAP computed on the combined In ODD and Extended ODD test set, as shown in Figure 7b, which illustrates how unstable this metric can be in that specific context. This behaviour matches the issue identified in Section 5.2 and is precisely what our e-mAP definition is designed to correct.

7. RELATED WORKS

In this section, we first review pipelines for autonomous or assisted landing, then survey available runway datasets and simulators, and finally discuss studies that specifically rely on LARD V1 and its ODD.

7.1. Vision-based landing pipelines

Recent work on vision-based landing focuses on deep-learning pipelines that cover a large part of the approach-and-landing chain, from runway detection to pose estimation and guidance, and that are validated in realistic flight or simulator campaigns [1, 13]. Other studies combine YOLO-like detectors, segmentation and geometric post-processing to derive relative pose and even close the loop with guidance and control in simulation [19, 29], while lightweight variants such as YOMO-Runwaynet target embedded fixed-wing platforms by combining YOLO-style heads with MobileNet-based backbones on dedicated runway datasets [5]. More recently, Ferreira et al. presented an approach which uses a tracker and orientation estimator on runway imagery to generate guidance commands for fixed-wing UAVs [13]. Complementary work by Valentin et al.
focuses on the downstream pose-estimation and assurance layers, first proposing probabilistic parameter estimators and calibration metrics for pose estimation from runway image features [27], and more recently introducing a real-time vision pipeline with calibrated predictive uncertainties and RAIM-based runtime monitoring for a computer vision landing system [26]. In parallel, Kouvaros et al. apply formal verification techniques to semantic keypoint detection networks used for aircraft pose estimation, providing robustness guarantees for the perception stage of an autonomous landing system [15]. These works demonstrate that vision-only landing is technically feasible, but they typically rely on proprietary data or single-simulator sources, and they rarely expose the underlying dataset design or its Operational Design Domain (ODD) in detail.

7.2. Runway datasets and simulators

Public datasets for runway detection are still scarce. In 2023, the LARD V1 dataset was introduced as an open benchmark for front-view runway detection during approach and landing, combining a scenario generator based on Google Earth satellite imagery with a smaller set of real landing videos and, crucially, an explicit definition of the approach cone as an image-level ODD [9]. Around the same time, Chen et al. proposed BARS, an airport runway segmentation benchmark built from X-Plane 11, with instance masks for runway and markings and a smoothing-oriented evaluation metric [3]. More recently, Wang et al. introduced VALNet, which included a Runway Landing Dataset (RLD), also based mainly on X-Plane, targeting instance segmentation over full landing sequences and diverse weather conditions [28]. Beyond the runway domain, recent work on responsible dataset design stresses the importance of diversifying data sources and conditions to improve robustness and representation [21].
Compared to these resources, LARD V2 is designed explicitly around: (i) multi-runway airports selected among the world's busiest ones; (ii) interoperability between multiple virtual globes (Google Earth, Bing Maps, ArcGIS) and flight simulators (X-Plane, Flight Simulator); and (iii) an ODD-aware labelling scheme that distinguishes In ODD and Extended ODD runways in each image. While BARS and RLD primarily study segmentation and boundary quality within a single simulator, LARD V2 focuses on object detection across heterogeneous generators, providing a complementary view on cross-source generalization.

7.3. Work building on LARD

Since its release, LARD has been used as a reference dataset for several methodological studies. YOLO-RWY [17] introduces an anchor-free YOLO variant tailored to runway detection and reports significant gains on the LARD splits (synthetic, real-nominal, real-edge). Other papers use LARD to study robustness and security: a recent federated adversarial learning approach treats runway detection as a federated downstream task and evaluates adversarial resilience on LARD images [18], while Zouzou et al. propose a metric called Conformal mean Average Precision (C-mAP) to equip YOLO-based detectors with statistical uncertainty guarantees on runway imagery [30]. Finally, other lines of work leverage LARD for complementary goals, for instance BE-LIME [4], which studies the explainability of runway detection models trained on it.

The ODD itself has also become an explicit object of study. Cappi et al. describe a process to design and verify datasets with respect to a predefined ODD, translating system-level constraints into image-level Data Quality Requirements [2].
The paper illustrates the whole process on LARD, for the ODD of a vision-based landing system. Our current paper can be seen as an extension of these efforts at the dataset level: we refine the original approach cone, introduce an Extended ODD band around it, and propagate those labels consistently across our five data sources. On top of that, we define an extended detection metric (e-mAP) that explicitly exploits the ODD structure and is orthogonal to the C-mAP from [30], so that uncertainty guarantees could be layered on top of our evaluation if needed.

8. CONCLUSION

In this paper, we introduced LARD V2, an enhanced dataset and benchmarking framework for runway detection in vision-based autonomous landing systems. Compared to LARD V1, the new version refines the Operational Design Domain by focusing on the approach segment, tightening and segmenting the approach cone, and extending it to multi-runway airports selected among those with the most traffic worldwide. On the data generation side, we proposed a calibrated workflow that uses a unified .yaml scenario description to produce accurately labelled images from multiple virtual globes (Google Earth, Bing Maps, ArcGIS) and flight simulators (X-Plane, Flight Simulator). This required a dedicated calibration procedure and an ODD-aware labelling scheme that separates In ODD and Extended ODD runways at the image level.

On the evaluation side, we defined an extended detection metric (e-mAP) that explicitly exploits this ODD structure by rewarding correct detections on both In ODD and Extended ODD runways. Using this framework, we reported experiments on two key factors: the impact of augmenting training data with new samples slightly beyond the ranges of the ODD, and the influence of simulator diversity.
The results show that training on both In ODD and Extended ODD examples improves robustness near the ODD boundary as captured by the e-mAP, and that models trained on a mixture of all generators (FULL configuration) generalize substantially better across sources than single-simulator models, with X-Plane-only training proving particularly brittle. More generally, the proximity between generators also matters: virtual globes based purely on satellite textures (ArcGIS and Bing Maps) transfer well to one another but less to full 3D simulators, whereas hybrid tools which combine satellite imagery and 3D geometry (such as Google Earth Studio) tend to generalize better across both families.

Despite the recent growth of the literature, there is still no public dataset that combines multi-runway airports, multiple coordinated simulators and virtual globes, and a precise operational ODD with an associated detection benchmark; existing datasets typically rely on a single generator, and often consider single-runway settings with limited domain shift [3, 9, 28]. Existing methodological work on top of LARD mostly treats the data and ODD as fixed. By releasing LARD V2 and its calibrated multi-source generator, and by organising experiments around Extended ODD training and simulator diversity, we aim to complement these works by providing an ODD-aware, multi-runway, multi-source benchmark on which models can be more meaningfully assessed, opening the way to follow-up studies on robustness, explainability and certification-oriented assurance methods.

As a way forward, we plan to extend the present benchmark along two complementary axes.
First, we are interested in a systematic comparison of different state-of-the-art object detection architectures (including transformer-based detectors, anchor-free models, and different YOLO variants), in order to thoroughly assess their respective strengths and weaknesses in the context of our well-defined ODD. Beyond global scores, the structure of this ODD, composed of three distance-based approach segments, will allow us to stratify evaluation by apparent runway size and scale, and thus to quantify, for each architecture, how performance evolves as the aircraft moves along the approach and as bounding boxes become larger and less ambiguous. Second, we intend to leverage the new capabilities offered by the support of modern flight simulators in LARD V2 to systematically vary clouds, weather conditions, illumination (including night-time scenarios) and seasonal appearance, and to generate dedicated subsets that extend the nominal ODD towards more complex and operationally relevant cases. Such situations had already emerged in LARD V1, where they were handled through a manually curated "edge cases" subset of real images and videos. As demonstrated in [16], this approach will enable deeper analyses of model robustness under realistic conditions, and will support future work on gradually tightening assurance arguments as the ODD is extended beyond the current nominal approach segment.

Acknowledgement

This work was carried out within the DEEL project (https://www.deel.ai/), which is part of IRT Saint Exupéry and the ANITI AI cluster. The authors acknowledge the financial support from DEEL's Industrial and Academic Members and the France 2030 program (Grant agreements n°ANR-10-AIRT-01 and n°ANR-23-IACL-0002).
This work has also benefited from the PATO project, funded by the French government through the France Relance program and by the European Union through the NextGenerationEU program.

REFERENCES

[1] Giovanni Balduzzi, Martino Ferrari Bravo, Anna Chernova, Calin Cruceru, Luuk van Dijk, Peter de Lange, Juan Jerez, Nathanaël Koehler, Mathias Koerner, Corentin Perret-Gentil, et al. Neural network based runway landing guidance for general aviation autoland. Technical report, United States. Department of Transportation. Federal Aviation Administration, 2021.
[2] Cyril Cappi, Noémie Cohen, Mélanie Ducoffe, Christophe Gabreau, Laurent Gardes, Adrien Gauffriau, Jean-Brice Ginestet, Franck Mamalet, Vincent Mussot, Claire Pagetti, et al. How to design a dataset compliant with an ml-based system odd? In 12th European Congress on Embedded Real Time Software and Systems (ERTS), 2024.
[3] Wenhui Chen, Zhijiang Zhang, Liang Yu, and Yichun Tai. Bars: a benchmark for airport runway segmentation. Applied Intelligence, 2023.
[4] Xi Chen, Ruchao Zhang, Lei Dong, and Jiachen Liu. Explainable object detection for aircraft visual landing system based on be-lime method. Aerospace Systems, 2025.
[5] Wei Dai, Zhengjun Zhai, Dezhong Wang, Zhaozi Zu, Siyuan Shen, Xinlei Lv, Sheng Lu, and Lei Wang. Yomo-runwaynet: A lightweight fixed-wing aircraft runway detection algorithm combining yolo and mobilerunwaynet. Drones, 2024.
[6] Miguel Ángel de Frutos Carro, Carlos Cerdán Villalonga, and Antonio Barrientos Cruz. Validating synthetic data for perception in autonomous airport navigation tasks. Aerospace, 2024.
[7] Geoffrey Delhomme. Yolo models on LARD V1. github.com/geoffrey-g-delhomme/lard-yolov8, 2025.
[8] Ewen Denney and Ganesh Pai. Assurance-driven design of machine learning-based functionality in an aviation systems context. In 2023 IEEE/AIAA 42nd Digital Avionics Systems Conference (DASC). IEEE, 2023.
[9] Mélanie Ducoffe, Maxime Carrere, Léo Féliers, Adrien Gauffriau, Vincent Mussot, Claire Pagetti, and Thierry Sammour. Lard–landing approach runway detection–dataset for vision based landing. preprint arXiv:2304.09938, 2023.
[10] Vincent Mussot et al. LARD V2. github.com/deel-ai/LARD, 2025.
[11] Vincent Mussot et al. Yolo models on LARD V2. github.com/deel-ai-papers/Yolo_models_LARD_V2, 2025.
[12] Mark Everingham, Luc Van Gool, Christopher KI Williams, John Winn, and Andrew Zisserman. The pascal visual object classes (voc) challenge. International journal of computer vision, 2010.
[13] João PK Ferreira, João P Pinto, Júlia Moura, Yi Li, Cristiano L Castro, and Plamen Angelov. Vision-based landing guidance through tracking and orientation estimation. In 2025 IEEE/CVF Winter Conference on Applications of Computer Vision (WACV). IEEE, 2025.
[14] Glenn Jocher and Jing Qiu. Ultralytics yolo11, 2024.
[15] Panagiotis Kouvaros, Francesco Leofante, Blake Edwards, Calvin Chung, Dragos Margineantu, and Alessio Lomuscio. Verification of semantic key point detection for aircraft pose estimation. In Proceedings of the International Conference on Principles of Knowledge Representation and Reasoning, 2023.
[16] Sofiane Kraïem, Augustin Fuchs, Gustav Öman Lundin, Tudor Avarvarei, Cédric Seren, and Mario Cassaro. A pose-based visual servoing strategy for the autonomous landing of an airliner using a yolov11 model for runway detection. In EUCASS 2025-European Conference for AeroSpace Sciences, 2025.
[17] Ye Li, Yu Xia, Guangji Zheng, Xiaoyang Guo, and Qingfeng Li. Yolo-rwy: A novel runway detection model for vision-based autonomous landing of fixed-wing unmanned aerial vehicles. Drones, 2024.
[18] Yi Li, Plamen Angelov, Zhengxin Yu, Alvaro Lopez Pellicer, and Neeraj Suri. Federated adversarial learning for robust autonomous landing runway detection. In International Conference on Artificial Neural Networks. Springer, 2024.
[19] Ying-Xi Lin and Ying-Chih Lai. Deep learning-based navigation system for automatic landing approach of fixed-wing uavs in gnss-denied environments. Aerospace, 12, 2025.
[20] Bastian Luettig, Yassine Akhiat, and Zamira Daw. Ml meets aerospace: challenges of certifying airborne ai. Frontiers in Aerospace Engineering, 2024.
[21] Will Orr and Kate Crawford. Building better datasets: Seven recommendations for responsible design from dataset creators, 2024.
[22] OurAirports. Open Airport and Navaid Data. ourairports.com/data. Accessed: 2025-11-25.
[23] SAE. J3016 Levels of Automated Driving, 2019.
[24] Hinrich Schütze, Christopher D Manning, and Prabhakar Raghavan. Introduction to information retrieval, volume 39. Cambridge University Press Cambridge, 2008.
[25] Huiying Shuaia, Jiwen Wang, Anqi Wang, Ran Zhang, and Xin Yang. Advances in assuring artificial intelligence and machine learning development lifecycle and their applications in aviation. In 2023 5th International Academic Exchange Conference on Science and Technology Innovation (IAECST). IEEE, 2023.
[26] Romeo Valentin, Sydney M. Katz, Artur B. Carneiro, Don Walker, and Mykel J. Kochenderfer. Predictive uncertainty for runtime assurance of a real-time computer vision-based landing system. In 44th Digital Avionics Systems Conference (DASC), 2025.
[27] Romeo Valentin, Sydney M Katz, Joonghyun Lee, Don Walker, Matthew Sorgenfrei, and Mykel J Kochenderfer. Probabilistic parameter estimators and calibration metrics for pose estimation from image features. In 2024 AIAA DATC/IEEE 43rd Digital Avionics Systems Conference (DASC). IEEE, 2024.
[28] Qiang Wang, Wenquan Feng, Hongbo Zhao, Binghao Liu, and Shuchang Lyu. Valnet: Vision-based autonomous landing with airport runway instance segmentation. Remote Sensing, 2024.
[29] Yu Zhang, Lincheng Shen, Yirui Cong, Dianle Zhou, and Daibing Zhang. Ground-based visual guidance in autonomous uav landing. In Sixth International Conference on Machine Vision (ICMV 2013). SPIE, 2013.
[30] Alya Zouzou, Mélanie Ducoffe, Ryma Boumazouza, et al. Robust vision-based runway detection through conformal prediction and conformal map. preprint arXiv:2505.16740, 2025.
