Inference in Regression Discontinuity Designs with Clustered Data

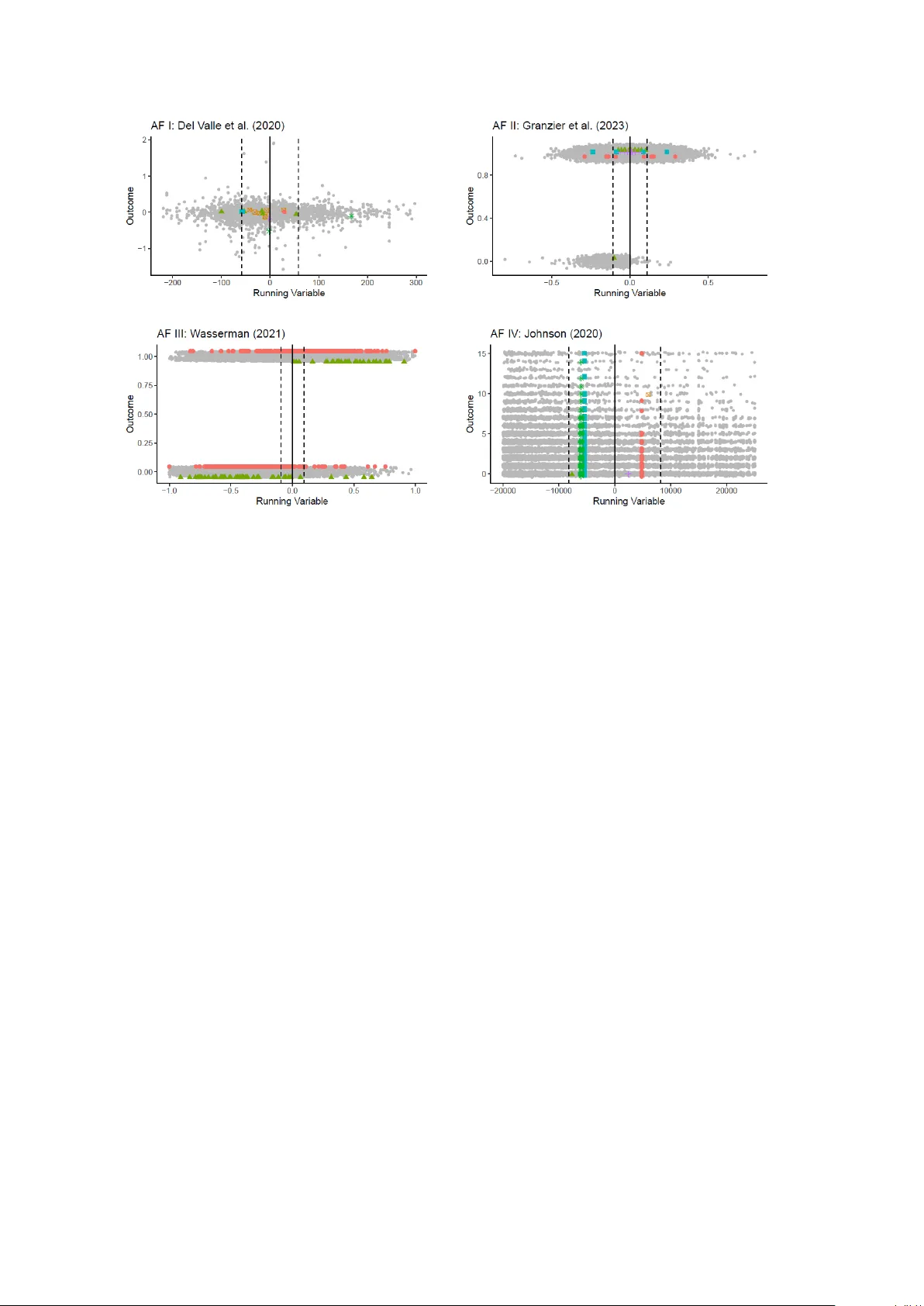

Clustered sampling is prevalent in empirical regression discontinuity (RD) designs but has received little attention in the theoretical literature. In this paper, we introduce a general model-based framework for such settings and derive high-le…

Authors: Claudia Noack, Tomasz Olma, Christoph Rothe