JUCAL: Jointly Calibrating Aleatoric and Epistemic Uncertainty in Classification Tasks

We study post-calibration uncertainty for trained ensembles of classifiers. Specifically, we consider both aleatoric (label noise) and epistemic (model) uncertainty. Among the most widely used calibration methods in classification are tem…

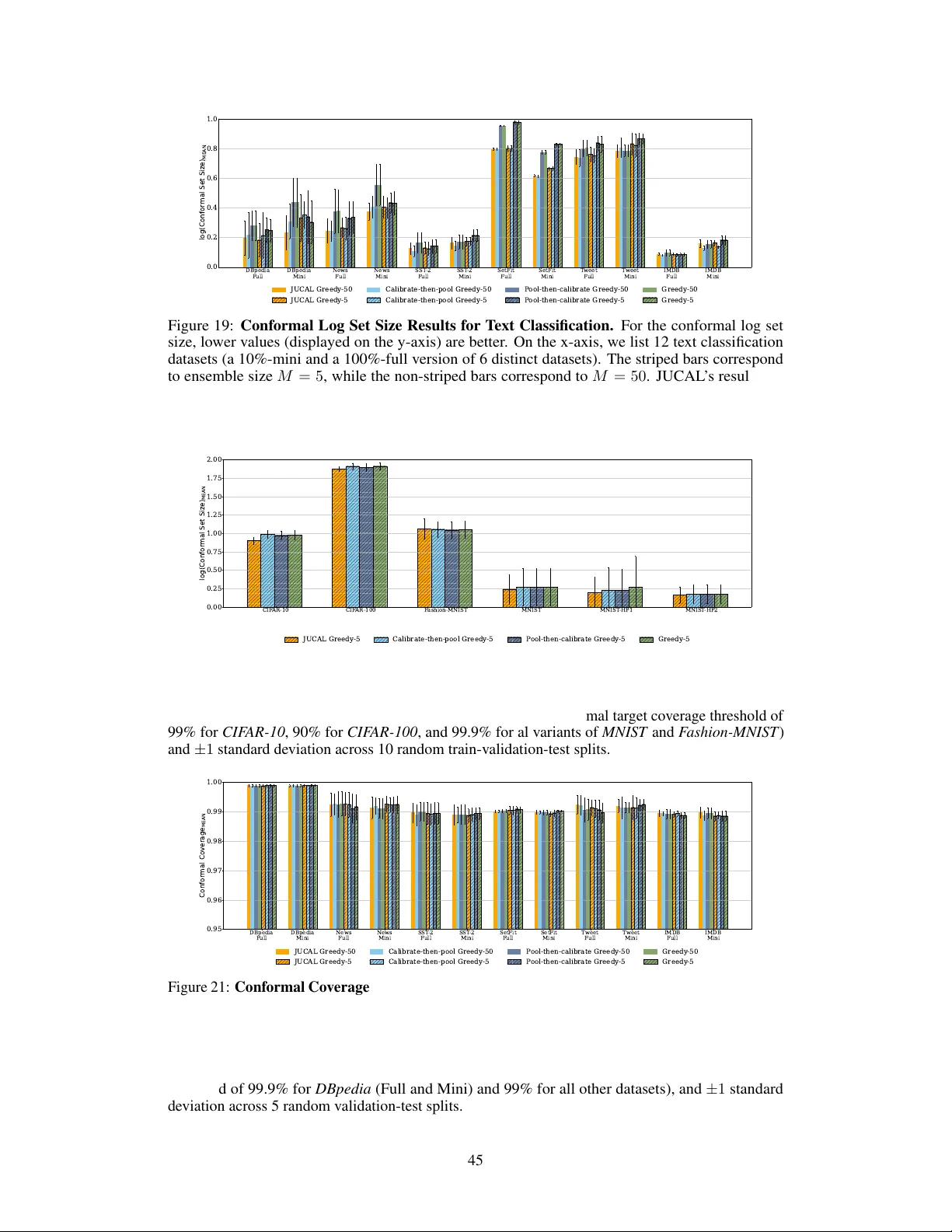

Authors: Jakob Heiss, Sören Lambrecht, Jakob Weissteiner