MGD: Moment Guided Diffusion for Maximum Entropy Generation

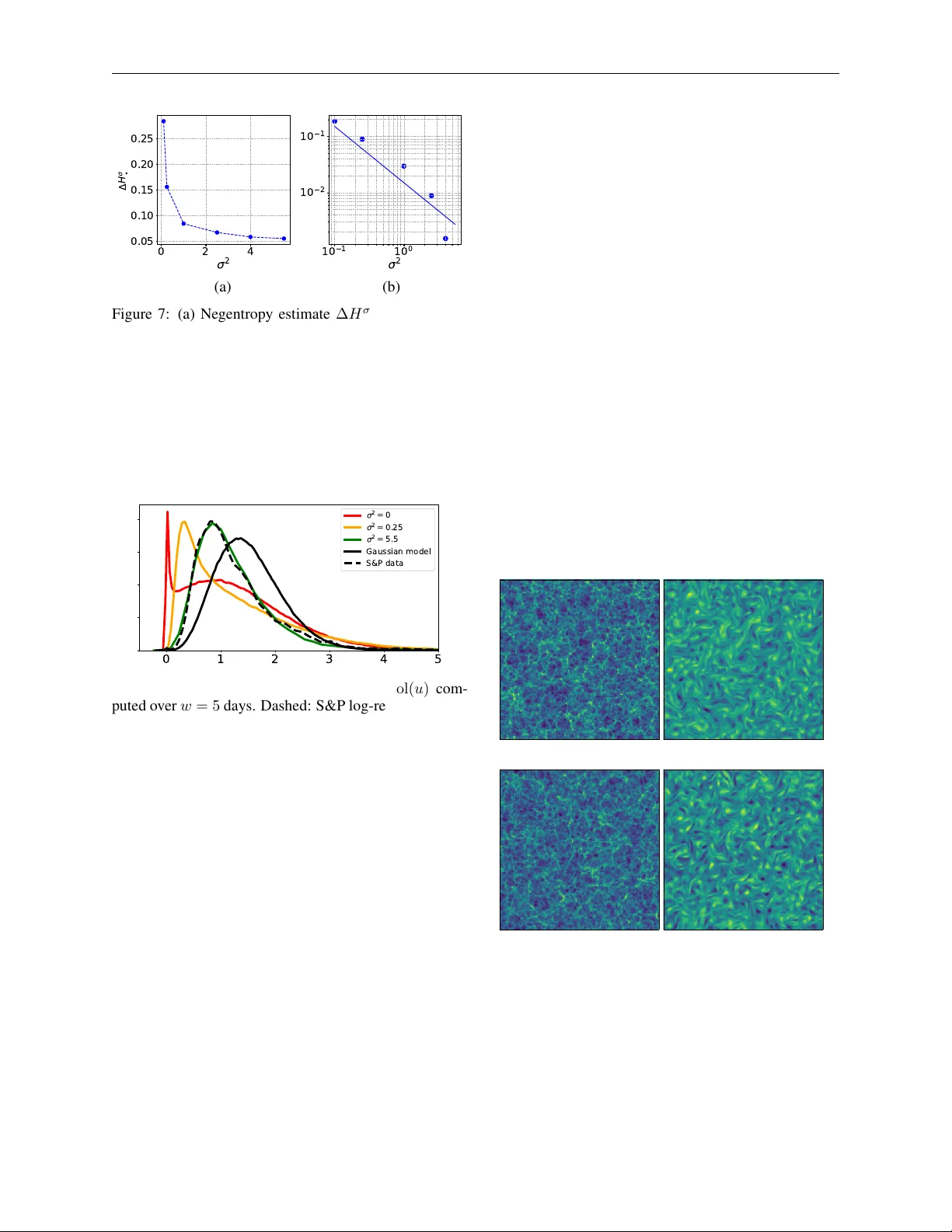

Generating samples from limited information is a fundamental problem across scientific domains. Classical maximum entropy methods provide principled uncertainty quantification from moment constraints but require sampling via MCMC or Langevin dynamics…

Authors: Etienne Lempereur, Nathanaël Cuvelle-Magar, Florentin Coeurdoux