FEM-Bench: A Structured Scientific Reasoning Benchmark for Evaluating Code-Generating LLMs

As LLMs advance their reasoning capabilities about the physical world, the absence of rigorous benchmarks for evaluating their ability to generate scientifically valid physical models has become a critical gap. Computational mechanics, which develops…

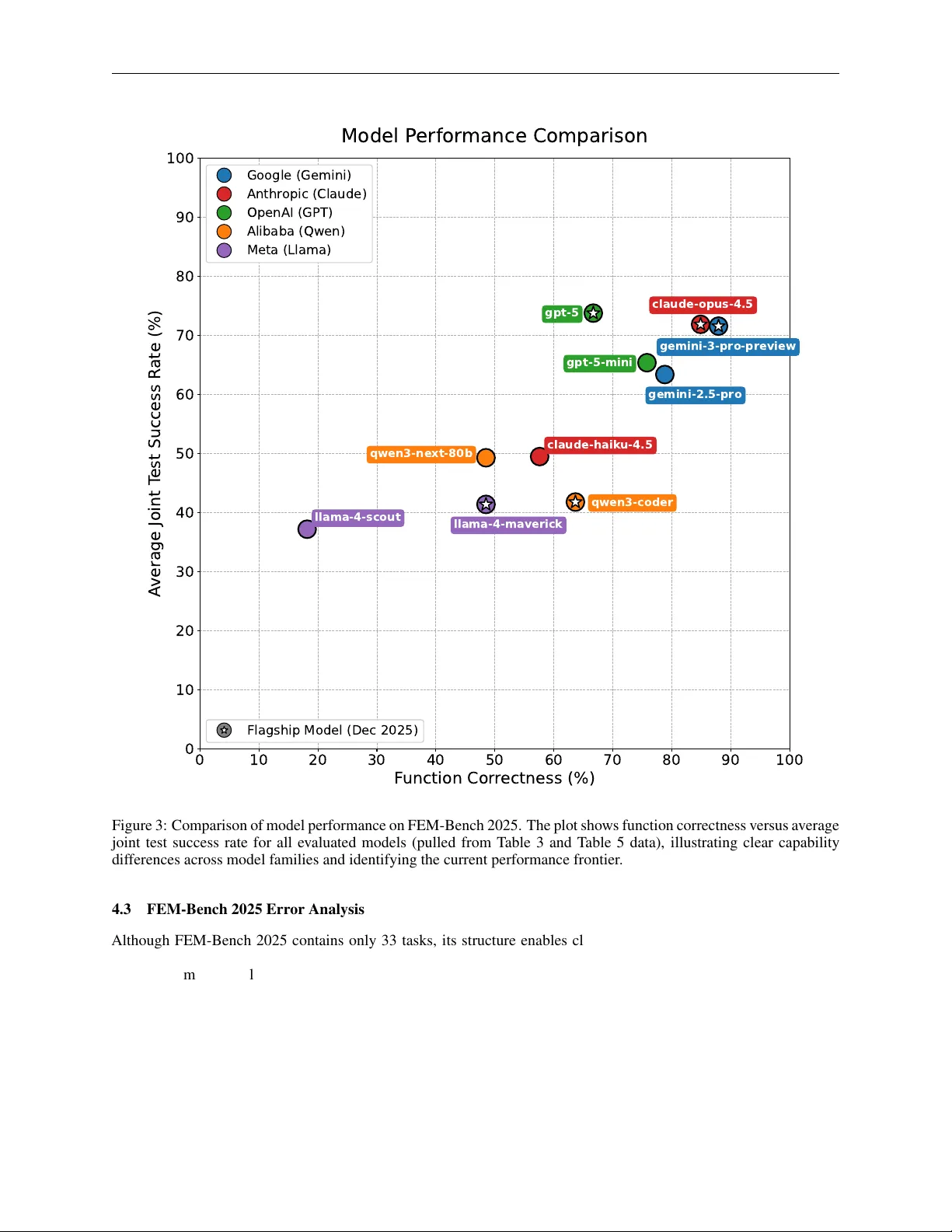

Authors:

- Saeed Mohammadzadeh* (Department of Mechanical Engineering, Boston University)
- Erfan Hamdi* (Department of Mechanical Engineering, Boston University)
- Joel Shor (Move37 Labs; Department of Mechanical Engineering