Robust Regression for Safe Exploration in Control

We study the problem of safe learning and exploration in sequential control problems. The goal is to safely collect data samples from operating in an environment, in order to learn to achieve a challenging control goal (e.g., an agile maneuver close …

Authors: Anqi Liu, Guanya Shi, Soon-Jo Chung

Proceedings of Machine Learning Research vol 120: 1 – 16 , 2020 2nd Annual Conference on Learning for Dynamics and Control Rob ust Regr ession f or Safe Exploration in Contr ol Anqi Liu A N Q I L I U @ C A LTE C H . E D U Guanya Shi G S H I @ C A LTE C H . E D U Soon-Jo Chung S J C H U N G @ C A LTE C H . E D U Anima Anandkumar A N I M A @ C A LTE C H . E D U Y isong Y ue Y Y U E @ C A LTE C H . E D U California Institute of T echnology Editors: A. Bayen, A. Jadbabaie, G. J. P appas, P . Parrilo, B. Recht, C. T omlin, M.Zeilinger Abstract W e study the problem of safe learning and exploration in sequential control problems. The goal is to safely collect data samples from operating in an en vironment, in order to learn to achiev e a challenging control goal (e.g., an agile maneuv er close to a boundary). A central challenge in this setting is how to quantify uncertainty in order to choose prov ably-safe actions that allow us to collect informativ e data and reduce uncertainty , thereby achieving both improved controller safety and optimality . T o address this challenge, we present a deep robust regression model that is trained to directly predict the uncertainty bounds for safe e xploration. W e deriv e generalization bounds for learning, and connect them with safety and stability bounds in control. W e demonstrate empirically that our robust re gression approach can outperform con ventional Gaussian process (GP) based safe exploration in settings where it is dif ficult to specify a good GP prior . Keyw ords: Safe Exploration, Rob ust Regression, Co v ariate Shift, Generalization, Stability 1. Introduction A k ey challenge in data-driv en design for robotic controllers is automatically and safely collecting training data. Consider safely landing a drone at fast landing speeds (e.g., be yond a human e xpert’ s piloting abilities). The dynamics are both highly non-linear and poorly modeled as the drone ap- proaches the ground ( Cheeseman and Bennett , 1955 ), but such dynamics can be learnable gi ven the appropriate training data ( Shi et al. , 2019 ). T o collect such data autonomously , one must guarantee safety while operating in the en vironment, which is the problem of safe explor ation . In the drone landing e xample, collecting informativ e training data requires the drone to land increasingly faster while not crashing. Figure 1 depicts an example, where the goal is to learn the most aggressive yet safe trajectory (orange), while not being ov erconfident and execute trajectories that crash (green); the initial nominal controller may only be able to ex ecute very conserv ati v e trajectories (blue). Figure 1: Fast drone landing In order to safely collect such informati ve train- ing data, we need to o vercome two dif ficulties. First, we must quantify the learning errors in out-of-sample data. Every step of data collection creates a shift in the training data distribution. More specifically , our setting is an instance of cov ariate shift, where the un- derlying true physics stay constant, but the sampling c 2020 A. Liu, G. Shi, S.-J. Chung, A. Anandkumar & Y . Y ue. R O B U S T R E G R E S S I O N F O R S A F E E X P L O R A T I O N I N C O N T RO L of the state space is biased by the data collection ( Chen et al. , 2016 ). In order to lev erage modern learning approaches, such as deep learning, we must reason about the impact of cov ariate shift when predicting on states not well represented by the training set. Second, we must reason about how to guarantee safety and stability when controlling using the current learned model. Our ultimate goal is to control the dynamical system with desired properties b ut staying safe and stable while data collection. The imperfect dynamical model’ s error translate to possible control error , which must be quantified and controlled. Our Contributions. In this paper , we propose a deep rob ust regression approach for safe ex- ploration in model-based control. W e vie w exploration as a data shift problem, i.e., the “test” data in the proposed exploratory trajectory comes from a shifted distribution compared to the training set. Our approach explicitly learns to quantify uncertainty under such cov ariate shift, which we use to learn robust dynamics models to quantify uncertainty of entire trajectories for safe e xploration. W e analyze learning performance from both generalization and data perturbation perspecti v es. W e use our robust regression analysis to derive stability bounds for control performance when learn- ing rob ust dynamics models, which is used for safe exploration. W e empirically show that our ap- proach outperforms con v entional safe e xploration approaches with much less tuning effort in two scenarios: (a) in verted pendulum trajectory tracking under wind disturbance; and (b) fast drone landing using an aerodynamics simulation based on real-world flight data ( Shi et al. , 2019 ). 2. Problem Setup At a high lev el, our problem can be framed as a three-way interaction of: (i) learning the unmodeled, or residual, dynamics from collected data, (ii) determining whether the current learned dynamics model enables tracking a gi v en trajectory within a safety set, and (iii) selecting trajectories for data collection that are both safe and informati ve, i.e., safe exploration. In the drone landing example in Figure 1 , the residual dynamics is the ground effect that perturbs the nominal multi-rotor model, the safety set is not crashing into the ground, and safe exploration pertains to selecting the most aggressi ve landing trajectory that is pro v ably safe with the current learned dynamics model. A Mixed Model f or Robotic Dynamics. W e consider a standard mixed model for continuous robotic dynamics ( Shi et al. , 2019 ): M ( q ) ¨ q + C ( q , ˙ q ) ˙ q + G ( q ) − B u = d ( q , ˙ q ) | {z } unknown , with generalized coordinates q ∈ R n (and their first & second time deri v ati ves, ˙ q & ¨ q ), control input u ∈ R m , inertia matrix M ( q ) ∈ S n ++ , centrifugal and Coriolis terms C ( q , ˙ q ) ∈ R n × n , gravitational forces G ∈ R n , actuation matrix B ∈ R n × m and some unkno wn residual dynamics d ∈ R n . Note that the C matrix is chosen to make ˙ M − 2 C ske w-symmetric from the relationship between the Riemannian metric M ( q ) and Christof fel symbols. Here d is general, which potentially captures both parametric and nonparametric unmodeled terms. W e aim to learn the unknown, or residual, dynamics d ( q, ˙ q ) using machine learning models. The intuition behind this hybrid dynamical model is the sample efficiency of learning the residual should be much smaller than learning the whole model directly from data. Model Based Nonlinear Control. T o keep the ancillary design choices simple, we employ a standard nonlinear controller design ( Shi et al. , 2019 ). Define the reference trajectory as ˙ q r = ˙ q g − Λ ˜ q , where ˜ q = q − q g , and the composite variable as s = ˙ q − ˙ q r = ˙ ˜ q + Λ ˜ q , where Λ is uniformly positive definite. The control objectiv e is to driv e s to 0 or a small error ball in the presence of bounded uncertainty . Assuming we had a good estimate ˆ d ( q , ˙ q ) of d ( q , ˙ q ) , then our 2 R O B U S T R E G R E S S I O N F O R S A F E E X P L O R A T I O N I N C O N T RO L controller is: u = B † ( M ( q ) ¨ q r + C ( q , ˙ q ) ˙ q r − K s + G ( q ) − ˆ d ( q , ˙ q )) , (1) where K is a uniformly positi ve definite matrix, and † denotes the Moore-Penrose pseudoin v erse. W ith the control la w Eq. 1 , we will hav e the follo wing closed-loop dynamics: M ( q ) 0 0 I ˙ s ˙ ˜ q + C ( q , ˙ q ) + K 0 − I Λ s ˜ q = d − ˆ d 0 = 0 . (2) where = d − ˆ d is the approximation error between d and ˆ d . Safety Requirements. For any time-varying desired trajectory , x g ( t ) = [ q g ( t ) , ˙ q g ( t )] , we must certify safety during trajectory tracking: x ( t ) ∈ S , ∀ t , with high probability , where S is some safety set. It is obvious that x g ( t ) ∈ S , ∀ t . Ho wev er , because of unknown dynamics d ( q , ˙ q ) , the tracking error ˜ x ( t ) , x ( t ) − x g ( t ) may be large such that ∃ t, x ( t ) / ∈ S . In the drone landing example in Figure 1 , the safety set is that the vertical velocity at the point of landing should not exceed an upper limit (otherwise the drone is considered to ha v e crash landed). Safe Exploration. The ultimate goal is to identify a model (and accompanying controller) that can safely track trajectories with minimal cost. W e assume that the cost function o ver trajectories is kno wn (e.g., landing as quickly as possible), b ut certifying safety is difficult. The goal of safe ex- ploration is then to select a trajectory to track that is both prov ably safe (with the current model) and leads to informati ve training data for improving safety certification. Our safe e xploration procedure is thus to choose the lowest cost safe trajectory , which is a trajectory that lies at the boundary of the current safety set and closest to the ov erall minimal cost trajectory . Feat ure Mat chi ng Adver sary Q ( y | x ) AAAB7XicbVBNSwMxEJ2tX7V+VT16CRahXsquCHosevHYgv2AdinZNNvGZpMlyYrL2v/gxYMiXv0/3vw3pu0etPpg4PHeDDPzgpgzbVz3yymsrK6tbxQ3S1vbO7t75f2DtpaJIrRFJJeqG2BNORO0ZZjhtBsriqOA004wuZ75nXuqNJPi1qQx9SM8EixkBBsrtZvV9PHhdFCuuDV3DvSXeDmpQI7GoPzZH0qSRFQYwrHWPc+NjZ9hZRjhdFrqJ5rGmEzwiPYsFTii2s/m107RiVWGKJTKljBorv6cyHCkdRoFtjPCZqyXvZn4n9dLTHjpZ0zEiaGCLBaFCUdGotnraMgUJYanlmCimL0VkTFWmBgbUMmG4C2//Je0z2qeW/Oa55X6VR5HEY7gGKrgwQXU4QYa0AICd/AEL/DqSOfZeXPeF60FJ585hF9wPr4BDOmOxQ== AAAB7XicbVBNSwMxEJ2tX7V+VT16CRahXsquCHosevHYgv2AdinZNNvGZpMlyYrL2v/gxYMiXv0/3vw3pu0etPpg4PHeDDPzgpgzbVz3yymsrK6tbxQ3S1vbO7t75f2DtpaJIrRFJJeqG2BNORO0ZZjhtBsriqOA004wuZ75nXuqNJPi1qQx9SM8EixkBBsrtZvV9PHhdFCuuDV3DvSXeDmpQI7GoPzZH0qSRFQYwrHWPc+NjZ9hZRjhdFrqJ5rGmEzwiPYsFTii2s/m107RiVWGKJTKljBorv6cyHCkdRoFtjPCZqyXvZn4n9dLTHjpZ0zEiaGCLBaFCUdGotnraMgUJYanlmCimL0VkTFWmBgbUMmG4C2//Je0z2qeW/Oa55X6VR5HEY7gGKrgwQXU4QYa0AICd/AEL/DqSOfZeXPeF60FJ585hF9wPr4BDOmOxQ== AAAB7XicbVBNSwMxEJ2tX7V+VT16CRahXsquCHosevHYgv2AdinZNNvGZpMlyYrL2v/gxYMiXv0/3vw3pu0etPpg4PHeDDPzgpgzbVz3yymsrK6tbxQ3S1vbO7t75f2DtpaJIrRFJJeqG2BNORO0ZZjhtBsriqOA004wuZ75nXuqNJPi1qQx9SM8EixkBBsrtZvV9PHhdFCuuDV3DvSXeDmpQI7GoPzZH0qSRFQYwrHWPc+NjZ9hZRjhdFrqJ5rGmEzwiPYsFTii2s/m107RiVWGKJTKljBorv6cyHCkdRoFtjPCZqyXvZn4n9dLTHjpZ0zEiaGCLBaFCUdGotnraMgUJYanlmCimL0VkTFWmBgbUMmG4C2//Je0z2qeW/Oa55X6VR5HEY7gGKrgwQXU4QYa0AICd/AEL/DqSOfZeXPeF60FJ585hF9wPr4BDOmOxQ== AAAB7XicbVBNSwMxEJ2tX7V+VT16CRahXsquCHosevHYgv2AdinZNNvGZpMlyYrL2v/gxYMiXv0/3vw3pu0etPpg4PHeDDPzgpgzbVz3yymsrK6tbxQ3S1vbO7t75f2DtpaJIrRFJJeqG2BNORO0ZZjhtBsriqOA004wuZ75nXuqNJPi1qQx9SM8EixkBBsrtZvV9PHhdFCuuDV3DvSXeDmpQI7GoPzZH0qSRFQYwrHWPc+NjZ9hZRjhdFrqJ5rGmEzwiPYsFTii2s/m107RiVWGKJTKljBorv6cyHCkdRoFtjPCZqyXvZn4n9dLTHjpZ0zEiaGCLBaFCUdGotnraMgUJYanlmCimL0VkTFWmBgbUMmG4C2//Je0z2qeW/Oa55X6VR5HEY7gGKrgwQXU4QYa0AICd/AEL/DqSOfZeXPeF60FJ585hF9wPr4BDOmOxQ== Estimat or P ( y | x ) AAAB7XicbVBNSwMxEJ2tX7V+VT16CRahXsquCHosevFYwX5Au5Rsmm1js8mSZMVl7X/w4kERr/4fb/4b03YP2vpg4PHeDDPzgpgzbVz32ymsrK6tbxQ3S1vbO7t75f2DlpaJIrRJJJeqE2BNORO0aZjhtBMriqOA03Ywvp767QeqNJPizqQx9SM8FCxkBBsrtRrV9OnxtF+uuDV3BrRMvJxUIEejX/7qDSRJIioM4VjrrufGxs+wMoxwOin1Ek1jTMZ4SLuWChxR7WezayfoxCoDFEplSxg0U39PZDjSOo0C2xlhM9KL3lT8z+smJrz0MybixFBB5ovChCMj0fR1NGCKEsNTSzBRzN6KyAgrTIwNqGRD8BZfXiats5rn1rzb80r9Ko+jCEdwDFXw4ALqcAMNaAKBe3iGV3hzpPPivDsf89aCk88cwh84nz8LYI7E AAAB7XicbVBNSwMxEJ2tX7V+VT16CRahXsquCHosevFYwX5Au5Rsmm1js8mSZMVl7X/w4kERr/4fb/4b03YP2vpg4PHeDDPzgpgzbVz32ymsrK6tbxQ3S1vbO7t75f2DlpaJIrRJJJeqE2BNORO0aZjhtBMriqOA03Ywvp767QeqNJPizqQx9SM8FCxkBBsrtRrV9OnxtF+uuDV3BrRMvJxUIEejX/7qDSRJIioM4VjrrufGxs+wMoxwOin1Ek1jTMZ4SLuWChxR7WezayfoxCoDFEplSxg0U39PZDjSOo0C2xlhM9KL3lT8z+smJrz0MybixFBB5ovChCMj0fR1NGCKEsNTSzBRzN6KyAgrTIwNqGRD8BZfXiats5rn1rzb80r9Ko+jCEdwDFXw4ALqcAMNaAKBe3iGV3hzpPPivDsf89aCk88cwh84nz8LYI7E AAAB7XicbVBNSwMxEJ2tX7V+VT16CRahXsquCHosevFYwX5Au5Rsmm1js8mSZMVl7X/w4kERr/4fb/4b03YP2vpg4PHeDDPzgpgzbVz32ymsrK6tbxQ3S1vbO7t75f2DlpaJIrRJJJeqE2BNORO0aZjhtBMriqOA03Ywvp767QeqNJPizqQx9SM8FCxkBBsrtRrV9OnxtF+uuDV3BrRMvJxUIEejX/7qDSRJIioM4VjrrufGxs+wMoxwOin1Ek1jTMZ4SLuWChxR7WezayfoxCoDFEplSxg0U39PZDjSOo0C2xlhM9KL3lT8z+smJrz0MybixFBB5ovChCMj0fR1NGCKEsNTSzBRzN6KyAgrTIwNqGRD8BZfXiats5rn1rzb80r9Ko+jCEdwDFXw4ALqcAMNaAKBe3iGV3hzpPPivDsf89aCk88cwh84nz8LYI7E AAAB7XicbVBNSwMxEJ2tX7V+VT16CRahXsquCHosevFYwX5Au5Rsmm1js8mSZMVl7X/w4kERr/4fb/4b03YP2vpg4PHeDDPzgpgzbVz32ymsrK6tbxQ3S1vbO7t75f2DlpaJIrRJJJeqE2BNORO0aZjhtBMriqOA03Ywvp767QeqNJPizqQx9SM8FCxkBBsrtRrV9OnxtF+uuDV3BrRMvJxUIEejX/7qDSRJIioM4VjrrufGxs+wMoxwOin1Ek1jTMZ4SLuWChxR7WezayfoxCoDFEplSxg0U39PZDjSOo0C2xlhM9KL3lT8z+smJrz0MybixFBB5ovChCMj0fR1NGCKEsNTSzBRzN6KyAgrTIwNqGRD8BZfXiats5rn1rzb80r9Ko+jCEdwDFXw4ALqcAMNaAKBe3iGV3hzpPPivDsf89aCk88cwh84nz8LYI7E Estima t e Wo rs t - ca se Error Bound Tr a j e c t o r y Po ol Contro ller Query Tr a j e c t o r y New Data Figure 2: Our overall formulation. In the learning component, our estimator is robust to the worst- case model of the dynamics that is consistent with the observed source data, which we elaborate in Section 3 . The learning and tracking error bound is then used for picking a trajectory that is safe if the worst case scenario is safe, whose details are in Section 4 . W ith more source data, the worst-case model is constrained tighter along the e xploration. W e present the full algorithm in Section 5 . 3. Learning Residual Dynamics as Rob ust Regression under Covariate Shift Our learning problem is to estimate the residual dynamics d ( q , ˙ q ) in a way that admits rigorous un- certainty estimates for safety certification. The ke y challenge is that the training data and test data are not sampled from the same distribution, which can be framed as cov ariate shift ( Shimodaira , 3 R O B U S T R E G R E S S I O N F O R S A F E E X P L O R A T I O N I N C O N T RO L 2000 ). Cov ariate shift refers to distrib ution shift caused by the input v ariables P ( x ) , while keeping P ( y ≡ d ( x ) | x ) fixed. In our moti v ating safe landing example, there is a univ ersal “true” aerody- namics model, but we typically only observe training data from a limited source data distrib ution P src ( x ) . Certifying safety of a proposed trajectory will inevitably cov er states that are not well- represented by the training, i.e., data from a target data distribution P trg ( x ) . In other words, the distribution of states in a proposed trajectory is not the distrib ution states in the training data. General intuition. W e use robust regression ( Chen et al. , 2016 ) to estimate the residual dy- namics under covariate shift. Robust regression is deriv ed from a minimax estimation framework ( Gr ¨ unwald et al. , 2004 ), where the estimator P ( y | x ) tries to minimize a loss function on tar get data distribution L , and the adv ersary Q ( y | x ) tries to maximize the loss under source data constraints Γ : min P ( y | x ) max Q ( y | x ) ∈ Γ L . (3) Using the minimax framework, we achiev e rob ustness to the worst-case possible conditional dis- tribution that is “compatible” with finite training data if the estimator reaches the Nash equilibrium by minimizing a loss function defined on target data distrib ution. T echnical Design Choices. Our deri v ation hinges on a choice of loss function L and constraint set for the adversary Γ , from which one can deriv e a formal objectiv e, a learning algorithm, and an uncertainty bound. W e use a relativ e loss function defined as the dif ference in conditional log- loss between an estimator P ( y | x ) and a baseline conditional distribution P 0 ( y | x ) on the target data distribution P trg ( x ) P ( y | x ) : relati ve loss L := E P trg ( x ) Q ( y | x ) h − log P ( y | x ) P 0 ( y | x ) i . T o construct the constraint set Γ , we utilize statistical properties of the source data distribution P src ( x ) : Γ := { Q ( y | x ) || E P src ( x ) Q ( y | x ) [Φ( x, y )] − c | ≤ λ, } where φ ( x, y ) correspond to the sufficient statistics of the estimation task, and c = 1 n P n i = n Φ( x i , y i ) is a vector of sample mean of statistics in the source data. This constraint means the adversary can- not choose a distribution whose suf ficient statistics de viate too f ar from the collected training data. The consequence of the above choices is that the solution has a parametric form: P ( y | x ) ∝ P 0 ( y | x ) e P src ( x ) P trg ( x ) θ T Φ( x,y ) . This form has two useful properties. First, it is straightforward to compute gradients on θ using only the training data. One can also train deep neural networks by treating Φ( x, y ) as the last hidden layer , i.e, we learn a representation of the suf ficient statistics. Second, this form yields a concrete uncertainty bound (see Section 4 ) that can be used to certify safety . For specific choices of P 0 and Φ , the uncertainty is Gaussian distrib uted, which can be useful for many stochastic control approaches that assume Gaussian uncertainty . 1 . 4. From Learning Guarantees to T racking Guarantees W e demonstrate that we bound the learning errors on possible target data and further bound the tracking error . W e then apply the bound to certify safety . The proofs are in the appendix. Learning Guarantees. The learning performance of robust re gression approach can be ana- lyzed from tw o perspecti ves: generalization error under covaraite shift and perturbation error based on Lipschitz continuity . The generalization error reflects the expected error on a tar get distribution gi ven certain function class, bounded distrib ution discrepancy , and base distrib ution. The perturba- tion error reflects the maximum error if target data deviates from training but stays in a Lipschitz 1. Our method generalizes naturally to multidimensional output setting, where we predict a multidimensional Gaussian. 4 R O B U S T R E G R E S S I O N F O R S A F E E X P L O R A T I O N I N C O N T RO L ball. These error bounds are compatible with deep neural networks whose Rademacher complexity and Lipschitz constant can be controlled and measured (e.g., spectral-normalized neutral networks). Theorem 1 Assume S is a training set with i.i.d. data x i , ..., x n sampled fr om P sr c ( x ) , F is a r e gression function class satisfying sup x ∈X ,f ,f 0 ∈F | f ( x ) − f 0 ( x ) | ≤ M , ˆ R S ( F ) is the Rademacher complexity on S , W is the upper bound of true density ratio sup x ∼ P src ( x ) P trg ( x ) P src ( x ) ≤ W , θ y > 0 is lower bounded by B , the weight estimation for the pr ediction ˆ r ( x ) = ˆ P src ( x ) ˆ P trg ( x ) is lower bounded: inf x ∈ S ˆ r ( x ) ≥ R , base distribution variance is σ 2 0 , and λ is the upperbound of all λ i among the dimensions of φ ( x ) . When learning a ˆ f ∈ F on P trg ( x, y ) , the following generalization err or bound holds with pr obability at least 1 − δ , E P trg ( x,y ) [( y − ˆ f ( x )) 2 ] ≤ W (2 RB + σ − 2 0 ) − 1 + λ + 4 M ˆ R S ( F ) + 3 M 2 s log 2 δ 2 n . If we assume that targ et data samples x ’ s stay in a ball B ( ) with diameter fr om the sour ce data S , B ( ) = { x | sup x 0 ∈ S k x − x 0 k ≤ } , the true function f ( x ) is Lipsc hitz continuous with constant L , and the r ob ust r e gr ession mean estimator ˆ f is also Lipschitz continuous with constant ˆ L , then, sup x ∈ B ( ) ,y ∼ f ( x ) [( y − ˆ f ( x )) 2 ] ≤ ((2 RB + σ − 2 0 ) − 1 / 2 + √ λ + L + ˆ L k k ) 2 . (4) The density ratio W can be controlled by choosing the tar get distrib ution carefully in the safe exploration algorithm (Alg. 1). In other words, we can design the desired trajectories to be close enough to the training set so that the resulting tracking bounds are tight enough to guarantee safety . T racking Guarantees. W e set k k 2 = k y − ˆ f ( x ) k 2 to correspond with the learning bounds. The tar get data is set to a single proposed trajectory x trg ( t ) , which means W can be bounded. The second option is to use a perturbation bound, where x trg ( t ) ∈ B ( ) . W e emphasize that k k is upper bounded with k k ≤ sup x ∈ x trg ( t ) k ( x ) k when we define target data in a specific set and use rob ust regression for learning dynamics. W e sho w k x ( t ) − x g ( t ) k , k ˜ x ( t ) k (Euclidean distance between the desired trajectory and the real trajectory) is bounded when the error of the dynamics estimation is bounded. Again, recall that x = [ q , ˙ q ] is our state, and x g ( t ) is the desired trajectory . Theorem 2 Suppose x is in some compact set X , and m = sup x ∈X k k . Then ˜ x will e xponentially con ver ge to the following ball: lim t →∞ k ˜ x ( t ) k = γ · m , wher e γ = λ max ( M ) λ min ( K ) λ min ( M ) s ( 1 λ min (Λ) ) 2 + (1 + λ max (Λ) λ min (Λ) ) 2 , (5) wher e λ max denotes the maximum eigen value and λ min denotes the minimum eigen value. Integration to safe exploration. W e can integrate the bounds on learning error and tracking error into safe e xploration. Specially , if we can design a compact set X and find the corresponding maximum error bound m = sup x ∈X k k on it, we can use it to decide whether a trajectory in this set is safe or not by checking whether its worst-case possible tracking trajectory is in the safety set S . Then we only pick the safe trajectories with the minimum cost in data collection. 5 R O B U S T R E G R E S S I O N F O R S A F E E X P L O R A T I O N I N C O N T R O L 5. Safe Exploration Algorithm For simplicity , we maintain a finite set of candidate trajectories to select from for safe exploration; future w ork includes integration with continuous trajectory optimization ( Nakka and Chung , 2019 ). The worst-case tracking trajectories can be computed by generating a “tube” using euclidean dis- tance in Theorem 2 . W e then eliminate unsafe ones and choose the most “aggressiv e” one in terms of our cost function for the next iteration. Instead of ev aluating the actual upper bound, we use β · max x σ ( x ) for measuring m as an approximation, since it is guaranteed that the error is within β · max x σ ( x ) with high probability as long as the prediction is a Gaussian distribution, if the true function is dra wn from the same distribution. Here σ ( x ) is the standard de viation of the Gaussian distribution predicted by our rob ust regression algorithm. Algorithm 1 describes this procedure. 6. Experiments Algorithm 1 Safe Exploration for Control using Robust Dynamics Estimation Input : Pool of desired trajectories x k g ( t ) , k = 1 , 2 , ...., K ; cost function J ; robust regression model of dynamics f ; controller U ; safety set S ; base distribution N ( µ 0 , σ 2 0 ) ; parameter β Initialize dynamics model f 0 = N ( µ 0 , σ 2 0 ) Initialize training set = ∅ , f = f 0 While e = 1 , ..., E Safe trajectory list L = ∅ For k = 1 , ..., K Predict ( µ, σ 2 ) = f ( x k g ( t )) σ m = max σ ( x k g ( t )) ; m = β · σ m If worst-case trajectory in S Add x k g ( t ) to L T rack x ∗ g ( t ) = arg min x g ( t ) ∈ L J ( x g ( t )) to collect data x 0 ( t ) using controller U Add data x 0 ( t ) to T raining set T rain dynamics model f 0 , f = f 0 Output : dynamics model f , last desired trajec- tory x E ( t ) and actual trajectory x 0 E ( t ) W e conduct simulation experiments on the in- verted pendulum and drone landing. W e use ker - nel density estimation to estimate the density ra- tios. W e demonstrate that our approach can reli- ably and safely con verge to optimal beha vior . W e also compare with a Gaussian process (GP) ver - sion of Algorithm 1 . In general, we find it is dif- ficult to tune the GP kernel parameters, especially in the multidimensional output cases. Example 1 (inv erted pendulum with external wind). Unlike the classical pendulum model, we consider unknown external wind. Dynamics can be described as ml 2 ¨ q − ml g sin q − u = d ( q , ˙ q ) , where d ( q , ˙ q ) is e xternal torque gener- ated by the unknown wind. Our control goal is to track q g ( t ) = sin( t ) , and the safety set is S = { ( q, ˙ q ) : | q | < 1 . 5 } . W e design a desired trajectory pool using P ( C ) = { q g ( t ) = C · sin( t ) , 0 < C ≤ 1 } . The ground truth of wind comes from quadratic air drag model. W e use the angle upper bound in trajectory as the re ward function for choosing “most aggressiv e” trajectories. W e use base distrib ution N (0 , 0 . 5) to start with and β = 0 . 5 . 𝛼 𝑙 𝑚 𝑢 𝑔 u nknown ex ternal wind uns afe regi on 𝑧 u nknown aerodynam ic s 𝑚 𝑔 ground 𝑢 sa fe co nd itio n: l anding spe ed < 1m /s Figure 3: Illustration of two examples Example 2 (drone landing with ground effect) W e consider drone landing with unkno wn ground effect. Dynamics is m ¨ q + mg − c T u 2 = d ( q , ˙ q ) , where c T is the thrust coefficient. The control goal is smooth and quick landing, i.e., quickly driving q → 0 . The safety set is S = { ( q , ˙ q ) : when q = 0 , ˙ q > − 1 } , i.e., the drone cannot hit the ground with high velocity . Our desired trajectory pool is P ( C , h g ) = { q g ( t ) = e − C t (1 + C t )(1 . 5 − h g ) + h g , 0 < C, 0 ≤ h g < 1 . 5 } , which means the drone smoothly mo ves from z (0) = 1 . 5 to the desired height h d . If h d = 0 , the 6 R O B U S T R E G R E S S I O N F O R S A F E E X P L O R A T I O N I N C O N T R O L drone lands successfully . Greater C means faster landing. W e use landing time to determine the next “aggressi ve” trajectory . The ground truth of aerodynamics comes from a dynamics simulator that is trained in ( Shi et al. , 2019 ), where d ( q , ˙ q ) is a four-layer ReLU neural network trained by real flying data. W e use base distribution N (0 , 1) for rob ust regression and β = 1 . (a) (b) (c) (d) (e) (f) (g) (h) Figure 4: T op Ro w . The pendulum task: (a)-(c) are the phase portraits of angle and angular v eloc- ity; Blue curve is tracking the desired trajectory with ground-truth disturbance; the worst- case possible trajectory is calculated according to Theorem 2 ; heatmap is the difference between predicted dynamics (the wind) and the ground truth; and (d) is the tracking er- ror and the maximum density ratio. Bottom Row . The drone landing task: (e)-(g) are the phase portraits with height and v elocity; heatmap is dif ference between the predicted ground ef fect) and the ground truth; (h) is the comparison with GPs in landing time. Result Analysis Figure 4 (a) to (c) and (e) to (g) demonstrate the exploration process with selected desired trajectories, worst-case tracking trajectory under current dynamics model, tracking trajecto- ries with the ground truth unknown dynamics model, and actual tracking trajectories. Note that for landing we learn three-dimensional ground ef fect where d ( q , ˙ q ) corresponds to the z -component, while the trajectory design and error bound computation depend on z -component. In both tasks, the algorithm selects small C to guarantee safety at the beginning, and gradually is able to select larger C v alues and track it while staying safe. W e also demonstrate the decaying tracking error in each iteration for the pendulum task in Figure 4 (d). W e v alidate that our density ratio is al ways bounded along the exploration. W e examine the drone landing time in Figure 4 (h) and compare against multitask GP models ( Bonilla et al. , 2008 ) with both RBF kernel and Matern kernel. Our approach outperforms all GP models. Modeling the ground effect is notoriously challenging ( Shi et al. , 2019 ), and the GP suffers from model misspecification, especially in the multidimensional setting ( Owhadi et al. , 2015 ). Besides, GP models are also more computationally expensi ve than our method in making predictions. In contrast, our approach can fit general non-linear function estimators such as deep neural networks adapti vely to the av ailable data ef ficiently , which leads to more flexible inducti ve bias and better fitting of the data and uncertainty quantification. 7 R O B U S T R E G R E S S I O N F O R S A F E E X P L O R A T I O N I N C O N T R O L 7. Related W ork Safe Exploration. Most approaches for safe exploration use Gaussian processes (GPs) to quantify uncertainty ( Sui et al. , 2015 , 2018 ; Kirschner et al. , 2019 ; Akametalu et al. , 2014 ; Berkenkamp et al. , 2016 ; T urchetta et al. , 2016 ; W achi et al. , 2018 ; Berk enkamp et al. , 2017 ; Fisac et al. , 2018 ; Khalil and Grizzle , 2002 ). These methods are related to bandit algorithms ( Bubeck et al. , 2012 ) and typically employ upper confidence bounds ( Auer , 2002 ) to balance exploration versus exploita- tion ( Srini vas et al. , 2010 ). Howe ver , GPs are sensitiv e to model (i.e., the kernel) selection, and thus are often not suitable for tasks that aim to gradually reach boundaries of safety sets in a highly non- linear en vironment. In the high-dimensional case and under finite information, GPs suffer from bad priors e ven more se verely ( Owhadi et al. , 2015 ). One could blend GP-based modeling with general function approximations (such as deep learning) ( Berkenkamp et al. , 2017 ; Cheng et al. , 2019a ), but the resulting optimization-based control problem can be challenging to solve. Other approaches either require having a safety model pre-specified upfront ( Alshiekh et al. , 2018 ), are restricted to relati vely simple models ( Moldov an and Abbeel , 2012 ), hav e no con vergence guarantees during learning ( T aylor et al. , 2019 ), or have no safety guarantees ( Garcia and Fern ´ andez , 2012 ). Distribution Shift. The study of learning under distribution shift has seen increasing interest, o wing to the widespread practical issue of distribution mismatch. Our work is stylistically similar to ( Liu et al. , 2015 ; Chen et al. , 2016 ; Liu and Ziebart , 2014 , 2017 ), which also frame uncertainty quantification through the lens of cov ariate shift, although ours is the first to e xtend to deep neural networks with rigorous guarantees. Dealing with domain shift is a fundamental challenge in deep learning, as highlighted by their vulnerability to adversarial inputs ( Goodfellow et al. , 2014 ), and the implied lack of robustness. Beyond robust estimation, the typical approaches are to either re gularize ( Sri v astav a et al. , 2014 ; W ager et al. , 2013 ; Le et al. , 2016 ; Bartlett et al. , 2017 ; Miyato et al. , 2018 ; Shi et al. , 2019 ; Benjamin et al. , 2019 ; Cheng et al. , 2019b ) or synthesize an augmented dataset that anticipates the domain shift ( Prest et al. , 2012 ; Zheng et al. , 2016 ; Stew art and Ermon , 2017 ). W e also utilize spectral normalization ( Bartlett et al. , 2017 ) in conjunction with robust estimation. Robust and Adaptiv e Control. Rob ust control ( Zhou and Doyle , 1998 ) and adapti ve control ( Slotine et al. , 1991 ) are two classical frameworks to handle uncertainties in the dynamics. GPs hav e been combined with nonlinear MPC for online adaptation and uncertainty estimation ( Ostafew et al. , 2016 ). Ho we ver , robust control suf fers from large uncertainty set and it is hard to analyse con ver gence and quantify uncertainty in adapti ve control. Ours is the first to explicitly consider cov ariate shift in learning dynamics. W e pick the re gion to estimate uncertainty carefully and adapt the controller to track safe proposed trajectory in data collection. 8. Conclusion In this paper , we propose an algorithmic framework for safe exploration in model-based control. T o quantify uncertainty , we de velop a rob ust deep regression method for dynamics estimation. Using robust re gression, we e xplicitly deal with data shifts during episodic learning, and in particular can quantify uncertainty ov er entire trajectories. W e prove the generalization and perturbation bounds for rob ust regression, and show how to integrate with control to deri ve safety bounds in terms of stability . These bounds explicitly translates the error in dynamics learning to the tracking error in control. From this, we design a safe exploration algorithm based on a finite pool of desired trajectories. W e empirically sho w that our method achieves superior performance than GP-based methods in control of an in verted pendulum and drone landing examples 8 R O B U S T R E G R E S S I O N F O R S A F E E X P L O R A T I O N I N C O N T R O L Acknowledgments Anqi Liu is supported by PIMCO Postdoctoral Fellowship at Caltech. Prof. Anandkumar is sup- ported by Bren endowed Chair , faculty aw ards from Microsoft, Google, and Adobe, D ARP A P AI and LwLL grants. This work is also funded in part by Caltechs CAST and the Raytheon Company . References Anayo K Akametalu, Jaime F Fisac, Jeremy H Gillula, Shahab Kaynama, Melanie N Zeilinger , and Claire J T omlin. Reachability-based safe learning with gaussian processes. In 53rd IEEE Confer ence on Decision and Contr ol , pages 1424–1431. IEEE, 2014. Mohammed Alshiekh, Roderick Bloem, R ¨ udiger Ehlers, Bettina K ¨ onighofer , Scott Niekum, and Ufuk T opcu. Safe reinforcement learning via shielding. In Thirty-Second AAAI Confer ence on Artificial Intelligence , 2018. Peter Auer . Using confidence bounds for exploitation-exploration trade-of fs. J ournal of Machine Learning Resear ch , 3(Nov):397–422, 2002. Peter L Bartlett, Dylan J Foster , and Matus J T elgarsky . Spectrally-normalized margin bounds for neural networks. In Advances in Neural Information Pr ocessing Systems , pages 6240–6249, 2017. Ari S Benjamin, Da vid Rolnick, and Konrad Kording. Measuring and regularizing networks in function space. In International Confer ence on Learning Representations (ICLR) , 2019. Felix Berkenkamp, Angela P Schoellig, and Andreas Krause. Safe controller optimization for quadrotors with gaussian processes. In 2016 IEEE International Confer ence on Robotics and Automation (ICRA) , pages 491–496. IEEE, 2016. Felix Berkenkamp, Matteo T urchetta, Angela Schoellig, and Andreas Krause. Safe model-based reinforcement learning with stability guarantees. In Advances in neural information pr ocessing systems , pages 908–918, 2017. Edwin V Bonilla, Kian M Chai, and Christopher Williams. Multi-task g aussian process prediction. In Advances in neural information pr ocessing systems , pages 153–160, 2008. S ´ ebastien Bubeck, Nicolo Cesa-Bianchi, et al. Regret analysis of stochastic and nonstochastic multi- armed bandit problems. F oundations and T rends R in Machine Learning , 5(1):1–122, 2012. IC Cheeseman and WE Bennett. The effect of ground on a helicopter rotor in forw ard flight. 1955. Xiangli Chen, Mathe w Monfort, Anqi Liu, and Brian D Ziebart. Robust cov ariate shift regression. In Artificial Intelligence and Statistics , pages 1270–1279, 2016. Richard Cheng, G ´ abor Orosz, Richard M Murray , and Joel W Burdick. End-to-end safe reinforce- ment learning through barrier functions for safety-critical continuous control tasks. In Conference on Artificial Intelligence (AAAI) , 2019a. 9 R O B U S T R E G R E S S I O N F O R S A F E E X P L O R A T I O N I N C O N T R O L Richard Cheng, Abhinav V erma, Gabor Orosz, Swarat Chaudhuri, Y isong Y ue, and Joel Burdick. Control regularization for reduced v ariance reinforcement learning. In International Confer ence on Machine Learning (ICML) , 2019b. Jaime F Fisac, Anayo K Akametalu, Melanie N Zeilinger , Shahab Kaynama, Jeremy Gillula, and Claire J T omlin. A general safety framew ork for learning-based control in uncertain robotic systems. IEEE T ransactions on Automatic Contr ol , 2018. Javier Garcia and Fernando Fern ´ andez. Safe exploration of state and action spaces in reinforcement learning. Journal of Artificial Intellig ence Researc h , 45:515–564, 2012. Ian J Goodfello w , Jonathon Shlens, and Christian Szegedy . Explaining and harnessing adv ersarial examples. arXiv preprint , 2014. Peter D Gr ¨ unwald, A Philip Dawid, et al. Game theory , maximum entrop y , minimum discrepanc y and robust bayesian decision theory . the Annals of Statistics , 32(4):1367–1433, 2004. Hassan Khalil and Jessy Grizzle. Nonlinear systems. Pr entice hall , 2002. Johannes Kirschner , Mojm ´ ır Mutn ` y, Nicole Hiller , Rasmus Ischebeck, and Andreas Krause. Adap- ti ve and safe bayesian optimization in high dimensions via one-dimensional subspaces. In Inter - national Confer ence on Machine Learning (ICML) , 2019. Hoang M. Le, Andrew Kang, Y isong Y ue, and Peter Carr . Smooth imitation learning for online sequence prediction. In International Confer ence on Machine Learning (ICML) , 2016. Anqi Liu and Brian Ziebart. Robust classification under sample selection bias. In Advances in neural information pr ocessing systems , pages 37–45, 2014. Anqi Liu and Brian D Ziebart. Robust cov ariate shift prediction with general losses and feature vie ws. arXiv pr eprint arXiv:1712.10043 , 2017. Anqi Liu, Lev Reyzin, and Brian D Ziebart. Shift-pessimistic activ e learning using robust bias- aw are prediction. In T wenty-Ninth AAAI Confer ence on Artificial Intelligence , 2015. T akeru Miyato, T oshiki Kataoka, Masanori K oyama, and Y uichi Y oshida. Spectral normalization for generati ve adv ersarial networks. arXiv pr eprint arXiv:1802.05957 , 2018. T eodor Mihai Moldov an and Pieter Abbeel. Safe exploration in markov decision processes. In International Confer ence on Machine Learning (ICML) , 2012. Y ashwanth Kumar Nakka and Soon-Jo Chung. T rajectory optimization for chance-constrained non- linear stochastic systems. In Confer ence on Decision and Contr ol (CDC) , 2019. Chris J Ostafew , Angela P Schoellig, and T imothy D Barfoot. Robust constrained learning-based nmpc enabling reliable mobile robot path tracking. The International Journal of Robotics Re- sear ch , 35(13):1547–1563, 2016. Houman Owhadi, Clint Scovel, and Tim Sulliv an. On the brittleness of bayesian inference. SIAM Revie w , 57(4):566–582, 2015. 10 R O B U S T R E G R E S S I O N F O R S A F E E X P L O R A T I O N I N C O N T R O L Alessandro Prest, Christian Leistner , Ja vier Ci vera, Cordelia Schmid, and V ittorio Ferrari. Learning object class detectors from weakly annotated video. In 2012 IEEE Confer ence on Computer V ision and P attern Recognition , pages 3282–3289. IEEE, 2012. Guanya Shi, Xichen Shi, Michael O’Connell, Rose Y u, Kamyar Azizzadenesheli, Animashree Anandkumar , Y isong Y ue, and Soon-Jo Chung. Neural lander: Stable drone landing control using learned dynamics. International Confer ence on Robotics and Automation (ICRA) , 2019. Hidetoshi Shimodaira. Improving predicti ve inference under cov ariate shift by weighting the log- likelihood function. Journal of statistical planning and infer ence , 90(2):227–244, 2000. Jean-Jacques E Slotine, W eiping Li, et al. Applied nonlinear contr ol , volume 199. Prentice hall Engle wood Clif fs, NJ, 1991. Niranjan Sriniv as, Andreas Krause, Sham M Kakade, and Matthias Seeger . Gaussian process opti- mization in the bandit setting: No regret and experimental design. In International Confer ence on Machine Learning (ICML) , 2010. Nitish Sriv astav a, Geoffre y Hinton, Alex Krizhevsky , Ilya Sutskev er, and Ruslan Salakhutdinov . Dropout: a simple way to prevent neural networks from ov erfitting. The Journal of Machine Learning Resear ch , 15(1):1929–1958, 2014. Russell Ste wart and Stefano Ermon. Label-free supervision of neural networks with physics and domain kno wledge. In Thirty-F irst AAAI Confer ence on Artificial Intelligence , 2017. Y anan Sui, Alkis Gotovos, Joel Burdick, and Andreas Krause. Safe exploration for optimization with gaussian processes. In International Confer ence on Machine Learning , pages 997–1005, 2015. Y anan Sui, V incent Zhuang, Joel W Burdick, and Y isong Y ue. Stage wise safe bayesian optimization with gaussian processes. In International Confer ence on Machine Learning (ICML) , 2018. Andre w J T aylor , V ictor D Dorobantu, Hoang M Le, Y isong Y ue, and Aaron D Ames. Episodic learning with control lyapunov functions for uncertain robotic systems. arXiv pr eprint arXiv:1903.01577 , 2019. Matteo T urchetta, Felix Berkenkamp, and Andreas Krause. Safe exploration in finite mark ov deci- sion processes with gaussian processes. In Advances in Neural Information Pr ocessing Systems , pages 4312–4320, 2016. Akifumi W achi, Y anan Sui, Y isong Y ue, and Masahiro Ono. Safe exploration and optimization of constrained mdps using gaussian processes. In Thirty-Second AAAI Confer ence on Artificial Intelligence , 2018. Stefan W ager , Sida W ang, and Percy S Liang. Dropout training as adaptiv e regularization. In Advances in neural information pr ocessing systems , pages 351–359, 2013. Stephan Zheng, Y ang Song, Thomas Leung, and Ian Goodfellow . Improving the robustness of deep neural netw orks via stability training. In Pr oceedings of the ieee confer ence on computer vision and pattern r ecognition , pages 4480–4488, 2016. 11 R O B U S T R E G R E S S I O N F O R S A F E E X P L O R A T I O N I N C O N T R O L K emin Zhou and John Comstock Doyle. Essentials of r obust contr ol , volume 104. Prentice hall Upper Saddle Ri ver , NJ, 1998. 12 R O B U S T R E G R E S S I O N F O R S A F E E X P L O R A T I O N I N C O N T R O L A ppendix A. A ppendix A.1. Additional Theoretical Results A . 1 . 1 . I M P ROV E D B O U N D S F O R C O N T R O L As explained in the paper , we can further improve the learning bounds in the control context when we control the target data in a strategically way . In Theorem 1 , W is the upper bound of the true density ratio of this two distribution, which potentially can be v ery large when tar get data is a v ery dif ferent one from the source. Howe ver , we can choose our next trajectory as the one not deviate too much from the source data in practice, so that further constraining W and also in Theorem 1 . W e can rewrite the theorem as: Theorem 3 [Improv ed Generalization and perturbation bounds in general cases] Assume S is a training set S with i.i.d. data x i , ..., x n sampled fr om P sr c ( x ) , F is the function class of mean estimator ˆ f in r obust r e gression, it satisfies sup x ∈X ,f ,f 0 ∈F | f ( x ) − f 0 ( x ) | ≤ M , ˆ R S ( F ) is the Rademacher complexity on S , W is the upper bound of true density ratio sup x ∼ P src ( x ) P trg ( x ) P src ( x ) ≤ W 0 , θ y is lower bounded by B , the weight estimation sup x ∈ S r ( x ) ≤ R , base distribution variance is σ 2 0 , λ is the upperbound of all λ i among the dimensions of φ ( x ) , we have the generalization err or bound on P trg ( x, y ) hold with pr obability 1 − δ , E P trg ( x ,y ) [( y − ˆ f ( x )) 2 ] ≤ W 0 (2 RB + σ − 2 0 ) − 1 + λ + 4 M ˆ R S ( F ) + 3 M 2 s log 2 δ 2 n If we assume targ et data samples x ’ s stay in a ball B ( ) with diameter 0 fr om the source data S , B ( ) = x | sup x 0 ∈ S k x − x 0 k ≤ 0 the true function f ( x ) is Lipschitz continuous with constant L and the r obust r egr ession mean estimator ˆ f is also Lipschitz continuous with constant ˆ L , sup x ∈ B ( ) ,y ∼ f ( x ) [( y − ˆ f ( x )) 2 ] ≤ ((2 RB + σ − 2 0 ) − 1 / 2 + √ λ + L + ˆ L k 0 k ) 2 (6) Note that in generalization bound, we can further impro ve the bound if we kno w what is the method for estimating density ratio r and further relate the o verall learning performance with the density ration estimation. Here, we just use r as if it is a v alue that is gi ven to us beforehand. A . 1 . 2 . H I G H P RO B A B I L I T Y B O U N D S F O R G AU S S I A N D I S T R I B U T I O N In Algorithm 1 , we use β σ ( x ) as our approximation of the learning error from the robust re gression instead of measuring the actual learning upper bound, which is hard to e valuate. Here we gi ve the justification. If the prediction from robust regression is N ( µ ( x ) , σ 2 ( x )) , assuming true function is drawn from the same distribution, we hav e P r {| f ( x ) − µ ( x ) | > √ β σ ( x ) } ≤ e − β / 2 . Also, for a unit normal distrib ution r ∼ N (0 , 1) , we hav e P r { r > c } = e − c 2 / 2 (2 π ) − 1 / 2 R e − ( r − c ) 2 / 2 − c ( r − c ) dr ≤ e − c 2 / 2 P r { r > 0 } = (1 / 2) e − c 2 / 2 . Therefore, for data S , | f ( x ) − µ ( x ) | ≤ β − 1 / 2 σ ( x ) hold with probability greater than 1 − | S | e − β σ/ 2 . Therefore, we can choose β in practice and it corresponds with dif ferent probability in bounds. 13 R O B U S T R E G R E S S I O N F O R S A F E E X P L O R A T I O N I N C O N T R O L A.2. Proof of Theoretical Results A . 2 . 1 . P R O O F O F T H E O R E M 1 Proof W e first prov e the generalization bound using standard Redemacher Complexity for re gres- sion problems: E P trg ( x,y ) [( y − ˆ y ( x )) 2 ] = P trg ( x, y ) P src ( x, y ) E P src ( x,y ) [( y − ˆ y ( x )) 2 ] ( Cov ariate Shift Assumption ) = P trg ( x ) P src ( x ) E P src ( x,y ) [( y − ˆ y ( x )) 2 ] ≤ W 1 n n X i =1 ( y i − ˆ y ( x i )) 2 + 4 M ˆ R S ( F ) + 3 M 2 s log 2 δ 2 n = W 1 n n X i =1 ( y i − ˆ y ( x i )) 2 + 4 M ˆ R S ( F ) + 3 M 2 s log 2 δ 2 n ≤ W 1 n n X i =1 | y 2 i − ˆ y ( x i ) 2 | + 4 M ˆ R S ( F ) + 3 M 2 s log 2 δ 2 n = W 1 n n X i =1 σ 2 ( x i ) + λ + 4 M ˆ R S ( F ) + 3 M 2 s log 2 δ 2 n ( Gradient of training v anishes: y 2 − ( µ ( x ) 2 + σ 2 ( x )) − λ = 0) ≤ W 1 n n X i =1 σ 2 ( x i ) + λ + 4 M ˆ R S ( F ) + 3 M 2 s log 2 δ 2 n (7) where sup x ∈ X ; f ,f 0 ∈F | h ( x ) − h 0 ( x ) | ≤ M , ˆ R S ( F ) is the Rademacher complexity on the function class of mean estimate, and the variance term σ 2 ( x ) is the empirical variance of the robust regression model and follows the sigma function Chen et al. ( 2016 ). This is a data-dependent bound that relies on training samples. W e next prove the perturbation bounds. Assuming x stays in a ball B ( δ ) with diameter δ from the source training data S , B ( ) = { x | sup x 0 ∈ S k x − x 0 k ≤ } , the true function f ( x ) is Lips- chitz continuous with constant L and the mean function of our learned estimator is also Lipschitz continuous with constant ˆ L , then we hav e 14 R O B U S T R E G R E S S I O N F O R S A F E E X P L O R A T I O N I N C O N T R O L sup x ∈ B ( ) ,y ∼ f ( x ) ( y − ˆ y ( x )) 2 ≤ sup x ∈ S,y ∼ f ( x ) ( | y − ˆ y ( x ) | + ( L + ˆ L ) k k ) 2 ≤ sup x ∈ S,y ∼ f ( x ) ( | y − ˆ y ( x ) | + ( L + ˆ L ) k k ) 2 ≤ sup x ∈ S,y ∼ f ( x ) ( p | y − ˆ y ( x ) | 2 + ( L + ˆ L ) k k ) 2 ≤ sup x ∈ S,y ∼ f ( x ) ( p | y 2 − ˆ y 2 ( x ) | + ( L + ˆ L ) k k ) 2 = sup x ∈ S ( v u u t 1 n n X i =1 σ 2 ( x i ) + λ + ( L + ˆ L ) k k ) 2 = sup x ∈ S ( v u u t 1 n n X i =1 σ 2 ( x i ) + √ λ + ( L + ˆ L ) k k ) 2 (8) The last equality is due to the satisfaction of the follo wing: y 2 − ( µ ( x ) 2 + σ 2 ( x )) − λ = 0 (9) when gradient of rob ust regression v anishes Chen et al. ( 2016 ). If we hav e an upperbound for the parameter θ y > B and the weight estimation r ≤ R , we ha ve 1 n n X i =1 σ 2 ( x i ) ≤ (2 RB + σ − 2 0 ) − 1 (10) Therefore, the generalization bound and perturbation bounds can be written as E P trg ( x,y ) [( y − ˆ y ( x )) 2 ] ≤ W (2 RB + σ − 2 0 ) − 1 + λ + 4 M ˆ R S ( F ) + 3 M 2 s log 2 δ 2 n (11) sup x ∈ B ( ) ,y ∼ f ( x ) ( y − ˆ y ( x )) 2 ≤ ((2 RB + σ − 2 0 ) − 1 / 2 + √ λ + ( L + ˆ L ) k k ) 2 (12) A . 2 . 2 . P R O O F O F T H E O R E M 2 Proof Consider the follo wing L yapunov function: V = s T M s. (13) Using the closed-loop Eq. 2 and the property ˙ M − 2 C ske w-symmetric, we will hav e d dt V = − 2 s T K s + 2 s T . (14) Note that d dt V ≤ − 2 λ min ( K ) k s k 2 + 2 k s k m . (15) 15 R O B U S T R E G R E S S I O N F O R S A F E E X P L O R A T I O N I N C O N T R O L Using the comparison lemma Khalil and Grizzle ( 2002 ), we will hav e k s k ≤ s λ max ( M ) λ min ( M ) e − K λ max ( M ) t k s (0) k + λ max ( M ) λ min ( K ) λ min ( M ) (1 − e − K λ max ( M ) t ) · m . (16) Therefore s will exponentially con verge to λ max ( M ) λ min ( K ) λ min ( M ) · m (17) Since s = ˙ ˜ q + Λ ˜ q , k ˜ q k will exponentially con verge to λ max ( M ) λ min (Λ) λ min ( K ) λ min ( M ) · m . (18) Moreov er, since k ˙ ˜ q k ≤ k s k + λ max (Λ) k ˜ q k , (19) k ˙ ˜ q k will con verge to ( λ max ( M ) λ min ( K ) λ min ( M ) + λ max (Λ) λ max ( M ) λ min (Λ) λ min ( K ) λ min ( M ) ) · m . (20) Recall that ˜ x = [ ˜ q , ˙ ˜ q ] . Thus finally we have the follo wing upper bound of the error ball: k ˜ x k → λ max ( M ) λ min ( K ) λ min ( M ) s ( 1 λ min (Λ) ) 2 + (1 + λ max (Λ) λ min (Λ) ) 2 · m . (21) 16

Original Paper

Loading high-quality paper...

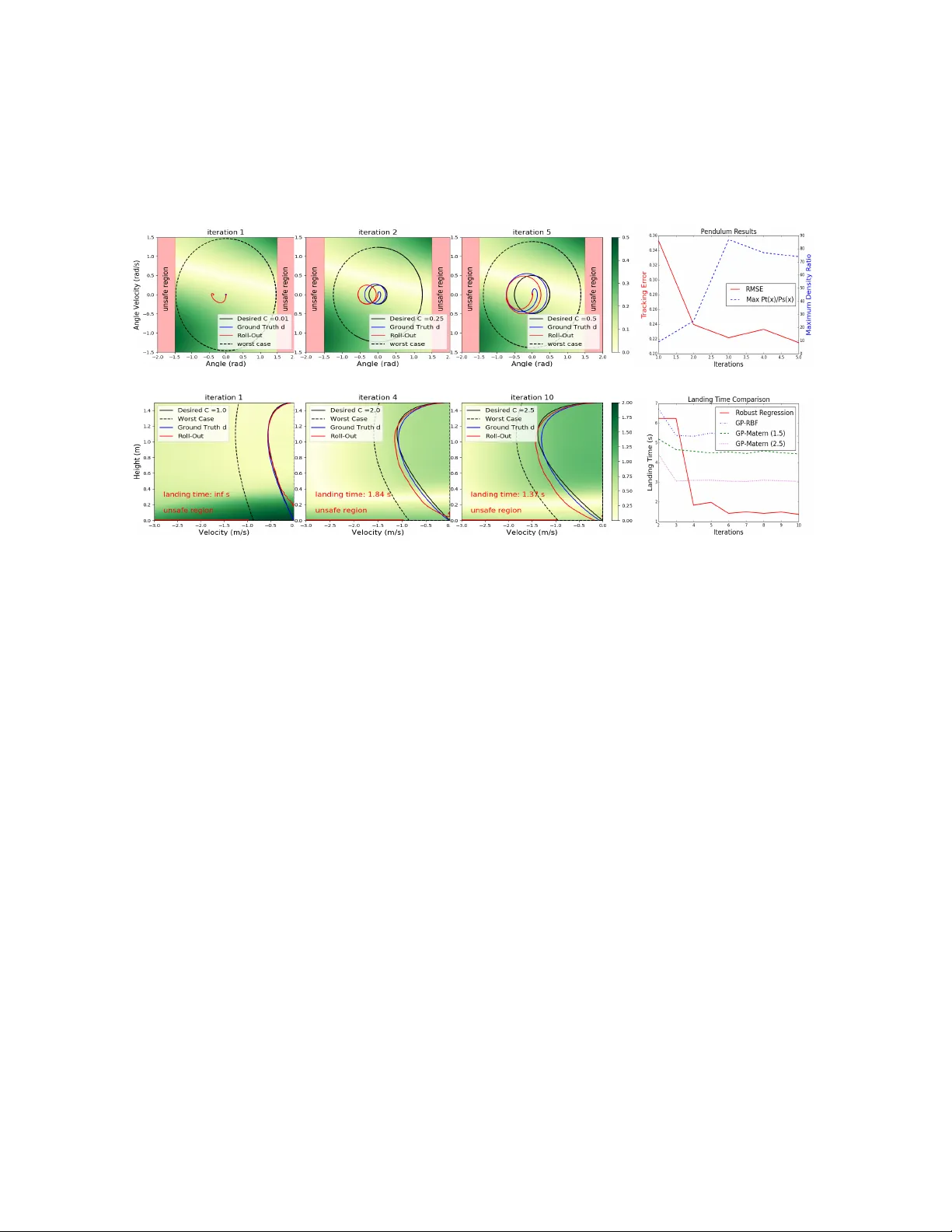

Comments & Academic Discussion

Loading comments...

Leave a Comment