Factorized Higher-Order CNNs with an Application to Spatio-Temporal Emotion Estimation

Training deep neural networks with spatio-temporal (i.e., 3D) or multidimensional convolutions of higher-order is computationally challenging due to millions of unknown parameters across dozens of layers. To alleviate this, one approach is to apply l…

Authors: Jean Kossaifi, Antoine Toisoul, Adrian Bulat

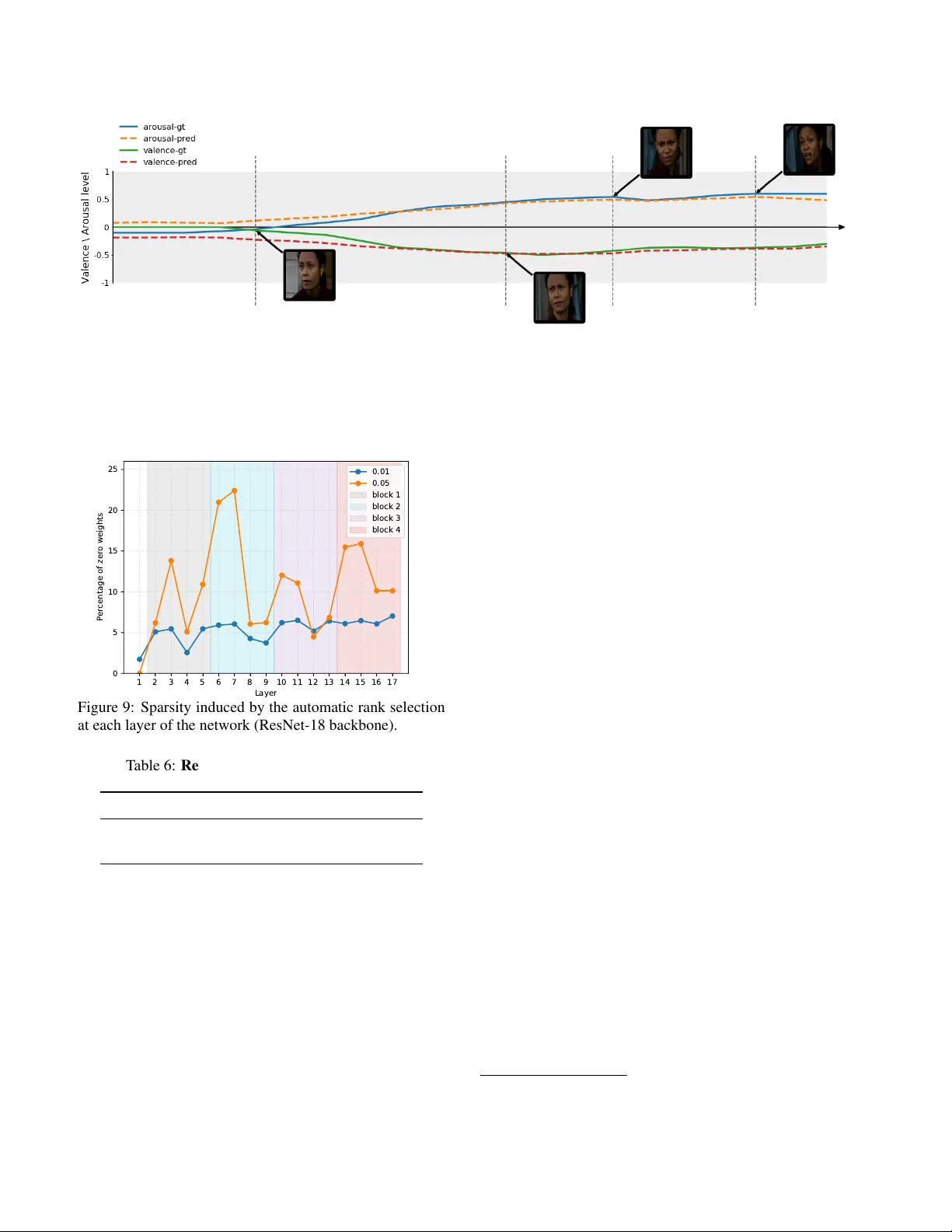

Jean Kossaifi∗† (NVIDIA), Antoine Toisoul∗ (Samsung AI Center), Adrian Bulat (Samsung AI Center), Yannis Panagakis† (University of Athens), Timothy Hospedales (Samsung AI Center), Maja Pantic† (Imperial College London)

Abstract

Training deep neural networks with spatio-temporal (i.e., 3D) or multidimensional convolutions of higher order is computationally challenging due to millions of unknown parameters across dozens of layers. To alleviate this, one approach is to apply low-rank tensor decompositions to convolution kernels in order to compress the network and reduce its number of parameters. Alternatively, new convolutional blocks, such as MobileNet, can be directly designed for efficiency. In this paper, we unify these two approaches by proposing a tensor factorization framework for efficient multidimensional (separable) convolutions of higher order. Interestingly, the proposed framework enables a novel higher-order transduction, which allows one to train a network on a given domain (e.g., 2D images, or N-dimensional data in general) and to use transduction to generalize to higher-order data such as videos (or (N+K)-dimensional data in general), capturing for instance temporal dynamics while preserving the learnt spatial information.

We apply the proposed methodology, coined CP-Higher-Order Convolution (HO-CPConv), to spatio-temporal facial emotion analysis. Most existing facial affect models focus on static imagery and discard all temporal information. This is due to the above-mentioned burden of training 3D convolutional nets and the lack of large bodies of video data annotated by experts. We address both issues with our proposed framework. Initial training is first done on static imagery before using transduction to generalize to the temporal domain.
We demonstrate superior performance on three challenging large-scale affect estimation datasets: AffectNet, SEWA, and AFEW-VA.

∗ Joint first authors.
† Jean Kossaifi, Yannis Panagakis and Maja Pantic were with Samsung AI Center, Cambridge and Imperial College London.

1. Introduction

With the unprecedented success of deep convolutional neural networks came the quest for training ever deeper networks. However, while deeper neural networks give better performance when trained appropriately, that depth also translates into memory- and computation-heavy models, typically with tens of millions of parameters. This is especially true when training higher-order convolutional nets, e.g. third order (3D) on videos. However, such models are necessary to perform predictions in the spatio-temporal domain and are crucial in many applications, including action recognition and emotion recognition.

In this paper, we depart from conventional approaches and propose a novel factorized multidimensional convolutional block that achieves superior performance through efficient use of the structure in the data. In addition, our model can first be trained on the image domain and extended seamlessly to transfer performance to the temporal domain. This novel transduction method is made possible by the structure of our proposed block. It also allows one to drastically decrease the number of parameters, while improving performance and computational efficiency, and it can be applied to already existing spatio-temporal network architectures such as a ResNet3D [47]. Our method leverages a CP tensor decomposition in order to separately learn the disentangled spatial and temporal information of 3D convolutions. This improves accuracy by a large margin while reducing the number of parameters of spatio-temporal architectures and greatly facilitating training on video data.
Figure 1: Overview of our method, here represented for a single channel of a single input. We start by training a 2D CNN with our proposed factorized convolutional block on static images (left). We then apply transduction to extend the model from the static to the spatio-temporal domain (right). The pretrained spatial factors (blue and red) are first kept fixed, before jointly fine-tuning all the parameters once the temporal factors (green) have been trained.

In summary, we make the following contributions:
• We show that many architectural improvements of deep nets, such as MobileNet or ResNet's Bottleneck blocks, are in fact drawn from the same larger family of tensor decomposition methods (Section 3). We propose a general framework unifying tensor decompositions and efficient architectures, showing how these efficient architectures can be derived by applying tensor decomposition to convolutional kernels (Section 3.4).
• Using this framework, we propose factorized higher-order convolutional neural networks that leverage efficient general multi-dimensional convolutions. These achieve the same performance with a fraction of the parameters and floating point operations (Section 4).
• Finally, we propose a novel mechanism called higher-order transduction, which can be employed to convert our model, trained in N dimensions, to N + K dimensions.
• We show that our factorized higher-order networks outperform existing works on static affect estimation on the AffectNet, SEWA and AFEW-VA datasets.
• Using transduction on the static models, we also demonstrate state-of-the-art results for continuous facial emotion analysis from video on both the SEWA and AFEW-VA datasets.

2. Background and related work

Multidimensional convolutions arise in several mathematical models across different fields. They are a cornerstone of Convolutional Neural Networks (CNNs) [26, 28], enabling them to effectively learn from high-dimensional data by mitigating the curse of dimensionality [34]. However, CNNs are computationally demanding, with the cost of convolutions being dominant during both training and inference. As a result, there is an increasing interest in improving the efficiency of multidimensional convolutions.

Several efficient implementations of convolutions have been proposed. For instance, 2D convolution can be efficiently implemented as matrix multiplication by converting the convolution kernel to a Toeplitz matrix. However, this procedure requires replicating the kernel values multiple times across different matrix columns in the Toeplitz matrix, thus increasing the memory requirements. Implementing convolutions via the im2col approach is also memory intensive due to the space required for building the column matrix. These memory requirements may be prohibitive for mobile or embedded devices, hindering the deployment of CNNs on resource-limited platforms.

In general, most existing attempts at efficient convolutions are isolated and there currently is no unified framework to study them. In particular, we are interested in two different branches of work, which we review next: firstly, approaches that leverage tensor methods for efficient convolutions, either to compress or reformulate them for speed; secondly, approaches that directly formulate efficient neural architectures, e.g., using separable convolutions.
Tensor methods for efficient deep networks. The properties of tensor methods [14, 41, 17] make them a prime choice for deep learning. Besides the theoretical study of the properties of deep neural networks [3], they have been especially studied in the context of reparametrizing existing layers [49]. One goal of such reparametrization is parameter space savings [7]. [31] for instance proposed to reshape the weight matrix of fully-connected layers into high-order tensors with a Tensor-Train (TT) [32] structure. In a follow-up work [4], the same strategy is also applied to convolutional layers. Fully connected layers and flattening layers can be removed altogether and replaced with tensor regression layers [21]. These express outputs through a low-rank multi-linear mapping from a high-order activation tensor to an output tensor of arbitrary order. Parameter space saving can also be obtained, while maintaining multi-linear structure, by applying tensor contraction [20].

Another advantage of tensor reparametrization is computational speed-up. In particular, a tensor decomposition is an efficient way of obtaining separable filters from convolutional kernels. These separable convolutions were proposed in computer vision by [36] in the context of filter banks. [12] first applied this concept to deep learning and proposed leveraging redundancies across channels using separable convolutions. [1, 27] proposed to apply CP decomposition directly to the (4-dimensional) kernels of pretrained 2D convolutional layers, making them separable. The incurred loss in performance is compensated by fine-tuning. Efficient rewriting of convolutions can also be obtained using Tucker decompositions instead of CP to decompose the convolutional layers of a pre-trained network [15].
This allows rewriting the convolution as a 1 × 1 convolution, followed by a regular convolution with a smaller kernel and another 1 × 1 convolution. In this case, the spatial dimensions of the convolutional kernel are left untouched and only the input and output channels are compressed. Again, the loss in performance is compensated by fine-tuning the whole network. Finally, [46] propose to remove redundancy in convolutional layers and express these as the composition of two convolutional layers with fewer parameters. Each 2D filter is approximated by a sum of rank-1 matrices. Thanks to this restricted setting, a closed-form solution can be readily obtained with SVD. Here, we unify the above works and propose factorized higher-order (separable) convolutions.

Efficient neural networks. While concepts such as separable convolutions have been studied since the early successes of deep learning using tensor decompositions, they have only relatively recently been "rediscovered" and proposed as standalone end-to-end trainable efficient neural network architectures. The first attempts in the direction of neural network architecture optimization were proposed early in the ground-breaking VGG network [42], where the large convolutional kernels used in AlexNet [25] were replaced with a series of smaller ones that have an equivalent receptive field size: i.e. a convolution with a 5 × 5 kernel can be replaced by two consecutive convolutions of size 3 × 3. In parallel, the idea of decomposing larger kernels into a series of smaller ones is explored in the multiple iterations of the Inception block [43, 44, 45], where a convolutional layer with a 7 × 7 kernel is approximated with two 7 × 1 and 1 × 7 kernels.
[8] introduced the so-called bottleneck module, which reduces the number of channels on which the convolutional layer with the larger kernel size (3 × 3) operates by projecting the features back and forth using two convolutional layers with 1 × 1 filters. [48] expands upon this by replacing the 3 × 3 convolution with a grouped convolutional layer that can further reduce the complexity of the model while increasing representational power at the same time. Recently, [10] introduced the MobileNet architecture, where they proposed to replace the 3 × 3 convolutions with a depth-wise separable module: a depth-wise 3 × 3 convolution (the number of groups is equal to the number of channels) followed by a 1 × 1 convolutional layer that aggregates the information. These types of structures were shown to offer a good balance between the performance offered and the computational cost they incur. [39] goes one step further and incorporates the idea of using separable convolutions in an inverted bottleneck module. The proposed module uses 1 × 1 layers to expand and then contract the channels (hence inverted bottleneck) while using separable convolutions for the 3 × 3 convolutional layer.

Facial affect analysis is the first step towards better human-computer interactions. Early research focused on detecting discrete emotions such as happiness and sadness, based on the hypothesis that these are universal. However, this categorization of human affect is limited and does not cover the wide emotional spectrum displayed by humans on a daily basis. Psychologists have since moved towards more fine-grained dimensional measures of affect [35, 38]. The goal is to estimate continuous levels of valence (how positive or negative an emotional display is) and arousal (how exciting or calming the emotional experience is). This task is the subject of most of the recent research in affect estimation [37, 19, 23, 50] and is the focus of this paper.
Valence and arousal are dimensional measures of affect that vary in time. It is these changes that are important for accurate human-affect estimation. Capturing the temporal dynamics of emotions is therefore crucial and requires video rather than static analysis. However, spatio-temporal models are difficult to train due to their large number of parameters, requiring very large amounts of annotated videos to be trained successfully. Unfortunately, the quality and quantity of available video data and annotation collected in naturalistic conditions is low [40]. As a result, most work in this area of affect estimation in-the-wild focuses on affect estimation from static imagery [23, 30]. Estimation from videos is then done on a frame-by-frame basis. Here, we tackle both issues and train spatio-temporal networks that outperform existing methods for affect estimation.

3. Convolutions in a tensor framework

In this section, we explore the relationship between tensor methods and deep neural networks' convolutional layers. Without loss of generality, we omit the batch size in all the following formulas.

Mathematical background and notation. We denote 1st-order tensors (vectors) as $\mathbf{v}$, 2nd-order tensors (matrices) as $\mathbf{M}$ and tensors of order $\geq 3$ as $\mathcal{T}$. We denote a regular convolution of $\mathcal{X}$ with $\mathcal{W}$ as $\mathcal{X} \star \mathcal{W}$. For 1-D convolutions, we write the convolution of a tensor $\mathcal{X} \in \mathbb{R}^{I_0 \times \cdots \times I_N}$ with a vector $\mathbf{v} \in \mathbb{R}^{K}$ along the $n$th mode as $\mathcal{X} \star_n \mathbf{v}$. In practice, as done in current deep learning frameworks [33], we use cross-correlation, which differs from a convolution by a flip of the kernel. This does not impact the results since the weights are learned end-to-end. In other words,

$$(\mathcal{X} \star_n \mathbf{v})_{i_0, \cdots, i_N} = \sum_{k=1}^{K} \mathbf{v}_k \, \mathcal{X}_{i_0, \cdots, i_{n-1},\, i_n + k,\, i_{n+1}, \cdots, i_N}.$$

3.1. 1 × 1 convolutions and tensor contraction

We show that 1 × 1 convolutions are equivalent to a tensor contraction with the kernel of the convolution along the channels dimension. Let us consider a 1 × 1 convolution $\Phi$, defined by a kernel $\mathcal{W} \in \mathbb{R}^{T \times C \times 1 \times 1}$ and applied to an activation tensor $\mathcal{X} \in \mathbb{R}^{C \times H \times W}$. We denote the squeezed version of $\mathcal{W}$, with its two singleton spatial modes removed, as $\mathbf{W} \in \mathbb{R}^{T \times C}$.

The tensor contraction of a tensor $\mathcal{T} \in \mathbb{R}^{I_0 \times I_1 \times \cdots \times I_N}$ with a matrix $\mathbf{M} \in \mathbb{R}^{J \times I_n}$ along the $n$th mode ($n \in [0 \,..\, N]$), known as the n-mode product, is defined as $\mathcal{P} = \mathcal{T} \times_n \mathbf{M}$, with:

$$\mathcal{P}_{i_0, \cdots, i_N} = \sum_{k=0}^{I_n - 1} \mathcal{T}_{i_0, \cdots, i_{n-1},\, k,\, i_{n+1}, \cdots, i_N} \, \mathbf{M}_{i_n, k}$$

By plugging this into the expression of $\Phi(\mathcal{X})$, we readily observe that the 1 × 1 convolution is equivalent to an n-mode product between $\mathcal{X}$ and the matrix $\mathbf{W}$:

$$\Phi(\mathcal{X})_{t,y,x} = (\mathcal{X} \star \mathcal{W})_{t,y,x} = \sum_{k=0}^{C-1} \mathcal{W}_{t,k,0,0} \, \mathcal{X}_{k,y,x} = (\mathcal{X} \times_0 \mathbf{W})_{t,y,x}$$

3.2. Kruskal convolutions

Here we show how separable convolutions can be obtained by applying CP decomposition to the kernel of a regular convolution [27]. We consider a convolution defined by its kernel weight tensor $\mathcal{W} \in \mathbb{R}^{T \times C \times K_H \times K_W}$, applied to an input in $\mathbb{R}^{C \times H \times W}$. Let $\mathcal{X} \in \mathbb{R}^{C \times H \times W}$ be an arbitrary activation tensor. If we define the resulting feature map as $\mathcal{F} = \mathcal{X} \star \mathcal{W}$, we have:

$$\mathcal{F}_{t,y,x} = \sum_{k=1}^{C} \sum_{j=1}^{K_H} \sum_{i=1}^{K_W} \mathcal{W}(t,k,j,i) \, \mathcal{X}(k, j+y, i+x) \qquad (1)$$

Assuming a low-rank Kruskal structure on the kernel $\mathcal{W}$ (which can be readily obtained by applying CP decomposition), we can write:

$$\mathcal{W}_{t,s,j,i} = \sum_{r=0}^{R-1} \mathbf{U}^{(T)}_{t,r} \, \mathbf{U}^{(C)}_{s,r} \, \mathbf{U}^{(H)}_{j,r} \, \mathbf{U}^{(W)}_{i,r} \qquad (2)$$

By plugging (2) into (1) and re-arranging the terms, we get:

$$\mathcal{F}_{t,y,x} = \underbrace{\sum_{r=0}^{R-1} \mathbf{U}^{(T)}_{t,r} \underbrace{\sum_{i=1}^{K_W} \mathbf{U}^{(W)}_{i,r} \underbrace{\sum_{j=1}^{K_H} \mathbf{U}^{(H)}_{j,r} \underbrace{\left[ \sum_{k=1}^{C} \mathbf{U}^{(C)}_{k,r} \, \mathcal{X}(k, j+y, i+x) \right]}_{1 \times 1 \text{ conv}}}_{\text{depthwise conv}}}_{\text{depthwise conv}}}_{1 \times 1 \text{ convolution}}$$

This allows one to replace the original convolution by a series of efficient depthwise separable convolutions [27] (see Figure 2).

Figure 2: Illustration of a 2D Kruskal convolution.

3.3. Tucker convolutions

As previously, we consider the convolution $\mathcal{F} = \mathcal{X} \star \mathcal{W}$. However, instead of a Kruskal structure, we now assume a low-rank Tucker structure on the kernel $\mathcal{W}$ (which can be readily obtained by applying Tucker decomposition), which also yields an efficient formulation [15]. We can write:

$$\mathcal{W}(t,s,j,i) = \sum_{r_0=0}^{R_0-1} \sum_{r_1=0}^{R_1-1} \sum_{r_2=0}^{R_2-1} \sum_{r_3=0}^{R_3-1} \mathcal{G}_{r_0,r_1,r_2,r_3} \, \mathbf{U}^{(T)}_{t,r_0} \, \mathbf{U}^{(C)}_{s,r_1} \, \mathbf{U}^{(H)}_{j,r_2} \, \mathbf{U}^{(W)}_{i,r_3}$$

Plugging this back into a convolution, we get:

$$\mathcal{F}_{t,y,x} = \sum_{k=1}^{C} \sum_{j=1}^{K_H} \sum_{i=1}^{K_W} \sum_{r_0=0}^{R_0-1} \sum_{r_1=0}^{R_1-1} \sum_{r_2=0}^{R_2-1} \sum_{r_3=0}^{R_3-1} \mathcal{G}_{r_0,r_1,r_2,r_3} \, \mathbf{U}^{(T)}_{t,r_0} \, \mathbf{U}^{(C)}_{k,r_1} \, \mathbf{U}^{(H)}_{j,r_2} \, \mathbf{U}^{(W)}_{i,r_3} \, \mathcal{X}_{k,j+y,i+x}$$

We can further absorb the factors along the spatial dimensions into the core by writing $\mathcal{H} = \mathcal{G} \times_2 \mathbf{U}^{(H)} \times_3 \mathbf{U}^{(W)}$. In that case, the expression above simplifies to:

$$\mathcal{F}_{t,y,x} = \sum_{k=1}^{C} \sum_{j=1}^{K_H} \sum_{i=1}^{K_W} \sum_{r_0=0}^{R_0-1} \sum_{r_1=0}^{R_1-1} \mathcal{H}_{r_0,r_1,j,i} \, \mathbf{U}^{(T)}_{t,r_0} \, \mathbf{U}^{(C)}_{k,r_1} \, \mathcal{X}_{k,j+y,i+x} \qquad (3)$$

In other words, this is equivalent to first transforming the number of channels, then applying a (small) convolution before returning from the rank to the target number of channels.
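The three-stage reading of Equation 3 (a 1 × 1 convolution, a small spatial convolution on the reduced channels, then a second 1 × 1 convolution) can be checked numerically. The sketch below is an illustration under stated assumptions, not the authors' implementation: it uses NumPy, a naive hypothetical helper `cross_correlate`, made-up sizes and random factors, and 0-based kernel offsets. It compares the factorized pipeline with a dense kernel rebuilt from the same factors.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def cross_correlate(x, w):
    """Valid 2D cross-correlation (hypothetical helper).

    x: (C, H, W) input, w: (T, C, KH, KW) kernel -> (T, H-KH+1, W-KW+1).
    """
    kh, kw = w.shape[2:]
    # windows[c, y, x, j, i] == x[c, y + j, x + i]
    windows = sliding_window_view(x, (kh, kw), axis=(1, 2))
    return np.einsum("tcji,cyxji->tyx", w, windows)


rng = np.random.default_rng(0)
C, T, R1, R0, KH, KW = 6, 5, 3, 4, 3, 3          # assumed sizes, for illustration only
X = rng.standard_normal((C, 8, 8))
U_C = rng.standard_normal((C, R1))               # input-channel factor U^(C)
U_T = rng.standard_normal((T, R0))               # output-channel factor U^(T)
H_core = rng.standard_normal((R0, R1, KH, KW))   # core with spatial factors absorbed

# Dense kernel: W[t, k, j, i] = sum_{r0, r1} H[r0, r1, j, i] U_T[t, r0] U_C[k, r1]
W_dense = np.einsum("abji,ta,kb->tkji", H_core, U_T, U_C)
F_dense = cross_correlate(X, W_dense)

# Factorized pipeline: 1x1 conv (C -> R1), KH x KW conv (R1 -> R0), 1x1 conv (R0 -> T)
Z = np.einsum("kb,kyx->byx", U_C, X)
Z = cross_correlate(Z, H_core)
F_tucker = np.einsum("ta,ayx->tyx", U_T, Z)

max_abs_diff = float(np.abs(F_dense - F_tucker).max())
print(F_dense.shape, max_abs_diff)
```

Both paths produce the same feature map up to floating-point error, while the factorized one never materializes the full $T \times C \times K_H \times K_W$ kernel.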
The equivalence with a sequence of three convolutions can be seen by rearranging the terms of Equation 3:

$$\mathcal{F}_{t,y,x} = \underbrace{\sum_{r_0=0}^{R_0-1} \mathbf{U}^{(T)}_{t,r_0} \underbrace{\sum_{j=1}^{K_H} \sum_{i=1}^{K_W} \sum_{r_1=0}^{R_1-1} \mathcal{H}_{r_0,r_1,j,i} \underbrace{\left[ \sum_{k=1}^{C} \mathbf{U}^{(C)}_{k,r_1} \, \mathcal{X}(k, j+y, i+x) \right]}_{1 \times 1 \text{ conv}}}_{K_H \times K_W \text{ conv}}}_{1 \times 1 \text{ conv}}$$

In short, this simplifies to the following expression, also illustrated in Figure 3:

$$\mathcal{F} = \left( \mathcal{X} \times_0 \mathbf{U}^{(C)\top} \right) \star \mathcal{H} \times_0 \mathbf{U}^{(T)} \qquad (4)$$

Figure 3: Illustration of a Tucker convolution expressed as a series of small efficient convolutions. Note that this is the approach taken by ResNet for the Bottleneck blocks.

3.4. Efficient architectures in a tensor framework

While tensor decompositions have been explored in the field of mathematics for decades and in the context of deep learning for years, they are regularly rediscovered and re-introduced in different forms. Here, we revisit popular deep neural network architectures under the lens of tensor factorization. Specifically, we show how these blocks can be obtained from a regular convolution by applying tensor decomposition to its kernel. In practice, batch-normalisation layers and non-linearities are inserted in between the intermediary convolutions to facilitate learning from scratch.

ResNet Bottleneck block. [9] introduced a block, coined Bottleneck block, in their seminal work on deep residual networks. It consists of a series of a 1 × 1 convolution, to reduce the number of channels, a smaller regular (3 × 3) convolution, and another 1 × 1 convolution to restore the rank to the desired number of output channels. Based on the equivalence derived in Section 3.3, it is straightforward to see this as applying Tucker decomposition to the kernel of a regular convolution.

ResNext and Xception. ResNext [48] builds on this bottleneck architecture, which, as we have shown, is equivalent to applying Tucker decomposition to the convolutional kernel.
In order to reduce the rank further, the output is expressed as a sum of such bottlenecks, each with a lower rank. This can be reformulated efficiently using grouped convolution [48]. In parallel, a similar approach was proposed by [2], but without the 1 × 1 convolution following the grouped depthwise convolution.

MobileNet v1. MobileNet v1 [10] uses building blocks made of a depthwise separable convolution (the spatial part of the convolution) followed by a 1 × 1 convolution to adjust the number of output channels. This can be readily obtained from a CP decomposition (Section 3.2) as follows: first we write the convolutional weight tensor as detailed in Equation 2, with a rank equal to the number of input channels, i.e. $R = C$. The first depthwise-separable convolution can be obtained by combining the two spatial 1D convolutions $\mathbf{U}^{(H)}$ and $\mathbf{U}^{(W)}$. This results in a single spatial factor $\mathbf{U}^{(S)} \in \mathbb{R}^{H \times W \times R}$, such that $\mathbf{U}^{(S)}_{j,i,r} = \mathbf{U}^{(H)}_{j,r} \mathbf{U}^{(W)}_{i,r}$. The 1 × 1 convolution is then given by the matrix product of the remaining factors, $\mathbf{U}^{(F)} = \mathbf{U}^{(T)} \mathbf{U}^{(C)\top} \in \mathbb{R}^{T \times C}$. This is illustrated in Figure 4.

Figure 4: MobileNet blocks are a special case of CP convolutions, without the first convolution and with the spatial factors combined into one.

MobileNet v2. MobileNet v2 [39] employs a similar approach by grouping the spatial factors into one spatial factor $\mathbf{U}^{(S)} \in \mathbb{R}^{H \times W \times R}$, as explained previously for the case of MobileNet. However, the other factors are left untouched. The rank of the decomposition, in this case, corresponds, for each layer, to the expansion factor × the number of input channels. This results in two 1 × 1 convolutions and a 3 × 3 depthwise separable convolution. Finally, the kernel weight tensor (displayed graphically in Figure 5) is expressed as:

$$\mathcal{W}_{t,s,j,i} = \sum_{r=0}^{R-1} \mathbf{U}^{(T)}_{t,r} \, \mathbf{U}^{(C)}_{s,r} \, \mathbf{U}^{(S)}_{j,i,r} \qquad (5)$$

In practice, MobileNet-v2 also includes batch-normalisation layers and non-linearities as well as a skip connection to facilitate learning.

Figure 5: MobileNet-v2 blocks are a special case of CP convolutions, with the spatial factors merged into a depthwise separable convolution.

4. Factorized higher-order convolutions

We propose to generalize the framework introduced above to convolutions of any arbitrary order. Specifically, we express, in the general case, separable N-D convolutions as a series of tensor contractions and 1D convolutions. We show how this is derived from a CP convolution on the N-dimensional kernel. We then detail how to expand our proposed factorized higher-order convolutions, trained in N dimensions, to (N + 1) dimensions.

Efficient N-D convolutions via higher-order factorization. In particular, here, we consider an (N + 1)th-order input activation tensor $\mathcal{X} \in \mathbb{R}^{C \times D_0 \times \cdots \times D_{N-1}}$, corresponding to N dimensions with C channels. We define a general, high-order separable convolution $\Phi$ defined by a kernel $\mathcal{W} \in \mathbb{R}^{T \times C \times K_0 \times \cdots \times K_{N-1}}$ and expressed as a Kruskal tensor, i.e. $\mathcal{W} = [\![ \boldsymbol{\lambda};\, \mathbf{U}^{(T)}, \mathbf{U}^{(C)}, \mathbf{U}^{(K_0)}, \cdots, \mathbf{U}^{(K_{N-1})} ]\!]$. We can then write:

$$\Phi(\mathcal{X})_{t, i_0, \cdots, i_{N-1}} = \sum_{r=0}^{R-1} \sum_{s=0}^{C-1} \sum_{k_0=0}^{K_0-1} \cdots \sum_{k_{N-1}=0}^{K_{N-1}-1} \lambda_r \, \mathbf{U}^{(T)}_{t,r} \, \mathbf{U}^{(C)}_{s,r} \, \mathbf{U}^{(K_0)}_{k_0,r} \cdots \mathbf{U}^{(K_{N-1})}_{k_{N-1},r} \, \mathcal{X}_{s,\, i_0 + k_0, \cdots, i_{N-1} + k_{N-1}}$$

By rearranging the terms, this expression can be rewritten as:

$$\mathcal{F} = \rho\left( \mathcal{X} \times_0 \mathbf{U}^{(C)\top} \right) \times_0 \left( \mathbf{U}^{(T)} \operatorname{diag}(\boldsymbol{\lambda}) \right) \qquad (6)$$

where $\rho$ applies the 1D spatial convolutions:

$$\rho(\mathcal{X}) = \mathcal{X} \star_1 \mathbf{U}^{(K_0)} \star_2 \mathbf{U}^{(K_1)} \star \cdots \star_N \mathbf{U}^{(K_{N-1})}$$

Tensor decompositions (and, in particular, decomposed convolutions) are notoriously hard to train end-to-end [12, 27, 46].
As a result, most existing approaches rely on first training an uncompressed network, then decomposing the convolutional kernels before replacing each convolution with its efficient rewriting and fine-tuning to recover lost performance. However, this approach is not suitable for higher-order convolutions, where it might not be practical to train the full N-D convolution. It is possible to facilitate training from scratch by absorbing the magnitude of the factors into the weight vector $\boldsymbol{\lambda}$. We can also add non-linearities $\Psi$ (e.g. batch normalisation combined with ReLU), leading to the following expression, which results in an efficient higher-order CP convolution:

$$\mathcal{F} = \rho\left( \Psi\left( \mathcal{X} \times_0 \mathbf{U}^{(C)\top} \right) \right) \times_0 \left( \mathbf{U}^{(T)} \operatorname{diag}(\boldsymbol{\lambda}) \right) \qquad (7)$$

Skip connections can also be added by introducing an additional factor $\mathbf{U}^{(S)} \in \mathbb{R}^{T \times C}$ and using $\mathcal{F}' = \mathcal{X} + \mathcal{F} \times_0 \mathbf{U}^{(S)}$.

This formulation is significantly more efficient than that of a regular convolution. Let us consider an N-dimensional convolution with $C$ input channels and $T$ output channels, i.e. a weight $\mathcal{W} \in \mathbb{R}^{C \times T \times I_0 \times \cdots \times I_{N-1}}$. A regular N-D convolution then has $C \times T \times \prod_{k=0}^{N-1} I_k$ parameters. By contrast, our HO-CP convolution with rank $R$ has only $R \left( C + T + \sum_{k=0}^{N-1} I_k + 1 \right)$ parameters. The $+1$ term accounts for the weights $\boldsymbol{\lambda}$. For instance, for a 3D convolution with a cubic kernel (of size $K \times K \times K$), a regular 3D convolution would have $C T K^3$ parameters, versus only $R(C + T + 3K + 1)$ for our proposed factorized version.

This reduction in the number of parameters translates into much more efficient operation in terms of floating point operations (FLOPs). We show, in Figure 6, a visualisation of the number of Giga-FLOPs (GFLOPs, with 1 GFLOP = 1e9 FLOPs) for both a regular 3D convolution and our proposed approach, for an input of size 32 × 32 × 16, varying the number of input and output channels, with a kernel size of 3 × 3 × 3.
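To make the parameter counts above concrete, and to illustrate the separable computation they correspond to, here is a minimal NumPy sketch. It is a sanity check under stated assumptions rather than the authors' implementation: the helper names (`conv1d_along`, `dense_conv3d`, `cp_conv3d`, `cp_param_count`, `dense_param_count`) and all sizes are hypothetical, and the non-linearity Ψ and the skip connection are left out.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def conv1d_along(x, u, axis):
    """Depthwise valid 1D cross-correlation along `axis`.

    x: (R, ...) with the rank on axis 0; u: (K, R), one 1D filter per rank component.
    """
    win = sliding_window_view(x, u.shape[0], axis=axis)   # window axis is appended last
    return np.einsum("r...k,kr->r...", win, u)


def dense_conv3d(x, w):
    """Valid 3D cross-correlation. x: (C, D0, D1, D2), w: (T, C, K0, K1, K2)."""
    win = sliding_window_view(x, w.shape[2:], axis=(1, 2, 3))
    return np.einsum("tcijk,cxyzijk->txyz", w, win)


def cp_conv3d(x, lam, U_T, U_C, spatial_factors):
    """Separable evaluation: channel contraction, N 1D convolutions, output mapping."""
    z = np.einsum("cr,c...->r...", U_C, x)                 # C -> R channels
    for axis, u in enumerate(spatial_factors, start=1):
        z = conv1d_along(z, u, axis)                       # one 1D conv per spatial mode
    return np.einsum("tr,r,r...->t...", U_T, lam, z)       # scale by lambda, R -> T


def cp_param_count(rank, c_in, c_out, kernel_sizes):
    return rank * (c_in + c_out + sum(kernel_sizes) + 1)   # +1 accounts for lambda


def dense_param_count(c_in, c_out, kernel_sizes):
    return c_in * c_out * int(np.prod(kernel_sizes))


rng = np.random.default_rng(0)
C, T, R, K = 4, 5, 3, (3, 3, 3)                            # assumed toy sizes
X = rng.standard_normal((C, 6, 7, 8))
lam = rng.standard_normal(R)
U_T = rng.standard_normal((T, R))
U_C = rng.standard_normal((C, R))
U_K = [rng.standard_normal((k, R)) for k in K]

# Dense kernel rebuilt from the Kruskal factors, used only as a reference.
W = np.einsum("r,tr,cr,ir,jr,kr->tcijk", lam, U_T, U_C, *U_K)
F_dense = dense_conv3d(X, W)
F_cp = cp_conv3d(X, lam, U_T, U_C, U_K)

# Parameter counts for 256 -> 256 channels, 3x3x3 kernel, rank = 3 x input channels.
n_dense = dense_param_count(256, 256, K)                   # 256 * 256 * 27
n_cp = cp_param_count(3 * 256, 256, 256, K)                # 768 * (256 + 256 + 9 + 1)
print(np.allclose(F_dense, F_cp), n_dense, n_cp)
```

In this setting the dense layer has 1,769,472 parameters and the factorized one 400,896, even though the rank is three times the number of input channels.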
Figure 6: Comparison of the number of Giga-FLOPs between regular 3D convolutions and our proposed method. We consider inputs of size 32 × 32 × 16, and vary the number of input and output channels (the x-axis shows input × output channels, from 128 × 128 to 512 × 512). Our proposed CP-HO convolution, here for a rank equal to 6 and 3 times the input channels (CP-HOConv-6 and CP-HOConv-3), has significantly fewer FLOPs than a regular convolution (3D-Conv).

Higher-Order Transduction. Here, we introduce transduction, which allows one to first train an N-dimensional convolution and then expand it to (N + K) dimensions, K > 0.

Thanks to the efficient formulation introduced in Equation 7, we now have an effective way to go from N dimensions to N + 1. We place ourselves in the same setting as for Equation 7, where we have a regular N-D convolution with spatial dimensions, and extend the model to N + 1 dimensions. To do so, we introduce a new factor $\mathbf{U}^{(K_{N+1})} \in \mathbb{R}^{K_{N+1} \times R}$ corresponding to the new (N + 1)th dimension. The final formulation is then:

$$\mathcal{F} = \hat{\rho}\left( \Psi\left( \mathcal{X} \times_0 \mathbf{U}^{(C)\top} \right) \right) \times_0 \left( \mathbf{U}^{(T)} \operatorname{diag}(\boldsymbol{\lambda}) \right), \qquad (8)$$

with $\hat{\rho}(\mathcal{X}) = \rho(\mathcal{X}) \star_{N+1} \mathbf{U}^{(K_{N+1})}$.

Note that only the new factor needs to be trained, i.e. transduction can be done by simply training $K_{N+1} \times R$ additional parameters.

Automatic Rank Selection. Our proposed factorized higher-order convolutions introduce a new additional parameter, corresponding to the rank of the factorization. This can be efficiently incorporated in the formulation by introducing a vector of weights, represented by $\boldsymbol{\lambda}$ in Equation 8. This allows us to automatically tune the rank of each of the layers by introducing an additional Lasso term in the loss function, e.g., an $\ell_1$ regularization on $\boldsymbol{\lambda}$. Let $\boldsymbol{\lambda}_l$ be the vector of weights for each layer $l \in [0 \,..\, L-1]$ of our neural network, associated with a loss $\mathcal{L}$.
The overall loss with regularization then becomes $\mathcal{L}_{\text{reg}} = \mathcal{L} + \gamma \sum_{l=0}^{L-1} \| \boldsymbol{\lambda}_l \|_1$, where $\gamma$ controls the amount of sparsity in the weights.

5. Experimental setting

Datasets for In-The-Wild Affect Estimation. We validate the performance of our approach on established large-scale datasets for continuous affect estimation in-the-wild.

AffectNet [30] is a large static dataset of human faces, labelled in terms of facial landmarks, emotion categories as well as valence and arousal values. It contains more than a million images, including 450,000 manually labelled by twelve expert annotators.

AFEW-VA [23] is composed of video clips taken from feature films and accurately annotated per frame in terms of continuous levels of valence and arousal. In addition, 68 accurate facial landmarks are provided for each frame.

SEWA [24] is the largest video dataset for affect estimation in-the-wild. It contains over 2000 minutes of audio and video data annotated in terms of facial landmarks, valence and arousal values. It contains 398 subjects from six different cultures, is gender balanced and uniformly spans the age range 18 to 65.

Implementation details. We implemented all models using PyTorch [33] and TensorLy [22]. In all cases, we divided the dataset into subject-independent training, validation and testing sets. For our factorized higher-order convolutional network, we further removed the flattening and fully-connected layers and replaced them with a single tensor regression layer [21] in order to fully preserve the structure in the activations. For training, we employed an Adam optimizer [16] and validated the learning rate in the range $[10^{-5}; 0.01]$, the beta parameters in the range $[0.0; 0.999]$ and the weight decay in the range $[0.0; 0.01]$ using a randomized grid search. We also decreased the learning rate by a factor of 10 every 15 epochs.
The regularization parameter $\gamma$ was validated in the range $[10^{-4}; 1.0]$ on AffectNet and consequently set to 0.01 for all other experiments.

For our baseline we use both a 3D ResNet and a ResNet (2+1)D [47], both with a ResNet-18 backbone. For our method, we use the same ResNet-18 architecture but replace each of the convolutional layers with our proposed higher-order factorized convolution. We initialised the rank so that the number of parameters would be the same as in the original convolution. When performing transduction, the temporal factors added to the CP convolutions are initialized to a constant value of one. In a first step, these factors are optimized while keeping the remaining parameters fixed. The whole network is then fine-tuned. This prevents the transduced factors from polluting what has already been learnt in the static case. The full process is summarized in Figure 1.

Performance metrics and loss function. In all cases, we report performance for the RMSE, SAGR, PCC, and CCC, which are common metrics employed in affect estimation. Let $y$ be a ground-truth signal and $\hat{y}$ the associated prediction by the model.

The RMSE is the well-known Root Mean Square Error: $\text{RMSE}(y, \hat{y}) = \sqrt{\mathbb{E}\left( (y - \hat{y})^2 \right)}$.

The SAGR assesses whether the signs of the two signals agree: $\text{SAGR}(y, \hat{y}) = \frac{1}{n} \sum_{i=1}^{n} \delta\left( \operatorname{sign}(y_i), \operatorname{sign}(\hat{y}_i) \right)$.

The PCC is the Pearson product-moment correlation coefficient and measures how correlated the two signals are: $\text{PCC}(y, \hat{y}) = \frac{\mathbb{E}\left[ (y - \mu_y)(\hat{y} - \mu_{\hat{y}}) \right]}{\sigma_y \sigma_{\hat{y}}}$.

The CCC is Lin's Concordance Correlation Coefficient and assesses the correlation of the two signals but also how close the two signals are: $\text{CCC}(y, \hat{y}) = \frac{2 \sigma_y \sigma_{\hat{y}} \, \text{PCC}(y, \hat{y})}{\sigma_y^2 + \sigma_{\hat{y}}^2 + (\mu_y - \mu_{\hat{y}})^2}$.

The goal for continuous affect estimation is typically to maximize the correlation coefficients PCC and CCC.
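The four metrics can be written in a few lines of NumPy. This is a sketch under stated assumptions rather than the evaluation code behind the reported tables: expectations are replaced by sample means, population (biased) standard deviations are used, and δ is taken to be 1 when the two signs agree and 0 otherwise. The toy signals are made up for illustration.

```python
import numpy as np


def rmse(y, y_hat):
    """Root mean square error."""
    return float(np.sqrt(np.mean((y - y_hat) ** 2)))


def sagr(y, y_hat):
    """Sign agreement: fraction of samples whose signs match."""
    return float(np.mean(np.sign(y) == np.sign(y_hat)))


def pcc(y, y_hat):
    """Pearson correlation coefficient (population statistics)."""
    cov = np.mean((y - y.mean()) * (y_hat - y_hat.mean()))
    return float(cov / (y.std() * y_hat.std()))


def ccc(y, y_hat):
    """Lin's concordance correlation coefficient."""
    num = 2.0 * y.std() * y_hat.std() * pcc(y, y_hat)
    den = y.var() + y_hat.var() + (y.mean() - y_hat.mean()) ** 2
    return float(num / den)


# Toy signals: the prediction is perfectly correlated with the target,
# but rescaled and shifted, so PCC stays at 1 while CCC drops below 1.
y = np.array([-0.5, -0.1, 0.2, 0.6, 0.9])
y_hat = 0.5 * y + 0.1

print(rmse(y, y_hat), sagr(y, y_hat), pcc(y, y_hat), ccc(y, y_hat))
```

The toy example shows why both correlation measures are reported: PCC is insensitive to scale and offset errors, whereas CCC penalizes them.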
However, minimizing the RMSE also helps maximize the correlations, as it gives a lower error in each individual prediction. Our regression loss function reflects this by incorporating three terms:

$$\mathcal{L} = \frac{1}{\alpha + \beta + \gamma} \left( \alpha \mathcal{L}_{RMSE} + \beta \mathcal{L}_{PCC} + \gamma \mathcal{L}_{CCC} \right),$$

with $\mathcal{L}_{RMSE} = \text{RMSE}_{\text{valence}} + \text{RMSE}_{\text{arousal}}$, $\mathcal{L}_{PCC} = 1 - \frac{\text{PCC}_{\text{valence}} + \text{PCC}_{\text{arousal}}}{2}$ and $\mathcal{L}_{CCC} = 1 - \frac{\text{CCC}_{\text{valence}} + \text{CCC}_{\text{arousal}}}{2}$. The coefficients $\alpha$, $\beta$ and $\gamma$ are shake-shake regularization coefficients [5], sampled uniformly at random in the range $[0; 1]$. This ensures that none of the terms is ignored during optimization. On AffectNet, where discrete classes of emotions are available, we jointly perform a regression of the valence and arousal values and a classification of the emotional class by adding a cross-entropy term to the loss function.

6. Performance evaluation

In this section, we report the performance of our methods for facial affect estimation in the wild. First, we report results on static images. We then show how the higher-order transduction allows us to extend these models to the temporal domain. In all cases, we compare with the state-of-the-art and show superior results.

Static affect analysis in-the-wild with factorized CNNs. First we show the performance of our models trained and tested on individual (static) imagery. We train our method on AffectNet, which is the largest database but consists of static images only. There, our method outperforms all other works by a large margin (Table 1). We observe similar results on SEWA (Table 2) and AFEW-VA (Table 3). In the supplementary document, we also report results on LSEMSW [11] and CIFAR10 [25].
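The three-term regression loss introduced in the experimental setting can be sketched as follows. This is a hedged illustration only: the function name, the `(valence, arousal)` tuple layout, and the optional `coeffs` and `rng` arguments are assumptions made so the example is self-contained and reproducible. The per-dimension metric values are assumed to be computed elsewhere.

```python
import random


def combined_regression_loss(rmse, pcc, ccc, coeffs=None, rng=None):
    """Weighted mean of the RMSE, PCC and CCC loss terms.

    rmse, pcc, ccc: (valence, arousal) pairs of metric values.
    coeffs: optional (alpha, beta, gamma); when omitted they are drawn
            uniformly in [0, 1], as for shake-shake coefficients.
    """
    if coeffs is None:
        rng = rng or random.Random()
        # All three draws being exactly 0 would divide by zero; this is
        # vanishingly unlikely and left unhandled in this sketch.
        coeffs = tuple(rng.uniform(0.0, 1.0) for _ in range(3))
    alpha, beta, gamma = coeffs
    l_rmse = rmse[0] + rmse[1]
    l_pcc = 1.0 - (pcc[0] + pcc[1]) / 2.0
    l_ccc = 1.0 - (ccc[0] + ccc[1]) / 2.0
    weighted = alpha * l_rmse + beta * l_pcc + gamma * l_ccc
    return weighted / (alpha + beta + gamma)
```

Because the coefficients are normalized by their sum, the result is always a convex combination of the three terms, so no single draw can push the loss outside the range spanned by them.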
Temporal prediction via higher-order transduction. We then apply transduction, as described in the method section, to convert the static models from SEWA (Table 2, temporal case) and AFEW-VA (Table 3, temporal case) to the temporal domain, where we simply train the added temporal factors. This approach allows temporal models to be trained efficiently even on small datasets. Our method outperforms other approaches, despite having only 11 million parameters, compared to 33 million for the corresponding 3D ResNet-18 and 31 million for a (2+1)D ResNet-18. Interestingly, in all cases, we notice that valence is better predicted than arousal, which is in line with the finding by psychologists that humans are better at estimating valence from visual data [38, 6].

Table 1: Results on the AffectNet dataset

| Network | Acc. | Valence RMSE | Valence SAGR | Valence PCC | Valence CCC | Arousal RMSE | Arousal SAGR | Arousal PCC | Arousal CCC |
|---|---|---|---|---|---|---|---|---|---|
| AffectNet baseline [30] | 0.58 | 0.37 | 0.74 | 0.66 | 0.60 | 0.41 | 0.65 | 0.54 | 0.34 |
| Face-SSD [13] | - | 0.44 | 0.73 | 0.58 | 0.57 | 0.39 | 0.71 | 0.50 | 0.47 |
| VGG-Face+2M imgs [18] | 0.60 | 0.37 | 0.78 | 0.66 | 0.62 | 0.39 | 0.75 | 0.55 | 0.54 |
| Baseline ResNet-18 | 0.55 | 0.35 | 0.79 | 0.68 | 0.66 | 0.33 | 0.8 | 0.58 | 0.57 |
| Ours | 0.59 | 0.35 | 0.79 | 0.71 | 0.71 | 0.32 | 0.8 | 0.63 | 0.63 |

Table 2: Results on the SEWA database

| Case | Network | Valence RMSE | Valence SAGR | Valence PCC | Valence CCC | Arousal RMSE | Arousal SAGR | Arousal PCC | Arousal CCC |
|---|---|---|---|---|---|---|---|---|---|
| Static | [24] | - | - | 0.32 | 0.31 | - | - | 0.18 | 0.20 |
| Static | VGG16+TRL [29] | 0.33 | - | 0.50 | 0.47 | 0.39 | - | 0.44 | 0.39 |
| Static | ResNet-18 | 0.37 | 0.62 | 0.33 | 0.29 | 0.52 | 0.62 | 0.28 | 0.19 |
| Static | Ours | 0.33 | 0.65 | 0.64 | 0.6 | 0.39 | 0.75 | 0.48 | 0.44 |
| Temporal | ResNet-3D | 0.37 | 0.59 | 0.47 | 0.41 | 0.41 | 0.69 | 0.29 | 0.21 |
| Temporal | ResNet-(2+1)D | 0.35 | 0.63 | 0.59 | 0.49 | 0.41 | 0.63 | 0.39 | 0.31 |
| Temporal | Ours (scratch) | 0.33 | 0.63 | 0.62 | 0.54 | 0.40 | 0.72 | 0.42 | 0.32 |
| Temporal | Ours (transduction) | 0.24 | 0.69 | 0.84 | 0.75 | 0.32 | 0.80 | 0.60 | 0.52 |

Table 3: Results on the AFEW-VA database

| Case | Network | Valence RMSE | Valence SAGR | Valence PCC | Valence CCC | Arousal RMSE | Arousal SAGR | Arousal PCC | Arousal CCC |
|---|---|---|---|---|---|---|---|---|---|
| Static | RF Hybrid DCT [23] | 0.27 | - | 0.407 | - | 0.23 | - | 0.45 | - |
| Static | ResNet50+TRL [29] | 0.40 | - | 0.33 | 0.33 | 0.41 | - | 0.42 | 0.4 |
| Static | ResNet-18 | 0.43 | 0.42 | 0.05 | 0.03 | 0.41 | 0.68 | 0.06 | 0.05 |
| Static | Ours | 0.24 | 0.64 | 0.55 | 0.55 | 0.24 | 0.77 | 0.57 | 0.52 |
| Temporal | Baseline ResNet-18-3D | 0.26 | 0.56 | 0.19 | 0.17 | 0.22 | 0.77 | 0.33 | 0.29 |
| Temporal | ResNet-18-(2+1)D | 0.31 | 0.50 | 0.17 | 0.16 | 0.29 | 0.73 | 0.33 | 0.20 |
| Temporal | AffWildNet [19] | - | - | 0.51 | 0.52 | - | - | 0.575 | 0.556 |
| Temporal | Ours (scratch) | 0.28 | 0.53 | 0.12 | 0.11 | 0.19 | 0.75 | 0.23 | 0.15 |
| Temporal | Ours (transduction) | 0.20 | 0.67 | 0.64 | 0.57 | 0.21 | 0.79 | 0.62 | 0.56 |

Using the automatic rank selection procedure detailed in section 4, we let the model learn end-to-end the rank of each of the factorized higher-order convolutions. We found that, on average, 8 to 15% of the parameters can be set to zero for optimal performance. In practice, about 1 million (of the 11 million) parameters were set to zero by the Lasso regularization. An in-depth study of the effect of the automatic rank selection is provided in the supplementary document.

7. Conclusion

We established the link between tensor factorizations and efficient convolutions in a unified framework. Based on this, we proposed a factorized higher-order (N-dimensional) convolutional block. This results in efficient models that outperform traditional networks, while being more computationally and memory efficient. We also introduced a higher-order transduction algorithm for converting the expressive power of trained $N$-dimensional convolutions to any $N + K$ dimensions. We then applied our approach to continuous facial affect estimation in naturalistic conditions. Using transduction, we transferred the performance of the models trained on static images to the temporal domain and reported state-of-the-art results in both cases.

References

[1] Marcella Astrid and Seung-Ik Lee. Cp-decomposition with tensor power method for convolutional neural networks compression. CoRR, abs/1701.07148, 2017.
[2] François Chollet. Xception: Deep learning with depthwise separable convolutions. In Computer Vision and Pattern Recognition, 2017.
[3] Nadav Cohen, Or Sharir, and Amnon Shashua.
On the expressive power of deep learning: A tensor analysis. In Conference on Learning Theory, pages 698–728, 2016.
[4] Timur Garipov, Dmitry Podoprikhin, Alexander Novikov, and Dmitry Vetrov. Ultimate tensorization: compressing convolutional and fc layers alike. NIPS workshop: Learning with Tensors: Why Now and How?, 2016.
[5] Xavier Gastaldi. Shake-shake regularization. arXiv preprint arXiv:1705.07485, 2017.
[6] Michael Grimm and Kristian Kroschel. Emotion estimation in speech using a 3d emotion space concept. In Michael Grimm and Kristian Kroschel, editors, Robust Speech, chapter 16. IntechOpen, Rijeka, 2007.
[7] Julia Gusak, Maksym Kholiavchenko, Evgeny Ponomarev, Larisa Markeeva, Philip Blagoveschensky, Andrzej Cichocki, and Ivan Oseledets. Automated multi-stage compression of neural networks. In The IEEE International Conference on Computer Vision (ICCV) Workshops, Oct 2019.
[8] Kaiming He, Xiangyu Zhang, Shaoqing Ren, and Jian Sun. Deep residual learning for image recognition. In Computer Vision and Pattern Recognition, 2016.
[9] K. He, X. Zhang, S. Ren, and J. Sun. Deep residual learning for image recognition. In Computer Vision and Pattern Recognition, 2016.
[10] Andrew G. Howard, Menglong Zhu, Bo Chen, Dmitry Kalenichenko, Weijun Wang, Tobias Weyand, Marco Andreetto, and Hartwig Adam. Mobilenets: Efficient convolutional neural networks for mobile vision applications. CoRR, abs/1704.04861, 2017.
[11] Guosheng Hu, Li Liu, Yang Yuan, Zehao Yu, Yang Hua, Zhihong Zhang, Fumin Shen, Ling Shao, Timothy Hospedales, Neil Robertson, et al. Deep multi-task learning to recognise subtle facial expressions of mental states. In ECCV, pages 103–119, 2018.
[12] M. Jaderberg, A. Vedaldi, and A. Zisserman. Speeding up convolutional neural networks with low rank expansions. In British Machine Vision Conference, 2014.
[13] Youngkyoon Jang, Hatice Gunes, and Ioannis Patras.
Registration-free face-ssd: Single shot analysis of smiles, facial attributes, and affect in the wild. Computer Vision and Image Understanding, 2019.
[14] Majid Janzamin, Rong Ge, Jean Kossaifi, Anima Anandkumar, et al. Spectral learning on matrices and tensors. Foundations and Trends in Machine Learning, 12(5-6):393–536, 2019.
[15] Yong-Deok Kim, Eunhyeok Park, Sungjoo Yoo, Taelim Choi, Lu Yang, and Dongjun Shin. Compression of deep convolutional neural networks for fast and low power mobile applications. ICLR, 2016.
[16] Diederik P Kingma and Jimmy Ba. Adam: A method for stochastic optimization. arXiv preprint arXiv:1412.6980, 2014.
[17] Tamara G. Kolda and Brett W. Bader. Tensor decompositions and applications. SIAM Review, 51(3):455–500, 2009.
[18] Dimitrios Kollias, Shiyang Cheng, Evangelos Ververas, Irene Kotsia, and Stefanos Zafeiriou. Generating faces for affect analysis. CoRR, abs/1811.05027, 2018.
[19] Dimitrios Kollias, Panagiotis Tzirakis, Mihalis A Nicolaou, Athanasios Papaioannou, Guoying Zhao, Björn Schuller, Irene Kotsia, and Stefanos Zafeiriou. Deep affect prediction in-the-wild: Aff-wild database and challenge, deep architectures, and beyond. International Journal of Computer Vision, 127(6-7):907–929, 2019.
[20] Jean Kossaifi, Aran Khanna, Zachary Lipton, Tommaso Furlanello, and Anima Anandkumar. Tensor contraction layers for parsimonious deep nets. In Computer Vision and Pattern Recognition Workshops (CVPRW), 2017.
[21] Jean Kossaifi, Zachary C. Lipton, Aran Khanna, Tommaso Furlanello, and Anima Anandkumar. Tensor regression networks. CoRR, abs/1707.08308, 2018.
[22] Jean Kossaifi, Yannis Panagakis, Anima Anandkumar, and Maja Pantic. Tensorly: Tensor learning in python. Journal of Machine Learning Research, 20(26):1–6, 2019.
[23] Jean Kossaifi, Georgios Tzimiropoulos, Sinisa Todorovic, and Maja Pantic.
Afew-va database for valence and arousal estimation in-the-wild. Image and Vision Computing, 65:23–36, 2017.
[24] J. Kossaifi, R. Walecki, Y. Panagakis, J. Shen, M. Schmitt, F. Ringeval, J. Han, V. Pandit, A. Toisoul, B. W. Schuller, K. Star, E. Hajiyev, and M. Pantic. Sewa db: A rich database for audio-visual emotion and sentiment research in the wild. IEEE Transactions on Pattern Analysis and Machine Intelligence, pages 1–1, 2019.
[25] Alex Krizhevsky and Geoffrey Hinton. Learning multiple layers of features from tiny images. Technical report, Citeseer, 2009.
[26] Alex Krizhevsky, Ilya Sutskever, and Geoffrey E Hinton. Imagenet classification with deep convolutional neural networks. In Advances in neural information processing systems, pages 1097–1105, 2012.
[27] Vadim Lebedev, Yaroslav Ganin, Maksim Rakhuba, Ivan V. Oseledets, and Victor S. Lempitsky. Speeding-up convolutional neural networks using fine-tuned cp-decomposition. In ICLR, 2015.
[28] Y. Lecun, L. Bottou, Y. Bengio, and P. Haffner. Gradient-based learning applied to document recognition. Proceedings of the IEEE, 86(11):2278–2324, Nov 1998.
[29] A. Mitenkova, J. Kossaifi, Y. Panagakis, and M. Pantic. Valence and arousal estimation in-the-wild with tensor methods. In IEEE International Conference on Automatic Face Gesture Recognition (FG 2019), 2019.
[30] Ali Mollahosseini, Behzad Hasani, and Mohammad H Mahoor. Affectnet: A database for facial expression, valence, and arousal computing in the wild. IEEE Transactions on Affective Computing, 10(1):18–31, 2017.
[31] Alexander Novikov, Dmitry Podoprikhin, Anton Osokin, and Dmitry Vetrov. Tensorizing neural networks. In Neural Information Processing Systems, 2015.
[32] I. V. Oseledets. Tensor-train decomposition. SIAM J. Sci. Comput., 33(5):2295–2317, Sept. 2011.
[33] Adam Paszke, Sam Gross, Soumith Chintala, Gregory Chanan, Edward Yang, Zachary DeVito, Zeming Lin, Alban Desmaison, Luca Antiga, and Adam Lerer. Automatic differentiation in pytorch. In NIPS-W, 2017.
[34] T Poggio and Q Liao. Theory i: Deep networks and the curse of dimensionality. Bulletin of the Polish Academy of Sciences: Technical Sciences, pages 761–773, 01 2018.
[35] Jonathan Posner, James A Russell, and Bradley S Peterson. The circumplex model of affect: An integrative approach to affective neuroscience, cognitive development, and psychopathology. Development and psychopathology, 17(3):715–734, 2005.
[36] R. Rigamonti, A. Sironi, V. Lepetit, and P. Fua. Learning separable filters. In 2013 IEEE Conference on Computer Vision and Pattern Recognition, June 2013.
[37] Fabien Ringeval, Björn Schuller, Michel Valstar, Nicholas Cummins, Roddy Cowie, Leili Tavabi, Maximilian Schmitt, Sina Alisamir, Shahin Amiriparian, Eva-Maria Messner, et al. Audio/visual emotion challenge 2019: State-of-mind, detecting depression with ai, and cross-cultural affect recognition. 2019.
[38] J.A. Russell, J.A. Bachorowski, and J.M. Fernandez-Dols. Facial and vocal expressions of emotions. Annu. Rev. Psychol., 54:329–349, 2003.
[39] Mark Sandler, Andrew Howard, Menglong Zhu, Andrey Zhmoginov, and Liang-Chieh Chen. Mobilenetv2: Inverted residuals and linear bottlenecks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2018.
[40] Evangelos Sariyanidi, Hatice Gunes, and Andrea Cavallaro. Automatic analysis of facial affect: A survey of registration, representation, and recognition. IEEE Transactions on Pattern Analysis and Machine Intelligence, 37(6):1113–1133, 2015.
[41] Nicholas D Sidiropoulos, Lieven De Lathauwer, Xiao Fu, Kejun Huang, Evangelos E Papalexakis, and Christos Faloutsos. Tensor decomposition for signal processing and machine learning.
IEEE Transactions on Signal Processing, 65(13):3551–3582, 2017.
[42] Karen Simonyan and Andrew Zisserman. Very deep convolutional networks for large-scale image recognition. arXiv preprint arXiv:1409.1556, 2014.
[43] Christian Szegedy, Sergey Ioffe, Vincent Vanhoucke, and Alexander A Alemi. Inception-v4, inception-resnet and the impact of residual connections on learning. In Thirty-First AAAI Conference on Artificial Intelligence, 2017.
[44] Christian Szegedy, Wei Liu, Yangqing Jia, Pierre Sermanet, Scott Reed, Dragomir Anguelov, Dumitru Erhan, Vincent Vanhoucke, and Andrew Rabinovich. Going deeper with convolutions. In Proceedings of the IEEE conference on computer vision and pattern recognition, 2015.
[45] Christian Szegedy, Vincent Vanhoucke, Sergey Ioffe, Jon Shlens, and Zbigniew Wojna. Rethinking the inception architecture for computer vision. In Proceedings of the IEEE conference on computer vision and pattern recognition, 2016.
[46] Cheng Tai, Tong Xiao, Xiaogang Wang, and Weinan E. Convolutional neural networks with low-rank regularization. CoRR, abs/1511.06067, 2015.
[47] Du Tran, Heng Wang, Lorenzo Torresani, Jamie Ray, Yann LeCun, and Manohar Paluri. A closer look at spatiotemporal convolutions for action recognition. In Proceedings of the IEEE conference on Computer Vision and Pattern Recognition, pages 6450–6459, 2018.
[48] Saining Xie, Ross Girshick, Piotr Dollár, Zhuowen Tu, and Kaiming He. Aggregated residual transformations for deep neural networks. In Proceedings of the IEEE conference on computer vision and pattern recognition, 2017.
[49] Yongxin Yang and Timothy M. Hospedales. Deep multi-task representation learning: A tensor factorisation approach. In ICLR, 2017.
[50] Stefanos Zafeiriou, Dimitrios Kollias, Mihalis A Nicolaou, Athanasios Papaioannou, Guoying Zhao, and Irene Kotsia. Aff-wild: Valence and arousal in-the-wild challenge.
In Computer Vision and Pattern Recognition Workshops (CVPRW), 2017.

APPENDIX

Here, we give additional details on the method, as well as results on other tasks (image classification on CIFAR-10, and gesture estimation from videos).

Dimensional model of affect

Discrete emotional classes are too coarse to summarize the full range of emotions displayed by humans on a daily basis. This is the reason why finer, dimensional affect models, such as valence and arousal, are now favoured by psychologists [38]. In this circumplex, which can be seen in Figure 7, the valence level corresponds to how positive or negative an emotion is, while the arousal level explains how calming or exciting the emotion is.

Figure 7: The valence and arousal circumplex. This dimensional model of affect covers the continuous range of emotions displayed by humans on a daily basis. The images are taken from the AffectNet dataset [30]. [Axes: Negative to Positive (valence) and Calming to Exciting (arousal). Emotions shown: Angry, Sleepy, Tired, Depressed, Bored, Neutral, Fear, Happy, Contempt, Surprised, Sad, Relaxed, Miserable, Excited, Disgust, Pleased.]

A visualization of the valence and arousal predictions of our model can be seen in Figure 8, along with some representative frames.

Automatic rank selection

Using the automatic rank selection procedure detailed in the method section, we let the model learn end-to-end the rank of each of the factorized higher-order convolutions.

In Figure 9, we show the number of parameters set to zero by the network for a regularization parameter of 0.01 and 0.05. The Lasso regularization is an easy way to automatically tune the rank. We found that, on average, 8 to 15% of the parameters can be set to zero for optimal performance. In practice, about 1 million parameters were removed thanks to this regularization.

In Table 4 we report the number of parameters of spatio-temporal baselines and compare it to our CP factorized model.
Besides having fewer parameters, our approach has the advantage of having a very low number of temporal parameters, which facilitates training on spatio-temporal data once the model has been pretrained on static data.

Table 4: Number of parameters optimized to train the temporal model

| Network | Total # parameters | # parameters removed with LASSO | # parameters optimized for video |
|---|---|---|---|
| ResNet18-(2+1)D | 31M | - | 31M |
| ResNet-18-3D | 33M | - | 33M |
| Ours [λ = 0.01] | 11M | 0.7 | 0.24 |
| Ours [λ = 0.05] | 11M | 1.3M | 0.24 |

Results on LSEMSW

LSEMSW [11] is the Large-scale Subtle Emotions and Mental States in the Wild database. It contains more than 175,000 static images annotated in terms of subtle emotions and cognitive states such as helpless, suspicious, arrogant, etc. We report results on LSEMSW in Table 5.

Table 5: Results on the LSEMSW database

| Method | Accuracy |
|---|---|
| ResNet 34 [11] | 28.39% |
| Ours | 34.55% |

Results on CIFAR-10

While in the paper we focus on affect estimation, we report here results on a traditional image classification dataset, CIFAR-10.

CIFAR-10 [25] is a dataset for image classification composed of 10 classes with 6,000 images each, divided into 5,000 images per class for training and 1,000 images per class for testing, on which we report the results.

We used a MobileNet-v2 as our baseline. For our approach, we simply replaced the full MobileNet-v2 blocks with ours (which, in the 2D case, differs from MobileNet-v2 by the use of two separable convolutions along the spatial dimensions instead of a single 2D kernel). We kept all the parameters the same for all experiments to allow for fair comparison and reported performance averaged across 3 runs. The standard deviation was 0.033 for MobileNet-v2 and 0.036 for our approach. We optimized the loss using stochastic gradient descent with a mini-batch size of 128, starting with a learning rate of 0.1, decreased by a factor of 10 after 150 and 250 epochs, for a total of 400 epochs, with a momentum of 0.9. We used a weight decay of $4 \times 10^{-5}$ for MobileNet-v2 and $10^{-4}$ for our approach. Optimization was done on a single NVIDIA 1080Ti GPU.

Figure 8: Evolution of the ground-truth (gt) and predicted (pred) levels of valence and arousal as a function of time, for one of the test videos of the AFEW-VA dataset.

Figure 9: Sparsity induced by the automatic rank selection at each layer of the network (ResNet-18 backbone). [Plot: percentage of zero weights per layer, layers 1 to 17 grouped into blocks 1 to 4, for regularization parameters 0.01 and 0.05.]

Table 6: Results on the CIFAR-10 dataset

| Network | # parameters | Accuracy (%) |
|---|---|---|
| MobileNet-v2 | 2.30M | 94 |
| Ours | 2.29M | 94 |

We compared our method with a MobileNet-v2 with a comparable number of parameters (Table 6). Unsurprisingly, both approaches yield similar results since, in the 2D case, the two network architectures are similar. It is worth noting that our method has marginally fewer parameters than MobileNet-v2 for the same number of channels, even though that network is already optimized for efficiency.

Results on gesture estimation

In this section, we report additional results on the Jester dataset. We compare a network that employs regular convolutional blocks to the same network that uses our proposed higher-order factorized convolutional blocks.

20BN-Jester v1 is a dataset¹ composed of 148,092 videos, each representing one of 27 hand gestures (e.g. swiping left, thumb up, etc.). Each video contains a person performing one of the gestures in front of a web camera. Out of the 148,092 videos, 118,562 are used for training and 14,787 for validation, on which we report the results.
For the 20BN-Jester dataset, we used a convolutional column composed of 4 convolutional blocks with kernel size 3 × 3 × 3, with respective numbers of input and output channels (3, 64), (64, 128), (128, 256) and (256, 256), followed by two fully-connected layers to reduce the dimensionality to 512 first, and finally to the number of classes. Between each convolution we added a batch-normalisation layer, a non-linearity (ELU) and 2 × 2 × 2 max-pooling. The full architecture is represented graphically in Figure 10 for clarity.

For our approach, we used the same setting but replaced the 3D convolutions with our proposed block and used, for each layer, $6 \times n_{\text{input-channels}}$ for the rank of the HO-CP convolution. The dataset was processed by batches of 32 sequences of RGB images, with a temporal resolution of 18 frames and a size of 84 × 84. The loss is optimized by mini-batches of 32 samples using stochastic gradient descent, with a starting learning rate of 0.001, decreased by a factor of 10 on plateau, a weight decay of $10^{-5}$ and a momentum of 0.9. All optimization was done on 2 NVIDIA 1080Ti GPUs.

¹ Dataset available at https://www.twentybn.com/datasets/jester/v1.

Figure 10: Architecture of our 3D convolutional network. We employed the same architecture for both our baseline and our approach, where the only difference is the 3D convolutional block used (3D Conv B): for the baseline a regular 3D conv, and for our method, our proposed HO-CP conv-S. Each convolution is followed by a batch-normalisation, a non-linearity (ELU) and a max pooling (over 2 × 2 × 2 non-overlapping regions). [Diagram: a stack of 3D Conv B, BatchNorm, ELU and 2 × 2 × 2 Pool stages, followed by Linear 12800 × 512, ELU, and Linear 512 × 27.]

Table 7: Results on the 20BN-Jester Dataset

| Network | # conv parameters | Top-1 accuracy (%) | Top-5 accuracy (%) |
|---|---|---|---|
| 3D-ConvNet | 2.9M | 83.2 | 97.0 |
| HO-CP ConvNet (Ours) | 1.2M | 83.8 | 97.4 |
| HO-CP ConvNet-S (Ours) | 1.2M | 85.4 | 98.6 |

Results for 3D convolutional networks. For the 3D case, we compare our Higher-Order CP convolution against a regular 3D convolution in a simple neural network architecture, in the same setting, so that the two can be compared directly. Our approach is more computationally efficient and achieves better performance, as shown in Table 7. In particular, the basic version without skip connection and with ReLU (HO-CP ConvNet) has 1.7 million fewer parameters in the convolutional layers than the regular 3D network, and yet converges to better Top-1 and Top-5 accuracy. The version with skip connection and PReLU (HO-CP ConvNet-S) beats all approaches.

Algorithm for our CP convolutions

We summarize our efficient higher-order factorized convolution in Algorithm 1.

Algorithm 1: Higher-order CP convolutions

Input
- Input activation tensor $\mathcal{X} \in \mathbb{R}^{C \times D_0 \times \cdots \times D_N}$
- CP kernel weight tensor $\mathcal{W} = [\![ U^{(T)}, U^{(C)}, U^{(K_0)}, \cdots, U^{(K_N)} ]\!]$
- Skip connection weight matrix $U^{(S)} \in \mathbb{R}^{T \times C}$

Output
- Efficient factorized N-D convolution $\mathcal{X} \star \mathcal{W}$

$\mathcal{H} \Leftarrow \mathcal{X} \times_0 U^{(C)}$
for $i := 1$ to $N - 2$ do
    $\mathcal{H} \Leftarrow \mathcal{H} \star_i U^{(K_i)}$ (1-D conv along the $i$-th mode)
    $\mathcal{H} \Leftarrow \text{PReLU}(\mathcal{H})$ or $\text{ReLU}(\mathcal{H})$ [optional]
    $\mathcal{H} \Leftarrow \text{Batch-Norm}(\mathcal{H})$ [optional]
end for
$\mathcal{H} \Leftarrow \mathcal{H} \times_1 U^{(T)}$
if skip-connection then
    return $\mathcal{H} + \mathcal{X} \times_0 U^{(S)}$
else
    return $\mathcal{H}$
end if
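The structure of Algorithm 1 can be illustrated with a small NumPy sketch. It is a simplified illustration under stated assumptions, not the authors' PyTorch/TensorLy implementation: the names `cp_conv` and `conv1d_along_axis` are hypothetical, factor matrices are stored with the rank as their last dimension, kernel sizes are assumed odd with zero "same" padding, the loop runs over every supplied spatial factor, and the optional non-linearity and batch-normalisation steps are omitted.

```python
import numpy as np


def conv1d_along_axis(h, kernels, axis):
    """Depthwise 1-D cross-correlation with zero 'same' padding.

    h: array of shape (R, D0, ..., D_{N-1}); kernels: (K, R), K odd.
    Rank channel r of h is filtered along `axis` by kernels[:, r].
    """
    k, n = kernels.shape[0], h.shape[axis]
    pad_width = [(0, 0)] * h.ndim
    pad_width[axis] = (k // 2, k // 2)
    padded = np.pad(h, pad_width)            # zero padding along `axis` only
    bshape = [-1] + [1] * (h.ndim - 1)       # broadcast over spatial dims
    out = np.zeros_like(h)
    for j in range(k):
        window = [slice(None)] * h.ndim
        window[axis] = slice(j, j + n)
        out += kernels[j].reshape(bshape) * padded[tuple(window)]
    return out


def cp_conv(x, u_c, u_t, spatial_factors, u_s=None):
    """Factorized N-D convolution from CP factors.

    x: (C, D0, ..., D_{N-1}); u_c: (C, R); u_t: (T, R);
    spatial_factors: list of (K_i, R) arrays, one per spatial mode;
    u_s: optional (T, C) skip-connection matrix.
    """
    h = np.tensordot(u_c.T, x, axes=1)       # 1x1 conv: C channels -> rank R
    for i, u_k in enumerate(spatial_factors):
        h = conv1d_along_axis(h, u_k, axis=i + 1)
    out = np.tensordot(u_t, h, axes=1)       # 1x1 conv: rank R -> T channels
    if u_s is not None:
        out = out + np.tensordot(u_s, x, axes=1)
    return out
```

Without the non-linearities, this sequence of cheap operations gives the same result as a dense convolution with the full kernel reconstructed from the factors. Since the loop runs over whichever factors are supplied, a video input is handled in this sketch by passing one additional factor for the temporal mode.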
