Convolutional Neural Network Architectures for Signals Supported on Graphs

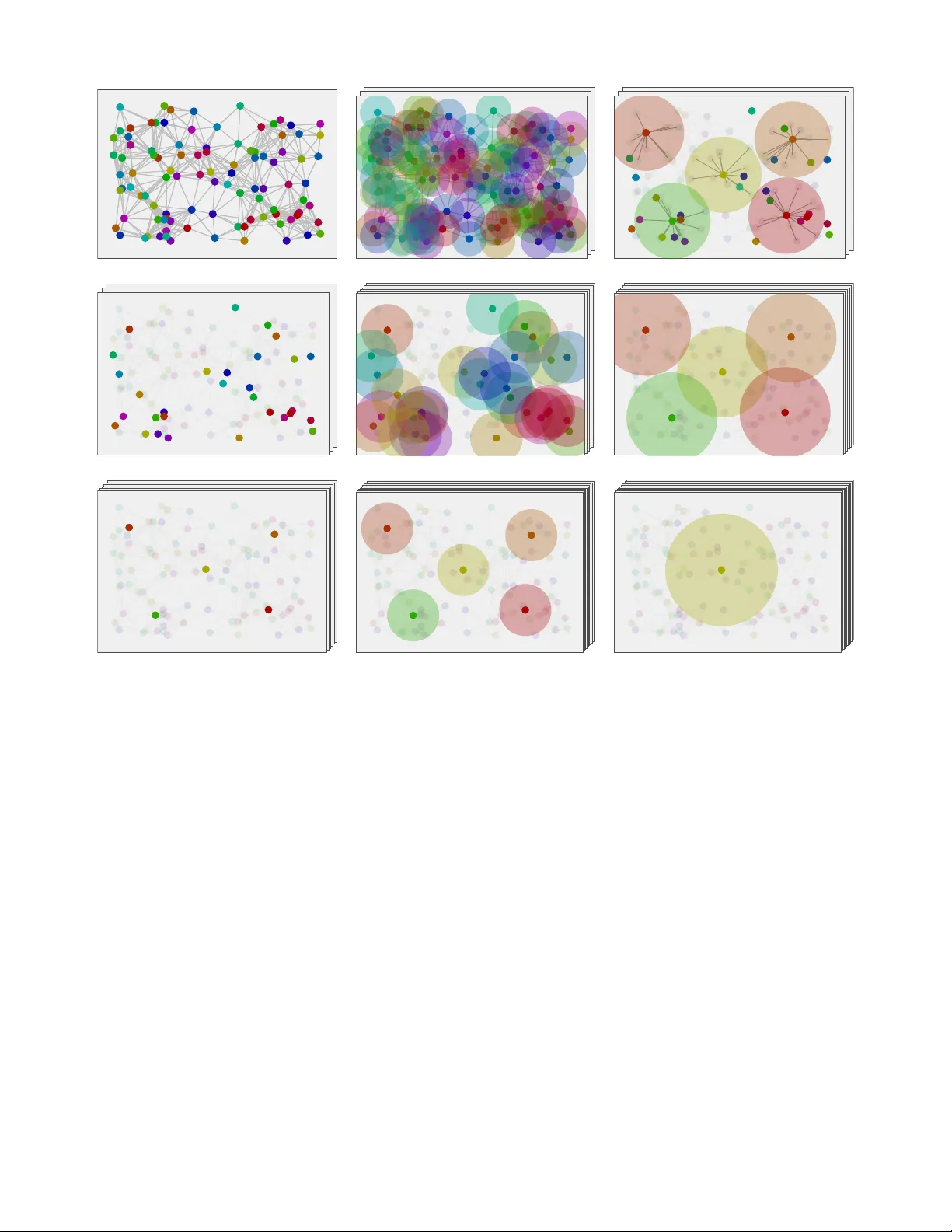

Two architectures that generalize convolutional neural networks (CNNs) for the processing of signals supported on graphs are introduced. We start with the selection graph neural network (GNN), which replaces linear time invariant filters with linear …
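
The abstract is cut off before it defines the graph filters, so what follows is only a minimal sketch built on one assumption. It assumes a linear shift-invariant graph filter takes the common polynomial form y = Σ_k h_k S^k x, where S is a graph shift operator such as an adjacency matrix. The names `shift`, `graph_filter`, `gnn_layer` and `cycle_shift` are hypothetical and do not come from the paper. The pooling or node-selection step of the selection GNN is not shown. On a directed cycle graph, S acts as a unit delay, so the graph filter reduces to an ordinary circular FIR convolution. This is one way to see how a graph filter can stand in for a linear time invariant filter.

```python
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def shift(S: Matrix, x: Vector) -> Vector:
    """Apply the graph shift operator S to a graph signal x (one matrix-vector product)."""
    n = len(x)
    return [sum(S[i][j] * x[j] for j in range(n)) for i in range(n)]


def graph_filter(S: Matrix, h: Vector, x: Vector) -> Vector:
    """Polynomial graph filter y = sum_k h[k] * S^k x (assumed form, see lead-in).

    S^k x is built by repeated shifts, so only matrix-vector products are needed.
    Tap h[k] weights information gathered from k-hop neighborhoods.
    """
    y = [0.0] * len(x)
    z = list(x)  # z holds S^k x, starting with k = 0
    for k, hk in enumerate(h):
        if k > 0:
            z = shift(S, z)
        y = [yi + hk * zi for yi, zi in zip(y, z)]
    return y


def gnn_layer(S: Matrix, h: Vector, x: Vector) -> Vector:
    """Hypothetical single-feature layer: graph filter followed by a pointwise ReLU."""
    return [max(0.0, v) for v in graph_filter(S, h, x)]


def cycle_shift(n: int) -> Matrix:
    """Directed cycle graph: (S x)[i] = x[i - 1], i.e. the classical unit delay."""
    return [[1.0 if j == (i - 1) % n else 0.0 for j in range(n)] for i in range(n)]


if __name__ == "__main__":
    S = cycle_shift(4)
    x = [1.0, 0.0, 0.0, 0.0]  # impulse at node 0
    h = [0.5, 0.25, -1.0]     # filter taps h_0, h_1, h_2
    print(graph_filter(S, h, x))  # [0.5, 0.25, -1.0, 0.0]
    print(gnn_layer(S, h, x))     # [0.5, 0.25, 0.0, 0.0]
```

On the cycle, the impulse response equals the taps, as it would for a time-domain FIR filter. Every term is a power of S, so the filter commutes with S: shifting the input shifts the output. This is the graph analogue of time invariance.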

Authors: Fernando Gama, Antonio G. Marques