Convolutional Neural Networks and Language Embeddings for End-to-End Dialect Recognition

Dialect identification (DID) is a special case of general language identification (LID), but a more challenging problem due to the linguistic similarity between dialects. In this paper, we propose an end-to-end DID system and a Siamese neural network…

Authors: Suwon Shon, Ahmed Ali, James Glass

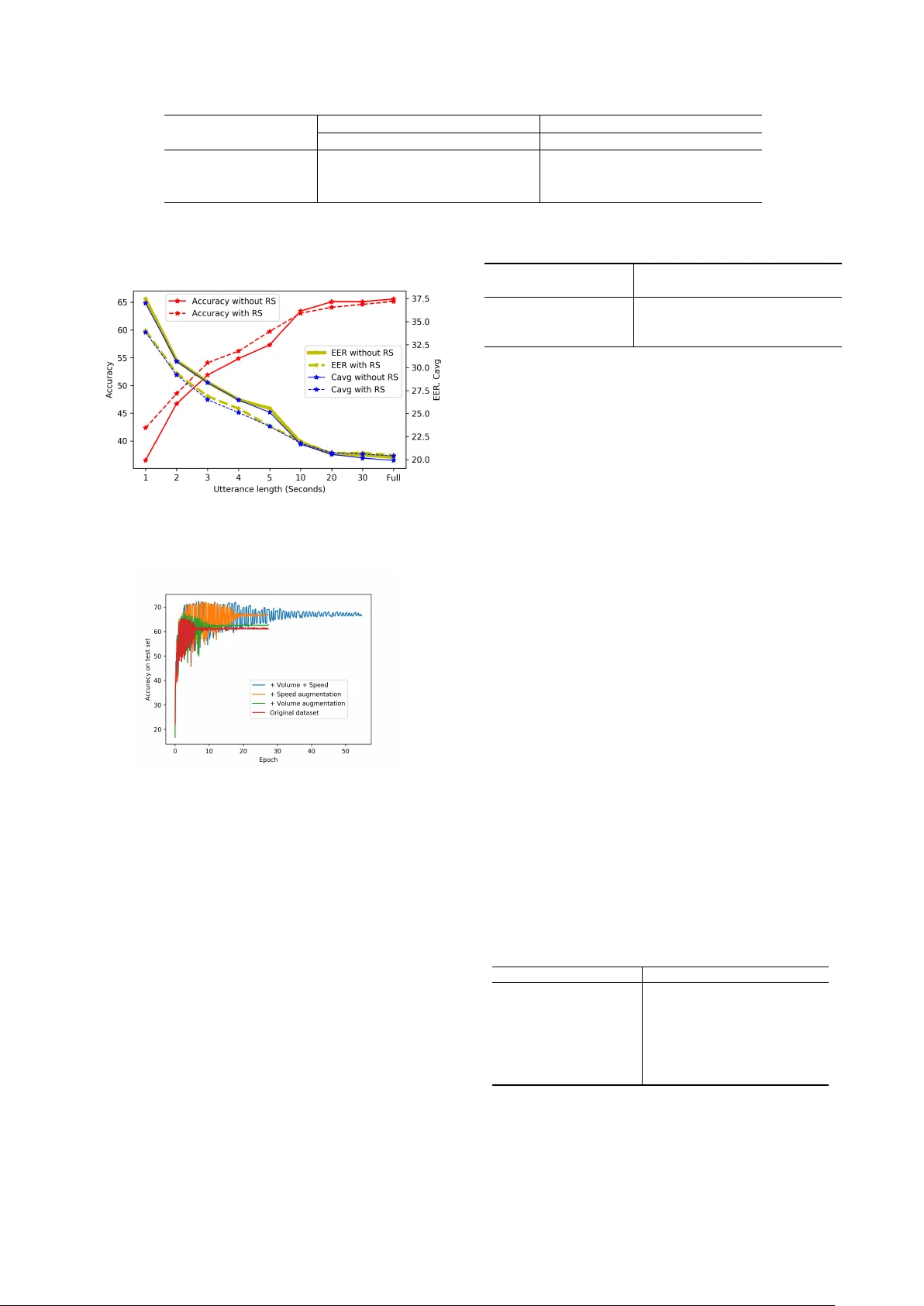

Con volutional Neural Netw orks and Language Embeddings f or End-to-End Dialect Recognition Suwon Shon 1 , Ahmed Ali 2 , J ames Glass 1 Massachusetts Institute of T echnology , Cambridge, MA, USA 1 Qatar Computing Research Institute, HBKU, Doha, Qatar 2 { swshon,glass } @mit.edu amali@hbku.edu.qa Abstract Dialect identification (DID) is a special case of general lan- guage identification (LID), b ut a more challenging problem due to the linguistic similarity between dialects. In this paper , we propose an end-to-end DID system and a Siamese neural net- work to extract language embeddings. W e use both acoustic and linguistic features for the DID task on the Arabic dialectal speech dataset: Multi-Genre Broadcast 3 (MGB-3). The end- to-end DID system was trained using three kinds of acoustic features: Mel-Frequency Cepstral Coefficients (MFCCs), log Mel-scale Filter Bank energies (FB ANK) and spectrogram en- ergies. W e also in vestigated a dataset augmentation approach to achieve rob ust performance with limited data resources. Our linguistic feature research focused on learning similarities and dissimilarities between dialects using the Siamese network, so that we can reduce feature dimensionality as well as impro ve DID performance. The best system using a single feature set achiev es 73% accuracy , while a fusion system using multiple features yields 78% on the MGB-3 dialect test set consisting of 5 dialects. The experimental results indicate that FBANK fea- tures achiev e slightly better results than MFCCs. Dataset aug- mentation via speed perturbation appears to add significant ro- bustness to the system. Although the Siamese network with lan- guage embeddings did not achiev e as good a result as the end- to-end DID system, the two approaches had good synergy when combined together in a fused system. 1. Introduction A significant step forward in speaker and language identifica- tion (LID) was obtained by combining i-vectors and Deep Neu- ral Netw orks (DNNs) [1, 2, 3]. The task of dialect identification (DID) is relatively unexplored compared to speaker and lan- guage recognition. One of the main reasons is due to a lack of common datasets, while another reason is that DID is often regarded as a special case of LID, so researchers tend to con- centrate on the more general problems of language and speaker recognition. Howe ver , DID can be an extremely challenging task since the similarities among language dialects tends to be much higher than those used in the more general LID task. Arabic is an appropriate language for which to explore DID, due to its uniqueness and widespread use. While 22 countries in the Arab world use Modern Standard Arabic (MSA) as their official language, citizens use their own local dialect in their ev eryday life. Arabic dialects are historically related and share Arabic characters, howe ver , the y are not mutually comprehensi- ble. Arabic DID therefore poses different challenges compared to other language dialects containing comprehensible vernacu- lar . Arabic dialects are challenging to distinguish because they belong to the same language family . Arabic dialects typically share a common phonetic in ventory and other linguistic feature like characters, so words and phonemes can be utilized by auto- matic speech recognition (ASR) contrary to general LID. The natural language processing (NLP) community has tended to partition the Arabic language into 5 broad categories: Egyptian (EGY), Lev antine (LEV), Gulf (GLF), North African (NOR), and MSA. The Multi Genre Broadcast(MGB) chal- lenge 1 committee established an Arabic dialect dataset in 2016, and holds a DID challenge series that attracts interest in Arabic DID from researchers in both the NLP and speech communi- ties. The MGB-3 dataset contains 5 dialects with 63.6 hours of training data and 10.1 hours of test data. A DID benchmark of the task w as attained using the i-v ector frame work using bottle- neck features [4]. Using linguistic features such as words and characters results in similar performance to that obtained via acoustic features with a Con volutional Neural Network (CNN)- based backend [5, 6, 7]. Since the linguistic feature space is different from the acoustic feature space, a fusion of the results from both feature representations has been shown to be ben- eficial [6, 5, 8]. A recent study achie ves 70% [9] for a single system and 80% [10] for a fused system. Giv en that there are only 5 dialects, these accuracies are relativ ely low , affirming the difficulty of this DID task. Recently , many Deep Neural Network (DNN)-based end- to-end and speaker embedding approaches have achie ved im- pressiv e results for text independent speaker recognition and LID [11, 12, 13, 14]. This research indicates that these meth- ods perform close to or slightly better than traditional i-vectors, though it has focused on improving upon i-vectors, so there is room for further detailed analyses of various task conditions. In this work, we propose two different approaches for DID using both acoustic and linguistic features. For acoustic fea- tures, we explore an end-to-end DID model based on a CNN with global average pooling. W e examine three representations based on Mel-Frequency Cepstral Coefficients (MFCCs), log Mel-scale filter banks energies (FB ANK) and spectrogram en- ergies. W e employ data augmentation via speech and volume perturbation to analyze the impact of dataset size. Finally , we analyze the ef fectiveness of training with random utterance seg- mentation, as a strategy to cope with short input utterances. For language embeddings, we adopt a Siamese neural net- work [15] model using a cosine similarity metric to learn a di- alect embedding space based on text-based linguistic features. The network is trained to learn similarities between the same dialects and dissimilarities between different dialects. Linguis- tic feature are extracted from an automatic speech recognizer 1 www .mgb-challenge.org Figure 1: Illustration of acoustic and linguistic feature creation. (ASR), and based on words, characters and phonemes. Siamese neural networks are usually applied on verification tasks for end-to-end systems, howe ver a previous study showed that this approach could be also applied on an identification task to make the original features more robust [9]. W e applied this approach on linguistic features to extract language embeddings to im- prov e DID performance while reducing the enormous feature dimensionality . Python and T ensorflow code for the end-to-end system 2 and Siamese network language embeddings 3 is avail- able, to allow others to reproduce our results, and apply these ideas to similar LID and speaker verification tasks. 2. Dialect Identification Baseline 2.1. Acoustic features For LID and DID, the i-v ector is re garded as the state-of-the-art for general tasks, while recent studies show combinations with DNN-based approaches produce competitiv e performance [2]. Apart from the basic i-vector approach, bottleneck (BN) fea- tures extracted from an ASR acoustic model were successfully applied to LID [1, 16, 2, 5, 6]. Although the acoustic model is usually trained on single language, it performs reasonably well on LID tasks [16]. The mismatch does appear to matter on speaker recognition tasks howe ver [17, 18]. A stacked BN feature can be extracted from a second DNN that has a BN layer , and its input is the output of the first BN layer with con- text expansion [1, 16]. A Gaussian Mixture Model - Universal Background Model (GMM-UBM) and a total v ariability matrix are trained in an unsupervised manner with BN features from a large dataset. Although the main purpose of LID is to obtain phonetic characteristics of each languages while suppressing in- dividual speaker’ s voice characteristics, the training scheme is exactly the same as training a GMM-UBM and total variability matrix for speaker recognition. The only difference is that the subsequent projection of the i-vector is based on measuring the distances with other languages as opposed to other speakers. 2.2. Linguistic features While the inherent similarity between dialects makes DID a more difficult task than LID, DID can take advantage of linguis- tic features because dialects tend to share a common phonetic in ventory . W ord and character sequences can be e xtracted using 2 https://github .com/swshon/dialectID e2e 3 https://github .com/swshon/dialectID siam a state-of-the-art ASR system, such as the Time delayed Neu- ral Network (TDNN) [19] or Bidirectional Long Short-T erm Memory Recurrent Neural Network (BLSTM-RNN) acous- tic model with RNN-based language model rescoring. Ex- tracted text sequence can then be con verted into a V ector Space Model(VSM) [6]. The VSM represents each utterance as a fixed length, sequence lev el, high-dimensional sparse vector ~ u : ~ u = ( f ( u, x 1 ) , f ( u, x 2 ) , ..., f ( u, x D )) (1) where f ( u, x i ) is the number of times word x i occurs in ut- terance u , and D is the dictionary size. The v ector ~ u can also consist of an n -gram histogram. Figure 1 illustrates the linguis- tic feature extraction concept based on acoustic features. For the MGB-3 Arabic dataset, the tri-gram word dictio- nary size D is 466k, and most of f ( u, x i ) is 0. Due to this high dimensionality , the kernel trick method used with Support V ector Machine’ s (SVMs) is a suitable approach for classifi- cation. Another linguistic feature, the character and phoneme feature, can also be extracted by an ASR system or stand- alone phonetic recognizer . Although both ASR and phonetic recognizers start from an acoustic input, the linguistic feature space consists of higher lev el features that contain semantic information of variable duration speech segments. While an ASR system, and an acoustic feature-based speaker recogni- tion or LID/DID system are vulnerable to domain mismatched conditions [20, 21, 22, 23], the af fected results in the higher - lev el linguistic spaces will be dif ferent. Thus, linguistic feature- based approaches could complement acoustic feature-based ap- proaches to produce a superior fusion result. 3. End-to-End DID with Acoustic F eatures Recently , end-to-end approaches which do not use i-v ector tech- niques sho wed impressiv e results on both LID and speaker recognition [11, 12, 13, 14]. They did not start from raw wav e- forms, b ut from acoustic features ranging from MFCCs to spec- trograms. The deep learning models consisted of CNNs, RNNs and Fully Connected (FC) DNN layers. Recent studies [14, 13] report combinations of CNN and FC with a global pooling layer obtains the best result for a speaker representation from text- independent and variable length speech inputs. The global pool- ing layer has a simple function such as av eraging sequential in- puts, but it effecti vely con vert frame le vel representations to ut- terance le vel representations, which is adv antageous for speaker recognition and LID. The size of the final softmax layer is de- termined by the task specific speaker or language labels. For identification tasks, the Softmax output can be used directly as a score for each class. For verification tasks, usually the acti- vation of the last hidden layer is extracted as a speaker or lan- guage representation vector , and is used to calculate a distance between enrollment and test data. Our end-to-end system is based on [14, 13], but instead of a VGG [24] or Time Delayed Neural Network, we used four 1-dimensional CNN (1d-CNN) layers (40 × 5 - 500 × 7 - 500 × 1 - 500 × 1 filter sizes with 1-2-1-1 strides and the number of fil- ters is 500-500-500-3000) and two FC layers (1500-600) that are connected with a Global av erage pooling layer which av er- ages the CNN outputs to produce a fixed output size of 3000 × 1. After global average pooling, the fix ed length output is fed into two FC layers and a Softmax layer . The structure of end-to-end dialect identification system is represented in Figure 2. The four CNN layers span a total of 11 frames, with strides of 2 frames ov er the input. For Arabic DID, the Softmax layer size is 5. W e Figure 2: End-to-End dialect identification system structure. examined the FB ANK, MFCC and spectrogram as acoustic fea- tures. Filter size on first CNN layer changes to 200 × 5 when we use Spectrogram. The Softmax output was used to compute similarity scores of each dialect for identification. 3.1. Internal Dataset augmentation Neural network-based deep learning models are but the most recent example of the age-old ASR adage that “there’ s no data like more data. ” These models are able to absorb large quan- tities of training data, and generally do better, the more data they are exposed to. Gi ven a fixed training data size, researchers hav e found that augmenting the corpus size by artificial means can also be ef fectiv e. In this paper, we explored internal dataset augmentation methods. One augmentation approach is segmen- tation of the training dataset into small chunks [13, 14, 11]. W e did not segment the dataset in advance, but did random seg- mentations on mini-batches. The random length was selected to hav e a uniform distribution between 2 and 10 seconds with 1 second intervals including original length. From previous stud- ies, it was found that random segmentation yields better per- formance on short utterances [13, 14, 11]. Howe ver , the in- vestigations did not show the performance before applying this method, so it is dif ficult to judge ho w much gains were achie ved from this approach. W e analyzed performances before and after applying random segmentation on v arious test set lengths. The other technique for augmentation is perturbation of the original dataset in term of speed [25] which is used ASR. The idea is to perturb the audio to play slightly faster or slo wer . W e in vestigated the ef fectiv eness of speed and volume perturbation on the DID task using speed factors of 0.9 and 1.1 and volume factors of 0.25 and 2.0. 4. DID Linguistic F eature Embeddings In previous study [5], they explored CNN back-end for the lin- guistic feature using cross-entropy objecti ve function with soft- max output of the network. For identification task, it is natural to consider that the cross-entrop y objectiv e function between class labels and output of the network. Howe ver , our recent study [9] shows that using the Euclidean distance loss function between the label and the cosine distance of the NN output pair which is usually adopted on a binary classification task is still useful for identification task by learning similarity and dissimilarity Figure 3: Siamese neural network structure. between classes. Thus, we adopted a Siamese neural network approach to extract language embeddings from linguistic fea- tures. The Siamese neural network has two parallel neural net- works, G W , which shares the same set of weights and biases W as shown in Figure 3. While the network has a symmetric structure, the input pair to the network consists of language rep- resentativ e vectors u d and linguistic feature ~ u , where d is lan- guage/dialect index and i is utterance index. The language rep- resentativ e v ector can be represented as u d = (1 /n d ) P n d i =1 ~ u d i , where n d is the number of utterances for each dialect d and ~ u d i is a segment lev el feature of dialect d . Thus, the pair of network inputs is the combination of the language representati ve vectors and all linguistic features. Let Y be the label for the pair , where Y = 1 if the pair belongs to the same language/dialect, and Y = − 1 if a different language/dialect. T o optimize the net- work, we use a Euclidean distance loss function with stochastic gradient descent (SGD) between the label and the cosine simi- larity of the pair , D W , where L ( u d , ~ u, Y ) = || Y − D W ( u d , ~ u ) || 2 2 (2) FC layers hav e 1500-600-200 neurons. All layers use Rectified Linear Unit (ReLU) activ ations. From the network G W , lan- guage embeddings can be extracted from both language repre- sentativ e vectors and linguistic features by obtaining activ ations from the last hidden layer (200 dimensions). 5. Dialectal Speech Dataset The MGB-3 dataset partitions are shown in T able 1. Each par- tition consists of fiv es Arabic dialects : EGY , LEV , GLF , NOR, MSA. Detailed corpus statistics are found in [4]. Although the dev elopment set is relativ ely small compared to the training set, it matches the test set channel conditions, and thus provides valuable information about the test domain. Dataset T raining Dev elopment T est Utterances 13,825 1,524 1,492 Size 53.6 hrs 10 hrs 10.1 hrs Channel (recording) Carried out at 16kHz Downloaded directly from a high-quality video server T able 1: MGB-3 Dialectal Arabic Speech Dataset Properties. Feature Maximum Con verged Accuracy(%) EER(%) C avg Accuracy(%) EER(%) C avg MFCC 65.55 20.24 19.92 61.33 21.95 21.53 FB ANK 64.81 20.22 19.91 61.26 22.12 21.79 Spectrogram 57.57 24.48 24.49 54.22 25.90 25.09 T able 2: Performance on end-to-end dialect identification system by features Figure 4: End-to-end DID accuracy by epoch. 6. DID Experiments 6.1. Implementation and T raining For i-vector implementation, the BN features were extracted from an ASR system trained for MSA speech recognition [26] with a similar configuration as [1]. A DNN that extracted BN features was trained on 60 hours of manually transcribed Al- Jazeera MSA ne ws recordings [27]. Then, the GMM-UBM with 2,048 mixtures and a 400 dimensional i-vector extractor was trained based on the BN features. The detailed configuration is described in [6]. After extracting i-vectors, a whitening transfor- mation and length normalization are applied. Similarity scores were calculated by Cosine Similarity (CS) and Gaussian Back- end (GB). Linear Discriminant Analysis (LD A) was applied after extracting i-vectors with language labels. On this e xper- iment, LD A reduces the 400 dimensional i-v ector to 4 dimen- sions. For phoneme features, we used four different phoneme rec- ognizers: Czech (CZ), Hungarian (HU) and Russian (R U) us- ing narrow-band models, and English (EN) using a broadband model [28]. For words and character features, word sequences are extracted using an Arabic ASR system built as part of the MGB-2 challenge on Arabic broadcast speech [29]. From the word sequence, character sequences can also be obtained by splitting words into characters. Finally , phoneme, character and word feature can be represented in VSM by using each se- quence. W e use unigrams for word features, and trigrams for character and phoneme feature. The word and character feature dimensions are 41,657 and 13,285, respectively . Phoneme fea- ture dimensions are 47,466 (CZ), 50,320 (HU), 51,102 (RU) and 33,660 (EN). An SVM was used to measure similarity be- tween the test utterance and 5 dialects [6]. T o train the end-to-end dialect identification system, we Figure 5: End-to-end DID mini-batch training loss by epoch. used 3 features: MFCC, FB ANK and spectrogram. The struc- ture of the DNN is shown in Figure 2 and described fully in Section 3. The SGD learning rate was 0.001 with decay in every 50000 mini-batches with a factor of 0.98. ReLUs were used for activ ation nonlinearities. W e used both the training and devel- opment dataset for training the DNN and excluded 10% of de- velopment dataset of each dialect for a v alidation set. For acous- tic input, the FFT window length was 400 samples with a 160 sample hop which is equivalent to 25ms and 10ms respectively for 16kHz audio. A total of 40 coef ficients were extracted for MFCCs and FBANKs, and 200 for spectrograms. All features were normalized to hav e zero-mean and unit v ariance. T o train the Siamese network, we made utterance pairs from the training and dev elopment sets. Since the number of true (”same dialect”) pairs is naturally lower than false (”dif ferent dialect”) pairs, we adjust the training batch to hav e the same 50%:50% ratio of true and false pairs. Since the test set domain is mismatched with the training set, the dev elopment dataset is very important because it contains channel information of the target domain. Thus, we expose development set pairs more than training set pairs by a factor of 5. All scores were normalized by Z-norm for each dialect, with fusion was done by logistic re gression. Performance was measured in accuracy , Equal Error Rate (EER) and minimum decision cost function C avg . Accuracy was measured by taking the dialect showing the maximum score between a test utterance and the 5 dialects. Minimum C avg was computed from hard de- cision errors and a fixed set of costs and priors from [30] 6.2. Experimental Result and Discussion For acoustic features, Figures 4 and 5 sho w the test set accurac y and mini-batch loss on training set for corresponding epochs. From the figures, it appears that the test set accuracy is not Augmentation method (feature = MFCC) Maximum Con verged Accuracy(%) EER(%) C avg Accuracy(%) EER(%) C avg V olume 67.49 20.37 20.00 62.47 21.55 21.08 Speed 70.51 17.54 17.39 65.42 19.87 19.19 V olume and speed 70.91 17.79 17.93 67.02 19.37 19.01 T able 3: Performance on end-to-end DID system using augmented dataset. Figure 6: Performance comparison with and without Random Segmentation (RS) on training dataset. Figure 7: DID accuracy by epoch using augmented data. exactly correlated with loss and all three features show better performance before the network con ver ged. W e measured per- formance for tw o conditions denoted as ”Maximum” and ”Con- ver ged” in T able 2. The maximum condition means the network achiev es the best accuracy on the validation set. The con ver ged condition means the av erage loss of 100 mini-batches goes un- der 0.00001. From the table, the difference between maximum and conv erged is 5-10% in all scenarios, so a validation set is essential to judge the performance of the network and should always be monitored to stop the training. MFCC and FBANK features achie ve similar DID perfor- mance while the spectrogram is worse. Theoretically , spectro- grams have more information than MFCCs or FBANKs, but it seems hard to optimize the network using the limited dataset. T o obtain better performance on spectrograms, it seems to re- quire using a pre-trained network on an external large dataset like VGG as in [13]. Figure 6 shows performance gains from random segmen- tation. Random segmentation shows most gain when the test Feature (on augmented dataset) Accuracy(%) EER(%) C avg MFCC 70.91 17.79 17.93 FB ANK 71.92 18.01 17.63 Spectrogram 68.83 18.70 18.69 T able 4: Performance on end-to-end DID system using aug- mented dataset. utterance is very short such as under 10 seconds. F or over 10 seconds or original length, it still shows performance improve- ment because random segmentation provides div ersity giv en a limited training dataset. Howe ver the impact is not as great as on short utterances. Figure 7 and T able 3 show the performance on data aug- mented by speed and volume perturbation. The accurac y fluc- tuated similarly to that observed on the original data as in Fig- ure 4, but all numbers are higher . V olume perturbation sho ws slightly better results than using the original dataset in both the Maximum and Conv erged condition. Howev er, speed pertur- bation shows impressi ve gains on performance, particularly , a 15% impro vement on EER. Using both v olume and speed seem to have advantages when the network has con verged, but it need more epochs to con verge because of the larger data size com- pared to volume or speed perturbation. T able 4 shows a performance comparison on different fea- tures when using volume and speed data augmentation. The per- formance gains from dataset augmentation are 8%, 11% and 20% in accuracy for MFCC, FBANK and spectrogram respec- tiv ely . Spectrograms still attain the worst performance, as on the original dataset (table 2). Ho wev er it is some what surpris- ing that the g ain from increasing the dataset size is much higher than for MFCCs which achieve a relatively small increase. T a- ble 5 shows performance comparisons between i-vectors and the end-to-end approach. A combination of i-vectors and LD A shows better performance on EER and C avg than the original i- vector . Howe ver , the end-to-end system in T able 2 (which uses the same original dataset as the i-vector system) outperforms the i-vector -LD A for all features. All end-to-end systems in T able 5 made use of random seg- System Accuracy(%) EER(%) C avg i-vector (CDS) 60.32 26.98 26.35 i-vector (GB) 58.58 22.50 22.56 i-vector -LD A (CDS) 62.60 21.05 20.12 i-vector -LD A (GB) 63.94 19.45 19.17 End-to-End (MFCC) 71.05 18.01 17.97 End-to-End (FBANK) 73.39 16.30 15.96 End-to-End (Spectrogram) 70.17 17.64 17.27 T able 5: Acoustic feature performance measurement on Arabic dialect MGB-3 dataset. All end-to-end systems were trained us- ing random se gmentation and volume/speed data augmentation. Phoneme Recognizer System Accuracy(%) EER(%) C avg Hungarian Baseline 48.86 29.94 29.16 Embedding 54.49 28.69 27.77 Russian Baseline 45.04 31.30 30.65 Embedding 44.24 38.09 36.69 English Baseline 33.78 39.46 39.04 Embedding 48.86 42.02 36.76 Czech Baseline 45.64 31.85 31.16 Embedding 53.55 29.26 28.67 T able 6: Phoneme feature based on 4 different phoneme recog- nizer performance measurement. Feature System Accurac y(%) EER(%) C avg Character Baseline 51.34 30.03 30.17 Embedding 58.18 25.48 25.68 W ord Baseline 50.00 30.73 30.41 Embedding 58.51 24.87 24.99 T able 7: Character and word feature performance measurement. mentation and volume/speed data augmentation during training. Since the internal dataset augmentation approach giv es diver - sity on limited datasets, the end-to-end system could be signifi- cantly improved without an y additional dataset. It is interesting that the most refined feature, MFCC, shows less performance improv ement after dataset augmentation was applied (8% in ac- curacy) than less refined, more raw features, i.e. FB ANK and spectrogram show comparativ ely higher improvement by 14% and 22%. Finally the spectrogram feature performs slightly bet- ter than MFCC in EER and C avg . It implies that if we ha ve large dataset, we can use raw signals as input features. At the same time, howe ver , it is difficult to determine how much training data is required for training raw features. T ables 6 and 7 show performance ev aluations on language embeddings based on phoneme, character and word features. These features are also compared with the baseline system. Lan- guage embeddings sho w an a verage 17% improvement on all metrics. Language embeddings based on word features achieve the best performance among the three features. Another bene- fit is that the linguistic feature dimension can be significantly reduced. For example, the 41,657 dimension character feature was reduced to 200 and is only 0.5% of the original dimension- ality , though the EER improv es about 15%. T able 8 shows the performance of fusion systems with var - ious combinations. W e use Hungarian phonemes for phonetic- based language embeddings which attains the best performance among phoneme features. The end-to-end system used aug- mented data as shown in T able 5. From the result, it is clear that fusion between acoustic and language embeddings sho ws better efficienc y than fusion between end-to-end systems such as MFCC and FBANK, although the performance on language embeddings itself shows comparably lower performance than acoustic end-to-end systems. Finally , all the scores from each feature were fused, with the fusion system using FBANK fea- tures achieving better performance than MFCC or spectrogram- based fusion systems on all measurements. Also, we find that the spectrogram system performs slightly better than the MFCC counterpart. Although fusion of multiple systems is somewhat not fea- sible for a practical situation, we fused 5 dif ferent systems (i- vector with LD A and GB, FBANK based end-to-end system, language embeddings) and verified the best performance com- Fusion system ( Bold : end-to-end system italic : language embedding) Accuracy(%) EER(%) C avg FBANK + wor d 76.94 13.66 13.57 FBANK + c har 76.61 13.89 13.87 FBANK + phoneme 75.13 14.95 14.79 FBANK + MFCC 74.40 15.63 15.50 MFCC + word + char + phoneme 77.48 14.02 14.00 FBANK + wor d + char + phoneme 78.15 12.77 12.51 Spectrogram + wor d + char + phoneme 77.88 13.34 13.24 i-vector + FB ANK + word + char + phoneme 81.36 11.03 10.90 T able 8: Performance measurement of score fusion systems with end-to-end system and language embeddings. Systems Accuracy(%) Single System Fusion System Khurana et al. [5] 67 73 Shon et al. [9] 69.97 75.00 Najafian et al. [7] 59.72 73.27 Bulut et al. [10] - 79.76 Our approach 73.39 81.36 T able 9: Performance comparison to previous works on same MGB-3 dataset pared to pre vious studies. T able 9 sho ws summary performance of previous studies on same MGB-3 Arabic dialect dataset. Both single and fusion system show outstanding performance than others. 7. Conclusion In this paper , we describe an end-to-end dialect identification system using acoustic features and language embeddings based on te xt-based linguistic features. W e in vestigated sev eral acous- tic and linguistic features along with various dataset augmen- tation techniques using a limited dataset resource. W e verified that the end-to-end system based on acoustic feature outper- forms i-vectors and also that language embeddings deriv ed from a Siamese neural network boost the performance by learning the similarities between utterances with the same dialect and dissimilarities between different dialects. Experiments on the MGB-3 Dialectical Arabic corpus sho w that the best single sys- tem achieves 73% accuracy , and the best fusion system shows 78% accuracy . From the experiments, spectrograms could be utilized as acoustic feature when the training dataset is large enough. W e also observe that the end-to-end dialect identifica- tion system can be significantly improv ed using random seg- mentation and volume/speed perturbation to increase the diver - sity and amount of training data. The end-to-end dialect identifi- cation system has a simplified topology and training methodol- ogy compared to a bottleneck feature based i-vector extraction scheme. Finally , using a Siamese network to learn language em- beddings reduces the linguistic-feature dimensionality signifi- cantly , and provide syner gistic fusion with acoustic features. 8. References [1] Patrick Cardinal, Najim Dehak, Y u Zhang, and James Glass, “Speaker adaptation using the i-vector technique for bottleneck features, ” in Interspeech , 2015, pp. 2867– 2871. [2] Fred Richardson, Douglas Reynolds, and Najim Dehak, “A Unified Deep Neural Network for Speaker and Lan- guage Recognition, ” in Interspeech , 2015, pp. 1146–1150. [3] Najim Dehak, Pedro a. T orres-Carrasquillo, Douglas Reynolds, and Reda Dehak, “Language recognition via Ivectors and dimensionality reduction, ” in Interspeech , 2011, number August, pp. 857–860. [4] Ahmed Ali, Stephan V ogel, and Stev e Renals, “Speech Recognition Challenge in the W ild: ARABIC MGB-3, ” in IEEE W orkshop on Automatic Speech Recognition and Understanding (ASR U) , 2017, pp. 316–322. [5] Sameer Khurana, Maryam Najafian, Ahmed Ali, T uka Al Hanai, Y onatan Belinkov , and James Glass, “QMDIS : QCRI-MIT Advanced Dialect Identification System, ” in Interspeech , 2017, pp. 2591–2595. [6] Ahmed Ali, Najim Dehak, Patrick Cardinal, Sameer Khu- rana, Sree Harsha Y ella, James Glass, Peter Bell, and Stev e Renals, “ Automatic dialect detection in Arabic broadcast speech, ” in Interspeech , 2016, vol. 08-12-Sept, pp. 2934–2938. [7] Maryam Najafian, Sameer Khurana, Suwon Shon, Ahmed Ali, and James Glass, “Exploiting Con volutional Neural Networks for Phonotactic Based Dialect Identification , ” in ICASSP , 2018. [8] Maryam Najafian, Saeid Safa vi, Phil W eber, and Martin Russell, “Identification of British English regional accents using fusion of i-vector and multi-accent phonotactic sys- tems, ” in Pr oceedings of Odysse y - The Speaker and Lan- guage Reco gnition W orkshop , 2016, pp. 132–139. [9] Suwon Shon, Ahmed Ali, and James Glass, “MIT -QCRI Arabic Dialect Identification System for the 2017 Multi- Genre Broadcast Challenge, ” in IEEE W orkshop on Au- tomatic Speech Recognition and Understanding (ASRU) , 2017, pp. 374–380. [10] Ahmet E. Bulut, Qian Zhang, Chunlei Zhang, Fahimeh Bahmaninezhad, and John H. L. Hansen, “UTD-CRSS Submission for MGB-3 Arabic Dialect Identification: Front-end and Back-end Advancements on Broadcast Speech, ” in IEEE W orkshop on Automatic Speech Recog- nition and Understanding (ASR U) , 2017. [11] Ma Jin, Y an Song, Ian McLoughlin, W u Guo, and Li Rong Dai, “End-to-end language identification using high-order utterance representation with bilinear pooling, ” in Inter- speech , 2017, pp. 2571–2575. [12] T rung Ngo Trong, V ille Hautamaki, and Kong Aik Lee, “Deep Language : a comprehensive deep learning ap- proach to end-to-end language recognition, ” in Pr oceed- ings of Odysse y - The Speaker and Language Recognition W orkshop , 2016, pp. 109–116. [13] Arsha Nagraniy , Joon Son Chung, and Andrew Zisserman, “V oxCeleb: A large-scale speaker identification dataset, ” in Interspeech , 2017, pp. 2616–2620. [14] David Snyder , Peg ah Ghahremani, Daniel Pov ey , Daniel Garcia-Romero, and Y ishay Carmiel, “Deep Neural Net- work Embeddings for T ext-Independent Speaker V erifica- tion, ” in Interspeech , 2017, pp. 165–170. [15] Jane Bromle y , James W . Bentz, L ´ eon Bottou, Isabelle Guyon, Y ann Lecun, Cliff Moore, Eduard S ¨ ackinger , and Roopak Shah, “Signature V erification Using a “Siamese” T ime Delay Neural Network, ” International Journal of P attern Recognition and Artificial Intelligence , vol. 07, no. 04, pp. 669–688, 1993. [16] Pa vel Matejka, Le Zhang, T im Ng, Sri Harish Mallidi, On- drej Glembek, Jeff Ma, and Bing Zhang, “Neural Netw ork Bottleneck Features for Language Identification, ” 2014. [17] Suwon Shon, Seongkyu Mun, and Hanseok K o, “Re- cursiv e Whitening Transformation for Speaker Recogni- tion on Language Mismatched Condition, ” in Inter speech , 2017, pp. 2869–2873. [18] Suwon Shon and Hanseok K o, “KU-ISPL Speaker Recog- nition Systems under Language mismatch condition for NIST 2016 Speaker Recognition Evaluation, ” ArXiv e- prints arXiv:1702.00956 , 2017. [19] V ijayaditya Peddinti, Daniel Pov ey , and Sanjeev Khudan- pur , “A time delay neural network architecture for effi- cient modeling of long temporal contexts, ” in Interspeec h , 2015, pp. 2440–2444. [20] Hagai Aronowitz, “Inter dataset variability compensa- tion for speaker recognition, ” in IEEE ICASSP , 2014, pp. 4002–4006. [21] Daniel Garcia-Romero, Xiaohui Zhang, Alan McCree, and Daniel Pove y , “Improving Speaker Recognition Per- formance in the Domain Adaptation Challenge Using Deep Neural Netw orks, ” in IEEE Spok en Languag e T ech- nology W orkshop (SLT) , 2014, pp. 378–383. [22] Stephen Shum, Douglas a. Reynolds, Daniel Garcia- Romero, and Alan McCree, “Unsupervised Clustering Approaches for Domain Adaptation in Speaker Recogni- tion Systems, ” in Proceedings of Odyssey - The Speaker and Language Recognition W orkshop , 2014, pp. 265–272. [23] Suwon Shon, Seongkyu Mun, W ooil Kim, and Hanseok K o, “Autoencoder based Domain Adaptation for Speaker Recognition under Insufficient Channel Information, ” in Interspeech , 2017, pp. 1014–1018. [24] Karen Simonyan and Andrew Zisserman, “V ery Deep Con volutional Networks for Large-Scale Image Recogni- tion, ” arXiv preprint , pp. 1–14, 2014. [25] T om Ko, V ijayaditya Peddinti, Daniel Pove y , and Sanjeev Khudanpur , “ Audio Augmentation for Speech Recogni- tion, ” in Interspeech , 2015, pp. 3586–3589. [26] Sameer Khurana and Ahmed Ali, “QCRI advanced tran- scription system (QA TS) for the Arabic Multi-Dialect Broadcast media recognition: MGB-2 challenge, ” in IEEE W orkshop on Spoken Language T echnology(SL T) , 2016, pp. 292–298. [27] Ahmed Ali, Y ifan Zhang, Patrick Cardinal, Najim Dahak, Stephan V ogel, and James Glass, “A complete KALDI recipe for building Arabic speech recognition systems, ” in IEEE Spoken Language T echnology W orkshop (SLT) . dec 2014, pp. 525–529, IEEE. [28] P . Schwarz, P . Matejka, and J. Cernocky , “Hierarchical Structures of Neural Networks for Phoneme Recognition, ” in IEEE ICASSP . 2006, vol. 1, pp. I–325–I–328, IEEE. [29] Ahmed Ali, Peter Bell, James Glass, Y acine Messaoui, Hamdy Mubarak, Stev e Renals, and Y ifan Zhang, “The MGB-2 challenge: Arabic multi-dialect broadcast me- dia recognition, ” in IEEE Spoken Languag e T echnology W orkshop (SLT) . dec 2016, pp. 279–284, IEEE. [30] “The NIST 2015 Language Recognition Evaluation Plan”, A vailable : https://www .nist.gov/document/lre15 ev alplanv23pdf, ” .

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment