Adversarial Machine Learning at Scale

Adversarial examples are malicious inputs designed to fool machine learning models. They often transfer from one model to another, allowing attackers to mount black box attacks without knowledge of the target model's parameters. Adversarial training …

Authors: Alexey Kurakin, Ian Goodfellow, Samy Bengio

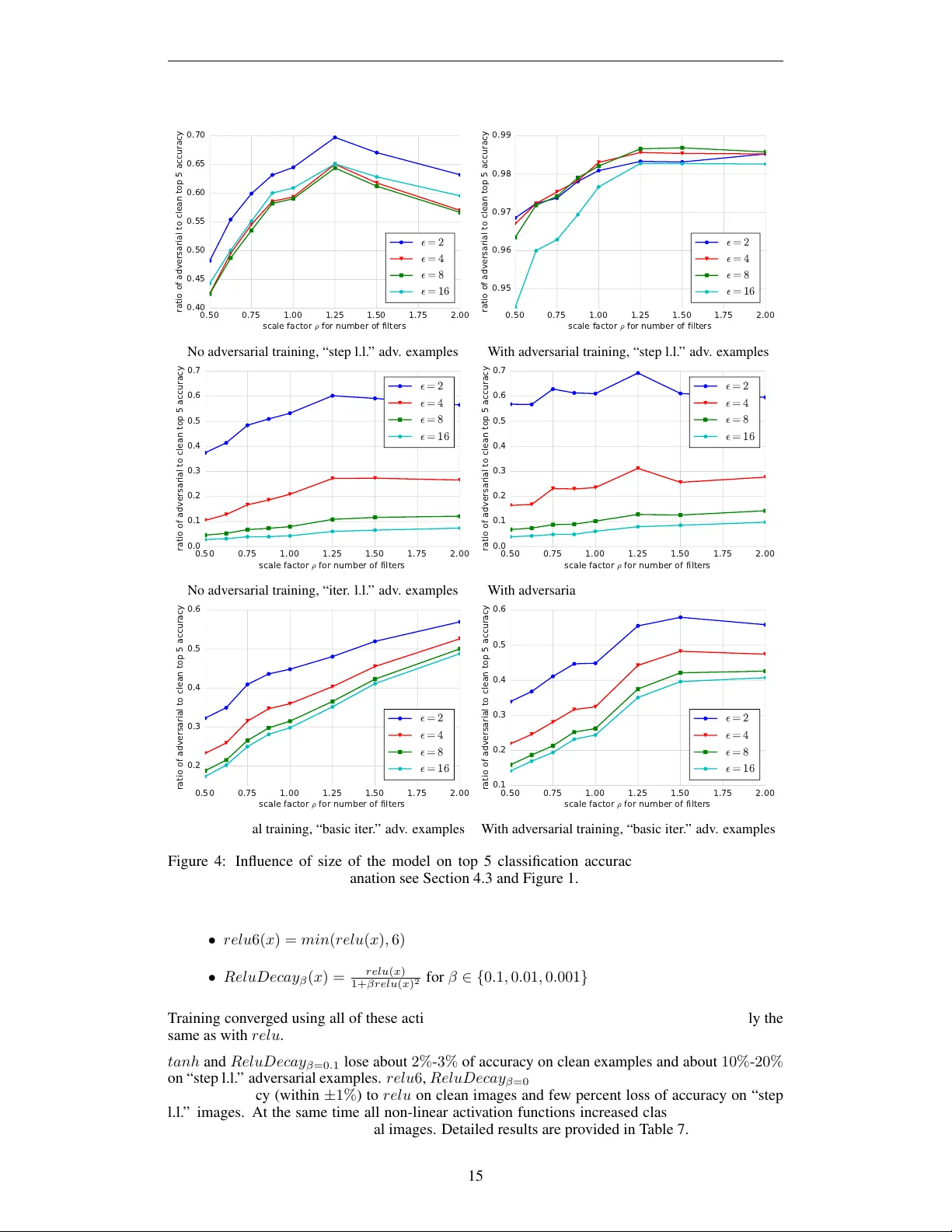

Published as a conference paper at ICLR 2017

Alexey Kurakin (Google Brain, kurakin@google.com), Ian J. Goodfellow (OpenAI, ian@openai.com), Samy Bengio (Google Brain, bengio@google.com)

ABSTRACT

Adversarial examples are malicious inputs designed to fool machine learning models. They often transfer from one model to another, allowing attackers to mount black box attacks without knowledge of the target model's parameters. Adversarial training is the process of explicitly training a model on adversarial examples, in order to make it more robust to attack or to reduce its test error on clean inputs. So far, adversarial training has primarily been applied to small problems. In this research, we apply adversarial training to ImageNet (Russakovsky et al., 2014). Our contributions include: (1) recommendations for how to successfully scale adversarial training to large models and datasets, (2) the observation that adversarial training confers robustness to single-step attack methods, (3) the finding that multi-step attack methods are somewhat less transferable than single-step attack methods, so single-step attacks are the best for mounting black-box attacks, and (4) resolution of a “label leaking” effect that causes adversarially trained models to perform better on adversarial examples than on clean examples, because the adversarial example construction process uses the true label and the model can learn to exploit regularities in the construction process.

1 INTRODUCTION

It has been shown that machine learning models are often vulnerable to adversarial manipulation of their input intended to cause incorrect classification (Dalvi et al., 2004).
In particular, neural networks and many other categories of machine learning models are highly vulnerable to attacks based on small modifications of the input to the model at test time (Biggio et al., 2013; Szegedy et al., 2014; Goodfellow et al., 2014; Papernot et al., 2016b).

The problem can be summarized as follows. Let's say there is a machine learning system $M$ and an input sample $C$ which we call a clean example. Let's assume that sample $C$ is correctly classified by the machine learning system, i.e. $M(C) = y_{true}$. It's possible to construct an adversarial example $A$ which is perceptually indistinguishable from $C$ but is classified incorrectly, i.e. $M(A) \neq y_{true}$. These adversarial examples are misclassified far more often than examples that have been perturbed by noise, even if the magnitude of the noise is much larger than the magnitude of the adversarial perturbation (Szegedy et al., 2014).

Adversarial examples pose potential security threats for practical machine learning applications. In particular, Szegedy et al. (2014) showed that an adversarial example that was designed to be misclassified by a model $M_1$ is often also misclassified by a model $M_2$. This adversarial example transferability property means that it is possible to generate adversarial examples and perform a misclassification attack on a machine learning system without access to the underlying model. Papernot et al. (2016a) and Papernot et al. (2016b) demonstrated such attacks in realistic scenarios.

It has been shown (Goodfellow et al., 2014; Huang et al., 2015) that injecting adversarial examples into the training set (also called adversarial training) could increase robustness of neural networks to adversarial examples. Another existing approach is to use defensive distillation to train the network (Papernot et al., 2015). However, all prior work studies defense measures only on relatively small datasets like MNIST and CIFAR10.
Some concurrent work studies attack mechanisms on ImageNet (Rozsa et al., 2016), focusing on the question of how well adversarial examples transfer between different types of models, while we focus on defenses and on studying how well different types of adversarial example generation procedures transfer between relatively similar models.

In this paper we studied adversarial training of Inception models trained on ImageNet. The contributions of this paper are the following:

- We successfully used adversarial training to train an Inception v3 model (Szegedy et al., 2015) on the ImageNet dataset (Russakovsky et al., 2014) and to significantly increase robustness against adversarial examples generated by the fast gradient sign method (Goodfellow et al., 2014) as well as other one-step methods.
- We demonstrated that different types of adversarial examples tend to have different transferability properties between models. In particular we observed that those adversarial examples which are harder to resist using adversarial training are less likely to be transferable between models.
- We showed that models which have higher capacity (i.e. number of parameters) tend to be more robust to adversarial examples compared to lower capacity models of the same architecture. This provides an additional cue which could help in building more robust models.
- We also observed an interesting property we call “label leaking”. Adversarial examples constructed with a single-step method making use of the true labels may be easier to classify than clean examples, because an adversarially trained model can learn to exploit regularities in the adversarial example construction process. This suggests using adversarial example construction processes that do not make use of the true label.

The rest of the paper is structured as follows: In section 2 we review different methods to generate adversarial examples.
Section 3 describes details of our adversarial training algorithm. Finally, section 4 describes our experiments and results of adversarial training.

2 METHODS GENERATING ADVERSARIAL EXAMPLES

2.1 TERMINOLOGY AND NOTATION

In this paper we use the following notation and terminology regarding adversarial examples:

1. $X$, the clean image: an unmodified image from the dataset (either train or test set).
2. $X^{adv}$, the adversarial image: the output of any procedure intended to produce an approximate worst-case modification of the clean image. We sometimes call this a candidate adversarial image to emphasize that an adversarial image is not necessarily misclassified by the neural network.
3. Misclassified adversarial image: a candidate adversarial image which is misclassified by the neural network. In addition we are typically interested only in those misclassified adversarial images for which the corresponding clean image is correctly classified.
4. $\epsilon$: the size of the adversarial perturbation. In most cases, we require the $L_\infty$ norm of the perturbation to be less than $\epsilon$, as done by Goodfellow et al. (2014). We always specify $\epsilon$ in terms of pixel values in the range $[0, 255]$. Note that some other work on adversarial examples minimizes the size of the perturbation rather than imposing a constraint on the size of the perturbation (Szegedy et al., 2014).
5. The cost function used to train the model is denoted $J(X, y_{true})$.
6. $Clip_{X,\epsilon}(A)$ denotes element-wise clipping of $A$, with $A_{i,j}$ clipped to the range $[X_{i,j} - \epsilon, X_{i,j} + \epsilon]$.
7. One-step methods of adversarial example generation generate a candidate adversarial image after computing only one gradient. They are often based on finding the optimal perturbation of a linear approximation of the cost or model. Iterative methods apply many gradient updates. They typically do not rely on any approximation of the model and typically produce more harmful adversarial examples when run for more iterations.

2.2 ATTACK METHODS

We study a variety of attack methods:

Fast gradient sign method. Goodfellow et al. (2014) proposed the fast gradient sign method (FGSM) as a simple way to generate adversarial examples:

$$X^{adv} = X + \epsilon \, \mathrm{sign}\big(\nabla_X J(X, y_{true})\big) \qquad (1)$$

This method is simple and computationally efficient compared to more complex methods like L-BFGS (Szegedy et al., 2014); however, it usually has a lower success rate. On ImageNet, the top-1 error rate on candidate adversarial images for the FGSM is about 63% to 69% for $\epsilon \in [2, 32]$.

One-step target class methods. FGSM finds adversarial perturbations which increase the value of the loss function. An alternative approach is to maximize the probability $p(y_{target} | X)$ of some specific target class $y_{target}$ which is unlikely to be the true class for a given image. For a neural network with cross-entropy loss this leads to the following formula for the one-step target class method:

$$X^{adv} = X - \epsilon \, \mathrm{sign}\big(\nabla_X J(X, y_{target})\big) \qquad (2)$$

As a target class we can use the least likely class predicted by the network, $y_{LL} = \arg\min_y \{ p(y | X) \}$, as suggested by Kurakin et al. (2016). In such a case we refer to this method as one-step least likely class or just “step l.l.”. Alternatively we can use a random class as the target class. In such a case we refer to this method as “step rnd.”.

Basic iterative method. A straightforward extension of FGSM is to apply it multiple times with a small step size:

$$X^{adv}_0 = X, \qquad X^{adv}_{N+1} = Clip_{X,\epsilon}\Big\{ X^{adv}_N + \alpha \, \mathrm{sign}\big(\nabla_X J(X^{adv}_N, y_{true})\big) \Big\}$$

In our experiments we used $\alpha = 1$, i.e. we changed the value of each pixel only by 1 on each step. We selected the number of iterations to be $\min(\epsilon + 4, 1.25\epsilon)$. See more information on this method in Kurakin et al. (2016).
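As a hedged illustration of the updates above, the following sketch implements FGSM (Eq. 1), the one-step least-likely class method (Eq. 2 with $y_{LL}$ as target) and the basic iterative method in plain Python. The toy linear softmax classifier and all function names (`probs`, `grad_x`, `fgsm`, `step_ll`, `iter_basic`) are assumptions made for this example and merely stand in for the Inception network; rounding the iteration count up is also an assumption, since the text only gives $\min(\epsilon + 4, 1.25\epsilon)$.

```python
import math


def probs(W, b, x):
    """Class probabilities of a toy linear softmax classifier (stand-in model)."""
    logits = [sum(w * xi for w, xi in zip(row, x)) + bk for row, bk in zip(W, b)]
    top = max(logits)
    exps = [math.exp(v - top) for v in logits]
    total = sum(exps)
    return [v / total for v in exps]


def grad_x(W, b, x, y):
    """Gradient of the cross-entropy J(x, y) with respect to the input x."""
    p = probs(W, b, x)
    # dJ/dlogit_k = p_k - 1[k == y]; chain rule through logits = W x + b.
    d = [pk - (1.0 if k == y else 0.0) for k, pk in enumerate(p)]
    return [sum(d[k] * W[k][j] for k in range(len(W))) for j in range(len(x))]


def sign(v):
    return (v > 0) - (v < 0)


def clip(a, x, eps):
    """Clip_{X,eps}(A): keep every component of a within eps of x."""
    return [min(max(aj, xj - eps), xj + eps) for aj, xj in zip(a, x)]


def fgsm(W, b, x, y_true, eps):
    """Eq. (1): one step of size eps along the sign of the loss gradient."""
    g = grad_x(W, b, x, y_true)
    return [xj + eps * sign(gj) for xj, gj in zip(x, g)]


def step_ll(W, b, x, eps):
    """Eq. (2) with the least-likely predicted class as the target."""
    p = probs(W, b, x)
    y_ll = p.index(min(p))
    g = grad_x(W, b, x, y_ll)
    return [xj - eps * sign(gj) for xj, gj in zip(x, g)]


def iter_basic(W, b, x, y_true, eps, alpha=1.0):
    """Basic iterative method: repeated small FGSM steps followed by clipping."""
    # Assumption: the non-integer iteration count is rounded up.
    n_iter = math.ceil(min(eps + 4, 1.25 * eps))
    x_adv = list(x)
    for _ in range(n_iter):
        g = grad_x(W, b, x_adv, y_true)
        stepped = [aj + alpha * sign(gj) for aj, gj in zip(x_adv, g)]
        x_adv = clip(stepped, x, eps)
    return x_adv
```

Each function returns a candidate adversarial input whose $L_\infty$ distance from `x` is at most `eps`; whether the candidate is actually misclassified has to be checked separately with `probs`.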
Below we refer to the basic iterative method as the “iter. basic” method.

Iterative least-likely class method. By running multiple iterations of the “step l.l.” method we can get adversarial examples which are misclassified in more than 99% of the cases:

$$X^{adv}_0 = X, \qquad X^{adv}_{N+1} = Clip_{X,\epsilon}\Big\{ X^{adv}_N - \alpha \, \mathrm{sign}\big(\nabla_X J(X^{adv}_N, y_{LL})\big) \Big\}$$

$\alpha$ and the number of iterations were selected in the same way as for the basic iterative method. Below we refer to this method as “iter. l.l.”.

3 ADVERSARIAL TRAINING

The basic idea of adversarial training is to inject adversarial examples into the training set, continually generating new adversarial examples at every step of training (Goodfellow et al., 2014). Adversarial training was originally developed for small models that did not use batch normalization. To scale adversarial training to ImageNet, we recommend using batch normalization (Ioffe & Szegedy, 2015). To do so successfully, we found that it was important for examples to be grouped into batches containing both normal and adversarial examples before taking each training step, as described in Algorithm 1.

We use a loss function that allows independent control of the number and relative weight of adversarial examples in each batch:

$$Loss = \frac{1}{(m - k) + \lambda k} \left( \sum_{i \in CLEAN} L(X_i | y_i) + \lambda \sum_{i \in ADV} L(X^{adv}_i | y_i) \right)$$

where $L(X | y)$ is a loss on a single example $X$ with true class $y$; $m$ is the total number of training examples in the minibatch; $k$ is the number of adversarial examples in the minibatch and $\lambda$ is a parameter which controls the relative weight of adversarial examples in the loss. We used $\lambda = 0.3$, $m = 32$, and $k = 16$. Note that we replace each clean example with its adversarial counterpart, for a total minibatch size of 32, which is a departure from previous approaches to adversarial training.

Algorithm 1: Adversarial training of network $N$. Size of the training minibatch is $m$. Number of adversarial images in the minibatch is $k$.

1. Randomly initialize network $N$
2. repeat
3. Read minibatch $B = \{X^1, \ldots, X^m\}$ from training set
4. Generate $k$ adversarial examples $\{X^1_{adv}, \ldots, X^k_{adv}\}$ from corresponding clean examples $\{X^1, \ldots, X^k\}$ using current state of the network $N$
5. Make new minibatch $B' = \{X^1_{adv}, \ldots, X^k_{adv}, X^{k+1}, \ldots, X^m\}$
6. Do one training step of network $N$ using minibatch $B'$
7. until training converged

The fraction and weight of adversarial examples which we used in each minibatch differ from Huang et al. (2015), where the authors replaced the entire minibatch with adversarial examples. However, their experiments were done on smaller datasets (MNIST and CIFAR-10), in which case adversarial training does not lead to a decrease of accuracy on clean images. We found that our approach works better for ImageNet models (corresponding comparative experiments can be found in Appendix E).

We observed that if we fix $\epsilon$ during training then networks become robust only to that specific value of $\epsilon$. We therefore recommend choosing $\epsilon$ randomly, independently for each training example. In our experiments we achieved best results when magnitudes were drawn from a truncated normal distribution defined in the interval $[0, 16]$ with underlying normal distribution $N(\mu = 0, \sigma = 8)$ (footnote 1).

4 EXPERIMENTS

We adversarially trained an Inception v3 model (Szegedy et al., 2015) on ImageNet. All experiments were done using synchronous distributed training on 50 machines, with a minibatch of 32 examples on each machine. We observed that the network tends to reach maximum accuracy at around 130k to 150k iterations. If we continue training beyond 150k iterations then eventually accuracy might decrease by a fraction of a percent. Thus we ran experiments for around 150k iterations and then used the obtained accuracy as the final result of the experiment.

Similar to Szegedy et al. (2015) we used the RMSProp optimizer for training.
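To make the training recipe of Section 3 concrete, here is a minimal sketch of the weighted minibatch loss, the per-example sampling of $\epsilon$, and steps 4 and 5 of Algorithm 1. The names `mixed_batch_loss`, `sample_eps`, `make_adv_minibatch` and the `make_adversarial` callback are hypothetical. The rejection loop is an assumption about the intended distribution: it mimics a normal distribution truncated at two standard deviations and folded onto $[0, 16]$, and is not the authors' code.

```python
import random


def mixed_batch_loss(clean_losses, adv_losses, lam=0.3):
    """Weighted minibatch loss: clean terms weigh 1, adversarial terms weigh lam."""
    n_clean = len(clean_losses)  # m - k in the paper's notation
    k = len(adv_losses)
    return (sum(clean_losses) + lam * sum(adv_losses)) / (n_clean + lam * k)


def sample_eps(rng, sigma=8.0, max_eps=16.0):
    """Draw |z| with z ~ N(0, sigma), redrawing whenever |z| > max_eps."""
    while True:
        z = rng.gauss(0.0, sigma)
        if abs(z) <= max_eps:
            return abs(z)


def make_adv_minibatch(batch, k, make_adversarial, rng):
    """Steps 4-5 of Algorithm 1: replace the first k clean examples.

    make_adversarial(x, eps) is a placeholder for any one-step attack
    evaluated with the current state of the network.
    """
    adv = [make_adversarial(x, sample_eps(rng)) for x in batch[:k]]
    return adv + list(batch[k:])
```

With the paper's settings ($\lambda = 0.3$, $m = 32$, $k = 16$) the normalizer is $16 + 0.3 \cdot 16 = 20.8$, so a batch in which every example has the same loss value yields exactly that value.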
We used a learning rate of 0.045 except where otherwise indicated.

We looked at the interaction of adversarial training and other forms of regularization (dropout, label smoothing and weight decay). By default, training of the Inception v3 model uses all three of them. We noticed that disabling label smoothing and/or dropout leads to a small decrease of accuracy on clean examples (by 0.1% to 0.5% for top 1 accuracy) and a small increase of accuracy on adversarial examples (by 1% to 1.5% for top 1 accuracy). On the other hand, reducing weight decay leads to a decrease of accuracy on both clean and adversarial examples.

We experimented with delaying adversarial training by 0, 10k, 20k and 40k iterations. In such a case we used only clean examples during the first $N$ training iterations and after $N$ iterations included both clean and adversarial examples in the minibatch. We noticed that delaying adversarial training has almost no effect on accuracy on clean examples (difference in accuracy within 0.2%) after a sufficient number of training iterations (more than 70k in our case). At the same time we noticed that larger delays of adversarial training might cause up to a 4% decline of accuracy on adversarial examples with a high magnitude of adversarial perturbations. For a small delay of 10k iterations, the change in accuracy was not statistically significant enough to recommend against it. We used a delay of 10k because this allowed us to reuse the same partially trained model as a starting point for many different experiments.

For evaluation we used the ImageNet validation set, which contains 50,000 images and does not intersect with the training set.

Footnote 1: In TensorFlow this could be achieved by `tf.abs(tf.truncated_normal(shape, mean=0, stddev=8))`.

4.1 RESULTS OF ADVERSARIAL TRAINING

We experimented with adversarial training using several types of one-step methods.
We found that adversarial training using any type of one-step method increases robustness to all types of one-step adversarial examples that we tested. However, there is still a gap between accuracy on clean and adversarial examples, which could vary depending on the combination of methods used for training and evaluation.

Adversarial training caused a slight (less than 1%) decrease of accuracy on clean examples in our ImageNet experiments. This differs from results of adversarial training reported previously, where adversarial training increased accuracy on the test set (Goodfellow et al., 2014; Miyato et al., 2016b;a). One possible explanation is that adversarial training acts as a regularizer. For datasets with few labeled examples where overfitting is the primary concern, adversarial training reduces test error. For datasets like ImageNet where state-of-the-art models typically have high training set error, adding a regularizer like adversarial training can increase training set error more than it decreases the gap between training and test set error. Our results suggest that adversarial training should be employed in two scenarios:

1. When a model is overfitting, and a regularizer is required.
2. When security against adversarial examples is a concern. In this case, adversarial training is the method that provides the most security of any known defense, while losing only a small amount of accuracy.

By comparing different one-step methods for adversarial training we observed that the best results in terms of accuracy on the test set are achieved using the “step l.l.” or “step rnd.” method. Moreover, using these two methods helped the model to become robust to adversarial examples generated by other one-step methods. Thus for final experiments we used the “step l.l.” adversarial method. For brevity we omitted a detailed comparison of different one-step methods here, but the reader can find it in Appendix A.
Table 1: Top 1 and top 5 accuracies of an adversarially trained network on clean images and adversarial images with various test-time $\epsilon$. Both training and evaluation were done using the “step l.l.” method. Adversarial training caused the baseline model to become robust to adversarial examples but lose some accuracy on clean examples. We therefore also trained a deeper model with two additional Inception blocks. The deeper model benefits more from adversarial training in terms of robustness to adversarial perturbation, and loses less accuracy on clean examples than the smaller model does.

| Model | Metric | Clean | $\epsilon = 2$ | $\epsilon = 4$ | $\epsilon = 8$ | $\epsilon = 16$ |
|---|---|---|---|---|---|---|
| Baseline (standard training) | top 1 | 78.4% | 30.8% | 27.2% | 27.2% | 29.5% |
| Baseline (standard training) | top 5 | 94.0% | 60.0% | 55.6% | 55.1% | 57.2% |
| Adv. training | top 1 | 77.6% | 73.5% | 74.0% | 74.5% | 73.9% |
| Adv. training | top 5 | 93.8% | 91.7% | 91.9% | 92.0% | 91.4% |
| Deeper model (standard training) | top 1 | 78.7% | 33.5% | 30.0% | 30.0% | 31.6% |
| Deeper model (standard training) | top 5 | 94.4% | 63.3% | 58.9% | 58.1% | 59.5% |
| Deeper model (adv. training) | top 1 | 78.1% | 75.4% | 75.7% | 75.6% | 74.4% |
| Deeper model (adv. training) | top 5 | 94.1% | 92.6% | 92.7% | 92.5% | 91.6% |

Results of adversarial training using the “step l.l.” method are provided in Table 1. As can be seen from the table, we were able to significantly increase top-1 and top-5 accuracy on adversarial examples (up to 74% and 92% respectively), bringing it close to the accuracy on clean images. However, we lost about 0.8% accuracy on clean examples.

We were able to slightly reduce the gap in the accuracy on clean images by slightly increasing the size of the model. This was done by adding two additional Inception blocks to the model. For specific details about Inception blocks refer to Szegedy et al. (2015).

Unfortunately, training on one-step adversarial examples does not confer robustness to iterative adversarial examples, as shown in Table 2.

Table 2: Accuracy of an adversarially trained network on iterative adversarial examples. Adversarial training was done using the “step l.l.” method. Results were computed after 140k iterations of training. Overall, we see that training on one-step adversarial examples does not confer resistance to iterative adversarial examples.

| Adv. method | Training | Metric | Clean | $\epsilon = 2$ | $\epsilon = 4$ | $\epsilon = 8$ | $\epsilon = 16$ |
|---|---|---|---|---|---|---|---|
| Iter. l.l. | Adv. training | top 1 | 77.4% | 29.1% | 7.5% | 3.0% | 1.5% |
| Iter. l.l. | Adv. training | top 5 | 93.9% | 56.9% | 21.3% | 9.4% | 5.5% |
| Iter. l.l. | Baseline | top 1 | 78.3% | 23.3% | 5.5% | 1.8% | 0.7% |
| Iter. l.l. | Baseline | top 5 | 94.1% | 49.3% | 18.8% | 7.8% | 4.4% |
| Iter. basic | Adv. training | top 1 | 77.4% | 30.0% | 25.2% | 23.5% | 23.2% |
| Iter. basic | Adv. training | top 5 | 93.9% | 44.3% | 33.6% | 28.4% | 26.8% |
| Iter. basic | Baseline | top 1 | 78.3% | 31.4% | 28.1% | 26.4% | 25.9% |
| Iter. basic | Baseline | top 5 | 94.1% | 43.1% | 34.8% | 30.2% | 28.8% |

We also tried to use iterative adversarial examples during training; however, we were unable to gain any benefits out of it. It is computationally costly and we were not able to obtain robustness to adversarial examples or to prevent the procedure from reducing the accuracy on clean examples significantly. It is possible that much larger models are necessary to achieve robustness to such a large class of inputs.

4.2 LABEL LEAKING

We discovered a label leaking effect: when a model is trained on FGSM adversarial examples and then evaluated using FGSM adversarial examples, the accuracy on adversarial images becomes much higher than the accuracy on clean images (see Table 3). This effect also occurs (but to a lesser degree) when using other one-step methods that require the true label as input.

We say that the label for a specific example has been leaked if and only if the model classifies an adversarial example correctly when that adversarial example is generated using the true label but misclassifies a corresponding adversarial example that was created without using the true label. If too many labels have been leaked then accuracy on adversarial examples might become higher than accuracy on clean examples, which we observed on the ImageNet dataset.
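The definition of a leaked label can be stated as a short counting routine. The sketch below is only an illustration of that definition; the function names and the representation of predictions as plain lists of class indices are assumptions made for the example.

```python
def leaked_indices(y_true, pred_with_label, pred_without_label):
    """Return the indices of examples whose label has been leaked.

    pred_with_label[i] is the model's prediction on the adversarial example
    built using the true label; pred_without_label[i] is its prediction on
    the corresponding adversarial example built without the true label.
    """
    return [
        i
        for i, (y, p_with, p_without) in enumerate(
            zip(y_true, pred_with_label, pred_without_label)
        )
        if p_with == y and p_without != y
    ]


def accuracy(y_true, preds):
    """Fraction of predictions that match the true labels."""
    return sum(int(y == p) for y, p in zip(y_true, preds)) / len(y_true)
```

Comparing `accuracy` on the label-based adversarial predictions with `accuracy` on clean predictions then shows whether enough labels leaked to push adversarial accuracy above clean accuracy.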
We believe that the effect occurs because one-step methods that use the true label perform a very simple and predictable transformation that the model can learn to recognize. The adversarial example construction process thus inadvertently leaks information about the true label into the input. We found that the effect vanishes if we use adversarial example construction processes that do not use the true label. The effect also vanishes if an iterative method is used, presumably because the output of an iterative process is more diverse and less predictable than the output of a one-step process.

Overall, due to the label leaking effect, we do not recommend using FGSM or other methods defined with respect to the true class label to evaluate robustness to adversarial examples; we recommend using other one-step methods that do not directly access the label instead.

We recommend replacing the true label with the most likely label predicted by the model. Alternatively, one can maximize the cross-entropy between the full distribution over all predicted labels given the clean input and the distribution over all predicted labels given the perturbed input (Miyato et al., 2016b).

We revisited the adversarially trained MNIST classifier from Goodfellow et al. (2014) and found that it too leaks labels. The most labels are leaked with $\epsilon = 0.3$ on MNIST data in $[0, 1]$. With that $\epsilon$, the model leaks 79 labels on the test set of 10,000 examples. However, the amount of label leaking is small compared to the amount of error caused by adversarial examples. The error rate on adversarial examples exceeds the error rate on clean examples for $\epsilon \in \{.05, .1, .25, .3, .4, .45, .5\}$. This explains why the label leaking effect was not noticed earlier.
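The recommendation to replace the true label with the model's most likely label can be sketched as a one-step attack that never reads $y_{true}$. As before, the toy linear softmax classifier and the names `probs` and `fgsm_predicted_label` are assumptions for illustration only, not the setup used in the experiments.

```python
import math


def probs(W, b, x):
    """Class probabilities of a toy linear softmax classifier (stand-in model)."""
    logits = [sum(w * xi for w, xi in zip(row, x)) + bk for row, bk in zip(W, b)]
    top = max(logits)
    exps = [math.exp(v - top) for v in logits]
    total = sum(exps)
    return [v / total for v in exps]


def fgsm_predicted_label(W, b, x, eps):
    """One-step attack that uses the predicted label in place of the true label."""
    p = probs(W, b, x)
    y_pred = p.index(max(p))  # most likely class according to the model
    # Gradient of the cross-entropy J(x, y_pred) with respect to x.
    d = [pk - (1.0 if k == y_pred else 0.0) for k, pk in enumerate(p)]
    grad = [sum(d[k] * W[k][j] for k in range(len(W))) for j in range(len(x))]
    # Step that increases the loss of the predicted class.
    return [xj + eps * ((g > 0) - (g < 0)) for xj, g in zip(x, grad)]
```

Because the perturbation depends only on the model's own prediction, the construction process cannot encode the true label into the input, which is the property the text asks for.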
[Figure 1 plots: six panels, each showing the ratio of adversarial to clean top 1 accuracy (vertical axis) against the scale factor $\rho$ for the number of filters (horizontal axis, 0.5 to 2.0), with one curve for each $\epsilon \in \{2, 4, 8, 16\}$. Panel titles: No adversarial training, “step l.l.” adv. examples; With adversarial training, “step l.l.” adv. examples; No adversarial training, “iter. l.l.” adv. examples; With adversarial training, “iter. l.l.” adv. examples; No adversarial training, “basic iter.” adv. examples; With adversarial training, “basic iter.” adv. examples.]

Figure 1: Influence of size of the model on top 1 classification accuracy of various adversarial examples. Left column: base model without adversarial training. Right column: model with adversarial training using the “step l.l.” method. Top row: results on “step l.l.” adversarial images. Middle row: results on “iter. l.l.” adversarial images. Bottom row: results on “basic iter.” adversarial images. See text of Section 4.3 for explanation of meaning of horizontal and vertical axes.

Table 3: Effect of label leaking on adversarial examples. When training and evaluation were done using FGSM, accuracy on adversarial examples was higher than on clean examples. This effect did not occur when training and evaluation were done using the “step l.l.” method. In both experiments training was done for 150k iterations with initial learning rate 0.0225.

| Setting | Metric | Clean | $\epsilon = 2$ | $\epsilon = 4$ | $\epsilon = 8$ | $\epsilon = 16$ |
|---|---|---|---|---|---|---|
| No label leaking, training and eval using “step l.l.” | top 1 | 77.3% | 72.8% | 73.1% | 73.4% | 72.0% |
| No label leaking, training and eval using “step l.l.” | top 5 | 93.7% | 91.1% | 91.1% | 91.0% | 90.3% |
| With label leaking, training and eval using FGSM | top 1 | 76.6% | 86.2% | 87.6% | 88.7% | 87.0% |
| With label leaking, training and eval using FGSM | top 5 | 93.2% | 95.9% | 96.4% | 96.9% | 96.4% |

4.3 INFLUENCE OF MODEL CAPACITY ON ADVERSARIAL ROBUSTNESS

We studied how the size of the model (in terms of number of parameters) could affect robustness to adversarial examples. We picked Inception v3 as a base model and varied its size by changing the number of filters in each convolution.

For each experiment we picked a scale factor $\rho$ and multiplied the number of filters in each convolution by $\rho$. In other words, $\rho = 1$ means unchanged Inception v3, $\rho = 0.5$ means Inception with half of the usual number of filters in convolutions, etc. For each chosen $\rho$ we trained two independent models: one with adversarial training and another without. Then we evaluated accuracy on clean and adversarial examples for both trained models. We ran these experiments for $\rho \in [0.5, 2.0]$.

In earlier experiments (Table 1) we found that deeper models benefit more from adversarial training. The increased depth changed many aspects of the model architecture. These experiments varying $\rho$ examine the effect in a more controlled setting, where the architecture remains constant except for the number of feature maps in each layer.
In all experiments we observed that accuracy on clean images kept increasing with increase of $\rho$, though its increase slowed down as $\rho$ became bigger. Thus as a measure of robustness we used the ratio of accuracy on adversarial images to accuracy on clean images, because an increase of this ratio means that the gap between accuracy on adversarial and clean images becomes smaller. If this ratio reaches 1 then the accuracy on adversarial images is the same as on clean ones. For a successful adversarial example construction technique, we would never expect this ratio to exceed 1, since this would imply that the adversary is actually helpful. Some defective adversarial example construction techniques, such as those suffering from label leaking, can inadvertently produce a ratio greater than 1.

Results with ratios of accuracy for various adversarial methods and $\epsilon$ are provided in Fig. 1.

For models without adversarial training, we observed that there is an optimal value of $\rho$ yielding best robustness. Models that are too large or too small perform worse. This may indicate that models become more robust to adversarial examples until they become large enough to overfit in some respect.

For adversarially trained models, we found that robustness consistently increases with increases in model size. We were not able to train large enough models to find when this process ends, but we did find that models with twice the normal size have an accuracy ratio approaching 1 for one-step adversarial examples. When evaluated on iterative adversarial examples, the trend toward increasing robustness with increasing size remains but has some exceptions. Also, none of our models was large enough to approach an accuracy ratio of 1 in this regime.

Overall we recommend exploring increase of accuracy (along with adversarial training) as a measure to improve robustness to adversarial examples.

4.4 TRANSFERABILITY OF ADVERSARIAL EXAMPLES

From a security perspective, an important property of adversarial examples is that they tend to transfer from one model to another, enabling an attacker in the black-box scenario to create adversarial examples for their own substitute model, then deploy those adversarial examples to fool a target model (Szegedy et al., 2014; Goodfellow et al., 2014; Papernot et al., 2016b).

We studied transferability of adversarial examples between the following models: two copies of normal Inception v3 (with different random initializations and order of training examples), Inception v4 (Szegedy et al., 2016) and Inception v3 which uses ELU activation (Clevert et al., 2015) instead of Relu (footnote 2). All of these models were independently trained from scratch until they achieved maximum accuracy.

Footnote 2: We achieved 78.0% top 1 and 94.1% top 5 accuracy on Inception v3 with ELU activations, which is comparable with the accuracy of the Inception v3 model with Relu activations.

Table 4: Transfer rate of adversarial examples generated using different adversarial methods and perturbation size $\epsilon = 16$. This is equivalent to the error rate in an attack scenario where the attacker prefilters their adversarial examples by ensuring that they are misclassified by the source model before deploying them against the target. Transfer rates are rounded to the nearest percent in order to fit the table on the page. The following models were used for comparison: A and B are Inception v3 models with different random initializations, C is an Inception v3 model with ELU activations instead of Relu, D is an Inception v4 model. See also Table 6 for the absolute error rate when the attack is not prefiltered, rather than the transfer rate of adversarial examples. Columns give the target model for each attack method.

| Metric | Source model | FGSM: A | FGSM: B | FGSM: C | FGSM: D | basic iter.: A | basic iter.: B | basic iter.: C | basic iter.: D | iter l.l.: A | iter l.l.: B | iter l.l.: C | iter l.l.: D |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| top 1 | A (v3) | 100 | 56 | 58 | 47 | 100 | 46 | 45 | 33 | 100 | 13 | 13 | 9 |
| top 1 | B (v3) | 58 | 100 | 59 | 51 | 41 | 100 | 40 | 30 | 15 | 100 | 13 | 10 |
| top 1 | C (v3 ELU) | 56 | 58 | 100 | 52 | 44 | 44 | 100 | 32 | 12 | 11 | 100 | 9 |
| top 1 | D (v4) | 50 | 54 | 52 | 100 | 35 | 39 | 37 | 100 | 12 | 13 | 13 | 100 |
| top 5 | A (v3) | 100 | 50 | 50 | 36 | 100 | 15 | 17 | 11 | 100 | 8 | 7 | 5 |
| top 5 | B (v3) | 51 | 100 | 50 | 37 | 16 | 100 | 14 | 10 | 7 | 100 | 5 | 4 |
| top 5 | C (v3 ELU) | 44 | 45 | 100 | 37 | 16 | 18 | 100 | 13 | 6 | 6 | 100 | 4 |
| top 5 | D (v4) | 42 | 38 | 46 | 100 | 11 | 15 | 15 | 100 | 6 | 6 | 6 | 100 |

[Figure 2 plots: two panels, “Top 1 transferability” and “Top 5 transferability”, showing the top 1 and top 5 transfer rate (vertical axis, 0.0 to 0.6) against $\epsilon$ (horizontal axis, 2 to 16), with one curve each for fast, basic iter. and iter l.l.]

Figure 2: Influence of the size of adversarial perturbation on transfer rate of adversarial examples. Transfer rate was computed using two Inception v3 models with different random initializations. As can be seen from these plots, an increase of $\epsilon$ leads to an increase of transfer rate. It should be noted that transfer rate is the ratio of the number of transferred adversarial examples to the number of successful adversarial examples for the source network. Both the numerator and the denominator of this ratio increase with $\epsilon$; however, we observed that the numerator (i.e. the number of transferred examples) increases much faster than the denominator. For example, when $\epsilon$ increases from 8 to 16 the relative increase of the denominator is less than 1% for each of the considered methods, while the relative increase of the numerator is more than 20%.

In each experiment we fixed the source and target networks, constructed adversarial examples from 1000 randomly sampled clean images from the test set using the source network and performed classification of all of them using both source and target networks. These experiments were done independently for different adversarial methods.

We measured transferability using the following criteria.
Among the 1000 images we picked only the misclassified adversarial examples for the source model (i.e. clean classified correctly, adversarial misclassified) and measured what fraction of them were misclassified by the target model.

Transferability results for all combinations of models and $\epsilon = 16$ are provided in Table 4. Results for various $\epsilon$ but fixed source and target model are provided in Fig. 2.

As can be seen from the results, FGSM adversarial examples are the most transferable, while “iter l.l.” are the least. On the other hand, the “iter l.l.” method is able to fool the network in more than 99% of cases (top 1 accuracy), while FGSM is the least likely to fool the network. This suggests that there might be an inverse relationship between the transferability of a specific method and the ability of the method to fool the network. We haven't studied this phenomenon further, but one possible explanation could be the fact that iterative methods tend to overfit to specific network parameters.

In addition, we observed that for each of the considered methods the transfer rate increases with $\epsilon$ (see Fig. 2). Thus a potential adversary performing a black-box attack has an incentive to use a higher $\epsilon$ to increase the chance of success of the attack.

5 CONCLUSION

In this paper we studied how to increase robustness to adversarial examples of large models (Inception v3) trained on a large dataset (ImageNet). We showed that adversarial training provides robustness to adversarial examples generated using one-step methods. While adversarial training didn't help much against iterative methods, we observed that adversarial examples generated by iterative methods are less likely to be transferred between networks, which provides indirect robustness against black-box adversarial attacks. In addition, we observed that an increase of model capacity could also help to increase robustness to adversarial examples, especially when used in conjunction with adversarial training.
Finally, we discovered the effect of label leaking, which resulted in higher accuracy on FGSM adversarial examples compared to clean examples when the network was adversarially trained.

REFERENCES

Battista Biggio, Igino Corona, Davide Maiorca, Blaine Nelson, Nedim Šrndić, Pavel Laskov, Giorgio Giacinto, and Fabio Roli. Evasion attacks against machine learning at test time. In Joint European Conference on Machine Learning and Knowledge Discovery in Databases, pp. 387–402. Springer, 2013.

Djork-Arné Clevert, Thomas Unterthiner, and Sepp Hochreiter. Fast and accurate deep network learning by exponential linear units (ELUs). CoRR, abs/1511.07289, 2015. URL http://arxiv.org/abs/1511.07289.

Nilesh Dalvi, Pedro Domingos, Sumit Sanghai, Deepak Verma, et al. Adversarial classification. In Proceedings of the tenth ACM SIGKDD international conference on Knowledge discovery and data mining, pp. 99–108. ACM, 2004.

Ian J. Goodfellow, Jonathon Shlens, and Christian Szegedy. Explaining and harnessing adversarial examples. CoRR, abs/1412.6572, 2014.

Ruitong Huang, Bing Xu, Dale Schuurmans, and Csaba Szepesvári. Learning with a strong adversary. CoRR, abs/1511.03034, 2015.

Sergey Ioffe and Christian Szegedy. Batch normalization: Accelerating deep network training by reducing internal covariate shift. 2015.

Alex Kurakin, Ian Goodfellow, and Samy Bengio. Adversarial examples in the physical world. Technical report, arXiv, 2016.

Takeru Miyato, Andrew M Dai, and Ian Goodfellow. Virtual adversarial training for semi-supervised text classification. arXiv preprint arXiv:1605.07725, 2016a.

Takeru Miyato, Shin-ichi Maeda, Masanori Koyama, Ken Nakae, and Shin Ishii. Distributional smoothing with virtual adversarial training. In International Conference on Learning Representations (ICLR2016), April 2016b.

N. Papernot, P. McDaniel, and I. Goodfellow. Transferability in Machine Learning: from Phenomena to Black-Box Attacks using Adversarial Samples. ArXiv e-prints, May 2016b. URL http://arxiv.org/abs/1605.07277.

Nicolas Papernot, Patrick Drew McDaniel, Xi Wu, Somesh Jha, and Ananthram Swami. Distillation as a defense to adversarial perturbations against deep neural networks. CoRR, abs/1511.04508, 2015.

Nicolas Papernot, Patrick Drew McDaniel, Ian J. Goodfellow, Somesh Jha, Z. Berkay Celik, and Ananthram Swami. Practical black-box attacks against deep learning systems using adversarial examples. CoRR, abs/1602.02697, 2016a.

Andras Rozsa, Manuel Günther, and Terrance E Boult. Are accuracy and robustness correlated? arXiv preprint arXiv:1610.04563, 2016.

Olga Russakovsky, Jia Deng, Hao Su, Jonathan Krause, Sanjeev Satheesh, Sean Ma, Zhiheng Huang, Andrej Karpathy, Aditya Khosla, Michael Bernstein, et al. Imagenet large scale visual recognition challenge. arXiv preprint, 2014.

Christian Szegedy, Wojciech Zaremba, Ilya Sutskever, Joan Bruna, Dumitru Erhan, Ian J. Goodfellow, and Rob Fergus. Intriguing properties of neural networks. ICLR, abs/1312.6199, 2014. URL http://arxiv.org/abs/1312.6199.

Christian Szegedy, Vincent Vanhoucke, Sergey Ioffe, Jonathon Shlens, and Zbigniew Wojna. Rethinking the inception architecture for computer vision. CoRR, abs/1512.00567, 2015. URL http://arxiv.org/abs/1512.00567.

Christian Szegedy, Sergey Ioffe, and Vincent Vanhoucke. Inception-v4, inception-resnet and the impact of residual connections on learning. CoRR, abs/1602.07261, 2016. URL http://arxiv.org/abs/1602.07261.

Appendices

A COMPARISON OF ONE-STEP ADVERSARIAL METHODS

In addition to FGSM and “step l.l.” methods we explored several other one-step adversarial methods both for training and evaluation. Generally all of these methods can be separated into two large categories.
Methods which try to maximize the loss (similar to FGSM) are in the first category. The second category contains methods which try to maximize the probability of a specific target class (similar to “step l.l.”). We also tried to use different types of random noise instead of adversarial images, but random noise didn't help with robustness against adversarial examples.

The full list of one-step methods we tried is as follows:

• Methods increasing loss function $J$
  – FGSM (described in detail in Section 2.2):
    $$X^{adv} = X + \epsilon \, \mathrm{sign}\big(\nabla_X J(X, y_{true})\big)$$
  – FGSM-pred, or fast method with predicted class. It is similar to FGSM but uses the label of the class predicted by the network instead of the true class $y_{true}$.
  – “Fast entropy”, or fast method designed to maximize the entropy of the predicted distribution, thereby causing the model to become less certain of the predicted class.
  – “Fast grad. $L_2$” is similar to FGSM but uses the value of the gradient instead of its sign. The value of the gradient is normalized to have unit $L_2$ norm:
    $$X^{adv} = X + \epsilon \, \frac{\nabla_X J(X, y_{true})}{\big\| \nabla_X J(X, y_{true}) \big\|_2}$$
    Miyato et al. (2016b) advocate this method.
  – “Fast grad. $L_\infty$” is similar to “fast grad. $L_2$” but uses the $L_\infty$ norm for normalization.
• Methods increasing the probability of the selected target class
  – “Step l.l.” is one step towards the least likely class (also described in Section 2.2):
    $$X^{adv} = X - \epsilon \, \mathrm{sign}\big(\nabla_X J(X, y_{target})\big)$$
    where $y_{target} = \arg\min_y p(y \mid X)$ is the least likely class predicted by the network.
  – “Step rnd.” is similar to “step l.l.” but uses a random class instead of the least likely class.
• Random perturbations
  – Sign of random perturbation. This is an attempt to construct a random perturbation which has a similar structure to perturbations generated by FGSM:
    $$X^{adv} = X + \epsilon \, \mathrm{sign}(N)$$
    where $N$ is a random normal variable with zero mean and identity covariance matrix.
  – Random truncated normal perturbation with zero mean and $0.5\epsilon$ standard deviation defined on $[-\epsilon, \epsilon]$ and uncorrelated pixels, which leads to the following formula for perturbed images:
    $$X^{adv} = X + T$$
    where $T$ is a random variable with truncated normal distribution.

Overall, we observed that using only one of these single-step methods during adversarial training is sufficient to gain robustness to all of them. Fig. 3 shows accuracy on various one-step adversarial examples when the network was trained using only the “step l.l.” method.

At the same time we observed that not all one-step methods are equally good for adversarial training, as shown in Table 5. The best results (achieving both good accuracy on clean data and good accuracy on adversarial inputs) were obtained when adversarial training was done using the “step l.l.” or “step rnd.” methods.

[Figure 3, four panels: top 1 accuracy with adversarial training, top 1 accuracy with no adversarial training, top 5 accuracy with adversarial training, top 5 accuracy with no adversarial training. Each plots accuracy against epsilon (0 to 28) for Clean, FGSM, FGSM-pred, Fast entropy, Fast grad. $L_2$, Fast grad. $L_\infty$, Step l.l. and Step rnd.]

Figure 3: Comparison of different one-step adversarial methods during eval. Adversarial training was done using the “step l.l.” method. Some evaluation methods show increasing accuracy with increasing $\epsilon$ over part of the curve, due to the label leaking effect.

B ADDITIONAL RESULTS WITH SIZE OF THE MODEL

Section 4.3 contains details regarding the influence of the size of the model on robustness to adversarial examples. Here we provide an additional Figure 4, which shows robustness calculated using top 5 accuracy. Generally it exhibits the same properties as the corresponding plots for top 1 accuracy.
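As an illustration only, several of the one-step methods listed in Appendix A can be sketched in NumPy. The sketch assumes a toy linear softmax classifier (weights `W`, bias `b`) so that the input gradient $\nabla_X J$ has a closed form. The toy model, all function names, and the omission of pixel-range clipping are assumptions of this sketch and are not taken from the experiments reported here, which used Inception networks.

```python
import numpy as np

def softmax(z):
    """Numerically stable softmax over a 1-D vector of logits."""
    e = np.exp(z - z.max())
    return e / e.sum()

def cross_entropy(W, b, x, y):
    """Loss J(x, y) of the toy linear softmax model (an assumption of this sketch)."""
    return -np.log(softmax(W @ x + b)[y])

def loss_grad_wrt_input(W, b, x, y):
    """Gradient of J(x, y) with respect to the input x for the toy model."""
    p = softmax(W @ x + b)
    p[y] -= 1.0                      # dJ/dlogits = p - onehot(y)
    return W.T @ p

def fgsm(W, b, x, y_true, eps):
    """FGSM: one step of size eps along the sign of the loss gradient."""
    return x + eps * np.sign(loss_grad_wrt_input(W, b, x, y_true))

def step_ll(W, b, x, eps):
    """'Step l.l.': one step that lowers the loss of the least likely class."""
    y_target = int(np.argmin(softmax(W @ x + b)))
    return x - eps * np.sign(loss_grad_wrt_input(W, b, x, y_target))

def fast_grad_l2(W, b, x, y_true, eps):
    """'Fast grad. L2': step along the gradient rescaled to unit L2 norm."""
    g = loss_grad_wrt_input(W, b, x, y_true)
    return x + eps * g / (np.linalg.norm(g) + 1e-12)  # guard against a zero gradient

def sign_of_random(x, eps, rng):
    """Sign of random perturbation: eps times the sign of standard normal noise."""
    return x + eps * np.sign(rng.standard_normal(x.shape))
```

In this sketch FGSM, “step l.l.” and the sign-of-random perturbation all move every input coordinate by exactly `eps`, so they share the same $L_\infty$ budget, whereas “fast grad. $L_2$” produces a perturbation of $L_2$ norm `eps`.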
[Figure 4, six panels: ratio of adversarial to clean top 5 accuracy against the scale factor $\rho$ for the number of filters (0.5 to 2.0), with one curve each for $\epsilon = 2, 4, 8, 16$. Panels: no adversarial training and with adversarial training, each for “step l.l.”, “iter. l.l.” and “basic iter.” adversarial examples.]

Figure 4: Influence of size of the model on top 5 classification accuracy of various adversarial examples. For a detailed explanation see Section 4.3 and Figure 1.

Table 5: Comparison of different one-step adversarial methods for adversarial training. The evaluation was run after 90k training steps. *) In all cases except “fast grad $L_2$” and “fast grad $L_\infty$” the evaluation was done using FGSM. For “fast grad $L_2$” and “fast grad $L_\infty$” the evaluation was done using the “step l.l.” method. In the case where both training and testing were done with FGSM, the performance on adversarial examples is artificially high due to the label leaking effect. Based on this table, we recommend using “step rnd.” or “step l.l.” as the method of generating adversarial examples at training time, in order to obtain good accuracy on both clean and adversarial examples. We computed 95% confidence intervals based on the standard error of the mean around the test error, using the fact that the test error was evaluated with 50,000 samples. Within each column, we indicate which methods are statistically tied for the best using bold face.

| Training method | Clean | $\epsilon = 2$ | $\epsilon = 4$ | $\epsilon = 8$ | $\epsilon = 16$ |
|---|---|---|---|---|---|
| No adversarial training | 76.8% | 40.7% | 39.0% | 37.9% | 36.7% |
| FGSM | 74.9% | 79.3% | 82.8% | 85.3% | 83.2% |
| Fast with predicted class | 76.4% | 43.2% | 42.0% | 40.9% | 40.0% |
| Fast entropy | 76.4% | 62.8% | 61.7% | 59.5% | 54.8% |
| Step rnd. | 76.4% | 73.0% | 75.4% | 76.5% | 72.5% |
| Step l.l. | 76.3% | 72.9% | 75.1% | 76.2% | 72.2% |
| Fast grad. $L_2$ * | 76.8% | 44.0% | 33.2% | 26.4% | 22.5% |
| Fast grad. $L_\infty$ * | 75.6% | 52.2% | 39.7% | 30.9% | 25.0% |
| Sign of random perturbation | 76.5% | 38.8% | 36.6% | 35.0% | 32.7% |
| Random normal perturbation | 76.6% | 38.3% | 36.0% | 34.4% | 31.8% |

C ADDITIONAL RESULTS ON TRANSFERABILITY

Section 4.4 contains results with transfer rate of various adversarial examples between models. In addition to the transfer rate computed only on misclassified adversarial examples, it is also interesting to observe the error rate of all candidate adversarial examples generated for one model and classified by another model.

This result might be interesting because it models the following attack. Instead of trying to pick “good” adversarial images, an adversary tries to modify all available images in order to get as many misclassified images as possible.

To compute the error rate we randomly generated 1000 adversarial images using the source model and then classified them using the target model. Results for various models, adversarial methods and fixed $\epsilon = 16$ are provided in Table 6. Results for fixed source and target models and various $\epsilon$ are provided in Fig. 5.

Overall the error rate of transferred adversarial examples exhibits the same behavior as the transfer rate described in Section 4.4.

Table 6: Error rates on adversarial examples transferred between models, rounded to the nearest percent. Results are provided for adversarial images generated using different adversarial methods and fixed perturbation size $\epsilon = 16$. The following models were used for comparison: A and B are Inception v3 models with different random initializations, C is an Inception v3 model with ELU activations instead of Relu, D is an Inception v4 model. See also Table 4 for the transfer rate of adversarial examples, rather than the absolute error rate. Column headers give the attack method and the target model; all values are in percent.

| Metric | Source model | FGSM → A | FGSM → B | FGSM → C | FGSM → D | basic iter. → A | basic iter. → B | basic iter. → C | basic iter. → D | iter l.l. → A | iter l.l. → B | iter l.l. → C | iter l.l. → D |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| top 1 | A (v3) | 65 | 52 | 53 | 45 | 78 | 51 | 50 | 42 | 100 | 32 | 31 | 27 |
| top 1 | B (v3) | 52 | 66 | 54 | 48 | 50 | 79 | 51 | 43 | 35 | 99 | 34 | 29 |
| top 1 | C (v3 ELU) | 53 | 55 | 70 | 50 | 47 | 46 | 74 | 40 | 31 | 30 | 100 | 28 |
| top 1 | D (v4) | 47 | 51 | 49 | 62 | 43 | 46 | 45 | 73 | 30 | 31 | 31 | 99 |
| top 5 | A (v3) | 46 | 28 | 28 | 22 | 76 | 17 | 18 | 13 | 94 | 12 | 12 | 9 |
| top 5 | B (v3) | 29 | 46 | 30 | 22 | 19 | 76 | 18 | 16 | 13 | 96 | 12 | 11 |
| top 5 | C (v3 ELU) | 28 | 29 | 55 | 25 | 18 | 19 | 74 | 15 | 12 | 12 | 96 | 9 |
| top 5 | D (v4) | 23 | 22 | 25 | 40 | 14 | 16 | 16 | 70 | 11 | 11 | 11 | 97 |

[Figure 5, two panels: "Top 1 error rate" (0.20 to 0.55) and "Top 5 error rate" (0.05 to 0.30). Each plots the error rate against $\epsilon$ (2 to 16) for the fast, basic iter. and iter l.l. methods.]

Figure 5: Influence of the size of adversarial perturbation on the error rate on adversarial examples generated for one model and classified using another model. Both source and target models were Inception v3 networks with different random initializations.

D RESULTS WITH DIFFERENT ACTIVATION FUNCTIONS

We evaluated robustness to adversarial examples when the network was trained using various non-linear activation functions instead of the standard $\mathrm{relu}$ activation when used with adversarial training on “step l.l.” adversarial images. We tried the following activation functions instead of $\mathrm{relu}$:

• $\tanh(x)$
• $\mathrm{relu6}(x) = \min(\mathrm{relu}(x), 6)$
• $\mathrm{ReluDecay}_\beta(x) = \dfrac{\mathrm{relu}(x)}{1 + \beta \, \mathrm{relu}(x)^2}$ for $\beta \in \{0.1, 0.01, 0.001\}$

Training converged using all of these activations; however, test performance was not necessarily the same as with $\mathrm{relu}$. $\tanh$ and $\mathrm{ReluDecay}_{\beta=0.1}$ lose about 2%-3% of accuracy on clean examples and about 10%-20% on “step l.l.” adversarial examples. $\mathrm{relu6}$, $\mathrm{ReluDecay}_{\beta=0.01}$ and $\mathrm{ReluDecay}_{\beta=0.001}$ demonstrated similar accuracy (within ±1%) to $\mathrm{relu}$ on clean images and a few percent loss of accuracy on “step l.l.” images. At the same time all non-linear activation functions increased classification accuracy on some of the iterative adversarial images. Detailed results are provided in Table 7.
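For concreteness, the activation functions listed in Appendix D can be written as elementwise NumPy functions. This is a minimal sketch; the function names are chosen here for illustration and are not taken from any released code. One property that follows directly from the formula (it is not a claim made in the experiments) is that $\mathrm{ReluDecay}_\beta$ is bounded: it peaks at $x = 1/\sqrt{\beta}$ with value $1/(2\sqrt{\beta})$ and decays towards zero for larger inputs.

```python
import numpy as np

def relu(x):
    """Standard rectifier: max(x, 0), applied elementwise."""
    return np.maximum(x, 0.0)

def relu6(x):
    """relu6(x) = min(relu(x), 6)."""
    return np.minimum(relu(x), 6.0)

def relu_decay(x, beta):
    """ReluDecay_beta(x) = relu(x) / (1 + beta * relu(x)^2)."""
    r = relu(x)
    return r / (1.0 + beta * r ** 2)

def relu_decay_peak(beta):
    """Largest value ReluDecay_beta can take, reached at x = 1 / sqrt(beta)."""
    return 1.0 / (2.0 * np.sqrt(beta))
```

For example, with $\beta = 0.01$ the peak is at $x = 10$, where the activation equals $10 / (1 + 0.01 \cdot 100) = 5$.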
Overall, non-linear activation functions could be used as an additional measure of defense against iterative adversarial images.

Table 7: Activation functions and robustness to adversarial examples. For each activation function we adversarially trained the network on “step l.l.” adversarial images and then ran classification of clean images and adversarial images generated using various adversarial methods and $\epsilon$.

| Adv. method | Activation | Clean | $\epsilon = 2$ | $\epsilon = 4$ | $\epsilon = 8$ | $\epsilon = 16$ |
|---|---|---|---|---|---|---|
| Step l.l. | relu | 77.5% | 74.6% | 75.1% | 75.5% | 74.5% |
| Step l.l. | relu6 | 77.7% | 71.8% | 73.5% | 74.5% | 74.0% |
| Step l.l. | ReluDecay 0.001 | 78.0% | 74.0% | 74.9% | 75.2% | 73.9% |
| Step l.l. | ReluDecay 0.01 | 77.4% | 73.6% | 74.6% | 75.0% | 73.6% |
| Step l.l. | ReluDecay 0.1 | 75.3% | 67.5% | 67.5% | 67.0% | 64.8% |
| Step l.l. | tanh | 74.5% | 63.7% | 65.1% | 65.8% | 61.9% |
| Iter. l.l. | relu | 77.5% | 30.2% | 8.0% | 3.1% | 1.6% |
| Iter. l.l. | relu6 | 77.7% | 39.8% | 13.7% | 4.1% | 1.9% |
| Iter. l.l. | ReluDecay 0.001 | 78.0% | 39.9% | 12.6% | 3.8% | 1.8% |
| Iter. l.l. | ReluDecay 0.01 | 77.4% | 36.2% | 11.2% | 3.2% | 1.6% |
| Iter. l.l. | ReluDecay 0.1 | 75.3% | 47.0% | 25.8% | 6.5% | 2.4% |
| Iter. l.l. | tanh | 74.5% | 35.8% | 6.6% | 2.7% | 0.9% |
| Basic iter. | relu | 77.5% | 28.4% | 23.2% | 21.5% | 21.0% |
| Basic iter. | relu6 | 77.7% | 31.2% | 26.1% | 23.8% | 23.2% |
| Basic iter. | ReluDecay 0.001 | 78.0% | 32.9% | 27.2% | 24.7% | 24.1% |
| Basic iter. | ReluDecay 0.01 | 77.4% | 30.0% | 24.2% | 21.4% | 20.5% |
| Basic iter. | ReluDecay 0.1 | 75.3% | 26.7% | 20.6% | 16.5% | 15.2% |
| Basic iter. | tanh | 74.5% | 24.5% | 22.0% | 20.9% | 20.7% |

E RESULTS WITH DIFFERENT NUMBER OF ADVERSARIAL EXAMPLES IN THE MINIBATCH

We studied how the number of adversarial examples $k$ in the minibatch affects accuracy on clean and adversarial examples. Results are summarized in Table 8.

Overall we noticed that an increase of $k$ leads to an increase of accuracy on adversarial examples and to a decrease of accuracy on clean examples. At the same time, having more than half of the minibatch made up of adversarial examples (which corresponds to $k > 16$ in our case) does not provide a significant improvement of accuracy on adversarial images, but leads to up to 1% of additional decrease of accuracy on clean images.
Thus for most experiments in the paper we have chosen $k = 16$ as a reasonable trade-off between accuracy on clean and adversarial images.

Table 8: Results of adversarial training depending on $k$, the number of adversarial examples in the minibatch. Adversarial examples for training and evaluation were generated using the “step l.l.” method. Row “No adv” is a baseline result without adversarial training (which is equivalent to $k = 0$). Rows “Adv, $k = X$” are results of adversarial training with $X$ adversarial examples in the minibatch. Total minibatch size is 32, thus $k = 32$ corresponds to a minibatch without clean examples.

| Training | Clean | $\epsilon = 2$ | $\epsilon = 4$ | $\epsilon = 8$ | $\epsilon = 16$ |
|---|---|---|---|---|---|
| No adv | 78.2% | 31.5% | 27.7% | 27.8% | 29.7% |
| Adv, $k = 4$ | 78.3% | 71.7% | 71.3% | 69.4% | 65.8% |
| Adv, $k = 8$ | 78.1% | 73.2% | 73.2% | 72.6% | 70.5% |
| Adv, $k = 16$ | 77.6% | 73.8% | 75.3% | 76.1% | 75.4% |
| Adv, $k = 24$ | 77.1% | 73.0% | 75.3% | 76.2% | 76.0% |
| Adv, $k = 32$ | 76.3% | 73.4% | 75.1% | 75.9% | 75.8% |
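The minibatch composition studied in Appendix E can be illustrated with a short NumPy sketch in which $k$ of the examples in a minibatch are replaced by adversarial counterparts. The helper `mix_minibatch` and the callable `make_adversarial` are hypothetical names introduced for this illustration; which $k$ examples are replaced (here, the first $k$) is also an assumption of the sketch.

```python
import numpy as np

def mix_minibatch(x_clean, make_adversarial, k):
    """Return a minibatch whose first k examples are adversarial.

    x_clean: array of shape (m, ...) holding m clean examples.
    make_adversarial: hypothetical callable mapping a batch of clean
        examples to adversarial examples of the same shape.
    k: number of adversarial examples, 0 <= k <= m.
    """
    m = x_clean.shape[0]
    if not 0 <= k <= m:
        raise ValueError("k must lie between 0 and the minibatch size")
    x_mixed = x_clean.copy()
    if k > 0:
        x_mixed[:k] = make_adversarial(x_clean[:k])
    is_adversarial = np.arange(m) < k   # mask marking the replaced examples
    return x_mixed, is_adversarial
```

With a minibatch of 32 examples, `k = 0` reproduces the “No adv” baseline of Table 8, `k = 16` gives the half-and-half mix used for most experiments, and `k = 32` yields a minibatch without clean examples.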
