Ask the GRU: Multi-Task Learning for Deep Text Recommendations

In a variety of application domains the content to be recommended to users is associated with text. This includes research papers, movies with associated plot summaries, news articles, blog posts, etc. Recommendation approaches based on latent factor…

Authors: Trapit Bansal, David Belanger, Andrew McCallum

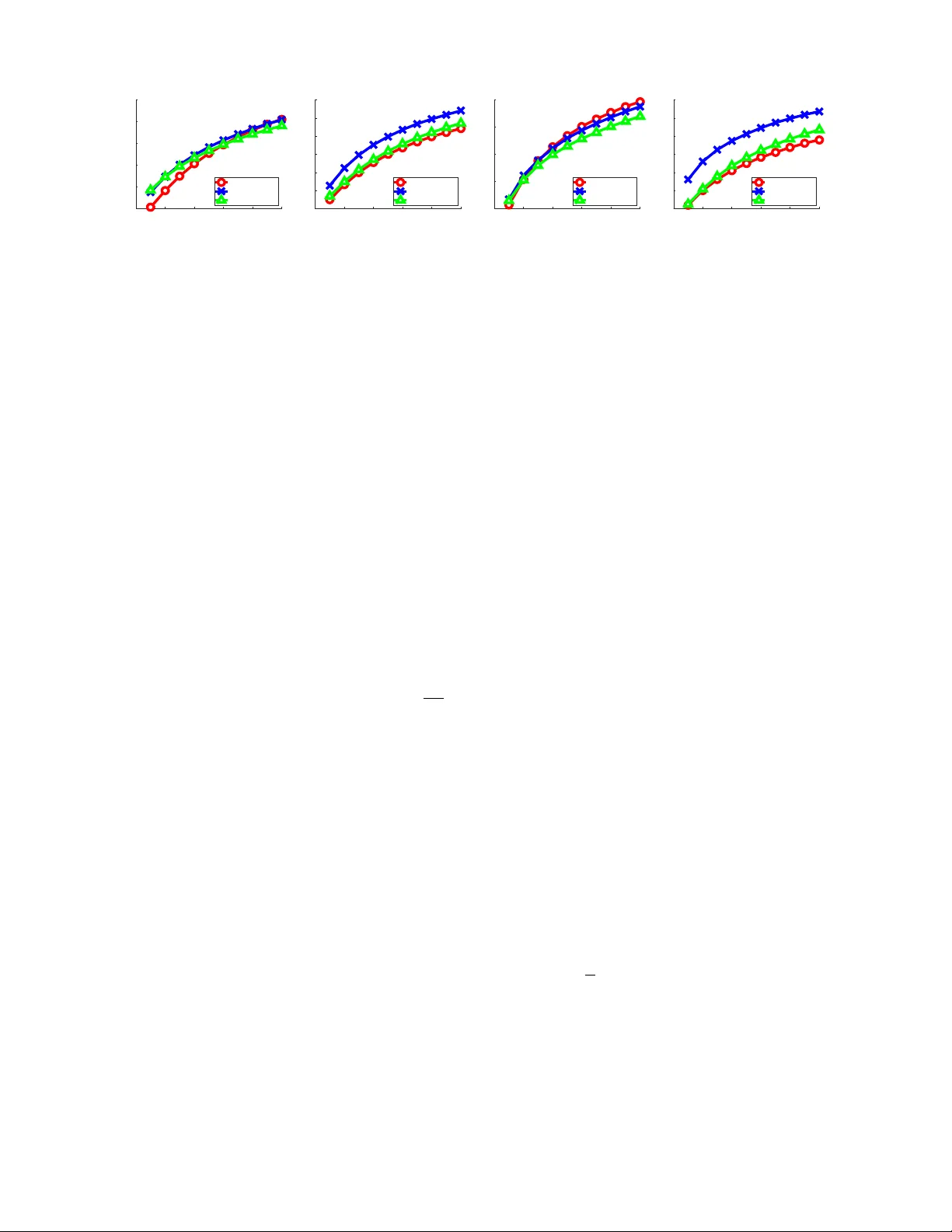

Trapit Bansal (tbansal@cs.umass.edu), David Belanger (belanger@cs.umass.edu), Andrew McCallum (mccallum@cs.umass.edu)
College of Information and Computer Sciences, University of Massachusetts Amherst

ABSTRACT

In a variety of application domains the content to be recommended to users is associated with text. This includes research papers, movies with associated plot summaries, news articles, blog posts, etc. Recommendation approaches based on latent factor models can be extended naturally to leverage text by employing an explicit mapping from text to factors. This enables recommendations for new, unseen content, and may generalize better, since the factors for all items are produced by a compactly-parametrized model. Previous work has used topic models or averages of word embeddings for this mapping. In this paper we present a method leveraging deep recurrent neural networks to encode the text sequence into a latent vector, specifically gated recurrent units (GRUs) trained end-to-end on the collaborative filtering task. For the task of scientific paper recommendation, this yields models with significantly higher accuracy. In cold-start scenarios, we beat the previous state-of-the-art, all of which ignore word order. Performance is further improved by multi-task learning, where the text encoder network is trained for a combination of content recommendation and item metadata prediction. This regularizes the collaborative filtering model, ameliorating the problem of sparsity of the observed rating matrix.

Keywords

Recommender Systems; Deep Learning; Neural Networks; Cold Start; Multi-task Learning

1. INTRODUCTION

Text recommendation is an important problem that has the potential to drive significant profits for e-businesses through increased user engagement.
Examples of text recommendations include recommending blogs, social media posts [1], news articles [2, 3], movies (based on plot summaries), products (based on reviews) [4] and research papers [5].

Methods for recommending text items can be broadly classified into collaborative filtering (CF), content-based, and hybrid methods. Collaborative filtering [6] methods use the user-item rating matrix to construct user and item profiles from past ratings. Classical examples of this include matrix factorization methods [6, 7] which completely ignore text information and rely solely on the rating matrix. Such methods suffer from the cold-start problem (how to rank unseen or unrated items), which is ubiquitous in most domains. Content-based methods [8, 9], on the other hand, use the item text or attributes, and make recommendations based on similarity between such attributes, ignoring data from other users. Such methods can make recommendations for new items but are limited in their performance since they cannot employ similarity between user preferences [5, 10, 11]. Hybrid recommendation systems seek the best of both worlds, by leveraging both item content and user-item ratings [5, 10, 12, 13, 14].

Hybrid recommendation methods that consume item text for recommendation often ignore word order [5, 13, 14, 15], and either use bags-of-words as features for a linear model [14, 16] or define an unsupervised learning objective on the text such as a topic model [5, 15]. Such methods are unable to fully leverage the text content, being limited to bag-of-words sufficient statistics [17], and furthermore unsupervised learning is unlikely to focus on the aspects of text relevant for content recommendation.

In this paper we present a method leveraging recurrent neural networks (RNNs) [18] to represent text items for collaborative filtering.
In recent years, RNNs have provided substantial performance gains in a variety of natural language processing applications such as language modeling [19] and machine translation [20]. RNNs have a number of noteworthy characteristics: (1) they are sensitive to word order, (2) they do not require hand-engineered features, (3) it is easy to leverage large unlabeled datasets, by pretraining the RNN parameters with unsupervised language modeling objectives [21], (4) RNN computation can be parallelized on a GPU, and (5) the RNN applies naturally in the cold-start scenario, as a feature extractor, whenever we have text associated with new items.

Due to the extreme data sparsity of content recommendation datasets [22], regularization is also an important consideration. This is particularly important for deep models such as RNNs, since these high-capacity models are prone to overfitting. Existing hybrid methods have used unsupervised learning objectives on text content to regularize the parameters of the recommendation model [4, 23, 24]. However, since we consume the text directly as an input for prediction, we cannot use this approach. Instead, we provide regularization by performing multi-task learning combining collaborative filtering with a simple side task: predicting item metadata such as genres or item tags. Here, the network producing vector representations for items directly from their text content is shared for both tag prediction and recommendation tasks. This allows us to make predictions in cold-start conditions, while providing regularization for the recommendation model.

We evaluate our recurrent neural network approach on the task of scientific paper recommendation using two publicly available datasets, where items are associated with text abstracts [5, 13].
We find that the RNN-based models yield up to 34% relative improvement in Recall@50 for cold-start recommendation over the collaborative topic regression (CTR) approach of Wang and Blei [5] and a word-embedding based model [25], while giving competitive performance for warm-start recommendation. We also note that a simple linear model that represents documents using an average of word embeddings, trained in a completely supervised fashion [25], obtains results competitive with CTR. Finally, we find that multi-task learning improves the performance of all of the models significantly, including the baselines.

2. BACKGROUND AND RELATED WORK

2.1 Problem Formulation and Notation

This paper focuses on the task of recommending items associated with text content. The $j$-th text item is a sequence of $n_j$ word tokens, $X_j = (w_1, w_2, \ldots, w_{n_j})$, where each token is one of $V$ words from a vocabulary. Additionally, the text items may be associated with multiple tags (user or author provided). If item $j \in [N_d]$ has tag $l \in [N_t]$ then we denote it by $t_{jl} = 1$, and $t_{jl} = 0$ otherwise. There are $N_u$ users who have liked/rated/saved some of the text items. The rating provided by user $i$ on item $j$ is denoted by $r_{ij}$. We consider the implicit feedback [26, 27] setting, where we only observe whether a person has viewed or liked an item and do not observe explicit ratings: $r_{ij} = 1$ if user $i$ liked item $j$ and 0 otherwise. Denote the user-item matrix of likes by $R$. Let $R_i^+$ denote the set of all items liked by user $i$ and $R_i^-$ denote the remaining items. The recommendation problem is to find for each user $i$ a personalized ranking of all unrated items, $j \in R_i^-$, given the text of the items $\{X_j\}$, the matrix of users' previous likes $\{R_i\}$ and the tagging information of the items $\{t_{jl}\}$.
The methods we consider will often represent users, items, tags and words by $K$-dimensional vectors $\tilde{u}_i$, $\tilde{v}_j$, $\tilde{t}_l$ and $\tilde{e}_w \in \mathbb{R}^K$, respectively. We will refer to such vectors as embeddings. All vectors are treated as column vectors. $\sigma(\cdot)$ will denote the sigmoid function, $\sigma(x) = \frac{1}{1 + e^{-x}}$.

2.2 Latent Factor Models

Latent factor models [6] for content recommendation learn $K$-dimensional vector embeddings of items and users:

$$\hat{r}_{ij} = b_i + b_j + \tilde{u}_i^T \tilde{v}_j, \tag{1}$$

where $b_i, b_j$ are user- and item-specific biases, $\tilde{u}_i$ is the vector embedding for user $i$ and $\tilde{v}_j$ is the embedding of item $j$.

A simple method for learning the model parameters, $\boldsymbol{\theta} = \{b_i, b_j, \tilde{u}, \tilde{v}\}$, is to specify a cost function and perform stochastic gradient descent. For implicit feedback, an unobserved rating might indicate that either the user does not like the item or the user has never seen the item. In such cases, it is common to use a weighted regularized squared loss [5, 26]:

$$C_R(\boldsymbol{\theta}) = \frac{1}{|R|} \sum_{(i,j) \in R} c_{ij} (\hat{r}_{ij} - r_{ij})^2 + \Omega(\boldsymbol{\theta}) \tag{2}$$

Often, one uses $c_{ij} = a$ for observed items and $c_{ij} = b$ for unobserved items, with $b \ll a$ [5, 13], signifying the uncertainty in the unobserved ratings. $\Omega(\boldsymbol{\theta})$ is a regularization on the parameters; for example, in PMF [7] the embeddings are assigned Gaussian priors, which leads to an $\ell_2$ regularization. Some implicit feedback recommendation systems use a ranking-based loss instead [25, 27]. The methods we propose can be trained with any differentiable cost function. We will use a weighted squared loss in our experiments to be consistent with the baselines [5].

2.3 The Cold Start Problem

In many applications, the factorization (1) is unusable, since it suffers from the cold-start problem [11, 14]: new or unseen items cannot be recommended to users because we do not have an associated embedding.
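To make (1) and (2) concrete, the following is a minimal sketch in plain Python. The function names, the dictionary layout and the toy parameter values are illustrative assumptions and are not taken from the paper; the regularizer $\Omega$ is omitted.

```python
def predict(b_user, b_item, u, v):
    """Equation (1): user bias + item bias + inner product of the embeddings."""
    return b_user + b_item + sum(ui * vi for ui, vi in zip(u, v))


def weighted_squared_loss(ratings, users, items, a=1.0, b=0.01):
    """Data term of equation (2); the regularizer Omega is left out.

    ratings maps (user, item) -> 0 or 1. Observed likes get weight a,
    unobserved entries get the smaller weight b.
    """
    total = 0.0
    for (i, j), r in ratings.items():
        b_i, u_i = users[i]
        b_j, v_j = items[j]
        c_ij = a if r == 1 else b
        total += c_ij * (predict(b_i, b_j, u_i, v_j) - r) ** 2
    return total / len(ratings)


# Hypothetical toy parameters with K = 2: name -> (bias, embedding).
users = {"u0": (0.1, [1.0, 0.0])}
items = {"p0": (0.0, [0.9, 0.2]), "p1": (0.0, [-0.5, 0.3])}
ratings = {("u0", "p0"): 1, ("u0", "p1"): 0}

loss = weighted_squared_loss(ratings, users, items)

# An item that never appeared in training has no learned embedding to score.
has_embedding = "p_new" in items
```

The final check makes the cold-start problem concrete: the factorization has nothing to look up for an item that was absent from the training data.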
This has led to increased interest in hybrid CF methods which can leverage additional information, such as item content, to make cold-start recommendations. In some cases, we may also face a cold-start problem for new users. Though we do not consider this case, the techniques of this paper can be extended naturally to accommodate it whenever we have text content associated with users. We consider:

$$\hat{r}_{ij} = b_i + b_j + \tilde{u}_i^T f(X_j), \tag{3}$$

where $f(\cdot)$ is a vector-valued function of the item's text. For differentiable $f(\cdot)$, (3) can also be trained using (2). Throughout the paper, we will refer to $f(\cdot)$ as an encoder.

Existing hybrid CF methods [11, 16, 28, 29] which use item metadata take this form. In such cases, $f(\cdot)$ is a linear function of manually extracted item features. For example, Agarwal and Chen [16] and Gantner et al. [30] incorporate side information through a linear regression based formulation on metadata like category, user's age, location, etc. Rendle [29] proposed a more general framework for incorporating higher order interactions among features in a factor model. Refer to Shi et al. [28], and the references therein, for a recent review on such hybrid CF methods.

Our experiments compare to collaborative topic regression (CTR) [5], a state-of-the-art technique that simultaneously factorizes the item-word count matrix (through probabilistic topic modeling) and the user-item rating matrix (through a latent factor model). By learning low-dimensional (topical) representations of items, CTR is able to provide recommendations for unseen items.

2.4 Regularization via Multi-task Learning

Typical CF datasets are highly sparse, and thus it is important to leverage all available training signals [22].
In many applications, it is useful to perform multi-task learning [31] that combines CF and auxiliary tasks, where a shared feature representation for items (or users) is used for all tasks. Collective matrix factorization [32] jointly factorizes multiple observation matrices with shared entities for relational learning. Ma et al. [33] seek to predict side information associated with users. Finally, McAuley and Leskovec [4] used topic models and Almahairi et al. [24] used language models on review text.

In many applications, text items are associated with tags, including research papers with keywords, news articles with user or editor provided labels, social media posts with hashtags, movies with genres, etc. These can be used as features $X_j$ in (3) [16, 29]. However, there are considerable drawbacks to this approach. First, tags are often assigned by users, which may lead to a cold-start problem [34], since new items have no annotation. Moreover, tags can be noisy, especially if they are user-assigned, or too general [3].

While tag annotation may be unreliable and incomplete as input features, encouraging items' representations to be predictive of these tags can yield useful regularization for the CF problem. Besides providing regularization, this multi-task learning approach is especially useful in cold-start scenarios, since the tags are only used at train time and hence need not be available at test time. In Section 3.3 we employ this approach.

2.5 Deep Learning

In our work, we represent the item-to-embedding mapping $f(\cdot)$ using a deep neural network. See [35] for a comprehensive overview of deep learning methods. We provide here a brief review of deep learning for recommendation systems.

Neural networks have received limited attention from the recommendation systems community.
[36] used restricted Boltzmann machines as one of the component models to tackle the Netflix challenge. Recently, [37, 38] proposed denoising auto-encoder based models for collaborative filtering which are trained to denoise corrupted versions of entire sparse vectors of user-item likes or item-user likes (i.e. rows or columns of the $R$ matrix). However, these models are unable to handle the cold-start problem. Wang et al. [13] address this by incorporating a bag-of-words autoencoder in the model within a Bayesian framework. Elkahky et al. [39] proposed to use neural networks on manually extracted user and item feature representations for content-based multi-domain recommendation. Dziugaite and Roy [40] proposed to use a neural network to learn the similarity function between user and item latent factors. Van den Oord et al. [41] and Wang and Wang [42] developed music recommender systems which use features extracted from the music audio using convolutional neural networks (CNNs) or deep belief networks. However, these methods process the user-item rating matrix in isolation from the content information and thus are unable to exploit the direct interaction between item content and ratings [13]. Weston et al. [43] proposed a CNN-based model to predict hashtags on social media posts and found the learned representations to also be useful for document recommendation. Recently, He and McAuley [44] used image features from a separately trained CNN to improve product recommendation and tackle cold-start. Almahairi et al. [24] used neural network based language models [19, 45] on review text to regularize the latent factors for product recommendation, as opposed to using topic models, as in McAuley and Leskovec [4]. They found that RNN-based language models perform poorly as regularizers and that word embedding models (Mikolov et al. [45]) perform better.

3. DEEP TEXT REPRESENTATION FOR COLLABORATIVE FILTERING

This section presents neural network-based encoders for explicitly mapping an item's text content to a vector of latent factors. This allows us to perform cold-start prediction on new items. In addition, since the vector representations for items are tied together by a shared parametric model, we may be able to generalize better from limited data.

As is standard in deep learning approaches to NLP, our encoders first map input text $X_j = (w_1, w_2, \ldots, w_{n_j})$ to a sequence of $K_w$-dimensional embeddings [45], $(e_1, e_2, \ldots, e_{n_j})$, using a lookup table with one vector for every word in our vocabulary. Then, we define a transformation that collapses the sequence of embeddings to a single vector, $g(X_j)$.

In all of our models, we maintain a separate item-specific embedding $\tilde{v}_j$, which helps capture user behavior that cannot be modeled by content alone [5]. Thus, we set the final document representation as:

$$f(X_j) = g(X_j) + \tilde{v}_j \tag{4}$$

For cold-start prediction, there is no training data to estimate the item-specific embedding and we set $\tilde{v}_j = 0$ [5, 13].

3.1 Order-Insensitive Encoders

A simple order-insensitive encoder of the document text can be obtained by averaging word embeddings:

$$g(X_j) = \frac{1}{|X_j|} \sum_{w \in X_j} e_w. \tag{5}$$

This corresponds exactly to a linear model on a bag-of-words representation for the document. However, using the representation (5) is useful because the word embeddings can be pre-trained, in an unsupervised manner, on a large corpus [46]. Note that (5) is similar to the embedding-based model used in Weston et al. [43] for hashtag prediction.

Note that CTR [5], described in Section 2.3, also operates on bag-of-words sufficient statistics.
Here, CTR does not have an explicit parametric encoder $g(\cdot)$ from text to a vector, but instead defines an implicit mapping via the process of doing posterior inference in the probabilistic topic model.

3.2 Order-Sensitive Encoders

Bag-of-words models are limited in their capacity, as they cannot distinguish between sentences that have similar unigram statistics but completely different meanings [17]. As a toy example, consider the research paper abstracts: "This paper is about deep learning, not LDA" and "This paper is about LDA, not deep learning". They have the same unigram statistics but would be of interest to different sets of users. A more powerful model that can exploit the additional information inherent in word order would be expected to recognize this and thus perform better recommendation.

In response, we parametrize $g(\cdot)$ as a recurrent neural network (RNN). It reads the text one word at a time and produces a single vector representation. RNNs can provide impressive compression of the salient properties of text. For example, accurate translation of an English sentence can be performed by conditioning on a single vector encoding [47].

The extracted item representation is combined with a user embedding, as in (3), to get the predicted rating for a user-item pair. The model can then be trained for recommendation in a completely supervised manner, using a differentiable cost function such as (2). Note that a key difference between this approach and the existing approaches which use item content [5, 13], apart from sensitivity to word order, is that we do not define an unsupervised objective (like the likelihood of observing bag-of-words under a topic model) for extracting a text representation.
However, our model can benefit from unsupervised data through pre-training of word embeddings [45] or pre-training of RNN parameters using language models [21] (our experiments use embeddings).

3.2.1 Gated Recurrent Units (GRUs)

Traditional RNN architectures suffer from the problem of vanishing and exploding gradients [48], rendering optimization difficult and prohibiting them from learning long-term dependencies. There have been several modifications to the RNN proposed to remedy this problem, of which the most popular are long short-term memory units (LSTMs) [49] and the more recent gated recurrent units (GRUs) [20]. We use GRUs, which are simpler than LSTMs, have fewer parameters, and give competitive performance to LSTMs [50, 51].

Figure 1: Proposed architecture for text item recommendation. Rectangular boxes represent embeddings. Two layers of RNN with GRU are used, where the first layer is a bi-directional RNN. The output of all the hidden units at the second layer is pooled to produce a text encoding which is combined with an item-specific embedding to produce the final representation $f(X)$. Users and tags are also represented by embeddings, which are combined with the item representation to do tag prediction and recommendation.

The GRU hidden vector output at step $t$, $h_t$, for the input sequence $X_j = (w_1, \ldots, w_t, \ldots, w_{n_j})$ is given by:

$$\begin{bmatrix} f_t \\ o_t \end{bmatrix} = \sigma\left(\theta_1 \begin{bmatrix} \tilde{e}_{w_t} \\ h_{t-1} \end{bmatrix} + b\right) \tag{6}$$

$$c_t = \tanh\left(\theta_{2w} \tilde{e}_{w_t} + f_t \odot \theta_{2h} h_{t-1} + b_c\right) \tag{7}$$

$$h_t = (1 - o_t) \odot h_{t-1} + o_t \odot c_t \tag{8}$$

where $\theta_1 \in \mathbb{R}^{2K_h \times (K_w + K_h)}$, $\theta_{2w} \in \mathbb{R}^{K_h \times K_w}$, $\theta_{2h} \in \mathbb{R}^{K_h \times K_h}$ and $b, b_c \in \mathbb{R}^{K_h}$ are parameters of the GRU, with $K_w$ the dimension of input word embeddings and $K_h$ the number of hidden units in the RNN. $\odot$ denotes element-wise product. Intuitively, $f_t$ (6) acts as a 'forget' (or 'reset') gate that decides what parts of the previous hidden state to consider or ignore at the current step, $c_t$ (7) computes a candidate state for the current time step using the parts of the previous hidden state as dictated by $f_t$, and $o_t$ (6) acts as the output (or update) gate which decides what parts of the previous memory to change to the new candidate memory (8). All forget and update operations are differentiable to allow learning through backpropagation.

The final architecture, shown in Figure 1, consists of two stacked layers of RNN with GRU hidden units. We use a bi-directional RNN [52] at the first layer and feed the concatenation of the forward and backward hidden states as the input to the second layer. The output of the hidden states of the second layer is pooled to obtain the item content representation $g(X_j)$. In our experiments, mean pooling performs best. Models that use the final RNN state take much longer to optimize. Following (4), the final item representation is obtained by combining the RNN representation with an item-specific embedding $\tilde{v}_j$. We now describe the multi-task learning setup.

3.3 Multi-Task Learning

The encoder $f(\cdot)$ can be used as a generic feature extractor for items. Therefore, we can employ the multi-task learning approach of Section 2.4.
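Before turning to the tag objective, the encoders of Sections 3.1 and 3.2.1 can be made concrete with a small sketch. It is a deliberately simplified illustration, not the architecture of Figure 1: it uses a single unidirectional GRU layer with mean pooling, plain Python lists, randomly initialized (untrained) parameters, and helper names that are all hypothetical. The bias `b` is given $2K_h$ entries here so that it can be added to the stacked gates of (6).

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def matvec(M, x):
    return [sum(m * xi for m, xi in zip(row, x)) for row in M]


def rand_matrix(rows, cols, rng):
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


def gru_step(params, e, h_prev):
    """One application of equations (6)-(8) to embedding e and state h_prev."""
    theta1, theta2w, theta2h, b, b_c = params
    K_h = len(h_prev)
    # (6): both gates come from one affine map of the stacked [e; h_prev].
    gates = [sigmoid(z + bi) for z, bi in zip(matvec(theta1, e + h_prev), b)]
    f, o = gates[:K_h], gates[K_h:]
    wx, hh = matvec(theta2w, e), matvec(theta2h, h_prev)
    # (7): candidate state, with the forget gate applied element-wise.
    c = [math.tanh(wx[k] + f[k] * hh[k] + b_c[k]) for k in range(K_h)]
    # (8): interpolate between the previous state and the candidate.
    return [(1.0 - o[k]) * h_prev[k] + o[k] * c[k] for k in range(K_h)]


def gru_encode(params, embeddings, K_h):
    """Run the GRU left to right and mean-pool all hidden states."""
    h, states = [0.0] * K_h, []
    for e in embeddings:
        h = gru_step(params, e, h)
        states.append(h)
    return [sum(s[k] for s in states) / len(states) for k in range(K_h)]


def mean_encode(embeddings):
    """Order-insensitive encoder of equation (5)."""
    n = len(embeddings)
    return [sum(e[k] for e in embeddings) / n for k in range(len(embeddings[0]))]


def max_gap(x, y):
    return max(abs(a - b) for a, b in zip(x, y))


rng = random.Random(0)
K_w, K_h = 4, 3
doc_a = "this paper is about deep learning , not lda".split()
doc_b = "this paper is about lda , not deep learning".split()
emb = {w: [rng.uniform(-1, 1) for _ in range(K_w)] for w in sorted(set(doc_a))}
params = (rand_matrix(2 * K_h, K_w + K_h, rng), rand_matrix(K_h, K_w, rng),
          rand_matrix(K_h, K_h, rng), [0.0] * (2 * K_h), [0.0] * K_h)

seq_a, seq_b = [emb[w] for w in doc_a], [emb[w] for w in doc_b]
bow_gap = max_gap(mean_encode(seq_a), mean_encode(seq_b))
gru_gap = max_gap(gru_encode(params, seq_a, K_h), gru_encode(params, seq_b, K_h))
```

On the toy abstracts of Section 3.2, `bow_gap` is zero up to floating-point rounding because the two documents contain the same words, whereas `gru_gap` is not, since the hidden states depend on the order in which the words are read.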
The tags associated with papers can be considered as a (coarse) summary or topics of the items, and thus forcing the encoder to be predictive of the tags will provide a useful inductive bias. Consider again the toy example of Figure 1. Observing the tag "RNN" but not "LDA" on the paper, even though the term LDA is present in the text, will force the network to pay attention to the sequence of words "not LDA" in order to explain the tags.

We define the probability of observing tag $l$ on item $j$ as $P(t_{jl} = 1) = p_{jl} = \sigma(f(X_j)^T \tilde{t}_l)$, where $\tilde{t}_l$ is an embedding for tag $l$. The cost for predicting the tags is taken as the sum of the weighted binary log likelihood of each tag:

$$C_T(\boldsymbol{\theta}) = \frac{1}{|T|} \sum_j \sum_l \left\{ t_{jl} \log p_{jl} + c'_{jl} (1 - t_{jl}) \log(1 - p_{jl}) \right\}$$

where $c'_{jl}$ down-weights the cost for predicting the unobserved tags. The final cost is $C(\boldsymbol{\theta}) = \lambda C_R(\boldsymbol{\theta}) + (1 - \lambda) C_T(\boldsymbol{\theta})$, with $C_R$ defined in (2) and $\lambda$ a hyperparameter.

It is worth noting the differences between our approach and Almahairi et al. [24], who use language modeling on the text as an unsupervised multi-task objective with the item latent factors as the shared parameters. Almahairi et al. [24] found that the increased flexibility offered by the RNN makes it too strong a regularizer, leading to worse performance than simpler bag-of-words models. In contrast, our RNN is trained fully supervised, which forces the item representations to be discriminative for recommendation and tag prediction. Furthermore, by using the text as an input to $g(\cdot)$ at test time, rather than just for train-time regularization, we can alleviate the cold-start problem.

4. EXPERIMENTS

4.1 Experimental Setup

Table 1: % Recall@50 for all the methods (higher is better).

| Model | Citeulike-a Warm Start | Citeulike-a Cold Start | Citeulike-a Tag Prediction | Citeulike-t Warm Start | Citeulike-t Cold Start | Citeulike-t Tag Prediction |
|---|---|---|---|---|---|---|
| GRU-MTL | 38.33 | 49.76 | 60.52 | 45.60 | 51.22 | 62.32 |
| GRU | 36.87 | 46.16 | - | 42.59 | 47.59 | - |
| CTR-MTL | 35.51 | 39.87 | 48.95 | 46.82 | 34.98 | 46.66 |
| CTR | 31.10 | 39.00 | - | 40.44 | 33.74 | - |
| Embed-MTL | 36.64 | 41.71 | 60.36 | 43.02 | 38.16 | 62.29 |
| Embed | 33.95 | 38.53 | - | 37.98 | 35.85 | - |

Figure 2: Saliency of each word in the abstract of the EM paper [53]. Size and color of the words indicate their leverage on the final rating. The model learns that chunks of word phrases are important, such as "maximum likelihood" and "iteratively reweighted least squares", and ignores punctuation and stop words.

Datasets: We use two datasets made available by Wang et al. [13] from CiteULike (footnote 1). CiteULike is an online platform which allows registered users to create personal libraries by saving papers which are of interest to them. The datasets consist of the papers in the users' libraries (which are treated as 'likes'), user-provided tags on the papers, and the title and abstract of the papers. Similar to Wang and Blei [5], we remove users with fewer than 5 ratings (since they cannot be evaluated properly) and remove tags that occur on fewer than 10 articles. Citeulike-a [5] consists of 5551 users, 16980 papers and 3629 tags with a total of 204,987 user-item likes.
Citeulike-t [5] consists of 5219 users, 25975 papers and 4222 tags with a total of 134,860 user-item likes. Note that Citeulike-t is much more sparse (99.90%) than Citeulike-a (99.78%).

Evaluation Methodology: Following Wang and Blei [5], we test the models on held-out user-article likes under both warm-start and cold-start scenarios.

Warm-Start: This is the case of in-matrix prediction, where every test item had at least one like in the training data. For each user we do a 5-fold split of papers from their like history. Papers with fewer than 5 likes are always kept in the training data, since they cannot be evaluated properly. After learning, we predict ratings across all active test set items and for each user filter out the items in their training set from the ranked list.

Cold-Start: This is the task of predicting user interest in a new paper with no existing likes, based on the text content of the paper. The set of all papers is split into 5 folds. Again, papers with fewer than 5 likes are always kept in the training set. For each fold, we remove all likes on the papers in that fold, forming the test set, and keep the other folds as the training set. We fit the models on the training set items for each fold and form a predictive per-user ranking of items in the test set.

Footnote 1: http://www.citeulike.org/

Evaluation Metric: Accuracy of recommendation from implicit feedback is often measured by recall. Precision is not reasonable since the zero ratings may mean that a user either does not like the article or does not know of it. Thus, we use Recall@M [5] and average the per-user metric:

$$\text{Recall@}M = \frac{\text{number of articles user liked in top } M}{\text{total number of articles user liked}}$$

4.1.1 Methods

We compare the proposed methods with CTR, which models item content using topic modeling. The approach put forth by CTR [5] cannot perform tag prediction and thus, for a fair comparison, we modify CTR to do tag prediction.
This can be viewed as a probabilistic version of collective matrix factorization [32]. Deriving an alternating least squares inference algorithm along the lines of [5] is not possible for a sigmoid loss. Thus, for CTR, we formulate tag prediction using a weighted squared loss instead. Learning this model is a straightforward extension of CTR: rather than performing alternating updates on two blocks of parameters, we rotate among three. We call this CTR-MTL. The word embedding-based model with order-insensitive document encoder (Section 3.1) is Embed, and the RNN-based model (Section 3.2) is GRU. The corresponding models trained with multi-task learning are Embed-MTL and GRU-MTL.

Figure 3: Recall@M for the models trained with multi-task learning. The x-axis is the value of $M \in [100]$. Panels: "ca: warm-start", "ca: cold-start", "ct: warm-start", "ct: cold-start"; each panel compares CTR-MTL, GRU-MTL and EMBED-MTL.

4.1.2 Implementation Details

For CTR, we follow Wang and Blei [5] for setting hyperparameters. We use latent factor dimension $K = 200$, regularization parameters $\lambda_u = 0.01$, $\lambda_v = 100$ and cost weights $a = 1$, $b = 0.01$. The same parameters gave good results for CTR-MTL. CTR and CTR-MTL are trained using the EM algorithm, which updates the latent factors using alternating least squares on full data [5, 26]. CTR is sensitive to good pre-processing of the text, which is common in topic modeling [5]. We use the provided pre-processed text for CTR, which was obtained by removing stop-words and choosing top words based on tf-idf. We initialized CTR with the output of a topic model trained only on the text. We used the CTR code provided by the authors.
For the Embed and GRU models, we used word embeddings of dimension $K_w = K = 200$, in order to be consistent with CTR. For GRU models, the first layer of the RNN has hidden state dimension $K_{h1} = 400$ and the second layer (the output layer) has hidden state dimension $K_{h2} = 200$. We pre-trained the word embeddings using CBOW [45] on a corpus of 440,756 ACM abstracts (including the Citeulike abstracts). Dropout is used at every layer of the network. The probabilities of dropping a dimension are 0.1, 0.5 and 0.3 at the embedding layer, the output of the first layer and the output of the second layer, respectively. We also regularize the user embeddings with weight 0.01. We do very mild preprocessing of the text. We replace numbers with a `<NUM>` token and all words which have a total frequency of less than 5 by `<UNK>`. Note that we don't remove stop words or frequent words. This leaves a vocabulary of 21,129 words for Citeulike-a and 24,697 words for Citeulike-t.

The models are optimized via stochastic gradient descent, where each mini-batch randomly samples a subset of $B$ users and for each user we sample one positive and one negative example. We set the weights $c_{ij}$ in (2) to $c_{ij} = 1 + \alpha \log(1 + |R_i| / \epsilon)$, where $|R_i|$ is the number of items liked by user $i$, with $\alpha = 10$, $\epsilon = 1\mathrm{e}{-8}$. Unlike Wang and Blei [5], we do not weight the cost function differently for positive and negative samples. Since the total number of negative examples is much larger than the number of positive examples for each user, stochastically sampling only one negative per positive example implicitly down-weights the negatives. We used a mini-batch size of $B = 512$ users and used Adam [54] for optimization. We run the models for a maximum of 20k mini-batch updates and use early stopping based on recall on a validation set from the training examples.
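The sampling scheme above, together with the multi-task cost of Section 3.3, can be sketched as follows. This is an illustration under stated assumptions rather than the training code used in the experiments: all names are hypothetical, the biases and the weights $c_{ij}$ are omitted from the rating term, the tag term is written as a negative log-likelihood so that lower is better, $|T|$ is assumed to be the number of (item, tag) pairs, and the value of `c_neg` is made up.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def dot(x, y):
    return sum(a * b for a, b in zip(x, y))


def sample_minibatch(likes, all_items, batch_size, rng):
    """Sample users, then one liked and one non-liked item for each of them."""
    batch = []
    for i in rng.sample(sorted(likes), batch_size):
        pos = rng.choice(sorted(likes[i]))
        neg = rng.choice(sorted(all_items - likes[i]))
        batch += [(i, pos, 1.0), (i, neg, 0.0)]
    return batch


def rating_cost(batch, user_emb, item_rep):
    """Mean squared error on the sampled pairs (biases and c_ij omitted)."""
    errors = [(dot(user_emb[i], item_rep[j]) - r) ** 2 for i, j, r in batch]
    return sum(errors) / len(errors)


def tag_cost(item_rep, tag_emb, item_tags, c_neg=0.1):
    """Negative weighted binary log-likelihood of the tags.

    c_neg plays the role of c'_jl and down-weights unobserved tags.
    """
    total, count = 0.0, 0
    for j, f_j in item_rep.items():
        for l, t_l in tag_emb.items():
            p = sigmoid(dot(f_j, t_l))
            if l in item_tags[j]:
                total -= math.log(p)
            else:
                total -= c_neg * math.log(1.0 - p)
            count += 1
    return total / count


def multitask_cost(c_r, c_t, lam=0.5):
    """C = lambda * C_R + (1 - lambda) * C_T."""
    return lam * c_r + (1.0 - lam) * c_t


def rand_vec(rng, k=3):
    return [rng.uniform(-1, 1) for _ in range(k)]


rng = random.Random(0)
likes = {"u0": {"p0", "p1"}, "u1": {"p2"}, "u2": {"p0", "p3"}}
all_items = {"p0", "p1", "p2", "p3"}
item_tags = {"p0": {"rnn"}, "p1": set(), "p2": {"lda"}, "p3": {"rnn", "lda"}}

user_emb = {u: rand_vec(rng) for u in sorted(likes)}
item_rep = {j: rand_vec(rng) for j in sorted(all_items)}  # stands in for f(X_j)
tag_emb = {t: rand_vec(rng) for t in ("lda", "rnn")}

batch = sample_minibatch(likes, all_items, batch_size=2, rng=rng)
total = multitask_cost(rating_cost(batch, user_emb, item_rep),
                       tag_cost(item_rep, tag_emb, item_tags))
```

Because every sampled user contributes exactly one positive and one negative pair, positives and negatives are balanced within a batch even though negatives vastly outnumber positives in the full matrix.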
4.2 Quantitative Results

Table 1 summarizes Recall@50 for all the models, on the two CiteULike datasets, for both warm-start and cold-start. Figure 3 further shows the variation of Recall@M for different values of M for the multi-task learning models.

Cold-Start: Recall that for cold-start recommendation, the item-specific embeddings $\tilde{v}_j$ in (4) are identically equal to zero, and thus the items' representations depend solely on their text content. We first note the performance of the models without multi-task learning. The GRU model is better than the best score of either the CTR model or the Embed model by 18.36% (relative improvement) on CiteULike-a and by 32.74% on CiteULike-t. This significant gain demonstrates that the GRU model is much better at representing the content of the items. Improvements are higher on the CiteULike-t dataset because it is much more sparse, and so models which can utilize content appropriately give better recommendations. The CTR and Embed models perform competitively with each other.

Next, observe that multi-task learning uniformly improves performance for all models. The GRU model's recall improves by 7.8% on CiteULike-a and by 7.6% on CiteULike-t. This leads to an overall improvement of 19.30% on CiteULike-a and 34.22% on CiteULike-t over the best of the baselines. Comparatively, the improvement for CTR is smaller. This is expected since the Bayesian topic model provides strong regularization for the model parameters. In contrast, the Embed models benefit considerably from MTL (up to 8.2%). This is expected since, unlike CTR, all the $K_w \times V$ parameters in the Embed model are free parameters which are trained directly for recommendation, and thus MTL provides necessary regularization.
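The figures in this section are recall values and relative improvements. As a reading aid, the sketch below computes both, assuming the usual per-user definition of Recall@M: the fraction of a user's held-out liked items that appear among the top M recommendations, averaged over users. The function names and the data layout are hypothetical and chosen only for this example.

```python
def recall_at_m(ranked_items, liked_items, m):
    """Fraction of a user's held-out liked items found in the top m."""
    if not liked_items:
        raise ValueError("recall is undefined for a user with no liked items")
    top = set(ranked_items[:m])
    return len(top & set(liked_items)) / len(liked_items)


def mean_recall_at_m(rankings, liked, m):
    """Average per-user recall@m over users with at least one liked item.

    rankings: dict user -> list of item ids, best first (hypothetical layout).
    liked:    dict user -> collection of held-out liked item ids.
    """
    users = [u for u in rankings if liked.get(u)]
    return sum(recall_at_m(rankings[u], liked[u], m) for u in users) / len(users)


def relative_improvement(new, baseline):
    """Relative gain of `new` over `baseline`, in percent."""
    return 100.0 * (new - baseline) / baseline
```

With made-up scores, `relative_improvement(0.75, 0.5)` evaluates to `50.0`: a relative improvement compares the gain against the baseline score, not against the full 0 to 1 recall range.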
Warm-Start: Collaborative filtering methods based on matrix factorization [6] often perform as well as hybrid methods in the warm-start scenario, due to the flexibility of the item-specific embeddings $\tilde{v}_j$ in (4) [5, 42]. Consider again the models trained without MTL. The GRU model performs better than either the CTR or the Embed model, with a relative improvement of 8.5% on CiteULike-a and 5.3% on CiteULike-t over the best of the two models. Multi-task learning again improves performance for all the models. Improvements are particularly significant for CTR-MTL over CTR (up to 15.8%). Since the tags associated with test items were observed during training, they provide a strong inductive bias leading to improved performance. Interestingly, the GRU-MTL model performs slightly better than the CTR-MTL model on one dataset and slightly worse on the other. The first and third plots in Figure 3 demonstrate that GRU-MTL performs slightly better than CTR-MTL for smaller M, i.e. more relevant articles are ranked toward the top. To quantify this, we evaluate average reciprocal Hit-Rank@10 [3]. Given a list of $M$ ranked articles for user $i$, let $c_1, c_2, \ldots, c_h$ denote the ranks of the $h$ articles in $[M]$ which the user actually liked. HR is then defined as $\sum_{t=1}^{h} \frac{1}{c_t}$ and tests whether top ranked articles are correct. GRU-MTL gives an HR@10 of 0.098 and CTR-MTL gives an HR@10 of 0.077, which confirms that the top of the list for GRU-MTL contains more relevant recommendations.

Tag Prediction: Although the focus of the models is recommendation, we evaluate the performance of the multi-task models on tag prediction. We again use Recall@50 (defined per article) and evaluate in the cold-start scenario, where there are no tags present for the test article. The GRU and Embed models perform similarly.
CTR-MTL is significantly worse, which could be due to our use of the squared loss for training or because hyperparameters were selected for recommendation performance, not tag prediction.

4.3 Interpreting Prediction Decisions

We employ a simple, easy-to-implement tool for analyzing RNN predictions, based on Denil et al. [55] and Li et al. [56]. We produce a heatmap where every input word is associated with its leverage on the output prediction. Suppose that we recommended item $j$ to user $i$. In other words, suppose that $\hat{r}_{ij}$ is large. Let $E_j = (e_{j,1}, e_{j,2}, \ldots, e_{j,n_j})$ be the sequence of word embeddings for item $j$. Since $f(\cdot)$ is encoded as a neural network, $\frac{d\hat{r}_{ij}}{de_{j,t}}$ can be obtained by backpropagation. To produce the heatmap's value for word $t$, we convert $\frac{d\hat{r}_{ij}}{de_{j,t}}$ into a scalar. A sensitivity with respect to the word itself cannot be obtained by backpropagation, as $\frac{d\hat{r}_{ij}}{dx_{j,t}}$ is not well-defined, since $x_{j,t}$ is a discrete index. Instead we compute $\left\| \frac{d\hat{r}_{ij}}{de_{j,t}} \right\|$. An application is in Figure 2.

5. CONCLUSION & FUTURE WORK

We employ deep recurrent neural networks to provide vector representations for the text content associated with items in collaborative filtering. This generic text-to-vector mapping is useful because it can be trained directly with gradient descent and provides opportunities to perform multi-task learning. For scientific paper recommendation, the RNN and multi-task learning provide complementary performance improvements. We encourage further use of the technique in a variety of application domains. In future work, we would like to apply deep architectures to users' data and to explore additional objectives for multi-task learning that employ multiple modalities of inputs, such as movies' images and text descriptions.

6.
ACKNOWLEDGMENT

This work was supported in part by the Center for Intelligent Information Retrieval, in part by The Allen Institute for Artificial Intelligence, in part by NSF grant #CNS-0958392, in part by the National Science Foundation (NSF) grant number DMR-1534431, and in part by DARPA under agreement number FA8750-13-2-0020. The U.S. Government is authorized to reproduce and distribute reprints for Governmental purposes notwithstanding any copyright notation thereon. Any opinions, findings and conclusions or recommendations expressed in this material are those of the authors and do not necessarily reflect those of the sponsor.

References

[1] Ido Guy, Naama Zwerdling, Inbal Ronen, David Carmel, and Erel Uziel. Social media recommendation based on people and tags. In SIGIR, 2010.

[2] Owen Phelan, Kevin McCarthy, and Barry Smyth. Using twitter to recommend real-time topical news. In RecSys, 2009.

[3] Trapit Bansal, Mrinal Das, and Chiranjib Bhattacharyya. Content driven user profiling for comment-worthy recommendations of news and blog articles. In RecSys, 2015.

[4] Julian McAuley and Jure Leskovec. Hidden factors and hidden topics: understanding rating dimensions with review text. In RecSys, 2013.

[5] Chong Wang and David M Blei. Collaborative topic modeling for recommending scientific articles. In SIGKDD, 2011.

[6] Yehuda Koren, Robert Bell, and Chris Volinsky. Matrix factorization techniques for recommender systems. Computer, (8):30–37, 2009.

[7] Andriy Mnih and Ruslan Salakhutdinov. Probabilistic matrix factorization. In NIPS, 2007.

[8] Marko Balabanović and Yoav Shoham. Fab: content-based, collaborative recommendation. Communications of the ACM, 40(3):66–72, 1997.

[9] Raymond J Mooney and Loriene Roy. Content-based book recommending using learning for text categorization. In ACM conference on Digital libraries, 2000.
[10] Chumki Basu, Haym Hirsh, William Cohen, et al. Recommendation as classification: Using social and content-based information in recommendation. In AAAI, 1998.

[11] Andrew I Schein, Alexandrin Popescul, Lyle H Ungar, and David M Pennock. Methods and metrics for cold-start recommendations. In SIGIR, 2002.

[12] Justin Basilico and Thomas Hofmann. Unifying collaborative and content-based filtering. In ICML, 2004.

[13] Hao Wang, Naiyan Wang, and Dit-Yan Yeung. Collaborative deep learning for recommender systems. In SIGKDD, 2015.

[14] Prem Melville, Raymond J Mooney, and Ramadass Nagarajan. Content-boosted collaborative filtering for improved recommendations. In AAAI, 2002.

[15] Prem K Gopalan, Laurent Charlin, and David Blei. Content-based recommendations with poisson factorization. In NIPS, 2014.

[16] Deepak Agarwal and Bee-Chung Chen. Regression-based latent factor models. In SIGKDD, 2009.

[17] Hanna M Wallach. Topic modeling: beyond bag-of-words. In ICML, 2006.

[18] Paul J Werbos. Backpropagation through time: what it does and how to do it. Proceedings of the IEEE, 78(10):1550–1560, 1990.

[19] Tomas Mikolov, Martin Karafiát, Lukas Burget, Jan Černocký, and Sanjeev Khudanpur. Recurrent neural network based language model. INTERSPEECH, 2010.

[20] Kyunghyun Cho, Bart van Merrienboer, Caglar Gulcehre, Fethi Bougares, Holger Schwenk, and Yoshua Bengio. Learning phrase representations using rnn encoder-decoder for statistical machine translation. In EMNLP, 2014.

[21] Andrew M Dai and Quoc V Le. Semi-supervised sequence learning. In NIPS, pages 3061–3069, 2015.

[22] Robert M Bell and Yehuda Koren. Lessons from the netflix prize challenge. SIGKDD Explorations Newsletter, 9(2):75–79, 2007.

[23] Guang Ling, Michael R Lyu, and Irwin King. Ratings meet reviews, a combined approach to recommend. In RecSys, 2014.

[24] Amjad Almahairi, Kyle Kastner, Kyunghyun Cho, and Aaron Courville. Learning distributed representations from reviews for collaborative filtering. In RecSys, 2015.

[25] Jason Weston, Samy Bengio, and Nicolas Usunier. Wsabie: Scaling up to large vocabulary image annotation. In IJCAI, 2011.

[26] Yifan Hu, Yehuda Koren, and Chris Volinsky. Collaborative filtering for implicit feedback datasets. In ICDM, 2008.

[27] Steffen Rendle, Christoph Freudenthaler, Zeno Gantner, and Lars Schmidt-Thieme. Bpr: Bayesian personalized ranking from implicit feedback. In UAI, 2009.

[28] Yue Shi, Martha Larson, and Alan Hanjalic. Collaborative filtering beyond the user-item matrix: A survey of the state of the art and future challenges. ACM Computing Surveys, 47(1):3, 2014.

[29] Steffen Rendle. Factorization machines. In ICDM, 2010.

[30] Zeno Gantner, Lucas Drumond, Christoph Freudenthaler, Steffen Rendle, and Lars Schmidt-Thieme. Learning attribute-to-feature mappings for cold-start recommendations. In ICDM, 2010.

[31] Rich Caruana. Multitask learning. Machine learning, 28(1):41–75, 1997.

[32] Ajit P Singh and Geoffrey J Gordon. Relational learning via collective matrix factorization. In SIGKDD, 2008.

[33] Hao Ma, Haixuan Yang, Michael R Lyu, and Irwin King. Sorec: social recommendation using probabilistic matrix factorization. In CIKM, 2008.

[34] Ralf Krestel, Peter Fankhauser, and Wolfgang Nejdl. Latent dirichlet allocation for tag recommendation. In RecSys, 2009.

[35] Ian Goodfellow, Yoshua Bengio, and Aaron Courville. Deep learning. Book in prep. for MIT Press, 2016.

[36] Ruslan Salakhutdinov, Andriy Mnih, and Geoffrey Hinton. Restricted boltzmann machines for collaborative filtering. In ICML, 2007.

[37] Suvash Sedhain, Aditya Krishna Menon, Scott Sanner, and Lexing Xie. Autorec: Autoencoders meet collaborative filtering. In WWW, 2015.

[38] Yao Wu, Christopher DuBois, Alice X. Zheng, and Martin Ester. Collaborative denoising auto-encoders for top-n recommender systems. In WSDM, 2016.

[39] Ali Mamdouh Elkahky, Yang Song, and Xiaodong He. A multi-view deep learning approach for cross domain user modeling in recommendation systems. In WWW, 2015.

[40] Gintare Karolina Dziugaite and Daniel M Roy. Neural network matrix factorization. arXiv preprint arXiv:1511.06443, 2015.

[41] Aaron Van den Oord, Sander Dieleman, and Benjamin Schrauwen. Deep content-based music recommendation. In NIPS, 2013.

[42] Xinxi Wang and Ye Wang. Improving content-based and hybrid music recommendation using deep learning. In International Conference on Multimedia, 2014.

[43] Jason Weston, Sumit Chopra, and Keith Adams. #tagspace: Semantic embeddings from hashtags. 2014.

[44] R. He and J. McAuley. VBPR: visual bayesian personalized ranking from implicit feedback. In AAAI, 2016.

[45] Tomas Mikolov, Ilya Sutskever, Kai Chen, Greg S Corrado, and Jeff Dean. Distributed representations of words and phrases and their compositionality. In NIPS, pages 3111–3119, 2013.

[46] Ronan Collobert, Jason Weston, Léon Bottou, Michael Karlen, Koray Kavukcuoglu, and Pavel Kuksa. Natural language processing (almost) from scratch. JMLR, 12:2493–2537, 2011.

[47] Ilya Sutskever, Oriol Vinyals, and Quoc V Le. Sequence to sequence learning with neural networks. In NIPS, pages 3104–3112, 2014.

[48] Yoshua Bengio, Patrice Simard, and Paolo Frasconi. Learning long-term dependencies with gradient descent is difficult. Neural Networks, 5(2):157–166, 1994.

[49] Sepp Hochreiter and Jürgen Schmidhuber. Long short-term memory. Neural computation, 9(8):1735–1780, 1997.

[50] Junyoung Chung, Caglar Gulcehre, KyungHyun Cho, and Yoshua Bengio. Empirical evaluation of gated recurrent neural networks on sequence modeling. arXiv preprint arXiv:1412.3555, 2014.

[51] Rafal Jozefowicz, Wojciech Zaremba, and Ilya Sutskever. An empirical exploration of recurrent network architectures. In ICML, 2015.

[52] Mike Schuster and Kuldip K Paliwal. Bidirectional recurrent neural networks. Signal Processing, 45(11):2673–2681, 1997.

[53] Arthur P Dempster, Nan M Laird, and Donald B Rubin. Maximum likelihood from incomplete data via the em algorithm. Journal of the royal statistical society, pages 1–38, 1977.

[54] Diederik Kingma and Jimmy Ba. Adam: A method for stochastic optimization. arXiv preprint arXiv:1412.6980, 2014.

[55] Misha Denil, Alban Demiraj, and Nando de Freitas. Extraction of salient sentences from labelled documents. arXiv preprint arXiv:1412.6815, 2014.

[56] Jiwei Li, Xinlei Chen, Eduard Hovy, and Dan Jurafsky. Visualizing and understanding neural models in nlp. 2016.
