Conditional Generation and Snapshot Learning in Neural Dialogue Systems

Authors: Tsung-Hsien Wen, Milica Gasic, Nikola Mrksic

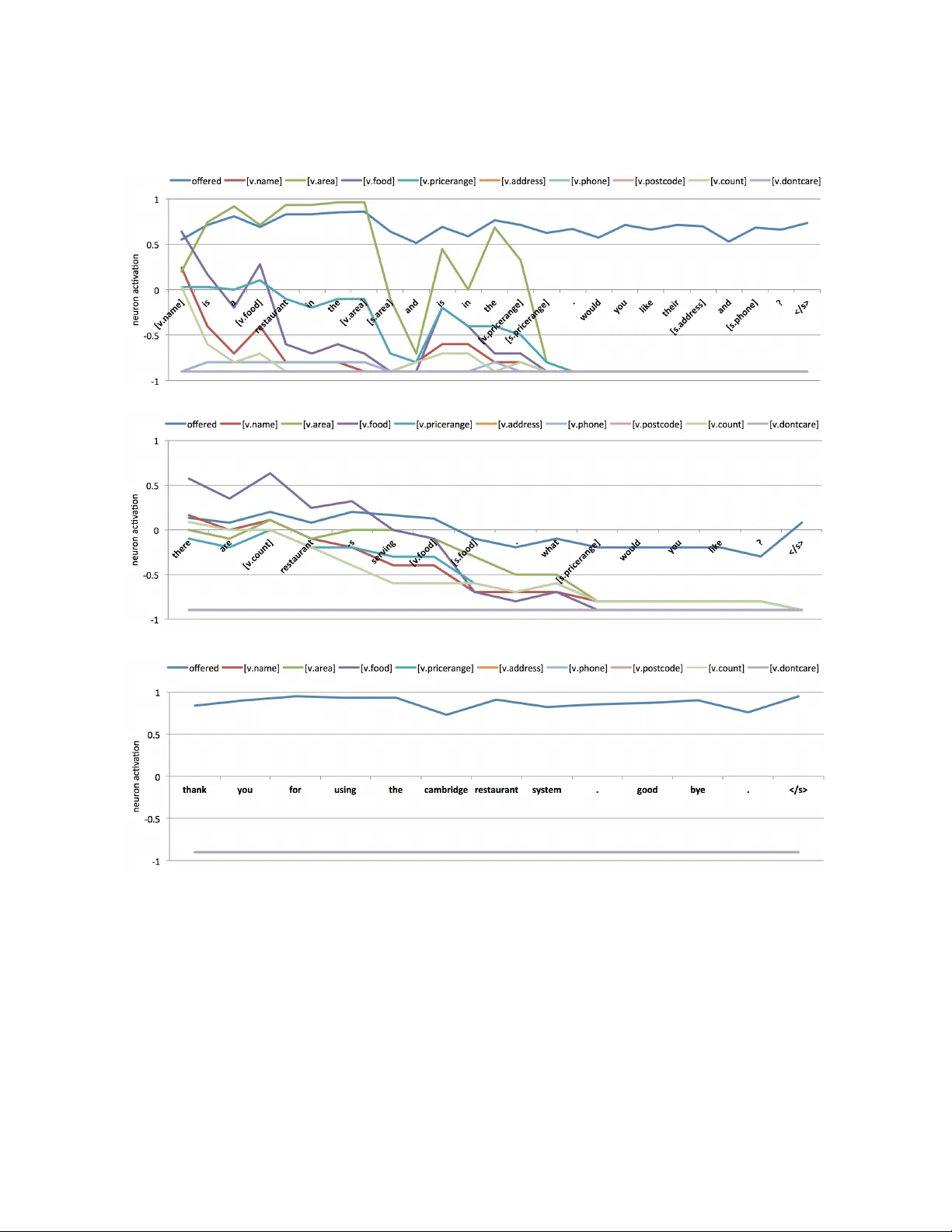

Tsung-Hsien Wen, Milica Gašić, Nikola Mrkšić, Lina M. Rojas-Barahona, Pei-Hao Su, Stefan Ultes, David Vandyke, Steve Young
Cambridge University Engineering Department, Trumpington Street, Cambridge, CB2 1PZ, UK
{thw28,mg436,nm480,lmr46,phs26,su259,djv27,sjy11}@cam.ac.uk

Abstract

Recently a variety of LSTM-based conditional language models (LM) have been applied across a range of language generation tasks. In this work we study various model architectures and different ways to represent and aggregate the source information in an end-to-end neural dialogue system framework. A method called snapshot learning is also proposed to facilitate learning from supervised sequential signals by applying a companion cross-entropy objective function to the conditioning vector. The experimental and analytical results demonstrate firstly that competition occurs between the conditioning vector and the LM, and the differing architectures provide different trade-offs between the two. Secondly, the discriminative power and transparency of the conditioning vector are key to providing both model interpretability and better performance. Thirdly, snapshot learning leads to consistent performance improvements independent of which architecture is used.

1 Introduction

Recurrent Neural Network (RNN)-based conditional language models (LM) have been shown to be very effective in solving a number of real-world problems, such as machine translation (MT) (Cho et al., 2014) and image caption generation (Karpathy and Fei-Fei, 2015). Recently, RNNs were applied to the task of generating sentences from an explicit semantic representation (Wen et al., 2015a). Attention-based methods (Mei et al., 2016) and Long Short-term Memory (LSTM)-like (Hochreiter and Schmidhuber, 1997) gating mechanisms (Wen et al., 2015b) have both been studied to improve generation quality.
Although it is now clear that LSTM-based conditional LMs can generate plausible natural language, less effort has been put into comparing the different model architectures. Furthermore, conditional generation models are typically tested on relatively straightforward tasks conditioned on a single source (e.g. a sentence or an image), where the goal is to optimise a single metric (e.g. BLEU). In this work, we study the use of conditional LSTMs in the generation component of neural network (NN)-based dialogue systems, which depend on multiple conditioning sources and must optimise multiple metrics.

Neural conversational agents (Vinyals and Le, 2015; Shang et al., 2015) are direct extensions of the sequence-to-sequence model (Sutskever et al., 2014) in which a conversation is cast as a source to target transduction problem. However, these models are still far from real-world applications because they lack any capability for supporting domain-specific tasks, for example, being able to interact with databases (Sukhbaatar et al., 2015; Yin et al., 2016) and aggregate useful information into their responses. Recent work by Wen et al. (2016a), however, proposed an end-to-end trainable neural dialogue system that can assist users to complete specific tasks. Their system used both distributed and symbolic representations to capture user intents, and these collectively condition an NN language generator to generate system responses. Due to the diversity of the conditioning information sources, the best way to represent and combine them is non-trivial.

In Wen et al. (2016a), the objective function for learning the dialogue policy and language generator depends solely on the likelihood of the output sentences. However, this sequential supervision signal may not be informative enough to learn a good conditioning vector representation, resulting in a generation process that is dominated by the LM.
This can often lead to inappropriate system outputs. In this paper, we therefore also investigate the use of snapshot learning, which attempts to mitigate this problem by heuristically applying companion supervision signals to a subset of the conditioning vector. This idea is similar to deeply supervised nets (Lee et al., 2015), in which the final cost from the output layer is optimised together with the companion signals generated from each intermediary layer. We have found that snapshot learning offers several benefits: (1) it consistently improves performance; (2) it learns discriminative and robust feature representations and alleviates the vanishing gradient problem; (3) it appears to learn transparent and interpretable subspaces of the conditioning vector.

2 Related Work

Machine learning approaches to task-oriented dialogue system design have cast the problem as a partially observable Markov Decision Process (POMDP) (Young et al., 2013) with the aim of using reinforcement learning (RL) to train dialogue policies online through interactions with real users (Gašić et al., 2013). In order to make RL tractable, the state and action space must be carefully designed (Young et al., 2010), and the understanding (Henderson et al., 2014; Mrkšić et al., 2015) and generation (Wen et al., 2015b; Wen et al., 2016b) modules are assumed to be available or are trained standalone on supervised corpora. Due to the underlying hand-coded semantic representation (Traum, 1999), the conversation is far from natural and the comprehension capability is limited. This motivates the use of neural networks to model dialogues from end to end as a conditional generation problem.

Interest in generating natural language using NNs can be attributed to the success of RNN LMs for large vocabulary speech recognition (Mikolov et al., 2010; Mikolov et al., 2011). Sutskever et al. (2011) showed that plausible sentences can be obtained by sampling characters one by one from the output layer of an RNN. By conditioning an LSTM on a sequence of characters, Graves (2013) showed that machines can synthesise handwriting indistinguishable from that of a human. Later on, this conditional generation idea was tried in several research fields, for example, generating image captions by conditioning an RNN on a convolutional neural network (CNN) output (Karpathy and Fei-Fei, 2015; Xu et al., 2015); translating a source to a target language by conditioning a decoder LSTM on top of an encoder LSTM (Cho et al., 2014; Bahdanau et al., 2015); or generating natural language by conditioning on a symbolic semantic representation (Wen et al., 2015b; Mei et al., 2016). Among all these methods, attention-based mechanisms (Bahdanau et al., 2015; Hermann et al., 2015; Ling et al., 2016) have been shown to be very effective at improving performance using a dynamic source aggregation strategy.

To model dialogue as conditional generation, a sequence-to-sequence learning (Sutskever et al., 2014) framework has been adopted. Vinyals and Le (2015) trained the same model on several conversation datasets and showed that the model can generate plausible conversations. However, Serban et al. (2015b) discovered that the majority of the generated responses are generic due to the maximum likelihood criterion, which was later addressed by Li et al. (2016a) using a maximum mutual information decoding strategy. Furthermore, the lack of a consistent system persona was also studied in Li et al. (2016b). Despite its demonstrated potential, a major barrier for this line of research is data collection. Many works (Lowe et al., 2015; Serban et al., 2015a; Dodge et al., 2016) have investigated conversation datasets for developing chat bot or QA-like general-purpose conversation agents.
However, collecting data to develop goal-oriented dialogue systems that can help users to complete a task in a specific domain remains difficult. In a recent work by Wen et al. (2016a), this problem was addressed by designing an online, parallel version of Wizard-of-Oz data collection (Kelley, 1984), which allows large-scale and cheap in-domain conversation data to be collected using Amazon Mechanical Turk. An NN-based dialogue model was also proposed to learn from the collected dataset and was shown to be able to assist human subjects to complete specific tasks.

Snapshot learning can be viewed as a special form of weak supervision (also known as distant or self-supervision) (Craven and Kumlien, 1999; Snow et al., 2004), in which supervision signals are heuristically labelled by matching unlabelled corpora with entities or attributes in a structured database. It has been widely applied to relation extraction (Mintz et al., 2009) and information extraction (Hoffmann et al., 2011), in which facts from a knowledge base (e.g. Freebase) were used as objectives to train classifiers. Recently, self-supervision was also used in memory networks (Hill et al., 2016) to improve the discriminative power of memory attention. Conceptually, snapshot learning is related to curriculum learning (Bengio et al., 2009): instead of learning easier examples before difficult ones, snapshot learning creates an easier target for each example. In practice, snapshot learning is similar to deeply supervised nets (Lee et al., 2015), in which companion objectives are generated from intermediary layers and optimised together with the output objective.

3 Neural Dialogue System

The testbed for this work is a neural network-based task-oriented dialogue system proposed by Wen et al. (2016a).
The model casts dialogue as a source to target sequence transduction problem (modelled by a sequence-to-sequence architecture (Sutskever et al., 2014)) augmented with the dialogue history (modelled by a belief tracker (Henderson et al., 2014)) and the current database search outcome (modelled by a database operator). The model consists of both encoder and decoder modules. The details of each module are given below.

3.1 Encoder Module

At each turn $t$, the goal of the encoder is to produce a distributed representation of the system action $m_t$, which is then used to condition a decoder to generate the next system response in skeletal form¹. It consists of four submodules: intent network, belief tracker, database operator, and policy network.

Intent Network. The intent network takes a sequence of tokens¹ and converts it into a sentence embedding representing the user intent using an LSTM network. The hidden layer of the LSTM at the last encoding step, $z_t$, is taken as the representation. As mentioned in Wen et al. (2016a), this representation can be viewed as a distributed version of the speech act (Traum, 1999) used in traditional systems.

Belief Trackers. In addition to the intent network, the neural dialogue system uses a set of slot-based belief trackers (Henderson et al., 2014; Mrkšić et al., 2015) to track user requests. By taking each user input as new evidence, the task of a belief tracker is to maintain a multinomial distribution $p$ over values $v \in V_s$ for each informable slot $s$², and a binary distribution for each requestable slot². These probability distributions $p_t^s$ are called belief states of the system. The belief states $p_t^s$, together with the intent vector $z_t$, can be viewed as the system's comprehension of the user requests up to turn $t$.

¹ Delexicalisation: slots and values are replaced by generic tokens (e.g. keywords like Chinese food are replaced by [v.food] [s.food]) to allow weight sharing.
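To make footnote 1 concrete, here is a minimal Python sketch of delexicalisation. It assumes whitespace tokenisation, lower-casing, and a tiny hypothetical ontology (`ONTOLOGY`, `SLOT_KEYWORDS`); these names and values are illustrative only, and the preprocessing actually used by Wen et al. (2016a) may differ, for example in how punctuation is handled.

```python
from typing import List

# Hypothetical toy ontology (slot -> values); the real one comes from the domain.
ONTOLOGY = {
    "food": ["chinese", "italian", "modern european"],
    "area": ["centre", "north"],
    "pricerange": ["cheap", "expensive"],
}
# Hypothetical words that name a slot rather than fill it.
SLOT_KEYWORDS = {"food": "food", "area": "area", "price": "pricerange"}


def delexicalise(sentence: str) -> List[str]:
    """Replace slot values with [v.slot] and slot keywords with [s.slot]."""
    tokens = sentence.lower().split()
    # Try longer values first so "modern european" is matched as one value.
    values = sorted(
        ((value.split(), slot) for slot, vals in ONTOLOGY.items() for value in vals),
        key=lambda item: len(item[0]),
        reverse=True,
    )
    out, i = [], 0
    while i < len(tokens):
        for words, slot in values:
            if tokens[i:i + len(words)] == words:
                out.append(f"[v.{slot}]")
                i += len(words)
                break
        else:  # no slot value starts at position i
            tok = tokens[i]
            out.append(f"[s.{SLOT_KEYWORDS[tok]}]" if tok in SLOT_KEYWORDS else tok)
            i += 1
    return out


print(delexicalise("I want Chinese food in the centre"))
# ['i', 'want', '[v.food]', '[s.food]', 'in', 'the', '[v.area]']
```

Because every food type maps to the same [v.food] token, the network's weights are shared across values; the actual values are substituted back only after generation.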
Database Operator. Based on the belief states $p_t^s$, a DB query is formed by taking the union of the maximum values of each informable slot. A vector $x_t$ representing different degrees of matching in the DB (no match, 1 match, ... or more than 5 matches) is produced by counting the number of matched entities and expressing it as a 6-bin 1-hot encoding. If $x_t$ is not zero, an associated entity pointer is maintained, which identifies one of the matching DB entities selected at random. The entity pointer is updated if the current entity no longer matches the search criteria; otherwise it stays the same.

Policy Network. Based on the vectors $z_t$, $p_t^s$, and $x_t$ from the above three modules, the policy network combines them into a single action vector $m_t$ by a three-way matrix transformation,

$$ m_t = \tanh\Big(W_{zm} z_t + W_{xm} x_t + \sum_{s \in G} W_{pm}^{s} p_t^{s}\Big) \tag{1} $$

where matrices $W_{zm}$, $W_{pm}^{s}$, and $W_{xm}$ are parameters and $G$ is the domain ontology.

² Informable slots are slots that users can use to constrain the search, such as food type or price range; requestable slots are slots that users can ask a value for, such as phone number. This information is specified in the domain ontology.

3.2 Decoder Module

Conditioned on the system action vector $m_t$ provided by the encoder module, the decoder module uses a conditional LSTM LM to generate the required system output token by token in skeletal form¹. The final system response can then be formed by substituting the actual values of the database entries into the skeletal sentence structure.

Figure 1: Three different conditional generation architectures: (a) language model type LSTM; (b) memory type LSTM; (c) hybrid type LSTM.
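The database operator and the policy network of Equation 1 can be sketched in a few lines of NumPy. This is an illustrative reading of the text, not the authors' code: the exact bin boundaries of the 1-hot match vector, the toy belief states, and all sizes are assumptions, and the entity pointer is omitted.

```python
import numpy as np


def db_match_vector(num_matches: int, num_bins: int = 6) -> np.ndarray:
    """1-hot encoding of the number of matching DB entities.

    Assumption: bins are 0, 1, 2, 3, 4 matches plus a final catch-all bin.
    """
    x = np.zeros(num_bins)
    x[min(num_matches, num_bins - 1)] = 1.0
    return x


def policy_action(z, x, beliefs, W_zm, W_xm, W_pm):
    """Eq. (1): m_t = tanh(W_zm z_t + W_xm x_t + sum_{s in G} W_pm^s p_t^s).

    `beliefs` and `W_pm` are dicts keyed by slot name s.
    """
    total = W_zm @ z + W_xm @ x
    for s, p in beliefs.items():
        total = total + W_pm[s] @ p
    return np.tanh(total)


# Toy usage with random weights drawn from [-0.3, 0.3].
rng = np.random.default_rng(0)
n = 5                                          # action-vector size (the paper uses 50)
z = rng.normal(size=8)                         # stand-in intent vector z_t
x = db_match_vector(3)                         # three matching restaurants
beliefs = {"food": np.array([0.7, 0.2, 0.1]),  # toy belief states p_t^s
           "area": np.array([0.1, 0.9])}
W_zm = rng.uniform(-0.3, 0.3, size=(n, z.size))
W_xm = rng.uniform(-0.3, 0.3, size=(n, x.size))
W_pm = {s: rng.uniform(-0.3, 0.3, size=(n, p.size)) for s, p in beliefs.items()}
m = policy_action(z, x, beliefs, W_zm, W_xm, W_pm)
print(x)        # [0. 0. 0. 1. 0. 0.]
print(m.shape)  # (5,)
```

Keeping one matrix $W_{pm}^s$ per slot lets each belief state have its own length, which is why the sketch stores those matrices in a dict.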
3.2.1 Conditional Generation Network

In this paper we study and analyse three different variants of LSTM-based conditional generation architectures:

Language Model Type. The most straightforward way to condition the LSTM network on additional source information is to concatenate the conditioning vector $m_t$ together with the input word embedding $w_j$ and previous hidden layer $h_{j-1}$,

$$ \begin{pmatrix} i_j \\ f_j \\ o_j \\ \hat{c}_j \end{pmatrix} = \begin{pmatrix} \mathrm{sigmoid} \\ \mathrm{sigmoid} \\ \mathrm{sigmoid} \\ \tanh \end{pmatrix} W_{4n,3n} \begin{pmatrix} m_t \\ w_j \\ h_{j-1} \end{pmatrix} $$
$$ c_j = f_j \odot c_{j-1} + i_j \odot \hat{c}_j $$
$$ h_j = o_j \odot \tanh(c_j) $$

where index $j$ is the generation step, $n$ is the hidden layer size, $i_j, f_j, o_j \in [0,1]^n$ are input, forget, and output gates respectively, $\hat{c}_j$ and $c_j$ are the proposed cell value and true cell value at step $j$, $W_{4n,3n}$ are the model parameters, and $\odot$ denotes element-wise multiplication. The model is shown in Figure 1a. Since it does not differ significantly from the original LSTM, we call it the language model type (lm) conditional generation network.

Memory Type. The memory type (mem) conditional generation network was introduced by Wen et al. (2015b), shown in Figure 1b, in which the conditioning vector $m_t$ is governed by a standalone reading gate $r_j$. This reading gate decides how much information should be read from the conditioning vector and directly writes it into the memory cell $c_j$,

$$ \begin{pmatrix} i_j \\ f_j \\ o_j \\ r_j \end{pmatrix} = \begin{pmatrix} \mathrm{sigmoid} \\ \mathrm{sigmoid} \\ \mathrm{sigmoid} \\ \mathrm{sigmoid} \end{pmatrix} W_{4n,3n} \begin{pmatrix} m_t \\ w_j \\ h_{j-1} \end{pmatrix} $$
$$ \hat{c}_j = \tanh\big(W_c (w_j \oplus h_{j-1})\big) $$
$$ c_j = f_j \odot c_{j-1} + i_j \odot \hat{c}_j + r_j \odot m_t $$
$$ h_j = o_j \odot \tanh(c_j) $$

where $W_c$ is another weight matrix to learn and $\oplus$ denotes vector concatenation. The idea behind this is that the model isolates the conditioning vector from the LM so that the model has more flexibility to learn to trade off between the two.
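A minimal NumPy sketch of one decoding step for the lm and mem cells follows. It assumes that $m_t$, $w_j$, and $h_{j-1}$ all have size $n$ (so that $W_{4n,3n}$ maps the concatenated input to four gate blocks and $r_j \odot m_t$ is well defined) and that the rows of `W` are ordered as input, forget, output, and fourth block; that ordering, like the function names, is a choice made here rather than something the paper specifies.

```python
import numpy as np


def sigmoid(a):
    return 1.0 / (1.0 + np.exp(-a))


def lm_step(m, w, h_prev, c_prev, W):
    """lm type: m_t is simply concatenated with w_j and h_{j-1}."""
    a_i, a_f, a_o, a_c = np.split(W @ np.concatenate([m, w, h_prev]), 4)
    i, f, o = sigmoid(a_i), sigmoid(a_f), sigmoid(a_o)
    c_hat = np.tanh(a_c)                      # proposed cell value
    c = f * c_prev + i * c_hat
    h = o * np.tanh(c)
    return h, c


def mem_step(m, w, h_prev, c_prev, W, W_c):
    """mem type: a reading gate r_j writes m_t straight into the cell."""
    i, f, o, r = np.split(sigmoid(W @ np.concatenate([m, w, h_prev])), 4)
    c_hat = np.tanh(W_c @ np.concatenate([w, h_prev]))  # W_c (w_j ⊕ h_{j-1})
    c = f * c_prev + i * c_hat + r * m
    h = o * np.tanh(c)
    return h, c


rng = np.random.default_rng(1)
n = 4                                          # hidden size (the paper uses 50)
m, w = rng.normal(size=n), rng.normal(size=n)  # m_t and w_j, both n-dimensional
h0, c0 = np.zeros(n), np.zeros(n)
W = rng.uniform(-0.3, 0.3, size=(4 * n, 3 * n))  # W_{4n,3n}
W_c = rng.uniform(-0.3, 0.3, size=(n, 2 * n))
h_lm, c_lm = lm_step(m, w, h0, c0, W)
h_mem, c_mem = mem_step(m, w, h0, c0, W, W_c)
```

The only structural difference is where $m_t$ enters: through the shared gate pre-activations in `lm_step`, and additionally as a gated write into the cell in `mem_step`.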
Hybrid Type. Continuing with the same idea as the memory type network, a complete separation of conditioning vector and LM (except for the gate controlling the signals) is provided by the hybrid type network shown in Figure 1c,

$$ \begin{pmatrix} i_j \\ f_j \\ o_j \\ r_j \end{pmatrix} = \begin{pmatrix} \mathrm{sigmoid} \\ \mathrm{sigmoid} \\ \mathrm{sigmoid} \\ \mathrm{sigmoid} \end{pmatrix} W_{4n,3n} \begin{pmatrix} m_t \\ w_j \\ h_{j-1} \end{pmatrix} $$
$$ \hat{c}_j = \tanh\big(W_c (w_j \oplus h_{j-1})\big) $$
$$ c_j = f_j \odot c_{j-1} + i_j \odot \hat{c}_j $$
$$ h_j = o_j \odot \tanh(c_j) + r_j \odot m_t $$

This model was motivated by the fact that long-term dependency is not needed for the conditioning vector because we apply this information at every step $j$ anyway. The decoupling of the conditioning vector and the LM is attractive because it leads to better interpretability of the results and provides the potential to learn a better conditioning vector and LM.

3.2.2 Attention and Belief Representation

Attention. An attention-based mechanism provides an effective approach for aggregating multiple information sources for prediction tasks. Like Wen et al. (2016a), we explore the use of an attention mechanism to combine the tracker belief states, modifying the policy network in Equation 1 as

$$ m_t^j = \tanh\Big(W_{zm} z_t + W_{xm} x_t + \sum_{s \in G} \alpha_s^j W_{pm}^{s} p_t^{s}\Big) $$

where the attention weights $\alpha_s^j$ are calculated by

$$ \alpha_s^j = \mathrm{softmax}\Big(r^\top \tanh\big(W_r \cdot (v_t \oplus p_t^s \oplus w_j^t \oplus h_{j-1}^t)\big)\Big) $$

where $v_t = z_t + x_t$, and matrix $W_r$ and vector $r$ are parameters to learn.

Belief Representation. The effect of different belief state representations on the end performance is also studied. For user informable slots, the full belief state $p_t^s$ is the original state containing all categorical values; the summary belief state contains only three components: the summed value of all categorical probabilities, the probability that the user said they "don't care" about this slot, and the probability that the slot has not been mentioned.
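The hybrid cell, the attention weights, and the summary belief state can be sketched in the same NumPy style. Assumptions, all made for illustration: every attended belief vector is a 3-component informable-slot summary, so a single $W_r$ fits all slots; $v_t$ is passed in directly rather than computed; the weights are random. Because the attention depends on $w_j$ and $h_{j-1}$, the action vector $m_t^j$ would be recomputed at every generation step.

```python
import numpy as np


def sigmoid(a):
    return 1.0 / (1.0 + np.exp(-a))


def hybrid_step(m, w, h_prev, c_prev, W, W_c):
    """hybrid type: m_t bypasses the cell and is added to the output."""
    i, f, o, r = np.split(sigmoid(W @ np.concatenate([m, w, h_prev])), 4)
    c_hat = np.tanh(W_c @ np.concatenate([w, h_prev]))
    c = f * c_prev + i * c_hat          # the memory cell never sees m_t
    h = o * np.tanh(c) + r * m          # m_t enters only through r_j
    return h, c


def summary_belief(p_values, p_dontcare, p_none):
    """Informable-slot summary: (sum of value probs, P(dontcare), P(not mentioned))."""
    return np.array([np.sum(p_values), p_dontcare, p_none])


def attention_weights(v, beliefs, w, h_prev, W_r, r_vec):
    """alpha_s^j = softmax over s of r^T tanh(W_r (v_t ⊕ p_t^s ⊕ w_j ⊕ h_{j-1}))."""
    scores = np.array([r_vec @ np.tanh(W_r @ np.concatenate([v, p, w, h_prev]))
                       for p in beliefs.values()])
    e = np.exp(scores - scores.max())   # numerically stable softmax
    return dict(zip(beliefs, e / e.sum()))


def attentive_action(z, x, beliefs, alphas, W_zm, W_xm, W_pm):
    """Attention variant of Eq. (1): each slot term is scaled by alpha_s^j."""
    total = W_zm @ z + W_xm @ x
    for s, p in beliefs.items():
        total = total + alphas[s] * (W_pm[s] @ p)
    return np.tanh(total)


rng = np.random.default_rng(2)
n, k = 4, 3                              # hidden size, summary-belief size


def U(*shape):
    """Random weights in [-0.3, 0.3]."""
    return rng.uniform(-0.3, 0.3, size=shape)


beliefs = {
    "food": summary_belief([0.5, 0.2], 0.1, 0.2),
    "area": summary_belief([0.1, 0.1], 0.0, 0.8),
    "pricerange": summary_belief([0.9], 0.05, 0.05),
}
z, x = rng.normal(size=n), np.eye(6)[2]  # stand-in z_t and DB vector x_t
v = rng.normal(size=n)                   # stand-in for v_t (defined as z_t + x_t)
w, h0, c0 = rng.normal(size=n), np.zeros(n), np.zeros(n)
alphas = attention_weights(v, beliefs, w, h0, U(n, 3 * n + k), U(n))
m = attentive_action(z, x, beliefs, alphas, U(n, n), U(n, 6),
                     {s: U(n, k) for s in beliefs})
h, c = hybrid_step(m, w, h0, c0, U(4 * n, 3 * n), U(n, 2 * n))
```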
For user requestable slots, on the other hand, the full belief state is the same as the summary belief state because the slot values are binary rather than categorical.

3.3 Snapshot Learning

Learning conditional generation models from sequential supervision signals can be difficult, because it requires the model to learn both long-term word dependencies and potentially distant source encoding functions. To mitigate this difficulty, we introduce a novel method called snapshot learning to create a vector of binary labels $\boldsymbol{\Upsilon}_t^j \in [0,1]^d$, $d < \dim(\boldsymbol{m}_t^j)$, as the snapshot of the remaining part of the output sentence $T_{t,j:|T_t|}$ from generation step $j$. Each element of the snapshot vector is an indicator function of a certain event that will happen in the future, which can be obtained either from the system response or dialogue context at training time. A companion cross entropy error is then computed to force a subset of the conditioning vector $\hat{\boldsymbol{m}}_t^j \subset \boldsymbol{m}_t^j$ to be close to the snapshot vector,

$$ L_{ss}(\cdot) = -\sum_t \sum_j \mathbb{E}\big[H(\Upsilon_t^j, \hat{m}_t^j)\big] \tag{2} $$

where $H(\cdot)$ is the cross entropy function, and $\Upsilon_t^j$ and $\hat{m}_t^j$ are elements of the vectors $\boldsymbol{\Upsilon}_t^j$ and $\hat{\boldsymbol{m}}_t^j$, respectively (the expectation is taken over these elements). In order to make the tanh activations of $\hat{\boldsymbol{m}}_t^j$ compatible with the 0-1 snapshot labels, we squeeze each value of $\hat{\boldsymbol{m}}_t^j$ by adding 1 and dividing by 2 before computing the cost.

Figure 2: The idea of snapshot learning. The snapshot vector was trained with additional supervision on a set of indicator functions heuristically labelled using the system response.

The indicator functions we use in this work have two forms: (1) whether a particular slot value (e.g., [v.food]¹) is going to occur, and (2) whether the system has offered a venue³, as shown in Figure 2. The offer label in the snapshot is produced by checking the delexicalised name token ([v.name]) in the entire dialogue. If it has occurred, every label in subsequent turns is labelled with 1.
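The snapshot targets and the companion cost of Equation 2 can be sketched as follows. This is a hypothetical illustration: the tracked events in `SLOT_TOKENS` are examples, the label at step $j$ is 1 when the token occurs anywhere in the remaining output $T_{t,j:|T_t|}$ (so a token absent from the response is labelled 0, following the "is going to occur" definition), and the offer label is assumed to switch to 1 from the turn in which [v.name] first appears. The cost is written as an ordinary positive binary cross-entropy to be minimised, and `per_step=False` mimics the constant per-turn targets the paper uses for models without attention.

```python
import numpy as np

# Hypothetical slot-value events to track; the offer event is appended last.
SLOT_TOKENS = ["[v.food]", "[v.address]"]


def snapshot_targets(turns, per_step=True):
    """Snapshot labels for one dialogue: one (steps x events) matrix per turn.

    turns: delexicalised system responses, each a list of tokens.
    """
    offered = 0.0
    targets = []
    for tokens in turns:
        if "[v.name]" in tokens:
            offered = 1.0  # assumption: 1 from the offering turn onwards
        rows = []
        for j in range(len(tokens)):
            remaining = tokens[j:]  # remaining output T_{t, j:|T_t|}
            rows.append([float(e in remaining) for e in SLOT_TOKENS] + [offered])
        Y = np.array(rows)
        if not per_step:  # no attention: one constant target row per turn
            Y = np.repeat(Y[:1], len(tokens), axis=0)
        targets.append(Y)
    return targets


def snapshot_loss(targets, m_hats, eps=1e-7):
    """Companion cost of Eq. (2), written as a positive cross-entropy to minimise.

    m_hats[t] has the same shape as targets[t] and holds tanh activations of the
    snapshot subspace; they are squeezed into (0, 1) via (m + 1) / 2.
    """
    total = 0.0
    for Y, M in zip(targets, m_hats):
        q = np.clip((M + 1.0) / 2.0, eps, 1.0 - eps)
        bce = -(Y * np.log(q) + (1.0 - Y) * np.log(1.0 - q))
        total += bce.mean(axis=1).sum()  # mean over elements, sum over steps j
    return total


turns = [
    "what kind of food would you like ?".split(),
    "[v.name] serves [v.food] food . it is at [v.address] .".split(),
]
Y = snapshot_targets(turns)
print(Y[1][:, 0])  # [1. 1. 1. 0. 0. 0. 0. 0. 0. 0.]
print(snapshot_loss(Y, [np.zeros_like(y) for y in Y]))  # equals 18 * log(2) when every m is 0
```

In training, this cost would be added to the LM cross-entropy with a trade-off weight, so only the snapshot subspace of the conditioning vector receives the extra supervision.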
Before [v.name] has occurred, the offer label is 0. To create snapshot targets for a particular slot value, the output sentence is matched with the corresponding delexicalised token turn by turn, per generation step. At each generation step, the target is labelled with 0 if that delexicalised token has already been generated; otherwise it is set to 1. However, for the models without attention, the targets are kept the same for every step within a turn: without attention the conditioning vector is fixed for the whole turn, so it cannot learn this dynamically changing behaviour.

³ Details of the specific application used in this study are given in Section 4 below.

4 Experiments

Dataset. The dataset used in this work was collected in the Wizard-of-Oz online data collection described by Wen et al. (2016a), in which the task of the system is to assist users to find a restaurant in the Cambridge, UK area. There are three informable slots (food, pricerange, area) that users can use to constrain the search and six requestable slots (address, phone, postcode plus the three informable slots) that the user can ask a value for once a restaurant has been offered. There are 676 dialogues in the dataset (including both finished and unfinished dialogues) and approximately 2750 turns in total. The database contains 99 unique restaurants.

Table 1: Performance comparison of different model architectures, belief state representations, and snapshot learning. The numbers to the left and right of the / sign are learning without and with snapshot, respectively. The model with the best performance on a particular metric (column) is shown in bold face.

| Architecture | Belief | Success (%) | SlotMatch (%) | T5-BLEU | T1-BLEU |
|---|---|---|---|---|---|
| Belief state representation | | | | | |
| lm | full | 72.6 / 74.5 | 52.1 / 60.3 | 0.207 / 0.229 | 0.216 / 0.238 |
| lm | summary | 74.5 / 76.5 | 57.4 / 61.2 | 0.221 / 0.231 | 0.227 / 0.240 |
| Conditional architecture | | | | | |
| lm | summary | 74.5 / 76.5 | 57.4 / 61.2 | 0.221 / 0.231 | 0.227 / 0.240 |
| mem | summary | 75.5 / 77.5 | 59.2 / 61.3 | 0.222 / 0.232 | 0.231 / 0.243 |
| hybrid | summary | 76.1 / 79.2 | 52.4 / 60.6 | 0.202 / 0.228 | 0.212 / 0.237 |
| Attention-based model | | | | | |
| lm | summary | 79.4 / 78.2 | 60.6 / 60.2 | 0.228 / 0.231 | 0.239 / 0.241 |
| mem | summary | 76.5 / 80.2 | 57.4 / 61.0 | 0.220 / 0.229 | 0.228 / 0.239 |
| hybrid | summary | 79.0 / 81.8 | 56.2 / 60.5 | 0.214 / 0.227 | 0.224 / 0.240 |

Training. The training procedure was divided into two stages. Firstly, the belief tracker parameters $\theta_b$ were pre-trained using cross entropy errors between tracker labels and predictions. Having fixed the tracker parameters, the remaining parts of the model $\theta_{\setminus b}$ were trained using the cross entropy errors from the generation network LM,

$$ L(\theta_{\setminus b}) = -\sum_t \sum_j H(y_j^t, p_j^t) + \lambda L_{ss}(\cdot) \tag{3} $$

where $y_j^t$ and $p_j^t$ are the output token targets and predictions, respectively, at output step $j$ of turn $t$, $L_{ss}(\cdot)$ is the snapshot cost from Equation 2, and $\lambda$ is the trade-off parameter, which we set to 1 for all models trained with snapshot learning. We treated each dialogue as a batch and used stochastic gradient descent with a small $l_2$ regularisation term to train the model. The collected corpus was partitioned into training, validation, and testing sets in the ratio 3:1:1. Early stopping was implemented based on the validation set considering only LM log-likelihoods. Gradient clipping was set to 1. The hidden layer sizes were set to 50, and the weights, including word embeddings, were randomly initialised between -0.3 and 0.3. The vocabulary size is around 500 for both input and output, in which rare words and words that can be delexicalised have been removed.

Decoding. In order to compare models trained with different recipes rather than decoding strategies, we decode all the trained models with the average log probability of tokens in the sentence. We applied beam search with a beam width equal to 10; the search stops when an end-of-sentence token is generated.
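The decoding criterion, ranking hypotheses by the average log probability of their tokens, can be illustrated with a small beam search. It is a sketch under stated assumptions, not the authors' decoder: `toy_model` is a hypothetical bigram table standing in for the conditional LSTM, finished hypotheses occupy beam slots as they complete, and decoding stops once `n_best` complete candidates exist (5 here, matching the number of candidates used for evaluation; the beam width of 10 matches the text).

```python
import math
from typing import Callable, Dict, List, Tuple

EOS = "</s>"
Hyp = Tuple[List[str], float]  # (tokens, summed log-probability)


def avg_logprob(hyp: Hyp) -> float:
    """Length-normalised score: mean log-probability per token."""
    return hyp[1] / len(hyp[0])


def beam_search(step_logprobs: Callable[[List[str]], Dict[str, float]],
                beam_width: int = 10, n_best: int = 5, max_len: int = 30) -> List[Hyp]:
    """Beam search that ranks hypotheses by average token log-probability.

    A hypothesis is complete once it emits EOS; decoding stops when n_best
    complete candidates exist, no live beams remain, or max_len is reached.
    """
    beams: List[Hyp] = [([], 0.0)]
    finished: List[Hyp] = []
    for _ in range(max_len):
        candidates = [(toks + [tok], lp + tok_lp)
                      for toks, lp in beams
                      for tok, tok_lp in step_logprobs(toks).items()]
        candidates.sort(key=avg_logprob, reverse=True)
        beams = []
        for hyp in candidates[:beam_width]:
            (finished if hyp[0][-1] == EOS else beams).append(hyp)
        if len(finished) >= n_best or not beams:
            break
    finished.sort(key=avg_logprob, reverse=True)
    return finished[:n_best]


# Hypothetical bigram "decoder" standing in for the conditional LSTM.
TOY = {
    None: {"[v.name]": 0.6, "there": 0.4},
    "[v.name]": {"is": 0.7, EOS: 0.3},
    "there": {"is": 0.5, EOS: 0.5},
    "is": {"cheap": 0.5, "nice": 0.5},
    "cheap": {EOS: 1.0},
    "nice": {EOS: 1.0},
}


def toy_model(prefix: List[str]) -> Dict[str, float]:
    prev = prefix[-1] if prefix else None
    return {tok: math.log(p) for tok, p in TOY[prev].items()}


for tokens, score in beam_search(toy_model):
    print(" ".join(tokens), round(score / len(tokens), 3))
```

Averaging stops short hypotheses from winning simply because they sum fewer negative terms: in the toy table, `there </s>` has a higher summed log probability than `there is cheap </s>` but a lower average, so it ranks below it.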
In order to consider language variability, we ran decoding until 5 candidates were obtained and performed evaluation on them.

Metrics. We compared models trained with different recipes by performing a corpus-based evaluation in which the model is used to predict each system response in the held-out test set. Three evaluation metrics were used: BLEU score (on top-1 and top-5 candidates) (Papineni et al., 2002), slot matching rate, and objective task success rate (Su et al., 2015). The dialogue is marked as successful if both: (1) the offered entity matches the task that was specified to the user, and (2) the system answered all the associated information requests (e.g. what is the address?) from the user. The slot matching rate is the percentage of delexicalised tokens (e.g. [s.food] and [v.area]¹) in the candidate that also appear in the reference. We computed the BLEU scores on the skeletal sentence forms before substituting the actual entity values. All the results were averaged over 10 randomly initialised networks.

Results. Table 1 shows the evaluation results. The numbers to the left and right of each table cell are the same model trained w/o and w/ snapshot learning.

Figure 3: Learned attention heat maps over trackers: (a) hybrid LSTM w/o snapshot learning; (b) hybrid LSTM w/ snapshot learning. The first three columns in each figure are informable slot trackers and the rest are requestable slot trackers. The generation model is the hybrid type LSTM.

The first observation is that snapshot learning consistently improves on most metrics regardless of the model architecture. This is especially true for BLEU scores. We think this may be attributed to the more discriminative conditioning vector learned through the snapshot method, which makes the learning of the conditional LM easier.

In the first block, belief state representation, we compare the effect of two different belief representations.
As can be seen, using a succinct representation is better (summary > full) because the identity of each categorical value in the belief state does not help when the generation decisions are made in skeletal form. In fact, the full belief state representation may encourage the model to learn incorrect co-adaptation among features when the data is scarce.

In the conditional architecture block, we compare the three different conditional generation architectures described in Section 3.2.1. This result shows that the language model type (lm) and memory type (mem) networks perform better in terms of BLEU score and slot matching rate, while the hybrid type (hybrid) networks achieve higher task success. This is probably due to the degree of separation between the LM and conditioning vector: a coupling approach (lm, mem) sacrifices the conditioning vector but learns a better LM and higher BLEU, while a complete separation (hybrid) learns a better conditioning vector and offers a higher task success.

Lastly, in the attention-based model block we train the three architectures with the attention mechanism and compare them again. Firstly, the characteristics of the three models we observed above also hold for attention-based models. Secondly, we found that the attention mechanism improves all three architectures on task success rate but not on BLEU scores. This is probably due to the limitations of using n-gram based metrics like BLEU to evaluate the generation quality (Stent et al., 2005).

Table 2: Average activation of gates on test set.

| Model | $i_j$ | $f_j$ | $r_j/o_j$ |
|---|---|---|---|
| hybrid, full | 0.567 | 0.502 | 0.405 |
| hybrid, summary | 0.539 | 0.540 | 0.428 |
| + att. | 0.540 | 0.559 | 0.459 |

5 Model Analysis

Gate Activations. We first studied the average activation of each individual gate in the models by averaging them when running generation on the test set.
We analysed the hybrid models because their reading gate to output gate activation ratio ($r_j/o_j$) shows a clear trade-off between the LM and the conditioning vector components. As can be seen in Table 2, we found that the average forget gate activations ($f_j$) and the ratio of the reading gate to the output gate activation ($r_j/o_j$) have strong correlations to performance: a better performance (row 3 > row 2 > row 1) seems to come from models that can learn a longer word dependency (higher forget gate $f_j$ activations) and a better conditioning vector (therefore higher reading to output gate ratio $r_j/o_j$).

Learned Attention. We have visualised the learned attention heat map of models trained with and without snapshot learning in Figure 3. The attention is on both the informable slot trackers (first three columns) and the requestable slot trackers (the other columns). We found that the model trained with snapshot learning (Figure 3b) seems to produce a more accurate and discriminative attention heat map compared to the one trained without it (Figure 3a). This may contribute to the better performance achieved by the snapshot learning approach.

Snapshot Neurons. As mentioned earlier, snapshot learning forces a subspace of the conditioning vector $\hat{\boldsymbol{m}}_t^j$ to become discriminative and interpretable. Two example generated sentences together with the snapshot neuron activations are shown in Figure 4. As can be seen, when generating words one by one, the neuron activations changed to detect the different events they were assigned by the snapshot training signals: e.g. in Figure 4b the light blue and orange neurons switched their dominant roles when the token [v.address] was generated; the offered neuron is in a high activation state in Figure 4b because the system was offering a venue, while in Figure 4a it is not activated because the system was still helping the user to find a venue. More examples can be found in the Appendix.

Figure 4: Two example responses, (a) and (b), generated from the hybrid model trained with snapshot and attention. Each line represents a neuron that detects a particular snapshot event.

6 Conclusion and Future Work

This paper has investigated different conditional generation architectures and a novel method called snapshot learning to improve response generation in a neural dialogue system framework. The results showed three major findings. Firstly, although the hybrid type model did not rank highest on all metrics, it is nevertheless preferred because it achieved the highest task success and also provided more interpretable results. Secondly, snapshot learning provided gains on virtually all metrics regardless of the architecture used. The analysis suggested that the benefit of snapshot learning mainly comes from the more discriminative and robust subspace representation learned from the heuristically labelled companion signals, which in turn facilitates optimisation of the final target objective. Lastly, the results suggested that making a complex system more interpretable at different levels not only helps our understanding but also leads to the highest success rates.

However, there is still much work left to do. This work focused on conditional generation architectures and snapshot learning in the scenario of generating dialogue responses. It would be very helpful if the same comparison could be conducted in other application domains, such as machine translation or image caption generation, so that a wider view of the effectiveness of these approaches can be assessed.

Acknowledgments

Tsung-Hsien Wen and David Vandyke are supported by Toshiba Research Europe Ltd, Cambridge Research Laboratory.
References Dzmitry Bahdanau, Kyunghyun Cho, and Y oshua Ben- gio. 2015. Neural machine translation by jointly learning to align and translate. In ICLR . Y oshua Bengio, J ´ er ˆ ome Louradour, Ronan Collobert, and Jason W eston. 2009. Curriculum learning. In ICML . Kyungh yun Cho, Bart v an Merrienboer, C ¸ aglar G ¨ ulc ¸ ehre, Fethi Boug ares, Holger Schwenk, and Y oshua Bengio. 2014. Learning phrase representations using RNN encoder-decoder for statistical machine translation. In EMNLP . Mark Craven and Johan Kumlien. 1999. Constructing biological knowledge bases by extracting information from text sources. In ISMB . Jesse Dodge, Andreea Gane, Xiang Zhang, Antoine Bor- des, Sumit Chopra, Alexander Miller , Arthur Szlam, and Jason W eston. 2016. Ev aluating prerequi- site qualities for learning end-to-end dialog systems. ICLR . Milica Ga ˇ si ´ c, Catherine Breslin, Matthew Henderson, Dongho Kim, Martin Szummer, Blaise Thomson, Pir- ros Tsiakoulis, and Stev e Y oung. 2013. On-line policy optimisation of bayesian spoken dialogue systems via human interaction. In ICASSP . Alex Grav es. 2013. Generating sequences with recurrent neural networks. arXiv preprint:1308.0850 . Matthew Henderson, Blaise Thomson, and Stev e Y oung. 2014. W ord-based dialog state tracking with recurrent neural networks. In SIGdial . Karl Moritz Hermann, T om ´ as K ocisk ´ y, Edward Grefen- stette, Lasse Espeholt, W ill Kay , Mustafa Suleyman, and Phil Blunsom. 2015. T eaching machines to read and comprehend. In NIPS . Felix Hill, Antoine Bordes, Sumit Chopra, and Jason W e- ston. 2016. The goldilocks principle: Reading chil- dren’ s books with explicit memory representations. In ICLR . Sepp Hochreiter and J ¨ urgen Schmidhuber . 1997. Long short-term memory . Neural Computation . Raphael Hof fmann, Congle Zhang, Xiao Ling, Luke Zettlemoyer , and Daniel S. W eld. 2011. Kno wledge- based weak supervision for information extraction of ov erlapping relations. In A CL . 
Andrej Karpathy and Li Fei-Fei. 2015. Deep visual-semantic alignments for generating image descriptions. In CVPR.
John F. Kelley. 1984. An iterative design methodology for user-friendly natural language office information applications. ACM Transactions on Information Systems.
Chen-Yu Lee, Saining Xie, Patrick Gallagher, Zhengyou Zhang, and Zhuowen Tu. 2015. Deeply-supervised nets. In AISTATS.
Jiwei Li, Michel Galley, Chris Brockett, Jianfeng Gao, and Bill Dolan. 2016a. A diversity-promoting objective function for neural conversation models. In NAACL-HLT.
Jiwei Li, Michel Galley, Chris Brockett, Jianfeng Gao, and Bill Dolan. 2016b. A persona-based neural conversation model. arXiv preprint:1603.06155.
Wang Ling, Edward Grefenstette, Karl Moritz Hermann, Tomás Kociský, Andrew Senior, Fumin Wang, and Phil Blunsom. 2016. Latent predictor networks for code generation. arXiv preprint:1603.06744.
Ryan Lowe, Nissan Pow, Iulian Serban, and Joelle Pineau. 2015. The Ubuntu dialogue corpus: A large dataset for research in unstructured multi-turn dialogue systems. In SIGdial.
Hongyuan Mei, Mohit Bansal, and Matthew R. Walter. 2016. What to talk about and how? Selective generation using LSTMs with coarse-to-fine alignment. In NAACL.
Tomáš Mikolov, Martin Karafiát, Lukáš Burget, Jan Černocký, and Sanjeev Khudanpur. 2010. Recurrent neural network based language model. In InterSpeech.
Tomáš Mikolov, Stefan Kombrink, Lukáš Burget, Jan H. Černocký, and Sanjeev Khudanpur. 2011. Extensions of recurrent neural network language model. In ICASSP.
Mike Mintz, Steven Bills, Rion Snow, and Dan Jurafsky. 2009. Distant supervision for relation extraction without labeled data. In ACL.
Nikola Mrkšić, Diarmuid Ó Séaghdha, Blaise Thomson, Milica Gašić, Pei-Hao Su, David Vandyke, Tsung-Hsien Wen, and Steve Young. 2015. Multi-domain Dialog State Tracking using Recurrent Neural Networks. In ACL.
Kishore Papineni, Salim Roukos, Todd Ward, and Wei-Jing Zhu. 2002. BLEU: a method for automatic evaluation of machine translation. In ACL.
Iulian Vlad Serban, Ryan Lowe, Laurent Charlin, and Joelle Pineau. 2015a. A survey of available corpora for building data-driven dialogue systems. arXiv preprint:1512.05742.
Iulian Vlad Serban, Alessandro Sordoni, Yoshua Bengio, Aaron C. Courville, and Joelle Pineau. 2015b. Hierarchical neural network generative models for movie dialogues. In AAAI.
Lifeng Shang, Zhengdong Lu, and Hang Li. 2015. Neural responding machine for short-text conversation. In ACL.
Rion Snow, Daniel Jurafsky, and Andrew Y. Ng. 2004. Learning syntactic patterns for automatic hypernym discovery. In NIPS.
Amanda Stent, Matthew Marge, and Mohit Singhai. 2005. Evaluating evaluation methods for generation in the presence of variation. In CICLing 2005.
Pei-Hao Su, David Vandyke, Milica Gasic, Dongho Kim, Nikola Mrksic, Tsung-Hsien Wen, and Steve J. Young. 2015. Learning from real users: Rating dialogue success with neural networks for reinforcement learning in spoken dialogue systems. In Interspeech.
Sainbayar Sukhbaatar, Arthur Szlam, Jason Weston, and Rob Fergus. 2015. End-to-end memory networks. In NIPS.
Ilya Sutskever, James Martens, and Geoffrey E. Hinton. 2011. Generating text with recurrent neural networks. In ICML.
Ilya Sutskever, Oriol Vinyals, and Quoc V. Le. 2014. Sequence to sequence learning with neural networks. In NIPS.
David R. Traum. 1999. Foundations of Rational Agency, chapter Speech Acts for Dialogue Agents. Springer.
Oriol Vinyals and Quoc V. Le. 2015. A neural conversational model. In ICML Deep Learning Workshop.
Tsung-Hsien Wen, Milica Gašić, Dongho Kim, Nikola Mrkšić, Pei-Hao Su, David Vandyke, and Steve Young. 2015a. Stochastic language generation in dialogue using recurrent neural networks with convolutional sentence reranking. In SIGdial.
Tsung-Hsien Wen, Milica Gašić, Nikola Mrkšić, Pei-Hao Su, David Vandyke, and Steve Young. 2015b. Semantically conditioned LSTM-based natural language generation for spoken dialogue systems. In EMNLP.
Tsung-Hsien Wen, Milica Gašić, Nikola Mrkšić, Pei-Hao Su, Stefan Ultes, David Vandyke, and Steve Young. 2016a. A network-based end-to-end trainable task-oriented dialogue system. arXiv preprint:1604.04562.
Tsung-Hsien Wen, Milica Gašić, Nikola Mrkšić, Pei-Hao Su, David Vandyke, and Steve Young. 2016b. Multi-domain neural network language generation for spoken dialogue systems. In NAACL-HLT.
Kelvin Xu, Jimmy Ba, Ryan Kiros, Kyunghyun Cho, Aaron C. Courville, Ruslan Salakhutdinov, Richard S. Zemel, and Yoshua Bengio. 2015. Show, attend and tell: Neural image caption generation with visual attention. In ICML.
Pengcheng Yin, Zhengdong Lu, Hang Li, and Ben Kao. 2016. Neural enquirer: Learning to query tables. In IJCAI.
Steve Young, Milica Gašić, Simon Keizer, François Mairesse, Jost Schatzmann, Blaise Thomson, and Kai Yu. 2010. The hidden information state model: A practical framework for POMDP-based spoken dialogue management. Computer, Speech and Language.
Steve Young, Milica Gašić, Blaise Thomson, and Jason D. Williams. 2013. POMDP-based statistical spoken dialog systems: A review. Proceedings of the IEEE.

Appendix: More snapshot neuron visualisation

Figure 5: More example visualisations of snapshot neurons and different generated responses.
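The snapshot neurons visualised in these figures are trained with a companion cross-entropy objective applied to the conditioning vector, alongside the usual language-model loss. The Python sketch below illustrates such a joint objective on toy inputs. It is a minimal, non-authoritative sketch built on several illustrative assumptions that are not the exact formulation used in the experiments. The function names are hypothetical. The labelling rule is one plausible heuristic: a slot's label stays 1 until its delexicalised token, such as [v.address], has been generated, and drops to 0 afterwards. The two terms are assumed to be combined as an unweighted sum. Snapshot activations are taken to be sigmoid outputs strictly inside (0, 1).

```python
import math
from typing import List, Sequence


def lm_loss(word_probs: Sequence[Sequence[float]], targets: Sequence[int]) -> float:
    """Language-model term: -sum_t log p_t[y_t] over the response tokens."""
    return -sum(math.log(p_t[y_t]) for p_t, y_t in zip(word_probs, targets))


def snapshot_labels(tokens: Sequence[str], slot_tokens: Sequence[str]) -> List[List[int]]:
    """Hypothetical heuristic labels m_t: for each slot token, 1 while it has
    not appeared before step t, 0 once it has already been generated."""
    labels = []
    for t in range(len(tokens)):
        generated = set(tokens[:t])  # tokens emitted before step t
        labels.append([0 if slot in generated else 1 for slot in slot_tokens])
    return labels


def snapshot_loss(activations: Sequence[Sequence[float]],
                  labels: Sequence[Sequence[int]]) -> float:
    """Companion term: binary cross-entropy between sigmoid snapshot
    activations s_t (assumed strictly inside (0, 1)) and labels m_t."""
    total = 0.0
    for s_t, m_t in zip(activations, labels):
        for s, m in zip(s_t, m_t):
            total -= m * math.log(s) + (1 - m) * math.log(1.0 - s)
    return total


def joint_loss(word_probs, targets, activations, labels) -> float:
    """Joint objective, assumed here to be an unweighted sum of both terms."""
    return lm_loss(word_probs, targets) + snapshot_loss(activations, labels)


if __name__ == "__main__":
    tokens = ["the", "address", "is", "[v.address]", "."]
    print(snapshot_labels(tokens, ["[v.address]"]))
    # → [[1], [1], [1], [1], [0]]
```

The companion term only adds a per-step binary cross-entropy on a subset of conditioning neurons. A term of this kind could in principle be attached to any of the compared architectures, which fits the architecture-independent gains noted in the conclusion.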
