Top-N Recommender System via Matrix Completion


Authors: Zhao Kang, Chong Peng, Qiang Cheng

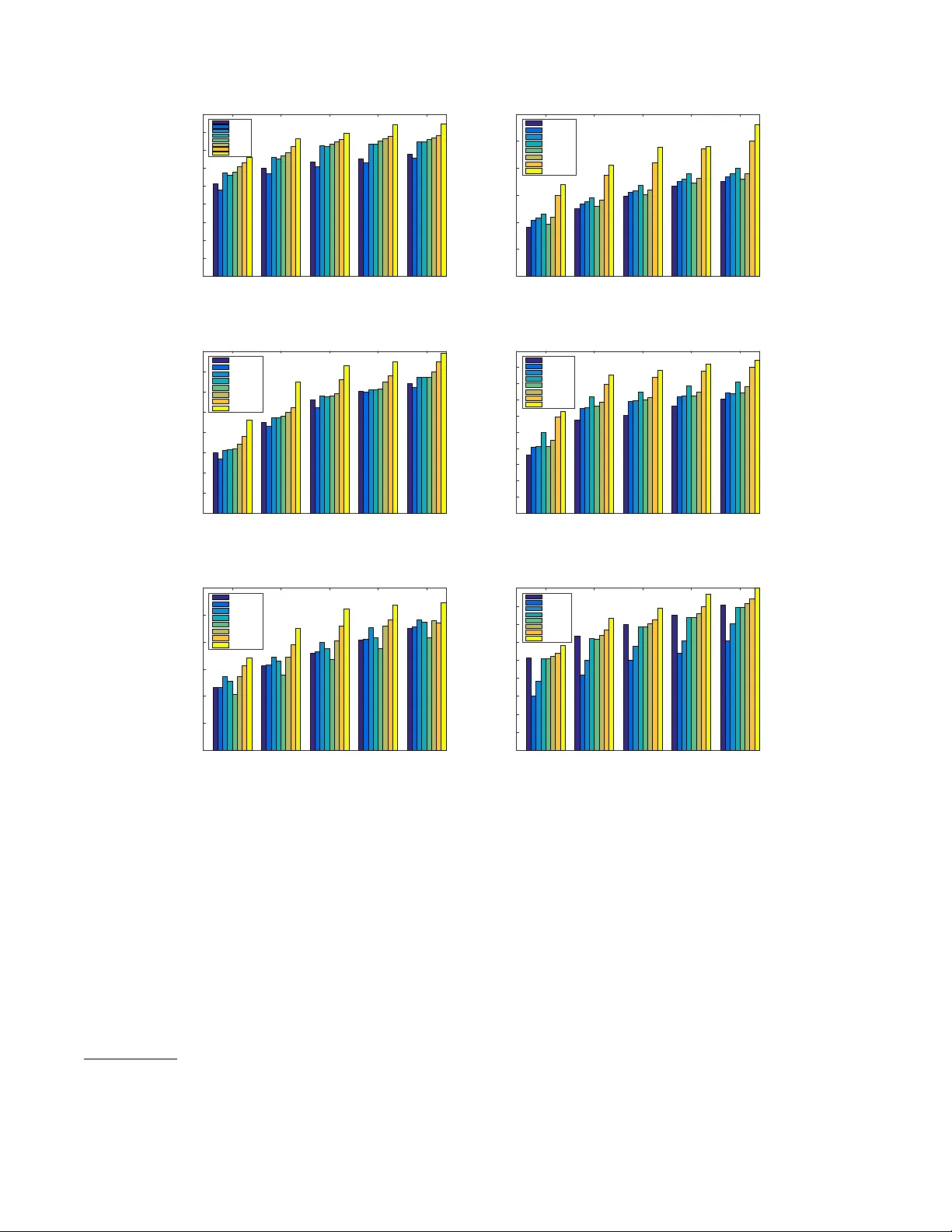

Department of Computer Science, Southern Illinois University, Carbondale, IL 62901, USA
{Zhao.Kang, pchong, qcheng}@siu.edu

Abstract

Top-N recommender systems have been investigated widely both in industry and academia. However, the recommendation quality is far from satisfactory. In this paper, we propose a simple yet promising algorithm. We fill the user-item matrix based on a low-rank assumption and simultaneously keep the original information. To do that, a nonconvex rank relaxation rather than the nuclear norm is adopted to provide a better rank approximation, and an efficient optimization strategy is designed. A comprehensive set of experiments on real datasets demonstrates that our method pushes the accuracy of Top-N recommendation to a new level.

Introduction

The growth of online markets has made it increasingly difficult for people to find items that are interesting and useful to them. Top-N recommender systems have been widely adopted by the majority of e-commerce websites to recommend size-N ranked lists of items that best fit customers' personal tastes and special needs (Linden, Smith, and York 2003). They work by estimating a consumer's response to new items, based on historical information, and suggesting to the consumer novel items for which the predicted response is high. In general, historical information can be obtained explicitly, for example through ratings/reviews, or implicitly, from purchase history or access patterns (Desrosiers and Karypis 2011).

Over the past decade, a variety of approaches have been proposed for Top-N recommender systems (Ricci, Rokach, and Shapira 2011). They can be roughly divided into three categories: neighborhood-based collaborative filtering, model-based collaborative filtering, and ranking-based methods.
The general principle of neighborhood-based methods is to identify the similarities among users/items (Deshpande and Karypis 2004). For example, item-based k-nearest-neighbor (ItemKNN) collaborative filtering methods first identify a set of similar items for each of the items that the consumer has purchased, and then recommend Top-N items based on those similar items. However, they suffer from low accuracy since they employ few item characteristics.

Copyright © 2016, Association for the Advancement of Artificial Intelligence (www.aaai.org). All rights reserved.

Model-based methods build a model and then generate recommendations. For instance, the widely studied matrix factorization (MF) methods employ the user-item similarities in their latent space to extract the user-item purchase patterns. The pure singular-value-decomposition-based (PureSVD) matrix factorization method (Cremonesi, Koren, and Turrin 2010) characterizes users and items by the most principal singular vectors of the user-item matrix. The weighted regularized matrix factorization (WRMF) method (Pan et al. 2008; Hu, Koren, and Volinsky 2008) applies a weighting matrix to differentiate the contributions of observed purchase/rating activities from those of unobserved ones.

The third category of methods relies on ranking/retrieval criteria. Here, Top-N recommendation is treated as a ranking problem. A Bayesian personalized ranking (BPR) criterion (Rendle et al. 2009), which is the maximum posterior estimator from a Bayesian analysis, is used to measure the difference between the rankings of user-purchased items and the remaining items. BPR can be combined with ItemKNN (BPRKNN) and with MF (BPRMF). One common drawback of these approaches is low recommendation quality.

Recently, a novel Top-N recommendation method, SLIM (Ning and Karypis 2011), has been proposed.
From the user-item matrix $X$ of size $m \times n$, it first learns a sparse aggregation coefficient matrix $W \in \mathbb{R}_+^{n \times n}$ by encoding each item as a linear combination of all other items and solving an $\ell_1$-norm and $\ell_2$-norm regularized optimization problem. Each entry $w_{ij}$ describes the similarity between items $i$ and $j$. SLIM has obtained better recommendation accuracy than the other state-of-the-art methods. However, SLIM can only capture relations between items that are co-purchased/co-rated by at least one user, while an intrinsic characteristic of recommender systems is sparsity, because users typically rate only a small portion of the available items.

To overcome this limitation, LorSLIM (Cheng, Yin, and Yu 2014) additionally imposes a low-rank constraint on $W$. It solves the following problem:

$$\min_{W} \ \frac{1}{2}\|X - XW\|_F^2 + \frac{\beta}{2}\|W\|_F^2 + \lambda\|W\|_1 + \gamma\|W\|_* \quad \text{s.t.}\ W \ge 0,\ \operatorname{diag}(W) = 0,$$

where $\|W\|_*$ is the nuclear norm of $W$, defined as the sum of its singular values. The low-rank structure is motivated by the factor model $W \approx FF^T$, in which a few latent variables (the columns of $F$) explain the items' features, so that $W$ is of low rank. After $W$ is obtained, the recommendation score of user $i$ for an un-purchased/-rated item $j$ (i.e., $x_{ij} = 0$) is $\hat{x}_{ij} = x_i^T w_j$, where $x_i^T$ is the $i$-th row of $X$ and $w_j$ is the $j$-th column of $W$. Thus $\hat{X} = XW$. LorSLIM can model the relations between items even on sparse datasets and thus improves performance.

To further boost the accuracy of Top-N recommender systems, we first fill the missing ratings by solving a nonconvex optimization problem, based on the assumption that users' ratings are affected by only a few factors and the resulting rating matrix should be of low rank (Lee et al. 2014), and then make the Top-N recommendation.
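For contrast with the completion-based filling just described, the LorSLIM objective and its scoring rule $\hat{X} = XW$ can be sketched in a few lines of NumPy. This is a minimal illustration, not LorSLIM's solver: it only evaluates the objective for a given $W$ and computes scores. The function names `lorslim_objective` and `slim_scores` are hypothetical, and `gamma` here is LorSLIM's nuclear-norm weight, not the growth rate used later in Algorithm 1.

```python
import numpy as np


def lorslim_objective(X, W, beta, lam, gamma):
    """1/2||X - XW||_F^2 + beta/2||W||_F^2 + lam||W||_1 + gamma||W||_*.

    Returns +inf when W violates the constraints W >= 0, diag(W) = 0.
    """
    if np.any(W < 0) or np.any(np.diag(W) != 0):
        return np.inf
    fit = 0.5 * np.linalg.norm(X - X @ W, "fro") ** 2
    ridge = 0.5 * beta * np.linalg.norm(W, "fro") ** 2
    l1 = lam * np.abs(W).sum()
    nuclear = gamma * np.linalg.norm(W, "nuc")  # sum of singular values
    return fit + ridge + l1 + nuclear


def slim_scores(X, W):
    """Score matrix X_hat = X W, i.e. x_hat_ij = x_i^T w_j."""
    return X @ W


# Toy data: 2 users, 3 items; W is nonnegative with a zero diagonal.
X = np.array([[1.0, 0.0, 1.0],
              [0.0, 1.0, 0.0]])
W = np.array([[0.0, 0.5, 0.2],
              [0.3, 0.0, 0.1],
              [0.4, 0.6, 0.0]])
print(slim_scores(X, W)[0])  # user 0's scores for items 0, 1, 2
```

Only the entries with $x_{ij} = 0$ would be ranked for recommendation; the zero diagonal keeps an item from trivially scoring itself.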
Our filling strategy differs from previous approaches: middle values of the rating ranges, or the average user or item ratings, are commonly utilized to fill in the missing ratings (Breese, Heckerman, and Kadie 1998; Deshpande and Karypis 2004); a more reliable approach utilizes content information (Melville, Mooney, and Nagarajan 2002; Li and Zaïane 2004; Degemmis, Lops, and Semeraro 2007), for example, the missing ratings are provided by autonomous agents called filterbots (Good et al. 1999), which rate items based on some specific characteristics of their content; a low-rank matrix factorization approach seeks to approximate $X$ by a product of low-rank factors (Yu et al. 2009). Experimental results demonstrate the superior recommendation quality of our approach.

Due to the inherent computational complexity of rank problems, the nonconvex rank function is often relaxed to its convex surrogate, i.e., the nuclear norm (Candès and Recht 2009; Recht, Xu, and Hassibi 2008). However, this substitution is not always valid and can lead to a biased solution (Shi and Yu 2011; Kang, Peng, and Cheng 2015b). Matrix completion with nuclear norm regularization can be significantly hurt when entries of the matrix are sampled non-uniformly (Srebro and Salakhutdinov 2010). Nonconvex rank approximation has received significant attention (Zhong et al. 2015; Kang and Cheng 2015). Thus, we use the log-determinant (log det) function to approximate the rank function and design an effective optimization algorithm.

Problem Formulation

The incomplete user-item purchase/rating matrix is denoted as $M$ of size $m \times n$. $M_{ij}$ is 1 or a positive value if user $i$ has ever purchased/rated item $j$; otherwise it is 0. Our goal is to reconstruct a full matrix $X$, which is supposed to be low-rank. Consider the following matrix completion problem:

$$\min_{X} \ \log\det\big((X^T X)^{1/2} + I\big) \quad \text{s.t.}\ X_{ij} = M_{ij},\ (i,j) \in \Omega, \tag{1}$$

where $\Omega$ is the set of locations corresponding to the observed entries and $I \in \mathbb{R}^{n \times n}$ is an identity matrix. Writing $\sigma_i(X)$ for the singular values of $X$, we have $\log\det((X^T X)^{1/2} + I) = \sum_i \log(1 + \sigma_i(X))$; since $\log(1 + \sigma) \le \sigma$ for every $\sigma \ge 0$, it follows that $\log\det((X^T X)^{1/2} + I) \le \|X\|_*$, i.e., log det is a tighter rank approximation function than the nuclear norm. log det also helps mitigate another inherent disadvantage of the nuclear norm, i.e., the imbalanced penalization of different singular values (Kang, Peng, and Cheng 2015a). Previously, $\log\det(X + \delta I)$ was suggested to restrict the rank of a positive semidefinite matrix $X$ (Fazel, Hindi, and Boyd 2003), but positive semidefiniteness is not guaranteed for more general $X$; moreover, $\delta$ is required to be small, which leads to a significantly biased approximation for small singular values. Compared to some other nonconvex relaxations in the literature (Lu et al. 2014), our formulation enjoys simplicity and efficacy.

Methodology

Considering that the user-item matrix is often nonnegative, we add the nonnegativity constraint $X \ge 0$ (i.e., element-wise nonnegativity) for easy interpretation of the representation. Let $\mathcal{P}_\Omega$ be the orthogonal projection operator onto the span of matrices vanishing outside of $\Omega$ (i.e., on $\Omega^c$), so that

$$(\mathcal{P}_\Omega(X))_{ij} = \begin{cases} X_{ij}, & (i,j) \in \Omega, \\ 0, & (i,j) \in \Omega^c. \end{cases}$$

Problem (1) can be reformulated as

$$\min_{X} \ \log\det\big((X^T X)^{1/2} + I\big) + l_{\mathbb{R}_+}(X) \quad \text{s.t.}\ \mathcal{P}_\Omega(X) = \mathcal{P}_\Omega(M), \tag{2}$$

where $l_{\mathbb{R}_+}$ is the indicator function, defined element-wise as

$$l_{\mathbb{R}_+}(x) = \begin{cases} 0, & x \ge 0, \\ +\infty, & \text{otherwise}. \end{cases}$$

Notice that this is a nonconvex optimization problem, which is not easy to solve in general. Here we develop an effective optimization strategy based on the augmented Lagrange multiplier (ALM) method. By introducing an auxiliary variable $Y$, problem (2) has the following equivalent form:

$$\min_{X,Y} \ \log\det\big((X^T X)^{1/2} + I\big) + l_{\mathbb{R}_+}(Y) \quad \text{s.t.}\ \mathcal{P}_\Omega(X) = \mathcal{P}_\Omega(M),\ X = Y, \tag{3}$$

which has an augmented Lagrangian function of the form

$$\mathcal{L}(X, Y, Z) = \log\det\big((X^T X)^{1/2} + I\big) + l_{\mathbb{R}_+}(Y) + \frac{\mu}{2}\Big\|X - Y + \frac{Z}{\mu}\Big\|_F^2 \quad \text{s.t.}\ \mathcal{P}_\Omega(X) = \mathcal{P}_\Omega(M), \tag{4}$$

where $Z$ is a Lagrange multiplier and $\mu > 0$ is a penalty parameter. Then we can apply the alternating minimization idea to update $X$ and $Y$, i.e., update one of the two variables with the other fixed. Given the current point $X_t, Y_t, Z_t$, we update $X_{t+1}$ by solving

$$X_{t+1} = \arg\min_{X} \ \log\det\big((X^T X)^{1/2} + I\big) + \frac{\mu_t}{2}\Big\|X - Y_t + \frac{Z_t}{\mu_t}\Big\|_F^2. \tag{5}$$

This can be converted to scalar minimization problems due to the following theorem (Kang et al. 2015).

Algorithm 1: Solve (3)
Input: original incomplete data matrix $M_\Omega \in \mathbb{R}^{m \times n}$, parameters $\mu_0 > 0$, $\gamma > 1$.
Initialize: $Y = \mathcal{P}_\Omega(M)$, $Z = 0$.
REPEAT
1: Obtain $X$ through (10).
2: Update $Y$ as in (12).
3: Update the Lagrange multiplier $Z$ by $Z_{t+1} = Z_t + \mu_t(X_{t+1} - Y_{t+1})$.
4: Update the parameter $\mu_t$ by $\mu_{t+1} = \gamma\mu_t$.
UNTIL the stopping criterion is met.

Theorem 1. If $F(Z)$ is a unitarily invariant function and the SVD of $A$ is $A = U\Sigma_A V^T$, then the optimal solution to the following problem

$$\min_{Z} \ F(Z) + \frac{\beta}{2}\|Z - A\|_F^2 \tag{6}$$

is $Z^*$ with SVD $U\Sigma_Z^* V^T$, where $\Sigma_Z^* = \operatorname{diag}(\sigma^*)$; moreover, if $F(Z) = f \circ \sigma(Z)$, where $\sigma(Z)$ is the vector of nonincreasing singular values of $Z$, then $\sigma^*$ is obtained by using the Moreau-Yosida proximity operator $\sigma^* = \operatorname{prox}_{f,\beta}(\sigma_A)$, where $\sigma_A := \operatorname{diag}(\Sigma_A)$ and

$$\operatorname{prox}_{f,\beta}(\sigma_A) := \arg\min_{\sigma \ge 0} \ f(\sigma) + \frac{\beta}{2}\|\sigma - \sigma_A\|_2^2. \tag{7}$$

According to the first-order optimality condition, the gradient of the objective function of (7) with respect to each singular value should vanish. For the log det function, $f(\sigma) = \sum_i \log(1 + \sigma_i)$, so we have

$$\frac{1}{1 + \sigma_i} + \beta(\sigma_i - \sigma_{i,A}) = 0 \quad \text{s.t.}\ \sigma_i \ge 0. \tag{8}$$

Multiplying (8) by $1 + \sigma_i > 0$ gives the quadratic equation $\beta\sigma_i^2 + \beta(1 - \sigma_{i,A})\sigma_i + (1 - \beta\sigma_{i,A}) = 0$, which has two roots. If $\sigma_{i,A} = 0$, the minimizer $\sigma_i^*$ will be 0; otherwise, there exists a unique minimizer.
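The pieces above can be combined into a compact NumPy sketch of Algorithm 1, including the observed-entry reset (10), the Y-update (12), and the Top-N ranking step described after them. It is a minimal illustration under stated assumptions, not the authors' released code: $\Omega$ is taken to be the set of nonzero entries of $M$, as in the problem formulation; a full `np.linalg.svd` is used instead of a truncated SVD; and because the paper does not spell out its stopping criterion, the loop simply stops once $\mu$ reaches a hypothetical cap `mu_max`. The scalar step solves (8) in closed form and, rather than assuming which stationary point wins, compares the larger root with the boundary candidate $\sigma = 0$.

```python
import numpy as np


def logdet_prox(sigma_a, beta):
    """Elementwise argmin_{s >= 0} log(1 + s) + beta/2 * (s - a)^2, cf. (7)-(8)."""
    a = np.asarray(sigma_a, dtype=float)
    # (8) multiplied by (1 + s): beta*s^2 + beta*(1 - a)*s + (1 - beta*a) = 0.
    disc = (a + 1.0) ** 2 - 4.0 / beta
    root = 0.5 * ((a - 1.0) + np.sqrt(np.maximum(disc, 0.0)))  # larger root
    root = np.where(disc >= 0.0, np.maximum(root, 0.0), 0.0)

    def objective(s):
        return np.log1p(s) + 0.5 * beta * (s - a) ** 2

    # Keep the root only where it beats the boundary point s = 0.
    return np.where(objective(root) < objective(0.0), root, 0.0)


def complete_matrix(M, mu0=1e-2, gamma=1.5, mu_max=1e6, max_iter=500):
    """ALM iterations of Algorithm 1 (sketch); mu_max is a hypothetical stopping rule."""
    M = np.asarray(M, dtype=float)
    observed = M > 0                      # assumption: Omega = nonzero entries of M
    Y = np.where(observed, M, 0.0)        # Y = P_Omega(M)
    Z = np.zeros_like(M)
    X = Y.copy()
    mu, it = mu0, 0
    while mu < mu_max and it < max_iter:
        # (5)-(9): shrink the singular values of A = Y_t - Z_t / mu_t.
        U, s, Vt = np.linalg.svd(Y - Z / mu, full_matrices=False)
        X = (U * logdet_prox(s, mu)) @ Vt
        X = np.where(observed, M, X)      # (10): restore the observed entries
        Y = np.maximum(X + Z / mu, 0.0)   # (12): element-wise nonnegative part
        Z = Z + mu * (X - Y)              # step 3: multiplier update
        mu *= gamma                       # step 4: mu_{t+1} = gamma * mu_t
        it += 1
    return X


def top_n(X_hat, M, user, n=10):
    """Rank the user's non-purchased/-rated items by estimated score."""
    candidates = np.flatnonzero(np.asarray(M)[user] == 0)
    order = np.argsort(-X_hat[user, candidates], kind="stable")
    return candidates[order][:n].tolist()


# Toy user-item matrix (0 = missing).
M = np.array([[5, 3, 0, 1],
              [4, 0, 0, 1],
              [1, 1, 0, 5],
              [1, 0, 0, 4],
              [0, 1, 5, 4]], dtype=float)
X_hat = complete_matrix(M)
print(top_n(X_hat, M, user=1, n=2))
```

With a very small `mu0` and nonnegative $M$, the first iterations leave $\mathcal{P}_\Omega(M)$ unchanged, because every singular value is shrunk to zero until $\mu$ has grown enough; the geometric schedule then takes over.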
Finally, with $U$ and $V$ taken from the SVD of $A = Y_t - Z_t/\mu_t$ and $\beta = \mu_t$, we obtain the update of the $X$ variable:

$$X_{t+1} = U \operatorname{diag}(\sigma^*) V^T. \tag{9}$$

Then we fix the values at the observed entries and obtain

$$X_{t+1} = \mathcal{P}_{\Omega^c}(X_{t+1}) + \mathcal{P}_\Omega(M). \tag{10}$$

To update $Y$, we need to solve

$$\min_{Y} \ l_{\mathbb{R}_+}(Y) + \frac{\mu_t}{2}\Big\|X_{t+1} - Y + \frac{Z_t}{\mu_t}\Big\|_F^2, \tag{11}$$

which yields the updating rule

$$Y_{t+1} = \max\big(X_{t+1} + Z_t/\mu_t,\ 0\big). \tag{12}$$

Here $\max(\cdot)$ is an element-wise operator. The complete procedure is outlined in Algorithm 1.

To use the estimated matrix $\hat{X}$ to make recommendations for user $i$, we simply sort $i$'s non-purchased/-rated items by their scores in decreasing order and recommend the Top-N items.

Table 1: The datasets used in evaluation

| dataset | #users | #items | #trns | rsize | csize | density | ratings |
|---|---|---|---|---|---|---|---|
| Delicious | 1300 | 4516 | 17550 | 13.50 | 3.89 | 0.29% | - |
| lastfm | 8813 | 6038 | 332486 | 37.7 | 55.07 | 0.62% | - |
| BX | 4186 | 7733 | 182057 | 43.49 | 23.54 | 0.56% | - |
| ML100K | 943 | 1682 | 100000 | 106.04 | 59.45 | 6.30% | 1-10 |
| Netflix | 6769 | 7026 | 116537 | 17.21 | 16.59 | 0.24% | 1-5 |
| Yahoo | 7635 | 5252 | 212772 | 27.87 | 40.51 | 0.53% | 1-5 |

The "#users", "#items", and "#trns" columns show the number of users, number of items, and number of transactions, respectively, in each dataset. The "rsize" and "csize" columns are the average number of ratings for each user and for each item (i.e., row density and column density of the user-item matrix), respectively. The "density" column shows the density of each dataset (i.e., density = #trns/(#users × #items)). The "ratings" column is the rating range of each dataset, with granularity 1.

Experimental Evaluation

Datasets

We evaluate the performance of our method on six different real datasets whose characteristics are summarized in Table 1. These datasets come from different sources and have different sparsity levels. They can be broadly categorized into two classes. The first class includes Delicious, lastfm, and BX. These three datasets have only implicit feedback (e.g., listening history), i.e., they are represented by binary matrices.
In particular, Delicious was derived from the bookmarking and tagging information of a set of 2K users from the Delicious social bookmarking system¹, such that each URL was bookmarked by at least 3 users. Lastfm corresponds to music artist listening information obtained from the last.fm online music system², in which each music artist was listened to by at least 10 users and each user listened to at least 5 artists. BX is a subset of the Book-Crossing dataset³ such that only implicit interactions were contained and each book was read by at least 10 users.

The second class contains ML100K, Netflix, and Yahoo. All these datasets contain multi-valued ratings. Specifically, the ML100K dataset corresponds to movie ratings and is a subset of the MovieLens research project⁴. Netflix is a subset of data extracted from the Netflix Prize dataset⁵, in which each user rated at least 10 movies. The Yahoo dataset is a subset obtained from Yahoo! Movies user ratings⁶. In this dataset, each user rated at least 5 movies and each movie was rated by at least 3 users.

Evaluation Methodology

We employ 5-fold cross-validation to demonstrate the efficacy of our proposed approach.
For each run, each of the datasets is split into training and test sets by randomly selecting one of the non-zero entries for each user to be part of the test set⁷. The training set is used to train a model, and then a size-N ranked list of recommended items is generated for each user. The model is evaluated by comparing each user's recommendation list with that user's item in the test set. For the results reported in this paper, N is equal to 10.

¹ http://www.delicious.com
² http://www.last.fm
³ http://www.informatik.uni-freiburg.de/~cziegler/BX/
⁴ http://grouplens.org/datasets/movielens/
⁵ http://www.netflixprize.com/
⁶ http://webscope.sandbox.yahoo.com/catalog.php?datatype=r

Table 2: Comparison of Top-N recommendation algorithms

| method | Delicious params | HR | ARHR | lastfm params | HR | ARHR |
|---|---|---|---|---|---|---|
| ItemKNN | 300 | 0.300 | 0.179 | 100 | 0.125 | 0.075 |
| PureSVD | 1000, 10 | 0.285 | 0.172 | 200, 10 | 0.134 | 0.078 |
| WRMF | 250, 5 | 0.330 | 0.198 | 100, 3 | 0.138 | 0.078 |
| BPRKNN | 1e-4, 0.01 | 0.326 | 0.187 | 1e-4, 0.01 | 0.145 | 0.083 |
| BPRMF | 300, 0.1 | 0.335 | 0.183 | 100, 0.1 | 0.129 | 0.073 |
| SLIM | 10, 1 | 0.343 | 0.213 | 5, 0.5 | 0.141 | 0.082 |
| LorSLIM | 10, 1, 3, 3 | 0.360 | 0.227 | 5, 1, 3, 3 | 0.187 | 0.105 |
| Our | 250, 4 | **0.382** | **0.241** | 0.03, 1.5 | **0.206** | **0.113** |

| method | BX params | HR | ARHR | ML100K params | HR | ARHR |
|---|---|---|---|---|---|---|
| ItemKNN | 400 | 0.045 | 0.026 | 10 | 0.287 | 0.124 |
| PureSVD | 3000, 10 | 0.043 | 0.023 | 100, 10 | 0.324 | 0.132 |
| WRMF | 400, 5 | 0.047 | 0.027 | 50, 1 | 0.327 | 0.133 |
| BPRKNN | 1e-3, 0.01 | 0.047 | 0.028 | 2e-4, 1e-4 | 0.359 | 0.150 |
| BPRMF | 400, 0.1 | 0.048 | 0.027 | 200, 0.1 | 0.330 | 0.135 |
| SLIM | 20, 0.5 | 0.050 | 0.029 | 2, 2 | 0.343 | 0.147 |
| LorSLIM | 50, 0.5, 2, 3 | 0.052 | 0.031 | 10, 8, 5, 3 | 0.397 | 0.207 |
| Our | 1.2e-3, 1.3 | **0.065** | **0.043** | 6e-3, 2.5 | **0.428** | **0.215** |

| method | Netflix params | HR | ARHR | Yahoo params | HR | ARHR |
|---|---|---|---|---|---|---|
| ItemKNN | 200 | 0.156 | 0.085 | 300 | 0.318 | 0.185 |
| PureSVD | 500, 10 | 0.158 | 0.089 | 2000, 10 | 0.210 | 0.118 |
| WRMF | 300, 5 | 0.172 | 0.095 | 100, 4 | 0.250 | 0.128 |
| BPRKNN | 2e-3, 0.01 | 0.165 | 0.090 | 0.02, 1e-3 | 0.310 | 0.182 |
| BPRMF | 300, 0.1 | 0.140 | 0.072 | 300, 0.1 | 0.308 | 0.180 |
| SLIM | 5, 1.0 | 0.173 | 0.098 | 10, 1 | 0.320 | 0.187 |
| LorSLIM | 10, 3, 5, 3 | 0.196 | 0.111 | 10, 1, 2, 3 | 0.334 | 0.191 |
| Our | 0.015, 1.2 | **0.226** | **0.127** | 5e-3, 1.1 | **0.367** | **0.218** |

The parameters for each method are as follows. ItemKNN: the number of neighbors $k$; PureSVD: the number of singular values and the number of iterations during SVD; WRMF: the dimension of the latent space and the weight on purchases; BPRKNN: the learning rate and the regularization parameter $\lambda$; BPRMF: the dimension of the latent space and the learning rate; SLIM: the $\ell_2$-norm regularization parameter $\beta$ and the $\ell_1$-norm regularization parameter $\lambda$; LorSLIM: the $\ell_2$-norm regularization parameter $\beta$, the $\ell_1$-norm regularization parameter $\lambda$, the nuclear norm regularization parameter $z$, and the auxiliary parameter $\rho$; Our: the auxiliary parameters $\mu_0$ and $\gamma$. N in this table is 10. Bold numbers are the best performance in terms of HR and ARHR for each dataset.

Top-N recommendation is more of a ranking problem than a prediction task. The recommendation quality is measured by the hit rate (HR) and the average reciprocal hit-rank (ARHR) (Deshpande and Karypis 2004). They directly measure the performance of the model on the ground-truth data, i.e., what users have already provided feedback for. As pointed out by Ning and Karypis (2011), they are the most direct and meaningful measures in Top-N recommendation scenarios. HR is defined as

$$HR = \frac{\#hits}{\#users}, \tag{13}$$

where #hits is the number of users whose item in the test set is recommended (i.e., hit) in the size-N recommendation list, and #users is the total number of users. An HR value of 1.0 indicates that the algorithm is always able to recommend the hidden item, whereas an HR value of 0.0 denotes that the algorithm is not able to recommend any of the hidden items. A drawback of HR is that it treats all hits equally, regardless of where they appear in the Top-N list.
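The evaluation protocol above, together with HR in (13) and the position-weighted ARHR defined in (14), can be sketched in plain Python. This is an illustrative sketch, not the authors' evaluation code: the helper names are hypothetical, each user is assumed to have at least one purchased/rated item, and the recommendation lists are assumed to already exclude each user's training items.

```python
import random


def leave_one_out_split(user_items, seed=0):
    """Hold out one randomly chosen non-zero entry per user as that user's test item."""
    rng = random.Random(seed)
    train, test = {}, {}
    for user, items in user_items.items():
        items = sorted(items)
        held_out = rng.choice(items)
        test[user] = held_out
        train[user] = [j for j in items if j != held_out]
    return train, test


def hit_rate_and_arhr(rec_lists, test_items):
    """HR = #hits / #users, eq. (13); ARHR averages 1/p_i over users, eq. (14)."""
    hits, reciprocal_sum = 0, 0.0
    for user, held_out in test_items.items():
        ranked = rec_lists[user]          # size-N list, best item first
        if held_out in ranked:
            hits += 1
            reciprocal_sum += 1.0 / (ranked.index(held_out) + 1)  # p_i is 1-based
    n_users = len(test_items)
    return hits / n_users, reciprocal_sum / n_users


test_items = {"u1": "a", "u2": "b", "u3": "c"}
rec_lists = {"u1": ["a", "x"], "u2": ["x", "b"], "u3": ["x", "y"]}
hr, arhr = hit_rate_and_arhr(rec_lists, test_items)
# Two hits (positions 1 and 2) among three users: HR = 2/3, ARHR = (1 + 1/2) / 3 = 0.5.
```

Since every reciprocal position is at most 1, ARHR can never exceed HR, which matches the bounds stated for ARHR.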
ARHR addresses this drawback of HR by rewarding each hit based on where it occurs in the Top-N list. It is defined as follows:

$$ARHR = \frac{1}{\#users} \sum_{i=1}^{\#hits} \frac{1}{p_i}, \tag{14}$$

where $p_i$ is the position of the test item in the ranked Top-N list for the $i$-th hit. That is, hits that occur earlier in the ranked list are weighted higher than those that occur later, and thus ARHR measures how strongly an item is recommended. The highest value of ARHR equals the hit rate and occurs when all the hits occur in the first position, whereas the lowest value equals HR/N, when all the hits occur in the last position of the list.

⁷ We use the same data as in (Cheng, Yin, and Yu 2014), with partitioned datasets kindly provided by Yao Cheng.

Comparison Algorithms

We compare the performance of the proposed method⁸ with seven other state-of-the-art Top-N recommendation algorithms: the item neighborhood-based collaborative filtering method ItemKNN (Deshpande and Karypis 2004), two MF-based methods PureSVD (Cremonesi, Koren, and Turrin 2010) and WRMF (Hu, Koren, and Volinsky 2008), two ranking/retrieval-criteria-based methods BPRMF and BPRKNN (Rendle et al. 2009), SLIM (Ning and Karypis 2011), and LorSLIM (Cheng, Yin, and Yu 2014).

⁸ The implementation of our method is available at: https://github.com/sckangz/recom mc.

Experimental Results

Top-N recommendation performance

We report the comparison results with the other competing methods in Table 2. The results show that our algorithm performs better than all the other methods across all the datasets⁹. Specifically, in terms of HR, our method outperforms ItemKNN, PureSVD, WRMF, BPRKNN, BPRMF, SLIM, and LorSLIM by 41%, 48.14%, 35.40%, 28.69%, 36.57%, 26.26%, and 12.38% on average, respectively, over all six datasets; with respect to ARHR, it improves on ItemKNN, PureSVD, WRMF, BPRKNN, BPRMF, SLIM, and LorSLIM by 48.55%, 60.38%, 48.58%, 37.14%, 49.47%, 31.94%, and 14.15% on average, respectively.

⁹ A bug was found, so the results in the published version have been updated. We apologize for any inconvenience caused.

Among the seven state-of-the-art algorithms, LorSLIM is substantially better than the others. Moreover, among the remaining six methods, SLIM is a little better than the others except on lastfm and ML100K. BPRKNN then performs best among the remaining five methods on average. Among the three MF-based models, BPRMF and WRMF have similar performance on most datasets and are much better than PureSVD on all datasets except lastfm and ML100K.

Recommendation for Different Top-N

Figure 1 shows the performance achieved by the various methods for different values of N on all six datasets.

Figure 1: Performance for different values of N. Panels: (a) Delicious, (b) lastfm, (c) BX, (d) ML100K, (e) Netflix, (f) Yahoo; each panel plots HR against N (5 to 25) for ItemKNN, PureSVD, WRMF, BPRKNN, BPRMF, SLIM, LorSLIM, and Our.
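Because a Top-N list is a prefix of the full ranking, a whole HR-versus-N curve like those in Figure 1 can be computed from a single ranking per user: a held-out item at position p counts as a hit for every N ≥ p, so HR can only grow with N. A minimal sketch, assuming the 1-based rank of each user's held-out item has already been computed; the helper name and the sample ranks are hypothetical, not data from the paper.

```python
def hr_curve(test_ranks, n_values):
    """HR at several list lengths N.

    test_ranks: 1-based rank of each user's held-out item among that user's
    candidate items, or None if the item is never ranked.
    """
    n_users = len(test_ranks)
    return {
        n: sum(1 for r in test_ranks if r is not None and r <= n) / n_users
        for n in n_values
    }


curve = hr_curve([1, 3, 7, 12, None, 30], [5, 10, 15, 20, 25])
# {5: 2/6, 10: 3/6, 15: 4/6, 20: 4/6, 25: 4/6}: non-decreasing in N
```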
Figure 1 demonstrates that the proposed method outperforms the other algorithms in all scenarios. Moreover, the difference in performance between our approach and the other methods is consistently significant. It is interesting to note that LorSLIM, the second most competitive method, may be worse than some of the remaining methods when N is large.

Matrix Reconstruction

We compare our method with LorSLIM by looking at how they reconstruct the user-item matrix. We take ML100K as an example; its density is 6.30% and the mean of its non-zero elements is 3.53. The reconstructed matrix from LorSLIM, $\hat{X} = XW$, has a density of 13.61%, and its non-zero values have a mean of 0.046. Of the 6.30% non-zero entries in $X$, $\hat{X}$ recovers 70.68%, and their mean value is 0.0665. This indicates that much of the original information is lost. In contrast, our approach fully preserves the original information thanks to the constraint in our model. Our method fills in all zero entries, with a mean value of 0.554, which is much higher than 0.046. This suggests that our method recovers $X$ better than LorSLIM, which may explain its superior performance.

Parameter Tuning

Figure 2: Influence of $\mu_0$ and $\gamma$ on HR for the Delicious dataset (x-axis: $\mu_0$ from 0.1 to 3000; y-axis: HR; curves for $\gamma$ = 1.5, 3, and 5).

Although our model is parameter-free, we introduce the auxiliary parameter $\mu$ during the optimization. In the alternating direction method of multipliers (ADMM) (Yang and Yuan 2013), $\mu$ is fixed, and it is not easy to choose an optimal value that balances the computational cost. Thus, a dynamic $\mu$, increasing at a rate of $\gamma$, is preferred in real applications. $\gamma > 1$ controls the convergence speed: the larger $\gamma$ is, the fewer iterations are needed to reach convergence, but some precision may be lost. We show the effects of different initializations $\mu_0$ and of $\gamma$ on HR for the Delicious dataset in Figure 2.
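To make the trade-off concrete: with the update $\mu_{t+1} = \gamma\mu_t$ from Algorithm 1, the number of iterations needed for $\mu$ to grow from $\mu_0$ to any fixed level is about $\log(\mu_{\max}/\mu_0)/\log\gamma$. The sketch below counts these iterations under a hypothetical stopping rule ("stop once $\mu$ reaches `mu_max`"); the paper does not state its criterion, so the cap is an assumption, while the $\gamma$ values are those plotted in Figure 2.

```python
def iterations_until(mu0, gamma, mu_max):
    """Count updates mu <- gamma * mu until mu >= mu_max (hypothetical stopping rule)."""
    if mu0 <= 0 or gamma <= 1:
        raise ValueError("Algorithm 1 requires mu0 > 0 and gamma > 1")
    mu, t = mu0, 0
    while mu < mu_max:
        mu *= gamma
        t += 1
    return t


for gamma in (1.5, 3.0, 5.0):
    print(gamma, iterations_until(1e-2, gamma, 1e6))
# gamma = 1.5 -> 46 iterations, 3.0 -> 17, 5.0 -> 12
```

Since each iteration of Algorithm 1 performs one SVD, fewer iterations presumably account for most of the speed-up obtained with a larger $\gamma$.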
Figure 2 illustrates that our experimental results are not sensitive to $\mu_0$ and $\gamma$, which is reasonable since they are auxiliary parameters that mainly control the convergence speed. In contrast, LorSLIM needs to tune four parameters, which is time-consuming and not easy to do.

Efficiency Analysis

The time complexity of our algorithm comes mainly from the SVD. Exact SVD of an $m \times n$ matrix has a time complexity of $O(\min\{mn^2, m^2n\})$; in this paper, we seek a low-rank matrix and thus only need a few principal singular vectors/values. Packages like PROPACK (Larsen 2004) can compute a rank-$k$ SVD at a cost of $O(\min\{m^2k, n^2k\})$, which can be advantageous when $k \ll m, n$. In fact, our algorithm is much faster than LorSLIM. Among the six datasets, ML100K and lastfm have the smallest and largest sizes, respectively. Our method needs 9 s and 5080 s, respectively, on these two datasets, while LorSLIM takes 617 s and 32974 s. The time is measured on the same machine with an Intel Xeon E3-1240 3.40 GHz CPU that has 4 cores and 8 GB memory, running Ubuntu and MATLAB (R2014a). Furthermore, without losing too much accuracy, a larger $\gamma$ can speed up our algorithm considerably. This is verified by Figure 3, which shows the computational time of our method on Delicious with varying $\gamma$.

Figure 3: Influence of $\gamma$ on time (x-axis: $\gamma$ from 1.5 to 5; y-axis: time in seconds).

Conclusion

In this paper, we present a matrix completion based method for the Top-N recommendation problem. The proposed method recovers the user-item matrix by solving a rank minimization problem. To better approximate the rank, a nonconvex function is applied. We conduct a comprehensive set of experiments on multiple datasets and compare its performance against that of other state-of-the-art Top-N recommendation algorithms. It turns out that our algorithm generates high-quality recommendations, improving considerably on the performance of the other methods.
This makes our approach usable in real-world scenarios.

Acknowledgements

This work is supported by US National Science Foundation Grant IIS 1218712. Q. Cheng is the corresponding author.

References

[Breese, Heckerman, and Kadie 1998] Breese, J. S.; Heckerman, D.; and Kadie, C. 1998. Empirical analysis of predictive algorithms for collaborative filtering. In Proceedings of the Fourteenth Conference on Uncertainty in Artificial Intelligence, 43–52. Morgan Kaufmann Publishers Inc.
[Candès and Recht 2009] Candès, E. J., and Recht, B. 2009. Exact matrix completion via convex optimization. Foundations of Computational Mathematics 9(6):717–772.
[Cheng, Yin, and Yu 2014] Cheng, Y.; Yin, L.; and Yu, Y. 2014. LorSLIM: Low rank sparse linear methods for top-n recommendations. In Data Mining (ICDM), 2014 IEEE International Conference on, 90–99. IEEE.
[Cremonesi, Koren, and Turrin 2010] Cremonesi, P.; Koren, Y.; and Turrin, R. 2010. Performance of recommender algorithms on top-n recommendation tasks. In Proceedings of the Fourth ACM Conference on Recommender Systems, 39–46. ACM.
[Degemmis, Lops, and Semeraro 2007] Degemmis, M.; Lops, P.; and Semeraro, G. 2007. A content-collaborative recommender that exploits WordNet-based user profiles for neighborhood formation. User Modeling and User-Adapted Interaction 17(3):217–255.
[Deshpande and Karypis 2004] Deshpande, M., and Karypis, G. 2004. Item-based top-n recommendation algorithms. ACM Transactions on Information Systems (TOIS) 22(1):143–177.
[Desrosiers and Karypis 2011] Desrosiers, C., and Karypis, G. 2011. A comprehensive survey of neighborhood-based recommendation methods. In Recommender Systems Handbook. Springer. 107–144.
[Fazel, Hindi, and Boyd 2003] Fazel, M.; Hindi, H.; and Boyd, S. P. 2003. Log-det heuristic for matrix rank minimization with applications to Hankel and Euclidean distance matrices. In American Control Conference, 2003. Proceedings of the 2003, volume 3, 2156–2162. IEEE.
[Good et al. 1999] Good, N.; Schafer, J. B.; Konstan, J. A.; Borchers, A.; Sarwar, B.; Herlocker, J.; and Riedl, J. 1999. Combining collaborative filtering with personal agents for better recommendations. In AAAI/IAAI, 439–446.
[Hu, Koren, and Volinsky 2008] Hu, Y.; Koren, Y.; and Volinsky, C. 2008. Collaborative filtering for implicit feedback datasets. In Data Mining, 2008. ICDM'08. Eighth IEEE International Conference on, 263–272. IEEE.
[Kang and Cheng 2015] Kang, Z.; Peng, C.; and Cheng, Q. 2015. Robust PCA via nonconvex rank approximation. In Data Mining (ICDM), 2015 IEEE International Conference on, 211–220. IEEE.
[Kang et al. 2015] Kang, Z.; Peng, C.; Cheng, J.; and Cheng, Q. 2015. LogDet rank minimization with application to subspace clustering. Computational Intelligence and Neuroscience 2015:68.
[Kang, Peng, and Cheng 2015a] Kang, Z.; Peng, C.; and Cheng, Q. 2015a. Robust subspace clustering via smoothed rank approximation. IEEE Signal Processing Letters 22(11):2088–2092.
[Kang, Peng, and Cheng 2015b] Kang, Z.; Peng, C.; and Cheng, Q. 2015b. Robust subspace clustering via tighter rank approximation. ACM CIKM'15.
[Larsen 2004] Larsen, R. M. 2004. PROPACK: Software for large and sparse SVD calculations. Available online. URL http://sun.stanford.edu/~rmunk/PROPACK, 2008–2009.
[Lee et al. 2014] Lee, J.; Bengio, S.; Kim, S.; Lebanon, G.; and Singer, Y. 2014. Local collaborative ranking. In Proceedings of the 23rd International Conference on World Wide Web, 85–96. ACM.
[Li and Zaïane 2004] Li, J., and Zaïane, O. R. 2004. Combining usage, content, and structure data to improve web site recommendation. In E-Commerce and Web Technologies. Springer. 305–315.
[Linden, Smith, and York 2003] Linden, G.; Smith, B.; and York, J. 2003. Amazon.com recommendations: Item-to-item collaborative filtering. IEEE Internet Computing 7(1):76–80.
[Lu et al. 2014] Lu, C.; Tang, J.; Yan, S.; and Lin, Z. 2014. Generalized nonconvex nonsmooth low-rank minimization. In Computer Vision and Pattern Recognition (CVPR), 2014 IEEE Conference on, 4130–4137. IEEE.
[Melville, Mooney, and Nagarajan 2002] Melville, P.; Mooney, R. J.; and Nagarajan, R. 2002. Content-boosted collaborative filtering for improved recommendations. In AAAI/IAAI, 187–192.
[Ning and Karypis 2011] Ning, X., and Karypis, G. 2011. SLIM: Sparse linear methods for top-n recommender systems. In Data Mining (ICDM), 2011 IEEE 11th International Conference on, 497–506. IEEE.
[Pan et al. 2008] Pan, R.; Zhou, Y.; Cao, B.; Liu, N. N.; Lukose, R.; Scholz, M.; and Yang, Q. 2008. One-class collaborative filtering. In Data Mining, 2008. ICDM'08. Eighth IEEE International Conference on, 502–511. IEEE.
[Recht, Xu, and Hassibi 2008] Recht, B.; Xu, W.; and Hassibi, B. 2008. Necessary and sufficient conditions for success of the nuclear norm heuristic for rank minimization. In Decision and Control, 2008. CDC 2008. 47th IEEE Conference on, 3065–3070. IEEE.
[Rendle et al. 2009] Rendle, S.; Freudenthaler, C.; Gantner, Z.; and Schmidt-Thieme, L. 2009. BPR: Bayesian personalized ranking from implicit feedback. In Proceedings of the Twenty-Fifth Conference on Uncertainty in Artificial Intelligence, 452–461. AUAI Press.
[Ricci, Rokach, and Shapira 2011] Ricci, F.; Rokach, L.; and Shapira, B. 2011. Introduction to Recommender Systems Handbook. Springer.
[Shi and Yu 2011] Shi, X., and Yu, P. S. 2011. Limitations of matrix completion via trace norm minimization. ACM SIGKDD Explorations Newsletter 12(2):16–20.
[Srebro and Salakhutdinov 2010] Srebro, N., and Salakhutdinov, R. R. 2010. Collaborative filtering in a non-uniform world: Learning with the weighted trace norm. In Advances in Neural Information Processing Systems, 2056–2064.
[Yang and Yuan 2013] Yang, J., and Yuan, X. 2013. Linearized augmented Lagrangian and alternating direction methods for nuclear norm minimization. Mathematics of Computation 82(281):301–329.
[Yu et al. 2009] Yu, K.; Zhu, S.; Lafferty, J.; and Gong, Y. 2009. Fast nonparametric matrix factorization for large-scale collaborative filtering. In Proceedings of the 32nd International ACM SIGIR Conference on Research and Development in Information Retrieval, 211–218. ACM.
[Zhong et al. 2015] Zhong, X.; Xu, L.; Li, Y.; Liu, Z.; and Chen, E. 2015. A nonconvex relaxation approach for rank minimization problems. In Twenty-Ninth AAAI Conference on Artificial Intelligence.
