Clustering With Side Information: From a Probabilistic Model to a Deterministic Algorithm

In this paper, we propose a model-based clustering method (TVClust) that robustly incorporates noisy side information as soft-constraints and aims to seek a consensus between side information and the observed data. Our method is based on a nonparamet…

Authors: Daniel Khashabi, John Wieting, Jeffrey Yufei Liu, Feng Liang

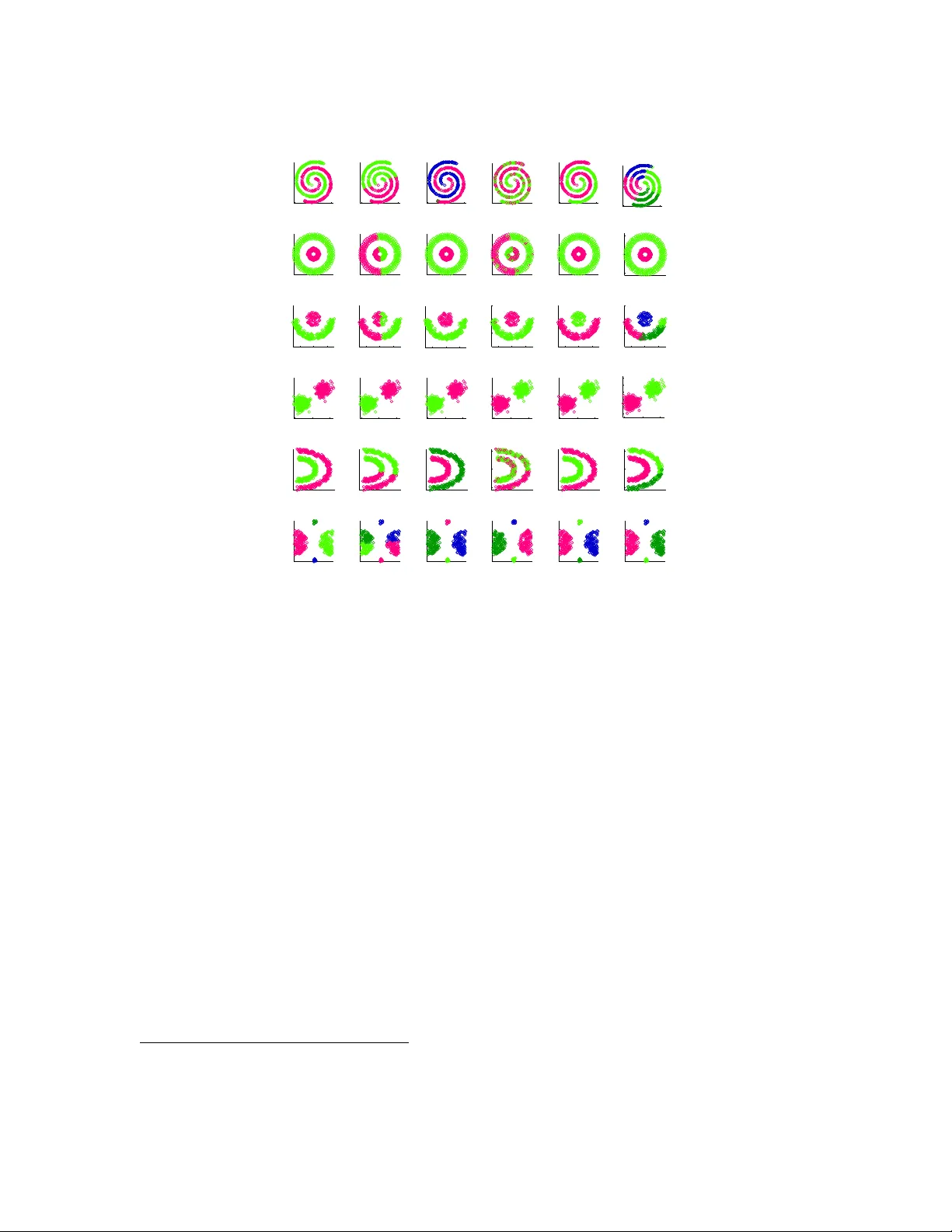

Journal of Machine Learning Research 1 (2000) 1-48. Submitted 4/00; Published 10/00.

Daniel Khashabi (khashab2@illinois.edu)
Department of Computer Science, University of Illinois, Urbana-Champaign, Urbana, IL 61801 USA

John Wieting (wieting2@illinois.edu)
Department of Computer Science, University of Illinois, Urbana-Champaign, Urbana, IL 61801 USA

Jeffrey Yufei Liu (liu105@illinois.edu)
Google, 1600 Amphitheatre Parkway, Mountain View, CA 94043

Feng Liang (liangf@illinois.edu)
Department of Statistics, University of Illinois, Urbana-Champaign, Urbana, IL 61801 USA

Editor: ?

Abstract

In this paper, we propose a model-based clustering method (TVClust) that robustly incorporates noisy side information as soft constraints and aims to seek a consensus between side information and the observed data. Our method is based on a nonparametric Bayesian hierarchical model that combines a probabilistic model for the data instances with one for the side information. An efficient Gibbs sampling algorithm is proposed for posterior inference. Using the small-variance asymptotics of our probabilistic model, we derive a new deterministic clustering algorithm (RDP-means). It can be viewed as an extension of K-means that allows for the inclusion of side information and has the additional property that the number of clusters does not need to be specified a priori. We compare our work with many constrained clustering algorithms from the literature on a variety of data sets and conditions, such as noisy side information and erroneous $k$ values. In our experiments, both the probabilistic and the deterministic approach perform strongly under these conditions when compared to other algorithms in the literature.

Keywords: Constrained Clustering, Model-based methods, Two-view clustering, Asymptotics, Non-parametric models.
Authors have contributed equally to this work.

© 2000 Daniel Khashabi, John Wieting, Jeffrey Yufei Liu, Feng Liang.

1. Introduction

We consider the problem of clustering with side information, focusing on the type of side information represented as pairwise cluster constraints between any two data instances. For example, when clustering genomics data, we could have prior knowledge on whether two proteins should be grouped together or not; when clustering pixels in an image, we would naturally impose spatial smoothness in the sense that nearby pixels are more likely to be clustered together.

Side information has been shown to provide substantial improvement on clustering. For example, Jin et al. (2013) showed that combining additional tags with image visual features offered substantial benefits to information retrieval, and Khoreva et al. (2014) showed that learning and combining additional knowledge (must-link constraints) offers substantial benefits to image segmentation.

Despite the advantages of including side information, how to best incorporate it remains unresolved. Often the side information in real applications can be noisy, as it is usually based on heuristic and inexact domain knowledge, and should not be treated as the ground truth, which further complicates the problem.

In this paper, we approach incorporating side information from a new perspective. We model the observed data instances and the side information (or constraints) as two sources of data that are independently generated by a latent clustering structure, hence we call our probabilistic model TVClust (Two-View Clustering). Specifically, TVClust combines the mixture of Dirichlet Processes of the data instances and the random graph of constraints. We derive a Gibbs sampler for TVClust (Section 3). Furthermore, inspired by Jiang et al.
(2012), we scale the variance of the aforementioned probabilistic model to derive a deterministic model. This can be seen as a generalization of K-means to a nonparametric number of clusters that also uses instance-level side information (Section 4). Since it is based on the DP-means algorithm (Jiang et al., 2012) and uses relational side information, we call our final algorithm Relational DP-means (RDP-means). Lastly, experiments and results are presented (Section 5), in which we investigate the behavior of our algorithm in different settings and compare it to existing work in the literature.

2. Related Work

There has been a plethora of work that aims to enhance the performance of clustering via side information, either in deterministic or probabilistic settings. We refer the interested reader to existing comprehensive literature reviews of this subarea such as Basu et al. (2008).

K-means with side information: Some of the earliest efforts to incorporate instance-level constraints for clustering were proposed by Wagstaff & Cardie (2000) and Wagstaff et al. (2001). In these papers, both must-link and cannot-link constraints were considered in a modified K-means algorithm. A limitation of their work is that the side information must be treated as the ground truth and is incorporated into the models as hard constraints.

Other algorithms similar in nature to K-means have been proposed as well that incorporate soft constraints. These include MPCK-means (Bilenko et al., 2004), Constrained Vector Quantization Error (CVQE) (Pelleg & Baras, 2007) and its variant Linear Constrained Quantization Error (LCVQE) (Pelleg & Baras, 2007).¹ Unlike these approaches, our algorithm is derived from using small variance asymptotics on our probabilistic model and therefore is derived in a more principled fashion.
Moreover, our deterministic model does not require the target number of clusters as input, as it determines this from the data.

Probabilistic clustering with side information: Motivated by enforcing smoothness for image segmentation, Orbanz & Buhmann (2008) proposed combining a Markov Random Field (MRF) prior with a nonparametric Bayesian clustering model. One issue with their approach is that, by its nature, an MRF can only handle must-links but not cannot-links. In contrast, our model, which is also based on a nonparametric Bayesian clustering model, can handle both types of constraints.

Spectral clustering with side information: Following the long line of work on spectral clustering techniques (e.g. Ng et al. (2002); Shi & Malik (2000)), there are some works using these techniques with side information, mostly differing by how the Laplacian matrix is constructed or by various relaxations of the objective functions. These works include Constrained Spectral Clustering (CSR) (Wang & Davidson, 2010) and Constrained 1-Spectral Clustering (C1-SC) (Rangapuram & Hein, 2012).

Supervised clustering: There has been considerable interest in supervised clustering, where there is a labeling for all instances (Finley & Joachims, 2005; Zhu et al., 2011) and the goal is to create uniform clusters with all instances of a particular class. In our work, we aim to use side cues to improve the quality of clustering, making full labeling unnecessary, as we can also make use of partial and/or noisy labels.

Non-parametric K-means: There has been recent work that bridges the gap between probabilistic clustering algorithms and deterministic algorithms. The work by Kulis & Jordan (2011) and Jiang et al. (2012) shows that by properly scaling the distributions of the components, one can derive an algorithm that is very similar to K-means but without requiring knowledge of the number of clusters, $k$.
Instead, it requires another parameter $\lambda$, but DP-means is much less sensitive to this parameter than K-means is to $k$. We use a similar technique to derive our proposed algorithm, RDP-means.

¹ These models are further studied in Covoes et al. (2013).

3. A Nonparametric Bayesian Model

In this section, we introduce our probabilistic model based on multi-view learning (Blum & Mitchell, 1998). In multi-view learning, the data consist of multiple views (independent sources of information). In our approach we consider the following two views:

1. A set of observations $\{x_i \in \mathbb{R}^p\}_{i=1}^{n}$.
2. The side information between pairs of points, indicating how likely or unlikely two points are to appear in the same cluster. The side information is represented by a symmetric $n \times n$ matrix $E$: if a priori $x_i$ and $x_j$ are believed to belong to the same cluster, then $E_{ij} = 1$. If they are believed to be in different clusters, $E_{ij} = 0$. Otherwise, if there is no side information about the pair $(i, j)$, we denote it with $E_{ij} = \text{NULL}$.

For future reference, denote the set of side information as $C = \{(i, j) : E_{ij} \neq \text{NULL}\}$.

We refer to our data, $x_{1:n}$ and $E$, as two different views of the underlying clustering structure. It is worth noting that either view is sufficient for clustering with existing algorithms. Given only the data instances $x_i$, it is the familiar clustering task where many methods such as K-means, model-based clustering (Fraley & Raftery, 2002) and DPM can be applied. Given the side information $E$, many graph-based clustering algorithms, such as normalized graph-cut (Shi & Malik, 2000) and spectral clustering (Ng et al., 2002), can be applied. Our approach tries to aggregate information from the two views through a Bayesian framework and reach a consensus about the cluster structure.
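As a minimal sketch of the second view, the matrix $E$ and the constraint set $C$ can be held in plain Python structures. The helper name `build_side_information` and the use of `None` for NULL are assumptions made for illustration; they are not part of the paper.

```python
def build_side_information(n, may_links, may_not_links):
    """Return (E, C) for n points.

    E[i][j] is 1 for a may link, 0 for a may-not link and None for NULL.
    C is the set of ordered pairs (i, j) whose entry in E is not NULL.
    """
    E = [[None] * n for _ in range(n)]
    for value, pairs in ((1, may_links), (0, may_not_links)):
        for i, j in pairs:
            E[i][j] = value
            E[j][i] = value  # E is symmetric
    C = {(i, j) for i in range(n) for j in range(n) if E[i][j] is not None}
    return E, C


# Four points: 0 and 1 are believed to share a cluster, 1 and 2 are not.
E, C = build_side_information(4, may_links=[(0, 1)], may_not_links=[(1, 2)])
```

Pairs without any prior belief stay `None`, which keeps "no information" distinct from a may-not link.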
Given the latent clustering structure, data from the two views are modeled independently by two generative models: $x_{1:n}$ is modeled by a Dirichlet Process Mixture (DPM) model (Antoniak, 1974; Ferguson, 1973) and $E$ is modeled by a random graph (Erdős & Rényi, 1959).

Aggregating the two views $x_{1:n}$ and $E$ is particularly useful when neither view can be fully trusted. While previous work such as constrained K-means or constrained EM assumes and relies on constraint exactness, TVClust uses $E$ in a "soft" manner and is more robust to errors. We call $E_{ij} = 1$ a may link and $E_{ij} = 0$ a may-not link, in contrast with the aforementioned must-link and cannot-link, to emphasize that our model tolerates noise in the side information.

3.1 Model for Data Instances

We use the Mixture of Dirichlet Processes as the underlying clustering model for the data instances $\{x_i\}_{i=1}^{n}$. Let $\theta_i$ denote the model parameter associated with observation $x_i$, which is modeled as an iid sample from a random distribution $G$. A Dirichlet Process $DP(\alpha, G_0)$ is used as the prior for $G$:

$$\theta_1, \ldots, \theta_n \mid G \overset{iid}{\sim} G, \qquad G \sim DP(\alpha, G_0). \tag{1}$$

Denote the collection $(\theta_1, \ldots, \theta_{i-1}, \theta_{i+1}, \ldots, \theta_n)$ by $\theta_{\setminus i}$. With prior specification (1), the distribution of $\theta_i$ given $\theta_{\setminus i}$ (after integrating out $G$) can be found following the Blackwell-MacQueen urn scheme (Blackwell & MacQueen, 1973):

$$p(\theta_i \mid \theta_{\setminus i}) \propto \sum_{k=1}^{K} n_{-i,k}\, \delta_{\theta_k^*}(\theta_i) + \alpha G_0(\theta_i), \tag{2}$$

where we assume there are $K$ unique values among $\theta_{\setminus i}$, denoted by $\theta_1^*, \ldots, \theta_K^*$, $\delta_{\theta_k^*}(\cdot)$ is the Kronecker delta function, and $n_{-i,k}$ is the number of instances accumulated in cluster $k$ excluding instance $i$. From (2) we can see a natural clustering effect in the sense that, with a positive probability, $\theta_i$ will take an existing value from $\theta_1^*, \ldots, \theta_K^*$, i.e. it will join one of the $K$ clusters.
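The weights in (2) are easy to compute once the cluster labels of the other points are known. The sketch below normalizes them by $n - 1 + \alpha$; the function name `crp_prior_weights` and the label-list input are illustrative assumptions.

```python
from collections import Counter


def crp_prior_weights(z_others, alpha):
    """Normalized prior weights from (2) for one held-out point.

    z_others: cluster labels of the other n - 1 points.
    Returns (probs, p_new): probs maps each existing label k to
    n_{-i,k} / (n - 1 + alpha), and p_new is alpha / (n - 1 + alpha).
    """
    counts = Counter(z_others)
    total = len(z_others) + alpha
    probs = {k: n_k / total for k, n_k in counts.items()}
    return probs, alpha / total


# Three points in cluster 0, one point in cluster 1, concentration alpha = 1.
probs, p_new = crp_prior_weights([0, 0, 0, 1], alpha=1.0)
```

Larger clusters receive proportionally more prior mass, and a larger `alpha` shifts mass toward opening a new cluster.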
This clustering effect can be interpreted using the Chinese Restaurant Process (CRP) metaphor (Aldous, 1983), where assigning $\theta_i$ to a cluster is analogous to a new customer choosing a table in a Chinese restaurant. The customer can join an already occupied table or start a new one.

Given $\theta_i$, we use a parametric family $p(x_i \mid \theta_i)$ to model the instance $x_i$. In this paper, we focus on exponential families:

$$p(x \mid \theta) = \exp\left(\langle T(x), \theta \rangle - \psi(\theta) - h(x)\right), \tag{3}$$

where $\psi(\theta) = \log \int \exp\left(\langle T(x), \theta \rangle - h(x)\right) dx$ is the log-partition function (cumulant generating function) and $T(x)$ is the vector of sufficient statistics, given input point $x$. To simplify the exposition, we assume that $x$ is the augmented vector of sufficient statistics given an input point, and simplify (3) by removing $T(\cdot)$:

$$p(x \mid \theta) = \exp\left(\langle x, \theta \rangle - \psi(\theta) - h(x)\right). \tag{4}$$

It is easy to show that for this formulation,

$$E_p[x] = \nabla_\theta \psi(\theta), \tag{5}$$

$$\mathrm{Cov}_p[x] = \nabla_\theta^2 \psi(\theta). \tag{6}$$

For convenience, we choose the base measure $G_0$ in $DP(\alpha, G_0)$ from the conjugate family, which takes the following form:

$$dG_0(\theta \mid \tau, \eta) = \exp\left(\langle \theta, \tau \rangle - \eta \psi(\theta) - m(\tau, \eta)\right), \tag{7}$$

where $\tau$ and $\eta$ are parameters of the prior distribution. Given these definitions of the likelihood and conjugate prior, the posterior distribution over $\theta$ is an exponential family distribution of the same form as the prior distribution, but with updated parameters $\tau + x$ and $\eta + 1$.

Exponential families contain many popular distributions used in practice. For example, Gaussian families are often used to model real-valued points in $\mathbb{R}^p$, which correspond to $T(x) = [x, x^T x]^T$ and $\theta = (\mu, \Sigma)$, where $\mu$ is the mean vector and $\Sigma$ is the covariance matrix. The base measure often chosen for Gaussian families is its conjugate prior, the Normal-Inverse-Wishart distribution.
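Identities (5) and (6) can be checked numerically on a one-dimensional example. The sketch below uses the Poisson family, an assumption chosen only because its log-partition function $\psi(\theta) = e^\theta$ is simple; the moments computed from the density are compared with finite differences of $\psi$.

```python
import math


def psi(theta):
    """Log-partition function of the Poisson family: psi(theta) = exp(theta)."""
    return math.exp(theta)


def pmf(x, theta):
    """p(x | theta) = exp(x * theta - psi(theta) - h(x)) with h(x) = log(x!)."""
    return math.exp(x * theta - psi(theta) - math.lgamma(x + 1))


theta, h = 0.7, 1e-3
support = range(100)  # truncated support; the remaining tail is negligible here

# Moments computed directly from the density (4).
mean_direct = sum(x * pmf(x, theta) for x in support)
var_direct = sum((x - mean_direct) ** 2 * pmf(x, theta) for x in support)

# Central finite differences of the log-partition function, as in (5) and (6).
mean_from_psi = (psi(theta + h) - psi(theta - h)) / (2 * h)
var_from_psi = (psi(theta + h) - 2 * psi(theta) + psi(theta - h)) / (h * h)

print(mean_direct, mean_from_psi)
print(var_direct, var_from_psi)
```

Both pairs agree up to the finite-difference error, which is the content of (5) and (6) in the scalar case.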
The multinomial distribution, another popular parametric family, is often used to model word counts in text mining or histograms in image segmentation. This distribution corresponds to $T(x) = x$, and the base measure is often chosen to be a Dirichlet distribution, its conjugate prior.

3.2 Model for Side Information

Given $\theta_{1:n} = (\theta_1, \ldots, \theta_n)$, we can summarize the clustering structure by a matrix $H_{n \times n}$ where $H_{ij} = \delta_{\theta_i}(\theta_j)$. Note that $H$ should not be confused with $E$. $E$ represents the side information and can be viewed as a random realization based on the true clustering structure $H$. We want to infer $H$ based on $E$ and $x_{1:n}$.

We model $E$ using the following generative process: with probability $p$ an existing edge of $H$ is preserved in $E$, and with probability $1 - q$ a false edge (of $H$) is added to $E$, i.e. for any $(i, j) \in C$:

$$p(E_{ij} = 1 \mid H_{ij} = 1) = p, \qquad p(E_{ij} = 0 \mid H_{ij} = 1) = 1 - p,$$

$$p(E_{ij} = 0 \mid H_{ij} = 0) = q, \qquad p(E_{ij} = 1 \mid H_{ij} = 0) = 1 - q,$$

or more concisely,

$$p(E_{ij} \mid H_{ij}, p, q) = p^{E_{ij} H_{ij}} (1 - p)^{(1 - E_{ij}) H_{ij}}\, q^{(1 - E_{ij})(1 - H_{ij})} (1 - q)^{E_{ij}(1 - H_{ij})}. \tag{8}$$

Figure 1: Graphical representation of TVClust. The data generating process for the data instances is on the left and the process for the side information is on the right.

The values $p$ and $q$ represent the credibility of the values in the matrix $E$, while the values $1 - p$ and $1 - q$ are error probabilities. One may be able to set the value for $(p, q)$ based on expert knowledge, or learn them from the data in a fully Bayesian approach by adding another layer of priors over $p$ and $q$,

$$p \sim \mathrm{Beta}(\alpha_p, \beta_p), \qquad q \sim \mathrm{Beta}(\alpha_q, \beta_q).$$

3.3 Posterior Inference via Gibbs Sampling

A graphical representation of our model TVClust is shown in Figure 1. Based on the parameters $\theta_1, \ldots, \theta_n$, the full data likelihood is

$$p(x_{1:n}, E \mid \theta_{1:n}) = \prod_{i=1}^{n} p(x_i \mid \theta_i) \prod_{1 \le i\, \cdots}$$

[…] $> 1 - q$. Then by (11), the chance of a person being assigned to a table not only increases with the popularity of the table (i.e. the table size $n_{-i,k}$), as in the original DPM, but also increases with their friend count $f_i^k$ and decreases with their stranger count $s_i^k$.

Instead of sequentially updating the point-specific parameters $(\theta_1, \ldots, \theta_n)$, one can sequentially update an equivalent parameter set: the set of cluster-specific parameters $(\theta_1^*, \ldots, \theta_K^*)$ and the cluster assignment indicators $(z_1, \ldots, z_n)$, where $z_i \in \{1, \ldots, K\}$ indicates the cluster assignment for instance $i$, i.e., $\theta_i = \theta_{z_i}^*$. By our derivation at (11), we can update the $z_i$ sequentially as

$$p(z_i = k) \propto n_{-i,k}\, p(x_i \mid \theta_k^*) \left(\frac{p}{1 - q}\right)^{f_i^k} \left(\frac{1 - p}{q}\right)^{s_i^k}, \qquad p(z_i = k_{new}) \propto \alpha \int p(x_i \mid \theta)\, dG_0(\theta). \tag{13}$$

The cluster parameters $(\theta_1^*, \ldots, \theta_K^*)$, given the partition $z_{1:n}$ and the data $x_{1:n}$, can be updated similarly as they were in Algorithm 2 of Neal (2000).

4. RDP-means: A Deterministic Algorithm

In this section, we apply the scaling trick described in Jiang et al. (2012) to transform the Gibbs sampler into a deterministic algorithm, which we refer to as RDP-means.

4.1 Reparameterization of the exponential family using Bregman divergence

We bring in the notion of Bregman divergence and its connection to the exponential family. Our starting point is the formal definition of the Bregman divergence.

Definition 1 (Bregman, 1967) Define a strictly convex function $\phi : S \to \mathbb{R}$, such that the domain $S \subseteq \mathbb{R}^p$ is a convex set, and $\phi$ is differentiable on $\mathrm{ri}(S)$, the relative interior of $S$, where its gradient $\nabla \phi$ exists.
Given two points $x, y \in \mathbb{R}^p$, the Bregman divergence $D_\phi(x, y) : S \times \mathrm{ri}(S) \to [0, +\infty)$ is defined as:

$$D_\phi(x, y) = \phi(x) - \phi(y) - \langle x - y, \nabla \phi(y) \rangle.$$

The Bregman divergence is a general class of distance measures. For instance, with the squared Euclidean norm $\phi(x) = \|x\|^2$, the Bregman divergence is the squared Euclidean distance (see Table 1 in Banerjee et al. (2005) for other cases).

Forster & Warmuth (2002) showed that there exists a bijection between exponential families and Bregman divergences. Given this connection, Banerjee et al. (2005) derived a K-means type algorithm for fitting a probabilistic mixture model (with a fixed number of components) using the Bregman divergence rather than the Euclidean distance.

Definition 2 (Legendre Conjugate) For a function $\psi(\cdot)$ defined over $\mathbb{R}^p$, define its convex conjugate $\psi^*(\cdot)$ as

$$\psi^*(\mu) = \sup_{\theta \in \mathrm{dom}(\psi)} \left\{ \langle \mu, \theta \rangle - \psi(\theta) \right\}.$$

In addition, if the function $\psi(\theta)$ is closed and convex, $(\psi^*)^* = \psi$.

It can be shown that the log-partition function of the exponential families of distributions is a closed convex function (see Lemma 1 of Banerjee et al. (2005)). Therefore there is a bijection between the conjugate parameter $\mu$ of the Legendre conjugate $\psi^*(\cdot)$ and the parameter of the exponential family, $\theta$, in the log-partition function defined for the exponential family at (3). With this bijection, we can rewrite the likelihood (4) using the Bregman divergence and the Legendre conjugate:

$$p(x \mid \theta) = p(x \mid \mu) = \exp\left(-D_{\psi^*}(x, \mu)\right) f_{\psi^*}(x), \tag{14}$$

where $f_{\psi^*}(x) = \exp\left(\psi^*(x) - h(x)\right)$. The left side of (14) is written as $p(x \mid \theta) = p(x \mid \mu)$ to stress that conditioning on $\theta$ is equivalent to conditioning on $\mu$, since there is a bijection between them, and the right side of (14) is essentially the same as (4).
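As a small numerical sketch of Definition 1 and of (14), consider a one-dimensional Gaussian with unit variance. This choice is an assumption made for illustration: there $\psi(\theta) = \theta^2/2$, its conjugate is $\psi^*(\mu) = \mu^2/2$, and $h(x) = x^2/2 + \tfrac{1}{2}\log 2\pi$. The helper names are hypothetical.

```python
import math


def bregman(phi, grad_phi, x, y):
    """D_phi(x, y) = phi(x) - phi(y) - <x - y, grad phi(y)>, vectors as lists."""
    inner = sum((xi - yi) * gi for xi, yi, gi in zip(x, y, grad_phi(y)))
    return phi(x) - phi(y) - inner


def half_sq_norm(v):
    """psi*(v) = ||v||^2 / 2, the conjugate of psi(theta) = ||theta||^2 / 2."""
    return 0.5 * sum(vi * vi for vi in v)


def identity_grad(v):
    """Gradient of half_sq_norm."""
    return list(v)


x, mu = [1.5], [0.2]
d = bregman(half_sq_norm, identity_grad, x, mu)  # equals (x - mu)^2 / 2

# Right side of (14): exp(-D(x, mu)) * f(x) with f(x) = exp(psi*(x) - h(x)).
h_x = 0.5 * x[0] ** 2 + 0.5 * math.log(2 * math.pi)
rhs = math.exp(-d) * math.exp(half_sq_norm(x) - h_x)

# Left side of (14): the usual N(mu, 1) density evaluated at x.
lhs = math.exp(-0.5 * (x[0] - mu[0]) ** 2) / math.sqrt(2 * math.pi)

print(d, lhs, rhs)
```

The two sides coincide, so in this family the likelihood of a point depends on the mean parameter only through the divergence between the point and that mean.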
A nice intuition about this reparameterization is that now the likelihood of any data point $x$ is related to how far it is from the cluster component parameter $\mu$, where the distance is measured using the Bregman divergence $D_{\psi^*}(x, \mu)$.

Similarly, we can rewrite the prior (7) in terms of the Bregman divergence and the Legendre conjugate:

$$p(\theta \mid \tau, \eta) = p(\mu \mid \tau, \eta) = \exp\left(-\eta D_{\psi^*}\left(\frac{\tau}{\eta}, \mu\right)\right) g_{\psi^*}(\tau, \eta), \tag{15}$$

where $g_{\psi^*}(\tau, \eta) = \exp\left(\eta \psi^*(\tau/\eta) - m(\tau, \eta)\right)$.

4.2 Scaling the Distributions

Lemma 3 (Jiang et al. (2012)) Given the exponential family distribution (4), define another probability distribution with parameter $\tilde\theta$ and log-partition function $\tilde\psi(\cdot)$, where $\tilde\theta = \gamma\theta$ and $\tilde\psi(\tilde\theta) = \gamma\psi(\tilde\theta/\gamma)$. Then:

1. The scaled probability distribution $\tilde p(\cdot)$ defined with parameter vector $\tilde\theta$ and log-partition function $\tilde\psi(\cdot)$ is a proper probability distribution and belongs to the exponential family.
2. The mean and variance of the probability distribution $\tilde p(\cdot)$ are: $E_{\tilde p}(x) = E_p(x)$, $\mathrm{Cov}_{\tilde p}(x) = \frac{1}{\gamma}\mathrm{Cov}_p(x)$.
3. The Legendre conjugate of $\tilde\psi(\cdot)$² is: $\tilde\psi^*(\tilde\theta) = \gamma\psi^*(\tilde\theta)$.

The implication of Lemma 3 is that the covariance $\mathrm{Cov}_{\tilde p}(x)$ scales with $1/\gamma$, which is close to zero when $\gamma$ is large, while the mean $E_{\tilde p}(x)$ remains the same. Thus we can obtain a deterministic algorithm when $\gamma$ goes to infinity.
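The second claim of Lemma 3 can be illustrated numerically. The sketch below assumes the Bernoulli family, with $\psi(\theta) = \log(1 + e^\theta)$, purely as an example; mean and variance are read off as first and second finite differences of the scaled log-partition function.

```python
import math


def psi(theta):
    """Log-partition function of the Bernoulli family (natural parameter theta)."""
    return math.log1p(math.exp(theta))


def first_diff(f, t, h):
    """Central finite-difference estimate of f'(t)."""
    return (f(t + h) - f(t - h)) / (2 * h)


def second_diff(f, t, h):
    """Central finite-difference estimate of f''(t)."""
    return (f(t + h) - 2 * f(t) + f(t - h)) / (h * h)


theta, h = 0.7, 1e-3
base_mean = first_diff(psi, theta, h)
base_var = second_diff(psi, theta, h)

rows = []
for gamma in (1.0, 10.0, 100.0):
    # Scaled family of Lemma 3: theta_tilde = gamma * theta and
    # psi_tilde(t) = gamma * psi(t / gamma).
    psi_tilde = lambda t, g=gamma: g * psi(t / g)
    theta_tilde = gamma * theta
    step = h * gamma  # keep the step proportional to the scale of theta_tilde
    rows.append((gamma,
                 first_diff(psi_tilde, theta_tilde, step),
                 second_diff(psi_tilde, theta_tilde, step)))

for gamma, mean_t, var_t in rows:
    print(f"gamma={gamma:6.1f}  mean={mean_t:.6f}  var*gamma={var_t * gamma:.6f}")
```

The mean column stays fixed while the variance shrinks like $1/\gamma$, which is why the sampler concentrates as $\gamma$ grows.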
With the scaling trick, the scaled prior and scaled likelihood can be written as:

$$\begin{cases} \tilde p(x \mid \theta, \gamma) = \tilde p(x \mid \mu, \gamma) = \exp\left(-\gamma D_{\psi^*}(x, \mu)\right) f_{\gamma\psi^*}(x) \\ \tilde p(\theta \mid \tau, \eta, \gamma) = \tilde p(\mu \mid \tau, \eta, \gamma) = \exp\left(-\eta D_{\psi^*}\left(\frac{\tau}{\eta}, \mu\right)\right) g_{\gamma\psi^*}(\tau/\gamma, \eta/\gamma) \end{cases} \tag{16}$$

² $[\tilde\psi(\cdot)]^*$, the conjugate of $\tilde\psi(\cdot)$, is denoted by $\tilde\psi^*(\cdot)$ for simplicity.

4.3 Asymptotics of TVClust

Using the scaled distributions (16), we can write the Gibbs update (13) in the following form:

$$\begin{cases} p(z_i = k) \propto n_{-i,k} \exp\left(-\gamma D_{\psi^*}(x_i, \mu_k)\right) \left(\frac{p}{1 - q}\right)^{f_i^k} \left(\frac{1 - p}{q}\right)^{s_i^k} \\ p(z_i = k_{new}) \propto \alpha \int \tilde p(x_i \mid \theta)\, \tilde p(\theta \mid \tau, \eta)\, d\theta \end{cases} \tag{17}$$

Following Jiang et al. (2012), we can approximate the integral $I = \int \tilde p(x \mid \theta)\, \tilde p(\theta \mid \tau, \eta)\, d\theta$ using the Laplace approximation (Tierney & Kadane, 1986):

$$\tilde p(x \mid \tau, \eta, \gamma) \approx g_{\gamma\psi^*}(\tau/\gamma, \eta/\gamma) \exp\left\{-\gamma\phi(x) - \eta\phi(\tau/\eta) - (\gamma + \eta)\,\phi\left(\frac{\gamma x + \tau}{\gamma + \eta}\right)\right\} \gamma^d\, \mathrm{Cov}\left(\frac{\gamma x + \tau}{\gamma + \tau}\right).$$

We can write the resulting expression as a product of a function of the parameters and a function of the input observations:

$$\tilde p(x \mid \tau, \eta, \gamma) \approx \kappa(\tau, \eta, \gamma) \times \nu(x; \tau, \eta, \gamma).$$

The concentration parameter of the DPM, $\alpha$ in (1), is usually tuned by the user. To get the desired result, we choose it to be

$$\alpha = \kappa(\tau, \eta, \gamma)^{-1} \exp(-\lambda\gamma),$$

where $\lambda$ is a new parameter introduced for the model. In other words, the effect of the other parameters $(\alpha, \tau, \eta)$ is now transferred to $\lambda$. Then the 2nd line of (17) becomes

$$p(z_i = k_{new}) = \frac{1}{Z}\, \frac{\nu(x_i; \tau, \eta, \gamma)}{n + \alpha - 1} \exp(-\gamma\lambda), \tag{18}$$

such that $\nu(x_i; \tau, \eta, \gamma)$ becomes a positive constant when $\gamma$ goes to infinity.
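Before any limit is taken, the first line of (17) can be evaluated directly for one point. The sketch below is only illustrative: the function name and argument layout are assumptions, and the weight of a new cluster (the second line of (17)) is passed in as a number rather than computed from the marginal likelihood.

```python
import math


def assignment_probs(n_minus, divergences, f, s, p, q, gamma, new_weight):
    """Normalized probabilities for z_i over K existing clusters plus a new one.

    Existing cluster k receives the weight
        n_minus[k] * exp(-gamma * divergences[k])
                   * (p / (1 - q)) ** f[k] * ((1 - p) / q) ** s[k],
    following the first line of (17). new_weight stands in for the second
    line and must be supplied by the caller.
    """
    weights = [
        n_k * math.exp(-gamma * d_k) * (p / (1 - q)) ** f_k * ((1 - p) / q) ** s_k
        for n_k, d_k, f_k, s_k in zip(n_minus, divergences, f, s)
    ]
    weights.append(new_weight)
    total = sum(weights)
    return [w / total for w in weights]


# Two equally sized, equally distant clusters; the point has two may links
# into cluster 0 and no other side information.
probs = assignment_probs(
    n_minus=[5, 5], divergences=[1.0, 1.0], f=[2, 0], s=[0, 0],
    p=0.9, q=0.9, gamma=1.0, new_weight=0.1,
)
print(probs)
```

With $p = q = 0.9$ each may link multiplies the weight by 9, so the two friends in cluster 0 make it 81 times more likely than the otherwise identical cluster 1.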
Applying a similar trick to the 1st line of (17), we have

$$\left(\frac{p}{1 - q}\right)^{f_i^k} \left(\frac{1 - p}{q}\right)^{s_i^k} = \exp\left(f_i^k \ln\frac{p}{1 - q} - s_i^k \ln\frac{q}{1 - p}\right) = \exp\left(\gamma\left(f_i^k \xi_1 - s_i^k \xi_2\right)\right),$$

where we introduced new variables $\xi_1 = \frac{1}{\gamma}\ln\frac{p}{1 - q}$ and $\xi_2 = \frac{1}{\gamma}\ln\frac{q}{1 - p}$, which represent the confidence in having a link and in not having a link, respectively; $p$ and $q$ are rescaled with $\gamma$ so that $\xi_1$ and $\xi_2$ stay fixed. Then the 1st line of (17) becomes:

$$p(z_i = k) = \frac{1}{Z}\, \frac{n_{-i,k}}{n + \alpha - 1} \exp\left(-\gamma\left\{D_{\psi^*}(x_i, \mu_k) - f_i^k \xi_1 + s_i^k \xi_2\right\}\right). \tag{19}$$

Combining (18) and (19), we can rewrite the Gibbs updates (13) as follows:

$$\begin{cases} p(z_i = k) \propto n_{-i,k} \exp\left(-\gamma\left\{D_{\psi^*}(x_i, \mu_k) - f_i^k \xi_1 + s_i^k \xi_2\right\}\right) \\ p(z_i = k_{new}) \propto \nu(x_i; \tau, \eta, \gamma) \exp(-\gamma\lambda). \end{cases}$$

When $\gamma$ goes to infinity, the Gibbs sampler degenerates into a deterministic algorithm, where in each iteration the assignment of $x_i$ is determined by comparing the $K + 1$ values below:

$$\left\{ D_{\psi^*}(x_i, \mu_1) - f_i^1 \xi_1 + s_i^1 \xi_2,\ \ldots,\ D_{\psi^*}(x_i, \mu_K) - f_i^K \xi_1 + s_i^K \xi_2,\ \lambda \right\}.$$

If the $k$-th value (where $k = 1, \ldots, K$) is the smallest, then assign $x_i$ to the $k$-th cluster. If $\lambda$ is the smallest, form a new cluster.

4.4 Sampling the cluster parameters

Given the cluster assignments $\{z_i\}_{i=1}^{n}$, the cluster centers are independent of the side information. In other words, the posterior distribution over the cluster centers can be written in the following form:

$$p(\mu_k \mid x_{1:n}, z_{1:n}, \tau, \eta, \gamma, \xi) \propto \left[\prod_{i : z_i = k} \tilde p(x_i \mid \mu_k, \gamma)\right] \times \tilde p(\mu_k \mid \tau, \eta, \gamma) \propto \exp\left(-(\gamma n_k + \eta)\, D_{\psi^*}\left(\frac{\sum_{i : z_i = k} \gamma x_i + \tau}{\gamma n_k + \eta}, \mu_k\right)\right),$$

in which $n_k = \#\{i : z_i = k\}$. When $\gamma \to \infty$,

$$p(\mu_k \mid x_{1:n}, z_{1:n}, \tau, \eta, \gamma, \xi) \propto \exp\left(-(\gamma n_k + \eta)\, D_{\psi^*}\left(\frac{1}{n_k}\sum_{i : z_i = k} x_i, \mu_k\right)\right).$$

The maximum is attained when the arguments of the Bregman divergence are the same, i.e.

$$\mu_k = \frac{1}{n_k}\sum_{i : z_i = k} x_i.$$
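The limiting assignment rule and the mean update above can be put together in a short sketch. It makes several assumptions that go beyond the text: the divergence is the squared Euclidean distance, $\xi_1 = \xi_2 = \xi$ grows by a fixed factor per sweep, $E$ uses 1, 0 and `None` as in Section 3, the counts $f_i^k$ and $s_i^k$ are taken to be the numbers of may and may-not links from $i$ into cluster $k$, and the loop stops after the first sweep that changes no assignment. All names are illustrative.

```python
def sq_dist(a, b):
    """Squared Euclidean distance between two points given as lists."""
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b))


def mean(points):
    """Coordinate-wise mean of a non-empty list of points."""
    n = len(points)
    return [sum(col) / n for col in zip(*points)]


def rdp_means(X, E, lam, xi0=0.001, xi_rate=2.0, max_iter=50):
    """Sketch of RDP-means; returns (z, centers)."""
    n = len(X)
    z = [0] * n                      # all points start in one cluster
    centers = [mean(X)]
    xi = xi0
    for _ in range(max_iter):
        old_z = list(z)
        for i in range(n):
            best_k, best_d = None, float("inf")
            for k, mu in enumerate(centers):
                # may / may-not links from point i into cluster k
                f = sum(1 for j in range(n) if j != i and z[j] == k and E[i][j] == 1)
                s = sum(1 for j in range(n) if j != i and z[j] == k and E[i][j] == 0)
                d = sq_dist(X[i], mu) - xi * f + xi * s
                if d < best_d:
                    best_k, best_d = k, d
            if best_d < lam:
                z[i] = best_k
            else:                    # open a new cluster centered at x_i
                centers.append(list(X[i]))
                z[i] = len(centers) - 1
        # recompute the means and drop clusters that lost all their points
        used = sorted(set(z))
        relabel = {old: new for new, old in enumerate(used)}
        z = [relabel[k] for k in z]
        centers = [mean([X[i] for i in range(n) if z[i] == k])
                   for k in range(len(used))]
        if z == old_z:
            break
        xi *= xi_rate
    return z, centers


X = [[0, 0], [0, 1], [1, 0], [10, 10], [10, 11], [11, 10]]
E = [[None] * 6 for _ in range(6)]
E[0][1] = E[1][0] = 1   # may link inside the first group
E[2][3] = E[3][2] = 0   # may-not link across the two groups
z, centers = rdp_means(X, E, lam=20.0)
print(z, centers)
```

On this toy input the sketch separates the two groups without being told the number of clusters; other divergences would only change `sq_dist` and the center update.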
So cluster parameters are just updated by the corresponding cluster means. This completes the algorithm for RDP-means, which is shown in Algorithm 1.

4.5 Effect of changing $\xi_1$ and $\xi_2$

Taking $\xi_1, \xi_2 \to 0$, RDP-means will behave like DP-means, i.e. no side information is considered. Taking $\xi_1, \xi_2 \to +\infty$ puts all the weight on the side information and no weight on the point observations. In other words, it generates a set of clusters according to just the constraints in $E$. In a similar way, we can put more weight on may links compared to may-not links by choosing $\xi_1 > \xi_2$, and vice versa.

As we will show, there is an objective function which corresponds to our algorithm. The objective function has many local minima and the algorithm minimizes it in a greedy fashion. Experimentally we have observed that if we initialize $\xi_1 = \xi_2 = \xi$ with a very small value $\xi_0$ and increase it each iteration, incrementally tightening the constraints, it gives a desirable result.

4.6 Objective Function

Theorem 4 The constrained clustering RDP-means (Algorithm 1) iteratively minimizes the following objective function:

$$\min_{\{\mathcal{I}_k\}_{k=1}^{K}} \sum_{k=1}^{K} \sum_{i \in \mathcal{I}_k} \left[ D_\phi(x_i, \mu_k) - \xi_1 f_i^k + \xi_2 s_i^k \right] + \lambda K, \tag{20}$$

where $\mathcal{I}_1, \ldots, \mathcal{I}_K$ denote a partition of the $n$ data instances.

Algorithm 1: Relational DP-means algorithm

Input: the data points D = {x_i}, the relational matrix E, the parameter of the Bregman divergence ψ*, the parameters λ and ξ_0, and the rate of increase of ξ at each iteration, ξ_rate.
Result: the assignment variables z = [z_1, z_2, ..., z_n] and the component parameters.
Initialization: ξ ← ξ_0 (with ξ_1 = ξ_2 = ξ), and all points are assigned to one single cluster.

    while not converged do
        for x_i ∈ D do
            for µ_k ∈ C do
                find the values of f_i^k and s_i^k for x_i from the matrix E, using the current z, as defined in (20)
                dist(x_i, µ_k) ← D_ψ*(x_i, µ_k) − ξ_1 f_i^k + ξ_2 s_i^k
            end
            [d_min, i_min] ← min{dist(x_i, µ_1), ..., dist(x_i, µ_K)}
            // d_min is the minimum distance and i_min is the index of the minimum distance
            if d_min < λ then
                z_i ← i_min
            else
                // add a new cluster
                C ← C ∪ {x_i}
                K ← K + 1
                z_i ← K
            end
        end
        for µ_k ∈ C do
            // given the current assignment of points, find the set D_k of points assigned to cluster k
            if |D_k| > 0 then
                µ_k ← (Σ_{x_i ∈ D_k} x_i) / |D_k|
            else
                remove the cluster and apply the changes to the related variables
            end
        end
        ξ ← ξ × ξ_rate
    end

Proof In the proof we follow a similar argument as in Kulis & Jordan (2011). For simplicity, let us assume $\xi_1 = \xi_2 = \xi$ and call the value $D_\phi(x_i, \mu_k) - \xi(f_i^k - s_i^k)$ the augmented distance. For a fixed number of clusters, each point gets assigned to the cluster that has the smallest augmented distance, thus decreasing the value of the objective function. When the augmented distance $D_\phi(x_i, \mu_k) - \xi(f_i^k - s_i^k)$ of an element is more than $\lambda$, we remove the point from its existing cluster, add a new cluster centered at the data point and increase the value of $K$ by one. This adds $\lambda$ to the objective but removes an augmented distance larger than $\lambda$, so the objective function decreases overall. For a fixed assignment of the points to the clusters, finding the cluster centers by averaging the assigned points minimizes the objective function. Thus, the objective function is decreasing after each iteration.

4.7 Spectral Interpretation

Following the spectral relaxation framework for the K-means objective function introduced by Zha et al. (2001) and Kulis & Jordan (2011), we can apply the same reformulation to our framework, given the objective function (20).
Consider the following optimization problem:

$$\max_{\{Y \mid Y^\top Y = I_k\}} \mathrm{tr}\left(Y^\top \left(K - \lambda I + \xi_1 E_+ - \xi_2 E_-\right) Y\right), \tag{21}$$

where $Y = Z(Z^\top Z)^{-1/2} \in \mathbb{R}^{n \times k}$ is the normalized point-component assignment matrix, and $K$ is the kernel matrix, which is defined as:

$$K = A A^\top \in \mathbb{R}^{n \times n}, \qquad A^\top = [x_1, \ldots, x_n] \in \mathbb{R}^{p \times n}.$$

$E_+ = 1\{E > 0\}$ and $E_- = 1\{E < 0\}$ are side information matrices for may and may-not links, respectively (where $1\{\cdot\}$ is applied elementwise). In particular, if $\xi_1 = \xi_2 = \xi$, Equation 21 becomes:

$$\max_{\{Y \mid Y^\top Y = I_k\}} \mathrm{tr}\left(Y^\top (K - \lambda I + \xi E)\, Y\right).$$

Theorem 5 The objective function in (21) is equivalent to the objective function in (20).

Proof In Kulis & Jordan (2011) (Lemma 5.1) it has been proved that

$$\max_{\{Y \mid Y^\top Y = I_k\}} \mathrm{tr}\left(Y^\top (K - \lambda I)\, Y\right)$$

is equivalent to minimizing the objective function of DP-means. For simplicity, we prove the case for $\xi_1 = \xi_2 = \xi$, although the general case can also be proved in a very similar fashion. In our objective function we have the additional term

$$\max_{\{Y \mid Y^\top Y = I_k\}} \mathrm{tr}\left(Y^\top (\xi E)\, Y\right),$$

which we will prove to be $\xi \sum_{k=1}^{K} \sum_{i \in \mathcal{I}_k} \left(f_i^k - s_i^k\right)$:

$$\mathrm{tr}\left(Y^\top (\xi E)\, Y\right) = \xi\, \mathrm{tr}\left(Y^\top E\, Y\right) = \xi \sum_{k=1}^{K} \sum_{i \in \mathcal{I}_k} \sum_{j \in \mathcal{I}_k} E(i, j)$$

$$= \xi \sum_{k=1}^{K} \sum_{i \in \mathcal{I}_k} \left[ \sum_{j \in \mathcal{I}_k} 1\{E(i, j) = 1\} - \sum_{j \in \mathcal{I}_k} 1\{E(i, j) = -1\} \right] = \xi \sum_{k=1}^{K} \sum_{i \in \mathcal{I}_k} \left(f_i^k - s_i^k\right).$$

Given the objective function in Equation 21, we can use Theorem 5.2 in Kulis & Jordan (2011) and design a spectral algorithm for solving our problem, simply by finding eigenvectors of $K + \xi_1 E_+ - \xi_2 E_-$ that have an eigenvalue larger than $\lambda$. By this interpretation one can easily see that if $\xi_1 = \xi_2 = 0$, the objective function is equivalent to the DP-means objective, and when $\xi_1$ and $\xi_2$ are large, the clustering only makes use of the side information.

5. Experiments

In this section we report experiments on simulated data, a variety of UCI datasets and an ImageNet dataset.³ For evaluation, we report the F-measure (F) exactly as defined in Section 4.1 of Bilenko et al. (2004), the adjusted Rand index (AdjRnd) and normalized mutual information (NMI).

³ The code and data for our experiments and implementation are available at https://goo.gl/i6yoPb.

Figure 2: Comparison of cluster quality on examples of our synthetic data. (Panels show the true labels and the clusterings found by K-means, RDP-means, LCVQE, MPCKMeans and TVClust for each pattern.)

For RDP-means, in all experiments, we terminate the algorithm when the cluster assignments did not change after 20 iterations, and we initialize $\xi_0 = 0.001$ and $\xi_{rate} = 2$. For DP-means and RDP-means we calculate $\lambda$ based on the $k$-th furthest first method explained in Kulis & Jordan (2011). Although we use the actual $k$ in calculating $\lambda$, in practice $\lambda$ is less sensitive to initialization (see Figure 3).
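The furthest-first choice of $\lambda$ can be sketched as follows. This reflects our reading of the heuristic in Kulis & Jordan (2011) and is hedged accordingly: the traversal starts from the global mean, runs for $k$ rounds, and returns the largest distance found in the last round. Using squared Euclidean distance, so that $\lambda$ is on the same scale as the divergence in the objective, is an assumption of this sketch, as is the function name.

```python
def farthest_first_lambda(X, k):
    """Pick lambda with a farthest-first traversal of k rounds.

    Start from T = {global mean}. In each round, add to T the point whose
    squared distance to T (its distance to the closest member of T) is
    largest. Return that largest distance from round k.
    """
    def sq_dist(a, b):
        return sum((ai - bi) ** 2 for ai, bi in zip(a, b))

    n = len(X)
    T = [[sum(col) / n for col in zip(*X)]]
    max_dist = 0.0
    for _ in range(k):
        dists = [min(sq_dist(x, t) for t in T) for x in X]
        idx = max(range(n), key=dists.__getitem__)
        max_dist = dists[idx]
        T.append(X[idx])
    return max_dist


X = [[0, 0], [0, 1], [1, 0], [10, 10], [10, 11], [11, 10]]
lam = farthest_first_lambda(X, k=2)
print(lam)
```

A larger `k` yields a smaller `lam`, which in turn lets the clustering open more clusters.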
We compare with all constrained or semi-supervised clustering techniques from the literature that we could find online or obtain through personal communication.⁴ We do not include results from methods for which we observed unstable behavior, such as numerical instabilities. The parameters of all algorithms were set to the defaults of the authors' implementations.⁵

We experiment on three tasks. The first evaluates the algorithms on a set of two-dimensional simulated data that showcases difficult clustering problems, in order to gain some visual intuition of how the algorithms perform. The second evaluates on a collection of 5 datasets from the UCI repository that are commonly used for evaluating clustering: iris, wine, ecoli, glass, and balance. We also study the effect of varying some of the key parameters in these experiments. The third task illustrates the effectiveness of using side information in the image clustering task of Jiang et al. (2012).

One important variable is the percentage of side information $r$, which is the number of $\pm 1$ elements in the $E$ matrix (Section 3.1) normalized by its size. We experimented with varying $r$, adding noise with probability $1 - p$ to the constraint matrix, and deviating the initialization from the true value of $k$.

⁴ See http://goo.gl/tSSH95 for a list of methods with links to their implementations.

⁵ The code for LCVQE was kindly provided by the authors of Covoes et al. (2013) via personal communication.

| Method | iris F | iris AdjRnd | iris NMI | wine F | wine AdjRnd | wine NMI | ecoli F | ecoli AdjRnd | ecoli NMI |
|---|---|---|---|---|---|---|---|---|---|
| K-means | 0.81 | 0.71 | 0.74 | 0.59 | 0.36 | 0.42 | 0.61 | 0.50 | 0.63 |
| DP-means | 0.74 | 0.57 | 0.69 | 0.63 | 0.37 | 0.44 | 0.71 | 0.61 | 0.64 |
| TVClust (variational) | 0.91 | 0.85 | 0.90 | 0.56 | 0.45 | 0.53 | 0.85 | 0.79 | 0.76 |
| RDP-means | 0.86 | 0.80 | 0.80 | 0.81 | 0.73 | 0.72 | 0.90 | 0.86 | 0.82 |
| MPCKMeans | 0.53 | 0.29 | 0.30 | 0.54 | 0.30 | 0.30 | 0.57 | 0.33 | 0.33 |
| LCVQE | 0.73 | 0.58 | 0.60 | 0.57 | 0.35 | 0.39 | 0.61 | 0.52 | 0.61 |

| Method | glass F | glass AdjRnd | glass NMI | balance F | balance AdjRnd | balance NMI | average F | average AdjRnd | average NMI |
|---|---|---|---|---|---|---|---|---|---|
| K-means | 0.57 | 0.47 | 0.71 | 0.47 | 0.14 | 0.12 | 0.61 | 0.44 | 0.52 |
| DP-means | 0.53 | 0.29 | 0.45 | 0.31 | 0.12 | 0.21 | 0.58 | 0.39 | 0.48 |
| TVClust (variational) | 0.42 | 0.22 | 0.42 | 0.94 | 0.92 | 0.91 | 0.74 | 0.64 | 0.70 |
| RDP-means | 0.82 | 0.76 | 0.73 | 0.94 | 0.92 | 0.88 | 0.87 | 0.81 | 0.79 |
| MPCKMeans | 0.46 | 0.30 | 0.36 | 0.70 | 0.26 | 0.28 | 0.56 | 0.30 | 0.31 |
| LCVQE | 0.64 | 0.55 | 0.66 | 0.62 | 0.38 | 0.37 | 0.64 | 0.48 | 0.53 |

Table 1: Results on each UCI dataset, averaged over the parameters $p$ and $r$; the "average" columns are averaged over datasets. RDP-means has the best performance overall, but not in some particular cases, as shown in Tables 2 and 3.

5.1 Simulated Data

We evaluate on 6 different patterns using $p = 1$ (i.e. no noise) with a sampling rate of $r = 0.01$. The results for all patterns are shown in Figure 2. Each algorithm was run 5 times, and we display the strongest result of these 5 runs.

5.2 UCI Datasets

We evaluate on five datasets⁶ under different settings. In the first setting, we vary the percentage of constraints sampled, $r$, which we choose from $\{0.01, 0.03, 0.05\}$. Secondly, we add noise, letting the parameter $p$ take values in $\{1.0, 0.95, 0.90, 0.80\}$.
Lastly, we investigate the sensitivity of the algorithms to deviations from the true number of clusters, $k$, where we choose the deviation from the set $\{\pm 3, \pm 2, \pm 1, 0\}$. For each dataset and set of hyper-parameters in this section, we average the results of five trials to produce the final result.

⁶ The data is downloaded directly from http://archive.ics.uci.edu/ml/.

Average over all parameters: We present the performance of the algorithms, per dataset, averaged over different values of the parameters $p$ and $r$. The results are summarized in Table 1 and show that, overall, RDP-means has the best performance.

Average all parameters varying amount of noise: To analyze how adding noise to the constraints affects performance, we vary the value of $p$ for each algorithm on each dataset. As mentioned previously, the probability of choosing noisy constraints is proportional to $1 - p$: the higher the value of $p$, the less noise in the constraints. The results as a function of noise are summarized in Table 2. We average over the constraint sizes $r \in \{0.01, 0.03, 0.05\}$ and over the datasets.

| Method | p = 1: F | AdjRnd | NMI | p = 0.95: F | AdjRnd | NMI | p = 0.9: F | AdjRnd | NMI | p = 0.8: F | AdjRnd | NMI |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| K-means | 0.61 | 0.44 | 0.53 | 0.60 | 0.43 | 0.52 | 0.61 | 0.43 | 0.53 | 0.61 | 0.43 | 0.52 |
| DP-means | 0.58 | 0.39 | 0.49 | 0.59 | 0.39 | 0.48 | 0.59 | 0.40 | 0.49 | 0.58 | 0.38 | 0.48 |
| TVClust (variational) | 0.77 | 0.69 | 0.74 | 0.75 | 0.66 | 0.72 | 0.73 | 0.63 | 0.69 | 0.68 | 0.56 | 0.64 |
| RDP-means | 0.93 | 0.90 | 0.89 | 0.92 | 0.89 | 0.87 | 0.87 | 0.82 | 0.79 | 0.75 | 0.65 | 0.62 |
| MPCKMeans | 0.94 | 0.91 | 0.90 | 0.46 | 0.14 | 0.17 | 0.44 | 0.10 | 0.12 | 0.41 | 0.04 | 0.07 |
| LCVQE | 0.83 | 0.76 | 0.79 | 0.64 | 0.48 | 0.53 | 0.58 | 0.39 | 0.45 | 0.50 | 0.27 | 0.35 |

Table 2: Performance of the algorithms under different levels of noise. Results are averaged over the UCI datasets and over the values of $r$.
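The noisy side information used in these settings can be simulated along the following lines. This is only a sketch under stated assumptions: a fraction $r$ of the unordered pairs is revealed, each revealed entry is $+1$ for a same-class pair and $-1$ otherwise, and "noise" means flipping the sign of a revealed entry with probability $1 - p$. The function name `sample_constraints` and the flipping rule are illustrative choices, not details confirmed by the text.

```python
import numpy as np


def sample_constraints(labels, r, p, seed=0):
    """Build a symmetric side-information matrix E with entries in {-1, 0, +1}.

    Assumptions: r is the fraction of unordered pairs that are revealed,
    and each revealed sign is flipped independently with probability 1 - p.
    """
    rng = np.random.default_rng(seed)
    labels = np.asarray(labels)
    n = labels.shape[0]
    rows, cols = np.triu_indices(n, k=1)           # all unordered pairs i < j
    m = int(round(r * rows.size))                  # number of pairs to reveal
    chosen = rng.choice(rows.size, size=m, replace=False)
    i, j = rows[chosen], cols[chosen]
    signs = np.where(labels[i] == labels[j], 1, -1)
    flip = rng.random(m) > p                       # True with probability 1 - p
    signs = np.where(flip, -signs, signs)
    E = np.zeros((n, n), dtype=int)
    E[i, j] = signs
    E[j, i] = signs                                # keep E symmetric
    return E


if __name__ == "__main__":
    y = np.repeat(np.arange(3), 10)
    E = sample_constraints(y, r=0.05, p=0.9)
    print(np.count_nonzero(E), "nonzero entries out of", E.size)
```

With $p = 1$ no sign is flipped, so every nonzero entry of `E` agrees with the true labels; lowering `p` corrupts a growing share of the revealed pairs.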
First, note that the results for K-means and DP-means are essentially the same across noise rates,⁷ since these algorithms do not make use of constraints. Another observation is that MPCKMeans has the best performance for $p = 1$, but its performance drops sharply already at $p = 0.95$ (its F-measure falls from 0.94 to 0.46 in Table 2). This method is therefore a good option only when the side information is relatively pure. The other methods, RDP-means, TVClust and LCVQE, also degrade as the noise level increases, although the drops for TVClust and RDP-means are smaller.

Average all parameters varying amount of side information: To better understand the effect of side information, we unroll the results of Table 2 and show the performance as a function of $r$. The results are shown in Table 3.

Unsurprisingly, adding constraints (increasing $r$) increases the performance of the algorithms that make use of them. Interestingly, for $p = 0.8$ and $r = 0.01$, the best algorithms that use constraints perform similarly to K-means and DP-means, which do not use constraints. This suggests there is room for improvement in handling noisy constraints.

Effect of deviation from true number of clusters: Most of the algorithms we analyze depend on the true number of clusters, which is usually unknown in practice. Here, we investigate the sensitivity of those algorithms to perturbations of the true value of $k$. The DP-means algorithm of Jiang et al. (2012) is said to be less sensitive to the choice of $k$, since its parameter depends only weakly on $k$. Since RDP-means is derived from DP-means, we expect it to be relatively robust to deviations from the actual $k$ as well.

For all algorithms and for each dataset, we set $p = 1$ and $r = 0.03$ and set the number of clusters to $k - \text{deviation}$, where $\text{deviation} \in \{\pm 3, \pm 2, \pm 1, 0\}$.⁸ The results are shown in Figure 3.
The $x$-axis shows the value of deviation and the $y$-axis shows the F-measure. Notice that the performance of DP-means is clearly stable for different choices of $k$, which supports the claim made in Jiang et al. (2012). Similarly, RDP-means and TVClust show very stable results. MPCKMeans generally works well unless $k$ is underestimated.

⁷ Small variations in the results of K-means are possible due to the random initialization of each run.

⁸ For some datasets where $k = 3$, we dropped the value deviation = 3. Also, the implementation of LCVQE that we used needs at least 2 clusters to work. We are not aware of a more general available implementation of this algorithm.

r = 0.01

| Method | p = 1: F | AdjRnd | NMI | p = 0.95: F | AdjRnd | NMI | p = 0.9: F | AdjRnd | NMI | p = 0.8: F | AdjRnd | NMI |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| K-means | 0.62 | 0.45 | 0.53 | 0.62 | 0.45 | 0.53 | 0.61 | 0.44 | 0.53 | 0.60 | 0.43 | 0.52 |
| DP-means | 0.58 | 0.39 | 0.48 | 0.59 | 0.39 | 0.48 | 0.58 | 0.38 | 0.48 | 0.58 | 0.38 | 0.48 |
| TVClust (variational) | 0.72 | 0.62 | 0.69 | 0.69 | 0.57 | 0.65 | 0.66 | 0.52 | 0.60 | 0.58 | 0.43 | 0.53 |
| RDP-means | 0.84 | 0.77 | 0.76 | 0.79 | 0.71 | 0.68 | 0.69 | 0.58 | 0.57 | 0.56 | 0.39 | 0.41 |
| MPCKMeans | 0.83 | 0.76 | 0.73 | 0.52 | 0.23 | 0.29 | 0.49 | 0.16 | 0.21 | 0.44 | 0.07 | 0.12 |
| LCVQE | 0.73 | 0.62 | 0.66 | 0.69 | 0.56 | 0.59 | 0.62 | 0.46 | 0.49 | 0.52 | 0.32 | 0.36 |

r = 0.03

| Method | p = 1: F | AdjRnd | NMI | p = 0.95: F | AdjRnd | NMI | p = 0.9: F | AdjRnd | NMI | p = 0.8: F | AdjRnd | NMI |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| K-means | 0.60 | 0.43 | 0.52 | 0.60 | 0.42 | 0.52 | 0.61 | 0.44 | 0.53 | 0.61 | 0.45 | 0.53 |
| DP-means | 0.59 | 0.39 | 0.49 | 0.58 | 0.39 | 0.49 | 0.59 | 0.39 | 0.48 | 0.58 | 0.38 | 0.47 |
| TVClust (variational) | 0.78 | 0.70 | 0.75 | 0.77 | 0.69 | 0.74 | 0.76 | 0.68 | 0.73 | 0.70 | 0.59 | 0.67 |
| RDP-means | 0.98 | 0.98 | 0.96 | 0.98 | 0.97 | 0.94 | 0.93 | 0.90 | 0.86 | 0.77 | 0.69 | 0.63 |
| MPCKMeans | 0.99 | 0.99 | 0.97 | 0.43 | 0.07 | 0.10 | 0.41 | 0.04 | 0.06 | 0.40 | 0.02 | 0.04 |
| LCVQE | 0.86 | 0.81 | 0.85 | 0.62 | 0.47 | 0.52 | 0.57 | 0.38 | 0.45 | 0.48 | 0.25 | 0.33 |

r = 0.05

| Method | p = 1: F | AdjRnd | NMI | p = 0.95: F | AdjRnd | NMI | p = 0.9: F | AdjRnd | NMI | p = 0.8: F | AdjRnd | NMI |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| K-means | 0.62 | 0.45 | 0.54 | 0.59 | 0.42 | 0.52 | 0.60 | 0.43 | 0.52 | 0.60 | 0.42 | 0.51 |
| DP-means | 0.58 | 0.39 | 0.49 | 0.59 | 0.39 | 0.48 | 0.60 | 0.42 | 0.50 | 0.58 | 0.38 | 0.48 |
| TVClust (variational) | 0.81 | 0.74 | 0.79 | 0.79 | 0.72 | 0.77 | 0.78 | 0.70 | 0.75 | 0.74 | 0.66 | 0.72 |
| RDP-means | 0.96 | 0.96 | 0.95 | 0.99 | 0.99 | 0.98 | 0.98 | 0.97 | 0.94 | 0.91 | 0.87 | 0.82 |
| MPCKMeans | 1.00 | 1.00 | 0.99 | 0.44 | 0.12 | 0.13 | 0.41 | 0.08 | 0.09 | 0.38 | 0.03 | 0.05 |
| LCVQE | 0.88 | 0.84 | 0.86 | 0.60 | 0.42 | 0.47 | 0.55 | 0.34 | 0.41 | 0.50 | 0.25 | 0.34 |

Table 3: An expanded version of Table 2, showing how the algorithms perform under different levels of noise for each constraint sampling rate, averaged over the UCI datasets.

5.3 ImageNet Clustering

We repeat the experiment of Jiang et al. (2012), in which 100 images from 10 different categories of the ImageNet data were sampled.⁹ Each image was processed with a standard visual bag-of-words pipeline: SIFT was applied to image patches and the resulting SIFT vectors were mapped into 1000 visual words. The SIFT feature counts were then used as the features of that image, and since these features are discrete counts, they were modeled as coming from a multinomial distribution. We therefore used the corresponding divergence measure, the KL-divergence (as opposed to Euclidean distance in the Gaussian case), as the distance metric in the clustering.

We use Laplace smoothing,¹⁰ with a smoothing parameter of 0.3, to remove the ill-conditioning (division by zero inside the KL-divergence). We also include the clustering results obtained with a Gaussian model, to show the importance of choosing the appropriate distribution. The results of the evaluation are in Table 4. RDP-means has the best result, as it makes use of the side information.

We also investigate the behavior of RDP-means as a function of the percentage of pairs sampled. The result is depicted in Figure 4.
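The smoothed divergence used for the multinomial model in this section can be sketched as follows. This is an illustration under assumptions rather than the authors' code: both count vectors receive additive (Laplace) smoothing with the same parameter before normalization, and the function name `smoothed_kl` is hypothetical.

```python
import numpy as np


def smoothed_kl(counts_p, counts_q, alpha=0.3):
    """KL(p || q) between two count vectors after additive smoothing.

    Assumption: the same smoothing constant alpha is added to every bin
    of both vectors, so no probability is exactly zero.
    """
    p = np.asarray(counts_p, dtype=float) + alpha
    q = np.asarray(counts_q, dtype=float) + alpha
    p /= p.sum()                      # normalize counts to probabilities
    q /= q.sum()
    return float(np.sum(p * np.log(p / q)))


if __name__ == "__main__":
    image_counts = [5, 0, 2, 0]       # visual-word counts of one image
    cluster_counts = [0, 4, 1, 1]     # aggregated counts of one cluster
    print(smoothed_kl(image_counts, cluster_counts))
```

Without the smoothing term, any visual word present in the image but absent from the cluster would make the ratio inside the logarithm infinite; adding `alpha` keeps the divergence finite.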
When the sampling rate is close to zero, the model is equivalent to DP-means. Figure 4 shows that the performance of the model increases consistently as we add more constraints. When we sample only 6% of the pairs, we are able to reconstruct the true clustering almost fully.

⁹ The set of images from each cluster and the extracted SIFT features are available at http://image-net.org.

¹⁰ See http://en.wikipedia.org/wiki/Additive_smoothing.

[Figure 3: Comparison of clustering quality on each UCI dataset (Ecoli, Balance, Glass, Wine, Iris) with deviations from the actual number of clusters. The $x$-axis shows deviation, where the number of clusters declared to each algorithm is $k - \text{deviation}$. The $y$-axis shows the F-measure of each clustering result. Curves: K-means, RDP-means, DP-means, MPCKMeans, LCVQE, TVClust (variational).]

| | DP-means, Gaussian | DP-means, Multinomial | K-means, Gaussian | K-means, Multinomial | RDP-means, Gaussian | RDP-means, Multinomial |
|---|---|---|---|---|---|---|
| F-measure | 0.18 | 0.22 | 0.18 | 0.25 | 0.20 | 0.44 |

Table 4: Results of clustering on the ImageNet dataset. For each measure, the result is averaged over 10 runs.

Acknowledgments

The authors would like to thank Ke Jiang for providing the data used in the UCI image clustering.
We also thank Daphne Tsatsoulis, Eric Horn, Shyam Upadhyay, Adam Volrath and Stephen Mayhew for helpful comments on the draft.

[Figure 4: Effect of adding side information on the performance of RDP-means. As more information is added, performance improves. The $x$-axis shows the rate of pairs of constraints added to the constraint matrix $E$; the $y$-axis shows the performance measure (NMI and F1).]

References

Aldous, David. Random walks on finite groups and rapidly mixing Markov chains. In Séminaire de Probabilités XVII 1981/82, pp. 243–297. Springer, 1983.

Antoniak, Charles E. Mixtures of Dirichlet processes with applications to Bayesian nonparametric problems. The Annals of Statistics, pp. 1152–1174, 1974.

Banerjee, Arindam, Merugu, Srujana, Dhillon, Inderjit S, and Ghosh, Joydeep. Clustering with Bregman divergences. The Journal of Machine Learning Research, 6:1705–1749, 2005.

Basu, Sugato, Davidson, Ian, and Wagstaff, Kiri. Constrained clustering: Advances in algorithms, theory, and applications. CRC Press, 2008.

Bilenko, Mikhail, Basu, Sugato, and Mooney, Raymond J. Integrating constraints and metric learning in semi-supervised clustering. In Proceedings of the twenty-first international conference on Machine learning, pp. 11. ACM, 2004.

Blackwell, D. and MacQueen, J. Ferguson distributions via Polya urn schemes. The Annals of Statistics, 1:353–355, 1973.

Blum, Avrim and Mitchell, Tom. Combining labeled and unlabeled data with co-training. In Proceedings of the Eleventh Annual Conference on Computational Learning Theory, COLT' 98, pp. 92–100, New York, NY, USA, 1998. ACM. ISBN 1-58113-057-0. doi: 10.1145/279943.279962. URL http://doi.acm.org/10.1145/279943.279962.

Bregman, Lev M. The relaxation method of finding the common point of convex sets and its application to the solution of problems in convex programming. USSR Computational Mathematics and Mathematical Physics, 7(3):200–217, 1967.

Covoes, Thiago F, Hruschka, Eduardo R, and Ghosh, Joydeep. A study of k-means-based algorithms for constrained clustering. Intelligent Data Analysis, 17(3):485–505, 2013.

Erdös, P. and Rényi, A. On random graphs, I. Publicationes Mathematicae (Debrecen), 6:290–297, 1959. URL http://www.renyi.hu/~p_erdos/Erdos.html#1959-11.

Ferguson, Thomas S. A Bayesian analysis of some nonparametric problems. The Annals of Statistics, pp. 209–230, 1973.

Finley, Thomas and Joachims, Thorsten. Supervised clustering with support vector machines. In Proceedings of the 22nd international conference on Machine learning, pp. 217–224. ACM, 2005.

Forster, Jürgen and Warmuth, Manfred K. Relative expected instantaneous loss bounds. Journal of Computer and System Sciences, 64(1):76–102, 2002.

Fraley, Chris and Raftery, Adrian E. Model-based clustering, discriminant analysis, and density estimation. Journal of the American Statistical Association, 97(458):611–631, 2002.

Jiang, Ke, Kulis, Brian, and Jordan, Michael. Small-variance asymptotics for exponential family Dirichlet process mixture models. In Advances in Neural Information Processing Systems 25, pp. 3167–3175, 2012.

Jin, Xin, Luo, Jiebo, Yu, Jie, Wang, Gang, Joshi, Dhiraj, and Han, Jiawei. Reinforced similarity integration in image-rich information networks. Knowledge and Data Engineering, IEEE Transactions on, 25(2):448–460, 2013.

Khoreva, Anna, Galasso, Fabio, Hein, Matthias, and Schiele, Bernt. Learning must-link constraints for video segmentation based on spectral clustering. In Pattern Recognition, pp. 701–712. Springer, 2014.

Kulis, Brian and Jordan, Michael I. Revisiting k-means: New algorithms via Bayesian nonparametrics. arXiv preprint arXiv:1111.0352, 2011.

Neal, Radford M. Markov chain sampling methods for Dirichlet process mixture models. Journal of Computational and Graphical Statistics, 9(2):249–265, 2000.

Ng, Andrew Y, Jordan, Michael I, Weiss, Yair, et al. On spectral clustering: Analysis and an algorithm. Advances in Neural Information Processing Systems, 2:849–856, 2002.

Orbanz, Peter and Buhmann, Joachim M. Nonparametric Bayesian image segmentation. International Journal of Computer Vision, 77(1-3):25–45, 2008.

Pelleg, Dan and Baras, Dorit. K-means with large and noisy constraint sets. In Machine Learning: ECML 2007, pp. 674–682. Springer, 2007.

Rangapuram, Syama S and Hein, Matthias. Constrained 1-spectral clustering. In International Conference on Artificial Intelligence and Statistics, pp. 1143–1151, 2012.

Shi, Jianbo and Malik, Jitendra. Normalized cuts and image segmentation. Pattern Analysis and Machine Intelligence, IEEE Transactions on, 22(8):888–905, 2000.

Tierney, Luke and Kadane, Joseph B. Accurate approximations for posterior moments and marginal densities. Journal of the American Statistical Association, 81(393):82–86, 1986.

Wagstaff, Kiri and Cardie, Claire. Clustering with instance-level constraints. In AAAI/IAAI, pp. 1097, 2000.

Wagstaff, Kiri, Cardie, Claire, Rogers, Seth, Schrödl, Stefan, et al. Constrained k-means clustering with background knowledge. In ICML, volume 1, pp. 577–584, 2001.

Wang, Xiang and Davidson, Ian. Flexible constrained spectral clustering. In Proceedings of the 16th ACM SIGKDD international conference on Knowledge discovery and data mining, pp. 563–572. ACM, 2010.

Zha, Hongyuan, He, Xiaofeng, Ding, Chris, Gu, Ming, and Simon, Horst D. Spectral relaxation for k-means clustering. In Advances in Neural Information Processing Systems, pp. 1057–1064, 2001.

Zhu, Jun, Chen, Ning, and Xing, Eric P. Infinite SVM: a Dirichlet process mixture of large-margin kernel machines. In Proceedings of the 28th International Conference on Machine Learning (ICML-11), pp. 617–624, 2011.
