Towards Data-Driven Autonomics in Data Centers

Continued reliance on human operators for managing data centers is a major impediment for them from ever reaching extreme dimensions. Large computer systems in general, and data centers in particular, will ultimately be managed using predictive compu…

Authors: Alina Sîrbu, Ozalp Babaoglu

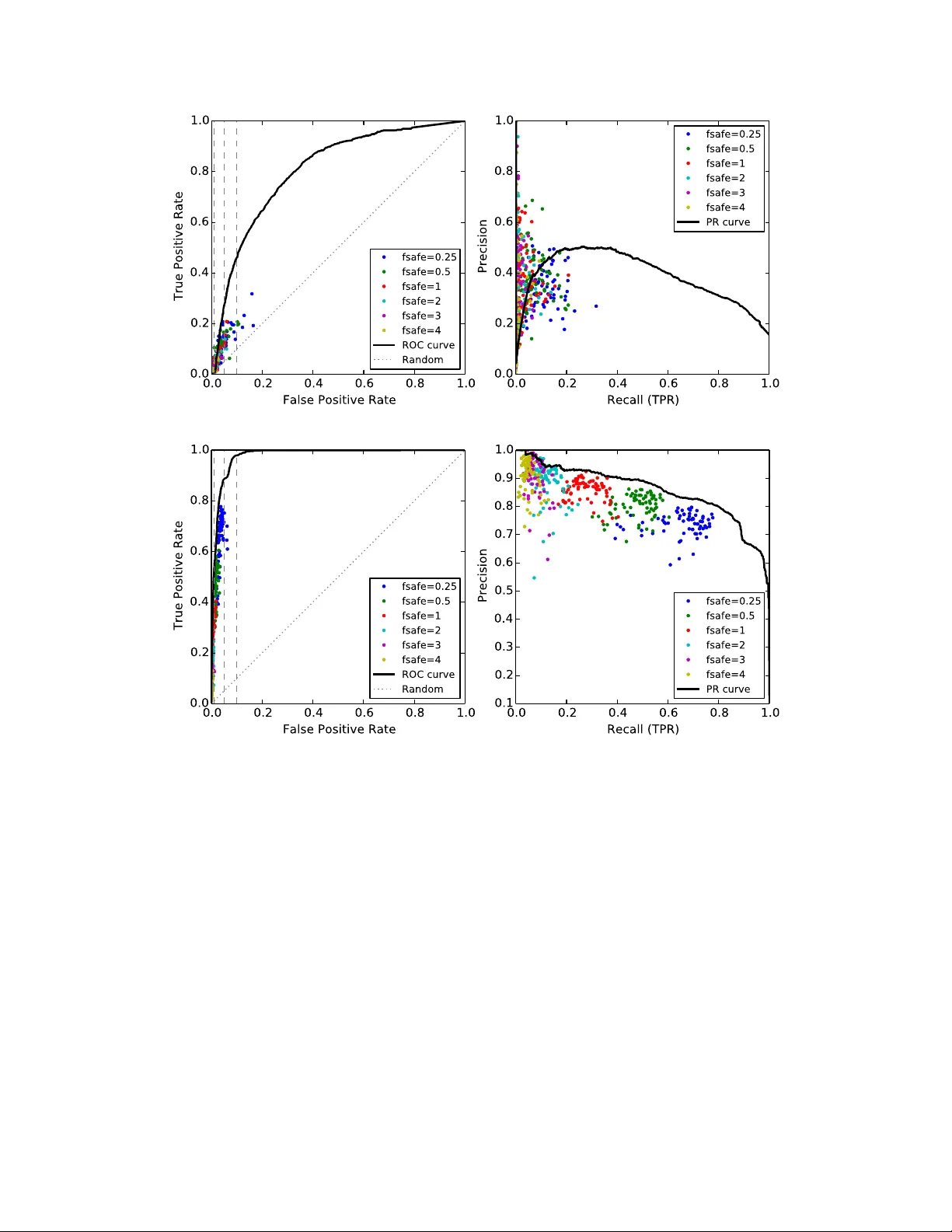

Alina Sîrbu, Ozalp Babaoglu
Department of Computer Science and Engineering, University of Bologna
Mura Anteo Zamboni 7, 40126 Bologna, Italy
Email: alina.sirbu@unibo.it, ozalp.babaoglu@unibo.it

Abstract: Continued reliance on human operators for managing data centers is a major impediment to their ever reaching extreme dimensions. Large computer systems in general, and data centers in particular, will ultimately be managed using predictive computational and executable models obtained through data-science tools, and at that point, the intervention of humans will be limited to setting high-level goals and policies rather than performing low-level operations. Data-driven autonomics, where management and control are based on holistic predictive models that are built and updated using generated data, opens one possible path towards limiting the role of operators in data centers. In this paper, we present a data-science study of a public Google dataset collected in a 12K-node cluster with the goal of building and evaluating a predictive model for node failures. We use BigQuery, the big data SQL platform from the Google Cloud suite, to process massive amounts of data and generate a rich feature set characterizing machine state over time. We describe how an ensemble classifier can be built out of many Random Forest classifiers, each trained on these features, to predict if machines will fail in a future 24-hour window. Our evaluation reveals that if we limit false positive rates to 5%, we can achieve true positive rates between 27% and 88% with precision varying between 50% and 72%. We discuss the practicality of including our predictive model as the central component of a data-driven autonomic manager and operating it on-line with live data streams (rather than off-line on data logs).
All of the scripts used for BigQuery and classification analyses are publicly available from the authors' website.

Keywords: Data science; predictive analytics; Google cluster trace; log data analysis; failure prediction; machine learning classification; ensemble classifier; random forest; BigQuery

I. INTRODUCTION

Modern data centers are the engines of the Internet: they run e-commerce sites and cloud-based services accessed from mobile devices, and power the social networks utilized each day by hundreds of millions of users. Given the pervasiveness of these services in many aspects of our daily lives, continued availability of data centers is critical. And when continued availability is not possible, service degradations and outages need to be foreseen in a timely manner so as to minimize their impact on users. For the most part, current automated data center management tools are limited to low-level infrastructure provisioning, resource allocation, scheduling or monitoring tasks with no predictive capabilities. This leaves the brunt of detecting and resolving undesired behaviors to armies of operators who continuously monitor streams of data displayed on monitors. Even at the highly optimistic rate of 26,000 servers managed per staffer¹, this situation is not sustainable if data centers are ever to reach exascale dimensions. Applying traditional autonomic computing techniques to large data centers is problematic since their complex system characteristics prohibit building a "cause-effect" system model that is essential for closing the control loop. Furthermore, current autonomic computing technologies are reactive and try to steer the system back to desired states only after undesirable states are actually entered; they lack predictive capabilities to anticipate undesirable states in advance so that proactive actions can be taken to avoid them in the first place.
If data centers are the engines of the Internet, then data is their fuel and exhaust. Data centers generate and store vast amounts of data in the form of logs corresponding to various events and errors in the course of their operation. When these computing infrastructure logs are augmented with numerous other internal and external data channels including power supply, cooling, management actions such as software updates, server additions/removals, configuration parameter changes, network topology modifications, or operator actions to modify electrical wiring or change the physical locations of racks, servers or storage devices, data centers become ripe to benefit from data science. The grand challenge is to exploit the toolset of modern data science and develop a new generation of autonomics that is data-driven, predictive and proactive, based on holistic models that capture a data center as an ecosystem including not only the computer system as such, but also its physical as well as its socio-political environment.

¹ Delfina Eberly, Director of Data Center Operations at Facebook, speaking on "Operations at Scale" at the 7x24 Exchange 2013 Fall Conference.

In this paper we present the results of an initial study towards building predictive models for node failures in data centers. The study is based on a recent Google dataset containing workload and scheduler events emitted by the Borg cluster management system [1] in a cluster of over 12,000 nodes during a one-month period [2], [3]. We employed BigQuery [4], a big data tool from the Google Cloud Platform that allows running SQL-like queries on massive data, to perform an exploratory feature analysis. This step generated a large number of features at various levels of aggregation suitable for use in a machine learning classifier.
The use of BigQuery has allowed us to complete the analysis for large amounts of data (table sizes up to 12TB containing over 100 billion rows) in reasonable amounts of time.

For the classification study, we employed an ensemble that combines the output of multiple Random Forest (RF) classifiers, which themselves are ensembles of Decision Trees. RF were employed due to their proven suitability in situations where the number of features is large [5] and the classes are "unbalanced" [6] such that one of the classes consists mainly of "rare events" that occur with very low frequency. Although individual RF were better than other classifiers that were considered in our initial tests, they still exhibited limited performance, which prompted us to pursue an ensemble approach. While individual trees in RF are based on subsets of features, we used a combination of bagging and data subsampling to build the RF ensemble and tailor the methodology to this particular dataset. Our ensemble classifier was tested on several days from the trace data, resulting in very good performance on some days (up to 88% true positive rate, TPR, at 5% false positive rate, FPR), and modest performance on other days (minimum of 27% TPR at the same 5% FPR). Precision levels in all cases remained between 50% and 72%. We should note that these results are comparable to other failure prediction studies in the field.

The contributions of our work are severalfold. First, we advocate that modern data centers can be scaled to extreme dimensions only by eliminating reliance on human operators by adopting a new generation of autonomics that is data-driven and based on holistic predictive models. Towards this goal, we provide a failure prediction analysis for a dataset that has been studied extensively in the literature from other perspectives.
The model we develop has very promising predictive power and has the potential to form the basis of a data-driven autonomic manager for data centers. Secondly, we propose an ensemble classification methodology tailored to this particular problem where subsampling is combined with bagging and precision-weighted voting to maximize performance. Thirdly, we provide one of the first examples of BigQuery usage in the literature with quantitative evaluation of running times as a function of data size. All of the scripts used for BigQuery and classification analysis are publicly available from our website [7] under the GNU General Public License.

The rest of this paper is organized as follows. The next section describes the process of building features from the trace data. Section III describes our classification approach while our prediction results are presented in Section IV. Related work is discussed in Section V. In Section VI we discuss the issues surrounding the construction of a data-driven autonomic controller based on our predictive model and argue its practicality. Section VII concludes the paper.

II. BUILDING THE FEATURE SET WITH BIGQUERY

The workload trace published by Google contains several tables monitoring the status of the machines, jobs and tasks during a period of approximately 29 days for a cluster of 12,453 machines. This includes task events (over 100 million records, 17GB uncompressed), which follow the state evolution for each task, and task usage logs (over 1 billion records, 178GB uncompressed), which report the amount of resources per task at approximately 5-minute intervals. We have used the data to compute the overall load and status of different cluster nodes at 5-minute intervals. This resulted in a time series for each machine and feature that spans the entire trace (periods when the machine was "up").
We then proceeded to obtain several features by aggregating measures in the original data. Due to the size of the dataset, this aggregation analysis was performed using BigQuery on the trace data directly from Google Cloud Storage. We used the bq command line tool for the entire analysis, and our scripts are available online through our Web site [7].

From task events, we obtained several time series for each machine with a time resolution of 5 minutes. A total of 7 features were extracted, which count the number of tasks currently running, the number of tasks that have started in the last 5 minutes and those that have finished with different exit statuses: evicted, failed, finished normally, killed or lost. From task usage data, we obtained 5 additional features (again at 5-minute intervals) measuring the load at machine level in terms of: CPU, memory, disk time, cycles per instruction (CPI) and memory accesses per instruction (MAI). This resulted in a total of 12 basic features that were extracted. For each feature, at each time step we consider the previous 6 time windows (corresponding to the machine status during the last 30 minutes), obtaining 72 features in total (12 basic features × 6 time windows).

The procedure for obtaining the basic features was extremely fast on the BigQuery platform. For task counts, we started with constructing a table of running tasks, where each row corresponds to one task and includes its start time, end time, end status and the machine it was running on. Starting from this table, we could obtain the time series for each feature for each machine, requiring between 139 and 939 seconds on BigQuery per feature (one separate table per feature was obtained). The features related to machine load were computed by summing over all tasks running on a machine in each time window, requiring between 3585 and 9096 seconds on BigQuery per feature.
The increased execution time is due to the increased table sizes (over 1 billion rows). We then performed a JOIN of all above tables to combine the basic features into a single table with 104,197,215 rows (occupying 7GB). For this analysis, our experience allows us to judge BigQuery as being extremely fast; an equivalent computation would have taken months to perform on a regular PC.

| Aggregation | Average, SD, CV | Correlation |
|---|---|---|
| 1h | 166 (all features) | 45 (6.5) |
| 12h | 864 (all features) | 258.8 (89.1) |
| 24h | 284.6 (86.6) | 395.6 (78.9) |
| 48h | 593.6 (399.2) | 987.2 (590) |
| 72h | 726.6 (411.5) | 1055.47 (265.23) |
| 96h | 739.4 (319.4) | 1489.2 (805.9) |

Table I: Running times required by BigQuery for obtaining features aggregated over different time windows, for two aggregation types: computing averages, standard deviation (SD) and coefficient of variation (CV) versus computing correlations. For 1h and 12h windows, average, SD and CV were computed for all features in a single query. For all other cases, the mean (and standard deviation) of the required times per feature are shown.

A second level of aggregation meant looking at features over longer time windows rather than just the last 5 minutes. At each time step, 3 different statistics (averages, standard deviations and coefficients of variation) were computed for each basic feature obtained at the previous step. This was motivated by the suspicion that not only feature values but also their deviation from the mean could be important in understanding system behavior. Six different running windows of sizes 1, 12, 24, 48, 72 and 96 hours were used to capture behavior at various time resolutions. This resulted in 216 additional features (3 statistics × 12 features × 6 window sizes).

In order to generate these aggregated features, a set of intermediate tables was used. For each time point, these tables consisted of the entire set of data points to be averaged.
For instance, for 1-hour averages, the table would contain a set of 6 values for each feature and for each time point, showing the evolution of the system over the past hour. While generating these tables was not time consuming (requiring between 197 and 960 seconds), their sizes were quite impressive: ranging from 143 GB (over 1 billion rows) for 1 hour up to 12.5 TB (over 100 billion rows) in the case of the 96-hour window. Processing these tables to obtain the aggregated features of interest required significant resources and would not have been possible without the BigQuery platform. Even then, direct queries using a single GROUP BY operation to obtain all 216 features were not possible; only one basic feature could be handled at a time, and the results were combined into a single table at the end. Table I shows statistics over the time required to obtain one feature for the different window sizes.

Although independent feature values are important, another criterion that could be important for prediction is the relations that exist between different measures. Correlation between features is one such measure, with different correlation values indicating changes in system behavior. Hence we introduced a third level of aggregation of the data by computing correlations between a chosen set of feature pairs, again over various window sizes (1 to 96 hours as before). We chose 7 features to analyze: number of running, started and failed jobs together with CPU, memory, disk time and CPI. By computing correlations between all possible pairings of the 7 features, we obtained a total of 21 correlation values for each window size. This introduces 126 additional features to our dataset (21 pairs × 6 window sizes). The BigQuery analysis started from the same intermediate tables as before and computed correlations for one feature pair at a time.
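As an illustration of the second and third aggregation levels, the following minimal Python sketch computes the three window statistics and a pairwise correlation for in-memory lists, and checks the feature count. It is not the authors' BigQuery code: the use of the population standard deviation and of the Pearson coefficient are assumptions (the text only says "standard deviations" and "correlations"), and the feature names in `CORR_FEATURES` are hypothetical labels.

```python
import math
from itertools import combinations

def window_stats(values):
    """Return (mean, SD, CV) for the samples of one feature in one time window."""
    n = len(values)
    mean = sum(values) / n
    # Population SD is an assumption; the text does not say which variant was used.
    sd = math.sqrt(sum((v - mean) ** 2 for v in values) / n)
    # CV is undefined for a zero mean; 0.0 is used here as a placeholder.
    cv = sd / mean if mean != 0 else 0.0
    return mean, sd, cv

def pearson(xs, ys):
    """Pearson correlation of two equally long windows (assumed correlation type)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        return 0.0  # constant window: correlation undefined, placeholder value
    return cov / math.sqrt(vx * vy)

# Hypothetical labels for the 7 features chosen for the correlation analysis.
CORR_FEATURES = ["running", "started", "failed", "cpu", "memory", "disk_time", "cpi"]
WINDOW_HOURS = [1, 12, 24, 48, 72, 96]

PAIRS = list(combinations(CORR_FEATURES, 2))       # all unordered feature pairs
N_CORR_FEATURES = len(PAIRS) * len(WINDOW_HOURS)   # pairs x window sizes
```

Computing one pair per call mirrors the one-feature-pair-at-a-time strategy described in the text, although here it is only a matter of convenience.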
As can be seen in Table I, the correlation step was more time consuming than the previous aggregation step, yet it still remains manageable considering the size of the data. The amount of data processed for these queries ranged from 49.6GB (per feature pair for 1-hour windows) to 4.33TB (per feature pair for 96-hour windows), resulting in a higher processing cost (5 USD per TB processed). Yet again, a similar analysis would not have been possible without the BigQuery platform.

The Google trace also reports machine events. These are scheduler events corresponding to machines being added to or removed from the pool of resources. Of particular interest are REMOVE events, which can be due to two causes: machine failures or machine software updates. The goal of this work is to predict REMOVE events due to machine failures, so the two causes have to be distinguished. Prompted by our discussions, publishers of the Google trace investigated the best way to perform this distinction and suggested looking at the length of time that machines remain down, that is, the time from the REMOVE event of interest to the next ADD event for the same machine. If this "down time" is large, then we can assume that the REMOVE event was due to a machine failure, while if it is small, the machine was most likely removed to perform a software update. To ensure that an event considered to be a failure is indeed a real failure, we used a relatively long "down time" threshold of 2 hours, which is greater than the time required for a typical software update. Based on this threshold, out of a total of 8,957 REMOVE events, 2,298 were considered failures, and were the target of our predictive study. For the rest of the events, for which we cannot be sure of the cause, the data points in the preceding 24-hour window were removed completely from the dataset.
An alternative would have been considering them part of the SAFE class; however, this might not be true for some of the points. Thus, removing them completely ensures that all data labeled as SAFE are in fact SAFE.

To the above features based mostly on load measures, we added two new features: the up time for each machine (time since the last corresponding ADD event) and the number of REMOVE events for the entire cluster within the last hour. This resulted in a total of 416 features for 104,197,215 data points (almost 300GB of processed data). Figure 1 displays the time series for 4 selected features (and the REMOVE events) at one typical machine.

Figure 1: Four time series (4 out of 416 features) for one machine in the system. The features shown are: CPU for the last time window, CPU averages over 12 hours, CPU coefficient of variation for the last 12 hours and correlation between CPU and number of running jobs in the last 12 hours. Grey vertical lines indicate times of REMOVE events, some followed by gaps during which the machine was unavailable. The large gap from ∼250 hours to ∼370 hours is an example of a long machine downtime, following a series of multiple failures (cluster of grey vertical lines around 250 hours). In this case, the machine probably needed more extensive investigation and repair before being inserted back in the scheduler pool.

III. CLASSIFICATION APPROACH

The features obtained in the previous section were used for classification with the Random Forest (RF) classifier. The data points were separated into two classes: SAFE (negatives) and FAIL (positives). To do this, for each data point (corresponding to one machine at a certain time point) we computed time_to_remove as the time to the next REMOVE event. Then, all points with time_to_remove less than 24 hours were assigned to the class FAIL while all others were assigned to the class SAFE.
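The labeling rule can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the authors' script: times are taken to be in seconds, the function names are hypothetical, a REMOVE event with no later ADD is treated as a failure, and the label of a point is decided by the next REMOVE event only.

```python
HOUR = 3600                      # seconds; the time unit is an assumption
DOWN_TIME_THRESHOLD = 2 * HOUR   # 2-hour "down time" threshold from the text
HORIZON = 24 * HOUR              # 24-hour prediction window from the text

def classify_remove(remove_time, next_add_time):
    """Classify one REMOVE event by the machine's down time."""
    if next_add_time is None:
        # Assumption: a machine that never returns is counted as a failure.
        return "failure"
    down_time = next_add_time - remove_time
    return "failure" if down_time > DOWN_TIME_THRESHOLD else "uncertain"

def label_point(t, remove_events):
    """Label a data point at time t for one machine.

    remove_events is a time-sorted list of (time, kind) pairs, where kind is
    the output of classify_remove. Returns "FAIL", "SAFE", or None when the
    point must be dropped (within 24 hours before an uncertain REMOVE).
    """
    for event_time, kind in remove_events:
        if event_time >= t:                      # next REMOVE event
            time_to_remove = event_time - t
            if time_to_remove < HORIZON:
                return "FAIL" if kind == "failure" else None
            return "SAFE"
    return "SAFE"                                # no further REMOVE in the trace
```

For example, a point 10 hours before a failure is labeled FAIL, while a point 10 hours before a REMOVE of uncertain cause is discarded rather than labeled SAFE.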
We extracted all the FAIL data points corresponding to real failures (108,365 data points) together with a subset of the SAFE class, corresponding to 0.5% of the total by random subsampling (544,985 points after subsampling). We used this procedure to deal with the fact that the SAFE class is much larger than the FAIL class and classifiers have difficulty learning patterns from very imbalanced datasets. Subsampling is one way of reducing the extent of this imbalance [8]. Even after this subsampling procedure, negatives are about five times the number of positives. These 653,350 data points (SAFE plus FAIL) formed the basis of our predictive study.

Given the large number of features, some might be more useful than others, hence we explored two types of feature selection mechanisms. One was principal component analysis, which uses the original features to build a set of principal components: additional features that account for most of the variability in the data. Then one can use only the top principal components for classification, since those should contain the most important information. We trained classifiers with an increasing number of principal components; however, the performance obtained was not better than using the original features. A second mechanism was to filter the original features based on their correlation to the time to the next failure event (time_to_remove above). Correlations were in the interval [−0.3, 0.45], and we used only those features with absolute correlation larger than a threshold. We found that the best performance was obtained with a threshold of zero, which means again using all features. Hence, our attempts to reduce the feature set did not produce better results than the RF trained directly on the original features. One reason for this may be the fact that the RF itself performs feature selection when training the Decision Trees.
It appears that the RF mechanism performs better in this case than correlation-based filtering or principal component analysis.

To evaluate the performance of our approach, we employed cross validation. Given the procedure we used to define the two classes, there are multiple data points corresponding to the same failure (data over 24 hours with 5-minute resolution). Since some of these data points are very similar, choosing the train and test data cannot be done by selecting random subsets. While random selection may give extremely good prediction results, it is not realistic since we would be using test data which is too similar to the training data. This is why we opted for a time-based separation of train and test data. We considered basing the training on data over a 10-day window, followed by testing based on data over the next day with no overlap with the training data. Hence, the test day started 24 hours after the last training data point. The first two days were omitted in order to reduce the effect of incomplete time windows on aggregated features. In this manner, fifteen train/test pairs were obtained and used as benchmarks to evaluate our analysis (see Figure 2). This forward-in-time cross validation procedure ensures that classification performance is realistic and not an artifact of the structure of the data. Also, it mimics the way failure prediction would be applied in a live data center, where every day a model could be trained on past data to predict future failures.

Figure 2: Cross validation approach: forward-in-time testing. Ten days were used for training and one day for testing. A set of 15 benchmarks (train/test pairs) were obtained by sliding the train/test window over the 29-day trace.
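The sliding train/test windows can be generated with a few lines of Python. The sketch below is an illustration, not the authors' code: the function name and the day indexing are hypothetical, and the exact mapping of the 15 benchmarks onto trace days is not given in the text. With a one-day gap between training and testing, 28 usable days happen to yield 15 pairs, which is used here only as an assumed configuration.

```python
def forward_splits(n_days, train_days=10, gap_days=1, test_days=1, skip_days=2):
    """Return (train_day_indices, test_day_indices) pairs, sliding by one day.

    Days are 0-indexed. skip_days initial days are omitted, and gap_days
    separate the last training day from the first test day.
    """
    splits = []
    start = skip_days
    while start + train_days + gap_days + test_days <= n_days:
        train = list(range(start, start + train_days))
        test_start = start + train_days + gap_days
        test = list(range(test_start, test_start + test_days))
        splits.append((train, test))
        start += 1
    return splits

# Assumed configuration: 28 usable days of trace data.
benchmarks = forward_splits(28)
```

Because every test day lies strictly after its training window, no data point describing the same failure can appear on both sides of a split.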
Given that many points from the FAIL class are very similar, which is not the case for the SAFE class due to initial subsampling, the information in the SAFE class is still overwhelmingly large. This prompted us to further subsample the negative class in order to obtain the training data. This was performed in such a way that the ratio between SAFE and FAIL data points is equal to a parameter fsafe. We varied this parameter with the values {0.25, 0.5, 1, 2, 3, 4} while using all of the data points from the positive class so as not to miss any useful information. This applied only for training data: for testing we always used all data from both the negative and positive classes (out of the base dataset of 653,350 points). We also used RF of different sizes, with the number of Decision Trees varying from 2 to 15 with a step of 1 (resulting in 14 different values).

As we will discuss in the following section, the performance of the individual classifiers, while better than random, was judged to be not satisfactory. This is why we opted for an ensemble method, which builds a series of classifiers and then selects and combines them to provide the final classification. Ensembles can enhance the power of low performing individual classifiers [5], especially if these are diverse [9], [10]: if they give false answers on different data points (independent errors), then combining their knowledge can improve accuracy. To create diverse classifiers, one can vary the model parameters but also train them with different data (known as the bagging method [5]). Bagging matches very well with subsampling to overcome the rare events problem, and in fact it has been shown to be effective for the class-imbalance problem [8]. Hence, we adopt a similar approach to build our individual classifiers.
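The fsafe-controlled subsampling of the negative class can be sketched as below. This is a simplified illustration that draws an independent random sample for each training set, which is an assumption made for brevity; the function names are hypothetical, and data points are represented by arbitrary Python objects.

```python
import random

FSAFE_VALUES = [0.25, 0.5, 1, 2, 3, 4]   # SAFE/FAIL ratios from the text
TREE_COUNTS = list(range(2, 16))         # RF sizes 2..15 from the text

def build_training_set(train_pos, train_neg, fsafe, rng):
    """Keep every FAIL point and a random SAFE subset of size fsafe * |FAIL|."""
    n_neg = min(len(train_neg), int(fsafe * len(train_pos)))
    return list(train_pos) + rng.sample(list(train_neg), n_neg)

def parameter_grid():
    """All (fsafe, tree_count) combinations used to diversify the classifiers."""
    return [(fs, tc) for fs in FSAFE_VALUES for tc in TREE_COUNTS]
```

Passing an explicit `random.Random` instance as `rng` keeps the subsampling reproducible across runs, which helps when comparing classifiers trained with different parameter values.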
Every time a new classifier is trained, a new training dataset is built by considering all the data points in the positive class and a random subset of the negative class. As described earlier, the size of this subset is defined by the fsafe parameter. By varying the value of fsafe and the number of trees in the RF algorithm, we created diverse classifiers. The following algorithm details the procedure used to build the individual classifiers in the ensemble.

    Require: train_pos, train_neg, Shuffle(), Train()
    fsafe ← {0.25, 0.5, 1, 2, 3, 4}
    tree_count ← {2..15}
    classifiers ← {}
    start ← 0
    for all fs ∈ fsafe do
        for all tc ∈ tree_count do
            end ← start + fs ∗ |train_pos|
            if end ≥ |train_neg| then
                start ← 0
                end ← start + fs ∗ |train_pos|
                Shuffle(train_neg)
            end if
            train_data ← train_pos + train_neg[start : end]
            classifier ← Train(train_data, tc)
            append(classifiers, classifier)
            start ← end
        end for
    end for

We repeated this procedure 5 times, resulting in 5 classifiers for each combination of the parameters fsafe and RF size. This resulted in a total of 420 RF in the ensemble (5 repetitions × 6 fsafe values × 14 RF sizes).

Once the pool of classifiers is obtained, a combining strategy has to be used. Most existing approaches use the majority vote rule: each classifier votes on the class and the majority class becomes the final decision [5]. Alternatively, a weighted vote can be used, and we opted for precision-weighted voting. For most existing methods, weights correspond to the accuracy of each classifier on training data [11]. In our case, performance on training data is close to perfect and accuracy is generally high, which is why we use precision on a subset of the test data. Specifically, we divide the test data into two halves: an individual test data set and an ensemble test data set. The former is used to evaluate the precision of individual classifiers and obtain a weight for their vote.
The latter provides the final evaluation of the ensemble. All data corresponding to the test day was used, with no subsampling. Table II shows the number of data points used for each benchmark for training and testing. While the parameter fsafe controlled the ratio SAFE/FAIL during training, FAIL instances were much less frequent during testing, varying between 13% and 36% of the number of SAFE instances.

| Benchmark | Train FAIL | Individual Test FAIL | Individual Test SAFE | Ensemble Test FAIL | Ensemble Test SAFE |
|---|---|---|---|---|---|
| 1 | 41485 | 2055 | 9609 | 2055 | 9610 |
| 2 | 41005 | 2010 | 9408 | 2011 | 9408 |
| 3 | 41592 | 1638 | 9606 | 1638 | 9606 |
| 4 | 42347 | 1770 | 9597 | 1770 | 9598 |
| 5 | 42958 | 1909 | 9589 | 1909 | 9589 |
| 6 | 42862 | 1999 | 9913 | 2000 | 9914 |
| 7 | 41984 | 1787 | 9821 | 1787 | 9822 |
| 8 | 39953 | 1520 | 10424 | 1520 | 10424 |
| 9 | 37719 | 1665 | 10007 | 1666 | 10008 |
| 10 | 36818 | 1582 | 9462 | 1583 | 9463 |
| 11 | 35431 | 1999 | 9302 | 1999 | 9302 |
| 12 | 35978 | 3786 | 10409 | 3787 | 10410 |
| 13 | 35862 | 2114 | 9575 | 2114 | 9575 |
| 14 | 39426 | 1449 | 9609 | 1450 | 9610 |
| 15 | 40377 | 1284 | 9783 | 1285 | 9784 |

Table II: Size of training and testing datasets. For training data, the number of SAFE data points is the number of FAIL multiplied by the fsafe parameter at each run.

To perform precision-weighted voting, we first applied each RF$_i$ obtained above to the individual test data and computed their precision $p_i$ as the fraction of points labeled FAIL that were actually failures. In other words, precision is the probability that an instance labeled as a failure is actually a real failure, which is why we decided to use this as a weight. Then we applied each RF to the ensemble test data. For each data point $j$ in this set, each RF provided a classification $o_i^j$ (either 0 or 1 corresponding to SAFE or FAIL, respectively). The classification of the ensemble (the whole set of RF) was then computed as a continuous score

$$s_j = \sum_i o_i^j \, p_i \qquad (1)$$

by summing individual answers weighted by their precision.
Finally, these were normalized by the highest score in the data

$$s'_j = \frac{s_j}{\max_j (s_j)} \qquad (2)$$

The resulting score $s'_j$ is proportional to the likelihood that a data point is in the FAIL class: the higher the score, the more certain we are that we have an actual failure. The following algorithm outlines the procedure of obtaining the final ensemble classification scores.

    Require: classifiers, individual_test, ensemble_test
    Require: Precision(), Classify()
    classification_scores ← {}
    weights ← {}
    for all c ∈ classifiers do
        w ← Precision(c, individual_test)
        weights[c] ← w
    end for
    for all d ∈ ensemble_test do
        score ← 0
        for all c ∈ classifiers do
            score ← score + weights[c] ∗ Classify(c, d)
        end for
        append(classification_scores, score)
    end for
    max ← Max(classification_scores)
    for all s ∈ classification_scores do
        s ← s / max
    end for

It assumes that the set of classifiers is available (classifiers), together with the two test data sets (individual_test and ensemble_test) and procedures to compute precision of a classifier on a dataset (Precision()) and to apply a classifier to a data point (Classify(), which returns 0 for SAFE and 1 for FAIL).

IV. CLASSIFICATION RESULTS

The ensemble classifier was applied to all 15 benchmark datasets. Training was done on an iMac with 3.06GHz Intel Core 2 Duo processor and 8GB of 1067MHz DDR3 memory running OSX 10.9.3. Training of the entire ensemble took between 7 and 9 hours for each benchmark dataset.

Given that the result of the classification is a continuous score (Equation 2), and not a discrete label, evaluation was based on the Receiver Operating Characteristic (ROC) and Precision-Recall (PR) curves. A class can be obtained for a data point $j$ from the score $s'_j$ by using a threshold $s^*$. A data point is considered to be in the FAIL class if $s'_j \geq s^*$. The smaller $s^*$, the more instances are classified as failures.
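Precision-weighted voting as in Equations (1) and (2), followed by thresholding with $s^*$, can be sketched in plain Python. This is a minimal illustration rather than the authors' implementation: classifiers are modeled as hypothetical callables that map a data point to 0 (SAFE) or 1 (FAIL), and test sets are lists of (point, label) pairs.

```python
def precision(predictions, labels):
    """Fraction of points predicted FAIL (1) that are real failures."""
    flagged = [y for p, y in zip(predictions, labels) if p == 1]
    # A classifier that never predicts FAIL gets weight 0 (assumption).
    return sum(flagged) / len(flagged) if flagged else 0.0

def ensemble_scores(classifiers, individual_test, ensemble_test):
    """Normalized precision-weighted scores for every ensemble test point."""
    labels = [y for _, y in individual_test]
    weights = []
    for clf in classifiers:
        preds = [clf(x) for x, _ in individual_test]
        weights.append(precision(preds, labels))           # p_i
    raw = [sum(w * clf(x) for w, clf in zip(weights, classifiers))
           for x, _ in ensemble_test]                      # Equation (1)
    top = max(raw) if raw else 0.0
    return [s / top if top > 0 else 0.0 for s in raw]      # Equation (2)

def classify(scores, threshold):
    """Assign FAIL (1) when the normalized score reaches the threshold s*."""
    return [1 if s >= threshold else 0 for s in scores]
```

Lowering the threshold passed to `classify` can only turn 0 labels into 1 labels, which is the monotonic behavior that the ROC and PR analysis relies on.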
Decreasing $s^*$ increases the number of true positives, but also the number of false positives. Similarly, at different threshold values, a certain precision is obtained. The ROC curve plots the True Positive Rate (TPR) versus the False Positive Rate (FPR) of the classifier as the threshold is varied. Similarly, the PR curve displays the precision versus recall (equal to TPR or Sensitivity). It is common to evaluate a classifier by computing the area under ROC (AUROC) and area under PR (AUPR) curves, which can range from 0 to 1. AUROC values greater than 0.5 correspond to classifiers that perform better than random guesses, while AUPR represents an average classification precision, so, again, the higher the better. AUROC does not depend on the relative distribution of the two classes, and AUPR focuses on the rare positive class, so together they are particularly suitable for class-imbalance problems such as the one at hand.

Figure 3: AUROC and AUPR on ensemble test data for all benchmarks.

Figure 4: ROC and PR curves for worst and best performance across the 15 benchmarks: (a) worst case (Benchmark 4), (b) best case (Benchmark 14). The vertical lines correspond to FPR of 1%, 5% and 10%. Note that parameter fsafe controls the ratio of SAFE to FAIL data in the training datasets.

Figure 3 shows AUROC and AUPR values obtained for all datasets, evaluated on the ensemble test data. For all benchmarks, AUROC values are very good, over 0.75 and up to 0.97. AUPR ranges between 0.38 and 0.87. Performance appears to increase, especially in terms of precision, towards the end of the trace. Lower performance that is observed for the first two benchmarks could be due to the fact that some of the aggregated features (those over 3 or 4 days) are computed with incomplete data at the beginning.
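To make the evaluation procedure concrete, the sketch below traces an ROC curve from continuous scores, computes the area under it with the trapezoidal rule, and reads off the best TPR within an FPR budget such as 5%. It is an illustrative implementation with hypothetical function names, and it assumes that both classes are present in the labels.

```python
def roc_points(scores, labels):
    """(FPR, TPR) points obtained by lowering the threshold over all scores."""
    pos = sum(labels)
    neg = len(labels) - pos          # both pos and neg are assumed non-zero
    points = [(0.0, 0.0)]
    for thr in sorted(set(scores), reverse=True):
        tp = sum(1 for s, y in zip(scores, labels) if s >= thr and y == 1)
        fp = sum(1 for s, y in zip(scores, labels) if s >= thr and y == 0)
        points.append((fp / neg, tp / pos))
    return points

def area_under(points):
    """Trapezoidal area under a curve given as x-sorted (x, y) points."""
    area = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        area += (x1 - x0) * (y0 + y1) / 2
    return area

def tpr_at_fpr(points, max_fpr):
    """Highest TPR among operating points whose FPR stays within max_fpr."""
    return max(tpr for fpr, tpr in points if fpr <= max_fpr)
```

A PR curve can be traced in the same loop by recording tp / (tp + fp) against tp / pos at each threshold.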
Figure 5: Representation of the time axis for one machine. [Diagram labels: Time; REMOVE event (failure); SAFE data; FAIL data; 1 hour; misclassification has higher impact (twice); misclassification has lower impact.] The SAFE and FAIL labels are assigned to time points based on the time to the next failure. Misclassification has different impacts depending on its position on the time axis. In particular, if we misclassify a data point close to the transition from SAFE to FAIL, the impact is lower than if we misclassify far from the boundary. The latter situation would mean flagging a failure even if no failure will appear for a long time, or marking a machine as SAFE when failure is imminent.

To evaluate the effect of the different parameters and the ensemble approach, Figure 4 displays the ROC and PR curves for the benchmarks that give the worst and best results (4 and 14, respectively). The performance of the individual classifiers in the ensemble is also displayed (as points in the ROC/PR space, since their answer is categorical). We can see that individual classifiers result in very low FPR, which is very important in predicting failures. Yet, in many cases, the TPR values are also very low. This means that most test data is classified as SAFE and very few failures are actually identified. TPR appears to increase when the fsafe parameter decreases, but at the expense of the FPR and precision. The plots show quantitatively the clear dependence between the three plotted measures and fsafe values. As the amount of SAFE training data decreases, the classifiers become less stringent and can identify more failures, which is an important result for this class-imbalance problem. Also, the plot shows clearly that individual classifiers obtained with different values for fsafe are diverse, which is critical for obtaining good ensemble performance.
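The ensemble curve compared against these individual classifiers comes from the precision-weighted voting procedure and the normalization of Equation 2. A minimal sketch of that procedure follows, under stated assumptions: classifiers are modeled as plain Python callables returning 0 (SAFE) or 1 (FAIL), `individual_test` is a list of (features, label) pairs, and a classifier that predicts no failures is given weight 0. These representation choices, and the toy threshold classifiers, are illustrative and not taken from the study.

```python
def precision(classifier, dataset):
    """Precision of a binary classifier on (features, label) pairs.
    Returns 0.0 when the classifier predicts no FAIL at all (an assumption)."""
    tp = fp = 0
    for features, label in dataset:
        if classifier(features) == 1:
            if label == 1:
                tp += 1
            else:
                fp += 1
    return tp / (tp + fp) if tp + fp else 0.0


def ensemble_scores(classifiers, individual_test, ensemble_test):
    """Precision-weighted vote per data point, normalized by the highest score."""
    weights = [precision(c, individual_test) for c in classifiers]
    raw = [sum(w * c(x) for w, c in zip(weights, classifiers))
           for x in ensemble_test]
    top = max(raw)
    # Avoid dividing by zero when no classifier flags any point.
    return [s / top for s in raw] if top > 0 else raw


# Two toy classifiers thresholding one feature each.
c1 = lambda x: 1 if x[0] > 0.5 else 0
c2 = lambda x: 1 if x[1] > 0.5 else 0
individual_test = [((0.9, 0.9), 1), ((0.8, 0.1), 0),
                   ((0.1, 0.9), 1), ((0.2, 0.2), 0)]
ensemble_test = [(0.9, 0.9), (0.9, 0.1), (0.1, 0.9), (0.1, 0.1)]
scores = ensemble_scores([c1, c2], individual_test, ensemble_test)
```

Because the output is a continuous score rather than a vote count, the ensemble appears as a curve in ROC/PR space, whereas each individual classifier contributes a single point.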
In general, the points corresponding to the individual classifiers are below the ROC and PR curves describing the performance of the ensemble. This indicates that the ensemble method is better than the individual classifiers for this problem, which may also be due to their diversity. Some exceptions do appear (points above the solid lines), but only for very low TPR (under 0.2), that is, in an area of the ROC/PR space that is not interesting from our point of view. We are interested in maximizing the TPR while keeping the FPR at bay. Specifically, the FPR should never grow beyond 5%, which means few false alarms. At this threshold, the two examples from Figure 4 display TPR values of 0.272 (worst case) and 0.886 (best case), corresponding to precision values of 0.502 and 0.728, respectively. This is much better than individual classifiers at this level, both in terms of precision and TPR. For failure prediction, this means that between 27.2% and 88.6% of failures are identified as such, while from all instances labeled as failures, between 50.2% and 72.8% are actual failures.

In order to analyze the implications of the obtained results in more detail, the relation between the classifier label and the exact time until the next REMOVE event was studied for the data points. This is important because we originally assigned the label SAFE to all data points that are more than 24 hours away from a failure. According to this classification, a machine would be considered to be in SAFE state whether it fails in 2 weeks or in 2 days. Similarly, it is considered to be in FAIL state whether it fails in 23 hours or in 10 minutes. Obviously these are very different situations, and the impact of misclassification varies depending on the time to the next failure. Figure 5 displays this graphically. As the time to the next failure decreases, a SAFE data point misclassified as FAIL counts less as a misclassification, since failure is actually approaching.
Similarly, a FAIL data point labeled as SAFE has a higher negative impact when it is close to the point of failure.

We would like to verify whether our classifier assigns correct and incorrect labels uniformly within each class, irrespective of the real time to the next failure. For this, Figure 6 shows the distribution of the time to the next failure, in the form of boxplots, for true positives (TP), false negatives (FN), true negatives (TN) and false positives (FP), again for the worst and best cases (benchmarks 4 and 14, respectively). We look at results obtained at 5% FPR values. A good result would be if misclassified positives are further in time from the point of failure compared to correctly classified failures, and misclassified negatives are closer to the failure point compared to correctly classified negatives.

Figure 6: Distribution of time to the next machine REMOVE for data points classified, divided into True Positive (TP), False Negative (FN), True Negative (TN) and False Positive (FP). Panels: (a) Benchmark 4, positive class; (b) Benchmark 4, negative class; (c) Benchmark 14, positive class; (d) Benchmark 14, negative class. The worst and best performance (benchmarks 4 and 14, respectively) out of the 15 runs are shown. Actual numbers of instances in each class are shown in parentheses on the horizontal axis.

All positive instances, which are data points corresponding to real failures, are shown in the left panels (Figures 6a and 6c). These are divided into TP (failures correctly identified by the classifier) and FN (failures missed by the classifier). All data points have a time to the next REMOVE event between 0 and 24 hours, due to the way the positive class was defined. If the classifier were independent of the time to the next failure, the two distributions shown for TP and FN would be very similar. However, the plots show that TP have, on average, lower times until the next event, compared to FN.
This means that many of the positive data points that are misclassified are further in time from the actual failure moment compared to those correctly identified. This is good news, because it suggests that although data points are not recognized as imminent failure situations when there is still some time left before the actual failure, correct classification may occur as the failure moment approaches. In fact, if we compute the fraction of failure events that are flagged at least once in the preceding 24-hour window, we obtain values larger than the TPR computed at the data-point level, especially when prediction power is lowest (52.5% vs. 27.2% for benchmark 4 and 88.7% vs. 88.6% for benchmark 14).

Negative (SAFE) instances, i.e., data points that do not precede a REMOVE event by less than 24 hours, can be divided into two classes: those for which there is a REMOVE due to a real failure before the end of the trace and those for which there is none. For the latter, we have no means to estimate the time to the next failure event. So Figures 6b and 6d show the time to the next REMOVE only for the former, i.e., those machines which will fail before the end of the trace. This is only a small fraction of the entire SAFE test data, especially for the benchmark with best performance, because it is only three days before the trace ends. However, it still provides some indication of the time to the next failure of the negative class, divided into FP (negatives that are labeled as failures) and TN (negatives correctly labeled SAFE). As the figure shows, on average, times to the next failure are lower for FP compared to TN. This is again a good result because it means that the classifier often gives false alarms when a failure is approaching, even if it is not strictly in the next 24 hours.

V. RELATED WORK

The publication of the Google trace data has triggered a flurry of activity within the community, including several studies with goals related to ours. Some of these provide general characterization and statistics about the workload and node state for the cluster [12], [13], [14] and identify high levels of heterogeneity and dynamism in the system, especially when compared to grid workloads [15]. User profiles [16] and task usage shapes [17] have also been characterized for this cluster. Other studies have applied clustering techniques for workload characterization, either in terms of jobs and resources [18], [19] or placement constraints [20], with the aim to synthesize new traces. A different type of usage is for validation of various workload management algorithms. Examples are [21], where the trace is used to evaluate consolidation strategies, [22], [23], where over-committing (overbooking) is validated, [24], which takes heterogeneity into account to perform provisioning, and [25], which investigates checkpointing algorithms.

System modeling and prediction studies using the Google trace data are far fewer than those performing characterization or validation. An early attempt at system modeling based on this trace [26] validates an event-based simulator using workload parameters extracted from the data, with good performance in simulating job status and overall system load. Host load prediction using a Bayesian classifier was analyzed in [27]. Using CPU and RAM history, the mean load in a future time window is predicted, by dividing possible load levels into 50 discrete states. Here we perform prediction of machine failures for this cluster, which has not been attempted to date, to our knowledge.

Failure prediction has been an active topic for many years, with a comprehensive review presented in [28].
This work summarizes several methods of failure prediction in single machines, clusters, application servers, file systems, hard drives, email servers and clients, etc., dividing them into failure tracking, symptom monitoring or error reporting. The method introduced here falls into the symptom monitoring category; however, elements of failure tracking and error reporting are also present through features like number of recent failures and job failure events.

More recent studies concentrate on larger scale distributed systems such as HPC or clouds. For failure tracking methods, an important resource is the failure trace archive [29], a repository for failure logs and an associated toolkit that can enable integrated characterization of failures, such as distributions of inter-event times. Job failure in a cloud setting has been analyzed in [30]. The naive Bayes classifier is used to obtain a probability of failure based on the job type and host name. This is applied on traces from Amazon EC2 running several scientific applications. The method reaches different performance levels on jobs from three different application settings, with FNs of 4%, 12% and 16% of total data points and corresponding FPs of 0%, 4% and 10% of total data points. This corresponds approximately to FPR of 0%, 5.7% and 16.3%, and TPR of 86.6%, 61.2% and 58.9%. The performance we obtained with our method is within a similar range for most benchmarks, although we never reach their best performance. However, we are predicting machine and not job failures.

A comparison of different classification tools for failure prediction in an IBM Blue Gene/L cluster is given in [31]. In this work, they analyze Reliability, Availability and Serviceability (RAS) events using SVMs, neural networks, rule-based classifiers and a custom nearest neighbor algorithm, trying to predict whether different event categories will appear.
The custom nearest neighbor algorithm outperforms the others, reaching 50% precision and 80% TPR. A similar analysis was also performed for a Blue Gene/Q cluster [32]. The best performance was again by the nearest neighbor classifier (10% FPR, 20% TPR). They never evaluated the Random Forest or ensemble algorithms.

In [33] an anomaly detection algorithm for cloud computing was introduced. It employs Principal Component Analysis and selects the most relevant principal components for each failure type. They find that higher order components are better correlated with errors. Using thresholds on these principal components, they identify data points outside the normal range. They study four types of failures: CPU-related, memory-related, disk-related and network-related faults, in a controlled in-house system with fault injection, and obtain very high performance, 91.4% TPR at 3.7% FPR. On a production trace (the same Google trace we are using) they predict task failures at 81.5% TPR and 27% FPR (at 5% FPR, TPR is down to about 40%). In our case, we studied the same trace but looked at machine failures as opposed to task failures, and obtained TPR values between 27% and 88% at 5% FPR.

All above-mentioned failure prediction studies concentrate on types of failures or systems different from ours and obtain variable results. In all cases, our predictions compare well with prior studies, with our best result being better than most.

VI. DISCUSSION

Here we consider the possibility of including our predictive model in a data-driven autonomic controller for use in data centers. In such a scenario, both model building and model updating would happen on-line on data that is being streamed from various sources. From a technical point of view, on-line use would require a few changes to our model workflow.

To build the model, all features have to be computed on-line. Log data can be collected in a BigQuery table using the streaming API.
As data is being streamed, features have to be computed at 5-minute intervals. Both basic and aggregated features (averages, standard deviations, coefficients of variation and correlations) have to be computed, but only for the last time window (previous time windows are already stored in a dedicated table). Basic features are straightforward to compute and require negligible running time, since they can be computed using accumulators as the events come in. For aggregated features, parallelization can be employed, since they are all independent. In our experiments, correlation computation was the most time-consuming step, with the computation of one correlation feature over the longest time window taking on average 1489.2 seconds for all values over the 29 days (Table I). For computing a single value (for the newly streamed data), the time required should be on average under 0.2 seconds. This estimate corresponds to a linear dependence between the number of time windows and computation time and offers an upper bound for the time required. If this stage is performed in parallel on BigQuery, this would also be the average time to compute all 126 correlation features (each feature can be computed independently, so speedup would be linear).

In terms of dollar costs, we expect figures similar to those during our tests: about 60 USD per day for storage and analysis. To this, the streaming costs would have to be added, currently 1 cent per 200MB. For our system, the original raw data is about 200GB for all 29 days, so this would translate to approximately 7GB of data streamed every day for a system of similar size, leading to a cost of about 35 cents per day. At all times, only the last 12 days of features need to be stored, which keeps data size relatively low. In our analysis, for all 29 days, the final feature table requires 295GB of BigQuery storage, so 12 days would amount to about 122GB of data.
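The estimates above follow from simple proportional scaling, which the short sketch below reproduces. It assumes that the 29-day trace contains one value per 5-minute window (29 × 288 = 8352 windows) and that 1GB is counted as 1000MB; both are assumptions made here to recover the quoted figures, and the function name is hypothetical.

```python
TRACE_DAYS = 29
WINDOWS_PER_DAY = 24 * 60 // 5  # 5-minute windows per day (assumed: 288)


def online_estimates():
    """Reproduce the back-of-the-envelope figures quoted in the text."""
    total_windows = TRACE_DAYS * WINDOWS_PER_DAY
    # 1489.2 s to compute one correlation feature for all windows of the trace.
    seconds_per_window = 1489.2 / total_windows
    # About 200 GB of raw data over 29 days, streamed at 1 cent per 200 MB.
    raw_gb_per_day = 200 / TRACE_DAYS
    streaming_cents_per_day = round(raw_gb_per_day) * 1000 / 200
    # 295 GB of features for 29 days; only the last 12 days are retained.
    feature_gb_12_days = 295 * 12 / TRACE_DAYS
    return {
        "seconds_per_window": seconds_per_window,
        "raw_gb_per_day": raw_gb_per_day,
        "streaming_cents_per_day": streaming_cents_per_day,
        "feature_gb_12_days": feature_gb_12_days,
    }


estimates = online_estimates()
```

Under these assumptions the per-window time comes out just under 0.2 seconds, the daily stream at roughly 7GB (35 cents), and the 12-day feature store at roughly 122GB, matching the values in the text.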
When a new model has to be trained (e.g., once a day), all necessary features are already computed. One can use an infrastructure like the Google Compute Engine to train the model, which would eliminate the need to download the data and would allow for training of the individual classifiers of the ensemble in parallel. In our tests, the entire ensemble took under 9 hours to train, with each RF requiring at most 3 minutes. Again, since each classifier is independent, training all classifiers in parallel would take under 3 minutes as well (provided one can use as many CPUs as there are RFs, 420 in our study). Combining the classifiers takes a negligible amount of time. All in all, we expect the entire process of updating the model to take under 5 minutes if full parallelization is used both for feature computation and training. Application of the model on new data requires a negligible amount of time once features are available. This makes the method very practical for on-line use. Here we have described a cloud computing scenario; however, given the relatively limited computation and storage resources that are required, we believe that more modest clusters can also be used for monitoring, model updating and prediction.

VII. CONCLUSIONS

We have presented a predictive study for failure of nodes in a Google cluster based on a published workload trace. Feature extraction from raw data was performed using BigQuery, the big data cloud platform from Google which enables SQL-like queries. A large number of features were generated and an ensemble classifier was trained on log data for 10 days and tested on the following non-overlapping day. The length of the trace allowed repeating this process 15 times, producing 15 benchmark datasets, with the last day in each dataset being used for testing.

The BigQuery platform was extremely useful for obtaining the features from log data.
Although limits were found for JOIN and GROUP BY statements, these were circumvented by creating intermediate tables, which sometimes contained over 12TB of data. Even so, features were obtained with reduced running times, with the overall cost for the entire analysis, processing one month's worth of logs, coming in at under 2000 USD (see footnote 2), resulting in a daily cost of just over 60 USD.

Classification performance varied from one benchmark to another, with the Area-Under-the-ROC curve measure varying between 0.76 and 0.97 and the Area-Under-the-Precision-Recall curve measure varying between 0.38 and 0.87. This corresponded to true positive rates in the range 27%-88% and precision between 50% and 72% at a false positive rate of 5%. In other words, in the worst case, we were able to identify 27% of failures, while if a data point was classified as a failure, we could have 50% confidence that we were looking at a real failure. For the best case, we were able to identify almost 90% of failures, and 72% of instances classified as failures corresponded to real failures. All this came at the cost of having false alarms 5% of the time.

Although not perfect, our predictions achieve good performance levels. Results could be improved by changing the subsampling procedure. Here, only a subset of the SAFE data was used due to the large number of data points in this class, and a random sample was extracted from this subset when training each classifier in the ensemble. Alternatively, one could subsample every time from the full set. However, this would require greater computational resources for training, since a single workstation cannot process 300 GB of data at a time. Training times could be reduced through parallelization, since the problem is embarrassingly parallel (each classifier in the ensemble can be trained independently from the others). These improvements will be pursued in the future.
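The subsampling and parallel training described above can be sketched as follows. This is only an illustration under stated assumptions: fsafe is interpreted here as the number of SAFE points drawn per FAIL point, `train_fn` is a hypothetical placeholder for whatever routine fits one classifier, and a thread pool stands in for the separate CPUs or machines that a real deployment would need for CPU-bound training.

```python
import random
from concurrent.futures import ThreadPoolExecutor


def subsample(safe, fail, fsafe, seed):
    """Draw a random SAFE sample of size fsafe * len(fail) (assumed meaning
    of fsafe) and combine it with all FAIL points."""
    rng = random.Random(seed)
    n_safe = min(len(safe), int(fsafe * len(fail)))
    return rng.sample(safe, n_safe) + list(fail)


def train_ensemble(safe, fail, fsafe_values, train_fn, max_workers=4):
    """Train one classifier per fsafe value, each on its own subsample.
    The training jobs are independent, so they can run concurrently."""
    jobs = [(subsample(safe, fail, fsafe, seed), fsafe)
            for seed, fsafe in enumerate(fsafe_values)]
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(lambda job: train_fn(*job), jobs))


# Toy usage: the "classifier" is just the size of its training set.
safe_points = [("safe", i) for i in range(100)]
fail_points = [("fail", i) for i in range(10)]
sizes = train_ensemble(safe_points, fail_points, [0.5, 1, 2],
                       train_fn=lambda data, fsafe: len(data))
```

Sampling from the full SAFE set rather than a pre-selected subset only changes what is passed as `safe`; the per-classifier jobs remain independent either way.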
Introduction of additional features will also be explored, to take into account in a more explicit manner the interaction between machines. BigQuery will be used to obtain interactions between machines from the data, which will result in networks of nodes. Changes in the properties of these networks over time could provide important information on possible future failures.

The method presented here is very suitable for on-line use. A new model can be trained every day, using the last 12 days of logs. This is the scenario we simulated when we created the 15 test benchmarks. The model would be trained from 10 days of data and tested on one non-overlapping day, exactly like in the benchmarks (Figure 2). Then, it would be applied for one day to predict future failures. The next day a new model would be obtained from new data. Each time, only the last 12 days of data would be used, rather than increasing the amount of training data. This is to account for the fact that the system itself and the workload can change in time, so old data may not match current system behavior. This would ensure that the model is up to date with the current system state. The testing stage is required for live use for two reasons. First, part of the test data is used to build the ensemble (precision-weighted voting). Second, the TPR and precision values on test data can help system administrators make decisions on the criticality of the predicted failure.

Footnote 2: Based on current Google BigQuery pricing.

ACKNOWLEDGMENTS

BigQuery analysis was carried out through a generous Cloud Credits grant from Google. We are grateful to John Wilkes of Google for helpful discussions regarding the cluster trace data.

REFERENCES

[1] A. Verma, L. Pedrosa, M. R. Korupolu, D. Oppenheimer, E. Tune, and J. Wilkes, "Large-scale cluster management at Google with Borg," in Proceedings of the European Conference on Computer Systems (EuroSys), Bordeaux, France, 2015.
[2] J. Wilkes, "More Google cluster data," Google research blog, Nov. 2011, posted at http://googleresearch.blogspot.com/2011/11/more-google-cluster-data.html.
[3] C. Reiss, J. Wilkes, and J. L. Hellerstein, "Obfuscatory obscanturism: making workload traces of commercially-sensitive systems safe to release," in Network Operations and Management Symposium (NOMS), 2012 IEEE. IEEE, 2012, pp. 1279–1286.
[4] J. Tigani and S. Naidu, Google BigQuery Analytics. John Wiley & Sons, 2014.
[5] L. Rokach, "Ensemble-based classifiers," Artificial Intelligence Review, vol. 33, no. 1-2, pp. 1–39, 2010.
[6] T. M. Khoshgoftaar, M. Golawala, and J. Van Hulse, "An empirical study of learning from imbalanced data using random forest," in Tools with Artificial Intelligence, 2007. ICTAI 2007. 19th IEEE International Conference on, vol. 2. IEEE, 2007, pp. 310–317.
[7] A. Sîrbu and O. Babaoglu, "BigQuery and ML scripts for 'Predicting machine failures in a Google cluster'," Website, 2015, available at http://cs.unibo.it/~sirbu/Google-trace-prediction-scripts.html.
[8] M. Galar, A. Fernandez, E. Barrenechea, H. Bustince, and F. Herrera, "A review on ensembles for the class imbalance problem: bagging-, boosting-, and hybrid-based approaches," Systems, Man, and Cybernetics, Part C: Applications and Reviews, IEEE Transactions on, vol. 42, no. 4, pp. 463–484, 2012.
[9] L. I. Kuncheva, C. J. Whitaker, C. A. Shipp, and R. P. Duin, "Is independence good for combining classifiers?" in Pattern Recognition, 2000. Proceedings. 15th International Conference on, vol. 2. IEEE, 2000, pp. 168–171.
[10] C. A. Shipp and L. I. Kuncheva, "Relationships between combination methods and measures of diversity in combining classifiers," Information Fusion, vol. 3, no. 2, pp. 135–148, 2002.
[11] D. W. Opitz, J. W. Shavlik et al., "Generating accurate and diverse members of a neural-network ensemble," Advances in neural information processing systems, pp. 535–541, 1996.
[12] C. Reiss, A. Tumanov, G. R. Ganger, R. H. Katz, and M. A. Kozuch, "Heterogeneity and Dynamicity of Clouds at Scale: Google Trace Analysis," in ACM Symposium on Cloud Computing (SoCC), 2012.
[13] C. Reiss, A. Tumanov, G. R. Ganger, R. H. Katz, and M. A. Kozuch, "Towards understanding heterogeneous clouds at scale: Google trace analysis," Carnegie Mellon University Technical Reports, vol. ISTC-CC-TR, no. 12-101, 2012.
[14] Z. Liu and S. Cho, "Characterizing Machines and Workloads on a Google Cluster," in 8th International Workshop on Scheduling and Resource Management for Parallel and Distributed Systems (SRMPDS), 2012.
[15] S. Di, D. Kondo, and W. Cirne, "Characterization and Comparison of Google Cloud Load versus Grids," in International Conference on Cluster Computing (IEEE CLUSTER), 2012, pp. 230–238.
[16] O. A. Abdul-Rahman and K. Aida, "Towards understanding the usage behavior of Google cloud users: the mice and elephants phenomenon," in IEEE International Conference on Cloud Computing Technology and Science (CloudCom), Singapore, Dec. 2014, pp. 272–277.
[17] Q. Zhang, J. L. Hellerstein, and R. Boutaba, "Characterizing Task Usage Shapes in Google's Compute Clusters," in Proceedings of the 5th International Workshop on Large Scale Distributed Systems and Middleware, 2011.
[18] A. K. Mishra, J. L. Hellerstein, W. Cirne, and C. R. Das, "Towards Characterizing Cloud Backend Workloads: Insights from Google Compute Clusters," Sigmetrics performance evaluation review, vol. 37, no. 4, pp. 34–41, 2010.
[19] G. Wang, A. R. Butt, H. Monti, and K. Gupta, "Towards Synthesizing Realistic Workload Traces for Studying the Hadoop Ecosystem," in 19th IEEE Annual International Symposium on Modelling, Analysis, and Simulation of Computer and Telecommunication Systems (MASCOTS), 2011, pp. 400–408.
[20] B. Sharma, V. Chudnovsky, J. L. Hellerstein, R. Rifaat, and C. R. Das, "Modeling and synthesizing task placement constraints in google compute clusters," in Proceedings of the 2nd ACM Symposium on Cloud Computing. ACM, 2011, p. 3.
[21] J. O. Iglesias, L. M. Lero, M. D. Cauwer, D. Mehta, and B. O'Sullivan, "A methodology for online consolidation of tasks through more accurate resource estimations," in IEEE/ACM Intl. Conf. on Utility and Cloud Computing (UCC), London, UK, Dec. 2014.
[22] F. Caglar and A. Gokhale, "iOverbook: intelligent resource-overbooking to support soft real-time applications in the cloud," in 7th IEEE International Conference on Cloud Computing (IEEE CLOUD), Anchorage, AK, USA, Jun–Jul 2014. [Online]. Available: http://www.dre.vanderbilt.edu/~gokhale/WWW/papers/CLOUD-2014.pdf
[23] D. Breitgand, Z. Dubitzky, A. Epstein, O. Feder, A. Glikson, I. Shapira, and G. Toffetti, "An adaptive utilization accelerator for virtualized environments," in International Conference on Cloud Engineering (IC2E), Boston, MA, USA, Mar. 2014, pp. 165–174.
[24] Q. Zhang, M. F. Zhani, R. Boutaba, and J. L. Hellerstein, "Dynamic heterogeneity-aware resource provisioning in the cloud," IEEE Transactions on Cloud Computing (TCC), vol. 2, no. 1, Mar. 2014.
[25] S. Di, Y. Robert, F. Vivien, D. Kondo, C.-L. Wang, and F. Cappello, "Optimization of cloud task processing with checkpoint-restart mechanism," in 25th International Conference on High Performance Computing, Networking, Storage and Analysis (SC), Denver, CO, USA, Nov. 2013.
[26] A. Balliu, D. Olivetti, O. Babaoglu, M. Marzolla, and A. Sîrbu, "Bidal: Big data analyzer for cluster traces," in Informatika (BigSys workshop), vol. 232. GI-Edition Lecture Notes in Informatics, 2014, pp. 1781–1795.
[27] S. Di, D. Kondo, and W. Cirne, "Host load prediction in a Google compute cloud with a Bayesian model," 2012 International Conference for High Performance Computing, Networking, Storage and Analysis, pp. 1–11, Nov. 2012.
[28] F. Salfner, M. Lenk, and M. Malek, "A survey of online failure prediction methods," ACM Computing Surveys (CSUR), vol. 42, no. 3, pp. 1–68, 2010.
[29] B. Javadi, D. Kondo, A. Iosup, and D. Epema, "The Failure Trace Archive: Enabling the comparison of failure measurements and models of distributed systems," Journal of Parallel and Distributed Computing, vol. 73, no. 8, 2013.
[30] T. Samak, D. Gunter, M. Goode, E. Deelman, G. Juve, F. Silva, and K. Vahi, "Failure analysis of distributed scientific workflows executing in the cloud," in Network and service management (cnsm), 2012 8th international conference and 2012 workshop on systems virtualiztion management (svm). IEEE, 2012, pp. 46–54.
[31] Y. Liang, Y. Zhang, H. Xiong, and R. Sahoo, "Failure Prediction in IBM BlueGene/L Event Logs," Seventh IEEE International Conference on Data Mining (ICDM 2007), pp. 583–588, Oct. 2007.
[32] R. Dudko, A. Sharma, and J. Tedesco, "Effective Failure Prediction in Hadoop Clusters," University of Idaho White Paper, pp. 1–8, 2012.
[33] Q. Guan and S. Fu, "Adaptive anomaly identification by exploring metric subspace in cloud computing infrastructures," in 32nd IEEE Symposium on Reliable Distributed Systems (SRDS), Braga, Portugal, Sep. 2013, pp. 205–214.
