Doubly Robust Policy Evaluation and Optimization

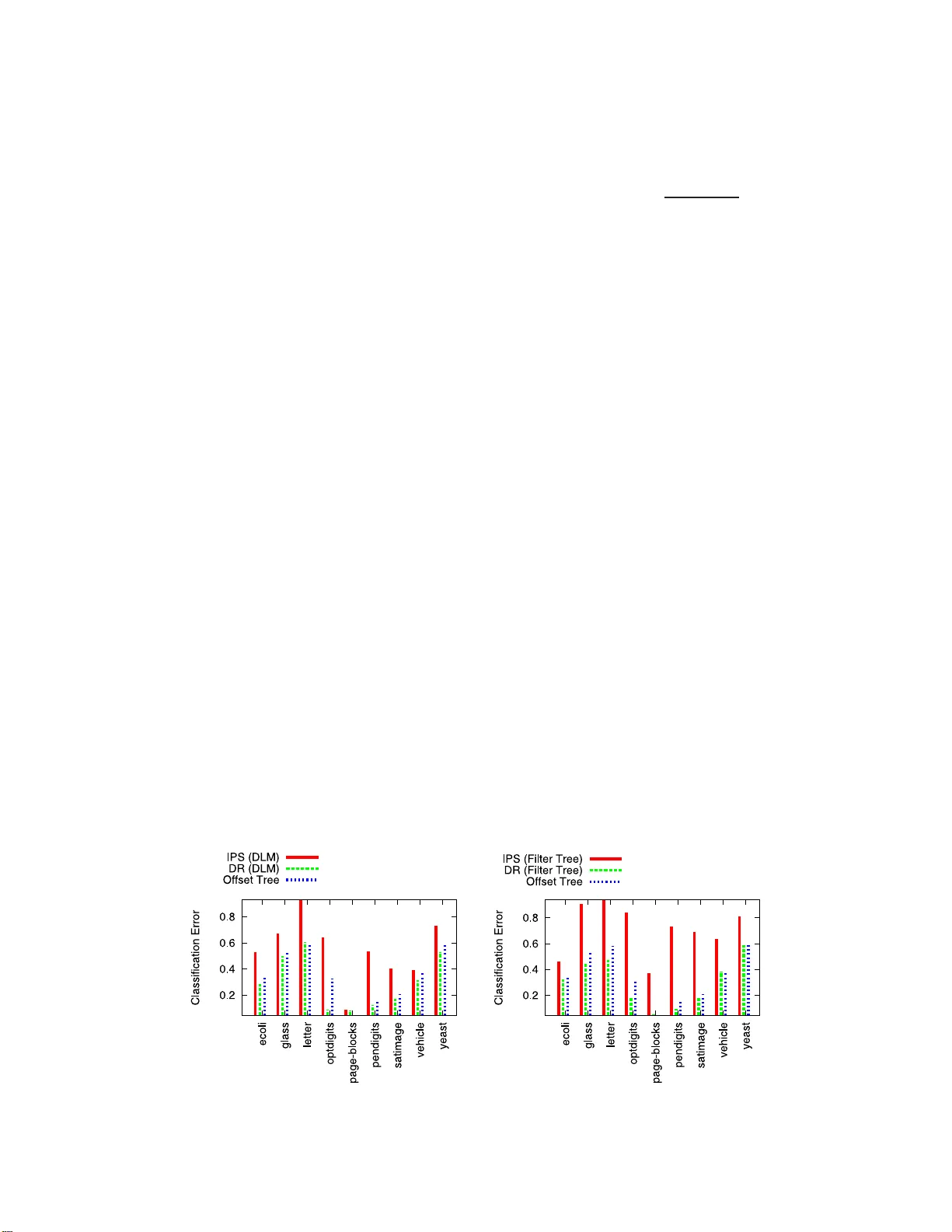

We study sequential decision making in environments where rewards are only partially observed but can be modeled as a function of the observed contexts and the action chosen by the decision maker. This setting, known as contextual bandits, encompasses a…

Authors: Miroslav Dudik, Dumitru Erhan, John Langford