Structure Learning in Bayesian Networks of Moderate Size by Efficient Sampling

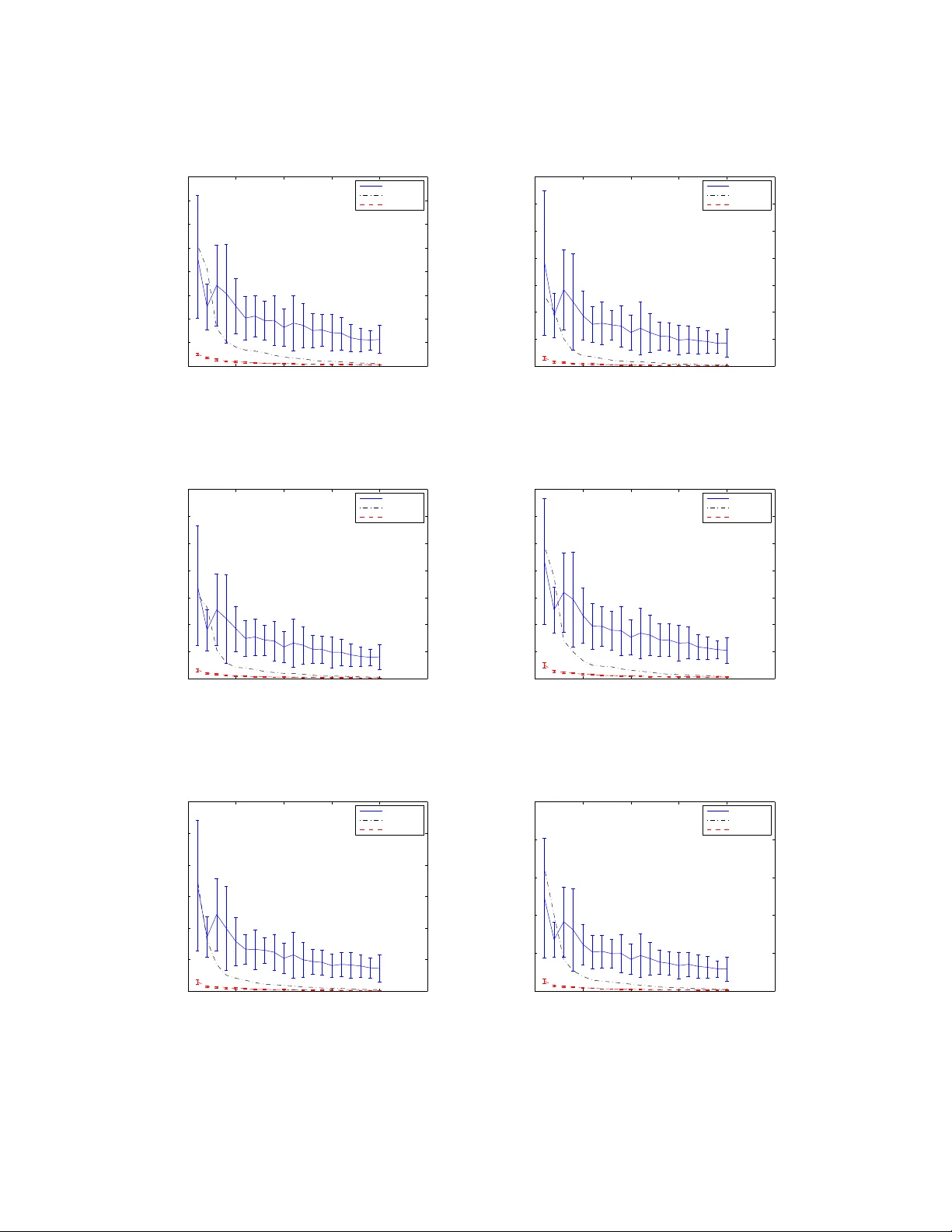

We study the Bayesian model averaging approach to learning Bayesian network structures (DAGs) from data. We develop new algorithms, including the first algorithm that can efficiently sample DAGs according to the exact structure posterior. The D…

Authors: Ru He, Jin Tian, Huaiqing Wu