A Latent Source Model for Online Collaborative Filtering

Despite the prevalence of collaborative filtering in recommendation systems, there has been little theoretical development on why and how well it works, especially in the "online" setting, where items are recommended to users over time. We address th…

Authors: Guy Bresler, George H. Chen, Devavrat Shah

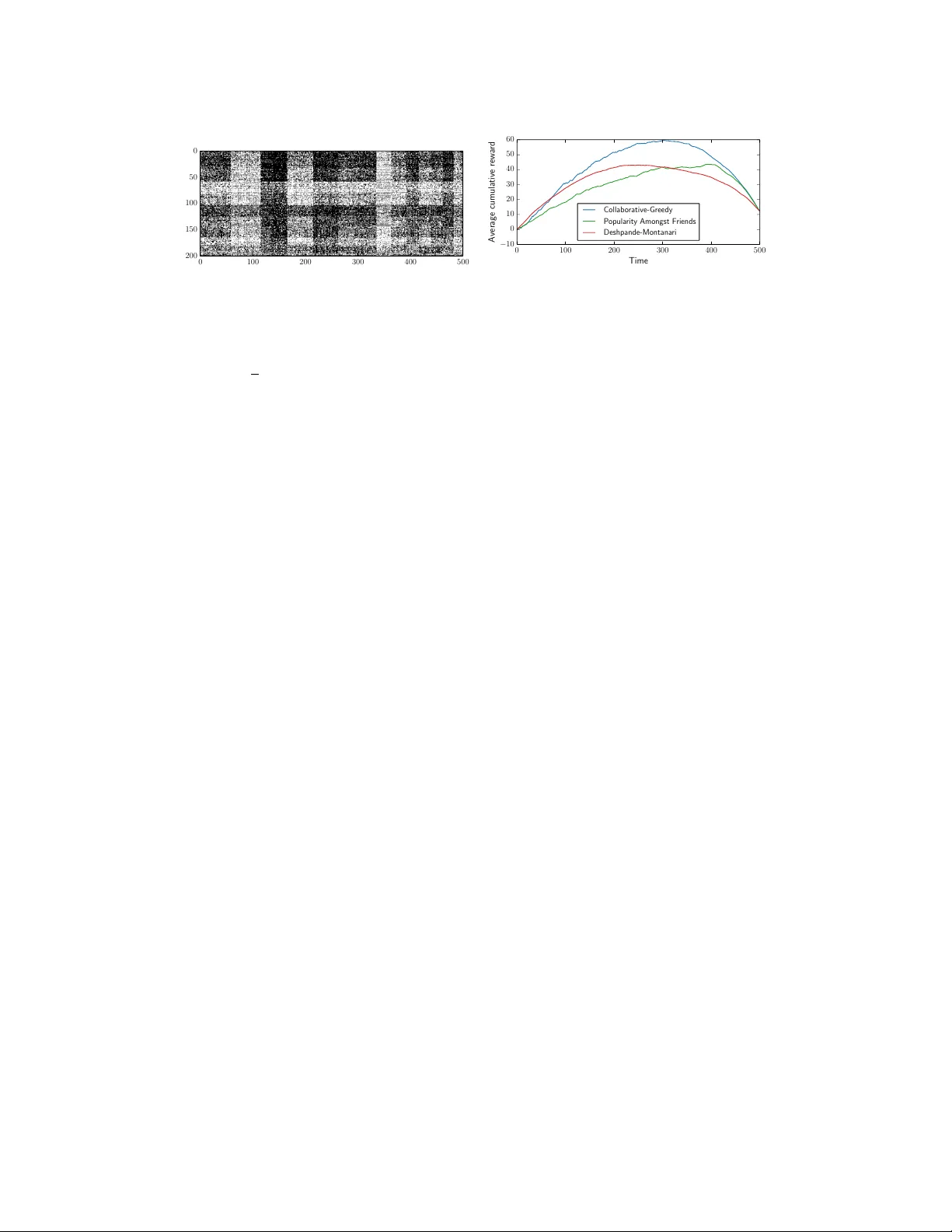

Department of Electrical Engineering and Computer Science, Massachusetts Institute of Technology, Cambridge, MA 02139
{gbresler,georgehc,devavrat}@mit.edu

Abstract

Despite the prevalence of collaborative filtering in recommendation systems, there has been little theoretical development on why and how well it works, especially in the “online” setting, where items are recommended to users over time. We address this theoretical gap by introducing a model for online recommendation systems, casting item recommendation under the model as a learning problem, and analyzing the performance of a cosine-similarity collaborative filtering method. In our model, each of $n$ users either likes or dislikes each of $m$ items. We assume there to be $k$ types of users, and all the users of a given type share a common string of probabilities determining the chance of liking each item. At each time step, we recommend an item to each user, where a key distinction from related bandit literature is that once a user consumes an item (e.g., watches a movie), then that item cannot be recommended to the same user again. The goal is to maximize the number of likable items recommended to users over time. Our main result establishes that after nearly $\log(km)$ initial learning time steps, a simple collaborative filtering algorithm achieves essentially optimal performance without knowing $k$. The algorithm has an exploitation step that uses cosine similarity and two types of exploration steps, one to explore the space of items (standard in the literature) and the other to explore similarity between users (novel to this work).

1 Introduction

Recommendation systems have become ubiquitous in our lives, helping us filter the vast expanse of information we encounter into small selections tailored to our personal tastes.
Prominent examples include Amazon recommending items to buy, Netflix recommending movies, and LinkedIn recommending jobs. In practice, recommendations are often made via collaborative filtering, which boils down to recommending an item to a user by considering items that other similar or “nearby” users liked. Collaborative filtering has been used extensively for decades now, including in the GroupLens news recommendation system [20], Amazon’s item recommendation system [17], the Netflix Prize winning algorithm by BellKor’s Pragmatic Chaos [16, 24, 19], and a recent song recommendation system [1] that won the Million Song Dataset Challenge [6].

Most such systems operate in the “online” setting, where items are constantly recommended to users over time. In many scenarios, it does not make sense to recommend an item that is already consumed. For example, once Alice watches a movie, there’s little point to recommending the same movie to her again, at least not immediately, and one could argue that recommending unwatched movies and already watched movies could be handled as separate cases. Finally, what matters is whether a likable item is recommended to a user rather than an unlikable one. In short, a good online recommendation system should recommend different likable items continually over time.

Despite the success of collaborative filtering, there has been little theoretical development to justify its effectiveness in the online setting. We address this theoretical gap with our two main contributions in this paper. First, we frame online recommendation as a learning problem that fuses the lines of work on sleeping bandits and clustered bandits. We impose the constraint that once an item is consumed by a user, the system cannot recommend the item to the same user again. Our second main contribution is to analyze a cosine-similarity collaborative filtering algorithm.
The key insight is our inclusion of two types of exploration in the algorithm: (1) the standard random exploration for probing the space of items, and (2) a novel “joint” exploration for finding different user types. Under our learning problem setup, after nearly $\log(km)$ initial time steps, the proposed algorithm achieves near-optimal performance relative to an oracle algorithm that recommends all likable items first. The nearly logarithmic dependence is a result of using the two different exploration types. We note that the algorithm does not know $k$.

Outline. We present our model and learning problem for online recommendation systems in Section 2, provide a collaborative filtering algorithm and its performance guarantee in Section 3, and give the proof idea for the performance guarantee in Section 4. An overview of experimental results is given in Section 5. We discuss our work in the context of prior work in Section 6.

2 A Model and Learning Problem for Online Recommendations

We consider a system with $n$ users and $m$ items. At each time step, each user is recommended an item that she or he hasn’t consumed yet, upon which, for simplicity, we assume that the user immediately consumes the item and rates it $+1$ (like) or $-1$ (dislike).¹ The reward earned by the recommendation system up to any time step is the total number of liked items that have been recommended so far across all users. Formally, index time by $t \in \{1, 2, \dots\}$, and users by $u \in [n] \triangleq \{1, \dots, n\}$. Let $\pi_{ut} \in [m] \triangleq \{1, \dots, m\}$ be the item recommended to user $u$ at time $t$. Let $Y^{(t)}_{ui} \in \{-1, 0, +1\}$ be the rating provided by user $u$ for item $i$ up to and including time $t$, where $0$ indicates that no rating has been given yet. A reasonable objective is to maximize the expected reward $r^{(T)}$ up to time $T$:

$$r^{(T)} \triangleq \sum_{t=1}^{T} \sum_{u=1}^{n} \mathbb{E}\big[Y^{(T)}_{u\pi_{ut}}\big] = \sum_{i=1}^{m} \sum_{u=1}^{n} \mathbb{E}\big[Y^{(T)}_{ui}\big].$$
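The bookkeeping in the setup above can be illustrated with a minimal Python sketch. It assumes a fully known matrix of ratings, here called `true_ratings`, which the paper does not have access to; the function names `recommend_and_rate` and `realized_reward` are hypothetical and chosen only for this illustration. The sketch enforces the constraint that an item is never recommended twice to the same user and evaluates the realized (not expected) value of the double sum defining $r^{(T)}$.

```python
import numpy as np

def recommend_and_rate(Y, picks, true_ratings):
    """Apply one time step: user u consumes item picks[u] and rates it.

    Y is the n-by-m matrix of revealed ratings in {-1, 0, +1}, with 0
    meaning "not yet rated". It is updated in place and returned.
    """
    users = np.arange(Y.shape[0])
    # An already consumed item cannot be recommended to the same user again.
    if np.any(Y[users, picks] != 0):
        raise ValueError("an item was recommended twice to the same user")
    Y[users, picks] = true_ratings[users, picks]
    return Y

def realized_reward(Y):
    """Sum of all revealed ratings over users and items (unrated entries are 0)."""
    return int(Y.sum())
```

Because unrated entries are stored as 0, summing over the items recommended so far is the same as summing over all items, which mirrors the equality between the two double sums in the display.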
The ratings are noisy: the latent item preferences for user $u$ are represented by a length-$m$ vector $p_u \in [0, 1]^m$, where user $u$ likes item $i$ with probability $p_{ui}$, independently across items. For a user $u$, we say that item $i$ is likable if $p_{ui} > 1/2$ and unlikable if $p_{ui} < 1/2$. To maximize the expected reward $r^{(T)}$, clearly likable items for the user should be recommended before unlikable ones.

In this paper, we focus on recommending likable items. Thus, instead of maximizing the expected reward $r^{(T)}$, we aim to maximize the expected number of likable items recommended up to time $T$:

$$r^{(T)}_{+} \triangleq \sum_{t=1}^{T} \sum_{u=1}^{n} \mathbb{E}[X_{ut}], \qquad (1)$$

where $X_{ut}$ is the indicator random variable for whether the item recommended to user $u$ at time $t$ is likable, i.e., $X_{ut} = +1$ if $p_{u\pi_{ut}} > 1/2$ and $X_{ut} = 0$ otherwise. Maximizing $r^{(T)}$ differs from maximizing $r^{(T)}_{+}$ since the former asks that we prioritize items according to their probability of being liked.

Recommending likable items for a user in an arbitrary order is sufficient for many real recommendation systems such as for movies and music. For example, we suspect that users wouldn’t actually prefer to listen to music starting from the songs that their user type would like with highest probability to the ones their user type would like with lowest probability; instead, each user would listen to songs that she or he finds likable, ordered such that there is sufficient diversity in the playlist to keep the user experience interesting. We target the modest goal of merely recommending likable items, in any order. Of course, if all likable items have the same probability of being liked and similarly for all unlikable items, then maximizing $r^{(T)}$ and $r^{(T)}_{+}$ are equivalent.

¹ In practice, a user could ignore the recommendation. To keep our exposition simple, however, we stick to this setting, which resembles song recommendation systems like Pandora that continually recommend a single item at a time to each user.
For example, if a user rates a song as “thumbs down” then we assign a rating of $-1$ (dislike), and any other action corresponds to $+1$ (like).

The fundamental challenge is that to learn about a user’s preference for an item, we need the user to rate (and thus consume) the item. But then we cannot recommend that item to the user again! Thus, the only way to learn about a user’s preferences is through collaboration, or inferring from other users’ ratings. Broadly, such inference is possible if the users’ preferences are somehow related.

In this paper, we assume a simple structure for shared user preferences. We posit that there are $k < n$ different types of users, where users of the same type have identical item preference vectors. The number of types $k$ represents the heterogeneity in the population. For ease of exposition, in this paper we assume that a user belongs to each user type with probability $1/k$. We refer to this model as a latent source model, where each user type corresponds to a latent source of users. We remark that there is evidence suggesting real movie recommendation data to be well modeled by clustering of both users and items [21]. Our model only assumes clustering over users.

Our problem setup relates to some versions of the multi-armed bandit problem. A fundamental difference between our setup and that of the standard stochastic multi-armed bandit problem [23, 8] is that the latter allows each item to be recommended an infinite number of times. Thus, the solution concept for the stochastic multi-armed bandit problem is to determine the best item (arm) and keep choosing it [3]. This observation applies also to “clustered bandits” [9], which like our work seeks to capture collaboration between users. On the other hand, sleeping bandits [15] allow for the available items at each time step to vary, but the analysis is worst-case in terms of which items are available over time.
In our setup, the sequence of items that are available is not adversarial. Our model combines the collaborative aspect of clustered bandits with dynamic item availability from sleeping bandits, where we impose a strict structure on how items become unavailable.

3 A Collaborative Filtering Algorithm and Its Performance Guarantee

This section presents our algorithm COLLABORATIVE-GREEDY and its accompanying theoretical performance guarantee. The algorithm is syntactically similar to the $\varepsilon$-greedy algorithm for multi-armed bandits [22], which explores items with probability $\varepsilon$ and otherwise greedily chooses the best item seen so far based on a plurality vote. In our algorithm, the greedy choice, or exploitation, uses the standard cosine-similarity measure. The exploration, on the other hand, is split into two types: a standard item exploration in which a user is recommended an item that she or he hasn’t consumed yet uniformly at random, and a joint exploration in which all users are asked to provide a rating for the next item in a shared, randomly chosen sequence of items. Let’s fill in the details.

Algorithm. At each time step $t$, either all the users are asked to explore, or an item is recommended to each user by choosing the item with the highest score for that user. The pseudocode is described in Algorithm 1. There are two types of exploration: random exploration, which is for exploring the space of items, and joint exploration, which helps to learn about similarity between users. For a pre-specified rate $\alpha \in (0, 4/7]$, we set the probability of random exploration to be $\varepsilon_R(n) = 1/n^{\alpha}$ (decaying with the number of users), and the probability of joint exploration to be $\varepsilon_J(t) = 1/t^{\alpha}$ (decaying with time).²

Algorithm 1: COLLABORATIVE-GREEDY
    Input: Parameters $\theta \in [0, 1]$, $\alpha \in (0, 4/7]$.
    Select a random ordering $\sigma$ of the items $[m]$. Define $\varepsilon_R(n) = 1/n^{\alpha}$ and $\varepsilon_J(t) = 1/t^{\alpha}$.
    for time step $t = 1, 2, \dots, T$ do
        With prob. $\varepsilon_R(n)$: (random exploration) for each user, recommend a random item that the user has not rated.
        With prob. $\varepsilon_J(t)$: (joint exploration) for each user, recommend the first item in $\sigma$ that the user has not rated.
        With prob. $1 - \varepsilon_J(t) - \varepsilon_R(n)$: (exploitation) for each user $u$, recommend an item $j$ that the user has not rated and that maximizes score $\tilde{p}^{(t)}_{uj}$, which depends on threshold $\theta$.
    end

Next, we define user $u$’s score $\tilde{p}^{(t)}_{ui}$ for item $i$ at time $t$. Recall that we observe $Y^{(t)}_{ui} \in \{-1, 0, +1\}$ as user $u$’s rating for item $i$ up to time $t$, where $0$ indicates that no rating has been given yet. We define

$$\tilde{p}^{(t)}_{ui} \triangleq \begin{cases} \dfrac{\sum_{v \in \tilde{N}^{(t)}_u} \mathbb{1}\{Y^{(t)}_{vi} = +1\}}{\sum_{v \in \tilde{N}^{(t)}_u} \mathbb{1}\{Y^{(t)}_{vi} \neq 0\}} & \text{if } \sum_{v \in \tilde{N}^{(t)}_u} \mathbb{1}\{Y^{(t)}_{vi} \neq 0\} > 0, \\[2ex] 1/2 & \text{otherwise,} \end{cases}$$

where the neighborhood of user $u$ is given by

$$\tilde{N}^{(t)}_u \triangleq \big\{ v \in [n] : \langle \tilde{Y}^{(t)}_u, \tilde{Y}^{(t)}_v \rangle \ge \theta \, |\mathrm{supp}(\tilde{Y}^{(t)}_u) \cap \mathrm{supp}(\tilde{Y}^{(t)}_v)| \big\},$$

and $\tilde{Y}^{(t)}_u$ consists of the revealed ratings of user $u$ restricted to items that have been jointly explored. In other words,

$$\tilde{Y}^{(t)}_{ui} = \begin{cases} Y^{(t)}_{ui} & \text{if item } i \text{ is jointly explored by time } t, \\ 0 & \text{otherwise.} \end{cases}$$

The neighborhoods are defined precisely by cosine similarity with respect to jointly explored items. To see this, for users $u$ and $v$ with revealed ratings $\tilde{Y}^{(t)}_u$ and $\tilde{Y}^{(t)}_v$, let $\Omega_{uv} \triangleq \mathrm{supp}(\tilde{Y}^{(t)}_u) \cap \mathrm{supp}(\tilde{Y}^{(t)}_v)$ be the support overlap of $\tilde{Y}^{(t)}_u$ and $\tilde{Y}^{(t)}_v$, and let $\langle \cdot, \cdot \rangle_{\Omega_{uv}}$ be the dot product restricted to entries in $\Omega_{uv}$. Then

$$\frac{\langle \tilde{Y}^{(t)}_u, \tilde{Y}^{(t)}_v \rangle}{|\Omega_{uv}|} = \frac{\langle \tilde{Y}^{(t)}_u, \tilde{Y}^{(t)}_v \rangle_{\Omega_{uv}}}{\sqrt{\langle \tilde{Y}^{(t)}_u, \tilde{Y}^{(t)}_u \rangle_{\Omega_{uv}}} \sqrt{\langle \tilde{Y}^{(t)}_v, \tilde{Y}^{(t)}_v \rangle_{\Omega_{uv}}}},$$

which is the cosine similarity of revealed rating vectors $\tilde{Y}^{(t)}_u$ and $\tilde{Y}^{(t)}_v$ restricted to the overlap of their supports.
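The neighborhood and score definitions above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: `Y` holds all revealed ratings, `Y_joint` holds the same ratings with every item that has not been jointly explored zeroed out, and the function names `neighborhoods`, `scores`, and `exploit_picks` are hypothetical. Note that, following the definition literally, two users with an empty support overlap count as neighbors, since $0 \ge \theta \cdot 0$.

```python
import numpy as np

def neighborhoods(Y_joint, theta):
    """Boolean n-by-n matrix; entry (u, v) is True when v is a neighbor of u."""
    Yj = Y_joint.astype(float)
    inner = Yj @ Yj.T                     # inner products of jointly explored ratings
    support = (Yj != 0).astype(float)
    overlap = support @ support.T         # sizes of the support overlaps
    return inner >= theta * overlap

def scores(Y, Y_joint, theta):
    """Score matrix: fraction of +1 ratings among neighbors who rated each item."""
    nbrs = neighborhoods(Y_joint, theta).astype(float)
    likes = nbrs @ (Y == 1).astype(float)   # neighbors who liked item i
    rated = nbrs @ (Y != 0).astype(float)   # neighbors who rated item i
    # Default score of 1/2 when no neighbor has rated the item.
    return np.where(rated > 0, likes / np.maximum(rated, 1.0), 0.5)

def exploit_picks(Y, Y_joint, theta):
    """Exploitation step: for each user, the unrated item with the highest score."""
    s = np.where(Y == 0, scores(Y, Y_joint, theta), -np.inf)
    return s.argmax(axis=1)
```

Ties in the exploitation step are broken here by taking the lowest item index, which is an arbitrary choice of this sketch; a user who has rated every item would also be assigned index 0, so callers should stop before that point.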
Thus, users $u$ and $v$ are neighbors if and only if their cosine similarity is at least $\theta$.

Theoretical performance guarantee. We now state our main result on the proposed collaborative filtering algorithm’s performance with respect to the objective stated in equation (1). We begin with two reasonable, and seemingly necessary, conditions under which our results will be established.

A1 No $\Delta$-ambiguous items. There exists some constant $\Delta > 0$ such that $|p_{ui} - 1/2| \ge \Delta$ for all users $u$ and items $i$. (Smaller $\Delta$ corresponds to more noise.)

A2 $\gamma$-incoherence. There exists a constant $\gamma \in [0, 1)$ such that if users $u$ and $v$ are of different types, then their item preference vectors $p_u$ and $p_v$ satisfy

$$\frac{1}{m} \langle 2p_u - \mathbf{1}, 2p_v - \mathbf{1} \rangle \le 4\gamma\Delta^2,$$

where $\mathbf{1}$ is the all ones vector. Note that a different way to write the left-hand side is $\mathbb{E}[\frac{1}{m} \langle Y^*_u, Y^*_v \rangle]$, where $Y^*_u$ and $Y^*_v$ are fully-revealed rating vectors of users $u$ and $v$, and the expectation is over the random ratings of items.

The first condition is a low noise condition to ensure that with a finite number of samples, we can correctly classify each item as either likable or unlikable. The incoherence condition asks that the different user types are well-separated so that cosine similarity can tease apart the users of different types over time. We provide some examples after the statement of the main theorem that suggest the incoherence condition to be reasonable, allowing $\mathbb{E}[\langle Y^*_u, Y^*_v \rangle]$ to scale as $\Theta(m)$ rather than $o(m)$.

We assume that the number of users satisfies $n = O(m^C)$ for some constant $C > 1$. This is without loss of generality since otherwise, we can randomly divide the $n$ users into separate population pools, each of size $O(m^C)$, and run the recommendation algorithm independently for each pool to achieve the same overall performance guarantee.

² For ease of presentation, we set the two explorations to have the same decay rate $\alpha$, but our proof easily extends to encompass different decay rates for the two exploration types. Furthermore, the constant $4/7 \ge \alpha$ is not special. It could be different and only affects another constant in our proof.

Finally, we define $\mu$, the minimum proportion of likable items for any user (and thus any user type):

$$\mu \triangleq \min_{u \in [n]} \frac{\sum_{i=1}^{m} \mathbb{1}\{p_{ui} > 1/2\}}{m}.$$

Theorem 1. Let $\delta \in (0, 1)$ be some pre-specified tolerance. Take as input to COLLABORATIVE-GREEDY $\theta = 2\Delta^2(1 + \gamma)$, where $\gamma \in [0, 1)$ is as defined in A2, and $\alpha \in (0, 4/7]$. Under the latent source model and assumptions A1 and A2, if the number of users $n = O(m^C)$ satisfies

$$n = \Omega\Big( km \log\frac{1}{\delta} + \Big(\frac{4}{\delta}\Big)^{1/\alpha} \Big),$$

then for any $T_{\text{learn}} \le T \le \mu m$, the expected proportion of likable items recommended by COLLABORATIVE-GREEDY up until time $T$ satisfies

$$\frac{r^{(T)}_{+}}{Tn} \ge \Big(1 - \frac{T_{\text{learn}}}{T}\Big)(1 - \delta),$$

where

$$T_{\text{learn}} = \Theta\Bigg( \bigg( \frac{\log\frac{km}{\Delta\delta}}{\Delta^4 (1 - \gamma)^2} \bigg)^{1/(1-\alpha)} + \Big(\frac{4}{\delta}\Big)^{1/\alpha} \Bigg).$$

Theorem 1 says that there are $T_{\text{learn}}$ initial time steps for which the algorithm may be giving poor recommendations. Afterward, for $T_{\text{learn}} < T < \mu m$, the algorithm becomes near-optimal, recommending a fraction of likable items $1 - \delta$ close to what an optimal oracle algorithm (that recommends all likable items first) would achieve. Then for time horizon $T > \mu m$, we can no longer guarantee that there are likable items left to recommend. Indeed, if the user types each have the same fraction of likable items, then even an oracle recommender would use up the $\mu m$ likable items by this time. Meanwhile, to give a sense of how long the learning period $T_{\text{learn}}$ is, note that when $\alpha = 1/2$, we have $T_{\text{learn}}$ scaling as $\log^2(km)$, and if we choose $\alpha$ close to $0$, then $T_{\text{learn}}$ becomes nearly $\log(km)$. In summary, after $T_{\text{learn}}$ initial time steps, the simple algorithm proposed is essentially optimal.

To gain intuition for incoherence condition A2, we calculate the parameter $\gamma$ for three examples.

Example 1.
Consider when there is no noise, i.e., $\Delta = \frac{1}{2}$. Then users’ ratings are deterministic given their user type. Produce $k$ vectors of probabilities by drawing $m$ independent Bernoulli($\frac{1}{2}$) random variables ($0$ or $1$ with probability $\frac{1}{2}$ each) for each user type. For any item $i$ and pair of users $u$ and $v$ of different types, $Y^*_{ui} \cdot Y^*_{vi}$ is a Rademacher random variable ($\pm 1$ with probability $\frac{1}{2}$ each), and thus the inner product of two user rating vectors is equal to the sum of $m$ Rademacher random variables. Standard concentration inequalities show that one may take $\gamma = \Theta\big(\sqrt{\frac{\log m}{m}}\big)$ to satisfy $\gamma$-incoherence with probability $1 - 1/\mathrm{poly}(m)$.

Example 2. We expand on the previous example by choosing an arbitrary $\Delta > 0$ and making all latent source probability vectors have entries equal to $\frac{1}{2} \pm \Delta$ with probability $\frac{1}{2}$ each. As before, let users $u$ and $v$ be of different types. Now

$$\mathbb{E}[Y^*_{ui} \cdot Y^*_{vi}] = \big(\tfrac{1}{2} + \Delta\big)^2 + \big(\tfrac{1}{2} - \Delta\big)^2 - 2\big(\tfrac{1}{4} - \Delta^2\big) = 4\Delta^2 \quad \text{if } p_{ui} = p_{vi},$$

and

$$\mathbb{E}[Y^*_{ui} \cdot Y^*_{vi}] = 2\big(\tfrac{1}{4} - \Delta^2\big) - \big(\tfrac{1}{2} + \Delta\big)^2 - \big(\tfrac{1}{2} - \Delta\big)^2 = -4\Delta^2 \quad \text{if } p_{ui} = 1 - p_{vi}.$$

The value of the inner product $\mathbb{E}[\langle Y^*_u, Y^*_v \rangle]$ is again equal to the sum of $m$ Rademacher random variables, but this time scaled by $4\Delta^2$. For similar reasons as before, $\gamma = \Theta\big(\sqrt{\frac{\log m}{m}}\big)$ suffices to satisfy $\gamma$-incoherence with probability $1 - 1/\mathrm{poly}(m)$.

Example 3. Continuing with the previous example, now suppose each entry is $\frac{1}{2} + \Delta$ with probability $\mu \in (0, 1/2)$ and $\frac{1}{2} - \Delta$ with probability $1 - \mu$. Then for two users $u$ and $v$ of different types, $p_{ui} = p_{vi}$ with probability $\mu^2 + (1 - \mu)^2$. This implies that $\mathbb{E}[\langle Y^*_u, Y^*_v \rangle] = 4m\Delta^2(1 - 2\mu)^2$. Again, using standard concentration, this shows that $\gamma = (1 - 2\mu)^2 + \Theta\big(\sqrt{\frac{\log m}{m}}\big)$ suffices to satisfy $\gamma$-incoherence with probability $1 - 1/\mathrm{poly}(m)$.
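The incoherence calculations in the examples above can be checked numerically. The following is a minimal sketch, assuming the sampling scheme of Example 3 (with Example 2 recovered by setting $\mu = 1/2$); the function name `empirical_gamma` and the argument name `Delta` are hypothetical. It draws $k$ type vectors, forms the normalized inner products from condition A2, and reports the smallest $\gamma$ that would satisfy the condition for that draw.

```python
import numpy as np

def empirical_gamma(k, m, Delta, mu, seed=0):
    """Smallest gamma satisfying A2 for one random draw of k type vectors.

    Each entry of a type vector is 1/2 + Delta with probability mu and
    1/2 - Delta otherwise, independently across entries and types.
    """
    rng = np.random.default_rng(seed)
    signs = np.where(rng.random((k, m)) < mu, 1.0, -1.0)
    p = 0.5 + Delta * signs
    centered = 2.0 * p - 1.0               # the vector 2p - 1 for each type
    gram = centered @ centered.T / m       # (1/m) <2p_u - 1, 2p_v - 1>
    off_diagonal = gram[~np.eye(k, dtype=bool)]
    return off_diagonal.max() / (4.0 * Delta ** 2)
```

For large $m$ the returned value should sit close to $(1 - 2\mu)^2$, in line with Example 3; for instance, calling `empirical_gamma(5, 20000, 0.3, 0.2)` compares against $(1 - 0.4)^2 = 0.36$.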
4 Proof of Theorem 1

Recall that $X_{ut}$ is the indicator random variable for whether the item $\pi_{ut}$ recommended to user $u$ at time $t$ is likable, i.e., $p_{u\pi_{ut}} > 1/2$. Given assumption A1, this is equivalent to the event that $p_{u\pi_{ut}} \ge \frac{1}{2} + \Delta$. The expected proportion of likable items is

$$\frac{r^{(T)}_{+}}{Tn} = \frac{1}{Tn} \sum_{t=1}^{T} \sum_{u=1}^{n} \mathbb{E}[X_{ut}] = \frac{1}{Tn} \sum_{t=1}^{T} \sum_{u=1}^{n} \mathbb{P}(X_{ut} = 1).$$

Our proof focuses on lower-bounding $\mathbb{P}(X_{ut} = 1)$. The key idea is to condition on what we call the “good neighborhood” event $\mathcal{E}_{\text{good}}(u, t)$:

$$\mathcal{E}_{\text{good}}(u, t) = \Big\{ \text{at time } t \text{, user } u \text{ has} \ge \frac{n}{5k} \text{ neighbors from the same user type (“good neighbors”), and} \le \frac{\Delta t n^{1-\alpha}}{10km} \text{ neighbors from other user types (“bad neighbors”)} \Big\}.$$

This good neighborhood event will enable us to argue that after an initial learning time, with high probability there are at most $\Delta$ times as many ratings from bad neighbors as there are from good neighbors.

The proof of Theorem 1 consists of two parts. The first part uses joint exploration to show that after a sufficient amount of time, the good neighborhood event $\mathcal{E}_{\text{good}}(u, t)$ holds with high probability.

Lemma 1. For user $u$, after

$$t \ge \bigg( \frac{2 \log(10kmn^{\alpha}/\Delta)}{\Delta^4 (1 - \gamma)^2} \bigg)^{1/(1-\alpha)}$$

time steps,

$$\mathbb{P}(\mathcal{E}_{\text{good}}(u, t)) \ge 1 - \exp\Big(-\frac{n}{8k}\Big) - 12 \exp\Big(-\frac{\Delta^4 (1 - \gamma)^2 t^{1-\alpha}}{20}\Big).$$

In the above lower bound, the first exponentially decaying term could be thought of as the penalty for not having enough users in the system from the $k$ user types, and the second decaying term could be thought of as the penalty for not yet clustering the users correctly.

The second part of our proof of Theorem 1 shows that, with high probability, the good neighborhoods have, through random exploration, accurately estimated the probability of liking each item. Thus, we correctly classify each item as likable or not with high probability, which leads to a lower bound on $\mathbb{P}(X_{ut} = 1)$.

Lemma 2.
For user $u$ at time $t$, if the good neighborhood event $\mathcal{E}_{\text{good}}(u, t)$ holds and $t \le \mu m$, then

$$\mathbb{P}(X_{ut} = 1) \ge 1 - 2m \exp\Big(-\frac{\Delta^2 t n^{1-\alpha}}{40km}\Big) - \frac{1}{t^{\alpha}} - \frac{1}{n^{\alpha}}.$$

Here, the first exponentially decaying term could be thought of as the cost of not classifying items correctly as likable or unlikable, and the last two decaying terms together could be thought of as the cost of exploration (we explore with probability $\varepsilon_J(t) + \varepsilon_R(n) = 1/t^{\alpha} + 1/n^{\alpha}$).

We defer the proofs of Lemmas 1 and 2 to Appendices A.1 and A.2. Combining these lemmas and choosing appropriate constraints on the numbers of users and items, we produce the following lemma.

Lemma 3. Let $\delta \in (0, 1)$ be some pre-specified tolerance. If the number of users $n$ and items $m$ satisfy

$$n \ge \max\Big\{ 8k \log\frac{4}{\delta}, \Big(\frac{4}{\delta}\Big)^{1/\alpha} \Big\},$$

$$\mu m \ge t \ge \max\Bigg\{ \bigg( \frac{2 \log(10kmn^{\alpha}/\Delta)}{\Delta^4 (1 - \gamma)^2} \bigg)^{1/(1-\alpha)}, \bigg( \frac{20 \log(96/\delta)}{\Delta^4 (1 - \gamma)^2} \bigg)^{1/(1-\alpha)}, \Big(\frac{4}{\delta}\Big)^{1/\alpha} \Bigg\},$$

$$n t^{1-\alpha} \ge \frac{40km}{\Delta^2} \log\frac{16m}{\delta},$$

then $\mathbb{P}(X_{ut} = 1) \ge 1 - \delta$.

Proof. With the above conditions on $n$ and $t$ satisfied, we combine Lemmas 1 and 2 to obtain

$$\mathbb{P}(X_{ut} = 1) \ge 1 - \exp\Big(-\frac{n}{8k}\Big) - 12 \exp\Big(-\frac{\Delta^4 (1 - \gamma)^2 t^{1-\alpha}}{20}\Big) - 2m \exp\Big(-\frac{\Delta^2 t n^{1-\alpha}}{40km}\Big) - \frac{1}{t^{\alpha}} - \frac{1}{n^{\alpha}} \ge 1 - \frac{\delta}{4} - \frac{\delta}{8} - \frac{\delta}{8} - \frac{\delta}{4} - \frac{\delta}{4} = 1 - \delta.$$

Theorem 1 follows as a corollary to Lemma 3. As previously mentioned, without loss of generality, we take $n = O(m^C)$. Then with number of users $n$ satisfying

$$O(m^C) = n = \Omega\Big( km \log\frac{1}{\delta} + \Big(\frac{4}{\delta}\Big)^{1/\alpha} \Big),$$

and for any time step $t$ satisfying

$$\mu m \ge t \ge \Theta\Bigg( \bigg( \frac{\log\frac{km}{\Delta\delta}}{\Delta^4 (1 - \gamma)^2} \bigg)^{1/(1-\alpha)} + \Big(\frac{4}{\delta}\Big)^{1/\alpha} \Bigg) \triangleq T_{\text{learn}},$$

we simultaneously meet all of the conditions of Lemma 3. Note that the upper bound on number of users $n$ appears since without it, $T_{\text{learn}}$ would depend on $n$ (observe that in Lemma 3, we ask that $t$ be greater than a quantity that depends on $n$).
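Before the final step, the term-by-term accounting in the proof of Lemma 3 above can be spelled out. The following worked check uses only the bounds as stated in Lemmas 1 and 2; the symbol $x$ is introduced here purely for illustration and stands for the exponent of the classification term in Lemma 2.

```latex
\begin{align*}
n \ge 8k \log\tfrac{4}{\delta}
  &\;\Longrightarrow\; \exp\!\Big(-\frac{n}{8k}\Big) \le \frac{\delta}{4}, \\
t^{1-\alpha} \ge \frac{20 \log(96/\delta)}{\Delta^4 (1-\gamma)^2}
  &\;\Longrightarrow\; 12 \exp\!\Big(-\frac{\Delta^4 (1-\gamma)^2 t^{1-\alpha}}{20}\Big)
     \le \frac{12\,\delta}{96} = \frac{\delta}{8}, \\
x \ge \log\frac{16m}{\delta}
  &\;\Longrightarrow\; 2m\, e^{-x} \le \frac{\delta}{8}, \\
t \ge \Big(\frac{4}{\delta}\Big)^{1/\alpha}
  &\;\Longrightarrow\; \frac{1}{t^{\alpha}} \le \frac{\delta}{4}, \\
n \ge \Big(\frac{4}{\delta}\Big)^{1/\alpha}
  &\;\Longrightarrow\; \frac{1}{n^{\alpha}} \le \frac{\delta}{4}.
\end{align*}
```

The five right-hand sides add up to $\frac{\delta}{4} + \frac{\delta}{8} + \frac{\delta}{8} + \frac{\delta}{4} + \frac{\delta}{4} = \delta$. The remaining lower bound on $t$ in Lemma 3, the one involving $\log(10kmn^{\alpha}/\Delta)$, is what allows Lemma 1 to be invoked in the first place.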
Provided that the time horizon satisfies $T \le \mu m$, then

$$\frac{r^{(T)}_{+}}{Tn} \ge \frac{1}{Tn} \sum_{t=T_{\text{learn}}}^{T} \sum_{u=1}^{n} \mathbb{P}(X_{ut} = 1) \ge \frac{1}{Tn} \sum_{t=T_{\text{learn}}}^{T} \sum_{u=1}^{n} (1 - \delta) = \frac{(T - T_{\text{learn}})(1 - \delta)}{T},$$

yielding the theorem statement.

5 Experimental Results

We provide only a summary of our experimental results here, deferring full details to Appendix A.3. We simulate an online recommendation system based on movie ratings from the Movielens10m and Netflix datasets, each of which provides a sparsely filled user-by-movie rating matrix with ratings out of 5 stars. Unfortunately, existing collaborative filtering datasets such as the two we consider don’t offer the interactivity of a real online recommendation system, nor do they allow us to reveal the rating for an item that a user didn’t actually rate. For simulating an online system, the former issue can be dealt with by simply revealing entries in the user-by-item rating matrix over time. We address the latter issue by only considering a dense “top users vs. top items” subset of each dataset. In particular, we consider only the “top” users who have rated the most items, and the “top” items that have received the most ratings. While this dense part of the dataset is unrepresentative of the rest of the dataset, it does allow us to use actual ratings provided by users without synthesizing any ratings. A rigorous validation would require an implementation of an actual interactive online recommendation system, which is beyond the scope of our paper.

First, we validate that our latent source model is reasonable for the dense parts of the two datasets we consider by looking for clustering behavior across users. We find that the dense top users vs. top movies matrices do in fact exhibit clustering behavior of users and also movies, as shown in Figure 1(a). The clustering was found via Bayesian clustered tensor factorization, which was previously shown to model real movie ratings data well [21].
Next, we demonstrate our algorithm COLLABORATIVE-GREEDY on the two simulated online movie recommendation systems, showing that it outperforms two existing recommendation algorithms, Popularity Amongst Friends (PAF) [4] and a method by Deshpande and Montanari (DM) [12]. Following the experimental setup of [4], we quantize a rating of 4 stars or more to be $+1$ (likable), and a rating less than 4 stars to be $-1$ (unlikable). While we look at a dense subset of each dataset, there are still missing entries. If a user $u$ hasn’t rated item $j$ in the dataset, then we set the corresponding true rating to 0, meaning that in our simulation, upon recommending item $j$ to user $u$, we receive 0 reward, but we still mark that user $u$ has consumed item $j$; thus, item $j$ can no longer be recommended to user $u$. For both Movielens10m and Netflix datasets, we consider the top $n = 200$ users and the top $m = 500$ movies. For Movielens10m, the resulting user-by-movie rating matrix has 80.7% nonzero entries. For Netflix, the resulting matrix has 86.0% nonzero entries.

Figure 1: Movielens10m dataset: (a) Top users by top movies matrix with rows and columns reordered to show clustering of users and items. (b) Average cumulative rewards over time (horizontal axis: time; vertical axis: average cumulative reward; curves: Collaborative-Greedy, Popularity Amongst Friends, Deshpande-Montanari).

For an algorithm that recommends item $\pi_{ut}$ to user $u$ at time $t$, we measure the algorithm’s average cumulative reward up to time $T$ as $\frac{1}{n} \sum_{t=1}^{T} \sum_{u=1}^{n} Y^{(T)}_{u\pi_{ut}}$, where we average over users. For all four methods, we recommend items until we reach time $T = 500$, i.e., we make movie recommendations until each user has seen all $m = 500$ movies.
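The quantization rule and the evaluation metric just described can be sketched as follows. This is a minimal illustration, not the code used for the experiments: it assumes that a missing entry is encoded as 0 stars in the input, and the function names `quantize` and `average_cumulative_reward` as well as the `schedule` array are hypothetical.

```python
import numpy as np

def quantize(stars):
    """Map star ratings to {-1, 0, +1}: 4 or more stars is +1, fewer is -1.

    A value of 0 stars is assumed to encode a missing entry and maps to 0.
    """
    stars = np.asarray(stars)
    return np.where(stars == 0, 0, np.where(stars >= 4, 1, -1))

def average_cumulative_reward(Y, schedule):
    """Average cumulative reward after each time step.

    Y is the n-by-m quantized rating matrix, and schedule[t, u] is the item
    recommended to user u at time step t (shape T-by-n).
    """
    n = Y.shape[0]
    users = np.arange(n)
    per_step = Y[users[None, :], schedule].sum(axis=1)   # reward earned at each step
    return np.cumsum(per_step) / n
```

The last entry of the returned array corresponds to the average cumulative reward at the final time step covered by `schedule`; the full array gives a curve of the kind plotted against time in Figure 1(b).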
We disallow the matrix completion step for DM to see the users that we actually test on, but we allow it to see the same items as what is in the simulated online recommendation system in order to compute these items’ feature vectors (using the rest of the users in the dataset). Furthermore, when a rating is revealed, we provide DM both the thresholded rating and the non-thresholded rating, the latter of which DM uses to estimate user feature vectors over time. We discuss choice of algorithm parameters in Appendix A.3. In short, parameters $\theta$ and $\alpha$ of our algorithm are chosen based on training data, whereas we allow the other algorithms to use whichever parameters give the best results on the test data. Despite giving the two competing algorithms this advantage, COLLABORATIVE-GREEDY outperforms the two, as shown in Figure 1(b). Results on the Netflix dataset are similar.

6 Discussion and Related Work

This paper proposes a model for online recommendation systems under which we can analyze the performance of recommendation algorithms. We theoretically justify when a cosine-similarity collaborative filtering method works well, with a key insight of using two exploration types.

The closest related work is by Biau et al. [7], who study the asymptotic consistency of a cosine-similarity nearest-neighbor collaborative filtering method. Their goal is to predict the rating of the next unseen item. Barman and Dabeer [4] study the performance of an algorithm called Popularity Amongst Friends, examining its ability to predict binary ratings in an asymptotic information-theoretic setting. In contrast, we seek to understand the finite-time performance of such systems. Dabeer [11] uses a model similar to ours and studies online collaborative filtering with a moving horizon cost in the limit of small noise using an algorithm that knows the numbers of user types and item types.
We do not model different item types, our algorithm is oblivious to the number of user types, and our performance metric is different. Another related work is by Deshpande and Montanari [12], who study online recommendations as a linear bandit problem; their method, however, does not actually use any collaboration beyond a pre-processing step in which offline collaborative filtering (specifically matrix completion) is solved to compute feature vectors for items.

Our work also relates to the problem of learning mixture distributions (cf. [10, 18, 5, 2]), where one observes samples from a mixture distribution and the goal is to learn the mixture components and weights. Existing results assume that one has access to the entire high-dimensional sample or that the samples are produced in an exogenous manner (not chosen by the algorithm). Neither assumption holds in our setting, as we only see each user’s revealed ratings thus far and not the user’s entire preference vector, and the recommendation algorithm affects which samples are observed (by choosing which item ratings are revealed for each user). These two aspects make our setting more challenging than the standard setting for learning mixture distributions. However, our goal is more modest. Rather than learning the $k$ item preference vectors, we settle for classifying them as likable or unlikable. Despite this, we suspect having two types of exploration to be useful in general for efficiently learning mixture distributions in the active learning setting.

Acknowledgements. This work was supported in part by NSF grant CNS-1161964 and by Army Research Office MURI Award W911NF-11-1-0036. GHC was supported by an NDSEG fellowship.

References

[1] Fabio Aiolli. A preliminary study on a recommender system for the million songs dataset challenge. In Proceedings of the Italian Information Retrieval Workshop, pages 73–83, 2013.
[2] Anima Anandkumar, Rong Ge, Daniel Hsu, Sham M.
Kakade, and Matus Telgarsky. Tensor decompositions for learning latent variable models, 2012.
[3] Peter Auer, Nicolò Cesa-Bianchi, and Paul Fischer. Finite-time analysis of the multiarmed bandit problem. Machine Learning, 47(2-3):235–256, May 2002.
[4] Kishor Barman and Onkar Dabeer. Analysis of a collaborative filter based on popularity amongst neighbors. IEEE Transactions on Information Theory, 58(12):7110–7134, 2012.
[5] Mikhail Belkin and Kaushik Sinha. Polynomial learning of distribution families. In Foundations of Computer Science (FOCS), 2010 51st Annual IEEE Symposium on, pages 103–112. IEEE, 2010.
[6] Thierry Bertin-Mahieux, Daniel P.W. Ellis, Brian Whitman, and Paul Lamere. The million song dataset. In Proceedings of the 12th International Conference on Music Information Retrieval (ISMIR 2011), 2011.
[7] Gérard Biau, Benoît Cadre, and Laurent Rouvière. Statistical analysis of k-nearest neighbor collaborative recommendation. The Annals of Statistics, 38(3):1568–1592, 2010.
[8] Sébastien Bubeck and Nicolò Cesa-Bianchi. Regret analysis of stochastic and nonstochastic multi-armed bandit problems. Foundations and Trends in Machine Learning, 5(1):1–122, 2012.
[9] Loc Bui, Ramesh Johari, and Shie Mannor. Clustered bandits, 2012. arXiv:1206.4169.
[10] Kamalika Chaudhuri and Satish Rao. Learning mixtures of product distributions using correlations and independence. In Conference on Learning Theory, pages 9–20, 2008.
[11] Onkar Dabeer. Adaptive collaborating filtering: The low noise regime. In IEEE International Symposium on Information Theory, pages 1197–1201, 2013.
[12] Yash Deshpande and Andrea Montanari. Linear bandits in high dimension and recommendation systems, 2013.
[13] Roger B. Grosse, Ruslan Salakhutdinov, William T. Freeman, and Joshua B. Tenenbaum. Exploiting compositionality to explore a large space of model structures. In Uncertainty in Artificial Intelligence, pages 306–315, 2012.
[14] W assily Hoeffding. Probability inequalities for sums of bounded random v ariables. J ournal of the Amer- ican statistical association , 58(301):13–30, 1963. [15] Robert Kleinberg, Alexandru Niculescu-Mizil, and Y ogeshwer Sharma. Regret bounds for sleeping ex- perts and bandits. Machine Learning , 80(2-3):245–272, 2010. [16] Y ehuda K oren. The BellKor solution to the Netflix grand prize. http://www.netflixprize.com/ assets/GrandPrize2009_BPC_BellKor.pdf , August 2009. [17] Greg Linden, Brent Smith, and Jeremy Y ork. Amazon.com recommendations: item-to-item collaborati ve filtering. IEEE Internet Computing , 7(1):76–80, 2003. [18] Ankur Moitra and Gregory V aliant. Settling the polynomial learnability of mixtures of gaussians. Pr o- ceedings of the 51st Annual IEEE Symposium on F oundations of Computer Science , 2010. [19] Martin Piotte and Martin Chabbert. The pragmatic theory solution to the netflix grand prize. http:// www.netflixprize.com/assets/GrandPrize2009_BPC_PragmaticTheory.pdf , Au- gust 2009. [20] Paul Resnick, Neophytos Iaco vou, Mitesh Suchak, Peter Bergstrom, and John Riedl. Grouplens: An open architecture for collaborative filtering of netnews. In Pr oceedings of the 1994 ACM Confer ence on Computer Supported Cooperative W ork , CSCW ’94, pages 175–186, New Y ork, NY , USA, 1994. A CM. [21] Ilya Sutskever , Ruslan Salakhutdinov , and Joshua B. T enenbaum. Modelling relational data using bayesian clustered tensor factorization. In NIPS , pages 1821–1828, 2009. [22] Richard S. Sutton and Andrew G. Barto. Reinfor cement Learning: An Intr oduction . MIT Press, Cam- bridge, MA, 1998. [23] W illiam R. Thompson. On the Likelihood that one Unkno wn Probability Exceeds Another in V iew of the Evidence of T wo Samples. Biometrika , 25:285–294, 1933. [24] Andreas T ¨ oscher and Michael Jahrer . The bigchaos solution to the netflix grand prize. http://www. netflixprize.com/assets/GrandPrize2009_BPC_BigChaos.pdf , September 2009. 
A Appendix

Throughout our derivations, if it is clear from context, we omit the argument $(t)$ indexing time, for example writing $Y_u$ instead of $Y_u(t)$.

A.1 Proof of Lemma 1

We reproduce Lemma 1 below for ease of presentation.

Lemma 1. For user $u$, after
$$t \ge \left(\frac{2\log(10kmn^{\alpha}/\Delta)}{\Delta^4(1-\gamma)^2}\right)^{1/(1-\alpha)}$$
time steps,
$$\mathbb{P}(E_{\text{good}}(u,t)) \ge 1 - \exp\left(-\frac{n}{8k}\right) - 12\exp\left(-\frac{\Delta^4(1-\gamma)^2 t^{1-\alpha}}{20}\right).$$

To derive this lower bound on the probability that the good neighborhood event $E_{\text{good}}(u,t)$ occurs, we prove four lemmas (Lemmas 4, 5, 6, and 7). Before doing so, we define a constant that will appear several times:
$$\beta \triangleq \exp\left(-\Delta^4(1-\gamma)^2 t^{1-\alpha}\right).$$

We begin by ensuring that enough users from each of the $k$ user types are in the system.

Lemma 4. For a user $u$,
$$\mathbb{P}\left(\text{user } u\text{'s type has} \le \frac{n}{2k} \text{ users}\right) \le \exp\left(-\frac{n}{8k}\right).$$

Proof. Let $N$ be the number of users from user $u$'s type. User types are equiprobable, so $N \sim \text{Bin}(n, \frac{1}{k})$. By a Chernoff bound,
$$\mathbb{P}\left(N \le \frac{n}{2k}\right) \le \exp\left(-\frac{1}{2}\cdot\frac{\left(\frac{n}{k}-\frac{n}{2k}\right)^2}{\frac{n}{k}}\right) = \exp\left(-\frac{n}{8k}\right).$$

Next, we ensure that sufficiently many items have been jointly explored across all users. This will subsequently be used for bounding both the number of good neighbors and the number of bad neighbors.

Lemma 5. After $t$ time steps,
$$\mathbb{P}(\text{fewer than } t^{1-\alpha}/2 \text{ jointly explored items}) \le \exp(-t^{1-\alpha}/20).$$

Proof. Let $Z_s$ be the indicator random variable for the event that the algorithm jointly explores at time $s$. Thus, the number of jointly explored items up to time $t$ is $\sum_{s=1}^{t} Z_s$. By our choice for the time-varying joint exploration probability $\varepsilon_J$, we have $\mathbb{P}(Z_s = 1) = \varepsilon_J(s) = \frac{1}{s^{\alpha}}$ and $\mathbb{P}(Z_s = 0) = 1 - \frac{1}{s^{\alpha}}$. Note that the centered random variable $\bar{Z}_s = \mathbb{E}[Z_s] - Z_s = \frac{1}{s^{\alpha}} - Z_s$ has zero mean, and $|\bar{Z}_s| \le 1$ with probability 1.
Then,
$$\begin{aligned}
\mathbb{P}\left(\sum_{s=1}^{t} Z_s < \frac{1}{2}t^{1-\alpha}\right)
&= \mathbb{P}\left(\sum_{s=1}^{t} \bar{Z}_s > \sum_{s=1}^{t}\mathbb{E}[Z_s] - \frac{1}{2}t^{1-\alpha}\right) \\
&\overset{(i)}{\le} \mathbb{P}\left(\sum_{s=1}^{t} \bar{Z}_s > \frac{1}{2}t^{1-\alpha}\right) \\
&\overset{(ii)}{\le} \exp\left(-\frac{\frac{1}{8}t^{2(1-\alpha)}}{\sum_{s=1}^{t}\mathbb{E}[\bar{Z}_s^2] + \frac{1}{6}t^{1-\alpha}}\right) \\
&\overset{(iii)}{\le} \exp\left(-\frac{\frac{1}{8}t^{2(1-\alpha)}}{\frac{t^{1-\alpha}}{1-\alpha} + \frac{1}{6}t^{1-\alpha}}\right)
= \exp\left(-\frac{3(1-\alpha)t^{1-\alpha}}{4(7-\alpha)}\right) \\
&\overset{(iv)}{\le} \exp(-t^{1-\alpha}/20),
\end{aligned}$$
where step (i) uses the fact that $\sum_{s=1}^{t}\mathbb{E}[Z_s] = \sum_{s=1}^{t} 1/s^{\alpha} \ge t/t^{\alpha} = t^{1-\alpha}$, step (ii) is Bernstein's inequality, step (iii) uses the fact that $\sum_{s=1}^{t}\mathbb{E}[\bar{Z}_s^2] \le \sum_{s=1}^{t}\mathbb{E}[Z_s^2] = \sum_{s=1}^{t} 1/s^{\alpha} \le t^{1-\alpha}/(1-\alpha)$, and step (iv) uses the fact that $\alpha \le 4/7$. (We remark that the choice of constant $4/7$ isn't special; changing it would simply modify the constant in the decaying exponential to potentially no longer be $1/20$.)

Assuming that the bad events for the previous two lemmas do not occur, we now provide a lower bound on the number of good neighbors that holds with high probability.

Lemma 6. Suppose that there are no $\Delta$-ambiguous items, that there are more than $\frac{n}{2k}$ users of user $u$'s type, and that all users have rated at least $t^{1-\alpha}/2$ items as part of joint exploration. For user $u$, let $n_{\text{good}}$ be the number of "good" neighbors of user $u$. If $\beta \le \frac{1}{10}$, then
$$\mathbb{P}\left(n_{\text{good}} \le (1-\beta)\frac{n}{4k}\right) \le 10\beta.$$

We defer the proof of Lemma 6 to Appendix A.1.1.

Finally, we verify that the number of bad neighbors for any user is not too large, again conditioned on there being enough jointly explored items.

Lemma 7. Suppose that the minimum number of rated items in common between any pair of users is $t^{1-\alpha}/2$ and suppose that $\gamma$-incoherence holds for some $\gamma \in [0,1)$. For user $u$, let $n_{\text{bad}}$ be the number of "bad" neighbors of user $u$. Then
$$\mathbb{P}(n_{\text{bad}} \ge n\sqrt{\beta}) \le \sqrt{\beta}.$$

We defer the proof of Lemma 7 to Appendix A.1.2.

We now prove Lemma 1, which union bounds over the four bad events of Lemmas 4, 5, 6, and 7.
Recall that the good neighborhood event $E_{\text{good}}(u,t)$ holds if at time $t$, user $u$ has more than $\frac{n}{5k}$ good neighbors and fewer than $\frac{\Delta t n^{1-\alpha}}{10km}$ bad neighbors. Assuming that the four bad events don't happen, Lemma 6 tells us that there are more than $(1-\beta)\frac{n}{4k}$ good neighbors provided that $\beta \le \frac{1}{10}$. Thus, to ensure that there are more than $\frac{n}{5k}$ good neighbors, it suffices to have $(1-\beta)\frac{n}{4k} \ge \frac{n}{5k}$, which happens when $\beta \le \frac{1}{5}$, but we already require that $\beta \le \frac{1}{10}$. Similarly, Lemma 7 tells us that there are fewer than $n\sqrt{\beta}$ bad neighbors, so to ensure that there are fewer than $\frac{\Delta t n^{1-\alpha}}{10km}$ bad neighbors it suffices to have $n\sqrt{\beta} \le \frac{\Delta t n^{1-\alpha}}{10km}$, which happens when $\beta \le \left(\frac{\Delta t}{10kmn^{\alpha}}\right)^2$. We can satisfy all constraints on $\beta$ by asking that $\beta \le \left(\frac{\Delta}{10kmn^{\alpha}}\right)^2$, which is tantamount to asking that
$$t \ge \left(\frac{2\log(10kmn^{\alpha}/\Delta)}{\Delta^4(1-\gamma)^2}\right)^{1/(1-\alpha)}$$
since $\beta = \exp(-\Delta^4(1-\gamma)^2 t^{1-\alpha})$.

Finally, with $t$ satisfying the inequality above, the union bound over the four bad events can be further bounded to complete the proof:
$$\begin{aligned}
\mathbb{P}(E_{\text{good}}(u,t))
&\ge 1 - \exp\left(-\frac{n}{8k}\right) - \exp(-t^{1-\alpha}/20) - 10\beta - \sqrt{\beta} \\
&\ge 1 - \exp\left(-\frac{n}{8k}\right) - 12\exp\left(-\frac{\Delta^4(1-\gamma)^2 t^{1-\alpha}}{20}\right).
\end{aligned}$$

A.1.1 Proof of Lemma 6

We begin with a preliminary lemma that upper-bounds the probability of two users of the same type not being declared as neighbors.

Lemma 8. Suppose that there are no $\Delta$-ambiguous items for any of the user types. Let users $u$ and $v$ be of the same type, and suppose that they have rated at least $\Gamma_0$ items in common (explored jointly). Then for $\theta \in (0, 4\Delta^2)$,
$$\mathbb{P}(\text{users } u \text{ and } v \text{ are not declared as neighbors}) \le \exp\left(-\frac{(4\Delta^2-\theta)^2}{2}\Gamma_0\right).$$

Proof. Let us first suppose that users $u$ and $v$ have rated exactly $\Gamma_0$ items in common. The two users are not declared to be neighbors if $\langle \widetilde{Y}_u, \widetilde{Y}_v\rangle < \theta\Gamma_0$. Let $\Omega \subseteq [m]$ such that $|\Omega| = \Gamma_0$.
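We have
$$\begin{aligned}
\mathbb{E}\left[\langle \widetilde{Y}_u, \widetilde{Y}_v\rangle \,\middle|\, \text{supp}(\widetilde{Y}_u)\cap\text{supp}(\widetilde{Y}_v) = \Omega\right]
&= \sum_{i\in\Omega}\mathbb{E}[\widetilde{Y}_{ui}\widetilde{Y}_{vi} \mid \widetilde{Y}_{ui}\ne 0, \widetilde{Y}_{vi}\ne 0] \\
&= \sum_{i\in\Omega}\left(p_{ui}^2 + (1-p_{ui})^2 - 2p_{ui}(1-p_{ui})\right) \\
&= 4\sum_{i\in\Omega}\left(p_{ui}-\frac{1}{2}\right)^2. \tag{2}
\end{aligned}$$
Since $\langle \widetilde{Y}_u, \widetilde{Y}_v\rangle = \sum_{i\in\Omega}\widetilde{Y}_{ui}\widetilde{Y}_{vi}$ is the sum of terms $\{\widetilde{Y}_{ui}\widetilde{Y}_{vi}\}_{i\in\Omega}$ that are each bounded within $[-1,1]$, Hoeffding's inequality yields
$$\mathbb{P}\left(\langle \widetilde{Y}_u, \widetilde{Y}_v\rangle \le \theta\Gamma_0 \,\middle|\, \text{supp}(\widetilde{Y}_u)\cap\text{supp}(\widetilde{Y}_v) = \Omega\right) \le \exp\left(-\frac{\left(\overbrace{4\sum_{i\in\Omega}\left(p_{ui}-\frac{1}{2}\right)^2}^{\text{equation (2)}} - \theta\Gamma_0\right)^2}{2\Gamma_0}\right). \tag{3}$$
As there are no $\Delta$-ambiguous items, $\Delta \le |p_{ui} - 1/2|$ for all users $u$ and items $i$. Thus, our choice of $\theta$ guarantees that
$$4\sum_{i\in\Omega}\left(p_{ui}-\frac{1}{2}\right)^2 - \theta\Gamma_0 \ge 4\Gamma_0\Delta^2 - \theta\Gamma_0 = (4\Delta^2-\theta)\Gamma_0 \ge 0. \tag{4}$$
Combining inequalities (3) and (4), and observing that the above holds for all subsets $\Omega$ of cardinality $\Gamma_0$, we obtain the desired bound on the probability that users $u$ and $v$ are not declared as neighbors:
$$\mathbb{P}\left(\langle \widetilde{Y}_u, \widetilde{Y}_v\rangle \le \theta\Gamma_0 \,\middle|\, |\text{supp}(\widetilde{Y}_u)\cap\text{supp}(\widetilde{Y}_v)| = \Gamma_0\right) \le \exp\left(-\frac{(4\Delta^2-\theta)^2}{2}\Gamma_0\right). \tag{5}$$

Now to handle the case that users $u$ and $v$ have jointly rated more than $\Gamma_0$ items, observe that, with shorthand $\Gamma_{uv} \triangleq |\text{supp}(\widetilde{Y}_u)\cap\text{supp}(\widetilde{Y}_v)|$,
$$\begin{aligned}
&\mathbb{P}(u \text{ and } v \text{ not declared neighbors} \mid p_u = p_v,\ \Gamma_{uv}\ge\Gamma_0) \\
&\quad= \mathbb{P}(\langle \widetilde{Y}_u, \widetilde{Y}_v\rangle < \theta\Gamma_{uv} \mid p_u = p_v,\ \Gamma_{uv}\ge\Gamma_0) \\
&\quad\le \frac{\mathbb{P}(\langle \widetilde{Y}_u, \widetilde{Y}_v\rangle \le \theta\Gamma_{uv},\ \Gamma_{uv}\ge\Gamma_0 \mid p_u = p_v)}{\mathbb{P}(\Gamma_{uv}\ge\Gamma_0 \mid p_u = p_v)} \\
&\quad= \frac{\sum_{\ell=\Gamma_0}^{m}\mathbb{P}(\langle \widetilde{Y}_u, \widetilde{Y}_v\rangle \le \theta\ell,\ \Gamma_{uv}=\ell \mid p_u = p_v)}{\mathbb{P}(\Gamma_{uv}\ge\Gamma_0 \mid p_u = p_v)} \\
&\quad= \frac{\sum_{\ell=\Gamma_0}^{m}\mathbb{P}(\Gamma_{uv}=\ell \mid p_u = p_v)\,\mathbb{P}(\langle \widetilde{Y}_u, \widetilde{Y}_v\rangle \le \theta\ell \mid p_u = p_v,\ \Gamma_{uv}=\ell)}{\mathbb{P}(\Gamma_{uv}\ge\Gamma_0 \mid p_u = p_v)} \\
&\quad\le \frac{\sum_{\ell=\Gamma_0}^{m}\mathbb{P}(\Gamma_{uv}=\ell \mid p_u = p_v)\exp\left(-\frac{(4\Delta^2-\theta)^2}{2}\Gamma_0\right)}{\mathbb{P}(\Gamma_{uv}\ge\Gamma_0 \mid p_u = p_v)} \qquad \text{by inequality (5)} \\
&\quad= \exp\left(-\frac{(4\Delta^2-\theta)^2}{2}\Gamma_0\right).
\end{aligned}$$

We now prove Lemma 6. Suppose that the bad event of Lemma 4 does not occur, so that user $u$'s type has more than $\frac{n}{2k}$ users. Let $\mathcal{G}$ be $\frac{n}{2k}$ users from the same user type as user $u$; there could be more than $\frac{n}{2k}$ such users but it suffices to consider $\frac{n}{2k}$ of them.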
We define an indicator random variable
$$G_v \triangleq \mathbb{1}\{\text{users } u \text{ and } v \text{ are neighbors}\} = \mathbb{1}\{\langle \widetilde{Y}_u^{(t)}, \widetilde{Y}_v^{(t)}\rangle \ge \theta t^{1-\alpha}/2\}.$$
Thus, the number of good neighbors of user $u$ is lower-bounded by $W = \sum_{v\in\mathcal{G}} G_v$. Note that the $G_v$'s are not independent. To arrive at a lower bound for $W$ that holds with high probability, we use Chebyshev's inequality:
$$\mathbb{P}(W - \mathbb{E}[W] \le -\mathbb{E}[W]/2) \le \frac{4\,\text{Var}(W)}{(\mathbb{E}[W])^2}. \tag{6}$$
Let $\beta = \exp(-(4\Delta^2-\theta)^2\Gamma_0/2)$ be the probability bound from Lemma 8, where by our choice of $\theta = 2\Delta^2(1+\gamma)$ and with $\Gamma_0 = t^{1-\alpha}/2$, we have $\beta = \exp(-\Delta^4(1-\gamma)^2 t^{1-\alpha})$. Applying Lemma 8, we have $\mathbb{E}[W] \ge (1-\beta)\frac{n}{2k}$, and hence
$$(\mathbb{E}[W])^2 \ge (1-2\beta)\frac{n^2}{4k^2}. \tag{7}$$
We now upper-bound
$$\text{Var}(W) = \sum_{v\in\mathcal{G}}\text{Var}(G_v) + \sum_{v\ne w}\text{Cov}(G_v, G_w).$$
Since $G_v = G_v^2$,
$$\text{Var}(G_v) = \mathbb{E}[G_v] - \mathbb{E}[G_v]^2 = \underbrace{\mathbb{E}[G_v]}_{\le 1}(1-\mathbb{E}[G_v]) \le \beta,$$
where the last step uses Lemma 8. Meanwhile,
$$\text{Cov}(G_v, G_w) = \mathbb{E}[G_v G_w] - \mathbb{E}[G_v]\mathbb{E}[G_w] \le 1 - (1-\beta)^2 \le 2\beta.$$
Putting together the pieces,
$$\text{Var}(W) \le \frac{n}{2k}\cdot\beta + \frac{n}{2k}\cdot\left(\frac{n}{2k}-1\right)\cdot 2\beta \le \frac{n^2}{2k^2}\cdot\beta. \tag{8}$$
Plugging (7) and (8) into (6) gives
$$\mathbb{P}(W - \mathbb{E}[W] \le -\mathbb{E}[W]/2) \le \frac{8\beta}{1-2\beta} \le 10\beta,$$
provided that $\beta \le \frac{1}{10}$. Thus, $n_{\text{good}} \ge W \ge \mathbb{E}[W]/2 \ge (1-\beta)\frac{n}{4k}$ with probability at least $1 - 10\beta$.

A.1.2 Proof of Lemma 7

We begin with a preliminary lemma that upper-bounds the probability of two users of different types being declared as neighbors.

Lemma 9. Let users $u$ and $v$ be of different types, and suppose that they have rated at least $\Gamma_0$ items in common via joint exploration. Further suppose $\gamma$-incoherence is satisfied for $\gamma \in [0,1)$. If $\theta \ge 4\gamma\Delta^2$, then
$$\mathbb{P}(\text{users } u \text{ and } v \text{ are declared to be neighbors}) \le \exp\left(-\frac{(\theta-4\gamma\Delta^2)^2}{2}\Gamma_0\right).$$

Proof. As with the proof of Lemma 8, we first analyze the case where users $u$ and $v$ have rated exactly $\Gamma_0$ items in common.
Users $u$ and $v$ are declared to be neighbors if $\langle \widetilde{Y}_u, \widetilde{Y}_v\rangle \ge \theta\Gamma_0$. We now crucially use the fact that joint exploration chooses these $\Gamma_0$ items as a random subset of the $m$ items. For our random permutation $\sigma$ of $m$ items, we have $\langle \widetilde{Y}_u, \widetilde{Y}_v\rangle = \sum_{i=1}^{\Gamma_0}\widetilde{Y}_{u,\sigma(i)}\widetilde{Y}_{v,\sigma(i)} = \sum_{i=1}^{\Gamma_0} Y_{u,\sigma(i)}Y_{v,\sigma(i)}$, which is the sum of terms $\{Y_{u,\sigma(i)}Y_{v,\sigma(i)}\}_{i=1}^{\Gamma_0}$ that are each bounded within $[-1,1]$ and drawn without replacement from a population of all possible items. Hoeffding's inequality (which also applies to the current scenario of sampling without replacement [14]) yields
$$\mathbb{P}\left(\langle \widetilde{Y}_u, \widetilde{Y}_v\rangle \ge \theta\Gamma_0 \mid p_u \ne p_v\right) \le \exp\left(-\frac{\left(\theta\Gamma_0 - \mathbb{E}[\langle \widetilde{Y}_u, \widetilde{Y}_v\rangle \mid p_u \ne p_v]\right)^2}{2\Gamma_0}\right). \tag{9}$$
By $\gamma$-incoherence and our choice of $\theta$,
$$\theta\Gamma_0 - \mathbb{E}\left[\langle \widetilde{Y}_u, \widetilde{Y}_v\rangle \mid p_u \ne p_v\right] \ge \theta\Gamma_0 - 4\gamma\Delta^2\Gamma_0 = (\theta - 4\gamma\Delta^2)\Gamma_0 \ge 0. \tag{10}$$
Above, we used the fact that $\Gamma_0$ randomly explored items are a random subset of $m$ items, and hence
$$\mathbb{E}\left[\frac{1}{\Gamma_0}\langle \widetilde{Y}_u, \widetilde{Y}_v\rangle\right] = \mathbb{E}\left[\frac{1}{m}\langle Y_u, Y_v\rangle\right],$$
with $Y_u$, $Y_v$ representing the entire (random) vector of preferences of $u$ and $v$ respectively. Combining inequalities (9) and (10) yields
$$\mathbb{P}\left(\langle \widetilde{Y}_u, \widetilde{Y}_v\rangle \ge \theta\Gamma_0 \mid p_u \ne p_v\right) \le \exp\left(-\frac{(\theta-4\gamma\Delta^2)^2}{2}\Gamma_0\right).$$
An argument similar to the end of Lemma 8's proof establishes that the bound holds even if users $u$ and $v$ have jointly explored more than $\Gamma_0$ items.

We now prove Lemma 7. Let $\beta = \exp(-(\theta-4\gamma\Delta^2)^2\Gamma_0/2)$ be the probability bound from Lemma 9, where by our choice of $\theta = 2\Delta^2(1+\gamma)$ and with $\Gamma_0 = t^{1-\alpha}/2$, we have $\beta = \exp(-\Delta^4(1-\gamma)^2 t^{1-\alpha})$. By Lemma 9, for a pair of users $u$ and $v$ with at least $t^{1-\alpha}/2$ items jointly explored, the probability that they are erroneously declared neighbors is upper-bounded by $\beta$. Denote the set of users of type different from $u$ by $\mathcal{B}$, and write
$$n_{\text{bad}} = \sum_{v\in\mathcal{B}}\mathbb{1}\{u \text{ and } v \text{ are declared to be neighbors}\},$$
whence $\mathbb{E}[n_{\text{bad}}] \le n\beta$.
Markov's inequality gives
$$\mathbb{P}(n_{\text{bad}} \ge n\sqrt{\beta}) \le \frac{\mathbb{E}[n_{\text{bad}}]}{n\sqrt{\beta}} \le \frac{n\beta}{n\sqrt{\beta}} = \sqrt{\beta},$$
proving the lemma.

A.2 Proof of Lemma 2

We reproduce Lemma 2 below.

Lemma 2. For user $u$ at time $t$, if the good neighborhood event $E_{\text{good}}(u,t)$ holds and $t \le \mu m$, then
$$\mathbb{P}(X_{ut} = 1) \ge 1 - 2m\exp\left(-\frac{\Delta^2 t n^{1-\alpha}}{40km}\right) - \frac{1}{t^{\alpha}} - \frac{1}{n^{\alpha}}.$$

We begin by checking that when the good neighborhood event $E_{\text{good}}(u,t)$ holds for user $u$, the items have been rated enough times by the good neighbors.

Lemma 10. For user $u$ at time $t$, suppose that the good neighborhood event $E_{\text{good}}(u,t)$ holds. Then for a given item $i$,
$$\mathbb{P}\left(\text{item } i \text{ has} \le \frac{tn^{1-\alpha}}{10km} \text{ ratings from good neighbors of } u\right) \le \exp\left(-\frac{tn^{1-\alpha}}{40km}\right).$$

Proof. The number of user $u$'s good neighbors who have rated item $i$ stochastically dominates a $\text{Bin}(\frac{n}{5k}, \frac{\varepsilon_R(n)t}{m})$ random variable, where $\frac{\varepsilon_R(n)t}{m} = \frac{t}{mn^{\alpha}}$ (here, we have critically used the lower bound on the number of good neighbors user $u$ has when the good neighborhood event $E_{\text{good}}(u,t)$ holds). By a Chernoff bound,
$$\mathbb{P}\left(\text{Bin}\left(\frac{n}{5k}, \frac{t}{mn^{\alpha}}\right) \le \frac{tn^{1-\alpha}}{10km}\right) \le \exp\left(-\frac{1}{2}\cdot\frac{\left(\frac{tn^{1-\alpha}}{5km} - \frac{tn^{1-\alpha}}{10km}\right)^2}{\frac{tn^{1-\alpha}}{5km}}\right) \le \exp\left(-\frac{tn^{1-\alpha}}{40km}\right).$$

Next, we show a sufficient condition for which the algorithm correctly classifies every item as likable or unlikable for user $u$.

Lemma 11. Suppose that there are no $\Delta$-ambiguous items. For user $u$ at time $t$, suppose that the good neighborhood event $E_{\text{good}}(u,t)$ holds. Provided that every item $i \in [m]$ has more than $\frac{tn^{1-\alpha}}{10km}$ ratings from good neighbors of user $u$, then with probability at least $1 - m\exp(-\frac{\Delta^2 t n^{1-\alpha}}{20km})$, we have that for every item $i \in [m]$,
$$\begin{cases}
\widetilde{p}_{ui} > \frac{1}{2} & \text{if item } i \text{ is likable by user } u, \\
\widetilde{p}_{ui} < \frac{1}{2} & \text{if item } i \text{ is unlikable by user } u.
\end{cases}$$

Proof. Let $A$ be the number of ratings that good neighbors of user $u$ have provided for item $i$. Suppose item $i$ is likable by user $u$.
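Then when we condition on $A = a_0 \triangleq \lceil \frac{tn^{1-\alpha}}{10km} \rceil$, $\widetilde{p}_{ui}$ stochastically dominates
$$q_{ui} \triangleq \frac{\text{Bin}(a_0, p_{ui})}{a_0 + \Delta a_0} = \frac{\text{Bin}(a_0, p_{ui})}{(1+\Delta)a_0},$$
which is the worst-case variant of $\widetilde{p}_{ui}$ that insists that all $\Delta a_0$ bad neighbors provided rating "$-1$" for likable item $i$ (here, we have critically used the upper bound on the number of bad neighbors user $u$ has when the good neighborhood event $E_{\text{good}}(u,t)$ holds). Then
$$\begin{aligned}
\mathbb{P}\left(q_{ui} \le \tfrac{1}{2} \mid A = a_0\right)
&= \mathbb{P}\left(\text{Bin}(a_0, p_{ui}) \le \frac{(1+\Delta)a_0}{2} \,\middle|\, A = a_0\right) \\
&= \mathbb{P}\left(a_0 p_{ui} - \text{Bin}(a_0, p_{ui}) \ge a_0\left(p_{ui} - \frac{1}{2} - \frac{\Delta}{2}\right) \,\middle|\, A = a_0\right) \\
&\overset{(i)}{\le} \exp\left(-2a_0\left(p_{ui} - \frac{1}{2} - \frac{\Delta}{2}\right)^2\right) \\
&\overset{(ii)}{\le} \exp\left(-\frac{1}{2}a_0\Delta^2\right) \\
&\overset{(iii)}{\le} \exp\left(-\frac{\Delta^2 t n^{1-\alpha}}{20km}\right),
\end{aligned}$$
where step (i) is Hoeffding's inequality, step (ii) follows from item $i$ being likable by user $u$ (i.e., $p_{ui} \ge \frac{1}{2} + \Delta$), and step (iii) is by our choice of $a_0$. Conclude then that
$$\mathbb{P}\left(\widetilde{p}_{ui} \le \tfrac{1}{2} \mid A = a_0\right) \le \exp\left(-\frac{\Delta^2 t n^{1-\alpha}}{20km}\right).$$
Finally,
$$\begin{aligned}
\mathbb{P}\left(\widetilde{p}_{ui} \le \frac{1}{2} \,\middle|\, A \ge \frac{tn^{1-\alpha}}{10km}\right)
&= \frac{\sum_{a=a_0}^{\infty}\mathbb{P}(A = a)\,\mathbb{P}(\widetilde{p}_{ui} \le \frac{1}{2} \mid A = a)}{\mathbb{P}(A \ge \frac{tn^{1-\alpha}}{10km})} \\
&\le \frac{\sum_{a=a_0}^{\infty}\mathbb{P}(A = a)\exp\left(-\frac{\Delta^2 t n^{1-\alpha}}{20km}\right)}{\mathbb{P}(A \ge \frac{tn^{1-\alpha}}{10km})}
= \exp\left(-\frac{\Delta^2 t n^{1-\alpha}}{20km}\right).
\end{aligned}$$
A similar argument holds for when item $i$ is unlikable. Union-bounding over all $m$ items yields the claim.

We now prove Lemma 2. First off, provided that $t \le \mu m$, we know that there must still exist an item likable by user $u$ that user $u$ has yet to consume. For user $u$ at time $t$, supposing that event $E_{\text{good}}(u,t)$ holds, every item has been rated more than $\frac{tn^{1-\alpha}}{10km}$ times by the good neighbors of user $u$ with probability at least $1 - m\exp(-\frac{tn^{1-\alpha}}{40km})$. This follows from union-bounding over the $m$ items with Lemma 10. Applying Lemma 11, and noting that we only exploit with probability $1 - \varepsilon_J(t) - \varepsilon_R(n) = 1 - 1/t^{\alpha} - 1/n^{\alpha}$, we finish the proof:
$$\begin{aligned}
\mathbb{P}(X_{ut} = 1)
&\ge 1 - m\exp\left(-\frac{tn^{1-\alpha}}{40km}\right) - m\exp\left(-\frac{\Delta^2 t n^{1-\alpha}}{20km}\right) - \frac{1}{t^{\alpha}} - \frac{1}{n^{\alpha}} \\
&\ge 1 - 2m\exp\left(-\frac{\Delta^2 t n^{1-\alpha}}{40km}\right) - \frac{1}{t^{\alpha}} - \frac{1}{n^{\alpha}}.
\end{aligned}$$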
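The bounds of Lemmas 1 and 2 are explicit, so they can be evaluated numerically for a given problem size. The following Python sketch does so; the function names are ours and purely illustrative, and the parameter values used in any call are assumptions rather than values from the paper. A negative return value simply means the bound is vacuous for those parameters.

```python
import math


def lemma1_min_time(k, m, n, alpha, delta, gamma):
    """Smallest t satisfying the time condition in Lemma 1."""
    numerator = 2.0 * math.log(10.0 * k * m * n**alpha / delta)
    denominator = delta**4 * (1.0 - gamma) ** 2
    return (numerator / denominator) ** (1.0 / (1.0 - alpha))


def beta(t, alpha, delta, gamma):
    """The constant beta = exp(-Delta^4 (1 - gamma)^2 t^(1 - alpha))."""
    return math.exp(-(delta**4) * (1.0 - gamma) ** 2 * t ** (1.0 - alpha))


def lemma1_lower_bound(t, k, n, alpha, delta, gamma):
    """Lower bound on P(E_good(u, t)) stated in Lemma 1."""
    exponent = delta**4 * (1.0 - gamma) ** 2 * t ** (1.0 - alpha) / 20.0
    return 1.0 - math.exp(-n / (8.0 * k)) - 12.0 * math.exp(-exponent)


def lemma2_lower_bound(t, k, m, n, alpha, delta):
    """Lower bound on P(X_ut = 1) stated in Lemma 2."""
    exponent = delta**2 * t * n ** (1.0 - alpha) / (40.0 * k * m)
    return 1.0 - 2.0 * m * math.exp(-exponent) - t ** (-alpha) - n ** (-alpha)
```

At the threshold returned by `lemma1_min_time`, `beta` equals $(\Delta/(10kmn^{\alpha}))^2$, which is the constraint used in the proof of Lemma 1.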
A.3 Experimental Results

We demonstrate our algorithm COLLABORATIVE-GREEDY on two datasets, showing that it outperforms two existing recommendation algorithms: Popularity Amongst Friends (PAF) [4] and Deshpande and Montanari's method (DM) [12]. At each time step, PAF finds nearest neighbors ("friends") for every user and recommends to a user the "most popular" item, i.e., the one with the most +1 ratings, among the user's friends. DM doesn't do any collaboration beyond a preprocessing step that computes item feature vectors via matrix completion. Then during online recommendation, DM learns user feature vectors over time with the help of item feature vectors and recommends an item to each user based on whether it aligns well with the user's feature vector.

We simulate an online recommendation system based on movie ratings from the Movielens10m and Netflix datasets, each of which provides a sparsely filled user-by-movie rating matrix with ratings out of 5 stars. Unfortunately, existing collaborative filtering datasets such as the two we consider don't offer the interactivity of a real online recommendation system, nor do they allow us to reveal the rating for an item that a user didn't actually rate. For simulating an online system, the former issue can be dealt with by simply revealing entries in the user-by-item rating matrix over time. We address the latter issue by only considering a dense "top users vs. top items" subset of each dataset. In particular, we consider only the "top" users who have rated the most items, and the "top" items that have received the most ratings. While this dense part of the dataset is unrepresentative of the rest of the dataset, it does allow us to use actual ratings provided by users without synthesizing any ratings.
An initial question to ask is whether the dense movie ratings matrices we consider could be reasonably explained by our latent source model. We automatically learn the structure of these matrices using the method by Grosse et al. [13] and find Bayesian clustered tensor factorization (BCTF) to accurately model the data. This finding isn't surprising as BCTF has previously been used to model movie ratings data [21]. BCTF effectively clusters both users and movies so that we get structure such as that shown in Figure 1(a) for the Movielens10m "top users vs. top items" matrix. Our latent source model could reasonably model movie ratings data as it only assumes clustering of users.

Following the experimental setup of [4], we quantize a rating of 4 stars or more to be +1 (likable), and a rating of 3 stars or less to be −1 (unlikable). While we look at a dense subset of each dataset, there are still missing entries. If a user $u$ hasn't rated item $j$ in the dataset, then we set the corresponding true rating to 0, meaning that in our simulation, upon recommending item $j$ to user $u$, we receive 0 reward, but we still mark that user $u$ has consumed item $j$; thus, item $j$ can no longer be recommended to user $u$. For both Movielens10m and Netflix datasets, we consider the top $n = 200$ users and the top $m = 500$ movies. For Movielens10m, the resulting user-by-movie rating matrix has 80.7% nonzero entries. For Netflix, the resulting matrix has 86.0% nonzero entries. For an algorithm that recommends item $\pi_{ut}$ to user $u$ at time $t$, we measure the algorithm's average cumulative reward up to time $T$ as
$$\frac{1}{n}\sum_{t=1}^{T}\sum_{u=1}^{n} Y^{(T)}_{u\pi_{ut}},$$
where we average over users.

For all methods, we recommend items until we reach time $T = 500$, i.e., we make movie recommendations until each user has seen all $m = 500$ movies.
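The rating quantization and the average cumulative reward described above can be sketched in a few lines of Python with NumPy. This is a minimal illustration, not the code used for the experiments: the function names are ours, missing ratings are assumed to be encoded as 0 in the input, and star ratings are assumed to be whole numbers (the text does not say how a rating strictly between 3 and 4 would be treated, so the sketch leaves such values at 0).

```python
import numpy as np


def quantize_ratings(stars):
    """Map star ratings to rewards: >= 4 -> +1, 1..3 -> -1, missing (0) -> 0."""
    stars = np.asarray(stars, dtype=float)
    rewards = np.zeros(stars.shape, dtype=int)
    rewards[stars >= 4] = 1
    rewards[(stars >= 1) & (stars <= 3)] = -1
    return rewards


def average_cumulative_reward(Y, recommendations):
    """Average cumulative reward after each time step.

    Y               : (n, m) array of rewards in {-1, 0, +1}.
    recommendations : (T, n) integer array; entry [t, u] is the item
                      recommended to user u at time step t.
    Returns a length-T array whose last entry is
    (1/n) * sum_t sum_u Y[u, recommendations[t, u]].
    """
    Y = np.asarray(Y)
    rec = np.asarray(recommendations)
    T, n = rec.shape
    # An item may be recommended to a given user at most once.
    for u in range(n):
        if len(set(rec[:, u].tolist())) != T:
            raise ValueError(f"user {u} is recommended some item twice")
    users = np.arange(n)
    per_step = Y[users[None, :], rec].sum(axis=1)  # total reward at each step
    return np.cumsum(per_step) / n
```

For example, with two users and three items, `average_cumulative_reward(quantize_ratings([[5, 3, 0], [4, 4, 1]]), [[0, 2], [1, 0], [2, 1]])` tracks the reward as each user works through all three items.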
We disallow the matrix completion step for DM to see the users that we actually test on, but we allow it to see the same items as what is in the simulated online recommendation system in order to compute these items' feature vectors (using the rest of the users in the dataset). Furthermore, when a rating is revealed, we provide DM both the thresholded rating and the non-thresholded rating, the latter of which DM uses to estimate user feature vectors over time.

Parameters $\theta$ and $\alpha$ for COLLABORATIVE-GREEDY are chosen using training data: we sweep over the two parameters on training data consisting of 200 users that are the "next top" 200 users, i.e., ranked 201 to 400 in the number of movie ratings they provided. For simplicity, we discretize our search space to $\theta \in \{0.0, 0.1, \ldots, 1.0\}$ and $\alpha \in \{0.1, 0.2, 0.3, 0.4, 0.5\}$. We choose the parameter setting achieving the highest area under the cumulative reward curve. For both Movielens10m and Netflix datasets, this corresponded to setting $\theta = 0.0$ and $\alpha = 0.5$ for COLLABORATIVE-GREEDY. In contrast, the parameters for PAF and DM are chosen to be the best parameters for the test data among a wide range of parameters.

[Figure 2: Average cumulative rewards over time (x-axis: time; y-axis: average cumulative reward) for Collaborative-Greedy, Popularity Amongst Friends, and Deshpande-Montanari: (a) Movielens10m, (b) Netflix.]

The results are shown in Figure 2. We find that our algorithm COLLABORATIVE-GREEDY outperforms PAF and DM.
We remark that the curves are roughly concave, which is expected since once we've finished recommending likable items (roughly around time step 300), we end up recommending mostly unlikable items until we've exhausted all the items.
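The parameter selection described in this section (a grid sweep over $\theta$ and $\alpha$, keeping the setting with the largest area under the cumulative reward curve) can be sketched as follows. This is an illustrative sketch only: `simulate` is a hypothetical callable, supplied by the reader, that runs the online simulation on the training users and returns the average cumulative reward curve, and the area is approximated here by summing the curve over unit time steps, which is our assumption.

```python
import itertools

import numpy as np

# Search grid from the text: theta in {0.0, 0.1, ..., 1.0}, alpha in {0.1, ..., 0.5}.
THETAS = [round(0.1 * i, 1) for i in range(11)]
ALPHAS = [0.1, 0.2, 0.3, 0.4, 0.5]


def select_parameters(simulate, thetas=THETAS, alphas=ALPHAS):
    """Return the (theta, alpha) pair with the largest area under the reward curve.

    simulate(theta, alpha) is a hypothetical user-supplied function returning
    a 1-D sequence: the average cumulative reward after each time step.
    Ties are broken in favor of the first setting encountered.
    """
    best = None
    for theta, alpha in itertools.product(thetas, alphas):
        curve = np.asarray(simulate(theta, alpha), dtype=float)
        area = curve.sum()  # unit-spaced time steps, so the sum approximates the area
        if best is None or area > best[0]:
            best = (area, theta, alpha)
    return best[1], best[2]
```

Selecting by area rather than by final reward favors settings that collect reward early, which matters here because every method eventually recommends all items.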
