Overcoming the Curse of Sentence Length for Neural Machine Translation using Automatic Segmentation


Authors: Jean Pouget-Abadie, Dzmitry Bahdanau, Bart van Merrienboer

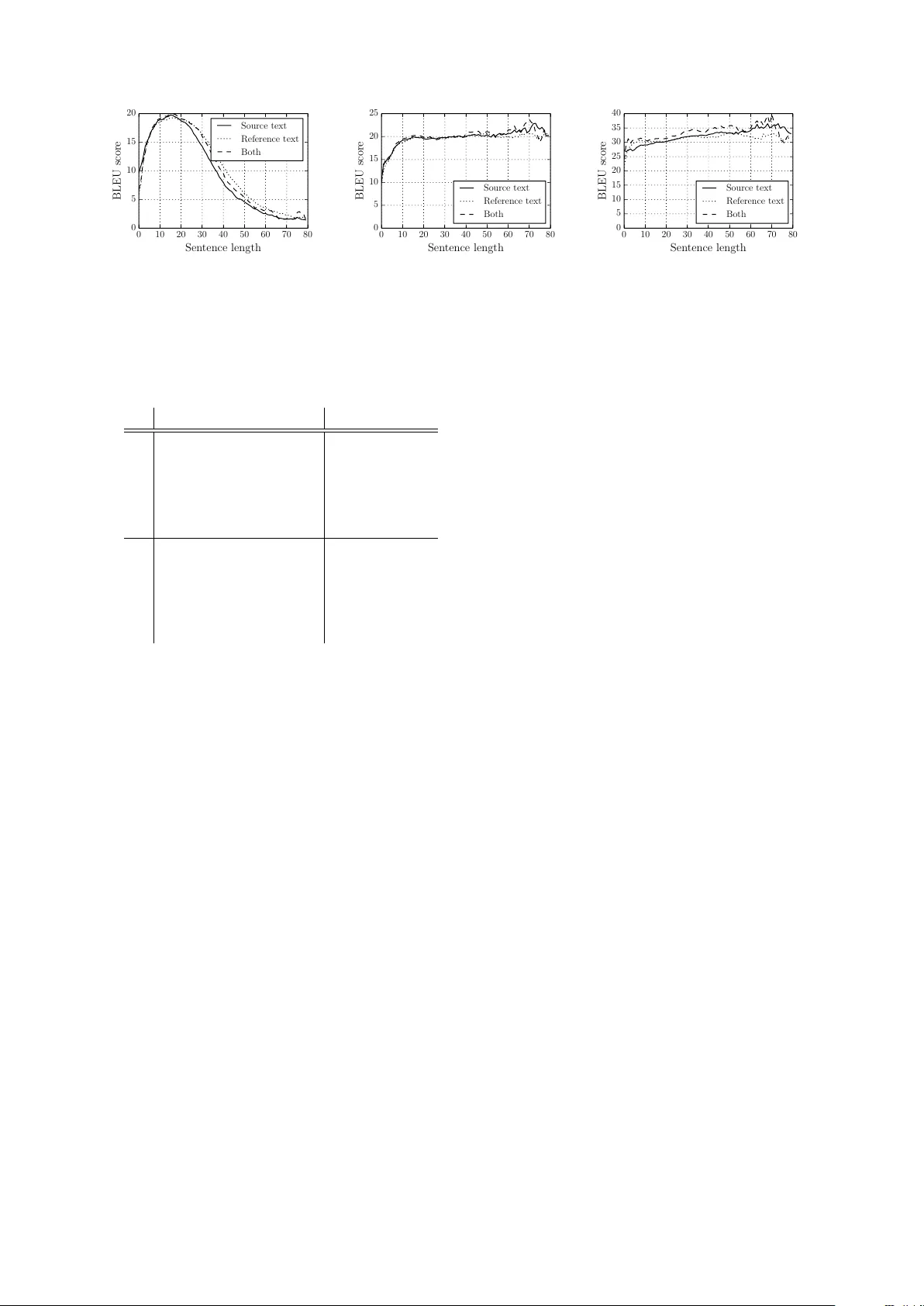

Jean Pouget-Abadie∗, Ecole Polytechnique, France
Dzmitry Bahdanau∗, Jacobs University, Germany
Bart van Merriënboer and Kyunghyun Cho, Université de Montréal, Canada
Yoshua Bengio, Université de Montréal, Canada; CIFAR Senior Fellow

Abstract

The authors of (Cho et al., 2014a) have shown that the recently introduced neural network translation systems suffer from a significant drop in translation quality when translating long sentences, unlike existing phrase-based translation systems. In this paper, we propose a way to address this issue by automatically segmenting an input sentence into phrases that can be easily translated by the neural network translation model. Once each segment has been independently translated by the neural machine translation model, the translated clauses are concatenated to form a final translation. Empirical results show a significant improvement in translation quality for long sentences.

1 Introduction

Up to now, most research efforts in statistical machine translation (SMT) have relied on the use of a phrase-based system as suggested in (Koehn et al., 2003). Recently, however, an entirely new, neural-network-based approach has been proposed by several research groups (Kalchbrenner and Blunsom, 2013; Sutskever et al., 2014; Cho et al., 2014b), showing promising results, either as a standalone system or as an additional component in the existing phrase-based system. In this neural-network-based approach, an encoder ‘encodes’ a variable-length input sentence into a fixed-length vector and a decoder ‘decodes’ a variable-length target sentence from the fixed-length encoded vector.
It has been observed in (Sutskever et al., 2014), (Kalchbrenner and Blunsom, 2013) and (Cho et al., 2014a) that this neural network approach works well with short sentences (e.g., ≲ 20 words), but has difficulty with long sentences (e.g., ≳ 20 words), and particularly with sentences that are longer than those used for training. Training on long sentences is difficult because few available training corpora include sufficiently many long sentences, and because the computational overhead of each update iteration in training grows linearly with the length of the training sentences. Additionally, because a variable-length sentence is encoded into a fixed-size vector representation, the neural network may fail to encode all the important details.

In this paper, we therefore propose to translate sentences piece-wise. We segment an input sentence into a number of short clauses that can be confidently translated by the model. We show empirically that this approach improves the translation quality of long sentences, compared to using a neural network to translate a whole sentence without segmentation.

∗ Research done while these authors were visiting Université de Montréal.

2 RNN Encoder–Decoder for Translation

The RNN Encoder–Decoder (RNNenc) model is a recent implementation of the encoder–decoder approach, proposed independently in (Cho et al., 2014b) and in (Sutskever et al., 2014). It consists of two RNNs, acting respectively as encoder and decoder. Each RNN maintains a set of hidden units that makes an ‘update’ decision for each symbol in an input sequence. This decision depends on the input symbol and the previous hidden state.
The RNNenc in (Cho et al., 2014b) uses a special hidden unit that adaptively forgets or remembers the previous hidden state, such that the activation of a hidden unit $h^{\langle t \rangle}_j$ at time $t$ is computed by

$$h^{\langle t \rangle}_j = z_j\, h^{\langle t-1 \rangle}_j + (1 - z_j)\, \tilde{h}^{\langle t \rangle}_j,$$

where

$$\tilde{h}^{\langle t \rangle}_j = f\left( [W x]_j + r_j \left[ U h^{\langle t-1 \rangle} \right]_j \right),$$
$$z_j = \sigma\left( [W_z x]_j + \left[ U_z h^{\langle t-1 \rangle} \right]_j \right),$$
$$r_j = \sigma\left( [W_r x]_j + \left[ U_r h^{\langle t-1 \rangle} \right]_j \right).$$

Here $z_j$ and $r_j$ are respectively the update and reset gates. Additionally, the RNN in the decoder computes at each step the conditional probability of a target word:

$$p(f_{t,j} = 1 \mid f_{t-1}, \dots, f_1, c) = \frac{\exp\left( w_j h^{\langle t \rangle} \right)}{\sum_{j'=1}^{K} \exp\left( w_{j'} h^{\langle t \rangle} \right)}, \qquad (1)$$

where $f_{t,j}$ is the indicator variable for the $j$-th word in the target vocabulary (of size $K$) at time $t$, and only a single indicator variable is on ($= 1$) at each time step. $c$ is the context vector, the representation of the input sentence as encoded by the encoder.

Although the model in (Cho et al., 2014b) was originally trained on phrase pairs, it is straightforward to train the same model with a bilingual, parallel corpus consisting of sentence pairs, as has been done in (Sutskever et al., 2014). In the remainder of this paper, we use the RNNenc trained on English–French sentence pairs (Cho et al., 2014a).

3 Automatic Segmentation and Translation

One hypothesis explaining the difficulty encountered by the RNNenc model when translating long sentences is that a plain, fixed-length vector lacks the capacity to encode a long sentence. When encoding a long input sentence, the encoder may lose track of all the subtleties in the sentence. Consequently, the decoder has a difficult time recovering the correct translation from the encoded representation. One solution would be to build a larger model with a larger representation vector to increase the capacity of the model, at the price of a higher computational cost.
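For reference, the gated hidden unit and the output distribution of Eq. (1) from Sec. 2 can be sketched in a few lines of pure Python. This is a minimal illustration, not the authors' implementation: it assumes $f = \tanh$ (the text leaves $f$ unspecified), omits bias terms just as the equations do, represents matrices as lists of rows, and uses hypothetical names such as `gru_step` and `word_distribution`.

```python
import math


def matvec(M, v):
    """Multiply a matrix (a list of rows) by a vector (a list)."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]


def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))


def gru_step(x, h_prev, W, U, Wz, Uz, Wr, Ur, f=math.tanh):
    """One update of the gated hidden units (no bias terms):
    z_j  = sigma([Wz x]_j + [Uz h_prev]_j)   update gate
    r_j  = sigma([Wr x]_j + [Ur h_prev]_j)   reset gate
    h~_j = f([W x]_j + r_j [U h_prev]_j)     candidate activation
    h_j  = z_j h_prev_j + (1 - z_j) h~_j     new activation
    """
    z = [sigmoid(a + b) for a, b in zip(matvec(Wz, x), matvec(Uz, h_prev))]
    r = [sigmoid(a + b) for a, b in zip(matvec(Wr, x), matvec(Ur, h_prev))]
    h_tilde = [f(wx + rj * uh)
               for wx, rj, uh in zip(matvec(W, x), r, matvec(U, h_prev))]
    return [zj * hp + (1.0 - zj) * ht for zj, hp, ht in zip(z, h_prev, h_tilde)]


def word_distribution(h, W_out):
    """Eq. (1): softmax over the K target words; row j of W_out plays w_j."""
    scores = matvec(W_out, h)
    m = max(scores)                      # shift for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Toy example: 2-dim input, 2 hidden units, K = 3 target words.
I2 = [[1.0, 0.0], [0.0, 1.0]]
h = gru_step([0.5, -0.5], [0.0, 0.0], I2, I2, I2, I2, I2, I2)
p = word_distribution(h, [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
print(h, p)
```

With identity weight matrices and a zero previous state, each unit reduces to $h_j = (1 - \sigma(x_j)) \tanh(x_j)$, which makes the toy example easy to check by hand.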
In this section, however, we propose to segment an input sentence such that each segmented clause can be easily translated by the RNN Encoder–Decoder. In other words, we wish to find a segmentation that maximizes the total confidence score, which is the sum of the confidence scores of the phrases in the segmentation. Once the confidence score is defined, the problem of finding the best segmentation can be formulated as an integer programming problem.

Let $e = (e_1, \dots, e_n)$ be a source sentence composed of words $e_k$. We denote a phrase, which is a subsequence of $e$, by $e_{ij} = (e_i, \dots, e_j)$.

We use the RNN Encoder–Decoder to measure how confidently we can translate a subsequence $e_{ij}$ by considering the log-probability $\log p(f_k \mid e_{ij})$ of a candidate translation $f_k$ generated by the model. In addition to this log-probability, we also use the log-probability $\log q(e_{ij} \mid f_k)$ from a reverse RNN Encoder–Decoder (translating from the target language to the source language). With these two probabilities, we define the confidence score of a phrase pair $(e_{ij}, f_k)$ as:

$$c(e_{ij}, f_k) = \frac{\log p(f_k \mid e_{ij}) + \log q(e_{ij} \mid f_k)}{2\,\lvert \log(j - i + 1) \rvert}, \qquad (2)$$

where the denominator penalizes short segments, whose probability is known to be overestimated by an RNN (Graves, 2013): because the numerator is a negative log-probability, a smaller denominator yields a lower score. The confidence score of a source phrase alone is then defined as

$$c_{ij} = \max_k c(e_{ij}, f_k). \qquad (3)$$

We use an approximate beam search to search for the candidate translations $f_k$ of $e_{ij}$ that maximize the log-likelihood $\log p(f_k \mid e_{ij})$ (Graves et al., 2013; Boulanger-Lewandowski et al., 2013).

Let $x_{ij}$ be an indicator variable equal to 1 if we include a phrase $e_{ij}$ in the segmentation, and 0 otherwise.
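Eqs. (2) and (3) translate directly into code. In this sketch the beam-search candidates for a phrase are assumed to be given as pairs of log-probabilities from the forward model $p$ and the reverse model $q$; the function names are hypothetical. The text does not say how a one-word phrase is scored, where $\log(j - i + 1) = 0$ makes the denominator vanish; returning $-\infty$ (the limit for a negative numerator) is our assumption, flagged in the code.

```python
import math


def pair_confidence(log_p_fwd, log_q_bwd, i, j):
    """Eq. (2): c(e_ij, f_k) for one candidate f_k of phrase e_ij
    (i, j are 1-based and inclusive)."""
    denom = 2.0 * abs(math.log(j - i + 1))
    if denom == 0.0:
        # ASSUMPTION: Eq. (2) is undefined for a one-word phrase (log 1 = 0);
        # we return -inf, the limit for a negative numerator.
        return -math.inf
    return (log_p_fwd + log_q_bwd) / denom


def phrase_confidence(candidates, i, j):
    """Eq. (3): c_ij = max_k c(e_ij, f_k).
    `candidates` holds (log p(f_k | e_ij), log q(e_ij | f_k)) pairs, e.g.
    one pair per hypothesis returned by beam search (hypothetical input)."""
    return max(pair_confidence(lp, lq, i, j) for lp, lq in candidates)


# A four-word phrase e_25 with two made-up candidate translations.
c_25 = phrase_confidence([(-6.0, -4.0), (-5.0, -7.0)], i=2, j=5)
print(round(c_25, 2))  # -10 / (2 log 4)
```

For this four-word phrase the first candidate wins, giving $c_{25} = -10 / (2 \log 4) \approx -3.61$.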
We can rewrite the segmentation problem as the optimization of the following objective function:

$$\max_{x} \sum_{i \le j} c_{ij}\, x_{ij} = x \cdot c \quad \text{subject to} \quad \forall k,\; n_k = 1, \qquad (4)$$

where $n_k = \sum_{i,j} x_{ij}\, \mathbb{1}_{i \le k \le j}$ is the number of source phrases chosen in the segmentation that contain word $e_k$.

The constraint in Eq. (4) states that, for each word $e_k$ in the sentence, one and only one of the source phrases that contain this word, $(e_{ij})_{i \le k \le j}$, is included in the segmentation. The constraint matrix is totally unimodular, making this integer programming problem solvable in polynomial time.

Let $S^k_j$ be the first index of the $k$-th segment, counting from the last phrase, of the optimal segmentation of the subsequence $e_{1j}$ ($S_j := S^1_j$), and let $s_j$ be the corresponding score of this segmentation ($s_0 := 0$). Then, the following relations hold:

$$s_j = \max_{1 \le i \le j} \left( c_{ij} + s_{i-1} \right), \quad \forall j \ge 1 \qquad (5)$$
$$S_j = \arg\max_{1 \le i \le j} \left( c_{ij} + s_{i-1} \right), \quad \forall j \ge 1 \qquad (6)$$

With Eq. (5) we can evaluate $s_j$ incrementally. With the evaluated $s_j$'s, we can compute $S_j$ as well (Eq. (6)). By the definition of $S^k_j$ (equivalently, $S^{k+1}_n = S_{S^k_n - 1}$), we find the optimal segmentation by decomposing $e_{1n}$ into $e_{S^k_n,\, S^{k-1}_n - 1}, \dots, e_{S^2_n,\, S^1_n - 1}, e_{S^1_n,\, n}$, where $k$ is the index for which $S^k_n = 1$, i.e., the segment that starts at the first word. The approach described above requires quadratic time with respect to sentence length.

3.1 Issues and Discussion

The proposed segmentation approach does not avoid the problem of reordering clauses. Unless the source and target languages follow roughly the same order, as in English-to-French translation, a simple concatenation of translated clauses will not necessarily be grammatically correct.

Despite the lack of long-distance reordering¹ in the current approach, we nonetheless find significant gains in the translation performance of neural machine translation. A mechanism to reorder the obtained clause translations is, however, an important future research question.
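The recursion of Eqs. (5) and (6) in Sec. 3, followed by the backtracking step $S^{k+1}_n = S_{S^k_n - 1}$, can be sketched as below. The scores $c_{ij}$ are assumed to be precomputed (for example with Eq. (3)) and stored in a dictionary keyed by 1-based $(i, j)$; ties are broken toward the smallest start index $i$, an arbitrary choice the text does not specify. The function name is hypothetical.

```python
import math


def best_segmentation(c, n):
    """Exact search for the segmentation maximizing the sum of c_ij.

    c: dict mapping 1-based inclusive spans (i, j) to the score c_ij.
    Returns (s_n, segments), segments being (i, j) spans in sentence order.
    """
    s = [0.0] * (n + 1)   # s[j]: best score of a segmentation of e_1j; s[0] = 0
    S = [0] * (n + 1)     # S[j]: start of the last segment in that segmentation
    for j in range(1, n + 1):
        # Eq. (6): argmax over start positions (max() keeps the first, i.e.
        # smallest, maximizing i); Eq. (5): the corresponding maximum.
        S[j] = max(range(1, j + 1), key=lambda i: c[(i, j)] + s[i - 1])
        s[j] = c[(S[j], j)] + s[S[j] - 1]
    # Backtrack from the last word: each segment ends just before the
    # start of the segment that follows it.
    segments, end = [], n
    while end > 0:
        segments.append((S[end], end))
        end = S[end] - 1
    segments.reverse()
    return s[n], segments


# Toy 4-word sentence: one-word phrases are ruled out with -inf.
n = 4
c = {(i, j): -10.0 for i in range(1, n + 1) for j in range(i, n + 1)}
for k in range(1, n + 1):
    c[(k, k)] = -math.inf
c[(1, 2)] = c[(3, 4)] = -1.0
c[(1, 4)] = -3.0
print(best_segmentation(c, n))
```

Here the two two-word phrases (total score $-2$) beat the single four-word phrase ($-3$). The two nested loops make the search quadratic in sentence length, as stated above; in practice, the expensive part is computing the scores $c_{ij}$ themselves.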
Another issue at the heart of any purely neural machine translation system is the limited model vocabulary size for both source and target languages. As shown in (Cho et al., 2014a), translation quality drops considerably with just a few unknown words present in the input sentence. Interestingly enough, the proposed segmentation approach appears to be more robust to the presence of unknown words (see Sec. 5). One intuition is that the segmentation leads to multiple short clauses with fewer unknown words, which leads to a more stable translation of each clause by the neural translation model.

Finally, the proposed approach is computationally expensive, as it requires scoring all the sub-phrases of an input sentence ($n(n+1)/2$ of them for an $n$-word sentence). However, the scoring process can be easily sped up by scoring phrases in parallel, since each phrase can be scored independently.

¹ Note that, inside each clause, the words are reordered automatically when the clause is translated by the RNN Encoder–Decoder.

4 Experiment Settings

4.1 Dataset

We evaluate the proposed approach on the task of English-to-French translation. We use a bilingual, parallel corpus of 348M words selected by the method of (Axelrod et al., 2011) from a combination of Europarl (61M), news commentary (5.5M), UN (421M) and two crawled corpora of 90M and 780M words, respectively.² The performance of our models was tested on news-test2012, news-test2013, and news-test2014. When comparing with the phrase-based SMT system Moses (Koehn et al., 2007), the first two were used as a development set for tuning Moses, while news-test2014 was used as our test set.

To train the neural network models, we use only the sentence pairs in the parallel corpus where both the English and French sentences are at most 30 words long. Furthermore, we limit our vocabulary size to the 30,000 most frequent words for both English and French. All other words are considered unknown and mapped to a special token ([UNK]).
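The data preparation described in this subsection can be sketched as follows, assuming sentences are already tokenized into lists of words. The text does not state whether word frequencies are counted before or after the 30-word length filter, so the sketch leaves that choice to the caller; the function names are hypothetical.

```python
from collections import Counter

UNK = "[UNK]"


def filter_pairs(pairs, max_len=30):
    """Keep only pairs whose source and target both have at most max_len words."""
    return [(src, tgt) for src, tgt in pairs
            if len(src) <= max_len and len(tgt) <= max_len]


def build_vocab(sentences, size=30000):
    """The `size` most frequent words (ties: first occurrence wins)."""
    counts = Counter(word for sentence in sentences for word in sentence)
    return {word for word, _ in counts.most_common(size)}


def map_unknown(sentence, vocab):
    """Replace every out-of-vocabulary word by the special token."""
    return [word if word in vocab else UNK for word in sentence]


# Usage on a toy corpus of tokenized (English, French) pairs.
pairs = [("the cat sat on the mat".split(),
          "le chat était assis sur le tapis".split()),
         (["word"] * 31, ["mot"])]          # too long: dropped by the filter
kept = filter_pairs(pairs)
en_vocab = build_vocab((src for src, _ in kept), size=1)
print([map_unknown(src, en_vocab) for src, _ in kept])
```

In the real setting, `size` would stay at its default of 30,000 and a separate vocabulary would be built for French.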
In both neural network training and automatic segmentation, we do not incorporate any domain-specific knowledge, except when tokenizing the original text data.

² The datasets and trained Moses models can be downloaded from http://www-lium.univ-lemans.fr/~schwenk/cslm_joint_paper/ and the website of the ACL 2014 Ninth Workshop on Statistical Machine Translation (WMT 14).

4.2 Models and Approaches

We compare the proposed segmentation-based translation scheme against translations produced by the same neural network model without segmentation. The neural machine translation is done by an RNN Encoder–Decoder (RNNenc) (Cho et al., 2014b) trained to maximize the conditional probability of a French translation given an English sentence. Once the RNNenc is trained, an approximate beam search is used to find possible translations with high likelihood.³

This RNNenc is used for the proposed segmentation-based approach together with another RNNenc trained to translate from French to English. The two RNNencs are used in the proposed segmentation algorithm to compute the confidence score of each phrase (see Eqs. (2)–(3)).

We also compare with the translations of a conventional phrase-based machine translation system, which we expect to be more robust when translating long sentences.

³ In all experiments, the beam width is 10.

5 Results and Analysis

5.1 Validity of the Automatic Segmentation

We validate the proposed segmentation algorithm described in Sec. 3 by comparing it against two baseline segmentation approaches. The first one randomly segments an input sentence such that the distribution of the lengths of the random segments has the same mean and variance as that of the segments produced by our algorithm. The second approach follows the proposed algorithm, but uses a uniform random confidence score.
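The first baseline needs random segments whose lengths match a target mean and variance. The text does not say how these lengths were drawn; the sketch below assumes rounded Gaussian draws, clipped to at least one word, with the final segment truncated at the sentence end, so it reproduces the target statistics only approximately. The function name and parameters are hypothetical.

```python
import random


def random_segmentation(n, mean_len, std_len, rng):
    """Split words 1..n into contiguous 1-based (start, end) spans whose
    lengths are drawn from a Gaussian (ASSUMPTION: the text does not specify
    the sampling scheme), rounded and clipped to at least one word. The last
    span is cut at the sentence end, so the target mean and variance are only
    matched approximately."""
    segments, start = [], 1
    while start <= n:
        length = max(1, round(rng.gauss(mean_len, std_len)))
        end = min(n, start + length - 1)
        segments.append((start, end))
        start = end + 1
    return segments


# Hypothetical statistics; in the experiment they would be the mean and
# standard deviation of the segment lengths produced by the proposed method.
rng = random.Random(0)
print(random_segmentation(25, mean_len=7.0, std_len=3.0, rng=rng))
```

The second baseline needs no new machinery: draw each $c_{ij}$ uniformly at random and run the same dynamic programme of Eqs. (5)–(6).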
| Model | Test set |
|---|---|
| No segmentation | 13.15 |
| Random segmentation | 16.60 |
| Random confidence score | 16.76 |
| Proposed segmentation | 20.86 |

Table 1: BLEU score computed on news-test2014 for two control experiments. Random segmentation refers to randomly segmenting a sentence so that the mean and variance of the segment lengths correspond to those of our best segmentation method. Random confidence score refers to segmenting a sentence with a randomly generated confidence score for each segment.

From Table 1 we can clearly see that the proposed segmentation algorithm results in substantially better performance (20.86 BLEU, against 16.60 and 16.76 for the two random baselines). One interesting phenomenon is that both random segmentations were better than the direct translation without any segmentation (13.15 BLEU). This indirectly agrees well with the previous finding in (Cho et al., 2014a) that neural machine translation suffers from long sentences.

Figure 2: BLEU score loss vs. maximum number of unknown words in source and target sentence when translating with the RNNenc model with and without segmentation. [Plot: BLEU score decrease (0 to -9) against the maximum number of unknown words (0 to 10), with curves “With segm.” and “Without segm.”]

5.2 Importance of Using an Inverse Model

The proposed confidence score averages the scores of a translation model $p(f \mid e)$ and an inverse translation model $p(e \mid f)$ and penalizes short phrases. However, it is possible to use alternative definitions of the confidence score. For instance, one may use only the ‘direct’ translation model, or varying penalties for phrase lengths.
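The confidence scores compared in this section differ only in which terms enter the phrase score. The sketch below assumes one candidate translation per phrase, uses our own variant labels, and averages the two log-probabilities in the unpenalized case (summing instead would only rescale every score by 2 and leave the optimal segmentation unchanged). As in Eq. (2), the penalized score of a one-word phrase is undefined; $-\infty$ is our assumption.

```python
import math


def variant_score(variant, log_p_fwd, log_q_bwd, length):
    """Phrase score under three confidence-score variants (labels ours):
    'fwd'        -> log p(f|e) only
    'fwd+bwd'    -> mean of log p(f|e) and log q(e|f), no length penalty
    'fwd+bwd(p)' -> that mean divided by |log(length)|, i.e. Eq. (2)
    """
    if variant == "fwd":
        return log_p_fwd
    mean = (log_p_fwd + log_q_bwd) / 2.0
    if variant == "fwd+bwd":
        return mean
    if variant == "fwd+bwd(p)":
        penalty = abs(math.log(length))
        # ASSUMPTION: undefined for one-word phrases; use -inf.
        return -math.inf if penalty == 0.0 else mean / penalty
    raise ValueError(f"unknown variant: {variant!r}")


# Made-up scores: cover 8 words with four 2-word phrases or one 8-word phrase.
for v in ("fwd+bwd", "fwd+bwd(p)"):
    four_short = 4 * variant_score(v, -2.0, -2.0, 2)
    one_long = variant_score(v, -8.0, -8.0, 8)
    print(v, round(four_short, 2), round(one_long, 2))
```

With these made-up log-probabilities, the unpenalized score cannot distinguish the two segmentations (both total $-8$), whereas the penalty favours the single long phrase, about $-3.85$ against $-11.54$.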
In this section, we test three different confidence scores:

- $p(f \mid e)$: using a single translation model;
- $p(f \mid e) + p(e \mid f)$: using both direct and reverse translation models without the short-phrase penalty;
- $p(f \mid e) + p(e \mid f)$ (p): using both direct and reverse translation models together with the short-phrase penalty.

The results in Table 2 clearly show the importance of using both the translation and the inverse translation models. Furthermore, we obtained the best performance by incorporating the short-phrase penalty (the denominator in Eq. (2)). From here on, we therefore only use the original formulation of the confidence score, which uses both models and the penalty.

| Sentences | Model | Dev | Test |
|---|---|---|---|
| All | RNNenc | 13.15 | 13.92 |
| All | $p(f \mid e)$ | 12.49 | 13.57 |
| All | $p(f \mid e) + p(e \mid f)$ | 18.82 | 20.10 |
| All | $p(f \mid e) + p(e \mid f)$ (p) | 19.39 | 20.86 |
| All | Moses | 30.64 | 33.30 |
| No UNK | RNNenc | 21.01 | 23.45 |
| No UNK | $p(f \mid e)$ | 20.94 | 22.62 |
| No UNK | $p(f \mid e) + p(e \mid f)$ | 23.05 | 24.63 |
| No UNK | $p(f \mid e) + p(e \mid f)$ (p) | 23.93 | 26.46 |
| No UNK | Moses | 32.77 | 35.63 |

Table 2: BLEU scores computed on the development and test sets. See the text for the description of each approach. Moses refers to the scores of the conventional phrase-based translation system. The top five rows consider all sentences of each data set, whilst the bottom five rows include only sentences with no unknown words.

5.3 Quantitative and Qualitative Analysis

As expected, translation with the proposed approach helps significantly with translating long sentences (see Fig. 1). We observe that translation performance does not drop for sentences of lengths greater than those used to train the RNNenc (≤ 30 words).

Figure 1: The BLEU scores achieved by (a) the RNNenc without segmentation, (b) the RNNenc with the penalized reverse confidence score, and (c) the phrase-based translation system Moses on newstest12-14. [Three panels, each plotting BLEU score against sentence length (0 to 80 words), with curves labelled “Source text”, “Reference text” and “Both”.]

Similarly, in Fig. 2 we observe that the translation quality of the proposed approach is more robust to the presence of unknown words. We suspect that the existence of many unknown words makes it harder for the RNNenc to extract the meaning of the sentence clearly, while this is avoided with the proposed segmentation approach, as it effectively allows the RNNenc to deal with fewer unknown words at a time.

In Table 3, we show the translations of randomly selected long sentences (40 or more words). Segmentation improves overall translation quality, agreeing well with our quantitative result. However, we can also observe a decrease in translation quality when an input sentence is not segmented into well-formed sentential clauses. Additionally, the concatenation of independently translated segments sometimes negatively impacts fluency, punctuation, and capitalization by the RNNenc model. Table 4 shows one such example.

6 Discussion and Conclusion

In this paper we propose an automatic segmentation solution to the ‘curse of sentence length’ in neural machine translation. By choosing an appropriate confidence score based on bidirectional translation models, we observed a significant improvement in translation quality for long sentences. Our investigation shows that the proposed segmentation-based translation is more robust to the presence of unknown words.
However, since each segment is translated in isolation, a segmentation of an input sentence may negatively impact translation quality, especially the fluency of the translated sentence, the placement of punctuation marks and the capitalization of words. An important research direction in the future is to investigate how to improve the quality of the translation obtained by concatenating translated segments.

Example 1
Source: Between the early 1970s , when the Boeing 747 jumbo defined modern long-haul travel , and the turn of the century , the weight of the average American 40- to 49-year-old male increased by 10 per cent , according to U.S. Health Department Data .
Segmentation: [[ Between the early 1970s , when the Boeing 747 jumbo defined modern long-haul travel ,] [ and the turn of the century , the weight of the average American 40- to 49-year-old male] [ increased by 10 per cent , according to U.S. Health Department Data .]]
Reference: Entre le début des années 1970 , lorsque le jumbo 747 de Boeing a défini le voyage long-courrier moderne , et le tournant du siècle , le poids de l’ Américain moyen de 40 à 49 ans a augmenté de 10 % , selon les données du département américain de la Santé .
With segmentation: Entre les années 70 , lorsque le Boeing Boeing a défini le transport de voyageurs modernes ; et la fin du siècle , le poids de la moyenne américaine moyenne à l’égard des hommes a augmenté de 10 % , conformément aux données fournies par le U.S. Department of Health Affairs .
Without segmentation: Entre les années 1970 , lorsque les avions de service Boeing ont dépassé le prix du travail , le taux moyen était de 40 % .

Example 2
Source: During his arrest Ditta picked up his wallet and tried to remove several credit cards but they were all seized and a hair sample was taken fom him.
Segmentation: [[During his arrest Ditta] [picked up his wallet and tried to remove several credit cards but they were all seized and] [a hair sample was taken from him.]]
Reference: Au cours de son arrestation , Ditta a ramassé son portefeuille et a tenté de retirer plusieurs cartes de crédit , mais elles ont toutes été saisies et on lui a prélevé un échantillon de cheveux .
With segmentation: Pendant son arrestation J’ ai utilisé son portefeuille et a essayé de retirer plusieurs cartes de crédit mais toutes les pièces ont été saisies et un échantillon de cheveux a été enlevé.
Without segmentation: Lors de son arrestation il a tenté de récupérer plusieurs cartes de crédit mais il a été saisi de tous les coups et des blessures.

Example 3
Source: ”We can now move forwards and focus on the future and on the 90 % of assets that make up a really good bank, and on building a great bank for our clients and the United Kingdom, ” new director general, Ross McEwan, said to the press .
Segmentation: [[”We can now move forwards and focus on the future] [and] [on the 90 % of assets that make up a really good bank, and on building] [a great bank for our clients and the United Kingdom, ”] [new director general, Ross McEwan, said to the press.]]
Reference: ”Nous pouvons maintenant aller de l’avant , nous préoccuper de l’avenir et des 90 % des actifs qui constituent une banque vraiment bonne et construire une grande banque pour la clientèle et pour le Royaume Uni”, a dit le nouveau directeur général Ross McEwan à la presse .
With segmentation: ”Nous pouvons maintenant passer à l’avenir et se concentrer sur l avenir ou sur les 90 % d actifs qui constituent une bonne banque et sur la construction une grande banque de nos clients et du Royaume-Uni” Le nouveau directeur général Ross Ross a dit que la presse.
Without segmentation: ”Nous pouvons maintenant passer et étudier les 90 % et mettre en place une banque importante pour la nouvelle banque et le directeur général” a souligné le journaliste .

Example 4
Source: There are several beautiful flashes - the creation of images has always been one of Chouinard’s strong points - like the hair that is ruffled or the black fabric that extends the lines.
Segmentation: [[There are several beautiful flashes - the creation of images has always been one of Chouinard’s strong points -] [like the hair that is ruffled or the black fabric that extends the lines.]]
Reference: Il y a quelques beaux flashs - la création d’images a toujours été une force chez Chouinard - comme ces cheveux qui s’ébouriffent ou ces tissus noirs qui allongent les lignes .
With segmentation: Il existe plusieurs belles images - la création d images a toujours été l un de ses points forts . comme les cheveux comme le vernis ou le tissu noir qui étend les lignes.
Without segmentation: Il existe plusieurs points forts : la création d images est toujours l un des points forts .

Example 5
Source: Without specifying the illness she was suffering from, the star performer of ‘Respect’ confirmed to the media on 16 October that the side effects of a treatment she was receiving were ‘difficult’ to deal with.
Segmentation: [[Without specifying the illness she was suffering from, the star performer of ‘Respect’] [confirmed to the media on 16 October that the side effects of a treatment she was receiving were] [‘difficult’ to deal with.]]
Reference: Sans préciser la maladie dont elle souffrait , la célèbre interprète de Respect avait affirmé aux médias le 16 octobre que les effets secondaires d’un traitement qu’elle recevait étaient ”difficiles”.
With segmentation: Sans préciser la maladie qu’elle souffrait la star de l’ ‘œuvre’ de ‘respect’. Il a été confirmé aux médias le 16 octobre que les effets secondaires d’un traitement ont été reçus. ”difficile” de traiter .
Without segmentation: Sans la précision de la maladie elle a eu l’impression de ”marquer le 16 avril’ les effets d’un tel ‘traitement’.

Table 3: Sample translations with the RNNenc model taken from the test set, along with the source sentences and the reference translations.

Source: He nevertheless praised the Government for responding to his request for urgent assistance which he first raised with the Prime Minister at the beginning of May .
Segmentation: [He nevertheless praised the Government for responding to his request for urgent assistance which he first raised ] [with the Prime Minister at the beginning of May . ]
Reference: Il a néanmoins félicité le gouvernement pour avoir répondu à la demande d’ aide urgente qu’il a présentée au Premier ministre début mai .
With segmentation: Il a néanmoins félicité le Gouvernement de répondre à sa demande d’ aide urgente qu’il a soulevée . avec le Premier ministre début mai .
Without segmentation: Il a néanmoins félicité le gouvernement de répondre à sa demande d’ aide urgente qu’il a adressée au Premier Ministre début mai .

Table 4: An example where an incorrect segmentation negatively impacts fluency and punctuation.

Acknowledgments

The authors would like to acknowledge the support of the following agencies for research funding and computing support: NSERC, Calcul Québec, Compute Canada, the Canada Research Chairs and CIFAR.

References

[Axelrod et al., 2011] Amittai Axelrod, Xiaodong He, and Jianfeng Gao. 2011. Domain adaptation via pseudo in-domain data selection. In Proceedings of the ACL Conference on Empirical Methods in Natural Language Processing (EMNLP), pages 355–362. Association for Computational Linguistics.

[Boulanger-Lewandowski et al., 2013] Nicolas Boulanger-Lewandowski, Yoshua Bengio, and Pascal Vincent. 2013. Audio chord recognition with recurrent neural networks. In ISMIR.

[Cho et al., 2014a] Kyunghyun Cho, Bart van Merriënboer, Dzmitry Bahdanau, and Yoshua Bengio. 2014a. On the properties of neural machine translation: Encoder–Decoder approaches. In Eighth Workshop on Syntax, Semantics and Structure in Statistical Translation, October.

[Cho et al., 2014b] Kyunghyun Cho, Bart van Merriënboer, Caglar Gulcehre, Fethi Bougares, Holger Schwenk, and Yoshua Bengio. 2014b. Learning phrase representations using RNN encoder-decoder for statistical machine translation. In Proceedings of the Empirical Methods in Natural Language Processing (EMNLP 2014), October. To appear.

[Graves et al., 2013] A. Graves, A. Mohamed, and G. Hinton. 2013. Speech recognition with deep recurrent neural networks. In ICASSP.

[Graves, 2013] A. Graves. 2013. Generating sequences with recurrent neural networks. arXiv:1308.0850 [cs.NE], August.

[Kalchbrenner and Blunsom, 2013] Nal Kalchbrenner and Phil Blunsom. 2013. Two recurrent continuous translation models. In Proceedings of the ACL Conference on Empirical Methods in Natural Language Processing (EMNLP), pages 1700–1709. Association for Computational Linguistics.

[Koehn et al., 2003] Philipp Koehn, Franz Josef Och, and Daniel Marcu. 2003. Statistical phrase-based translation. In Proceedings of the 2003 Conference of the North American Chapter of the Association for Computational Linguistics on Human Language Technology - Volume 1, NAACL ’03, pages 48–54, Stroudsburg, PA, USA. Association for Computational Linguistics.

[Koehn et al., 2007] Philipp Koehn, Hieu Hoang, Alexandra Birch, Chris Callison-Burch, Marcello Federico, Nicola Bertoldi, Brooke Cowan, Wade Shen, Christine Moran, Richard Zens, Chris Dyer, Ondrej Bojar, Alexandra Constantin, and Evan Herbst. 2007. Annual meeting of the Association for Computational Linguistics (ACL). Prague, Czech Republic. Demonstration session.

[Sutskever et al., 2014] Ilya Sutskever, Oriol Vinyals, and Quoc Le. 2014. Sequence to sequence learning with neural networks. In Advances in Neural Information Processing Systems (NIPS 2014), December.
