Generative Model Selection Using a Scalable and Size-Independent Complex Network Classifier

Real networks exhibit nontrivial topological features such as heavy-tailed degree distribution, high clustering, and small-worldness. Researchers have developed several generative models for synthesizing artificial networks that are structurally simi…

Authors: Sadegh Motallebi, Sadegh Aliakbary, Jafar Habibi

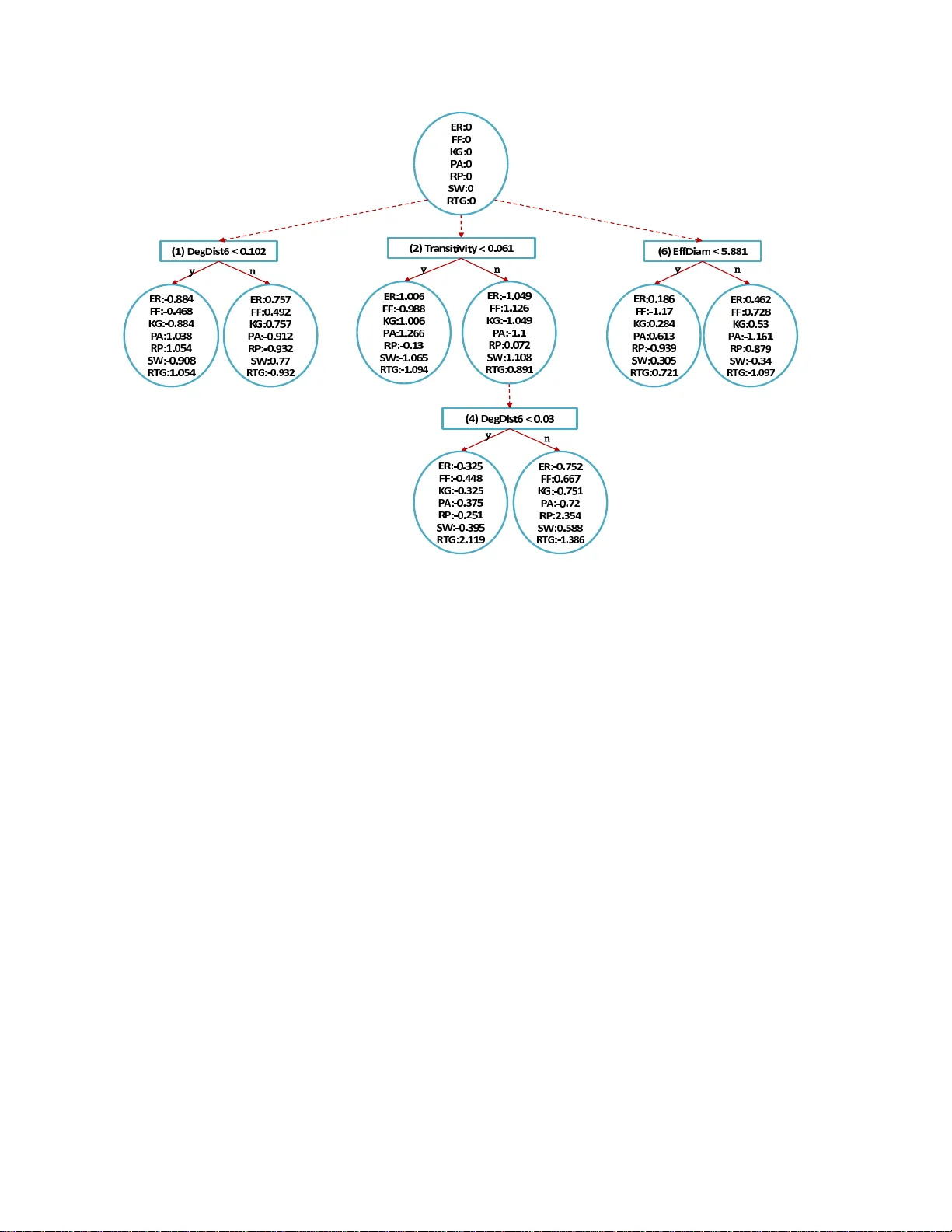

GMSCN: Generative Model Selection Using a Scalable and Size-Independent Complex Network Classifier

Sadegh Motallebi (1, a), Sadegh Aliakbary (1, b), and Jafar Habibi (1, c)

(1) Department of Computer Engineering, Sharif University of Technology, Tehran, Iran

(Dated: 4 February 2014)

Real networks exhibit nontrivial topological features such as heavy-tailed degree distribution, high clustering, and small-worldness. Researchers have developed several generative models for synthesizing artificial networks that are structurally similar to real networks. An important research problem is to identify the generative model that best fits a target network. In this paper, we investigate this problem, and our goal is to select the model that is able to generate graphs similar to a given network instance. By generating synthetic networks with seven outstanding generative models, we have utilized machine learning methods to develop a decision tree for model selection. Our proposed method, which is named "Generative Model Selection for Complex Networks" (GMSCN), outperforms existing methods with respect to accuracy, scalability and size-independence.

Keywords: Complex Networks, Generative Models, Synthetic Networks, Model Selection, Network Structural Features, Social Networks, Decision Tree Learning

A realistic network generative model can generate artificial graphs similar to real networks. But there is no best universal generative model for all situations. In any application, among the different existing generative models, we should choose the best model for that specific application. So, generative model selection is a prerequisite for creating artificial realistic networks. In this paper, we consider the problem of model selection and propose a method for finding the model that best fits a given network.
The selected generative model helps us infer the growth mechanisms of the given network. It can also generate artificial networks similar to the given network for tasks like simulation, prediction, extrapolation, and hypothesis testing. We propose utilizing a combination of different local and global network features for learning a model selection decision tree. We also devise a new network feature based on the quantification of the degree distribution. We show that our proposed method is robust, scalable, independent of the size of the given network, and more accurate than the baseline method.

a) motallebi@ce.sharif.edu
b) aliakbary@ce.sharif.edu
c) jhabibi@sharif.edu

I. INTRODUCTION

Complex networks appear in different categories such as social networks, citation networks, collaboration networks, and communication networks [1–4]. In recent years, complex networks have been studied frequently, and much evidence indicates that they show some non-trivial structural properties [1,3,5–8]. For example, power-law degree distribution, high clustering and small path lengths are some properties that distinguish complex networks from completely random graphs.

An active field of research is dedicated to the development of algorithms for generating complex networks. These algorithms, called "generative models", try to generate synthetic graphs that adhere to the structural properties of complex networks [2,3]. Realistic generative models have many applications and benefits. Once a generative model is fitted to a given real network, we can replace the real network with artificial networks in tasks such as simulation, extrapolation (by generating similar graphs with larger sizes), sampling (the reverse of extrapolation), capturing the network structure, and network comparison [9,10].

Despite the advances in the field, there is no universal generative model suitable for all network types and features. The prerequisite of network generation is the stage of generative model selection. In fact, when we generate synthetic networks, we hope to reach graphs that are structurally similar to a target network. In the model selection stage, the properties of a given network (called the target network) are analyzed and the best model suitable for generating similar networks is selected. A model selection method tries to answer this question: "Among candidate generative models, which one is the most suitable for generating complex network instances similar to the given network?" In this paper, we investigate this problem and, by means of machine learning algorithms, we propose a new model selection method based on network structural properties. The proposed method is named "Generative Model Selection for Complex Networks" (GMSCN).

The need for model selection is frequently indicated in the literature [11–13]. More specifically, some works [11,13,14] are based on counting subgraphs of small sizes (called graphlets or motifs [4,11,13–19]), some others concentrate on structural features of complex networks [12], and some are based on manually selecting a model through watching a small set of network features [20]. We will show that by using an appropriate combination of local and global network features, we can develop a more accurate model selection method. In our proposed method (GMSCN), we consider seven prominent generative models by which we have generated datasets of network instances. The datasets are used as training data for learning a decision tree for model selection. Our method also consists of a special technique for quantification of degree distribution.
In comparison to existing methods [11–13], we have considered wider, newer and more significant generative models. Due to a better selection of network features, GMSCN is also more efficient and more scalable than similar methods [11,13].

The rest of this paper is organized as follows. Section II reviews the related work. Section III presents GMSCN. Section IV is dedicated to the evaluation of GMSCN. Section V describes a case study on some real network samples. The results and evaluations of this paper are discussed in Section VI. Finally, Section VII concludes the paper.

II. RELATED WORK

A. Network Generation Models

In this subsection, we briefly introduce the leading methods of network generation:

• Kronecker Graphs Model (KG) [9]. This model generates realistic synthetic networks by applying a matrix operation (the Kronecker product) on a small initiator matrix. This model is mathematically tractable and supports many network features such as small path lengths, heavy-tailed degree distribution, heavy tails for eigenvalues and eigenvectors, densification and shrinking diameters over time.
• Forest Fire Model (FF) [21]. In this model, edges are added in a process similar to a fire-spreading process. This model is inspired by the Copying model [22] and Community Guided Attachment [21] but supports the shrinking diameter property.
• Random Typing Generator Model (RTG) [10]. RTG uses a process of "random typing" for generating node identifiers. This model mimics real-world graphs and conforms to eleven important patterns (such as power-law degree distribution, densification power law, and small and shrinking diameter) observed in real networks [10].
• Preferential Attachment Model (PA) [23]. The classical preferential attachment model generates scale-free networks with power-law degree distribution.
In this model, the nodes are added to the network incrementally and the probability of the attachments depends on the degree of existing nodes.
• Small World Model (SW) [24]. This is another classical network generation model that synthesizes networks with small path lengths and high clustering. It starts with a regular lattice and then rewires some edges of the network randomly.
• Erdös-Rényi Model (ER) [25]. This model generates a completely random graph. The number of nodes and edges are configurable.
• Random Power Law Model (RP) [26]. The RP model generates synthetic networks by following a variation of the ER model that supports the power-law degree distribution property.

Other generative models are also available (we have not utilized them, but they are used in related model selection methods), such as Copying Model (CM) [22], Random Geometric Model (GEO) [27], Spatial Preferential Attachment (SPA) [28], Random Growing (RDG) [29], Duplication-Mutation-Complementation (DMC) [30], Duplication-Mutation using Random mutations (DMR) [29], Aging Vertex (AGV) [31], Ring Lattice (RL) [32], Core-periphery (CP) [33], and Cellular model (CL) [34].

B. Model Selection Methods

The aim of this paper and of the model selection methods is to find the best generative model that fits a given network instance. Some model selection methods are based on graphlet counting [11,13,14]. Graphlets are subgraphs of bounded sizes (e.g., all possible subgraphs with three or four nodes) and the frequency of graphlets in a network is considered as a way of capturing the network structure [11]. In some works, directed graphs and graphlets are considered [14,15], and some others consider the network as simple (undirected) graphs [11,14].

Janssen et al. [11] have tested both graphlet features and structural features (degree distribution, assortativity and average path length) in the model selection problem. They conclude that counting graphlets of three and four nodes is sufficient for capturing the structure of the network, i.e., appending structural features to the feature vector of graphlet counts does not improve the accuracy of the model selector. In this paper, we critique this claim and show that using a better set of local (such as transitivity) and global (such as effective diameter [21,35]) network structural features, along with an appropriate degree distribution quantification algorithm, actually improves the accuracy of the model selection. In fact, graphlet counts are limited local features and are not able to reflect the structural properties of a network instance. Janssen et al. [11] implemented six generative models and generated a dataset of synthetic networks as the training data for decision tree learning [36]. In this method, candidate generative models are: PA [23], CM [22], GEO [27] (GEO2D and GEO3D) and SPA [28] (SPA2D and SPA3D).

A similar method is proposed by Middendorf et al. [13]. In this method, the feature vectors are the counts of graphlets with small sizes. Seven different generative models are considered by which network instances are generated as the training data. Candidate generative models are: ER [25], PA [23], SW [24], RDG [29], DMC [30], DMR [29] and AGV [31]. The authors have used a generalized decision tree called alternating decision tree (ADT) as the learning algorithm.

Airoldi et al. [12] propose to form feature vectors according to structural network properties. They have considered some classical generative models and generated a dataset by which a naïve Bayes classifier is learned. Candidate generative models are: PA [23], ER [25], RL [32], CP [33] and CL [34].
This method is dependent on the size and average connectivity of the target network, and this dependency is one of its limitations.

Patro et al. [37] propose a framework for implementing network generation models. The user of this framework can specify the important network features and the weight of each feature. In other words, we consider each generative model as a class of networks. Rather than a specific method, this model is a relatively open framework, and the user should determine different parameters of the framework according to the target application.

III. THE PROPOSED METHOD

GMSCN is based on learning a classifier for model selection. The goal of a classifier is to accurately predict the target class for a given network instance and, in our method, generative models play the role of network classes. In GMSCN, the classifier suggests the best model that generates networks similar to a given network. The inputs of the classifier are the structural properties of the target network and the output is the selected model among the candidate network generation models.

A. Methodology

Fig. 1 shows the high-level methodology of GMSCN. The methodology is configurable by several parameters and decision points, such as the set of considered network features, the chosen supervised learning algorithm and the candidate generative models. The steps of constructing the network classifier, as illustrated in Fig. 1, are described in the following:

1. Many artificial network instances are synthesized using the candidate network generative models. These network instances will form the dataset (training and test data) for learning a network classifier. In this step, the parameters of the generative models are tuned in order to synthesize networks with densities similar to the density of the given target network.
2. After generating the network instances, the structural features (e.g., the degree distribution and the clustering coefficient) of each network instance are extracted. The result is a dataset of labeled structural features in which each record consists of topological features of a synthesized network along with the label of its generative model.
3. The labeled dataset forms the training and test data for the supervised learning algorithm. The learning algorithm will return a network classifier which is able to predict the class (the best generative model) of the given network instance.
4. The structural features of the target network are also extracted. The same "Feature Extraction" block which is used in the second step is applied here. The structural features of the target network are used as input for the learned classifier.
5. The learned network classifier is a customized "model selector" for finding the model that fits the target network. It gets the structural features of the target network as input and returns the most compatible generative model.

In this methodology, the density of the target network is considered as an important property of the target network. Network density is defined as the ratio of the existing edges to potential edges and is regarded as an indicator of the sparseness of the graph. In the proposed methodology, generative models are configured to synthesize networks with densities similar to the density of the target network. This decision is due to the fact that it is hard to compare networks of completely different densities for predicting their growth mechanism and generation process. On the other hand, even with similar network densities, various generative models create different network structures. So, we try to keep the density of the generated networks similar to the density of the target network.
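
The density definition above (existing edges divided by potential edges) can be made concrete with a short sketch. It assumes a simple undirected graph, so the number of potential edges is n(n − 1)/2; the text does not state which convention is used, and the function name `graph_density` is purely illustrative.

```python
def graph_density(num_nodes, edges):
    """Return existing edges / potential edges for a simple undirected graph.

    Assumption (not stated in the paper): the graph is undirected with no
    self-loops or parallel edges, so there are n * (n - 1) / 2 potential edges.
    """
    if num_nodes < 2:
        raise ValueError("density needs at least two nodes")
    # Normalise each edge so (u, v) and (v, u) count once; drop self-loops.
    unique_edges = {(min(u, v), max(u, v)) for u, v in edges if u != v}
    potential_edges = num_nodes * (num_nodes - 1) // 2
    return len(unique_edges) / potential_edges


# A 4-node path has 3 of the 6 possible edges.
path_edges = [(0, 1), (1, 2), (2, 3)]
print(graph_density(4, path_edges))  # prints 0.5
```

In the methodology, a value like this would be computed once for the target network and then used to tune each generator so that its synthetic networks land close to the same density.
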

Keeping the densities similar lets the network classifier learn the differences among the structures of various generative models at similar network densities. It is also worth noting that some of the existing generative models cannot generate networks with exactly equal densities. This is because some generative models (such as Kronecker graphs and RTG) are not configurable for finely tuning the exact density of synthesized networks. So, we generate the networks of the training data with densities similar, and not exactly equal, to the density of the given network.

FIG. 1. The methodology of learning a network classifier

Our proposed methodology, unlike existing methods [11–13], is not dependent on the size (number of nodes) of the target network. Size-independence is an important feature of our method. It enables the classifier to learn from a dataset of generated networks with sizes different from (perhaps smaller than) the size of the target network, but with a similar density. This facility decreases the time of network generation and feature extraction considerably. We will demonstrate the size-independence property of GMSCN in the evaluation section.

GMSCN is actually a realization of the described methodology. In the following subsections, we further illustrate the details of GMSCN by specifying the open parameters and decision points of the methodology.

B. Network Features

The process of model selection, as described in Fig. 1, utilizes structural network features in the second and fourth steps. There are plenty of different network features, so we clarify the considered features in GMSCN here.

To capture the properties of a network, we should analyse a wide and diverse feature set of network connectivity patterns. We propose the utilization of a combination of local and global network structural features.
The utilization of a limited set of local features (graphlet counts) in similar methods [11,13] has resulted in a lower precision for the model selector. As explained later, we have utilized ten network features from four feature categories. While trying to find the best and minimal set of network features, we considered features that are not only effective on the classification accuracy, but also efficiently computable and size-independent. One may consider a longer list of network features, even from different feature categories (e.g., eigenvalues). In such an approach, automatic methods for feature selection such as the methodology explained in Ref. 38 may be helpful. But supporting the specified diverse criteria (effectiveness, efficiency and size-independence) for selected features is quite difficult in such automatic methodologies. The utilized features and measurements in GMSCN are:

• Transitivity of relationships. In this category of network features, we consider two measurements: "average clustering coefficient" [1,24] and "transitivity" [39].
• Degree correlation. The measure of assortativity [1] is selected from this category of network features.
• Path lengths. There are different global features about the path lengths in a network, such as diameter [40], effective diameter [21,35] and average path length [3]. We selected the "effective diameter" measurement since it is more robust [9] and also because of its lower computation cost and lower sensitivity to small network changes [41]. Effective diameter indicates the minimum number of edges in which 90 percent of all connected pairs can reach each other [9,35,42]. Effective diameter is well defined for both connected and disconnected networks [35].
• Degree distribution. It is a common approach to fit a power law on the degree distribution and extract the power-law exponent as a representative quantity for the degree distribution. But a single number (the power-law exponent) is too limited for representing the whole degree distribution. On the other hand, some real networks do not conform to the power-law degree distribution [43–45]. We propose an alternative method for quantification of the degree distribution by computing its probability percentiles. The percentiles are calculated from some defined regions of the degree distribution according to its mean and standard deviation. We devise K intervals in the degree distribution and then calculate the probability of degrees in each interval. K is always an even number greater than or equal to four. The size of all intervals, except the first and the last one, is equal to pσ, where σ is the standard deviation of the distribution and p is a tunable parameter. In any application, we can configure the values of K and p in a manner that the percentile values become more distinctive. In our experiments we let K = 6 and p = 0.3, so we extract six quantities (the DegDistP1..DegDistP6 percentiles) from any degree distribution. If we increase the value of K, we should normally decrease the value of p (and hence the interval width pσ) so that most of the interval points stay in the range of existing node degrees. Smaller values for p also necessitate larger values for K. Large values (e.g., K = 100) and small values (e.g., K = 1) for K will also decrease the distinction power of the extracted feature vector. The specified values for p and K are found through trial and error. Equation 1 shows the interval points of the degree distribution and Equation 2 specifies the probability for a node degree to sit in the i-th interval.
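
A minimal sketch of this quantification, following the interval definition of Equations 1 and 2, is given here. Several details are assumptions rather than statements from the paper: the function name `degree_percentiles` is hypothetical, σ is taken as the population standard deviation, and a degree that falls exactly on an interval point is assigned to the upper interval (Equation 2 uses strict inequalities, which would leave such degrees unassigned).

```python
from bisect import bisect_right
from statistics import mean, pstdev


def degree_percentiles(degrees, k=6, p=0.3):
    """Return [DegDistP1, ..., DegDistPK] for a list of node degrees."""
    if k < 4 or k % 2 != 0:
        raise ValueError("K must be an even number >= 4")
    if not degrees:
        raise ValueError("degree list is empty")

    mu = mean(degrees)
    sigma = pstdev(degrees)  # assumption: population standard deviation

    # Interior interval points IP_2..IP_K = mu - (K/2 - i + 1) * p * sigma.
    # IP_1 = min(D) and IP_{K+1} = max(D) are implicit: every degree lies
    # between them, so only the interior points are needed for binning.
    interior = [mu - (k / 2 - i + 1) * p * sigma for i in range(2, k + 1)]

    counts = [0] * k
    for d in degrees:
        # Assumption: a degree equal to an interval point goes to the
        # upper interval, so the K probabilities always sum to one.
        counts[bisect_right(interior, d)] += 1

    return [c / len(degrees) for c in counts]


# Toy degree sequence; with K = 6 the result has six entries.
features = degree_percentiles([1, 2, 3, 4, 5])
print(features)
```

With K = 6 the interior points are µ − 2pσ, µ − pσ, µ, µ + pσ and µ + 2pσ, so the middle four intervals each have width pσ, as the text requires.
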

The set of six percentiles (DegDistP1..DegDistP6) are used as the network features representing the degree distribution. Let $IP_i$ be the i-th interval point and $D$ be the degree random variable, with mean $\mu$ and standard deviation $\sigma$.

$$
IP_i =
\begin{cases}
\min(D) & i = 1 \\
\mu - \left(\frac{K}{2} - i + 1\right) p\sigma & i = 2, \ldots, K \\
\max(D) & i = K + 1
\end{cases}
\tag{1}
$$

$$
DegDistP_i = P(IP_i < D < IP_{i+1}), \quad i = 1, \ldots, K
\tag{2}
$$

C. Learning the Classifier

The third step of the proposed methodology is the utilization of a supervised machine learning algorithm. The learning algorithm constructs the network classifier based on the features of generated network instances as the training data. Each record of the training data consists of the structural features (as described in the previous subsection) of a generated network along with the label of its generative model. By means of supervised algorithms, we can learn from this training data a classifier which predicts the best generative model for a given network with the specified structural features.

We examined several supervised learning algorithms such as decision tree learning [36,46], Bayesian networks [47], support vector machines [48] (SVM) and neural networks [49], among which the LADTree method showed better results. A short description of the examined learning algorithms is presented in Appendix A. In our experiments, although some methods (such as Bayesian networks) resulted in a small improvement in the accuracy of the learned classifier, the decision tree learned by the LADTree algorithm was obviously more robust and less sensitive to noise than other learning methods. The robustness-to-noise analysis is described in the evaluation section. To avoid over-fitting, we always used stratified 10-fold cross-validation.

D. Network Models

Among several existing network generative models, we have selected seven important models: Kronecker Graphs Model [9], Forest Fire Model [21], Random Typing Generator Model [10], Preferential Attachment Model [23], Small World Model [24], Erdös-Rényi Model [25] and Random Power Law Model [26]. The selected models are the state-of-the-art methods of network generation. The existing model selection methods such as Ref. 11 and Ref. 13 have ignored some new and important generative models such as the Kronecker Graphs [9], Forest Fire [21] and RTG [10] models.

IV. EVALUATION

In this section, we evaluate our proposed method of model selection (GMSCN). We also compare GMSCN with the baseline method [11] and show that it outperforms state-of-the-art methods with respect to different criteria.

Unlike most of the existing methods, GMSCN has no dependency on the size of the given network. In other words, we ignore the number of nodes of the target network and only consider its density in generating the training data. Because the baseline method is dependent on the size of the target network, we evaluate the methods in two stages. In the first stage, we fix the size of the generated networks to prepare a fair condition for comparing GMSCN with the baseline method. Although size-dependence is a drawback for the baseline method, the evaluation shows that GMSCN outperforms the baseline method even in the fixed network size condition. In the second stage, we allow the generation models to synthesize networks of different sizes. In this stage, we show that the size diversity of generated networks does not affect the accuracy of the learned decision tree.

As described in Section III, GMSCN is based on learning a decision tree from a training set of generated networks.
In each evaluation stage, we generated 100 networks from each network generative model and, with seven candidate models, we gathered 700 generated networks. We used these network instances as the training and test data for learning the decision tree.

A. The Baseline Method

We have selected the graphlet-based method proposed by Janssen et al. [11] as the baseline method. The baseline method has some similarities to GMSCN: it is based on considering some network generative models and then learning a decision tree for network classification with the aid of a set of generated networks. In the baseline method, eight graphlet counts are considered as the network features. All subgraphs with three nodes (two graphlets) and four nodes (six graphlets) are considered in the baseline method (Fig. 2). A similar approach is also proposed by Middendorf et al. [13], with distinctions in the learning algorithm and the set of candidate generative models. The graphlet-based method is selected as the baseline because it is a new method, its evaluations show a high accuracy, and it is proposed similarly in different research domains such as social networks [11] and protein networks [13].

FIG. 2. The graphlets with three and four nodes

Despite the similarities, there exist some important differences between GMSCN and the baseline method. First, the baseline method is based on counting graphlets in networks while GMSCN proposes a wider set of local and global features. Janssen et al. [11] conclude that considering structural features does not improve the accuracy of the graphlet-based classifier, but we will show that by choosing a better set of local and global network features, and with the aid of our proposed degree distribution quantification method, structural features will play an undeniable role in model selection.
Second, the baseline method is size-dependent, i.e., it considers both the size and the density of the target network, and it generates network instances according to these two properties. On the other hand, GMSCN is size-independent and we only consider the density of the target network in the network generation phase. Third, GMSCN employs newer and more important generative models such as the Kronecker Graphs model [9], the Forest Fire model [21] and the RTG model [10]. Fourth, we examined different learning algorithms and then selected LADTree as the best learning algorithm for this application. Our evaluation of GMSCN is more thorough, considering different evaluation criteria. We have also presented a new algorithm for quantifying the network degree distribution.

Graphlet counting is a very time-consuming task and there is no efficient algorithm for computing the full counts of graphlets for large networks. To handle the algorithmic complexity, most of the graphlet-counting methods (e.g., Refs. 11, 17, 50, and 51) propose a sampling phase before counting the graphlets. But the sampling algorithm may affect the graphlet counts and the resulting counts may be biased towards the features of the sampling algorithm. It is also possible to estimate the graphlet counts with approximate algorithms [16,52], but this approach may also bring remarkable errors in graphlet counts. To prepare a fair comparison situation, we have counted the exact number of graphlets in the original networks and have not employed any sampling or approximation algorithms. It is worth noting that the reported accuracy of the baseline method in this paper is different from the report of the original paper [11], mainly because the sets of generative models are not the same in the two papers.

B. Accuracy of the Model Classifier

We first set a fixed size for the generated networks of the dataset and generate networks with about 4096 nodes. Almost all the generated networks in our dataset contain 4096 nodes, but the networks generated by the RTG model [10] have small variations in their size. The number of nodes in these networks is in the range of 4000 to 4200, and this is because the exact number of nodes is not configurable in the RTG model. Since the Kronecker Graphs model generates networks with $2^x$ nodes in its original form, we chose 4096 ($2^{12}$) as the size of the networks. The average density of networks in this dataset is equal to 0.0024.

In addition to overall accuracy, we evaluate the precision and recall of the learned decision tree for different network models. "Precision" shows the percentage of correctly classified instances calculated for each category, "Recall" illustrates the ability of the method in finding the instances of a category, and "Accuracy" is an indicator of the overall effectiveness of the classifier across the entire dataset. For example, for the FF category:

$$
Precision_{FF} = \frac{\text{number of correctly predicted FF instances}}{\text{number of FF predicted instances}}
$$

$$
Recall_{FF} = \frac{\text{number of correctly predicted FF instances}}{\text{number of FF instances}}
$$

$$
Accuracy = \frac{\text{number of correctly predicted instances}}{\text{total number of instances}}
$$

The overall accuracy of GMSCN is 97.14% while the accuracy of the baseline method is 78.57%, which indicates an improvement of 18.57 percentage points. Fig. 3 and Fig. 4 show the precision and recall, respectively, of GMSCN and the baseline method for different network models. In addition to an apparent improvement in the precision and recall for most of the generative models, the figures show the stability (less undesired deviation) of GMSCN over the baseline method.

The accuracy and precision of GMSCN show small deviation for different generative models, while these measures for the baseline method vary in a wide range.

Table I shows the details of GMSCN results for different network models. For example, the first row of this table indicates that among 700 network instances, 104 networks are predicted to be generated by the ER model, but in fact 97 (out of 104) instances are ER, six instances are from the KG model and one is generated by the SW model. Because we have utilized cross-validation, all of the 700 network instances are included in the evaluation. Table II shows corresponding results for the baseline method. It is worth noting that considering both the graphlet counts and the structural features does not improve the accuracy of the classifier considerably. Since we want to prepare a size-independent and efficient method, we do not consider the graphlet counts in feature vectors.

FIG. 3. Precision of GMSCN compared to baseline method for different generative models

FIG. 4. Recall of GMSCN compared to baseline method for different generative models

C. Size Independence

GMSCN for model selection is independent of the size of the target network. When we want to find the best model fitting a real network, we can discard the number of nodes in the network and generate the training data only according to its density. The size-independence is an important feature of GMSCN which is missing in the baseline method. This feature is especially important when we want to find the generative model for a relatively large network. In this condition, we can generate the training network instances with smaller sizes than the target network. This feature also increases the applicability, scalability and performance of GMSCN.
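
One way to picture the smaller, density-matched training instances described above is the following sketch. It uses a uniformly random graph with a fixed number of nodes and edges as a stand-in for any candidate model whose edge count can be set directly. The function names, the undirected-density convention and the example numbers are all assumptions for illustration; they are not taken from the paper.

```python
import random


def pair_count(num_nodes):
    """Number of potential edges in a simple undirected graph."""
    return num_nodes * (num_nodes - 1) // 2


def matched_edge_count(target_nodes, target_edges, train_nodes):
    """Edge count that gives a train_nodes-sized graph the target's density."""
    target_density = target_edges / pair_count(target_nodes)
    return round(target_density * pair_count(train_nodes))


def random_graph(num_nodes, num_edges, seed=None):
    """Sample a simple undirected graph with exactly num_edges distinct edges.

    Rejection sampling: adequate for sparse graphs, slow for dense ones.
    """
    if num_edges > pair_count(num_nodes):
        raise ValueError("more edges requested than node pairs available")
    rng = random.Random(seed)
    edges = set()
    while len(edges) < num_edges:
        u, v = rng.sample(range(num_nodes), 2)  # two distinct nodes
        edges.add((min(u, v), max(u, v)))
    return edges


# Hypothetical target: 100,000 nodes and 500,000 edges.
# Training instance: only 1,000 nodes, but with a similar density.
m = matched_edge_count(100_000, 500_000, 1_000)
training_graph = random_graph(1_000, m, seed=1)
print(m, len(training_graph) / pair_count(1_000))
```

Because the edge count must be an integer, the training density is only approximately equal to the target density, which matches the paper's remark that densities are kept similar rather than exactly equal.
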
To evaluate the dependency of GMSCN on the size of the network, we generate a new dataset with networks of different sizes. Instead of fixing the number of nodes in each network instance (about 4096 nodes in the previous evaluation), we allow networks with different numbers of nodes in the dataset. In this test, with each of the generative models, we generated 100 networks of different sizes: 24 networks with 4,096 nodes, 24 networks with 32,768 nodes, 24 networks with 131,072 nodes, 24 networks with 524,288 nodes and four networks with 1,048,576 nodes. Again, the only exception is the RTG model, which generates networks with small variations from the specified sizes. The node counts are powers of two because the original version of the Kronecker graph model generates networks with $2^n$ nodes. The average density of the networks in this dataset is 0.000885. Table III shows the precision and recall of GMSCN for this dataset. In this evaluation, the overall accuracy of the classifier is 97.29%, which is very close to the accuracy of the system in the evaluation with fixed network sizes. This shows that GMSCN does not depend on the size of the target network. The average density of the networks in this dataset (0.000885) differs from the average density of the networks in the fixed-size dataset (0.0024), so the model selection also performs well for different densities of the given network.

FIG. 5. Accuracy of GMSCN for different network sizes (x-axis: number of nodes, from 256 to 1M; y-axis: accuracy, from 0% to 100%).

We also extended this experiment to ensure that there is no meaningful lower bound for GMSCN in terms of network size. The new experiment is configured similarly to the previous trial, but it examines a wider range of network sizes. Fig.
5 plots the result of this experiment for each number of nodes. It indicates that GMSCN performs well across the varying network sizes. The baseline method is clearly size-dependent [11,13], because the graphlet counts depend entirely on the size of the network. It is therefore not necessary to show the precision and recall of the baseline method for the dataset of networks of different sizes. We skipped this evaluation because calculating graphlet counts for large networks is very time-consuming.

TABLE I. Precision, Recall and Accuracy of GMSCN for different generative models

| | True ER | True FF | True KG | True PA | True RP | True SW | True RTG | Class Precision |
|---|---|---|---|---|---|---|---|---|
| Pred. ER | 97 | 0 | 6 | 0 | 0 | 1 | 0 | 93.27% |
| Pred. FF | 0 | 100 | 0 | 0 | 2 | 0 | 0 | 98.04% |
| Pred. KG | 2 | 0 | 93 | 2 | 0 | 0 | 0 | 95.88% |
| Pred. PA | 1 | 0 | 0 | 98 | 0 | 0 | 0 | 98.99% |
| Pred. RP | 0 | 0 | 1 | 0 | 94 | 0 | 1 | 97.92% |
| Pred. SW | 0 | 0 | 0 | 0 | 0 | 99 | 0 | 100.00% |
| Pred. RTG | 0 | 0 | 0 | 0 | 4 | 0 | 99 | 96.12% |
| Class Recall | 97% | 100% | 93% | 98% | 94% | 99% | 99% | Accuracy: 97.14% |

TABLE II. Precision, Recall and Accuracy of the baseline method for different generative models

| | True ER | True FF | True KG | True PA | True RP | True SW | True RTG | Class Precision |
|---|---|---|---|---|---|---|---|---|
| Pred. ER | 94 | 1 | 30 | 0 | 11 | 0 | 0 | 69.12% |
| Pred. FF | 0 | 73 | 2 | 0 | 6 | 6 | 0 | 83.91% |
| Pred. KG | 6 | 0 | 37 | 0 | 17 | 0 | 0 | 61.67% |
| Pred. PA | 0 | 0 | 26 | 100 | 6 | 0 | 0 | 75.76% |
| Pred. RP | 0 | 23 | 5 | 0 | 52 | 0 | 0 | 65.00% |
| Pred. SW | 0 | 3 | 0 | 0 | 0 | 94 | 0 | 96.91% |
| Pred. RTG | 0 | 0 | 0 | 0 | 8 | 0 | 100 | 92.59% |
| Class Recall | 94% | 73% | 37% | 100% | 52% | 94% | 100% | Accuracy: 78.57% |

TABLE III. Precision and Recall of GMSCN for networks of different sizes

| | ER | FF | KG | PA | RP | SW | RTG |
|---|---|---|---|---|---|---|---|
| Precision | 96.0% | 98.0% | 95.0% | 98.0% | 97.9% | 100% | 96.1% |
| Recall | 96.0% | 100% | 95.0% | 99.0% | 94.0% | 99.0% | 98.0% |

D. Robustness to Noise

We also evaluate the robustness of GMSCN with respect to random changes in networks. For each test-case network, we randomly select a fraction of the edges, rewire them to random nodes, and test the accuracy of the classifier on the resulting network. We start from the pure network samples and, in each step, change five percent of the edges until all the edges are randomly rewired (100 percent change). In other words, in addition to the pure networks, we generated 20 test sets covering edge changes of up to 100 percent in steps of five percent, each containing 700 network samples from the seven generative models. As discussed before, we have chosen LADTree as the supervised learning algorithm in GMSCN. Fig. 6 shows the average accuracy of GMSCN for different fractions of random changes. This figure shows the effect of choosing different learning algorithms for GMSCN. As the figure shows, LADTree results in a more robust classifier for this application, since it is less sensitive to noise. The accuracy of GMSCN decreases smoothly and nearly linearly with the random changes. There is no sudden drop in the chart of GMSCN (based on LADTree). With 100 percent random changes (the right end of the diagram), the accuracy of the classifier reaches 14.43 percent, which is close to 1/7 (i.e., $\frac{1}{\text{number of candidate models}}$). This is due to the existence of seven network models and indicates that almost all the characteristics of the generative model are eliminated from a generated network with 100 percent edge rewiring.

FIG. 6. Robustness of the different classification methods with respect to random edge rewiring.

E. Scalability and Performance

The aim of GMSCN is to find the generative model that best fits a given real network. We define the scalability of such a method as its ability to handle large networks as the input. Considering the methodology of the proposed method (Fig. 1), the most time-consuming part of the model classification is the feature extraction task.
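Returning briefly to the robustness test of subsection D, the edge-rewiring step can be sketched as follows. This is only one plausible reading of the procedure: the text does not say which endpoint is kept, whether self-loops or duplicate edges are allowed, or whether the steps are cumulative, so the sketch keeps one randomly chosen endpoint, rejects self-loops and duplicates, and always starts from the pure network. The function name is hypothetical.

```python
import random


def rewire_edges(edges, num_nodes, fraction, seed=None):
    """Rewire a fraction of the edges of a simple undirected graph.

    edges: iterable of (u, v) pairs with node ids in range(num_nodes).
    fraction: share of edges to rewire, between 0.0 and 1.0.
    Returns a sorted list of (u, v) pairs with u < v; the edge count is preserved.
    """
    rng = random.Random(seed)
    edge_set = {tuple(sorted(e)) for e in edges}
    chosen = rng.sample(sorted(edge_set), round(fraction * len(edge_set)))
    for u, v in chosen:
        edge_set.discard((u, v))
        keep = rng.choice((u, v))  # assumption: one endpoint stays fixed
        while True:
            w = rng.randrange(num_nodes)
            candidate = (min(keep, w), max(keep, w))
            # Reject self-loops and edges that already exist.
            if w != keep and candidate not in edge_set:
                edge_set.add(candidate)
                break
    return sorted(edge_set)


if __name__ == "__main__":
    # A 20-node ring as a toy "pure" network.
    ring = [(i, (i + 1) % 20) for i in range(20)]
    # Noise levels from 5 to 100 percent in steps of five percent.
    for step in range(1, 21):
        noisy = rewire_edges(ring, 20, step / 20, seed=step)
        print(step * 5, len(noisy))
```

Each noisy network would then be passed through feature extraction and the trained classifier to obtain one point of the accuracy curve in Fig. 6.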
For the feature extraction task, GMSCN is clearly more scalable than the baseline method. There is no efficient algorithm for counting the graphlets in large networks. The network features selected in GMSCN (effective diameter, clustering coefficient, transitivity, assortativity and degree distribution percentiles) are efficiently computable by existing algorithms. We have also discarded time-consuming features such as “average path length” because extracting them requires computationally more expensive algorithms.

Most of the graphlet-based methods, such as Ref. 11 and Ref. 13, try to increase their scalability by incorporating a pre-stage of network sampling with very small rates, such as 0.01% (one out of 10,000) in Ref. 11. But such sampling rates decrease the accuracy of the graphlet counts, and the chosen sampling algorithm also biases the graphlet counts. On the other hand, if sampling or approximation algorithms are accepted for the baseline method, these techniques will improve the performance of GMSCN too. In other words, the use of sampling and approximation algorithms increases the scalability of both the baseline method and GMSCN similarly. Some notes about the implementation and evaluation of GMSCN are presented in Appendix B.

F. Effectiveness of the Degree Distribution Quantification Method

As described in Section III, we have proposed a new method for the quantification of the degree distribution based on its mean and standard deviation. In this subsection, we test the effectiveness of this quantification method. We show that without the proposed features of the degree distribution, the accuracy of the network classifier diminishes. Table IV shows the results of GMSCN after eliminating the six features related to the degree distribution (the DegDistP1..DegDistP6 percentiles).
By this change, the overall accuracy of the method decreases by about eight percentage points (from 97.14% to 89.29%). This can be seen by comparing the values in Table IV with those of Table I, which reflects the results of GMSCN when employing all the features. Precision and recall are improved for almost all the models by incorporating the features related to the degree distribution. This fact shows the effectiveness of our proposed quantification method for the degree distribution.

TABLE IV. The results of GMSCN after excluding the features of degree distribution

| | ER | FF | KG | PA | RP | SW | RTG |
|---|---|---|---|---|---|---|---|
| Precision | 89.1% | 100% | 79.41% | 94.17% | 76.47% | 96% | 90.7% |
| Recall | 90% | 95% | 81% | 97% | 78% | 96% | 88% |

V. CASE STUDY

We applied GMSCN to some real networks. The real network instances and the results of applying GMSCN to them are listed here:

1. “dblp cite” [53] (with 475,886 nodes and 2,284,694 edges) is a network extracted from the DBLP service. This network shows the citation network among scientific papers. GMSCN proposes Forest Fire as the best fitting generative model for this network. Leskovec et al. [21] also propose the Forest Fire model for two similar graphs, the arXiv and patent citation networks.
2. “dblp collab” [54] (with 975,044 nodes and 3,489,572 edges) is a co-authorship network of papers indexed in the DBLP service. A node in this network represents an author and an edge indicates at least one collaboration in writing papers between the two authors. GMSCN suggests Forest Fire for this network instance too.
3. “p2p-Gnutella08” [55] (with 6,301 nodes and 20,777 edges) is a relatively small P2P network. The best fitting model suggested by GMSCN for this network instance is Kronecker Graphs.
4. Slashdot, as a technology-related news website, presented the Slashdot Zoo which allowed users to tag each other as friends.
“Slashdot0902” [56] (with 82,168 nodes and 543,381 edges) is a network of friendship links between the users of Slashdot, obtained in February 2009. The output of GMSCN for this social network is the Random Power Law model.
5. In the “web-Google” [57] network (with 875,713 nodes and 4,322,051 edges), the nodes represent web pages and the directed edges represent hyperlinks among them. We ignored the direction of the links and considered the network as a simple undirected graph. GMSCN also proposes the Random Power Law model for this network.
6. “Email-EuAll” [58] (with 265,214 nodes and 365,025 edges) is a communication network of email contacts, which is predicted to follow the RTG model.
7. Finally, for the small network “Email-URV” [59] (with 1,133 nodes and 5,451 edges), which is another communication network of emails, GMSCN suggests the Small World model.

As explained above, various real networks, selected from a wide range of sizes, densities, and domains, are assigned to different network models by the GMSCN classifier. This indicates that no single generative model dominates the output of GMSCN for real networks, and that it suggests different models for different network structures. The case study also confirms that no single generative model is sufficient for synthesizing networks similar to real networks, and that we should find the model that best fits the target network in each application. As a result, generative model selection is an important stage before generating network instances.

VI. DISCUSSION

We evaluated GMSCN from different perspectives. GMSCN proposes a size-independent methodology for building the network classifier based on a wide range of local and global network features as the inputs of a decision tree.
It shows a high accuracy in predicting the generative model for a given network. It is tolerant of, and insensitive to, small network changes. In addition to being size-independent, GMSCN outperforms the baseline method, which only considers the local features of graphlet counts, with respect to accuracy and efficiency. A new structural feature is also proposed in GMSCN, which quantifies the network degree distribution.

One may argue that the size of the training set (700 network instances) is relatively small for a machine learning task. But we have actually utilized many more network instances in the process of evaluating GMSCN. Our dataset for evaluating GMSCN includes 15,400 different network instances: 700 instances in the fixed-size evaluation, 700 instances in the size-independence test and 14,000 (20 × 700) instances in the robustness test. The dataset size seems to be sufficient for evaluating the learned classifier, because the network instances are generated with different parameters (e.g., different sizes) and the results for the various evaluation steps are stable.

It can also be argued that the definition of “Accuracy” in the evaluations is not fair. When we compute the accuracy of the classifier, given that a network is generated precisely according to one of the seven models, the classifier attempts to determine the generative model. One may argue that real networks are unlikely to be determined by one of these models, so accuracy in predicting the origin of artificially generated networks does not necessarily imply accuracy for real networks. But we have shown that GMSCN is able to classify synthesized network instances even with random noise (in subsection IV D). In other words, networks that are not completely compatible with one of the generative models are also well categorized by GMSCN.
We should note that no accepted benchmark exists for suggesting the best generative model for real networks. So, the computation of the actual accuracy of a model selection algorithm for real networks is fundamentally impossible.

Considering the existing model selection methods, we summarize the main distinctions and contributions of GMSCN here. First, we have proposed new structural network features based on the quantification of the degree distribution. We have shown the effectiveness of these features in improving the accuracy of the model selection method. Second, we proposed a set of local and global network features for the problem of model selection. The baseline method suggests a set of graphlet counts, which are limited local features, and the evaluations show that such features are not sufficient for this application. It is not possible to capture important characteristics of real networks, such as heavy-tailed degree distribution, small path lengths, and degree correlation (assortativity), only by counting graphlets, while such characteristics are among the main distinctions of generative models. For example, the Small World model generates networks with high clustering and small path lengths, and artificial networks generated by most of the models demonstrate heavy-tailed degree distributions. Third, GMSCN is a size-independent method and the learned classifier is applicable to networks of different sizes. This is an important feature, especially when suggesting a generative model for a large network. In this case, we can generate the training set of artificial networks with a relatively smaller number of nodes. Fourth, although our proposed methodology is not dependent on the generative models, we have chosen seven important and outstanding network generative models as the candidate models of the classifier.
Important models such as Kronecker graphs, Forest Fire and RTG are not considered in similar existing methods. Fifth, we have investigated different learning algorithms and found LADTree to be the most robust learning algorithm for this application. Sixth, we have presented a diverse set of evaluations for GMSCN with different criteria, such as precision, recall, accuracy, robustness to noise, size-independence, scalability and effectiveness of the features.

VII. CONCLUSION

In this paper, we proposed a new method (GMSCN) for network model selection. This method, which is based on learning a decision tree, finds the best model for generating complex networks similar to a specified network instance. The structural features of the given network instance are utilized as the input of the decision tree, and the result is the best fitting model. GMSCN outperforms the existing methods with respect to different criteria. The accuracy of GMSCN shows a considerable improvement over the baseline method [11]. In addition, the set of supported generative models in GMSCN contains wider, newer and more important generative models, such as Kronecker graphs, Forest Fire and RTG. Unlike most of the existing methods, GMSCN is independent of the size of the input network. GMSCN is a robust model and is insensitive to small network changes and noise. It is also a scalable method, and its performance is clearly better than that of the baseline method. GMSCN also includes a new and effective algorithm for the quantification of the network degree distribution. We have examined different learning algorithms and, as a result, decision tree learning by the LADTree method was the most accurate and robust model.
We showed that local structural features, such as graphlet counts, are insufficient for inferring the network mechanisms, and that it is necessary to consider a wider range of local and global structural features to be able to predict the network growth mechanisms.

In future, we will investigate the effect of network structural features and growth mechanisms on the dynamics and behavior of the network when it is faced with different processes. For example, we will evaluate the similarity of the information diffusion process in a network and its counterparts synthesized by the selected network generation model.

ACKNOWLEDGMENTS

We wish to thank Masoud Asadpour, Mehdi Jalili and Abbas Heydarnoori for their great comments.

Appendix A: Brief Introduction to Classification Methods

Machine Learning is a subfield of Artificial Intelligence in which the main goal is to learn knowledge through experience. Classification is the learning task of inferring a classification function from labeled training data. Here, we explain some classifiers that are used in this paper.

Support Vector Machines (SVM) [48]. SVM performs a classification by mapping the inputs into a high-dimensional feature space and constructing hyperplanes to categorize the data instances. The best hyperplanes are those that cause the largest margin among the classes. The parameters of such a maximum-margin hyperplane are derived by solving an optimization problem. Sequential Minimal Optimization (SMO) [48] is a common method for solving the optimization problem.

Bayesian Networks Learning [47]. A Bayesian network model is a probabilistic graphical model that represents a set of random variables and their conditional dependencies by a directed acyclic graph.
The nodes in this graph represent the random variables and an edge shows a conditional dependency between two variables. Bayesian network learning aims to create a network that best describes the probability distribution over the training data. To find the best network among the set of possible Bayesian networks, heuristic search techniques have been frequently used in the literature.

Artificial Neural Networks [49]. ANN is inspired by the neural network of the human brain. An ANN consists of neuron units, arranged in layers and connected with weighted links, which convert an input vector into some outputs. Usually, the networks are defined to be feed-forward, with no feedback to the previous layer. In the training phase, the weights of the links are tuned to adapt an ANN to the training data. The back-propagation algorithm is a common method for the training phase.

C4.5 Decision Tree Learning [46]. A decision tree is a tree structure of decision rules which can be used as a classification function (leaf nodes show the returned classes). C4.5 constructs a decision tree based on labeled training data. C4.5 uses information entropy to evaluate the goodness of branches in the tree.

LADTree [36]. This classifier generates a multi-class alternating decision tree and uses the boosting strategy. Boosting is a well-established classification technique that combines some weak classifiers to form a single powerful classifier. A prediction node in a LADTree includes a score for each of the candidate classes. LADTree calculates confidences for the different classes according to their scores in the visited prediction nodes, and it returns the best class according to the confidences.
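To make the entropy criterion mentioned for C4.5 more concrete, the following sketch computes the information entropy of a set of class labels and the information gain of a candidate split. It is a generic textbook illustration with hypothetical names and toy data, not code from Weka or RapidMiner; C4.5 itself further normalizes the gain by the split information (gain ratio), which is omitted here for brevity.

```python
import math
from collections import Counter


def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    total = len(labels)
    return -sum((count / total) * math.log2(count / total)
                for count in Counter(labels).values())


def information_gain(labels, groups):
    """Entropy reduction obtained by splitting labels into the given groups."""
    total = len(labels)
    remainder = sum(len(group) / total * entropy(group)
                    for group in groups if group)
    return entropy(labels) - remainder


if __name__ == "__main__":
    # Toy example: eight networks from two generative models, split by a
    # hypothetical threshold on one structural feature.
    parent = ["ER"] * 4 + ["SW"] * 4
    good_split = [["ER"] * 4, ["SW"] * 4]
    poor_split = [["ER", "ER", "SW", "SW"], ["ER", "ER", "SW", "SW"]]
    print(information_gain(parent, good_split))
    print(information_gain(parent, poor_split))
```

A split that separates the classes well yields a high gain, which is why such a branch is preferred when the tree is grown.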
Appendix B: Implementation Notes

To implement the Kronecker Graphs, Forest Fire, Preferential Attachment, Small World, and Random Power Law models, we utilized the SNAP library (http://snap.stanford.edu/snap/). The implementation of the RTG model is available in a MATLAB library (http://www.cs.cmu.edu/~lakoglu/). We also developed our own implementation of the ER model. The features are extracted with the aid of different network analysis tools. The igraph package (http://igraph.sourceforge.net/) of the R project helped us calculate the assortativity and transitivity measures. We used the SNAP library for measuring the effective diameter, average clustering coefficient, density and also the graphlet counts. Since we proposed a new method for quantifying the network degree distribution, we implemented this method ourselves. We utilized RapidMiner as an open source tool for machine learning. The implementations of LADTree, Bayesian network learning and SVM are actually part of the Weka tool, which is embedded in RapidMiner. The amount of computation needed for this research, especially counting the exact number of graphlets, was enormous. We utilized three virtual machines on a supercomputer for this computation task, each of which simulated a computer with 16 processing cores of 2.8 GHz and 24 GB of memory. Most of the computation time was spent on counting the graphlets of the generated network instances.

[1] M. E. Newman, “The structure and function of complex networks,” SIAM Review 45, 167–256 (2003).
[2] R. Albert and A.-L. Barabási, “Statistical mechanics of complex networks,” Reviews of Modern Physics 74, 47 (2002).
[3] L. d. F. Costa, F. A. Rodrigues, G. Travieso, and P. Villas Boas, “Characterization of complex networks: A survey of measurements,” Advances in Physics 56, 167–242 (2007).
[4] C. Christensen and R. Albert, “Using graph concepts to understand the organization of complex systems,” International Journal of Bifurcation and Chaos 17, 2201–2214 (2007).
[5] S. Boccaletti, V. Latora, Y. Moreno, M. Chavez, and D.-U. Hwang, “Complex networks: Structure and dynamics,” Physics Reports 424, 175–308 (2006).
[6] J. Hlinka, D. Hartman, and M. Paluš, “Small-world topology of functional connectivity in randomly connected dynamical systems,” Chaos: An Interdisciplinary Journal of Nonlinear Science 22, 033107–033107 (2012).
[7] A. Yazdani and P. Jeffrey, “Complex network analysis of water distribution systems,” Chaos: An Interdisciplinary Journal of Nonlinear Science 21, 016111–016111 (2011).
[8] P. Cano, O. Celma, M. Koppenberger, and J. M. Buldú, “Topology of music recommendation networks,” Chaos: An Interdisciplinary Journal of Nonlinear Science 16, 013107–013107 (2006).
[9] J. Leskovec, D. Chakrabarti, J. Kleinberg, C. Faloutsos, and Z. Ghahramani, “Kronecker graphs: An approach to modeling networks,” The Journal of Machine Learning Research 11, 985–1042 (2010).
[10] L. Akoglu and C. Faloutsos, “RTG: a recursive realistic graph generator using random typing,” Data Mining and Knowledge Discovery 19, 194–209 (2009).
[11] J. Janssen, M. Hurshman, and N. Kalyaniwalla, “Model selection for social networks using graphlets,” Internet Mathematics 8, 338–363 (2012).
[12] E. M. Airoldi, X. Bai, and K. M. Carley, “Network sampling and classification: An investigation of network model representations,” Decision Support Systems 51, 506–518 (2011).
[13] M. Middendorf, E. Ziv, and C. H. Wiggins, “Inferring network mechanisms: the Drosophila melanogaster protein interaction network,” Proceedings of the National Academy of Sciences of the United States of America 102, 3192–3197 (2005).
[14] R. Milo, S. Itzkovitz, N. Kashtan, R. Levitt, S. Shen-Orr, I. Ayzenshtat, M. Sheffer, and U. Alon, “Superfamilies of evolved and designed networks,” Science 303, 1538–1542 (2004).
[15] R. Milo, S. Shen-Orr, S. Itzkovitz, N. Kashtan, D. Chklovskii, and U. Alon, “Network motifs: simple building blocks of complex networks,” Science Signaling 298, 824 (2002).
[16] I. Bordino, D. Donato, A. Gionis, and S. Leonardi, “Mining large networks with subgraph counting,” in Data Mining, 2008. ICDM’08. Eighth IEEE International Conference on (IEEE, 2008) pp. 737–742.
[17] J. A. Grochow and M. Kellis, “Network motif discovery using subgraph enumeration and symmetry-breaking,” in Research in Computational Molecular Biology (Springer, 2007) pp. 92–106.
[18] L. Cui, S. Kumara, and R. Albert, “Complex networks: An engineering view,” Circuits and Systems Magazine, IEEE 10, 10–25 (2010).
[19] A. Pomerance, E. Ott, M. Girvan, and W. Losert, “The effect of network topology on the stability of discrete state models of genetic control,” Proceedings of the National Academy of Sciences 106, 8209–8214 (2009).
[20] E. Lameu, C. Batista, A. Batista, K. Iarosz, R. Viana, S. Lopes, and J. Kurths, “Suppression of bursting synchronization in clustered scale-free (rich-club) neuronal networks,” Chaos: An Interdisciplinary Journal of Nonlinear Science 22, 043149 (2012).
[21] J. Leskovec, J. Kleinberg, and C. Faloutsos, “Graphs over time: densification laws, shrinking diameters and possible explanations,” in Proceedings of the eleventh ACM SIGKDD international conference on Knowledge discovery in data mining (ACM, 2005) pp. 177–187.
[22] J. M. Kleinberg, R. Kumar, P. Raghavan, S. Rajagopalan, and A. S. Tomkins, “The web as a graph: Measurements, models, and methods,” in Computing and combinatorics (Springer, 1999) pp. 1–17.
[23] A.-L. Barabási and R. Albert, “Emergence of scaling in random networks,” Science 286, 509–512 (1999).
[24] D. J. Watts and S. H. Strogatz, “Collective dynamics of ‘small-world’ networks,” Nature 393, 440–442 (1998).
[25] P. Erdös and A. Rényi, “On the central limit theorem for samples from a finite population,” Publ. Math. Inst. Hungar. Acad. Sci 4, 49–61 (1959).
[26] D. Volchenkov and P. Blanchard, “An algorithm generating random graphs with power law degree distributions,” Physica A: Statistical Mechanics and its Applications 315, 677–690 (2002).
[27] M. Penrose, Random geometric graphs, Vol. 5 (Oxford University Press Oxford, 2003).
[28] W. Aiello, A. Bonato, C. Cooper, J. Janssen, and P. Pralat, “A spatial web graph model with local influence regions,” Internet Mathematics 5, 175–196 (2008).
[29] D. S. Callaway, J. E. Hopcroft, J. M. Kleinberg, M. E. Newman, and S. H. Strogatz, “Are randomly grown graphs really random?” Physical Review E 64, 041902 (2001).
[30] R. V. Solé, R. Pastor-Satorras, E. Smith, and T. B. Kepler, “A model of large-scale proteome evolution,” Advances in Complex Systems 5, 43–54 (2002).
[31] K. Klemm and V. M. Eguiluz, “Highly clustered scale-free networks,” Physical Review E 65, 036123 (2002).
[32] B. Bollobás, Random graphs, Vol. 73 (Cambridge University Press, 2001).
[33] S. P. Borgatti and M. G. Everett, “Models of core/periphery structures,” Social Networks 21, 375–395 (2000).
[34] T. L. Frantz and K. M. Carley, “A formal characterization of cellular networks,” Tech. Rep. (DTIC Document, 2005).
[35] J. Leskovec, J. Kleinberg, and C. Faloutsos, “Graph evolution: Densification and shrinking diameters,” ACM Transactions on Knowledge Discovery from Data (TKDD) 1, 2 (2007).
[36] G. Holmes, B. Pfahringer, R. Kirkby, E. Frank, and M. Hall, “Multiclass alternating decision trees,” in Machine Learning: ECML 2002 (Springer, 2002) pp. 161–172.
[37] R. Patro, G. Duggal, E. Sefer, H. Wang, D. Filippova, and C. Kingsford, “The missing models: a data-driven approach for learning how networks grow,” in Proceedings of the 18th ACM SIGKDD international conference on Knowledge discovery and data mining (ACM, 2012) pp. 42–50.
[38] M. Zanin, P. Sousa, D. Papo, R. Bajo, J. García-Prieto, F. del Pozo, E. Menasalvas, and S. Boccaletti, “Optimizing functional network representation of multivariate time series,” Scientific Reports 2 (2012).
[39] A. Barrat and M. Weigt, “On the properties of small-world network models,” The European Physical Journal B-Condensed Matter and Complex Systems 13, 547–560 (2000).
[40] B. Bollobás, “The diameter of random graphs,” Transactions of the American Mathematical Society 267, 41–52 (1981).
[41] P. V. Boas, F. Rodrigues, G. Travieso, and L. da F Costa, “Sensitivity of complex networks measurements,” Journal of Statistical Mechanics: Theory and Experiment 2010, P03009 (2010).
[42] S. L. Tauro, C. Palmer, G. Siganos, and M. Faloutsos, “A simple conceptual model for the internet topology,” in Global Telecommunications Conference, 2001. GLOBECOM’01. IEEE, Vol. 3 (IEEE, 2001) pp. 1667–1671.
[43] V. Gómez, A. Kaltenbrunner, and V. López, “Statistical analysis of the social network and discussion threads in slashdot,” in Proceedings of the 17th international conference on World Wide Web (ACM, 2008) pp. 645–654.
[44] N. Z. Gong, W. Xu, L. Huang, P. Mittal, E. Stefanov, V. Sekar, and D. Song, “Evolution of social-attribute networks: measurements, modeling, and implications using google+,” in Proceedings of the 2012 ACM conference on Internet measurement conference (ACM, 2012) pp. 131–144.
[45] H. Kwak, C. Lee, H. Park, and S. Moon, “What is twitter, a social network or a news media?” in Proceedings of the 19th international conference on World wide web (ACM, 2010) pp. 591–600.
[46] J. R. Quinlan, C4.5: programs for machine learning, Vol. 1 (Morgan Kaufmann, 1993).
[47] N. Friedman, D. Geiger, and M. Goldszmidt, “Bayesian network classifiers,” Machine Learning 29, 131–163 (1997).
[48] J. C. Platt, “12 fast training of support vector machines using sequential minimal optimization,” (1999).
[49] J. A. Freeman and D. M. Skapura, “Neural networks: Algorithms, applications, and programming techniques (computation and neural systems series),” Neural networks: algorithms, applications and programming techniques (Computation and Neural Systems Series) (1991).
[50] E. de Silva and M. P. Stumpf, “Complex networks and simple models in biology,” Journal of the Royal Society Interface 2, 419–430 (2005).
[51] T. Aittokallio and B. Schwikowski, “Graph-based methods for analysing networks in cell biology,” Briefings in Bioinformatics 7, 243–255 (2006).
[52] M. Rahman, M. Bhuiyan, and M. A. Hasan, “Graft: an approximate graphlet counting algorithm for large graph analysis,” in Proceedings of the 21st ACM international conference on Information and knowledge management (ACM, 2012) pp. 1467–1471.
[53] http://dblp.uni-trier.de/xml.
[54] http://dblp.uni-trier.de/xml.
[55] http://snap.stanford.edu.
[56] http://snap.stanford.edu.
[57] http://snap.stanford.edu.
[58] http://konect.uni-koblenz.de.
[59] http://deim.urv.cat/~aarenas.
