Distributional Results for Thresholding Estimators in High-Dimensional Gaussian Regression Models

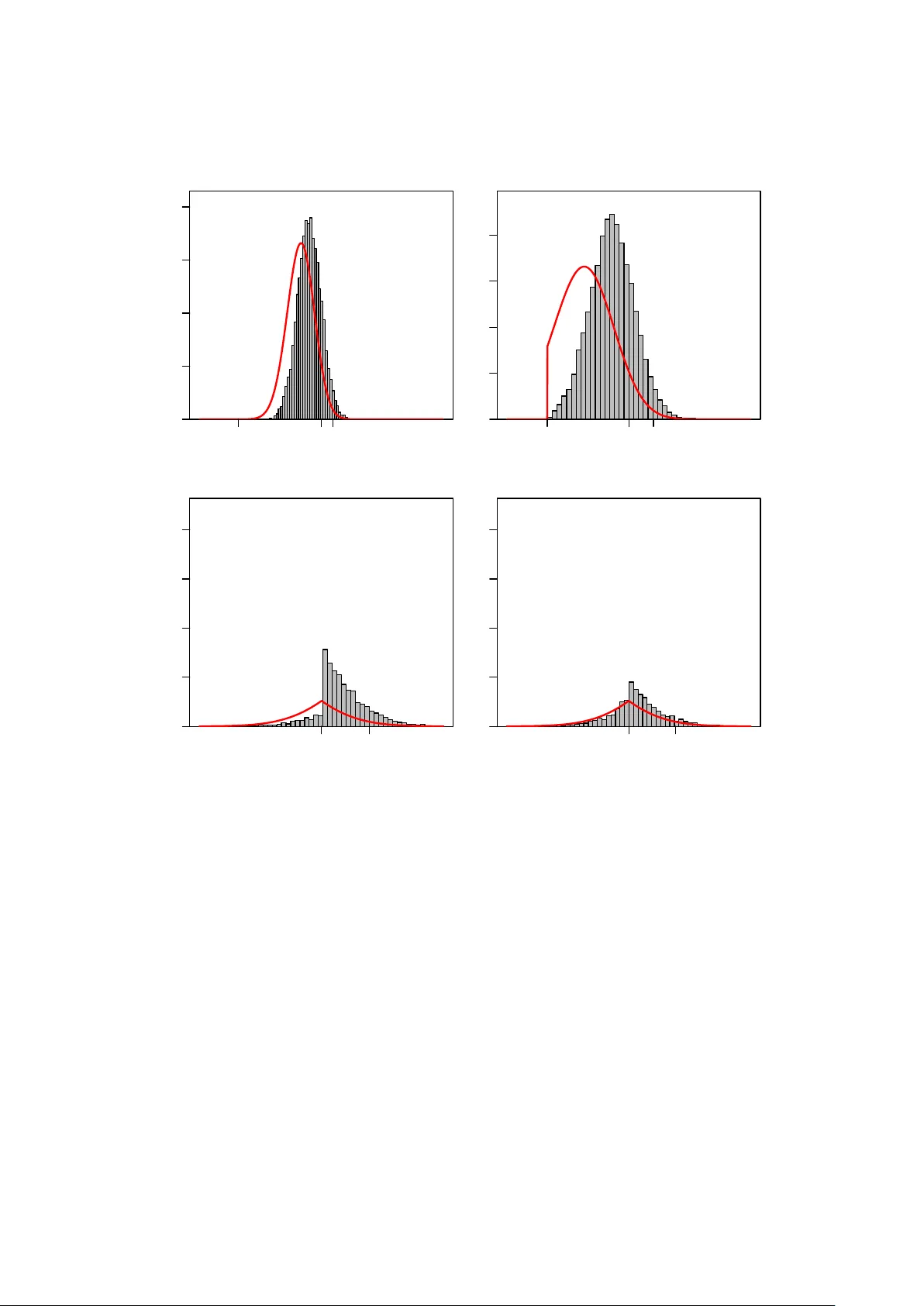

We study the distribution of hard-, soft-, and adaptive soft-thresholding estimators within a linear regression model where the number of parameters k can depend on sample size n and may diverge with n. In addition to the case of known error-variance…

Authors: Benedikt M. Pötscher, Ulrike Schneider