A Student-t based filter for robust signal detection

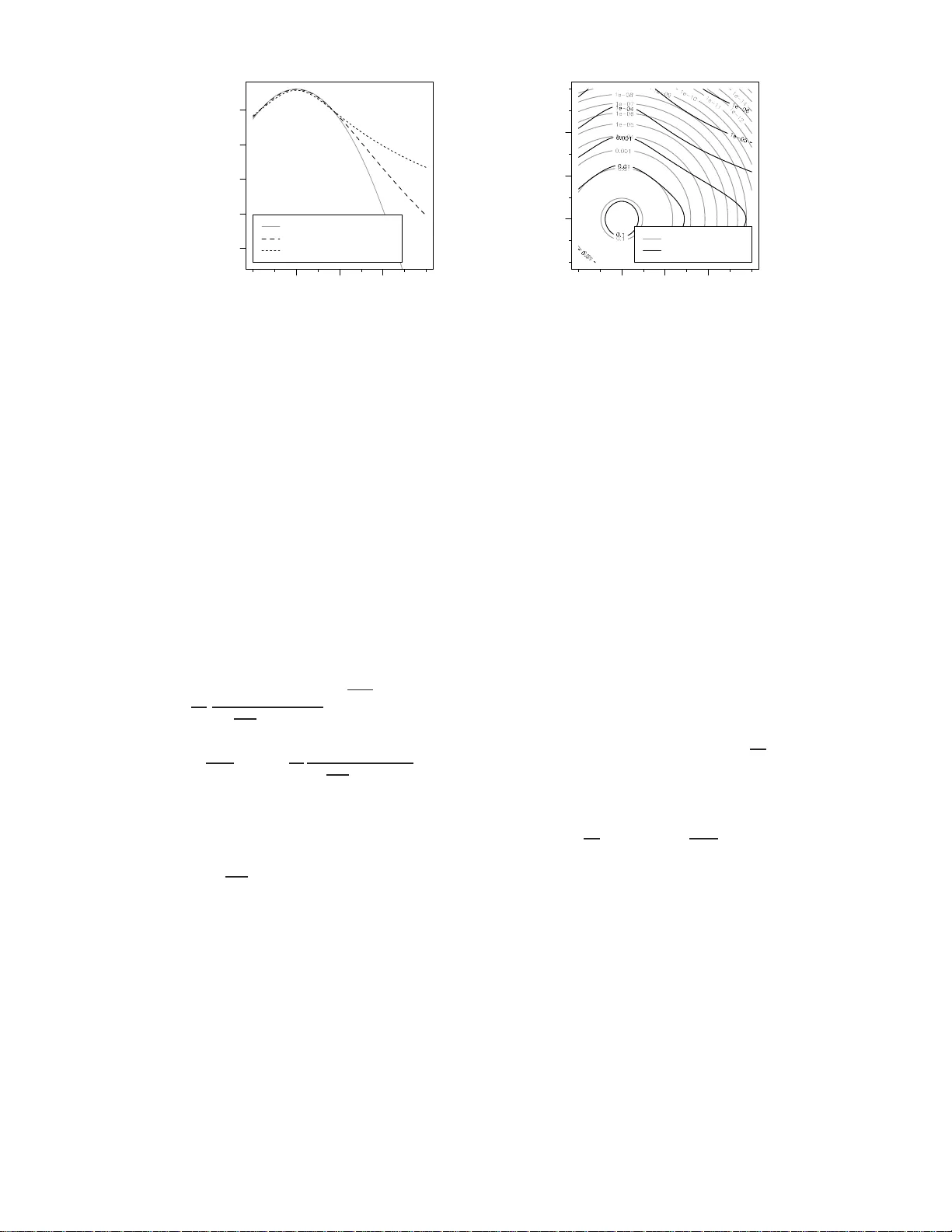

The search for gravitational-wave signals in detector data is often hampered by the fact that many data analysis methods are based on the theory of stationary Gaussian noise, while actual measurement data frequently exhibit clear departures from thes…

Authors: Christian Röver