Zero-error feedback capacity via dynamic programming

In this paper, we study the zero-error capacity for finite state channels with feedback when channel state information is known to both the transmitter and the receiver. We prove that the zero-error capacity in this case can be obtained through the s…

Authors: Lei Zhao, Haim Permuter

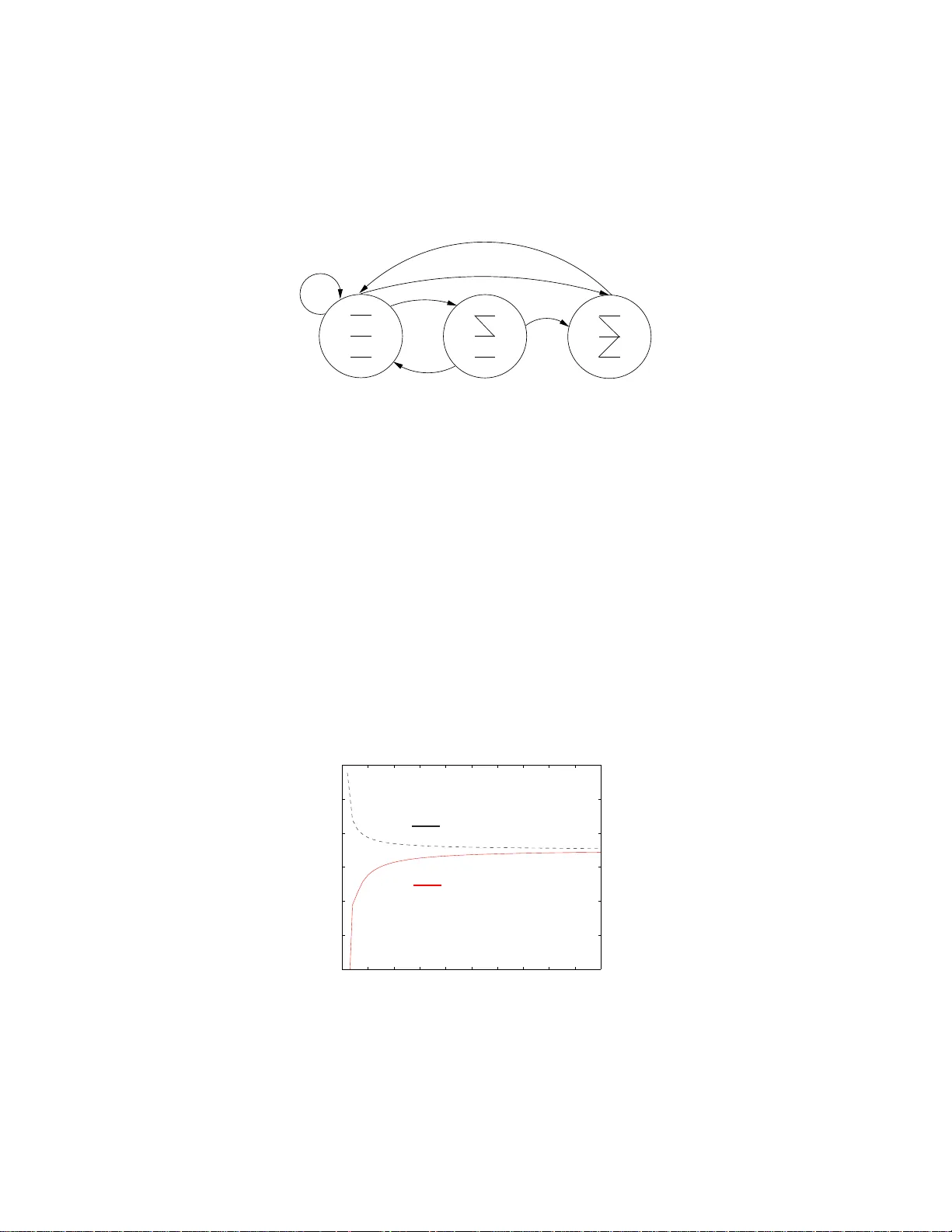

Abstract

In this paper, we study the zero-error capacity for finite state channels with feedback when channel state information is known to both the transmitter and the receiver. We prove that the zero-error capacity in this case can be obtained through the solution of a dynamic programming problem. Each iteration of the dynamic programming provides lower and upper bounds on the zero-error capacity, and in the limit, the lower bound coincides with the zero-error feedback capacity. Furthermore, a sufficient condition for solving the dynamic programming problem is provided through a fixed-point equation. Analytical solutions for several examples are provided.

Index Terms: Bellman equations, competitive Markov decision processes, dynamic programming, feedback capacity, fixed-point equation, infinite-horizon average reward, stochastic games, zero-error capacity.

I. INTRODUCTION

In 1956, Shannon [1] introduced the concept of zero-error communication, which requires that the probability of error in decoding any message transmitted through the channel be zero. Although the zero-error capacity for general channels remains an unsolved problem (see [2] for a comprehensive survey of zero-error information theory), Shannon [1] showed that for discrete memoryless channels (DMC) with feedback the zero-error capacity is either zero (if any two inputs can generate a common output) or equal to

$$C_0^{FB} = \max_{P_X} \log_2 \left[ \max_{y} \sum_{x \in G(y)} P_X(x) \right]^{-1}, \tag{1}$$

where $P_X$ is the channel input distribution, $y$ is an output realization of the channel, and $G(y)$ is the set of inputs that have a positive probability of generating the output $y$, i.e., $G(y) \triangleq \{x : P_{Y|X}(y|x) > 0\}$.
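To make formula (1) concrete, the following minimal sketch evaluates its objective for a given input distribution and runs a coarse grid search over distributions. The three-input channel, the names `shannon_objective` and `grid_search`, and the grid resolution are all illustrative assumptions, not taken from the paper; the grid search is only an approximation of the maximization in (1), and the formula applies only when not all input pairs share a common output.

```python
from fractions import Fraction
from itertools import product
from math import log2


def shannon_objective(p_x, G):
    """Return log2 of 1 / max_y sum_{x in G(y)} P_X(x), the quantity maximized in (1)."""
    worst = max(sum(p_x[x] for x in inputs) for inputs in G.values())
    return log2(1 / worst)


def grid_search(num_inputs, G, steps=12):
    """Search pmfs whose entries are multiples of 1/steps (a coarse, assumed grid)."""
    best_rate, best_p = -1.0, None
    for counts in product(range(steps + 1), repeat=num_inputs):
        if sum(counts) != steps:
            continue  # not a probability mass function
        p_x = [Fraction(c, steps) for c in counts]
        rate = shannon_objective(p_x, G)
        if rate > best_rate:
            best_rate, best_p = rate, p_x
    return best_rate, best_p


# Hypothetical DMC: inputs {0, 1, 2}, outputs {"a", "b"}.
# G maps each output y to the set G(y); inputs 0 and 2 share no output.
G = {"a": {0, 1}, "b": {1, 2}}
rate, p = grid_search(3, G)
print(rate, p)
```

For this toy channel the search places all mass on the two non-adjacent inputs, which matches the intuition that one bit per use can be sent without error.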
The achievability proof of (1) is based on a deterministic scheme rather than on a random coding scheme, as used for showing the achievability of regular capacity.

In this paper, we study the zero-error feedback capacity for finite state channels (FSC), a family of channels with memory. We assume that channel state information (CSI) is available both to the transmitter and to the receiver. In this case, we solve the zero-error capacity, which depends only on the topological properties of the channel. A similar setup has been used by Chen and Berger [3], who solved the regular channel capacity by finding the optimal stationary and nonstationary input processes that maximize the long-term directed mutual information. In [4] and [5], the zero-error capacity of the chemical channel with feedback was derived. The chemical channel is a special case of FSCs. With feedback, the transmitter knows the state of the chemical channel while the receiver does not, which is different from our setup. Other related work can be found in [6], which addresses the zero-error capacity for compound channels.

Lei Zhao is with the Department of Electrical Engineering, Stanford University, Stanford, CA 94305. Haim Permuter is with the Department of Electrical & Computer Engineering, Ben-Gurion University of the Negev, Beer-Sheva, Israel. Authors' emails: leiz@stanford.edu and haimp@bgu.ac.il.

The remainder of the paper is organized as follows. In Section II, we introduce the channel model and the dynamic programming problem formulation. In Section III, we use a finite-horizon dynamic programming (DP) to provide a condition for the channel to have zero zero-error capacity. In Section IV, we define an infinite-horizon average reward DP problem and link its solution to the zero-error capacity. In Sections V and VI, we prove the converse and direct parts, respectively.
In Section VII, we explain how to evaluate the infinite-horizon average reward DP; in particular, we provide a sequence of lower and upper bounds that are easy to compute and prove the Bellman equation theorem for the particular DP, namely, a fixed-point equation that is a sufficient condition for verifying the optimality of a solution. In Section VIII, we evaluate and then find analytically the zero-error feedback capacity of several examples.

II. CHANNEL MODEL AND PRELIMINARIES

We use a calligraphic letter $\mathcal{X}$ to denote an alphabet and $|\mathcal{X}|$ to denote the cardinality of the alphabet. Subscripts and superscripts are used to denote vectors in the following way: $x^j = (x_1, \ldots, x_j)$ and $x_i^j = (x_i, \ldots, x_j)$ for $i \le j$. Next we introduce the channel model and the DP formulation.

A. Channel model and zero-error capacity definition

An FSC [7, ch. 4] is a channel that, at each time index, has a state which belongs to a finite set $\mathcal{S}$ and has the property that, given the current input and state, the output and the next state are independent of the past inputs, outputs and states, i.e.,

$$p(y_t, s_{t+1} \mid x_1^t, s_1^t) = p(y_t, s_{t+1} \mid x_t, s_t). \tag{2}$$

For simplicity, we assume that the channel has the same input alphabet $\mathcal{X}$ and the same output alphabet $\mathcal{Y}$ for all states. The alphabets $\mathcal{X}$ and $\mathcal{Y}$ are both finite. Without loss of generality, we can assume that $\mathcal{X} = \{1, 2, \ldots, |\mathcal{X}|\}$.

We consider the communication setting shown in Fig. 1, where the state of the channel is known to the encoder and to the decoder. An $(M, n)$ zero-error feedback code of length $n$ is defined as a sequence of encoding mappings $x_t(m, y^{t-1}, s^t)$ and a decoding function $\hat{m} = g(y^n, s^{n+1})$, where a message $m$ is selected from a set $\{1, \ldots, M\}$.
The probability of error is required to be zero, i.e., $\Pr\{ g(Y^n, S^{n+1}) \ne m \mid \text{message } m \text{ is sent} \} = 0$ for all messages $m \in \{1, 2, \ldots, M\}$. We emphasize that the size of the message set $M$ does not depend on the initial state of the channel; hence, the probability of decoding error needs to be zero for any initial state.

Definition 1. A rate $R$ is achievable if there exists an $(M, n)$ zero-error feedback code such that $R \le \frac{\log_2 M}{n}$.

[Fig. 1. Communication model: a finite state channel (FSC) with feedback and state information at the decoder and encoder. The encoder maps the message $m$ and the unit-delayed feedback $(y^{i-1}, s^i)$ to $x_i(m, y^{i-1}, s^i)$; the FSC $p(y_i, s_{i+1} \mid x_i, s_i)$ outputs $(y_i, s_{i+1})$; the decoder forms $\hat{m}(y^n, s^{n+1})$.]

Definition 2. The operational zero-error capacity of an FSC is defined as the supremum of all achievable rates.

Throughout this paper we use the following alternative and equivalent definition of the operational zero-error capacity.

Definition 3. Let $M(n, s)$ be the maximum number of messages that can be transmitted with zero error in $n$ transmissions when the initial state of the channel is $s \in \mathcal{S}$. Define

$$a_n = \min_{s \in \mathcal{S}} \log_2 M(n, s). \tag{3}$$

The operational zero-error capacity is given by

$$C_0 \triangleq \lim_{n \to \infty} \frac{a_n}{n} = \lim_{n \to \infty} \min_{s \in \mathcal{S}} \frac{\log_2 M(n, s)}{n}, \tag{4}$$

where the limit exists, as shown next.

Since the transmitter knows the state, the sequence $\{a_n\}$ is superadditive, i.e., $a_{n+m} \ge a_n + a_m$, and $\frac{a_n}{n} \le |\mathcal{X}|$. By Fekete's lemma [8, Ch. 2.6], $\lim \frac{a_n}{n}$ exists and is equal to $\sup \frac{a_n}{n}$. Note that $R \le \lim \frac{a_n}{n}$ holds for any achievable rate $R$, and any rate less than $\lim \frac{a_n}{n}$ is achievable, which are simple consequences of Definition 1. Thus, $\lim \frac{a_n}{n}$ defines the zero-error capacity.

B. Dynamic programming

For the standard Markov decision process (MDP), we have the dynamic programming equation [9], [10]:

$$U_n(s) = \max_{a \in A(s)} \left( r(s, a) + \sum_{s'=1}^{N} P(s' \mid s, a)\, U_{n-1}(s') \right), \tag{5}$$

where $r(s, a)$ is the reward, given that we are at state $s \in \mathcal{S}$ and we perform action $a \in A$. The term $U_n(s)$ is the total reward after $n$ steps (a.k.a. the "reward-to-go" in $n$ steps) when we start at state $s$. The conditional distribution $P(s' \mid s, a)$ is the probability of the next state $s' \in \mathcal{S}$, given the current state $s \in \mathcal{S}$ and action $a \in A$.

The dynamic programming equation that is associated in this paper with the zero-error capacity has the form

$$U_n(s) = \max_{a \in A(s)} \min_{s' \in \mathcal{S}(a)} \left\{ r(s, a, s') + U_{n-1}(s') \right\}, \tag{6}$$

where $r(s, a, s')$ is the reward, given the current state $s$, the action $a$ and the next state $s'$. The reward may be any real number, including $\pm\infty$. The value $U_n(s)$ is defined as before, i.e., the total reward in $n$ steps when starting at state $s$.

The DP equation in (6) may be viewed as a stochastic game [11], also known as a competitive MDP [12], in which there are two asymmetric players. Player 1, the leader, takes an action $a \in A(s)$, which may depend on the current state, and Player 2, the follower, determines the next state $s' \in \mathcal{S}$. Player 2 sees the state of the game $s$ and the action of Player 1. In the zero-error capacity problem, Player 1 would be the user who designs the code to maximize the transmitted rate, and Player 2 would be Nature, which chooses the next state to minimize the transmitted rate.

III. A SUFFICIENT AND NECESSARY CONDITION FOR $C_0 = 0$

Shannon [1] showed that for a DMC, which is an FSC with only one state, if any two input letters have at least one common output, it is impossible to distinguish between two messages with zero error.
Using finite-horizon dynamic programming, we derive in this section a sufficient and necessary condition for an FSC to have $C_0 = 0$, i.e., for the zero-error capacity to be zero.

Definition 4. Two input letters $x_1$ and $x_2$ are called adjacent at state $s$ if there exist an output letter $y$ and a state $s'$ such that $p(y, s' \mid x_1, s) > 0$ and $p(y, s' \mid x_2, s) > 0$.

Definition 5. A state $s$ is positive if there exist two input letters that are not adjacent at state $s$.

The intuition behind the result in this section is that if the channel undergoes only non-positive states during the transmission, we cannot distinguish between two messages based on the output sequence and the channel state sequence, since they could result from either message.

To determine whether $C_0 = 0$, we form the following dynamic programming equation,

$$V_n(s) = r(s) + \max_{x \in \mathcal{X}} \min_{s' \in \mathcal{S}(s, x)} V_{n-1}(s'), \tag{7}$$

where $V_0(s) = 0$, $\forall s \in \mathcal{S}$, $\mathcal{S}(s, x) = \{ s' : p(y, s' \mid x, s) > 0 \text{ for some } y \in \mathcal{Y} \}$, and the reward is $r(s) = 1$ if state $s$ is positive and $r(s) = 0$ if state $s$ is not positive.

Lemma 1 (monotonicity of $V_n(s)$). The total reward $V_n(s)$ is non-negative and non-decreasing in $n$, i.e.,

$$0 \le V_n(s) \le V_{n+1}(s), \quad \forall n = 1, 2, 3, \ldots \text{ and } s \in \mathcal{S}. \tag{8}$$

Proof: Let $\tilde{V}_n(s) = r(s) + \max_{x \in \mathcal{X}} \min_{s' \in \mathcal{S}(s, x)} \tilde{V}_{n-1}(s')$ and $\tilde{V}_0(s) \ge V_0(s)$, $\forall s \in \mathcal{S}$. Then, by induction, we have $\tilde{V}_n(s) \ge V_n(s)$, $\forall n = 1, 2, 3, \ldots$ and $s \in \mathcal{S}$. Since $r(s) \ge 0$, $\forall s \in \mathcal{S}$, we have $V_1(s) \ge 0$. Let us define $\tilde{V}_0(s) = V_1(s)$, so that $\tilde{V}_n(s) = V_{n+1}(s)$. Since $\tilde{V}_0(s) \ge V_0(s)$, we obtain that $\tilde{V}_n(s) \ge V_n(s)$, which means that $V_{n+1}(s) \ge V_n(s) \ge 0$.

TABLE I. Interpretation of the DP given in (7), which corresponds to determining whether $C_0 > 0$.

| The DP given in (7) | Interpretation of the DP |
| --- | --- |
| state $s$ of the DP | state $s$ of the channel |
| reward $r(s) = 1$ | state $s$ is positive; at least one bit can be transmitted error-free |
| reward $r(s) = 0$ | state $s$ is non-positive; no bits can be transmitted error-free |
| Player 1 takes action $x$ in order to maximize the reward of the DP | encoder chooses input $x$ in order to maximize the number of positive states visited |
| Player 2 chooses next state in order to minimize the reward of the DP | Nature chooses next state and output to minimize the number of messages transmitted |
| $V_n(s)$: total reward in $n$ rounds, starting the game from state $s$ | number of positive states visited in $n$ usages of the channel starting at state $s$ |

This DP can be viewed as a two-person game, where $V_n(s)$ is the game result after $n$ steps starting with initial state $s$. Player 1 chooses the input letter $x$, and Player 2 chooses the next state $s'$. Both players know the current state $s$, and the reward of the game is a function of the current state only, i.e., $r(s)$. Player 1 makes the first play, and the two players alternate plays thereafter. The goal of Player 1 is to maximize the number of times the channel visits a positive state, and Player 2 tries to minimize it. The interpretation of the DP as a stochastic game between the user and Nature is summarized in Table I.

The following lemma states that if the total reward of the stochastic game is zero after $n$ rounds with initial state $s$, i.e., $V_n(s) = 0$, then only one message can be sent error-free through $n$ uses of the channel with initial state $s$.

Lemma 2. $V_n(s) = 0$ implies $M(n, s) = 1$, and $V_n(s) > 0$ implies $M(n, s) > 1$.

Proof: First, we observe that in order to send two or more messages in $n$ uses of the channel, a positive state should be visited with probability one.
Once a positive state is visited, we can use two inputs that are not adjacent to transmit one bit (two messages) without error. If a positive state is not visited, then there are no two inputs that can distinguish between two messages. The stochastic game given in (7) verifies whether a positive state is visited with probability 1. In the stochastic game, the rewards $r(s) = 1$ and $r(s) = 0$ indicate that state $s$ is positive and non-positive, respectively. Player 1 is the encoder, which wants to visit a positive state, and Player 2 is Nature, which chooses the output and the state such that a positive state will not be visited. A total reward $V_n(s) = 0$ implies that in $n$ transmissions with initial state $s$, with positive probability, the channel undergoes only non-positive states, regardless of the inputs. Thus $V_n(s) = 0$ implies $M(n, s) = 1$.

According to Lemma 1, $V_n(s)$ is non-negative and non-decreasing in $n$ for any $s \in \mathcal{S}$. Thus, $\min_{s \in \mathcal{S}} V_n(s)$ is also non-decreasing in $n$, and therefore $\lim_{n \to \infty} \min_{s \in \mathcal{S}} V_n(s)$ is well defined (it may also be infinite). If $\lim_{n \to \infty} \min_{s \in \mathcal{S}} V_n(s) = 0$, then $\min_{s \in \mathcal{S}} V_n(s) = 0$, $\forall n$, and invoking Lemma 2, $\min_{s \in \mathcal{S}} M(n, s) = 1$, which gives $C_0 = 0$ by definition. The next lemma states that to verify whether $\lim_{n \to \infty} \min_{s \in \mathcal{S}} V_n(s) > 0$, it is enough to calculate a finite-horizon problem.

Lemma 3.

$$\lim_{n \to \infty} \min_{s \in \mathcal{S}} V_n(s) = 0 \iff \min_{s \in \mathcal{S}} V_{|\mathcal{S}|}(s) = 0.$$

Proof: The $\Longrightarrow$ direction follows from Lemma 1, which states that for any $s \in \mathcal{S}$, $V_n(s)$ is a non-negative and non-decreasing function of $n$.

Now we prove the $\Longleftarrow$ direction. Define $\mathcal{S}_n$ as the set of initial states for which the reward is zero after $n$ rounds of the stochastic game, i.e., $\mathcal{S}_n = \{ s \in \mathcal{S} : V_n(s) = 0 \}$. Note that $\mathcal{S}_{n+1} \subseteq \mathcal{S}_n$, $\mathcal{S}_0 = \mathcal{S}$ and $\mathcal{S}_1 = \{ s \in \mathcal{S} : r(s) = 0 \}$.
First, we claim that there exists $n^*$, $0 \le n^* \le |\mathcal{S}| - 1$, for which $\mathcal{S}_{n^*} = \mathcal{S}_{n^*+1}$ must hold, where $\mathcal{S}_{n^*}$ is non-empty. Otherwise $\mathcal{S}_{n+1}$ has at least one element fewer than $\mathcal{S}_n$ for $0 \le n \le |\mathcal{S}| - 1$, and therefore $\mathcal{S}_{|\mathcal{S}|} = \emptyset$. If $\mathcal{S}_{|\mathcal{S}|}$ is empty, it means that $\min_{s \in \mathcal{S}} V_{|\mathcal{S}|}(s) > 0$, which contradicts our assumption.

The equality between $\mathcal{S}_{n^*}$ and $\mathcal{S}_{n^*+1}$ means that when the channel starts at some $s \in \mathcal{S}_{n^*+1}$, for any input letter $x$, there exists an action of Player 2 such that the next state $s'$ satisfies $s' \in \mathcal{S}_{n^*}$. Define this strategy of Player 2 as a function $A_2(\cdot, \cdot) : \mathcal{S}_{n^*} \times \mathcal{X} \mapsto \mathcal{S}_{n^*}$; namely, given $s \in \mathcal{S}_{n^*}$ and any input $x \in \mathcal{X}$, the next state $s'$ depends on $s$ and $x$ through $s' = A_2(s, x)$.

We claim that $\mathcal{S}_{n^*+k} = \mathcal{S}_{n^*}$, $\forall k \ge 0$, i.e., once the set $\mathcal{S}_n$ stops shrinking, it stays the same. To prove this, let us fix an arbitrary $s \in \mathcal{S}_{n^*+1}$. Since $\mathcal{S}_{n^*+1} \subseteq \mathcal{S}_1$, we have $s \in \mathcal{S}_1$ and $r(s) = 0$. We have

$$V_{n^*+2}(s) = r(s) + \max_{x \in \mathcal{X}} \min_{s' \in \mathcal{S}(s, x)} V_{n^*+1}(s') = \max_{x \in \mathcal{X}} \min_{s' \in \mathcal{S}(s, x)} V_{n^*+1}(s') \le \max_{x \in \mathcal{X}} V_{n^*+1}(A_2(s, x)) = 0. \tag{9}$$

Therefore $\mathcal{S}_{n^*+2} = \mathcal{S}_{n^*+1}$. Repeating the same argument, we have $\mathcal{S}_{n^*+k} = \mathcal{S}_{n^*}$, $\forall k \ge 0$, which means that $V_n(s) = 0$, $\forall n$, for every $s \in \mathcal{S}_{n^*}$. This completes the proof.

The following theorem states the necessary and sufficient condition for $C_0 = 0$ through the stochastic game.

Theorem 1. The zero-error capacity is positive if and only if the total reward $\min_{s \in \mathcal{S}} V_{|\mathcal{S}|}(s)$ is positive, i.e.,

$$\min_{s \in \mathcal{S}} V_{|\mathcal{S}|}(s) = 0 \iff C_0 = 0. \tag{10}$$

Proof: If $\min_{s \in \mathcal{S}} V_{|\mathcal{S}|}(s) = 0$, then according to Lemma 3, $\lim_{n \to \infty} \min_{s \in \mathcal{S}} V_n(s) = 0$, and by Lemma 2 it follows that $\min_s M(n, s) = 1$ for any $n$; hence $C_0 = 0$. If $\min_{s \in \mathcal{S}} V_{|\mathcal{S}|}(s) > 0$, then according to Lemma 2, $\min_{s \in \mathcal{S}} M(|\mathcal{S}|, s) \ge 2$, and it follows from the definition of the zero-error capacity that $C_0 \ge \frac{1}{|\mathcal{S}|}$.
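The test in Theorem 1 only depends on which transitions have positive probability, so it can be sketched in a few lines. In the sketch below, the dictionary `support[s][x]`, holding the set of pairs `(y, s_next)` with $p(y, s' \mid x, s) > 0$, and the function names are hypothetical conventions chosen for illustration; the two toy channels are assumptions as well.

```python
from itertools import combinations


def is_positive(support, s):
    """Definition 5: some pair of inputs shares no (y, s') pair at state s."""
    return any(not (support[s][x1] & support[s][x2])
               for x1, x2 in combinations(support[s], 2))


def finite_horizon_reward(support, n):
    """Compute V_n(s) from (7), starting from V_0(s) = 0."""
    states = list(support)
    reward = {s: int(is_positive(support, s)) for s in states}
    V = {s: 0 for s in states}
    for _ in range(n):
        # Player 1 maximizes over inputs, Player 2 minimizes over reachable states.
        V = {s: reward[s] + max(min(V[s2] for (_y, s2) in support[s][x])
                                for x in support[s])
             for s in states}
    return V


def has_positive_capacity(support):
    """Theorem 1: C_0 > 0 if and only if min_s V_{|S|}(s) > 0."""
    return min(finite_horizon_reward(support, len(support)).values()) > 0


# Toy channel A: state 0 is useless but always leads to state 1; state 1 is noiseless.
channel_a = {0: {0: {(0, 1), (1, 1)}, 1: {(0, 1), (1, 1)}},
             1: {0: {(0, 0), (0, 1)}, 1: {(1, 0), (1, 1)}}}
# Toy channel B: Nature may keep the channel in the useless state 0 forever.
channel_b = {0: {0: {(0, 0), (0, 1)}, 1: {(0, 0), (0, 1)}},
             1: {0: {(0, 0), (0, 1)}, 1: {(1, 0), (1, 1)}}}

print(has_positive_capacity(channel_a), has_positive_capacity(channel_b))
```

Only $|\mathcal{S}|$ rounds are evaluated, which is exactly the finite-horizon reduction provided by Lemma 3.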
IV. THE DYNAMIC PROGRAMMING PROBLEM ASSOCIATED WITH THE CHANNEL

In this section, we define a dynamic programming problem associated with the channel. The solution to the problem is later used to determine the feedback capacity of the channel.

Denote $G(y, s' \mid s) = \{ x : x \in \mathcal{X},\ p(y, s' \mid x, s) > 0 \}$, i.e., $G(y, s' \mid s)$ is the set of input letters at state $s$ that can drive the channel state to $s'$ while yielding an output letter $y$ with positive probability. Denote $W(\cdot, \cdot)$ as a mapping $\mathbb{Z}^+ \times \mathcal{S} \mapsto \mathbb{R}^+$. Set $W(0, s) = 1$, $\forall s \in \mathcal{S}$, as the initial value. Denote $P_{X|S}(\cdot \mid \cdot)$ as a mapping $\mathcal{X} \times \mathcal{S} \mapsto \mathbb{R}^+$ such that for each $s \in \mathcal{S}$, $P_{X|S}(\cdot \mid s)$ is a probability mass function (pmf) on $\mathcal{X}$, i.e., $\sum_{x \in \mathcal{X}} P_{X|S}(x \mid s) = 1$ and $P_{X|S}(x \mid s) \ge 0$, $\forall x \in \mathcal{X}$.

The term $W(\cdot, \cdot)$ is the solution to the problem defined iteratively by

$$W(n, s) = \max_{P_{X|S}(\cdot \mid s)} \min_{s' \in \mathcal{S}} W(n-1, s') \left[ \max_{y \in \mathcal{Y}} \sum_{x \in G(y, s' \mid s)} P_{X|S}(x \mid s) \right]^{-1}, \quad \forall s \in \mathcal{S},\ n = 1, 2, 3, \ldots \tag{11}$$

We adopt the convention that $\frac{1}{0} = \infty$, and that, if $G(y, s' \mid s) = \emptyset$, $\sum_{x \in G(y, s' \mid s)} P_{X|S}(x \mid s) = 0$. One property that can be verified from the definition and the initial value is that $\forall n \ge 0$, $\forall s \in \mathcal{S}$, $W(n, s) \ge 1$.

The main result of this paper is the following theorem.

Theorem 2. If $\min_{s \in \mathcal{S}} V_{|\mathcal{S}|}(s) > 0$,

$$C_0 = \liminf_{n \to \infty} \frac{1}{n} \min_{s \in \mathcal{S}} \log_2 W(n, s); \tag{12}$$

otherwise $C_0 = 0$.

Before proving the theorem, let us verify that the zero-error capacity of a DMC [1, Theorem 7] is a special case of Theorem 2. Since a DMC is an FSC with only one state, $V_{|\mathcal{S}|}(s) = 0$ means that the state is non-positive, i.e., "all pairs of input letters are adjacent", as stated in [1, Theorem 7]. If $V_{|\mathcal{S}|}(s) > 0$, for a DMC, define $M(n) = M(n, s)$ and $G(y) = G(y, s' \mid s)$.
Then

$$M(n, s) = \max_{P_{X|S}(\cdot \mid s)} M(n-1) \left[ \max_{y \in \mathcal{Y}} \sum_{x \in G(y)} P_{X|S}(x \mid s) \right]^{-1} = M(n-1) \max_{P_X} \left[ \max_{y \in \mathcal{Y}} \sum_{x \in G(y)} P_X(x) \right]^{-1}, \tag{13}$$

and

$$C_0 = \liminf_{n \to \infty} \frac{1}{n} \log_2 M(n) = \max_{P_X} \log_2 \left[ \max_{y \in \mathcal{Y}} \sum_{x \in G(y)} P_X(x) \right]^{-1}, \tag{14}$$

which is exactly the result for the DMC in [1].

The converse and the direct parts of Theorem 2 are proved in Section V and Section VI, respectively.

V. CONVERSE

Theorem 3 (Converse). $M(n, s) \le W(n, s)$, $\forall n = 0, 1, 2, \ldots$ and $\forall s \in \mathcal{S}$.

Proof: We prove the theorem by induction. First, the inequality holds when $n = 0$. Now, suppose $M(k, s) \le W(k, s)$ is true $\forall k = 0, \ldots, n-1$ and $\forall s \in \mathcal{S}$. Fix an arbitrary initial state $s_0$. It is sufficient to show that $M(n, s_0) \le W(n, s_0)$ to prove the converse.

For a fixed zero-error code that has $M(n, s_0)$ messages, we define

$$u(x \mid s_0) = \text{number of messages with first transmitted letter } x \text{ when the initial state is } s_0, \qquad f(x \mid s_0) = \frac{u(x \mid s_0)}{M(n, s_0)}. \tag{15}$$

Note that $f(\cdot \mid s_0)$ is a valid pmf. After the first transmission, suppose the output is some $y \in \mathcal{Y}$ and the channel goes to state $s_1$. We have $\sum_{x \in G(y, s_1 \mid s_0)} u(x \mid s_0)$ messages, each of which with positive probability gives output $y$ and changes the state to $s_1$. To guarantee that the decoder can distinguish between these messages in the following $n - 1$ transmissions, we must have $\sum_{x \in G(y, s_1 \mid s_0)} u(x \mid s_0) \le M(n-1, s_1)$, which yields

$$M(n, s_0) \sum_{x \in G(y, s_1 \mid s_0)} f(x \mid s_0) \le M(n-1, s_1). \tag{16}$$

Since the above inequality must hold $\forall y \in \mathcal{Y}$ and $\forall s_1 \in \mathcal{S}$,

$$M(n, s_0) \le \min_{s_1 \in \mathcal{S}} M(n-1, s_1) \left[ \max_{y \in \mathcal{Y}} \sum_{x \in G(y, s_1 \mid s_0)} f(x \mid s_0) \right]^{-1}. \tag{17}$$

Since we assumed $M(n-1, s) \le W(n-1, s)$ for all $s \in \mathcal{S}$,

$$M(n, s_0) \le \min_{s_1 \in \mathcal{S}} W(n-1, s_1) \left[ \max_{y \in \mathcal{Y}} \sum_{x \in G(y, s_1 \mid s_0)} f(x \mid s_0) \right]^{-1}. \tag{18}$$

Using the iterative formula of $W(n, s_0)$ given in (11) and the fact that $f(\cdot \mid s_0)$ is a valid pmf, we obtain

$$M(n, s_0) \le W(n, s_0). \tag{19}$$

Finally, since $s_0$ is arbitrarily fixed, we have $M(n, s) \le W(n, s)$, $\forall s \in \mathcal{S}$. By induction, the theorem is proved.

From the converse, Theorem 3, and the zero-error capacity Definition 3, we have the following upper bound:

$$C_0 = \lim_{n \to \infty} \min_{s \in \mathcal{S}} \frac{\log_2 M(n, s)}{n} \le \liminf_{n \to \infty} \min_{s \in \mathcal{S}} \frac{\log_2 W(n, s)}{n}. \tag{20}$$

VI. DIRECT THEOREM

Theorem 4. Assume $\min_{s \in \mathcal{S}} V_{|\mathcal{S}|}(s) > 0$; then for any initial state $s \in \mathcal{S}$ there exists an $n_0 > 0$ such that for $n > n_0$, $\lfloor W(n, s) \rfloor$ messages can be transmitted with no more than $n + |\mathcal{S}| \lceil \log_2 L \rceil$ transmissions, where $L$ is a positive integer that does not depend on $n$ and $s$.

Proof: The direct part is proved using deterministic codes [1] rather than random codes. Let the solution and the maximizer in the $k$th iteration ($k = 1, 2, \ldots, n$) of (11) be $W(k, \cdot)$ and $P^{(k)}_{X|S}(\cdot \mid \cdot)$, respectively.

Suppose that at the first transmission the channel state is $s_1$ and the total number of messages transmitted through the channel is $\lfloor W(n, s_1) \rfloor$. We divide the message set into $|\mathcal{X}|$ groups and transmit $x = i$ for the messages in the $i$th group for the first transmission. Let $m_i$ denote the number of messages in the $i$th group. By arguments similar to those in [1, p. 18], we can control the size of each group such that:

$$\text{if } P^{(n)}_{X|S}(i \mid s_1) > 0: \quad \left| \frac{m_i}{\lfloor W(n, s_1) \rfloor} - P^{(n)}_{X|S}(i \mid s_1) \right| \le \frac{1}{\lfloor W(n, s_1) \rfloor}; \qquad \text{if } P^{(n)}_{X|S}(i \mid s_1) = 0: \quad m_i = 0. \tag{21}$$

Both the transmitter and the receiver know how the messages are divided before the transmission. An arbitrary message $m \in \{1, \ldots, \lfloor W(n, s_1) \rfloor\}$ is selected, and letter $i$ is sent if $m$ belongs to the $i$th group. The number of messages about which the receiver is uncertain before the first transmission is $Z_1 = \lfloor W(n, s_1) \rfloor$.
After the first transmission, we obtain an output $y_1$, and the channel state changes to $s_2$. Denote by $Z_2$ the number of messages that are compatible with $(y_1, s_2)$, i.e., when transmitting those messages, $(y_1, s_2)$ is obtained with positive probability. $Z_2$ can be upper bounded in the following way:

$$\begin{aligned} Z_2 &= \sum_{x \in G(y_1, s_2 \mid s_1)} m_x \\ &= \lfloor W(n, s_1) \rfloor \sum_{x \in G(y_1, s_2 \mid s_1)} \frac{m_x}{\lfloor W(n, s_1) \rfloor} \\ &\le \lfloor W(n, s_1) \rfloor \sum_{x \in G(y_1, s_2 \mid s_1)} \left( P^{(n)}_{X|S}(x \mid s_1) + \frac{1}{\lfloor W(n, s_1) \rfloor} \right) \\ &\le \lfloor W(n, s_1) \rfloor \max_{y \in \mathcal{Y}} \sum_{x \in G(y, s_2 \mid s_1)} P^{(n)}_{X|S}(x \mid s_1) + |\mathcal{X}|. \end{aligned} \tag{22}$$

For convenience, let us define

$$J^{(k)}(s, s') = \max_{y \in \mathcal{Y}} \sum_{x \in G(y, s' \mid s)} P^{(k)}_{X|S}(x \mid s). \tag{23}$$

Eq. (11) and (22) can be written, respectively, in terms of $J^{(k)}(s, s')$ as:

$$W(k, s) \le W(k-1, s') \left[ J^{(k)}(s, s') \right]^{-1}, \quad \forall k \in \mathbb{Z}^+,\ s \in \mathcal{S},\ s' \in \mathcal{S}, \tag{24}$$

$$Z_2 \le \lfloor W(n, s_1) \rfloor J^{(n)}(s_1, s_2) + |\mathcal{X}| \le W(n-1, s_2) + |\mathcal{X}|, \tag{25}$$

where the last inequality is due to (24).

Since both the transmitter and the receiver know $s_1$ and $s_2$, and the transmitter knows the output $y_1$ through feedback, both of them know which messages are compatible with $(y_1, s_2)$. In the second transmission, the transmitter can further divide the remaining $Z_2$ messages into groups according to $P^{(n-1)}_{X|S}(\cdot \mid s_2)$, similar to (21). The way the messages are divided is known to the receiver. Suppose the output letter is $y_2$ and the state goes to $s_3$. Following the argument in the previous iteration, we have

$$\begin{aligned} Z_3 &\le Z_2 J^{(n-1)}(s_2, s_3) + |\mathcal{X}| \\ &\overset{(a)}{\le} W(n-1, s_2) J^{(n-1)}(s_2, s_3) + |\mathcal{X}| \left( 1 + J^{(n-1)}(s_2, s_3) \right) \\ &\overset{(b)}{\le} W(n-2, s_3) + |\mathcal{X}| \left( 1 + J^{(n-1)}(s_2, s_3) \right), \end{aligned} \tag{26}$$

where steps (a) and (b) follow from (25) and (24), respectively.

As the transmission proceeds, the channel state evolves as $s_1, \ldots, s_n, s_{n+1}$, and the output sequence is $y_1, \ldots, y_n$.
At the $k$th transmission, the transmitter divides the remaining uncertain messages according to $P^{(n+1-k)}_{X|S}(\cdot \mid s_k)$. After the $n$th transmission, the number of messages remaining can be upper bounded as:

$$Z_{n+1} \le Z_n J^{(1)}(s_n, s_{n+1}) + |\mathcal{X}| \le 1 + |\mathcal{X}| \left( 1 + J^{(1)}(s_n, s_{n+1}) + J^{(1)}(s_n, s_{n+1}) J^{(2)}(s_{n-1}, s_n) + \cdots + \prod_{i=1}^{n-1} J^{(i)}(s_{n+1-i}, s_{n+2-i}) \right). \tag{27}$$

Using inequality (24) iteratively, we obtain

$$W(k, s_{n+1-k}) \le \left[ \prod_{i=1}^{k} J^{(i)}(s_{n+1-i}, s_{n+2-i}) \right]^{-1}; \tag{28}$$

hence we can further upper bound $Z_{n+1}$ as

$$Z_{n+1} \le 1 + |\mathcal{X}| \left( 1 + \frac{1}{W(1, s_n)} + \frac{1}{W(2, s_{n-1})} + \cdots + \frac{1}{W(n-1, s_2)} \right). \tag{29}$$

Recall the assumption of the theorem, $\min_{s \in \mathcal{S}} V_{|\mathcal{S}|}(s) > 0$, which implies, via Theorem 1, that $C_0 > 0$; from the definition of $C_0$ in (4), we then obtain that

$$\liminf_{n \to \infty} \min_{s \in \mathcal{S}} \frac{1}{n} \log_2 M(n, s) > 0. \tag{30}$$

Hence, there exist $\epsilon > 0$ and an integer $n_0$ such that $\forall s \in \mathcal{S}$, $\forall n > n_0$, $W(n, s) \ge M(n, s) \ge 2^{\epsilon n}$ (the first inequality is due to the converse proved in the previous section, and the second inequality is due to (30)). Recall that $W(n, s) \ge M(n, s) \ge 1$; we can thus further upper bound $Z_{n+1}$ as

$$\begin{aligned} Z_{n+1} &\le 1 + |\mathcal{X}| \left( 1 + \sum_{k=1}^{n_0} \frac{1}{W(k, s_{n+1-k})} + \sum_{k=n_0+1}^{\infty} 2^{-\epsilon k} \right) \\ &\le 1 + |\mathcal{X}| \left( n_0 + 1 + \sum_{k=n_0+1}^{\infty} 2^{-\epsilon k} \right) \\ &= 1 + |\mathcal{X}| \left( n_0 + 1 + \frac{2^{-\epsilon (n_0+1)}}{1 - 2^{-\epsilon}} \right) \triangleq L. \end{aligned} \tag{31}$$

Note that $L$ is finite and is independent of $n$ and $s_1$. This means that after $n$ transmissions, the number of messages about which the receiver is uncertain is not more than $L$. The assumption that $\min_{s \in \mathcal{S}} V_{|\mathcal{S}|}(s) > 0$ implies that we can drive the channel to a positive state with probability 1 in fewer than $|\mathcal{S}|$ transmissions. In a positive state, we can transmit 1 bit of information with zero error; hence we can now conclude that there exists a zero-error code such that $\lfloor W(n, s) \rfloor$ messages can be transmitted with no more than $n + |\mathcal{S}| \lceil \log_2 L \rceil$ transmissions.
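The grouping requirement (21) used at each step of the proof can be met in several ways. The sketch below uses a largest-remainder rounding rule, which is an assumed construction chosen for illustration and not necessarily the one in [1]; the function name `split_messages` is hypothetical.

```python
from fractions import Fraction
from math import floor


def split_messages(num_messages, pmf):
    """Split num_messages into len(pmf) groups so that each group size m_i
    satisfies |m_i / N - P(i)| <= 1 / N, with m_i = 0 whenever P(i) = 0."""
    scaled = [num_messages * Fraction(p) for p in pmf]
    sizes = [floor(v) for v in scaled]
    leftover = num_messages - sum(sizes)
    # Hand the leftover messages to the groups with the largest fractional parts.
    by_fraction = sorted(range(len(pmf)),
                         key=lambda i: scaled[i] - sizes[i], reverse=True)
    for i in by_fraction[:leftover]:
        sizes[i] += 1
    return sizes


sizes_example = split_messages(10, [Fraction(1, 3), Fraction(2, 3), 0])
print(sizes_example)
```

Each group size differs from $N \cdot P(i)$ by less than one message, and letters with zero probability receive no leftover because their fractional part is zero, so both conditions of (21) hold under this rule.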
Based on the direct theorem, it is straightforward to derive a lower bound on the zero-error capacity:

$$C_0 \ge \liminf_{n \to \infty} \min_{s \in \mathcal{S}} \frac{\log_2 \lfloor W(n, s) \rfloor}{n + |\mathcal{S}| \lceil \log_2 L \rceil} = \liminf_{n \to \infty} \frac{1}{n} \min_{s \in \mathcal{S}} \log_2 W(n, s), \tag{32}$$

given the condition $\min_{s \in \mathcal{S}} V_{|\mathcal{S}|}(s) > 0$. Combining inequalities (20) and (32), we have proved (12) and thus Theorem 2.

VII. SOLVING THE DYNAMIC PROGRAMMING PROBLEM

Throughout this section, we assume that $\min_{s \in \mathcal{S}} V_{|\mathcal{S}|}(s) > 0$, i.e., we focus on channels with positive zero-error capacity.

Let us first introduce a few definitions so that we can use the standard language of dynamic programming to rewrite Eq. (11) in the form of Eq. (6). Basically, we take $\log_2$ on both sides of Eq. (11). Define the value function as $J_n(s) = \log_2 W(n, s)$, the action as $a = P_{X|S}(\cdot \mid s)$, and the reward as

$$r(s', a, s) = \log_2 \left[ \max_{y \in \mathcal{Y}} \sum_{x \in G(y, s' \mid s)} P_{X|S}(x \mid s) \right]^{-1}. \tag{33}$$

The DP equation in (11) then becomes simply

$$J_n(s) = \max_{a \in A} \min_{s' \in \mathcal{S}} \left\{ r(s', a, s) + J_{n-1}(s') \right\}, \tag{34}$$

where $A$ is the action space, $A = \{ f(x) : \sum_x f(x) = 1,\ f(x) \ge 0 \}$.

Theorem 2 states that

$$C_0 = \liminf_{n \to \infty} \min_{s \in \mathcal{S}} \frac{J_n(s)}{n}. \tag{35}$$

Define an operator $T$ as follows:

$$(T \circ J)(s) = \max_{a \in A(s)} \min_{s'} \left\{ r(s', a, s) + J(s') \right\}. \tag{36}$$

The DP equation can be rewritten in a compact form as

$$J_n(s) = (T \circ J_{n-1})(s), \tag{37}$$

with initial value $J_0(s) = 0$. We also denote by $T^n$ the operator $T$ applied $n$ times.

Lemma 4. Let $W$ and $V$ denote two functions $\mathcal{S} \mapsto \mathbb{R}^+$. The following properties of $T$ hold:
(a) If $W(s) \ge V(s)$, $\forall s \in \mathcal{S}$, then $T \circ W(s) \ge T \circ V(s)$, $\forall s \in \mathcal{S}$.
(b) If $W(s) = V(s) + d$, $\forall s \in \mathcal{S}$, where $d$ is a constant, then $T \circ W(s) = T \circ V(s) + d$, $\forall s \in \mathcal{S}$.

Proof: Both parts of the lemma follow directly from the definition of $T$.
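For a binary input alphabet, one iteration of (11), equivalently of (37) after taking $\log_2$, can be carried out exactly, because the inner problem reduces to minimizing a maximum of linear functions of $q = P_{X|S}(0 \mid s)$. The sketch below relies on that restriction; the representation `support[s][x]` (the set of pairs `(y, s_next)` with positive probability), the function names, and the two toy channels, whose connectivity is modeled on the two-state examples of Section VIII, are assumptions made for illustration.

```python
from fractions import Fraction
from itertools import combinations


def dp_step(support, W_prev):
    """One iteration of (11) for input alphabet {0, 1}: W(n-1, .) -> W(n, .)."""
    W_next = {}
    for s, by_input in support.items():
        # Each reachable (y, s') contributes a line a*q + b, q = P(0 | s), equal to
        # sum_{x in G(y, s'|s)} P(x | s) / W(n-1, s').
        lines = set()
        for (y, s2) in by_input[0] | by_input[1]:
            w = Fraction(1) / W_prev[s2]
            in0, in1 = (y, s2) in by_input[0], (y, s2) in by_input[1]
            lines.add((w * (in0 - in1), w * in1))
        # A max of lines is convex, so its minimum over [0, 1] is attained at an
        # endpoint or where two lines cross.
        candidates = {Fraction(0), Fraction(1)}
        for (a1, b1), (a2, b2) in combinations(lines, 2):
            if a1 != a2:
                q = (b2 - b1) / (a1 - a2)
                if 0 <= q <= 1:
                    candidates.add(q)
        worst = min(max(a * q + b for a, b in lines) for q in candidates)
        W_next[s] = 1 / worst
    return W_next


def iterate(support, n):
    """Return W(n, .) starting from W(0, s) = 1."""
    W = {s: Fraction(1) for s in support}
    for _ in range(n):
        W = dp_step(support, W)
    return W


# Toy channel A: state 0 useless and always followed by state 1; state 1 noiseless.
channel_a = {0: {0: {(0, 1), (1, 1)}, 1: {(0, 1), (1, 1)}},
             1: {0: {(0, 0), (0, 1)}, 1: {(1, 0), (1, 1)}}}
# Toy channel B: Z-channel in state 0, noiseless in state 1, next state = output.
channel_b = {0: {0: {(0, 0), (1, 1)}, 1: {(1, 1)}},
             1: {0: {(0, 0)}, 1: {(1, 1)}}}

print(iterate(channel_a, 5), iterate(channel_b, 4))
```

The value function is then $J_n(s) = \log_2 W(n, s)$. Exact rational arithmetic is used so that the powers of two and the Fibonacci-type growth of these toy channels are visible without rounding error; for larger input alphabets the inner maximization would need a linear program instead of the one-dimensional search used here.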
Lemma 5. The following properties of $J_n$ hold:
(a) The sequence $\{ \min_s J_n(s) \}$ is superadditive, i.e., $\min_s J_{n+m}(s) \ge \min_s J_n(s) + \min_s J_m(s)$.
(b) The sequence $\{ \max_s J_n(s) \}$ is subadditive, i.e., $\max_s J_{n+m}(s) \le \max_s J_n(s) + \max_s J_m(s)$.

Proof: We prove the first property here. The proof of the second one is similar.

$$\begin{aligned} \min_s J_{n+m}(s) &= \min_s (T^n \circ J_m)(s) \\ &\overset{(a)}{\ge} \min_s \Big( T^n \circ \min_{s'} J_m(s') \Big)(s) \\ &= \min_s \Big( T^n \circ \big[ J_0 + \min_{s'} J_m(s') \big] \Big)(s) \\ &\overset{(b)}{=} \min_s (T^n \circ J_0)(s) + \min_{s'} J_m(s'), \end{aligned} \tag{38}$$

where steps (a) and (b) follow from parts (a) and (b) of Lemma 4, respectively.

Theorem 5. The $\liminf$ in Theorem 2 can be replaced by $\lim$, i.e.,

$$C_0 = \lim_{n \to \infty} \min_s \frac{J_n(s)}{n}, \tag{39}$$

and for all $n \in \mathbb{Z}^+$ the following bounds hold:

$$\min_s \frac{J_n(s)}{n} \le C_0 \le \max_s \frac{J_n(s)}{n}. \tag{40}$$

Proof: Following Lemma 5 and Fekete's lemma [8, Ch. 2.6], we obtain the following two limits:

$$\lim_{n \to \infty} \min_s \frac{J_n(s)}{n} = \sup_n \min_s \frac{J_n(s)}{n}, \qquad \lim_{n \to \infty} \max_s \frac{J_n(s)}{n} = \inf_n \max_s \frac{J_n(s)}{n}. \tag{41}$$

Finally, from Theorem 2 we obtain

$$\max_s \frac{J_k(s)}{k} \ge \lim_{n \to \infty} \max_s \frac{J_n(s)}{n} \ge C_0 = \lim_{n \to \infty} \min_s \frac{J_n(s)}{n} \ge \min_s \frac{J_k(s)}{k}, \tag{42}$$

for all $k \in \mathbb{Z}^+$.

Eq. (40) provides a numerical way to approximate $C_0$. We now turn to the case in which an analytical solution in the limit can be obtained via Bellman equations.

Theorem 6 (Bellman equation). If there exist a positive bounded function $g : \mathcal{S} \mapsto \mathbb{R}^+$ and a constant $\rho$ that satisfy

$$g(s) + \rho = (T \circ g)(s), \tag{43}$$

then $\lim_{n \to \infty} \frac{1}{n} J_n(s) = \rho$.

Proof: Assume that there exist a positive bounded function $g : \mathcal{S} \mapsto \mathbb{R}^+$ and a constant $\rho$ that satisfy $g(s) + \rho = (T \circ g)(s)$. Define $g_0(s) = g(s)$ and $g_n(s) = T^n g_0(s)$. Since $J_0(s) = 0 \le g_0(s)$, then according to part (a) of Lemma 4, $J_n(s) \le g_n(s)$. Let $d = \max_s g(s)$. Then $J_0 + d \ge g_0$.
Hence, according to part (a) of Lemma 4, $g_n(s) \le J_n(s) + d$. Therefore we have
$$g_n(s) - d \le J_n(s) \le g_n(s). \tag{44}$$
Finally, $g(s) + \rho = (T \circ g)(s)$ implies that $\lim_{n\to\infty} \frac{g_n(s)}{n} = \rho$; hence $\lim_{n\to\infty} \frac{J_n(s)}{n} = \rho$.

Remark: $\rho$ does not depend on the initial state, which hints that for some decomposable Markov chains it is impossible to find a $g : \mathcal{S} \mapsto \mathbb{R}^+$ and a constant $\rho$ that satisfy the Bellman equation.

VIII. EXAMPLES

Here we provide three examples and solve them analytically. For the first two examples, we also find the regular feedback capacity using [3].

Example 1 We consider the very simple example illustrated in Fig. 2. The channel has two states. In state 0, the channel is a binary symmetric channel (BSC) with positive crossover probability. In state 1, the channel is a BSC with crossover probability 0. Roughly speaking, in state 0 the channel is noisy, and in state 1 the channel is noiseless. Suppose the channel state evolves as a Markov process and is independent of the input and output. If the current state is 0, the next channel state is 1 with certainty. If the state is 1, the channel goes to state 0 with probability $p > 0$ or stays in state 1 with probability $1 - p$. Thus, the channel stays in the noiseless state for a geometrically distributed length of time and, after visiting the noisy state, returns to the noiseless state immediately.

Fig. 2. Channel topology of Example 1.

Finding $C_0$ by calculating $W(n,s)$: For this channel, $G(y,0|0) = \emptyset$, $G(y,1|0) = \{0,1\}$, and $G(y,0|1) = G(y,1|1) = \{y\}$. Using Eq. (11), the solution to the DP problem at the first iteration is
$$W(1,0) = \max_{P_{X|S}(\cdot|0)} \min\{1, 1\} = 1, \qquad W(1,1) = \max_{P_{X|S}(\cdot|1)} \Big[\max\big\{P_{X|S}(0|1),\, P_{X|S}(1|1)\big\}\Big]^{-1} = 2. \tag{45}$$
For the second iteration, we have
$$W(2,0) = \max_{P_{X|S}(\cdot|0)} \big[W(1,1) \min\{1, 1\}\big] = 2, \qquad W(2,1) = \max_{P_{X|S}(\cdot|1)} W(1,0) \Big[\max\big\{P_{X|S}(0|1),\, P_{X|S}(1|1)\big\}\Big]^{-1} = 2. \tag{46}$$
By induction and some simple algebra, we obtain the solution to the DP problem at the $n$th iteration:
$$W(n,0) = 2^{\lfloor n/2 \rfloor}, \qquad W(n,1) = 2^{\lceil n/2 \rceil}. \tag{47}$$
Thus
$$C_0 = 1/2. \tag{48}$$
Alternatively, we can solve the example by finding a solution to the Bellman equation (43).

Finding $C_0$ via the Bellman equation: The Bellman equation for the channel is simply
$$g(0) = g(1) - \rho, \qquad g(1) = 1 + g(0) - \rho. \tag{49}$$
Using simple algebra we obtain $\rho = \frac{1}{2}$, $g(0) = v$, $g(1) = v + \frac{1}{2}$, for an arbitrary constant $v$. We note that we can achieve the zero-error capacity with feedback and state information simply by transmitting 1 bit of information whenever the channel state is 1.

Finding the regular feedback capacity $C_f$: To calculate the regular capacity we use the result of Chen and Berger [3, Theorem 6]. The theorem states that if the channel is strongly irreducible and strongly aperiodic, then the capacity is
$$C = \max_{P_{X|S}} \sum_{k=0}^{|\mathcal{S}|-1} \pi_k\, I(X; Y | S = k), \tag{50}$$
where $\pi_k$ is the equilibrium probability of state $k$ induced by the input distribution $P_{X|S}$. The channel is strongly irreducible and strongly aperiodic if the matrix $T$ defined as
$$T(k,l) = \min_x \big\{\Pr(S_i = l \mid X_i = x, S_{i-1} = k)\big\} \tag{51}$$
is irreducible and aperiodic for any $x \in \mathcal{X}$. Since the transition probability of the state does not depend on the input, and since the state transition matrix is irreducible and aperiodic for any $p < 1$, the capacity is given by (50); hence
$$C(p) = \max_{P_{X|S}} \big[\pi_0\, I(X;Y|S=0) + \pi_1\, I(X;Y|S=1)\big] = \pi_1 = \frac{1}{2-p}. \tag{52}$$

Fig. 3. Feedback capacity and zero-error feedback capacity of the channel in Example 1 for different values of $p = \Pr\{S = 1 \mid S = 1\}$.

Example 2 Let us consider another channel with two states, as illustrated in Fig. 4. In state 0, the channel is a Z-channel. In state 1, the channel is a BSC with crossover probability 0. The next channel state is determined by the output: if the output is 0, the channel goes to state 0; if the output is 1, the channel goes to state 1. Hence the regular feedback of the output includes the state information. It is tempting to make full use of state 1, i.e., to transmit 1 bit of information, but as a consequence the channel goes to the undesirable state 0 half the time, and the rate would be only $\frac{1}{2}$.

Fig. 4. Channel topology of Example 2.

Finding $C_0$ by calculating $W(n,s)$: For this channel, $G(0,0|0) = \{0\}$, $G(1,1|0) = \{0,1\}$, $G(0,0|1) = \{0\}$, $G(1,1|1) = \{1\}$, and all the other combinations yield empty sets. For initial state 0, we have
$$W(n,0) = \max_{P_{X|S}(\cdot|0)} \min\left\{\frac{W(n-1,0)}{P_{X|S}(0|0)},\; W(n-1,1)\right\} = W(n-1,1). \tag{53}$$
The maximum is achieved by setting $P_{X|S}(0|0) = 0$. For initial state 1, we have
$$\begin{aligned}
W(n,1) &= \max_{P_{X|S}(\cdot|1)} \min\left\{\frac{W(n-1,0)}{P_{X|S}(0|1)},\; \frac{W(n-1,1)}{P_{X|S}(1|1)}\right\} \\
&= \max_{P_{X|S}(\cdot|1)} \min\left\{\frac{W(n-2,1)}{P_{X|S}(0|1)},\; \frac{W(n-1,1)}{P_{X|S}(1|1)}\right\} \\
&= W(n-2,1) + W(n-1,1).
\end{aligned} \tag{54}$$
The maximum is achieved by setting $P_{X|S}(0|1) = \frac{W(n-2,1)}{W(n-2,1) + W(n-1,1)}$. Recall that $W(0,1) = 1$, and notice that $W(1,1) = 2$, which can be computed directly. Thus, both $W(n,1)$ and $W(n,0)$ are Fibonacci sequences (with proper shifts). Therefore, $\lim_{n\to\infty} \frac{\log_2 W(n,1)}{n} = \lim_{n\to\infty} \frac{\log_2 W(n,0)}{n} = \log_2 \frac{1+\sqrt{5}}{2}$. From Theorem 2, we have
$$C_0 = \log_2 \frac{1+\sqrt{5}}{2} \approx 0.6942, \tag{55}$$
which is the logarithm of the golden ratio. The first few values of $W(n,s)$ are listed in Table II.

TABLE II. $W(n,s)$, which equals the number of messages that can be transmitted error-free through the channel in Example 2 in $n$ steps starting at state $s$.

| s \ n | 1 | 2 | 3 | 4 | 5 |
|-------|---|---|---|---|----|
| 0     | 1 | 2 | 3 | 5 | 8  |
| 1     | 2 | 3 | 5 | 8 | 13 |

Finding $C_0$ via a Bellman equation: Since the channel input is binary, the actions are equivalent to two numbers: $p_0 = P_{X|S}(0|0)$ and $p_1 = P_{X|S}(0|1)$. The Bellman equation becomes
$$\begin{aligned}
J(0) + \rho &= \max_{0 \le p_0 \le 1} \min\left\{\log_2 \frac{1}{p_0} + J(0),\; J(1)\right\}, \\
J(1) + \rho &= \max_{0 \le p_1 \le 1} \min\left\{\log_2 \frac{1}{p_1} + J(0),\; \log_2 \frac{1}{1-p_1} + J(1)\right\},
\end{aligned} \tag{56}$$
which implies that $p_0 = 0$ and
$$\begin{aligned}
J(0) &= J(1) - \rho, \\
J(1) &= J(0) + \log_2 \frac{1}{p_1} - \rho, \\
\log_2 \frac{1}{p_1} + J(0) &= \log_2 \frac{1}{1-p_1} + J(1),
\end{aligned} \tag{57}$$
the solution of which is $\rho = \log_2 \frac{\sqrt{5}+1}{2}$, $p_1 = \frac{3-\sqrt{5}}{2}$.

It is of interest to observe that, starting at state 1, any binary sequence of length $n$ with no consecutive 0's can be transmitted with zero error in $n$ transmissions. The number of such sequences as a function of $n$ is also a Fibonacci sequence. Since we can always transmit a 1 to drive the channel from state 0 to state 1, this is actually one way to achieve the zero-error capacity.

Finding the regular feedback capacity $C_f$: This channel is not strongly irreducible, since the transition matrix $P_{S_i | S_{i-1}, X=0}$ is not irreducible; hence, the stationarity of the optimal policy used by Chen and Berger [3] requires additional justification. By invoking the theory of infinite-horizon average-reward dynamic programming, we show that a stationary policy achieves the optimum of the DP and hence Eq. (50) holds.
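As a numerical cross-check of the zero-error analysis of Example 2, the following sketch iterates the recursions (53) and (54) and compares the resulting growth rate with the golden-ratio value in (55). It is only an illustration: the function name `example2_message_counts`, the horizon of 200 steps, and the initial value $W(0,0) = 1$ (chosen in line with $W(0,1) = 1$) are assumptions that do not appear in the original analysis. The recursion for state 1 is written in the equivalent form $W(n,1) = W(n-1,0) + W(n-1,1)$, which follows from (54) before (53) is substituted.

```python
import math


def example2_message_counts(n_max):
    """Iterate W(n,0) = W(n-1,1) and W(n,1) = W(n-1,0) + W(n-1,1).

    Assumption: W(0,0) = W(0,1) = 1 (a single message needs zero channel uses).
    Returns a dict mapping n -> (W(n,0), W(n,1)) for n = 0..n_max.
    """
    w0, w1 = 1, 1
    table = {0: (w0, w1)}
    for n in range(1, n_max + 1):
        # Tuple assignment uses the old values of w0 and w1 on the right-hand side.
        w0, w1 = w1, w0 + w1
        table[n] = (w0, w1)
    return table


table = example2_message_counts(200)

# Growth rate of W(n,1) versus the closed-form value in (55).
golden_rate = math.log2((1 + math.sqrt(5)) / 2)
rate_estimate = math.log2(table[200][1]) / 200

# Check the third line of (57) with J(1) - J(0) = rho:
# log2((1 - p1) / p1) should equal rho for p1 = (3 - sqrt(5)) / 2.
p1 = (3 - math.sqrt(5)) / 2
bellman_gap = abs(math.log2((1 - p1) / p1) - golden_rate)

print([table[n] for n in range(1, 6)])
print(rate_estimate, golden_rate, bellman_gap)
```

The first printed list can be compared column by column with Table II; the finite-horizon rate approaches the limit only at speed $O(1/n)$, so a small gap at $n = 200$ is expected.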
The feedback capacity of the channel in Example 2 can be formulated according to [3] and [13] as
$$C = \lim_{N\to\infty} \frac{1}{N} \max_{\{P_{X_n|S_n}\}_{n=1}^{N}} \sum_{n=1}^{N} I(X_n; Y_n | S_n), \tag{58}$$
and this is equivalent to an infinite-horizon average-reward DP with finite state space and compact actions where:
• the state of the DP is the state of the channel, i.e., $S_n$,
• the actions of the DP are the input distributions $p_0 \in [0,1]$ and $p_1 \in [0,1]$, where $p_0 = P_{X|S}(0|0)$ and $p_1 = P_{X|S}(0|1)$,
• the reward at time $n$ given that the state of the DP is 0 or 1 is $I(X_n;Y_n|S_n=0) = H_b(p_0 p) - p_0 H_b(p)$ or $I(X_n;Y_n|S_n=1) = H_b(p_1)$, respectively,
• the transition probabilities given the actions $p_0$ and $p_1$ are $P_{S_n|S_{n-1}}(0|1) = p_1$ and $P_{S_n|S_{n-1}}(0|0) = p_0 p$.

Next, we claim that it is enough to consider the action $p_1 \in [\epsilon, 1]$ for some $\epsilon > 0$. First we note that for $0 < \epsilon \le \frac{1}{6}$,
$$H_b(2\epsilon) > H_b(\epsilon) + \epsilon, \tag{59}$$
since $\frac{dH_b(x)}{dx} > 1$ for $x < \frac{1}{3}$. Next we show that it is never optimal to have an action $p_1 \le \frac{1}{6}$.
Let $J_n(0)$ and $J_n(1)$ be the maximum rewards-to-go in $n$ steps starting at state 0 and state 1, respectively, and assume that the optimal action in state 1 is $p_1^* < \frac{1}{6}$. Then
$$\begin{aligned}
J_n(0) &\overset{(a)}{=} H_b(p_1^*) + (1 - p_1^*) J_{n-1}(0) + p_1^* J_{n-1}(1) \\
&\overset{(b)}{=} H_b(p_1^*) + (1 - 2p_1^*) J_{n-1}(0) + 2p_1^* J_{n-1}(1) + p_1^* \big(J_{n-1}(0) - J_{n-1}(1)\big) \\
&\overset{(c)}{\le} H_b(p_1^*) + (1 - 2p_1^*) J_{n-1}(0) + 2p_1^* J_{n-1}(1) + p_1^* \\
&\overset{(d)}{<} H_b(2p_1^*) + (1 - 2p_1^*) J_{n-1}(0) + 2p_1^* J_{n-1}(1),
\end{aligned} \tag{60}$$
where step (a) follows from the dynamic programming formulation; step (b) follows from adding and subtracting $p_1^* (J_{n-1}(0) - J_{n-1}(1))$; and step (c) follows from the fact that $J_{n-1}(0) - J_{n-1}(1) \le 1$, which holds because we can choose $p_0 = 0$, so that in one epoch we can cause the state to change from 0 to 1 with probability 1, and the reward in one epoch is at most 1. Finally, step (d) follows from (59). Since the right-hand side of step (d) corresponds to the action $2p_1^*$, an optimal policy would never include an action $p_1^* < \frac{1}{6}$.

Now we invoke [9, Theorem 4.5], which states that if the reward is a continuous function of the actions, and for any action the corresponding state chain is irreducible (unichain), then the optimal policy is stationary. Since the reward function is continuous in $p_0, p_1$ and since for any $p_0 \in [0,1]$, $p_1 \in [\frac{1}{6}, 1]$ the state process is irreducible, we conclude that the optimal policy $(p_0^*, p_1^*)$ is stationary (time-invariant), and therefore the capacity is given by (50).

Fig. 5. Capacity and zero-error capacity of the channel in Example 2 for different values of $p = \Pr\{Y = 0 \mid X = 0, S = 0\}$.
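Under a stationary policy, the capacity expression (50) for Example 2 reduces to a two-parameter maximization over $(p_0, p_1)$, using the rewards and transition probabilities listed in the DP formulation above. The sketch below evaluates that maximization by a plain grid search. The function names, the grid resolution, and the use of a grid search instead of a proper optimizer are illustrative assumptions; this is not claimed to be the procedure used to produce Fig. 5.

```python
import math


def binary_entropy(x):
    """Binary entropy H_b(x) in bits, with H_b(0) = H_b(1) = 0."""
    if x <= 0.0 or x >= 1.0:
        return 0.0
    return -x * math.log2(x) - (1 - x) * math.log2(1 - x)


def stationary_rate(p, p0, p1):
    """Average reward of the stationary policy (p0, p1).

    p  = Pr{Y=0 | X=0, S=0} (Z-channel parameter in state 0)
    p0 = P(X=0 | S=0), p1 = P(X=0 | S=1)
    """
    denom = 1 + p1 - p0 * p
    if denom <= 0.0:
        # Only when p1 = 0 and p0 * p = 1: both rewards are zero there.
        return 0.0
    pi0 = p1 / denom              # stationary probability of state 0
    pi1 = 1 - pi0                 # stationary probability of state 1
    reward0 = binary_entropy(p0 * p) - p0 * binary_entropy(p)
    reward1 = binary_entropy(p1)
    return pi0 * reward0 + pi1 * reward1


def feedback_capacity_grid(p, steps=200):
    """Grid-search approximation (from below) of the maximum over (p0, p1)."""
    grid = [i / steps for i in range(steps + 1)]
    return max(stationary_rate(p, p0, p1) for p0 in grid for p1 in grid)


for p in (0.0, 0.25, 0.5, 0.75, 1.0):
    print(p, feedback_capacity_grid(p))
```

Two endpoints are easy to check by hand. At $p = 0$ state 0 carries no reward and the expression reduces to $\max_{p_1} H_b(p_1)/(1+p_1) = \log_2\frac{1+\sqrt{5}}{2}$, which coincides with the zero-error value in (55); at $p = 1$ both states are noiseless and the value is 1 bit.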
Now, using (50), we obtain that the regular feedback capacity as a function of $p$ is
$$C_f(p) = \max_{p_0, p_1} \Big(\pi_0 \big(H_b(p_0 p) - p_0 H_b(p)\big) + \pi_1 H_b(p_1)\Big), \tag{61}$$
where $(\pi_0, \pi_1)$ is the equilibrium distribution given by $\pi_0 = \frac{p_1}{1 + p_1 - p_0 p}$ and $\pi_1 = 1 - \pi_0$. Fig. 5 shows a numerical evaluation of (61) as a function of $p$.

Example 3 We consider here an example with three states, a ternary input, and a ternary output. The topology of the channel is depicted in Fig. 6. The channel conditional distribution $P(s', y | x, s)$ has the form $P(s', y | x, s) = P(s' | x, s) P(y | x, s)$, where state $s = 0$ is a perfect state, $s = 1$ is a good state, and $s = 2$ is a bad state; in states 0, 1, and 2 the channel can transmit $\log_2 3$, 1, and 0 bits, respectively, with zero error probability.

We first evaluate the zero-error capacity numerically using the dynamic programming value iteration, i.e., Eq. (40), and then, using the numerical evaluation, we conjecture an analytical solution, which we verify via the Bellman equation.

Fig. 6. Channel topology of Example 3.

Evaluating $C_0$ using a value iteration algorithm: We calculated 50 iterations of the DP value iteration formula given in (34). The action space of Player 1 is the stochastic matrix $P_{X|S}$, and we quantize each element of the stochastic matrix with a $10^{-4}$ resolution. Fig. 7 depicts the values of $\frac{\max_s J_n(s)}{n}$ and $\frac{\min_s J_n(s)}{n}$, which according to Theorem 5 are upper and lower bounds, respectively, on the zero-error capacity. After 50 iterations, we obtain that the first player's action $P_{X|S}$ is given by
$$P_{X|S} = \begin{pmatrix} 0.4656 & 0.3177 & 0.2167 \\ 0 & 0.3177 & 0.6823 \\ 0 & 0 & 1 \end{pmatrix}, \tag{62}$$
and the reward $J_{50}(s) - J_{49}(s)$, which is an estimate of the zero-error capacity, is $1.10283$ for all $s \in \{0, 1, 2\}$.
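The entries of (62) and the estimate $1.10283$ can be checked against the relations (64) obtained from the Bellman analysis of this example, in which $a_1 = P_{X|S}(1|0)$ satisfies $a_1 = (1-a_1)^3$ and $\rho = \log_2\frac{1-a_1}{a_1}$. The sketch below solves the cubic by bisection. The function name `solve_a1`, the tolerance, the use of base-2 logarithms, and the relation $a_0 = 2^{-\rho}$ (which assumes that the first term inside the minimum of the first line of the Bellman equation (63) is tight) are assumptions made for illustration.

```python
import math


def solve_a1(tol=1e-14):
    """Bisection for the root of f(a) = a - (1 - a)**3 on [0, 1].

    f is strictly increasing with f(0) = -1 < 0 and f(1) = 1 > 0,
    so the root is unique.
    """
    lo, hi = 0.0, 1.0
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if mid - (1 - mid) ** 3 < 0:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


a1 = solve_a1()
rho = math.log2((1 - a1) / a1)      # candidate zero-error capacity, in bits

# Assumption: the first term of the min in the first Bellman equation is tight,
# i.e. rho + J(0) = -log2(a0) + J(0), hence a0 = 2**(-rho).
a0 = 2.0 ** (-rho)

first_row = (a0, a1, 1 - a0 - a1)   # compare with the first row of (62)
second_row = (0.0, a1, 1 - a1)      # compare with the second row of (62)

print(first_row)
print(second_row)
print(rho, -2 * math.log2(1 - a1))
```

Because $a_1 = (1-a_1)^3$, the ratio $\frac{1-a_1}{a_1}$ equals $(1-a_1)^{-2}$, so the two quantities printed on the last line agree.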
Fig. 7. Upper bound, $\frac{\max_s J_n(s)}{n}$, and lower bound, $\frac{\min_s J_n(s)}{n}$, on the zero-error feedback capacity of the channel in Example 3, plotted for $n = 1, \dots, 50$. The value $J_{50}(s) - J_{49}(s) = 1.1028$ is an estimate of $C_0$.

Analytical solution via Bellman equation: We conjecture that the optimal policy of Player 1 is a stochastic matrix of the form given in (62), i.e., $P_{X|S}(1|1) = P_{X|S}(1|0)$ and $P_{X|S}(0|1) = P_{X|S}(0|2) = P_{X|S}(1|2) = 0$. Based on these assumptions and the notation $a_0 \triangleq P_{X|S}(0|0)$ and $a_1 \triangleq P_{X|S}(1|0)$, the Bellman equation becomes
$$\begin{aligned}
\rho + J(0) &= \max_{a_0, a_1} \min\big\{-\log a_0 + J(0),\; -\log a_1 + J(1),\; -\log(1 - a_1 - a_0) + J(2)\big\}, \\
\rho + J(1) &= \max_{a_1} \min\big\{-\log a_1 + J(2),\; -\log(1 - a_1) + J(0)\big\}, \\
\rho + J(2) &= J(0).
\end{aligned} \tag{63}$$
Using simple algebraic manipulation, we obtain
$$a_1 = (1 - a_1)^3, \qquad \rho = \log \frac{1 - a_1}{a_1}, \tag{64}$$
which implies that $a_1 = 1 + u - \frac{1}{3u}$, where $u = \sqrt[3]{-\frac{1}{2} + \sqrt{\frac{1}{4} + \frac{1}{27}}}$; hence $a_1 = 0.31767\ldots$ and, since $\frac{1-a_1}{a_1} = (1-a_1)^{-2}$,
$$C_0 = -2\log_2(1 - a_1) = 1.102926\ldots \tag{65}$$

IX. CONCLUSIONS

We introduced a DP formulation for computing the zero-error feedback capacity for FSCs with state information at the decoder and encoder. The DP formulation, which can also be viewed as a stochastic game between two players, is a powerful tool that allows us to evaluate the zero-error feedback capacity numerically and, in many cases, as shown in the paper, to find an analytical solution via a fixed-point equation.

X. ACKNOWLEDGEMENTS

The authors would like to thank Professor Thomas Cover for very helpful discussions and comments. This work is supported by the National Science Foundation through the grants CCF-0515303 and CCF-0635318.

REFERENCES

[1] C. E. Shannon. The zero error capacity of a noisy channel. IEEE Trans. Inf. Theory, IT-2:8–19, 1956.
[2] J. Körner and A. Orlitsky. Zero-error information theory. IEEE Trans. Inf. Theory, 44(6):2207–2229, 1998.
[3] J. Chen and T. Berger. The capacity of finite-state Markov channels with feedback. IEEE Trans. Inf. Theory, 51:780–789, 2005.
[4] R. Ahlswede and A. Kaspi. Optimal coding strategies for certain permuting channels. IEEE Trans. Inf. Theory, 33(3):310–314, 1987.
[5] H. Permuter, P. Cuff, B. Van Roy, and T. Weissman. Capacity and zero-error capacity of the chemical channel with feedback. In Proc. International Symposium on Information Theory (ISIT), Nice, France, 2007.
[6] J. Nayak and K. Rose. Graph capacities and zero-error transmission over compound channels. IEEE Trans. Inf. Theory, 51(12):4374–4378, 2005.
[7] R. G. Gallager. Information Theory and Reliable Communication. Wiley, New York, 1968.
[8] A. Schrijver. Combinatorial Optimization - Polyhedra and Efficiency. Springer, 2003.
[9] A. Arapostathis, V. S. Borkar, E. Fernandez-Gaucherand, M. K. Ghosh, and S. Marcus. Discrete time controlled Markov processes with average cost criterion - a survey. SIAM Journal of Control and Optimization, 31(2):282–344, 1993.
[10] D. P. Bertsekas. Dynamic Programming and Optimal Control: Vols 1 and 2. Athena Scientific, Belmont, MA, 3rd edition, 2005.
[11] L. S. Shapley. Stochastic games. Proceedings of the National Academy of Sciences, 39:1095–1100, 1953.
[12] J. Filar and K. Vrieze. Competitive Markov Decision Processes. Springer, New York and Heidelberg, 1997.
[13] H. H. Permuter, P. Cuff, B. Van Roy, and T. Weissman. Capacity of the trapdoor channel with feedback. IEEE Trans. Inf. Theory, 54(7):3150–3165, 2009.
