Discussion of Twenty Questions Problem

Discuss several tricks for solving twenty question problems which in this paper is depicted as a guessing game. Player tries to find a ball in twenty boxes by asking as few questions as possible, and these questions are answered by only "Yes" or "No"…

Authors: Barco You

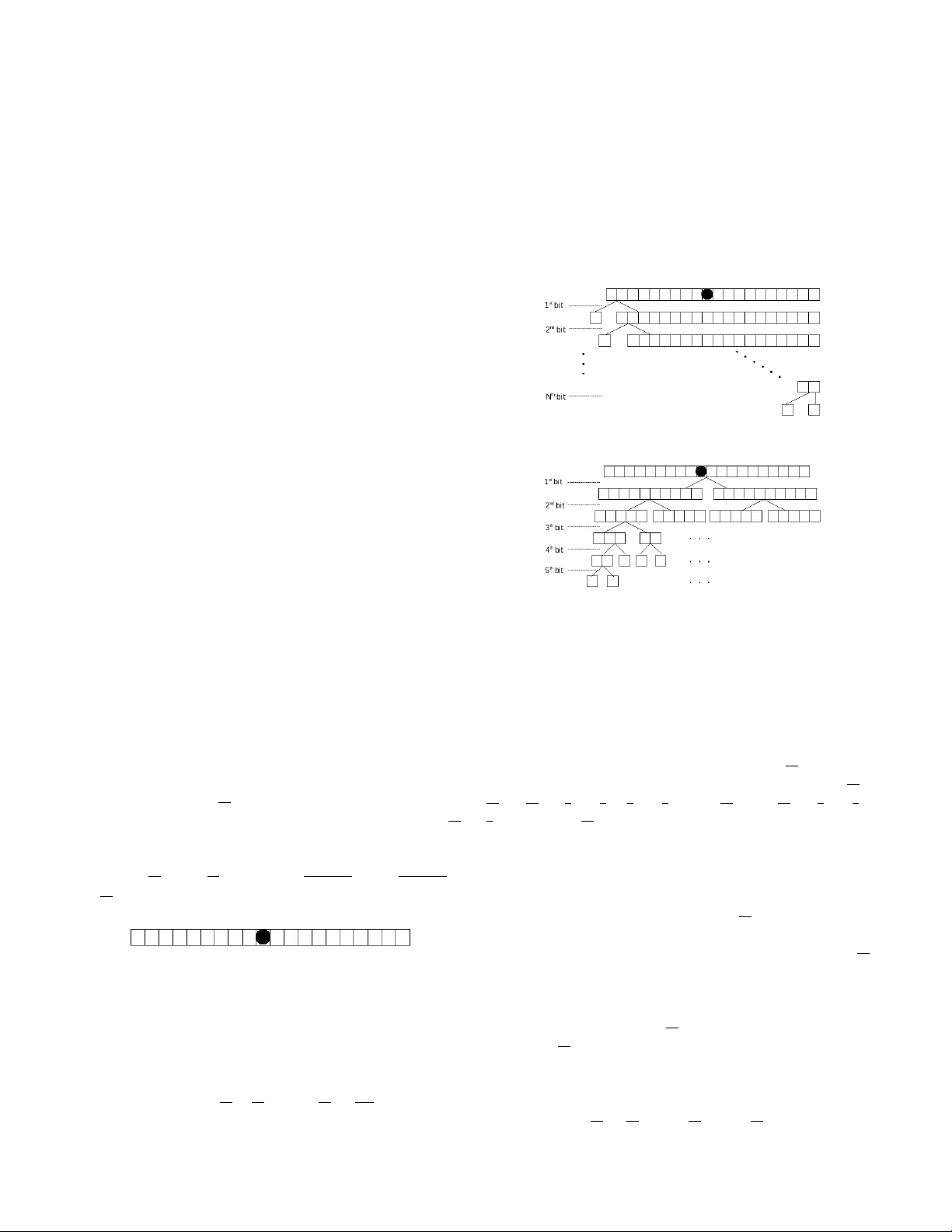

Discussion of T wenty Questions Problem Barco Y ou Department of Electronics and Information Engineering Huazhong Univ ersity of Science and T echnology W uhan, China 430074 Email: barcojie@gmail.com Abstract —Discuss several tricks for solving twenty question problems which in this paper is depicted as a guessing game. Player tries to find a ball in twenty boxes by asking as few questions as possible, and these questions are answered by only “Y es” or “No”. With the discussion, demonstration of source coding methods is the main concern. I . I N T RO D U C T I O N Unit computation of mordern computer is still binary , while “Y es or No” question is a good illustration of such computing, asking one question is equiv alent to spending one bit of computation resource. This discussion is intended to give an intution behind symbol source coding through discussing the different ways for solving a concrete twenty question problem. The rest of this paper is organized as follows. Section II introduces the way of one-by-one asking. Section III is about top-down division. In Section IV we discuss the way of down- top merging. The work is concluded in Section V. I I . O N E - B Y E - O N E A S K I N G W e depict the TQP(T wenty Question Problem) with 20 boxes in which only one box contains a ball, sho wn as figure 1. W ith method one, we choose arbitraily one box and say it contain the ball, if opening the box and find there is none, equiv alently answered by “No”, we get in- formation content log 20 19 . Continuously we draw another box but miss the ball again, we get information content log 19 18 . Step forward repeatedly , and assume the ball is found at step N (1 ≤ N ≤ 20) , up to now the total information content we got is (log 20 19 + log 19 18 + · · · + log 20 − N +2 20 − N +1 + log 20 − N +1 1 = log 20 1 = 4 . 3219 bits ) . Fig. 1. Only one of twenty boxes includes a ball W ithout loss of generality , the guessing process is illustrated as choosing the boxes in order from left to right, shown as figure 2. For e very guessing, we hav e “Y es” or “No” results, Imagine that 1 bit is spent for every guessing. Then the expected bits need solving the TQP with the One-by-One method equals to (1 + 19 20 + 18 20 + · · · + 2 20 = 209 20 = 10 . 45 bits ) . Fig. 2. Illustration of One-by-One Asking Fig. 3. Illustration of T op-Down Di vision I I I . T O P - D OW N D I V I S I O N Before every asking we divede equally the boxes into two groups, then ask if the ball is in one of the tw o groups. Accord- ing to the answer continue this strategy repeatly until the ball is found. This di vision process is sho wn as figure 3. In this way the expected bits to spend is (1 + 1 + 1 + 1 + 1 × 8 20 = 4 . 4 bits ) . The information content gotten from this ways is (1 + ( 10 20 + 10 20 ) + 5 20 × ( 3 5 log 5 3 + 2 5 log 5 2 ) × 4 + 2 20 × 4 + 3 20 × ( 2 3 log 3 2 + 1 3 log 3) × 4 + 2 20 × 4 = log 20 = 4 . 3219 bits ) . I V . D OW N - T O P M E R G I N G The smartest way presented here is to merge the options in Down-T op direction, which follows Huf fman Coding method [1]. Every box has the same probability 1 20 to contain the ball, combine two of the boxes and imagine they become a bigger one, then the probability of the ball in this bigger box is 2 20 . For every merging we make sure that the two boxes (real or imagined box) hav e the smallest probability of including the ball. For example, after first merging we have one bigger box which has probability 2 20 and there are 18 boxes with probability 1 20 , so 9 bigger boxes should be formed from the 18 boxes respecti vely . Repeat merging bigger boxes until we hav e a box which include the ball with probability 1 . This merging process is shown as figure 4. From this process we hav e the spent bits is (1 + 12 20 + ( 8 20 × 2) +( 4 20 × 5) +( 2 20 × 10) = 4 . 4 bits ) . 1 20 1 20 1 20 1 20 1 20 1 20 1 20 1 20 1 20 1 20 1 20 1 20 1 20 1 20 1 20 1 20 1 20 1 20 1 20 1 20 2 20 2 20 2 20 2 20 2 20 2 20 2 20 2 20 2 20 2 20 4 20 4 20 4 20 4 20 4 20 8 20 8 20 4 20 8 20 12 20 1 E E E E E E E E E E E E E E E E E E E E E E E E E E E E E E C C C C C C C C C C C C C C C A A A A A A A A A A A A 5 th bit 4 th bit 3 rd bit 2 nd bit 1 st bit Fig. 4. Illustration of Down-T op Merging The information content gotten in this way is (1 × ( 8 20 log 20 8 + 12 20 log 20 12 ) + 12 20 × ( 8 12 log 12 8 + 4 12 log 12 4 ) + 8 20 × 1 × 2 + 4 20 × 1 × 5 + 2 20 × 1 × 10 = 4 . 3219 bits ) . V . C O N C L U S I O N From abov e discussion, we can definitely conclude that to find the ball the three tricks get the same information content, but the first method consume in av erage much more extra effort than the later two methods. For TQP , the T op-Down Divsion method and Down-T op Merging method consume the same expected bits for achieving the goal. But they are not of the same efficiency . Actually the Down-T op Merging is optimal while T op-Down Di vsion is sub-optimal, just like nuclear fusion has much more energy than nuclear fission. Theorem 1. F or symbol coding, Huffman code is the optimal. Pr oof: Let symbol set A X = { x 1 , · · · , x N } hav e P X = { p 1 , · · · , p N } . Use division or merging method to construct codes for symbols, with once division or merging we have a ne w level. At any lev el I there are intermedi- ate symbols A I = { α 1 , · · · , α n I } (2 ≤ n I ≤ N ) , and P I = { p 1 , · · · , p n I } ( P n I k =1 p k = 1) . W ith Huffman cod- ing method, at lev el I we merge two symbols α i and α j , ∀ k ∈ { 1 , · · · , n i } and k 6 = i, k 6 = j : p k ≥ p i , p j . Then the bits consumed by this merge is 1 × ( p i + p j ) . W ith other code, at any le vel I if two symbols α k 1 and α k 2 merge into or are divided from ( I − 1) lev el. The consumed bits 1 × ( p k 1 + p k 2 ) ≥ 1 × ( p i + p j ) , if k 1 , k 2 6 = i, j . Sum all the bits consumed at all levels, we can get the Huffman code is the shortest. T ake an example as figure 5. A symbol set with P X = { 2 5 , 1 3 , 1 5 , 1 15 } , with Huffman merging we get expected code length (1 + 9 15 + 4 15 = 1 . 87 bits ) , while greedy division has expected code length (1 + 1 = 2 bits ) . 2 5 1 3 1 5 1 15 2 5 1 3 4 15 2 5 9 15 1 A A A A A A (a) Huffman D-T Merging 1 3 1 5 2 5 1 15 8 15 7 15 1 A A A A A A (b) Greedy T -D Division Fig. 5. Comparison between Huffman method and Greedy division R E F E R E N C E S [1] D.A Huffman, A Method for the Construction of Minimum-Redundancy Codes , pp 1098-1102. Proceedings of I.R.E, September , 1952.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment