Approximating the Permanent via Nonabelian Determinants

Celebrated work of Jerrum, Sinclair, and Vigoda has established that the permanent of a {0,1} matrix can be approximated in randomized polynomial time by using a rapidly mixing Markov chain. A separate strand of the literature has pursued the possibi…

Authors: Cristopher Moore, Alexander Russell

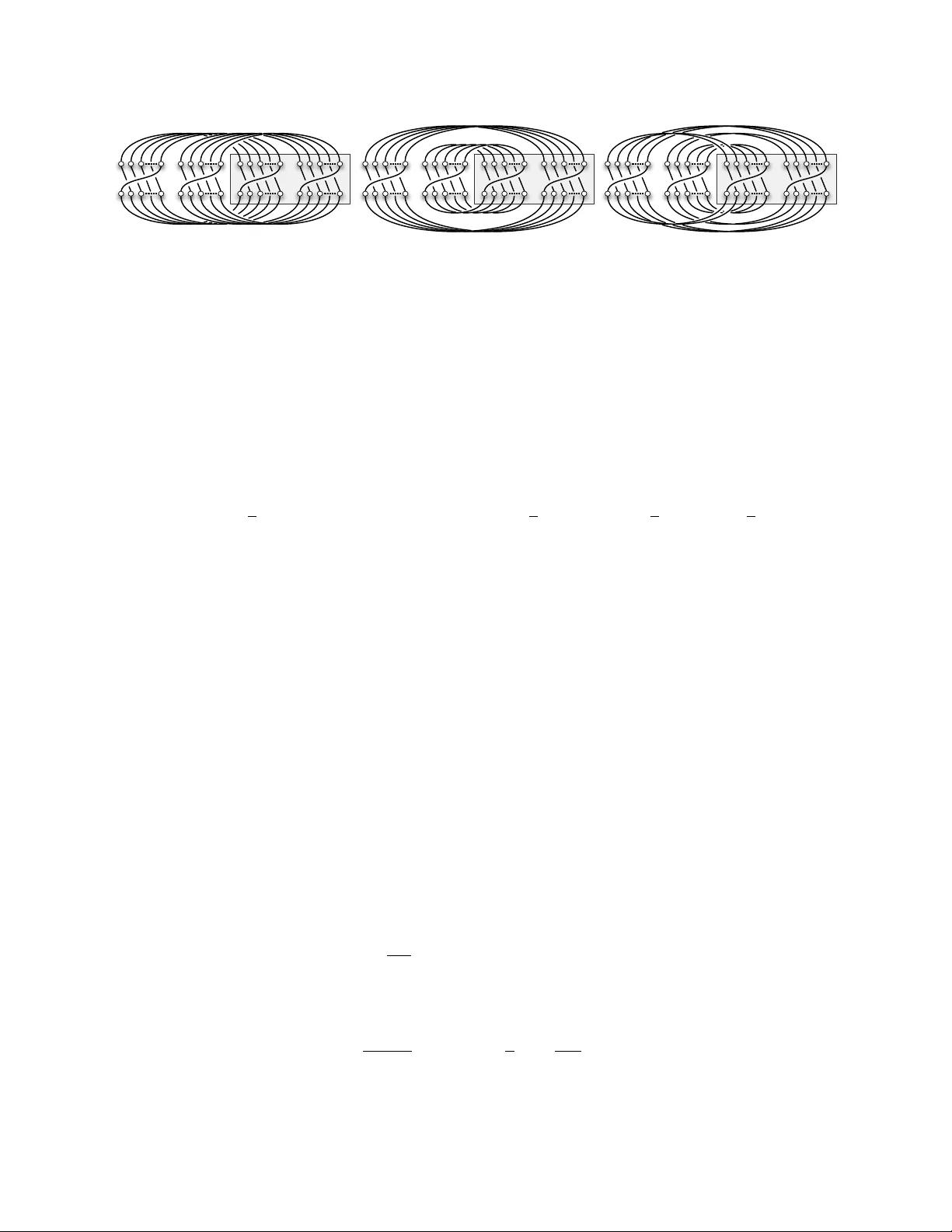

Cristopher Moore∗ and Alexander Russell†

November 2, 2018

Abstract

Since the celebrated work of Jerrum, Sinclair, and Vigoda, we have known that the permanent of a $\{0,1\}$ matrix can be approximated in randomized polynomial time by using a rapidly mixing Markov chain to sample perfect matchings of a bipartite graph. A separate strand of the literature has pursued the possibility of an alternate, algebraic polynomial-time approximation scheme. These schemes work by replacing each 1 with a random element of an algebra $\mathcal{A}$, and considering the determinant of the resulting matrix. In the case where $\mathcal{A}$ is noncommutative, this determinant can be defined in several ways. We show that for estimators based on the conventional determinant, the critical ratio of the second moment to the square of the first (and therefore the number of trials we need to obtain a good estimate of the permanent) is $(1 + O(1/d))^n$ when $\mathcal{A}$ is the algebra of $d \times d$ matrices. These results can be extended to group algebras, and semi-simple algebras in general.

We also study the symmetrized determinant of Barvinok, showing that the resulting estimator has small variance when $d$ is large enough. However, if $d$ is constant (the only case in which an efficient algorithm is known) we show that the critical ratio exceeds $2^n / n^{O(d)}$. Thus our results do not provide a new polynomial-time approximation scheme for the permanent. Indeed, they suggest that the algebraic approach to approximating the permanent faces significant obstacles.

We obtain these results using diagrammatic techniques in which we express matrix products as contractions of tensor products. When these matrices are random, in either the Haar measure or the Gaussian measure, we can evaluate the trace of these products in terms of the cycle structure of a suitably random permutation.
In the symmetrized case, our estimates are then derived by a connection with the character theory of the symmetric group.

1 Introduction

The permanent of an $n \times n$ matrix $A$ is
$$\operatorname{perm} A = \sum_{\pi \in S_n} \prod_{i=1}^{n} A_{i,\pi i},$$
where $S_n$ denotes the group of permutations of $n$ objects. If $A_{ij} \in \{0,1\}$ for all $i, j$, we can also write
$$\operatorname{perm} A = |\{\pi \in S_n \mid \pi \vdash A\}|,$$
where $\pi \vdash A$ denotes the following relation:
$$\pi \vdash A \iff A_{i,\pi i} = 1 \text{ for all } i.$$
Computing the permanent of a $\{0,1\}$ matrix is #P-complete. Therefore, we cannot expect to compute it efficiently without startling complexity-theoretic consequences, including the collapse of the polynomial hierarchy [Val79, Tod91].

∗ moore@santafe.edu, Department of Computer Science, University of New Mexico and Santa Fe Institute
† acr@cse.uconn.edu, Department of Computer Science and Engineering, University of Connecticut

On the other hand, Godsil and Gutman [GG81] pointed out the following charming fact. If we define the matrix-valued random variable $M$ so that $M_{ij} = \rho_{ij} A_{ij}$, where the $\rho_{ij}$ are chosen independently and uniformly from $\{\pm 1\}$, and define $X = (\det M)^2$, then it is easy to check that $X$ is an estimator for $\operatorname{perm} A$, which is to say that $\mathbb{E}[X] = \operatorname{perm} A$. Since $\det M$ can be computed efficiently, so can $X$. This suggests a natural randomized approximation algorithm for the permanent: average a family of independent samples of $X$.

The quality of this approximation can be controlled by determining the variance of $X$. If $X_t$ denotes the average of $X$ over $t$ independent trials, then Chebyshev's inequality shows that, in order for $X_t$ to yield an approximation of $\mathbb{E}[X]$ within a factor $\alpha = O(1)$ with probability $\Omega(1)$, the number of trials we need is at most
$$t \sim \frac{\operatorname{Var} X}{\mathbb{E}[X]^2} \le \frac{\mathbb{E}[X^2]}{\mathbb{E}[X]^2}.$$
Following [CRS03], we refer to this quantity as the critical ratio of the estimator.
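The Godsil and Gutman identity $\mathbb{E}[X] = \operatorname{perm} A$ can be checked exactly on a small instance. The following is a minimal sketch, not an efficient algorithm: it averages $(\det M)^2$ over every sign pattern instead of sampling, and it computes determinants by brute-force expansion. The function names and the example matrix are illustrative choices, not taken from the paper.

```python
from itertools import permutations, product


def sign(perm):
    """Sign of a permutation given as a tuple of images of 0..n-1."""
    inversions = sum(
        1
        for i in range(len(perm))
        for j in range(i + 1, len(perm))
        if perm[i] > perm[j]
    )
    return -1 if inversions % 2 else 1


def permanent(a):
    """Permanent by direct expansion over S_n (exponential time)."""
    n = len(a)
    total = 0
    for pi in permutations(range(n)):
        term = 1
        for i in range(n):
            term *= a[i][pi[i]]
        total += term
    return total


def determinant(m):
    """Determinant by the Leibniz expansion (exponential time, exact)."""
    n = len(m)
    total = 0
    for pi in permutations(range(n)):
        term = sign(pi)
        for i in range(n):
            term *= m[i][pi[i]]
        total += term
    return total


def exact_mean_of_estimator(a):
    """Average X = det(M)^2 over every sign pattern rho in {+1, -1}^(n*n)."""
    n = len(a)
    total = 0
    count = 0
    for signs in product((1, -1), repeat=n * n):
        m = [[signs[i * n + j] * a[i][j] for j in range(n)] for i in range(n)]
        total += determinant(m) ** 2
        count += 1
    return total / count


# Example 0/1 matrix (hypothetical test case); its permanent is 3.
A = [[1, 1, 0],
     [1, 1, 1],
     [0, 1, 1]]
print(permanent(A), exact_mean_of_estimator(A))
```

In practice one would draw a modest number of random sign patterns and use a polynomial-time determinant; the exhaustive average is used here only so that the equality holds exactly.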
Karmarkar, Karp, Lipton, Lovász, and Luby [KKL+93] showed, unfortunately, that the critical ratio is $3^{n/2}$ in the worst case, ignoring $\operatorname{poly}(n)$ factors. Then again, they showed that we can decrease this to $2^{n/2}$ by drawing $\rho_{ij}$ uniformly from the unit circle in the complex plane, or simply from the cube roots of unity, instead of $\{\pm 1\}$. Barvinok [Bar99] obtained a more concentrated estimator by drawing $\rho_{ij}$ from normal distributions over $\mathbb{R}$, $\mathbb{C}$, and the quaternions $\mathbb{H}$.

This raises the interesting possibility that, by choosing the $\rho_{ij}$ from the right set of algebraic objects, we might be able to reduce the critical ratio to $e^{o(n)}$, or even to $\operatorname{poly}(n)$, resulting in a subexponential or polynomial-time algorithm. One exciting result in this direction is due to Chien, Rasmussen, and Sinclair [CRS03], who showed that certain determinants defined over the Clifford algebra $CL_k$ with $k$ generators give estimators where the critical ratio is $(1 + O(2^{-k/2}))^{n/2}$. In the case of the quaternion group, where $k = 3$, they gave a polynomial-time algorithm for a type of determinant where the critical ratio grows as $(3/2)^{n/2}$. This is currently the best known critical ratio for an algebraic estimator which can be computed efficiently. Sadly, however, for larger $k$ we do not know how to compute these determinants in polynomial time.

These results can be given a uniform presentation by defining a notion of determinant for a matrix $M$ over an associative algebra $\mathcal{A}$. The traditional Cayley determinant is then
$$\det M = \sum_{\alpha \in S_n} (-1)^{\alpha} \prod_{i=1}^{n} M_{i,\alpha i}, \tag{1}$$
where $(-1)^{\alpha}$ denotes the sign of the permutation $\alpha$. Note that $\det M$ takes values in $\mathcal{A}$. If $\mathcal{A}$ is noncommutative, however, the determinant as defined in (1) may depend on the order in which the product is taken.
As written, each traversal $M_{i,\alpha i}$ is ordered from the top row to the bottom row; we could just as easily order them from the left column to the right. This introduces some arbitrariness to the definition, and appears to complicate the problem of computing such determinants, even when the algebra $\mathcal{A}$ has small dimension [Nis91].

One natural remedy is to remove this order dependence by forcibly symmetrizing each product appearing in (1). This gives the following symmetrized determinant,
$$\operatorname{sdet} M = \frac{1}{n!} \sum_{\alpha,\alpha' \in S_n} (-1)^{\alpha'\alpha^{-1}} \prod_{i=1}^{n} M_{\alpha i,\alpha' i}. \tag{2}$$
Observe that $\operatorname{sdet}$ is obtained by symmetrizing each product appearing in (1). This definition is due to Barvinok [Bar00], who showed that if $\mathcal{A}$ has dimension $m$, the symmetrized determinant can be computed in time $O(n^{m+O(1)})$. In contrast, no efficient algorithm is currently known for the unsymmetrized Cayley determinant (1), even when the dimension of $\mathcal{A}$ is constant.

We focus on the algebra $\mathcal{A}_d$, consisting of all $d \times d$ matrices over $\mathbb{C}$. We remark that finite-dimensional $C^*$-algebras,¹ which appear to be the natural setting for such approximations, are semisimple, meaning that they can be decomposed as a direct product of algebras of the form $\mathcal{A}_d$. In particular, all group algebras and the Clifford algebras studied in [CRS03] have this property. It follows that many of our results, especially lower bounds on the critical ratio, carry over easily to estimators based on suitable distributions in semisimple algebras.

Now, given a matrix $A$ with entries in $\{0,1\}$, define $M_{ij} = \rho_{ij} A_{ij}$, where the $\rho_{ij}$ are independently random $d \times d$ matrices. (We focus on $\{0,1\}$ matrices, but we can let the $A_{ij}$ be arbitrary nonnegative reals by taking $M_{ij} = \rho_{ij} \sqrt{A_{ij}}$.) Since the $M_{ij}$ take values in $\mathcal{A}_d$, so do $\det M$ and $\operatorname{sdet} M$.
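To make definitions (1) and (2) concrete, here is a small sketch that evaluates both determinants by brute force for a matrix whose entries are themselves $d \times d$ integer matrices. It runs in time exponential in $n$ and is meant only as an illustration; it is not Barvinok's algorithm. All function names and the example entries `P`, `Q`, `R`, `S` are illustrative assumptions.

```python
from fractions import Fraction
from itertools import permutations
from math import factorial


def sign(perm):
    """Sign of a permutation given as a tuple of images of 0..n-1."""
    inversions = sum(
        1
        for i in range(len(perm))
        for j in range(i + 1, len(perm))
        if perm[i] > perm[j]
    )
    return -1 if inversions % 2 else 1


def mat_mul(x, y):
    """Product of two d x d matrices stored as nested lists."""
    d = len(x)
    return [[sum(x[i][k] * y[k][j] for k in range(d)) for j in range(d)]
            for i in range(d)]


def add_scaled(total, x, coeff):
    """Return total + coeff * x, entrywise."""
    d = len(x)
    return [[total[i][j] + coeff * x[i][j] for j in range(d)] for i in range(d)]


def identity(d):
    return [[int(i == j) for j in range(d)] for i in range(d)]


def zeros(d):
    return [[0] * d for _ in range(d)]


def cayley_det(m, d):
    """Equation (1): each product runs from the top row to the bottom row."""
    n = len(m)
    total = zeros(d)
    for alpha in permutations(range(n)):
        prod = identity(d)
        for i in range(n):
            prod = mat_mul(prod, m[i][alpha[i]])
        total = add_scaled(total, prod, sign(alpha))
    return total


def sym_det(m, d):
    """Equation (2): average over all orderings of the n factors."""
    n = len(m)
    total = zeros(d)
    for alpha in permutations(range(n)):
        for alpha2 in permutations(range(n)):
            prod = identity(d)
            for i in range(n):
                prod = mat_mul(prod, m[alpha[i]][alpha2[i]])
            # sign(alpha2 * alpha^-1) equals sign(alpha2) * sign(alpha)
            total = add_scaled(total, prod, sign(alpha) * sign(alpha2))
    return [[Fraction(x, factorial(n)) for x in row] for row in total]


# A 2 x 2 matrix whose entries are 2 x 2 integer matrices (hypothetical data).
P = [[1, 2], [0, 1]]
Q = [[0, 1], [1, 0]]
R = [[1, 0], [3, 1]]
S = [[2, 0], [0, 1]]
M = [[P, Q], [R, S]]
print(cayley_det(M, 2))  # PS - QR
print(sym_det(M, 2))     # (PS + SP - QR - RQ) / 2
```

When the entries commute (for example $d = 1$), every ordering gives the same product, so both functions reduce to the ordinary determinant.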
There are several ways to turn these matrix-valued determinants into real-valued estimators for the permanent of a real-valued matrix $A$. As mentioned above, most of the existing literature has focused on the Frobenius norm of these determinants. For technical reasons, we focus first on the absolute value squared of their trace. This gives us two estimators,
$$X = |\operatorname{tr} \det M|^2 \quad \text{and} \quad X_s = |\operatorname{tr} \operatorname{sdet} M|^2.$$
Note that these are random $\mathbb{R}$-valued variables depending on the $\rho_{ij}$. We will then address the Frobenius estimators,
$$X_{\mathrm{Frob}} = \|\det M\|^2 \quad \text{and} \quad X_{\mathrm{Frob},s} = \|\operatorname{sdet} M\|^2.$$

As an additional degree of freedom, we can draw $\rho_{ij}$ according to two different distributions on $\mathcal{A}_d$. The Haar measure is the uniform distribution over unitary matrices. In the Gaussian measure, each entry of $\rho_{ij}$ is drawn independently from the Gaussian distribution on $\mathbb{C}$ with mean 0 and variance $1/d$: that is, its real and imaginary parts are drawn independently from the Gaussian distribution on $\mathbb{R}$ with mean 0 and variance $1/(2d)$, whose density is $p(x) = \sqrt{d/\pi}\, e^{-d x^2}$.

Our main contribution is given by the following theorems.

Theorem 1. For both the Haar and Gaussian measures, in the unsymmetrized case we have
$$\frac{\mathbb{E}[X^2]}{\mathbb{E}[X]^2} = \left(1 + O\!\left(\frac{1}{d}\right)\right)^{n}. \tag{3}$$
In the symmetrized case,
$$\frac{\mathbb{E}[X_s^2]}{\mathbb{E}[X_s]^2} \le 2^{2n}\, n^{-d + O(1)} \quad \text{if } d = O(1), \tag{4}$$
and more generally,
$$\frac{\mathbb{E}[X_s^2]}{\mathbb{E}[X_s]^2} = O\!\left(e^{4n^2/d}\right). \tag{5}$$

Additionally, we establish lower bounds on the critical ratio $\mathbb{E}[X_s^2] / \mathbb{E}[X_s]^2$.

Theorem 2. Let $A$ be the $n \times n$ identity matrix and $d$ a constant. Then
$$\frac{\mathbb{E}[X_s^2]}{\mathbb{E}[X_s]^2} = \Omega\!\left(\frac{2^n}{n^d}\right) \quad \text{and} \quad \frac{\mathbb{E}[X_s^2]}{\mathbb{E}[X_s]^2} = \left(1 - O\!\left(\frac{1}{d}\right)\right)^{n} \Omega\!\left(\frac{2^n}{n^d}\right),$$
when the $\rho_{ij}$ are distributed according to the Gaussian or Haar measure respectively.

¹ A $C^*$-algebra is an algebra over $\mathbb{R}$ or $\mathbb{C}$ possessing a norm $\|\cdot\|$ and an involution operator $\cdot^*$ consistent in the sense that $\|x^* x\| = \|x\|^2$. See, e.g., [Con00, §1] for a complete definition.
Finally, we show that the critical ratio for the Frobenius estimators differs by a factor of at most $d^4$ from that of the estimators given by the square of the trace:

Theorem 3.
$$\frac{1}{d^4} \frac{\mathbb{E}[X^2]}{\mathbb{E}[X]^2} \le \frac{\mathbb{E}[X_{\mathrm{Frob}}^2]}{\mathbb{E}[X_{\mathrm{Frob}}]^2} \le d^4\, \frac{\mathbb{E}[X^2]}{\mathbb{E}[X]^2}, \tag{6}$$
and similarly for $X_{\mathrm{Frob},s}$.

These results give a somewhat frustrating outlook. The critical ratio for the unsymmetrized estimator behaves very well, becoming more and more mildly exponential as $d$ increases, much like the Clifford group estimator of [CRS03]. However, we do not know how to compute these estimators efficiently. On the other hand, we can compute the symmetrized estimator if $d$ is constant [Bar00], but our results show that its critical ratio does not decrease appreciably until $d$ is roughly $n^2$.

Barvinok [Bar00] suggested that the estimators $X_{\mathrm{Frob},s}$ might become asymptotically concentrated when $d$ is large, but constant. Specifically, he made the following conjecture (where we have weakened the lower bound, specialized to $\{0,1\}$ matrices, and changed the notation to fit our purposes):

Conjecture 1. If $A$ is an $n \times n$ matrix with entries in $\{0,1\}$, let $M(A)$ be the matrix $M_{ij} = \rho_{ij} A_{ij}$, where each $\rho_{ij}$ is chosen independently from the Gaussian distribution on $\mathcal{A}_d$. Define $M(\mathbb{1})$ similarly, where $M_{ij} = \rho_{ij} \delta_{ij}$. Then there is a sequence of constants $\gamma_d$, where $\lim_{d \to \infty} \gamma_d = 1$, such that for any $\epsilon > 0$,
$$\lim_{n \to \infty} \Pr\left[ (\gamma_d + \epsilon)^{-n} \operatorname{perm} A \le \frac{\|\operatorname{sdet} M(A)\|^2}{\|\operatorname{sdet} M(\mathbb{1})\|^2} \le (\gamma_d + \epsilon)^{n} \operatorname{perm} A \right] = 1.$$

Our results do not address Conjecture 1 directly. However, given Chebyshev's inequality, it is very natural to consider the following stronger conjecture, which would imply Conjecture 1:

Conjecture 2. There is a sequence of constants $\theta_d$, where $\lim_{d \to \infty} \theta_d = 1$, such that for any $n \times n$ matrix $A$, the critical ratio of the estimator $X_{\mathrm{Frob},s} = \|\operatorname{sdet} M(A)\|^2$ obeys
$$\frac{\mathbb{E}[X_{\mathrm{Frob},s}^2]}{\mathbb{E}[X_{\mathrm{Frob},s}]^2} \le \theta_d^{\,n}.$$
Sadly, Theorems 2 and 3 imply that Conjecture 2 is false. It is still conceivable that Conjecture 1 is true, but it seems that any proof of it would have to bound higher moments of the estimator: the first and second moments alone do not scale in a way that gives concentration.

The remainder of the paper is organized as follows. In Section 2, we calculate the expectations of these estimators, showing that they are each a constant $a_d$ times the permanent, and computing the constant explicitly using a diagrammatic technique. In Sections 3 and 4, we bound their second moments using the same technique, proving Theorems 1 and 2. In Section 5, we relate the critical ratio for the Frobenius estimators to the trace-squared estimators, proving Theorem 3. Finally, in Section 6 we discuss the implications of this theorem, and the remaining barriers to an algebraic approximation scheme for the permanent.

2 The expectation

Before we proceed, we write the following expansions for these estimators, which we will find useful for calculating their expectations and second moments:
$$X = \sum_{\alpha,\beta \vdash A} (-1)^{\alpha\beta} \operatorname{tr}\!\left(\prod_i \rho_{i,\alpha i}\right) \operatorname{tr}\!\left(\prod_i \rho^*_{i,\beta i}\right) \tag{7}$$
$$X_s = \sum_{\kappa,\lambda \vdash A} (-1)^{\kappa\lambda}\, \mathbb{E}_{\alpha,\beta}\!\left[ \operatorname{tr}\!\left(\prod_i \rho_{\alpha i,\kappa\alpha i}\right) \operatorname{tr}\!\left(\prod_i \rho^*_{\beta i,\lambda\beta i}\right) \right]. \tag{8}$$

Since $\mathbb{E}[\rho_{ij}] = 0$, any term in which some $\rho_{ij}$ appears only once will have zero expectation. Then the cross-terms in the expansion (7) are zero in expectation except when $\alpha = \beta$, so
$$\mathbb{E}[X] = \sum_{\alpha \vdash A} \mathbb{E}\!\left[ \operatorname{tr}\!\left(\prod_i \rho_{i,\alpha i}\right) \operatorname{tr}\!\left(\prod_i \rho^*_{i,\alpha i}\right) \right] = \sum_{\alpha \vdash A} \mathbb{E}\left| \operatorname{tr} \prod_i \rho_{i,\alpha i} \right|^2 = \operatorname{perm} A. \tag{9}$$
Here we used the following fact, which is an easy exercise: if $\sigma$ is the product of $n$ independent random matrices, chosen from the Haar measure or the Gaussian measure, then $\mathbb{E}|\operatorname{tr} \sigma|^2 = 1$.

Similarly, the only terms in (8) that contribute to $\mathbb{E}[X_s]$ are those where $\lambda = \kappa$, so that each $\rho_{ij}$ appears twice or not at all. Thus
$$\mathbb{E}_{\{\rho_{ij}\}}[X_s] = \sum_{\kappa \vdash A} \mathbb{E}_{\{\sigma_i\}} \mathbb{E}_{\alpha,\beta}\!\left[ \operatorname{tr}\!\left(\prod_i \sigma_{\alpha i}\right) \operatorname{tr}\!\left(\prod_i \sigma^*_{\beta i}\right) \right] = a_d \cdot \operatorname{perm} A,$$
where
$$a_d = \mathbb{E}_{\{\sigma_i\}} \mathbb{E}_{\alpha,\beta}\!\left[ \operatorname{tr}\!\left(\prod_i \sigma_{\alpha i}\right) \operatorname{tr}\!\left(\prod_i \sigma^*_{\beta i}\right) \right]. \tag{10}$$
A similar result for the Frobenius estimator $X_{\mathrm{Frob},s} = \|\operatorname{sdet} M\|^2$ appears as Theorem 4.3 in Barvinok [Bar00].

We can think of $a_d$ as the expectation, over all pairs of permutations $\alpha, \beta$, of the covariance between the traces of two products of the same $n$ random matrices, where the products are taken in the orders given by $\alpha$ and $\beta$. This expectation clearly stays the same if we assume that $\alpha$ is the identity $1$ and $\beta$ is uniformly random, so we can write
$$a_d = \mathbb{E}_{\beta}\, \mathbb{E}_{\{\sigma_i\}}\!\left[ \operatorname{tr}\!\left(\prod_i \sigma_i\right) \operatorname{tr}\!\left(\prod_i \sigma^*_{\beta i}\right) \right].$$

We will evaluate these covariances using a diagrammatic approach. First, suppose we have $n$ linear operators $\sigma_1, \ldots, \sigma_n$. The trace of their product is
$$(\sigma_1)^{i_1}_{i_2} (\sigma_2)^{i_2}_{i_3} \cdots (\sigma_n)^{i_n}_{i_1}.$$
Here we save ink by using the Einstein summation convention, in which any index that appears twice is automatically summed over. We can think of this product as a particular kind of internal trace of the tensor product
$$(\sigma_1 \otimes \cdots \otimes \sigma_n)^{i_1,\ldots,i_n}_{j_1,\ldots,j_n} = (\sigma_1)^{i_1}_{j_1} \cdots (\sigma_n)^{i_n}_{j_n},$$
where we contract the index $j_t$ with $i_{t+1}$ for each $t$, taking indices cyclically modulo $n$. We draw this on the left-hand side of Fig. 1.

Figure 1: The trace of the matrix product $\sigma_1 \sigma_2 \cdots \sigma_n$ is a contraction of the tensor product $\sigma_1 \otimes \cdots \otimes \sigma_n$. Combining this with (13) shows that the covariance between the traces of two permuted products is given by $d^{c-n}$ where $c$ is the number of loops in a diagram like that on the right. In this case, the covariance between $\operatorname{tr} \sigma_1 \sigma_2 \sigma_3$ and $\operatorname{tr} \sigma_2 \sigma_1 \sigma_3$ is $1/d^2$, since $n = 3$ and $c = 1$.
Then if $n = 3$, say, and $\beta$ is the transposition $(2\ 3)$, the covariance
$$\mathbb{E}_{\sigma_1,\sigma_2,\sigma_3}\!\left[ (\operatorname{tr} \sigma_1 \sigma_2 \sigma_3)\, (\operatorname{tr} \sigma_1 \sigma_3 \sigma_2)^* \right]$$
becomes a certain contraction of the tensor product of three independent and identical expectations,
$$\mathbb{E}_{\sigma_1}[\sigma_1 \otimes \sigma_1^*] \otimes \mathbb{E}_{\sigma_2}[\sigma_2 \otimes \sigma_2^*] \otimes \mathbb{E}_{\sigma_3}[\sigma_3 \otimes \sigma_3^*]. \tag{11}$$

The following lemma is well-known; we prove it in Appendix B for completeness.

Lemma 4. If $\sigma$ is chosen according to the Haar measure or the Gaussian measure, then
$$\mathbb{E}_{\sigma}[\sigma \otimes \sigma^*]^{ik}_{j\ell} = \frac{1}{d}\, \delta^{ik} \delta_{j\ell}. \tag{12}$$

We can represent (12) diagrammatically as a “cupcap,”
$$\mathbb{E}_{\sigma}[\sigma \otimes \sigma^*] = \frac{1}{d}\, \big[\text{cupcap diagram}\big]. \tag{13}$$

A tensor product such as (11) becomes three cupcaps side by side, and contracting it consists of connecting pairs of inputs and outputs until the diagram becomes closed. For instance, the expectation of $(\operatorname{tr} \sigma_1 \sigma_2 \sigma_3)(\operatorname{tr} \sigma_1 \sigma_3 \sigma_2)^*$ corresponds to the diagram on the right-hand side of Fig. 1. Here we have drawn the cupcaps on the top and bottom of the diagram (between corresponding indices of $\sigma_i$ and $\sigma_i^*$) and the connections between them in the interior.

When we evaluate the trace of this diagram, each of the $n$ cupcaps introduces a factor of $1/d$ according to (13), and each loop in the diagram corresponds to an index which can be set independently to any value between 1 and $d$. So, the diagram evaluates to $d^{c-n}$ where $c$ is the number of loops. In this case $n = 3$ and $c = 1$, and the covariance is $1/d^2$.

More generally, we can write the covariance between $\operatorname{tr} \prod_i \sigma_i$ and $\operatorname{tr} \prod_i \sigma_{\beta i}$ as a function of $\beta$ as follows. The cupcaps match the upper indices of the $\sigma$s in the first product to those of the second product according to $\beta$, and the lower indices of the second product to those of the first product according to $\beta^{-1}$.
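The rule that a diagram evaluates to $d^{c-n}$ can be checked without drawing anything, by counting index assignments directly. The sketch below assumes only the pairing rule of Lemma 4, applied independently to each of the $n$ matrices; it enumerates all index tuples of the two traces and counts those that survive. The function name `covariance` and the 0-based encoding of $\beta$ are illustrative choices.

```python
from fractions import Fraction
from itertools import product


def covariance(beta, d):
    """Exact E[ tr(s_0 s_1 ... s_{n-1}) * conj(tr(s_{beta[0]} ... s_{beta[n-1]})) ].

    Uses only the rule E[s^i_j conj(s^k_l)] = (1/d) [i == k][j == l] for each
    independent factor. beta is a tuple of 0-based matrix labels giving the
    order of the factors in the second product.
    """
    n = len(beta)
    # Position of each matrix inside the second (permuted) product.
    pos = {matrix: t for t, matrix in enumerate(beta)}
    matches = 0
    for a in product(range(d), repeat=n):      # indices of the first trace
        for b in product(range(d), repeat=n):  # indices of the second trace
            ok = True
            for m in range(n):
                t = pos[m]
                # Matrix m carries indices (a[m], a[m+1]) in the first trace
                # and (b[t], b[t+1]) in the second, both taken cyclically.
                if a[m] != b[t] or a[(m + 1) % n] != b[(t + 1) % n]:
                    ok = False
                    break
            matches += ok
    # Each of the n pairings contributes a factor 1/d.
    return Fraction(matches, d ** n)


# The transposition (2 3) from the text, written 0-based: s_0 s_2 s_1.
print(covariance((0, 2, 1), 2))
```

For the transposition example the only surviving assignments set all six indices equal, which gives $d \cdot d^{-3} = 1/d^2$; cyclic shifts of the identity give covariance 1, as expected from the cyclic invariance of the trace.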
If $r$ denotes the rotation $(1\ 2\ \cdots\ n)$, which “weaves” the $\sigma$s together and takes the trace of their product, then following the diagram around gives a permutation on (say) the upper $n$ indices of the first product (darkened in Fig. 1) equal to the commutator $[\beta, r] = \beta r \beta^{-1} r^{-1}$. Each loop in the diagram corresponds to a cycle in this permutation. So, we have
$$a_d = \frac{1}{d^n}\, \mathbb{E}_{\beta}\, d^{\,c([\beta, r])} \tag{14}$$
where $c(\pi)$ denotes the number of cycles in a permutation $\pi$. Note that we always have
$$a_d \ge \frac{n}{n!}. \tag{15}$$
This follows because, with probability $n/n!$, a uniformly random $\beta$ is one of the $n$ powers of $r$. In that case $[\beta, r] = 1$, and $d^{\,c([\beta, r])} = d^n$. It can be shown that this bound is tight when $d = \omega(n^2)$.

The expectation (14) can be viewed as the inner product of $P_n$, the uniform distribution over the conjugacy class $[r] = \{\pi^{-1} r \pi \mid \pi \in S_n\}$, and the function $d^{\,c(\cdot)}$, both of which are class functions, that is, invariant under conjugation. Below, we show that these can be expanded in terms of the characters of the group $S_n$ and analyzed using the Littlewood-Richardson rule; this yields an exact expression for $a_d$:

Lemma 5. If $d \le n$,
$$a_d = \frac{1}{d^n} \binom{n+d}{n+1}.$$
If $d > n$,
$$a_d = \frac{1}{d^n} \left[ \binom{n+d}{n+1} - \binom{d}{n+1} \right]. \tag{16}$$

Proof. First, note that the function $d^{\,c(\pi)}$ is a class function, i.e., one which is invariant under conjugation. Therefore, in $\mathbb{E}_{\beta}\, d^{\,c([\beta, r])}$ we can replace $[\beta, r]$ with $\zeta [\beta, r] \zeta^{-1}$ where $\beta$ and $\zeta$ are uniformly random. Since
$$\zeta [\beta, r] \zeta^{-1} = \zeta \beta r \beta^{-1} r^{-1} \zeta^{-1} = (\zeta\beta)\, r\, (\zeta\beta)^{-1}\, \zeta r^{-1} \zeta^{-1},$$
we can treat this as the expectation of $d^{\,c(\pi)}$ where $\pi$ is the product of two uniformly random elements of $[r]$, the conjugacy class consisting of cycles of length $n$.
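Equation (14) and the closed form stated in Lemma 5 can be compared directly for small $n$ and $d$. The sketch below evaluates (14) by enumerating every $\beta \in S_n$ and counting the cycles of the commutator, then compares with the binomial expression in (16). It relies on `math.comb` returning 0 when the lower index exceeds the upper one, which covers the case $d \le n$. The function names are illustrative and not from the paper.

```python
from fractions import Fraction
from itertools import permutations
from math import comb, factorial


def compose(p, q):
    """(p o q)(i) = p[q[i]] for permutations stored as tuples on 0..n-1."""
    return tuple(p[q[i]] for i in range(len(p)))


def inverse(p):
    inv = [0] * len(p)
    for i, image in enumerate(p):
        inv[image] = i
    return tuple(inv)


def cycle_count(p):
    """Number of cycles of p, counting fixed points."""
    seen = [False] * len(p)
    cycles = 0
    for start in range(len(p)):
        if not seen[start]:
            cycles += 1
            j = start
            while not seen[j]:
                seen[j] = True
                j = p[j]
    return cycles


def a_d_bruteforce(n, d):
    """Equation (14): average of d^c([beta, r]) over all beta, divided by d^n."""
    r = tuple((i + 1) % n for i in range(n))  # the rotation (1 2 ... n)
    r_inv = inverse(r)
    total = 0
    for beta in permutations(range(n)):
        commutator = compose(compose(compose(beta, r), inverse(beta)), r_inv)
        total += d ** cycle_count(commutator)
    return Fraction(total, factorial(n) * d ** n)


def a_d_lemma5(n, d):
    """Closed form of Lemma 5; comb(d, n + 1) is 0 whenever d <= n."""
    return Fraction(comb(n + d, n + 1) - comb(d, n + 1), d ** n)


for n in range(1, 6):
    for d in range(1, 7):
        assert a_d_bruteforce(n, d) == a_d_lemma5(n, d)
print(a_d_bruteforce(3, 2), a_d_lemma5(3, 2))
```

For $n = 3$ and $d = 2$, the three powers of $r$ contribute $2^3$ each and the three transpositions contribute $2^1$ each, so both functions return $5/8$.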
In other words, if $P_n : S_n \to \mathbb{R}$ is the uniform distribution on the conjugacy class of $n$-cycles, then
$$a_d = \frac{1}{d^n} \sum_{\pi} (P_n * P_n)(\pi)\, d^{\,c(\pi)} \tag{17}$$
where $P_n * P_n$ is the convolution of $P_n$ with itself,
$$(P_n * P_n)(\pi) = \sum_{\eta \in S_n} P_n(\eta)\, P_n(\eta^{-1} \pi).$$
We will view (17) as an inner product over $S_n$,
$$a_d = \frac{n!}{d^n} \left\langle P_n * P_n,\ d^{\,c(\cdot)} \right\rangle, \tag{18}$$
where the inner product over a group $G$ of two functions $f_1, f_2 : G \to \mathbb{C}$ is defined as
$$\langle f_1, f_2 \rangle = \frac{1}{|G|} \sum_{g \in G} f_1(g)^* f_2(g).$$

To evaluate (18), we will expand $P_n$ and $d^{\,c(\cdot)}$ in the Fourier basis, as a sum of irreducible characters of $S_n$. Recall that the irreducible characters of a finite group are orthonormal under the inner product above and, additionally, convolution is transformed to pointwise product in the Fourier basis. In short, for two irreducible characters $\chi$ and $\psi$,
$$\chi * \psi = \begin{cases} \dfrac{|G|}{\chi(1)}\, \chi & \text{if } \chi = \psi, \\ 0 & \text{if } \chi \ne \psi, \end{cases} \quad \text{and} \quad \langle \chi, \psi \rangle = \begin{cases} 1 & \text{if } \chi = \psi, \\ 0 & \text{if } \chi \ne \psi. \end{cases} \tag{19}$$

Each character of the symmetric group is associated with a Young diagram, i.e., a partition $\lambda_1 \ge \lambda_2 \ge \cdots$ where $\sum_i \lambda_i = n$. In light of the Murnaghan-Nakayama rule (Lemma 10 of Appendix A), the uniform distribution $P_n$ over the conjugacy class $[r]$ is supported solely on hooks, i.e., Young diagrams consisting of a single ribbon of size $n$. Let $\Lambda_t$ denote the hook of height $t + 1$, in which $\lambda_1 = n - t$ for some $0 \le t < n$ and $\lambda_i = 1$ for $1 < i \le t + 1$. Let $\chi_t$ denote the corresponding character. Then $\dim \chi_t = \chi_t(1) = \binom{n-1}{t}$ and, again appealing to Lemma 10, we have $\chi_t([r]) = (-1)^t$. Applying (19) then gives
$$\langle P_n, \chi_t \rangle = \frac{(-1)^t}{n!} \quad \text{and} \quad \langle P_n * P_n, \chi_t \rangle = \frac{1}{n!} \cdot \frac{1}{\binom{n-1}{t}}. \tag{20}$$

To calculate the inner product $\langle d^{\,c(\cdot)}, \chi_t \rangle$, consider the following combinatorial representation of $S_n$. Let $\Sigma$ be the set of strings of length $n$ over the alphabet $\{1, \ldots, d\}$, and let $S_n$ act on $\Sigma$ in the natural way, by permuting the symbols in a given string.
Given a permutation $\pi$, the character $\chi_\Sigma(\pi)$ is the number of strings fixed by $\pi$. Since each of $\pi$'s cycles can be given an independent label in $\{1, \ldots, d\}$, we have $\chi_\Sigma(\pi) = d^{\,c(\pi)}$. It follows that $\langle d^{\,c(\cdot)}, \chi_t \rangle = \langle \chi_t, \chi_\Sigma \rangle$, the number of copies of $\Lambda_t$ appearing in the decomposition of $\Sigma$ into irreducible representations. To find this, we first decompose $\Sigma$ into a direct sum of combinatorial representations $\Sigma_{(n_1,\ldots,n_d)}$, consisting of strings where $i$ appears $n_i$ times for each $i \in \{1, \ldots, d\}$. Then $\langle \chi_{(n_1,\ldots,n_d)}, \chi_t \rangle$ is given by a Kostka number, defined as follows. First, sort the $n_i$ in decreasing order so that they form a Young diagram $N$. Then $K_{\Lambda_t N} = \langle \chi_{(n_1,\ldots,n_d)}, \chi_t \rangle$ is the number of semistandard tableaux of shape $\Lambda_t$ and content $N$: that is, the number of ways to fill $\Lambda_t$ with $n_i$ copies of $i$ for each $i \in \{1, \ldots, d\}$, where each row is nondecreasing and each column is strictly increasing.

Since $\Lambda_t$ is a hook, to specify a semistandard tableau with a given content it suffices to specify the content of the leftmost column. Since this column must be strictly increasing, its $t + 1$ entries must be distinct. If $N$ has $k$ rows, i.e., if $n_i \ne 0$ for $k$ values of $i$, then the first one must appear in the top cell, but the remaining $t$ cells can be chosen arbitrarily. Thus $K_{\Lambda_t N} = \binom{k-1}{t}$, and is 0 if $t \ge k$. There are $\binom{d}{k} \binom{n-1}{k-1}$ partitions $(n_1, \ldots, n_d)$ with $k$ nonzero $n_i$. Since $1 \le k \le \min(d, n)$, summing over $k$ then gives
$$\left\langle d^{\,c(\pi)}, \chi_t \right\rangle = \sum_{k} \binom{d}{k} \binom{n-1}{k-1} K_{\Lambda_t N} = \sum_{k=1}^{\min(d,n)} \binom{d}{k} \binom{n-1}{k-1} \binom{k-1}{t}. \tag{21}$$

We can now calculate the inner product $\langle P_n * P_n, d^{\,c(\cdot)} \rangle$. Combining (20) and (21) and summing over $t$, we have
$$\begin{aligned}
n! \left\langle P_n * P_n,\ d^{\,c(\cdot)} \right\rangle
&= n! \sum_{t=0}^{n-1} \langle P_n * P_n, \chi_t \rangle \left\langle d^{\,c(\cdot)}, \chi_t \right\rangle \\
&= \sum_{t=0}^{n-1} \sum_{k=1}^{\min(d,n)} \binom{d}{k} \binom{n-1}{k-1} \frac{\binom{k-1}{t}}{\binom{n-1}{t}} \\
&= \sum_{k=1}^{\min(d,n)} \binom{d}{k} \sum_{t=0}^{k-1} \binom{n-t-1}{n-k} \\
&= \sum_{k=1}^{\min(d,n)} \binom{d}{k} \binom{n}{k-1} \\
&= \binom{n+d}{n+1} - \binom{d}{n+1},
\end{aligned}$$
where $\binom{d}{n+1} = 0$ if $d \le n$. Combining this with (18) completes the proof.

3 The second moment in the unsymmetrized case

Squaring (7) (and, for aesthetic reasons, placing the conjugated $\rho$s in the second half of the expression and changing the names of the permutations) gives
$$X^2 = \sum_{\kappa,\lambda,\mu,\nu \vdash A} (-1)^{\kappa\lambda\mu\nu} \operatorname{tr}\!\left(\prod_i \rho_{i,\kappa i}\right) \operatorname{tr}\!\left(\prod_i \rho_{i,\lambda i}\right) \operatorname{tr}\!\left(\prod_i \rho^*_{i,\mu i}\right) \operatorname{tr}\!\left(\prod_i \rho^*_{i,\nu i}\right). \tag{22}$$

Now we take the expectation over the $\rho_{ij}$. As before, the only terms of this sum that contribute to this expectation are those in which each $\rho_{ij}$ appears an even number of times. Moreover, each $\rho_{ij}$ must appear an equal number of times unconjugated (in the first and second products) and conjugated (in the third and fourth products), since $\mathbb{E}_{\sigma}[\sigma \otimes \sigma] = 0$. In the Gaussian measure, this is because $\mathbb{E}[(\sigma^i_j)^2] = 0$ if $\sigma^i_j$ is chosen from the Gaussian distribution on $\mathbb{C}$. In the Haar measure, the same thing is true because the tensor square $\sigma \otimes \sigma$ of the defining representation of $U(d)$ contains no copies of the trivial representation.

For each term of (22), associated with a tuple $(\kappa, \lambda, \mu, \nu)$, we express the total number of occurrences of each $\rho_{ij}$ with an $n \times n$ matrix $C_{ij}$. In light of the discussion above, for the terms that contribute to the second moment we have $C_{ij} = 0$ if $A_{ij} = 0$, $C_{ij} \in \{2, 4\}$ if $A_{ij} = 1$, and $\sum_i C_{ij} = \sum_j C_{ij} = 2n$. We will denote these conditions as $C \vdash A$. As in [KKL+93, CRS03], we think of $C$ as a “double cycle cover” of the bipartite graph described by $A$. This graph has $n$ vertices on either side, and an edge between the $i$th vertex on the left and the $j$th vertex on the right if and only if $A_{ij} = 1$. Each vertex has degree 2 or 4 in $C$. Thus $C$ consists of cycles where each edge is covered twice, and possibly some isolated edges which are covered four times.
We then write the second moment as a sum, over all $C$, of the quadruples such that $(\kappa, \lambda, \mu, \nu) \vdash C$, where this denotes the following relation:
$$\begin{aligned}
(\kappa, \lambda, \mu, \nu) \vdash C \iff{} & \pi \vdash A \text{ for all } \pi \in \{\kappa, \lambda, \mu, \nu\}, \\
& |\{\pi \in \{\kappa, \lambda\} \mid j = \pi i\}| = |\{\pi \in \{\mu, \nu\} \mid j = \pi i\}| \text{ for all } i, j \in \{1, \ldots, n\}, \text{ and} \\
& |\{\pi \in \{\kappa, \lambda, \mu, \nu\} \mid j = \pi i\}| = C_{ij} \text{ for all } i, j \in \{1, \ldots, n\}.
\end{aligned}$$

In our discussion below, we will treat each $(\kappa, \lambda, \mu, \nu)$ as a “coloring” of $C$. Each double edge is colored $(\kappa, \mu)$, $(\kappa, \nu)$, $(\lambda, \mu)$, or $(\lambda, \nu)$, indicating some pair $\rho_{ij}, \rho^*_{ij}$ appearing in the first and third products, or the first and fourth, and so on. Each cycle in $C$ must alternate between $(\kappa, \mu)$ and $(\lambda, \nu)$ or between $(\kappa, \nu)$ and $(\lambda, \mu)$. The isolated edges in $C$ bear all four colors, indicating that some $\rho_{ij}$ appears in all four products.

We observe that for those tuples that contribute to the second moment, the parity $(-1)^{\kappa\lambda\mu\nu}$ is always 1.

Lemma 6. If $(\kappa, \lambda, \mu, \nu) \vdash C$ for some $C$, then $(-1)^{\kappa\lambda\mu\nu} = 1$.

Proof. Observe that $(-1)^{\kappa\lambda\mu\nu} = (-1)^{\pi}$ where $\pi = \kappa^{-1} \mu \lambda^{-1} \nu$. We claim that the constraints we describe above imply that $\pi = 1$. Consider a cycle $c$ of $C$ on the bipartite graph defined by $A$. We can view $\kappa, \lambda, \mu, \nu$ as one-to-one mappings from the $n$ vertices on the left side to the $n$ vertices on the right. If $c$ alternates between $(\kappa, \mu)$ and $(\lambda, \nu)$, then restricting to the vertices on the left side of $c$ we have $\kappa = \mu$ and $\lambda = \nu$. Similarly, if $c$ alternates between $(\kappa, \nu)$ and $(\lambda, \mu)$, then restricting to these vertices gives $\kappa = \nu$ and $\lambda = \mu$. Finally, for an isolated edge we have $\kappa = \lambda = \mu = \nu$ when restricted to its left endpoint. In all cases we have $\kappa^{-1} \mu \lambda^{-1} \nu = 1$.

Thus the second moment of the unsymmetrized estimator can be written
$$\mathbb{E}[X^2] = \sum_{C \vdash A}\ \sum_{(\kappa,\lambda,\mu,\nu) \vdash C} \mathbb{E}_{\{\rho_{ij}\}}\!\left[ \operatorname{tr}\!\left(\prod_i \rho_{i,\kappa i}\right) \operatorname{tr}\!\left(\prod_i \rho_{i,\lambda i}\right) \operatorname{tr}\!\left(\prod_i \rho^*_{i,\mu i}\right) \operatorname{tr}\!\left(\prod_i \rho^*_{i,\nu i}\right) \right]. \tag{23}$$

Many terms in this expectation can be evaluated using the same picture we gave for the expectation. Each pair $\rho_{ij}, \rho^*_{ij}$ creates a cupcap matching a pair of indices in one of the first two products with a pair in one of the second two products. However, the isolated edges in $C$ correspond to a fourth-order operator $\mathbb{E}_{\sigma}(\sigma \otimes \sigma \otimes \sigma^* \otimes \sigma^*)$ which we calculate in the following lemma.

Lemma 7. If $\sigma$ is chosen according to the Gaussian measure, then
$$\mathbb{E}_{\sigma}[\sigma \otimes \sigma \otimes \sigma^* \otimes \sigma^*]^{ikmp}_{j\ell nq} = \frac{1}{d^2} \left( \delta^{im} \delta_{jn} \delta^{kp} \delta_{\ell q} + \delta^{ip} \delta_{jq} \delta^{km} \delta_{\ell n} \right), \tag{24}$$
or diagrammatically,
$$\mathbb{E}_{\sigma}[\sigma \otimes \sigma \otimes \sigma^* \otimes \sigma^*] = \frac{1}{d^2} \left( D_1 + D_2 \right), \tag{25}$$
where $D_1$ denotes the diagram of two cupcaps joining the first factor to the third and the second to the fourth (the first term of (24)), and $D_2$ the diagram joining the first factor to the fourth and the second to the third (the second term of (24)). If $\sigma$ is chosen according to the Haar measure, then
$$\frac{1 - O(1/d)}{d^2} \left( D_1 + D_2 \right) \preceq \mathbb{E}_{\sigma}[\sigma \otimes \sigma \otimes \sigma^* \otimes \sigma^*] \preceq \frac{1 + O(1/d)}{d^2} \left( D_1 + D_2 \right), \tag{26}$$
where we write $A \preceq B$ if $B - A$ is positive semidefinite.

Proof. We have
$$\mathbb{E}_{\sigma}[\sigma \otimes \sigma \otimes \sigma^* \otimes \sigma^*]^{ikmp}_{j\ell nq} = \mathbb{E}\!\left[ \sigma^i_j\, \sigma^k_\ell\, (\sigma^m_n)^*\, (\sigma^p_q)^* \right].$$
In the Gaussian measure, if $i = m$, $j = n$, $k = p$, and $\ell = q$, but $i \ne k$ or $j \ne \ell$, this gives $\mathbb{E}\big[ |\sigma^i_j|^2 |\sigma^k_\ell|^2 \big] = 1/d^2$. If $i = p$, $j = q$, $k = m$, and $\ell = n$, but $i \ne k$ or $j \ne \ell$, we get the same result. Finally, if $i = k = m = p$ and $j = \ell = n = q$, we get $\mathbb{E}|\sigma^i_j|^4 = 2/d^2$.

In the Haar measure, analogous to Lemma 4 we will calculate the expectation of $\sigma \otimes \sigma \otimes \sigma^* \otimes \sigma^*$ by considering tensor powers of the defining representation $\sigma$ of $U(d)$. The tensor square $\sigma \otimes \sigma$ decomposes into symmetric and antisymmetric subspaces, each of which is irreducible: $\sigma \otimes \sigma = \tau_+ \oplus \tau_-$. The dimension of $\tau_\pm$ is $d_\pm = (d^2 \pm d)/2$. We can write the projection operators onto $\tau_\pm$ in terms of the exchange operator, which reverses the order of the tensor product, and the identity:
$$\Pi_\pm = \frac{1}{2} \left( \mathbb{1} \pm \mathrm{swap} \right).$$

Now writing $\sigma \otimes \sigma \otimes \sigma^* \otimes \sigma^* = (\tau_+ \oplus \tau_-) \otimes (\tau_+^* \oplus \tau_-^*)$, the expectation over $\sigma$ is the projection operator onto the trivial subspaces of $\tau_+ \otimes \tau_+^*$ and $\tau_- \otimes \tau_-^*$:
$$\mathbb{E}_{\sigma}[\sigma \otimes \sigma \otimes \sigma^* \otimes \sigma^*] = \Pi^{\tau_+ \otimes \tau_+^*}_{1} \oplus \Pi^{\tau_- \otimes \tau_-^*}_{1}. \tag{27}$$

Analogous to (13), we have the handsome
$$\Pi^{\tau_+ \otimes \tau_+^*}_{1} = (\Pi_+ \otimes \Pi_+) \cdot \frac{1}{d_+} D_1 \cdot (\Pi_+ \otimes \Pi_+) \tag{28}$$
and similarly for $\Pi^{\tau_- \otimes \tau_-^*}_{1}$. Putting these diagrams together with (27) gives
$$\begin{aligned}
\mathbb{E}_{\sigma}[\sigma \otimes \sigma \otimes \sigma^* \otimes \sigma^*]
&= (\Pi_+ \otimes \Pi_+) \cdot \frac{1}{d_+} D_1 \cdot (\Pi_+ \otimes \Pi_+) + (\Pi_- \otimes \Pi_-) \cdot \frac{1}{d_-} D_1 \cdot (\Pi_- \otimes \Pi_-) \\
&= \frac{1}{4 d_+} \left( D_1 + D_{12} + D_{21} + D_2 \right) + \frac{1}{4 d_-} \left( D_1 - D_{12} - D_{21} + D_2 \right) \\
&= \frac{1}{d^2 - 1} \left[ \left( D_1 + D_2 \right) - \frac{1}{d} \left( D_{12} + D_{21} \right) \right],
\end{aligned} \tag{29}$$
where $D_{12}$ and $D_{21}$ denote the two mixed diagrams, in which the cups of one pairing are joined to the caps of the other.

One can check that (29) is the projection operator onto the two-dimensional subspace spanned by the images of $D_1$ and $D_2$, that is, the vectors $u = \frac{1}{d} \sum_{i,j} (i, j, i, j)$ and $v = \frac{1}{d} \sum_{i,j} (i, j, j, i)$. In general, given two real-valued vectors $u$ and $v$ of norm 1, let $\Pi_u$ and $\Pi_v$ denote the projection operators onto the subspaces parallel to them, and let $\Pi_{u,v}$ be the projection operator onto the two-dimensional subspace they span. Then
$$\frac{1}{1 + |\langle u, v \rangle|} \left( \Pi_u + \Pi_v \right) \preceq \Pi_{u,v} \preceq \frac{1}{1 - |\langle u, v \rangle|} \left( \Pi_u + \Pi_v \right),$$
where we write $A \preceq B$ if $B - A$ is positive semidefinite. To see this, note that the eigenvectors of $\Pi_u + \Pi_v$ are $u \pm v$, with eigenvalues $\lambda_\pm = 1 \pm \langle u, v \rangle$, while their eigenvalues with respect to $\Pi_{u,v}$ are 1. In this case, we have $\langle u, v \rangle = 1/d$, $\Pi_u = \frac{1}{d^2} D_1$, and $\Pi_v = \frac{1}{d^2} D_2$. Thus (29) becomes
$$\frac{1}{1 + 1/d} \cdot \frac{1}{d^2} \left( D_1 + D_2 \right) \preceq \mathbb{E}_{\sigma}[\sigma \otimes \sigma \otimes \sigma^* \otimes \sigma^*] \preceq \frac{1}{1 - 1/d} \cdot \frac{1}{d^2} \left( D_1 + D_2 \right),$$
completing the proof.

The operator $D_1$ corresponds to the coloring $(\kappa, \mu), (\lambda, \nu)$, in which some $\rho_{ij}$ appears in the first and third products, and another $\rho'_{ij}$ appears in the second and fourth. Similarly, the operator $D_2$ corresponds to the coloring $(\kappa, \nu), (\lambda, \mu)$, in which $\rho_{ij}$ appears in the first and fourth products and $\rho'_{ij}$ appears in the second and third. Thus Lemma 7 tells us that, with a multiplicative cost of $1 + O(1/d)$ per isolated edge in the Haar measure, we can replace a given isolated edge in $C$ with an (unordered) pair of edges. This pair can be colored in two ways: with $(\kappa, \mu)$ and $(\lambda, \nu)$, or with $(\kappa, \nu)$ and $(\lambda, \mu)$.
Equivalently, we can “decouple” each quadruple product $\rho \otimes \rho \otimes \rho^* \otimes \rho^*$ into the sum of two combinations of tensor products,
$$\rho \otimes \rho \otimes \rho^* \otimes \rho^* \approx \rho' \otimes \rho'' \otimes \rho'^* \otimes \rho''^* + \rho' \otimes \rho'' \otimes \rho''^* \otimes \rho'^*, \tag{30}$$
where $\rho'$ and $\rho''$ are chosen independently.

Next we explore the set of $(\kappa, \lambda, \mu, \nu)$ corresponding to a given $C$, or equivalently the set of colorings of $C$. We will call a coloring pure if every edge in $C$ is colored $(\kappa, \mu)$ or $(\lambda, \nu)$. This corresponds to pairing the $\rho_{ij}$s in the first product in (23) with their conjugates in the third, and those in the second product with their conjugates in the fourth, and choosing the first term in (30) for each $\rho_{ij}$ which appears in all four products. Each cycle in $C$ has two pure colorings, and each isolated edge has one. Thus the number of pure colorings of $C$ is $2^{t(C)}$ where $t(C)$ is the number of cycles in $C$. A well-known bijection shows that $(\operatorname{perm} A)^2$ can be written as a sum over cycle covers of the bipartite graph defined by $A$,
$$(\operatorname{perm} A)^2 = \sum_{C \vdash A} 2^{t(C)}, \tag{31}$$
or equivalently that $(\operatorname{perm} A)^2$ is the total number of pure colorings. Combining this with (9), we have
$$\mathbb{E}[X]^2 = \sum_{C \vdash A}\ \sum_{\substack{(\kappa,\lambda,\mu,\nu) \vdash C \\ \text{pure}}} 1. \tag{32}$$

On the other hand, we can associate each coloring with a pure one, say by replacing the color $(\kappa, \nu)$ with $(\kappa, \mu)$ and $(\lambda, \mu)$ with $(\lambda, \nu)$ on each edge. If this converts a tuple of permutations $(\kappa, \lambda, \mu', \nu')$ to a tuple $(\kappa, \lambda, \mu, \nu)$ corresponding to a pure coloring, we will write $(\mu', \nu') \vdash (\kappa, \lambda, \mu, \nu)$. Then, at the risk of some notational overload, we write (23) as a sum over pure colorings:
$$\mathbb{E}[X^2] = \sum_{C \vdash A}\ \sum_{\substack{(\kappa,\lambda,\mu,\nu) \vdash C \\ \text{pure}}}\ \sum_{(\mu',\nu') \vdash (\kappa,\lambda,\mu,\nu)} \mathbb{E}_{\{\rho_{ij}\}}\!\left[ \operatorname{tr}\!\left(\prod_i \rho_{i,\kappa i}\right) \operatorname{tr}\!\left(\prod_i \rho_{i,\lambda i}\right) \operatorname{tr}\!\left(\prod_i \rho^*_{i,\mu i}\right) \operatorname{tr}\!\left(\prod_i \rho^*_{i,\nu i}\right) \right].$$
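The counting identity (31) can be checked by brute force on small matrices. In the sketch below, a cycle cover is represented as the multiset union of two perfect matchings $(\kappa, \lambda)$, so its edge multiplicities are half of those in $C$: isolated edges have multiplicity 2 and cycle edges multiplicity 1. The code groups ordered pairs of matchings by the cover they produce and confirms that each cover arises from exactly $2^{t(C)}$ pairs. The representation and all function names are illustrative assumptions.

```python
from collections import Counter
from itertools import permutations


def matchings(a):
    """All permutations pi with a[i][pi[i]] == 1 for every row i."""
    n = len(a)
    return [p for p in permutations(range(n))
            if all(a[i][p[i]] for i in range(n))]


def cycles_in_cover(edges, n):
    """Number of cycles t(C): connected components that are not isolated edges.

    edges is an iterable of (left vertex i, right vertex j) pairs.
    """
    parent = list(range(2 * n))  # left vertices 0..n-1, right vertices n..2n-1

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    for i, j in edges:
        parent[find(i)] = find(n + j)
    # An isolated edge is a component with exactly two vertices; a cycle in a
    # bipartite graph has at least four.
    sizes = Counter(find(v) for v in range(2 * n))
    return sum(1 for size in sizes.values() if size > 2)


def check_cycle_cover_identity(a):
    """Return ((perm a)^2, sum over covers of 2^t(C)), checking each cover."""
    n = len(a)
    ms = matchings(a)
    pairs_per_cover = Counter()
    for p in ms:
        for q in ms:
            cover = Counter((i, p[i]) for i in range(n))
            cover.update((i, q[i]) for i in range(n))
            pairs_per_cover[frozenset(cover.items())] += 1
    rhs = 0
    for key, pairs in pairs_per_cover.items():
        t = cycles_in_cover([edge for edge, _ in key], n)
        assert pairs == 2 ** t  # each cycle can be split between p and q in 2 ways
        rhs += 2 ** t
    return len(ms) ** 2, rhs


print(check_cycle_cover_identity([[1, 1, 1], [1, 1, 1], [1, 1, 1]]))
```

For the all-ones $3 \times 3$ matrix the permanent is 6, so both sides equal 36: six covers made only of isolated edges contribute 1 each, and fifteen covers containing a single cycle contribute 2 each.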
Now, analogous to [KKL+93], we bound the critical ratio $\mathbb{E}[X^2]/\mathbb{E}[X]^2$ as the maximum ratio between corresponding terms in the two sums above for $\mathbb{E}[X^2]$ and $\mathbb{E}[X]^2$, associated with some pure coloring of some cycle cover. The worst possible case is when $C$ consists entirely of isolated edges, since in that case we can switch the colors on each edge independently, giving $2^n$ colorings for the single pure one.

We can parametrize these $2^n$ colorings by strings $s \in \{0,1\}^n$, where $s_i = 0$ if the coloring of the $i$th edge is pure, and 1 if its colors are switched. This produces diagrams such as those shown in Fig. 2, weaving a total of $8n$ vertices together. As in our calculation of the expectation, the corresponding product of traces is $d^{c-2n}$, where $c$ is the number of loops in this diagram. Both the pure and “completely impure” colorings $0^n$ and $1^n$ (where the $\rho$s in the first product are all paired with those in the third or fourth respectively, and those in the second product are all paired with those in the fourth or third) have $2n$ loops. In general, the number of loops is $2n$ minus the number of times $s$ switches back and forth between 0 and 1 when $s$ is arranged cyclically. Specifically, there are two loops of length 4 for each $i$ where $s_i = s_{(i+1) \bmod n}$, and a loop of length 8 for each $i$ where $s_i \neq s_{(i+1) \bmod n}$.

Figure 2: Terms corresponding to a given cycle cover $C$, where the $\rho$s in the gray box are conjugated and $n = 5$. Left, a pure coloring, which has $2n$ loops. Middle, a maximally impure coloring, which also has $2n$ loops. Right, a mixed coloring corresponding to the string $s = 00111$. A careful inspection shows that it has 8 loops: 6 of length 4, and 2 of length 8.

For each even $i$ with $0 \le i \le n$, there are $2\binom{n}{i}$ strings which switch back and forth $i$ times.
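The count of strings by number of cyclic switches, and the closed form obtained when each switch costs a factor $1/d$, can be confirmed by exhaustive enumeration for small $n$. This is a minimal sketch with hypothetical function names; it only enumerates binary strings and does not build the tensor diagrams themselves.

```python
from fractions import Fraction
from itertools import product


def cyclic_switches(s):
    """Number of positions i with s[i] != s[(i+1) % n], reading s cyclically."""
    n = len(s)
    return sum(s[i] != s[(i + 1) % n] for i in range(n))


def switch_histogram(n):
    """Map i -> number of strings in {0,1}^n with exactly i cyclic switches."""
    hist = {}
    for s in product((0, 1), repeat=n):
        i = cyclic_switches(s)
        hist[i] = hist.get(i, 0) + 1
    return hist


def weighted_sum(n, d):
    """Sum over all s of d^(c - 2n), where c = 2n - (number of switches)."""
    return sum(Fraction(1, d) ** cyclic_switches(s)
               for s in product((0, 1), repeat=n))
```

For $n = 5$ the histogram is $\{0: 2,\ 2: 20,\ 4: 10\}$, matching $2\binom{5}{i}$, and `weighted_sum(n, d)` agrees exactly with $(1+1/d)^n + (1-1/d)^n$ because the odd terms of the two binomial expansions cancel.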
Therefore, combined with Lemma 7, we have (for a cycle cover $C$ consisting of $n$ isolated edges)

$$\begin{aligned}
\sum_{(\mu',\nu') \vdash (\kappa,\lambda,\mu,\nu)} \mathbb{E}_{\{\rho_{ij}\}} \operatorname{tr}\Big(\prod_i \rho_{i,\kappa i}\Big) \operatorname{tr}\Big(\prod_i \rho_{i,\lambda i}\Big) \operatorname{tr}\Big(\prod_i \rho^*_{i,\mu i}\Big) \operatorname{tr}\Big(\prod_i \rho^*_{i,\nu i}\Big)
&= \left(1 + O\!\left(\frac{1}{d}\right)\right)^{n} \times 2\sum_{i=0,2,4,\ldots}^{n} \binom{n}{i} d^{-i} \\
&= \left(1 + O\!\left(\frac{1}{d}\right)\right)^{n} \times \left[\left(1 + \frac{1}{d}\right)^{n} + \left(1 - \frac{1}{d}\right)^{n}\right].
\end{aligned}$$

In the Gaussian measure, this expression is exact if we remove the prefactor $(1 + O(1/d))^n$; but in any case, we get a bound $(1 + O(1/d))^n$ in either measure. Combining this with (32) completes the first part of the proof of Theorem 1.

4 The second moment in the symmetrized case

Our analysis of the second moment in the symmetrized case proceeds in two steps. We begin, as with the unsymmetrized case, by diagrammatically analyzing the relevant traces. The result is a sum over double cycle covers weighted by an exponential generating function $\sum_\pi d^{c(\pi)}$ over a subset of the symmetric group $S_{2n}$. We then show that an allied quantity can be analyzed, as in Lemma 5, by harmonic analysis on $S_{2n}$.

Before stating the main lemmas of this section, we introduce some further notation. As in (31), $t(C)$ denotes the number of cycles in $C$. As before, we let $r$ denote the rotation $(1, 2, \ldots, n) \in S_n$. The expression $\pi^\sigma = \sigma^{-1}\pi\sigma$ denotes conjugation, and, for two elements $\pi, \sigma \in S_n$, we let $(\pi,\sigma)$ denote the element of $S_{2n}$ given by applying $\pi$ and $\sigma$ to the first $n$ and last $n$ elements of $\{1, \ldots, 2n\}$, respectively. Finally, we let $w_k$ denote the involution $(1\ n{+}1)(2\ n{+}2)\cdots(k\ n{+}k)$, with the convention that $w_0$ is the identity. We can then write $\mathbb{E}[X_s^2]$ in terms of the following quantity:

$$a_d^{(2)} = \sum_{k=0}^{n} \binom{n}{k} \frac{1}{d^{2n}}\, \mathbb{E}_{\alpha,\beta,\gamma,\delta}\, d^{\,c\left((r^{-1},r^{-1})^{(\alpha,\beta)}\, w_k\, (r,r)^{(\gamma,\delta)}\, w_k\right)}.$$

Lemma 8. If the $\rho_{ij}$ are drawn according to the Gaussian or Haar measure,

$$\frac{\mathbb{E}[X_s^2]}{\mathbb{E}[X_s]^2} \le \left(1 + O\!\left(\frac{1}{d}\right)\right)^{n} \frac{a_d^{(2)}}{a_d^2}.$$
We delay the proof of Lemma 8 just long enough for some comforting words regarding the major remaining obstacle: estimating $a_d^{(2)}$. While we do not have a simple, exact expression for $a_d^{(2)}$, we can control a larger quantity,

$$\tilde a_d^{(2)} = \sum_{k=0}^{n} \binom{n}{k}^{2} \frac{1}{d^{2n}}\, \mathbb{E}_{\alpha,\beta,\gamma,\delta}\, d^{\,c\left((r^{-1},r^{-1})^{(\alpha,\beta)}\, w_k\, (r,r)^{(\gamma,\delta)}\, w_k\right)},$$

in which the $k$th term of the sum is graced with an extra factor of $\binom{n}{k}$. With this reweighting we can analyze $\tilde a_d^{(2)}$ in terms of the Fourier expansions of the class function $d^{c(\cdot)}$, determined by the Kostka numbers, and the convolution square of the conjugacy class $\{(r,r)^\sigma \mid \sigma \in S_{2n}\}$, determined by the Murnaghan-Nakayama rule. This results in the following bound.

Lemma 9. With notation as above,

$$\frac{1}{\binom{n}{n/2}} \cdot \tilde a_d^{(2)} \le a_d^{(2)} \le \tilde a_d^{(2)}
\qquad\text{and}\qquad
\frac{1}{d^{2n}} \binom{2n}{n} \binom{2n+d-1}{2n} \le \tilde a_d^{(2)} \le \frac{4n^2}{d^{2n}} \binom{2n}{n} \binom{2n+d-1}{2n}.$$

Combining this with Lemmas 8 and 5 completes the proof of (4) and (5) in Theorem 1. We return now to the proofs of these two lemmas.

Proof of Lemma 8. Squaring (8), the second moment of the symmetrized estimator can be written

$$\mathbb{E}[X_s^2] = \sum_{C}\ \sum_{(\kappa,\lambda,\mu,\nu) \vdash C} \mathbb{E}_{\alpha,\beta,\gamma,\delta}\, \mathbb{E}_{\{\rho_{ij}\}} \operatorname{tr}\Big(\prod_i \rho_{\alpha i,\kappa\alpha i}\Big) \operatorname{tr}\Big(\prod_i \rho_{\beta i,\lambda\beta i}\Big) \operatorname{tr}\Big(\prod_i \rho^*_{\gamma i,\mu\gamma i}\Big) \operatorname{tr}\Big(\prod_i \rho^*_{\delta i,\nu\delta i}\Big). \tag{33}$$

Consider now a term of (33) corresponding to a tuple $(\kappa,\lambda,\mu,\nu)$, of the form

$$\mathbb{E}_{\alpha,\beta,\gamma,\delta} \operatorname{tr}\Big(\prod_i \rho_{\alpha i,\kappa\alpha i}\Big) \operatorname{tr}\Big(\prod_i \rho_{\beta i,\lambda\beta i}\Big) \operatorname{tr}\Big(\prod_i \rho^*_{\gamma i,\mu\gamma i}\Big) \operatorname{tr}\Big(\prod_i \rho^*_{\delta i,\nu\delta i}\Big). \tag{34}$$

In light of Lemma 7 (cf. (30)), we may “decouple” any four appearances of the same $\rho_{ij}$, resulting in a sum of terms in which no $\rho$ appears more than twice. For this reason, we begin our analysis with the extra assumption that each $\rho_{ij}$ appears exactly twice. For notational convenience, let us temporarily refer to the $2n$ distinct $\rho_{ij}$ appearing in (34) simply by $\rho_1, \rho_2, \ldots, \rho_{2n}$, listed in the natural order given by $\kappa$ and $\lambda$ (e.g., $\rho_i = \rho_{i,\kappa i}$ and $\rho_{n+i} = \rho_{i,\lambda i}$ for $i \le n$).
For a tuple $(\alpha,\beta,\gamma,\delta)$, then, the cupcaps of Eq. (13) introduce edges between conjugate appearances of the same $\rho_i$, as shown in Figure 3(a); any two indices attached by an edge are constrained to be equal. With this convention, the permutations $\mu$ and $\nu$ determine a permutation $w \in S_{2n}$ given by the ordering of the conjugate appearances of the $\rho_i$ (when $\alpha = \beta = \gamma = \delta = 1$). The contraction determined by $w$ and a particular $(\alpha,\beta,\gamma,\delta)$ is combinatorial in the sense that it merely constrains families of indices (among the $[\rho_i]^t_s$ and their conjugates) to be equal. Recalling that each cupcap contributes a factor of $1/d$ and each cycle permits $d$ different settings of the indices it contains, the value of this contraction is determined by the cycle structure of the permutation

$$(r^{-1},r^{-1})^{(\alpha^{-1},\beta^{-1})}\, w^{-1}\, (r,r)^{(\gamma,\delta)}\, w;$$

see Figure 3(b).

Figure 3: Contractions in the second moment computation. (a) Cupcaps and rotations. (b) Symmetrization induces conjugation.

In particular, we may write the quantity of (34) as

$$\frac{1}{d^{2n}}\, \mathbb{E}_{\alpha,\beta,\gamma,\delta}\, d^{\,c\left((r^{-1},r^{-1})^{(\alpha,\beta)}\, w^{-1}\, (r,r)^{(\gamma,\delta)}\, w\right)},$$

where, as before, $c(\pi)$ denotes the number of cycles in the permutation $\pi$. As we are interested in the expectation over all rearrangements determined by $\alpha$, $\beta$, $\gamma$, and $\delta$, the only relevant feature of the permutation $w$ is

$$k = k_{\kappa,\nu} = \big|\, w\{1,\ldots,n\} \cap \{n+1,\ldots,2n\} \,\big| = \big|\, \{(i,\kappa(i))\} \cap \{(i,\nu(i))\} \,\big|, \tag{35}$$

the number of $\rho_i$ carried from the “$\kappa$-block” to the “$\nu$-block.” Defining $w_k = (1\ n{+}1)\cdots(k\ n{+}k)$, we may rewrite the expectation of (34) as

$$\frac{1}{d^{2n}}\, \mathbb{E}_{\alpha,\beta,\gamma,\delta}\, d^{\,c\left((r^{-1},r^{-1})^{(\alpha,\beta)}\, w_k\, (r,r)^{(\gamma,\delta)}\, w_k\right)}.$$
As in Section 3, for a given double cycle cover $C$, a coloring $(\kappa,\lambda,\mu,\nu) \vdash C$ is determined by selecting, for each nontrivial cycle $c$ of $C$, whether $c$'s colors alternate between $(\kappa,\mu)$ and $(\lambda,\nu)$ or $(\kappa,\nu)$ and $(\lambda,\mu)$, and the parity of this coloring. In light of the decoupling equation (30), we may treat each isolated edge as an “unordered pair” of edges that can be colored in two possible ways, with $(\kappa,\mu)$ and $(\lambda,\nu)$ or $(\kappa,\nu)$ and $(\lambda,\mu)$. Recall that in the case of Haar measure, this introduces a factor $1 + O(1/d)$ for each isolated edge, giving the factor $(1 + O(1/d))^n$. Observe now that the value of $k$ determined in Eq. (35) is unaffected by the choice of parity in a nontrivial cycle. The other choices described above (determining the colors involved in a nontrivial cycle or isolated edge) have the effect of exchanging a family of $\rho_{ij}$ in the $\mu$-block with a family in the $\nu$-block. In particular, focusing on the portion of the second moment corresponding to a particular double cycle cover $C$, we have

$$\sum_{(\kappa,\lambda,\mu,\nu) \vdash C} \mathbb{E}_{\alpha,\beta,\gamma,\delta} \operatorname{tr}\Big(\prod_i \rho_{\alpha i,\kappa\alpha i}\Big) \operatorname{tr}\Big(\prod_i \rho_{\beta i,\lambda\beta i}\Big) \operatorname{tr}\Big(\prod_i \rho^*_{\gamma i,\mu\gamma i}\Big) \operatorname{tr}\Big(\prod_i \rho^*_{\delta i,\nu\delta i}\Big)$$

$$= \sum_{(\kappa,\lambda,\mu,\nu) \vdash C} \frac{1}{d^{2n}}\, \mathbb{E}_{\alpha,\beta,\gamma,\delta}\, d^{\,c\left((r^{-1},r^{-1})^{(\alpha,\beta)}\, w_{\kappa,\nu}\, (r,r)^{(\gamma,\delta)}\, w_{\kappa,\nu}\right)} \tag{36}$$

$$\le \left(1 + O\!\left(\frac{1}{d}\right)\right)^{n} 2^{t(C)} \sum_{k=0}^{n} \binom{n}{k} \frac{1}{d^{2n}}\, \mathbb{E}_{\alpha,\beta,\gamma,\delta}\, d^{\,c\left((r^{-1},r^{-1})^{(\alpha,\beta)}\, w_k\, (r,r)^{(\gamma,\delta)}\, w_k\right)} \tag{37}$$

$$= \left(1 + O\!\left(\frac{1}{d}\right)\right)^{n} 2^{t(C)}\, a_d^{(2)}. \tag{38}$$

Summing over all cycle covers $C \vdash A$ and applying (31) completes the proof. For the Gaussian measure, the same proof applies without the factor $(1 + O(1/d))^n$.

We return to the proof of Lemma 9.

Proof of Lemma 9. The inequality $\frac{1}{\binom{n}{n/2}}\, \tilde a_d^{(2)} \le a_d^{(2)} \le \tilde a_d^{(2)}$ is immediate from the fact that the terms of the sums defining these quantities are positive. We introduce some further notation: for a permutation $\pi \in S_{2n}$, we define

$$\pi^{\uparrow} = \{\, i \mid i \in \{1,\ldots,n\},\ \pi i \in \{n+1,\ldots,2n\} \,\} \quad\text{and}\quad \pi^{\downarrow} = \{\, i \mid i \in \{n+1,\ldots,2n\},\ \pi i \in \{1,\ldots,n\} \,\}.$$

Then $|\pi^{\uparrow}| = |\pi^{\downarrow}|$ and, if $\pi$ is selected uniformly in $S_{2n}$,

$$\Pr\big[\, |\pi^{\uparrow}| = k \,\big] = \binom{n}{k}^{2} \Big/ \binom{2n}{n}.$$

Observe also that if $\alpha$, $\beta$, $\gamma$, and $\delta$ are chosen uniformly from $S_n$, the element $(\gamma,\delta)\, w_k\, (\alpha,\beta)$ is uniform in the set $\{\pi \mid |\pi^{\uparrow}| = k\}$. Recalling that $d^{c(\cdot)}$ is a class function,

$$\begin{aligned}
\frac{1}{\binom{2n}{n}} \cdot \tilde a_d^{(2)}
&= \frac{1}{\binom{2n}{n}} \sum_k \binom{n}{k}^{2} \frac{1}{d^{2n}}\, \mathbb{E}_{\alpha,\beta,\gamma,\delta}\, d^{\,c\left((r^{-1},r^{-1})^{(\alpha,\beta)}\, w_k\, (r,r)^{(\gamma,\delta)}\, w_k\right)} \\
&= \frac{1}{\binom{2n}{n}} \sum_k \binom{n}{k}^{2} \frac{1}{d^{2n}}\, \mathbb{E}_{\alpha,\beta,\gamma,\delta}\, d^{\,c\left((r^{-1},r^{-1})\,(\alpha,\beta)^{-1}\, w_k\, (\gamma,\delta)^{-1}\, (r,r)\, (\gamma,\delta)\, w_k\, (\alpha,\beta)\right)} \\
&= \frac{1}{d^{2n}}\, \mathbb{E}_{\pi}\, d^{\,c\left((r^{-1},r^{-1})\,(r,r)^{\pi}\right)}
= \frac{1}{d^{2n}}\, \mathbb{E}_{\pi}\, \mathbb{E}_{\sigma}\, d^{\,c\left((r,r)^{\sigma}\,(r,r)^{\pi}\right)},
\end{aligned} \tag{39}$$

where $\pi$ and $\sigma$ are chosen uniformly at random from $S_{2n}$. Here we use the fact that any element of $S_{2n}$ (in this case, $(r,r)$) is in the same conjugacy class as its inverse. Defining $P_{n,n}$ to be the uniform distribution on the conjugacy class $[(r,r)] = \{(r,r)^{\pi} \mid \pi \in S_{2n}\} \subset S_{2n}$, we may express the quantity above as an inner product

$$\frac{1}{\binom{2n}{n}}\, \tilde a_d^{(2)} = \frac{1}{d^{2n}}\, (2n)!\, \big\langle d^{c(\cdot)},\ P_{n,n} * P_{n,n} \big\rangle. \tag{40}$$

As in the proof of Lemma 5, we compute this inner product by determining the Fourier expansions of the class functions $d^{c(\cdot)}$ and $P_{n,n}$. By the Murnaghan-Nakayama rule, $\chi_\lambda(n,n) = 0$ unless the tableau $\lambda$ can be expressed as the union of two $n$-ribbon tiles. Any such tableau has rank (the number of cells on the diagonal) no more than two and can be conveniently expressed in terms of its characteristics: defining $a_i$ and $b_i$ to be the number of cells below and to the right of the $i$th box of the diagonal, respectively, we use the notation $\tau = (b_1, b_2, \ldots, b_r \mid a_1, a_2, \ldots, a_r)$ to describe the tableau (see Figure 4).

Figure 4: A Young tableau decomposed into two $n$-ribbon tiles.

If $\chi_\tau(n,n)$ is nonzero, so that $\tau$ can be written as the union of two $n$-ribbons, we find (again appealing to the Murnaghan-Nakayama rule) that either

• $\tau = (b_1, b_2 \mid a_1, a_2)$ has rank two, $a_1 + b_2 + 1 = a_2 + b_1 + 1 = n$, and $\chi_\tau(n,n) = \pm 2$, or
• $\tau = (b_1 \mid a_1)$ has rank one and $\chi_\tau(n,n) = \pm 1$.

We let $T_n$ denote the family of representations of $S_{2n}$ described above; note that $|T_n| \le n^2$. Observe that for each $\tau \in T_n$,

$$\langle P_{n,n}, \chi_\tau \rangle = \frac{1}{(2n)!}\, \chi_\tau(n,n)$$

(where $\chi_\tau(n,n) \in \{\pm 1, \pm 2\}$). Recalling that $\chi * \chi = \frac{|G|}{\chi(1)}\, \chi$ for any irreducible character $\chi$ of a group $G$, we may express

$$\langle P_{n,n} * P_{n,n},\ \chi_\tau \rangle = \frac{1}{(2n)!}\, \frac{\chi_\tau(n,n)^2}{\dim \tau}.$$

As discussed in the proof of Lemma 5,

$$\big\langle d^{c(\cdot)}, \chi_\tau \big\rangle = \langle \chi_\Sigma, \chi_\tau \rangle = \sum_{\substack{(\rho_1,\ldots,\rho_d) \\ \sum \rho_i = 2n}} K_{\tau\rho},$$

where $\chi_\Sigma$ is the character of the permutation representation given by the action of $S_{2n}$ on the set $\{(a_1, \ldots, a_{2n}) \mid a_i \in \{1, \ldots, d\}\}$, and $K_{\tau\rho}$ is the Kostka number, equal to the number of semistandard tableaux of shape $\tau$ with $\rho_i$ appearances of the number $i$. Then

$$\big\langle P_{n,n} * P_{n,n},\ d^{c(\cdot)} \big\rangle = \sum_\tau \langle P_{n,n} * P_{n,n}, \chi_\tau \rangle \big\langle d^{c(\cdot)}, \chi_\tau \big\rangle = \frac{1}{(2n)!} \sum_{\tau \in T_n} \chi_\tau(n,n)^2 \sum_{\substack{(\rho_1,\ldots,\rho_d) \\ \sum \rho_i = 2n}} \frac{K_{\tau\rho}}{\dim \tau}. \tag{41}$$

Note that for each $\tau \in T_n$, $\chi_\tau(n,n)^2 \le 4$ and $K_{\tau\rho} \le \dim \tau$, as $\dim \tau$ is the number of semistandard tableaux of shape $\tau$ with distinct entries in any totally ordered set. Thus,

$$\big\langle P_{n,n} * P_{n,n},\ d^{c(\cdot)} \big\rangle \le \frac{4}{(2n)!} \sum_{\tau \in T_n} \binom{2n+d-1}{2n} \le \frac{4}{(2n)!}\, |T_n| \binom{2n+d-1}{2n} \le \frac{4n^2}{(2n)!} \binom{2n+d-1}{2n}.$$

On the other hand, each term in the sum of (41) is positive; thus

$$\big\langle P_{n,n} * P_{n,n},\ d^{c(\cdot)} \big\rangle \ge \langle P_{n,n} * P_{n,n}, \chi_{\mathbb{1}} \rangle \big\langle d^{c(\cdot)}, \chi_{\mathbb{1}} \big\rangle = \frac{1}{(2n)!} \binom{2n+d-1}{2n}. \tag{42}$$

We conclude that

$$\frac{1}{(2n)!} \binom{2n+d-1}{2n} \le \big\langle P_{n,n} * P_{n,n},\ d^{c(\cdot)} \big\rangle \le \frac{4n^2}{(2n)!} \binom{2n+d-1}{2n},$$

which, in conjunction with (40), completes the proof of Lemma 9.

Now we apply these lemmas to prove an upper bound on the critical ratio $\mathbb{E}[X_s^2]/\mathbb{E}[X_s]^2$.
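One ingredient of the proof of Lemma 9, the distribution of $|\pi^{\uparrow}|$ for uniform $\pi \in S_{2n}$, is easy to confirm by exhaustive enumeration when $n$ is small. The following sketch uses 0-indexed points (so the first block is $\{0, \ldots, n-1\}$) and hypothetical function names; it checks only this counting fact, not the Fourier-analytic part of the argument.

```python
from itertools import permutations


def up_count(pi, n):
    """|pi^up|: points of the first block {0..n-1} sent into the second block."""
    return sum(1 for i in range(n) if pi[i] >= n)


def down_count(pi, n):
    """|pi^down|: points of the second block {n..2n-1} sent into the first block."""
    return sum(1 for i in range(n, 2 * n) if pi[i] < n)


def up_distribution(n):
    """counts[k] = number of pi in S_{2n} with |pi^up| = k."""
    counts = [0] * (n + 1)
    for pi in permutations(range(2 * n)):
        k = up_count(pi, n)
        # The two blocks exchange equally many points.
        assert k == down_count(pi, n)
        counts[k] += 1
    return counts
```

Dividing `counts[k]` by $(2n)!$ gives $\binom{n}{k}^2 / \binom{2n}{n}$, the hypergeometric probability that a uniformly random $n$-subset of $\{1, \ldots, 2n\}$ (the image of the first block) meets the second block in exactly $k$ points.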
If $d$ is constant, which is the only case for which we have an efficient algorithm to compute $X_s$ [Bar00], our bound is not very inspiring. If $n \ge d$, combining Lemmas 5, 8, and 9 gives

$$\frac{\mathbb{E}[X_s^2]}{\mathbb{E}[X_s]^2} \le 4n^2 \binom{2n}{n} \binom{2n+d-1}{2n} \Big/ \binom{n+d}{n+1}^{2} = O(n^3/d) \binom{2n+2d}{n+d} \Big/ \binom{2n+2d}{d} = 2^{2n}\, n^{-d+O(1)},$$

assuming that $d = O(1)$. This proves (4) in Theorem 1, and suggests that $d$ needs to grow with $n$ to give a good estimator.

On the other hand, when $d$ grows fast enough with $n$, we find that the critical ratio behaves quite well. Combining Lemma 9 with the lower bound (15) gives

$$\frac{\mathbb{E}[X_s^2]}{\mathbb{E}[X_s]^2} \le \frac{4\, n!^2}{d^{2n}} \binom{2n}{n} \binom{2n+d-1}{2n} = \frac{4}{d^{2n}}\, \frac{(2n+d-1)!}{(d-1)!} = 4 \left(1 + \frac{1}{d}\right) \cdots \left(1 + \frac{2n-1}{d}\right) \le 4e^{4n^2/d},$$

completing the proof of (5) in Theorem 1. The last step uses $1 + x \le e^x$ together with $\sum_{j=1}^{2n-1} j = n(2n-1) \le 4n^2$.

In the critical case where $d = O(1)$, the upper bound of $2^{2n} n^{-d+O(1)}$ we establish above is tight up to the factor introduced by our “approximation” of $a_d^{(2)}$ by $\tilde a_d^{(2)}$, that is, a factor of $\binom{n}{n/2}$. In particular, even for the identity matrix, we can establish a $2^n n^{-d+O(1)}$ lower bound on the critical ratio:

Theorem (Restatement of Theorem 2). Let $A$ be the $n \times n$ identity matrix and $d$ a constant. Then

$$\frac{\mathbb{E}[X_s^2]}{\mathbb{E}[X_s]^2} = \Omega\!\left(\frac{2^n}{n^d}\right) \qquad\text{and}\qquad \frac{\mathbb{E}[X_s^2]}{\mathbb{E}[X_s]^2} = \left(1 - O\!\left(\frac{1}{d}\right)\right)^{n} \Omega\!\left(\frac{2^n}{n^d}\right),$$

when the $\rho_{ij}$ are distributed according to the Gaussian or Haar measure, respectively.

Proof of Theorem 2. Let $d$ be a constant and $A$ the $n \times n$ identity matrix. Then $\operatorname{perm} A = \operatorname{perm}^2 A = 1$ and, from Lemma 5,

$$\mathbb{E}[X_s] = a_d = \frac{1}{d^n} \binom{n+d}{n+1}.$$

As for the second moment, the only nontrivial term in the sum (33) corresponds to the case where the permutations $\kappa$, $\lambda$, $\mu$, and $\nu$ are the identity. In this case each $\rho_{ij}$ appears four times and there are precisely $\binom{n}{k}$ terms of (36) for which $w_{\kappa,\nu} = w_k$; in particular, in this case the inequality of (37) is an equality.
Recalling Lemma 7, we conclude that $\mathbb{E}[X_s^2] = a_d^{(2)}$ and $\mathbb{E}[X_s^2] \ge (1 - O(1/d))^n\, a_d^{(2)}$ when the $\rho_{ij}$ have Gaussian measure and Haar measure, respectively. For constant $d$, Lemma 9 and the expression for $a_d$ above give

$$\frac{a_d^{(2)}}{a_d^2} \ge \frac{\tilde a_d^{(2)}}{\binom{n}{n/2}\, a_d^2} \ge \frac{\binom{2n}{n} \binom{2n+d-1}{2n}}{\binom{n}{n/2} \binom{n+d}{n+1}^{2}}$$

and, considering that $\binom{\ell}{\ell/2} = 2^{\ell}/\Theta(\sqrt{\ell})$ and $\binom{2n+d-1}{2n} \ge \binom{n+d}{n+1}$,

$$\frac{a_d^{(2)}}{a_d^2} \ge \frac{2^{2n}}{2^{n}\, O(\sqrt{n})\, \binom{n+d}{n+1}} = \Omega\!\left(\frac{2^n}{n^d}\right).$$

The statement of the theorem follows.

5 Estimators based on the Frobenius norm

In this section, we prove Theorem 3 by relating the moments of Frobenius estimators, $X_{\mathrm{Frob}} = \|\det M\|^2$ and $X_{\mathrm{Frob},s} = \|\operatorname{sdet} M\|^2$, to those of the trace-squared estimators we studied above. As Fig. 5 shows, the diagrams corresponding to the expectations and second moments of these estimators differ from those of their counterparts by a small number of local moves.

Let $Q$ be the product of some sequence of $\rho_{ij}$. Then all we have to do is change our previous contraction,

$$|\operatorname{tr} Q|^2 = Q^i_{\ i}\, (Q^*)^j_{\ j},$$

where the “output” of each $Q$ is connected to its “input,” to

$$\|Q\|^2 = \operatorname{tr} QQ^\dagger = Q^i_{\ j}\, (Q^\dagger)^j_{\ i} = Q^i_{\ j}\, (Q^*)^i_{\ j}.$$

In this contraction, we connect the output of each $Q$ to the output of the corresponding $Q^*$, and similarly wire their inputs together. The cupcaps, resulting from taking the expectation of $\rho \otimes \rho^*$ for each $\rho_{ij}$ appearing in these products, remain the same as before.

Now recall that the expectation and second moment of these estimators are proportional to $d^c$, where $c$ is the number of loops in these diagrams. Each of these rewiring moves changes the number of loops by at most one, by cutting one loop into two or merging two loops into one. Thus we have

$$\frac{1}{d}\, \mathbb{E}[X] \le \mathbb{E}[X_{\mathrm{Frob}}] \le d\, \mathbb{E}[X] \qquad\text{and}\qquad \frac{1}{d^2}\, \mathbb{E}[X^2] \le \mathbb{E}[X_{\mathrm{Frob}}^2] \le d^2\, \mathbb{E}[X^2],$$

and similarly in the symmetrized case. Assuming the worst regarding these bounds yields (6), and completes the proof of Theorem 1.
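The claim that a single rewiring move changes the loop count by one can be illustrated in a simplified model. The sketch below makes an assumption that is not taken from the paper's diagrams: loops are modeled as the cycles of a permutation, and a rewiring move as exchanging the targets of two wires, that is, composing with a transposition. In that model the change is always exactly $\pm 1$, which is why each move costs at most one factor of $d$.

```python
from itertools import combinations, permutations


def cycle_count(pi):
    """Number of cycles (fixed points included) of a permutation given as a tuple."""
    seen, count = set(), 0
    for start in range(len(pi)):
        if start not in seen:
            count += 1
            j = start
            while j not in seen:
                seen.add(j)
                j = pi[j]
    return count


def rewire(pi, a, b):
    """Swap the images of a and b, i.e. compose pi with the transposition (a b)."""
    out = list(pi)
    out[a], out[b] = out[b], out[a]
    return tuple(out)


def loop_changes(n):
    """All values of c(rewired) - c(pi) over pi in S_n and pairs a < b."""
    return {cycle_count(rewire(pi, a, b)) - cycle_count(pi)
            for pi in permutations(range(n))
            for a, b in combinations(range(n), 2)}
```

A change of $+1$ occurs when the two wires lie on the same loop (the loop is cut in two) and $-1$ when they lie on different loops (the loops merge).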
Figure 5: Rewiring the diagram to change $|\operatorname{tr} M|^2$ to $\operatorname{tr} MM^\dagger = \|M\|^2$. The cupcaps remain unchanged, but instead of wiring the “input” of each product to its “output,” we wire a pair of products together “input” to “input” and “output” to “output.”

6 Conclusions

As we stated in the Introduction, our results present us with the following irony. For the estimators based on the unsymmetrized determinant, which we do not know how to compute efficiently, the critical ratio $\mathbb{E}[X^2]/\mathbb{E}[X]^2$ becomes more mildly exponential as $d$ increases. Specifically, for any $\epsilon > 0$ we can make the critical ratio $O((1+\epsilon)^n)$ by taking $d = 1/\epsilon$. On the other hand, for the estimators based on the symmetrized determinant, the critical ratio is $\Omega(2^n)$ in the case $d = O(1)$ where we have an efficient algorithm. In order to reduce this exponential to $O(c^n)$ for some $c < 2$, we need $d$ to be a growing function of $n$. This is contrary to the intuition expressed in [Bar00], and to our own initial intuition when we began work on this problem.

Of course, the symmetrized estimators may still be tightly concentrated, as conjectured in [Bar00]. However, since their variance is large, any proof of concentration would have to bound, implicitly or explicitly, their higher moments.

At this point, finding an algebraic polynomial-time approximation scheme for the permanent seems to require progress on at least one of several fronts. One approach would be to seek a polynomial-time algorithm for $\operatorname{sdet} M$ in the case where $M$'s entries belong to $A_d$ where $d = \operatorname{poly}(n)$, but it seems difficult to scale up the algorithm of [Bar00] beyond $d = O(1)$. Another approach, as suggested in [CRS03], would be to seek an algorithm for $\det M$ where $M$'s entries belong to some group with representations of arbitrarily high dimension.
However, it seems difficult to construct a succinct description for the group algebra elements which appear in the determinant, since their support in the group basis is exponentially large.

Acknowledgments

This research was supported by NSF grants CCF-0524613, CCF-0835735, and CCF-0829917.

References

[Bar99] Alexander I. Barvinok. Polynomial time algorithms to approximate permanents and mixed discriminants within a simply exponential factor. Random Structures and Algorithms, 14(1):29–61, 1999.

[Bar00] Alexander I. Barvinok. New permanent estimators via non-commutative determinants, 2000.

[Con00] John B. Conway. A Course in Operator Theory, volume 21 of Graduate Studies in Mathematics. American Mathematical Society, 2000.

[CRS03] Steve Chien, Lars Eilstrup Rasmussen, and Alistair Sinclair. Clifford algebras and approximating the permanent. J. Comput. Syst. Sci., 67(2):263–290, 2003.

[GG81] C. D. Godsil and Ivan Gutman. On the matching polynomial of a graph. In Algebraic Methods in Graph Theory, pages 241–249. North-Holland, 1981.

[JK81] Gordon James and Adalbert Kerber. The representation theory of the symmetric group, volume 16 of Encyclopedia of mathematics and its applications. Addison–Wesley, 1981.

[KKL+93] Narendra Karmarkar, Richard M. Karp, Richard J. Lipton, László Lovász, and Michael Luby. A Monte-Carlo algorithm for estimating the permanent. SIAM J. Comput., 22(2):284–293, 1993.

[Nis91] Noam Nisan. Lower bounds for non-commutative computation. In Proc. 23rd Annual ACM Symposium on Theory of Computing, pages 410–418, New York, NY, USA, 1991. ACM.

[Tod91] Seinosuke Toda. PP is as hard as the polynomial-time hierarchy. SIAM J. Comput., 20(5):865–877, 1991.

[Val79] Leslie G. Valiant. The complexity of computing the permanent. Theor. Comp. Sci., 8:189–201, 1979.
A Representation theory and the symmetric group

We briefly discuss the elements of the representation theory of groups, and of the symmetric groups in particular. Our treatment is primarily for the purposes of setting down notation; we refer the reader to [JK81] for a complete account.

Let $G$ be a finite group. A representation $\rho$ of $G$ is a homomorphism $\rho : G \to U(V)$, where $V$ is a finite-dimensional Hilbert space and $U(V)$ is the group of unitary operators on $V$. The dimension of $\rho$, denoted $d_\rho$, is the dimension of the vector space $V$. By choosing a basis for $V$, then, we can identify each $\rho(g)$ with a unitary $d_\rho \times d_\rho$ matrix; these matrices then satisfy $\rho(gh) = \rho(g) \cdot \rho(h)$ for every $g, h \in G$.

Fixing a representation $\rho : G \to U(V)$, we say that a subspace $W \subset V$ is invariant if $\rho(g)W \subset W$ for all $g \in G$. We say $\rho$ is irreducible if it has no invariant subspaces other than the trivial space $\{0\}$ and $V$. If two representations $\rho$ and $\sigma$ are the same up to a unitary change of basis, we say that they are equivalent. It is a fact that any finite group $G$ has a finite number of distinct irreducible representations up to equivalence and, for a group $G$, we let $\hat G$ denote a set of representations containing exactly one from each equivalence class.

The irreducible representations of $G$ give rise to the Fourier transform. Specifically, for a function $f : G \to \mathbb{C}$ and an element $\rho \in \hat G$, define the Fourier transform of $f$ at $\rho$ to be

$$\hat f(\rho) = \sqrt{\frac{d_\rho}{|G|}} \sum_{g \in G} f(g)\, \rho(g).$$

The leading coefficients are chosen to make the transform unitary, so that it preserves inner products:

$$\langle f_1, f_2 \rangle = \sum_g f_1^*(g)\, f_2(g) = \sum_{\rho \in \hat G} \operatorname{tr}\Big(\hat f_1(\rho)^\dagger \cdot \hat f_2(\rho)\Big).$$

In the case when $\rho$ is not irreducible, it can be decomposed into a direct sum of irreducible representations, each one of which operates on an invariant subspace.
We write $\rho = \sigma_1 \oplus \cdots \oplus \sigma_k$ and, for the $\sigma_i$ appearing at least once in this decomposition, $\sigma_i \prec \rho$. In general, a given $\sigma$ can appear multiple times, in the sense that $\rho$ can have an invariant subspace isomorphic to the direct sum of $a^\rho_\sigma$ copies of $\sigma$. In this case $a^\rho_\sigma$ is called the multiplicity of $\sigma$ in $\rho$, and we write $\rho = \bigoplus_{\sigma \prec \rho} a^\rho_\sigma\, \sigma$.

For a representation $\rho$ we define its character as the trace $\chi_\rho(g) = \operatorname{tr} \rho(g)$. Given an element $m$, we denote its conjugacy class $[m] = \{g^{-1} m g \mid g \in G\}$. Since the trace is invariant under conjugation, characters are constant on the conjugacy classes, and we write $\chi_\rho([m]) = \chi_\rho(m)$ where $m$ is any element of $[m]$. Characters are a powerful tool for reasoning about the decomposition of reducible representations. In particular, for $\rho, \sigma \in \hat G$, we have the orthogonality conditions

$$\langle \chi_\rho, \chi_\sigma \rangle_G = \frac{1}{|G|} \sum_{g \in G} \chi_\rho(g)\, \chi_\sigma(g)^* = \begin{cases} 1 & \rho = \sigma, \\ 0 & \rho \ne \sigma. \end{cases}$$

If $\rho$ is reducible, we have $\chi_\rho = \sum_{\sigma \prec \rho} a^\rho_\sigma\, \chi_\sigma$, and so the multiplicity $a^\rho_\sigma$ is given by $a^\rho_\sigma = \langle \chi_\rho, \chi_\sigma \rangle_G$.

If $\rho$ is irreducible, Schur's lemma asserts that the only matrices which commute with $\rho(g)$ for all $g$ are the scalars, $\{c\mathbb{1} \mid c \in \mathbb{C}\}$. Therefore, for any $A$ we have

$$\frac{1}{|G|} \sum_{g \in G} \rho(g)^\dagger A\, \rho(g) = \frac{\operatorname{tr} A}{d_\rho}\, \mathbb{1}_{d_\rho}, \tag{43}$$

since conjugating this sum by $\rho(g)$ simply permutes its terms.

We specialize now to the case of the symmetric group $S_n$ of permutations of the set $\{1, \ldots, n\}$. The representations of $S_n$ are in one-to-one correspondence with Young diagrams or, equivalently, integer partitions $\lambda = (\lambda_1, \lambda_2, \cdots)$ where $\lambda_1 \ge \lambda_2 \ge \cdots$ and $\sum_i \lambda_i = n$. The character of this representation is denoted $\chi_\lambda$. The Murnaghan-Nakayama rule gives a recursive formula for the character $\chi_\lambda$. In preparation for stating the rule, we define a ribbon tile of length $k$ to be a polyomino of $k$ cells, arranged in a path where each step is up or to the right.
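The orthogonality relations for characters stated earlier in this appendix, and the resulting formula for multiplicities, can be checked directly on the smallest nonabelian example, $S_3$. The sketch below is illustrative only: it hard-codes the three irreducible characters of $S_3$ (trivial, sign, and the two-dimensional standard character, whose value is the number of fixed points minus one), and the names `CHARACTERS`, `inner` and `multiplicities` are hypothetical.

```python
from fractions import Fraction
from itertools import permutations

G = list(permutations(range(3)))  # the symmetric group S_3


def fixed_points(g):
    """Character of the permutation representation of S_3 on three points."""
    return sum(1 for i, gi in enumerate(g) if i == gi)


def sign(g):
    """Sign of a permutation, computed from its inversion count."""
    inversions = sum(1 for i in range(len(g)) for j in range(i + 1, len(g))
                     if g[i] > g[j])
    return -1 if inversions % 2 else 1


# Characters of the three irreducible representations of S_3 (all real-valued).
CHARACTERS = {
    "trivial": lambda g: 1,
    "sign": sign,
    "standard": lambda g: fixed_points(g) - 1,
}


def inner(chi1, chi2):
    """<chi1, chi2>_G = (1/|G|) sum_g chi1(g) chi2(g)^*, for real characters."""
    return Fraction(sum(chi1(g) * chi2(g) for g in G), len(G))


def multiplicities(chi):
    """Multiplicity of each irreducible character in the character chi."""
    return {name: inner(chi, irr) for name, irr in CHARACTERS.items()}
```

Applied to `fixed_points`, `multiplicities` returns one copy each of the trivial and standard characters and none of the sign character, reflecting the decomposition of the permutation representation on three points.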
Lemma 10 (Murnaghan-Nakayama rule). Given a Young diagram $\lambda$ and a permutation $\pi$ with cycle structure $k_1 \ge k_2 \ge \cdots$, a consistent tiling of $\lambda$ consists of removing a ribbon tile of length $k_1$ from the boundary of $\lambda$, then one of length $k_2$, and so on, with the requirement that the remaining part of $\lambda$ is a Young diagram at each step. Let $h_i$ denote the height of the ribbon tile corresponding to the $i$th cycle: then

$$\chi_\lambda(\pi) = \sum_T \prod_i (-1)^{h_i + 1}, \tag{44}$$

where the sum is over all consistent tilings $T$.

B Proof of Lemma 4

Proof. For the Gaussian measure, this is simply the fact that $(\sigma \otimes \sigma^*)^{ik}_{j\ell} = \sigma^i_j\, (\sigma^k_\ell)^*$. If $i \ne k$ or $j \ne \ell$, then this is the product of two independent random variables, both of which have expectation zero. If $i = k$ and $j = \ell$, then this is $|\sigma^i_j|^2$, whose expectation is $1/d$.

For the Haar measure, (12) follows from a little representation theory. (For a brief introduction to representation theory, see Appendix A.) Abusing notation, suppose that $\sigma$ is the defining representation of the group $U(d)$ of unitary matrices, i.e., the $d$-dimensional representation in which unitary matrices act on column vectors in the natural way. Then $\sigma \otimes \sigma^*$ is isomorphic to the conjugation action of $U(d)$ on $GL(d)$, the vector space of $d \times d$ matrices. We can decompose this into the direct sum of two invariant subspaces, $\sigma \otimes \sigma^* \cong \mathbb{1} \oplus \Gamma$, where $\mathbb{1}$ is the trivial representation, consisting of the scalar matrices, and $\Gamma$ is the $(d^2 - 1)$-dimensional representation consisting of $d \times d$ matrices with zero trace. Both these subspaces are clearly invariant under conjugation, and are, in fact, irreducible.

Taking the expectation over $\sigma \in U(d)$ gives the projection operator $\Pi^{\sigma \otimes \sigma^*}_{\mathbb{1}}$ onto the trivial subspace, that is, the linear operator on the space of matrices which takes a matrix $A = A_{ik}$ and returns a scalar matrix whose trace is $\operatorname{tr} A$.
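We claim that this operator is exactly (12), since

$$\frac{1}{d}\, \delta^{ik} \delta_{j\ell}\, A_{ik} = \frac{1}{d}\, A^i_{\ i}\, \delta_{j\ell} = \frac{1}{d}\, (\operatorname{tr} A)\, \mathbb{1}.$$

Here we again use the Einstein summation convention, so that $A^i_{\ i} = \operatorname{tr} A$, and the identity matrix is $\mathbb{1} = \delta_{j\ell}$.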
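The form of (12) can be checked exactly on a finite stand-in for the unitary group. The sketch below is an illustration under a stated assumption, not the Haar computation itself: it averages over the group of $d \times d$ signed permutation matrices, a finite subgroup of $U(d)$ whose defining representation is irreducible, so the same Schur-lemma argument forces the average of $\sigma^i_j (\sigma^k_\ell)^*$ to equal $\frac{1}{d}\delta^{ik}\delta_{j\ell}$. All function names are hypothetical.

```python
from fractions import Fraction
from itertools import permutations, product


def signed_permutation_matrices(d):
    """All d x d matrices with exactly one entry +1 or -1 in each row and column."""
    for perm in permutations(range(d)):
        for signs in product((1, -1), repeat=d):
            yield [[signs[i] if perm[i] == j else 0 for j in range(d)]
                   for i in range(d)]


def averaged_tensor(d):
    """Group average of sigma[i][j] * conj(sigma[k][l]), keyed by (i, j, k, l).

    The entries are real, so complex conjugation is a no-op here.
    """
    group = list(signed_permutation_matrices(d))
    return {(i, j, k, l): Fraction(sum(m[i][j] * m[k][l] for m in group),
                                   len(group))
            for i, j, k, l in product(range(d), repeat=4)}


def cupcap(d):
    """The right-hand side of (12): (1/d) * delta_{ik} * delta_{jl}."""
    return {(i, j, k, l): Fraction(int(i == k and j == l), d)
            for i, j, k, l in product(range(d), repeat=4)}
```

The two dictionaries agree entry by entry: independent row signs kill every term with $i \ne k$, a row has only one nonzero column so terms with $i = k$, $j \ne \ell$ vanish, and a fraction $1/d$ of the permutations send row $i$ to column $j$.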
