Robust Joint Source-Channel Coding for Delay-Limited Applications

In this paper, we consider the problem of robust joint source-channel coding over an additive white Gaussian noise channel. We propose a new scheme which achieves the optimal slope of the signal-to-distortion (SDR) curve (unlike the previously known …

Authors: Mahmoud Taherzadeh, Amir K. Khandani

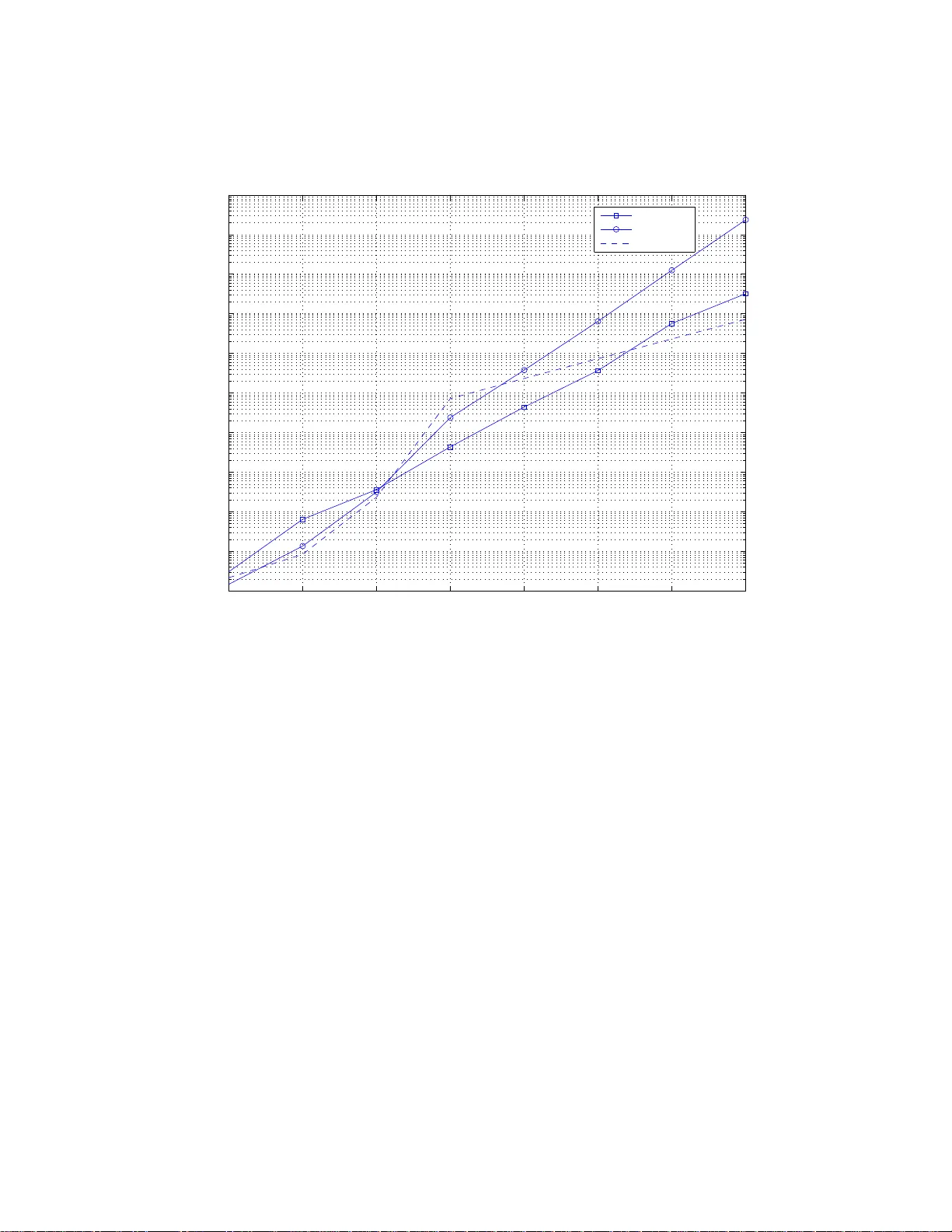

Mahmoud Taherzadeh and Amir K. Khandani

Coding & Signal Transmission Laboratory (www.cst.uwaterloo.ca), Dept. of Elec. and Comp. Eng., University of Waterloo, Waterloo, ON, Canada, N2L 3G1

e-mail: {taherzad, khandani}@cst.uwaterloo.ca, Tel: 519-8848552, Fax: 519-8884338

Abstract

In this paper, we consider the problem of robust joint source-channel coding over an additive white Gaussian noise channel. We propose a new scheme which achieves the optimal slope of the signal-to-distortion (SDR) curve (unlike the previously known coding schemes). Also, we propose a family of robust codes which together maintain a bounded gap with the optimum SDR curve (in terms of dB). To show the importance of this result, we derive some theoretical bounds on the asymptotic performance of delay-limited hybrid digital-analog (HDA) coding schemes. We show that, unlike the delay-unlimited case, for any family of delay-limited HDA codes, the asymptotic performance loss is unbounded (in terms of dB).

I. INTRODUCTION

In many applications, delay-limited transmission of analog sources over an additive white Gaussian noise channel is needed. Also, in many cases, the exact signal-to-noise ratio (SNR) is not known at the transmitter, and may vary over a wide range of values. Two examples of this scenario are transmitting an analog source over a quasi-static fading channel and/or multicasting it to different users (with different channel gains).

Without considering the delay limitations, digital codes can theoretically achieve the optimal performance in the Gaussian channel. Indeed, for the ergodic point-to-point channels, Shannon's source-channel coding separation theorem [1] [2] ensures the optimality of separately designing source and channel codes.
However, for the case of limited delay, several articles [3] [4] [5] [6] [7] have shown that joint source-channel codes perform better than separately designed source and channel codes (which are called tandem codes). Also, digital coding is very sensitive to a mismatch in the estimation of the channel SNR.

To avoid the saturation effect of digital coding, various analog and hybrid digital-analog schemes have been introduced and investigated in the past [8]–[23]. Among them, examples of 1-to-2-dimensional analog maps can be found as early as the works of Shannon [8] and Kotelnikov [9], and different variations of Shannon-Kotelnikov maps (which are also called twisted modulations) are studied in [10] [11] [19]. Also, in [14] and [15], analog codes based on dynamical systems are proposed. Although these codes can provide asymptotic gains (for high SNR) over simple repetition codes, they suffer from a threshold effect. Indeed, when the SNR becomes less than a certain threshold, the performance of these systems degrades severely. Therefore, the design parameters of these methods should be chosen according to the operating SNR, resulting in sensitivity to SNR estimation errors. Also, although the performance of the system is not saturated for high SNR values (unlike digital codes), the scaling of the end-to-end distortion is far from the theoretical bounds. Theoretical bounds on the robustness of joint source-channel coding schemes (for the delay-unlimited case) are presented in [24] and [25].

To achieve better signal-to-distortion (SDR) scaling, a coding scheme is introduced in [26] [27] which uses $B$ repetitions of a $(k, n)$ binary code to map the digits of the infinite binary expansion of $k$ samples of the source to the digits of an $nB$-dimensional transmit vector.
For this scheme, the bandwidth expansion factor is $\eta = \frac{nB}{k}$ and the SDR asymptotically scales as $SDR \propto SNR^{B}$, while in theory, the optimum scaling is $SDR \propto SNR^{\eta}$. Thus, this scheme cannot achieve the optimum scaling by using a single mapping.

In this paper, we address the problem of robust joint source-channel coding, using delay-limited codes. In particular, we show that the optimum slope of the SDR curve can be achieved by a single mapping.

The rest of the paper is organized as follows: In Section II, the system model and the basic concepts are presented. Section III presents an analysis of the previous analog coding schemes and their limitations. In Section IV, we introduce a class of joint source-channel codes which have a self-similar structure and achieve a better asymptotic performance than the other minimum-delay analog and hybrid digital-analog coding schemes. The asymptotic performance of these codes, in terms of the SDR scaling, is comparable with the scheme presented in [26], but with a simpler structure and a shorter delay. We investigate the limits of the asymptotic performance of self-similar coding schemes and their relation with the Hausdorff dimension of the modulation signal set. In Section V, we present a single mapping which achieves the optimum slope of the SDR curve, which is equal to the bandwidth expansion factor. Although this mapping achieves the optimum slope of the SDR curve, its gap with the optimum SDR curve is unbounded (in terms of dB). In Section VI, we construct a family of robust mappings, which individually achieve the optimum SDR slope, and together maintain a bounded gap with the optimum SDR curve. We also analyze the limits on the asymptotic performance of the delay-limited HDA coding schemes.

II. SYSTEM MODEL AND THEORETICAL LIMITS

We consider a memoryless uniform source $\{X_i\}_{i=1}^{\infty}$ with zero mean and variance $\frac{1}{12}$, i.e. $-\frac{1}{2} \le x_i < \frac{1}{2}$. Also, the samples of the source sequence are assumed independent with identical distributions (i.i.d.). Although the focus of this paper is on a source with uniform distribution, as discussed in Appendix C, the asymptotic results are valid for all distributions which have a bounded probability density function.

The transmitted signal is sent over an additive white Gaussian noise (AWGN) channel. The problem is to map the one-dimensional signal to the $N$-dimensional channel space, such that the effect of the noise is minimized. This means that the data $x$, $-\frac{1}{2} \le x < \frac{1}{2}$, is mapped to the transmitted vector $\mathbf{s} = (s_1, ..., s_N)$. At the receiver side, the received signal is $\mathbf{y} = \mathbf{s} + \mathbf{z}$, where $\mathbf{z} = (z_1, ..., z_N)$ is the additive white Gaussian noise with variance $\sigma^2$.

As an upper bound on the performance of the system, we can consider the delay-unlimited case. In this case, we can use Shannon's theorem on the separation of source and channel coding. By combining the lower bound on the distortion of the quantized signal (using the rate-distortion formula) and the capacity of $N$ parallel Gaussian channels with noise variance $\sigma^2$, we can bound the distortion $D = E\{|x - \tilde{x}|^2\}$ as [15]

$$D \ge c\,\sigma^{2N} \tag{1}$$

where $c$ is a constant.

III. CODES BASED ON DYNAMICAL SYSTEMS AND HYBRID DIGITAL-ANALOG CODING

Previously, two related schemes, based on dynamical systems, have been proposed for the scenario of delay-limited analog coding:

1) Shift-map dynamical system [14]
2) Spherical shift-map dynamical system [15]

These are further explained in the following.

A. Shift-map dynamical system

In [14], an analog transmission scheme based on shift-map dynamical systems is presented.
In this method, the analog data $x$ is mapped to the modulated vector $(s_1, ..., s_N)$ where

$$s_1 = x \bmod 1 \tag{2}$$

$$s_{i+1} = b_i s_i \bmod 1, \quad \text{for } 1 \le i \le N-1 \tag{3}$$

where $b_i$ is an integer, $b_i \ge 2$. The set of modulated signals generated by the shift map consists of $b_1 \cdot b_2 \cdot ... \cdot b_{N-1}$ parallel segments inside an $N$-dimensional unit hypercube. In [15], the authors have shown that by appropriately choosing the parameters $\{b_i\}$ for different SNR values, one can achieve the SDR scaling (versus the channel SNR) with the slope $N - \epsilon$, for any positive number $\epsilon$. Indeed, we can have a slightly tighter upper bound on the end-to-end distortion as follows:

Theorem 1: Consider the shift-map analog coding system which maps the source sample to an $N$-dimensional modulated vector. For any noise variance (footnote 1) $\sigma^2 \le \frac{1}{2}$, we can find a parameter $a$ such that for the shift-map scheme with the parameters $b_i = a \ge 2$, the distortion of the decoded signal $D$ is bounded as (footnote 2)

$$D \le c\,\sigma^{2N}(-\log\sigma)^{N-1} \tag{4}$$

where $c$ depends only on $N$.

Footnote 1: The result is still valid if $\sigma^2 \le \delta$, for some $0 < \delta < 1$ (but $c$ will depend on $\delta$).

Footnote 2: We use $\log x$ to denote the natural logarithm, i.e. $\log_e x$.

Proof: See Appendix A.

Also, we have the following lower bound on the end-to-end distortion:

Theorem 2: For any shift-map analog coding scheme and any noise variance $\sigma^2 \le \frac{1}{2}$, the output distortion is lower bounded as

$$D \ge c'\,\sigma^{2N}(-\log\sigma)^{N-1} \tag{5}$$

where $c'$ depends only on $N$.

Proof: See Appendix B.

B. Spherical shift-map dynamical system

In [15], a spherical code based on the linear system $\dot{\mathbf{s}}_T = \mathbf{A}\mathbf{s}_T$ is introduced, where $\mathbf{s}_T$ is the $2N$-dimensional modulated signal and $\mathbf{A}$ is a skew-symmetric matrix, i.e. $\mathbf{A}^T = -\mathbf{A}$. This scheme is very similar to the shift-map scheme.
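As an illustration of the shift map in (2) and (3) of subsection A, the following is a minimal Python sketch of the encoder only. The function name `shift_map` and its argument names are illustrative assumptions, not taken from [14] or [15], and no decoder is shown.

```python
def shift_map(x, b):
    """Shift-map modulation of one source sample.

    x : source sample, assumed to lie in [-1/2, 1/2)
    b : list of N-1 integers b_i >= 2 (hypothetical argument name)
    Returns the N-dimensional modulated vector [s_1, ..., s_N].
    """
    if any(bi < 2 or bi != int(bi) for bi in b):
        raise ValueError("each b_i must be an integer >= 2")
    s = [x % 1.0]                      # s_1 = x mod 1, eq. (2)
    for bi in b:
        s.append((bi * s[-1]) % 1.0)   # s_{i+1} = b_i * s_i mod 1, eq. (3)
    return s


if __name__ == "__main__":
    # N = 3 and b_1 = b_2 = a = 2, the setting of Fig. 1
    print(shift_map(0.25, [2, 2]))     # [0.25, 0.5, 0.0]
```

Every coordinate stays in $[0, 1)$, so the modulated point always lies inside the unit hypercube, on one of the $b_1 \cdots b_{N-1}$ parallel segments.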
Indeed, with an appropriate change of coordinates, the above modulated signal can be represented as

$$\mathbf{s}_T = \frac{1}{\sqrt{N}}\left(\cos 2\pi x, \cos 2a\pi x, ..., \cos 2a^{N-1}\pi x, \sin 2\pi x, \sin 2a\pi x, ..., \sin 2a^{N-1}\pi x\right) \tag{6}$$

for some parameter $a$. If we consider $\mathbf{s}_{sm}$ as the modulated signal generated by the shift-map scheme with parameters $b_i = a$ in (3), then (6) can be written in the vector form as

$$\mathbf{s}_T = \frac{1}{\sqrt{N}}\left(\mathrm{Re}\left\{e^{2\pi i\,\mathbf{s}_{sm}}\right\}, \mathrm{Im}\left\{e^{2\pi i\,\mathbf{s}_{sm}}\right\}\right), \tag{7}$$

where the exponential is applied to each component of $\mathbf{s}_{sm}$.

The relation between the spherical code and the linear shift-map code is very similar to the relation between phase-shift keying (PSK) and pulse-amplitude modulation (PAM). Indeed, the spherical shift-map code and PSK modulation are, respectively, the linear shift-map and PAM modulations which are transformed from the unit interval, $[-\frac{1}{2}, \frac{1}{2})$, to the unit circle.

Fig. 1. The shift-map modulated signal set for $N = 3$ dimensions and $a = 2$. (Axes: $s_1 = x \bmod 1$ with $-\frac{1}{2} < x < \frac{1}{2}$, $s_2 = a s_1 \bmod 1$, $s_3 = a s_2 \bmod 1$; the distance between adjacent segments satisfies $d \ge \frac{a^{-1}}{\sqrt{1 + a^{-2} + ...}}$.)

For the performance of the spherical codes, the same result as Theorem 1 is valid. Indeed, for any parameters $a$ and $N$, the spherical code asymptotically has a saving of $\frac{(2\pi)^2}{12}$, or 5.17 dB, in the power. This asymptotic gain results from transforming the unit-interval signal set (with length 1 and power $\frac{1}{12}$) to the unit-circle signal set (with length $2\pi$ and power 1). However, the spherical code uses $2N$ dimensions (compared to $N$ dimensions for the linear shift-map scheme).

For both these methods, for any fixed parameter $a$, the output SDR asymptotically has linear scaling with the channel SNR. The asymptotic gain (over the simple repetition code) is approximately $a^{2(N-1)}$ (because the modulated signal is stretched approximately $a^{N-1}$ times; see footnote 3). Therefore, a larger scaling parameter $a$ results in a higher asymptotic gain. However, by increasing $a$, the distance between the parallel segments of the modulated signal set decreases.
This distance is approximately $\frac{1}{a}$, and for low SNRs (when the noise variance is larger than or comparable to $\frac{1}{a}$), jumping from one segment of the modulated signal set to another one becomes the dominant factor in the distortion of the decoded signal, which results in a poor performance in this SNR region. Thus, there is a trade-off between the gain in the high-SNR region and the critical noise level which is fatal for the system. By increasing the scaling parameter $a$, the asymptotic gain increases, but at the same time, a higher SNR threshold is needed to achieve that gain.

Footnote 3: The exact asymptotic gain is equal to the scaling factor of the signal set, i.e. $a^{2(N-1)}\left(1 + \frac{1}{a^2} + ... + \frac{1}{a^{2(N-1)}}\right)$ for the shift map and $\frac{(2\pi)^2}{12}\,a^{2(N-1)}\left(1 + \frac{1}{a^2} + ... + \frac{1}{a^{2(N-1)}}\right)$ for the spherical shift map.

In [28], the authors have combined the dynamical-system schemes with LDPC and iterative decoding to reduce the critical SNR threshold. However, the overall behavior of the output distortion is the same for all these methods. Also, in [29] and [30], a scheme is introduced for approaching arbitrarily close to the optimum SDR, for colored sources. However, it is not delay-limited and it only works for a bandwidth expansion of 1.

The shift-map analog coding system can be seen as a variation of a hybrid digital-analog (HDA) joint source-channel code. Various types of such hybrid schemes are investigated in [16] [17] [18] [24] and [31]. Indeed, for the shift-map system, we can rotate the modulated signal set such that all of its parallel segments become aligned in the direction of one of the dimensions. In this case, by changing the support region of the modulated set (which is a rotated $N$-dimensional cube) to the standard cube, we obtain a new similar modulation which is hybrid digital-analog and has almost the same performance.
In the new modulation, the information signal is quantized by $a^{N-1}$ points in an $(N-1)$-dimensional subspace and the quantization error is transmitted over the remaining dimension.

Regarding the scaling of the output distortion, the performance of the shift-map scheme, with an appropriate choice of parameters for each SNR, is very close to the theoretical limit. In fact, the output distortion scales as $\sigma^{2N}(-\log\sigma)^{N-1}$, instead of being proportional to $\sigma^{2N}$. However, for any fixed set of parameters, the curve of SDR versus SNR (in dB) is saturated by the unit slope (instead of $N$). This shortcoming is an inherent drawback of schemes like the shift-map code or the spherical code (which are based on dynamical systems). Indeed, in [32], it is shown that no single differentiable mapping can achieve an asymptotic slope better than 1. This article addresses this shortcoming.

There are some other analog codes in the literature which use different mappings. Analog codes based on the 2-dimensional Shannon map [20] [21] [22] [23], or the tent map [14], are examples of these codes. However, all these codes share the shortcomings of the shift-map code.

IV. JOINT SOURCE-CHANNEL CODES BASED ON FRACTAL SETS

In this section, we propose a coding scheme, based on fractal sets, that can achieve slopes greater than 1 (for the curve of SDR versus SNR).

Scheme I: For the modulating signal $x$, $-\frac{1}{2} \le x < \frac{1}{2}$, we consider the binary expansion of $x + \frac{1}{2}$:

$$x + \frac{1}{2} = (0.b_1 b_2 b_3 ...)_2. \tag{8}$$

Now, we construct $s_1, s_2, ..., s_N$ as

$$s_1 = (0.b_1 b_{N+1} b_{2N+1} ...)_\alpha \tag{9}$$

$$s_2 = (0.b_2 b_{N+2} b_{2N+2} ...)_\alpha \tag{10}$$

$$\vdots$$

$$s_N = (0.b_N b_{2N} b_{3N} ...)_\alpha \tag{11}$$

where $(0.b_1 b_2 b_3 ...)_\alpha$ is the base-$\alpha$ expansion (footnote 4).
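The construction in (8) to (11) can be sketched in a few lines of Python. This is only an illustrative sketch under stated assumptions: the infinite expansions are truncated to a finite number of digits per dimension, floating-point arithmetic is used, and the function names `binary_digits` and `scheme1` are hypothetical rather than taken from the paper.

```python
def binary_digits(x, num_bits):
    """First num_bits binary digits b_1, b_2, ... of x + 1/2, for x in [-1/2, 1/2)."""
    u = x + 0.5
    bits = []
    for _ in range(num_bits):
        u *= 2
        bit = int(u)          # next binary digit (0 or 1)
        bits.append(bit)
        u -= bit
    return bits


def scheme1(x, N, alpha, digits_per_dim):
    """Truncated Scheme I: bit b_{kN+i} becomes the (k+1)-th base-alpha digit of s_i."""
    if alpha <= 2:
        raise ValueError("Scheme I requires alpha > 2")
    bits = binary_digits(x, N * digits_per_dim)
    s = []
    for i in range(N):        # i = 0 corresponds to s_1 in (9)
        s.append(sum(bits[k * N + i] * alpha ** -(k + 1)
                     for k in range(digits_per_dim)))
    return s


if __name__ == "__main__":
    # x = 0.25 gives x + 1/2 = (0.1100...)_2, so b_1 = b_2 = 1 and all later bits are 0
    print(scheme1(0.25, N=2, alpha=3, digits_per_dim=2))
```

Because every digit of $s_i$ is 0 or 1 while the base is $\alpha > 2$, the values $s_i$ fall in a Cantor-like subset of the unit interval rather than filling it.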
Footnote 4: In this article, we define the base-$\alpha$ expansion, for any real number $\alpha > 2$ and any binary sequence $(b_1 b_2 b_3 ...)$, as $(0.b_1 b_2 b_3 ...)_\alpha \triangleq \sum_{i=1}^{\infty} b_i \alpha^{-i}$.

Theorem 3: In the proposed scheme, for any $\alpha > 2$ and noise variance $\sigma^2 \le \frac{1}{2}$, the output distortion $D$ is upper bounded by

$$D \le c\,\sigma^{2\beta}(-\log\sigma)^{N} \tag{12}$$

where $c$ depends only on $N$, and $\beta = \frac{N\log 2}{\log\alpha}$.

Proof: Consider $z_i$ as the Gaussian noise on the $i$th dimension:

$$\Pr\left\{|z_i| > 2\sqrt{N}\,\sigma\sqrt{-\log\sigma}\right\} = 2Q\left(2\sqrt{N}\sqrt{-\log\sigma}\right) \tag{13}$$

$$\le e^{-\frac{4N(-\log\sigma)}{2}} = e^{-2N(-\log\sigma)} = \sigma^{2N}. \tag{14}$$

Now, we bound the distortion, conditioned on $|z_i| \le 2\sqrt{N}\,\sigma\sqrt{-\log\sigma}$ for $1 \le i \le N$. If $s_i$ and $s'_i$ first differ in the $k$th digit,

$$|s_i - s'_i| \ge \left(0.\underbrace{0...0}_{k-1}1000...\right)_\alpha - \left(0.\underbrace{0...0}_{k-1}0111...\right)_\alpha \tag{15, 16}$$

$$> (\alpha - 2)\,\alpha^{-(k+1)}. \tag{17}$$

Therefore, if $|s_i - s'_i| \le \delta$ for $\delta > 0$, the first $k$ digits of $s_i$ and $s'_i$ are the same, where $k \ge \left\lfloor -\log_\alpha\left(\frac{\delta}{\alpha-2}\right)\right\rfloor - 1$. Now, by considering $\delta = 4\sqrt{N}\,\sigma\sqrt{-\log\sigma}$,

$$|s_i - s'_i| \le 2|z_i| \le 4\sqrt{N}\,\sigma\sqrt{-\log\sigma} \tag{18}$$

$$\Longrightarrow\; k \ge \left\lfloor -\log_\alpha\left(\frac{4\sqrt{N}\,\sigma\sqrt{-\log\sigma}}{\alpha-2}\right)\right\rfloor - 1. \tag{19}$$

Therefore, for $1 \le i \le N$, the first $\left\lfloor -\log_\alpha\left(\frac{4\sqrt{N}\,\sigma\sqrt{-\log\sigma}}{\alpha-2}\right)\right\rfloor - 1$ digits of $s_1, s_2, ..., s_N$ can be decoded without any error; hence, the first $N\left(\left\lfloor -\log_\alpha\left(\frac{4\sqrt{N}\,\sigma\sqrt{-\log\sigma}}{\alpha-2}\right)\right\rfloor - 1\right)$ bits of the binary expansion of $x$ can be reconstructed perfectly. In this case, the output distortion is bounded by

$$\sqrt{D} \le 2^{-N\left(\left\lfloor -\log_\alpha\left(\frac{4\sqrt{N}\,\sigma\sqrt{-\log\sigma}}{\alpha-2}\right)\right\rfloor - 1\right)} \tag{20}$$

$$\Longrightarrow\; D \le c_1\,\sigma^{2\beta}(-\log\sigma)^{N} \tag{21}$$

where $c_1$ depends only on $\alpha$ and $N$. By combining the upper bounds for the two cases, noting that $\sigma < 1$,

$$D \le \sum_{i=1}^{N}\Pr\left\{|z_i| > 2\sqrt{N}\,\sigma\sqrt{-\log\sigma}\right\} + c_1\,\sigma^{2\beta}(-\log\sigma)^{N} \tag{22}$$

$$\le \sigma^{2N} + c_1\,\sigma^{2\beta}(-\log\sigma)^{N} \tag{23}$$

$$\le c\,\sigma^{2\beta}(-\log\sigma)^{N}. \tag{24}$$

According to Theorem 3, for any $\epsilon > 0$, we can construct a modulation scheme that achieves the asymptotic slope of $N - \epsilon$ (for the curve of SDR versus SNR, in terms of dB), since $\beta = \frac{N\log 2}{\log\alpha}$ approaches $N$ as $\alpha$ approaches 2.
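To make the choice of $\alpha$ concrete: setting $\beta = N - \epsilon$ in the expression $\beta = \frac{N\log 2}{\log\alpha}$ of Theorem 3 gives $\alpha = 2^{N/(N-\epsilon)}$. The short Python sketch below evaluates both directions; the function names `slope` and `alpha_for_slope` are illustrative and not from the paper.

```python
import math


def slope(N, alpha):
    """Asymptotic SDR slope beta = N log 2 / log alpha from Theorem 3 (alpha > 2)."""
    if alpha <= 2:
        raise ValueError("Scheme I requires alpha > 2")
    return N * math.log(2) / math.log(alpha)


def alpha_for_slope(N, eps):
    """Base alpha for which Scheme I has slope N - eps, with 0 < eps < N."""
    if not 0 < eps < N:
        raise ValueError("need 0 < eps < N")
    return 2 ** (N / (N - eps))


if __name__ == "__main__":
    N = 4
    for alpha in (3, 4):
        print(f"alpha = {alpha}: slope = {slope(N, alpha):.3f}")
    a = alpha_for_slope(N, 0.5)
    print(f"alpha for slope {N - 0.5}: {a:.4f}")
```

The returned base is always strictly greater than 2, so the slope $N$ itself is never reached by Scheme I for any admissible $\alpha$.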
As expected (according to the result by Ziv [32]), none of these mappings are differentiable. More generally, Ziv has shown that [32]:

Theorem 4 ([32], Theorem 2): For the modulation mapping $\mathbf{s} = f(x)$, define $d_f(\Delta) = E\left\|f(x + \Delta) - f(x)\right\|^2$. If there are positive numbers $A, \gamma, \Delta_0$ such that

$$d_f(\Delta) \le A\Delta^{\gamma} \quad \text{for } \Delta \le \Delta_0, \tag{25}$$

then there is a constant $c$ such that

$$D \ge c\,\sigma^{\frac{2}{\gamma}}. \tag{26}$$

In Scheme I, by decreasing $\alpha$, we can increase the asymptotic slope $\beta$. However, this also degrades the low-SNR performance of the system. This phenomenon is observed in figure 3.

In Scheme I, the signal set is a self-similar fractal [33], where the parameter $\beta$, which determines the asymptotic slope of the curve, is the dimension of the fractal. There are different ways to define the fractal dimension. One of them is the Hausdorff dimension. Consider $F$ as a Borel set in a metric space, and $\mathbb{A}$ as a countable family of sets that covers it. We define $H^s_\varepsilon(F) = \inf \sum_{\mathcal{A} \in \mathbb{A}} (\mathrm{diameter}(\mathcal{A}))^s$, where the infimum is over all countable covers whose sets have diameter not larger than $\varepsilon$. The $s$-dimensional Hausdorff measure is defined as $H^s(F) = \lim_{\varepsilon \to 0} H^s_\varepsilon(F) = \sup_{\varepsilon > 0} H^s_\varepsilon(F)$. It can be shown that there is a critical value $s_0$ such that for $s < s_0$, this measure is infinite and for $s > s_0$, it is zero [33]. This critical value $s_0$ is called the Hausdorff dimension of the set $F$.

Another useful definition is the box-counting dimension. If we partition the space into a grid of cubic boxes of size $\varepsilon$, and consider $m_\varepsilon$ as the number of boxes which intersect the set $F$, the box-counting dimension of $F$ is defined as

$$\mathrm{Dim}_b(F) = \lim_{\varepsilon \to 0} \frac{\log m_\varepsilon}{\log\frac{1}{\varepsilon}}. \tag{27}$$

It can be shown that for regular self-similar fractals, the Hausdorff dimension is equal to the box-counting dimension [33].

Intuitively, Theorem 3 means that in Scheme I, among the $N$ available dimensions, only $\beta$ dimensions are effectively used.
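The box-counting definition in (27) can be checked numerically on a truncated version of the Scheme I signal set. The sketch below is an illustration under explicit assumptions: $\alpha$ is restricted to an integer so that all arithmetic is exact, the expansions are cut after `depth` digits, and the limit in (27) is replaced by a single scale $\varepsilon = \alpha^{-m}$. The function name `scheme1_box_dimension` is hypothetical.

```python
import math
from itertools import product


def scheme1_box_dimension(N, alpha, depth, m):
    """Finite-scale box-counting estimate for the truncated Scheme I signal set.

    Each coordinate takes values sum_j b_j * alpha**-(j+1) with b_j in {0, 1}.
    Coordinates are stored as integers scaled by alpha**depth, so boxes of side
    alpha**-m correspond to integer cells of width alpha**(depth - m).
    """
    if not (isinstance(alpha, int) and alpha > 2 and 0 < m <= depth):
        raise ValueError("need integer alpha > 2 and 0 < m <= depth")
    coords = {
        sum(b * alpha ** (depth - 1 - j) for j, b in enumerate(digits))
        for digits in product((0, 1), repeat=depth)
    }
    cell = alpha ** (depth - m)
    boxes_1d = len({c // cell for c in coords})
    # The source bits are free, so the N-dimensional set is a Cartesian product
    # of N copies of the one-dimensional set and the box counts multiply.
    boxes = boxes_1d ** N
    return math.log(boxes) / math.log(alpha ** m)


if __name__ == "__main__":
    N, alpha = 2, 3
    estimate = scheme1_box_dimension(N, alpha, depth=6, m=4)
    beta = N * math.log(2) / math.log(alpha)
    print(f"box-counting estimate: {estimate:.4f}, beta: {beta:.4f}")
```

For these parameters the estimate coincides with $\beta = \frac{N\log 2}{\log\alpha}$, which is the sense in which only $\beta$ of the $N$ dimensions are occupied.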
Indeed, we can show that for any modulation set (footnote 5) with box-counting dimension $\beta$, the asymptotic slope of the SDR curve is at most $\beta$:

Footnote 5: The modulation set is the set of all possible modulated vectors.

Theorem 5: For a modulation mapping $\mathbf{s} = f(x)$, if the modulation set $F$ has box-counting dimension $\beta$, then

$$\lim_{\sigma \to 0} \frac{\log D}{\log\sigma} \le 2\beta. \tag{28}$$

Proof: We divide the space into boxes of size $\sigma$. Consider $m_\sigma$ as the number of cubic boxes that cover $F$. We divide the source signal set into $4m_\sigma$ segments of length $\frac{1}{4m_\sigma}$. Consider $\mathcal{A}_1, ..., \mathcal{A}_{4m_\sigma}$ as the corresponding $N$-dimensional optimal decoding regions (based on the MMSE criterion), and $\mathcal{B}_1, ..., \mathcal{B}_{4m_\sigma}$ as their intersections with the $m_\sigma$ cubes (see figure 2). The total volume of these $4m_\sigma$ sets is equal to the total volume of the covering boxes, i.e. $m_\sigma\sigma^N$. Thus, at least half of these sets (i.e. $2m_\sigma$ of them) have volume less than $\frac{1}{2}\sigma^N$. For any of these sets, such as $\mathcal{B}_i$, and any box, the volume of the intersection of that box with the other sets is at least $V_{\min} = \sigma^N - \frac{1}{2}\sigma^N = \frac{1}{2}\sigma^N$. For any point in the corresponding segments of the set $\mathcal{B}_i$, the probability of decoding to a wrong segment is lower bounded by the probability of a jump to the neighboring sets in the same box. Because the variance of the additive Gaussian noise is $\sigma^2$ per dimension, and for such a jump the squared norm of the noise needs to be at most $N\sigma^2$ (the square of the diameter of the box), the probability of such a jump to the neighboring sets can be lower bounded as

$$\Pr(\mathrm{jump}) \ge V_{\min} \cdot \min_{\|\mathbf{z}\|^2 \le N\sigma^2} f_{\mathbf{z}}(\mathbf{z}) \tag{29}$$

$$\ge \frac{1}{2}\sigma^N \cdot \frac{1}{(2\pi)^{\frac{N}{2}}\sigma^N}\,e^{-\frac{N\sigma^2}{2\sigma^2}} = \frac{1}{2^{\frac{N}{2}+1}\pi^{\frac{N}{2}}}\,e^{-\frac{N}{2}}, \tag{30}$$

where $f_{\mathbf{z}}(\mathbf{z})$ is the pdf of the noise vector $\mathbf{z}$.

Fig. 2. Boxes of size $\sigma$ and their intersections with the decoding regions (one such intersection is labeled $\mathcal{B}_i$).

Now, for these segments of the source, consider the subsegments with length $\frac{1}{20m_\sigma}$ at the center of them.
When the source belongs to one of these subsegments, wrong segment decoding results in a squared error of at least $\left(\frac{1}{2}\left(\frac{1}{4m_\sigma} - \frac{1}{20m_\sigma}\right)\right)^2 = \left(\frac{1}{10m_\sigma}\right)^2$. Thus, for these subsegments, whose total length is at least $\frac{1}{20m_\sigma} \cdot 2m_\sigma = \frac{1}{10}$, with probability at least $\Pr(\mathrm{jump})$ we have a squared error which is not less than $\left(\frac{1}{10m_\sigma}\right)^2$. Therefore,

$$D \ge \frac{1}{10}\Pr(\mathrm{jump}) \cdot \left(\frac{1}{10m_\sigma}\right)^2 = \frac{c}{m_\sigma^2} \tag{31}$$

where $c$ only depends on the bandwidth expansion $N$. On the other hand, based on the definition of the box-counting dimension,

$$\beta = \lim_{\sigma \to 0} \frac{\log m_\sigma}{\log\frac{1}{\sigma}}. \tag{32}$$

By using (31) and (32),

$$\lim_{\sigma \to 0} \frac{\log D}{\log\sigma} \le 2\beta. \tag{33}$$

It should be noted that Theorem 5 is valid for all signal sets, not just self-similar signal sets. As a corollary, based on the fact that the box-counting dimension cannot be greater than the dimension of the space [33], Theorem 5 provides a geometric insight into (1).

Another scheme based on self-similar signal sets and the infinite binary expansion of the source is proposed in [26] [27], which, similar to the scheme proposed in this section, achieves an SDR scaling better than linear coding, but cannot achieve the optimum SDR scaling. The scheme presented in [26] is based on using $B$ repetitions of a $(k, n)$ binary code to map the digits of the infinite binary expansion of $k$ samples of the source to the digits of an $nB$-dimensional transmit vector. This scheme shares the shortcoming of Scheme I. In [26], the bandwidth expansion factor is $\eta = \frac{nB}{k}$ and the SDR asymptotically scales as $SDR \propto SNR^{B}$, instead of the optimum scaling $SDR \propto SNR^{\eta}$. The main difference between Scheme I and the scheme proposed in [26] is that in Scheme I, the delay is minimum (it uses only one sample of the source for coding), but in [26], the delay is $k$, and the ratio between the SDR exponent and the optimum SDR exponent is dependent on the delay (it is $\frac{k}{n}$), i.e.
to increase it, one needs to increase the length of the binary code, which results in increasing the delay.

The idea of using the infinite binary expansion of the source for joint source-channel coding can be traced back to Shannon's 1949 paper [8], where shuffling the digits is proposed for bandwidth contraction (i.e. mapping high-dimensional data to a signal set with a lower dimension). For bandwidth expansion, space-filling self-similar signal sets have been investigated in [13]; however, the SDR scaling of those schemes is not better than linear coding. The reason is that when we use a self-similar set to fill the space, the squared error caused by jumping to adjacent subsets dominates the scaling of the distortion. To avoid this effect, we need to avoid filling the whole space. This results in losing dimensionality for self-similar sets, which results in sub-optimum SDR scaling (as investigated in this section). To avoid this drawback, we need to consider signal sets which are not self-similar, as proposed in the next section.

V. ACHIEVING THE OPTIMUM ASYMPTOTIC SDR SLOPE USING A SINGLE MAPPING

Although Scheme I can construct mappings that achieve a near-optimum slope for the curve of SDR (versus the channel SNR), none of these mappings can achieve the optimum slope $N$. To achieve the optimum slope with a single mapping, we slightly modify Scheme I (the modified scheme is referred to as Scheme II below):

For the modulating signal $x$, consider $x + \frac{1}{2} = (0.b_1 b_2 b_3 ...)_2$. We construct $s_1, s_2, ..., s_N$ as

$$s_1 = \left(0.b_1\,0\,b_{\frac{N(N+1)}{2}+1}\,b_{\frac{N(N+1)}{2}+2}\,...\,b_{\frac{N(N+1)}{2}+N+1}\,0\,b_{\frac{(2N)(2N+1)}{2}+1}\,...\right)_2 \tag{34}$$

$$s_2 = \left(0.b_2 b_3\,0\,b_{\frac{(N+1)(N+2)}{2}+1}\,b_{\frac{(N+1)(N+2)}{2}+2}\,...\,b_{\frac{(N+1)(N+2)}{2}+N+2}\,0\,...\right)_2 \tag{35}$$

$$\vdots$$

$$s_N = \left(0.b_{\frac{N(N-1)}{2}+1}\,b_{\frac{N(N-1)}{2}+2}\,...\,b_{\frac{N(N+1)}{2}}\,0\,...\right)_2 \tag{36}$$

The difference between this scheme and Scheme I is that instead of assigning the $(kN+i)$th bit to the signal $s_i$, the bits of the binary expansion of $x + \frac{1}{2}$ are grouped such that the $l$th group ($l = kN + i$) consists of $l$ bits and is assigned to the $i$th dimension, followed by a single 0 digit.

In decoding, we find the point in the signal set which is closest to the received vector $\mathbf{s} + \mathbf{z}$. If $|z_i| < 2^{-1-\sum_{k=0}^{n}(kN+i+1)}$, the first $\sum_{k=0}^{n}(kN+i+1)$ bits of $s_i$ can be decoded without error (for $1 \le i \le N$), which include $\sum_{k=0}^{n}(kN+i)$ bits of the source $x$.

Theorem 6: Using the mapping constructed by Scheme II, for any noise variance $\sigma^2 \le \frac{1}{2}$, the output distortion $D$ is upper bounded by

$$D \le c_1\,\sigma^{2N}\,2^{c_2\sqrt{-\log_2\sigma}} \tag{37}$$

where $c_1$ and $c_2$ only depend on $N$ (footnote 6).

Footnote 6: Throughout this paper, $c_1, c_2, ...$ are constants, independent of $\sigma$ (they may depend on $N$).

Proof: Let $z_i$ be the Gaussian noise on the $i$th dimension and assume that $n$ is selected such that

$$\sum_{k=1}^{n+1}(kN+1) \le -\log_2\sigma < \sum_{k=1}^{n+2}(kN+1). \tag{38}$$

The probability that $|z_i| \ge 2^{-1-\sum_{k=1}^{n}(kN+1)}$ is negligible. Indeed,

$$\Pr\left\{|z_i| \ge 2^{-1-\sum_{k=1}^{n}(kN+1)} \,\Big|\, -\log_2\sigma \ge \sum_{k=1}^{n+1}(kN+1)\right\} \le \tag{39}$$

$$2Q\left(\frac{2^{-1-\sum_{k=1}^{n}(kN+1)}}{2^{-\sum_{k=1}^{n+1}(kN+1)}}\right) = 2Q\left(2^{(n+1)N}\right) \tag{40}$$

$$\overset{(a)}{<} 2Q\left(2^{\sqrt{\sum_{k=1}^{n+2}(kN+1)}}\right) \tag{41}$$

$$\overset{(b)}{<} 2Q\left(2^{\sqrt{-\log_2\sigma}}\right) \overset{(c)}{<} 2^{-2^{2\sqrt{-\log_2\sigma}-1}} \tag{42}$$

where (a) holds because $\sqrt{\sum_{k=1}^{n+2}(kN+1)} = \sqrt{\frac{N(n+2)(n+3)}{2} + n + 2} < (n+1)N$ for $N \ge 2$, (b) holds because of (38), and (c) holds because $Q(x) < \frac{1}{2}e^{-\frac{x^2}{2}}$.

On the other hand, when $|z_i| < 2^{-1-\sum_{k=1}^{n}(kN+1)}$, for $1 \le i \le N$, we have $|z_i| < 2^{-1-\sum_{k=0}^{n-1}(kN+i+1)}$; hence the first $\sum_{k=0}^{n-1}(kN+i+1)$ bits of $s_i$ can be decoded without error, which include $\sum_{k=0}^{n-1}(kN+i)$ bits of the source $x$. Thus, the first $\sum_{i=1}^{N}\sum_{k=0}^{n-1}(kN+i) = \sum_{j=1}^{nN} j$ bits of $x$ can be decoded without error.
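The bit grouping of Scheme II in (34) to (36), and the count of source bits used in this step of the proof, can be checked with a short Python sketch. It is an illustration only: it works on a finite list of bits, returns the digit streams instead of real numbers, and the function name `scheme2_digits` is hypothetical.

```python
def scheme2_digits(bits, N):
    """Distribute source bits over N digit streams as in Scheme II.

    Group l (l = 1, 2, 3, ...) takes the next l source bits, is appended to the
    stream of dimension i with l = k*N + i, and is followed by a single 0 digit.
    Only complete groups are placed; the number of consumed bits is returned too.
    """
    streams = [[] for _ in range(N)]
    pos, group = 0, 1
    while pos + group <= len(bits):
        dim = (group - 1) % N                    # 0-based index of dimension i
        streams[dim].extend(bits[pos:pos + group])
        streams[dim].append(0)                   # separator digit
        pos += group
        group += 1
    return streams, pos


if __name__ == "__main__":
    N, n = 2, 2
    total = n * N * (n * N + 1) // 2             # sum_{j=1}^{nN} j
    streams, used = scheme2_digits([1] * total, N)
    print(streams[0])   # [1, 0, 1, 1, 1, 0]
    print(streams[1])   # [1, 1, 0, 1, 1, 1, 1, 0]
    print(used)         # 10
```

After $n$ complete rounds, dimension $i$ holds $\sum_{k=0}^{n-1}(kN+i+1)$ digits, of which $\sum_{k=0}^{n-1}(kN+i)$ are source bits, and the total over all dimensions is $\frac{nN(nN+1)}{2}$.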
Now,

$$\sum_{j=1}^{nN} j = \frac{nN(nN+1)}{2} \tag{43}$$

$$= N\left(\frac{N(n+2)(n+3)}{2} + n + 2\right) - \frac{N^2(5n+6) + nN + 4N}{2} \tag{44}$$

$$= N\left(\sum_{k=1}^{n+2}(kN+1)\right) - \frac{N^2(5n+6) + nN + 4N}{2} \tag{45}$$

$$\ge N\left(\sum_{k=1}^{n+2}(kN+1)\right) - c_3\sqrt{\sum_{k=1}^{n+1}(kN+1)} \tag{46}$$

where $c_3$ depends only on $N$. Therefore, by using the assumption (38),

$$\sum_{j=1}^{nN} j \ge -N\log_2\sigma - c_3\sqrt{-\log_2\sigma}. \tag{47, 48}$$

Consequently, the output distortion is bounded by

$$D \le \sum_{i=1}^{N}\Pr\left\{|z_i| \ge 2^{-1-\sum_{k=1}^{n}(kN+1)}\right\} + 2^{-2\sum_{j=1}^{nN} j} \tag{49}$$

$$\le 2Q\left(2^{\sqrt{-\log_2\sigma}}\right) + 2^{2N\log_2\sigma + 2c_3\sqrt{-\log_2\sigma}} \tag{50}$$

$$= 2Q\left(2^{\sqrt{-\log_2\sigma}}\right) + \sigma^{2N}\,2^{c_2\sqrt{-\log_2\sigma}} \tag{51}$$

$$\Longrightarrow\; D \le c_1\,\sigma^{2N}\,2^{c_2\sqrt{-\log_2\sigma}}. \tag{52}$$

It should be noted that in this proof, the assumption of having a uniform distribution is not used, and the above proof is valid for any source whose samples are in the interval $[-\frac{1}{2}, \frac{1}{2})$. In Appendix C, we extend the scheme proposed in this section to other sources which are not necessarily bounded.

VI. APPROACHING A NEAR-OPTIMUM SDR BY DELAY-LIMITED CODES

In [24], a family of hybrid digital-analog (HDA) source-channel codes is proposed which together can achieve the optimum SDR curve, and each of them only suffers from the mild saturation effect (the asymptotic unit slope for the curve of SDR versus SNR). However, their approach is based on using capacity-approaching digital codes as a component of their scheme. In [25], it is shown that for any joint source-channel code that touches the optimum SDR curve at a certain SNR point, the asymptotic slope cannot be better than one.

In this section, we consider the problem of finding a family of delay-limited analog codes which together have a bounded asymptotic loss in the SDR performance (in terms of dB). The results of Section III show that none of the previous analog coding schemes (based on dynamical systems) can construct such a family of codes.
In this section, we also show that no HDA source-channel coding scheme can achieve this goal.

In HDA source-channel coding, in general, to map an $M$-dimensional source to an $N$-dimensional signal set, the source is quantized by $\kappa$ points which are sent over $N - M$ dimensions, and the residual noise is transmitted over the remaining $M$ dimensions. In other words, the region of the source (which is a hypercube for the case of a uniform source) is divided into $\kappa$ subregions $\mathcal{A}_1, ..., \mathcal{A}_\kappa$. These subregions are mapped to $\kappa$ parallel subsets of the $N$-dimensional Euclidean space, $\mathcal{A}'_1, ..., \mathcal{A}'_\kappa$, where $\mathcal{A}'_i$ is a scaled version of $\mathcal{A}_i$ with a factor of $a$.

Theorem 7: Consider an HDA joint source-channel code which maps an $M$-dimensional uniform source (inside the unit cube) to $\kappa$ parallel $M$-dimensional subsets of an $N$-dimensional Euclidean space ($N > M$), with a power constraint of 1. If the decoding of the digital and analog parts is done separately, for any noise variance $\sigma^2 < 1$, the output distortion is lower bounded by

$$D \ge c\,\sigma^{\frac{2N}{M}}(-\log\sigma)^{\frac{N-M}{M}} \tag{53}$$

where $c$ depends only on $M$ and $N$.

Proof: See Appendix D.

Now, we construct families of delay-limited analog codes which, by a proper choice of parameters (according to the channel SNR), have a bounded asymptotic loss in the SDR performance (in terms of dB).

Type I - Family of piece-wise linear mappings: For any $2^{-k-1} < \sigma \le 2^{-k}$, for $k > 0$, we construct an analog code as follows: For $x + \frac{1}{2} = (0.b_1 b_2 ... b_{Nk-1})_2 + \frac{\{2^{Nk-1}x\}}{2^{Nk-1}}$, where $\{\cdot\}$ represents the fractional part, we construct $s_1, s_2, ..., s_N$ as

$$s_1 = \sum_{i=1}^{k}\left(2^{-i} + 2^{-k}(k-i)\right) b_{(i-1)N+1}$$

$$s_2 = \sum_{i=1}^{k}\left(2^{-i} + 2^{-k}(k-i)\right) b_{(i-1)N+2}$$

$$\vdots$$
$$s_{N-1} = \sum_{i=1}^{k}\left(2^{-i} + 2^{-k}(k-i)\right) b_{(i-1)N+N-1}$$

$$s_N = \sum_{i=1}^{k-1}\left(2^{-i} + 2^{-k}(k-i)\right) b_{(i-1)N+N} + 2^{Nk-k-2}\,\frac{\{2^{Nk-1}x\}}{2^{Nk-1}} \tag{54}$$

First, we show that $0 \le s_j < 2$, for $1 \le j \le N$. By using the fact that the values of the bits are at most 1, and $\{2^{Nk-1}x\} < 1$,

$$s_j \le \sum_{i=1}^{k}\left(2^{-i} + 2^{-k}(k-i)\right) + 2^{-k-1} = \sum_{i=1}^{k+1} 2^{-i} + 2^{-k}\sum_{i=1}^{k}(k-i) \tag{55}$$

$$< 1 + 2^{-k} \cdot \frac{k(k-1)}{2} < 2. \tag{56}$$

Therefore, noting that $0 \le s_j < 2$, by an appropriate shift (e.g. modifying the transmitted signal set as $\mathbf{s}' = \mathbf{s} - 1$), the transmitted power can be bounded by one.

Next, we show that the proposed scheme has a bounded gap (in terms of dB) to the optimum SDR curve:

Theorem 8: In the proposed scheme, for any noise variance $\sigma^2 \le \frac{1}{2}$, the output distortion $D$ is upper bounded by

$$D \le c\,\sigma^{2N} \tag{57}$$

where $c$ depends only on $N$.

Proof: The signal set consists of $2^{Nk-1}$ segments of length $2^{-k-1}$, where each of them is a subsegment of the source region (the unit interval), scaled by a factor of $2^{Nk-k-2}$. The probability that the first error occurs in the $l$th bit ($l = (i-1)N + j$, where $1 \le j \le N$) of $x$ is bounded by $P_l \le 2Q\left(\frac{k-i}{2}\right) \le 2Q\left(\frac{k}{2} - \frac{l}{2N}\right)$, and it results in an output squared error of at most $D_l \le 4^{-l+1} = 4^{-(i-1)N-j+1}$. Therefore, by considering the union bound over all possible errors, we obtain

$$D \le \sum_{l=1}^{Nk-1} D_l \cdot P_l + D_{\text{no-bit-error}} \le \sum_{l=1}^{Nk-1} 4^{-l+1} \cdot 2Q\left(\frac{k}{2} - \frac{l}{2N}\right) + 4^{-(Nk-k-2)}\sigma^2. \tag{58}$$

Now, by using $Q(x) < e^{-\frac{x^2}{2}}$ and $2^{-k-1} < \sigma$, we have

$$D \le \sum_{l=1}^{kN-1} 2^{-2l+3}\,e^{-\frac{(k-l/N)^2}{8}} + 4^{-(Nk-k-2)}\sigma^2$$

$$\le \sum_{l=1}^{kN-1} 2^{-2l+3}\,2^{-\frac{(k-l/N)^2}{8}} + 4^{-(Nk-k-2)}\sigma^2$$

$$\le 2^{-2kN}\sum_{l=1}^{kN-1} 2^{2(kN-l)+3}\,2^{-\frac{(k-l/N)^2}{8}} + 4^{-(Nk-k-2)}\sigma^2$$

$$= 2^{-2kN} \cdot 2^{3} \cdot 2^{8N^2}\sum_{l=1}^{kN-1} 2^{-\frac{(k-l/N-8N)^2}{8}} + 4^{-(Nk-k-2)}\sigma^2$$

$$< 2^{-2kN} \cdot 2^{3} \cdot 2^{8N^2}\sum_{l=-\infty}^{\infty} 2^{-\frac{(k-l/N-8N)^2}{8}} + 4^{-(Nk-k-2)}\sigma^2$$

$$= 2^{-2kN} \cdot 2^{3} \cdot 2^{8N^2}\sum_{l'=-\infty}^{\infty} 2^{-\frac{(l'/N)^2}{8}} + 4^{-(Nk-k-2)}\sigma^2$$

$$\le 2^{-2kN} \cdot c_1 + 4^{-(Nk-k-2)}\sigma^2 \le c\,\sigma^{2N}. \tag{59}$$

It is worth noting that in the proposed family of codes, for each code, the asymptotic slope of the SDR curve is 1 (as expected from the fact that for each code, the mapping is piecewise differentiable). We can mix the idea of this scheme with Scheme II of the previous section to construct a family of mappings where for each of them the asymptotic slope is $N$, and together they maintain a bounded gap with the optimal SDR (in terms of dB):

Type II - Family of robust mappings: For $x + \frac{1}{2} = (0.b_1 b_2 b_3 ...)_2$, we construct $f_k(x) = (s_1, s_2, ..., s_N)$ as

$$s_1 = \sum_{i=1}^{k}\left(2^{-i} + 2^{-k}(k-i)\right) b_{(i-1)N+1} + 2^{-k-1}\left(0.b_{kN+1}\,0\,b_{kN+\frac{N(N+1)}{2}+1}\,b_{kN+\frac{N(N+1)}{2}+2}\,...\right)_2$$

$$s_2 = \sum_{i=1}^{k}\left(2^{-i} + 2^{-k}(k-i)\right) b_{(i-1)N+2} + 2^{-k-1}\left(0.b_{kN+2}\,b_{kN+3}\,0\,b_{kN+\frac{(N+1)(N+2)}{2}+1}\,...\right)_2$$

$$\vdots$$

$$s_N = \sum_{i=1}^{k}\left(2^{-i} + 2^{-k}(k-i)\right) b_{(i-1)N+N} + 2^{-k-1}\left(0.b_{kN+\frac{N(N-1)}{2}+1}\,b_{kN+\frac{N(N-1)}{2}+2}\,...\right)_2$$

Theorem 9: In the proposed family of mappings (Type II), there are constants $c, c_1, c_2$, independent of $\sigma$ and $k$ (dependent only on $N$), such that for every integer $k > 0$, if we use the modulation map $f_k(x)$,

i) for $2^{-k-1} < \sigma \le 2^{-k}$,

$$D \le c\,\sigma^{2N}; \tag{60}$$

ii) for any $\sigma < 2^{-k-1}$,

$$D \le c_1\,\sigma^{2N}\,2^{c_2\sqrt{-\log_2\sigma}}. \tag{61}$$

Proof: i) The probability that the first error occurs in the $l$th bit ($l = (i-1)N + j < kN$) of $x$ is bounded by $P_l \le 2Q\left(\frac{k-i}{2}\right)$, and it results in an output squared error of at most $4^{-l+1}$; when there is no error in the first $Nk$ bits, the squared error is $D' \le 4^{-Nk}$. Therefore, by considering the union bound over all possible errors, we have

$$D \le \sum_{l=1}^{Nk} D_l \cdot P_l + D' \le \sum_{l=1}^{Nk} 4^{-l+1} \cdot 2Q\left(\frac{k}{2} - \frac{l}{2N}\right) + 4^{-Nk}.$$

Similar to the proof of Theorem 8, by using $Q(x) < e^{-\frac{x^2}{2}}$ and $2^{-k-1} < \sigma \le 2^{-k}$, we have

$$D \le \sum_{l=1}^{Nk-1} 4^{-l+1}\,e^{-\frac{(k-l/N)^2}{8}} + \sigma^{2N} \le c_4\,4^{-kN} + \sigma^{2N} \le c\,\sigma^{2N}.$$

ii) Consider $z_i$ as the Gaussian noise on the $i$th channel and assume that $n$ is selected such that

$$k + \sum_{l=1}^{n+1}(lN+1) \le -\log_2\sigma < k + \sum_{l=1}^{n+2}(lN+1). \tag{62}$$

The probability that $|z_i| \ge 2^{-k-1-\sum_{l=1}^{n}(lN+1)}$ is negligible (it is bounded by $2Q\left(2^{(n+1)N}\right)$). On the other hand, when $|z_i| < 2^{-k-1-\sum_{l=1}^{n}(lN+1)}$, the first $k + \sum_{l=0}^{n-1}(lN+i+1)$ bits of $s_i$ can be decoded without error ($1 \le i \le N$), which include $k + \sum_{l=0}^{n-1}(lN+i)$ bits of $x$. Thus, the first $kN + \sum_{j=1}^{nN} j$ bits of $x$ can be decoded without error. Now, similar to the proof of Theorem 6,

$$kN + \sum_{j=1}^{nN} j \ge N\left(k + \sum_{l=1}^{n+2}(lN+1)\right) - c_5\sqrt{\sum_{l=1}^{n+1}(lN+1)} \tag{63, 64}$$

$$\ge N\left(k + \sum_{l=1}^{n+2}(lN+1)\right) - c_6\sqrt{k + \sum_{l=1}^{n+1}(lN+1)}. \tag{65}$$

Therefore, by using the assumption (62),

$$kN + \sum_{j=1}^{nN} j \ge -N\log_2\sigma - c_6\sqrt{-\log_2\sigma}. \tag{66, 67}$$

Therefore, the output distortion is bounded by

$$D \le 2^{-2\left(kN + \sum_{j=1}^{nN} j\right)} + 2Q\left(2^{(n+1)N}\right) \tag{68}$$

$$\le 2^{2N\log_2\sigma + 2c_6\sqrt{-\log_2\sigma}} + 2Q\left(2^{(n+1)N}\right) \tag{69}$$

$$\Longrightarrow\; D \le c_1\,\sigma^{2N}\,2^{c_2\sqrt{-\log_2\sigma}}. \tag{70}$$

VII. SIMULATION RESULTS

In figure 3, for a bandwidth expansion factor of 4, the performance of Scheme I (with parameters $\alpha = 3$ and 4) is compared with the shift-map scheme with $a = 3$.
As we expect, for the shift-map scheme, the SDR curve saturates at slope 1, while the new scheme offers asymptotic slopes higher than one. For the proposed scheme, with parameters $\alpha_1 = 3$ and $\alpha_2 = 4$, the asymptotic slopes are respectively $\beta_1 = \frac{4\log 2}{\log 3} \approx 2.52$ and $\beta_2 = \frac{4\log 2}{\log 4} = 2$ (as expected from Theorem 3). Also, we see that the proposed scheme provides a graceful degradation in the low SNR region.

Figure 4 shows the performance of Scheme II for $N = 4$ dimensions. As shown in the figure, the asymptotic exponent of the SDR is close to the optimum value of 4, i.e. the bandwidth expansion ratio. The fluctuations of the slope of the curve are due to the fact that groups of consecutive bits are assigned to each dimension, and for different ranges of SNR, errors in different dimensions become dominant (for example, for SNR values around 40-50 dB, the error in the second layer of bits of $s_1$ becomes dominant in the overall squared error). By modifying Scheme II and assigning groups of bits of length $l' = i + k(N-1)$ (instead of $l = i + kN$) to the $i$th dimension, we can slightly improve the performance in the middle SNR range. The asymptotic exponents of the SDR in both variations of Scheme II are the same.

[Figure 3 plot: SDR versus SNR (dB), 4 dimensions; curves: Scheme I with $\alpha = 4$, Scheme I with $\alpha = 3$, shift-map with $a = 3$.]

Fig. 3. The output SNR (or SDR) for the first proposed scheme (with $\alpha = 4$ and $3$) and the shift-map scheme with $a = 3$. The bandwidth expansion is $N = 4$.

VIII. CONCLUSIONS

To avoid the mild saturation effect in analog transmission (i.e. to achieve the optimum scaling of the output distortion), one needs to use non-differentiable mappings (more precisely, mappings which are not differentiable on any interval). Two non-differentiable schemes are introduced in this paper.
Both of these schemes, which are minimum-delay schemes, outperform the traditional minimum-delay analog schemes in terms of scaling of the output SDR. Also, one of them (Scheme II) achieves the optimum SDR scaling with a simple mapping (it achieves the asymptotic exponent $N$ for the SDR, versus SNR).

[Figure 4 plot: SDR (dB) versus SNR (dB); curves: Scheme II (a), Scheme II (b).]

Fig. 4. Performance of Scheme II for $N = 4$ dimensions. (a) corresponds to the scheme introduced in Section V and (b) corresponds to the other variation of Scheme II, when groups of $l' = i + k(N-1)$ bits are considered.

APPENDIX A: PROOF OF THEOREM 1

The set of modulated signals consists of $a^{N-1}$ parallel segments where the projection of each of them on the $i$th dimension has the length $a^{-(i-1)}$; hence, each segment has the length $\sqrt{1 + a^{-2} + ... + a^{-2(N-1)}}$. By considering the distance of their intersections with the hyperspace orthogonal to the $N$th dimension (which is at least $a^{-1}$) and the angular factor of these segments with respect to the $s_N$-axis, because $a \geq 2$, we can bound the distance between two parallel segments of the modulated signal set as (see Fig. 1)

$$d \geq \frac{a^{-1}}{\sqrt{1 + a^{-2} + ... + a^{-2(N-1)}}} \geq \frac{a^{-1}}{\sqrt{1 + 2^{-2} + ... + 2^{-2(N-1)}}} \geq \frac{a^{-1}}{2} \tag{71}$$

First, we consider the case of $\sigma\sqrt{-\log\sigma} \leq \frac{1}{16\sqrt{N}}$. Consider $a = \left\lfloor \frac{1}{8\sqrt{N}\,\sigma\sqrt{-\log\sigma}} \right\rfloor$. The probability of a jump to a wrong segment (during the decoding) is bounded by

\begin{align}
\Pr(\text{jump}) &\leq 2Q\left(\frac{d}{2\sigma}\right) \leq 2Q\left(\frac{a^{-1}}{4\sigma}\right) \tag{72}\\
&\leq 2Q\left(\frac{8\sqrt{N}\,\sigma\sqrt{-\log\sigma}}{4\sigma}\right). \tag{73}
\end{align}

By using $Q(x) \leq \frac{1}{2}e^{-\frac{x^2}{2}}$,

$$\Pr(\text{jump}) \leq e^{-\frac{\left(2\sqrt{N}\sqrt{-\log\sigma}\right)^2}{2}} = e^{2N\log\sigma} = \sigma^{2N}. \tag{74}$$

On the other hand, each segment of the modulated signal set is a segment of the source signal set, stretched by a factor of $a^{N-1}\sqrt{1 + a^{-2} + ... + a^{-2(N-1)}}$ (its length is changed from $\frac{1}{a^{N-1}}$ to $\sqrt{1 + a^{-2} + ... + a^{-2(N-1)}}$).
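As a numerical sanity check of (71) to (74), the following Python sketch evaluates the jump-probability bound $2Q\left(\frac{a^{-1}}{4\sigma}\right)$ for the choice of $a$ made above and compares it with $\sigma^{2N}$. The function names (`q_function`, `shift_map_a`, `jump_bound`, `segment_distance_lower_bound`) and the sample values $N = 4$, $\sigma = 0.01$ are illustrative assumptions and not part of the original analysis; $\log$ is taken as the natural logarithm, as implied by (74).

```python
import math


def q_function(x):
    """Gaussian tail probability Q(x) = P(Z > x) for a standard normal Z."""
    return 0.5 * math.erfc(x / math.sqrt(2.0))


def shift_map_a(n_dims, sigma):
    """Parameter a = floor(1 / (8 sqrt(N) sigma sqrt(-log sigma))).

    Only defined for the first case of the proof,
    sigma * sqrt(-log sigma) <= 1 / (16 sqrt(N)).
    """
    t = sigma * math.sqrt(-math.log(sigma))
    if t > 1.0 / (16.0 * math.sqrt(n_dims)):
        raise ValueError("sigma is outside the first case of the proof")
    return math.floor(1.0 / (8.0 * math.sqrt(n_dims) * t))


def jump_bound(n_dims, sigma):
    """Upper bound 2 Q(a^{-1} / (4 sigma)) on the jump probability, as in (72)."""
    a = shift_map_a(n_dims, sigma)
    return 2.0 * q_function((1.0 / a) / (4.0 * sigma))


def segment_distance_lower_bound(n_dims, a):
    """First expression in (71): a^{-1} / sqrt(1 + a^{-2} + ... + a^{-2(N-1)})."""
    length = math.sqrt(sum(a ** (-2 * i) for i in range(n_dims)))
    return (1.0 / a) / length


if __name__ == "__main__":
    n_dims, sigma = 4, 0.01  # illustrative values only
    a = shift_map_a(n_dims, sigma)
    print("a =", a)
    print("distance bound >= a^-1 / 2:",
          segment_distance_lower_bound(n_dims, a) >= 0.5 / a)
    print("jump bound <= sigma^(2N):",
          jump_bound(n_dims, sigma) <= sigma ** (2 * n_dims))
```

For these sample values the check only confirms that the chain of inequalities is consistent; it says nothing about the tightness of the bound.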
Therefore, assuming correct segment decoding, the average distortion is the variance of the channel noise divided by $\left(a^{N-1}\sqrt{1 + a^{-2} + ... + a^{-2(N-1)}}\right)^2$:

\begin{align}
& E\left\{|\tilde{x} - x|^2 \,\middle|\, \text{no jump}\right\} \tag{75}\\
&= \frac{\sigma^2}{\left(a^{N-1}\sqrt{1 + a^{-2} + ... + a^{-2(N-1)}}\right)^2} \tag{76}\\
&\leq \frac{\sigma^2}{a^{2(N-1)}} = \frac{\sigma^2}{\left\lfloor \frac{1}{8\sqrt{N}\,\sigma\sqrt{-\log\sigma}} \right\rfloor^{2(N-1)}} \leq c_1\,\sigma^{2N}(-\log\sigma)^{N-1} \tag{77}
\end{align}

where $\tilde{x}$ is the estimate of $x$, and $c_1$ is independent of $a$ and $\sigma$ and only depends on $N$. Now, because $E\{|\tilde{x} - x|^2 \mid \text{jump}\}$ and $\Pr(\text{no jump})$ are bounded by 1,

\begin{align}
D &= \Pr(\text{jump})\cdot E\left\{|\tilde{x} - x|^2 \,\middle|\, \text{jump}\right\} + \Pr(\text{no jump})\cdot E\left\{|\tilde{x} - x|^2 \,\middle|\, \text{no jump}\right\} \tag{78}\\
\Longrightarrow\ D &\leq \Pr(\text{jump}) + E\left\{|\tilde{x} - x|^2 \,\middle|\, \text{no jump}\right\} \tag{79}\\
&\leq c_2\,\sigma^{2N}(-\log\sigma)^{N-1}, \quad \text{for } \sigma\sqrt{-\log\sigma} \leq \frac{1}{16\sqrt{N}}. \tag{80}
\end{align}

On the other hand, for $\sigma\sqrt{-\log\sigma} > \frac{1}{16\sqrt{N}}$,

\begin{align}
D \leq 1 &= \sigma^{-2N}(-\log\sigma)^{-(N-1)}\cdot\sigma^{2N}(-\log\sigma)^{N-1} \tag{81}\\
&= \left(\sigma\sqrt{-\log\sigma}\right)^{-2N}\cdot(-\log\sigma)\cdot\sigma^{2N}(-\log\sigma)^{N-1} \tag{82}\\
&< \left(\frac{1}{16\sqrt{N}}\right)^{-2N}\cdot\left(-\log\sqrt{2}\right)\cdot\sigma^{2N}(-\log\sigma)^{N-1} \tag{83}\\
&\leq c_3\,\sigma^{2N}(-\log\sigma)^{N-1}. \tag{84}
\end{align}

Therefore, by combining these two bounds together, we obtain

$$D \leq c\,\sigma^{2N}(-\log\sigma)^{N-1}. \tag{85}$$

APPENDIX B: PROOF OF THEOREM 2

We consider two cases:

Case 1) $a \leq \frac{4}{\sigma\sqrt{-\log\sigma}}$:

Each segment of the modulated signal set is a segment of the source signal set, scaled by a factor of $a^{N-1}\sqrt{1 + a^{-2} + ... + a^{-2(N-1)}}$, hence

\begin{align}
D &\geq E\left\{|\tilde{x} - x|^2 \,\middle|\, \text{no jump}\right\} \tag{86}\\
&= \frac{\sigma^2}{\left(a^{N-1}\sqrt{1 + a^{-2} + ... + a^{-2(N-1)}}\right)^2} \tag{87}\\
&\geq \frac{\sigma^2}{2a^{2(N-1)}} \tag{88}\\
&\geq c_1\,\sigma^{2N}(-\log\sigma)^{N-1} \tag{89}
\end{align}

Case 2) $\frac{2^{l+1}}{\sigma\sqrt{-\log\sigma}} < a \leq \frac{2^{l+2}}{\sigma\sqrt{-\log\sigma}}$, for $l \geq 1$:

In this case, we bound the output distortion by the average distortion caused by a large jump to another segment. Let $z_1$ be the additive noise in the first dimension and $f(x) = s$ the modulated vector corresponding to the source sample $x$.
For any point in the interval $-\frac{1}{2} + (k-1)a^{-1} < x \leq -\frac{1}{2} + ka^{-1}$ (for $1 \leq k \leq a - 2^{l+1}$), when $z_1 > 2^{l+1}a^{-1}$, for any point $x' \leq x + 2^{l}a^{-1}$, the received point $f(x) + z$ is closer to $f\left(x' + 2^{l}a^{-1}\right)$ than $f(x)$. Therefore, the decoded signal is $\tilde{x} > x + 2^{l}a^{-1}$. Thus, in this case, the squared error is at least $\left(2^{l}a^{-1}\right)^2$. Therefore, the average distortion is lower bounded by

\begin{align}
D &\geq \Pr\left\{-\frac{1}{2} < x \leq \frac{1}{2} - 2^{l+1}a^{-1}\right\}\cdot\Pr\left\{z_1 > 2^{l+1}a^{-1}\right\}\cdot\left(2^{l}a^{-1}\right)^2 \tag{90}\\
&= \left(1 - 2^{l+1}a^{-1}\right)\cdot Q\left(\frac{2^{l+1}a^{-1}}{\sigma}\right)\cdot\left(2^{l}a^{-1}\right)^2 \tag{91}\\
&\geq \left(1 - \sigma\sqrt{-\log\sigma}\right)\cdot Q\left(\frac{\sigma\sqrt{-\log\sigma}}{\sigma}\right)\cdot\left(\frac{\sigma\sqrt{-\log\sigma}}{2^2}\right)^2 \tag{92}\\
&= \left(1 - \sigma\sqrt{-\log\sigma}\right)\cdot Q\left(\sqrt{-\log\sigma}\right)\cdot\frac{\sigma^2(-\log\sigma)}{2^4} \tag{93}
\end{align}

By using $e^{-x^2} < Q(x)$,

\begin{align}
D &\geq \left(1 - \sigma\sqrt{-\log\sigma}\right)\cdot\sigma\cdot\frac{\sigma^2(-\log\sigma)}{2^4} \tag{94}\\
\Longrightarrow\ D &\geq c_2\,\sigma^3(-\log\sigma). \tag{95}
\end{align}

By combining the bounds (for the two cases), and noting that $\sigma^2 \leq \frac{1}{2}$,

\begin{align}
D &\geq \min\left\{c_2\,\sigma^3(-\log\sigma),\ c_1\,\sigma^{2N}(-\log\sigma)^{N-1}\right\} \tag{96}\\
D &\geq c'\,\sigma^{2N}(-\log\sigma)^{N-1} \quad \text{for } N \geq 2. \tag{97}
\end{align}

APPENDIX C: CODING FOR UNBOUNDED SOURCES

Consider $\{X_i\}_{i=1}^{\infty}$ as an arbitrary memoryless i.i.d. source. We show that the results of Section V can be extended to non-uniform sources, to construct robust joint source-channel codes with a constraint on the average power. Without loss of generality, we can assume the variance of the source to be equal to 1. For the source sample $x$, we can write it as $x = x_1 + x_2$, where $x_1$ is an integer, $-\frac{1}{2} \leq x_2 < \frac{1}{2}$, and $x_2 + \frac{1}{2} = \left(0.b_1 b_2 b_3 \ldots\right)_2$. Now, we construct the $N$-dimensional transmission vector as

$$s' = (s'_1, s'_2, ..., s'_N) = \left(x_1 + s_1 - \frac{1}{2},\ s_2 - \frac{1}{2},\ ...,\ s_N - \frac{1}{2}\right),$$

where $s_1, ..., s_N$ are constructed using (36) in Section V. Let $D_1$ be the distortion conditioned on correct decoding of $x_1$. Similar to the proof of Theorem 6, we can show that $D_1$ is upper bounded by

$$D_1 \leq c_1\,\sigma^{2N}\, 2^{c_2\sqrt{-\log\sigma}} \tag{98}$$

where $c_1$ and $c_2$ depend only on $N$.
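The decomposition $x = x_1 + x_2$ used in this appendix can be illustrated with a short Python sketch. The helper name `split_sample` and the truncation of the binary expansion to `n_bits` digits are assumptions made for illustration only; the construction in the text uses the full expansion of $x_2 + \frac{1}{2}$.

```python
import math


def split_sample(x, n_bits=8):
    """Split x into an integer part x1 and a residual x2 in [-1/2, 1/2).

    Also returns the first n_bits binary digits b_1, b_2, ... of x2 + 1/2.
    The truncation to n_bits is an illustrative assumption.
    """
    x1 = math.floor(x + 0.5)      # nearest integer, so that -1/2 <= x - x1 < 1/2
    x2 = x - x1
    frac = x2 + 0.5               # lies in [0, 1)
    bits = []
    for _ in range(n_bits):
        frac *= 2.0
        bit = int(frac)           # next binary digit (0 or 1)
        bits.append(bit)
        frac -= bit
    return x1, x2, bits


if __name__ == "__main__":
    x1, x2, bits = split_sample(2.75)
    print(x1, x2, bits)
```

Only $x_1$ is added to the first coordinate of the transmitted vector; the bits of $x_2 + \frac{1}{2}$ are the ones fed to the mapping of Section V.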
Now, we bound the distortion $D_2$, for the case that $x_1$ is not decoded correctly. Since $s_1$ is constructed by Scheme II (in Section V), $s_1$ is between 0 and $(0.10111\cdots)_2$, hence $0 \leq s_1 < \frac{3}{4}$. To have an error of $|x_1 - \tilde{x}_1| = k$, the amplitude of the noise on the first dimension should be greater than $\frac{k - \frac{3}{4}}{2}$, hence its probability is bounded by $2Q\left(\frac{k - \frac{3}{4}}{2\sigma}\right)$. When $|x_1 - \tilde{x}_1| = k$, the overall error is upper bounded by

$$|x - \tilde{x}| \leq |x_1 - \tilde{x}_1| + |x_2 - \tilde{x}_2| \leq k + 1. \tag{99}$$

Therefore, by using the union bound for all values of $k$, the distortion $D_2$ is upper bounded by

\begin{align}
D_2 &\leq \sum_{k=1}^{\infty} 2Q\left(\frac{k - \frac{3}{4}}{2\sigma}\right)(k+1) \tag{100}\\
&\leq \sum_{k=1}^{\infty} e^{-\frac{1}{2}\left(\frac{k - \frac{3}{4}}{2\sigma}\right)^2}\cdot(k+1) \tag{101}\\
&\leq c_3\, e^{-\frac{1}{128\sigma^2}}. \tag{102}
\end{align}

Thus, $D \leq D_1 + D_2 \leq c_4\,\sigma^{2N}\, 2^{c_2\sqrt{-\log\sigma}}$.

To finish the proof, we only need to show that the average transmitted power is bounded. For $s'_2, ..., s'_N$, the transmitted power is bounded as $|s'_i|^2 \leq \frac{1}{4}$. For $s'_1$,

\begin{align}
|s'_1|^2 = \left|x_1 + s_1 - \frac{1}{2}\right|^2 &\leq \left(|x_1| + \left|s_1 - \frac{1}{2}\right|\right)^2 \tag{103}\\
&\leq \left(|x| + \frac{1}{2} + \frac{1}{2}\right)^2 = (|x| + 1)^2 \tag{104}
\end{align}

Thus, using the Cauchy-Schwarz inequality,

\begin{align}
E\left\{|s'_i|^2\right\} \leq E\left\{(|x| + 1)^2\right\} &\leq \left(\sqrt{E\{|x|^2\}} + 1\right)^2 \tag{105}\\
&\leq (1 + 1)^2 = 4. \tag{106}
\end{align}

APPENDIX D: PROOF OF THEOREM 7

We consider two cases for $a$, the scaling factor.

Case 1) $a \leq 2^{\frac{2(N-M)}{M}+4}\,\sigma^{-\frac{N-M}{M}}(-\log\sigma)^{-\frac{N-M}{2M}}$:

Each subset of the modulated signal set is the scaled version of a segment of the source signal set by a factor of $a$; hence, we can lower bound the distortion by only considering the case that the subset is decoded correctly and there is no jump to adjacent subsets,

\begin{align}
D &\geq E\left\{|\tilde{x} - x|^2 \,\middle|\, \text{no jump}\right\} \tag{107}\\
&= \frac{\sigma^2}{a^2} \tag{108}\\
&\geq c_4\,\sigma^{\frac{2N}{M}}(-\log\sigma)^{\frac{N-M}{M}} \tag{109}
\end{align}

Case 2) $2^{l+1+\frac{2(N-M)}{M}} < \dfrac{a}{\sigma^{-\frac{N-M}{M}}(-\log\sigma)^{-\frac{N-M}{2M}}} \leq 2^{l+2+\frac{2(N-M)}{M}}$, for $l \geq 3$:

In this case, we bound the output distortion by the average distortion caused by a jump to another subset. Without loss of generality (see footnote 7), we can consider $\sigma < \frac{1}{e}$, hence $2^{-l}a > 8$.

Footnote 7: For $1 > \sigma > \frac{1}{e}$, the distortion $D$ is larger than $D_{1/e}$ (the distortion for $\sigma = \frac{1}{e}$), hence $D \geq D_{1/e} > D_{1/e}\,\sigma^{\frac{2N}{M}}(-\log\sigma)^{\frac{N-M}{M}}$, and $D_{1/e}$ depends only on $N$.

First, we show that there are two constants $c_5$ and $c_6$ (independent of $a$ and $\sigma$) such that the probability of a squared error of at least $c_5\left(2^{-l}a\right)^{-2}$ is lower bounded by

$$\Pr(\text{jump}) \geq c_6\, Q\left(\sqrt{-\log\sigma}\right) \geq c_6\,\sigma \tag{110}$$

By considering the power constraint, the maximum distance of each source sample to its quantization point is upper bounded by

$$d_{\max} \leq \frac{1}{a}. \tag{111}$$

We can partition the $M$-dimensional uniform source into $n = \left\lfloor \frac{a}{2^l} \right\rfloor^M \geq \left(\frac{a}{2^{l+1}}\right)^M$ cubes of size $s = \frac{1}{\left\lfloor a/2^l \right\rfloor} \geq \frac{2^l}{a} \geq 2^l d_{\max}$. We consider $B_i$ as the union of the quantization regions whose center is in the $i$th cube ($1 \leq i \leq n$). Because the decoding of the digital and analog parts is done separately, the $(N-M)$-dimensional subspace (dedicated to sending the quantization points) can be partitioned into $n$ decoding subsets, corresponding to regions $B_1, ..., B_n$.
If we consider $C_1, ..., C_n$, the intersections of these decoding regions and the $(N-M)$-dimensional cube of size 4, centered at the origin, at least $\frac{n}{2}$ of them have volume less than

$$\frac{2\,(4)^{N-M}}{n} \leq \frac{2\,(4)^{N-M}}{\left(2^{-l-1}a\right)^M} \leq 2\,\sigma^{N-M}(-\log\sigma)^{\frac{N-M}{2}}.$$

This volume is less than the volume of an $(N-M)$-dimensional sphere of radius $\sigma(-\log\sigma)^{\frac{1}{2}}$. Thus, for any point inside $B_i$ with this property, the probability of being decoded to a wrong subset $B_j$ is at least equal to the probability that the amplitude of the noise is larger than the radius of that sphere (i.e. $\sigma(-\log\sigma)^{\frac{1}{2}}$). This probability is lower bounded by

$$\Pr\left\{z_1 > \sigma(-\log\sigma)^{\frac{1}{2}}\right\} = Q\left(\sqrt{-\log\sigma}\right) \geq \sigma.$$

Now, for the cubes corresponding to these subsets, we consider points inside a smaller cube of size $\frac{s}{2}$, with the same center. For these points, with probability at least $\sigma$, the decoder finds a wrong quantization region where the distance between its center and the original point is at least $\frac{s - \frac{s}{2}}{2} = \frac{s}{4} \geq \frac{2^{l-2}}{a}$; hence, the final squared error is at least

$$\left(\frac{2^{l-2}}{a} - d_{\max}\right)^2 \geq \left(\frac{2^{l-2}}{a} - \frac{1}{a}\right)^2 \geq c_5\left(2^{-l}a\right)^{-2}.$$

Because at least half of the $n$ subsets have the mentioned property, the overall probability of having this kind of point as the source is at least $\frac{1}{2}2^{-M}$, and in transmitting these points, with a probability which is lower bounded by $\sigma$, the squared error is at least $c_5\left(2^{-l}a\right)^{-2}$. Therefore, the distortion is lower bounded by

\begin{align}
D &\geq \frac{1}{2}2^{-M}\cdot\sigma\cdot c_5\left(2^{-l}a\right)^{-2} \geq c_7\,\sigma\left(2^{-l}a\right)^{-2} \\
&\geq c_8\,\sigma\cdot\sigma^{\frac{2(N-M)}{M}}(-\log\sigma)^{\frac{N-M}{M}} = c_8\,\sigma^{\frac{2N-M}{M}}(-\log\sigma)^{\frac{N-M}{M}}. \tag{112}
\end{align}

Finally, by considering the minimum of (109) and (112), we conclude

$$D \geq c\,\sigma^{\frac{2N}{M}}(-\log\sigma)^{\frac{N-M}{M}}. \tag{113}$$

REFERENCES

[1] C. Shannon, "A mathematical theory of communication," Bell Syst. Tech. J., 1948.
[2] C. Shannon, "Coding theorems for a discrete source with a fidelity criterion," IRE Nat. Conv. Rec., pp. 149–163, Mar. 1959.
[3] E. Ayanoglu and R. M. Gray, "The design of joint source and channel trellis waveform coders," IEEE Trans. Info. Theory, pp. 855–865, Nov. 1987.
[4] N. Farvardin and V. Vaishampayan, "Optimal quantizer design for noisy channels: An approach to combined source-channel coding," IEEE Trans. Info. Theory, pp. 827–838, Nov. 1987.
[5] N. Farvardin, "A study of vector quantization for noisy channels," IEEE Trans. Info. Theory, pp. 799–809, July 1990.
[6] N. Phamdo, N. Farvardin, and T. Moriya, "A unified approach to tree-structured and multistage vector quantization for noisy channels," IEEE Trans. Info. Theory, pp. 835–850, May 1993.
[7] M. Wang and T. R. Fischer, "Trellis-coded quantization designed for noisy channels," IEEE Trans. Info. Theory, pp. 1792–1802, Nov. 1994.
[8] C. E. Shannon, "Communication in the presence of noise," Proceedings of IRE, vol. 37, pp. 10–21, 1949.
[9] V. A. Kotel'nikov, The Theory of Optimum Noise Immunity. New York: McGraw-Hill, 1959.
[10] J. M. Wozencraft and I. M. Jacobs, Principles of Communication Engineering. New York: Wiley, 1965.
[11] U. Timor, "Design of signals for analog communications," IEEE Trans. on Info. Theory, vol. 16, pp. 581–587, Sep. 1970.
[12] C. M. Thomas, C. L. May, and G. R. Welti, "Design of signals for analog communications," IEEE Trans. on Info. Theory, vol. 16, pp. 581–587, Sep. 1970.
[13] S.-Y. Chung, On the Construction of some capacity-approaching coding schemes. PhD thesis, MIT, 2000.
[14] B. Chen and G. W. Wornell, "Analog error correcting codes based on chaotic dynamical systems," IEEE Trans. Info. Theory, pp. 1691–1706, July 1998.
[15] V. A. Vaishampayan and I. R. Costa, "Curves on a sphere, shift-map dynamics, and error control for continuous alphabet sources," IEEE Trans. Info. Theory, pp. 1691–1706, July 2003.
[16] M. Skoglund, N. Phamdo, and F. Alajaji, "Design and performance of VQ-based hybrid digital-analog joint source-channel codes," IEEE Trans. Info. Theory, vol. 48, pp. 708–720, March 2002.
[17] F. Hekland, G. E. Oien, and T. A. Ramstad, "Design of VQ-based hybrid digital-analog joint source-channel codes for image communication," in Data Compression Conference, pp. 193–202, March 2005.
[18] M. Skoglund, N. Phamdo, and F. Alajaji, "Hybrid digital analog source channel coding for bandwidth compression/expansion," IEEE Trans. Info. Theory, vol. 52, pp. 3757–3763, Aug. 2006.
[19] F. Hekland, On the design and analysis of Shannon-Kotelnikov mappings for joint source-channel coding. PhD thesis, Norwegian University of Science and Technology, 2007.
[20] H. Coward and T. A. Ramstad, "Quantizer optimization in hybrid digital-analog transmission of analog source signals," in Proc. Intl. Conf. Acoustics, Speech, Signal Proc, 2000.
[21] T. A. Ramstad, "Shannon mappings for robust communication," Telektronikk, vol. 98, no. 1, pp. 114–128, 2002.
[22] F. Hekland, G. E. Oien, and T. A. Ramstad, "Using 2:1 Shannon mapping for joint source-channel coding," in Proc. Data Compression Conference, March 2005.
[23] X. Cai and J. W. Modestino, "Bandwidth expansion Shannon mapping for analog error-control coding," in Proc. 40th Annual Conference on Information Sciences and Systems (CISS), March 2006.
[24] U. Mittal and N. Phamdo, "Hybrid digital-analog (HDA) joint source-channel codes for broadcasting and robust communications," IEEE Trans. Info. Theory, pp. 1082–1102, May 2002.
[25] Z. Reznic, M. Feder, and R. Zamir, "Distortion bounds for broadcasting with bandwidth expansion," IEEE Trans. Info. Theory, pp. 3778–3788, Aug. 2006.
[26] N. Santhi and A. Vardy, "Analog codes on graphs," Available online: http://arxiv.org/abs/cs/0608086, 2006.
[27] N. Santhi and A. Vardy, "Analog codes on graphs," in Proceedings of IEEE International Symposium on Information Theory, p. 13, July 2003.
[28] I. Rosenhouse and A. J. Weiss, "Combined analog and digital error-correcting codes for analog information sources," IEEE Trans. Communications, pp. 2073–2083, November 2007.
[29] Y. Kochman and R. Zamir, "Analog matching of colored sources to colored channels," in IEEE International Symposium on Information Theory, pp. 1539–1543, July 2006.
[30] Y. Kochman and R. Zamir, "Approaching R(D)=C in colored joint source/channel broadcasting by prediction," in IEEE International Symposium on Information Theory, June 2007.
[31] S. Shamai (Shitz), S. Verdu, and R. Zamir, "Systematic lossy source/channel coding," IEEE Trans. Info. Theory, pp. 564–579, March 1998.
[32] J. Ziv, "The behavior of analog communication systems," IEEE Trans. Info. Theory, pp. 1691–1706, Aug. 1970.
[33] G. A. Edgar, Measure, Topology and Fractal Geometry, ch. 6. Springer-Verlag, 1990.
