Bi-criteria Pipeline Mappings for Parallel Image Processing

Mapping workflow applications onto parallel platforms is a challenging problem, even for simple application patterns such as pipeline graphs. Several antagonistic criteria should be optimized, such as throughput and latency (or a combination). Typica…

Authors: Anne Benoit (INRIA Rhône-Alpes, LIP), Harald Kosch, Veronika Rehn-Sonigo, Yves Robert

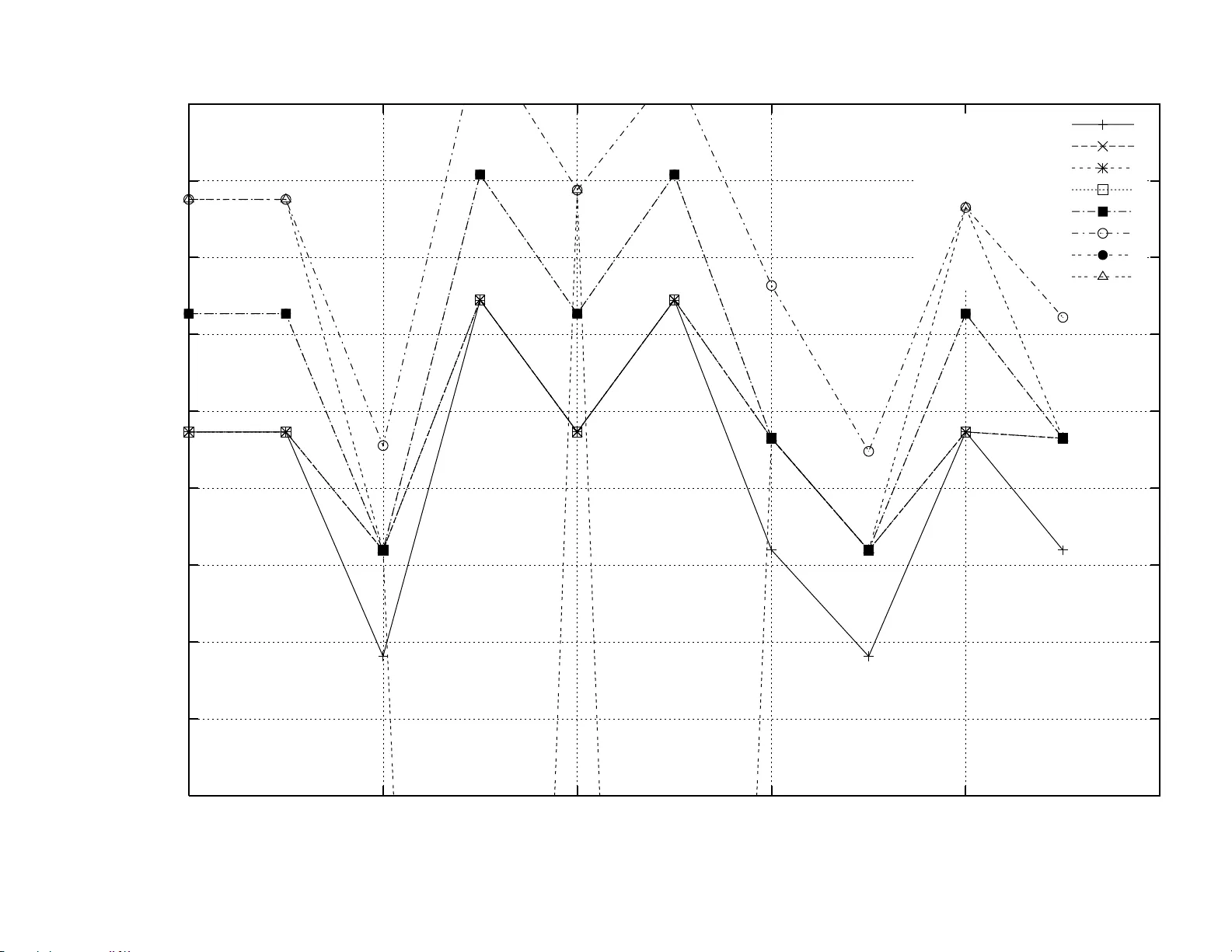

INRIA research report (Rapport de recherche) n° 6410, January 2008, 13 pages. ISSN 0249-6399, ISRN INRIA/RR--6410--FR+ENG. Thème NUM (Systèmes numériques), Projet GRAAL. Institut National de Recherche en Informatique et en Automatique, Unité de recherche INRIA Rhône-Alpes, 655 avenue de l'Europe, 38334 Montbonnot Saint Ismier (France). Telephone: +33 4 76 61 52 00, fax: +33 4 76 61 52 52.

Abstract: Mapping workflow applications onto parallel platforms is a challenging problem, even for simple application patterns such as pipeline graphs. Several antagonistic criteria should be optimized, such as throughput and latency (or a combination). Typical applications include digital image processing, where images are processed in steady-state mode. In this paper, we study the mapping of a particular image processing application, the JPEG encoding. Mapping pipelined JPEG encoding onto parallel platforms is useful, for instance, for encoding Motion JPEG images. As the bi-criteria mapping problem is NP-complete, we concentrate on the evaluation and performance of polynomial heuristics.

Key-words: pipeline, workflow application, multi-criteria optimization, JPEG encoding

This text is also available as a research report of the Laboratoire de l'Informatique du Parallélisme, http://www.ens-lyon.fr/LIP.

French title: Mapping bi-critère de pipelines pour le traitement d'image en parallèle (Bi-criteria mapping of pipelines for parallel image processing)

Résumé (translated from the French): Scheduling and allocating workflows on parallel platforms is a crucial problem, even for simple applications such as pipeline graphs.
Several conflicting criteria must be optimized, such as throughput and latency (or a combination of the two). Typical applications include digital image processing, where images are processed in steady-state mode. In this report, we study the scheduling and allocation of a particular image processing application, JPEG encoding. Allocating pipelined JPEG encoding onto parallel platforms is useful, for example, for encoding Motion JPEG images. As the bi-criteria allocation problem is NP-complete, we concentrate on the analysis and evaluation of polynomial heuristics.

Mots-clés (keywords): pipeline, workflow application, multi-criteria optimization, JPEG encoding

1 Introduction

This work considers the problem of mapping workflow applications onto parallel platforms. This is a challenging problem, even for simple application patterns. For homogeneous architectures, several scheduling and load-balancing techniques have been developed, but the extension to heterogeneous clusters makes the problem more difficult.

Structured programming approaches rule out many of the problems with which the low-level parallel application developer is usually confronted, such as deadlocks or process starvation. We therefore focus on pipeline applications, as they can easily be expressed as algorithmic skeletons. More precisely, in this paper, we study the mapping of a particular pipeline application: we focus on the JPEG encoder (baseline process, basic mode). This image processing application transforms numerical pictures from any format into a standardized format called JPEG. This standard was developed almost 20 years ago to create a portable format for the compression of still images, and new versions are still being created (see http://www.jpeg.org/).
JPEG (and later JPEG 2000) is used for encoding still images in Motion-JPEG (later MJ2). These standards are commonly employed in IP cameras and are part of many video applications in the world of game consoles. Motion-JPEG (M-JPEG) has been adopted and further developed into several other formats, e.g., AMV (alternatively known as MTV), which is a proprietary video file format designed to be consumed on low-resource devices. The manner of encoding in M-JPEG and subsequent formats leads to a flow of still-image coding, hence pipeline mapping is appropriate.

We consider the different steps of the encoder as a linear pipeline of stages, where each stage gets some input, has to perform several computations, and transfers the output to the next stage. The corresponding mapping problem can be stated informally as follows: which stage should be assigned to which processor? We require the mapping to be interval-based, i.e., a processor is assigned an interval of consecutive stages. Two key optimization parameters emerge. On the one hand, we target a high throughput, or short period, in order to be able to handle as many images as possible per time unit. On the other hand, we aim at a short response time, or latency, for the processing of each image. These two criteria are antagonistic: intuitively, we obtain a high throughput with many processors to share the work, while we get a small latency by mapping many stages to the same processor in order to avoid the cost of inter-stage communications.

The rest of the paper is organized as follows: Section 2 briefly describes JPEG coding principles. In Section 3 the theoretical and applicative framework is introduced, and Section 4 is dedicated to the linear programming formulation of the bi-criteria mapping. In Section 5 we describe some polynomial heuristics, which we use for our experiments of Section 6. We discuss related work in Section 7.
Finally, we give some concluding remarks in Section 8.

2 Principles of JPEG encoding

Here we briefly present the mode of operation of a JPEG encoder (see [13] for further details). The encoder consists of seven pipeline stages, as shown in Figure 1. In the first stage, the image is scaled so that its dimensions are multiples of the 8x8 pixel matrix; the standard even requires multiples of 16x16. In the next stage a color space conversion is performed: the colors of the picture are transformed from the RGB to the YUV color model. The sub-sampling stage is an optional stage which, depending on the sampling rate, reduces the data volume: as the human eye resolves luminosity more easily than color, the chrominance components are sampled more rarely than the luminance components. Admittedly, this leads to a loss of data. The last preparation step consists in the creation and storage of so-called MCUs (Minimum Coded Units), which correspond to 8x8 pixel blocks in the picture. The next stage is the core of the encoder. It performs a Fast Discrete Cosine Transformation (FDCT) (e.g., [14]) on the 8x8 pixel blocks, which are interpreted as a discrete signal of 64 values. After the transformation, every point in the matrix is represented as a linear combination of the 64 points. The quantizer reduces the image information to the important parts. Depending on the quantization factor and quantization matrix, irrelevant frequencies are reduced. Quantization errors can thereby occur, which are noticeable as quantization noise or block formation in the encoded image.

Figure 1: Steps of the JPEG encoding (Source Image Data, Scaling, YUV Conversion, Subsampling, Block Storage, FDCT, Quantizer with Quantization Table, Entropy Encoder with Huffman Table, Compressed Image Data).
The last stage is the entropy encoder, which performs a modified Huffman coding: it combines the variable-length codes of Huffman coding with the coding of repetitive data in run-length encoding.

3 Framework

3.1 Applicative framework

From the theoretical point of view, we consider a pipeline of $n$ stages $S_k$, $1 \le k \le n$. Tasks are fed into the pipeline and processed from stage to stage, until they exit the pipeline after the last stage. The $k$-th stage $S_k$ first receives an input from the previous stage, of size $\delta_{k-1}$, then performs a number of $w_k$ computations, and finally outputs data of size $\delta_k$ to the next stage. These three operations are performed sequentially. The first stage $S_1$ receives an input of size $\delta_0$ from the outside world, while the last stage $S_n$ returns the result, of size $\delta_n$, to the outside world; thus these particular stages behave in the same way as the others.

From the practical point of view, we consider the applicative pipeline of the JPEG encoder as presented in Figure 1 and its seven stages.

3.2 Target platform

We target a platform with $p$ processors $P_u$, $1 \le u \le p$, fully interconnected as a (virtual) clique. There is a bidirectional link $\mathrm{link}_{u,v}: P_u \to P_v$ between any processor pair $P_u$ and $P_v$, of bandwidth $b_{u,v}$. The speed of processor $P_u$ is denoted as $s_u$, and it takes $X/s_u$ time-units for $P_u$ to execute $X$ floating point operations. We also enforce a linear cost model for communications, hence it takes $X/b_{u,v}$ time-units for $P_u$ to send (resp. receive) a message of size $X$ to (resp. from) $P_v$. Communication contention is taken care of by enforcing the one-port model [3].

3.3 Bi-criteria interval mapping problem

We seek to map intervals of consecutive stages onto processors [12].
Intuitively, assigning several consecutive tasks to the same processor will increase their computational load, but may well dramatically decrease communication requirements. We search for a partition of $[1..n]$ into $m \le p$ intervals $I_j = [d_j, e_j]$ such that $d_j \le e_j$ for $1 \le j \le m$, $d_1 = 1$, $d_{j+1} = e_j + 1$ for $1 \le j \le m-1$, and $e_m = n$.

The optimization problem is to determine the best mapping, over all possible partitions into intervals, and over all processor assignments. The objective can be to minimize either the period, or the latency, or a combination: given a threshold period, what is the minimum latency that can be achieved? And the counterpart: given a threshold latency, what is the minimum period that can be achieved?

The decision problem associated with this bi-criteria interval mapping optimization problem is NP-hard, since the period minimization problem is NP-hard for interval-based mappings (see [2]).

4 Linear program formulation

We present here an integer linear program to compute the optimal interval-based bi-criteria mapping on Fully Heterogeneous platforms, respecting either a fixed latency or a fixed period. We assume $n$ stages and $p$ processors, plus two fictitious extra stages $S_0$ and $S_{n+1}$ respectively assigned to $P_{\mathrm{in}}$ and $P_{\mathrm{out}}$. First we need to define a few variables:

For $k \in [0..n+1]$ and $u \in [1..p] \cup \{\mathrm{in}, \mathrm{out}\}$, $x_{k,u}$ is a boolean variable equal to 1 if stage $S_k$ is assigned to processor $P_u$; we let $x_{0,\mathrm{in}} = x_{n+1,\mathrm{out}} = 1$, and $x_{k,\mathrm{in}} = x_{k,\mathrm{out}} = 0$ for $1 \le k \le n$.

For $k \in [0..n]$, $u, v \in [1..p] \cup \{\mathrm{in}, \mathrm{out}\}$ with $u \ne v$, $z_{k,u,v}$ is a boolean variable equal to 1 if stage $S_k$ is assigned to $P_u$ and stage $S_{k+1}$ is assigned to $P_v$: hence $\mathrm{link}_{u,v}: P_u \to P_v$ is used for the communication between these two stages. If $k \ne 0$ then $z_{k,\mathrm{in},v} = 0$ for all $v \ne \mathrm{in}$, and if $k \ne n$ then $z_{k,u,\mathrm{out}} = 0$ for all $u \ne \mathrm{out}$.

For $k \in [0..n]$ and $u \in [1..p] \cup \{\mathrm{in}, \mathrm{out}\}$, $y_{k,u}$ is a boolean variable equal to 1 if stages $S_k$ and $S_{k+1}$ are both assigned to $P_u$; we let $y_{k,\mathrm{in}} = y_{k,\mathrm{out}} = 0$ for all $k$, and $y_{0,u} = y_{n,u} = 0$ for all $u$.

For $u \in [1..p]$, $\mathrm{first}(u)$ is an integer variable which denotes the first stage assigned to $P_u$; similarly, $\mathrm{last}(u)$ denotes the last stage assigned to $P_u$. Thus $P_u$ is assigned the interval $[\mathrm{first}(u), \mathrm{last}(u)]$. Of course $1 \le \mathrm{first}(u) \le \mathrm{last}(u) \le n$.

$T_{\mathrm{opt}}$ is the variable to optimize, so depending on the objective function it corresponds either to the period or to the latency.

We list below the constraints that need to be enforced. For simplicity, we write $\sum_u$ instead of $\sum_{u \in [1..p] \cup \{\mathrm{in}, \mathrm{out}\}}$ when summing over all processors. First there are constraints for processor and link usage:

Every stage is assigned a processor:
$$\forall k \in [0..n+1], \quad \sum_u x_{k,u} = 1.$$

Every communication either is assigned a link or collapses because both stages are assigned to the same processor:
$$\forall k \in [0..n], \quad \sum_{u \ne v} z_{k,u,v} + \sum_u y_{k,u} = 1.$$

If stage $S_k$ is assigned to $P_u$ and stage $S_{k+1}$ to $P_v$, then $\mathrm{link}_{u,v}: P_u \to P_v$ is used for this communication:
$$\forall k \in [0..n],\ \forall u, v \in [1..p] \cup \{\mathrm{in}, \mathrm{out}\},\ u \ne v, \quad x_{k,u} + x_{k+1,v} \le 1 + z_{k,u,v}.$$

If both stages $S_k$ and $S_{k+1}$ are assigned to $P_u$, then $y_{k,u} = 1$:
$$\forall k \in [0..n],\ \forall u \in [1..p] \cup \{\mathrm{in}, \mathrm{out}\}, \quad x_{k,u} + x_{k+1,u} \le 1 + y_{k,u}.$$

If stage $S_k$ is assigned to $P_u$, then necessarily $\mathrm{first}(u) \le k \le \mathrm{last}(u)$. We write this constraint as:
$$\forall k \in [1..n],\ \forall u \in [1..p], \quad \mathrm{first}(u) \le k \cdot x_{k,u} + n \cdot (1 - x_{k,u}),$$
$$\forall k \in [1..n],\ \forall u \in [1..p], \quad \mathrm{last}(u) \ge k \cdot x_{k,u}.$$

If stage $S_k$ is assigned to $P_u$ and stage $S_{k+1}$ is assigned to $P_v \ne P_u$ (i.e., $z_{k,u,v} = 1$), then necessarily $\mathrm{last}(u) \le k$ and $\mathrm{first}(v) \ge k + 1$ since we consider intervals.
We write this constraint as:
$$\forall k \in [1..n-1],\ \forall u, v \in [1..p],\ u \ne v, \quad \mathrm{last}(u) \le k \cdot z_{k,u,v} + n \cdot (1 - z_{k,u,v}),$$
$$\forall k \in [1..n-1],\ \forall u, v \in [1..p],\ u \ne v, \quad \mathrm{first}(v) \ge (k+1) \cdot z_{k,u,v}.$$

The latency of the schedule is bounded by $T_{\mathrm{latency}}$:
$$\sum_{u=1}^{p} \sum_{k=1}^{n} \left[ \left( \sum_{t \ne u} \frac{\delta_{k-1}}{b_{t,u}}\, z_{k-1,t,u} \right) + \frac{w_k}{s_u}\, x_{k,u} \right] + \sum_{u \in [1..p] \cup \{\mathrm{in}\}} \frac{\delta_n}{b_{u,\mathrm{out}}}\, z_{n,u,\mathrm{out}} \le T_{\mathrm{latency}},$$
where $t \in [1..p] \cup \{\mathrm{in}, \mathrm{out}\}$.

It remains to express the period of each processor and to constrain it by $T_{\mathrm{period}}$:
$$\forall u \in [1..p], \quad \sum_{k=1}^{n} \left[ \left( \sum_{t \ne u} \frac{\delta_{k-1}}{b_{t,u}}\, z_{k-1,t,u} \right) + \frac{w_k}{s_u}\, x_{k,u} + \left( \sum_{v \ne u} \frac{\delta_k}{b_{u,v}}\, z_{k,u,v} \right) \right] \le T_{\mathrm{period}}.$$

Finally, the objective function is either to minimize the period $T_{\mathrm{period}}$ respecting the fixed latency $T_{\mathrm{latency}}$, or to minimize the latency $T_{\mathrm{latency}}$ with a fixed period $T_{\mathrm{period}}$. So in the first case we fix $T_{\mathrm{latency}}$ and set $T_{\mathrm{opt}} = T_{\mathrm{period}}$. In the second case $T_{\mathrm{period}}$ is fixed a priori and $T_{\mathrm{opt}} = T_{\mathrm{latency}}$. With this mechanism the objective function reduces to minimizing $T_{\mathrm{opt}}$ in both cases.

5 Overview of the heuristics

The problem of bi-criteria interval mapping of workflow applications is NP-hard [2], so in this section we briefly describe polynomial heuristics to solve it. See [2] for a more complete description or refer to the Web at: http://graal.ens-lyon.fr/~vsonigo/code/multicriteria/

In the following, we denote by $n$ the number of stages, and by $p$ the number of processors. We distinguish two sets of heuristics. The heuristics of the first set aim to minimize the latency respecting an a priori fixed period. The heuristics of the second set address the counterpart: the latency is fixed a priori and we try to achieve a minimum period while respecting the latency constraint.
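
Both sets of heuristics repeatedly need the period and latency of a candidate interval mapping. As a minimal sketch (not the authors' implementation), the cost expressions of Section 4 can be evaluated directly once the intervals and their processors are known. The function name `evaluate_mapping`, the dictionary-based platform description and the toy numbers are assumptions made for this illustration only.

```python
def evaluate_mapping(w, delta, speed, bandwidth, intervals):
    """Return (period, latency) of an interval mapping.

    w[k-1]            : computation cost w_k of stage S_k, for k = 1..n
    delta[k]          : data size delta_k, for k = 0..n
    speed[u]          : speed s_u of processor u
    bandwidth[(u, v)] : bandwidth b_{u,v}; "in" and "out" stand for the outside world
    intervals         : list of (d, e, u): stages d..e (1-indexed, inclusive) run on u
    """
    procs = ["in"] + [u for _, _, u in intervals] + ["out"]
    period, latency = 0.0, 0.0
    for j, (d, e, u) in enumerate(intervals, start=1):
        t_in = delta[d - 1] / bandwidth[(procs[j - 1], u)]   # receive the input
        t_comp = sum(w[d - 1:e]) / speed[u]                  # compute stages d..e
        t_out = delta[e] / bandwidth[(u, procs[j + 1])]      # send the output
        period = max(period, t_in + t_comp + t_out)          # per-processor cycle time
        latency += t_in + t_comp                             # each message counted once
    # the final result delta_n is sent once, by the last processor
    latency += delta[-1] / bandwidth[(intervals[-1][2], "out")]
    return period, latency


# Hypothetical toy instance: 3 stages, 2 processors, unit bandwidth everywhere.
w = [2.0, 4.0, 6.0]
delta = [1.0, 1.0, 1.0, 1.0]
speed = {1: 2.0, 2: 1.0}
nodes = ["in", 1, 2, "out"]
bandwidth = {(a, b): 1.0 for a in nodes for b in nodes if a != b}

single = evaluate_mapping(w, delta, speed, bandwidth, [(1, 3, 1)])
split = evaluate_mapping(w, delta, speed, bandwidth, [(1, 1, 2), (2, 3, 1)])
print(single, split)
```

On this toy instance, splitting the pipeline lowers the period but raises the latency, which is the antagonism between the two criteria that the heuristics have to arbitrate.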

5.1 Minimizing Latency for a Fixed Period

All the following heuristics sort processors by non-increasing speed, and start by assigning all the stages to the first (fastest) processor in the list. This processor becomes used.

H1-Sp-mono-P: Splitting mono-criterion. At each step, we select the used processor $j$ with the largest period and we try to split its stage interval, giving some stages to the next fastest processor $j'$ in the list (not yet used). This can be done by splitting the interval at any place, and either placing the first part of the interval on $j$ and the remainder on $j'$, or the other way round. The solution which minimizes $\max(\mathrm{period}(j), \mathrm{period}(j'))$ is chosen if it is better than the original solution. Splitting is performed as long as we have not reached the fixed period or until we cannot improve the period anymore.

H2-Sp-bi-P: Splitting bi-criteria. This heuristic uses a binary search over the latency. For this purpose, at each iteration we fix an authorized increase of the optimal latency (which is obtained by mapping all stages on the fastest processor), and we test whether we get a feasible solution via splitting. The splitting mechanism itself is quite similar to H1-Sp-mono-P, except that we choose the solution that minimizes $\max_{i \in \{j, j'\}} \left( \frac{\Delta \mathrm{latency}}{\Delta \mathrm{period}(i)} \right)$ within the authorized latency increase to decide where to split. While we get a feasible solution, we reduce the authorized latency increase for the next iteration of the binary search, thereby aiming at minimizing the global latency of the mapping.

H3-3-Sp-mono-P: 3-splitting mono-criterion. At each step we select the used processor $j$ with the largest period and we split its interval into three parts.
For this purpose we try to map two parts of the interval on the next pair of fastest processors in the list, $j'$ and $j''$, and to keep the third part on processor $j$. Testing all possible permutations and all possible positions where to cut, we choose the solution that minimizes $\max(\mathrm{period}(j), \mathrm{period}(j'), \mathrm{period}(j''))$.

H4-3-Sp-bi-P: 3-splitting bi-criteria. In this heuristic the choice of where to split is more elaborate: it depends not only on the period improvement, but also on the latency increase. Using the same splitting mechanism as in H3-3-Sp-mono-P, we select the solution that minimizes $\max_{i \in \{j, j', j''\}} \left( \frac{\Delta \mathrm{latency}}{\Delta \mathrm{period}(i)} \right)$. Here $\Delta \mathrm{latency}$ denotes the difference between the global latency of the solution before the split and after the split. In the same manner, $\Delta \mathrm{period}(i)$ denotes the difference between the period before the split (achieved by processor $j$) and the new period of processor $i$.

5.2 Minimizing Period for a Fixed Latency

As in the heuristics described above, first of all we sort processors according to their speed and map all stages on the fastest processor.

H5-Sp-mono-L: Splitting mono-criterion. This heuristic uses the same method as H1-Sp-mono-P with a different break condition. Here splitting is performed as long as we do not exceed the fixed latency, still choosing the solution that minimizes $\max(\mathrm{period}(j), \mathrm{period}(j'))$.

Figure 2: LP solutions strongly depend on fixed initial parameters. (a) Fixed period: $P_{fix} = 310$ gives $L_{opt} = 337.575$; $P_{fix} = 320$ gives $L_{opt} = 336.729$; $P_{fix} = 330$ gives $L_{opt} = 322.700$. (b) Fixed latency: $L_{fix} = 370$ gives $P_{opt} = 307.319$; $L_{fix} = 340$ gives $P_{opt} = 307.319$; $L_{fix} = 330$ gives $P_{opt} = 322.700$.
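
The splitting step shared by H1-Sp-mono-P and H5-Sp-mono-L can be sketched as follows. This is only an illustration of the description above, not the code distributed by the authors: it assumes a single bandwidth `b` on every link (so that a split does not change the communication times of neighbouring intervals), and the names `interval_period`, `split_step` and `h1_sp_mono_p` as well as the toy instance are hypothetical.

```python
def interval_period(w, delta, d, e, s, b):
    """Period of stages d..e (1-indexed, inclusive) on a processor of speed s."""
    return delta[d - 1] / b + sum(w[d - 1:e]) / s + delta[e] / b


def split_step(w, delta, speed, b, mapping, unused):
    """Try one split; return the (possibly unchanged) mapping and unused list."""
    if not unused:
        return mapping, unused
    periods = [interval_period(w, delta, d, e, speed[u], b) for d, e, u in mapping]
    idx = max(range(len(mapping)), key=periods.__getitem__)  # largest period
    d, e, j = mapping[idx]
    j2 = unused[0]  # next fastest processor not yet used
    best, best_val = None, periods[idx]
    for c in range(d, e):  # cut between stage c and stage c + 1
        for left, right in ((j, j2), (j2, j)):  # both placements of the two parts
            val = max(interval_period(w, delta, d, c, speed[left], b),
                      interval_period(w, delta, c + 1, e, speed[right], b))
            if val < best_val:
                best, best_val = [(d, c, left), (c + 1, e, right)], val
    if best is None:  # no split improves on the original solution
        return mapping, unused
    return mapping[:idx] + best + mapping[idx + 1:], unused[1:]


def h1_sp_mono_p(w, delta, speed, b, fixed_period):
    """Split until the fixed period is reached or no split improves the period."""
    order = sorted(speed, key=speed.get, reverse=True)  # non-increasing speed
    mapping, unused = [(1, len(w), order[0])], order[1:]
    while True:
        period = max(interval_period(w, delta, d, e, speed[u], b)
                     for d, e, u in mapping)
        if period <= fixed_period:
            return mapping  # fixed period reached
        new_mapping, unused = split_step(w, delta, speed, b, mapping, unused)
        if new_mapping == mapping:
            return mapping  # period cannot be improved any further
        mapping = new_mapping


# Hypothetical toy instance: 3 stages, 2 processors, unit bandwidth.
w = [2.0, 4.0, 6.0]
delta = [1.0, 1.0, 1.0, 1.0]
speed = {"P1": 2.0, "P2": 1.0}
result = h1_sp_mono_p(w, delta, speed, 1.0, 7.5)
print(result)
```

Replacing the stopping test by a check on the latency of the mapping would give the break condition of H5-Sp-mono-L; note that, as in the description, the sketch may return a mapping that still violates the fixed period when no further split helps.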

H6-Sp-bi-L: Splitting bi-criteria. This variant of the splitting heuristic works similarly to H5-Sp-mono-L, but at each step it chooses the solution which minimizes $\max_{i \in \{j, j'\}} \left( \frac{\Delta \mathrm{latency}}{\Delta \mathrm{period}(i)} \right)$ while the fixed latency is not exceeded.

Remark. In the context of M-JPEG coding, minimizing the latency for a fixed period corresponds to a fixed coding rate, and we want to minimize the response time. The counterpart (minimizing the period respecting a fixed latency $L$) corresponds to the question: if I accept to wait $L$ time units for a given image, which coding rate can I achieve? We evaluate the behavior of the heuristics with respect to these questions in Section 6.2.

6 Experiments and simulations

In the following experiments, we study the mapping of the JPEG application onto clusters of workstations.

6.1 Influence of fixed parameters

In this first test series, we examine the influence of fixed parameters on the solution of the linear program. As shown in Figure 2, the division into intervals is highly dependent on the chosen fixed value. The optimal solution to minimize the latency (without any supplemental constraints) obviously consists in mapping the whole application pipeline onto the fastest processor. As expected, if the period fixed in the linear program is not smaller than the latter optimal mono-criterion latency, this solution is chosen. Decreasing the value for the fixed period forces the stages to be split among several processors, until no more solution can be found. Figure 2(a) shows the division into intervals for a fixed period. A fixed period of $T_{\mathrm{period}} = 330$ is sufficiently high for the whole pipeline to be mapped onto the fastest processor, whereas smaller periods lead to splitting into intervals. Note that for a period fixed to 300, no solution exists anymore.
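
The effect of the fixed period can be reproduced on a toy instance. The sketch below is not the integer linear program of Section 4: as a stand-in, it enumerates every interval mapping exhaustively, which is only practical for very small instances. It also assumes a single bandwidth `b` on all links; the names `evaluate` and `min_latency` and the numbers are hypothetical.

```python
from itertools import combinations, permutations


def evaluate(w, delta, speed, b, cuts, procs):
    """(period, latency) of a mapping.

    cuts  : last stage of each interval, in increasing order (the final one is n)
    procs : one distinct processor per interval
    """
    period, latency, d = 0.0, 0.0, 1
    for e, u in zip(cuts, procs):
        t_in = delta[d - 1] / b
        t_comp = sum(w[d - 1:e]) / speed[u]
        t_out = delta[e] / b
        period = max(period, t_in + t_comp + t_out)
        latency += t_in + t_comp
        d = e + 1
    return period, latency + delta[-1] / b  # final output sent once


def min_latency(w, delta, speed, b, fixed_period):
    """Smallest latency over all interval mappings whose period fits, or None."""
    n, best = len(w), None
    for m in range(1, min(n, len(speed)) + 1):          # number of intervals
        for inner in combinations(range(1, n), m - 1):   # positions of the cuts
            cuts = list(inner) + [n]
            for procs in permutations(speed, m):         # processor assignment
                period, latency = evaluate(w, delta, speed, b, cuts, procs)
                if period <= fixed_period and (best is None or latency < best):
                    best = latency
    return best


# Hypothetical toy instance: 3 stages, 2 processors, unit bandwidth.
w = [2.0, 4.0, 6.0]
delta = [1.0, 1.0, 1.0, 1.0]
speed = {"P1": 2.0, "P2": 1.0}
sweep = [min_latency(w, delta, speed, 1.0, P) for P in (6.0, 7.0, 7.5, 8.0, 9.0)]
print(sweep)
```

On this instance a loose period keeps all stages on the fastest processor, a tighter one forces a split at the price of a higher latency, and below some value no mapping is feasible (reported as `None`), which mirrors the qualitative behavior described for Figure 2(a).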
The counterpart, a fixed latency, can be found in Figure 2(b). Note that the first two solutions find the same period, but for a different latency. The first solution has a high value for latency, which allows more splits, hence larger communication costs. Comparing the last lines of Figures 2(a) and (b), we observe that both solutions are the same, and we have $T_{\mathrm{period}} = T_{\mathrm{latency}}$. Finally, expanding the range of the fixed values, a sort of bucket behavior becomes apparent: increasing the fixed parameter at first has no influence, and the LP still finds the same solution until the increase crosses an unknown bound and the LP can find a better solution. This phenomenon is shown in Figure 3.

Figure 3: Bucket behavior of LP solutions. (a) Fixed P: optimal latency as a function of the fixed period P_fixed. (b) Fixed L: optimal period as a function of the fixed latency L_fixed.

6.2 Assessing heuristic performance

The comparison of the solution returned by the LP program, in terms of optimal latency respecting a fixed period (or the converse), with the heuristics is shown in Figure 4. The implementation is fed with the parameters of the JPEG encoding pipeline and computes the mapping on 10 randomly created platforms with 10 processors. On platforms 3 and 5, no valid solution can be found for the fixed period. There are two important points to mention. First, the solutions found by H2 often are not valid, since they do not respect the fixed period, but they have the best ratio latency/period. Figure 5(b) plots some more details: H2 achieves good latency results, but the fixed period of $P = 310$ is often violated. This is a consequence of the fact that the fixed period value is very close to the feasible period.
When the tolerance for the period is bigger, this heuristic succeeds in finding low-latency solutions. Second, all solutions, LP and heuristics, always keep the stages 4 to 7 together (see Figure 2 for an example). As stage 5 (DCT) is the most costly in terms of computation, the interval containing these stages is responsible for the period of the whole application.

Finally, in the comparative study H5 always finds the optimal period for a fixed latency, and we therefore recommend this heuristic for period optimization. In the case of latency minimization for a fixed period, H1 is the heuristic to use, as it always finds the LP solution in the experiments. This is a striking result, especially given the fact that the integer linear program may require a long time to compute the solution (up to 11389 seconds in our experiments), while the heuristics always complete in less than a second and find the corresponding optimal solution.

Figure 4: Behavior of the heuristics (compared to the LP solution). (a) Fixed P = 310: heuristic solution on each random platform for LP fixed P, H1, H2, H3 and H4. (b) Fixed L = 370: heuristic solution on each random platform for LP fixed L, H5 and H6.

6.3 MPI simulations on a cluster

This last experiment performs a JPEG encoding simulation. All simulations are made on a cluster of homogeneous Optiplex GX 745 machines with an Intel Core 2 Duo 6300 at 1.83 GHz. Heterogeneity is enforced by increasing and decreasing the number of operations a processor has to execute. The same holds for bandwidth capacities. We call this experiment a simulation, as we do not parallelize a real JPEG encoder, but use a parallel pipeline application which has the same parameters for communication and computation as the JPEG encoder. An MPICH implementation of MPI is used for communications.

In this experiment the same random platforms with 10 processors and fixed parameters as in the theoretical experiments are used. We measured the latency of the simulation, even for the heuristics with a fixed latency, and computed the average over all random platforms. Figure 5(a) compares the average of the theoretical results of the heuristics to the average simulated performance. The simulated behavior nicely mirrors the theoretical behavior, with the exception of H2 (see Figure 5(b)). Here once again, some solutions of this heuristic are not valid, as they do not respect the fixed period.

Figure 5: MPI simulation results. (a) Simulative latency: theoretical and simulated latency for each heuristic. (b) H2 versus LP: latency as a function of the period.

7 Related work

The blockwise independent processing of the JPEG encoder allows simple data parallelism to be applied for efficient parallelization. Many papers have addressed this fine-grain parallelization opportunity [5, 11]. In addition, parallelization of almost all stages, from color space conversion, over DCT, to the Huffman encoding has been addressed [1, 7]. Recently, with respect to the JPEG2000 codec, efficient parallelization of wavelet coding has been introduced [8]. All these works target the best speed-up with respect to different architectures and possibly varying load situations. Optimizing the period and the latency is an important issue when encoding a pipeline of multiple images, as for instance for Motion JPEG (M-JPEG). To address these issues, one has to solve, in addition to the work mentioned above, a bi-criteria optimization problem, i.e., optimize the latency as well as the period.
The application of coarse-grain parallelism seems to be a promising solution. We propose to use an interval-based mapping strategy allowing multiple stages to be mapped to one processor, which allows the domain constraints to be met most flexibly (even for very large pictures). Several pipelined versions of the JPEG encoding have been considered. They rely mainly on pixel or block-wise parallelization [6, 9]. For instance, Ferretti et al. [6] use three pipelines to carry out concurrently the encoding on independent pixels extracted from the serial stream of incoming data. The pixel and block-based approach is however useful for small pictures only. Recently, Shee et al. [10] considered a pipeline architecture where each stage represents a step in the JPEG encoding. The targeted architecture consists of Xtensa LX processors which run subprograms of the JPEG encoder program. Each program accepts data via the queues of the processor, performs the necessary computation, and finally pushes it to the output queue into the next stage of the pipeline. The basic assumptions are similar to our work; however, no optimization problem is considered and only runtime (latency) measurements are available. The schedule is static and set according to basic assumptions about the image processing, e.g., that the DCT is the most complex operation in runtime.

8 Conclusion

In this paper, we have studied the bi-criteria (minimizing latency and period) mapping of pipeline workflow applications, from both a theoretical and practical point of view. On the theoretical side, we have presented an integer linear programming formulation for this NP-hard problem. On the practical side, we have studied in depth the interval mapping of the JPEG encoding pipeline on a cluster of workstations.
Owing to the LP solution, we were able to characterize a bucket behavior in the optimal solution, depending on the initial parameters. Furthermore, we have compared the behavior of some polynomial heuristics to the LP solution and we were able to recommend two heuristics with almost optimal behavior for parallel JPEG encoding. Finally, we evaluated the heuristics by running a parallel pipeline application with the same parameters as a JPEG encoder. The heuristics were designed for general pipeline applications, and some of them were aimed at applications with a large number of stages (3-splitting), thus a priori not very efficient on the JPEG encoder. Still, some of these heuristics reach the optimal solution in our experiments, which is a striking result.

A natural extension of this work would be to consider further image processing applications with more pipeline stages or a slightly more complicated pipeline architecture. Naturally, our work extends to JPEG 2000 encoding, which offers among others wavelet coding and more complex multiple-component image encoding [4]. Another extension is for the MPEG coding family, which uses lagged feedback: the coding of some types of frames depends on other frames. Differentiating the types of coding algorithms, a pipeline architecture seems again to be a promising solution architecture.

References

[1] L. V. Agostini, I. S. Silva, and S. Bampi. Parallel color space converters for JPEG image compression. Microelectronics Reliability, 44(4):697–703, 2004.

[2] A. Benoit, V. Rehn-Sonigo, and Y. Robert. Multi-criteria Scheduling of Pipeline Workflows. In HeteroPar'07, Algorithms, Models and Tools for Parallel Computing on Heterogeneous Networks (in conjunction with Cluster 2007). IEEE Computer Society Press, 2007.

[3] P. Bhat, C. Raghavendra, and V. Prasanna. Efficient collective communication in distributed heterogeneous systems. Journal of Parallel and Distributed Computing, 63:251–263, 2003.

[4] C. Christopoulos, A. Skodras, and T. Ebrahimi. The JPEG2000 still image coding system: an overview. IEEE Transactions on Consumer Electronics, 46(4):1103–1127, 2000.

[5] J. Falkemeier and G. Joubert. Parallel image compression with JPEG for multimedia applications. In High Performance Computing: Technologies, Methods and Applications, Advances in Parallel Computing, pages 379–394. North Holland, 1995.

[6] M. Ferretti and M. Boffadossi. A Parallel Pipelined Implementation of LOCO-I for JPEG-LS. In 17th International Conference on Pattern Recognition (ICPR'04), volume 1, pages 769–772, 2004.

[7] T. Kumaki, M. Ishizaki, T. Koide, H. J. Mattausch, Y. Kuroda, H. Noda, K. Dosaka, K. Arimoto, and K. Saito. Acceleration of DCT Processing with Massive-Parallel Memory-Embedded SIMD Matrix Processor. IEICE Transactions on Information and Systems - LETTER - Image Processing and Video Processing, E90-D(8):1312–1315, 2007.

[8] P. Meerwald, R. Norcen, and A. Uhl. Parallel JPEG2000 Image Coding on Multiprocessors. In IPDPS'02, International Parallel and Distributed Processing Symposium. IEEE Computer Society Press, 2002.

[9] M. Papadonikolakis, V. Pantazis, and A. P. Kakarountas. Efficient high-performance ASIC implementation of JPEG-LS encoder. In Proceedings of the Conference on Design, Automation and Test in Europe (DATE 2007). IEEE Communications Society Press, 2007.

[10] S. L. Shee, A. Erdos, and S. Parameswaran. Architectural Exploration of Heterogeneous Multiprocessor Systems for JPEG. International Journal of Parallel Programming, 35, 2007.

[11] K. Shen, G. Cook, L. Jamieson, and E. Delp. An overview of parallel processing approaches to image and video compression. In Image and Video Compression, volume Proc. SPIE 2186, pages 197–208, 1994.

[12] J. Subhlok and G. Vondran. Optimal latency-throughput tradeoffs for data parallel pipelines. In ACM Symposium on Parallel Algorithms and Architectures SPAA'96, pages 62–71. ACM Press, 1996.

[13] G. K. Wallace. The JPEG still picture compression standard. Commun. ACM, 34(4):30–44, 1991.

[14] C. Wen-Hsiung, C. Smith, and S. Fralick. A Fast Computational Algorithm for the Discrete Cosine Transform. IEEE Transactions on Communications, 25(9):1004–1009, 1977.
1 Introduction

This work considers the problem of mapping workflow applications onto parallel platforms. This is a challenging problem, even for simple application patterns. For homogeneous architectures, several scheduling and load-balancing techniques have been developed, but the extension to heterogeneous clusters makes the problem more difficult.

Structured programming approaches rule out many of the problems with which the low-level parallel application developer is usually confronted, such as deadlocks or process starvation. We therefore focus on pipeline applications, as they can easily be expressed as algorithmic skeletons. More precisely, in this paper, we study the mapping of a particular pipeline application: we focus on the JPEG encoder (baseline process, basic mode). This image processing application transforms numerical pictures from any format into a standardized format called JPEG. This standard was developed almost 20 years ago to create a portable format for the compression of still images, and new versions are still being created (see http://www.jpeg.org/). JPEG (and later JPEG 2000) is used for encoding still images in Motion-JPEG (later MJ2). These standards are commonly employed in IP-cams and are part of many video applications in the world of game consoles.
Motion-JPEG (M-JPEG) has been adopted and further developed into several other formats, e.g., AMV (alternatively known as MTV), which is a proprietary video file format designed to be consumed on low-resource devices. The manner of encoding in M-JPEG and subsequent formats leads to a flow of still image coding, hence pipeline mapping is appropriate.

We consider the different steps of the encoder as a linear pipeline of stages, where each stage gets some input, has to perform several computations and transfers the output to the next stage. The corresponding mapping problem can be stated informally as follows: which stage to assign to which processor? We require the mapping to be interval-based, i.e., a processor is assigned an interval of consecutive stages. Two key optimization parameters emerge. On the one hand, we target a high throughput, or short period, in order to be able to handle as many images as possible per time unit. On the other hand, we aim at a short response time, or latency, for the processing of each image. These two criteria are antagonistic: intuitively, we obtain a high throughput with many processors to share the work, while we get a small latency by mapping many stages to the same processor in order to avoid the cost of inter-stage communications.

The rest of the paper is organized as follows: Section 2 briefly describes JPEG coding principles. In Section 3 the theoretical and applicative framework is introduced, and Section 4 is dedicated to the linear programming formulation of the bi-criteria mapping. In Section 5 we describe some polynomial heuristics, which we use for our experiments of Section 6. We discuss related work in Section 7. Finally, we give some concluding remarks in Section 8.

2 Principles of JPEG encoding

Here we briefly present the mode of operation of a JPEG encoder (see [13] for further details).
The encoder consists of seven pipeline stages, as shown in Figure 1. In the first stage, the image is scaled so that its dimensions are a multiple of an 8x8 pixel matrix, and the standard even requires a multiple of 16x16. In the next stage a color space conversion is performed: the colors of the picture are transformed from the RGB to the YUV color model. The sub-sampling stage is an optional stage which, depending on the sampling rate, reduces the data volume: as the human eye can resolve luminosity more easily than color, the chrominance components are sampled more rarely than the luminance components. Admittedly, this leads to a loss of data. The last preparation step consists in the creation and storage of so-called MCUs (Minimum Coded Units), which correspond to 8x8 pixel blocks in the picture.

Figure 1: Steps of the JPEG encoding (Source Image Data, Scaling, YUV Conversion, Subsampling, Block Storage, FDCT, Quantizer with Quantization Table, Entropy Encoder with Huffman Table, Compressed Image Data).

The next stage is the core of the encoder. It performs a Fast Discrete Cosine Transformation (FDCT) (e.g., [14]) on the 8x8 pixel blocks, which are interpreted as a discrete signal of 64 values. After the transformation, every point in the matrix is represented as a linear combination of the 64 points. The quantizer reduces the image information to the important parts. Depending on the quantization factor and quantization matrix, irrelevant frequencies are reduced. Thereby quantization errors can occur, which are noticeable as quantization noise or block generation in the encoded image. The last stage is the entropy encoder, which performs a modified Huffman coding: it combines the variable length codes of Huffman coding with the coding of repetitive data in run-length encoding.
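To make the core stage more concrete, the following is a minimal Python sketch of the transform and quantization steps on a single 8x8 block. It is an illustration only: it uses the naive textbook DCT-II formula rather than the fast algorithm of [14], the function names `fdct_8x8` and `quantize` are hypothetical, and the uniform quantization table is an assumption chosen for readability, not a table taken from the JPEG standard.

```python
import math

N = 8  # JPEG operates on 8x8 pixel blocks


def fdct_8x8(block):
    """Naive 2-D DCT-II of an 8x8 block (list of 8 rows of 8 numbers)."""
    def c(k):
        # Normalization factor of the DCT-II basis functions
        return 1 / math.sqrt(2) if k == 0 else 1.0

    out = [[0.0] * N for _ in range(N)]
    for u in range(N):
        for v in range(N):
            s = 0.0
            for x in range(N):
                for y in range(N):
                    s += (block[x][y]
                          * math.cos((2 * x + 1) * u * math.pi / 16)
                          * math.cos((2 * y + 1) * v * math.pi / 16))
            out[u][v] = 0.25 * c(u) * c(v) * s
    return out


def quantize(coeffs, table):
    """Divide each coefficient by its quantization step and round."""
    return [[round(coeffs[u][v] / table[u][v]) for v in range(N)]
            for u in range(N)]


# Hypothetical input: a perfectly flat block and a uniform quantization table
flat = [[16] * N for _ in range(N)]
uniform_table = [[16] * N for _ in range(N)]
coeffs = fdct_8x8(flat)
quantized = quantize(coeffs, uniform_table)
```

For a constant block, all the signal ends up in the single coefficient `coeffs[0][0]`, and the 63 other coefficients quantize to zero. Long runs of zeros of this kind are the repetitive data that the run-length part of the entropy encoder is meant to compress.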
3 Framework

3.1 Applicative framework

From the theoretical point of view, we consider a pipeline of $n$ stages $S_k$, $1 \le k \le n$. Tasks are fed into the pipeline and processed from stage to stage, until they exit the pipeline after the last stage. The $k$-th stage $S_k$ first receives an input from the previous stage, of size $\delta_{k-1}$, then performs a number of $w_k$ computations, and finally outputs data of size $\delta_k$ to the next stage. These three operations are performed sequentially. The first stage $S_1$ receives an input of size $\delta_0$ from the outside world, while the last stage $S_n$ returns the result, of size $\delta_n$, to the outside world; thus these particular stages behave in the same way as the others.

From the practical point of view, we consider the applicative pipeline of the JPEG encoder as presented in Figure 1 and its seven stages.

3.2 Target platform

We target a platform with $p$ processors $P_u$, $1 \le u \le p$, fully interconnected as a (virtual) clique. There is a bidirectional link $\mathrm{link}_{u,v}: P_u \to P_v$ between any processor pair $P_u$ and $P_v$, of bandwidth $b_{u,v}$. The speed of processor $P_u$ is denoted as $s_u$, and it takes $X/s_u$ time-units for $P_u$ to execute $X$ floating point operations. We also enforce a linear cost model for communications, hence it takes $X/b$ time-units to send (resp. receive) a message of size $X$ to (resp. from) $P_v$, where $b$ is the bandwidth of the link used. Communication contention is taken care of by enforcing the one-port model [3].

3.3 Bi-criteria interval mapping problem

We seek to map intervals of consecutive stages onto processors [12]. Intuitively, assigning several consecutive tasks to the same processor will increase its computational load, but may well dramatically decrease communication requirements.
We search for a partition of $[1..n]$ into $m \le p$ intervals $I_j = [d_j, e_j]$ such that $d_j \le e_j$ for $1 \le j \le m$, $d_1 = 1$, $d_{j+1} = e_j + 1$ for $1 \le j \le m-1$ and $e_m = n$.

The optimization problem is to determine the best mapping, over all possible partitions into intervals, and over all processor assignments. The objective can be to minimize either the period, or the latency, or a combination: given a threshold period, what is the minimum latency that can be achieved? And the counterpart: given a threshold latency, what is the minimum period that can be achieved?

The decision problem associated to this bi-criteria interval mapping optimization problem is NP-hard, since the period minimization problem is NP-hard for interval-based mappings (see [2]).

4 Linear program formulation

We present here an integer linear program to compute the optimal interval-based bi-criteria mapping on Fully Heterogeneous platforms, respecting either a fixed latency or a fixed period. We assume $n$ stages and $p$ processors, plus two fictitious extra stages $S_0$ and $S_{n+1}$ respectively assigned to $P_{\mathrm{in}}$ and $P_{\mathrm{out}}$. First we need to define a few variables:

For $k \in [0..n+1]$ and $u \in [1..p] \cup \{\mathrm{in}, \mathrm{out}\}$, $x_{k,u}$ is a boolean variable equal to 1 if stage $S_k$ is assigned to processor $P_u$; we let $x_{0,\mathrm{in}} = x_{n+1,\mathrm{out}} = 1$, and $x_{k,\mathrm{in}} = x_{k,\mathrm{out}} = 0$ for $1 \le k \le n$.

For $k \in [0..n]$, $u, v \in [1..p] \cup \{\mathrm{in}, \mathrm{out}\}$ with $u \ne v$, $z_{k,u,v}$ is a boolean variable equal to 1 if stage $S_k$ is assigned to $P_u$ and stage $S_{k+1}$ is assigned to $P_v$: hence $\mathrm{link}_{u,v}: P_u \to P_v$ is used for the communication between these two stages. If $k \ne 0$ then $z_{k,\mathrm{in},v} = 0$ for all $v \ne \mathrm{in}$ and if $k \ne n$ then $z_{k,u,\mathrm{out}} = 0$ for all $u \ne \mathrm{out}$.
For $k \in [0..n]$ and $u \in [1..p] \cup \{\mathrm{in}, \mathrm{out}\}$, $y_{k,u}$ is a boolean variable equal to 1 if stages $S_k$ and $S_{k+1}$ are both assigned to $P_u$; we let $y_{k,\mathrm{in}} = y_{k,\mathrm{out}} = 0$ for all $k$, and $y_{0,u} = y_{n,u} = 0$ for all $u$.

For $u \in [1..p]$, $\mathrm{first}(u)$ is an integer variable which denotes the first stage assigned to $P_u$; similarly, $\mathrm{last}(u)$ denotes the last stage assigned to $P_u$. Thus $P_u$ is assigned the interval $[\mathrm{first}(u), \mathrm{last}(u)]$. Of course $1 \le \mathrm{first}(u) \le \mathrm{last}(u) \le n$.

$T_{\mathrm{opt}}$ is the variable to optimize, so depending on the objective function it corresponds either to the period or to the latency.

We list below the constraints that need to be enforced. For simplicity, we write $\sum_u$ instead of $\sum_{u \in [1..p] \cup \{\mathrm{in}, \mathrm{out}\}}$ when summing over all processors. First there are constraints for processor and link usage:

Every stage is assigned a processor: $\forall k \in [0..n+1],\ \sum_u x_{k,u} = 1$.

Every communication either is assigned a link or collapses because both stages are assigned to the same processor:

\[ \forall k \in [0..n], \quad \sum_{u \ne v} z_{k,u,v} + \sum_u y_{k,u} = 1 \]

If stage $S_k$ is assigned to $P_u$ and stage $S_{k+1}$ to $P_v$, then $\mathrm{link}_{u,v}: P_u \to P_v$ is used for this communication:

\[ \forall k \in [0..n],\ \forall u, v \in [1..p] \cup \{\mathrm{in}, \mathrm{out}\},\ u \ne v, \quad x_{k,u} + x_{k+1,v} \le 1 + z_{k,u,v} \]

If both stages $S_k$ and $S_{k+1}$ are assigned to $P_u$, then $y_{k,u} = 1$:

\[ \forall k \in [0..n],\ \forall u \in [1..p] \cup \{\mathrm{in}, \mathrm{out}\}, \quad x_{k,u} + x_{k+1,u} \le 1 + y_{k,u} \]

If stage $S_k$ is assigned to $P_u$, then necessarily $\mathrm{first}(u) \le k \le \mathrm{last}(u)$. We write this constraint as:

\[ \forall k \in [1..n],\ \forall u \in [1..p], \quad \mathrm{first}(u) \le k \cdot x_{k,u} + n \cdot (1 - x_{k,u}) \]
\[ \forall k \in [1..n],\ \forall u \in [1..p], \quad \mathrm{last}(u) \ge k \cdot x_{k,u} \]

If stage $S_k$ is assigned to $P_u$ and stage $S_{k+1}$ is assigned to $P_v \ne P_u$ (i.e., $z_{k,u,v} = 1$) then necessarily $\mathrm{last}(u) \le k$ and $\mathrm{first}(v) \ge k + 1$ since we consider intervals.
We write this constraint as:

\[ \forall k \in [1..n-1],\ \forall u, v \in [1..p],\ u \ne v, \quad \mathrm{last}(u) \le k \cdot z_{k,u,v} + n \cdot (1 - z_{k,u,v}) \]
\[ \forall k \in [1..n-1],\ \forall u, v \in [1..p],\ u \ne v, \quad \mathrm{first}(v) \ge (k+1) \cdot z_{k,u,v} \]

The latency of the schedule is bounded by $T_{\mathrm{latency}}$:

\[ \sum_{u=1}^{p} \sum_{k=1}^{n} \left[ \left( \sum_{t \ne u} \frac{\delta_{k-1}}{b_{t,u}}\, z_{k-1,t,u} \right) + \frac{w_k}{s_u}\, x_{k,u} \right] + \sum_{u \in [1..p] \cup \{\mathrm{in}\}} \frac{\delta_n}{b_{u,\mathrm{out}}}\, z_{n,u,\mathrm{out}} \le T_{\mathrm{latency}} \]

where $t \in [1..p] \cup \{\mathrm{in}, \mathrm{out}\}$.

There remains to express the period of each processor and to constrain it by $T_{\mathrm{period}}$:

\[ \forall u \in [1..p], \quad \sum_{k=1}^{n} \left[ \left( \sum_{t \ne u} \frac{\delta_{k-1}}{b_{t,u}}\, z_{k-1,t,u} \right) + \frac{w_k}{s_u}\, x_{k,u} + \left( \sum_{v \ne u} \frac{\delta_k}{b_{u,v}}\, z_{k,u,v} \right) \right] \le T_{\mathrm{period}} \]

Finally, the objective function is either to minimize the period $T_{\mathrm{period}}$ respecting the fixed latency $T_{\mathrm{latency}}$ or to minimize the latency $T_{\mathrm{latency}}$ with a fixed period $T_{\mathrm{period}}$. So in the first case we fix $T_{\mathrm{latency}}$ and set $T_{\mathrm{opt}} = T_{\mathrm{period}}$. In the second case $T_{\mathrm{period}}$ is fixed a priori and $T_{\mathrm{opt}} = T_{\mathrm{latency}}$. With this mechanism the objective function reduces to minimizing $T_{\mathrm{opt}}$ in both cases.

5 Overview of the heuristics

The problem of bi-criteria interval mapping of workflow applications is NP-hard [2], so in this section we briefly describe polynomial heuristics to solve it. See [2] for a more complete description or refer to the Web at: http://graal.ens-lyon.fr/~vsonigo/code/multicriteria/

In the following, we denote by $n$ the number of stages, and by $p$ the number of processors. We distinguish two sets of heuristics. The heuristics of the first set aim to minimize the latency respecting an a priori fixed period. The heuristics of the second set address the counterpart: the latency is fixed a priori and we try to achieve a minimum period while respecting the latency constraint.
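Any heuristic has to compare candidate mappings, so it helps to see how the period and latency expressions of Section 4 evaluate on a concrete interval mapping. The following Python sketch is one possible way to do this and is not taken from the authors' code: the function name `evaluate`, the data layout and the toy numbers are all assumptions made for illustration.

```python
def evaluate(intervals, w, delta, s, b):
    """Return (period, latency) of an interval mapping.

    intervals: ordered list of (d, e, u), meaning stages d..e
               (1-based, inclusive) run on processor u.
    w[k-1]:    computation amount of stage k.
    delta[k]:  size of the data output by stage k (delta[0] is the input).
    s[u]:      speed of processor u.
    b[(u, v)]: bandwidth of the link u -> v; "in" and "out" stand for
               the outside world.
    """
    procs = ["in"] + [u for _, _, u in intervals] + ["out"]
    period, latency = 0.0, 0.0
    for j, (d, e, u) in enumerate(intervals, start=1):
        recv = delta[d - 1] / b[(procs[j - 1], u)]   # input of the interval
        comp = sum(w[k - 1] for k in range(d, e + 1)) / s[u]
        send = delta[e] / b[(u, procs[j + 1])]       # output of the interval
        period = max(period, recv + comp + send)     # slowest processor
        latency += recv + comp                       # path of one data set
    latency += delta[-1] / b[(intervals[-1][2], "out")]
    return period, latency


# Hypothetical toy instance: 3 stages, 2 processors, uniform links
w = [10, 20, 10]
delta = [4, 4, 4, 4]
s = {"P1": 2.0, "P2": 1.0}
names = ["in", "P1", "P2", "out"]
b = {(u, v): 4.0 for u in names for v in names if u != v}

single = evaluate([(1, 3, "P1")], w, delta, s, b)
split = evaluate([(1, 2, "P1"), (3, 3, "P2")], w, delta, s, b)
```

On this toy instance, moving the last stage to the second processor lowers the period from 22 to 17 time-units but raises the latency from 22 to 28, which is the antagonism between the two criteria that the heuristics have to arbitrate.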
5.1 Minimizing Latency for a Fixed Period

All the following heuristics sort processors by non-increasing speed, and start by assigning all the stages to the first (fastest) processor in the list. This processor becomes used.

H1-Sp-mono-P: Splitting mono-criterion. At each step, we select the used processor $j$ with the largest period and we try to split its stage interval, giving some stages to the next fastest processor $j'$ in the list (not yet used). This can be done by splitting the interval at any place, and either placing the first part of the interval on $j$ and the remainder on $j'$, or the other way round. The solution which minimizes $\max(\mathrm{period}(j), \mathrm{period}(j'))$ is chosen if it is better than the original solution. Splitting is performed as long as we have not reached the fixed period or until we cannot improve the period anymore.

H2-Sp-bi-P: Splitting bi-criteria. This heuristic uses a binary search over the latency. For this purpose at each iteration we fix an authorized increase of the optimal latency (which is obtained by mapping all stages on the fastest processor), and we test if we get a feasible solution via splitting. The splitting mechanism itself is quite similar to H1-Sp-mono-P except that we choose the solution that minimizes $\max_{i \in \{j, j'\}} \left( \frac{\Delta \mathrm{latency}}{\Delta \mathrm{period}(i)} \right)$ within the authorized latency increase to decide where to split. While we get a feasible solution, we reduce the authorized latency increase for the next iteration of the binary search, thereby aiming at minimizing the mapping global latency.

H3-3-Sp-mono-P: 3-splitting mono-criterion. At each step we select the used processor $j$ with the largest period and we split its interval into three parts.
For this purpose we try to map two parts of the interval on the next pair of fastest processors in the list, $j'$ and $j''$, and to keep the third part on processor $j$. Testing all possible permutations and all possible positions where to cut, we choose the solution that minimizes $\max(\mathrm{period}(j), \mathrm{period}(j'), \mathrm{period}(j''))$.

H4-3-Sp-bi-P: 3-splitting bi-criteria. In this heuristic the choice of where to split is more elaborate: it depends not only on the period improvement, but also on the latency increase. Using the same splitting mechanism as in H3-3-Sp-mono-P, we select the solution that minimizes $\max_{i \in \{j, j', j''\}} \left( \frac{\Delta \mathrm{latency}}{\Delta \mathrm{period}(i)} \right)$. Here $\Delta \mathrm{latency}$ denotes the difference between the global latency of the solution before the split and after the split. In the same manner $\Delta \mathrm{period}(i)$ defines the difference between the period before the split (achieved by processor $j$) and the new period of processor $i$.

5.2 Minimizing Period for a Fixed Latency

As in the heuristics described above, first of all we sort processors according to their speed and map all stages on the fastest processor.

H5-Sp-mono-L: Splitting mono-criterion. This heuristic uses the same method as H1-Sp-mono-P with a different break condition. Here splitting is performed as long as we do not exceed the fixed latency, still choosing the solution that minimizes $\max(\mathrm{period}(j), \mathrm{period}(j'))$.

Figure 2: LP solutions strongly depend on fixed initial parameters. (a) Fixed period: $P_{fix} = 310$ gives $L_{opt} = 337.575$; $P_{fix} = 320$ gives $L_{opt} = 336.729$; $P_{fix} = 330$ gives $L_{opt} = 322.700$. (b) Fixed latency: $L_{fix} = 370$ gives $P_{opt} = 307.319$; $L_{fix} = 340$ gives $P_{opt} = 307.319$; $L_{fix} = 330$ gives $P_{opt} = 322.700$.
H6-Sp-bi-L: Splitting bi-criteria. This variant of the splitting heuristic works similarly to H5-Sp-mono-L, but at each step it chooses the solution which minimizes $\max_{i \in \{j, j'\}} \left( \frac{\Delta \mathrm{latency}}{\Delta \mathrm{period}(i)} \right)$ while the fixed latency is not exceeded.

Remark. In the context of M-JPEG coding, minimizing the latency for a fixed period corresponds to a fixed coding rate, and we want to minimize the response time. The counterpart (minimizing the period respecting a fixed latency $L$) corresponds to the question: if I accept to wait $L$ time units for a given image, which coding rate can I achieve? We evaluate the behavior of the heuristics with respect to these questions in Section 6.2.

6 Experiments and simulations

In the following experiments, we study the mapping of the JPEG application onto clusters of workstations.

6.1 Influence of fixed parameters

In this first test series, we examine the influence of fixed parameters on the solution of the linear program. As shown in Figure 2, the division into intervals is highly dependent on the chosen fixed value. The optimal solution to minimize the latency (without any supplemental constraints) obviously consists in mapping the whole application pipeline onto the fastest processor. As expected, if the period fixed in the linear program is not smaller than the latter optimal mono-criterion latency, this solution is chosen. Decreasing the value for the fixed period forces the stages to be split among several processors, until no more solution can be found. Figure 2(a) shows the division into intervals for a fixed period. A fixed period of $T_{\mathrm{period}} = 330$ is sufficiently high for the whole pipeline to be mapped onto the fastest processor, whereas smaller periods lead to splitting into intervals. Note that for a period fixed to 300, no solution exists anymore.
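The effect of tightening the fixed period can be reproduced on a toy instance with an exhaustive search, used here as a stand-in for the integer linear program. This is only a sketch under simplifying assumptions: all links share a single hypothetical bandwidth `bw`, the names `costs` and `min_latency` are invented for the example, and enumerating every interval mapping is only practical for very small pipelines.

```python
from itertools import combinations, permutations


def costs(cuts, procs, w, delta, s, bw):
    """Period and latency of one interval mapping.

    cuts:  0-based stage indices at which a new interval starts.
    procs: processor index used for each interval, in pipeline order.
    """
    bounds = [0, *cuts, len(w)]
    period = latency = 0.0
    for j, u in enumerate(procs):
        d, e = bounds[j], bounds[j + 1]      # stages d..e-1 run on u
        recv = delta[d] / bw
        comp = sum(w[d:e]) / s[u]
        send = delta[e] / bw
        period = max(period, recv + comp + send)
        latency += recv + comp
    return period, latency + delta[-1] / bw


def min_latency(w, delta, s, bw, max_period):
    """Best (latency, cuts, procs) with period <= max_period, or None."""
    n, best = len(w), None
    for m in range(1, min(n, len(s)) + 1):             # number of intervals
        for cuts in combinations(range(1, n), m - 1):  # where to split
            for procs in permutations(range(len(s)), m):
                period, latency = costs(cuts, procs, w, delta, s, bw)
                if period <= max_period and (best is None or latency < best[0]):
                    best = (latency, cuts, procs)
    return best


# Hypothetical toy instance: 3 stages, 2 processors (speeds 2 and 1)
w, delta, s, bw = [10, 20, 10], [4, 4, 4, 4], [2.0, 1.0], 4.0
loose = min_latency(w, delta, s, bw, max_period=22)   # fits on one processor
tight = min_latency(w, delta, s, bw, max_period=20)   # forces a split
none = min_latency(w, delta, s, bw, max_period=16)    # infeasible
```

With a period bound of 22 the whole toy pipeline stays on the fastest processor (latency 22). A bound of 20 forces a split and the latency rises to 28, and below 17 no mapping satisfies the bound, mirroring on a small scale the pattern reported for the LP.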
The counterpart, fixed latency, can be found in Figure 2(b). Note that the first two solutions find the same period, but for a different latency. The first solution has a high value for latency, which allows more splits, hence larger communication costs. Comparing the last lines of Figures 2(a) and (b), we observe that both solutions are the same, and we have $T_{\mathrm{period}} = T_{\mathrm{latency}}$. Finally, expanding the range of the fixed values, a sort of bucket behavior becomes apparent: increasing the fixed parameter has at first no influence, the LP still finds the same solution until the increase crosses an unknown bound and the LP can find a better solution. This phenomenon is shown in Figure 3.

Figure 3: Bucket behavior of LP solutions. (a) Fixed P: optimal latency versus fixed period P_fixed. (b) Fixed L: optimal period versus fixed latency L_fixed.

6.2 Assessing heuristic performance

The comparison of the solution returned by the LP program, in terms of optimal latency respecting a fixed period (or the converse), with the heuristics is shown in Figure 4. The implementation is fed with the parameters of the JPEG encoding pipeline and computes the mapping on 10 randomly created platforms with 10 processors. On platforms 3 and 5, no valid solution can be found for the fixed period. There are two important points to mention. First, the solutions found by H2 often are not valid, since they do not respect the fixed period, but they have the best ratio latency/period. Figure 5(b) plots some more details: H2 achieves good latency results, but the fixed period of P = 310 is often violated. This is a consequence of the fact that the fixed period value is very close to the feasible period.
When the tolerance for the period is bigger, this heuristic succeeds in finding low-latency solutions. Second, all solutions, LP and heuristics, always keep the stages 4 to 7 together (see Figure 2 for an example). As stage 5 (DCT) is the most costly in terms of computation, the interval containing these stages is responsible for the period of the whole application.

Finally, in the comparative study H1 always finds the optimal period for a fixed latency and we therefore recommend this heuristic for period optimization. In the case of latency minimization for a fixed period, H5 is the heuristic to use, as it always finds the LP solution in the experiments. This is a striking result, especially given the fact that the LP integer program may require a long time to compute the solution (up to 11389 seconds in our experiments), while the heuristics always complete in less than a second, and find the corresponding optimal solution.

Figure 4: Behavior of the heuristics (comparing to LP solution), heuristical solution versus random platform. (a) Fixed P = 310: LP fixed P, H1, H2, H3, H4. (b) Fixed L = 370: LP fixed L, H5, H6.

6.3 MPI simulations on a cluster

This last experiment performs a JPEG encoding simulation. All simulations are made on a cluster of homogeneous Optiplex GX 745 machines with an Intel Core 2 Duo 6300 at 1.83 GHz. Heterogeneity is enforced by increasing and decreasing the number of operations a processor has to execute. The same holds for bandwidth capacities. We call this experiment a simulation, as we do not parallelize a real JPEG encoder, but we use a parallel pipeline application which has the same parameters for communication and computation as the JPEG encoder. An MPICH implementation of MPI is used for communications.

In this experiment the same random platforms with 10 processors and fixed parameters as in the theoretical experiments are used. We measured the latency of the simulation, even for the heuristics of fixed latency, and computed the average over all random platforms. Figure 5(a) compares the average of the theoretical results of the heuristics to the average simulative performance. The simulative behavior nicely mirrors the theoretical behavior, with the exception of H2 (see Figure 5(b)). Here once again, some solutions of this heuristic are not valid, as they do not respect the fixed period.

Figure 5: MPI simulation results. (a) Simulative latency: theoretical versus simulated latency for each heuristic. (b) H2 versus LP: latency versus period.

7 Related work

The blockwise independent processing of the JPEG encoder allows simple data parallelism to be applied for efficient parallelization. Many papers have addressed this fine-grain parallelization opportunity [5, 11]. In addition, parallelization of almost all stages, from color space conversion, over DCT to the Huffman encoding has been addressed [1, 7]. Recently, with respect to the JPEG2000 codec, efficient parallelization of wavelet coding has been introduced [8]. All these works target the best speed-up with respect to different architectures and possible varying load situations. Optimizing the period and the latency is an important issue when encoding a pipeline of multiple images, as for instance for Motion JPEG (M-JPEG). To meet these issues, one has to solve, in addition to the above mentioned work, a bi-criteria optimization problem, i.e., optimize the latency as well as the period.
The application of coarse grain parallelism seems to be a promising solution. We propose to use an interval-based mapping strategy allowing multiple stages to be mapped to one processor, which allows the domain constraints to be met most flexibly (even for very large pictures). Several pipelined versions of the JPEG encoding have been considered. They rely mainly on pixel or block-wise parallelization [6, 9]. For instance, Ferretti et al. [6] use three pipelines to carry out concurrently the encoding on independent pixels extracted from the serial stream of incoming data. The pixel and block-based approach is however useful for small pictures only. Recently, Shee et al. [10] consider a pipeline architecture where each stage presents a step in the JPEG encoding. The targeted architecture consists of Xtensa LX processors which run subprograms of the JPEG encoder program. Each program accepts data via the queues of the processor, performs the necessary computation, and finally pushes it to the output queue into the next stage of the pipeline. The basic assumptions are similar to our work, however no optimization problem is considered and only runtime (latency) measurements are available. The schedule is static and set according to basic assumptions about the image processing, e.g., that the DCT is the most complex operation in runtime.

8 Conclusion

In this paper, we have studied the bi-criteria (minimizing latency and period) mapping of pipeline workflow applications, from both a theoretical and practical point of view. On the theoretical side, we have presented an integer linear programming formulation for this NP-hard problem. On the practical side, we have studied in depth the interval mapping of the JPEG encoding pipeline on a cluster of workstations.
Ow in g to the LP solution, w e were able to charac terize a buck et b eha vior in the optimal solution, dep ending on the initial parameters. F urthermore, we h a ve compared the b ehavi or of some p olynomial heuristics to th e LP solution and we we re able to recommended t wo heur istics with almost optimal b eha vior for parallel JPEG enco d ing. Finally , we ev aluated the heuristics runn ing a parallel pip eline applicati on with the same parameters as a J PEG enco der. The h euristics were designed for general pip eline applications, and some of them were aimi ng at app licati ons w ith a large n umb er of stages (3-splitting) , th us a priori not very efficien t on the JPEG encoder. Still, some of these heuristics reach the optimal solution in our experiments, which is a striking result. A natural extension of this work would b e to consider fur ther image pr ocessing applications with more pip eline stages or a slig htly more complicated pip eline archite cture. Naturally , our wo rk extends to JPEG 2000 enco ding which offers among others wa vel et co ding and more complex mult iple-comp onent image enco ding [ 4 ]. Another extension is for the MPEG cod ing family whic h uses lagg ed f eedbac k: the co d ing of some t yp es of frames dep en d s on other frames. Diffe rentiati ng the types of coding algo rithms, a p ipeline architect ure seems again to b e a promising solution arc h itecture. References [1] L. V. Agostini, I. S. Silv a, and S. Bampi. Pa rallel color space conv erters for JPEG image compression. M icr o ele ctr onics R eliability , 44(4 ):697–70 3, 2004. RR n ° 012345 6789 12 A. Benoit , H. Kosch , V. R ehn-Sonigo , Y. R ob ert [2] A. Benoi t, V. Rehn-Sonigo, and Y. Rob ert. Multi-criteria Sc heduling of Pipeline W ork- flows. In H eter oPar’07, Algorith ms, Mo dels and T o ols for Par al lel Computing on H et- er o gene ous N etworks (in c onjunction with Cluster 2007) . IEEE Computer S ociety Press, 2007. [3] P . Bhat, C . 
Raghavendra, and V. Prasanna. Efficient collective communication in distributed heterogeneous systems. Journal of Parallel and Distributed Computing, 63:251–263, 2003.
[4] C. Christopoulos, A. Skodras, and T. Ebrahimi. The JPEG2000 still image coding system: an overview. IEEE Transactions on Consumer Electronics, 46(4):1103–1127, 2000.
[5] J. Falkemeier and G. Joubert. Parallel image compression with JPEG for multimedia applications. In High Performance Computing: Technologies, Methods and Applications, Advances in Parallel Computing, pages 379–394. North Holland, 1995.
[6] M. Ferretti and M. Boffadossi. A Parallel Pipelined Implementation of LOCO-I for JPEG-LS. In 17th International Conference on Pattern Recognition (ICPR'04), volume 1, pages 769–772, 2004.
[7] T. Kumaki, M. Ishizaki, T. Koide, H. J. Mattausch, Y. Kuroda, H. Noda, K. Dosaka, K. Arimoto, and K. Saito. Acceleration of DCT Processing with Massive-Parallel Memory-Embedded SIMD Matrix Processor. IEICE Transactions on Information and Systems - LETTER - Image Processing and Video Processing, E90-D(8):1312–1315, 2007.
[8] P. Meerwald, R. Norcen, and A. Uhl. Parallel JPEG2000 Image Coding on Multiprocessors. In IPDPS'02, International Parallel and Distributed Processing Symposium. IEEE Computer Society Press, 2002.
[9] M. Papadonikolakis, V. Pantazis, and A. P. Kakarountas. Efficient high-performance ASIC implementation of JPEG-LS encoder. In Proceedings of the Conference on Design, Automation and Test in Europe (DATE2007). IEEE Communications Society Press, 2007.
[10] S. L. Shee, A. Erdos, and S. Parameswaran. Architectural Exploration of Heterogeneous Multiprocessor Systems for JPEG. International Journal of Parallel Programming, 35, 2007.
[11] K. Shen, G. Cook, L. Jamieson, and E. Delp.
An overview of parallel processing approaches to image and video compression. In Image and Video Compression, volume Proc. SPIE 2186, pages 197–208, 1994.
[12] J. Subhlok and G. Vondran. Optimal latency-throughput tradeoffs for data parallel pipelines. In ACM Symposium on Parallel Algorithms and Architectures SPAA'96, pages 62–71. ACM Press, 1996.
[13] G. K. Wallace. The JPEG still picture compression standard. Commun. ACM, 34(4):30–44, 1991.
[14] C. Wen-Hsiung, C. Smith, and S. Fralick. A Fast Computational Algorithm for the Discrete Cosine Transform. IEEE Transactions on Communications, 25(9):1004–1009, 1977.
