스케일러블 딥 객체 탐지와 효율적 박스 예측

본 논문은 클래스에 구애받지 않는 바운딩 박스 후보를 다수 예측하고, 각 박스에 객체 존재 확률을 부여하는 DeepMultiBox 모델을 제안한다. 바운딩 박스와 신뢰도 점수를 동시에 학습하는 새로운 손실 함수를 도입해, 적은 수의 후보만으로도 VOC2007 및 ILSVRC2012에서 경쟁력 있는 검출 성능을 달성한다.

저자: Dumitru Erhan, Christian Szegedy, Alex

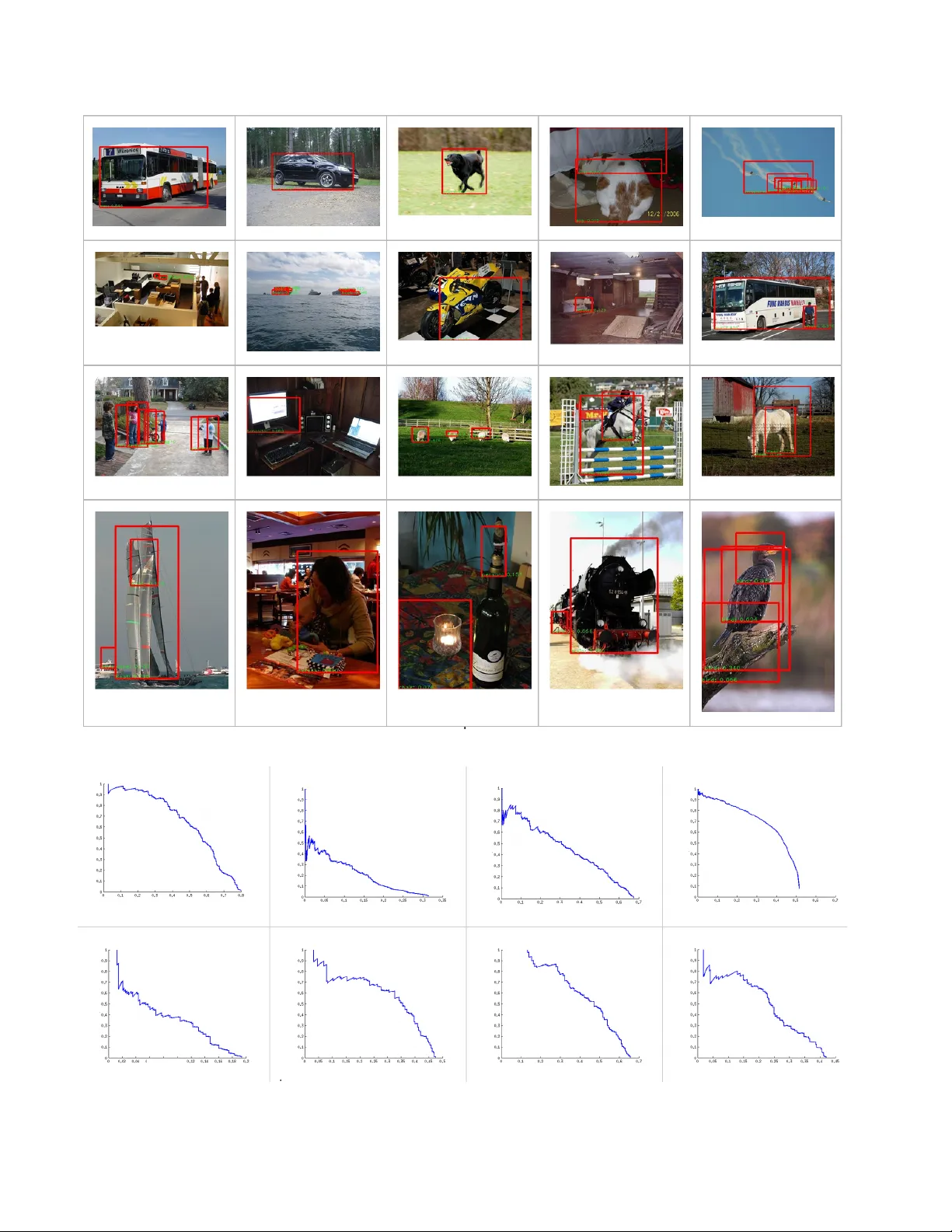

Scalable Object Detection using Deep Neural Networks Dumitru Erhan Christian Szegedy Ale xander T oshe v Dragomir Anguelov Google { dumitru, szegedy, toshev, dragomir } @google.com Abstract Deep con volutional neural networks have r ecently achie ved state-of-the-art performance on a number of image r eco gnition benchmarks, including the Ima geNet Lar ge-Scale V isual Recognition Challeng e (ILSVRC-2012). The winning model on the localization sub-task was a net- work that pr edicts a single bounding box and a confidence scor e for each object cate gory in the imag e. Such a model captur es the whole-image context ar ound the objects but cannot handle multiple instances of the same object in the image without naively r eplicating the number of outputs for each instance . In this work, we pr opose a saliency-inspir ed neural network model for detection, which pr edicts a set of class-agnostic bounding boxes along with a single score for each box, corresponding to its likelihood of containing any object of interest. The model naturally handles a variable number of instances for each class and allows for cr oss- class g eneralization at the highest levels of the network. W e ar e able to obtain competitive r ecognition performance on V OC2007 and ILSVRC2012, while using only the top fe w pr edicted locations in each image and a small number of neural network e valuations. 1. Introduction Object detection is one of the fundamental tasks in com- puter vision. A common paradigm to address this problem is to train object detectors which operate on a subimage and apply these detectors in an exhausti ve manner across all lo- cations and scales. This paradigm was successfully used within a discriminativ ely trained Deformable Part Model (DPM) to achiev e state-of-art results on detection tasks [ 6 ]. The exhausti ve search through all possible locations and scales poses a computational challenge. This challenge be- comes e ven harder as the number of classes grows, since most of the approaches train a separate detector per class. In order to address this issue a variety of methods were proposed, varying from detector cascades, to using seg- mentation to suggest a small number of object hypotheses [ 14 , 2 , 4 ]. In this paper , we ascribe to the latter philosphy and pro- pose to train a detector, called “DeepMultiBox”, ’ which generates a fe w bounding boxes as object candidates. These boxes are generated by a single DNN in a class agnostic manner . Our model has sev eral contributions. First, we de- fine object detection as a regression problem to the coordi- nates of se veral bounding boxes. In addition, for each pre- dicted box the net outputs a confidence score of ho w lik ely this box contains an object. This is quite different from tra- ditional approaches, which score features within predefined boxes, and has the advantage of expressing detection of ob- jects in a very compact and ef ficient way . The second major contribution is the loss, which trains the bounding box predictors as part of the network training. For each training e xample, we solve an assignment problem between the current predictions and the groundtruth boxes and update the matched box coordinates, their confidences and the underlying features through Backpropagation. In this way , we learn a deep net tailored towards our localiza- tion problem. W e capitalize on the excellent representation learning abilities of DNNs, as recently exeplified recently in image classification [ 10 ] and object detection settings [ 13 ], and perform joint learning of representation and predictors. Finally , we train our object box predictor in a class- agnostic manner . W e consider this as a scalable way to en- able efficient detection of large number of object classes. W e sho w in our experiments that by only post-classifying less than ten boxes, obtained by a single network applica- tion, we can achiev e state-of-art detection results. Further , we show that our box predictor generalizes ov er unseen classes and as such is flexible to be re-used within other detection problems. 2. Pre vious work The literature on object detection is v ast, and in this sec- tion we will focus on approaches exploiting class-agnostic ideas and addressing scalability . Many of the proposed detection approaches are based on part-based models [ 7 ], which more recently hav e achieved impressiv e performance thanks to discriminative learning and carefully crafted features [ 6 ]. These methods, howe ver , 1 rely on exhaustiv e application of part templates over multi- ple scales and as such are expensi ve. Moreover , they scale linearly in the number of classes, which becomes a chal- lenge for modern datasets such as ImageNet. T o address the former issue, Lampert et al. [ 11 ] use a branch-and-bound strate gy to av oid e v aluating all potential object locations. T o address the latter issue, Song et al. [ 12 ] use a low-dimensional part basis, shared across all object classes. A hashing based approach for efficient part detec- tion has shown good results as well [ 3 ]. A different line of work, closer to ours, is based on the idea that objects can be localized without having to kno w their class. Some of these approaches build on bottom-up classless segmentation [ 9 ]. The segments, obtained in this way , can be scored using top-do wn feedback [ 14 , 2 , 4 ]. Us- ing the same moti v ation, Alexe et al. [ 1 ] use an inexpen- siv e classifier to score object hypotheses for being an ob- ject or not and in this w ay reduce the number of location for the subsequent detection steps. These approaches can be thought of as Multi-layered models, with segmentation as first layer and a segment classification as a subsequent layer . Despite the fact that they encode pro ven perceptual principles, we will show that having deeper models which are fully learned can lead to superior results. Finally , we capitalize on the recent advances in Deep Learning, most noticeably the work by Krizhe vsky et al. [ 10 ]. W e extend their bounding box regression approach for detection to the case of handling multiple objects in a scalable manner . DNN-based regression, to object masks howe ver , has been applied by Szegedy et al. [ 13 ]. This last approach achie ves state-of-art detection performance but does not scale up to multiple classes due to the cost of a single mask regression. 3. Proposed appr oach W e aim at achieving a class-agnostic scalable object de- tection by predicting a set of bounding boxes, which rep- resent potential objects. More precisely , we use a Deep Neural Network (DNN), which outputs a fix ed number of bounding box es. In addition, it outputs a score for each box expressing the networkconfidence of this box containing an object. Model T o formalize the above idea, we encode the i -th object box and its associated confidence as node values of the last net layer: Bounding box: we encode the upper-left and lower -right coordinates of each box as four node values, which can be written as a vector l i ∈ R 4 . These coordinates are normalized w . r . t. image dimensions to achiev e in vari- ance to absolute image size. Each normalized coordi- nate is produced by a linear transformation of the last hidden layer . Confidence: the confidence score for the box containing an object is encoded as a single node value c i ∈ [0 , 1] . This v alue is produced through a linear transformation of the last hidden layer followed by a sigmoid. W e can combine the bounding box locations l i , i ∈ { 1 , . . . K } , as one linear layer . Similarly , we can treat col- lection of all confidences c i , i ∈ { 1 , . . . K } as the output as one sigmoid layer . Both these output layers are connected to the last hidden layers. At inference time, out algorithm produces K bound- ing boxes. In our experiments, we use K = 100 and K = 200 . If desired, we can use the confidence scores and non-maximum suppression to obtain a smaller number of high-confidence boxes at inference time. These boxes are supposed to represent objects. As such, they can be classi- fied with a subsequent classifier to achie ve object detection. Since the number of boxes is very small, we can afford pow- erful classifiers. In our experiments, we use another DNN for classification [ 10 ]. T raining Objectiv e W e train a DNN to predict bounding boxes and their confidence scores for each training image such that the highest scoring boxes match well the ground truth object boxes for the image. Suppose that for a partic- ular training e xample, M objects were labeled by bounding boxes g j , j ∈ { 1 , . . . , M } . In practice, the number of pre- dictions K is much larger than the number of groundtruth boxes M . Therefore, we try to optimize only the subset of predicted boxes which match best the ground truth ones. W e optimize their locations to impro ve their match and maxi- mize their confidences. At the same time we minimize the confidences of the remaining predictions, which are deemed not to localize the true objects well. T o achiev e the abov e, we formulate an assignment prob- lem for each training example. W e x ij ∈ { 0 , 1 } denote the assignment: x ij = 1 iff the i -th prediction is assigned to j -th true object. The objecti ve of this assignment can be expressed as: F match ( x, l ) = 1 2 X i,j x ij || l i − g j || 2 2 (1) where we use L 2 distance between the normalized bound- ing box coordinates to quantify the dissimilarity between bounding boxes. Additionally , we w ant to optimize the confidences of the boxes according to the assignment x . Maximizing the con- fidences of assigned predictions can be expressed as: F conf ( x, c ) = − X i,j x ij log( c i ) − X i (1 − X j x ij ) log(1 − c i ) (2) In the above objectiv e P j x ij = 1 iff prediction i has been matched to a groundtruth. In that case c i is being maxi- mized, while in the opposite case it is being minimized. A different interpretation of the above term is achiev ed if we P j x ij view as a probability of prediction i containing an object of interest. Then, the above loss is the negativ e of the entropy and thus corresponds to a max entropy loss. The final loss objectiv e combines the matching and con- fidence losses: F ( x, l , c ) = αF match ( x, l ) + F conf ( x, c ) (3) subject to constraints in Eq. 1 . α balances the contrib ution of the different loss terms. Optimization For each training e xample, we solve for an optimal assignment x ∗ of predictions to true boxes by x ∗ = arg min x F ( x, l , c ) (4) subject to x ij ∈ { 0 , 1 } , X i x ij = 1 , (5) where the constraints enforce an assignment solution. This is a v ariant of bipartite matching, which is polynomial in complexity . In our application the matching is v ery ine x- pensiv e – the number of labeled objects per image is less than a dozen and in most cases only very fe w objects are labeled. Then, we optimize the network parameters via back- propagation. For example, the first deriv atives of the back- propagation algorithm are computed w . r . t. l and c : ∂ F ∂ l i = X j ( l i − g j ) x ∗ ij (6) ∂ F ∂ c i = P j x ∗ ij c i c i (1 − c i ) (7) T raining Details While the loss as defined above is in principle suf ficient, three modifications make it possible to reach better accuracy significantly faster . The first such modification is to perform clustering of ground truth loca- tions and find K such clusters/centroids that we can use as priors for each of the predicted locations. Thus, the learn- ing algorithm is encouraged to learn a residual to a prior , for each of the predicted locations. A second modification pertains to using these priors in the matching process: instead of matching the N ground truth locations with the K predictions, we find the best match between the K priors and the ground truth. Once the matching is done, the target confidences are computed as before. Moreo ver , the location prediction loss is also unchanged: for any matched pair of (target, prediction) locations, the loss is defined by the difference between the groundtruth and the coordinates that correspond to the matched prior . W e call the usage of priors for matching prior matching and hypothesize that it enforces div ersifica- tion among the predictions. It should be noted, that although we defined our method in a class-agnostic w ay , we can apply it to predicting object boxes for a particular class. T o do this, we simply need to train our models on bounding boxes for that class. Further , we can predict K boxes per class. Unfortu- nately , this model will hav e number of parameters grow- ing linearly with the number of classes. Also, in a typi- cal setting, where the number of objects for a gi ven class is relatively small, most of these parameters will see v ery few training e xamples with a corresponding gradient con- tribution. W e argue thusly that our two-step process – first localize, then recognize – is a superior alternative in that it allows lev eraging data from multiple object types in the same image using a small number of parameters. 4. Experimental results 4.1. Network Ar chitecture and Experiment Details The network architecture for the localization and clas- sification models that we use is the same as the one used by [ 10 ]. W e use Adagrad for controlling the learning rate decay , mini-batches of size 128, and parallel distributed training with multiple identical replicas of the network, which achie ves faster con vergence. As mentioned previ- ously , we use priors in the localization loss – these are com- puted using k -means on the training set. W e also use an α of 0 . 3 to balance the localization and confidence losses. The localizer might output coordinates outside the crop area used for the inference. The coordinates are mapped and truncated to the final image area, at the end. Boxes are additionally pruned using non-maximum-suppression with a Jaccard similarity threshold of 0 . 5 . Our second model then classifies each bounding box as objects of interest or “background”. T o train our localizer networks, we generated approxi- mately 30 million images from the training set, applying the following procedure to each image in the training set. The samples are shuffled at the end. T o train our localizer networks, we generated approximately 30 million images from the training set by applying the following procedure to each image in the training set. For each image, we gen- erate the same number of square samples such that the total number of samples is about ten million. For each image, the samples are bucketed such that for each of the ratios in the ranges of 0 − 5% , 5 − 15% , 15 − 50% , 50 − 100% , there is an equal number of samples in which the ratio covered by the bounding boxes is in the gi ven range. The selection of the training set and most of our hyper- parameters were based on past experiences with non-public data sets. For the experiments belo w we hav e not explored any non-standard data generation or re gularization options. In all experiments, all hyper-parameters were selected by ev aluating on a held out portion of the training set (10% random choice of examples). 4.2. V OC 2007 The Pascal V isual Object Classes (VOC) Challenge [ 5 ] is the most commong benchmark for object detection algo- rithms. It consists mainly of complex scene images in which bounding boxes of 20 di verse object classes were labelled. In our ev aluation we focus on the 2007 edition of VOC, for which a test set was released. W e present results by training on V OC 2012, which contains approx. 11000 im- ages. W e trained a 100 box localizer as well as a deep net based classifier [ 10 ]. 4.2.1 T raining methodology W e trained the classifier on a data set comprising of • 10 million crops overlapping some object with at least 0 . 5 Jaccard ov erlap similarity . The crops are labeled with one of the 20 V OC object classes. • 20 million ne gati ve crops that hav e at most 0 . 2 Jaccard similarity with any of the object boxes. These crops are labeled with the special “background” class label. The architecture and the selection of hyperparameters fol- lowed that of [ 10 ]. 4.2.2 Evaluation methodology In the first round, the localizer model is applied to the max- imum center square crop in the image. The crop is resized to the network input size which is 220 × 220 . A single pass through this network gives us up to hundred candi- date boxes. After a non-maximum-suppression with over - lap threshold 0 . 5 , the top 10 highest scoring detections are kept and were classified by the 21-way classifier model in a separate passes through the network. The final detection score is the product of the localizer score for the given box multiplied by the score of the classifier e valuated on the maximum square region around the crop. These scores are passed to the ev aluation and were used for computing the precision recall curves. 4.3. Discussion First, we analyze the performance of our localizer in iso- lation. W e present the number of detected objects, as de- fined by the Pascal detection criterion, against the number of produced bounding boxes. In Fig. 1 plot we show results obtained by training on V OC2012. In addition, we present results by using the max-center square crop of the image as input as well as by using two scales: the max-center crop by a second scale where we select 3 × 3 windows of size 60% of the image size. 0 10 20 30 40 50 60 70 80 90 100 0.1 0.2 0.3 0.4 0.5 0.6 0.7 0.8 Detection rate Number of boxes One shot (max center crop) Max center + 1 scale Max center + 2 scales Figure 1. Detection rate of class “object” vs number of bounding boxes per image. The model, used for these results, w as trained on V OC 2012. As we can see, when using a budget of 10 bounding boxes we can localize 45 . 3% of the objects with the first model, and 48% with the second model. This shows better perfomance than other reported results, such as the object- ness algorithm achieving 42% [ 1 ]. Further, this plot sho ws the importance of looking at the image at sev eral resolu- tions. Although our algorithm manages to get large number of objects by using the max-center crop, we obtain an addi- tional boost when using higher resolution image crops. Further , we classify the produced bounding boxes by a 21-way classifier, as described abov e. The a verage preci- sions (APs) on V OC 2007 are presented in T able 1 . The achiev ed mean AP is 0 . 29 , which is on par with state-of-art. Note that, our running time complexity is very lo w – we simply use the top 10 boxes. Example detections and full precision recall curves are shown in Fig. 2 and Fig. 3 respectively . It is important to note that the visualized detections were obtained by using only the max-centered square image crop, i. e. the full im- age was used. Ne vertheless, we manage to obtain relatively small objects, such as the boats in row 2 and column 2, as well as the sheep in row 3 and column 3. 4.4. ILSVRC 2012 Detection Challenge For this set of experiments, we used the ILSVRC 2012 detection challenge dataset. This dataset consists of 544,545 training images labeled with categories and loca- tions of 1,000 object categories, relativ ely uniformly dis- tributed among the classes. The validation set, on which the performance metrics are calculated, consists of 48,238 images. 4.4.1 T raining methodology In addition to a localization model that is identical (up to the dataset on which it is trained on) to the V OC model, we Figure 2. Sample of detection results on V OC 2007. recall recall recall recall recall recall recall recall precision precision precision precision precision precision precision precision cat chair horse person potted plant sheep train tv Figure 3. Precision-recall curves on selected V OC classes. class aero bicycle bird boat bottle bus car cat chair cow DeepMultiBox .413 .277 .305 .176 .032 .454 .362 .535 .069 .256 3-layer model [ 15 ] .294 .558 .094 .143 .286 .440 .513 .213 .200 .193 Felz. et al. [ 6 ] .328 .568 .025 .168 .285 .397 .516 .213 .179 .185 Girshick et al. [ 8 ] .324 .577 .107 .157 .253 .513 .542 .179 .210 .240 Szegedy et al. [ 13 ] .292 .352 .194 .167 .037 .532 .502 .272 .102 .348 class table dog horse m-bike person plant sheep sof a train tv DeepMultiBox .273 .464 .312 .297 .375 .074 .298 .211 .436 .225 3-layer model [ 15 ] .252 .125 .504 .384 .366 .151 .197 .251 .368 .393 Felz. et al. [ 6 ] .259 .088 .492 .412 .368 .146 .162 .244 .392 .391 Girshick et al. [ 8 ] .257 .116 .556 .475 .435 .145 .226 .342 .442 .413 Szegedy et al .[ 13 ] .302 .282 .466 .417 .262 .103 .328 .268 .398 .47 T able 1. A verage Precision on V OC 2007 test of our method, called DeepMultiBox, and other competitiv e methods. DeepMultibox was trained on V OC2012 training data, while the rest of the models were trained on V OC2007 data. also train a model on the ImageNet Classification challenge data, which will serve as the recognition model. This model is trained in a procedure that is substantially similar to that of [ 10 ] and is able to achiev e the same results on the clas- sification challenge validation set; note that we only train a single model, instead of 7 – the latter brings substantial benefits in terms of classification accuracy , but is 7 × more expensi ve, which is not a ne gligible factor . Inference is done as with the V OC setup: the number of predicted locations is K = 100 , which are then reduced by Non-Max-Suppression (Jaccard o verlap criterion of 0 . 4 ) and which are post-scored by the classifier: the score is the product of the localizer confidence for the giv en box mul- tiplied by the score of the classifier ev aluated on the mini- mum square region around the crop. The final scores (de- tection score times classification score) are then sorted in descending order and only the top scoring score/location pair is kept for a given class (as per the challenge e valua- tion criterion). In all e xperiments, the hyper -parameters were selected by ev aluating on a held out portion of the training set (10% random choice of examples). 4.4.2 Evaluation methodology The official metric of the “Classification with localization“ ILSVRC-2012 challenge is detection@5, where an algo- rithm is only allo wed to produce one box per each of the 5 labels (in other words, a model is neither penalized nor re- warded for producing valid multiple detections of the same class), where the detection criterion is 0.5 Jaccard overlap with any of the ground-truth boxes (in addition to the match- ing class label). T able 4.4.2 contains a comparison of the proposed method, dubbed DeepMultiBox, with classifying the ground-truth boxes directly and with the approach of in- ferring one box per class directly . The metrics reported are detection5 and classification5, the official metrics for the ILSVRC-2012 challenge metrics. In the table, we vary the number of windows at which we apply the classifier (this number represents the top windo ws chosen after non- max-suppression, the ranking coming from the confidence scores). The one-box-per-class approach is a careful re- implementation of the winning entry of ILSVRC-2012 (the “classification with localization” challenge), with 1 network trained (instead of 7). T able 2. Performance of Multibox (the proposed method) vs. clas- sifying ground-truth boxes directly and predicting one box per class Method det@5 class@5 One-box-per-class 61.00% 79.40% Classify GT directly 82.81% 82.81% DeepMultiBox, top 1 window 56.65% 73.03% DeepMultiBox, top 3 windows 58.71% 77.56% DeepMultiBox, top 5 windows 58.94% 78.41% DeepMultiBox, top 10 windows 59.06% 78.70% DeepMultiBox, top 25 windows 59.04% 78.76% W e can see that the DeepMultiBox approach is quite competitiv e: with 5-10 windo ws, it is able to perform about as well as the competing approach. While the one-box-per- class approach may come of f as more appealing in this par- ticular case in terms of the raw performance, it suf fers from a number of drawbacks: first, its output scales linearly with the number of classes, for which there needs to be training data. The multibox approach can in principle use transfer learning to detect certain types of objects on which it has nev er been specifically trained on, but which share similar - ities with objects that it has seen 1 . Figure 5 explores this hypothesis by observing what happens when one tak es a lo- calization model trained on ImageNet and applies it on the 1 For instance, if one trains with fine-grained categories of dogs, it will likely generalize to other kinds of breeds by itself Figure 4. Some selected detection results on the ILSVRC-2012 detection challenge validation set. V OC test set, and vice-versa. The figure sho ws a precision- recall curve: in this case, we perform a class-agnostic de- tection: a true positiv e occurs if two windo ws (prediction and groundtruth) overlap by more than 0.5, independently of their class. Interestingly , the ImageNet-trained model is able to capture more VOC windows than vice-versa: we hypothesize that this is due to the ImageNet class set being much richer than the V OC class set. Secondly , the one-box-per-class approach does not gen- eralize naturally to multiple instances of objects of the same type (except via the the method presented in this work, for instance). Figure 5 shows this too, in the comparison between DeepMultiBox and the one-per-class approach 2 . Generalizing to such a scenario is necessary for actual im- age understanding by algorithms, thus such limitations need to be ov ercome, and our method is a scalable way of doing so. Evidence supporting this statement is shown in Figure 5 shows that the proposed method is able to generally capture more objects more accurately that a single-box method. 5. Discussion and Conclusion In this work, we propose a novel method for localiz- ing objects in an image, which predicts multiple bounding boxes at a time. The method uses a deep con volutional neu- ral netw ork as a base feature e xtraction and learning model. It formulates a multiple box localization cost that is able to take adv antage of variable number of groundtruth locations 2 In the case of the one-box-per-class method, non-max-suppression is performed on the 1000 boxes using the same criterion as the DeepMulti- Box method of interest in a giv en image and learn to predict such loca- tions in unseen images. W e present results on two challenging benchmarks, V OC2007 and ILSVRC-2012, on which the proposed method is competitive. Moreover , the method is able to perform well by predicting only very fe w locations to be probed by a subsequent classifier . Our results show that the DeepMultiBox approach is scalable and can even generalize across the two datasets, in terms of being able to predict lo- cations of interest, even for categories on which it was not trained on. Additionally , it is able to capture multiple in- stances of objects of the same class, which is an important feature of algorithms that aim for better image understand- ing. In the future, we hope to be able to fold the localization and recognition paths into a single network, such that we would be able to extract both location and class label in- formation in a one-shot feed-forward pass through the net- work. Even in its current state, the two-pass procedure (lo- calization network follo wed by categorization network) en- tails 5-10 network ev aluations, each at roughly 1 CPU-sec (modern machine). Importantly , this number does not scale linearly with the number of classes to be recognized, which makes the proposed approach very competitive with DPM- like approaches. References [1] B. Alex e, T . Deselaers, and V . Ferrari. What is an object? In CVPR . IEEE, 2010. 2 , 4 [2] J. Carreira and C. Sminchisescu. Constrained parametric min-cuts for automatic object se gmentation. In CVPR , 2010. Figure 5. Class-agnostic detection on ILSVRC-2012 (left) and V OC 2007 (right). 1 , 2 [3] T . Dean, M. A. Ruzon, M. Segal, J. Shlens, S. V ijaya- narasimhan, and J. Y agnik. Fast, accurate detection of 100,000 object classes on a single machine. In CVPR , 2013. 2 [4] I. Endres and D. Hoiem. Category independent object pro- posals. In ECCV . 2010. 1 , 2 [5] M. Everingham, L. V an Gool, C. K. Williams, J. Winn, and A. Zisserman. The pascal visual object classes (voc) chal- lenge. International journal of computer vision , 88(2):303– 338, 2010. 4 [6] P . F . Felzenszwalb, R. B. Girshick, D. McAllester, and D. Ra- manan. Object detection with discriminativ ely trained part- based models. P attern Analysis and Machine Intelligence, IEEE T ransactions on , 32(9):1627–1645, 2010. 1 , 6 [7] M. A. Fischler and R. A. Elschlager . The representation and matching of pictorial structures. Computers, IEEE Tr ansac- tions on , 100(1):67–92, 1973. 1 [8] R. B. Girshick, P . F . Felzenszwalb, and D. McAllester . Discriminativ ely trained deformable part models, release 5. http://people.cs.uchicago.edu/ rbg/latent-release5/. 6 [9] C. Gu, J. J. Lim, P . Arbel ´ aez, and J. Malik. Recognition using regions. In CVPR , 2009. 2 [10] A. Krizhevsky , I. Sutskever , and G. Hinton. Imagenet clas- sification with deep con volutional neural networks. In Ad- vances in Neural Information Pr ocessing Systems 25 , pages 1106–1114, 2012. 1 , 2 , 3 , 4 , 6 [11] C. H. Lampert, M. B. Blaschko, and T . Hofmann. Beyond sliding windo ws: Object localization by ef ficient subwindo w search. In CVPR , 2008. 2 [12] H. O. Song, S. Zickler , T . Althof f, R. Girshick, M. Fritz, C. Geyer , P . Felzenszwalb, and T . Darrell. Sparselet models for efficient multiclass object detection. In ECCV . 2012. 2 [13] C. Szegedy , A. T oshev , and D. Erhan. Deep neural networks for object detection. In Advances in Neur al Information Pr o- cessing Systems (NIPS) , 2013. 1 , 2 , 6 [14] K. E. v an de Sande, J. R. Uijlings, T . Gev ers, and A. W . Smeulders. Segmentation as selective search for object recognition. In ICCV , 2011. 1 , 2 [15] L. Zhu, Y . Chen, A. Y uille, and W . Freeman. Latent hierar- chical structural learning for object detection. In Computer V ision and P attern Recognition (CVPR), 2010 IEEE Confer- ence on , pages 1062–1069. IEEE, 2010. 6

원본 논문

고화질 논문을 불러오는 중입니다...

댓글 및 학술 토론

Loading comments...

의견 남기기