Detecting and Mitigating Flakiness in REST API Fuzzing

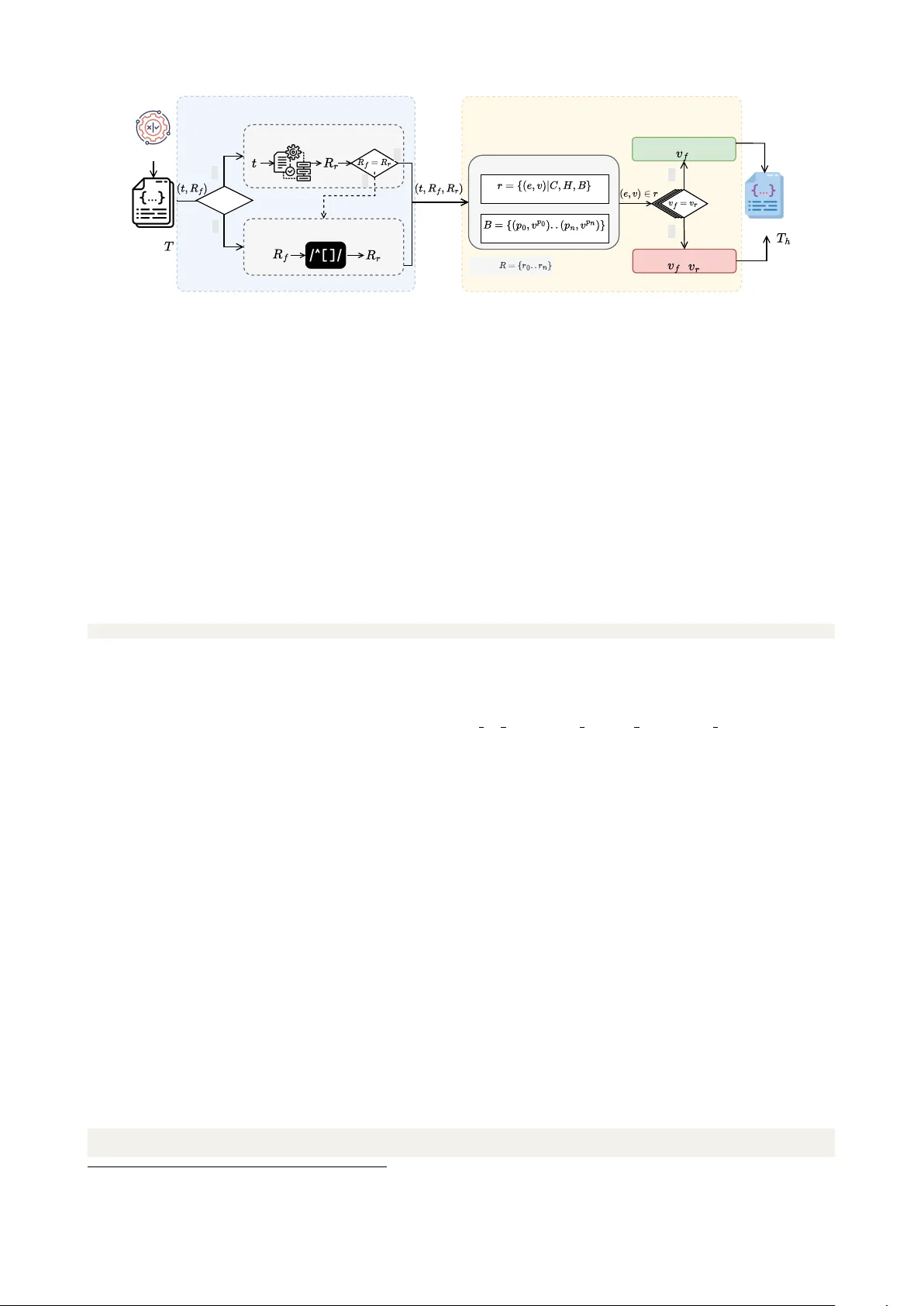

Test flakiness is a common problem in industry that hinders the reliability of automated build and testing workflows. Most existing research on test flakiness has focused on unit and small-scale integration tests. In contrast, flakiness i…

Authors: Man Zhang, Chongyang Shen, Andrea Arcuri