Democratizing Federated Learning with Blockchain and Multi-Task Peer Prediction

The synergy between Federated Learning and blockchain has been considered promising; however, the computationally intensive nature of contribution measurement conflicts with the strict computation and storage limits of blockchain systems. We propose …

Authors: Leon Witt, Kentaroh Toyoda, Wojciech Samek

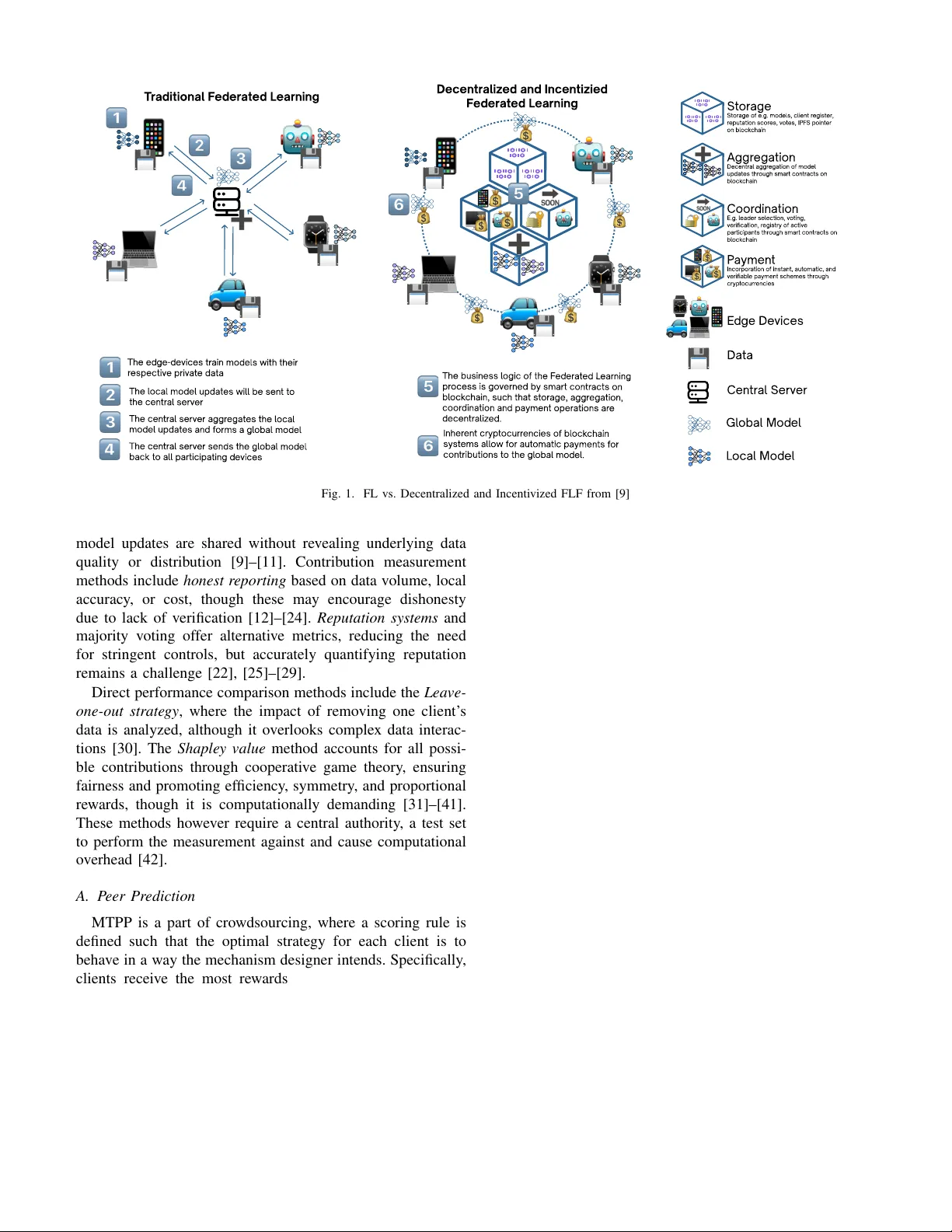

Leon Witt, Dept. of Comp. Sci. & Tech., Tsinghua University & Fraunhofer HHI, leonmaximilianwitt@gmail.com
Wojciech Samek, Dept. of Elec. Eng. & Comp. Sci., TU Berlin & Fraunhofer HHI, wojciech.samek@hhi.fraunhofer.de
Kentaroh Toyoda, Inst. of High Performance Computing, A*STAR, kentaroh.toyoda@ieee.org
Dan Li, Dept. of Comp. Sci. & Tech., Tsinghua University, tolidan@tsinghua.edu.cn

Abstract: The synergy between Federated Learning and blockchain has been considered promising; however, the computationally intensive nature of contribution measurement conflicts with the strict computation and storage limits of blockchain systems. We propose a novel concept to decentralize the AI training process using blockchain technology and Multi-task Peer Prediction. By leveraging smart contracts and cryptocurrencies to incentivize contributions to the training process, we aim to harness the mutual benefits of AI and blockchain. We discuss the advantages and limitations of our design.

Index Terms: Blockchain, AI, Decentral and Incentivized Federated Learning

I. INTRODUCTION

Federated Learning (FL), a technique where multiple clients train an AI model locally and in parallel without data leaving the device, was pioneered by Google in 2016 [1]. This approach (i) decentralizes the computational load across participants and (ii) allows for privacy-preserving AI training as the training data remains on the edge devices. In this context, Federated Averaging¹ (FedAvg) is an algorithm [1] widely adopted in FL, aiming to minimize the global model's empirical risk by aggregating local updates, represented as

$$\arg\min_{\theta} \sum_{i} \frac{|S_i|}{|S|} f_i(\theta) \tag{1}$$

where for each agent $i$, $f_i$ represents the loss function, $S_i$ is the set of indexes of data points on each client, and $S := \bigcup_i S_i$ is the combined set of indexes of data points of all participants.
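To make the weighting in Eq. (1) concrete, the following minimal sketch shows the server-side averaging step of FedAvg, in which each client's parameter vector is weighted by $|S_i| / |S|$. The function name `fedavg` and the representation of models as flat lists of floats are assumptions made for illustration only; they are not part of the proposed framework.

```python
from typing import List, Sequence


def fedavg(local_params: Sequence[Sequence[float]], sizes: Sequence[int]) -> List[float]:
    """Average client parameter vectors, weighting client i by |S_i| / |S|.

    `local_params[i]` is the (hypothetical) flattened parameter vector of client i
    and `sizes[i]` is the number of local data points |S_i|.
    """
    if not local_params or len(local_params) != len(sizes):
        raise ValueError("need one dataset size per client")
    total = sum(sizes)  # |S|, assuming the local index sets are disjoint
    if total <= 0:
        raise ValueError("total number of data points must be positive")
    dim = len(local_params[0])
    aggregated = [0.0] * dim
    for params, n in zip(local_params, sizes):
        if len(params) != dim:
            raise ValueError("all parameter vectors must have the same length")
        weight = n / total
        for k, value in enumerate(params):
            aggregated[k] += weight * value
    return aggregated


if __name__ == "__main__":
    # Two clients holding 1 and 3 data points, respectively.
    print(fedavg([[1.0, 2.0], [3.0, 4.0]], [1, 3]))  # [2.5, 3.5]
```

The client with three times as much data pulls the average three times as strongly, which is exactly the role of the factor $|S_i| / |S|$ in Eq. (1).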
¹ Other optimization algorithms for FL exist and are the subject of ongoing research [2], with variations like FedBoost [3], FedProx [4], FedNova [5], FedSTC [6], and FetchSGD [7] exploring improvements to the FedAvg algorithm.

The traditional Federated Learning process contains four steps and is depicted on the left of Fig. 1. To scale FL frameworks beyond entrusted entities towards mass adoption, where participants are treated equally and fairly towards genuine democratic AI training, two major challenges have to be overcome:

1) Incentivization: Use cases where clients have valuable training data yet no interest in the AI model require incentive mechanisms through compensation. The privacy-by-design nature of FL makes it challenging to measure contributions for a fair reward distribution.
2) Decentralization: FL, although intended to be decentralized and private, relies on a central server for aggregating gradients (FedAvg). This central server can (i) exclude clients, (ii) introduce a single point of failure, and (iii) withhold payment in environments where clients are compensated for their contributions (incentivized FL). These issues can be mitigated by replacing the central authority with blockchain technology.

Although the synergy between FL and blockchain has been considered promising [8], computationally intensive processes on the blockchain, such as contribution measurement, hinder its adoption in the real world.

In this paper, we first propose Multi-Task Peer Prediction (MTPP), a lightweight incentivization method from the domain of crowdsourcing, based on the correlation of clients' outputs, to incentivize honest model training from clients in Federated Learning (FL). We then propose a novel framework to decentralize the AI training process using blockchain technology with MTPP.
This framework leverages smart contracts and cryptocurrencies to incentivize contributions to the training process, offering a practical solution to harness the mutual benefits of AI and blockchain.

This work is structured as follows: Section II explores contemporary methods of measuring contributions in FL. Section III examines the integration of blockchain technology within FL. Finally, Section IV describes a conceptual framework that combines MTPP with existing General-Purpose Blockchain Systems (GPBS) to both incentivize and decentralize FL.

[Fig. 1. FL vs. Decentralized and Incentivized FLF, from [9]. Left panel, "Traditional Federated Learning": the edge devices train models with their respective private data; the local model updates are sent to the central server; the central server aggregates the local model updates and forms a global model; the central server sends the global model back to all participating devices. Right panel, "Decentralized and Incentivized Federated Learning": storage, aggregation, coordination, and payment are governed by smart contracts on the blockchain.]

II. CONTRIBUTION MEASUREMENT IN FL

In FL, measuring individual contributions is challenging due to the privacy-preserving nature of the system, where only model updates are shared without revealing underlying data quality or distribution [9]–[11]. Contribution measurement methods include honest reporting based on data volume, local accuracy, or cost, though these may encourage dishonesty due to lack of verification [12]–[24]. Reputation systems and majority voting offer alternative metrics, reducing the need for stringent controls, but accurately quantifying reputation remains a challenge [22], [25]–[29]. Direct performance comparison methods include the leave-one-out strategy, where the impact of removing one client's data is analyzed, although it overlooks complex data interactions [30]. The Shapley value method accounts for all possible contributions through cooperative game theory, ensuring fairness and promoting efficiency, symmetry, and proportional rewards, though it is computationally demanding [31]–[41]. These methods, however, require a central authority and a test set to perform the measurement against, and they cause computational overhead [42].

A. Peer Prediction

MTPP originates from crowdsourcing, where a scoring rule is defined such that the optimal strategy for each client is to behave in the way the mechanism designer intends. Specifically, clients receive the highest rewards for (i) exerting effort into solving the tasks and then (ii) reporting the results of the tasks truthfully. The beauty of peer prediction is that it does not require knowing the ground truth to elicit said properties, as the scoring function is based on the correlations of what clients report. To understand how MTPP can be applied to FL, we need to define what constitutes a task, signal, and report in the context of FL.
Examples of tasks in FL include the classification of images [43] or finding the right parameterization of a neural network [44]. For image classification, retrieving a signal means running inference with a trained model to predict a label, where the label is the signal. In contrast, when the task is correct parameterization, training the model to retrieve the correct parameterization constitutes retrieving a signal. Whether the client then reports the retrieved signal truthfully depends on the incentivization. An informed strategy involves varying reports based on the signal, whereas an uninformed strategy produces random reports independent of the true signal. A mechanism is informed-truthful if truthful reporting ($I$) always results in at least as high an expected payment as any other strategy, with equality only when all strategies are fully informed [45]. In the context of FL, the optimal client behavior (both genuine AI training and honest reporting) should achieve a Bayesian Nash equilibrium, aligning individual incentives with the overall objectives of the FL system.

Multi-Task Peer Prediction: The MTPP algorithm works as follows for two clients as part of a broader group:

1) Assign shared tasks to ensure diverse engagement.
2) Define subsets $M_b$ as "bonus tasks" and $M_1$, $M_2$ (non-overlapping) as "penalty tasks."
3) Payment for a bonus task is calculated as the difference in scores for bonus versus penalty tasks, with the total payment being the aggregate over all bonus tasks.

The objective of MTPP algorithms is to define a scoring function $S$, which determines how rewards should be paid based on the clients' reports, ensuring informed-truthful behavior. Correlated Agreement [45] defines such a scoring function, based on the correlation matrix over all signals.²

III. APPLICATION OF BLOCKCHAIN IN FEDERATED LEARNING

Blockchain technology appears promising in addressing several pressing issues within the context of FL:

• Decentralization: Traditional server-worker topologies in FL are susceptible to power imbalances and single points of failure. A server in such setups could potentially withhold payments or arbitrarily exclude participants. Moreover, this model does not suit scenarios where multiple stakeholders have an equal interest in jointly developing models. Blockchain technology supports a decentralized framework, eliminating the need for a central server and allowing multiple entities to cooperate as peers with equal authority.
• Transparency and Immutability: Blockchain ensures that data can be added but not removed, with each transaction recorded permanently. This feature is crucial in FL, where a transparent and immutable record of rewards fosters trust among participants. Additionally, the system's auditability ensures accountability, deterring malicious activities.
• Cryptocurrency: Many blockchain platforms incorporate cryptocurrency functionalities, enabling the integration of payment systems within the smart contracts. This allows for immediate, automatic, and transparent compensation of workers based on pre-defined rules within the FL reward mechanisms.

Consequently, significant research efforts have been dedicated to developing frameworks that utilize blockchain to decentralize FL [9], [46]–[51]. Nevertheless, the high computational demands of model training and substantial storage requirements present considerable challenges. As of now, none of the blockchain-integrated FL frameworks are ready for production deployment [9].
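Because the framework in Section IV builds on the MTPP payment rule of Section II-A, a small numeric sketch of that rule may help before the individual blockchain functions are discussed. The sketch follows a simplified reading of Correlated Agreement [45]: the scoring matrix is 1 where two signals are positively correlated and 0 otherwise, and each bonus task pays the bonus score minus a penalty score taken from two different penalty tasks. The function names `score_matrix` and `mtpp_payment`, the dictionary layout of the reports, and above all the assumption that the joint signal distribution `joint` is known in advance are illustrative choices, not a specification of the proposed system.

```python
import random
from typing import Dict, List, Sequence


def score_matrix(joint: Sequence[Sequence[float]]) -> List[List[int]]:
    """Return S with S[a][b] = 1 if signals a and b are positively correlated, else 0.

    ASSUMPTION: joint[a][b] is a known joint probability that client 1 observes
    signal a and client 2 observes signal b on the same task.
    """
    n = len(joint)
    p1 = [sum(joint[a]) for a in range(n)]                        # marginal of client 1
    p2 = [sum(joint[a][b] for a in range(n)) for b in range(n)]   # marginal of client 2
    # Delta[a][b] = joint[a][b] - p1[a] * p2[b]; only the sign is kept.
    return [[1 if joint[a][b] - p1[a] * p2[b] > 0 else 0 for b in range(n)]
            for a in range(n)]


def mtpp_payment(reports1: Dict[int, int], reports2: Dict[int, int],
                 bonus: Sequence[int], penalty1: Sequence[int],
                 penalty2: Sequence[int], S: List[List[int]],
                 rng: random.Random) -> int:
    """Sum over bonus tasks of (score on the bonus task - score on two distinct penalty tasks).

    reports1 / reports2 map task id -> reported signal. penalty1 and penalty2 must
    not overlap, so the two penalty tasks drawn below are always different tasks.
    """
    total = 0
    for b in bonus:
        l1 = rng.choice(penalty1)  # task answered by client 1 only
        l2 = rng.choice(penalty2)  # task answered by client 2 only
        total += S[reports1[b]][reports2[b]] - S[reports1[l1]][reports2[l2]]
    return total


if __name__ == "__main__":
    S = score_matrix([[0.4, 0.1], [0.1, 0.4]])   # binary labels that tend to agree
    peer = {0: 1, 1: 0, 2: 0, 4: 1}
    honest = {0: 1, 1: 0, 2: 1, 3: 0}
    constant = {0: 1, 1: 1, 2: 1, 3: 1}          # uninformed: always reports label 1
    rng = random.Random(0)
    print(mtpp_payment(honest, peer, [0, 1, 2], [3], [4], S, rng))    # 2
    print(mtpp_payment(constant, peer, [0, 1, 2], [3], [4], S, rng))  # -2
```

In this toy instance the client that varies its reports with the signal earns a positive payment, while the client that always reports the same label is penalized, because its agreement on penalty tasks cancels or outweighs its agreement on bonus tasks. Once $S$ is fixed, the payment itself needs only integer additions and subtractions.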
² Please refer to [45] for further information and to [43], [44] for the application of CA in FL.

Blockchain's capabilities potentially extend to several complementary aspects of FL, including aggregation, payment, coordination, and storage:

1) Aggregation: In this approach, each client $i$ transmits their model parameters $\theta_i$ directly to the blockchain rather than to a central server. The aggregation of model parameters is then conducted on the blockchain via smart contracts. This method enhances robustness by eliminating single points of failure and ensures transparency and auditability in contributions [13], [28], [29], [52]–[54]. Although this approach results in considerable computational and storage overhead that scales with $O(tnm)$, techniques such as Federated Distillation [11] and two-layer blockchain (BC) frameworks [53], where the computation layer is separated from the consensus layer, can mitigate these costs.
2) Coordination: Blockchain can take on coordinator functions. For example, instead of aggregating $\theta_i$ directly on-chain, which can lead to computational and storage overhead, blockchain can facilitate random leader selection through oracles. Other coordination functions enabled by blockchain include trustless voting systems [28], [29], [54]–[56], and the maintenance of essential FL data such as update verifications and member registries [11], [19], [25], [28], [29], [40], [52], [56]–[60]. These processes are managed in a transparent and immutable manner, enhancing accountability.
3) Payment: Cryptocurrency transactions are incorporated within smart contracts for instant, automatic payments, thereby enhancing the efficiency of transaction processes within FL.
4) Storage: Although decentralized storage on BC is resource-intensive due to redundancy, it provides critical benefits in terms of data auditability and trust.
The immutable and transparent nature of BC supports shared access and verifiability of crucial FLF data, such as machine learning models, reputation scores, user information, and votes, thereby facilitating accountability and precise reward calculations [11], [13], [19], [26], [28], [29], [40], [41], [52], [54], [57], [58], [61]–[64]. Protocols like the InterPlanetary File System (IPFS) [65] can be used to store data off-chain.

IV. DECENTRALIZED AND INCENTIVIZED FEDERATED LEARNING: A FRAMEWORK

Computationally intensive contribution measurement methods conflict with the strict computation and storage limits of blockchain systems because multiple blockchain nodes must store and compute smart contract transactions in parallel. Ideally, the incentive system should be (i) lightweight, (ii) capable of computing rewards in a single operation, and (iii) compatible with integer-only operations. MTPP, in contrast to explicit measurements like the Shapley value, could be the remedy here [11], [43], [44], as it bases its rewards on the correlation with other clients' reports to incentivize honest model training by the clients. The extent of the clients' interaction with the blockchain, whether as a communication intermediary or as participants in functions like validation, depends on the specific blockchain architecture used.

A. MTPP to incentivize FL on blockchain

MTPP has promising applications in the domain of decentralized and incentivized FL due to its simplicity in incentivizing and rewarding clients. Fig. 2 illustrates a possible integration of an MTPP mechanism, leaning on Correlated Agreement [45], on a blockchain (e.g., the Ethereum Virtual Machine (EVM)) in the following steps.

[Fig. 2. Incentivized and Decentralized Federated Learning: Application of a Multi-task peer prediction mechanism to incentivize FL on Blockchain. Depicted steps: registration on blockchain; model training; inference on public testset; hash of results + salt and commit to blockchain; RNG oracle matches pairs of clients; clients reveal commits, BC verifies; BC computes MTPP transparently; payments sent based on MTPP.]

1) Registration on Blockchain: First, clients who want to participate have to register themselves on the blockchain. On the EVM, this can happen with a smart contract, where clients register their public address and sign the registration with their private key. Depending on the use case, clients might be required to initially commit a certain financial stake (for example, if clients are fully anonymous or have a self-interest in a trained model). Another option is to set up the registration such that only pre-known clients are allowed to participate.
2) Model Training: Initially, clients train their local models on their respective data by applying an appropriate optimization algorithm. The goal of this training process is to optimize a specified objective, which often involves minimizing a loss function.
3) Inference on Public Testset: Given the public testset $X_{pub}$, clients predict the labels, e.g., [43], or the quantized model parameters, e.g., [44]. Note that Fig. 2 illustrates the case where the ML task is to classify an image.
4) Hash-Commit: Blockchain is inherently transparent, meaning every node has access to all information on the blockchain. To perform MTPP, clients need to reveal their reports of signals to the chain so that a smart contract can calculate the rewards. However, this introduces a problem: malicious clients could wait to see what other clients have reported and then report something similar to avoid model training while still getting rewarded.
To prevent this, we introduce a hash-commit scheme, similar to [11]. In this scheme, instead of publishing results directly, the information is concatenated with a random number, referred to as a salt, and then hashed. Adding the salt prevents brute-force attacks that could otherwise recover the input from a hashed output, especially if the input space is limited. Once a sufficient number of clients have committed (signing with their private key to secure the data and ensure its authenticity), the smart contract requires the clients to reveal both the initial information and the salt (see step 6: Reveal). Because every node in the blockchain network must duplicate all information and computation, storing large amounts of data negatively impacts the network's scalability. To manage this issue while ensuring data remains unchangeable and secure, we use the InterPlanetary File System (IPFS) [66]. IPFS provides a collision-resistant hash for each piece of data. Instead of storing the clients' signals directly on the blockchain, the hash acts as a unique pointer to the data, ensuring it can be securely and reliably identified without the overhead.
5) Random Number Oracle to Randomly Match Two Clients: A crucial step of MTPP is the random pairing of clients to compute the rewards, necessitating a verifiable randomization mechanism. We employ a decentralized Verifiable Random Function (VRF), such as Chainlink VRF [67]. This VRF functions as an oracle that can generate a cryptographically secure random seed. The random seed is then used to facilitate the random matching of two committed clients. Utilizing a decentralized VRF in this process ensures the generation of unbiased and cryptographically verifiable randomness, thereby upholding the integrity and fairness of the client pairing process in the MTPP algorithm.
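The hash-commit of step 4 and the seed-based matching of step 5 can be illustrated off-chain with a few lines of Python. This is a minimal sketch under stated assumptions, not contract code: SHA-256 from the standard library stands in for whatever hash function the target chain provides, the integer `seed` stands in for the output of a VRF oracle, Python's `random.Random` is used only to obtain a reproducible shuffle and is not cryptographically secure, and the names `commit`, `verify_reveal`, and `pair_clients` are hypothetical.

```python
import hashlib
import random
import secrets
from typing import List, Tuple

SALT_BYTES = 32  # a fixed salt length keeps the concatenation unambiguous


def commit(pointer: bytes, salt: bytes) -> str:
    """Commitment a client publishes: hash of the report pointer followed by the salt."""
    return hashlib.sha256(pointer + salt).hexdigest()


def verify_reveal(commitment: str, pointer: bytes, salt: bytes) -> bool:
    """Recompute the hash from the revealed values and compare it with the commitment."""
    return secrets.compare_digest(commitment, commit(pointer, salt))


def pair_clients(committed: List[str], seed: int) -> List[Tuple[str, str]]:
    """Match committed clients in pairs, driven only by the shared random seed.

    Sorting first gives a canonical order, so every node that sees the same set of
    clients and the same seed derives the same pairing. With an odd number of
    clients, one client stays unmatched in this sketch.
    """
    order = sorted(committed)
    random.Random(seed).shuffle(order)  # stand-in for VRF-driven randomness
    return [(order[i], order[i + 1]) for i in range(0, len(order) - 1, 2)]


if __name__ == "__main__":
    salt = secrets.token_bytes(SALT_BYTES)
    pointer = b"example-pointer-to-labels"   # placeholder, not a real IPFS identifier
    c = commit(pointer, salt)
    print(verify_reveal(c, pointer, salt))            # True
    print(verify_reveal(c, b"copied-report", salt))   # False
    print(pair_clients(["0xA", "0xB", "0xC", "0xD"], seed=2024))
```

Because only the hash is visible before the reveal, a client cannot copy a peer's report, and because the pairing depends only on the committed set and the seed, no participant can choose its own peer.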
6) Reveal: Following the random selection of clients by the oracle, the clients must now reveal their previously committed information. The selected clients are required to disclose two key pieces of information to the blockchain: the IPFS pointer to their labels and their respective individual salt values. The blockchain then hashes the combination of the IPFS pointer and the salt for each client. This hash is compared against the initial commit made by the client to verify the authenticity and integrity of the information, ensuring that the data provided by the clients has not been altered since the initial commitment.
7) MTPP: Similar to step 5, the Verifiable Random Function (VRF) is used to generate a random seed for selecting random samples. Once this selection is completed, a smart contract on the blockchain computes the reward scores for the clients using the MTPP algorithm.
8) Payment: Utilizing the cryptocurrency features of blockchain platforms, the rewards are adjusted according to the predefined rules of the protocol. Payments are then automatically and immediately transferred to the clients' accounts, ideally occurring in real time as specified by the MTPP details.

V. LIMITATIONS AND FUTURE RESEARCH

The proposed concept is a high-level architecture and requires further specification, particularly of a blockchain-compatible MTPP, in order to be implemented in practice. The following limitations have to be overcome and investigated in future research:

1) MTPP Specifics: The problem with Correlated Agreement (CA) and Peer Truth Serum (PTS) is that full information on the data distribution is needed, which requires a global overview. Additionally, calculating the delta matrix for CA still requires significant computation and cannot be done on the fly.
2) Implementation and Performance Tests: The performance of such a system heavily depends on the underlying blockchain architecture, particularly whether the consensus mechanism causes overhead, how the data structure influences data queries (Merkle-root based data structures used in IPFS might be slow), the number of peers in the network, and the encoding and data-type specifics of the underlying virtual machine. All of these factors contribute to computational, storage, and complexity overhead, which is the trade-off for a decentralized and incentivized FL paradigm.
3) Random Validator Selection: The diagram does not specify who should aggregate the respective model parameters. One suggestion is that the oracle randomly chooses a participant as the parameter aggregator, perhaps in an optimistic fashion [68] or by majority vote [11].

VI. CONCLUSION

This work introduces a concept to decentralize and incentivize Federated Learning by leveraging Multi-Task Peer Prediction to reward informed and truthful AI training. By integrating general-purpose blockchain technology, this framework achieves genuine decentralization, setting the stage for truly democratic AI training processes.

REFERENCES

[1] H. Brendan McMahan, E. Moore, D. Ramage, S. Hampson, and B. Agüera y Arcas, “Communication-efficient learning of deep networks from decentralized data,” in Proc. AISTATS, 2017.
[2] P. Kairouz, H. B. McMahan, B. Avent, A. Bellet et al., “Advances and open problems in federated learning,” 2019.
[3] J. Hamer, M. Mohri, and A. T. Suresh, “FedBoost: A communication-efficient algorithm for federated learning,” in Proc. of ICML, vol. 119, 2020, pp. 3973–3983.
[4] T. Li, A. K. Sahu, M. Zaheer, M. Sanjabi, A. Talwalkar, and V. Smith, “Federated optimization in heterogeneous networks,” in Proc. of Machine Learning and Systems, vol. 2, 2020, pp. 429–450.
[5] J. Wang, Q. Liu, H. Liang, G. Joshi, and H. V. Poor, “Tackling the objective inconsistency problem in heterogeneous federated optimization,” in Proc. NeurIPS, vol. 33, 2020, pp. 7611–7623.
[6] F. Sattler, S. Wiedemann, K.-R. Müller, and W. Samek, “Robust and communication-efficient federated learning from non-iid data,” IEEE TNNLS, vol. 31, no. 9, pp. 772–785, 2020.
[7] D. Rothchild, A. Panda, E. Ullah, N. Ivkin, I. Stoica, V. Braverman, J. Gonzalez, and R. Arora, “FetchSGD: Communication-efficient federated learning with sketching,” 2020.
[8] L. Witt, A. T. Fortes, K. Toyoda, W. Samek, and D. Li, “Blockchain and artificial intelligence: Synergies and conflicts,” 2024.
[9] L. Witt, M. Heyer, K. Toyoda, W. Samek, and D. Li, “Decentral and incentivized federated learning frameworks: A systematic literature review,” IEEE Internet of Things Journal, vol. 10, no. 4, pp. 3642–3663, 2022.
[10] P. Kairouz, H. B. McMahan, B. Avent, A. Bellet, M. Bennis, A. N. Bhagoji, K. Bonawitz, Z. Charles, G. Cormode, R. Cummings et al., “Advances and open problems in federated learning,” Foundations and Trends® in Machine Learning, vol. 14, no. 1–2, pp. 1–210, 2021.
[11] L. Witt, U. Zafar, K. Shen, F. Sattler, D. Li, S. Wang, and W. Samek, “Decentralized and incentivized federated learning: A blockchain-enabled framework utilising compressed soft-labels and peer consistency,” IEEE Transactions on Services Computing, no. 01, pp. 1–16, Nov. 2023.
[12] S. Rahmadika and K.-H. Rhee, “Reliable collaborative learning with commensurate incentive schemes,” in Proc. of IEEE International Conference on Blockchain, 2020.
[13] S. Jiang and J. Wu, “A reward response game in the blockchain-powered federated learning system,” International Journal of Parallel, Emergent and Distributed Systems, vol. 37, no. 1, pp. 68–90, 2022.
[14] H. Yu, Z. Liu, Y. Liu, T. Chen, M. Cong, X. Weng, D. Niyato, and Q. Yang, “A sustainable incentive scheme for federated learning,” IEEE Intelligent Systems, vol. 35, no. 4, pp. 58–69, 2020.
[15] R. Zeng, S. Zhang, J. Wang, and X. Chu, “FMore: An incentive scheme of multi-dimensional auction for federated learning in MEC,” in Proc. of IEEE International Conference on Distributed Computing Systems (ICDCS), 2020.
[16] S. Feng, D. Niyato, P. Wang, D. I. Kim, and Y.-C. Liang, “Joint service pricing and cooperative relay communication for federated learning,” in 2019 International Conference on Internet of Things (iThings) and IEEE Green Computing and Communications (GreenCom) and IEEE Cyber, Physical and Social Computing (CPSCom) and IEEE Smart Data (SmartData), 2019, pp. 815–820.
[17] W. Zhang, Q. Lu, Q. Yu, Z. Li, Y. Liu, S. K. Lo, S. Chen, X. Xu, and L. Zhu, “Blockchain-based federated learning for device failure detection in industrial IoT,” IEEE Internet of Things Journal, vol. 8, no. 7, pp. 5926–5937, 2021.
[18] N. Ding, Z. Fang, and J. Huang, “Incentive mechanism design for federated learning with multi-dimensional private information,” in Proc. of IEEE International Symposium on Modeling and Optimization in Mobile, Ad Hoc, and Wireless Networks (WiOPT), 2020.
[19] H. Chai, S. Leng, Y. Chen, and K. Zhang, “A hierarchical blockchain-enabled federated learning algorithm for knowledge sharing in internet of vehicles,” IEEE Transactions on Intelligent Transportation Systems, vol. 22, no. 7, pp. 3975–3986, 2021.
[20] J. Kang, Z. Xiong, D. Niyato, S. Xie, and J. Zhang, “Incentive mechanism for reliable federated learning: A joint optimization approach to combining reputation and contract theory,” IEEE Internet of Things Journal, vol. 6, no. 6, pp. 10700–10714, 2019.
[21] Y. Chen, X. Yang, X. Qin, H. Yu, P. Chan, and Z. Shen, Dealing with Label Quality Disparity in Federated Learning. Cham: Springer International Publishing, 2020, pp. 108–121.
[22] S. R. Pandey, N. H. Tran, M. Bennis, Y. K. Tun, A. Manzoor, and C. S. Hong, “A crowdsourcing framework for on-device federated learning,” IEEE Transactions on Wireless Communications, vol. 19, no. 5, pp. 3241–3256, 2020.
[23] M. Tang and V. W. Wong, “An incentive mechanism for cross-silo federated learning: A public goods perspective,” in Proc. of IEEE Conference on Computer Communications (INFOCOM), 2021.
[24] S. Chakrabarti, T. Knauth, D. Kuvaiskii, M. Steiner, and M. Vij, “Chapter 8 - trusted execution environment with intel sgx,” in Responsible Genomic Data Sharing, X. Jiang and H. Tang, Eds. Academic Press, 2020, pp. 161–190.
[25] Y. Zhao, J. Zhao, L. Jiang, R. Tan, D. Niyato, Z. Li, L. Lyu, and Y. Liu, “Privacy-preserving blockchain-based federated learning for IoT devices,” IEEE Internet of Things Journal, vol. 8, no. 3, pp. 1817–1829, 2021.
[26] Q. Zhang, Q. Ding, J. Zhu, and D. Li, “Blockchain empowered reliable federated learning by worker selection: A trustworthy reputation evaluation method,” in Proc. of IEEE Wireless Communications and Networking Conference Workshops (WCNCW), 2021.
[27] B. Zhao, X. Liu, W. Chen, and R. H. Deng, “Crowdfl: Privacy-preserving mobile crowdsensing system via federated learning,” IEEE Transactions on Mobile Computing, vol. 22, no. 08, pp. 4607–4619, Aug. 2023.
[28] K. Toyoda, J. Zhao, A. N. Zhang, and P. T. Mathiopoulos, “Blockchain-enabled federated learning with mechanism design,” IEEE Access, vol. 8, pp. 219744–219756, 2020.
[29] K. Toyoda and A. N. Zhang, “Mechanism design for an incentive-aware blockchain-enabled federated learning platform,” in Proc. of IEEE International Conference on Big Data, 2019.
[30] P. W. Koh and P. Liang, “Understanding black-box predictions via influence functions,” in Proc. of International Conference on Machine Learning (ICML), 2017.
[31] L. S. Shapley, “A value for n-person games,” Contributions to the Theory of Games, vol. 2, no. 28, pp. 307–317, 1953.
[32] X. Tu, K. Zhu, N. C. Luong, D. Niyato, Y. Zhang, and J. Li, “Incentive mechanisms for federated learning: From economic and game theoretic perspective,” 2021.
[33] A. Ghorbani and J. Zou, “Data shapley: Equitable valuation of data for machine learning,” in Proc. of the International Conference on Machine Learning (ICML), 2019.
[34] T. Wang, J. Rausch, C. Zhang, R. Jia, and D. Song, “A principled approach to data valuation for federated learning,” Federated Learning: Privacy and Incentive, pp. 153–167, 2020.
[35] R. Jia, D. Dao, B. Wang, F. A. Hubis, N. Hynes, N. M. Gürel, B. Li, C. Zhang, D. Song, and C. J. Spanos, “Towards efficient data valuation based on the shapley value,” in Proc. of International Conference on Artificial Intelligence and Statistics (AISTATS), 2019.
[36] Z. Liu, Y. Chen, H. Yu, Y. Liu, and L. Cui, “Gtg-shapley: Efficient and accurate participant contribution evaluation in federated learning,” ACM Trans. Intell. Syst. Technol., vol. 13, no. 4, May 2022. [Online]. Available: https://doi.org/10.1145/3501811
[37] T. Song, Y. Tong, and S. Wei, “Profit allocation for federated learning,” in Proc. of IEEE International Conference on Big Data, 2019.
[38] T. Wang, J. Rausch, C. Zhang, R. Jia, and D. Song, “A principled approach to data valuation for federated learning,” 2020.
[39] S. Wei, Y. Tong, Z. Zhou, and T. Song, “Efficient and fair data valuation for horizontal federated learning,” Federated Learning: Privacy and Incentive, pp. 139–152, 2020.
[40] Y. Liu, Z. Ai, S. Sun, S. Zhang, Z. Liu, and H. Yu, FedCoin: A Peer-to-Peer Payment System for Federated Learning. Springer, 2020, pp. 125–138.
[41] S. Ma, Y. Cao, and L. Xiong, “Transparent contribution evaluation for secure federated learning on blockchain,” in Proc. of ICDEW, 2021, pp. 88–91.
[42] Z. Liu, Y. Chen, Y. Zhao, H. Yu, Y. Liu, R. Bao, J. Jiang, Z. Nie, Q. Xu, and Q. Yang, “Contribution-aware federated learning for smart healthcare,” Proceedings of the AAAI Conference on Artificial Intelligence, vol. 36, no. 11, pp. 12396–12404, Jun. 2022. [Online]. Available: https://ojs.aaai.org/index.php/AAAI/article/view/21505
[43] Y. Liu and J. Wei, “Incentives for federated learning: a hypothesis elicitation approach,” 2020.
[44] H. Lv, Z. Zheng, T. Luo, F. Wu, S. Tang, L. Hua, R. Jia, and C. Lv, “Data-free evaluation of user contributions in federated learning,” in Proc. of IEEE International Symposium on Modeling and Optimization in Mobile, Ad hoc, and Wireless Networks (WiOpt), 2021.
[45] V. Shnayder, A. Agarwal, R. Frongillo, and D. C. Parkes, “Informed truthfulness in multi-task peer prediction,” in Proc. of ACM Conference on Economics and Computation (EC), 2016.
[46] D. Hou, J. Zhang, K. L. Man, J. Ma, and Z. Peng, “A systematic literature review of blockchain-based federated learning: Architectures, applications and issues,” in 2021 2nd Information Communication Technologies Conference (ICTC), 2021, pp. 302–307.
[47] Y. Zhan, J. Zhang, Z. Hong, L. Wu, P. Li, and S. Guo, “A survey of incentive mechanism design for federated learning,” IEEE Transactions on Emerging Topics in Computing, p. preprint, 2021.
[48] R. Zeng, C. Zeng, X. Wang, B. Li, and X. Chu, “A comprehensive survey of incentive mechanism for federated learning,” 2021.
[49] A. Ali, I. Ilahi, A. Qayyum, I. Mohammed, A. Al-Fuqaha, and J. Qadir, “Incentive-driven federated learning and associated security challenges: A systematic review,” TechRxiv, 2021.
[50] X. Tu, K. Zhu, N. C. Luong, D. Niyato, Y. Zhang, and J. Li, “Incentive mechanisms for federated learning: From economic and game theoretic perspective,” 2021.
[51] D. C. Nguyen, M. Ding, Q.-V. Pham, P. N. Pathirana, L. B. Le, A. Seneviratne, J. Li, D. Niyato, and H. V. Poor, “Federated learning meets blockchain in edge computing: Opportunities and challenges,” IEEE Internet of Things Journal, vol. 8, no. 16, pp. 12806–12825, 2021.
[52] J. Weng, J. Weng, J. Zhang, M. Li, Y. Zhang, and W. Luo, “DeepChain: Auditable and Privacy-Preserving Deep Learning with Blockchain-Based Incentive,” IEEE Transactions on Dependable and Secure Computing, vol. 18, pp. 2438–2455, 2021.
[53] L. Feng, Z. Yang, S. Guo, X. Qiu, W. Li, and P. Yu, “Two-layered blockchain architecture for federated learning over mobile edge network,” IEEE Network, pp. 1–14, 2021.
[54] C. He, B. Xiao, X. Chen, Q. Xu, and J. Lin, “Federated learning intellectual capital platform,” Personal and Ubiquitous Computing, 2021.
[55] A. Fadaeddini, B. Majidi, and M. Eshghi, “Privacy preserved decentralized deep learning: A blockchain based solution for secure ai-driven enterprise,” in Proc. of High-Performance Computing and Big Data Analysis, 2019, pp. 32–40.
[56] S. Xuan, M. Jin, X. Li, Z. Yao, W. Yang, and D. Man, “DAM-SE: A blockchain-based optimized solution for the counterattacks in the internet of federated learning systems,” Security and Communication Networks, vol. 2021, p. 9965157, Jul. 2021.
[57] H. B. Desai, M. S. Ozdayi, and M. Kantarcioglu, “BlockFLA: Accountable federated learning via hybrid blockchain architecture,” in Proc. of the Eleventh ACM Conference on Data and Application Security and Privacy, 2021, pp. 101–112.
[58] Z. Zhang, D. Dong, Y. Ma, Y. Ying, D. Jiang, K. Chen, L. Shou, and G. Chen, “Refiner: A reliable incentive-driven federated learning system powered by blockchain,” VLDB Endowment, vol. 14, no. 12, pp. 2659–2662, 2021.
[59] Z. Li, J. Liu, J. Hao, H. Wang, and M. Xian, “CrowdSFL: A secure crowd computing framework based on blockchain and federated learning,” Electronics, vol. 9, no. 5, 2020.
[60] Y. Zou, F. Shen, F. Yan, J. Lin, and Y. Qiu, “Reputation-based regional federated learning for knowledge trading in blockchain-enhanced IoV,” in Proc. of IEEE WCNC, Mar. 2021, pp. 1–6.
[61] M. H. ur Rehman, K. Salah, E. Damiani, and D. Svetinovic, “Towards blockchain-based reputation-aware federated learning,” in Proc. of INFOCOMW, 2020, pp. 183–188.
[62] Y. Li, X. Tao, X. Zhang, J. Liu, and J. Xu, “Privacy-preserved federated learning for autonomous driving,” IEEE Transactions on Intelligent Transportation Systems, pp. 1–12, 2021.
[63] X. Bao, C. Su, Y. Xiong, W. Huang, and Y. Hu, “FLChain: A blockchain for auditable federated learning with trust and incentive,” in Proc. of BIGCOM, Aug. 2019, pp. 151–159.
[64] G. Qu, H. Wu, and N. Cui, “Joint blockchain and federated learning-based offloading in harsh edge computing environments,” in Proc. of the International Workshop on Big Data in Emergent Distributed Environments, 2021.
[65] J. Benet, “IPFS - content addressed, versioned, p2p file system,” 2014.
[66] J. Benet, “IPFS - content addressed, versioned, p2p file system,” 2014.
[67] “Chainlink verifiable random function (vrf),” https://docs.chain.link/vrf, 2024, accessed: 2024-05-05.
[68] K. Conway, C. So, X. Yu, and K. Wong, “opml: Optimistic machine learning on blockchain,” 2024.
