EdgeDiT: Hardware-Aware Diffusion Transformers for Efficient On-Device Image Generation

Diffusion Transformers (DiT) have established a new state-of-the-art in high-fidelity image synthesis; however, their massive computational complexity and memory requirements hinder local deployment on resource-constrained edge devices. In this paper…

Authors: Sravanth Kodavanti, Manjunath Arveti, Sowmya Vajrala

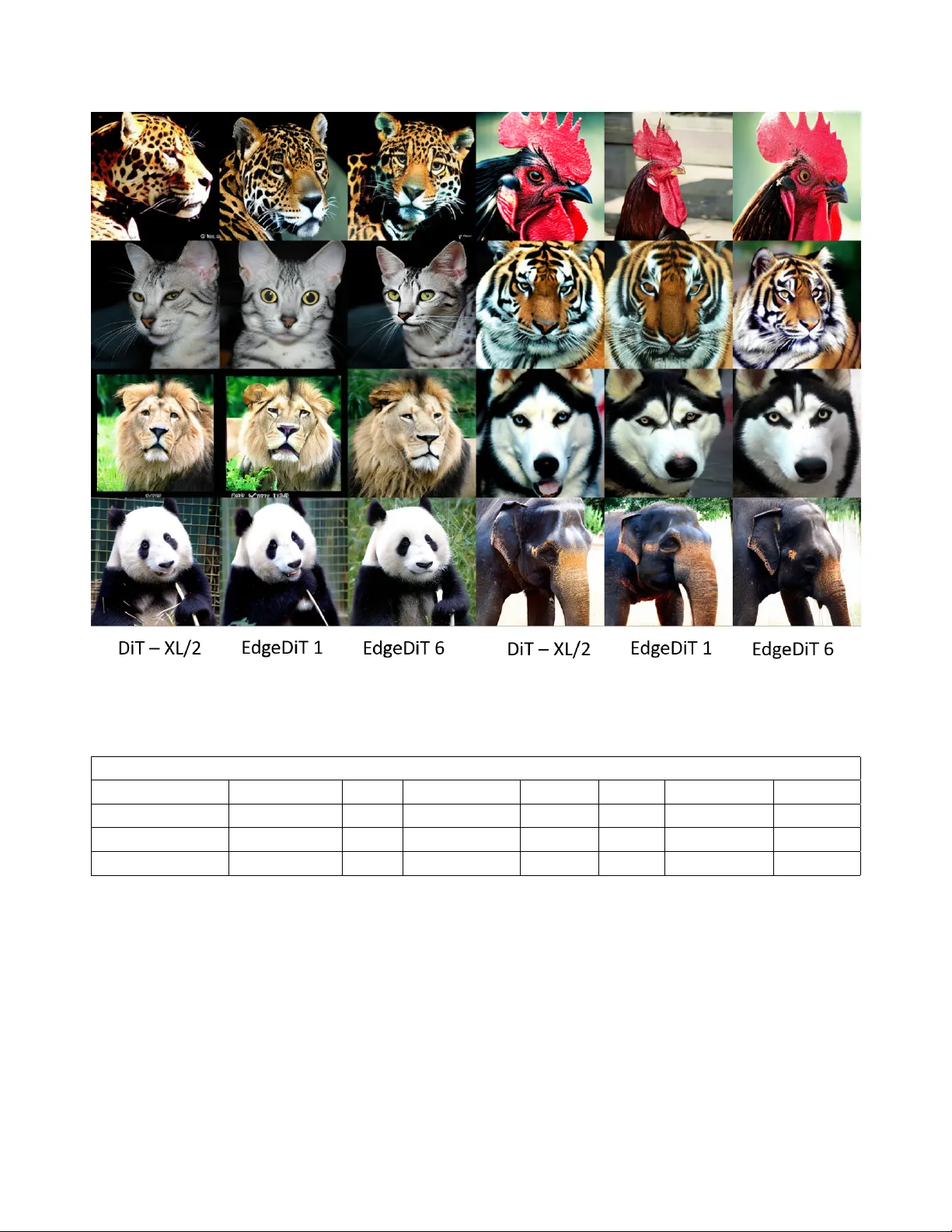

Sravanth Kodavanti*, Manjunath Arveti*, Sowmya Vajrala, Srinivas Miriyala, Vikram N R
Samsung Research Institute Bangalore, India

Abstract

Diffusion Transformers (DiT) have established a new state of the art in high-fidelity image synthesis; however, their massive computational complexity and memory requirements hinder local deployment on resource-constrained edge devices. In this paper, we introduce EdgeDiT, a family of hardware-efficient generative transformers specifically engineered for mobile Neural Processing Units (NPUs), such as the Qualcomm Hexagon and Apple Neural Engine (ANE). By leveraging a hardware-aware optimization framework, we systematically identify and prune structural redundancies within the DiT backbone that are particularly taxing for mobile data-flows. Our approach yields a series of lightweight models that achieve a 20–30% reduction in parameters, a 36–46% decrease in FLOPs, and a 1.65-fold reduction in on-device latency without sacrificing the scaling advantages or the expressive capacity of the original transformer architecture. Extensive benchmarking demonstrates that EdgeDiT offers a superior Pareto-optimal trade-off between Fréchet Inception Distance (FID) and inference latency compared to both optimized mobile U-Nets and vanilla DiT variants. By enabling responsive, private, and offline generative AI directly on-device, EdgeDiT provides a scalable blueprint for transitioning large-scale foundation models from high-end GPUs to the palm of the user.

1. Introduction

Diffusion models have recently emerged as a dominant paradigm for high-fidelity image generation.
Early approaches such as Denoising Diffusion Probabilistic Models (DDPM) [6] and Denoising Diffusion Implicit Models (DDIM) [12] demonstrated that iterative denoising processes can generate high-quality images by gradually transforming Gaussian noise into structured samples. These models were initially built upon convolutional U-Net backbones, which became the de facto architecture for diffusion-based image synthesis.

*Equal contribution. Email: {k.sravanth, at.manjunath}@samsung.com

Recent advances have shifted toward transformer-based generative architectures. Diffusion Transformers (DiT) [9] replace convolutional U-Nets with Vision Transformer backbones, enabling improved scalability and better utilization of large-scale training data. Building on this paradigm, several works have explored architectural improvements and training strategies for transformer-based diffusion models. Masked Diffusion Transformer (MDT) [3] introduced masked modeling objectives that significantly improve synthesis quality. Scalable Interpolant Transformers (SiT) [7] further unified diffusion and flow-based generative modeling under a transformer-based framework. More recently, representation alignment techniques [13] have demonstrated that training DiTs can be simplified and stabilized through improved feature alignment strategies.

Parallel efforts have also investigated alternative backbones for diffusion models. For instance, state-space models have recently been explored as efficient sequence modeling alternatives to transformers, leading to architectures such as diffusion models with state-space backbones [2]. These approaches aim to improve scalability and computational efficiency while preserving generative quality.

Despite these advances, the deployment of diffusion transformers on edge devices remains challenging.
State-of-the-art DiT architectures require substantial computational resources and large memory footprints and incur high inference latency, making them impractical for mobile and embedded platforms. While cloud-based inference mitigates these limitations, it introduces privacy concerns, network dependency, and increased energy consumption.

In this work, we address the challenge of bringing transformer-based diffusion models to resource-constrained hardware. We introduce EdgeDiT, a family of lightweight diffusion transformers specifically optimized for mobile Neural Processing Units (NPUs) such as Qualcomm NPUs and Apple Neural Engine (ANE). Our approach identifies the computationally expensive and redundant operations in the pretrained DiT model and applies hardware-aware architectural optimization to improve on-device inference efficiency while preserving generative capacity.

Through systematic model redesign and pruning strategies, EdgeDiT achieves a 20–30% reduction in parameters, a 36–46% decrease in FLOPs, and a 1.65× speedup in on-device latency compared to baseline DiT architectures. Extensive experiments demonstrate that EdgeDiT achieves competitive Fréchet Inception Distance (FID) while significantly reducing inference latency on edge hardware.

Our contributions are summarized as follows:
• We propose EdgeDiT, a hardware-aware diffusion transformer architecture designed for efficient on-device generation.
• We introduce structural simplifications and pruning strategies tailored for mobile NPUs.
• We demonstrate improved Pareto trade-offs between generation quality and inference efficiency compared to existing DiT variants.
• We provide empirical evidence that transformer-based diffusion models can be effectively scaled down for real-world edge deployment.

2. Related Work

2.1. Diffusion Models

Diffusion models have achieved remarkable success in generative modeling across a variety of visual tasks.
Denoising Diffusion Probabilistic Models (DDPM) [6] introduced a probabilistic formulation for image generation based on iterative denoising of Gaussian noise. Subsequent improvements such as Improved DDPM [8] enhanced sampling efficiency and training stability. Latent Diffusion Models (LDM) [10] further improved scalability by performing diffusion in a compressed latent space, significantly reducing computational requirements while maintaining high visual fidelity.

2.2. Diffusion Transformers

Transformer architectures have recently been adopted as powerful backbones for diffusion models. Diffusion Transformers (DiT) [9] replace convolutional U-Net architectures with transformer blocks operating on latent tokens, demonstrating strong scaling properties for generative modeling. Several works have further explored architectural improvements to diffusion transformers. Masked Diffusion Transformer (MDT) [3] and its improved variant MDTv2 [4] introduce masked latent modeling strategies that improve contextual reasoning and accelerate training.

Scalable Interpolant Transformers (SiT) [7] extend diffusion transformers to a broader generative modeling framework that unifies diffusion and flow-based models through stochastic interpolants, enabling flexible training objectives and improved performance across model scales.

Other recent work investigates alternative architectural backbones for diffusion models. For instance, state-space model based diffusion architectures replace attention mechanisms with structured state-space layers to improve scalability and reduce computational complexity. These approaches highlight ongoing efforts to improve both the scalability and efficiency of transformer-based diffusion models.

2.3. Efficient and Lightweight Diffusion Models

The growing computational cost of diffusion models has motivated research into lightweight diffusion architectures.
MobileDiffusion [14] proposes architectural simplifications designed for mobile hardware to enable efficient on-device diffusion inference. Other works explore structured pruning and token sparsification techniques to reduce redundancy in diffusion transformers.

In addition, efficient training strategies have been explored to reduce the cost of training large diffusion models. For example, masked training strategies and representation alignment approaches have been shown to significantly simplify the diffusion transformer training process while maintaining strong generative performance [13].

2.4. Model Compression and Architecture Search

Model compression techniques such as pruning, knowledge distillation, and neural architecture search have been widely used to obtain compact neural networks suitable for deployment on edge hardware. Knowledge distillation [5] allows smaller student networks to mimic the behavior of larger teacher models while preserving performance. Automated architecture search methods such as Bayesian optimization [11] enable systematic exploration of large architecture spaces under hardware constraints.

In contrast to prior work that primarily focuses on manual architecture design or pruning strategies, our work introduces a surrogate-based architecture search framework that combines feature-wise knowledge distillation and multi-objective Bayesian optimization to discover efficient diffusion transformer architectures optimized for edge deployment.

3. Method

Our goal is to design edge-efficient variants of Diffusion Transformers, based on the DiT-XL/2 [9] baseline, that preserve generative performance while reducing the computational and memory overhead for edge deployment. Moreover, theoretical compute metrics (FLOPs/GMACs) may not reliably predict practical latency, since NPUs are optimized for specific operations such as GEMM.
So, reducing the arithmetic compute may not always guarantee proportional edge latency improvements. To address this gap, we construct a structured architecture search space of lightweight surrogate transformer blocks, which are aligned with edge hardware. These surrogates are trained using feature-wise knowledge distillation, and the locally distilled surrogates are combined to form candidate architectures, which are then fully trained end-to-end for accuracy improvements. We employ multi-objective Bayesian optimization to identify the Pareto-optimal candidate models in the hardware-performance search space. From this set, we select the most balanced architecture and fix it as our base architecture, EdgeDiT. An overview of the proposed framework is illustrated in Figure 1.

Figure 1. Flowsheet depicting the proposed method.

3.1. Hardware-aware surgery of DiTs

Diffusion Transformers (DiT) [9] operate on latent image representations by treating them as sequences of tokens processed by transformer blocks. Given an input image $x$, it is first encoded into a latent representation using a variational autoencoder. The latent representation is then divided into non-overlapping patches of size $p \times p$, producing a sequence of tokens that form the input to the transformer backbone.

A DiT model consists of a stack of $L$ ($L = 28$ for DiT-XL/2 [9]) transformer blocks. Each block contains two primary components:
• Multi-head self-attention (MHSA)
• A feed-forward network (FFN) with an expansion ratio $r$

Formally, a transformer block can be expressed as

$$h_{l+1} = h_l + \mathrm{MHSA}(\mathrm{LN}(h_l)) + \mathrm{FFN}(\mathrm{LN}(h_l)), \tag{1}$$

where $h_l$ denotes the hidden representation at layer $l$ and LN denotes layer normalization.

While DiT models provide strong generative performance, their large number of layers and high-dimensional hidden representations introduce significant computational and memory overhead.
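
To make this overhead concrete, the sketch below estimates the token count and the multiply-accumulate (MAC) cost of the transformer stack. It is a back-of-the-envelope estimate rather than the profiler used for the reported numbers: the 8× VAE downsampling factor, the patch size p = 2, and the per-block cost formula (four d × d attention projections, the two attention matrix products, and a two-layer FFN) are assumptions, and all function names are hypothetical. Conditioning layers, embeddings, and the final projection are ignored.

```python
def num_tokens(image_size: int, vae_factor: int = 8, patch: int = 2) -> int:
    """Tokens after VAE encoding and p x p patchification (assumed factors)."""
    latent_side = image_size // vae_factor
    return (latent_side // patch) ** 2


def block_macs(n_tokens: int, d: int, r: int) -> int:
    """Approximate MACs of one block: projections + attention + FFN."""
    projections = 4 * n_tokens * d * d        # Q, K, V and output projections
    attention = 2 * n_tokens * n_tokens * d   # QK^T and the weighted sum over V
    ffn = 2 * r * n_tokens * d * d            # d -> r*d -> d
    return projections + attention + ffn


def stack_gmacs(image_size: int, depth: int = 28, d: int = 1152, r: int = 4) -> float:
    """Approximate GMACs of the full stack of transformer blocks."""
    n = num_tokens(image_size)
    return depth * block_macs(n, d, r) / 1e9


if __name__ == "__main__":
    print(num_tokens(256), num_tokens(512))
    print(round(stack_gmacs(256), 2), round(stack_gmacs(512), 2))
```

Under these assumptions the 28-block stack comes to roughly 118 GMACs at 256 × 256, close to the 118.62 GMACs reported for DiT-XL/2 in Table 2. The projection and FFN terms grow linearly with depth and quadratically with the hidden dimension, which is what depth and width reductions exploit.
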
To address this challenge, we decompose the DiT architecture into smaller hardware-friendly building blocks and construct a structured architecture search space. Our hardware-aware search space consists of the following surrogates.

1. Block removal: Each pair of consecutive DiT layers is a candidate for replacement by a single DiT layer, which reduces the network depth.
2. MLP ratio modification: The expansion ratio of the FFN block in DiT layers is varied (expansion factor $r \in \{2, 4\}$) to reduce parameter count and FLOPs.
3. Hidden dimension reduction: The internal projection dimension ($d \in \{512, 1152\}$) of the transformer blocks in the DiT layers is reduced to construct low-rank representations.

The above three types of surrogates are built in two stages.
• Stage 1 consists of choosing among the options in Block removal, which replaces two consecutive DiT layers with a single DiT layer, resulting in a search space of $2 \times 2 \times \dots \times 2$ (14 times) $= 2^{14}$.
• Stage 2 consists of choosing either the MLP-modified layer or the hidden-dimension-reduced layer, so each layer has $2 + 2 = 4$ options, resulting in a search space of $4 \times 4 \times \dots \times 4$ (28 times) $= 4^{28}$.

These modifications result in a combined hardware-aware search space of $2^{14} + 4^{28}$. All possible surrogate options are listed in Figure 2.

Figure 2. Hardware-aware surrogates search space design.

3.2. Feature-wise Knowledge Distillation

Training every candidate architecture in $2^{14} + 4^{28}$ from scratch would be computationally infeasible. To enable efficient training, we adopt a feature-wise knowledge distillation strategy, a divide-and-conquer approach in which each surrogate block learns to mimic the behavior of the corresponding block in the teacher network.

Let $T_l(x)$ denote the output feature of the $l$-th block in the teacher model and $S_l(x)$ denote the output of the corresponding surrogate block for given input features $x$.
The feature-wise distillation loss is defined as

$$\mathcal{L}^{l}_{\mathrm{KD}} = \lVert T_l(x) - S_l(x) \rVert_2^2. \tag{2}$$

We perform surrogate training in the two stages mentioned above:

Stage 1: Block removal. Each pair of two consecutive DiT layers is treated as the teacher model, and its information is distilled into a single student DiT layer.

Stage 2: Structural simplification. In the second stage, the DiT layers act as teacher blocks, and DiT layers with a varying MLP ratio or reduced hidden dimension act as students.

Since all blocks are distilled independently, this process is highly parallelizable and computationally lightweight. We obtain 14 surrogates in stage 1 and $2 \times 28 = 56$ surrogates in stage 2. This progressive training strategy allows us to efficiently learn lightweight surrogate components that preserve DiT-XL/2's local behavior. This modular decomposition enables the design of the full candidate architecture by assembling the surrogates to approximate DiT-XL/2 without full architecture training.

3.3. Assembling Candidate Architectures

Once the surrogate blocks are trained, we construct candidate diffusion transformer architectures by combining different configurations of surrogate components.

Each candidate architecture is represented by a configuration vector

$$a = (b_1, b_2, \dots, b_L), \tag{3}$$

where $b_i$ denotes the block type selected for layer $i$. The block type can correspond to the original DiT block or one of the surrogate variants.

Given a configuration $a$, the corresponding network is assembled by selecting the appropriate surrogate blocks for each layer. Because surrogate blocks are pre-trained through distillation, candidate architectures can be evaluated efficiently without requiring exhaustive retraining.

3.4. Optimization in Hardware-Aligned Space

To identify efficient architectures within the search space, we formulate model selection as a multi-objective optimization problem.
Specifically, we aim to simultaneously optimize generative performance and hardware efficiency.

Let $f(a)$ denote the performance metric (e.g., FID or Inception Score) of architecture $a$, and let $g(a)$ denote a hardware cost metric such as peak memory usage or edge latency. Our objective is to identify architectures that lie on the Pareto frontier. Over the set of candidate architectures $\mathcal{A}$, we aim to solve

$$\max_{a \in \mathcal{A}} f(a) \quad \text{and} \quad \min_{a \in \mathcal{A}} g(a). \tag{4}$$

When $f$ is a lower-is-better metric such as FID, the first objective is minimized rather than maximized.

To efficiently explore the architecture space, we employ multi-objective Bayesian optimization (MOBO) [11]. A Gaussian process model is trained to predict the objective values of candidate architectures based on previously evaluated configurations. New architectures are then selected using an acquisition function that balances exploration and exploitation.

This process enables efficient discovery of architectures that achieve favorable trade-offs between generative quality and hardware efficiency. For our experiments, we generated a set of images from each of the candidate architectures and took $f(a)$ as the resulting FID score and $g(a)$ as the edge latency of the particular candidate architecture.

Since the FID calculation remains costly even without training, we adapt MOBO by relaxing $a$ to a continuous representation $x \in [0, 1]^{28}$ and mapping it to the nearest feasible architecture via a deterministic mapping. Candidate architectures are selected by maximizing Expected Hypervolume Improvement (EHVI).

3.5. End-to-End Training

After identifying Pareto-optimal candidate architectures from the search process, the selected models are trained end-to-end to fully adapt the network parameters. During this stage, all model components are jointly optimized using the standard diffusion training objective.

Given a noisy latent representation $z_t$ at timestep $t$, the model predicts the corresponding noise $\epsilon_\theta(z_t, t)$.
The diffusion training loss is defined as

$$\mathcal{L}_{\mathrm{diff}} = \mathbb{E}_{z_0, \epsilon, t}\left[ \lVert \epsilon - \epsilon_\theta(z_t, t) \rVert_2^2 \right], \tag{5}$$

where $z_0$ is the clean latent representation and $\epsilon$ is Gaussian noise.

This final training stage allows the selected architecture to fully adapt to the diffusion task, resulting in compact models that maintain strong generative performance while satisfying hardware constraints.

4. Experimental Results

4.1. Sensitivity Analysis and Surrogate choice

We analyze the structural importance of each surrogate in the DiT architecture and explain why we chose particular surrogates over the other options. To validate our choices, we replace some of the DiT blocks with surrogate weights learned through the feature-wise knowledge distillation approach. We experimented with replacing 3 DiT blocks with a Block removal surrogate; Figure 3 shows that image quality drastically deteriorates when 3 DiT blocks are replaced. Due to this drop in image quality, we do not include the 3-block replacement option in our hardware-aware search space. This brought the search space from $3^{14}$ to $2^{14}$ in Stage 1.

Figure 3. Image quality when Block Removal surrogates replace 2 DiT blocks vs. 3 DiT blocks.

Figure 4. Image quality vs. MLP ratio surrogates.

Figure 5. Image quality vs. hidden dimension surrogates.

Figure 6. Results of visual image quality comparison.

Table 1. Benchmarking class-conditional image generation on ImageNet 256 × 256.

| Model | Params (M) | CFG | FID-50K ↓ | SFID ↓ | IS ↑ | Precision ↑ | Recall ↑ |
|---|---|---|---|---|---|---|---|
| DiT-XL/2 [9] | 675 | 4 | 16.23 | 11.06 | 80.91 | 0.93 | 0.26 |
| EdgeDiT-1 | 471 | 4 | 12.3 | 13.97 | 75.72 | 0.92 | 0.24 |
| EdgeDiT-6 | 530 | 4 | 12.4 | 14.96 | 78 | 0.91 | 0.25 |

We also evaluated the MLP ratio by changing it in the DiT blocks from 4 to 1, 2, and 3. Figure 4 shows that image quality drastically decreases when the MLP ratio is set to 1, while MLP ratios 2 and 3 produce similar results. So, we select surrogates with MLP ratio 2 in the search space.

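
The effect of these pruning decisions can be checked with simple arithmetic. The sketch below is illustrative only: it assumes a plain two-layer FFN with weight matrices of shape d × rd and rd × d (biases ignored), and the helper name `ffn_weights` is hypothetical.

```python
def ffn_weights(d: int, r: int) -> int:
    """Weights in a two-layer FFN d -> r*d -> d, ignoring biases (assumed layout)."""
    return 2 * r * d * d


d = 1152  # unreduced hidden dimension listed in the search space
baseline = ffn_weights(d, 4)
for r in (1, 2, 3, 4):
    weights = ffn_weights(d, r)
    print(f"MLP ratio {r}: {weights / 1e6:.2f}M weights ({weights / baseline:.0%} of ratio 4)")

# Stage 1: dropping the 3-block replacement option leaves 2 choices per layer pair.
print(f"Stage 1 configurations: {3**14:,} -> {2**14:,}")
```

With this layout, moving from ratio 4 to ratio 2 halves the FFN weights of a block, and removing the 3-block option shrinks the Stage 1 space from 4,782,969 to 16,384 configurations.
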
We also experimented with setting the hidden dimension in the DiT blocks to 768 instead of 1152. Figure 5 shows that the resulting image quality is very similar to that generated by the surrogate with hidden dimension 512. Due to the similar image quality, we include surrogates with hidden dimension 512 in the search space. These combined efforts brought the search space down from $6^{28}$ to $4^{28}$ in Stage 2.

4.2. Pareto Analysis and Model Selection

To jointly evaluate generative quality and deployment efficiency, we formulate model selection as a bi-objective optimization problem with two competing objectives: (i) minimizing the Fréchet Inception Distance (FID), which measures the fidelity of generated images, and (ii) minimizing the on-device latency, which captures the inference overhead on the target edge hardware.

Since improvements in generative quality often come at the cost of increased computational complexity and runtime, these objectives naturally exhibit a trade-off. We therefore analyze the resulting candidate models using the Pareto front, which represents the set of non-dominated solutions where no model can improve one objective without degrading the other. Models lying on this front provide optimal trade-offs between visual quality and inference efficiency.

Figure 7. Ablation: image quality of EdgeDiT with and without FwKD.

From the resulting Pareto set, we select two representative architectures for full training and evaluation: EdgeDiT-1 and EdgeDiT-6. These models correspond to the smallest and largest architectures within the EdgeDiT family in terms of parameter count, thereby capturing the spectrum of efficiency–capacity trade-offs discovered by the search. Due to limited computational resources, it was not feasible to train every candidate architecture identified during the search.
Instead, we employ a 400K-iteration checkpoint of DiT-XL/2 [9] as the teacher model for surrogate guidance and also treat it as the baseline architecture, as it is the only publicly available checkpoint within the DiT family.

The selected EdgeDiT-1 and EdgeDiT-6 models are then trained end-to-end for an additional 100K iterations on the class-conditioned image generation task on the ImageNet [1] dataset to obtain their final weights. All training experiments were conducted using 4 NVIDIA A100 GPUs.

4.3. Accuracy and Visual Comparisons

We compared the EdgeDiT-1 and EdgeDiT-6 models with the baseline DiT-XL/2 [9] architecture both qualitatively and quantitatively. Quantitative comparisons are reported in Table 1. With minimal training of the EdgeDiT candidate architectures, we were able to outperform the baseline DiT-XL/2 [9] architecture on FID (12.3 and 12.4 vs. 16.23), while SFID and IS remain slightly behind the baseline. Although EdgeDiT has around 20–30% fewer parameters than the baseline architecture, it reaches this FID with minimal training, which demonstrates efficient surrogate selection that preserves both quality and efficiency.

Visual results are shown in Figure 6: the quality of generated images from EdgeDiT is comparable to or even better than DiT-XL/2 [9] in some cases of class-conditioned image generation.

4.4. Performance Comparisons

• Server Benchmarking: We calculated the parameters, GFLOPs, and GMACs of the DiT family of models (S, B, L, XL) and our EdgeDiT for both 256 × 256 and 512 × 512 image generation. Results are reported in Table 2.
• Edge Device Benchmarking: We profiled the DiT family of models (S, B, L, XL) and our EdgeDiT on the Apple A18 Pro ANE (iPhone 16 Pro Max) and the Qualcomm 8850 NPU (Samsung Galaxy S25 Ultra). Results are presented in Table 3.

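
The relative savings discussed in this section are ratios against the DiT-XL/2 row. As a small illustration, the sketch below recomputes the parameter and GMAC reductions at 256 × 256 from the values reported in Table 2. The function name `reduction_pct` and the dictionary layout are hypothetical helpers, and the same formula applies to the other columns.

```python
def reduction_pct(baseline: float, value: float) -> float:
    """Relative reduction of `value` with respect to `baseline`, in percent."""
    return 100.0 * (baseline - value) / baseline


# Values copied from Table 2 (256 x 256 columns).
table2 = {
    "DiT-XL/2": {"params_m": 675.13, "gmacs": 118.62},
    "EdgeDiT-1": {"params_m": 470.65, "gmacs": 71.94},
    "EdgeDiT-6": {"params_m": 529.64, "gmacs": 84.94},
}

base = table2["DiT-XL/2"]
for name in ("EdgeDiT-1", "EdgeDiT-6"):
    row = table2[name]
    params_cut = reduction_pct(base["params_m"], row["params_m"])
    gmacs_cut = reduction_pct(base["gmacs"], row["gmacs"])
    print(f"{name}: {params_cut:.1f}% fewer parameters, {gmacs_cut:.1f}% fewer GMACs")
```

For these two rows the helper gives parameter reductions of about 30% and 22%, and GMAC reductions of about 39% and 28%.
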
Tables 2 and 3 show that EdgeDiT models have around 20–30% fewer parameters, 28–40% fewer GMACs, and 36–46% fewer GFLOPs than the DiT-XL/2 [9] baseline. In on-device latency, EdgeDiT models are 1.65× faster on the Samsung Galaxy S25 Ultra and 1.45× faster on the iPhone 16 Pro Max than the DiT-XL/2 [9] baseline.

Table 2. Complexity Analysis: Parameters, GMACs, and GFLOPs for varying image resolutions. Columns labelled 256 use input size 1 × 4 × 256 × 256; columns labelled 512 use input size 1 × 4 × 512 × 512.

| Model | Params (M), 256 | GMACs, 256 | GFLOPs, 256 | Params (M), 512 | GMACs, 512 | GFLOPs, 512 |
|---|---|---|---|---|---|---|
| DiT-S/2 | 32.96 | 6.06 | 12.13 | 33.26 | 31.44 | 62.93 |
| DiT-B/2 | 130.51 | 23.01 | 46.04 | 131.10 | 106.38 | 212.88 |
| DiT-L/2 | 458.10 | 80.70 | 161.47 | 458.89 | 360.98 | 722.27 |
| DiT-XL/2 | 675.13 | 118.62 | 237.34 | 676.01 | 524.54 | 1050.00 |
| EdgeDiT-1 | 470.65 | 71.94 | 143.96 | 470.76 | 311.29 | 560.01 |
| EdgeDiT-2 | 480.01 | 74.02 | 148.12 | 480.97 | 336.74 | 605.93 |
| EdgeDiT-3 | 491.88 | 76.62 | 153.32 | 492.77 | 342.11 | 684.55 |
| EdgeDiT-4 | 501.32 | 78.70 | 157.49 | 502.21 | 346.41 | 693.15 |
| EdgeDiT-5 | 510.76 | 80.78 | 161.65 | 511.75 | 350.70 | 711.65 |
| EdgeDiT-6 | 529.64 | 84.94 | 169.97 | 529.69 | 372.86 | 762.83 |

Table 3. Inference latency (ms) comparison on mobile devices across different resolutions. Columns labelled 256 use input size 1 × 4 × 256 × 256; columns labelled 512 use input size 1 × 4 × 512 × 512.

| Model | iPhone latency (ms), 256 | Samsung latency (ms), 256 | iPhone latency (ms), 512 | Samsung latency (ms), 512 |
|---|---|---|---|---|
| DiT-S/2 | 6.63 | 8.35 | 93.31 | 52 |
| DiT-B/2 | 19.55 | 24.45 | 286.58 | 115.95 |
| DiT-L/2 | 68.99 | 85.00 | 790.26 | 376.08 |
| DiT-XL/2 | 118.56 | 129.00 | 1098.92 | 553.13 |
| EdgeDiT-1 | 70.86 | 86.13 | 804.81 | 379.22 |
| EdgeDiT-2 | 70.89 | 86.55 | 808.67 | 381.5 |
| EdgeDiT-3 | 71.35 | 88.19 | 810.17 | 383.69 |
| EdgeDiT-4 | 72.36 | 87.81 | 816.59 | 385.42 |
| EdgeDiT-5 | 71.22 | 88.74 | 811.29 | 381.56 |
| EdgeDiT-6 | 72.53 | 89.22 | 820.37 | 389.37 |

4.5. Ablation Analysis

Impact of feature-wise Knowledge Distillation: FwKD is central to our EdgeDiT design. It reduces the computational cost required for end-to-end training of candidate architectures. To validate its contribution, we perform an ablation in which EdgeDiT architectures are initialized with random weights and then trained with progressive learning. We observed that image quality degraded significantly compared with the corresponding EdgeDiT models trained with FwKD (see Figure 7). These results confirm that FwKD is necessary to achieve the desired accuracy, and that it also reduces the computational cost required for training.

5. Conclusion

EdgeDiT demonstrates that the scalability of Diffusion Transformers is not inherently at odds with edge deployment. By leveraging layer-wise distillation and Bayesian-optimized architecture search, we compressed the state-of-the-art DiT-XL/2 by up to 30% and improved edge latency by 1.65×. Our approach provides a robust framework for bringing large-scale generative models to mobile NPUs, ensuring privacy, speed, and efficiency for the next generation of mobile AI.

6. Limitations and Future Work

While EdgeDiT demonstrates promising results for enabling diffusion transformers on mobile hardware, several limitations remain.

First, our experiments are primarily based on the DiT-XL/2 [9] architecture as the teacher model. Although the proposed surrogate-based search framework is model-agnostic, extending it to other diffusion transformer families such as SiT [7] or MDT [3] remains future work.

Second, the objective of this work is not to design the most parameter-efficient diffusion model, but to introduce a general hardware-aware optimization framework capable of discovering efficient architectures within a given model family.
Integrating this framework with newer transformer-based diffusion models could further improve performance–efficiency trade-offs.

Third, although we evaluate latency on representative mobile NPUs such as Qualcomm Hexagon and Apple Neural Engine, the search space can be further specialized for different hardware accelerators such as TPUs and for other edge devices such as AR/VR/XR devices.

Finally, due to the high cost of diffusion training, only a subset of candidate architectures is trained end-to-end. Larger compute budgets could enable broader exploration of the architecture space and potentially yield even more efficient designs.

References

[1] Jia Deng, Wei Dong, Richard Socher, Li-Jia Li, Kai Li, and Li Fei-Fei. ImageNet: A large-scale hierarchical image database. In 2009 IEEE Conference on Computer Vision and Pattern Recognition, pages 248–255. IEEE, 2009.

[2] Z Fei, M Fan, C Yu, and J Huang. Scalable diffusion models with state space backbone. arXiv preprint arXiv:2402.05608, 2024.

[3] Shanghua Gao, Pan Zhou, Ming-Ming Cheng, and Shuicheng Yan. Masked diffusion transformer is a strong image synthesizer. In Proceedings of the IEEE/CVF International Conference on Computer Vision, pages 23164–23173, 2023.

[4] Shanghua Gao, Pan Zhou, Ming-Ming Cheng, and Shuicheng Yan. MDTv2: Masked diffusion transformer is a strong image synthesizer. arXiv preprint, 2023.

[5] Geoffrey Hinton, Oriol Vinyals, and Jeff Dean. Distilling the knowledge in a neural network. arXiv preprint arXiv:1503.02531, 2015.

[6] Jonathan Ho, Ajay Jain, and Pieter Abbeel. Denoising diffusion probabilistic models. In NeurIPS, 2020.

[7] Nanye Ma, Mark Goldstein, Michael S Albergo, Nicholas M Boffi, Eric Vanden-Eijnden, and Saining Xie. SiT: Exploring flow and diffusion-based generative models with scalable interpolant transformers. In European Conference on Computer Vision, pages 23–40. Springer, 2024.

[8] Alex Nichol and Prafulla Dhariwal. Improved denoising diffusion probabilistic models. In ICML, 2021.

[9] William Peebles and Saining Xie. Scalable diffusion models with transformers. In ICCV, 2023.

[10] Robin Rombach, Andreas Blattmann, Dominik Lorenz, Patrick Esser, and Bjorn Ommer. High-resolution image synthesis with latent diffusion models. In CVPR, 2022.

[11] Jasper Snoek, Hugo Larochelle, and Ryan P Adams. Practical Bayesian optimization of machine learning algorithms. Advances in Neural Information Processing Systems, 25, 2012.

[12] Jiaming Song, Chenlin Meng, and Stefano Ermon. Denoising diffusion implicit models. arXiv preprint arXiv:2010.02502, 2020.

[13] Sihyun Yu, Sangkyung Kwak, Huiwon Jang, Jongheon Jeong, Jonathan Huang, Jinwoo Shin, and Saining Xie. Representation alignment for generation: Training diffusion transformers is easier than you think. arXiv preprint arXiv:2410.06940, 2024.

[14] Yang Zhao, Yanwu Xu, Zhisheng Xiao, Haolin Jia, and Tingbo Hou. MobileDiffusion: Instant text-to-image generation on mobile devices. In European Conference on Computer Vision, pages 225–242. Springer, 2024.
