Warp-STAR: High-performance, Differentiable GPU-Accelerated Static Timing Analysis through Warp-oriented Parallel Orchestration

Static timing analysis (STA) is crucial for Electronic Design Automation (EDA) flows but remains a computational bottleneck. While existing GPU-based STA engines are faster than CPU, they suffer from inefficiencies, particularly intra-warp load imbal…

Authors: En-Ming Huang, Shih-Hao Hung

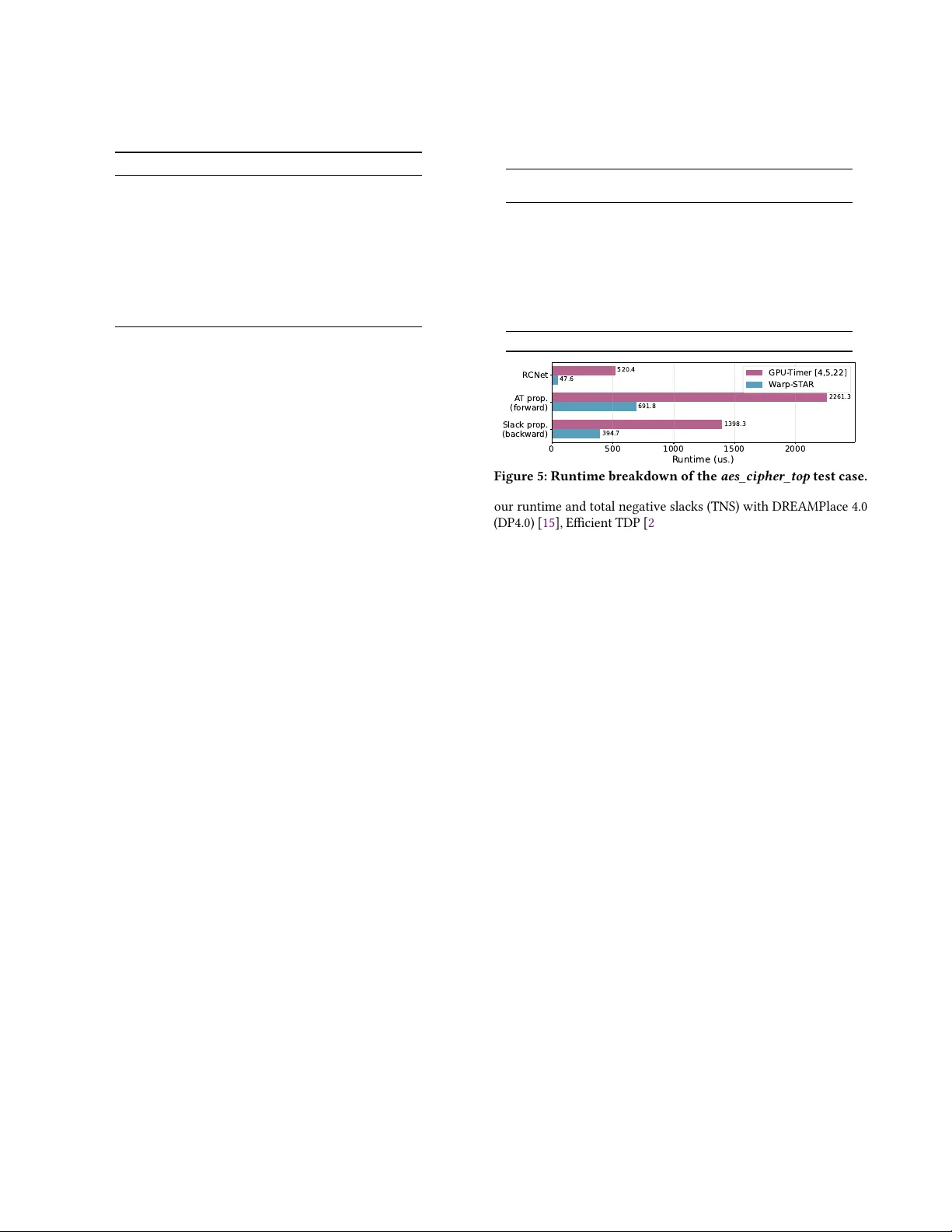

W arp-ST AR: High-p erformance, Dier entiable GP U- Accelerated Static Timing Analysis through W arp-oriented Parallel Orchestration En-Ming Huang r13922078@csie.ntu.edu.tw National T aiwan University T aipei, T aiwan Shih-Hao Hung hungsh@csie.ntu.edu.tw National T aiwan University T aipei, T aiwan Abstract Static timing analysis (ST A) is crucial for Electronic Design A utoma- tion (EDA) ows but remains a computational bottleneck. While ex- isting GP U-based ST A engines are faster than CP U, they suer from ineciencies, particularly intra-warp load imbalance caused by ir- regular circuit graphs. This paper introduces W arp-ST AR, a novel GP U-accelerated STA engine that eliminates this imbalance by or- chestrating parallel computations at the warp level. This approach achieves a 2.4X speedup ov er pre vious state-of-the-art (So T A) GP U- based ST A. When integrated into a timing-driven global placement framework, W arp-ST AR delivers a 1.7X spe edup over So T A frame- works. The method also proves eective for dierentiable gradient analysis with minimal overhead. Ke ywords Static Timing Analysis, GP U Acceleration, Irregular Graphs, Intra- W arp Load Imbalance A CM Reference Format: En-Ming Huang and Shih-Hao Hung. 2025. W arp-ST AR: High - performance, Dierentiable GP U-A ccelerated S tatic T iming A nalysis through W a r p- oriented Parallel Orchestration. In Proceedings of The Chips to Systems Con- ference (DA C ’26) . ACM, New Y ork, NY , USA, 7 pages. https://doi.org/10. 1145/3770743.3803891 1 Introduction Static timing analysis (ST A) is widely adopted across various stages of the ED A design ow , providing critical insights into timing op- timization and correction [ 1 , 7 , 14 , 15 , 19 ]. For instance, timing- driven global placement frameworks [ 3 , 6 , 15 , 16 , 21 ] leverage ST A engines to optimize cell delays alongside standard metrics like half- perimeter wire length (HP WL). Nonetheless, these frameworks re- quire hundreds of iterations of ST A, resulting in signicant runtime overhead. Dr eamPlace 4.0 [ 15 ], a timing-driven global placement (GP) framework, reports that the CP U-based ST A engine [ 9 ] ac- counts for 51.3% of the total runtime during the GP phase in a design with 2.5 million pins, limiting invocations to once p er 15 training iterations [ 15 ], making ST A eciency a pressing challenge. Permission to make digital or hard copies of all or part of this work for personal or classroom use is granted without fee provided that copies are not made or distribute d for prot or commercial advantage and that copies bear this notice and the full citation on the rst page. Copyrights for components of this work owned by others than the author(s) must be honor ed. Abstracting with credit is permitted. T o copy otherwise, or republish, to post on servers or to redistribute to lists, r equires prior spe cic permission and /or a fee. Request p ermissions from permissions@acm.org. DA C ’26, Long Beach, CA © 2025 Copyright held by the owner/author(s). Publication rights licensed to A CM. ACM ISBN XXX -X-XXXX-XXXX -X/2026/07 https://doi.org/10.1145/3770743.3803891 ST A engines are accelerate d through either multithreading or GP U-based parallelism. OpenTimer (OT) [ 9 ] integrates T askow [ 10 ] to enhance p erformance on multi-core CP Us. GP U-based engines [ 4 , 5 , 16 , 22 ] achieve higher p erformance, contributing 3.7X speedup compared to OT running on a 40-core CP U [ 4 ]. Howev er , these works omit their low execution eciency on the GP U because cir- cuits are irregular graphs which are inecient for GP U processing. W e found that GP U-based ST A engines [ 4 , 5 , 22 ] map each net to individual GP U threads (CUDA threads), leading to the intra-warp load imbalance issue. Each thread performs computation on its own net, and since each net has a dierent number of pins, the number of iterations diers across threads. Due to the design of the GP U, threads in the same warp are executed in lockstep, lead- ing to underutilized resources. Beyond intra-warp load imbalance, dierentiable ST A [ 6 , 16 ] introduces a further ov erhead: existing frameworks execute gradient backward pass sequentially after the full ST A pip eline, leaving the GP U idle between synchronizations, reducing GP U utilization and adding substantial per-iteration cost. In this work, we present W arp-ST AR, an ecient, dierentiable GP U-accelerated ST A through wa r p-oriented parallel orchestration to eliminate intra-warp load imbalances. Unlike previous works that map nets to individual thr eads, workloads (i.e., the pins within a net) ar e processed by the unit of warp. As a result, we enhance the utilization of CUD A warps, achieving an average of 2.4X speedup in comparison with existing GP U-based ST A [ 4 , 5 , 22 ], and 162X speedup against CP U-base d ST A, OT [ 9 ] on the 2015 ICCAD contest dataset [ 14 ]. Additionally , we incorporate our ST A engine into a timing-driven GP framework base d on Xplace 3.0 [ 3 ], delivering state-of-the-art execution time and high quality of timing slack com- pared with recent So T A w orks [ 3 , 15 , 16 , 21 ]. W e further r educe the dierentiable backward-pass o verhead through operation fusion , by allowing concurrent ST A computations and gradient computations via CUDA streams, achieving sup erior runtime over INSTA [ 16 ]. Our main contributions are threefold: (1) T ackling Intra- W arp Load Imbalance : W e identify and re- solve the intra-warp load imbalance problem in GP U-based ST A engines through our proposed W arp-ST AR. (2) Operation Fusion for Dierentiable ST A : W e reduce the sequential backward-pass overhead of dierentiable ST A by overlapping gradient computations with STA ’s slack propaga- tion, enhancing GP U utilization in gradient analysis. (3) V ersatile Performance with W arp-ST AR : Our optimize d ST A engine provides improved performance across applications, including existing GP frameworks and gradient analysis. DA C ’26, July 26–29, 2026, Long Beach, CA En-Ming Huang and Shih-Hao Hung a b c d Net R Net B (a) An illustration of a circuit de- sign with its components: pins, cells, and nets. a b c d Net R Net B Level 1 Level 2 Level 3 (b) Visualization of the lev- elization process, where nets are groupe d into levels based on their dependencies. Figure 1: Circuit comp onents and levelization in ST A. (a) The components of a circuit design. (b) The levelization process. 2 Preliminary In this section, we introduce the background knowledge of STA and GP U architecture along with the pr oblem of intra-warp load imbalance in graph processing. 2.1 Static Timing Analysis In STA, the problem is formulated as a Directed Acyclic Graph (D A G) and comprises three main components: pins, cells, and nets. Figure 1a illustrates these components. Each gate ( e.g., AND , OR, BUFFER, etc.) is r epresented as a cell, while the blue dots associated with the cells are the pins, representing their input or output ports. Wire connections are represented by arrows. Wires of the same color , such as the red wires, are grouped as a net. A net has one input (driver ) pin and multiple output pins to represent a fanout. The signal arrival time (A T) of each cell can be computed through a forward Breadth-First Search (BFS) propagation by taking the maximum A T of its preceding cells and adding the delay of wires and itself. Once all A T s are computed, slacks can b e derived from a backward propagation using another BFS operation by subtracting the calculated A T from the required arrival time (RA T). The method of calculating ST A in parallel is commonly achieved through the following four stages: (1) RC net delay computation : Computes the delay of wires in each net and can be done in paral- lel. (2) Data dependency identication ( levelization ) : Groups independent nets together , which is crucial for calculating cell A T propagation since data dependency relationships exist b etween cells in the DA G. As Figure 1b shows, the circuit in Figure 1a can be for- mulated into three levels of nets, where the pins b elonging to level 𝑖 require A T computed in le vel 𝑖 − 1 . (3) For ward propagation for cell A T : This stage computes the cell delay along with its A T level by level to ensure data correctness. Howev er , computations within each level can be executed in parallel since no data dependency exists. (4) Backward propagation for cell slacks : Computes cell slacks from the opposite direction, starting from the output pins of the last level. It can be noted that for ows requiring multiple ST A executions (e.g., timing-driv en GP), stage (2) nee ds to be computed only once because the design’s netlist is not modied. Therefore , the primary b ottlenecks are the RC net delay computation, as well as the forward and backward propagation stages. 2.2 GP U Architecture and Irregular Graph Processing Ecient execution of graph algorithms, such as BFS, on GPUs re- mains challenging due to irregular graph structures and the GP U’s Do A If threadID is 1: Do B else if threadID is 2: Do C Do E Thread 1 Thread 2 Thread 3 Thread 4 A A A A threadID == 1? B Inactive Inactive Inactive threadID == 2? C Inactive Inactive Inactive E E E E Time Figure 2: Illustration of thread divergence in a 4-threaded warp. Single-Instruction Multiple- Threads (SIMT) execution model [ 8 ]. In the SIMT execution model, threads within a warp operate in lockstep. A group of threads are ultimately mappe d to a Single- Instruction Multiple-Data (SIMD) e xecution unit that fetches and attempts to execute the same instruction simultaneously . Nonethe- less, graph algorithms often exhibit control-ow divergence due to conditional branches or workload imbalance within a warp (e.g., threads iterating over neighbors with vastly dierent degrees). T o maintain correctness during such divergence, SIMT masks are em- ployed and maintained by the hardware. These masks disable SIMD lanes corresponding to threads that do not follow the currently active execution path. While this ensur es correctness, it critically results in idled SIMD lanes and unnecessarily extends the warp’s overall completion time. This problem is intensied by typical warp sizes: NVIDIA ’s GP Us gr oup thr eads into warps of 32, while AMD’s CDNA GP Us use warps comprising 64 threads. Figure 2 illustrates a common challenge: branch divergence within a 4-threaded warp. This phenomenon leads to underuti- lized SIMD units when threads within the same warp take dierent execution paths. A similar ineciency occurs when threads hav e varying numbers of iterations within lo ops. T o maximize computational throughput and hide latency (e.g., memory requests), GP Us dynamically schedule multiple warps on their cores (i.e., stream-multiprocessors). When a warp ’s workload nishes or is stalled, the GP U scheduler can switch to other warps, even if some warps are still processing lengthy tasks. Consequently , inter-warp load imbalance is less critical than intra-warp load im- balance, as the hardware scheduler eciently switches b etween warps to maintain high utilization. Recent studies have pr oposed approaches such as graph transformations [ 17 ], w orkload reschedul- ing [ 12 , 13 ], and hardware-assisted techniques [ 8 , 20 ] to improv e SIMD lane utilization for general graph algorithms. These works all aim to address intra-warp load imbalances. 3 Methodology W arp-ST AR introduces two methods to eliminate intra-warp inef- ciencies through warp-centric parallelism: a pin-based scheme and Collaborative T ask Engagement (CTE) [ 12 , 13 ]. In contrast to prior GP U-based STA engines [ 4 , 5 , 22 ] that employed a net-based scheme, our appr oach distributes the w orkloads of each net (i.e., its pins) across threads to enhance SIMD lane utilization. Figure 3 illustrates the key dierence: in a traditional net-based scheme, employed by previous GP U-based STA s, entire nets are mapped to individual threads. This can lead to underutilization of other threads (or SIMD lanes) if a net has a large number of pins (e .g., as shown for thread 2 in Figure 3 (a)). Conversely , our pin-based scheme enables threads to process workloads at the granularity of individual pins, allowing large nets to be processed in parallel for reduced execution time. Furthermore, we introduce CTE, which dynamically reschedules workloads within the thread block before W arp-STAR DA C ’26, July 26–29, 2026, Long Beach, CA T0 T1 T2 N0P1 N1P1 N2P1 N0P2 IDLE N2P2 IDLE N2P3 IDLE IDLE N2P4 IDLE IDLE N2P5 IDLE IDLE N2P6 IDLE 50% utilization Iter . 1 2 3 4 5 6 T0 T1 T2 N0P1 N1P1 N2P1 N0P2 IDLE N2P2 IDLE N2P3 IDLE N2P4 N2P5 N2P6 75% utilization T0 T1 T2 N0P1 N1P1 N2P1 N0P2 N2P2 N2P3 N2P4 N2P5 N2P6 100% utilization (a) Net-based (b) Pin-based (ours) (c) CTE (ours) N = Net P = Pin Example: N2P1 → Net 2, Pin 1 Net 0 Net 1 Net 2 Figure 3: Comparison of task scheduling strategies for threads T0 ∼ T2. (a) Net based scheme: each thread processes an entire net. ( b) Pin based scheme: each thread processes in- dividual pins, allowing for higher parallelism. (c) Collab ora- tive T ask Engagement (CTE), which dynamically reschedules workloads within the thread block to maximize utilization. computation to achieve maximum utilization. Although CTE en- ables the best SIMD lane utilization, it incurs overhead for workload rescheduling and does not yield optimal performance compared to the pin-based scheme. Nevertheless, both methods provide perfor- mance acceleration compared to the baseline GP U-based ST A, as detailed comparisons examined in Section 4 . In this section, we rst intr oduce the parallel algorithm of our work and the procedures to deal with race conditions across threads. Next, we showcase the operation fusion for ecient gradient compu- tation. Finally , we incorporate W arp-ST AR into a global placement framework for ecient timing-driven GP optimization. 3.1 Algorithm of W arp-Centric ST A Computation Our algorithm divides the ST A computation into two main phases: (1) net delay calculation and (2) arrival time (A T) propagation. This separation is key for p erformance, as the net delay calculations are highly parallelizable, while the A T propagation through cells is inherently sequential due to data dependencies that ar e managed by the process of levelization [ 4 ]. First, we describe the algorithm of RC net delay calculation in a warp-centric manner , followed by the A T propagation and slack computation process. 3.1.1 RC Net Delay Computation . The RC delay of a single net is estimated using the Elmore delay model, described by Equa- tions 1 ∼ 3 [ 7 ]: 𝐿𝑜 𝑎𝑑 ( 𝑢 ) = 𝐶𝑎 𝑝 ( 𝑢 ) + Í 𝑣 ∈ 𝐹 𝑎𝑛𝑜𝑢 𝑡 ( 𝑢 ) 𝐿𝑜 𝑎𝑑 ( 𝑣 ) (1) 𝐷 𝑒 𝑙 𝑎𝑦 ( 𝑢 ) = 𝑅𝑒 𝑠 𝑓 𝑎 ( 𝑢 ) → 𝑢 · 𝐿𝑜 𝑎𝑑 ( 𝑢 ) (2) 𝐼 𝑚𝑝 𝑢𝑙 𝑠 𝑒 ( 𝑢 ) = 2 · 𝑅 𝑒𝑠 ( 𝑢 ) · 𝐶 𝑎𝑝 ( 𝑢 ) · 𝐷 𝑒 𝑙 𝑎𝑦 ( 𝑢 ) − 𝐷 𝑒 𝑙 𝑎𝑦 ( 𝑢 ) 2 (3) Here, 𝑓 𝑎 ( 𝑢 ) refers to the par ent pin of 𝑢 , specically the input pin of its associated net. These e quations are applied to each pin ( 𝑢 ) within a net, inherently r evealing the load imbalance pr oblem across nets due to varying numbers of pins. As a result, instead of mapping each net to individual CUDA threads, in W arp-ST AR, the workload of a net is processed by a warp. Note that the summation operator in Equation 1 highlights that the parent pin requires the Algorithm 1 RC Net Delay in the Pin-based Scheme 1: CP U: 2: N_blocks ← ⌈ num_nets / NETS_PER_BLOCK ⌉ 3: RCNet ( < dim3(4,8,NETS_PER_BLOCK), N_blocks > ) 4: GP U: 5: function RCNet 6: Input: N as # of nets, P as # of pins 7: Input: netlist[0 . . . P], the netlist in Compressed Sparse Row (CSR) format 8: Input: netlist_ind[0 . . . N], the indexes of each net 9: Output: load[0 . . . P], delay[0 . . . P], Impulse[0 . . . P] 10: netID ← blo ckIdx.x · blockDim.z + threadIdx.z; 11: localTID ← threadIdx.y; 12: condID ← threadIdx.x; ⊲ Shared memory 13: shared oat shmem[4][8][NETS_PER_BLOCK]; 14: if netID ≥ N then return ; 15: netRoot ← netlist_ind[netID]; 16: netStart ← netlist_ind[netID] + localTID; 17: netEnd ← netlist_ind[netID+1]; 18: ⊲ Parallel calculation for pin loads ⊳ 19: shmem[condID][localTID][threadIdx.z] ← 0; 20: for i = netStart; i < netEnd; i += blockDim.y do 21: load[i · 4 + condID] ← calc_load(); 22: shmem[condID][localTID][threadIdx.z] += load[i · 4 + condID]; 23: __syncwarp() 24: ⊲ Parallel reduction for root load ⊳ 25: for i = 1; i < blockDim.y; i *= 2 do 26: if localTID mod (i · 2) == 0 then 27: shmem[condID][localTID][threadIdx.z] += shmem[condID][localTID + i][threadIdx.z]; 28: __syncwarp() 29: if localTID == 0 then 30: load[netRoot · 4 + condID] ← shmem[condID][0][thr eadIdx.z]; 31: ⊲ Parallel calculation for pin delays ⊳ 32: for i = netStart; i < netEnd; i += blockDim.y do 33: delay[i · 4 + condID] ← res[i+condID] · load[i+condID]; 34: ⊲ Parallel calculation for pin impulses ⊳ 35: for i = netStart; i < netEnd; i += blockDim.y do 36: impulse[i · 4 + condID] ← √ . . . ; sum of its output pin loads. In our implementation, we employ parallel reduction to avoid race conditions and atomic operations. Algorithm 1 presents the pseudo co de for our pin-based scheme. Threads are groupe d into a three-dimensional format: (x=4, y=8, z=NETS_PER_BLOCK). The X -dimension (4 threads) is dedicated to processing a single pin across four timing conditions (early/late and fall/rise). The Y -dimension (8 threads) forms a warp of 32 threads. Finally , the Z-dimension is utilized to form a larger thread block, thereby reducing hardwar e scheduling overhead due to the large amount of thread blocks. W e le verage shared memory , a scratchpad memory from the L1 cache, for storing shared data across thr eads and facilitating parallel reduction computations. Additionally , w e present the CTE algorithm in Algorithm 2 . CTE optimizes workload distribution within a thread block by reassign- ing tasks dynamically . First, each CUD A thread obtains its assigned DA C ’26, July 26–29, 2026, Long Beach, CA En-Ming Huang and Shih-Hao Hung Algorithm 2 RC Net Delay in the CTE [ 12 , 13 ] Scheme 1: CP U: 2: N_blocks ← ⌈ num_nets / NETS_PER_BLOCK ⌉ 3: RCNet_CTE ( < NETS_PER_BLOCK, N_blocks > ) 4: GP U: 5: function RCNet_CTE 6: netID ← blo ckIdx.x · blockDim.x + threadIdx.x; 7: shared int workload_prex[NETS_PER_BLOCK]; 8: netStart ← netlist_ind[netID]+1; 9: netEnd ← netlist_ind[netID+1]; 10: workload_prex[threadIdx.x] ← (netEnd - netStart)*4; 11: ⊲ Parallel prex sum (W ork-Ecient Parallel Scan) on work- load_prex ⊳ 12: . . . 13: total_workloads ← workload_prex[blockDim.x-1]; 14: ⊲ Calculate ⊳ 15: for taskID = threadIdx.x; taskID < total_workloads; taskID += blockDim.x do 16: taskNetID ← lower_bound(workload_prex, taskID); 17: taskNetRoot ← netlist_ind[taskNetID]; 18: taskPinID ← (taskID - workload_prex[taskNetID]) / 4; 19: taskCondID ← (taskID - workload_prex[taskNetID]) % 4; 20: Do work . . . net and its workloads (i.e., pins). These workloads are combined to form a unied task po ol using a parallel prex sum op eration, which gathers the jobs. The parallel prex sum operation is based on the work-ecient data-parallel scanning algorithm [ 2 ], consisting of two stages: reduce and down-sweep. This method is ecient for scenarios where parallel threads, such as those from dierent warps, are not perfectly synchronized. Therefore , this allows for workload rescheduling across warps, not just within a single warp , thereby reducing inter-warp load imbalance. Once the workloads are orga- nized into the prex sum array , computations begin. Each thr ead retrieves a single task (i.e., a pin) through striding. Subsequently , it calculates its belonging net by performing a binary search on the prex sum array to nd the maximum index, whose value is less than or equal to the task index. This operation is also r eferred to as the std::lower_bound in the C++ standard library . After the workload is retrieved, the Do work continues to the same RC net delay computation as Line 19 ∼ 36 in Algorithm 1 . 3.1.2 Cell arrival time and Slack propagation . The propaga- tion of arrival times (A T s) across cells requires updating the output pin’s A T based on the input pins’ A Ts and the calculated cell delays through LU T interpolations. For each input-to-output path within a cell, we determine the cell arc delay by interpolating values from a look-up table (LU T). The cell’s late-mo de output A T is then com- puted by taking the maximum over all input pins of the sum of the input pin’s A T and its corresponding cell arc delay . Conversely , the early-mode A T is derived using the minimum. The calculation of pin and cell slacks is performed by subtracting the A T from the required arrival time (RA T), which is computed via a backward propagation pass, starting from the circuit’s primary outputs. Therefore , in W arp-ST AR, A T s and slacks are calculated in two distinct stages: a for ward pass for A Ts and a backward pass for slacks. W e apply the same parallelization strategies used in the RC CUDA stream: Cell prop. layer 1 layer 2 CUDA stream: LSE/gradient layer 1 cudaStream WaitEvent cudaStream WaitEvent layer 2 Figure 4: Overlapped execution of A T propagation and gradi- ent computation in W arp-ST AR. CUD A events are employed, allowing concurrent execution while ensuring correctness. Net Delay Computation , the pin-based scheme or the CTE scheme, to accelerate the A T propagation and slack computation. T o ensure the correct execution order of A T propagation for parallel processing, nets are organized into a sequential order , con- sisting of multiple levels, through the process of levelization [ 22 ]. Independent nets are grouped into the same level and can b e pro- cessed in parallel, while dependent nets are place d in dierent levels to manage their data dependencies, as shown in Figure 1b . Our im- plementation follo ws the method presented in [ 4 ], wher e each level is described by a CUDA kernel function. 3.2 Operation Fusion for Dierentiable ST A In traditional STA, the arrival time at a cell’s output pin is deter- mined using a maximum op eration over the A T s from all input paths. Howev er , it is unsuitable for gradient analysis b ecause only the single largest input value contributes to the maximum, pre- venting the gradient from b eing ee ctively shared among other pins. T o overcome this limitation, many works have adopted the LogSumExp (LSE) operation as a dierentiable replacement [ 6 , 16 ], which can be expressed as Equation 4 𝑦 = 𝑐 + 𝛾 log Í 𝑛 𝑖 = 1 𝑒 𝑥 𝑝 𝑥 𝑖 − 𝑐 𝛾 (4) ,where 𝑥 𝑖 represents the values intended for the LSE operation, 𝑐 is the maximum value among the 𝑥 𝑖 values, and 𝛾 is a hyper- parameter that controls the smoothness of the output. The term 𝑐 is crucial for pre venting oating-point overow when 𝑒 𝑥 𝑖 / 𝛾 are large enough to exceed the oating point’s range. T o obtain gradients, we calculate pin A T s using both the max- imum and LSE op erations, the former for traditional timing cal- culations and the latter for dierentiable computations. The LSE operations follow the same data dependency relationships as A T propagation, thus le veraging the same levelization results. However , since the LSE operation depends on cell ar c delays (deriv ed from LU T interpolations during the A T propagation stage), it must be scheduled after the cell delay for a given level has been computed. W e further propose a tight integration with the original ST A through operation fusion , which overlaps slack propagation and gradient computations using CUDA streams. Instead of calculating LSE and gradient after the original ST A, which consists of the RC net delay and the A T propagation stages, we demonstrate that it can be performed concurrently with the A T propagation stage. This strategy maximizes GP U’s computing throughput and reduces the overhead associated with gradient computations. Our key observa- tion is that separating gradient calculations into distinct for ward and backward passes, a common practice when integrating auto- matic dierentiation frameworks like PyT orch (e.g., INST A [ 16 ] requires a manual insta.tns.backward() invocation after the W arp-STAR DA C ’26, July 26–29, 2026, Long Beach, CA T able 1: Statistics of the datasets used in our experiments. Design #Cells #Nets #Pins aes_cipher_top[ 11 ] 9,917 10,178 37,357 superblue1[ 14 ] 1,209,716 1,215,710 3,767,494 superblue3[ 14 ] 1,213,252 1,224,979 3,905,321 superblue4[ 14 ] 795,645 802,513 2,497,940 superblue5[ 14 ] 1,086,888 1,100,825 3,246,878 superblue7[ 14 ] 1,931,639 1,933,945 6,372,094 superblue10[ 14 ] 1,876,103 1,898,119 5,560,506 superblue16[ 14 ] 981,559 999,902 3,013,268 superblue18[ 14 ] 768,068 771,542 2,559,143 slack calculation) is unnecessary in our context. This is primarily for two reasons: (1) calculating cell slacks inherently involves a backward propagation step within the timing analysis; and (2) most implementations derive the gradient directly from the loss func- tion of total negative slacks. As a result, we enable gradients to be computed directly during the A T and slack propagation phases by meticulously managing data dependencies. W e create two separate CUD A streams: one dedicated to STA ’s core forward (i.e., cell delay and A T propagations) and backward propagation (i.e., slack computations), and another for the dier- entiable LSE (for ward) and its associated gradient computations (backward). This architectural design allows both computational ows to be scheduled concurrently on the GP U. In such o verlapping scheme, the LSE operation for le vel 𝑖 must execute only after the completion of the cell delay computation for the same level 𝑖 , as the cell delays derived from LU T interpolations are required for the LSE operation. Hence, we incorporate CUDA ev ents to manage synchronizations between these streams. Figure 4 depicts the over- lapped execution: CUD A events ( green blocks) ar e inserted into the cell delay propagation CUD A stream, and cudaStreamWaitEvent is utilized in the LSE and gradient stream for synchronization. This demonstrates how LSE and gradient computations can be optimized by fusing them with the process of cell A T and slack pr opagation. Notably , our pin-based parallelization scheme is also applicable to this stage for maximizing parallelism and SIMD lane utilization. 3.3 Timing-driven Global P lacement W e integrate our GP U ST A engine into Xplace3.0 [ 3 ], a timing- driven global placement framework powered by GP U, replacing its original, less ecient GP U-base d ST A engine [ 4 , 5 , 22 ] as discusse d in previous sections. W e adopt Xplace3.0’s existing pin and path weighting scheme, which optimizes placement based on slacks. In this scheme , each pin is assigned a weight that reects its criticality . Since slack information is required for all components, the ST A engine must b e invoked in every placement iteration, leading to signicant runtime overhead for large designs. 4 Experimental Results and Evaluation In this section, we evaluate the performance of W arp-ST AR against existing CP U and GP U based ST A engines: OT [ 9 ] and GP U- Timer [ 4 , 5 , 22 ], r espectively . Next, we analyze the eciency of gradient com- putation through operation fusion , and compare with INST A [ 16 ], a GP U-based dierentiable ST A engine. Finally , we demonstrate the potential of W arp-ST AR-based GP framework by comparing T able 2: Runtime comparison (ms.) of W arp-STAR (Ours) with Op enTimer and GP U- Timer . Design OpenTimer (OT) [ 9 ] GP U- Timer [ 4 , 5 , 22 ] W arp-STAR (Pin-based) W arp-STAR (CTE) aes_cipher_top 89.1 4.03 1.49 3.96 superblue1 5095 57 25 48 superblue3 5165 61 26 51 superblue4 3149 43 17 31 superblue5 4274 48 21 41 superblue7 8811 71 35 50 superblue10 7551 81 34 71 superblue16 3894 40 18 29 superblue18 3407 38 16 30 A vg. Spe edup 1.48% 100.00% 235.97% 124.18% 0 500 1000 1500 2000 R untime (us.) Slack pr op. (backwar d) A T pr op. (forwar d) R CNet 394.7 691.8 47.6 1398.3 2261.3 520.4 GPU- T imer [4,5,22] W arp-ST AR Figure 5: Runtime breakdown of the aes_cipher_top test case. our runtime and total negative slacks (TNS) with DREAMPlace 4.0 (DP4.0) [ 15 ], Ecient TDP [ 21 ], Xplace3.0 [ 3 ] and INST A -Place [ 16 ]. Our experiments are conducted on a 24-cor e AMD 7965WX CP U and an NVIDIA RTX 4090 GP U, running with CUD A version 11.8. W e use the incremental timing-driven GP contest datasets from the ICCAD 2015 [ 14 ] and 2025 [ 11 ], with statistics in T able 1 . 4.1 ST A Runtime Performance T able 2 presents the runtime performance of W arp-ST AR compared to OT (CP U-based) and GP U- Timer . OT is executed using all 24 CP U cores, while both W arp-ST AR and GP U- Timer are executed on a single GP U. Our w ork presents two implementations: W arp- ST AR (pin-based) and W arp-STAR (CTE) , corresponding to the two methods described in Section 3 . The results show that W arp-ST AR achieves an average speedup of 2.4X over GP U- Timer and 162X over OT . The performance improvement is attributed to the elimination of intra-warp load imbalance, which allows for higher SIMD lane utilization on the GP U. Although W arp-ST AR (CTE) is theoretically more capable of reducing intra-warp load imbalance, it does not show signicant improvement over W arp-ST AR (pin-based) . The CTE method introduces overhead from workload rescheduling and indexing—a limitation also documented by [ 8 ]. Consequently , we adopt the pin-based scheme as our primary implementation. W e further evaluate the runtime performance breakdown of W arp-ST AR against GP U- Timer in Figure 5 for the aes_cipher_top test case. W arp-STAR provides a p erformance boost across all stages due to its increased parallelism and higher SIMD lane utilization. 4.2 Dierentiable ST A Runtime Analysis W e evaluate the runtime performance of our gradient computation method, which combines our pin-based scheme with the opera- tion fusion technique. T able 4 presents the runtime performance of gradient computation. The setting “Di ” refers to the baseline ap- proach where LSE and gradient computations are performed after ST A, while “Di+Fusion” represents our proposed operation fusion , DA C ’26, July 26–29, 2026, Long Beach, CA En-Ming Huang and Shih-Hao Hung T able 3: Runtime (in se conds) and TNS (in 10 5 ns) of W arp-ST AR against existing global placement to ols 1 . 1 Both lower runtime and TNS are better . 2 INST A-Place uses both CP U (OT) & GPU (INSTA). Design DP4.0 [ 15 ] INST A -Place [ 16 ] Ecient TDP [ 21 ] Xplace 3.0 [ 3 ] W arp-STAR ( Ours ) TNS Time TNS Time TNS Time TNS Time TNS Time Time (CTE) GP U-based ST A ✗ ✓ 2 ✗ ✓ ✓ aes_cipher_top -1.9 6.3 - - -1.9 6.5 -1.0 2.8 -1.0 1.2 1.6 superblue1 -92.3 277.0 -34.6 >277.0 -15.9 263.8 -21.8 60.9 -23.7 35.4 51.1 superblue3 -52.9 346.2 -40.4 >346.0 -20.0 367.4 -16.9 65.2 -16.8 40.8 57.7 superblue4 -151.5 143.1 -114.5 >143.0 -89.8 540.7 -67.6 42.3 -78.0 23.5 32.2 superblue5 -98.5 287.0 -93.7 >286.0 -64.4 342.0 -61.7 59.0 -61.6 34.3 51.4 superblue7 -62.1 367.0 -57.6 >367.0 -38.3 387.5 -33.2 88.5 -28.9 58.9 70.4 superblue10 -674.8 472.1 -628.2 >472.0 -562.3 783.3 -522.8 104.9 -544.3 59.2 91.5 superblue16 -67.3 189.2 -37.6 >189.0 -24.7 184.1 -20.5 42.6 -23.5 26.3 33.0 superblue18 -47.3 153.6 -35.8 >153.0 -16.0 146.4 -15.2 41.8 -15.1 24.3 33.4 Ratio 2.43 7.07 1.30 >7.00 1.16 9.73 0.98 1.74 1.0 1.0 1.39 T able 4: Runtime p erformance (in ms.) of gradient compu- tation through operation fusion. “Di ” refers to se quential gradient computation, “Di+Fusion” refers to the op. fusion. Design Ours Ours (Di ) Ours (Di+Fusion) aes_cipher_top 1.49 1.72 1.61 superblue1 25 34 29 superblue10 34 47 41 superblue16 18 23 21 superblue18 16 22 19 superblue3 26 36 30 superblue4 17 22 19 superblue5 21 28 24 superblue7 35 46 43 Norm. Time 100% 133% 116% which enables concurr ent execution with the ST A engine. With the employment of optimized warp-level parallelism, the ov erhead of gradient analysis is only 33% of the ST A engine ’s runtime. This ov er- head is further reduced to 16% with our op eration fusion technique. Additionally , we prole the actual execution pattern of gradient computation with operation fusion in Figure 6 . The result depicts that the gradient computation is ee ctively overlapped with the ST A engine, which is the primary reason for the reduced runtime. W e also compare our p erformance against INST A. For the aes- cipher_top test case, INST A takes 108 ms for ST A and 61 ms for gradient computation, rev ealing that gradient computation intro- duces a 56% overhead relative to the ST A runtime. While INST A is designed for high accuracy against industrial sign-o tools and is partially close d-sourced, making a direct runtime comparison challenging, our results serve as reference for the performance improvement achie vable through our optimization techniques. 4.3 Timing-driven Global P lacement T o demonstrate the potential of our W arp-ST AR-based ST A engine, we integrate it into the timing-driven GP framework Xplace3.0 [ 3 ] which implements the standard GP U-timer [ 4 , 5 , 22 ]. Our experi- mental results on the ICCAD 2015 and 2025 contest benchmarks are presented in T able 3 . In terms of execution time, the W arp- ST AR-enable d GP framework achieves an average speedup of 7.1X over DP4.0 [ 15 ] and INST A -Place [ 16 ], 9.7X over Ecient TDP [ 21 ], and 174% over Xplace3.0 [ 3 ]. Due to the substantial overhead of CP U-based ST A, DP4.0, INST A-Place, and Ecient TDP are limited to performing timing analysis every 15 iterations. In contrast, our optimized GP U-based ST A is performed in every iteration of our S T A F o r w a r d P r o p . S T A B a c k w a r d P r o p. L S E F o r w a r d P r o p. G r a d i e n t B a c k P r o p . Figure 6: Proling result from NVIDIA Nsight System [ 18 ], illustrating the overlapped execution. The upp er part is the CUD A stream for ST A engine, while the lower part shows the stream for LSE and gradient computation. W e use CUDA events to handle data dependencies every 10 kernel launches. GP ow . Note that since INST A -Place is not open-sourced, we cite its TNS results directly from its corresponding paper [ 16 ], and its runtime is higher than DP4.0 due to its reliance on the DREAMPlace framework. The experimental results, encompassing both runtime performance and TNS 3 , demonstrate that W arp-STAR’s benets extend beyond standalone ST A, proving its eective applicability to other performance-critical applications like global placement. 5 Conclusion In this paper , we present W arp-ST AR, a GP U-based ST A engine designed to eectively address the overlooked intra-warp load im- balance issue. Incorporating the pin-base d scheme, W arp-STAR achieves remarkable p erformance, demonstrating an average of 162X speedup over CP U-based approach and 2.4X over GP U-based engines. Furthermor e, our operation fusion reduces gradient compu- tation overhead to just 16% of the ST A runtime. Finally , integrated into a timing-driven GP framework, W arp-ST AR achieves the best runtime costs over So T A placers, highlighting its broad applicability and performance benets across timing-driven design ows. Acknowledgments W e acknowledge the nancial support from Academia Sinica’s Sili- conMind Project ( AS-IAIA-114-M11). This work was also supported in part by the National Science and T echnology Council, T aiwan (112-2221-E-002-159-MY3), as well as the National Center for High- performance Computing and Taipei-1 for providing computational resources. W e also thank Pei-Che Hsu, Kun-Cheng W ang, and Prof. Hung-Ming Chen from the VD ALab at National Y ang Ming Chiao T ung University for their assistance during the ICCAD contest. 3 V ariations in TNS metrics compared to the original Xplace3.0 are attributed to the adoption of a race-free parallel reduction scheme in W arp-STAR, resolving synchro- nization errors in the baseline GP U-timer and acknowledging the numerical divergence caused by oating-point non-associativity in non-convex optimization. W arp-STAR DA C ’26, July 26–29, 2026, Long Beach, CA References [1] T utu Ajayi, Vidya A. Chhabria, Mateus Fogaça, Soheil Hashemi, Abdelrahman Hosny , Andrew B. Kahng, Minsoo Kim, Jeongsup Lee, Uday Mallappa, Marina Neseem, Geraldo Pradipta, Sherief Reda, Mehdi Saligane, Sachin S. Sapatnekar , Carl Sechen, Mohamed Shalan, William Swartz, Lutong W ang, Zhehong W ang, Mingyu W oo, and Bangqi Xu. 2019. To ward an Open-Source Digital Flow: First Learnings from the OpenROAD Project. In Proc. Design A utomation Conf. (DAC) . Article 76, 4 pages. doi:10.1145/3316781.3326334 [2] Guy E. Blello ch. 1990. Prex Sums and Their A pplications . Technical Report CMU-CS-90-190. School of Computer Science, Carnegie Mellon University . [3] Bangqi Fu, Lixin Liu, Martin D. F. W ong, and Evangeline F . Y. Y oung. 2025. Hybrid Modeling and W eighting for Timing-driven Placement with Ecient Calibration. In Proc. Int. Conf. on Computer-Aided Design (ICCAD) . 9 pages. doi:10.1145/ 3676536.3676803 [4] Zizheng Guo, T sung- W ei Huang, and Yib o Lin. 2020. GP U- Accelerated Static Timing Analysis. In Proc. Int. Conf. on Computer-Aided Design (ICCAD) . doi:10. 1145/3400302.3415631 [5] Zizheng Guo, T sung- W ei Huang, and Yibo Lin. 2023. Accelerating Static Timing Analysis Using CP U–GP U Heterogeneous Parallelism. IEEE Trans. on Computer- Aided Design of Integrated Circuits and Systems (TCAD) 42, 12 (2023), 4973–4984. doi:10.1109/TCAD.2023.3286261 [6] Zizheng Guo and Yibo Lin. 2022. Dierentiable-timing-driven global placement. In Proc. Design A utomation Conf. (DA C) . 1315–1320. doi:10.1145/3489517.3530486 [7] Jin Hu, Greg Schaeer , and Vibhor Garg. 2015. TAU 2015 contest on incremental timing analysis. In Proc. Int. Conf. on Computer- Aided Design (ICCAD) . 882–889. doi:10.1109/ICCAD.2015.7372664 [8] En-Ming Huang, Bo- Wun Cheng, Meng-Hsien Lin, Chun- Yi Lee, and T sung T ai Y eh. 2024. WER: Maximizing Parallelism of Irregular Graph Applications Through GP U W arp EqualizeR. In Proc. Asia and South Pacic Design Automation Confer- ence (ASP-D AC) . 201–206. doi:10.1109/ASP- DA C58780.2024.10473955 [9] T sung- W ei Huang, Guannan Guo, Chun-Xun Lin, and Martin D . F . W ong. 2021. OpenTimer v2: A New Parallel Incremental Timing Analysis Engine. IEEE Trans. on Computer- Aided Design of Integrated Circuits and Systems (TCAD) 40 (2021), 776–789. doi:10.1109/tcad.2020.3007319 [10] T sung- W ei Huang, Dian-Lun Lin, Chun-Xun Lin, and Yibo Lin. 2022. Tasko w: A Lightweight Parallel and Heterogeneous Task Graph Computing System. IEEE Trans. on Parallel and Distributed Systems (TPDS) 33, 6 (2022), 1303–1320. doi:10. 1109/TPDS.2021.3104255 [11] ICCAD Contest Organization. 2025. 2025 CAD Contest @ ICCAD. https://www. iccad- contest.org/2025/ . Accessed: 2025-07-20. [12] Farzad Khorasani, Rajiv Gupta, and Laxmi N. Bhuyan. 2015. Scalable SIMD- Ecient Graph Processing on GP Us. In Proc. Int. Conf. on Parallel A rchitectures and Compilation T echniques (P ACT) . 39–50. doi:10.1109/P ACT .2015.15 [13] Farzad Khorasani, Bryan Rowe, Rajiv Gupta, and Laxmi N. Bhuyan. 2016. Elimi- nating Intra- W arp Load Imbalance in Irregular Nested Patterns via Collab orative T ask Engagement. In IEEE Int. Parallel and Distributed Processing Symp. (IPDPS) . 524–533. doi:10.1109/IPDPS.2016.36 [14] Myung-Chul Kim, Jin Hu, Jiajia Li, and Natarajan Viswanathan. 2015. ICCAD- 2015 CAD contest in incremental timing-driven placement and b enchmark suite. In Proc. Int. Conf. on Computer-Aided Design (ICCAD) . 921–926. doi:10.1109/ ICCAD.2015.7372671 [15] Peiyu Liao, Dawei Guo, Zizheng Guo, Siting Liu, Yibo Lin, and Bei Yu. 2023. DREAMPlace 4.0: Timing-Driven Placement With Momentum-Based Net W eight- ing and Lagrangian-Based Renement. IEEE Trans. on Computer-Aided Design of Integrated Circuits and Systems (TCAD) 42, 10 (2023), 3374–3387. doi:10.1109/ TCAD.2023.3240132 [16] Yi-Chen Lu, Zizheng Guo, Kishor Kunal, Rongjian Liang, and Haoxing Ren. 2025. INST A: An Ultra-Fast, Dierentiable, Statistical Static Timing Analysis Engine for Industrial Physical Design Applications. In Proc. Design Automation Conf. (DA C) . [17] Amir Hossein Nodehi Sab et, Junqiao Qiu, and Zhijia Zhao. 2018. Tigr: Trans- forming Irregular Graphs for GPU-Friendly Graph Processing. ACM SIGPLAN Notices 53, 2 (2018), 622–636. doi:10.1145/3296957.3173180 [18] NVIDIA. 2025. Nsight Systems. https://developer .nvidia.com/nsight- systems Accessed: 2025-07-20. [19] Parallax Software. 2025. Parallax Static Timing Analyzer. https://github.com/ parallaxsw/OpenST A Accessed: 2025-07-20. [20] Albert Segura, Jose-Maria Arnau, and Antonio González. 2019. SCU: A GPU Stream Compaction Unit for Graph Processing. In Proc. Int. Symp. on Computer A rchitecture (ISCA) . 424–435. doi:10.1145/3307650.3322254 [21] Y unqi Shi, Siyuan Xu, Shixiong Kai, Xi Lin, Ke Xue , Mingxuan Yuan, and Chao Qian. 2025. Timing-Driven Global Placement by Ecient Critical Path Extrac- tion. In Proc. Design, A utomation and T est in Europe (D A TE) . 1–7. doi:10.23919/ DA TE64628.2025.10993273 [22] Hunta H.- W . W ang, Louis Y.-Z. Lin, Ryan H.-M. Huang, and Charles H.-P . W en. 2014. CAST A: CUDA-Accelerated Static Timing Analysis for VLSI Designs. In Proc. Int. Conf. on Parallel Processing . 192–200. doi:10.1109/ICPP .2014.28

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment