Membership Inference Attacks against Large Audio Language Models

We present the first systematic Membership Inference Attack (MIA) evaluation of Large Audio Language Models (LALMs). As audio encodes non-semantic information, it induces severe train and test distribution shifts and can lead to spurious MIA performa…

Authors: Jia-Kai Dong, Yu-Xiang Lin, Hung-Yi Lee

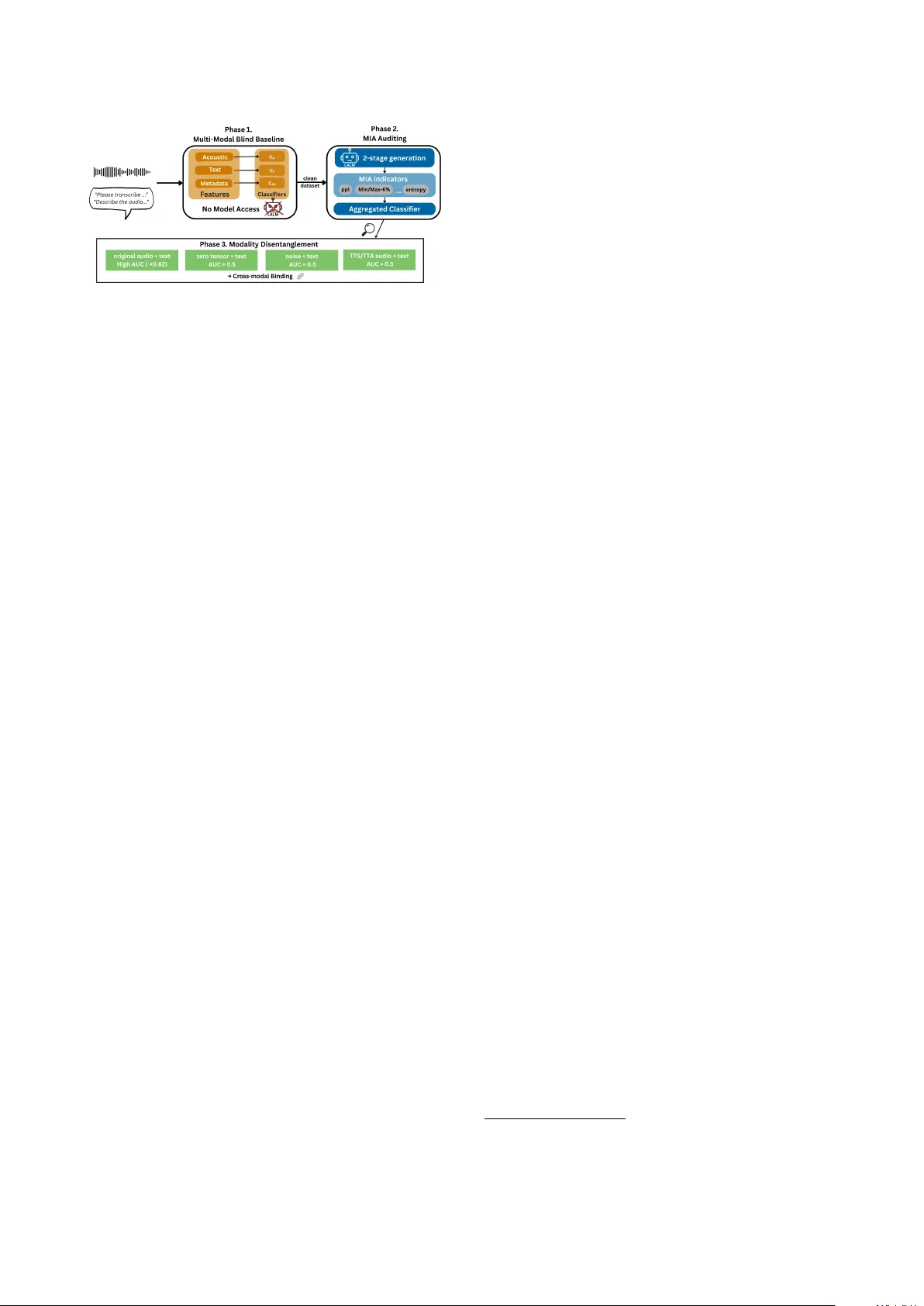

Membership Infer ence Attacks against Large A udio Language Models Jia-Kai Dong 1 , Y u-Xiang Lin 1 , Hung-yi Lee 1 , 2 1 National T aiwan Uni versity 2 NTU Artificial Intelligence Center of Research Excellence b11901067@ntu.edu.tw Abstract W e present the first systematic Membership Inference Attack (MIA) ev aluation of LALMs. As audio encodes non-semantic information that induces se vere train and test distribution shifts and can lead to spurious MIA performance. Using a multi- modal blind baseline based on textual, spectral and prosodic features, we demonstrate that common speech datasets exhibit near-perfect train/test separability (A UC ≈ 1 . 0 ) ev en without model inference, and the standard MIA scores strongly corre- late with these blind acoustic artifacts ( r > 0 . 7 ). Using this bilnd baseline, we identify the distribution-matched datasets enable reliable MIA evaluation without distribution shift con- founds. W e benchmark multiple MIA methods and conduct modality disentanglement experiment on these datasets. The results re veal that LALM memorization is cr oss-modal , arising only from binding a speaker’ s vocal identity with its text. These findings establish a principled standard for auditing LALMs be- yond spurious correlations. Index T erms : Membership Inference Attacks, Large Audio Language Models, Priv acy Auditing 1. Intr oduction Large Audio Language Models (LALMs) [1–7] ha ve recently emerged as a powerful paradigm for multimodal reasoning, uni- fying tasks such as speech recognition and audio captioning within a single framew ork. While these models achieve impres- siv e generalization by training on massiv e web-scale datasets, they raise critical concerns regarding pri vacy and copyright, particularly whether sensiti ve audio content is memorized dur- ing training. Membership Inference Attacks (MIA) , which aim to de- termine whether a specific sample was used during model training, have become a standard tool for auditing such risks [8, 9]. Although MIA has been e xtensively studied in text-based LLMs [10–16] and VLMs [17, 18], its applicability to LALMs remains une xplored. Existing priv acy studies in the audio do- main focus mainly on representation learning or speak er recog- nition systems [19, 20], where attacks target fixed-dimensional embeddings rather than sequence-le vel generation. In contrast, LALMs are trained on massiv e web-scale corpora with lim- ited exposure, often only a single epoch. While this regime is commonly assumed to mitigate verbatim memorization [11], the high capacity of LALMs still enables membership leakage through memorized cr oss-modal mappings between acoustic signals and text. Such leakage re veals speaker–content associa- tions, constituting a biometric pri vacy risk that is more granular and sev ere than textual duplication. Auditing LALMs is further complicated by the continuous nature of audio, which encodes rich non-semantic cues such as speaker identity and recording conditions. Consequently , pri- vac y threats in LALMs are dominated not by copyrighted con- tent, but by irreversible identity–content binding. Moreov er, standard audio benchmarks often exhibit acoustic distribution shifts between training and test sets that can spuriously inflate MIA performance. As demonstrated in LLMs [21], simple blind baselines that exploit such dataset artifacts can outper- form sophisticated MIAs, raising concerns about whether re- ported leakage truly reflects memorization [22]. W e conduct the first systematic MIA study on LALM pre- training corpora by leveraging two state-of-the-art open-source models: Audio-Flamingo 3 (AF3) [5] and Music-Flamingo (MF) [23]. The transparent data provenance of these models en- ables a direct audit of the foundation weights themselves. This approach sidesteps the use of computationally prohibitive and often unfaithful surrogate “shadow” models, enabling an au- thentic ev aluation of memorization across eight di verse datasets spanning ASR, Audio Captioning, and Music Understanding. Furthermore, to disentangle genuine memorization from dataset-induced artifacts, we introduce a Multi-modal Blind Baseline framew ork that quantifies distribution shifts across metadata, textual content, and acoustic features. Our contributions are threefold: • W e present the first comprehensive benchmark of sample- lev el MIA for LALMs in an audio/text-to-text setting. W e ev aluate sev en confidence-based MIA attacks methods across eight audio-centric tasks. • W e propose a rigorous Multi-modal Blind Baseline protocol to audit pri vacy risks arising from dataset design. W e show that common benchmark practices, introduce se vere acoustic distribution shifts that inflate MIA A UCs and strongly corre- late with blind acoustic classifiers ( r > 0 . 4 ). • On distribution-matched datasets, we show that LALM mem- orization is cross-modal , depending on the specific pairing of acoustic attrib utes such as speaker identity and te xt. Pri vacy risks are thus highest when sensitive content is tightly linked to a particular speaker . Overall, our findings highlight the fragility of nai ve pri- vac y assessments in the audio domain and underscore the im- portance of controlling for acoustic distrib ution shifts when au- diting LALMs. W e argue that reported MIA results should be interpreted in conjunction with blind baseline diagnostics; with- out such analysis, it is dif ficult to disentangle genuine memo- rization from distributional artif acts. 2. Methodology W e propose a multi-phase privac y auditing framework, as il- lustrated in Figure 1. Our approach begins by establishing a Figure 1: Overview of our pr oposed 3-phase LALM privacy au- diting framework. (1) Multi-Modal Blind Baseline: A model- agnostic classifier first identifies and filters out samples with confounding distrib utional shifts to pr oduce a ”clean dataset. ” (2) MIA A uditing: The targ et LALM is then audited on this clean dataset using a suite of membership indicators derived fr om a 2-stage gener ation pr ocess. (3) Modality Disentangle- ment: F inally , we systematically pr obe any detected memoriza- tion to characterize its natur e, specifically identifying cr oss- modal binding. Multi-modal Blind Baseline (Phase 1) to quantify any distri- bution shifts inherent in the data, without accessing the target LALM. W e then perform the primary MIA A uditing on the model (Phase 2) and, crucially , correlate its success with the blind baseline’ s performance. This diagnostic step allows us to disentangle spurious MIA success dri ven by data artifacts from genuine model memorization. Finally , for cases of validated memorization, we conduct Modality Disentanglement exper- iments (Phase 3) to characterize the memory’ s nature, specifi- cally testing for the existence of cr oss-modal binding . These experiments are designed to test the hypothesis that priv acy leakage in LALMs is driv en by cr oss-modal binding , where memorization is only triggered when the model encoun- ters the precise pairing of original acoustic features and their corresponding textual sequences. 2.1. Inference Protocol: T wo-Stage Generation T o simulate a realistic gray-box auditing scenario where precise ground-truth transcripts used during training may be unav ail- able, we adopt a T wo-Stage Generation protocol: Stage 1: A utonomous Decoding. Giv en an audio input x , the target LALM performs greedy decoding to produce a self- generated output sequence ˆ y . Stage 2: Self-Conditioned Scoring. W e treat ˆ y as pseudo- ground-truth and re-run the forward pass to obtain token-le vel logits corresponding to ˆ y . Membership metrics are then com- puted based on these self-conditioned probabilities. 2.2. Phase 1: Multi-modal Bias A udit Protocol T o prev ent spurious MIA success and ensure the v alidity of pri- vac y assessments, we first propose a systematic Multi-modal Blind Baseline framew ork. This protocol identifies distribu- tional shortcuts that models may e xploit across tw o hierarchical lev els: (1) Inter-dataset Shift , where mismatches between dis- parate data sources cause MIA metrics to act as tri vial domain classifiers; and (2) Intra-dataset Shift , where subtle statistical discrepancies arise between the training and test splits of the same dataset. In the audio domain, such shifts are particularly common, as train and test splits are often constructed using dif- ferent curation or preprocessing pipelines; for example, in Gi- gaSpeech [24], transcription standards lead to systematic dif- ferences between splits. Our protocol quantifies these shifts by training blind Logistic Regression classifiers on data-intrinsic features without accessing the LALMs. T o isolate the contribu- tion of dif ferent sources of distribution shift, we construct three blind baselines at increasing lev els of modality coverage: • Metadata : Structural statistics including audio duration, file size, and word/character counts. • T ext : Utterance-level TF-IDF representations (unigrams and bigrams). • Acoustic : Fixed-dimensional utterance-level descriptors ob- tained by aggregating frame-wise lo w-level acoustic features, including MFCCs, spectral statistics (centroid, bandwidth, rolloff), pitch, energy (RMS), and zero-crossing rate. These features are specifically designed to capture recording con- ditions, channel characteristics, and dataset-specific acoustic artifacts rather than linguistic content. All features are aggregated into fixed-dimensional, sample- lev el representations. W e adopt simple Logistic Regression classifiers to ensure that strong blind performance reflects salient distributional artif acts rather than classifier capacity . W e utilize the Pearson ( r ) correlation coefficient between these blind scores and the subsequent model-based MIA indica- tors (defined in Section 2.3) as a diagnostic reference. 1 Rather than applying a fixed threshold, we ar gue that a high Blind Baseline A UC, when accompanied by a non-negligible posi- tiv e correlation with the model’ s MIA scores, provides strong empirical e vidence that the detected membership is likely con- founded by distributional shortcuts. In such cases, the reported MIA performance may reflect the LALM’ s sensitivity to data- intrinsic artifacts rather than genuine memorization, thereby ne- cessitating a more cautious interpretation of the priv acy risks. 2.3. Membership Inference Indicators W e compute several complementary metrics from the model’ s output probabilities to serve as membership indicators: • Perplexity (PPL) : The av erage negati ve log-likelihood (NLL) of the sequence ˆ y [25]. • Shannon Entr opy : The average entropy of the predicted to- ken distrib utions, measuring predictive certainty [26]. • Min-k% / Max-k% Prob : A verage NLL of the k % tokens with lowest and highest probabilities, respecti vely [27, 28]. • Max-R ´ enyi MIA : Measures probability mass concentration via R ´ enyi di vergence with orders α ∈ { 0 , 1 , 2 , ∞} [17]. • Zlib Ratio : The NLL normalized by the zlib compression size of the text [25]. • Max Probability Gap : The sequence-av eraged gap between top-1 and top-2 token probabilities, capturing over -confident predictions indicativ e of memorized tokens [17]. Follo wing [9], we concatenate these metrics into a feature vec- tor and train a Logistic Regression classifier using stratified 10- fold cross-validation within each dataset to produce member- ship probabilities. 2.4. Modality Disentanglement Protocol For datasets passing the bias audit (Blind A UC ≈ 0 . 5 ), we probe the mechanistic nature of memorization via modality dis- entanglement. W e ask whether the membership signal arises 1 W e also compute Spearman rank correlations as a robustness check and observe highly consistent diagnostic conclusions. T able 1: Source Diagnosis via Corr elation Analysis. W e com- par e MIA performance of AF3 and MF . Blind A UC reflects intrinsic dataset distribution. P earson corr elation coefficients ( r AF 3 /r M F ) indicate alignment between MIA performance and blind baselines. Dataset MIA A UC Blind Baseline: AUC ( r AF 3 /r M F ) AF3 MF Metadata T ext Acoustic Gigaspeech 87.6 90.5 71.9 (.59/.55) 75.6 (.41/.50) 78.9 (.52/.55) SPGISpeech 51.9 50.7 51.6 (.35/.33) 50.0 (-.01/.06) 52.0 (0.01/0.02) Librispeech 93.8 70.9 100.0 † (.75/.35) 61.1 (.16/.11) 99.8 (.78/.34) T edlium 70.0 68.9 64.9 (.48/.53) 85.7 (.24/.41) 98.7 (.34/.30) V oxPopuli 62.0 68.1 55.1 (.24/.09) 60.3 (.10/.13) 66.7 (.07/.08) Clotho 52.4 48.9 51.1 (.01/.09) 47.1 (-.01/.00) 48.2 (.01/-.01) CochlScene 57.3 60.4 51.1 (.37/.21) 47.1 (.34/.42) 48.2 (.19/0.14) Nsynth 62.3 62.1 51.1 (-.03/-.09) 47.1 (0.08/-.09) 48.2 (.21/0.22) † Near-perfect baseline A UC indicates trivial train/test separability . from cross-modal binding , namely the memorization of a spe- cific dependency between an acoustic realization and its tex- tual content, rather than a simple textual prior . W e ev aluate the model under four configurations: • Original : Full acoustic and textual input, representing the matched training instance. • T ext-Only (Silence) : Audio input is replaced by a zero- tensor , isolating the textual prior leakage. • Noise-Only : Audio is replaced by Gaussian noise to check the impact of non-semantic acoustic activ ation. • Acoustic Resynthesis : T o isolate the impact of instance- specific acoustic features such as unique speaker identity or specific recording en vironments, we replace the original au- dio with a version synthesized from the textual content. F or speech-centric datasets, we employ T ext-to-Speech (TTS) ; for en vironmental sound datasets, we utilize T ext-to-A udio (TT A) generation. By comparing these conditions, we can quantify the contribu- tion of instance-specific acoustic features to the ov erall mem- bership signal. If the MIA performance collapses upon resyn- thesis or silence, it confirms that the model’ s memory is an- chored to the unique binding of a specific speaker’ s voice to their utterance. 3. Experimental Setup 3.1. T arget Models While numerous state-of-the-art LALMs ha ve been pro- posed [1–7], the majority of these models do not offer public access to their pre-training data, making precise membership auditing infeasible. W e therefore evaluate our auditing proto- col on two models with fully open-access resources: A udio- Flamingo 3 (AF3) [5] and Music Flamingo (MF) [23]. While AF3 is designed for general-purpose audio reasoning, Music Flamingo is specifically scaled and optimized for complex mu- sic understanding and captioning tasks. The fully open-access weights and transparent pre-training data splits of both models provide a unique opportunity to precisely define membership at the foundation stage. This allows us to audit memorization di- rectly on the primary models themselves, rather than relying on computationally expensi ve surrogate models or opaque black- box approximations. 3.2. Dataset Selection W e ev aluate our auditing framew ork on eight datasets spanning three representati ve audio tasks, selected to cover div erse acous- tic conditions, semantic structures, and data curation pipelines. • ASR : LibriSpeech , GigaSpeech , TED-LIUM , V oxPopuli , and SPGISpeech , ranging from clean read speech to large- scale, noisy , and multi-speaker recordings, enabling analysis of both intra- and inter-dataset distrib ution shifts [24, 29–32]. • A udio Captioning : Clotho and CochlScene , which empha- size non-linguistic acoustic semantics such as sound events and scenes, complementing speech-centric benchmarks [33, 34]. • Music Synthesis : NSynth , a musical instrument dataset used to analyze memorization in non-speech audio [35]. Members and non-members are defined by official train- ing and test splits, respectiv ely . For each dataset, we randomly sample a balanced cohort of 2000 to 5000 instances (compris- ing 1000–2500 pairs) to ensure statistical rigor and a consistent scale for our sample-lev el analysis. 3.3. Implementation Details Blind Baseline The classifiers utilize the three feature sets de- scribed in Section 2.2, including utterance-level acoustic repre- sentations, TF-IDF textual features, and metadata statistics. MIA Indicator For membership inference, as discussed in Section 2.3, we aggregate v arious membership inference indi- cators into a 30-dimensional feature vector , where each dimen- sion represents a distinct metric. Follo wing [9], we sweep k ∈ { 0 . 05 , 0 . 1 , . . . , 0 . 6 } for Min-k% to capture low-probability to- kens, and use α ∈ { 0 , 1 , 2 , ∞} for Max-R ´ enyi to quantify prob- ability concentration. T raining Protocol Both the aggregated MIA and blind base- line classifiers are trained using stratified Logistic Regression. W e intentionally select a linear classifier to ensure that strong performance reflects salient distributional artifacts or genuine memorization rather than the capacity of the auditor . W e em- ploy 10-fold cross-validation within each dataset to ensure ev- ery sample is ev aluated as a test instance while strictly prev ent- ing cross-sample information leakage. Acoustic Resynthesis Models T o disentangle memorization effects from surface-le vel acoustic cues, we conduct an acous- tic resynthesis ablation that replaces original audio signals with synthesized counterparts while preserving linguistic or seman- tic content. For speech datasets such as V oxPopuli and SPGIS- peech, we use Cosyvoice-0.5B [36] with a standardized ref- erence voice, where a small set of audio samples is selected from LibriSpeech as speaker reference audio to remov e orig- inal speaker identities. For audio captioning datasets includ- ing Clotho , we use T angoFlux [37] to generate synthetic soundscapes conditioned on the provided captions. Due to the highly specialized nature of musical instrument synthesis and the brevity of labels, NSynth is excluded from the resynthe- sis ablation. For each TTS or TT A setting, we generate three independently synthesized audio realizations per sample using different reference audio or random seeds, and report the final membership performance as the average A UC across the three runs to reduce variance induced by stochastic generation. 3.4. Evaluation Metrics T o ev aluate the effecti veness of the membership inference at- tacks, we treat the task as a sample-lev el binary classification problem. For each sample i in a dataset D = D mem ∪ D non , we compute its membership score S i using the aggregated classi- fier or indi vidual metrics. Membership performance is reported via the Area Under the Receiver Operating Characteristic curve (A UC-R OC) , which measures the probability that a ran- Figure 2: Cr oss-dataset membership infer ence heatmap. Di- agonal cells r epresent standar d intra-dataset MIA (T rain vs. T est). Off-diagonal cells repr esent membership-neutral pair- ings (e.g ., Dataset A T rain vs. Dataset B T rain), wher e near- perfect A UCs r eflect domain shifts rather than memorization. domly chosen member sample receiv es a higher score than a non-member . An A UC of 0.5 indicates random guessing, while 1.0 indicates perfect detection. 4. Results and Discussion 4.1. Spurious MIA Success and Distribution Shifts A prerequisite for a valid Membership Inference Attack is to ensure that observed success is not merely an artifact of dis- tribution shifts between member and non-member sets. There- fore, this section’ s primary objectiv e is to in vestigate whether high MIA performance reflects genuine model memorization or is driven by such distributional shortcuts. By identifying and controlling for these confounding factors, we aim to define a “clean dataset” that enables a fair and accurate ev aluation of true memorization in subsequent experiments. Inter -dataset Bias (Domain Shortcuts) The most severe form of spurious success occurs when membership is defined across mismatched data sources. T o isolate domain shift from memo- rization, we construct a membership-neutral ev aluation matrix. As shown in Figure 2, the off-diagonal cells represent pairings in which both subsets share the same ground-truth training status , such as comparisons between training subsets drawn from different datasets, including Spgispeech and Clotho. In an ideal, bias-free audit, such comparisons should yield an A UC of ≈ 0 . 5 . Howe ver , our results reveal that these membership-neutral pairings consistently yield near-perfect separation, with A UCs reaching 1.0 . This confirms that MIA metrics under mismatched conditions act purely as do- main classifiers , capturing broad acoustic and linguistic sig- natures that reflect systematic differences in recording environ- ments and speaking styles across datasets, rather than reflecting training-set memorization in the model. Intra-dataset Bias (The Hidden T rap) Subtler biases exist within training and test splits of the same dataset. T able 1 shows that widely used benchmarks such as LibriSpeech (A UC 93.8) and TED-LIUM (A UC 70.0) yield high MIA scores. Howev er, our Multi-modal Blind Baseline re veals these are largely spu- rious: LibriSpeech’ s acoustic features alone reach 99.8 A UC without model access, with strong Pearson correlation to MIA scores ( r = 0 . 78 for M 1 ). This suggests that much of the ob- served MIA signal is dri ven by acoustic shortcuts from system- atic train-test differences, rather than genuine memorization. T able 2: Modality Disentanglement (Sample-level AUC) on Rel- atively Clean Datasets for M 1 (AF3) and M 2 (MF). Results show a consistent collapse in MIA performance when acoustic or textual conte xt is disrupted across both models. Dataset Original Silence Noise Synthesized M 1 M 2 M 1 M 2 M 1 M 2 M 1 M 2 V oxPopuli 62.0 68.1 53.9 51.6 52.1 51.6 48.4 49.8 SPGISpeech 51.9 50.7 49.4 50.3 50.3 51.2 49.8 49.4 Clotho 57.3 48.9 51.2 49.2 49.2 51.7 47.9 50.0 Nsynth 62.3 62.1 50.0 48.1 48.8 49.0 - - 4.2. Robustness on Distribution-Matched Datasets T o establish a trustworthy audit, we focus on datasets where the Blind Baseline A UC ≈ 0 . 5 ( SPGISpeech , Clotho ). Un- der these rigorously controlled conditions, MIA performance collapses to near-random (A UC 50.7–52.4). This suggests that the ev aluated LALMs exhibit strong sample-lev el privac y ro- bustness under distrib ution-matched conditions. The lack of verbatim memorization indicates that the div erse acoustic re- alizations of a giv en text act as a natural regularizer , preventing the model from ov erfitting to specific tok en sequences at the in- stance level. These findings indicate that current MIA methods perform poorly on datasets without distributional confounds. 4.3. Cross-modal Binding While overall scores are low , modality disentanglement on the clean V oxPopuli dataset rev eals the nature of LALM memory . As shown in T able 2, the membership signal in the Original con- dition collapses when modalities are decoupled. Replacing the original audio with Silence , Noise , or TTS-Resynthesis causes MIA A UCs to drop to near -random levels ( ≈ 50% ) across both models. These results suggest that LALMs do not memorize training data as isolated textual sequences or standalone acous- tic fingerprints. Instead, memorization is instance-specific and cross-modal , emer ging only from the binding between a vocal identity and its textual content. Privacy Implications Our findings refine the threat model for audio pri vacy . The dominant risk is not the reproduction of ver - batim text, b ut linkage leakage : the model’ s ability to associate a specific individual with a specific utterance. Breaking this binding via v ocal anonymization or TTS of fers a practical miti- gation strategy for LALM training. 5. Conclusion W e conduct the first systematic inv estigation of Membership Inference Attacks (MIA) against Large Audio Language Mod- els (LALMs), uncovering that high attack performance is often merely an illusion dri ven by acoustic distribution shifts rather than genuine memorization. T o remedy this “validity crisis, ” we introduce a rigorous three-phase auditing protocol that care- fully controls for these shortcuts. Our framew ork re veals that true memorization is sparse and, most importantly , manifests as a cross-modal binding between a speaker’ s vocal identity and their specific utterances. This finding fundamentally redefines the LALM pri vac y threat, shifting the focus from text leakage to the association of voice with content. W e call for a new au- diting paradigm that moves beyond simple metrics to enable the dev elopment of truly robust priv acy-preserving models. 6. Generativ e AI Use Disclosure During the preparation of this work, the authors utilized se veral generativ e AI tools in both the manuscript writing and exper - imental implementation phases. For the manuscript, ChatGPT and Google Gemini were used for assistance with language edit- ing, rephrasing, and improving the clarity and conciseness of the text. For software dev elopment, GitHub Copilot and Cur- sor were employed to assist with code completion, boilerplate generation, and debugging. In all cases, these tools served in an assistive capacity . The core ideas, experimental design, analyses, and conclusions pre- sented in this paper are the original w ork of the human authors. All authors have revie wed the final manuscript and the asso- ciated code, and take full responsibility for the integrity and correctness of the entire work. 7. Acknowledgements W e gratefully acknowledge the National Center for High- Performance Computing (NCHC) of T aiwan for providing com- putational resources that supported this work. 8. Refer ences [1] S. Ghosh, Z. K ong, S. Kumar , S. Sakshi, J. Kim, W . Ping, R. V alle, D. Manocha, and B. Catanzaro, “ Audio flamingo 2: An audio-language model with long-audio understanding and e xpert reasoning abilities, ” 2025. [Online]. A v ailable: https://arxiv .org/abs/2503.03983 [2] C. Liu, M. Aljunied, G. Chen, H. P . Chan, W . Xu, Y . Rong, and W . Zhang, “Seallms-audio: Large audio- language models for southeast asia, ” 2025. [Online]. A vailable: https://arxiv .org/abs/2511.01670 [3] P . K. Rubenstein, C. Asawaroengchai, D. D. Nguyen, A. Bapna, Z. Borsos, F . de Chaumont Quitry , P . Chen, D. E. Badawy , W . Han, E. Kharitonov , H. Muckenhirn, D. Padfield, J. Qin, D. Rozenberg, T . Sainath, J. Schalkwyk, M. Sharifi, M. T . Ramanovich, M. T agliasacchi, A. T udor, M. V elimirovi ´ c, D. V incent, J. Y u, Y . W ang, V . Zayats, N. Zeghidour , Y . Zhang, Z. Zhang, L. Zilka, and C. Frank, “ Audiopalm: A large language model that can speak and listen, ” 2023. [Online]. A vailable: https://arxiv .org/abs/2306.12925 [4] K.-H. Lu, Z. Chen, S.-W . Fu, C.-H. H. Y ang, S.-F . Huang, C.-K. Y ang, C.-E. Y u, C.-W . Chen, W .-C. Chen, C. yu Huang, Y .-C. Lin, Y .-X. Lin, C.-A. Fu, C.-Y . Kuan, W . Ren, X. Chen, W .-P . Huang, E.-P . Hu, T .-Q. Lin, Y .-K. W u, K.-P . Huang, H.-Y . Huang, H.-C. Chou, K.-W . Chang, C.-H. Chiang, B. Ginsbur g, Y .-C. F . W ang, and H. yi Lee, “Desta2.5-audio: T oward general-purpose large audio language model with self- generated cross-modal alignment, ” 2025. [Online]. A vailable: https://arxiv .org/abs/2507.02768 [5] A. Goel, S. Ghosh, J. Kim, S. Kumar, Z. Kong, S. gil Lee, C.-H. H. Y ang, R. Duraiswami, D. Manocha, R. V alle, and B. Catanzaro, “ Audio flamingo 3: Adv ancing audio intelligence with fully open large audio language models, ” 2025. [Online]. A v ailable: https://arxiv .org/abs/2507.08128 [6] Y . Chu, J. Xu, X. Zhou, Q. Y ang, S. Zhang, Z. Y an, C. Zhou, and J. Zhou, “Qwen-audio: Advancing univ ersal audio understanding via unified large-scale audio-language models, ” 2023. [Online]. A v ailable: https://arxiv .org/abs/2311.07919 [7] C. T ang, W . Y u, G. Sun, X. Chen, T . T an, W . Li, L. Lu, Z. Ma, and C. Zhang, “Salmonn: T ow ards generic hearing abilities for large language models, ” arXiv pr eprint arXiv:2310.13289 , 2023. [8] N. Carlini, F . T ramer, E. W allace, M. Jagielski, A. Herbert-V oss, K. Lee, A. Roberts, T . Brown, D. Song, U. Erlingsson, A. Oprea, and C. Raffel, “Extracting training data from large language models, ” 2021. [Online]. A vailable: https: //arxiv .org/abs/2012.07805 [9] P . Maini, H. Jia, N. Papernot, and A. Dziedzic, “Llm dataset inference: Did you train on my dataset?” 2024. [Online]. A v ailable: https://arxiv .org/abs/2406.06443 [10] J. Mattern, F . Mireshghallah, Z. Jin, B. Sch ¨ olkopf, M. Sachan, and T . Berg-Kirkpatrick, “Membership inference attacks against language models via neighbourhood comparison, ” 2023. [Online]. A v ailable: https://arxiv .org/abs/2305.18462 [11] M. Duan, A. Suri, N. Mireshghallah, S. Min, W . Shi, L. Zettlemoyer , Y . Tsvetko v , Y . Choi, D. Ev ans, and H. Hajishirzi, “Do membership inference attacks work on large language models?” 2024. [Online]. A vailable: https: //arxiv .org/abs/2402.07841 [12] W . Fu, H. W ang, C. Gao, G. Liu, Y . Li, and T . Jiang, “Member- ship inference attacks against fine-tuned large language models via self-prompt calibration, ” vol. 37, 2024, pp. 134 981–135 010. [13] R. W en, Z. Li, M. Backes, and Y . Zhang, “Membership inference attacks against in-context learning, ” in Pr oceedings of the 2024 on ACM SIGSAC Conference on Computer and Communications Security , 2024, pp. 3481–3495. [14] F . Galli, L. Melis, and T . Cucinotta, “Noisy neighbors: Efficient membership inference attacks against llms, ” in Proceedings of the F ifth W orkshop on Privacy in Natural Language Pr ocessing , 2024, pp. 1–6. [15] Z. Song, S. Huang, and Z. Kang, “Em-mias: Enhancing member- ship inference attacks in lar ge language models through ensemble modeling, ” in ICASSP 2025-2025 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP) . IEEE, 2025, pp. 1–5. [16] M. Zawalski, M. Boubdir, K. Bałazy , B. Nushi, and P . Ribalta, “Detecting data contamination in LLMs via in-context learning, ” in The F ourteenth International Conference on Learning Repr esentations , 2026. [Online]. A vailable: https://openreview . net/forum?id=YlpaaYxx4t [17] Z. Li, Y . W u, Y . Chen, F . T onin, E. A. Rocamora, and V . Cevher , “Membership inference attacks against large vision-language models, ” 2024. [Online]. A vailable: https: //arxiv .org/abs/2411.02902 [18] Y . Hu, Z. Li, Z. Liu, Y . Zhang, Z. Qin, K. Ren, and C. Chen, “Membership inference attacks against vision-language models, ” 2025. [Online]. A v ailable: https://arxiv .org/abs/2501.18624 [19] W .-C. Tseng, W .-T . Kao, and H. yi Lee, “Membership inference attacks against self-supervised speech models, ” 2022. [Online]. A v ailable: https://arxiv .org/abs/2111.05113 [20] G. Chen, Y . Zhang, and F . Song, “Slmia-sr: Speaker- lev el membership inference attacks against speaker recognition systems, ” in Pr oceedings 2024 Network and Distributed System Security Symposium , ser . NDSS 2024. Internet Society , 2024. [Online]. A vailable: http://dx.doi.org/10.14722/ndss.2024. 241323 [21] D. Das, J. Zhang, and F . T ram ` er , “Blind baselines beat membership inference attacks for foundation models, ” 2025. [Online]. A v ailable: https://arxiv .org/abs/2406.16201 [22] H. Puerto, M. Gubri, S. Y un, and S. J. Oh, “Scaling up member - ship inference: When and how attacks succeed on large language models, ” in Findings of the Association for Computational Lin- guistics: NAA CL 2025 , 2025, pp. 4165–4182. [23] S. Ghosh, A. Goel, L. Koroshinadze, S. gil Lee, Z. Kong, J. F . Santos, R. Duraiswami, D. Manocha, W . Ping, M. Shoeybi, and B. Catanzaro, “Music flamingo: Scaling music understanding in audio language models, ” 2025. [Online]. A vailable: https: //arxiv .org/abs/2511.10289 [24] G. Chen, S. Chai, G.-B. W ang, J. Du, W .-Q. Zhang, C. W eng, D. Su, D. Povey , J. Trmal, J. Zhang, M. Jin, S. Khudanpur, S. W atanabe, S. Zhao, W . Zou, X. Li, X. Y ao, Y . W ang, Z. Y ou, and Z. Y an, “GigaSpeech: An Ev olving, Multi-Domain ASR Cor- pus with 10,000 Hours of T ranscribed Audio, ” in Interspeech 2021 , 2021, pp. 3670–3674. [25] S. Y eom, I. Giacomelli, M. Fredrikson, and S. Jha, “Priv acy risk in machine learning: Analyzing the connection to overfitting, ” in 2018 IEEE 31st Computer Security F oundations Symposium (CSF) , 2018, pp. 268–282. [26] C. E. Shannon, “ A mathematical theory of communication, ” The Bell System T echnical Journal , v ol. 27, no. 3, pp. 379–423, 1948. [27] W . Shi, A. Ajith, M. Xia, Y . Huang, D. Liu, T . Blevins, D. Chen, and L. Zettlemoyer, “Detecting pretraining data from large language models, ” 2024. [Online]. A v ailable: https://arxiv .org/abs/2310.16789 [28] J. Zhang, J. Sun, E. Y eats, Y . Ouyang, M. Kuo, J. Zhang, H. F . Y ang, and H. Li, “Min-k%++: Improved baseline for detecting pre-training data from lar ge language models, ” arXiv preprint arXiv:2404.02936 , 2024. [29] V . Panayotov , G. Chen, D. Pov ey , and S. Khudanpur , “Lib- rispeech: An asr corpus based on public domain audio books, ” in 2015 IEEE International Confer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) , 2015, pp. 5206–5210. [30] F . Hernandez, V . Nguyen, S. Ghannay , N. T omashenko, and Y . Es- tev e, “T ed-lium 3: T wice as much data and corpus repartition for experiments on speaker adaptation, ” in International conference on speech and computer . Springer, 2018, pp. 198–208. [31] C. W ang, M. Riviere, A. Lee, A. W u, C. T alnikar, D. Haziza, M. W illiamson, J. Pino, and E. Dupoux, “VoxPopuli: A lar ge- scale multilingual speech corpus for representation learning, semi-supervised learning and interpretation, ” in Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Confer ence on Natural Language Pr ocessing (V olume 1: Long P apers) , C. Zong, F . Xia, W . Li, and R. Navigli, Eds. Online: Association for Computational Linguistics, Aug. 2021, pp. 993–1003. [Online]. A v ailable: https://aclanthology .org/2021.acl- long.80/ [32] P . K. O’Neill, V . Lavrukhin, S. Majumdar , V . Noroozi, Y . Zhang, O. Kuchaie v , J. Balam, Y . Dovzhenko, K. Freyberg, M. D. Shul- man, B. Ginsburg, S. W atanabe, and G. Kucsko, “SPGISpeech: 5,000 Hours of Transcribed Financial Audio for Fully Formatted End-to-End Speech Recognition, ” in Interspeech 2021 , 2021, pp. 1434–1438. [33] K. Drossos, S. Lipping, and T . V irtanen, “Clotho: An audio cap- tioning dataset, ” in ICASSP 2020-2020 IEEE International Con- fer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2020, pp. 736–740. [34] I.-Y . Jeong and J. Park, “Cochlscene: Acquisition of acoustic scene data using cro wdsourcing, ” in 2022 Asia-P acific Signal and Information Pr ocessing Association Annual Summit and Confer- ence (APSIP A ASC) . IEEE, 2022, pp. 17–21. [35] J. Engel, C. Resnick, A. Roberts, S. Dieleman, M. Norouzi, D. Eck, and K. Simonyan, “Neural audio synthesis of musical notes with wa venet autoencoders, ” in International confer ence on machine learning . PMLR, 2017, pp. 1068–1077. [36] Z. Du, Y . W ang, Q. Chen, X. Shi, X. Lv , T . Zhao, Z. Gao, Y . Y ang, C. Gao, H. W ang, F . Y u, H. Liu, Z. Sheng, Y . Gu, C. Deng, W . W ang, S. Zhang, Z. Y an, and J. Zhou, “Cosyvoice 2: Scalable streaming speech synthesis with lar ge language models, ” 2024. [Online]. A v ailable: https://arxiv .org/abs/2412.10117 [37] C.-Y . Hung, N. Majumder , Z. K ong, A. Mehrish, A. A. Bagherzadeh, C. Li, R. V alle, B. Catanzaro, and S. Poria, “T an- goflux: Super fast and faithful text to audio generation with flow matching and clap-ranked preference optimization, ” arXiv pr eprint arXiv:2412.21037 , 2024.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment