Marco DeepResearch: Unlocking Efficient Deep Research Agents via Verification-Centric Design

Deep research agents autonomously conduct open-ended investigations, integrating complex information retrieval with multi-step reasoning across diverse sources to solve real-world problems. To sustain this capability on long-horizon tasks, reliable v…

Authors: Bin Zhu, Qianghuai Jia, Tian Lan

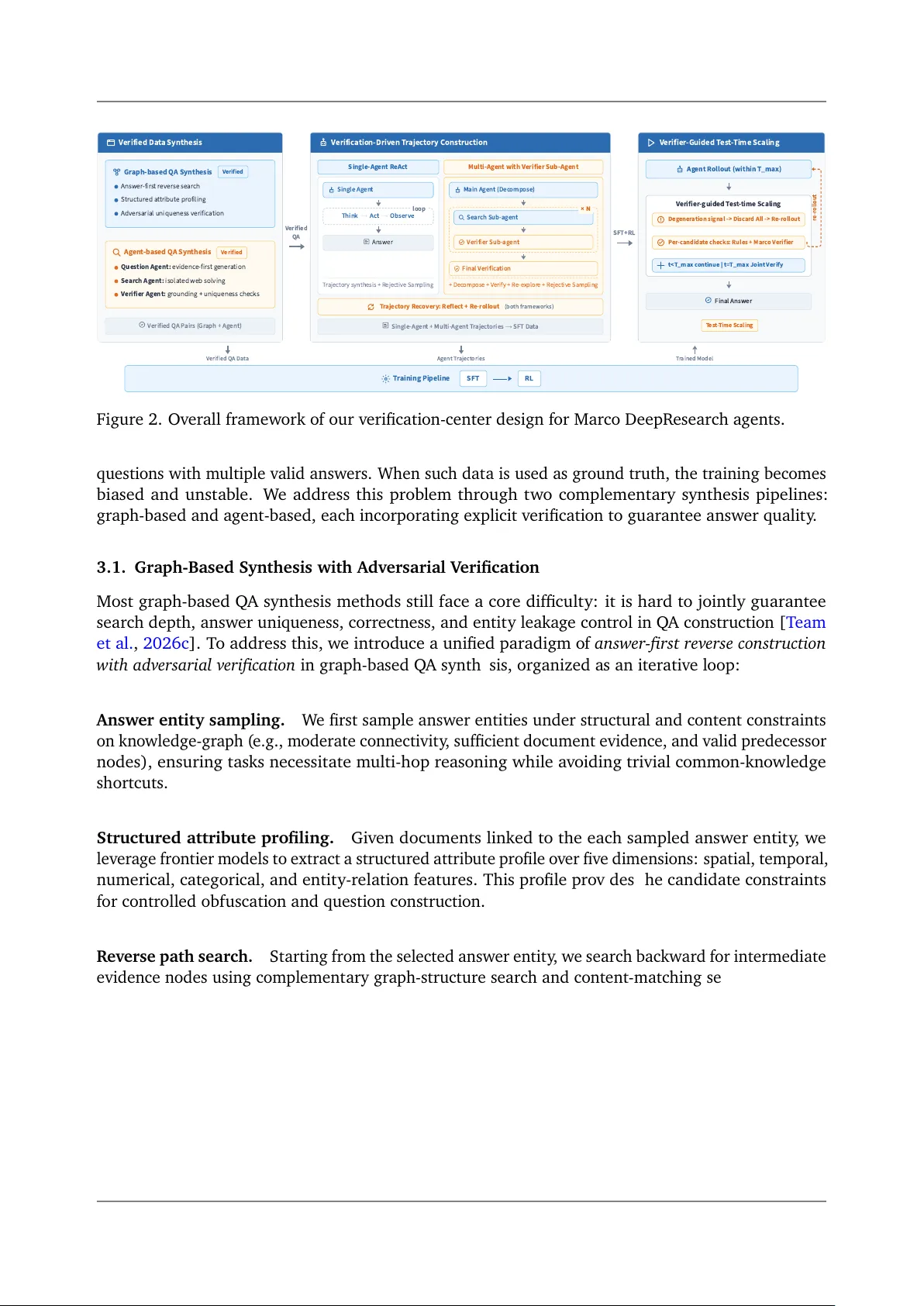

2026-3-31

Bin Zhu†, Qianghuai Jia†, Tian Lan†, Junyang Ren†, Feng Gu†, Feihu Jiang†, Longyue Wang★, Zhao Xu, Weihua Luo

Alibaba International Digital Commerce

★ Corresponding Author: Longyue Wang
† Equal Contribution

Abstract

Deep research agents autonomously conduct open-ended investigations, integrating complex information retrieval with multi-step reasoning across diverse sources to solve real-world problems. To sustain this capability on long-horizon tasks, reliable verification is critical during both training and inference. A major bottleneck in existing paradigms stems from the lack of explicit verification mechanisms in QA data synthesis, trajectory construction, and test-time scaling. Errors introduced at each stage propagate downstream and degrade the overall agent performance. To address this, we present Marco DeepResearch, a deep research agent optimized with a verification-centric framework design at three levels: (1) QA Data Synthesis: We introduce verification mechanisms to graph-based and agent-based QA synthesis to control question difficulty while ensuring answers are unique and correct; (2) Trajectory Construction: We design a verification-driven trajectory synthesis method that injects explicit verification patterns into training trajectories; and (3) Test-time scaling: We use Marco DeepResearch itself as a verifier at inference time and effectively improve performance on challenging questions. Extensive experimental results demonstrate that our proposed Marco DeepResearch agent significantly outperforms 8B-scale deep research agents on most challenging benchmarks, such as BrowseComp and BrowseComp-ZH.
Crucially, under a maximum budget of 600 tool calls, Marco DeepResearch even surpasses or approaches several 30B-scale agents, such as Tongyi DeepResearch-30B.

https://github.com/AIDC-AI/Marco-DeepResearch

© 2026 Alibaba. All rights reserved

1. Introduction

Figure 1. Benchmark performance of our proposed Marco DeepResearch 8B-scale agent. Panels: BrowseComp, BrowseComp-ZH, xBench-DeepSearch-2510, and GAIA-text-only, each comparing Marco DeepResearch (Ours, 8B) with ≤8B agents, ≈30B agents, and foundation models. Baseline names: Miro = MiroThinker, RE-TRAC = RE-TRAC-4B, WebExp = WebExplorer-8B-RL, CPM = AgentCPM-Explore-4B, DS = DeepSeek.

Large language models (LLMs) have enabled tool-augmented agents that can autonomously reason and interact with external environments [Team et al., 2025b, Huang et al., 2025]. Within this line of work, deep research agents [OpenAI, 2025, Google, 2024] have attracted broad attention: proprietary systems such as OpenAI Deep Research [OpenAI, 2025] and Gemini Deep Research [Google, 2024] demonstrate exceptional information-seeking capabilities for solving complex tasks in real-world scenarios, while open-source systems like MiroThinker [Team et al., 2025a], Tongyi DeepResearch [Team et al., 2025b], and AgentCPM-Explore [Chen et al., 2026a] have rapidly narrowed the gap and in some settings matched or surpassed proprietary alternatives [Mialon et al., 2023, Wei et al., 2025, Phan et al., 2025]. This progress is largely driven by advances in data synthesis [Li et al., 2025a, Tao et al., 2025], reinforcement learning [Shao et al., 2024, Dong et al., 2025], and test-time scaling [Xu et al., 2026c, Li et al., 2025b].

Despite this progress, current deep research agents face a critical bottleneck: the lack of explicit verification across the following three essential stages, which leads to error propagation and degrades overall agent performance [Xu et al., 2026a, Lan et al., 2024a,b]: (1) QA Data Synthesis: most works synthesize QA samples from graph-based [Wu et al., 2025a] or agent-based web exploration [Xu et al., 2026b], but entity obfuscation, the most widely adopted technique in existing pipelines, often yields non-unique or incorrect answers [Team et al., 2026c], undermining supervision quality and propagating errors to downstream trajectory construction; (2) Trajectory construction: most existing works rely on strong teacher models to generate ReAct-style trajectories that can directly reach correct final answers, but these trajectories usually lack explicit verification [Yao et al., 2023]; as a result, trained agents tend to accept early low-quality results and under-explore high-value alternatives [Hu et al., 2025b, Wan et al., 2026a]; and (3) Test-time scaling: systems generally lack explicit verification for both intermediate steps and final answers during inference; consequently, flawed intermediate states and incorrect conclusions propagate unchecked, leading agents to accept early errors rather than triggering verifier-guided behaviors to effectively scale test-time compute [Wan et al., 2026a].

To address these verification gaps, we present Marco DeepResearch, an efficient 8B-scale deep research agent with three improvements across these stages: (1) Verified Data Synthesis (Section 3): we introduce explicit verification mechanisms to graph-based and agent-based QA synthesis methods so that question-answer pairs are carefully checked, ensuring their difficulty, uniqueness, and correctness; (2) Verification-Driven Trajectory Construction (Section 4): we introduce a specialized verifier agent to verify the answers of sub-tasks and the final answer using web search tools, injecting more explicit verification patterns into single-agent and multi-agent trajectories; and (3) Verifier-Guided Test-Time Scaling (Section 5): we use the Marco DeepResearch agent itself as a verifier and continue reasoning on challenging questions under a controlled compute budget, thereby more effectively unlocking the potential of test-time scaling.

With these optimizations, we synthesize high-quality trajectories and train the Marco DeepResearch agent based on the Qwen3-8B base model [Yang et al., 2025a], and evaluate it on six deep search benchmarks, including BrowseComp [Wei et al., 2025], BrowseComp-ZH [Zhou et al., 2025], and GAIA [Mialon et al., 2023].
Specifically, Marco DeepResearch outperforms 8B-scale deep research agents on most of the challenging deep search benchmarks, such as BrowseComp [Team et al., 2025a, Chen et al., 2026a]. Moreover, under a budget of up to 600 tool calls [Team et al., 2025a], our proposed Marco DeepResearch agent surpasses MiroThinker-v1.0-8B on BrowseComp-ZH and matches or exceeds several 30B-scale agents, including Tongyi DeepResearch-30B [Team et al., 2025b] and MiroThinker-v1.0-30B [Team et al., 2025a]. Ablation studies further confirm the contributions of our designs for optimizing Marco DeepResearch.

2. Related Work

Deep Research agent systems. LLM-based agent systems have demonstrated profound potential and versatility across a wide spectrum of complex tasks [Yang et al., 2025b, Lan et al., 2025, Ye et al., 2026, Team et al., 2025b, GLM-5-Team et al., 2026]. Building on this foundation, Deep Research has emerged as a frontier application, with commercial systems [OpenAI, 2025, Google, 2024] showcasing remarkable capabilities in conducting open-ended investigations and synthesizing comprehensive reports. At the very core of these sophisticated research systems lies Deep Search (i.e., agentic information seeking), the indispensable engine that enables agents to autonomously plan, navigate multi-turn web interactions, and extract reasoning-driven evidence [Lan et al., 2025, Huang et al., 2025, Lan et al., 2026, Wong et al., 2025]. However, despite rapid advancements and the emergence of open-source deep research agents [Team et al., 2025a,b], critical bottlenecks persist in data quality and in inference-time strategies over long horizons.

Data synthesis for deep research agents.
High-quality synthetic data is key to agentic search capabilities [Team et al., 2025b, Hu et al., 2025a, Tao et al., 2025]. Current approaches to agentic data synthesis mainly follow two paradigms: (1) graph-based methods traverse knowledge graphs to synthesize multi-hop QA data [Team et al., 2025a]; (2) agent-based methods let agents explore real web environments [Xu et al., 2026b] for data synthesis. Despite their differences, both paradigms face a common and fundamental challenge: automatically synthesizing difficult QA pairs with unique and correct answers [Team et al., 2026c]. To address this issue, we design a verification-driven method to improve QA data quality.

Trajectory construction. The ReAct paradigm [Yao et al., 2023] serves as the foundation of most current agentic systems. Recent works have improved upon ReAct through procedural planning [Wang et al., 2023], multi-agent orchestration [Wong et al., 2025, Lan et al., 2026], and context management [Li et al., 2025c]. However, these frameworks share a critical limitation: the absence of explicit verification during interactions [Wan et al., 2026a]. In long-horizon information seeking, agents must navigate massive search spaces where intermediate results are often noisy or misleading. Without a dedicated verification mechanism, agents are prone to accepting the first plausible-looking answer and terminating exploration prematurely, even when the result is incorrect [Wan et al., 2026a]. To address this limitation, we introduce explicit verification mechanisms for both intermediate search results and final answers, designed to effectively teach the model robust verification behaviors.

Test-time scaling. At test time, deep research agents solve complex problems through extensive interactive exploration of web environments.
Effective test-time scaling strategies can significantly enhance agent performance by allocating more computation at inference [Snell et al., 2024, Team et al., 2025a, Zhu et al., 2026, Team et al., 2026b]. While current test-time scaling approaches for agentic search primarily focus on multi-agent coordination [Lan et al., 2026] and context summarization [Wu et al., 2025b, Zhu et al., 2026], the role of explicit verification as a systematic test-time scaling strategy for trained deep search agents remains largely unexplored [Du et al., 2026, Wan et al., 2026b]. We address this gap by using Marco DeepResearch itself as a verifier at inference time, realizing effective test-time scaling by extending reasoning turns.

3. Verified Data Synthesis

In this section, we apply explicit verification to QA data synthesis to ensure quality and answer uniqueness while preserving question difficulty. High-quality QA data is essential for both trajectory synthesis and optimization. A common bottleneck in existing approaches is answer non-uniqueness: to increase question difficulty, most methods obfuscate entity information in multi-hop questions [Team et al., 2025a, Xu et al., 2026b], which inevitably introduces ambiguity and may result in low-quality questions with multiple valid answers. When such data is used as ground truth, training becomes biased and unstable. We address this problem through two complementary synthesis pipelines, graph-based and agent-based, each incorporating explicit verification to guarantee answer quality.

Figure 2. Overall framework of our verification-centric design for Marco DeepResearch agents. Panels: Verified Data Synthesis (graph-based and agent-based QA synthesis), Verification-Driven Trajectory Construction (single-agent ReAct and multi-agent with verifier sub-agent), Verifier-Guided Test-Time Scaling, and Training Pipeline (SFT, RL).

3.1. Graph-Based Synthesis with Adversarial Verification

Most graph-based QA synthesis methods still face a core difficulty: it is hard to jointly guarantee search depth, answer uniqueness, correctness, and entity leakage control in QA construction [Team et al., 2026c]. To address this, we introduce a unified paradigm of answer-first reverse construction with adversarial verification in graph-based QA synthesis, organized as an iterative loop:

Answer entity sampling.
We first sample answer entities from the knowledge graph under structural and content constraints (e.g., moderate connectivity, sufficient document evidence, and valid predecessor nodes), ensuring that tasks necessitate multi-hop reasoning while avoiding trivial common-knowledge shortcuts.

Structured attribute profiling. Given the documents linked to each sampled answer entity, we leverage frontier models to extract a structured attribute profile over five dimensions: spatial, temporal, numerical, categorical, and entity-relation features. This profile provides the candidate constraints for controlled obfuscation and question construction.

Reverse path search. Starting from the selected answer entity, we search backward for intermediate evidence nodes using complementary graph-structure search and content-matching search (attribute keyword matching). Then, strong LLMs are used to select a small set of high-quality, diverse intermediates (4 to 8) to form a robust multi-hop reasoning chain.

Adversarial answer uniqueness verification. After obtaining a searched path containing the answer entity, we apply an adversarial verification process, the key step for ensuring both the answer uniqueness and the difficulty of the synthesized QA pairs. It is an iterative three-role process with a Generator, an Attacker, and an Analyzer. The Generator first initializes 2–3 obfuscated constraints from the attribute profile; the Attacker then searches for counterexample entities that satisfy all current constraints but are not the target answer. If no counterexample is found and the constraint count is above a minimum threshold, the loop converges; otherwise, the Analyzer adds new discriminative constraints and returns control to the Attacker.
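
The Generator, Attacker, and Analyzer loop described above can be illustrated with a toy sketch. This is not the authors' implementation: in the paper the three roles are LLM-driven and search a knowledge graph and the web, whereas here entities are plain attribute dictionaries, constraints are exact-match `(attribute, value)` pairs, and all function names, `init_k`, and `min_constraints` are hypothetical choices made for illustration. Only the round cap of 10 is taken from the paper.

```python
from typing import Dict, List, Optional, Tuple

Entity = Dict[str, str]        # toy attribute profile: attribute -> value
Constraint = Tuple[str, str]   # (attribute, required value)


def satisfies(entity: Entity, constraints: List[Constraint]) -> bool:
    """True if the entity matches every (attribute, value) constraint."""
    return all(entity.get(attr) == value for attr, value in constraints)


def attacker(pool: Dict[str, Entity], target: str,
             constraints: List[Constraint]) -> List[str]:
    """Counterexamples: non-target entities that satisfy all constraints."""
    return [name for name, ent in pool.items()
            if name != target and satisfies(ent, constraints)]


def analyzer(pool: Dict[str, Entity], target: str,
             constraints: List[Constraint],
             counterexamples: List[str]) -> Optional[Constraint]:
    """Pick an unused target attribute. If counterexamples exist, the new
    constraint must exclude at least one of them (monotonic shrinkage)."""
    used = {attr for attr, _ in constraints}
    for attr, value in pool[target].items():
        if attr in used:
            continue
        if not counterexamples or any(
                pool[name].get(attr) != value for name in counterexamples):
            return (attr, value)
    return None  # nothing left that can discriminate the target


def verify_uniqueness(pool: Dict[str, Entity], target: str, init_k: int = 2,
                      min_constraints: int = 3,
                      max_rounds: int = 10) -> Optional[List[Constraint]]:
    """Return a constraint set that only the target satisfies, or None."""
    # Generator: seed with the first init_k attributes of the target profile.
    constraints = list(pool[target].items())[:init_k]
    for _ in range(max_rounds):
        counterexamples = attacker(pool, target, constraints)
        if not counterexamples and len(constraints) >= min_constraints:
            return constraints  # converged: target is unique in the pool
        new_constraint = analyzer(pool, target, constraints, counterexamples)
        if new_constraint is None:
            return None  # cannot separate the target: reject the sample
        constraints.append(new_constraint)
    return None  # round budget exhausted: reject the sample


toy_pool = {
    "A": {"country": "France", "century": "19th", "field": "physics", "award": "Nobel"},
    "B": {"country": "France", "century": "19th", "field": "chemistry", "award": "Nobel"},
    "C": {"country": "France", "century": "19th", "field": "physics", "award": "none"},
}
result = verify_uniqueness(toy_pool, "A")
```

In this toy pool the two seed constraints match all three entities, so the Analyzer has to add constraints until neither `B` nor `C` survives the Attacker. A sample whose target cannot be separated from a look-alike entity is rejected rather than kept with an ambiguous answer.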
This loop runs for at most 10 rounds. Its convergence follows a monotonicity principle: each round appends at least one new constraint, and each added constraint removes at least part of the counterexample set. As a result, the final constraint set provides high-confidence answer uniqueness for the target entity. Finally, after convergence, we convert the constraints into natural-language multi-hop questions and apply leakage checks to obscure key entities. Samples exhibiting leakage, or those solvable by frontier models without search and consistency checks, are excluded from our training set.

3.2. Agent-Based Web Exploration Synthesis

Compared with graph-based methods, agent-based QA synthesis significantly enhances data realism and broadens domain coverage [Team et al., 2025a, Xu et al., 2026b]. Motivated by these advantages, we also construct QA data via agent-based web exploration, empowering agents to autonomously navigate real-world web environments to formulate realistic, complex, multi-hop questions [Xu et al., 2026b, Tao et al., 2025]. Despite its benefits, this dynamic setting inevitably introduces common failure cases, such as factual hallucinations, ambiguous answers, and pseudo multi-hop questions that are easily bypassed by shortcut retrieval [Xu et al., 2026b]. To control these failures, we design a Generation–Execution–Verification loop with a question agent, a search agent, and a verification agent. The key design is to separate question construction from independent solving and then enforce strict third-party verification before data acceptance.

Evidence-first question construction. Instead of forward generation, the question agent first explores the open web to build an evidence graph and constructs questions from verified evidence.
During construction, it applies entity obfuscation and diverse reasoning topologies (e.g., convergent and conjunctive constraints) to reduce one-step shortcut matching while controlling target difficulty [Xu et al., 2026b].

Multi-stage quality verification. We employ a multi-stage filtering pipeline: a verification agent ensures factual consistency and evidence grounding, while a closed-book filter excludes questions solvable without retrieval. Remaining candidates are solved by an independent search agent, with final verification confirming that the reasoning depth aligns with the target difficulty and that no alternative valid answers satisfy the constraints [Xu et al., 2026b].

Diagnosis Iterative Optimization. When a sample fails at any stage, we do not simply discard it. Instead, the verification agent provides structured diagnostic feedback (e.g., under-constrained question, shortcut path, insufficient depth, or evidence conflict), and the question agent performs targeted updates on evidence selection, constraint design, and question structure. This diagnosis–revision loop continues until the sample jointly satisfies groundedness, uniqueness, and empirical difficulty requirements, improving data efficiency while maintaining strict quality control.

We combine the above two pipelines with additional synthesis strategies to maximize data diversity across problem types, domains, and difficulty levels. To validate data quality, we manually reviewed 100 samples. Fewer than 10% had a clear question-answer mismatch, while the remaining QA samples are valid but challenging. This result shows that our proposed methods can generate high-quality and challenging datasets for optimizing agents.

4. Verification-Driven Trajectory Construction

Single-agent ReAct is still the dominant trajectory synthesis recipe in current deep research systems [Li et al., 2025a, Team et al., 2025a]. However, this pipeline typically does not explicitly verify key intermediate results, so errors made in early steps can directly propagate and accumulate, degrading final performance. We therefore argue that high-quality trajectories should contain explicit verification patterns, including both intermediate checks for sub-task outputs and final checks for the proposed answer. Prior work [Wan et al., 2026b] on deep-search benchmarks also suggests that, for needle-in-a-haystack tasks, direct solving is difficult while answer verification conditioned on the question (or sub-question) is relatively reliable [Mialon et al., 2023, Wei et al., 2025]. To capitalize on this easy-to-verify property, we introduce two complementary designs for trajectory construction: multi-agent verified synthesis and verification-reflection re-rollout.

Multi-agent with Verification. As shown in Figure 2, we design a three-role framework with a main agent, a search sub-agent, and a verifier sub-agent. The main agent decomposes a complex problem into sub-tasks and aggregates sub-results into a final answer. The search sub-agent solves each sub-task. The verifier sub-agent then performs independent third-party validation with web tools for both sub-task outputs and the final proposed answer. If verification fails, the corresponding step is revised and re-executed, so trajectories explicitly record verification-driven correction behavior. Finally, the multi-agent trajectories are converted into single-agent ReAct-style trajectories for training [Li et al., 2025b].

Verification-Reflection Re-rollout on Failed Trajectories.
We also collect trajectories with incorrect final answers and invoke a verifier agent to diagnose failure causes and produce actionable feedback [Zhu et al., 2026]. Conditioned on this feedback, we re-roll out the failed trajectories and keep those that recover to correct answers.

5. Verifier-Guided Test-Time Scaling

Current test-time scaling for deep research agents mainly increases interaction rounds or rollout budget [Team et al., 2025a]. While this can improve coverage, blindly scaling turns often accumulates early tool errors and noisy intermediate conclusions, which reduces reliability on long-horizon search tasks [Team et al., 2026b]. Therefore, we propose Verifier-Guided Test-Time Scaling, which adds explicit verification to inference-time scaling and uses Marco DeepResearch itself as a verifier. By combining the Discard All context management strategy [DeepSeek-AI et al., 2025] with verification, we realize more effective test-time scaling under a fixed maximum interaction budget $T_{\max}$.

Discard All. During a rollout, once predefined degeneration signals are triggered (e.g., reaching max steps or failing to solve the question), we apply the Discard All context management strategy: remove accumulated tool-call history and intermediate reasoning outputs, keep only the original query and the system prompt, and restart from a fresh context. This reset mechanism allows the agent to explore new search paths and reduces error propagation along a single trajectory [DeepSeek-AI et al., 2025].

Verifier-Guided Test-Time Scaling. Whenever the agent produces a candidate answer, we conduct rule-based checks and agent-as-a-judge verification using Marco DeepResearch [Wan et al., 2026b, Zhuge et al., 2024]. If $t < T_{\max}$ [Team et al., 2025a, Chen et al., 2026b], the agent can continue exploring and propose additional candidates; each candidate is verified independently. When $t = T_{\max}$ or the process reaches a convergence condition, we perform Joint Verify over all candidates and generate the final answer for the question.

These two components are complementary: Discard All improves trajectory quality by resetting degraded contexts, while Verifier-Guided Test-Time Scaling improves answer quality. Together, they realize more effective test-time scaling without changing model parameters, and unlock stronger inference-time gains on hard questions.

6. Training Pipeline

The training pipeline consists of Supervised Fine-Tuning and Reinforcement Learning.

6.1. Supervised Fine-Tuning

Training objective. We train with token-level cross-entropy and apply a loss mask so that only assistant response tokens contribute to optimization:

$$\mathcal{L}_{\mathrm{SFT}}(\theta) = -\sum_{t=1}^{T} m_t \log P_\theta(x_t \mid x_{<t}),$$

where the mask is defined as

$$m_t = \begin{cases} 1, & t \in \mathcal{T}_{\text{assistant}}, \\ 0, & t \in \mathcal{T}_{\text{instruction}} \cup \mathcal{T}_{\text{tool\_response}}. \end{cases} \tag{1}$$

That is, instruction and tool response content are masked out.

6.2. Reinforcement Learning

Starting from the SFT checkpoint, we optimize the policy with Group Relative Policy Optimization (GRPO) [Shao et al., 2024], where updates are driven by within-group relative advantages. Concretely, for each query $q$, we sample a group of $G$ rollouts $\{o_i\}_{i=1}^{G}$ from the old policy $\pi_{\theta_{\text{old}}}$ and optimize

$$\mathcal{J}_{\mathrm{GRPO}}(\theta) = \mathbb{E}_{q \sim P(Q),\ \{o_i\}_{i=1}^{G} \sim \pi_{\theta_{\text{old}}}} \left[ \frac{1}{G} \sum_{i=1}^{G} \min\!\left( r_i(\theta)\,\hat{A}_i,\ \operatorname{clip}\!\left(r_i(\theta),\, 1-\epsilon,\, 1+\epsilon\right) \hat{A}_i \right) - \beta\, \mathbb{D}_{\mathrm{KL}}\!\left[\pi_\theta \,\|\, \pi_{\text{ref}}\right] \right] \tag{2}$$

where $r_i(\theta) = \frac{\pi_\theta(o_i \mid q)}{\pi_{\theta_{\text{old}}}(o_i \mid q)}$ denotes the importance sampling ratio.
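
As a rough numerical illustration of Eq. (2), the following sketch evaluates the clipped objective for a single group of rollouts, using the group-normalized advantage that the text defines next in Eq. (3). It is a sketch under stated assumptions, not the authors' training code: log-probabilities are taken at the sequence level, the standard deviation is the population one with a small `eps` added for numerical safety, the KL term is passed in as a precomputed scalar, and the function names and the default `clip_eps=0.2` are illustrative choices.

```python
import math
from statistics import mean, pstdev
from typing import List


def group_advantages(rewards: List[float], eps: float = 1e-8) -> List[float]:
    """Normalize rewards within one group: (r_i - mean) / std.
    eps (an assumption) avoids division by zero when all rewards are equal."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


def grpo_objective(logp_new: List[float], logp_old: List[float],
                   rewards: List[float], clip_eps: float = 0.2,
                   beta: float = 0.0, kl: float = 0.0) -> float:
    """Clipped surrogate averaged over the group, minus beta * KL."""
    advantages = group_advantages(rewards)
    terms = []
    for lp_new, lp_old, adv in zip(logp_new, logp_old, advantages):
        ratio = math.exp(lp_new - lp_old)  # importance sampling ratio
        clipped = min(max(ratio, 1.0 - clip_eps), 1.0 + clip_eps)
        terms.append(min(ratio * adv, clipped * adv))
    return mean(terms) - beta * kl


# Two rollouts for one query: the first is correct (reward 1) and has become
# twice as likely under the new policy, so its ratio is clipped to 1 + clip_eps.
example = grpo_objective(
    logp_new=[math.log(2.0), 0.0],
    logp_old=[0.0, 0.0],
    rewards=[1.0, 0.0],
)
```

With binary outcome rewards, a group in which every rollout is correct (or every rollout is wrong) yields zero advantages, so such groups contribute no gradient signal in this formulation.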
The relative advantage is computed by reward normalization within each group:

$$\hat{A}_i = \frac{r_i - \operatorname{mean}\!\left(\{r_j\}_{j=1}^{G}\right)}{\operatorname{std}\!\left(\{r_j\}_{j=1}^{G}\right)}, \tag{3}$$

where $r_i = r(q, o_i)$ denotes the reward of the $i$-th rollout (as opposed to the ratio $r_i(\theta)$). We adopt an outcome-based reward, and balance reward quality and computational cost by using a two-stage LLM-as-Judge pipeline: a fast primary judge (Qwen-Turbo-Latest) evaluates all samples, and uncertain or low-confidence cases are escalated to a secondary judge (GPT-4.1) for re-evaluation:

$$r(q, o) = \begin{cases} 1, & \text{if } J(o, a^*) = \text{correct}, \\ 0, & \text{otherwise}, \end{cases} \tag{4}$$

where $q$ is the input query, $o$ is the generated output, $a^*$ is the reference answer, and $J(\cdot)$ is the judging function.

7. Experimental Setup

Benchmarks. We evaluate our proposed Marco DeepResearch agent on six deep search benchmarks: (1) BrowseComp [Wei et al., 2025]: measuring an agent's information-seeking capability by navigating the web; (2) BrowseComp-ZH [Zhou et al., 2025]: a Chinese counterpart evaluating agentic information seeking; (3) GAIA (text-only) [Mialon et al., 2023]: real-world multi-step questions for general AI assistants; (4) xBench-DeepSearch [Chen et al., 2025]: deep search across diverse domains¹; (5) WebWalkerQA [Wu et al., 2025a]: multi-step web navigation and information extraction; and (6) DeepSearchQA [Gupta et al., 2026]: evaluating exhaustive answer set generation through multi-source retrieval, entity resolution, and stopping criteria reasoning.

Baselines. We compare against three groups of state-of-the-art baselines: (1) Foundation models with tools: GLM-4.7 [GLM-5-Team et al., 2026], Minimax-M2.1, DeepSeek-V3.2 [DeepSeek-AI et al., 2025], Kimi-K2.5 [Team et al., 2026a], Claude-Sonnet/Opus, OpenAI-o3, GPT-5 High, and Gemini-3-Pro; (2) Trained agents ≥30B-scale: Tongyi DeepResearch [Team et al., 2025b], WebSailor-v2 [Li et al., 2025a], MiroThinker-v1.0/v1.5/v1.7 [Team et al., 2025a, 2026b], DeepMiner [Tang et al., 2025], OpenSeeker-30B-SFT [Du et al., 2026], and SMTL [Chen et al., 2026b]; and (3) Trained agents ≤8B-scale: MiroThinker-v1.0-8B, WebExplorer-8B-RL [Liu et al., 2025], AgentCPM-Explore-4B [Chen et al., 2026a], and RE-TRAC-4B [Zhu et al., 2026].

Training Data. Our training corpus contains two sources: (1) Open-source data, including 2WikiMultihopQA [Ho et al., 2020], BeerQA [Qi et al., 2021], ASearcher [Gao et al., 2025], DeepDive [Lu et al., 2025], QA-Expert-Multi-Hop-QA [Mai, 2023], and REDSearcher [Chu et al., 2026]; and (2) Synthetic data, including (i) real-world e-commerce business-development datasets from our internal applications [Lan et al., 2025, Yang et al., 2025b, Lan et al., 2026] and (ii) data synthesized by our verified data synthesis pipeline. In detail, we collect over 12K synthesized graph-based and agent-based QA samples. Moreover, we hold out over 2K high-quality QA samples for RL training. Trajectory data are synthesized using frontier foundation models, including Qwen3.5-Plus, GLM-5, and Kimi-K2, among others, followed by data cleaning (e.g., tool-call error correction).

Implementation Details. We use Qwen3-8B as the backbone. YaRN is used to extend the context window to 128K. Supervised fine-tuning and RL are conducted on 64 A100 GPUs using Megatron [Shoeybi et al., 2019]. To improve system efficiency and stability, we use Redis-based caching for repeated queries/pages, exponential-backoff retries for transient failures, asynchronous non-blocking tool calls, asynchronous reward computation pipelined with model updates, and synchronized deployment of the WebVisit summary model as an independent training-cluster service [Chen et al., 2026a].
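
To make the caching and retry pattern mentioned above concrete, here is a minimal sketch of a tool wrapper that caches results for repeated queries and retries transient failures with exponential backoff. It is an illustration only: the paper uses Redis, while this sketch substitutes an in-memory dictionary so that it runs standalone; `TransientError`, `CachedTool`, `call_with_backoff`, and the delay parameters are hypothetical names and values, and the calls here are synchronous rather than asynchronous.

```python
import time
from typing import Callable, Dict, Optional


class TransientError(Exception):
    """Hypothetical marker for retryable failures (timeouts, rate limits)."""


def call_with_backoff(fn: Callable[[], str], *, max_retries: int = 4,
                      base_delay: float = 0.5, factor: float = 2.0,
                      sleep: Callable[[float], None] = time.sleep) -> str:
    """Call fn, waiting base_delay, base_delay*factor, ... between retries."""
    delay = base_delay
    for attempt in range(max_retries + 1):
        try:
            return fn()
        except TransientError:
            if attempt == max_retries:
                raise  # retries exhausted: surface the failure
            sleep(delay)
            delay *= factor
    raise RuntimeError("unreachable")


class CachedTool:
    """Wraps a fetch function with a result cache and backoff retries.
    The dict stands in for a Redis instance (an assumption of this sketch)."""

    def __init__(self, fetch: Callable[[str], str],
                 cache: Optional[Dict[str, str]] = None, **backoff) -> None:
        self.fetch = fetch
        self.cache: Dict[str, str] = {} if cache is None else cache
        self.backoff = backoff

    def __call__(self, query: str) -> str:
        if query in self.cache:
            return self.cache[query]  # repeated query: skip the network call
        result = call_with_backoff(lambda: self.fetch(query), **self.backoff)
        self.cache[query] = result
        return result


# Usage: a fetcher that fails twice before succeeding. Sleeps are recorded
# instead of executed so the example finishes instantly.
attempts = {"n": 0}


def flaky_fetch(query: str) -> str:
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise TransientError("timeout")
    return f"page:{query}"


recorded_sleeps = []
tool = CachedTool(flaky_fetch, sleep=recorded_sleeps.append)
first = tool("marco")
second = tool("marco")  # served from the cache, no new fetch
```

Only successful results are cached in this sketch, so a query that failed permanently is retried from scratch the next time it is issued.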
As for the evaluation details, we follow previous work [Team et al., 2025a] and evaluate the Marco DeepResearch agent under a maximum budget of 600 tool calls. Decoding uses temperature 0.7, top-p 0.95, and a maximum generation length of 16,384 tokens.

8. Experimental Results

8.1. Main Results

Table 1 demonstrates that Marco-DeepResearch-8B outperforms other 8B-scale open-source trained deep search agents on most benchmarks. Specifically, it attains the highest scores in its size category on exploration-heavy tasks, including BrowseComp (31.4), BrowseComp-ZH (47.1), WebWalkerQA (69.6), and xBench-DeepSearch (82.0 on the 2505 split and 42.0 on the 2510 split). On the remaining two benchmarks, it misses the top score on GAIA text-only by a marginal 0.5 points compared to RE-TRAC-4B (69.9 vs. 70.4), and it trails MiroThinker-v1.0-8B (36.7) and AgentCPM-Explore-4B (32.8) on DeepSearchQA (29.2). Notably, Marco-DeepResearch-8B approaches and on some benchmarks surpasses several competitive 30B-scale deep search agents; for example, it exceeds Tongyi-DR-30B on BrowseComp-ZH (47.1 vs. 46.7) and on xBench-DS-2505 (82.0 vs. 75.0). These results support the efficacy of our proposed QA data synthesis, trajectory construction methods, and test-time scaling strategy, and suggest that an optimized 8B model can narrow the performance gap with much larger models in complex web navigation and information-seeking tasks.

Table 1. Performance on deep search benchmarks. Best open-source results are bolded and the second-best are underlined. Results marked with ★ are evaluated using our implementation.

| Model | BrowseComp | BrowseComp-ZH | GAIA text-only | WebWalkerQA | xBench-DS-2505 | xBench-DS-2510 | DeepSearchQA |
|---|---|---|---|---|---|---|---|
| Foundation Models with Tools | | | | | | | |
| GLM-4.7 | 67.5 | 66.6 | 61.9 | – | 72.0 | 52.3 | – |
| Minimax-M2.1 | 62.0 | 47.8 | 64.3 | – | 68.7 | 43.0 | – |
| DeepSeek-V3.2 | 67.6 | 65.0 | 75.1 | – | 78.0 | 55.7 | 60.9 |
| Kimi-K2.5 | 74.9 | 62.3 | – | – | – | 46.0 | 77.1 |
| Claude-4-Sonnet | 12.2 | 29.1 | 68.3 | 61.7 | 64.6 | – | – |
| Claude-4.5-Opus | 67.8 | 62.4 | – | – | – | – | 80.0 |
| OpenAI-o3 | 49.7 | 58.1 | – | 71.7 | 67.0 | – | – |
| OpenAI GPT-5 High | 54.9 | 65.0 | 76.4 | – | 77.8 | 75.0 | 79.0 |
| Gemini-3.0-Pro | 59.2 | 66.8 | – | – | – | 53.0 | 76.9 |
| Trained Agents (≥ 30B) | | | | | | | |
| MiroThinker-v1.7-mini | 67.9 | 72.3 | 80.3 | – | – | 57.2 | 67.9 |
| MiroThinker-v1.5-235B | 69.8 | 71.5 | 80.8 | – | 77.1 | – | – |
| MiroThinker-v1.5-30B | 56.1 | 66.8 | 72.0 | – | 73.1 | – | – |
| MiroThinker-v1.0-72B | 47.1 | 55.6 | 81.9 | 62.1 | 77.8 | – | – |
| MiroThinker-v1.0-30B | 41.2 | 47.8 | 73.5 | 61.0 | 70.6 | – | – |
| SMTL-30B-300 | 48.6 | – | 75.7 | 76.5 | 82.0 | – | – |
| Tongyi-DR-30B | 43.4 | 46.7 | 70.9 | 72.2 | 75.0 | 55.0 | – |
| WebSailor-V2-30B | 35.3 | 44.1 | 74.1 | – | 73.7 | – | – |
| DeepMiner-32B-RL | 33.5 | 40.1 | 58.7 | – | 62.0 | – | – |
| OpenSeeker-30B-SFT | 29.5 | 48.4 | – | – | 74.0 | – | – |
| Trained Agents (≤ 8B) | | | | | | | |
| AgentCPM-Explore-4B | 24.1 | 29.1 | 63.9 | 68.1 | 70.0 | 34.0★ | 32.8★ |
| WebExplorer-8B-RL | 15.7 | 32.0 | 50.0 | 62.7 | 53.7 | 23.0★ | 17.8★ |
| RE-TRAC-4B | 30.0 | 36.1 | 70.4 | – | 74.0 | – | – |
| MiroThinker-v1.0-8B | 31.1 | 40.2 | 66.4 | 60.6 | 60.6 | 34.0★ | 36.7★ |
| Marco-DR-8B (Ours) | 31.4 | 47.1 | 69.9 | 69.6 | 82.0 | 42.0 | 29.2 |

¹ Both 2505 and 2510 splits are evaluated.

8.2. Analysis

We conduct detailed analysis from six aspects: (1) data statistics analysis; (2) effect of QA data verification; (3) ablation study on verification-driven trajectory construction; (4) improvement from reinforcement learning; (5) ablation study on verifier-guided test-time scaling; and (6) context window extension during training.

Data statistics analysis. To analyze the strength of our constructed training dataset, we compare our synthesized data with three representative deep search datasets (REDSearcher [Chu et al., 2026], DeepDive [Lu et al., 2025], and ASearcher [Gao et al., 2025]) from two perspectives²: token length and tool-use depth.
Figure 3 and Figure 4 show that our synthesized samples have both longer token sequences and more tool-call rounds than existing multi-hop and deep search open-source datasets. This shift is important for deep-search training: longer trajectories provide denser supervision on cross-step reasoning, and deeper tool interaction exposes the model to more realistic long-horizon decision patterns. As a result, the model can better learn to maintain state, revise intermediate hypotheses, and complete complex tasks that require extended evidence aggregation.

[Figure 3: two histograms with y-axis "Sample Proportion". Left panel, "Token Length Distribution": x-axis "Number of Tokens" (0 to 100k). Right panel, "Tool Call Distribution": x-axis "Number of Tool Calls" (0 to 50). Series: Open-source and Ours, each with its average marked.]

Figure 3. Distribution comparison between the open-source multi-hop QA datasets (2Wiki, BeerQA, etc.) and our synthesized data. Left: token count per sample. Right: tool-call rounds per sample.

[Figure 4: two histograms with y-axis "Sample Proportion". Left panel, "Token Length Distribution": x-axis "Number of Tokens" (0 to 100k). Right panel, "Tool Call Distribution": x-axis "Number of Tool Calls" (0 to 50). Series: ASearcher, DeepDive, REDSearcher, and Ours, each with its average marked.]

Figure 4. Distribution comparison between three deep search datasets (ASearcher, DeepDive, and REDSearcher) and our synthesized data. Left: token count per sample. Right: tool-call rounds per sample. Our data is consistently shifted toward longer outputs and more tool interactions.

Moreover, Figure 5 further supports the difficulty of our data.
Under the same ReAct-style trajectory construction method using the same frontier agent, our generated data shows a lower answerable rate than open-source data (29.0% vs. 51.7%), which indicates a harder distribution.

[Figure 5: stacked horizontal bars, x-axis "Percentage (%)". Open-source: 51.7% Correct, 10.3% Partially Correct, 38.0% Incorrect. Ours: 29.0% Correct, 10.8% Partially Correct, 60.2% Incorrect.]

Figure 5. Relative to open-source data, our synthesized datasets indicate higher intrinsic difficulty.

Effect of QA data verification. To isolate the impact of our adversarial uniqueness verification on data quality, we compare two graph-based synthesis pipelines at identical data scale: one with verification and a baseline without.³ As shown in Table 2, integrating this verification step improves downstream performance on three of the four benchmarks, with a 0.4-point drop on BC-200-sample. By filtering out noisy and ambiguous samples, verification yields cleaner and more reliable data for subsequent trajectory construction and training.

² The expert trajectory data corresponding to these QA datasets are all constructed using the same agent.

³ We conduct this ablation using the graph-based approach due to its lower computational overhead. In contrast, agent-based synthesis requires multi-turn web exploration, making data construction prohibitively expensive.

Table 2. Effect of adversarial uniqueness verification on graph-based QA data quality. Both rows use the same number of QA samples; the difference is whether verification is applied during synthesis. BC-200-sample is a subset of BrowseComp with 200 random samples.

| QA Synthesis Method | BC-200-sample | BC-ZH | GAIA | xBench-DS-2505 |
|---|---|---|---|---|
| Graph-based QA (w/o verification) | 14.2 | 24.5 | 55.3 | 67.0 |
| Graph-based QA (w/ verification) | 13.8 | 26.8 | 57.6 | 68.3 |
| Δ Improvement | -0.4 | +2.3 | +1.7 | +1.3 |

Ablation Study on Verification-Driven Trajectory Construction. We evaluate whether adding multi-agent trajectories with explicit verification patterns improves performance. Concretely, we compare: (1) single-agent ReAct trajectories only; and (2) single-agent trajectories augmented with multi-agent verified trajectories. Table 3 shows that augmenting single-agent ReAct trajectories with multi-agent verified trajectories improves performance on all four benchmarks, with an average improvement of +2.03 points. These results validate the contribution of trajectories with explicit verification patterns.

Table 3. Ablation study on verification-driven trajectory construction.

| Trajectory Source | BC-200-sample | BC-ZH | GAIA | xBench-DS-2505 |
|---|---|---|---|---|
| Single-agent ReAct only | 13.8 | 26.8 | 57.6 | 68.3 |
| Single-agent + Multi-agent (verified) | 14.5 | 27.0 | 62.8 | 70.3 |
| Δ Improvement | +0.7 | +0.2 | +5.2 | +2.0 |

Improvement from Reinforcement Learning. We compare the SFT checkpoint with its RL-updated counterpart under the same evaluation setup to verify the effectiveness of the RL stage. Table 4 shows consistent gains from RL on all four reported benchmarks. Improvements range from +0.8 to +6.7 points, with an average gain of +2.9 points. This confirms that RL training on our constructed challenging QA data provides robust additional optimization on top of SFT.

Table 4. Contribution of RL training on deep research benchmarks.

| Training Stage | GAIA | xBench-DS-2505 | BC-200-sample | BC-ZH |
|---|---|---|---|---|
| Marco DeepResearch (8B) SFT | 59.2 | 68.3 | 16.5 | 27.1 |
| Marco DeepResearch (8B) RL | 61.2 | 75.0 | 17.3 | 29.3 |
| Δ Improvement | +2.0 | +6.7 | +0.8 | +2.2 |

Test-Time Scaling. We evaluate the effectiveness of our proposed test-time scaling strategy on top of the RL checkpoint. Table 5 shows that our strategy delivers substantial gains at test time. Compared with the RL baseline, performance improves by +8.7 on GAIA, +7.0 on xBench-DeepSearch-2505, +15.0 on BrowseComp-200-sample, and +17.8 on BrowseComp-ZH. The average gain is +12.1 points, indicating the potential of our proposed test-time scaling strategy.

Table 5. Contribution of our proposed verifier-guided test-time scaling.

| Inference Strategy | GAIA | xBench-DS-2505 | BC-200-sample | BC-ZH |
|---|---|---|---|---|
| Marco DR (8B) SFT+RL | 61.2 | 75.0 | 17.3 | 29.3 |
| + Discard-all | 61.5 | 72.0 | 23.7 | 38.9 |
| + Discard-all + Verify | 69.9 | 82.0 | 32.3 | 47.1 |
| Δ vs. baseline | +8.7 | +7.0 | +15.0 | +17.8 |

Context Window Extension during Training. We further study whether extending the training context window improves long-horizon deep-search performance. Using the same SFT setup, data, and evaluation protocol, we compare 64K and 128K context settings. As shown in Table 6, increasing the context window from 64K to 128K yields consistent gains on both benchmarks, with improvements of +2.3 on BrowseComp-200-sample and +0.8 on BrowseComp-ZH (average: +1.6). This result supports the importance of long-context training for deep-search tasks that require many tool calls and cross-page evidence aggregation.

Table 6. Effect of extending SFT context length from 64K to 128K.

| Models | BC-200-sample | BrowseComp-ZH |
|---|---|---|
| Marco DeepResearch (8B) SFT 64K | 14.2 | 26.3 |
| Marco DeepResearch (8B) SFT 128K | 16.5 | 27.1 |
| Δ Improvement | +2.3 | +0.8 |

9. Conclusion

This paper addresses a bottleneck in current deep research agents: the lack of explicit verification across QA data synthesis, trajectory construction, and inference, which leads to error propagation and under-utilized test-time computation. To solve this, we propose Marco DeepResearch, an 8B-scale deep search agent optimized by our verification-centric design with three improvements: verified QA synthesis, verification-driven trajectory construction, and verifier-guided test-time scaling. Extensive experimental results demonstrate that our proposed Marco DeepResearch agent significantly outperforms 8B-scale open-source deep search agents on most benchmarks, such as BrowseComp and BrowseComp-ZH, and surpasses several 30B-scale deep search agents on BrowseComp-ZH. Furthermore, detailed analysis and ablation studies verify the positive contributions of our verification-centric designs.

References

H. Chen, X. Cong, S. Fan, Y. Fu, Z. Gong, Y. Lu, Y. Li, B. Niu, C. Pan, Z. Song, H. Wang, Y. Wu, Y. Wu, Z. Xie, Y. Yan, Z. Zhang, Y. Lin, Z. Liu, and M. Sun. Agentcpm-explore: An end-to-end infrastructure for training and evaluating llm agents, 2026a. URL https://github.com/OpenBMB/AgentCPM-Explore.

K. Chen, Y. Ren, Y. Liu, X. Hu, H. Tian, T. Xie, F. Liu, H. Zhang, Y. Ruan, H. Liu, Y. Gong, et al. Xbench: Tracking agents productivity scaling with profession-aligned real-world evaluations, 2025. URL https://xbench.org/files/xbench_profession_v2.4.pdf. HSG.

Q. Chen, T. Qin, K. Zhu, Q. Wang, C. Yu, S. Xu, J. Wu, J. Zhang, X. Liu, X. Gui, J. Cao, P. Wang, D. Shi, H. Zhu, T. Wang, Y. Wang, M. Song, T. Zheng, G. Zhang, J. Yang, J. Liu, M. Liu, Y. E. Jiang, and W. Zhou. Search more, think less: Rethinking long-horizon agentic search for efficiency and generalization, 2026b. URL https://arxiv.org/abs/2602.22675.

Z. Chu, X. Wang, J. Hong, H. Fan, Y. Huang, Y. Yang, G. Xu, C. Zhao, C. Xiang, S. Hu, D. Kuang, M. Liu, B. Qin, and X. Yu. Redsearcher: A scalable and cost-efficient framework for long-horizon search agents, 2026. URL https://arxiv.org/abs/2602.14234.

DeepSeek-AI, A. Liu, A. Mei, B. Lin, B. Xue, B. Wang, B. Xu, B. Wu, B. Zhang, C. Lin, C. Dong, C. Lu, et al. Deepseek-v3.2: Pushing the frontier of open large language models, 2025. URL https://arxiv.org/abs/2512.02556.

G. Dong, H. Mao, K. Ma, L. Bao, Y. Chen, Z. Wang, Z. Chen, J. Du, H. Wang, F. Zhang, G. Zhou, Y. Zhu, J.-R. Wen, and Z. Dou. Agentic reinforced policy optimization, 2025. URL https://arxiv.org/abs/2507.19849.

Y. Du, R. Ye, S. Tang, X. Zhu, Y. Lu, Y. Cai, and S. Chen. Openseeker: Democratizing frontier search agents by fully open-sourcing training data, 2026. URL https://arxiv.org/abs/2603.15594.

J. Gao, W. Fu, M. Xie, S. Xu, C. He, Z. Mei, B. Zhu, and Y. Wu. Beyond ten turns: Unlocking long-horizon agentic search with large-scale asynchronous rl, 2025. URL https://arxiv.org/abs/2508.07976.

GLM-5-Team, A. Zeng, X. Lv, Z. Hou, Z. Du, Q. Zheng, B. Chen, et al. Glm-5: from vibe coding to agentic engineering, 2026. URL https://arxiv.org/abs/2602.15763.

Google. Gemini deep research: Your personal research assistant. https://gemini.google/overview/deep-research/, 2024. Accessed: 2025-02-09.

N. Gupta, R. Chatterjee, L. Haas, C. Tao, A. Wang, C. Liu, H. Oiwa, E. Gribovskaya, J. Ackermann, J. Blitzer, S. Goldshtein, and D. Das. Deepsearchqa: Bridging the comprehensiveness gap for deep research agents, 2026. URL https://arxiv.org/abs/2601.20975.

X. Ho, A.-K. D. Nguyen, S. Sugawara, and A. Aizawa. Constructing a multi-hop qa dataset for comprehensive evaluation of reasoning steps, 2020. URL https://arxiv.org/abs/2011.01060.

C. Hu, H. Du, H. Wang, L. Lin, M. Chen, P. Liu, R. Miao, et al. Step-deepresearch technical report, 2025a. URL https://arxiv.org/abs/2512.20491.

M. Hu, T. Fang, J. Zhang, J. Ma, Z. Zhang, J. Zhou, H. Zhang, H. Mi, D. Yu, and I. King. Webcot: Enhancing web agent reasoning by reconstructing chain-of-thought in reflection, branching, and rollback, 2025b. URL https://arxiv.org/abs/2505.20013.

Y. Huang, Y. Chen, H. Zhang, K. Li, H. Zhou, M. Fang, L. Yang, X. Li, L. Shang, S. Xu, et al. Deep research agents: A systematic examination and roadmap. arXiv preprint, 2025.

T. Lan, W. Zhang, C. Lyu, S. Li, C. Xu, H. Huang, D. Lin, X.-L. Mao, and K. Chen. Training language models to critique with multi-agent feedback, 2024a. URL https://arxiv.org/abs/2410.15287.

T. Lan, W. Zhang, C. Xu, H. Huang, D. Lin, K. Chen, and X. ling Mao. Criticeval: Evaluating large language model as critic, 2024b. URL https://arxiv.org/abs/2402.13764.

T. Lan, B. Zhu, Q. Jia, J. Ren, H. Li, L. Wang, Z. Xu, W. Luo, and K. Zhang. Deepwidesearch: Benchmarking depth and width in agentic information seeking, 2025. URL https://arxiv.org/abs/2510.20168.

T. Lan, F. Henry, B. Zhu, Q. Jia, J. Ren, Q. Pu, H. Li, L. Wang, Z. Xu, and W. Luo. Table-as-search: Formulate long-horizon agentic information seeking as table completion, 2026. URL https://arxiv.org/abs/2602.06724.

K. Li, Z. Zhang, H. Yin, L. Zhang, L. Ou, J. Wu, W. Yin, B. Li, Z. Tao, X. Wang, W. Shen, J. Zhang, D. Zhang, X. Wu, Y. Jiang, M. Yan, P. Xie, F. Huang, and J. Zhou. Websailor: Navigating super-human reasoning for web agent, 2025a. URL https://arxiv.org/abs/2507.02592.

W. Li, J. Lin, Z. Jiang, J. Cao, X. Liu, J. Zhang, Z. Huang, Q. Chen, W. Sun, Q. Wang, H. Lu, T. Qin, C. Zhu, Y. Yao, S. Fan, X. Li, T. Wang, P. Liu, K. Zhu, H. Zhu, D. Shi, P. Wang, Y. Guan, X. Tang, M. Liu, Y. E. Jiang, J. Yang, J. Liu, G. Zhang, and W. Zhou. Chain-of-agents: End-to-end agent foundation models via multi-agent distillation and agentic rl, 2025b. URL https://arxiv.org/abs/2508.13167.

X. Li, W. Jiao, J. Jin, G. Dong, J. Jin, Y. Wang, H. Wang, Y. Zhu, J.-R. Wen, Y. Lu, and Z. Dou. Deepagent: A general reasoning agent with scalable toolsets, 2025c. URL https://arxiv.org/abs/2510.21618.

J. Liu, Y. Li, C. Zhang, J. Li, A. Chen, K. Ji, W. Cheng, Z. Wu, C. Du, Q. Xu, J. Song, Z. Zhu, W. Chen, P. Zhao, and J. He. Webexplorer: Explore and evolve for training long-horizon web agents, 2025. URL https://arxiv.org/abs/2509.06501.

R. Lu, Z. Hou, Z. Wang, H. Zhang, X. Liu, Y. Li, S. Feng, J. Tang, and Y. Dong. Deepdive: Advancing deep search agents with knowledge graphs and multi-turn rl, 2025. URL https://arxiv.org/abs/2509.10446.

K. Mai. Qa expert: Llm for multi-hop question answering. https://github.com/khaimt/qa_expert, 2023.

G. Mialon, C. Fourrier, C. Swift, T. Wolf, Y. LeCun, and T. Scialom. Gaia: a benchmark for general ai assistants, 2023. URL https://arxiv.org/abs/2311.12983.

OpenAI. Introducing deep research. https://openai.com/zh-Hans-CN/index/introducing-deep-research/, 2025. Accessed: 2025-02-09.

L. Phan, A. Gatti, Z. Han, N. Li, J. Hu, H. Zhang, C. B. C. Zhang, M. Shaaban, J. Ling, S. Shi, et al. Humanity's last exam, 2025. URL https://arxiv.org/abs/2501.14249.

P. Qi, H. Lee, O. T. Sido, and C. D. Manning. Answering open-domain questions of varying reasoning steps from text, 2021. URL https://arxiv.org/abs/2010.12527.

Z. Shao, P. Wang, Q. Zhu, R. Xu, J. Song, X. Bi, H. Zhang, M. Zhang, Y. K. Li, Y. Wu, and D. Guo. Deepseekmath: Pushing the limits of mathematical reasoning in open language models, 2024. URL https://arxiv.org/abs/2402.03300.

M. Shoeybi, M. Patwary, R. Puri, P. LeGresley, J. Casper, and B. Catanzaro. Megatron-lm: Training multi-billion parameter language models using model parallelism. arXiv preprint, 2019.

C. Snell, J. Lee, K. Xu, and A. Kumar. Scaling llm test-time compute optimally can be more effective than scaling model parameters, 2024. URL https://arxiv.org/abs/2408.03314.

Q. Tang, H. Xiang, L. Yu, B. Yu, Y. Lu, X. Han, L. Sun, W. Zhang, P. Wang, S. Liu, Z. Zhang, J. Tu, H. Lin, and J. Lin. Beyond turn limits: Training deep search agents with dynamic context window, 2025. URL https://arxiv.org/abs/2510.08276.

Z. Tao, J. Wu, W. Yin, J. Zhang, B. Li, H. Shen, K. Li, L. Zhang, X. Wang, Y. Jiang, P. Xie, F. Huang, and J. Zhou. Webshaper: Agentically data synthesizing via information-seeking formalization, 2025. URL https://arxiv.org/abs/2507.15061.

K. Team, T. Bai, Y. Bai, Y. Bao, S. H. Cai, Y. Cao, Y. Charles, H. S. Che, C. Chen, G. Chen, H. Chen, J. Chen, J. Chen, J. Chen, et al. Kimi k2.5: Visual agentic intelligence, 2026a. URL https://arxiv.org/abs/2602.02276.

M. Team, S. Bai, L. Bing, C. Chen, G. Chen, Y. Chen, Z. Chen, Z. Chen, X. Dong, et al. Mirothinker: Pushing the performance boundaries of open-source research agents via model, context, and interactive scaling. arXiv preprint, 2025a.

M. Team et al. Mirothinker-1.7 & h1: Towards heavy-duty research agents via verification, 2026b. URL https://arxiv.org/abs/2603.15726.

M. L. Team et al. Longcat-flash-thinking-2601 technical report, 2026c. URL https://arxiv.org/abs/2601.16725.

T. D. Team, B. Li, B. Zhang, D. Zhang, F. Huang, G. Li, G. Chen, H. Yin, J. Wu, J. Zhou, et al. Tongyi deepresearch technical report. arXiv preprint, 2025b.

Y. Wan, T. Fang, Z. Li, Y. Huo, W. Wang, H. Mi, D. Yu, and M. R. Lyu. Inference-time scaling of verification: Self-evolving deep research agents via test-time rubric-guided verification, 2026a. URL https://arxiv.org/abs/2601.15808.

Y. Wan, T. Fang, Z. Li, Y. Huo, W. Wang, H. Mi, D. Yu, and M. R. Lyu. Inference-time scaling of verification: Self-evolving deep research agents via test-time rubric-guided verification, 2026b. URL https://arxiv.org/abs/2601.15808.

L. Wang, W. Xu, Y. Lan, Z. Hu, Y. Lan, R. K.-W. Lee, and E.-P. Lim. Plan-and-solve prompting: Improving zero-shot chain-of-thought reasoning by large language models, 2023. URL https://arxiv.org/abs/2305.04091.

J. Wei, Z. Sun, S. Papay, S. McKinney, J. Han, I. Fulford, H. W. Chung, A. T. Passos, W. Fedus, and A. Glaese. Browsecomp: A simple yet challenging benchmark for browsing agents, 2025. URL https://arxiv.org/abs/2504.12516.

R. Wong, J. Wang, J. Zhao, L. Chen, Y. Gao, L. Zhang, X. Zhou, Z. Wang, K. Xiang, G. Zhang, W. Huang, Y. Wang, and K. Wang. Widesearch: Benchmarking agentic broad info-seeking, 2025. URL https://arxiv.org/abs/2508.07999.

J. Wu, W. Yin, Y. Jiang, Z. Wang, Z. Xi, R. Fang, L. Zhang, Y. He, D. Zhou, P. Xie, and F. Huang. Webwalker: Benchmarking llms in web traversal, 2025a. URL https://arxiv.org/abs/2501.07572.

X. Wu, K. Li, Y. Zhao, L. Zhang, L. Ou, H. Yin, Z. Zhang, X. Yu, D. Zhang, Y. Jiang, P. Xie, F. Huang, M. Cheng, S. Wang, H. Cheng, and J. Zhou. Resum: Unlocking long-horizon search intelligence via context summarization, 2025b. URL https://arxiv.org/abs/2509.13313.

C. Xu, T. Lan, Z. Lv, Q. Dong, J. Zhang, H. Huang, M. Yang, and B. Hu. Bridging the gap between data distribution and model: Dynamic data distribution optimization for improving critique capabilities of large language models. Expert Systems with Applications, 300, Mar. 2026a. ISSN 0957-4174. doi: 10.1016/j.eswa.2025.129878. Publisher Copyright: © 2025 The Authors. Published by Elsevier Ltd. This is an open access article under the CC BY-NC-ND license. http://creativecommons.org/licenses/by-nc-nd/4.0/.

F. Xu, R. Han, Y. Chen, Z. Wang, I.-H. Hsu, J. Yan, V. Tirumalashetty, E. Choi, T. Pfister, and C.-Y. Lee. Sage: Steerable agentic data generation for deep search with execution feedback, 2026b. URL https://arxiv.org/abs/2601.18202.

Z. Xu, Z. Xu, R. Zhang, C. Zhu, S. Yu, W. Liu, Q. Zhang, W. Ding, C. Yu, and Y. Wang. Wideseek-r1: Exploring width scaling for broad information seeking via multi-agent reinforcement learning, 2026c. URL https://arxiv.org/abs/2602.04634.

A. Yang, A. Li, B. Yang, B. Zhang, B. Hui, B. Zheng, B. Yu, C. Gao, C. Huang, C. Lv, C. Zheng, D. Liu, F. Zhou, F. Huang, et al. Qwen3 technical report, 2025a. URL https://arxiv.org/abs/2505.09388.

Y. Yang, T. Lan, Q. Jia, L. Zhu, H. Jiang, H. Zhu, L. Wang, W. Luo, and K. Zhang. Hscodecomp: A realistic and expert-level benchmark for deep search agents in hierarchical rule application, 2025b. URL https://arxiv.org/abs/2510.19631.

S. Yao, J. Zhao, D. Yu, N. Du, I. Shafran, K. Narasimhan, and Y. Cao. React: Synergizing reasoning and acting in language models, 2023. URL https://arxiv.org/abs/2210.03629.

Y. Ye, H. Jiang, F. Jiang, T. Lan, Y. Du, B. Fu, X. Shi, Q. Jia, L. Wang, and W. Luo. Umem: Unified memory extraction and management framework for generalizable memory, 2026. URL https://arxiv.org/abs/2602.10652.

P. Zhou, B. Leon, X. Ying, C. Zhang, Y. Shao, Q. Ye, D. Chong, Z. Jin, C. Xie, M. Cao, Y. Gu, S. Hong, J. Ren, J. Chen, C. Liu, and Y. Hua. Browsecomp-zh: Benchmarking web browsing ability of large language models in chinese, 2025. URL https://arxiv.org/abs/2504.19314.

J. Zhu, G. Zhang, X. Ma, L. Xu, M. Zhang, R. Yang, S. Wang, K. Qiu, Z. Wu, Q. Dai, R. Ma, B. Liu, Y. Yang, C. Luo, Z. Yang, L. Li, L. Wang, W. Chen, X. Geng, and B. Guo. Re-trac: Recursive trajectory compression for deep search agents, 2026. URL https://arxiv.org/abs/2602.02486.

M. Zhuge, C. Zhao, D. Ashley, W. Wang, D. Khizbullin, Y. Xiong, Z. Liu, E. Chang, R. Krishnamoorthi, Y. Tian, Y. Shi, V. Chandra, and J. Schmidhuber. Agent-as-a-judge: Evaluate agents with agents, 2024. URL https://arxiv.org/abs/2410.10934.

Contributions and Acknowledgements

The development of Marco DeepResearch is a highly collaborative effort involving all members of our team.

Project Lead. Longyue Wang.

Core Contributors. Bin Zhu, Qianghuai Jia, Tian Lan, Junyang Ren, Feng Gu, Feihu Jiang, Longyue Wang, Zhao Xu, Weihua Luo.

Contributors. Yu Zhao, E. Zhao, Jingzhen Ding, Yuxuan Han, ChenLin Yao, Jianshan Zhao, Wanying Chen, Jiahong Wang, Jiahe Sun, Wanghui huang, Yongchao Ding, Junyuan Luo, Junke Tang, Zhixing Du, Zhiqiang Yang, Haijun Li, Huping Ding.
