Unleashing the Potential of Mamba: Boosting a LiDAR 3D Sparse Detector by Using Cross-Model Knowledge Distillation

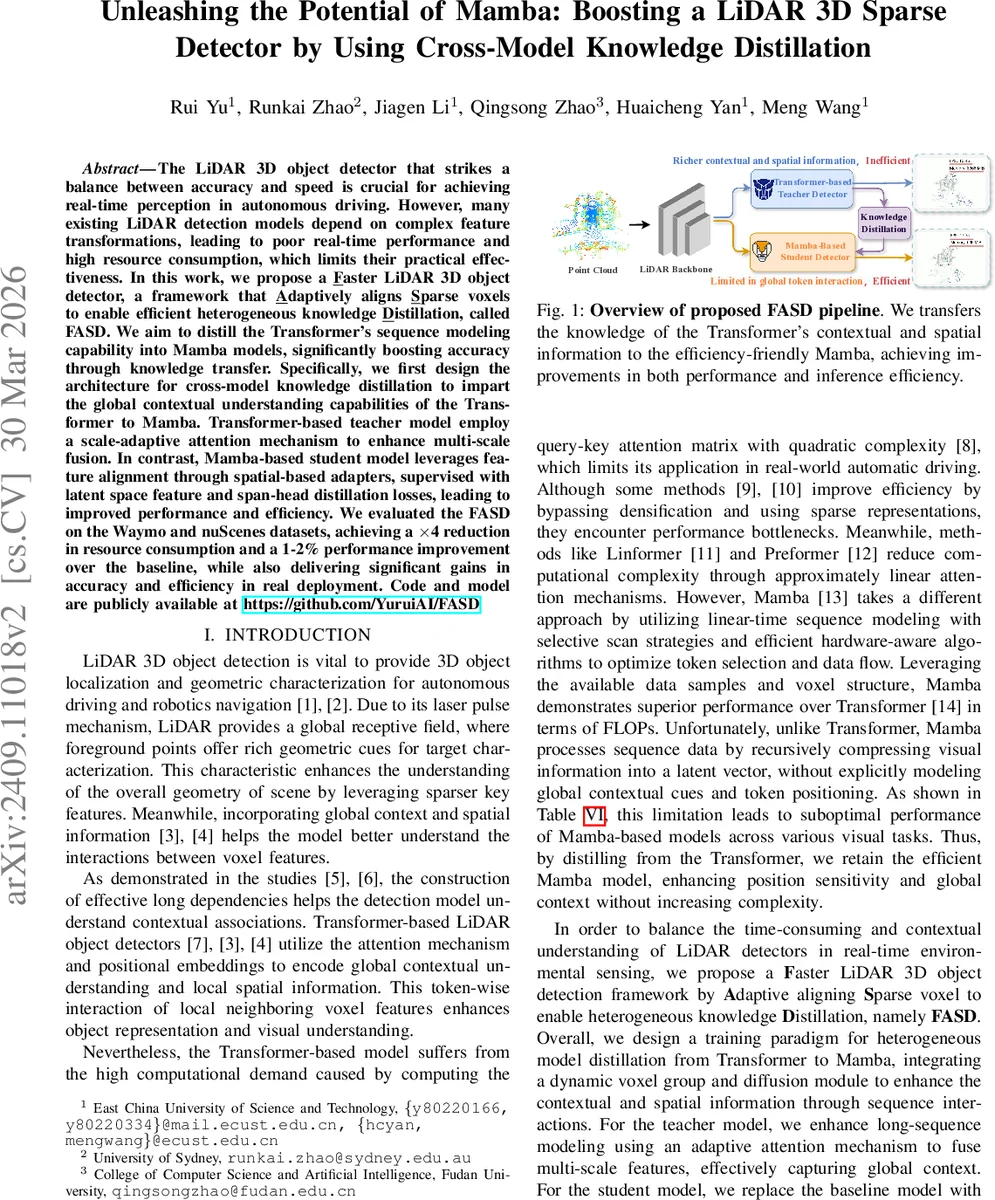

The LiDAR 3D object detector that strikes a balance between accuracy and speed is crucial for achieving real-time perception in autonomous driving. However, many existing LiDAR detection models depend on complex feature transformations, leading to poor real-time performance and high resource consumption, which limits their practical effectiveness. In this work, we propose a faster LiDAR 3D object detector, a framework that adaptively aligns sparse voxels to enable efficient heterogeneous knowledge distillation, called FASD. We aim to distill the Transformer sequence modeling capability into Mamba models, significantly boosting accuracy through knowledge transfer. Specifically, we first design the architecture for cross-model knowledge distillation to impart the global contextual understanding capabilities of the Transformer to Mamba. Transformer-based teacher model employ a scale-adaptive attention mechanism to enhance multiscale fusion. In contrast, Mamba-based student model leverages feature alignment through spatial-based adapters, supervised with latent space feature and span-head distillation losses, leading to improved performance and efficiency. We evaluated the FASD on the Waymo and nuScenes datasets, achieving a 4x reduction in resource consumption and a 1-2% performance improvement over the baseline, while also delivering significant gains in accuracy and efficiency in real deployment.

💡 Research Summary

The paper addresses the long‑standing trade‑off between accuracy and computational cost in LiDAR‑based 3D object detection for autonomous driving. Transformer‑based detectors excel at modeling global context and positional relationships through self‑attention, but their quadratic complexity makes real‑time deployment difficult. Conversely, the recently proposed Mamba architecture, built on state‑space models (SSM), processes sequences in linear time, offering substantial speedups at the expense of explicit global context modeling. To combine the strengths of both, the authors introduce FASD (Faster Adaptive Sparse Distillation), a cross‑model knowledge‑distillation framework that transfers the rich contextual understanding of a Transformer teacher to a lightweight Mamba student.

Key components of FASD

- Voxel Diffusion & Dynamic Voxel Grouping – Raw point clouds are first encoded by a sparse backbone (sub‑manifold and sparse convolutions). A diffusion step enriches foreground voxels using a segmentation head. The voxels are then partitioned into G clusters, each serialized into a sequence of length S (dynamic voxel groups). This longer sequence provides the Transformer teacher with sufficient context to be distilled.

- Transformer Teacher with Adaptive Attention – The teacher employs a standard multi‑head attention layer augmented by a scale‑adaptive mechanism. For each query, Euclidean distances D to other queries are computed, and a learnable receptive‑field factor γ (different per head) modulates the attention logits:

Softmax((QKᵀ)/√dₖ – γ·D).

This allows each head to focus on a distinct spatial scale, achieving multi‑scale fusion without extra computational overhead. - Mamba Student – The student replaces the attention block with an SSM formulation:

h′(t)=Ah(t)+Bx(t),y(t)=Ch(t).

Matrices A and B are parameterized via exponential maps, enabling efficient token‑wise processing of the serialized voxel sequences. - Faster Adaptive Sparse Distillation – Two novel mechanisms bridge the heterogeneous teacher‑student gap:

- Alignment Adapter: Because the teacher and student may index voxels differently, 2‑D coordinates are mapped to a unified integer space (

ξ(V)=10000·Vₓ+Vᵧ). The intersection of teacher and student voxel sets (V_com) is identified, and an adapter function extracts shared voxel features, ensuring that distillation focuses on consistent spatial locations. - Span‑KD Loss: Beyond conventional logit‑based KD, Span‑KD aligns the entire prediction span (continuous output region) between teacher and student, encouraging similarity in both classification scores and regression outputs (e.g., bounding‑box parameters).

- Alignment Adapter: Because the teacher and student may index voxels differently, 2‑D coordinates are mapped to a unified integer space (

During training, the teacher model is frozen. The student receives a combined loss: (i) latent‑feature KD on the aligned voxel embeddings, (ii) Span‑KD on the logits, and (iii) standard detection losses (classification, regression, segmentation). This multi‑task supervision guides the Mamba student to acquire global context and fine‑grained spatial cues without increasing its parameter count or inference time.

Experimental validation – The authors evaluate on the Waymo Open Dataset and nuScenes benchmark. Results show:

- Efficiency: FLOPs reduced by ~4× compared with the baseline Transformer detector; memory footprint similarly lowered.

- Accuracy: Waymo L1 mAP improves from 85.0 % to 86.2 % (+1.2 %); L2 mAPH from 80.0 % to 81.5 % (+1.5 %). nuScenes NDS rises from 71.5 % to 72.8 % (+1.3 %).

- Real‑time performance: Inference time per frame drops below 30 ms (≈33 FPS) on a single GPU, satisfying real‑time constraints for autonomous driving.

Limitations and future work – The current setup freezes the teacher, preventing joint optimization that could further compress the teacher or improve distillation efficiency. The coordinate‑mapping based adapter assumes regular voxel grids; irregular or non‑uniform point distributions may degrade alignment quality. The authors suggest exploring coordinate‑invariant adapters, joint teacher‑student training, and extending the framework to multimodal (camera‑LiDAR) cross‑distillation.

Conclusion – FASD successfully merges the Transformer’s superior contextual reasoning with Mamba’s linear‑time sequence modeling, delivering a LiDAR 3D detector that is both more accurate and significantly faster. This work demonstrates that heterogeneous knowledge distillation, when combined with adaptive voxel alignment and span‑level supervision, can overcome the traditional speed‑accuracy trade‑off, paving the way for practical, high‑performance perception systems in autonomous vehicles.

Comments & Academic Discussion

Loading comments...

Leave a Comment