Empirical Likelihood for Nonsmooth Functionals

Empirical likelihood is an attractive inferential framework that respects natural parameter boundaries, but existing approaches typically require smoothness of the functional and miscalibrate substantially when this assumption is violated. For the…

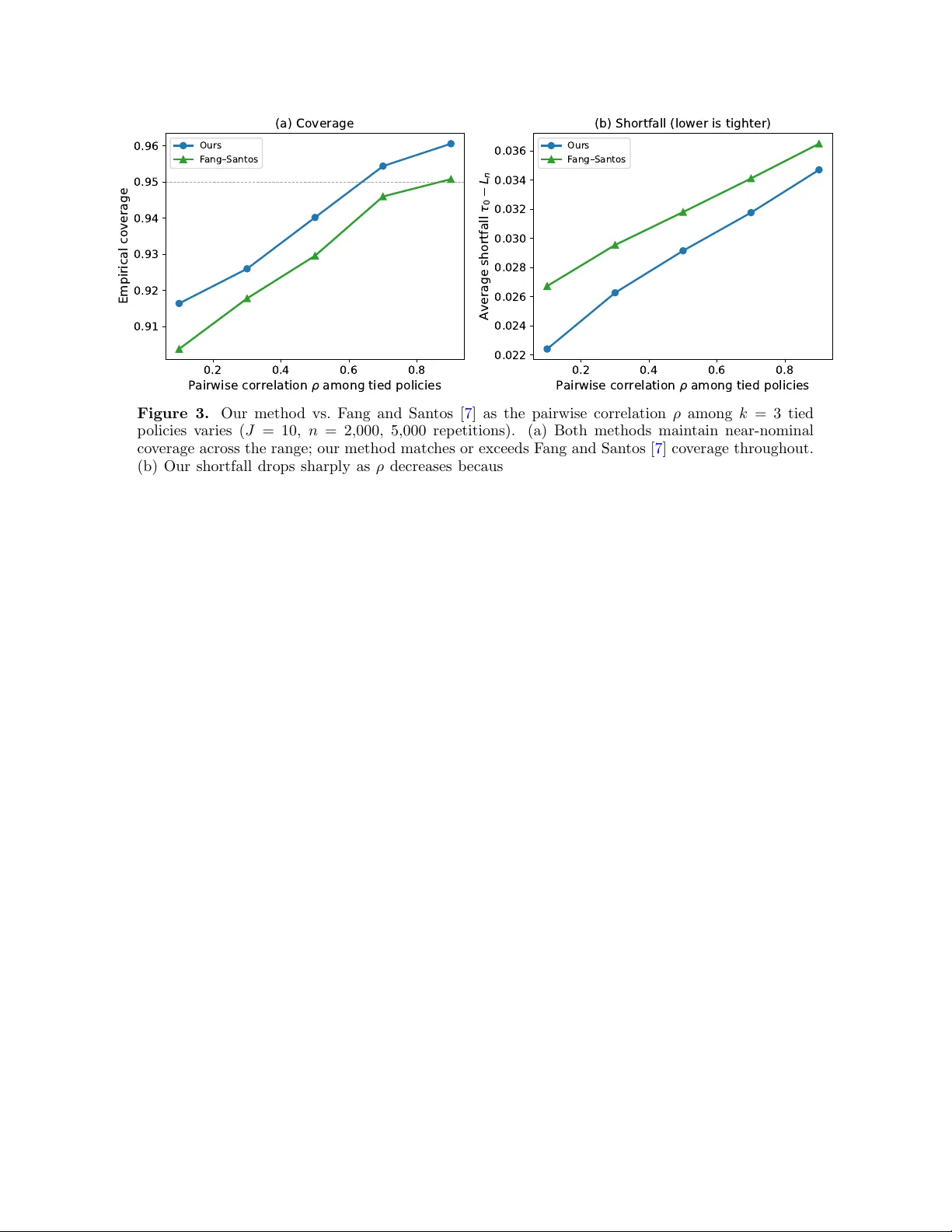

Authors: Hongseok Namkoong