Universe Reduction for APSP: Equivalence of Three Fine-Grained Hypotheses

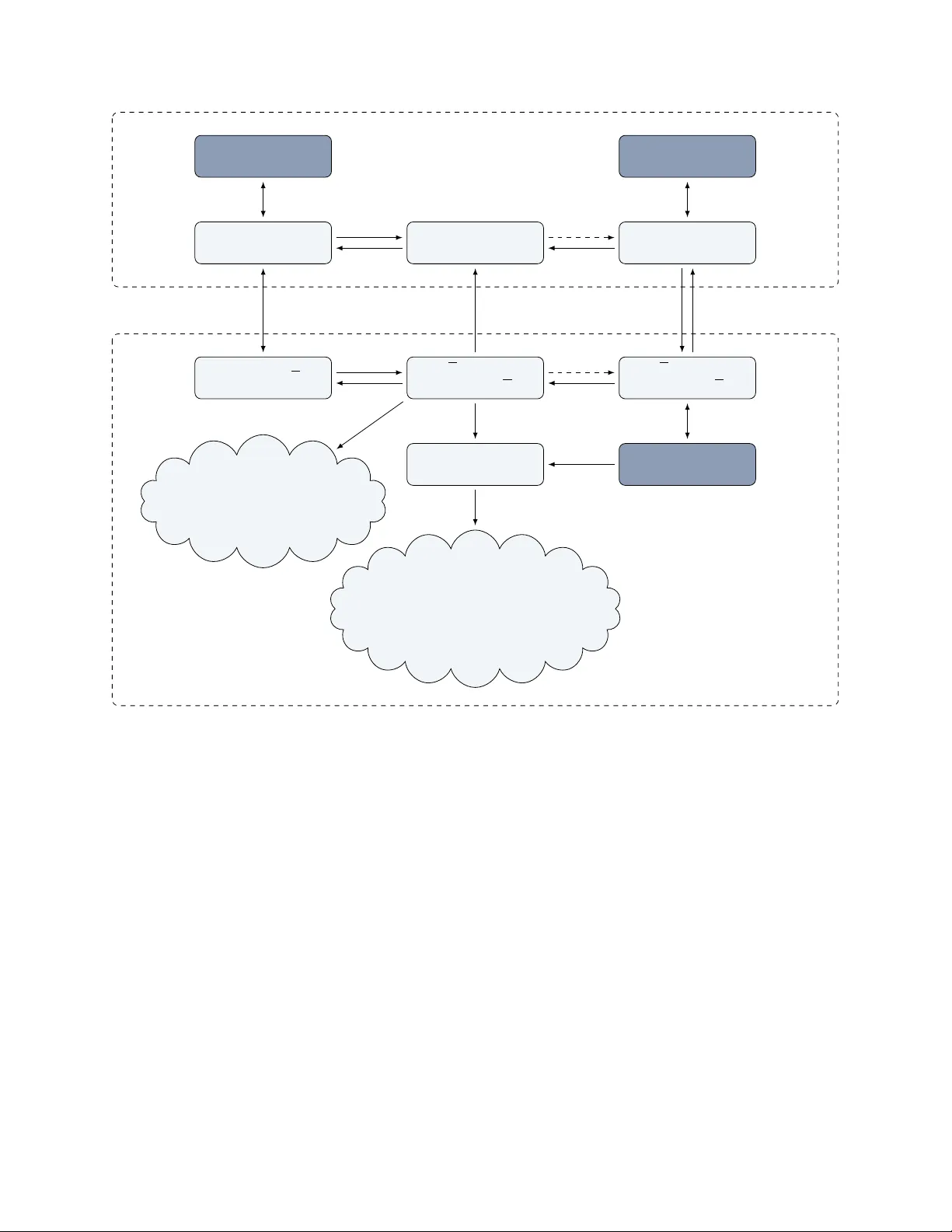

The APSP Hypothesis states that the All-Pairs Shortest Paths (APSP) problem requires time $n^{3-o(1)}$ on $n$-vertex graphs with polynomially bounded integer edge weights. Two successively stronger assumptions are the Strong APSP Hypothesis and the Directed Unw…

Authors: Nick Fischer