Robust Smart Contract Vulnerability Detection via Contrastive Learning-Enhanced Granular-ball Training

Authors: Zeli Wang, Qingxuan Yang, Shuyin Xia

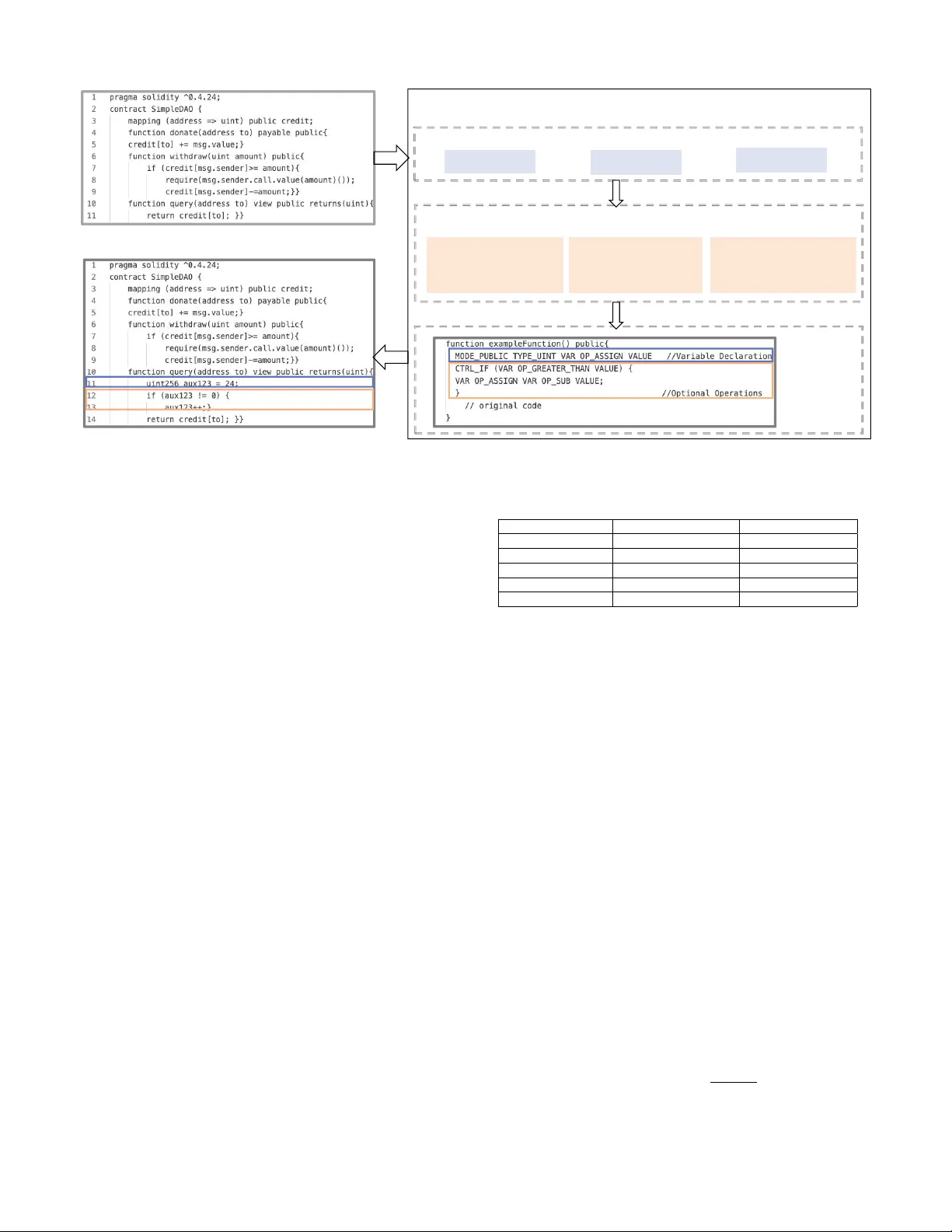

JOURNAL OF LATEX CLASS FILES, VOL. 18, NO. 9, SEPTEMBER 2020

Zeli Wang, Qingxuan Yang, Shuyin Xia, Yueming Wu, Bo Liu, Longlong Lin

Abstract—Blockchain has become the fundamental infrastructure for building a digital economy society. As the key technology for implementing blockchain applications, smart contracts face serious security vulnerabilities. Deep neural networks (DNNs) have emerged as a prominent approach for detecting smart contract vulnerabilities, driven by growing contract datasets and advanced deep learning techniques. However, DNNs typically require large-scale labeled datasets to model the relationships between contract features and vulnerability labels. In practice, the labeling process often depends on existing open-source tools, whose accuracy cannot be guaranteed. Consequently, label noise poses a significant challenge to the accuracy and robustness of smart contract vulnerability detection, a problem that is rarely explored in the literature. To this end, we propose Contrastive learning-enhanced Granular-Ball smart Contracts training (CGBC) to enhance the robustness of contract vulnerability detection. Specifically, CGBC first introduces a Granular-ball computing layer between the encoder layer and the classifier layer. This layer groups similar contracts into Granular-Balls (GBs) and generates new coarse-grained representations for them (i.e., the center and the label of each GB), which can correct noisy labels based on the majority of correctly labeled samples. An intra-GB compactness loss and an inter-GB looseness loss are combined to enhance the effectiveness of clustering. Then, to improve the accuracy of GBs, we pretrain the model through unsupervised contrastive learning supported by our novel semantic-consistent smart contract augmentation method.
This procedure can discriminate contracts with different labels by pulling the representations of similar contracts closer, assisting CGBC in clustering. Subsequently, we leverage the symmetric cross-entropy loss function to measure model quality, which can combat label noise in gradient computations. Finally, extensive experiments show that the proposed CGBC significantly improves the robustness and effectiveness of smart contract vulnerability detection under various noisy settings compared with baselines.

Index Terms—Granular-ball, vulnerability detection, deep learning, smart contract.

Zeli Wang, Qingxuan Yang, Shuyin Xia are with Chongqing Key Laboratory of Computational Intelligence, Key Laboratory of Cyberspace Big Data Intelligent Security, Ministry of Education, and Key Laboratory of Big Data Intelligent Computing, Chongqing University of Posts and Telecommunications. Email: zlwang@cqupt.edu.cn, s230231148@stu.cqupt.edu.cn, xiasy@cqupt.edu.cn; Yueming Wu is with the School of Cyber Science and Engineering, Huazhong University of Science and Technology. Email: yuemingwu@hust.edu.cn; Bo Liu is with School of Computer Science and Artificial Intelligence, Zhengzhou University, China. Email: liubo@zzu.edu.cn; Longlong Lin is with the College of Computer and Information Science, Southwest University, China. Email: longlonglin@swu.edu.cn. Manuscript received XXX, XXX; revised XXX, XXXX.

I. INTRODUCTION

BLOCKCHAIN is a distributed and decentralized ledger technology that has been widely applied across various domains [1], [2]. Smart contracts, which serve as the core mechanism for automating and enforcing business logic on blockchain platforms, have become indispensable for enabling these applications.
However, the prevalence of security vulnerabilities in smart contracts remains a major obstacle, significantly hindering the broader adoption and real-world deployment of blockchain-based solutions [3]–[5].

Vulnerability detection is a widely studied approach to enhancing smart contract security. Traditional program analysis methods often depend on manually defined vulnerability patterns, which are constrained by expert knowledge and struggle to scale with the intricate logic semantics of smart contracts [6]–[8]. Deep learning offers a promising solution by automatically learning vulnerability patterns through its powerful pattern recognition capabilities [9]–[13]. MTVHunter leverages multiple teacher models to guide the training process by aggregating their predictions into more accurate soft labels, improving robustness and accuracy [13]. CLEAR achieves significant detection accuracy improvements by generating labeled contract pairs through data sampling and extracting semantic features using a Transformer module, followed by model fine-tuning for vulnerability detection [12]. Although these learning-based models have achieved remarkable performance in detecting smart contract vulnerabilities, their effectiveness relies on extensive high-quality labeled datasets. In practice, existing smart contract datasets are generally annotated by open-source tools [9], [10], [12]. However, the accuracy of current contract vulnerability detection tools falls far short of expectations: for example, an empirical study on 47,587 contracts and nine tools demonstrates that only 42% of contract vulnerabilities are detected [14], and research conducted on 18 contract detection tools shows that they perform poorly. Consequently, the existing tool-based labeling procedure inevitably introduces substantial label noise, which can significantly degrade model performance [15].
Thus, mitigating the impact of label noise on learning-based vulnerability detection is urgent.

Much research has been conducted to resolve label noise in computer vision [16], [17] and natural language processing [18], [19], but only a few studies explore this problem in software vulnerability detection. ACGVD [20] adopts a framework that combines GNN and GAT, constructing a comprehensive graph representation that integrates various types of information from source code. This approach mitigates the noise interference that may arise from relying on a single information source, enhancing the model's ability to adapt to complex data. The method in [21] designs adaptive graph convolution operations that allow the model to adjust to noisy patterns automatically, and employs a weighted loss function to mitigate the impact of noisy labels. These studies demonstrate that deep learning-based vulnerability detection methods in traditional software systems are susceptible to label noise and propose effective mitigation strategies. However, these approaches cannot be directly generalized to smart contracts due to fundamental differences, such as programming languages and logical architectures. To the best of our knowledge, no countermeasures have been proposed to address label noise in smart contract vulnerability detection frameworks.

An intuitive way to address this problem is to identify and correct noisy labels. However, it is nontrivial and computationally expensive to spot all noisy labels precisely. To alleviate this dilemma, building on the core observation that most samples are correctly annotated, we aim to cluster similar smart contracts and remove noise through the centers of clusters, which represent the common features and the majority label of their members. There are two key challenges to achieving this goal: (1) Generating close embeddings for similar contracts.
The embeddings of smart contracts determine their similarity and hence the correctness of clustering. Since smart contracts have complicated code structures and diverse user-defined variables, it is tricky to embed contracts with the same labels closely. (2) Adaptively clustering smart contracts. Even smart contracts belonging to the same category may have distinct representative features. This implies that smart contracts should be clustered according to their data distribution features, rather than strictly clustering the contracts with the same label into a set. Such requirements call for an adaptive multi-granularity clustering approach.

To overcome these challenges, we propose a novel robust training approach for learning-based smart contract detection models through contrastive learning-enhanced Granular-ball computing (CGBC). CGBC achieves coarse-grained representation learning through a Granular-ball (GB) clustering layer. The label and vector representation of a GB center serve as the new coarse-grained representation for all samples in that GB. This procedure can correct noisy labels and diminish their influence. To address the first challenge and bring similar contracts closer, we pretrain CGBC with unsupervised contrastive learning to optimize the embeddings of smart contracts. Additionally, we add an intra-GB compactness loss and an inter-GB looseness loss to the fine-tuning loss function to further pull the embeddings of similar contracts closer. For the second challenge, we adopt the Granular-ball computing method, an adaptive multi-granularity clustering algorithm, to automatically group smart contracts following data distribution features, without predefined parameters such as the number or size of sets.

By integrating these designs, CGBC realizes robust vulnerability detection for smart contracts.
We conduct extensive experiments on a large-scale dataset across three common vulnerability types: Reentrancy (RE), Timestamp Dependency (TD), and Integer Overflow (IO). The results demonstrate that CGBC significantly improves the robustness of smart contract vulnerability detection under label noise. Even with 40% noisy labels, CGBC maintains high performance with an average F1-score of 76.59%, outperforming all baseline methods. These findings confirm the effectiveness and robustness of CGBC in addressing label noise, enhancing the reliability of smart contract analysis.

To sum up, the contributions of this paper are as follows:

• We present the first contrastive learning-enhanced Granular-ball training method for robust learning-based smart contract vulnerability detection under noisy environments, which leverages unsupervised pretraining to initialize contract representations and then uses coarse-grained representation learning to mitigate label noise in vulnerability detection.
• We propose a semantic-preserving smart contract augmentation to conduct contrastive learning. Furthermore, we introduce a Granular-ball-based coarse-fine robust training method, assisted by intra-GB and inter-GB quality metrics for Granular-balls, to conduct robust learning for smart contract vulnerability detection.
• Extensive evaluations demonstrate the effectiveness and robustness of our proposal. Moreover, our proposal consistently outperforms state-of-the-art models.

II. RELATED WORK

This section reviews prior studies on smart contract vulnerability detection. Existing work can be classified into four main categories, namely program analysis, deep learning, large language models, and robust vulnerability detection. To make the comparison clearer, Table I summarizes representative studies and our method in terms of main work and remarks.

A. Program Analysis Method

Program analysis methods are the classical approach to detecting smart contract vulnerabilities. They are still widely used because they provide clear semantic reasoning and good interpretability. Oyente [22] explores execution paths by symbolic execution and uses SMT solving to detect vulnerabilities such as reentrancy and arithmetic bugs. Its main strength is path-level semantic analysis. However, it suffers from path explosion and high analysis cost. SmartCheck [28] transforms Solidity code into XML format and detects suspicious patterns through XPath rules. This design is simple and efficient. However, its detection ability depends on predefined rules. Slither [23] performs static analysis by extracting structural information such as abstract syntax and dependency relations. It is practical and widely used. However, it still relies heavily on handcrafted rules and may miss complex semantic vulnerabilities. Securify [29] checks whether contract behaviors satisfy compliance and violation patterns. It provides clear reasoning results. However, its performance depends on the completeness of manually designed security patterns. eThor [30] performs sound static analysis for Ethereum smart contracts based on Horn-clause reasoning. It provides stronger theoretical support. However, this comes with higher analysis complexity. VerX [24] verifies safety properties through symbolic execution and delayed predicate abstraction. It is suitable for contracts with high security requirements. However, it requires formal property modeling and has high verification cost. EthPloit [25] combines fuzzing with taint analysis and feedback guidance to generate exploit-oriented test cases. It can better validate triggerable vulnerabilities than pure static analysis. However, its performance is still sensitive to seed quality and path coverage. DFier [6] verifies suspicious paths by constructing directed inputs for vulnerable locations. This improves validation precision. However, it still depends on the quality of prior suspicious-path localization.

TABLE I: Comparison of representative related studies and our method.

| Ref. | Main work | Remarks |
| --- | --- | --- |
| [22] | Symbolic execution is used to explore contract execution paths for vulnerability detection. | It gives clear semantic analysis, but it suffers from path explosion and high analysis cost. |
| [23] | Static analysis is performed by extracting structural and dependency information from smart contracts. | It is practical and efficient, but it still relies heavily on handcrafted rules. |
| [24] | Formal verification is used to check safety properties of smart contracts. | It provides stronger theoretical support, but it needs formal modeling and has high verification cost. |
| [25] | Fuzzing is combined with taint analysis and feedback guidance to generate exploit-oriented test cases. | It can better validate triggerable vulnerabilities than pure static analysis, but it is still sensitive to seed quality and path coverage. |
| [26] | Graph neural networks are combined with interpretable graph features and expert patterns for smart contract vulnerability detection. | It balances representation learning and interpretability, but its flexibility is limited by expert-designed knowledge. |
| [3] | Vulnerability subgraphs are constructed and graph neural networks are used for Ethereum smart contract vulnerability detection. | It improves vulnerability-oriented structural modeling, but it still assumes relatively reliable labels during training. |
| [12] | Contrastive learning is introduced to improve the discriminative quality of smart contract representations. | It improves representation learning, but it does not explicitly handle robust training under noisy supervision. |
| [27] | Confidence learning and differential training are proposed to identify suspicious labels in vulnerability datasets. | It shows the importance of noisy-label robustness, but it mainly focuses on general software vulnerability detection. |
| Ours | Contrastive learning and granular-ball-based robust training are jointly introduced for smart contract vulnerability detection. | It improves both representation quality and robustness to noisy supervision in one framework. |

B. Deep Learning Method

Deep learning has become an important direction for smart contract vulnerability detection because it can learn vulnerability patterns from contract data automatically. Existing studies mainly focus on graph modeling, transfer learning, multimodal fusion, and contrastive representation learning. The method in [31] models smart contracts as graphs and performs vulnerability detection through structural representation learning. It shows the value of graph semantics. However, early graph models are still limited in feature richness and robustness design. AME [26] combines graph neural networks with interpretable graph features and expert patterns. It balances representation learning and interpretability. However, its reliance on expert-designed knowledge may reduce flexibility. DLVA [9] extracts control-flow graphs and applies graph convolutional networks for vulnerability analysis. It improves structural modeling ability. However, it mainly focuses on graph topology and does not directly address noisy supervision. ContractWard [5] develops automated vulnerability detection models for Ethereum smart contracts in the TNSE context. It shows the effectiveness of learning-based detection. However, robustness to label noise is not its main focus. ContractGNN [3] constructs vulnerability subgraphs and uses graph neural networks for Ethereum smart contract vulnerability detection. This design improves vulnerability-oriented representation. However, it still assumes relatively reliable labels during training.
Cross-Modality [10] transfers knowledge from source code to bytecode through a teacher–student mutual learning framework. Its main strength is cross-modal knowledge transfer. However, noisy annotations are not explicitly modeled. ESCORT [32] improves vulnerability detection through deep transfer learning and supports better generalization to unseen vulnerabilities. This is useful in low-resource settings. However, transfer learning alone cannot solve noisy-label effects. EFEVD [33] enhances feature extraction by clustering contracts and learning graph embeddings for contract communities. It strengthens contract representation at the community level. However, it still relies on standard supervision. CLEAR [12] introduces contrastive learning to improve the discriminative quality of smart contract representations. It shows the value of contrastive objectives. However, it does not explicitly address robust training under noisy supervision. Vulnsense [34] integrates source code, opcodes, graph structures, and language modeling for multimodal vulnerability detection. Its multimodal design enriches semantic information. However, the framework is relatively complex and still depends on label quality.

C. LLM-assisted Smart Contract Security Analysis

Large language models have recently been introduced into smart contract security research. PSCVFinder [35] applies prompt tuning to smart contract vulnerability detection. This shows that prompt-based methods can support contract analysis. However, the method still depends on the stability of prompt inference. SmartGuard [36] enhances vulnerability detection with LLM-assisted analysis. Its main advantage is stronger semantic understanding. However, reliability and hallucination control remain major issues. SCALM [37] uses large language models to identify bad practices in smart contracts. This broadens the scope of contract analysis.
However, it is less specialized for precise vulnerability discrimination. Smart-LLaMA-DPO [38] improves explainable smart contract vulnerability detection with a reinforced large language model. Its explainability is attractive. However, stable security judgment still requires stronger task-specific robustness.

D. Robust Method

Label noise is a practical issue in vulnerability detection because many datasets are automatically labeled by analyzers, scanners, or heuristic rules. SySeVR [39] shows that syntax-aware and semantic-aware representations can improve vulnerability detection. Its representation design is effective. However, noisy labels are not explicitly handled. Reveal [40] revisits deep-learning-based vulnerability detection and points out that reported performance may not fully reflect practical capability. This highlights the need for more robust evaluation and training. IVDetect [41] performs vulnerability detection with fine-grained interpretations. It improves feature granularity. However, it does not directly target label-noise correction. ReGVD [21] studies graph neural networks for vulnerability detection and confirms the importance of graph-based modeling. However, its learning process still assumes conventional supervision. LineVul [42] uses a transformer-based model for line-level vulnerability prediction. It improves localization granularity. However, its effectiveness can still be degraded by noisy annotations. Nie et al. [27] explicitly investigate label errors in deep-learning-based vulnerability detection and propose confidence learning and differential training strategies. This work clearly shows that label noise is a non-negligible issue in vulnerability datasets. SCL-CVD [43] improves code vulnerability detection by supervised contrastive learning with GraphCodeBERT. It strengthens discriminative representations.
However, it is designed for general software rather than smart contracts. VulChecker [44] uses graph-based vulnerability localization and data augmentation to improve robustness. Its graph design is effective. However, it is still not developed specifically for smart contracts. GCL4SVD [45] estimates label-noise transitions through graph confident learning and corrects mislabeled samples. It provides a useful reference for noise-aware training. However, it is still targeted at software vulnerability detection.

E. Comparison with Our Method

Table I gives a direct comparison between representative studies and our method. Existing program-analysis-based methods have good interpretability, but they usually rely on handcrafted rules or expensive path reasoning. Existing deep-learning-based methods improve representation capability, but most of them assume relatively reliable labels. Existing LLM-based methods improve semantic analysis and explanation, but their reliability is still limited in precise vulnerability detection. Prior robust-learning studies also focus mainly on general software rather than smart contracts. In contrast, our method combines contrastive learning and granular-ball-based robust training for smart contract vulnerability detection. The contrastive part improves the discriminative quality of contract representations, while the granular-ball mechanism improves robustness under noisy supervision. Therefore, our method is designed to achieve both robust representation learning and vulnerability detection in smart contracts.

III. METHODOLOGY

This section presents CGBC, our learning-based vulnerability detection approach for smart contracts, which counters label noise in training datasets and thereby enhances the robustness of smart contract vulnerability detection.
CGBC initializes and optimizes the contract encoder through large-scale unlabeled pretraining, focusing on generating close vector representations for similar smart contracts. Subsequently, CGBC groups smart contracts to create coarse-grained labels and representations during fine-tuning, to correct noisy labels. The workflow of CGBC is illustrated in Figure 1 and consists of two main procedures: (1) Contrastive learning-based representation initialization, which introduces a semantic-preserving approach to augment smart contracts; the extended dataset is then used to pretrain the model without labels via contrastive learning, initializing smart contract representations by considering label consistency. (2) Robust vulnerability detection by Granular-ball training, which inserts a Granular-ball computing layer between the encoder layer and the classifier layer to generate coarse-grained representations through similarity distance-based clustering, further optimized through a label-resilient symmetric cross-entropy loss function and a two-dimensional clustering loss function. After training converges, CGBC derives a robust smart contract vulnerability detection model despite noisy training datasets.

A. Contrastive Learning-based Representation Initialization

This module focuses on pretraining the model through unsupervised contrastive learning, based on large-scale positive sample pairs. Through this procedure, on the one hand, the model can generate more precise vector representations for smart contracts; on the other hand, the representations of similar smart contracts become closer.

1) Smart Contract Augmentation

The fundamental principle of contrastive learning is constructing positive sample pairs. Conducting data augmentation on smart contracts is an intuitive way to achieve this. However, it is nontrivial because of the complicated business logic and syntax structures of smart contracts.
Our core insight is to insert a compilable contract-free code snippet s into a random location Loc of the original contract C, which preserves the functionality of C. Specifically, according to the syntax structure of smart contracts, generating such an s first declares new variables ($s_1$) and then creates operation code ($s_2$) on these variables, so that the snippet stays contract-free, i.e., it does not interfere with the control and data dependencies of the raw contract C. The operations are optional and are created only if randomly chosen. We obtain the augmented code snippet s by stacking and combining $s_1$ and $s_2$. Figure 2 presents the overall contract augmentation process, and Table II describes the meaning of the formalized words used later.

For declaring variables, the module begins with the random selection of a modifier MOD indicating access attributes, such as 'public', 'private', and 'internal', and a variable type TYPE. Subsequently, a random variable name VAR and value VALUE are generated. This process is formalized as MOD TYPE VAR = VALUE. At this point, the generated statements $s_1$ can already act as a code snippet s. To further diversify the augmentation samples, CGBC designs three kinds of operations on the new variables, namely assignment, computation, and condition operations, as the second row of Figure 2 shows. The assignment operations ASSIGN are applied to the multiple type-identical variables declared in the first snippet $s_1$. The computation operations COMP involve arithmetic (ARI) and logical (LOGI) operations: arithmetic operations mainly handle numerical data (e.g., integers, floating-point numbers), while logical operations focus on Boolean values (True/False) or binary bits (0/1). CGBC selects the appropriate computation method based on the operand type. The new variables can also participate in conditional operations CTRL, such as if, while, and for, to further enhance the syntactic complexity and semantic depth of the augmentation snippet. The generation of control statements is formalized as CTRL (VAR OP VALUE) { VAR OP VAR OP VALUE }. For instance, an if statement checks a condition involving a variable and performs an operation based on whether the condition is true or false. Similarly, while and for loops introduce iterative behavior, allowing the code to execute a block of statements repeatedly under certain conditions. By incorporating these control structures, the augmented code snippets can simulate more realistic and complex behaviors found in real-world smart contracts. Finally, CGBC organizes these statements to create the code snippet s.

Fig. 1: The illustration of the overall workflow of CGBC, consisting of two main procedures: contrastive learning-based representation initialization and robust vulnerability detection by Granular-ball training.

Through this rich contract-free code-generating procedure, the snippet s can be inserted into any smart contract, guaranteeing diversity without influencing its raw functionality. Specifically, for a given contract C, we first identify all its functions, denoted as FUNC[0..n], where n represents the number of functions. Subsequently, m functions are randomly selected from this set as the augmentation targets, denoted as SELECTED_FUNCS, with the value of m dynamically determined based on the size of n.
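The snippet grammar described above can be illustrated with a small generator. This is only a sketch under stated assumptions, not the authors' implementation: the function names (`declare`, `control`, `make_snippet`), the value ranges, the fixed `uint256` type, and the probability `p_op` of emitting an optional operation are all hypothetical, and only the `if` form of CTRL is shown. The emitted strings follow the paper's formal token patterns and are not checked against a Solidity compiler.

```python
import random

MODIFIERS = ["public", "private", "internal"]  # access attributes named in the text

def declare(rng, var):
    """Return one declaration in the formal pattern MOD TYPE VAR = VALUE."""
    mod = rng.choice(MODIFIERS)
    value = rng.randint(0, 100)  # hypothetical value range
    return f"{mod} uint256 {var} = {value}"

def control(rng, var):
    """Return one statement in the pattern CTRL (VAR OP VALUE) { VAR OP VAR OP VALUE }."""
    bound = rng.randint(0, 100)  # hypothetical comparison constant
    step = rng.randint(1, 9)     # hypothetical increment
    return f"if ({var} > {bound}) {{ {var} = {var} + {step} }}"

def make_snippet(rng, n_vars=2, p_op=0.5):
    """Stack declarations (s1) and randomly chosen optional operations (s2)."""
    names = [f"V{i + 1}" for i in range(n_vars)]
    s1 = [declare(rng, v) for v in names]
    s2 = [control(rng, v) for v in names if rng.random() < p_op]
    return s1 + s2

# Example: one snippet built only from freshly declared variables.
snippet = make_snippet(random.Random(0))
```

Because every statement touches only variables declared inside the snippet, the sketch mirrors the contract-free property the text relies on: nothing in it reads or writes state of the host contract.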
For each selected function FUNC ∈ SELECTED_FUNCS, an augmented code snippet s is generated as described above and inserted at a random location Loc in FUNC. Repeating this procedure for all m selected functions of the original contract C yields an augmented sample $\tilde{C}$.

Fig. 2: An illustration example of smart contract augmentation (panels: Variable Declaration, Optional Operations, Augmented Functions).

To ensure that augmented contract samples maintain consistent semantics with the original contracts, we design an automated method for comparing semantic similarity. Specifically, for a given pair of original and augmented contracts, we first employ static analysis tools to extract the sequence of core operations at the intermediate representation (IR) level from the target function. These operations encompass state variable accesses (read/write), external function calls, event emissions, return statements, conditional branches, and critical built-in Solidity function invocations. Formally, for a contract file F, contract name C, and function signature S, we denote the target function as $f_{F,C,S}$ and its core operation sequence as

$$O = [o_1, o_2, \ldots, o_n], \tag{1}$$

where each $o_i$ represents one of the aforementioned operation types. We then define a semantic encoding function $f_{\mathrm{sem}}(\cdot)$ that maps the operation sequence O to a semantic digest D via a cryptographic hash:

$$D = f_{\mathrm{sem}}(O) := H(o_1 \,\|\, o_2 \,\|\, \cdots \,\|\, o_n), \tag{2}$$

where $H(\cdot)$ denotes the SHA-256 hash function and $\|$ denotes sequence concatenation.
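The digest in Equation (2) can be sketched with Python's standard `hashlib`. The operation strings below are made-up placeholders for whatever a static analyzer would emit, and the separator byte is an assumption added here so that concatenation stays unambiguous (without it, `["ab", "c"]` and `["a", "bc"]` would hash identically); the paper only specifies plain concatenation.

```python
import hashlib

SEP = "\x1f"  # assumed separator between operations; not specified in the paper

def semantic_digest(ops):
    """Hash an ordered sequence of core-operation strings with SHA-256."""
    payload = SEP.join(ops).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()

# Hypothetical core-operation sequences for one target function.
original = ["read:balance", "call:external", "write:balance", "return"]
augmented = list(original)  # inserted snippet adds no core operations
reordered = ["read:balance", "write:balance", "call:external", "return"]

same = semantic_digest(original) == semantic_digest(augmented)
changed = semantic_digest(original) != semantic_digest(reordered)
```

Equal digests mean the two ordered operation sequences are identical; any insertion, deletion, or reordering of a core operation changes the digest.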
During the detection phase, we gen- erate semantic digests D 1 and D 2 for the target functions of the original contract ( F 1 , C 1 , S 1 ) and the augmented contract ( F 2 , C 2 , S 2 ) , respectiv ely . If ( D 1 = D 2 ) , the two functions are considered semantically equiv alent at the core operation lev el; otherwise, a semantic deviation is assumed. T o balance efficienc y and representativ eness, we randomly select 1,000 pairs of augmented contract samples for semantic similarity verification. The results demonstrate a semantic similarity rate of 99.60%, indicating that the generated augmented samples almost entirely preserve the semantics of the original contracts at the core operation lev el. Beyond semantic equi valence, we further assess the struc- tural div ersity of the augmented samples. W e adopt a Jaccar d similarity -based approach [38] to quantify differences between the original contract and its augmented counterparts, as well as among the augmented samples themselves. The source code is tokenized into identifiers, keywords, operators, numbers, and delimiters, forming a token sequence from which contiguous k -grams are extracted. For each pair of code sequences, the Jaccard similarity is computed as the ratio of the intersection ov er the union of their k -gram sets. A similarity threshold τ is then used to distinguish structurally similar samples from sufficiently div erse ones. In our experiments, with five augmented samples generated per contract, we observe that under a threshold of 0.9, 49.49% of the comparisons indi- cate structural diversity , whereas relaxing the threshold to 0.95 increases this proportion to over 74.03%. These results demonstrate that our augmentation strategy not only preserves semantic correctness but also introduces substantial structural variation, providing a solid foundation for effecti ve contrastiv e representation learning. T ABLE II: Actual meanings of the formalized words in our methods. 
| Formalized Word | Meaning | Example |
|---|---|---|
| TYPE | Variable type | ‘uint256’, ‘bool’ |
| MOD | Modifier | ‘public’, ‘private’ |
| VAR | Variable name | ‘V1’, ‘V2’ |
| OP | Arithmetic operation | ‘=’, ‘+’, ‘-’, ‘*’, ‘/’ |
| CTRL | Control statement | ‘if’, ‘while’, ‘for’ |

2) Contract Representation Pretraining

Given a batch of pairs, each containing a smart contract $C$ and its augmented contract $\tilde{C}$, this module pulls the vector representations of similar smart contracts closer and improves the expressive ability of the DNN model through large-scale unsupervised contrastive learning. Specifically, $C$ and $\tilde{C}$ are fed into a contrastive learning model consisting of two shared-weight encoders $F_1$ and $F_2$. The encoders output the vector representations $z \in \mathbb{R}^d$ and $\tilde{z} \in \mathbb{R}^d$, as shown in Equation (3):

$$z = F_1(C), \quad \tilde{z} = F_2(\tilde{C}) \tag{3}$$

The encoders are followed by a predictor $P$, a two-layer multi-layer perceptron (MLP). The predictor applies a nonlinear transformation to the vector representation $z$ or $\tilde{z}$ of one branch, generating the prediction vector $p$ or $\tilde{p}$ as follows:

$$p = P(z), \quad \tilde{p} = P(\tilde{z}) \tag{4}$$

where $p, \tilde{p} \in \mathbb{R}^d$. Then, a symmetric loss function $L$ based on cosine similarity is employed to measure and optimize network performance. The first step of $L$ is to compute the cosine similarity loss between a prediction vector $p$ and a vector representation $z$:

$$L_{cos}(p, z) = 1 - \frac{\langle p, z \rangle}{\|p\|\,\|z\|} \tag{5}$$

where $\|\cdot\|$ is the L2 norm and $\langle p, z \rangle$ denotes the dot product of the vectors. Subtracting the cosine similarity from the constant term 1 maps it to a non-negative value, which is then used for loss optimization. The final symmetric loss function is defined as:

$$L = \frac{1}{2}\left(L_{cos}(p, \tilde{z}) + L_{cos}(\tilde{p}, z)\right) \tag{6}$$

This involves calculating the loss between the prediction vector $p$ and the vector representation $\tilde{z}$ of the other branch, as well as between $\tilde{p}$ and $z$, and then averaging the results.
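As a rough numerical illustration of Equations (5) and (6), the sketch below evaluates the symmetric cosine loss on plain Python lists. It only computes the loss value: the encoders, the predictor, and the stop-gradient operation belong to a training framework and are not modeled here, and the function names are chosen for this example only.

```python
import math


def cosine_loss(p, z):
    """Equation (5): one minus the cosine similarity of p and z.

    Both vectors are assumed to be non-zero lists of floats of equal length.
    """
    dot = sum(a * b for a, b in zip(p, z))
    norm_p = math.sqrt(sum(a * a for a in p))
    norm_z = math.sqrt(sum(b * b for b in z))
    return 1.0 - dot / (norm_p * norm_z)


def symmetric_loss(p, z, p_tilde, z_tilde):
    """Equation (6): average of the two cross-branch cosine losses."""
    return 0.5 * (cosine_loss(p, z_tilde) + cosine_loss(p_tilde, z))


# Toy vectors standing in for predictor outputs and encoder outputs.
p, z = [1.0, 0.0], [0.8, 0.6]
p_tilde, z_tilde = [0.6, 0.8], [1.0, 0.0]
print(symmetric_loss(p, z, p_tilde, z_tilde))
```

Each term pairs the prediction of one branch with the representation of the other branch, so the value is unchanged when the roles of the original and augmented contract are swapped.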
This design ensures that the loss function treats both branches symmetrically, thus preventing bias during the optimization process. Moreover, by integrating stop-gradient operations into this negative-sample-free paradigm, we maximize the mutual information between augmented contract pairs, thereby achieving discriminative representation learning purely through unsupervised feature alignment.

B. Robust Vulnerability Detection by Granular-ball Training

This module aims to conduct robust fine-tuning based on annotated smart contracts to endow the model with vulnerability detection ability. To diminish the influence of label noise in annotated datasets, CGBC introduces a Granular-ball computing (GBC) layer between the encoder layer and the classifier layer. The GBC layer can cluster close smart contracts and create coarse-grained representations and labels for training samples, improving robustness. This procedure consists of two main steps, namely contract Granular-ball construction and loss-guided vulnerability detection optimization.

1) Contract Granular-ball Construction

The GBC layer creates and optimizes smart contract clustering to generate robust representations under a noisy environment. The complete procedure is detailed in Algorithm 1. The clustering process begins with an initial queue $Q$ containing the full training dataset $D$ and initializes an empty Granular-ball set $B$ (line 1). $D$ contains a batch of samples $(x, y)$, where $x$ is the embedding of the corresponding smart contract and $y$ is its label. For each iteration, a cluster $GB$ is popped from the cluster queue $Q$. When the number of samples in $GB$ or the quality of $GB$ reaches the corresponding threshold, $GB$ is regarded as an eligible Granular-ball. The quality is measured by Purity, computed as shown in Equation (7), where $\mathbb{1}(y_i = y_{max})$ is an indicator function and $y_{max}$ represents the label with the most samples in $GB$.
That is, the metric Purity indicates the proportion of samples with the label $y_{max}$. If the purity of $GB$ exceeds the quality threshold $pur$, most samples in $GB$ belong to the same category, and the subset is considered pure.

$$\mathrm{Purity}(X) = \frac{1}{|X|} \sum_{i=1}^{|X|} \mathbb{1}(y_i = y_{max}) \tag{7}$$

Algorithm 1: Granular-ball Clustering
Input: Annotated dataset $D$, purity threshold $pur$
Output: Granular-balls $B$
1: $Q \leftarrow D$; $B \leftarrow \emptyset$;
2: while $Q$ is not empty do
3: $GB = Q.pop()$;
4: if $|GB| \le 2 \,\lor\, \mathrm{Purity}(GB) > pur$ then
5: Compute center $x_c \leftarrow \frac{1}{|GB|} \sum_{x \in GB} x$;
6: Compute label $y_c \leftarrow \mathrm{MajorityVote}(\{y\}_{y \in GB})$;
7: Store $(x_c, y_c, GB)$ in $B$;
8: Continue;
9: Compute pairwise distance matrix $G$ for $GB$;
10: $(\langle x_i, y_i \rangle, \langle x_j, y_j \rangle) \leftarrow \arg\max_{dis(x_i, x_j)} G$;
11: $GB_i, GB_j = divide(GB, x_i, x_j)$; $Q = Q.add(GB_i, GB_j)$;
12: return $B$;

For an eligible Granular-ball $GB$, its center and label are calculated as in Equations (8) and (9), where the center is the mean of all samples' embeddings and a majority vote determines the label. This cluster $GB$, together with its center and label, is stored in the final output (lines 5-7). Otherwise, the algorithm identifies the two most distant samples $(x_i, y_i)$ and $(x_j, y_j)$ in $GB$ based on the cosine distance shown in Equation (10) (lines 9-10). The intuition is that the two most distant samples are likely to belong to different semantic spaces, thus serving as natural anchors for partitioning. These two anchor points are used to partition $GB$ into two smaller groups $GB_i$ and $GB_j$ according to proximity (line 11). The new clusters are added to the queue $Q$ for further processing. Iterating this procedure until the queue is empty divides the original dataset $D$ into multiple multi-granularity, Purity-qualified Granular-balls.
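The splitting procedure of Algorithm 1 can be sketched in plain Python as follows. This is an illustrative reading of the algorithm rather than the authors' implementation: embeddings are assumed to be non-zero lists of floats, each sample is assigned to the nearer of the two anchors, and a guard (an addition of this sketch) accepts a ball as-is when a split would leave one side empty.

```python
import math
from collections import Counter, deque


def cosine_distance(a, b):
    """Cosine distance between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (na * nb)


def purity(ball):
    """Share of samples that carry the majority label of the ball."""
    counts = Counter(label for _, label in ball)
    return counts.most_common(1)[0][1] / len(ball)


def summarize(ball):
    """Return (center, majority label, members) for an accepted ball."""
    dim = len(ball[0][0])
    center = [sum(x[d] for x, _ in ball) / len(ball) for d in range(dim)]
    label = Counter(y for _, y in ball).most_common(1)[0][0]
    return center, label, ball


def build_granular_balls(samples, pur=0.9):
    """Split (embedding, label) samples into purity-qualified balls."""
    queue = deque([list(samples)])
    balls = []
    while queue:
        ball = queue.popleft()
        if len(ball) <= 2 or purity(ball) > pur:
            balls.append(summarize(ball))
            continue
        # Anchors: the two samples with the largest pairwise cosine distance.
        pairs = ((i, j) for i in range(len(ball)) for j in range(i + 1, len(ball)))
        i, j = max(pairs, key=lambda ij: cosine_distance(ball[ij[0]][0], ball[ij[1]][0]))
        a, b = ball[i][0], ball[j][0]
        left, right = [], []
        for x, y in ball:
            side = left if cosine_distance(x, a) <= cosine_distance(x, b) else right
            side.append((x, y))
        if not left or not right:
            # Degenerate split (e.g. identical embeddings): keep the ball.
            balls.append(summarize(ball))
            continue
        queue.append(left)
        queue.append(right)
    return balls
```

On a toy set in which one sample near the label-0 cluster is wrongly labeled 1, a call such as `build_granular_balls(samples, pur=0.7)` keeps that sample inside a ball whose majority label is 0, which is the mechanism by which the coarse-grained label can override a minority of noisy labels.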
As described above, the whole procedure of Granular-ball construction is adaptive and imposes no preset constraints on clusters (such as their size or number), which makes it more flexible and robust.

$$c = \frac{1}{|X_c|} \sum_{x_i \in X_c} x_i \tag{8}$$

$$y_c = \mathrm{MajorityVote}(\{y_i \mid x_i \in X_c\}) \tag{9}$$

$$\mathrm{CosineDistance}(x_i, x_j) = 1 - \frac{x_i \cdot x_j}{\|x_i\|\,\|x_j\|} \tag{10}$$

Here $X_c$ denotes the set of samples in the Granular-ball.

2) Loss-guided Vulnerability Detection Optimization

Since most samples of a Purity-qualified GB are similar and share the same label, its center represents the universal and effective features of the samples in that GB. Based on the core insight that noisy labels are inevitable but form a minority in a large-scale dataset, the embeddings and labels of all samples in a GB are represented by the GB center and label, respectively. This can improve robustness, but the performance on smart contract vulnerability detection is limited by the accuracy of the new coarse-grained representations. To address this problem, we design our loss function with two main goals: one is optimizing the contract Granular-balls (GBs) so that only similar smart contracts are grouped into the same GB, while the other is improving the accuracy of the model on the contract vulnerability detection task. For the first goal, we design a clustering loss function composed of an intra-GB compactness loss (Definition 1) and an inter-GB looseness loss (Definition 2) as follows. The former ensures that samples within each GB are closer to the center, while the latter pulls GB centers with the same label closer and pushes GBs with different labels farther apart. This design enhances the accuracy of the coarse-grained representation by improving the clustering performance.
Definition 1 (Intra-GB Compactness Loss): Given a set of Granular-balls $[GB_1, \ldots, GB_i, \ldots, GB_k]$ generated in the current batch, where each $GB_i$ contains $N_i$ samples with feature vectors $x_j$ and has center $c_i$, the compactness loss is calculated as:

$$L_{intra} = \sum_{i=1}^{k} \left( \frac{1}{N_i} \sum_{j=1}^{N_i} \|x_j - c_i\|_2^2 \right), \quad x_j \in GB_i \tag{11}$$

which is computed over all Granular-balls generated by the GBC layer in the current batch. For each Granular-ball, the average squared Euclidean distance between the Granular-ball center and all its contained samples is computed.

Definition 2 (Inter-GB Looseness Loss): Given a set of Granular-balls $[GB_1, \ldots, GB_i, \ldots, GB_k]$ generated in the current batch, where each $GB_i$ has a center vector $c_i$ and a corresponding label $y_i$, the inter-GB looseness (separation) loss is calculated as:

$$L_{inter} = \sum_{i=1}^{k} \sum_{j=i+1}^{k} \begin{cases} \|c_i - c_j\|_2^2, & \text{if } y_i = y_j \\ \max(0,\, m - \|c_i - c_j\|_2), & \text{if } y_i \neq y_j \end{cases} \tag{12}$$

where $m$ is a margin hyperparameter that enforces a minimum distance between Granular-balls with different labels; it is set to 1.0 to provide sufficient separation between them. When $y_i = y_j$, the loss minimizes the squared Euclidean distance between the centers of same-label clusters. When $y_i \neq y_j$, the loss pushes the dissimilar groups apart by a margin.

To improve the quality of Granular-ball construction, we define a clustering objective, denoted by $L_{clustering}$, by combining the intra-GB compactness loss and the inter-GB looseness loss. This objective encourages samples in the same Granular-ball to stay close to their center while keeping Granular-balls with different labels well separated, thus producing tighter and more reliable coarse-grained representations.

For vulnerability detection, we adopt the symmetric cross-entropy loss, denoted by $L_{SCE}$ [46], as the classification objective.
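The two clustering terms in Definitions 1 and 2 can be evaluated with a short sketch like the one below. The data layout (each ball as a `(center, label, members)` tuple with members given as plain vectors) is an assumption of this example; the text does not fix how the two terms are weighted inside $L_{clustering}$, so they are returned separately here.

```python
import math


def squared_distance(a, b):
    """Squared Euclidean distance between two vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def intra_gb_loss(balls):
    """Equation (11): sum over balls of the mean squared distance to the center."""
    total = 0.0
    for center, _, members in balls:
        total += sum(squared_distance(x, center) for x in members) / len(members)
    return total


def inter_gb_loss(balls, margin=1.0):
    """Equation (12): pull same-label centers together, push others apart."""
    total = 0.0
    for i in range(len(balls)):
        for j in range(i + 1, len(balls)):
            ci, yi, _ = balls[i]
            cj, yj, _ = balls[j]
            d2 = squared_distance(ci, cj)
            if yi == yj:
                total += d2
            else:
                # Hinge on the (non-squared) distance, as in Equation (12).
                total += max(0.0, margin - math.sqrt(d2))
    return total


# Toy balls: two with label 0 and one with label 1.
balls = [
    ([0.0, 0.0], 0, [[1.0, 0.0], [-1.0, 0.0]]),
    ([3.0, 0.0], 0, [[3.0, 0.0]]),
    ([0.0, 0.5], 1, [[0.0, 0.5]]),
]
print(intra_gb_loss(balls), inter_gb_loss(balls))
```

In the toy data, the different-label pair whose centers are 0.5 apart contributes a hinge penalty of 0.5, while the different-label pair that is already farther apart than the margin contributes nothing.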
Compared with standard cross-entropy, $L_{SCE}$ is more robust to noisy labels because it balances fast optimization and noise tolerance. The final loss of CGBC jointly considers clustering quality and classification performance:

$$L = L_{clustering} + L_{SCE}. \tag{13}$$

IV. EXPERIMENTAL EVALUATION

To comprehensively evaluate the effectiveness and robustness of CGBC, we design a series of experiments aimed at answering the following four research questions:

• RQ1: How effective is CGBC in detecting smart contract vulnerabilities compared to state-of-the-art methods?
• RQ2: How do the core components of CGBC contribute to its performance?
• RQ3: How robust is CGBC under different noise levels when detecting vulnerabilities in smart contracts?
• RQ4: How effective is Granular-ball computing for clustering smart contracts?

A. Experimental Setup

Datasets. To comprehensively evaluate the effectiveness of CGBC in detecting smart contract vulnerabilities, we curate training and evaluation datasets according to the following criteria: (1) Large-scale and Representative Source: The dataset should originate from publicly accessible, large-scale corpora that represent diverse real-world smart contracts. (2) Verified Vulnerability Labels: For fine-tuning and evaluation, contracts must be annotated with confirmed vulnerability types (e.g., reentrancy, integer overflow/underflow, timestamp dependency). (3) Tool-Detectable Weaknesses: The labeled vulnerabilities should be detectable by mainstream static analysis tools to ensure compatibility and reproducibility.

Our experiments leverage three key datasets. For pretraining, we adopt Ethereum-SC Large [9], a comprehensive corpus with over 113,000 raw smart contracts. After preprocessing to remove invalid and duplicate entries, 78,211 contracts remain for self-supervised representation learning.
During fine-tuning, we use a labeled dataset from [10], which contains approximately 40,000 real-world smart contracts annotated with major vulnerability types such as reentrancy, integer overflow/underflow, and timestamp dependency. For evaluation, we aggregate contracts from two widely accepted benchmarks: ReentrancyBenchmark [9] and SolidiFiBenchmark [9]. These datasets provide verified labels derived from manual annotation and static analysis tools. Each vulnerability type is represented by a binary label per contract, where 0 indicates secure and 1 indicates vulnerable. Vulnerability detection is conducted at the contract level.

To avoid data leakage across the pretraining, fine-tuning, and testing sets, we identify and remove their overlapping samples. Specifically, we normalize the source code and compute SHA-256 hashes to detect identical contracts. This check confirms that the testing set is isolated and identifies 4,781 groups of identical contracts between the pretraining and fine-tuning sets; these duplicates were removed from the fine-tuning data to ensure strict separation. Intra-set duplication was also detected, with 1,265 redundant groups in the pretraining set and 204 in the fine-tuning set, all of which were subsequently deleted.

Implementation Details. To ensure the effective extraction of semantic and structural information from smart contracts, we design a contract encoder based on a multi-layer Transformer architecture. The encoder operates on tokenized contract sequences with a maximum length of 512 and is trained with a masking strategy that encourages the model to infer contextual relationships between tokens under partially observable input. This setting strengthens the model's ability to capture latent code semantics and function-level dependencies, which are essential for vulnerability detection.
Following the encoder, we stack several fully connected layers to refine the learned representation and project it into a latent space suitable for downstream classification. The model processes two views of the same input contract in parallel, enforcing representation consistency across syntactically different but semantically equivalent inputs. For the effectiveness and ablation experiments, the corresponding methods are first pretrained on the dataset [9] and then fine-tuned on the dataset [10]. The results of Granular-ball clustering are derived during the fine-tuning of CGBC. In addition, the noise-injection experiments are performed by perturbing the fine-tuning dataset, and all evaluations are consistently carried out on the benchmark dataset. All experiments are run on TensorFlow-GPU 2.8.0 with a learning rate of 1e-5 on four Tesla V100-SXM2-32GB GPUs. All experimental results are the average of five independent runs.

TABLE III: Evaluation results on RQ1: effectiveness on vulnerability detection. The best results of a certain metric for each vulnerability type are highlighted in bold.

| Line # | Methods | RE R(%) | RE P(%) | RE F(%) | TD R(%) | TD P(%) | TD F(%) | IO R(%) | IO P(%) | IO F(%) | Average R(%) | Average P(%) | Average F(%) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | sFuzz [47] | 14.95 | 10.88 | 12.59 | 27.01 | 23.15 | 24.93 | 47.22 | 58.62 | 52.31 | 29.73 | 30.88 | 29.94 |
| 2 | Smartcheck [28] | 16.34 | 45.71 | 24.07 | 79.34 | 47.89 | 59.73 | 56.21 | 45.56 | 50.33 | 50.63 | 46.39 | 44.71 |
| 3 | Osiris [48] | 63.88 | 40.94 | 49.90 | 55.42 | 59.26 | 57.28 | n/a | n/a | n/a | n/a | n/a | n/a |
| 4 | Oyente [22] | 63.02 | 46.56 | 53.55 | 59.97 | 61.04 | 59.47 | n/a | n/a | n/a | n/a | n/a | n/a |
| 5 | Mythril [49] | 75.51 | 42.86 | 54.68 | 49.80 | 57.50 | 53.37 | 62.07 | 72.30 | 66.80 | 62.46 | 57.55 | 58.28 |
| 6 | Securify [29] | 73.06 | 68.40 | 70.41 | n/a | n/a | n/a | n/a | n/a | n/a | n/a | n/a | n/a |
| 7 | Slither [23] | 73.50 | 74.44 | 73.97 | 67.17 | 69.27 | 68.20 | 52.27 | 70.12 | 59.89 | 64.31 | 71.28 | 67.35 |
| 8 | GCN [50] | 73.18 | 74.47 | 73.82 | 77.55 | 74.93 | 76.22 | 69.74 | 69.01 | 69.37 | 73.49 | 72.80 | 73.14 |
| 9 | TMP [31] | 75.30 | 76.04 | 75.67 | 76.09 | 78.68 | 77.36 | 70.37 | 68.18 | 69.26 | 73.92 | 74.30 | 74.10 |
| 10 | AME [26] | 78.45 | 79.62 | 79.03 | 80.26 | 81.42 | 80.84 | 69.40 | 70.25 | 69.82 | 76.04 | 77.10 | 76.56 |
| 11 | SMS [10] | 77.48 | 79.46 | 78.46 | 91.09 | 89.15 | 90.11 | 73.69 | 76.97 | 75.29 | 80.75 | 81.86 | 81.29 |
| 12 | DMT [10] | 81.06 | 83.62 | 82.32 | 96.39 | 93.60 | 94.97 | 77.93 | 84.61 | 81.13 | 85.13 | 87.28 | 86.14 |
| 13 | LineVul [42] | 73.01 | 85.19 | 78.63 | 67.46 | 89.47 | 76.92 | 74.20 | 74.10 | 74.15 | 71.56 | 82.92 | 76.57 |
| 14 | Clear [12] | 96.43 | **96.81** | **96.62** | **98.41** | 94.30 | 96.31 | 91.48 | 89.81 | 90.64 | 95.44 | 93.64 | 94.52 |
| 15 | TMF-Net [51] | 91.45 | 84.92 | 88.06 | 88.15 | 86.59 | 87.36 | 81.40 | 85.24 | 83.28 | 87 | 85.58 | 86.23 |
| 16 | CGBC | **96.92** | 92.65 | 94.74 | 95.77 | **98.55** | **97.14** | **100** | **96.77** | **98.36** | **97.56** | **95.99** | **96.75** |

B. Overall Evaluations

Evaluations on RQ1: Effectiveness in detecting vulnerability. Table III reports the results of 16 vulnerability detection methods on three common smart contract vulnerabilities: Reentrancy (RE), Timestamp Dependency (TD), and Integer Overflow (IO). We use recall (R), precision (P), and F1-score (F) as evaluation metrics. All baseline results are taken from [12], [51]. For fairness, CGBC is trained and tested on the same datasets. Some tools, such as Osiris, Oyente, and Securify, do not support all vulnerability types, so their results are only reported on the tasks they cover.

We first compare CGBC with seven rule-based static analysis tools. These methods mainly rely on handcrafted rules, so they often struggle with complex contract logic. CGBC clearly outperforms them on all supported tasks. For RE, CGBC reaches an F1-score of 94.74%, while Slither obtains 73.97%. For TD, CGBC achieves 97.14%, much higher than SmartCheck's 59.73%. For IO, CGBC reaches 98.36%, far above Mythril's 66.80%. These results show that CGBC can capture vulnerability patterns more effectively than rule-based tools.

Next, we compare CGBC with five advanced deep learning methods. Although these methods learn better features than static tools, they are still affected by label noise and limited contract semantics.
Among them, DMT gives the best average F1-score, which is 86.14%. CGBC achieves 96.75%, improving the average F1-score by 10.61 percentage points. The gain on IO is especially large, reaching 17.23 percentage points. This result suggests that the coarse-grained Granular-ball representation helps the model learn more robust features.

We also compare CGBC with LineVul, a general pretrained code model. Its average F1-score is 76.57%, which shows that directly using a general code model is not enough for smart contract vulnerability detection. CLEAR achieves an average F1-score of 94.52%, establishing a strong baseline. As a more recent method, TMF-Net reports an average F1-score of 86.23%, outperforming most previous deep learning baselines. Even so, CGBC still performs better on average than both CLEAR and TMF-Net and achieves the best average F1-score of 96.75%, with the highest F1-scores on TD and IO (97.14% and 98.36%, respectively).

Answer to RQ1: On average, CGBC outperforms traditional static analysis tools, general pretrained code models, and recent state-of-the-art deep learning methods, achieving the best average precision and F1-score of 95.99% and 96.75%. This demonstrates its strong effectiveness and robustness in detecting diverse smart contract vulnerabilities.

Evaluations on RQ2: Ablation Studies. The overall trend in Fig. 3 is clear. Models with CL or GB perform better than the plain model NGNC, and the model with both modules, WGWC, gives the best results on all three tasks. This shows that both modules are useful and that they work best when used together.

Fig. 3: Evaluation results on RQ2: ablation studies on the core modules of CGBC. Panels: (a) results of the precision (%), (b) results of the recall rate (%), (c) results of the F1-score (%).

The CL module mainly improves feature learning. After adding CL, the model achieves clear gains on all three tasks. The F1-score increases from 66.97% to 79.46% on RE, from 73.42% to 90.53% on TD, and from 69.46% to 81.02% on IO. This suggests that large-scale pretraining helps the model learn better semantic information from smart contracts.

The GB module mainly improves robustness during fine-tuning. After adding GB, the model also achieves better results in most cases. The F1-score rises to 84.39% on RE, 76.92% on TD, and 80.48% on IO. These gains show that coarse-grained representation learning helps reduce the effect of noisy labels.

When CL and GB are used together, the model reaches the best performance on every task. The F1-scores are 94.74% on RE, 97.14% on TD, and 98.36% on IO. These results indicate that CL and GB are complementary: CL improves representation quality, while GB makes the model more robust.

Answer to RQ2: Both the pretraining module and the Granular-ball training module significantly boost CGBC's performance. Their combination (WGWC) yields the highest scores, with an average precision of 95.99%, recall of 97.56%, and F1-score of 96.75%, underscoring their complementary roles and effectiveness in smart contract vulnerability detection.

TABLE IV: Evaluation results on RQ3: robustness against label noise.

| Type | Noise Level | Baseline P(%) | Baseline R(%) | Baseline F(%) | CGBC P(%) | CGBC R(%) | CGBC F(%) |
|---|---|---|---|---|---|---|---|
| RE | 0% | 74.49 | 60.83 | 66.97 | 92.65 | 96.92 | 94.74 |
| RE | 10% | 58.08 | 45.65 | 51.12 | 90.62 | 87.88 | 89.23 |
| RE | 20% | 47.86 | 39.53 | 43.30 | 71.43 | 97.83 | 82.57 |
| RE | 30% | 50.34 | 34.82 | 41.17 | 70.17 | 78.43 | 74.07 |
| RE | 40% | 45.93 | 24.71 | 32.97 | 64.10 | 60.98 | 62.50 |
| TD | 0% | 65.91 | 82.86 | 73.42 | 98.55 | 95.77 | 97.14 |
| TD | 10% | 76.11 | 46.77 | 57.94 | 86.16 | 96.55 | 91.06 |
| TD | 20% | 61.66 | 37.77 | 46.84 | 72.41 | 84.00 | 77.78 |
| TD | 30% | 45.61 | 41.68 | 43.56 | 65.57 | 81.63 | 72.73 |
| TD | 40% | 40.31 | 20.35 | 27.05 | 57.58 | 69.10 | 62.81 |
| IO | 0% | 60.57 | 81.40 | 69.46 | 96.77 | 100 | 98.36 |
| IO | 10% | 90.04 | 63.53 | 74.50 | 86.15 | 96.55 | 91.05 |
| IO | 20% | 86.29 | 56.45 | 68.25 | 75.44 | 72.88 | 74.14 |
| IO | 30% | 80.77 | 53.99 | 64.72 | 71.88 | 77.97 | 74.80 |
| IO | 40% | 72.73 | 53.70 | 61.78 | 68.12 | 79.67 | 73.44 |
Evaluations on RQ3: Robustness against Label Noise. To comprehensively assess the robustness of CGBC under label noise, we injected synthetic label noise into the fine-tuning training set at levels of 10%, 20%, 30%, and 40%. At each noise level, a corresponding proportion of training samples was randomly selected and assigned flipped labels, while the test sets remained clean. This configuration emulates realistic annotation errors and allows a rigorous evaluation of model stability under varying degrees of label corruption. For clarity, we compare CGBC against a baseline model without pretraining and without the Granular-ball layer. Results are reported in terms of precision (P), recall (R), and F1-score (F), with detailed numbers shown in Table IV.

On the original dataset without added noise, CGBC already outperforms the baseline on all three tasks. Its F1-scores are 94.74% on RE, 97.14% on TD, and 98.36% on IO. The gains over the baseline are 27.77, 23.72, and 28.90 percentage points, respectively. This suggests that even the original data may contain some noisy labels and that robust training is useful even in this case.

As the noise level increases, the baseline degrades quickly. At 40% noise, its F1-scores drop to 32.97% on RE, 27.05% on TD, and 61.78% on IO. In contrast, CGBC remains much more stable. At the same noise level, its F1-scores are still 62.50% on RE, 62.81% on TD, and 73.44% on IO. Although CGBC also declines as noise grows, it retains substantially higher F1-scores than the baseline at every noise level.

This robustness comes from the combination of contrastive pretraining and the GBC layer. The GBC layer reduces the effect of noisy labels by using coarse-grained grouping, while contrastive pretraining helps the model learn better semantic representations.

We also compare CGBC with CLEAR [12], which is the closest method to ours. Both methods use a two-stage framework, but CGBC adds coarse-grained fine-tuning for robustness.
Table V shows the comparison under different noise levels. Both methods perform well on clean data, but CGBC is more stable as noise increases. At 40% noise, CGBC outperforms CLEAR in F1-score on all three vulnerability types. This further shows the advantage of CGBC in noisy settings.

TABLE V: Comparison results with the most related work Clear [12]. The best results of a certain metric under the same dataset and noise level are highlighted in bold.

| Type | Noise Level | Clear P(%) | CGBC P(%) | Clear R(%) | CGBC R(%) | Clear F(%) | CGBC F(%) |
|---|---|---|---|---|---|---|---|
| RE | 0% | **96.81** | 92.65 | 96.43 | **96.92** | **96.62** | 94.74 |
| RE | 20% | 58.00 | **71.43** | 59.18 | **97.83** | 58.59 | **82.57** |
| RE | 40% | 50.93 | **64.10** | 35.50 | **60.98** | 41.84 | **62.50** |
| TD | 0% | 94.30 | **98.55** | **98.41** | 95.77 | 96.31 | **97.14** |
| TD | 20% | 66.17 | **72.41** | 65.19 | **84.00** | 67.18 | **77.78** |
| TD | 40% | 50.54 | **57.58** | 64.83 | **69.10** | 56.80 | **62.81** |
| IO | 0% | 89.81 | **96.77** | 91.48 | **100.00** | 90.64 | **98.36** |
| IO | 20% | 66.53 | **75.44** | 69.30 | **72.88** | 67.88 | **74.14** |
| IO | 40% | 52.80 | **68.12** | **80.73** | 79.67 | 63.84 | **73.44** |

Answer to RQ3: CGBC exhibits strong robustness to label noise. Across 30 experiments covering various noise levels and vulnerability types, CGBC consistently outperforms the baseline model in F1-score. Notably, under severe label noise (40%), CGBC maintains comparatively high F1-scores of 62.5% (RE), 62.81% (TD), and 73.44% (IO), outperforming competitive methods. The results confirm that CGBC delivers superior detection performance in noisy real-world scenarios.

Fig. 4: Evaluation results on RQ4: effectiveness of Granular-ball computing in clustering smart contracts across training stages. Panels: (a) the early stage of training, (b) the middle stage of training, (c) the convergence stage of training. Points are marked as Label 0 or Label 1.

Evaluations on RQ4: Effectiveness of Granular-ball Computing in Clustering Smart Contracts.
To intuitively demonstrate the advantages of the multi-granularity and adaptive characteristics of Granular-balls, we visualize the smart contract clustering results of the Granular-ball layer in different phases of fine-tuning, as illustrated in Figure 4. The early-stage clustering performance of the GBC layer is shown in Figure 4a. The smart contract representations are dispersed without identifiable clustering patterns. The formed Granular-balls (GBs) exhibit loose structures with ambiguous boundaries, indicating the GB layer's limited effectiveness at this stage. However, with the help of contrastive learning-based pretraining, the representations of smart contracts with the same label remain relatively close. As training progresses to the middle stage (Figure 4b), the contracts' embeddings within each GB become more compact and the boundaries between the GBs start to emerge more clearly. This is because of the feedback and optimization of the intra-GB compactness and inter-GB looseness loss functions, which pull contracts with the same labels closer and push contracts with different labels farther apart. When training converges (Figure 4c), the clustering structure becomes significantly clearer. Smart contracts are densely grouped within their corresponding GBs, and GBs exhibit well-separated boundaries with distinct margins. This reflects the effectiveness of the GB layer in clustering smart contracts, hinting at its accuracy in coarse-grained representation learning.

Answer to RQ4: The Granular-ball computing layer demonstrates effective clustering performance for smart contracts. During training, the quality of clustering progressively improves. Upon convergence, smart contracts are densely aggregated within their corresponding GBs, while the GBs themselves maintain boundaries with distinct margins.

C. Threats to Validity

Our evaluations face several threats to validity.
Threats to external validity mainly stem from the evaluated datasets and vulnerabilities. To mitigate such threats, we conduct experiments on several large, publicly available real-world datasets. Moreover, we select three popular vulnerability types to evaluate model performance. Threats to internal validity stem from the implementation of CGBC and the compared approaches. To mitigate these threats, we leverage the widely used SimSiam network framework [52] to implement the contrastive learning module. It is worth noting that the GBC layer inherently involves randomness due to the adaptiveness of GB clustering, which may cause fluctuations in training outcomes. To alleviate this impact, we performed multiple repeated training runs and reported the average results. The results of baselines are directly derived from work [10], and we evaluate CGBC on the same datasets when comparing them.

V. CONCLUSION

In this paper, we propose a robust learning-based vulnerability detection method (CGBC) for smart contracts to combat label noise in datasets. Through contrastive learning-based representation pretraining, CGBC can generate discriminative embeddings for smart contracts with different labels. Following this, the Granular-ball computing layer can adaptively cluster smart contracts and create coarse-grained representations for contracts, diminishing the influence of noisy samples during training. Extensive experiments demonstrate that CGBC outperforms baseline models and state-of-the-art models across large-scale real-world datasets and various noisy environments. Ablation studies verify the effectiveness of the core modules of CGBC.

VI. ACKNOWLEDGMENTS

This work was supported by the National Natural Science Foundation of China under Grant Nos.
62402399, 62221005, 62450043, 62222601, and 62176033, Natural Science Foundation of Chongqing, China under Grant No. CSTB2025NSCQ-GPX1268, and the Science and Technology Research Program of Chongqing Municipal Education Commission under Grant No. KJQN202500647.

REFERENCES

[1] T. Wang, C. Zhao, Q. Yang, S. Zhang, and S. C. Liew, “Ethna: Analyzing the underlying peer-to-peer network of ethereum blockchain,” IEEE Transactions on Network Science and Engineering, vol. 8, no. 3, pp. 2131–2146, 2021.
[2] Z. Liu, B. Huang, X. Hu, P. Du, and Q. Sun, “Blockchain-based renewable energy trading using information entropy theory,” IEEE Transactions on Network Science and Engineering, vol. 11, no. 6, pp. 5564–5575, 2023.
[3] Y. Wang, X. Zhao, L. He, Z. Zhen, and H. Chen, “Contractgnn: Ethereum smart contract vulnerability detection based on vulnerability sub-graphs and graph neural networks,” IEEE Transactions on Network Science and Engineering, vol. 11, no. 6, pp. 6382–6395, 2024.
[4] H. Jin, Z. Wang, M. Wen, W. Dai, Y. Zhu, and D. Zou, “Aroc: An automatic repair framework for on-chain smart contracts,” IEEE Trans. Software Eng., vol. 48, no. 11, pp. 4611–4629, 2022.
[5] W. Wang, J. Song, G. Xu, Y. Li, H. Wang, and C. Su, “Contractward: Automated vulnerability detection models for ethereum smart contracts,” IEEE Transactions on Network Science and Engineering, vol. 8, no. 2, pp. 1133–1144, 2021.
[6] Z. Wang, W. Dai, M. Li, K. R. Choo, and D. Zou, “Dfier: A directed vulnerability verifier for ethereum smart contracts,” J. Netw. Comput. Appl., vol. 231, p. 103984, 2024.
[7] L. Quan, L. Wu, and H. Wang, “Evulhunter: Detecting fake transfer vulnerabilities for eosio’s smart contracts at webassembly-level,” CoRR, vol. abs/1906.10362, 2019.
[8] Q. Nguyen, A. Nguyen, V. D. Nguyen, T. Nguyen-Le, and K. Nguyen-An, “Detect abnormal behaviours in ethereum smart contracts using attack vectors,” in FDSE, ser. Lecture Notes in Computer Science, vol. 11814, 2019, pp. 485–505.
[9] T. Abdelaziz and A. Hobor, “Smart learning to find dumb contracts,” in USENIX Security, 2023, pp. 1775–1792.
[10] P. Qian, Z. Liu, Y. Yin, and Q. He, “Cross-modality mutual learning for enhancing smart contract vulnerability detection on bytecode,” in WWW, 2023, pp. 2220–2229.
[11] H. Guo, Y. Chen, X. Chen, Y. Huang, and Z. Zheng, “Smart contract code repair recommendation based on reinforcement learning and multi-metric optimization,” ACM Trans. Softw. Eng. Methodol., vol. 33, no. 4, pp. 106:1–106:31, 2024.
[12] Y. Chen, Z. Sun, Z. Gong, and D. Hao, “Improving smart contract security with contrastive learning-based vulnerability detection,” in ICSE, 2024, pp. 156:1–156:11.
[13] G. Sun, Y. Zhuang, S. Zhang, X. Feng, Z. Liu, and L. Zhang, “Mtvhunter: Smart contracts vulnerability detection based on multi-teacher knowledge translation,” CoRR, vol. abs/2502.16955, 2025.
[14] T. Durieux, J. F. Ferreira, R. Abreu, and P. Cruz, “Empirical review of automated analysis tools on 47,587 ethereum smart contracts,” in ICSE, 2020, pp. 530–541.
[15] C. Sendner, L. Petzi, J. Stang, and A. Dmitrienko, “Large-scale study of vulnerability scanners for ethereum smart contracts,” in SP, 2024, pp. 2273–2290.
[16] H. Wang, R. Xiao, Y. Li, L. Feng, G. Niu, G. Chen, and J. Zhao, “Pico+: Contrastive label disambiguation for robust partial label learning,” IEEE Trans. Pattern Anal. Mach. Intell., vol. 46, no. 5, pp. 3183–3198, 2024.
[17] W. Xuan, S. Zhao, Y. Yao, J. Liu, T. Liu, Y. Chen, B. Du, and D. Tao, “Pnt-edge: Towards robust edge detection with noisy labels by learning pixel-level noise transitions,” in ACM MM, 2023, pp. 1924–1932.
[18] Z. Wang, T. Zhang, S. Xia, L. Lin, and G. Wang, “Gb rain: Combating textual label noise by granular-ball based robust training,” in ICMR, 2024, pp. 357–365.
[19] Z. Wang, J. Li, S. Xia, L. Lin, and G. Wang, “Text adversarial defense via granular-ball sample enhancement,” in ICMR, 2024, pp. 348–356.
[20] M. Li, C. Li, S. Li, Y. Wu, B. Zhang, and Y. Wen, “ACGVD: vulnerability detection based on comprehensive graph via graph neural network with attention,” in ICICS, ser. Lecture Notes in Computer Science, vol. 12918, 2021, pp. 243–259.
[21] V. Nguyen, D. Q. Nguyen, V. Nguyen, T. Le, Q. H. Tran, and D. Phung, “Regvd: Revisiting graph neural networks for vulnerability detection,” in ICSE, 2022, pp. 178–182.
[22] L. Luu, D. Chu, H. Olickel, P. Saxena, and A. Hobor, “Making smart contracts smarter,” in SIGSAC, 2016, pp. 254–269.
[23] J. Feist, G. Grieco, and A. Groce, “Slither: a static analysis framework for smart contracts,” in WETSEB@ICSE.
[24] A. Permenev, D. Dimitrov, P. Tsankov, D. Drachsler-Cohen, and M. T. Vechev, “Verx: Safety verification of smart contracts,” in SP. IEEE, 2020, pp. 1661–1677.
[25] Q. Zhang, Y. Wang, J. Li, and S. Ma, “Ethploit: From fuzzing to efficient exploit generation against smart contracts,” in SANER. IEEE, 2020, pp. 116–126.
[26] Z. Liu, P. Qian, X. Wang, L. Zhu, Q. He, and S. Ji, “Smart contract vulnerability detection: From pure neural network to interpretable graph feature and expert pattern fusion,” in IJCAI, 2021, pp. 2751–2759.
[27] X. Nie, N. Li, K. Wang, S. Wang, X. Luo, and H. Wang, “Understanding and tackling label errors in deep learning-based vulnerability detection (experience paper),” in ISSTA, 2023, pp. 52–63.
[28] S. Tikhomirov, E. Voskresenskaya, I. Ivanitskiy, R. Takhaviev, E. Marchenko, and Y. Alexandrov, “Smartcheck: Static analysis of ethereum smart contracts,” in WETSEB@ICSE, 2018, pp. 9–16.
[29] P. Tsankov, A. M. Dan, D. Drachsler-Cohen, A. Gervais, F. Bünzli, and M. T. Vechev, “Securify: Practical security analysis of smart contracts,” in ACM SIGSAC, 2018, pp. 67–82.
[30] C. Schneidewind, I. Grishchenko, M. Scherer, and M. Maffei, “ethor: Practical and provably sound static analysis of ethereum smart contracts,” in CCS. ACM, 2020, pp. 621–640.
[31] Y. Zhuang, Z. Liu, P. Qian, Q. Liu, X. Wang, and Q. He, “Smart contract vulnerability detection using graph neural network,” in IJCAI, 2020, pp. 3283–3290.
[32] C. Sendner, H. Chen, H. Fereidooni, L. Petzi, J. König, J. Stang, A. Dmitrienko, A. Sadeghi, and F. Koushanfar, “Smarter contracts: Detecting vulnerabilities in smart contracts with deep transfer learning,” in NDSS, 2023.
[33] C. Jiang, X. Liu, S. Wang, J. Liu, and Y. Zhang, “EFEVD: enhanced feature extraction for smart contract vulnerability detection,” in IJCAI, 2024, pp. 4246–4254.
[34] P. T. Duy, N. H. Khoa, N. H. Quyen, L. C. Trinh, V. T. Kien, T. M. Hoang, and V. Pham, “Vulnsense: efficient vulnerability detection in ethereum smart contracts by multimodal learning with graph neural network and language model,” Int. J. Inf. Sec., vol. 24, no. 1, p. 48, 2025.
[35] L. Yu, J. Lu, X. Liu, L. Yang, F. Zhang, and J. Ma, “Pscvfinder: A prompt-tuning based framework for smart contract vulnerability detection,” in ISSRE, 2023, pp. 556–567.
[36] H. Ding, Y. Liu, X. Piao, H. Song, and Z. Ji, “Smartguard: An llm-enhanced framework for smart contract vulnerability detection,” Expert Syst. Appl., vol. 269, p. 126479, 2025.
[37] Z. Li, X. Li, W. Li, and X. Wang, “SCALM: detecting bad practices in smart contracts through llms,” CoRR, vol. abs/2502.04347, 2025.
[38] L. Yu, Z. Huang, H. Yuan, S. Cheng, L. Yang, F. Zhang, C. Shen, J. Ma, J. Zhang, J. Lu, and C. Zuo, “Smart-llama-dpo: Reinforced large language model for explainable smart contract vulnerability detection,” Proc. ACM Softw. Eng., vol. 2, no. ISSTA, pp. 182–205, 2025.
[39] Z. Li, D. Zou, S. Xu, H. Jin, Y. Zhu, and Z. Chen, “Sysevr: A framework for using deep learning to detect software vulnerabilities,” IEEE Trans. Dependable Secur. Comput., vol. 19, no. 4, pp.
2244–2258, 2022. [40] S. Chakraborty , R. Krishna, Y . Ding, and B. Ray , “Deep learning based vulnerability detection: Are we there yet?” IEEE T rans. Softwar e Eng. , vol. 48, no. 9, pp. 3280–3296, 2022. [41] Y . Li, S. W ang, and T . N. Nguyen, “V ulnerability detection with fine- grained interpretations, ” in ESEC/FSE , 2021, pp. 292–303. [42] M. Fu and C. T antithamthavorn, “Linevul: A transformer-based line- lev el vulnerability prediction, ” in MSR , 2022, pp. 608–620. JOURNAL OF L A T E X CLASS FILES, VOL. 18, NO. 9, SEPTEMBER 2020 13 [43] R. W ang, S. Xu, Y . Tian, X. Ji, X. Sun, and S. Jiang, “SCL-CVD: supervised contrastive learning for code vulnerability detection via graphcodebert, ” Comput. Secur . , vol. 145, p. 103994, 2024. [44] Y . Mirsky , G. Macon, M. D. Brown, C. Y agemann, M. Pruett, E. Down- ing, J. S. Mertoguno, and W . Lee, “V ulchecker: Graph-based vulner- ability localization in source code, ” in USENIX Security . USENIX Association, 2023, pp. 6557–6574. [45] Q. W ang, Z. Li, H. Liang, X. Pan, H. Li, T . Li, X. Li, C. Li, and S. Guo, “Graph confident learning for software vulnerability detection, ” Eng. Appl. Artif. Intell. , vol. 133, p. 108296, 2024. [46] Y . W ang, X. Ma, Z. Chen, Y . Luo, J. Y i, and J. Bailey , “Symmetric cross entropy for robust learning with noisy labels, ” in ICCV , 2019, pp. 322–330. [47] T . D. Nguyen, L. H. Pham, J. Sun, Y . Lin, and Q. T . Minh, “sfuzz: an efficient adaptive fuzzer for solidity smart contracts, ” in ICSE , 2020, pp. 778–788. [48] C. F . T orres, J. Sch ¨ utte, and R. State, “Osiris: Hunting for inte ger bugs in ethereum smart contracts, ” in ACSA C . ACM, 2018, pp. 664–676. [49] B. Mueller , “ A framework for bug hunting on the ethereum blockchain, ” ConsenSys/mythril, 2017. [50] T . N. Kipf and M. W elling, “Semi-supervised classification with graph con volutional networks, ” in ICLR , 2017. [51] T . W ang, X. Zhao, and J. 
Zhang, “Tmf-net: Multimodal smart contract vulnerability detection based on multiscale transformer fusion, ” Inf. Fusion , vol. 122, p. 103189, 2025. [52] X. Chen and K. He, “Exploring simple siamese representation learning, ” in CVPR , 2021, pp. 15 750–15 758. Zeli W ang recei ved the PhD de gree from Huazhong Univ ersity of Science and T echnology (HUST), in 2022. She is now a lecturer at the College of Com- puter Science and T echnology , Chongqing Uni ver- sity of Posts and T elecommunications, Chongqing, China. Her current research interests include blockchain smart contract security and the security and robustness of artificial intelligence. Qingxuan Y ang is currently pursuing an M.E. de- gree at Chongqing University of Posts and T elecom- munications. His main research direction focuses on smart contract security and deep learning. Shuyin Xia receiv ed his B.S. degree and M.S. de- gree in computer science from Chongqing Uni versity of T echnology . He received his Ph.D. degree from Chongqing University . He is currently a professor at the School of Artificial Intelligence, Chongqing Univ ersity of Posts and T elecommunications. He is also the executi ve deputy director of the Big Data and Network Security Joint Lab of CQUPT . His research interests include classifiers and granular computing. Y ueming W u recei ved the PhD degree in the School of Cyber Science and Engineering from the Huazhong University of Science and T echnology , W uhan, China, in 2021. He is currently an asso- ciate professor in the School of Cyber Science and Engineering, Huazhong University of Science and T echnology , W uhan, China. His primary research interests lie in malware analysis and vulnerability analysis. Bo Liu recei ved the M.S. degree in computer science and engineering from Zhengzhou University and the Ph.D. degree in computer architecture from the Huazhong University of Science and T echnology . She is currently a Lecturer with Zhengzhou Uni ver- sity . 
Her research interests focus on deep learning, AI security , and AI accelerator design. She has served as a Revie wer for top conferences or journals, such as IEEE TRANSACTIONS ON P ARALLEL AND DISTRIBUTED SYSTEMS and ACM T rans- actions on Architecture and Code Optimization. Longlong Lin receiv ed his Ph.D. degree from Huazhong University of Science and T echnology (HUST), W uhan, in 2022. He is currently an asso- ciate professor in the College of Computer and Infor- mation Science, Southwest University , Chongqing. His current research interests include graph cluster- ing and graph-based machine learning.
