TMTE: Effective Multimodal Graph Learning with Task-aware Modality and Topology Co-evolution

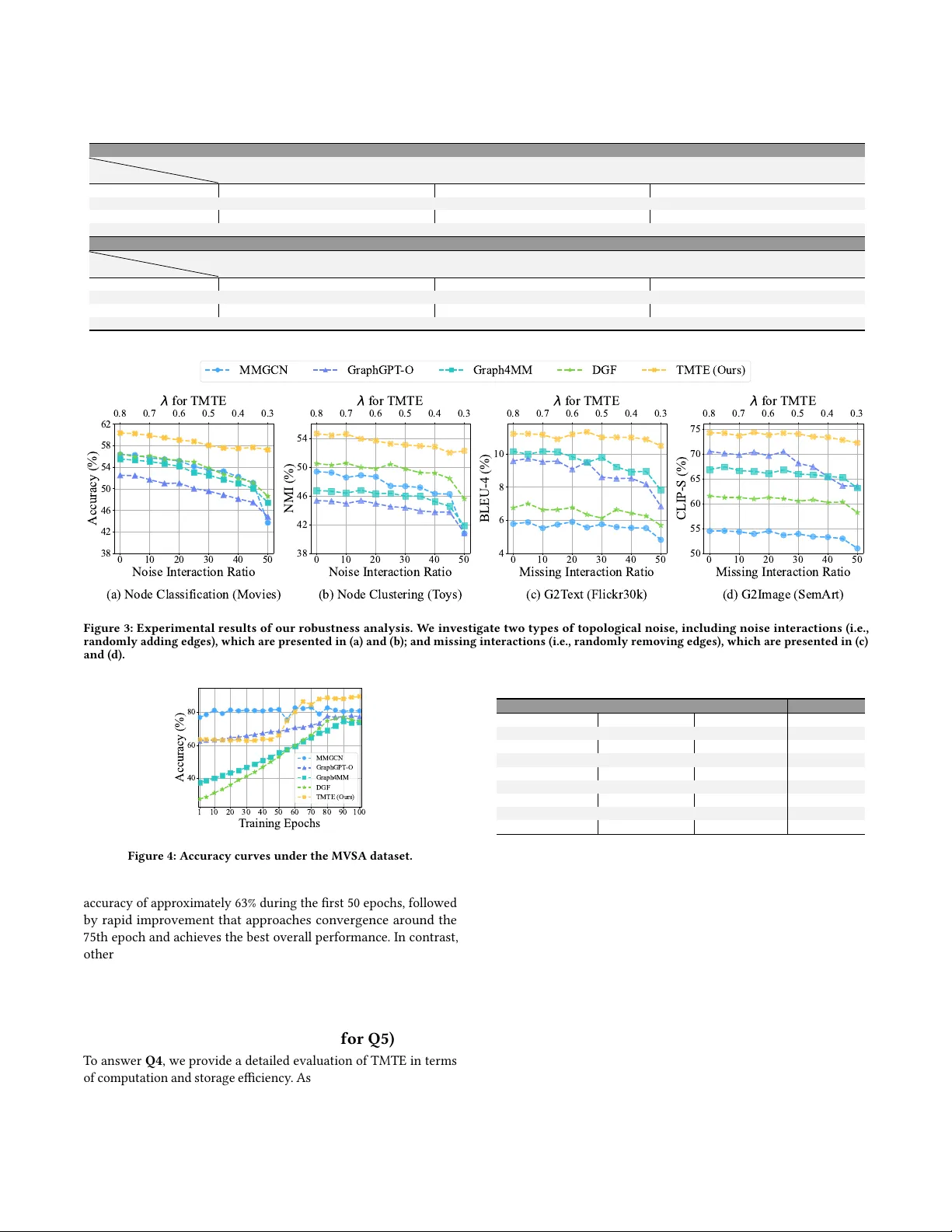

Multimodal-attributed graphs (MAGs) are a fundamental data structure for multimodal graph learning (MGL), enabling both graph-centric and modality-centric tasks. However, our empirical analysis reveals inherent topology quality limitations in real-wo…
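To make the MAG notion concrete, here is a minimal sketch of the data structure. It assumes that each node carries one feature vector per modality (hypothetically `text` and `image`) and that edges are undirected. The class name `MultimodalAttributedGraph`, the concatenation-based fusion and the unweighted mean aggregation are illustrative choices. They are not the TMTE method and not any library's API.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class MultimodalAttributedGraph:
    """Hypothetical minimal MAG container: one feature vector per node per modality."""
    num_nodes: int
    # Modality name -> per-node feature vectors, e.g. {"text": [...], "image": [...]}.
    features: Dict[str, List[List[float]]]
    # Undirected edges as (u, v) node-index pairs.
    edges: List[Tuple[int, int]] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Every modality must provide exactly one feature vector per node.
        for name, feats in self.features.items():
            if len(feats) != self.num_nodes:
                raise ValueError(
                    f"modality {name!r} has {len(feats)} rows, expected {self.num_nodes}"
                )

    def neighbors(self, v: int) -> List[int]:
        # Edges are treated as undirected, so check both endpoints.
        out: List[int] = []
        for a, b in self.edges:
            if a == v:
                out.append(b)
            elif b == v:
                out.append(a)
        return out

    def fused_feature(self, v: int) -> List[float]:
        # Assumed early fusion: concatenate modality vectors in sorted modality-name order.
        vec: List[float] = []
        for name in sorted(self.features):
            vec.extend(self.features[name][v])
        return vec

    def mean_aggregate(self, v: int) -> List[float]:
        # One unweighted message-passing step: average fused features of v and its neighbors.
        group = [v] + self.neighbors(v)
        fused = [self.fused_feature(u) for u in group]
        dim = len(fused[0])
        return [sum(f[i] for f in fused) / len(fused) for i in range(dim)]


g = MultimodalAttributedGraph(
    num_nodes=3,
    features={
        "text": [[1.0], [3.0], [5.0]],
        "image": [[0.0, 2.0], [2.0, 2.0], [4.0, 2.0]],
    },
    edges=[(0, 1), (1, 2)],
)
print(g.fused_feature(0))   # [0.0, 2.0, 1.0]
print(g.mean_aggregate(1))  # [2.0, 2.0, 3.0]
```

In this toy graph, the aggregation step mixes both modalities through the topology. This is why the quality of the edges, which the abstract flags as a limitation in real-world MAGs, directly affects what a graph model sees.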

Authors: Yinlin Zhu, Xunkai Li, Di Wu