On the role of symmetry for staircase mechanisms in local differential privacy efficiency across different privacy regimes

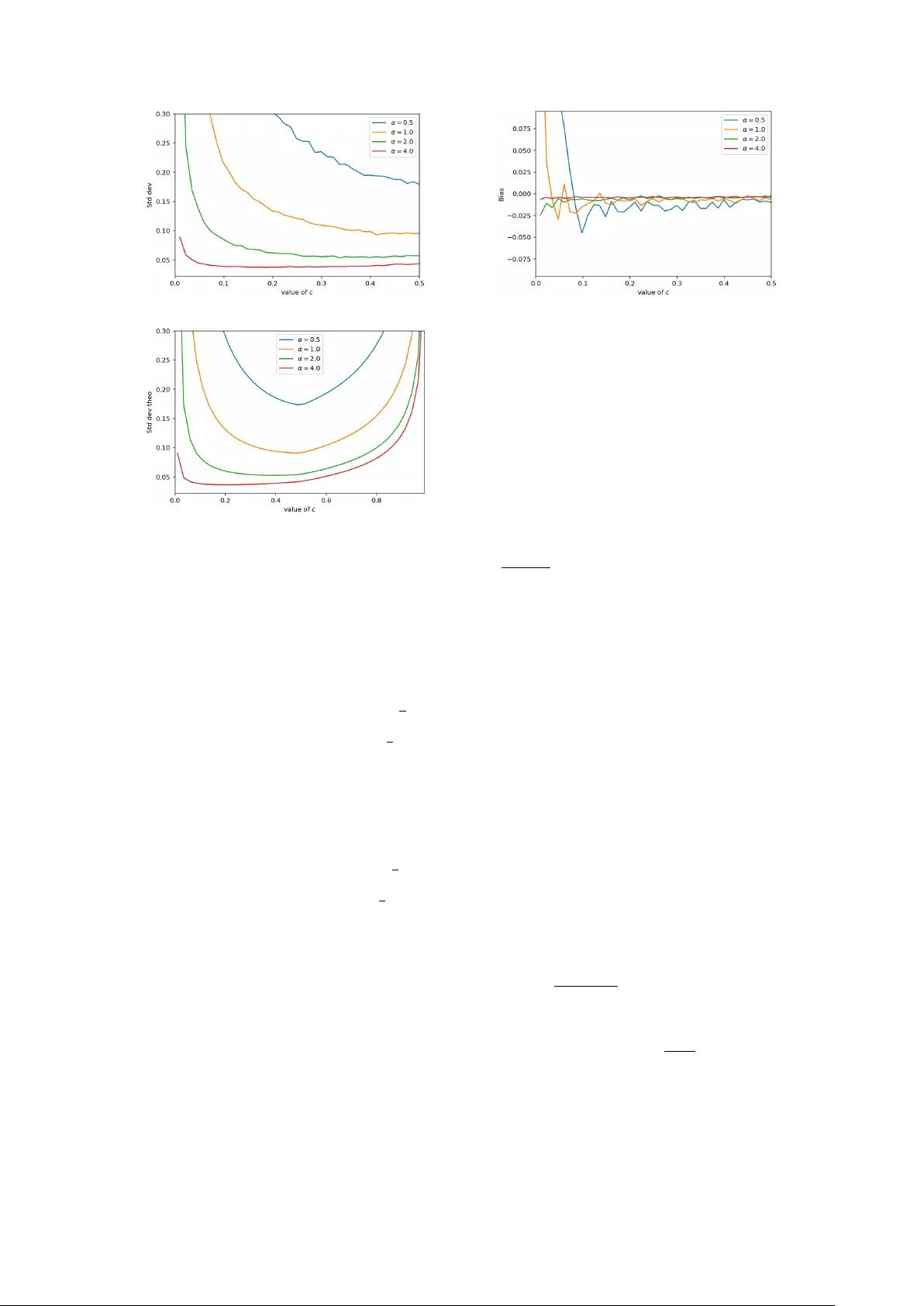

We investigate the structural foundations of statistical efficiency under $\alpha$-local differential privacy, with a focus on maximizing Fisher information. Building on the role of continuous staircase mechanisms, we identify a fundamental symmetry regar…

Authors: Chiara Amorino, Arnaud Gloter