Zero-shot Vision-Language Reranking for Cross-View Geolocalization


Authors: Yunus Talha Erzurumlu, John E. Anderson, William J. Shuart

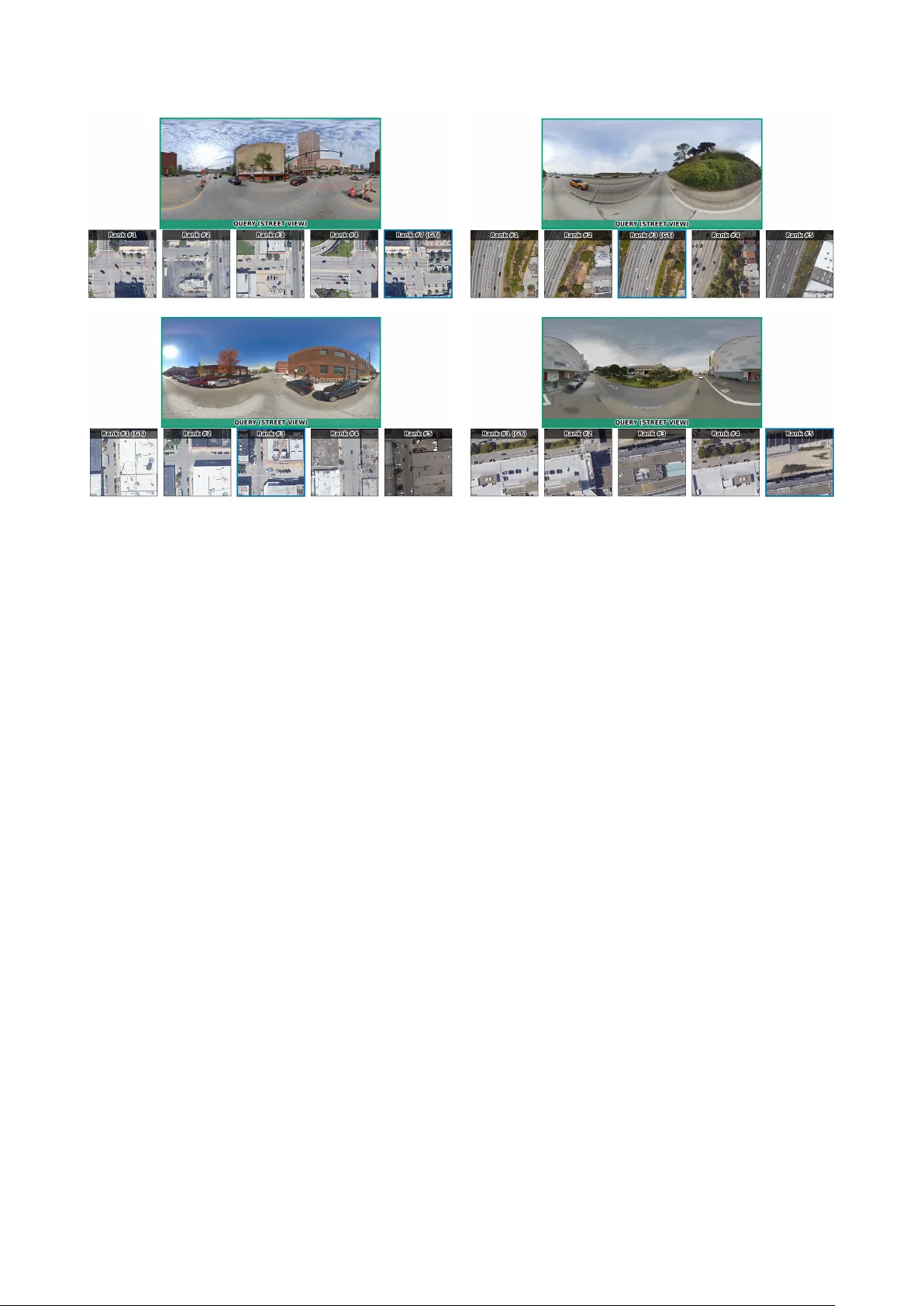

Yunus Talha Erzurumlu¹, John E. Anderson², William J. Shuart², Charles Toth³, Alper Yilmaz³

¹ Dept. of Electrical and Computer Engineering, The Ohio State University, 281 W Lane Ave, Columbus, Ohio, USA, erzurumlu.1@osu.edu
² US Army Corps of Engineers Geospatial Research Lab, Corbin Field Station, Woodford, Virginia, USA, (john.anderson, william.j.shuart)@erdc.dren.mil
³ Dept. of Civil Engineering, The Ohio State University, 281 W Lane Ave, Columbus, Ohio, USA, (toth.2, yilmaz.15)@osu.edu

Keywords: Cross-View Geolocalization, Vision-Language Models (VLMs), Reranking, Retrieval, Aerial-Ground Image Matching

Abstract

Cross-view geolocalization (CVGL) systems, while effective at retrieving a list of relevant candidates (high Recall@k), often fail to identify the single best match (low Top-1 accuracy). This work investigates the use of zero-shot Vision-Language Models (VLMs) as rerankers to address this gap. We propose a two-stage framework: state-of-the-art (SOTA) retrieval followed by VLM reranking. We systematically compare two strategies: (1) Pointwise (scoring candidates individually) and (2) Pairwise (comparing candidates relatively). Experiments on the VIGOR dataset show a clear divergence: every pointwise method either causes a catastrophic drop in performance or leaves it unchanged. In contrast, a pairwise comparison strategy using LLaVA improves Top-1 accuracy over the strong retrieval baseline. Our analysis concludes that these VLMs are poorly calibrated for absolute relevance scoring but are effective at fine-grained relative visual judgment, making pairwise reranking a promising direction for enhancing CVGL precision.

1. Introduction

Cross-view geolocalization (CVGL), the task of determining the geographic location of a ground-level query image by matching it against a database of geo-referenced aerial or satellite images, is a fundamental challenge in computer vision with significant real-world applications. These include autonomous vehicle and drone navigation, augmented reality systems, and location-based services (Lin et al., 2014, Workman et al., 2015). The core difficulty lies in bridging the drastic viewpoint and appearance gap between ground and aerial perspectives, often compounded by variations in season, illumination, and structural changes over time (Zhu et al., 2020). Figure 1 illustrates this challenge.

Current state-of-the-art (SOTA) methods, often based on contrastive image retrieval, have achieved impressive results in retrieving a set of relevant candidates, demonstrating high recall at top-k positions (e.g., Recall@10, Recall@20) (Deuser et al., 2023). However, they frequently struggle to identify the single best match, resulting in relatively low top-1 accuracy. This limitation hinders deployment in scenarios requiring high precision. These models typically rely on learning global image representations that capture coarse-grained similarities but may overlook the fine-grained details and semantic understanding necessary for exact matching.

Recently, Vision-Language Models (VLMs) such as LLaVA (Liu et al., 2023) and Qwen-VL (Bai et al., 2025) have demonstrated remarkable abilities in multimodal understanding and reasoning. These models can process and interpret information from both visual and textual modalities, enabling complex tasks like visual question answering, image captioning, and detailed region description.
Inspired by the success of Large Language Models (LLMs) in reranking tasks within text retrieval (e.g., RankGPT (Sun et al., 2023)), we hypothesize that VLMs can leverage their visual-textual reasoning capabilities to perform fine-grained reranking for cross-view geolocalization.

Figure 1. Example of the cross-view geolocalization challenge. (a) Ground-level query images. (b) Several aerial image candidates, only one of which is the correct match, demonstrating the difficulty caused by viewpoint and appearance differences.

In this work, we propose a two-stage framework (visualized in Figure 2) to improve top-1 geolocalization accuracy. First, a SOTA cross-view retrieval model generates an initial list of top-20 candidate aerial images for a given ground query. Second, we employ a VLM to rerank these candidates. We systematically investigate different VLM prompting strategies for reranking:

• Pointwise: Evaluating each aerial candidate independently against the ground query using direct score prediction, binary (Yes/No) relevance prediction, or Likert-scale ratings. We also explore variants incorporating explicit reasoning prompts.
• Pairwise: Comparing two aerial candidates at a time to determine which better matches the ground query, then using these relative judgments to sort the full list.

We evaluate these strategies using LLaVA-1.5-7b and Qwen2.5VL-7b on the VIGOR dataset (Zhu et al., 2020). Our key finding is that the pairwise comparison strategy, when implemented with LLaVA, outperforms both the pointwise methods and the strong retrieval baseline, achieving a top-1 accuracy of 64.80% compared to the baseline's 61.20%. This suggests that VLMs may be more effective at making relative fine-grained visual judgments than at assigning absolute relevance scores in this challenging cross-view setting.
Importantly, our focus is deliberately on the zero-shot setting: rather than adapting VLMs to the task through additional training, we ask whether off-the-shelf VLMs already possess enough cross-view reasoning ability to improve retrieval outputs through prompting alone.

Figure 2. The proposed two-stage framework. Stage 1 uses a SOTA retrieval model to get the top-K candidates. Stage 2 uses a VLM reranker to produce the final ranked list.

2. Related Works

2.1 Cross-View Geolocalization

The CVGL problem was initially introduced by Lin et al. (2014) and Workman et al. (2015). Recent advances have been dominated by deep learning. Convolutional Neural Networks (CNNs) (Workman et al., 2015, Shi et al., 2019, Zhu et al., 2020) and later Transformers (Zhu et al., 2022, Zhu et al., 2023) have been employed to learn feature representations that are robust to viewpoint changes. Siamese architectures (Koch, 2015) are commonly used to learn a shared embedding space in which ground and aerial images of the same location lie close together, typically trained with contrastive or triplet losses (van den Oord et al., 2018, Hoffer and Ailon, 2014). Attention mechanisms (Zhu et al., 2022) and feature aggregation techniques (Arandjelovic et al., 2015, Hu et al., 2018, Shi et al., 2019, Zhu et al., 2023) have further improved performance by focusing on salient regions. While these methods achieve high Recall@k for k > 5, Recall@1 remains a challenge (Zhu et al., 2020). Furthermore, reasoning-based frameworks such as GeoReasoner (Li et al., 2024) leverage street-view reasoning to refine candidate selection, and alignment-tuning approaches such as AddressVLM (Xu et al., 2025) enable fine-grained address-level localization. Zero-shot and open-domain methods such as StreetCLIP (Haas et al., 2023) reduce dependence on large paired datasets by transferring generalized embeddings across diverse geographic settings.
Our work focuses not on improving the initial retrieval but on reranking the outputs of such SOTA models, taking advantage of their already strong Recall@k for k > 5.

2.2 Vision-Language Models (VLMs)

VLMs combine vision encoders like ViT (Dosovitskiy et al., 2020) with LLMs like LLaMA (Touvron et al., 2023) to perform joint visual and textual understanding. Models like CLIP (Radford et al., 2021) pioneered aligning images and text in a shared embedding space. More recent models like LLaVA (Liu et al., 2023) and Qwen-VL (Bai et al., 2025) adopt an instruction-following paradigm: by connecting a vision encoder to an LLM via a projection layer and fine-tuning on instruction datasets, they can perform diverse multimodal tasks based on natural language prompts. Their ability to understand spatial relationships, object attributes, and scene semantics alongside textual reasoning makes them promising candidates for the fine-grained analysis required in geolocalization reranking.

2.3 LLMs/VLMs for Reranking

The idea of using large generative models for reranking has gained traction, primarily in text retrieval. RankGPT (Sun et al., 2023) and similar approaches (Chen et al., 2024) demonstrated that LLMs can effectively rerank document lists using pointwise, pairwise, or listwise prompting strategies, often surpassing traditional learning-to-rank methods. Extending this idea to the visual domain is less explored. Our work applies this reranking concept with VLMs to the specific, challenging task of cross-view geolocalization, focusing on comparing different prompting strategies in this multimodal context.

3. Methodology

We propose a two-stage framework for cross-view geolocalization, leveraging a SOTA retrieval model for candidate generation and a VLM for fine-grained reranking, as illustrated in Figure 2.
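To make the division of labor between the two stages concrete, the following is a minimal sketch, not the authors' implementation. It assumes Stage 1 can be modeled as cosine-similarity search over precomputed query and aerial embeddings (typical for contrastive retrievers, but an assumption here), and it treats Stage 2 as any function that reorders the candidate indices. All function names and toy vectors are hypothetical. A Recall@k helper is included because that is how reranked lists are scored in the experiments.

```python
import math
from typing import Callable, List, Sequence

Vector = Sequence[float]


def cosine(u: Vector, v: Vector) -> float:
    """Cosine similarity between two embedding vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def retrieve_top_k(query: Vector, database: Sequence[Vector], k: int = 20) -> List[int]:
    """Stage 1 (assumed): indices of the k aerial embeddings most similar to the query."""
    scores = [cosine(query, emb) for emb in database]
    order = sorted(range(len(database)), key=lambda i: scores[i], reverse=True)
    return order[:k]


def two_stage(
    query: Vector,
    database: Sequence[Vector],
    rerank: Callable[[List[int]], List[int]],
    k: int = 20,
) -> List[int]:
    """Stage 1 retrieval, then Stage 2 reranking restricted to the top-k candidates."""
    initial = retrieve_top_k(query, database, k)
    reranked = rerank(list(initial))
    # A reranker may only reorder L_initial; it cannot recover a match missing from it.
    if sorted(reranked) != sorted(initial):
        raise ValueError("reranker must return a permutation of the candidate list")
    return reranked


def recall_at_k(ranked_lists: Sequence[Sequence[int]], true_ids: Sequence[int], k: int) -> float:
    """Percentage of queries whose true match appears within the top k positions."""
    hits = sum(1 for ranked, true_id in zip(ranked_lists, true_ids) if true_id in ranked[:k])
    return 100.0 * hits / len(true_ids)


# Toy usage with hypothetical 2-D embeddings; the identity reranker keeps Stage 1 order.
aerial = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1], [-1.0, 0.0]]
print(two_stage([1.0, 0.0], aerial, rerank=lambda cands: cands, k=3))  # [0, 2, 1]
```

Passing the reranker in as a function keeps the pointwise and pairwise strategies interchangeable, and the permutation check makes explicit the limitation that reranking can only reorder the top-K list.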
3.1 Stage 1: Candidate Retrieval

Given a ground-level query image $I_g$, we first employ a pre-trained SOTA cross-view geolocalization model (e.g., based on Deuser et al. (2023)) to retrieve the top-K most similar aerial/satellite images $\{I_{a,1}, I_{a,2}, \ldots, I_{a,K}\}$ from a large, georeferenced database. In our experiments, we use K = 20. This choice is motivated by the strength of the first-stage retriever: the baseline model already achieves Recall@20 above 90%, so the correct match is typically present in the candidate pool, while the pool stays small enough to keep VLM inference computationally tractable. Our goal in this work is therefore not to perform an exhaustive VLM-based search over the full satellite database, which would be prohibitively expensive, but to study whether a zero-shot VLM can improve precision within a high-recall candidate set. This also highlights a limitation of the proposed framework: if the correct match is absent from the initial top-K list, the reranker cannot recover it. Let this initial ranked list be $L_{\text{initial}}$.

3.2 Stage 2: VLM Reranking

The core of our approach lies in using a VLM to rerank the initial candidate list $L_{\text{initial}}$ into a refined list $L_{\text{reranked}}$, aiming to place the true match at the top rank. We use VLMs capable of processing both the ground query image $I_g$ and each aerial candidate image $I_{a,i}$. We explored several prompting strategies.

3.2.1 Pointwise Strategies

These methods evaluate each aerial candidate $I_{a,i}$ independently with respect to the ground query $I_g$.

• Direct Prediction: The VLM is prompted to output a score on a 0-100 scale. Example prompt: "Assess the similarity ... Score:" We take this output directly as the final score and rank candidates by it.
• Likert Score: The VLM is prompted to output a similarity score on a Likert scale (1-5). Example prompt: "Assess the similarity ... Score:" We compute an expected score from the VLM's output probability distribution over the tokens '1', '2', '3', '4', '5' and rank candidates by it. Given the VLM's output probability $p_{i,k} = P(s = k \mid I_g, I_{a,i})$ over the tokens $k \in \{1, 2, 3, 4, 5\}$ for aerial candidate $i$, the expected score is $\hat{s}_i = \sum_{k=1}^{5} k \, p_{i,k}$.
• Yes/No Prediction: The VLM is prompted for a binary relevance judgment. Example prompt: "Do the ground-level image and the aerial image show the same location?... Answer:" Candidates are ranked by the normalized probability of the 'Yes' token, $\frac{P(\text{'Yes'})}{P(\text{'Yes'}) + P(\text{'No'})}$.
• Reasoning + Yes/No: We first ask the VLM to reason about the match before providing a Yes/No answer. Example prompt: "Compare the ground-level and aerial images... Reasoning and Answer:" Ranking is based on the final $P(\text{'Yes'})$ as above.

3.2.2 Pairwise Strategies

These methods compare two aerial candidates, $I_{a,i}$ and $I_{a,j}$, at a time against the ground query $I_g$.

• Pairwise Comparison: The VLM is prompted to choose which of two aerial candidates is the better match for the ground query. In other words, for a fixed query $I_g$, the VLM acts as a comparison function $C(I_g, I_{a,i}, I_{a,j})$ that returns the preferred candidate.

We instantiate reranking as a comparison-based sorting procedure over the top-K candidate list, where the VLM provides the outcome of each pairwise comparison and the sorting algorithm determines the final order. In our implementation, we use merge sort, which requires $O(K \log K)$ pairwise VLM calls. We chose this formulation because our goal is to produce a full reranked list rather than only identify a single winner.
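The merge-sort reranking described above can be sketched as follows. This is a minimal illustration, not the authors' code: `vlm_prefers` is a hypothetical stand-in for one prompted VLM call that returns 1 or 2 (mirroring the pairwise prompt in Appendix A.1), and candidates are placeholder identifiers. The wrapper counts judge calls so the comparison budget is visible.

```python
from typing import Callable, List, Tuple, TypeVar

T = TypeVar("T")


def merge_sort(items: List[T], prefer_first: Callable[[T, T], bool]) -> List[T]:
    """Top-down merge sort; prefer_first(a, b) is True when a should rank above b."""
    if len(items) <= 1:
        return list(items)
    mid = len(items) // 2
    left = merge_sort(items[:mid], prefer_first)
    right = merge_sort(items[mid:], prefer_first)
    merged: List[T] = []
    i = j = 0
    while i < len(left) and j < len(right):
        if prefer_first(left[i], right[j]):
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    # One side is exhausted; the remainder of the other side needs no more comparisons.
    merged.extend(left[i:])
    merged.extend(right[j:])
    return merged


def rerank_pairwise(query, candidates: List[T], vlm_prefers) -> Tuple[List[T], int]:
    """Sort candidates with a pairwise judge and report how many judge calls were made.

    vlm_prefers(query, a, b) is hypothetical: it should return 1 if aerial image a
    is the better match for the query, and 2 otherwise.
    """
    calls = 0

    def prefer_first(a: T, b: T) -> bool:
        nonlocal calls
        calls += 1
        return vlm_prefers(query, a, b) == 1

    ranked = merge_sort(candidates, prefer_first)
    return ranked, calls


def closer_judge(query: int, a: int, b: int) -> int:
    """Toy judge for illustration only: prefers the value numerically closer to the query."""
    return 1 if abs(a - query) <= abs(b - query) else 2


ranked, calls = rerank_pairwise(7, list(range(20)), closer_judge)
print(ranked[0], calls <= 69)  # 7 True
```

For K = 20, a top-down merge sort makes at most 69 comparisons (K⌈log₂K⌉ − 2^⌈log₂K⌉ + 1), versus K(K − 1)/2 = 190 for comparing every pair. Because a real VLM judge need not be transitive or order-consistent, the sort still terminates and returns a permutation of the candidates, but the final order then depends on which pairs happen to be compared.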
While a tournament-style elimination scheme would require only K − 1 comparisons to select the top candidate, it does not directly provide a reliable ordering of the remaining candidates, which is needed to evaluate Recall@3 and Recall@5. In addition, full sorting is less dependent on any single early decision. Exploring lower-budget pairwise schemes such as elimination brackets is an interesting direction for future work.

3.2.3 VLMs Used

We experimented with two publicly available VLMs: LLaVA-1.5-7b (Liu et al., 2023) and Qwen2.5VL-7b (Bai et al., 2025), referred to as Qwen-VL in this paper.

4. Experiments

4.1 Dataset and Setup

We evaluate our approach on the VIGOR dataset (Zhu et al., 2020), a widely used and challenging benchmark for cross-view geolocalization. We follow standard protocols and use the provided test split for the CROSS-AREA task, focusing on matching visible ground queries to visible satellite images. We randomly sampled 500 queries for efficiency. The initial top-20 candidates (K = 20) for each query were generated using the SOTA retrieval model Sample4Geo (Deuser et al., 2023), which provides a strong baseline.

4.2 Evaluation Metrics

We report standard retrieval metrics: Top-1 Accuracy (Recall@1), Recall@3, and Recall@5. Top-1 Accuracy is our primary metric, reflecting the ability to pinpoint the exact match. Recall@k measures the percentage of queries for which the correct aerial image is ranked within the top k positions.

Table 1. Performance comparison of VLM reranking strategies on the VIGOR dataset (500 test queries). Baseline performance is from the initial SOTA retrieval model. Results are shown as Recall@k (%). '-' indicates a metric that is not applicable or not computed. The best result for each metric is in bold.
| Method | R@1 (%) | R@3 (%) | R@5 (%) |
|---|---|---|---|
| Baseline (Retrieval Only) | 61.20 | 73.80 | 82.40 |
| Pointwise Methods | | | |
| LLaVA Direct | 61.20 | 73.80 | 82.40 |
| Qwen Direct | 25.00 | 49.00 | 65.20 |
| LLaVA Likert | 4.80 | 15.20 | 26.20 |
| Qwen Likert | 14.80 | 34.80 | 50.80 |
| LLaVA Yes/No | 15.20 | 33.40 | 46.00 |
| Qwen Yes/No | 15.60 | 36.20 | 53.20 |
| LLaVA Reason Yes/No | 8.00 | 21.60 | 34.00 |
| Qwen Reason Yes/No | 12.80 | 31.00 | 42.20 |
| Pairwise Methods | | | |
| LLaVA Pairwise | **64.80** | **84.80** | **89.80** |
| Qwen Pairwise | 30.40 | 55.00 | 68.40 |

4.3 Implementation Details

Experiments were run using the official implementations and pre-trained weights for LLaVA-1.5-7b and Qwen2.5VL-7b. We used NVIDIA A6000 GPUs for VLM inference. For pairwise sorting, we used merge sort (Section 3.2.2) with the VLM's comparison output as the comparator. Probabilities for Yes/No and Likert scoring were extracted from the VLM's logits for the target tokens.

Figure 3. Analysis of pointwise reranking failures. Plots (a–b) show score distributions for direct score prediction, (c–d) for Likert-scale prediction, (e–f) for Yes/No prediction, and (g–h) for Yes/No prediction with explicit reasoning. In all cases, the distributions for correct and incorrect candidates strongly overlap, indicating that the VLM-assigned scores (whether direct, Likert, or Yes/No) do not provide a clear, separable signal to distinguish the true match from other plausible-but-incorrect candidates.

4.4 The Ineffectiveness of Pointwise Reranking

Our results demonstrate a clear and consistent failure of all pointwise reranking strategies. As shown in Table 1, every Likert, Yes/No, and reasoning-based pointwise variant performs far worse than the retrieval baseline, with Top-1 accuracy collapsing to between 4.80% (LLaVA Likert) and 15.60% (Qwen Yes/No). Direct score prediction fares no better: Qwen Direct drops to 25.00%, and LLaVA Direct leaves the baseline ranking unchanged (61.20%).

The score distribution plots in Figure 3 explain this failure: these VLMs appear miscalibrated for assigning absolute relevance scores in this challenging cross-view task.
The models exhibit two distinct failure modes:

• LLaVA: Lack of Discrimination. LLaVA consistently fails to produce a useful signal. Across all pointwise experiments (Likert, Yes/No, etc.), its score distributions for correct and incorrect candidates are nearly identical. This is taken to an extreme in the direct scoring method (Figure 3a), where LLaVA assigns the same high score to virtually all candidates, so the ranking is identical to the baseline and the metrics do not change.
• Qwen-VL: Signal Drowned by Variance. In contrast, Qwen-VL does show a clear tendency to assign higher average scores to correct matches (Figure 3b). However, this potential signal is rendered ineffective by extremely high variance: many incorrect candidates also receive high scores, making it impossible to reliably rank the true match at the top.

Furthermore, we found that adding an explicit reasoning step (Reason Yes/No) consistently degraded performance for both models (R@1 fell from 15.20% to 8.00% for LLaVA and from 15.60% to 12.80% for Qwen). For Qwen, this prompt exacerbated the variance issue, pushing scores for all candidates even higher and further confusing the ranking. This collective failure strongly suggests that, while VLMs can identify plausible candidates, they are unsuited, at least without further training, to the fine-grained absolute similarity judgments required by pointwise reranking.

4.5 The Success of Pairwise Comparison

In sharp contrast to the pointwise failures, the pairwise comparison strategy yielded markedly different results. The LLaVA Pairwise method achieved the best performance overall, with a Top-1 accuracy of 64.80%. This is a 3.6 percentage-point absolute improvement over the strong 61.20% baseline, corresponding to 324 rather than 306 of the 500 test queries having the correct match at rank 1. Recall@3 (84.80%, up from 73.80%) and Recall@5 (89.80%, up from 82.40%) also improved, indicating a more robust ranking in the top-k positions. However, this modest 3.6-point improvement from LLaVA must be interpreted with caution.
Given LLaVA's near-total inability to discriminate between correct and incorrect candidates in any pointwise setting (Figure 3), its pairwise gain should not be overinterpreted. It is plausible that the model only resolves "easy" comparisons and fails to adjudicate more ambiguous candidates, leading to a small statistical gain.

A more telling, albeit less numerically successful, result comes from Qwen-VL. While its absolute Top-1 accuracy of 30.40% remains low, it is a substantial relative gain over its best pointwise result (25.00%, Qwen Direct). This suggests that Qwen-VL, which already showed a (highly variable) signal for correct candidates in the pointwise tasks, can leverage the comparative prompt structure more effectively. This finding indicates that Qwen-VL's underlying capability is promising for this task and could potentially be harnessed with fine-tuning or more advanced prompt engineering to surpass the baseline.

5. Conclusion

This work investigated the use of zero-shot Vision-Language Models to address the low top-1 accuracy of SOTA cross-view geolocalization systems. We proposed a two-stage framework, using a SOTA model for candidate retrieval and a VLM for subsequent reranking. We systematically compared pointwise (absolute scoring) and pairwise (relative comparison) strategies.

Figure 4. Main recall performance comparison. This plot shows Recall@1, Recall@3, and Recall@5 for the baseline retrieval model and all VLM reranking strategies, sorted by R@1 performance. LLaVA Pairwise (64.80% R@1) is the only method to improve over the Baseline (61.20% R@1). In contrast, all pointwise methods (Direct, Likert, Yes/No) either cause a catastrophic drop in performance or leave it unchanged.

Our findings on the VIGOR dataset are twofold.
First, all pointwise methods failed, and our analysis reveals that these VLMs appear poorly calibrated for assigning absolute similarity scores in this task configuration (Figure 3). Second, the LLaVA Pairwise strategy proved effective, improving Top-1 accuracy from 61.20% to 64.80% and R@5 from 82.40% to 89.80%.

We conclude that the task formulation is critical: while these VLMs fail at absolute scoring, they show a more promising capability for fine-grained relative visual judgment. This suggests that VLM-based pairwise reranking is a viable and promising direction for enhancing the precision of geolocalization systems. Future work should focus on fine-tuning VLMs on a dedicated pairwise cross-view dataset to further harness this capability.

References

Arandjelovic, R., Gronát, P., Torii, A., Pajdla, T., Sivic, J., 2015. NetVLAD: CNN architecture for weakly supervised place recognition. CoRR, abs/1511.07247. http://arxiv.org/abs/1511.07247.
Bai, S., Chen, K., Liu, X., Wang, J., Ge, W., Song, S., Dang, K., Wang, P., Wang, S., Tang, J., Zhong, H., Zhu, Y., Yang, M., Li, Z., Wan, J., Wang, P., Ding, W., Fu, Z., Xu, Y., Ye, J., Zhang, X., Xie, T., Cheng, Z., Zhang, H., Yang, Z., Xu, H., Lin, J., 2025. Qwen2.5-VL Technical Report. ArXiv, abs/2502.13923. https://api.semanticscholar.org/CorpusID:276449796.
Chen, S., Gutiérrez, B. J., Su, Y., 2024. Attention in Large Language Models Yields Efficient Zero-Shot Re-Rankers. ArXiv, abs/2410.02642. https://api.semanticscholar.org/CorpusID:273098593.
Deuser, F., Habel, K., Oswald, N., 2023. Sample4Geo: Hard Negative Sampling For Cross-View Geo-Localisation. 2023 IEEE/CVF International Conference on Computer Vision (ICCV), 16801–16810. https://api.semanticscholar.org/CorpusID:257636648.
Dosovitskiy, A., Beyer, L., Kolesnikov, A., Weissenborn, D., Zhai, X., Unterthiner, T., Dehghani, M., Minderer, M., Heigold, G., Gelly, S., Uszkoreit, J., Houlsby, N., 2020. An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale. ArXiv, abs/2010.11929. https://api.semanticscholar.org/CorpusID:225039882.
Haas, L., Alberti, S., Skreta, M., 2023. Learning Generalized Zero-Shot Learners for Open-Domain Image Geolocalization. ArXiv, abs/2302.00275. https://api.semanticscholar.org/CorpusID:256459449.
Hoffer, E., Ailon, N., 2014. Deep metric learning using triplet network. International Workshop on Similarity-Based Pattern Recognition.
Hu, S., Feng, M., Nguyen, R. M. H., Lee, G. H., 2018. CVM-Net: Cross-view matching network for image-based ground-to-aerial geo-localization. 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition, 7258–7267.
Koch, G. R., 2015. Siamese neural networks for one-shot image recognition.
Li, L., Ye, Y., Jiang, B., Zeng, W., 2024. GeoReasoner: Geo-localization with Reasoning in Street Views using a Large Vision-Language Model. ArXiv, abs/2406.18572. https://api.semanticscholar.org/CorpusID:270764352.
Lin, T.-Y., Belongie, S., Hays, J., 2014. Cross-View Image Geolocalization. 2014 IEEE/CVF Conference on Computer Vision and Pattern Recognition, 891–898.
Liu, H., Li, C., Wu, Q., Lee, Y. J., 2023. Visual Instruction Tuning. ArXiv, abs/2304.08485. https://api.semanticscholar.org/CorpusID:258179774.
Radford, A., Kim, J. W., Hallacy, C., Ramesh, A., Goh, G., Agarwal, S., Sastry, G., Askell, A., Mishkin, P., Clark, J., Krueger, G., Sutskever, I., 2021. Learning transferable visual models from natural language supervision. International Conference on Machine Learning.
Shi, Y., Liu, L., Yu, X., Li, H., 2019. Spatial-aware feature aggregation for image based cross-view geo-localization. Neural Information Processing Systems.
Sun, W., Yan, L., Ma, X., Ren, P., Yin, D., Ren, Z., 2023. Is ChatGPT Good at Search? Investigating Large Language Models as Re-Ranking Agent. ArXiv, abs/2304.09542. https://api.semanticscholar.org/CorpusID:258212638.
Touvron, H., Lavril, T., Izacard, G., Martinet, X., Lachaux, M.-A., Lacroix, T., Rozière, B., Goyal, N., Hambro, E., Azhar, F., Rodriguez, A., Joulin, A., Grave, E., Lample, G., 2023. LLaMA: Open and Efficient Foundation Language Models. ArXiv, abs/2302.13971. https://api.semanticscholar.org/CorpusID:257219404.
van den Oord, A., Li, Y., Vinyals, O., 2018. Representation Learning with Contrastive Predictive Coding. ArXiv, abs/1807.03748. https://api.semanticscholar.org/CorpusID:49670925.
Workman, S., Souvenir, R., Jacobs, N., 2015. Wide-Area Image Geolocalization with Aerial Reference Imagery. 2015 IEEE International Conference on Computer Vision (ICCV), IEEE, Santiago, Chile, 3961–3969.
Xu, S., Zhang, C., Fan, L., Zhou, Y., Fan, B., Xiang, S., Meng, G., Ye, J., 2025. AddressVLM: Cross-view alignment tuning for image address localization using large vision-language models.
Zhu, S., Shah, M., Chen, C., 2022. TransGeo: Transformer Is All You Need for Cross-view Image Geo-localization. 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 1152–1161. https://api.semanticscholar.org/CorpusID:247802213.
Zhu, S., Yang, T., Chen, C., 2020. VIGOR: Cross-View Image Geo-localization beyond One-to-one Retrieval. 2021 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 5316–5325. https://api.semanticscholar.org/CorpusID:227151840.
Zhu, Y., Yang, H., Lu, Y., Huang, Q., 2023. Simple, effective and general: A new backbone for cross-view image geo-localization.

A. Appendix

In this appendix, we provide the prompts used in our experiments and some qualitative results.
A.1 Prompts Used in Experiments

Pointwise: Direct Prompt

You are an expert geospatial analyst. Your task is to determine the best satellite image match for a ground-level query panorama. Here is the ground-level panorama query image: '<GROUNDIMAGE>' Here is a candidate satellite image: '<AERIALIMAGE>' Carefully analyze the ground-level query image and compare it to satellite image. Focus on key cross-view features like road network, building arrangement, and landmarks. Evaluate if the satellite image corresponds to the location shown in the ground-level panorama. Provide a confidence score between 0 (no match) and 100 (perfect match). Respond ONLY with the score.

Pointwise: Yes/No Prompt

You are an expert geospatial analyst. Your task is to determine the best satellite image match for a ground-level query panorama. Here is the ground-level panorama query image: '<GROUNDIMAGE>' Here is a candidate satellite image: '<AERIALIMAGE>' Carefully analyze the ground-level query image and compare it to satellite image. Focus on key cross-view features like road network, building arrangement, and landmarks. Based on this comparison does the satellite image accurately match the location shown in the ground-level panorama? Answer ONLY with the single word 'Yes' or 'No'.

Pointwise: Likert Prompt

You are an expert geospatial analyst. Your task is to determine the best satellite image match for a ground-level query panorama. Here is the ground-level panorama query image: '<GROUNDIMAGE>' Here is a candidate satellite image: '<AERIALIMAGE>' Carefully analyze the ground-level query image and compare it to satellite image. Focus on key cross-view features like road network, building arrangement, and landmarks On a scale of 1 (no match) to 5 (perfect match), how well does the satellite image match the location in the ground-level panorama? Respond ONLY with a single digit (1, 2, 3, 4, or 5).
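The Yes/No and Likert prompts above are scored from the model's next-token probabilities rather than from generated text (Sections 3.2.1 and 4.3). The sketch below shows one way to turn answer-token logits into the two pointwise scores. It is an illustration under stated assumptions, not the authors' code: logits are assumed to be already collected into a dictionary keyed by answer string (how strings such as "Yes" map to token ids, e.g. with or without a leading space, depends on the tokenizer and is not modeled), and the Likert probabilities are assumed to be renormalized over the five digit tokens. For Yes/No, restricting the softmax to the two tokens gives exactly P('Yes') / (P('Yes') + P('No')), because the full-vocabulary normalizer cancels.

```python
import math
from typing import Dict, List, Sequence


def renormalize(logits: Dict[str, float], tokens: Sequence[str]) -> Dict[str, float]:
    """Softmax restricted to `tokens`, i.e. P(t) divided by the total P over those tokens."""
    peak = max(logits[t] for t in tokens)  # subtract the max for numerical stability
    exps = {t: math.exp(logits[t] - peak) for t in tokens}
    total = sum(exps.values())
    return {t: e / total for t, e in exps.items()}


def likert_expected_score(logits: Dict[str, float]) -> float:
    """Expected Likert score: sum over k of k * p_k for the answer tokens '1'..'5'."""
    probs = renormalize(logits, ["1", "2", "3", "4", "5"])
    return sum(int(t) * p for t, p in probs.items())


def yes_score(logits: Dict[str, float]) -> float:
    """Normalized probability of 'Yes' against 'No' from the two answer-token logits."""
    return renormalize(logits, ["Yes", "No"])["Yes"]


def rank_by_score(scores: Dict[str, float]) -> List[str]:
    """Pointwise reranking: sort candidate ids by descending score."""
    return sorted(scores, key=lambda cid: scores[cid], reverse=True)


# Toy usage with hypothetical logits for three candidates.
scores = {
    "cand_a": yes_score({"Yes": 0.0, "No": 0.0}),  # 0.5
    "cand_b": yes_score({"Yes": 2.0, "No": 0.0}),
    "cand_c": yes_score({"Yes": -1.0, "No": 0.0}),
}
print(rank_by_score(scores))  # ['cand_b', 'cand_a', 'cand_c']
```

Because each candidate is scored in isolation, any miscalibration shared across candidates (for example, uniformly high scores) passes straight into the ranking, which is the failure mode discussed in Section 4.4.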
Pointwise: Yes/No with Reasoning Prompt

You are an expert geospatial analyst. Your task is to determine the best satellite image match for a ground-level query panorama. Here is the ground-level panorama query image: '<GROUNDIMAGE>' Here is a candidate satellite image: '<AERIALIMAGE>' Carefully analyze the ground-level query image and compare it to satellite image. Focus on key cross-view features like road network, building arrangement, and landmarks. Provide a detailed step-by-step reasoning comparing key visual features (e.g., road layout, building shapes, relative positions, landmarks). Explain your conclusion. Based on the reasoning about the match between the two images. Concisely, does the satellite image match the ground-level panorama? Answer ONLY with the single word 'Yes' or 'No'.

Pairwise Prompt

You are an expert geospatial analyst. Your task is to determine the best satellite image match for a ground-level query panorama. Here is the ground-level panorama query image '<GROUNDIMAGE>' Here is satellite image 1: '<AERIALIMAGE1>' Here is satellite image 2: '<AERIALIMAGE2>' Carefully analyze the ground-level query image and compare it to both candidate satellite images. Focus on key cross-view features like road network, building arrangement, and landmarks Based on this comparison, which satellite image (1 or 2) provides the better geospatial match? Respond ONLY with a JSON object indicating the preferred image number (1 or 2), like this: {"preference": "<1 or 2>"}

Figure 5. Some qualitative results comparing the base model's ranking and the VLM's final selection. In each example, the satellite images are displayed in the order originally produced by the base model, not in the reranked order. The blue-outlined image indicates the VLM's top-1 selection among these candidates.
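The pairwise prompt asks for a JSON object such as {"preference": "1"}, but a generative model may wrap it in extra text or ignore the requested format. The paper does not describe how replies were parsed, so the following is only a hypothetical sketch of a defensive parser: it first tries the first {...} span as JSON, then falls back (our assumption) to a reply that mentions exactly one of the digits 1 or 2, and otherwise returns None.

```python
import json
import re
from typing import Optional


def parse_preference(response: str) -> Optional[int]:
    """Return 1 or 2 from a pairwise reply, or None if no unambiguous choice is found."""
    # First try the first {...} span, since the prompt requests {"preference": "<1 or 2>"}.
    match = re.search(r"\{.*?\}", response, flags=re.DOTALL)
    if match:
        try:
            value = json.loads(match.group(0)).get("preference")
        except json.JSONDecodeError:
            value = None
        if str(value).strip() in {"1", "2"}:
            return int(str(value).strip())
    # Fallback heuristic (assumed): accept a reply that mentions only one of 1 or 2.
    digits = set(re.findall(r"\b[12]\b", response))
    return int(digits.pop()) if len(digits) == 1 else None


print(parse_preference('Sure! {"preference": "2"}'))  # 2
```

How a None result should be resolved inside the sort (for instance, keeping the original retrieval order for that pair) is likewise an assumption left to the implementer.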
A.2 Qualitative Results

Figure 5 shows several qualitative examples illustrating how the VLM selects among the candidates proposed by the base model. In all examples, the satellite images are shown in the original order produced by the base model, while the blue outline marks the VLM's top-1 choice.
