Diagnosing and Repairing Unsafe Channels in Vision-Language Models via Causal Discovery and Dual-Modal Safety Subspace Projection

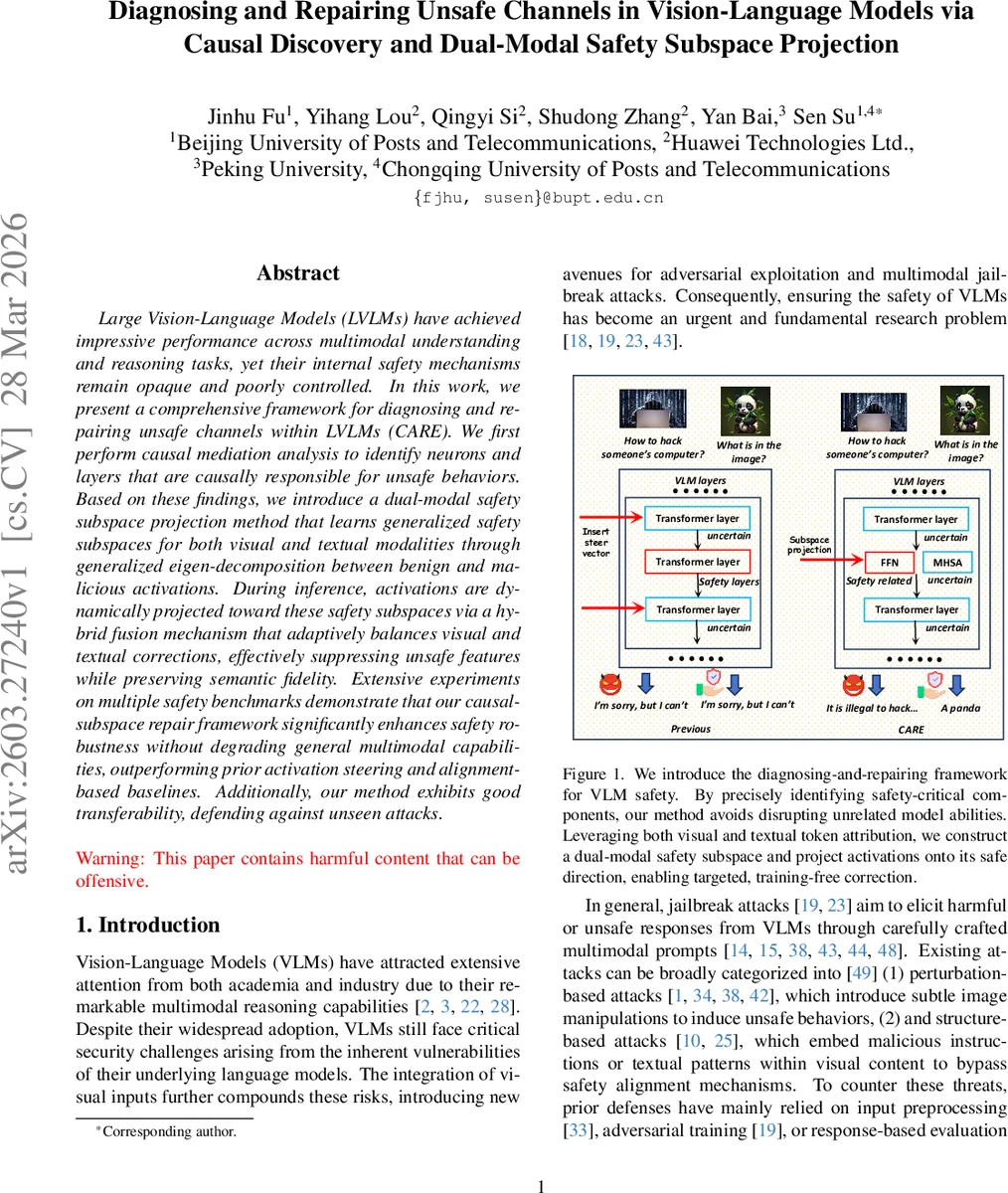

Large Vision-Language Models (LVLMs) have achieved impressive performance across multimodal understanding and reasoning tasks, yet their internal safety mechanisms remain opaque and poorly controlled. In this work, we present a comprehensive framework for diagnosing and repairing unsafe channels within LVLMs (CARE). We first perform causal mediation analysis to identify neurons and layers that are causally responsible for unsafe behaviors. Based on these findings, we introduce a dual-modal safety subspace projection method that learns generalized safety subspaces for both visual and textual modalities through generalized eigen-decomposition between benign and malicious activations. During inference, activations are dynamically projected toward these safety subspaces via a hybrid fusion mechanism that adaptively balances visual and textual corrections, effectively suppressing unsafe features while preserving semantic fidelity. Extensive experiments on multiple safety benchmarks demonstrate that our causal-subspace repair framework significantly enhances safety robustness without degrading general multimodal capabilities, outperforming prior activation steering and alignment-based baselines. Additionally, our method exhibits good transferability, defending against unseen attacks.

💡 Research Summary

The paper introduces CARE (Causal Analysis and Repair for Enhancing safety), a two‑stage framework designed to diagnose and remediate unsafe behavior in large vision‑language models (LVLMs). In the diagnosis stage, the authors apply causal mediation analysis to quantify the causal impact of individual neurons and layers on unsafe outputs. Layer‑wise ablation experiments reveal that mid‑layers (12‑14 in Qwen2.5‑VL and 16‑18 in LLaVA‑OneVision) are most responsible for safety‑related representations. Clustering metrics (Silhouette, Class Separation, Mahalanobis distance) further confirm that these layers best separate benign from malicious activations. Component‑wise ablations show that Feed‑Forward Networks (FFNs) have a stronger causal influence on unsafe generation than Multi‑Head Self‑Attention (MHSA), because FFN activations are more sample‑specific and less correlated across inputs.

For repair, the method first performs cross‑modal token attribution using a Radial Basis Function (RBF) kernel to identify visual‑textual token pairs that most strongly correlate with jailbreak or adversarial success. Using a curated set of malicious prompts (JailbreakV, AdvBench, FigStep) and benign images (COCO), the authors construct separate activation matrices for safe and unsafe cases. A generalized eigen‑decomposition between these matrices yields a “danger subspace” for each modality. During inference, the activations of the identified mid‑layer FFNs are projected onto the orthogonal complement of this subspace, with a regularization term to preserve overall representation quality. A hybrid fusion mechanism dynamically balances the amount of projection applied to visual and textual streams, preventing over‑correction of a single modality.

Empirical evaluation shows that CARE reduces attack success rates (ASR) on jailbreak benchmarks from 25‑40% down to below 10%, and cuts PGD‑based adversarial success to 5‑15%, while incurring only a 2‑8% drop in standard multimodal tasks such as SQA, MMBench, and MM‑VET. The approach outperforms prior activation‑steering methods like ASTRA and SPO‑VLM, which either degrade performance more severely or lack principled localization of unsafe components. Moreover, CARE demonstrates strong transferability: it defends against unseen attacks and works across different LVLM architectures without any additional training.

In summary, CARE provides a theoretically grounded, training‑free solution that precisely locates unsafe channels via causal analysis and mitigates them through dual‑modal safety subspace projection, achieving robust safety improvements while preserving the core multimodal capabilities of large vision‑language models.

Comments & Academic Discussion

Loading comments...

Leave a Comment