MediHive: A Decentralized Agent Collective for Medical Reasoning

Large language models (LLMs) have revolutionized medical reasoning tasks, yet single-agent systems often falter on complex, interdisciplinary problems requiring robust handling of uncertainty and conflicting evidence. Multi-agent systems (MAS) levera…

Authors: Xiaoyang Wang, Christopher C. Yang

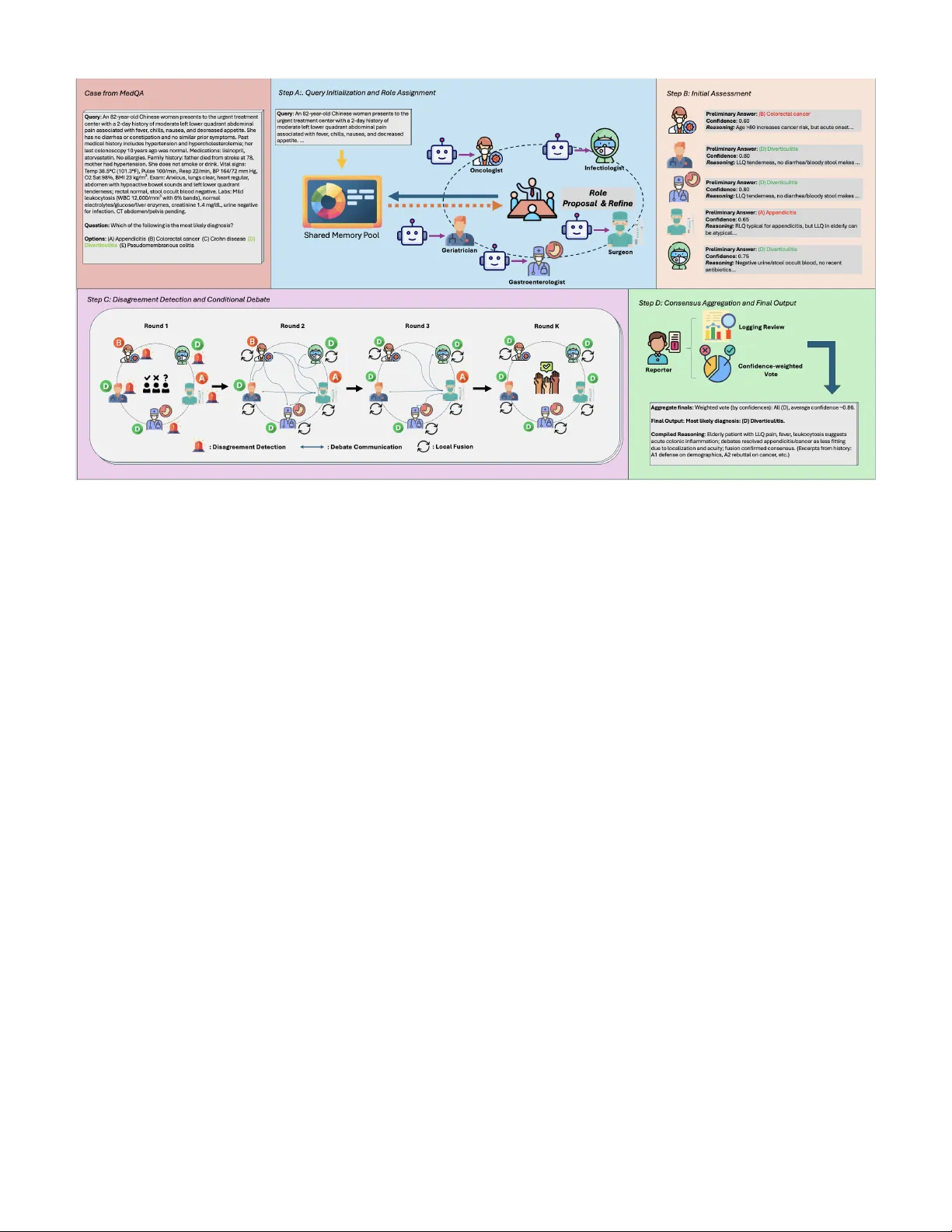

Xiaoyang Wang, College of Computing and Informatics, Drexel University, Philadelphia, USA (xw388@drexel.edu)

Christopher C. Yang, College of Computing and Informatics, Drexel University, Philadelphia, USA (chris.yang@drexel.edu)

Abstract: Large language models (LLMs) have revolutionized medical reasoning tasks, yet single-agent systems often falter on complex, interdisciplinary problems requiring robust handling of uncertainty and conflicting evidence. Multi-agent systems (MAS) leveraging LLMs enable collaborative intelligence, but prevailing centralized architectures suffer from scalability bottlenecks, single points of failure, and role confusion in resource-constrained environments. Decentralized MAS (D-MAS) promise enhanced autonomy and resilience via peer-to-peer interactions, but their application to high-stakes healthcare domains remains underexplored. We introduce MediHive, a novel decentralized multi-agent framework for medical question answering that integrates a shared memory pool with iterative fusion mechanisms. MediHive deploys LLM-based agents that autonomously self-assign specialized roles, conduct initial analyses, detect divergences through conditional evidence-based debates, and locally fuse peer insights over multiple rounds to achieve consensus. Empirically, MediHive outperforms single-LLM and centralized baselines on MedQA and PubMedQA datasets, attaining accuracies of 84.3% and 78.4%, respectively. Our work advances scalable, fault-tolerant D-MAS for medical AI, addressing key limitations of centralized designs while demonstrating superior performance in reasoning-intensive tasks.

Index Terms: Healthcare AI, Large Language Model, Decentralized Multi-Agent System, Medical Q&A.

I. INTRODUCTION

The advent of Large Language Models (LLMs) has transformed medical reasoning, enabling advanced capabilities in diagnostic support, treatment planning, and knowledge synthesis across healthcare domains such as disease diagnosis [1], patient management, and clinical decision-making [2]–[4]. However, single LLM-based agents frequently encounter limitations in handling the intricate, multifaceted nature of medical problems, which demand interdisciplinary expertise, real-time adaptation to patient-specific data, and robust handling of uncertainties like incomplete information or conflicting evidence. This has driven the emergence of multi-agent systems (MAS) in healthcare, where autonomous agents collaborate to simulate multi-disciplinary team (MDT) discussions, decompose diagnostic tasks, share insights, and refine reasoning through iterative interactions, thereby achieving collective intelligence tailored to medical contexts [5].

In LLM-based MAS for medical reasoning, agents harness the natural language processing, logical inference, and knowledge integration strengths of LLMs to interpret patient data [6], query medical databases, and generate evidence-based recommendations collaboratively. Conventional MAS architectures in medicine often rely on centralized coordination, such as a primary agent overseeing workflows or static hierarchies for task allocation [7]. While effective in controlled environments, these centralized approaches suffer from several drawbacks. First, they exhibit limited scalability, as the central agent becomes a bottleneck when handling large numbers of agents or high-volume queries, leading to performance degradation in expansive systems. Second, they introduce a single point of failure, where the malfunction or overload of the coordinator can halt the entire system, compromising reliability in critical applications.
Third, in resource-constrained scenarios where a single LLM instance simulates multiple positions within the MAS, there is a heightened risk of confusion, leakage, or disarray among roles and knowledge bases. This can manifest as agents inadvertently blending personas, leading to inconsistent reasoning or diluted expertise, ultimately undermining the system's integrity and output quality [8].

Decentralized Multi-Agent Systems (D-MAS) emerge as a promising alternative, addressing these limitations by empowering agents to operate autonomously through peer-to-peer communication and self-organization, without reliance on a central authority. This approach enhances scalability by distributing computational load and decision-making, improves fault tolerance as the system can persist even if individual agents fail, and mitigates role confusion by allowing each agent, potentially instantiated as separate LLM calls, to maintain isolated yet collaborative knowledge states. Existing D-MAS works, such as AgentNet's self-evolving roles and decentralized collaboration [9], or Symphony's privacy-saving orchestration with low overhead [10], have demonstrated efficacy in general tasks. However, explorations in domain-specific applications, particularly healthcare, remain insufficient. Medical question answering, with its high-stakes requirements for accuracy, ethical reasoning, and handling datasets like PubMedQA or MedQA, demands tailored D-MAS designs that integrate medical expertise without external tools like knowledge graphs or retrieval-augmented generation, yet few studies have delved deeply into this intersection.

To bridge this gap, we introduce MediHive, a novel decentralized multi-agent framework with a shared memory pool for medical reasoning. MediHive employs multiple LLM-based agents in a decentralized coordination architecture, enabling autonomous operations without a central decision-making agent.
MediHive unfolds in a structured, iterative manner to foster emergent consensus, beginning with query initialization, where the medical question is broadcast to the shared memory pool to provide a common starting point for all agents. This is followed by self-evolving role assignment, during which agents propose and refine specialized roles through self-reflection and review of peers' proposals to ensure diversity and relevance. Next comes the initial analysis phase, where each agent independently generates reasoning and a preliminary answer based on its role, posting these contributions to the shared pool. Agents then autonomously detect divergences in the analyses. If significant disagreements arise, a conditional debate phase activates, incorporating turn-based rebuttals, defenses, and proposals to refine arguments and build toward alignment. Post-debate, the iterative shared fusion stage ensues, allowing agents to locally fuse shared information by critiquing and integrating peers' insights, thereby updating their reasoning and answers over multiple rounds until convergence criteria are met. Finally, consensus is reached through multiple rounds of deliberation, yielding a consolidated final response complete with compiled reasoning excerpts from the history.

Overall, the main contributions of our work are threefold:

• We propose MediHive as a novel decentralized multi-agent framework that integrates shared memory and fusion mechanisms, offering a scalable and robust solution for LLM-based systems in medical question answering. This framework stands out by eliminating the need for a central coordinator, instead relying on autonomous agents that self-organize to handle complex queries efficiently.

• MediHive directly targets the aforementioned challenges of centralized designs by enabling autonomous agent operations, robust fault tolerance, and clear separation of roles to prevent knowledge disarray.
• Through rigorous experiments on the MedQA and PubMedQA datasets, we demonstrate the framework's validity, achieving predictive accuracies of 84.3% and 78.4%, respectively, alongside improved agreement rates and efficient interactions, validating its superiority over single-LLM and centralized baselines.

II. RELATED WORK

A. LLM-based Multi-Agent

The paradigm of multi-agent systems (MAS), which involves multiple autonomous agents interacting within an environment to achieve collective goals, has been significantly revitalized by the integration of Large Language Models (LLMs). LLMs serve as the cognitive core for these agents, equipping them with advanced capabilities for planning, reasoning, and communication. Early explorations into this domain established frameworks where LLM agents could collaborate on complex tasks. For instance, AutoGen [11] introduced a framework enabling the creation of conversational agent workflows for tasks like code generation and problem-solving, where agents with different roles (e.g., commander, writer) collaborate to fulfill user requests. Recent advancements focus on multi-agent collaboration mechanisms, enabling groups of LLM agents to coordinate on complex tasks through iterative consensus-building and role specialization [12]. AgentNet [9] proposes decentralized evolutionary coordination, where agents optimize expertise through profile evolution and peer interactions, addressing limitations in centralized systems. These developments underscore the shift toward scalable, fault-tolerant designs, though domain-specific adaptations remain a key area for exploration.

B. LLM-based Multi-Agent in Medical Domains

In healthcare, LLM-based MAS have gained traction for simulating multi-disciplinary teams (MDTs) in tasks like diagnosis, treatment planning, and question answering, where agents collaborate to handle uncertainty and interdisciplinary knowledge [13].
MedAgents [14] pioneers zero-shot medical collaboration using LLM agents in role-playing scenarios, enabling multi-round discussions for improved accuracy on datasets like MedQA without external tools. Building on this, MDAgents [15] introduces adaptive collaboration, assigning medical expertise to agents that operate independently or cooperatively based on query complexity, enhancing efficiency in decision-making. AgentHospital [16] simulates virtual hospital environments with LLM-driven doctors, nurses, and patients, modeling full care cycles and demonstrating gains in clinical reasoning through agent interactions. LLM-MedQA [17] focuses on case-based reasoning in multi-agent setups, using debates and consensus to refine answers on medical QA benchmarks. Similarly, other centralized frameworks have shown the benefits of collaboration between multiple LLMs for medical question answering [18]. Beyond question answering, such architectures have also been applied to specific clinical NLP tasks, including the automated detection of clinical problems from SOAP notes [19]. Debate protocols, as explored in multi-agent debate strategies, have been adapted for medical contexts to handle discrepancies through argumentative exchanges [20]. Despite these innovations, decentralized designs tailored to healthcare without central coordinators are underexplored, motivating our focus on scalable, robust frameworks for medical QA.

III. METHODOLOGY

In this section, we delineate the methodology underpinning our MediHive framework, a decentralized multi-agent system designed for medical question answering. Centralized multi-agent systems, while effective for simple tasks, often encounter scalability bottlenecks, single points of failure, and inefficiencies in handling conflicting evidence, particularly in high-stakes domains like healthcare.
To address these, MediHive adopts a decentralized coordination architecture, where agents collaborate autonomously through peer-to-peer interactions and a shared memory pool. Importantly, the shared memory pool serves solely as a passive, append-only repository for agent contributions; it performs no decision-making or coordination logic, and all reasoning, evaluation, and consensus-building are carried out autonomously by the individual agents. This design promotes resilience, adaptability, and emergent consensus without relying on a central coordinating agent or external tools, enabling robust performance on complex, interdisciplinary medical queries.

Let $Q$ denote the input medical query (e.g., from datasets like PubMedQA or MedQA), and let $\mathcal{A} = \{A_1, A_2, \ldots, A_N\}$ represent the set of $N$ LLM-based agents. $\mathcal{M}$ signifies the shared memory pool, an append-only, timestamped repository for all agent interactions, and $K$ indicates the maximum number of iterative fusion rounds. The framework operates under various prompting paradigms, leveraging internal collaborations to foster consensus via autonomous processes. The remainder of this section is structured according to the workflow: we begin with query initialization, followed by self-evolving role assignment, initial analysis, disagreement detection with conditional debate, iterative shared fusion, and finally consensus aggregation and output generation. Figure 1 presents an overview of the proposed framework, illustrating the end-to-end workflow and agent interactions.

A. Query Initialization and Role Assignment

The reasoning process commences with Query Initialization, where the input medical query $Q$ is broadcast to all $N$ agents by being appended to the shared memory pool $\mathcal{M}$. This is immediately followed by the Self-Evolving Role Assignment, a single-round "warm-up" phase designed to establish specialized, complementary roles without a central coordinator.
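As a minimal sketch of the passive, append-only behavior of $\mathcal{M}$ described above, the pool can be modeled as a list of immutable entries that only grows. The names `Entry`, `SharedMemoryPool`, `append`, `read_all`, and `read_kind` are hypothetical and chosen for illustration; the paper does not specify an implementation, and a sequence counter stands in for the timestamp.

```python
from dataclasses import dataclass
from itertools import count
from typing import Any, List, Tuple


@dataclass(frozen=True)
class Entry:
    """One immutable contribution in the pool (hypothetical schema)."""
    seq: int      # monotonically increasing stand-in for a timestamp
    author: str   # e.g. "query", "A1", "A2"
    kind: str     # e.g. "query", "role_proposal", "analysis"
    payload: Any


class SharedMemoryPool:
    """Passive store: it records contributions and makes no decisions."""

    def __init__(self) -> None:
        self._entries: List[Entry] = []
        self._clock = count()

    def append(self, author: str, kind: str, payload: Any) -> Entry:
        # Entries are only ever added, never edited or removed.
        entry = Entry(next(self._clock), author, kind, payload)
        self._entries.append(entry)
        return entry

    def read_all(self) -> Tuple[Entry, ...]:
        # Return a tuple so callers cannot mutate the pool's history.
        return tuple(self._entries)

    def read_kind(self, kind: str) -> Tuple[Entry, ...]:
        return tuple(e for e in self._entries if e.kind == kind)


pool = SharedMemoryPool()
pool.append("query", "query", "What is the most likely diagnosis?")
pool.append("A1", "role_proposal", "Gastroenterologist")
pool.append("A2", "role_proposal", "Geriatrician")
```

Keeping all filtering and evaluation in the readers, rather than in the pool, mirrors the stated design in which every agent interprets the shared history on its own.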
The role-assignment phase unfolds in two steps. First, each agent $A_i \in \mathcal{A}$ independently analyzes $Q$ and generates an initial role proposal, $R_{i,0}$. This proposal includes not only the intended specialization (e.g., "Pulmonologist") but also a brief reasoning for selecting that role based on the query. This proposal is then appended to $\mathcal{M}$. Second, after all initial proposals are posted, each agent $A_i$ reads the full set of proposals $\{R_{j,0}\}_{j=1}^{N}$ from $\mathcal{M}$. The agent then performs a self-reflection step to update its role from $R_{i,0}$ to a final, refined role $R_i$. This refinement, which also includes a rationale for the update, is guided by a prompt instructing the agent to optimize for three internal metrics: Clarity (a well-defined specialty), Differentiation (low semantic overlap with peers' roles), and Alignment (high relevance to $Q$). Each agent posts its final role $R_i$ to $\mathcal{M}$, ensuring the set of agents $\mathcal{A}$ adopts a diverse and query-relevant distribution of expertise before the main analysis begins. An example of the output for this phase is shown in Fig. 2.

Query ($Q$): An 83-year-old woman presents with a 2-day history of moderate left lower quadrant abdominal pain. Exam reveals localized tenderness with guarding. Labs: WBC 14,200/mm³. What is the most likely diagnosis?

Options: (A) Appendicitis (B) Colorectal cancer (C) Colonic diverticulitis (D) Pseudomembranous colitis

Step 1: Role Proposals → $\mathcal{M}$

• [A1] Role: Gastroenterologist. Reasoning: Abdominal pain with localized tenderness and elevated WBC suggests a GI etiology requiring differential diagnosis.

• [A2] Role: Geriatrician. Reasoning: Patient's advanced age (83) significantly alters the differential for abdominal pathology.

• [A3] Role: Surgeon. Reasoning: LLQ tenderness with guarding and leukocytosis may indicate a surgical emergency.

Step 2: Refined Roles → $\mathcal{M}$

• [A1] Role: GI Specialist (Colorectal Disorders). Rationale: With A2 covering age-related factors, I narrow my focus to colorectal pathology specific to the LLQ.

• [A2] Role: Geriatric Medicine Specialist. Rationale: Complementary to A1 and A3. I will analyze age-related risk factors and atypical presentations.

• [A3] Role: Surgical Consultant (Acute Abdomen). Rationale: A1 handles GI diagnostics; I focus on surgical indications and the urgency of the presentation.

Fig. 2. Illustrative output of the Role Assignment phase for the sample MedQA query from Fig. 1, showing initial proposals and peer-aware refinements posted to $\mathcal{M}$.

B. Analysis, Debate, and Iterative Fusion

Following the role assignment, the core reasoning process of MediHive unfolds. This multi-stage process is designed to generate independent initial insights, identify and resolve conflicts through a structured debate, and finally converge on a robust, group-vetted consensus through iterative refinement. Figure 3 contrasts centralized and decentralized designs, highlighting MediHive's key advantages in coordination, scalability, and fault tolerance.

1) Initial Analysis and Confidence Assessment: In the initial formal reasoning round, each agent $A_i \in \mathcal{A}$ begins its work. Guided by its finalized role $R_i$, each agent independently analyzes the query $Q$ and the context from the role assignment phase. The agent is prompted to generate a comprehensive initial output, which includes: (1) a detailed reasoning trace (e.g., a Chain-of-Thought) explaining its diagnostic process, (2) a specific final answer $\text{Ans}_{i,1}$ (e.g., 'yes' for PubMedQA, or 'Option C' for MedQA), and (3) a self-assessed confidence score $c_{i,1} \in [0, 1]$. This initial, independent analysis serves as a critical baseline, capturing each agent's specialized perspective before it is influenced by its peers.
This step is crucial for surfacing a diverse set of initial hypotheses and identifying the primary points of disagreement. The confidence score is not a statistical probability but a self-assessed metric where the LLM is prompted to rate its conviction in its answer. This score provides a vital heuristic for agents to weigh their own and their peers' opinions in subsequent rounds. The complete initial output $(\text{Reasoning}_{i,1}, \text{Ans}_{i,1}, c_{i,1})$ is then appended to the shared memory pool $\mathcal{M}$. This description can be expressed by the following formula:

$$(\text{Reasoning}_{i,1}, \text{Ans}_{i,1}, c_{i,1}) = A_i(Q, R_i, \mathcal{M}), \quad i \in \{1, \ldots, N\}. \tag{1}$$

Fig. 1. Overview of the MediHive framework's decentralized workflow for medical question answering, illustrated with a sample query from the MedQA dataset. The process unfolds in four key steps: (A) Query initialization and autonomous role assignment among LLM agents via the shared memory pool; (B) Initial assessments by specialized agents, including preliminary diagnoses with confidence scores and reasoning; (C) Disagreement detection triggering conditional multi-round debates, followed by local fusion of insights; and (D) Consensus aggregation through confidence-weighted voting, culminating in the final compiled reasoning and output.

2) Disagreement Detection and Conditional Debate: After all agents have posted their initial analysis, each agent $A_i$ autonomously executes a disagreement detection protocol; there is no central manager to check for consensus. Each agent independently reads the full set of initial answers $\{\text{Ans}_{j,1}\}_{j=1}^{N}$ from $\mathcal{M}$ and computes the current level of agreement. For tasks like PubMedQA, this is a direct tally of the 'yes'/'no'/'maybe' votes; for MedQA, it is a count of the selected options.
If no single answer holds a supermajority (i.e., the agreement level falls below a predefined threshold $\tau_{\text{agree}}$, e.g., 0.8), the agents determine that significant disagreement exists, and the conditional debate phase is activated. Because every agent independently evaluates the same shared evidence, this check requires no central arbiter while ensuring that all participants reach a consistent assessment of the group's state. When agents already agree, the framework bypasses the debate and proceeds directly to the fusion stage.

If activated, the debate proceeds for $T_{\text{debate}}$ cycles (e.g., $T_{\text{debate}} = 2$). The purpose of this stage is adversarial: to stress-test initial hypotheses and enrich the shared evidence base before agents attempt to converge. In each cycle, all agents simultaneously contribute a structured argument to $\mathcal{M}$. Each argument takes one of three forms:

• Rebuttal: A targeted, evidence-based challenge to a specific peer's reasoning (e.g., "Addressing A2: Your reliance on [fact] is contradicted by ..."). This exposes weaknesses in arguments and promotes critical evaluation.

• Defense: A reinforcement of the agent's own position, providing additional evidence or strengthening its logic in response to a peer's challenge. This ensures that well-supported positions are not prematurely abandoned.

• Proposal: A new, synthesized hypothesis that integrates insights from multiple peers into a revised position, bridging opposing views and seeding potential consensus for the subsequent fusion stage.

Crucially, the debate does not require agents to update their formal answers or confidence scores; its role is to generate a rich adversarial evidence base that informs the subsequent fusion stage. All debate contributions are logged to $\mathcal{M}$, creating a transparent and auditable record. The debate runs for the full $T_{\text{debate}}$ cycles to ensure thorough examination of the disagreements before the framework transitions to convergence.
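The disagreement check each agent runs locally can be sketched as follows. This is an illustrative reading of the tally-and-threshold rule above, not the authors' code; the function names `agreement_level` and `debate_needed` are hypothetical, and the default of 0.8 is the example threshold given in the text.

```python
from collections import Counter
from typing import Sequence


def agreement_level(answers: Sequence[str]) -> float:
    """Share of agents backing the most common answer (max tally / N)."""
    if not answers:
        raise ValueError("at least one answer is required")
    top_count = Counter(answers).most_common(1)[0][1]
    return top_count / len(answers)


def debate_needed(answers: Sequence[str], tau_agree: float = 0.8) -> bool:
    """True when no answer reaches the supermajority threshold."""
    return agreement_level(answers) < tau_agree


# Five agents on a MedQA-style item: four choose C, one chooses A.
near_consensus = ["C", "C", "C", "A", "C"]
# Three-way split: the top answer holds only 3 of 5 votes.
split_vote = ["C", "C", "C", "A", "B"]
```

Because the function depends only on the answers stored in the shared pool, every agent that evaluates it over the same entries reaches the same verdict, which is why no arbiter is needed. With five agents and a threshold of 0.8, at least four must agree for the debate to be skipped.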
3) Iterative Shared Fusion: Following the initial analysis (and the conditional debate, if it occurred), the framework enters the iterative shared fusion stage. While the debate is adversarial (designed to challenge assumptions and enrich the evidence base), the fusion stage is integrative: each agent synthesizes the accumulated evidence into a formal, structured position. This is the sole stage in which agents produce updated answers and confidence scores, and the sole stage in which formal convergence is measured. The fusion process proceeds in rounds beginning at $k = 2$, with the scope of each agent's input determined by the round.

Fig. 3. Comparison of centralized and proposed MediHive framework, highlighting coordination, resilience, and adaptability.

a) Comprehensive Integration (Round $k = 2$): In the first fusion round, each agent $A_i$ reads the entire interaction history stored in $\mathcal{M}$: the initial analyses from all peers ($k = 1$) and, if a debate was triggered, its full argumentative log. The agent then executes a structured integration process: (1) Critique the strengths and weaknesses of peer arguments, (2) Integrate the most compelling evidence into its own reasoning, and (3) Revise its initial stance accordingly. This comprehensive review is essential because it is the first point at which agents formally update their positions in light of all accumulated evidence. This yields the agent's first fusion-based entry: $(\text{Reasoning}_{i,2}, \text{Ans}_{i,2}, c_{i,2})$.

b) Incremental Refinement (Rounds $k > 2$): In subsequent rounds (up to the maximum $K$), each agent reads the full set of peer outputs from the immediately preceding round, $\{(\text{Reasoning}_{j,k-1}, \text{Ans}_{j,k-1}, c_{j,k-1})\}_{j=1}^{N}$, rather than the entire history. This narrowing of scope is justified because the comprehensive review at $k = 2$ has already incorporated the debate's insights; further rounds serve to refine positions in response to peers' evolving stances.
Each agent assesses the current group consensus, critiques peers' latest reasoning, and updates its own output, producing $(\text{Reasoning}_{i,k}, \text{Ans}_{i,k}, c_{i,k})$. The fusion loop terminates when the agreement level reaches or exceeds $\tau_{\text{agree}}$ for two consecutive rounds, ensuring that convergence is stable rather than transient, or when the maximum round limit $K$ is reached.

Algorithm 1: The MediHive Framework Workflow

    procedure MEDIHIVE(Q, A, K, T_debate, τ_agree)
        M ← {Q}                                  ▷ Initialize shared memory pool with query
        ▷ Phase 1: Role Assignment
        for each A_i ∈ A do                      ▷ Step 1: Independent role proposals
            R_{i,0} ← A_i.ProposeRole(Q); Append(M, R_{i,0})
        for each A_i ∈ A do                      ▷ Step 2: Peer-aware refinement
            R_i ← A_i.RefineRole(M); Append(M, R_i)
        ▷ Phase 2: Initial Analysis (k = 1)
        for each A_i ∈ A do
            (Reas_{i,1}, Ans_{i,1}, c_{i,1}) ← A_i.Analyze(Q, R_i, M); Append(M, ·)
        ▷ Phase 3: Disagreement Detection and Conditional Debate
        if max(Tally({Ans_{i,1}})) / N < τ_agree then
            for t ← 1 to T_debate do             ▷ Debate: Rebut, Defend, or Propose
                for each A_i ∈ A do
                    Append(M, A_i.Debate(M))
        ▷ Phase 4: Iterative Shared Fusion (k = 2 to K)
        prevAgrmt ← 0
        for k ← 2 to K do
            ctx ← M.readAll()                                        if k = 2
                  {(Reas_{j,k−1}, Ans_{j,k−1}, c_{j,k−1})}_{j=1..N}  if k > 2
            for each A_i ∈ A do
                (Reas_{i,k}, Ans_{i,k}, c_{i,k}) ← A_i.Fuse(ctx); Append(M, ·)
            currAgrmt ← max(Tally({Ans_{i,k}})) / N
            if currAgrmt ≥ τ_agree and prevAgrmt ≥ τ_agree then break   ▷ Stable consensus
            prevAgrmt ← currAgrmt
        ▷ Phase 5: Final Output (Reporter)
        k* ← last round
        a* ← MajorityAnswer({Ans_{i,k*}})              if agreement ≥ τ_agree
             argmax_a Σ_i c_{i,k*} · 1(Ans_{i,k*} = a)  otherwise
        return (a*, SynthesizeTrace(M))

C. Final Synthesis by the Reporter

Upon the termination of the iterative fusion loop, the framework transitions from decentralized collaborative reasoning to a final synthesis stage. This stage is handled by a specialized Reporter, whose role is strictly limited to aggregating and summarizing the independently generated outputs of the reasoning agents, rather than coordinating or influencing their decision processes. The Reporter operates in a post-hoc manner and applies an adaptive aggregation strategy depending on how the fusion loop concludes. Specifically, the Reporter first determines the termination condition of the loop:

• If a strong consensus was reached (i.e., the loop terminated because agreement reached the threshold $\tau_{\text{agree}}$), the Reporter's role is confirmatory. It identifies the answer supported by the supermajority of agents and designates it as the final answer, $a^*$. In this case, no additional decision logic is introduced, as collective agreement has already emerged from decentralized agent reasoning.

• If no consensus was reached (i.e., the loop terminated upon reaching the maximum round limit $K$), the Reporter applies a predefined resolution mechanism in the form of a confidence-weighted vote. Importantly, this procedure does not alter agent reasoning or retroactively enforce agreement, but serves solely as a deterministic aggregation rule to produce a single output from divergent agent conclusions.

The confidence-weighted vote consists of two steps. First, the Reporter computes a total confidence score, $S(a)$, for each candidate answer $a$ in the set of all possible answers $\mathcal{V}$, by summing the confidence scores $c_{i,k^*}$ of the agents whose answer in the last round $k^*$, $\text{Ans}_{i,k^*}$, matches $a$:

$$S(a) = \sum_{i=1}^{N} c_{i,k^*} \cdot \mathbb{1}(\text{Ans}_{i,k^*} = a)$$

Second, the final answer is selected as:

$$a^* = \arg\max_{a \in \mathcal{V}} S(a)$$

Beyond answer aggregation, the Reporter also provides an explanatory summary of the reasoning process.
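The Reporter's two aggregation branches (supermajority confirmation, otherwise the confidence-weighted vote $S(a)$) can be sketched in Python. This is a hedged illustration of the rule in Phase 5 of Algorithm 1; the function name `reporter_answer` is hypothetical, and the tie-breaking behavior (first answer encountered wins) is an assumption, since the paper does not specify one.

```python
from collections import Counter, defaultdict
from typing import Dict, Sequence


def reporter_answer(
    answers: Sequence[str],
    confidences: Sequence[float],
    tau_agree: float = 0.8,
) -> str:
    """Aggregate last-round answers into a single final answer a*."""
    if not answers or len(answers) != len(confidences):
        raise ValueError("answers and confidences must be non-empty and aligned")

    # Branch 1: a supermajority exists, so the Reporter only confirms it.
    top_answer, top_count = Counter(answers).most_common(1)[0]
    if top_count / len(answers) >= tau_agree:
        return top_answer

    # Branch 2: S(a) = sum of confidences of the agents that answered a.
    scores: Dict[str, float] = defaultdict(float)
    for ans, conf in zip(answers, confidences):
        scores[ans] += conf
    # Assumption: ties resolve to the answer that appeared first.
    return max(scores, key=scores.get)


# No consensus: three low-confidence votes for C lose to two confident votes for A.
weighted = reporter_answer(["C", "C", "C", "A", "A"], [0.3, 0.3, 0.3, 0.9, 0.9])
# Consensus (4 of 5): the majority answer stands regardless of confidence.
confirmed = reporter_answer(["C", "C", "C", "C", "A"], [0.1, 0.1, 0.1, 0.1, 1.0])
```

The first example shows where the weighted rule departs from a plain majority vote: C holds three of five votes, but $S(\text{A}) = 1.8$ exceeds $S(\text{C}) \approx 0.9$. The second shows that confidence plays no role once the agreement threshold is met.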
The Reporter constructs a reasoning trace by parsing the shared memory $\mathcal{M}$, which stores the historical outputs generated by agents throughout the workflow. This trace highlights salient elements of the interaction history, including initial role assignments, key debate exchanges, and representative reasoning steps. The final output consists of the selected answer $a^*$ together with its supporting reasoning trace. This design supports transparency by exposing the intermediate reasoning artifacts generated during decentralized agent interaction, thereby facilitating post-hoc inspection and analysis without introducing centralized control into the reasoning process.

IV. EXPERIMENTS

A. Dataset

We evaluate our proposed framework on two widely used benchmarks for medical question answering: PubMedQA [21] and MedQA [22], which test the system's ability to handle biomedical reasoning and clinical knowledge. PubMedQA is a dataset designed for biomedical research question answering, comprising questions paired with PubMed abstracts that require yes/no/maybe answers along with supporting reasoning. It emphasizes the need for quantitative and evidence-based inference over scientific texts. We focus on the test set for evaluation, assessing performance in a closed-book setting where agents rely solely on internal knowledge and collaboration. MedQA is a large-scale open-domain question answering dataset derived from United States Medical Licensing Examination (USMLE) questions, featuring multiple-choice formats with four or five options. This dataset challenges models on comprehensive medical knowledge, including diagnosis, treatment, and ethical considerations, making it ideal for validating multi-agent reasoning in high-stakes scenarios. Our experiments utilize the test split to measure accuracy in zero-shot collaborative settings.

B. Main Results

In this section, we present a comprehensive evaluation of our proposed framework, MediHive, focusing on its zero-shot accuracy and F1-score advantages on the MedQA and PubMedQA datasets. All experiments were conducted using Llama-3.1-70B-Instruct as the base large language model. All baselines, including multi-agent methods originally evaluated with different models, were reimplemented under this same backbone to ensure a fair comparison across all configurations.

The results, summarized in Table I, benchmark our method against two primary categories of baselines. The Single-Agent configurations evaluate the performance of the base LLM under various prompting strategies, in both zero-shot and few-shot settings: standard prompting, prompting with Chain-of-Thought (w/ CoT) to elicit step-by-step reasoning, and an enhanced version that adds Self-Consistency (w/ CoT + SC) by sampling multiple reasoning paths. These baselines establish a performance ceiling for non-collaborative approaches, topping out at 74.1% average accuracy (few-shot w/ CoT + SC). The Multi-Agent baselines demonstrate the inherent benefits of collaborative reasoning, setting a higher competitive standard. These include a centralized multi-agent system, in which a single coordinator agent assigns specialist roles to $N = 5$ agents, moderates their multi-round discussion, and synthesizes the final answer (a design consistent with standard centralized configurations in the literature [1], [15]), as well as state-of-the-art frameworks: MedAgents [14], which uses role-based collaborative consultation with centralized report synthesis, and Multiagent Debate [23], which improves factuality through iterative multi-agent debate rounds.

Our framework, MediHive, operates in the same zero-shot setting, leveraging its decentralized architecture with five agents ($N = 5$) to achieve state-of-the-art performance.
As shown in the results, MediHive attains an overall average accuracy of 81.4%, driven by strong individual performances of 84.3% on MedQA and 78.4% on PubMedQA. This represents a substantial improvement of 7.3 percentage points over the strongest single-agent method. More importantly, MediHive outperforms the leading multi-agent baselines. For instance, while MedAgents achieves a commendable 80.3% average accuracy, MediHive surpasses it by 1.1 points, with a particularly strong showing on the complex MedQA dataset. This superior performance validates the efficacy of our framework's unique components (self-evolving roles, conditional debate, and efficient iterative fusion) in fostering a more robust and accurate consensus for complex medical reasoning.

C. Ablation Study

TABLE II. ABLATION STUDY RESULTS

| Method | MedQA (%) | PubMedQA (%) |
|---|---|---|
| MediHive | 84.3 | 78.4 |
| w/o CoT | 78.0 | 73.0 |
| w/o Self-Evolving Role Assignment | 81.5 | 75.9 |
| w/o Confidence-Weighted Voting | 82.4 | 76.6 |

To evaluate the contributions of key components in the MediHive framework, we conducted an ablation study on the MedQA and PubMedQA datasets, with results summarized in Table II. The full MediHive framework achieves accuracies of 84.3% on MedQA and 78.4% on PubMedQA, serving as the baseline. We target three modular components that can be cleanly removed without altering the overall pipeline structure: Chain-of-Thought (CoT) reasoning, Self-Evolving Role Assignment, and Confidence-Weighted Voting. Removing CoT reasoning, which guides agents to produce step-by-step logical inferences, results in a noticeable performance drop to 78.0% on MedQA and 73.0% on PubMedQA. This decline underscores the importance of structured reasoning for navigating the complex, evidence-based requirements of medical question answering, particularly in datasets demanding nuanced interpretation.
Disabling Self-Evolving Role Assignment, where agents dynamically specialize based on query context and peer proposals, reduces accuracies to 81.5% on MedQA and 75.9% on PubMedQA. This indicates that adaptive role specialization is critical for ensuring diverse expertise, preventing redundant analyses, and enhancing collaborative accuracy. Omitting Confidence-Weighted Voting, which prioritizes high-confidence outputs during final aggregation, yields accuracies of 82.4% on MedQA and 76.6% on PubMedQA. The smaller drop suggests that while weighted voting refines consensus by leveraging agent certainty, majority voting still captures collective decisions, albeit with reduced precision. These results indicate that each component (CoT, role assignment, and weighted voting) plays a significant role in MediHive's effectiveness, with CoT and role assignment being particularly crucial for high-stakes medical tasks. We note that the conditional debate and iterative fusion mechanisms are structural components of the pipeline whose removal would fundamentally alter the system's architecture rather than isolate a single variable; their collective contribution is instead reflected in MediHive's consistent advantage over the centralized baseline, which lacks both mechanisms.

TABLE I
MAIN RESULTS ON ACCURACY AND F1-SCORE ACROSS MEDQA AND PUBMEDQA DATASETS

| Method | MedQA Accuracy (%) | MedQA F1-score (%) | PubMedQA Accuracy (%) | PubMedQA F1-score (%) | Avg. Accuracy (%) |
|---|---|---|---|---|---|
| Single-Agent, zero-shot setting | | | | | |
| Zero-shot | 71.5 | 66.8 | 69.3 | 67.8 | 70.4 |
| Zero-shot w/ CoT | 73.2 | 70.5 | 70.7 | 69.2 | 72.0 |
| Zero-shot w/ CoT + SC | 73.8 | 70.9 | 72.1 | 70.6 | 73.0 |
| Single-Agent, few-shot setting | | | | | |
| Few-shot | 72.8 | 71.0 | 71.5 | 69.9 | 72.2 |
| Few-shot w/ CoT | 74.6 | 73.1 | 72.3 | 70.7 | 73.5 |
| Few-shot w/ CoT + SC | 74.4 | 72.9 | 73.8 | 72.1 | 74.1 |
| Multi-Agent | | | | | |
| Centralized Multi-Agent System | 77.8 | 76.1 | 75.3 | 74.1 | 76.6 |
| MedAgents [14] | 83.7 | 82.1 | 76.8 | 75.1 | 80.3 |
| Multiagent Debate [23] | 80.4 | 78.3 | 78.2 | 76.4 | 79.3 |
| MediHive (Ours) | 84.3 | 82.5 | 78.4 | 76.8 | 81.4 |

Fig. 4. Performance on MedQA and PubMedQA datasets as a function of the number of collaborating agents (N). The optimal accuracy for both datasets is achieved with N = 5.

D. Impact of the Number of Agents

As our MediHive framework is predicated on the collaboration between multiple autonomous agents, we explored how the number of agents (N) influences the system's overall performance. We tested configurations of N ∈ {3, 5, 7} while holding all other parameters constant. The results on both the MedQA and PubMedQA datasets are illustrated in Figure 4.

Our key observation is that performance does not increase monotonically with the number of agents. Instead, accuracy peaks at five agents on both datasets. The performance degradation with fewer agents (N = 3) suggests that a smaller group may lack the diversity of self-assigned roles needed to address the query comprehensively. Conversely, increasing the group to seven agents also leads to a decline in accuracy. We hypothesize that this is due to an increase in conversational noise and greater difficulty in reaching a stable consensus, potentially caused by role redundancy. This finding suggests that, among the tested configurations, five agents strike the best balance between expert diversity and efficient, focused collaboration. Based on this analysis, we set N = 5 as the default configuration for all other experiments in this paper.

V. LIMITATIONS AND FUTURE WORK

Despite its promising results, our framework has several limitations that present clear opportunities for future research.
First, the framework's effectiveness has been demonstrated on controlled medical question answering benchmarks. While such datasets are commonly used to study medical reasoning, they do not fully capture the complexity, ambiguity, and contextual richness of real-world clinical workflows. As a result, MediHive is not intended for direct clinical decision-making, and real-world clinical validation remains an important future direction.

Second, our evaluation primarily focuses on mean performance trends under controlled benchmark settings, without formal statistical significance testing, confidence interval analysis, or a detailed assessment of computational efficiency (e.g., inference latency, token usage, and scalability). While this choice allows us to concentrate on system-level coordination behavior, a more comprehensive evaluation framework that integrates statistical rigor with efficiency and scalability analysis will be an important direction for future work.

Third, our evaluation was conducted in a closed-book setting, where agents rely solely on their internal knowledge. The framework does not currently incorporate external tools for real-time information retrieval. A key avenue for future work is to integrate a Retrieval-Augmented Generation (RAG) pipeline, which would allow agents to ground their reasoning in the latest medical literature and clinical guidelines, potentially improving accuracy and reducing hallucinations.

Finally, although we discuss potential failure modes and safety concerns, we have not performed a systematic safety or clinical risk assessment, which would be necessary groundwork for any potential clinical application. Addressing hallucination risks, high-confidence incorrect answers, and safety-aware evaluation metrics will be critical in future studies.

VI. CONCLUSION

In this work, we introduced MediHive, a decentralized multi-agent framework designed to support complex medical reasoning through structured coordination. Rather than relying on a central coordinator, MediHive enables agents to operate with autonomous reasoning roles, adapt dynamically to the input query, and reach consensus via conditional debate and iterative information fusion.

Experimental results on the MedQA and PubMedQA benchmarks demonstrate that MediHive consistently outperforms single-agent approaches and competitive multi-agent baselines under the same model backbone, highlighting the effectiveness of decentralized coordination in medical question answering. In addition, ablation studies confirm that each ablated component contributes meaningfully to the overall system performance.

Together, these findings suggest that decentralized role-based coordination offers a promising direction for improving robustness and reasoning quality in LLM-based medical QA systems, and provide a foundation for future research on scalable, reliable multi-agent reasoning frameworks in healthcare contexts.

ACKNOWLEDGMENT

This work was supported in part by the National Science Foundation under Grants IIS-1741306 and IIS-2235548, and by the Department of Defense under Grant DoD W91XWH-05-1-023. This material is based upon work supported by (while serving at) the National Science Foundation. Any opinions, findings, conclusions, or recommendations expressed in this material are those of the author(s) and do not necessarily reflect the views of the National Science Foundation.

REFERENCES

[1] X. Chen, H. Yi, M. You, W. Liu, L. Wang, H. Li, X. Zhang, Y. Guo, L. Fan, G. Chen et al., "Enhancing diagnostic capability with multi-agents conversational large language models," npj Digital Medicine, vol. 8, no. 1, p. 159, 2025.
[2] F. R. Altermatt, A. Neyem, N. Sumonte, M. Mendoza, I. Villagran, and H. J. Lacassie, "Performance of single-agent and multi-agent language models in Spanish language medical competency exams," BMC Medical Education, vol. 25, no. 1, p. 666, 2025.
[3] C.-H. Chang, M. M. Lucas, Y. Lee, C. C. Yang, and G. Lu-Yao, "Beyond self-consistency: Ensemble reasoning boosts consistency and accuracy of LLMs in cancer staging," in International Conference on Artificial Intelligence in Medicine. Springer, 2024, pp. 224–228.
[4] M. M. Lucas, J. Yang, J. K. Pomeroy, and C. C. Yang, "Reasoning with large language models for medical question answering," Journal of the American Medical Informatics Association, vol. 31, no. 9, pp. 1964–1975, 2024.
[5] T. Guo, X. Chen, Y. Wang, R. Chang, S. Pei, N. V. Chawla, O. Wiest, and X. Zhang, "Large language model based multi-agents: A survey of progress and challenges," in Proceedings of the Thirty-Third International Joint Conference on Artificial Intelligence, IJCAI-24, K. Larson, Ed. International Joint Conferences on Artificial Intelligence Organization, 2024, pp. 8048–8057.
[6] R. Li, X. Wang, D. Berlowitz, J. Mez, H. Lin, and H. Yu, "CARE-AD: a multi-agent large language model framework for Alzheimer's disease prediction using longitudinal clinical notes," npj Digital Medicine, vol. 8, no. 1, p. 541, 2025.
[7] X. Li, S. Wang, S. Zeng, Y. Wu, and Y. Yang, "A survey on LLM-based multi-agent systems: workflow, infrastructure, and challenges," Vicinagearth, vol. 1, no. 1, p. 9, 2024.
[8] M. Cemri, M. Z. Pan, S. Yang, L. A. Agrawal, B. Chopra, R. Tiwari, K. Keutzer, A. Parameswaran, D. Klein, K. Ramchandran et al., "Why do multi-agent LLM systems fail?" arXiv preprint arXiv:2503.13657, 2025.
[9] Y. Yang, H. Chai, S. Shao, Y. Song, S. Qi, R. Rui, and W. Zhang, "AgentNet: Decentralized evolutionary coordination for LLM-based multi-agent systems," arXiv preprint arXiv:2504.00587, 2025.
[10] J. Wang, K. Chen, X. Song, K. Zhang, L. Ai, E. Yang, and B. Shi, "Symphony: A decentralized multi-agent framework for scalable collective intelligence," arXiv preprint arXiv:2508.20019, 2025.
[11] Q. Wu, G. Bansal, J. Zhang, Y. Wu, B. Li, E. Zhu, L. Jiang, X. Zhang, S. Zhang, J. Liu et al., "AutoGen: Enabling next-gen LLM applications via multi-agent conversations," in First Conference on Language Modeling, 2024.
[12] K.-T. Tran, D. Dao, M.-D. Nguyen, Q.-V. Pham, B. O'Sullivan, and H. D. Nguyen, "Multi-agent collaboration mechanisms: A survey of LLMs," arXiv preprint, 2025.
[13] W. Wang, Z. Ma, Z. Wang, C. Wu, J. Ji, W. Chen, X. Li, and Y. Yuan, "A survey of LLM-based agents in medicine: How far are we from Baymax?" arXiv preprint arXiv:2502.11211, 2025.
[14] X. Tang, A. Zou, Z. Zhang, Z. Li, Y. Zhao, X. Zhang, A. Cohan, and M. Gerstein, "MedAgents: Large language models as collaborators for zero-shot medical reasoning," in Findings of the Association for Computational Linguistics: ACL 2024, 2024, pp. 599–621.
[15] Y. Kim, C. Park, H. Jeong, Y. S. Chan, X. Xu, D. McDuff, H. Lee, M. Ghassemi, C. Breazeal, and H. W. Park, "MDAgents: An adaptive collaboration of LLMs for medical decision-making," Advances in Neural Information Processing Systems, vol. 37, pp. 79410–79452, 2024.
[16] J. Li, Y. Lai, W. Li, J. Ren, M. Zhang, X. Kang, S. Wang, P. Li, Y.-Q. Zhang, W. Ma et al., "Agent hospital: A simulacrum of hospital with evolvable medical agents," arXiv preprint, 2024.
[17] H. Yang, H. Chen, H. Guo, Y. Chen, C.-S. Lin, S. Hu, J. Hu, X. Wu, and X. Wang, "LLM-MedQA: Enhancing medical question answering through case studies in large language models," arXiv preprint arXiv:2501.05464, 2024.
[18] K. Shang, C.-H. Chang, and C. C. Yang, "Collaboration among multiple large language models for medical question answering," arXiv preprint arXiv:2505.16648, 2025.
[19] Y. Lee, X. Wang, and C. C. Yang, "Automated clinical problem detection from SOAP notes using a collaborative multi-agent LLM architecture," arXiv preprint arXiv:2508.21803, 2025.
[20] A. Smit, P. Duckworth, N. Grinsztajn, T. D. Barrett, and A. Pretorius, "Should we be going MAD? A look at multi-agent debate strategies for LLMs," arXiv preprint, 2023.
[21] Q. Jin, B. Dhingra, Z. Liu, W. Cohen, and X. Lu, "PubMedQA: A dataset for biomedical research question answering," in Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing and the 9th International Joint Conference on Natural Language Processing (EMNLP-IJCNLP), 2019, pp. 2567–2577.
[22] D. Jin, E. Pan, N. Oufattole, W.-H. Weng, H. Fang, and P. Szolovits, "What disease does this patient have? A large-scale open domain question answering dataset from medical exams," Applied Sciences, vol. 11, no. 14, p. 6421, 2021.
[23] Y. Du, S. Li, A. Torralba, J. B. Tenenbaum, and I. Mordatch, "Improving factuality and reasoning in language models through multiagent debate," in Forty-first International Conference on Machine Learning, 2024.
